Abstract

This two-wave panel survey pits five pathways (i.e., antisocial and prosocial personality, intergroup identity conflict, recent victimization experiences, and social media habits) against each other to test their unique over-time relationships with digital hate perpetration. Autoregressive structural equation modeling showed that psychopathy, need for social approval, and recent victimization were universally relevant for both incivility and intolerance. Notably, recent victimization had the strongest link, suggesting that breaking victimization-perpetration cycles may be key to combating digital hate. Furthermore, Machiavellianism and social media habits selectively predicted online intolerance. Together, these generally and selectively relevant paths point toward distinct opportunities to reduce aggressive communication.

Although social media platforms provide users with low-threshold access to welcoming communities and ingenious content that can both entertain and enlighten, digital hate is thriving across mainstream platforms (e.g., Facebook, YouTube, TikTok, X, Instagram). The phenomenon is even more pronounced on platforms popular among fringe groups (e.g., Telegram, 8Chan) and on alt-tech platforms (e.g., Truth Social, Gab), where it fuels intergroup conflict that threatens societal cohesion. Digital hate refers to “any kind of digitally transmitted malicious expression performed by and directed against an individual or a collective,” a definition that includes a broad range of conceptually noisy constructs (Matthes et al., 2025) from a large family of phenomena that involve ordinary meanings of hate (Brown, 2017). Users who post and share such content are the primary culprits, even if their motives may be well-meaning, for instance, to ignite what they perceive as a necessary discussion. The psychological mechanisms underlying digital hate perpetration (understood without regard to users’ self-attributed intentions) have been examined extensively for phenomena like cyberbullying (Farrington et al., 2023). A recent Delphi study by Weber et al. (2024) further explored in depth various perpetration mechanisms for online hate speech (i.e., hostile speech targeting social groups and their members for their visible and/or assigned characteristics).

Compared with illegal hate speech, the psychological reasons why social media users engage in uncivil speech (i.e., violations of interpersonal norms, for instance, rude language, name-calling, or shouting) and intolerant speech (i.e., violations of democratic norms, for instance, exclusionary and dehumanizing language questioning social groups’ democratic rights; both Rossini, 2022) have received less scholarly attention. These forms of speech typically fall into a legal gray area still protected by free-speech principles, so much so that institutionalized news media often cover them, sometimes to their own detriment (Goovaerts, 2025). Because platforms are not necessarily required to remove uncivil and (not blatantly) intolerant speech (e.g., in Austria, where this study was conducted), both may reach larger audiences and influence discourse even more lastingly.

What leads people to violate these norms on social media has been investigated from various perspectives, and the literature has accordingly documented evidence for different perpetration mechanisms. These mechanisms range from antisocial dispositions, through which users show reduced regard for other users’ well-being (Frischlich et al., 2021), to prosocial motivations reflecting a tendency to seek social approval from audiences at the expense of other users (Jiang et al., 2024). They further include identitarian drivers related to users’ self-categorization as members of ingroups perceived as requiring defense against outgroups (Reichelmann & Costello, 2021), previous (online) victimization experiences that can facilitate malevolent social media activity via diverse routes (Frischlich et al., 2021), and social media habits (Ceylan et al., 2023).

This study contributes to this vibrant research field in three ways. First, prior research has usually examined only a single path to digital hate perpetration, which limits insights into the paths’ relative impacts. Guided by the nonepisodic mechanisms outlined in the general aggression model (GAM; Allen & Anderson, 2017), an established framework that integrates various theoretical streams, we examine a comprehensive prediction model for digital hate perpetration involving five distinct distal drivers (i.e., antisocial and prosocial traits, intergroup identity conflict, recent victimization, and habitual sharing of social media content). Second, the extant literature offers little conceptually nuanced evidence on unique predictive profiles for gray-area speech offenses. Based on recent conceptualizations of incivility and intolerance, our study provides discriminative findings on whether each mechanism’s relevance varies meaningfully across these modes of norm-violating speech. Third, digital hate perpetration has been investigated primarily with cross-sectional surveys (Bührer et al., 2024). Such designs are limited in their ability to establish (Granger) causality or even directionality and often suffer from sampling error and common-method bias, providing a potentially distorted basis for robust evidence. Moreover, because cyberbullying is the most thoroughly examined phenomenon, prior research has focused predominantly on adolescents and young adults (Matthes et al., 2025). In contrast, this study presents much-needed evidence from a quota-representative two-wave panel survey. This design allows us to estimate directional over-time relationships with a less biased measurement approach, because exogenous and endogenous variables are not measured at the same time, and with better statistical control, because autocorrelations are accounted for.

Five Paths to Digital Hate Perpetration

Decades of psychological research on aggressive behavior have produced a rich theoretical repertoire for understanding, explaining, and predicting both hate perpetration in general and digital hate perpetration as a more specific phenomenon. Integrating well-established mechanisms from different domain-specific theories of aggression (e.g., cognitive-neoassociation theory, social learning theory, script theory, excitation transfer theory, social interaction theory), the GAM (Allen & Anderson, 2017) offers a sufficiently broad approach to guide the distinction of general (i.e., nonepisodic) prediction paths to digital hate perpetration.

In a nutshell, the GAM differentiates between proximal and distal factors. While proximal factors determine the processes within each single episode of aggressive behavior, distal factors include biological, environmental, and personality risk factors that are persistently present and influence how episodic processes unfold. Because this study’s two-wave survey design is suited to examining distal factors through an aggregational measurement approach, we focused exclusively on personality traits and environmental factors that we considered representative of distinct factors predicting digital hate perpetration. According to the GAM, all these factors may, in principle, lead people to interpret and appraise interpersonal situations aggressively and, eventually, to engage in hostile actions, including in digital interactions and through digitally transmitted acts of perpetration. As such, the GAM provides an integrative framework that theoretically embeds several stable mechanisms within a dynamic situational process model, thereby specifying their role in motivating digital hate perpetration over longer periods.

Concerning personality risk factors, we draw on both the long-standing tradition of investigating antisocial personality traits as precursors of antagonistic actions and the recently emerging understanding of aggressive behaviors as prosocially motivated by interpersonal gratifications received from like-minded others. To cover both perspectives, we focus on the dark triad as a popular multidimensional antisocial personality concept and on people’s need for social approval as a straightforward dispositional manifestation of the social approval-based theory of online hate (see Walther, 2024).

With respect to environmental risk factors, we accounted for three research lines to which prior research has assigned major roles in motivating digital hate perpetration: (a) intergroup dynamics, (b) recent self-related victimization experiences, and (c) technological affordances. More precisely, this study addresses identity centrality, a central concept of the social identity theory of intergroup conflict (see Tajfel & Turner, 1986), which is typically considered pivotal for explaining intergroup aggression based on an individual’s self-concept within heterogeneous societies. People’s prior victimization was selected to reflect domain-specific experiences of being targeted by digital hate in social media environments. Finally, we considered social media habits to account for cognitive-behavioral action impulses related to platforms’ communicative affordances, which have been argued to be instrumental for perpetration (Kakavand, 2024). Although not exhaustive, this selection of cross-situationally relevant mechanisms covers key aspects of individuals’ (internalized) environment currently discussed as crucial for predicting engagement in hateful online speech.

Antisocial Personality Traits

Among antisocial personality traits, the so-called “dark triad” (i.e., psychopathy, Machiavellianism, and narcissism) proposed by Paulhus and Williams (2002) is arguably among the most common models used to explain digital hate perpetration. Psychopathy manifests as a lack of empathy, poor impulse control, and remorselessness. Individuals high in Machiavellianism are characterized by cynicism, manipulativeness, and indifference toward exploiting others, while narcissism entails self-indulgence, feelings of superiority, and belittling behavior (Moor & Anderson, 2019). Although these traits do not represent clinically relevant disorders, they are considered undesirable in most social settings. Importantly, interpersonally belligerent behaviors can be even more pronounced in environments where perpetrators can remain anonymous (e.g., social media platforms). Studies of such environments point to a general pattern in which psychopathy emerges as the strongest and most consistent correlate, followed by Machiavellianism and narcissism, for which findings are weaker and, especially for the latter, inconsistent (Moor & Anderson, 2019). However, digital hate phenomena are neither conceptualized nor operationalized consistently across studies (Matthes et al., 2025), which limits generalizability. Moreover, findings may vary across behavioral facets. For example, depending on whether cyberstalking was measured as duplicitous or invasive, it correlated with psychopathy and narcissism or only with psychopathy, respectively (March et al., 2022). Similarly, Brankovic et al. (2023) concluded that psychopathy is associated with riskier and more severe cyberstalking, while narcissism and Machiavellianism are related to its covert manifestations.

Regarding uncivil or intolerant speech on social media, clear evidence concerning antisocial personality traits is scarce. While Koban et al. (2018) found no statistically meaningful links between any of the dark triad traits and uncivil commenting intentions, Frischlich et al. (2021) found that psychopathy and Machiavellianism, but not narcissism, were associated with heightened online incivility. Further, Sorokowski et al. (2020) identified psychopathy as the only significant correlate, whereas Mungall et al. (2024) found that various facets of all three dark triad traits were related to political online incivility. Research on intolerance in the sense of Rossini (2022) is even scarcer. Nevertheless, all dark triad traits have been associated with intolerant attitudes, with narcissism once more exhibiting notable inconsistencies (Anderson & Cheers, 2018). Similar relationships with the dark triad have also been documented for other digital hate concepts that share phenomenological roots with intolerant online speech (Srinivas et al., 2024). Based on the dark triad’s constitutive tendency toward social norm violations, and accounting for both the robust findings for psychopathy and Machiavellianism and the inconsistent ones for narcissism, we propose the following hypotheses and research question:

Prosocial Personality Traits

While antisocial traits may drive individuals toward malevolence, the links between prosocial traits, such as the recently highlighted social approval orientation (Walther, 2024), and digital hate perpetration are less obvious. Need for social approval describes people’s desire to adhere to social norms, please others, and conform to socially acceptable behavior. Social media platforms capitalize on this need by letting users reward behaviors through likes or positive comments. Within social media communities where antisociality may be considered somewhat normative (see, e.g., Oz et al., 2018), this can reinforce hateful actions, even if perpetrators’ primary intention is to fulfill their social approval needs via group-conforming behavior (Piccoli et al., 2020). On a cognitive level, strong needs for social approval have also been found to be related to diminished sensitivity to injustice (Bhandary, 2018), indicating reduced awareness of associated harms.

Although prior research indicates that seeking social approval can drive perpetration of related phenomena, such as cyberaggression (Soares et al., 2023), direct empirical evidence regarding uncivil and intolerant speech is scarce. Incivility has been associated with increased engagement metrics, such as recommendations (Muddiman & Stroud, 2017) or up-votes (Shmargad et al., 2022), which may suggest its normative status among a large-enough group of users who approve of such speech (Jiang et al., 2024). Concerning intolerance, perceived social approval has been found to mediate the relationship between observing and perpetrating online hate (Chung et al., 2023). The presence of targets who apparently belong to mutually despised social groups may strengthen in-group cohesion while deepening out-group rejection, creating contexts in which individuals strongly driven by social approval may be particularly motivated to engage in derogatory behaviors (Walther, 2024). Given that uncivil and intolerant speech may thus garner social approval not only in fringe communities but also in mainstream online communities (especially since social disapproval is rarely voiced visibly even in the latter), we posit:

Intergroup Identity Conflict

An individual’s social identity comprises aspects of their self-concept shaped by memberships in different groups (Tajfel & Turner, 1986), which are typically based on distinct attributes such as self-perceived gender or nationality. Whether specific social identities are activated largely depends on group salience and personal significance, which together constitute a person’s identity centrality (i.e., the self-ascribed importance of an identity for one’s self-concept; Cameron, 2004). Social media platforms can serve as potent triggers because they provide access to like-minded people, among whom the risk of perceiving criticism of the in-group as a personal attack is especially high (Stroud et al., 2017), and group norms sometimes even encourage out-group hate (Halperin, 2008).

Identity centrality plays a significant role in understanding various digital hate perpetration phenomena. Previous research, for instance, found that a strong gamer identity can predict sexual harassment in online video games (Tang et al., 2020) and that homogeneous identity bubbles may be linked to cyberaggression (Zych et al., 2023). Research on the relationship between identity centrality and uncivil online communication in particular is rare (Ng et al., 2020); however, Rains et al. (2017) documented that an increased presence of out-group members (which may serve as a cue that makes identity centrality more salient) can result in more uncivil discussions. In contrast, the link between identity centrality and intolerance has been researched extensively. While some social identities, such as nonpoliticized religious affiliations, may serve as protective factors (Hasangani, 2022), numerous findings suggest that intolerant behavior is closely associated with, among others, strong national identities (Reichelmann & Costello, 2021) as well as gender and sexual identities (Chua & Wilson, 2023). Following key premises of social identity theory, this may be explained by ingroup favoritism and, in particular, outgroup antagonism being more influential for people with strong identity centrality. In other words, individuals who assign great importance to their social identities are likely more sensitive to ingroup vulnerability (i.e., perceiving one’s group as being in greater need of protection) and outgroup threats (i.e., feeling that other groups pose a greater danger to one’s group) and, by implication, more strongly motivated to act upon these perceptions with incivility or intolerance. This may be especially relevant in polarized social media environments, where such perceptions can readily trigger uncivil or intolerant responses among individuals with high identity centrality. We propose:

Recent Victimization Experiences

Various theories explain why some targets may turn into perpetrators. Routine activity theory suggests that certain social media activities (like frequently scrolling through potentially harmful sites) could desensitize individuals and lead them to engage in malicious behaviors themselves, even if (or sometimes because) they had previously been targeted in some way (Räsänen et al., 2016). Similarly, social cognitive theory (Bandura, 1986) assumes that individuals learn and imitate behaviors that others have directed against them, which can result in increased hateful engagement as they adopt perceived norms (Povedano et al., 2015) or seek to emulate individuals who wield symbolic power over others (Estévez et al., 2020). Additionally, theories such as the cycle of violence (Widom, 1989) and general strain theory (Agnew, 1992) propose that hate targets may seek vengeance for perceived mistreatment. Social media environments could exacerbate these mechanisms, as victimized perpetrators may benefit from anonymity and online disinhibition.

Several studies have identified relationships between victimization and perpetration across digital hate phenomena. Importantly, such findings do not appear to be isolated cases, as meta-analyses of longitudinal data have indicated that previous victimization predicts digital hate perpetration (Marciano et al., 2020). The few findings on online incivility or intolerance in particular point in a similar direction. Frischlich et al. (2021) demonstrated that prior victimization was the strongest correlate of incivility, and the association between victimization and intolerance has been observed across samples (Wachs et al., 2022). While all this evidence supports digital hate victimization as a predictor of digital hate perpetration, non-cross-sectional studies focusing specifically on incivility and intolerance are lacking. Nevertheless, given the robust evidence, we propose:

Social Media Habits

Many social media behaviors are habitualized, especially if they do not involve lengthy action sequences but can be executed immediately and without much friction (Schnauber-Stockmann et al., 2023). Social media habits are generally understood as cue-driven, automatically instigated, and reward-independent action impulses (Mazar & Wood, 2018) that are related in one way or another to social media use. Once firmly established, social media habits may be instigated by the sensed presence of various cues (Bayer et al., 2016) and executed without much conscious thought or inhibitory control, even when the initially associated rewards are absent or to some extent outweighed by costs.

Although platforms often moderate content that may violate their policies (or laws), for instance, by reducing its visibility to others, social media algorithms are nevertheless attuned toward maximizing user engagement. Because of this preference, users may be rewarded for producing or disseminating content that holds other users’ attention or even animates engagement. This may foster the formation of posting and sharing habits for societally controversial speech or material situated just within policy boundaries that may, for instance, resonate strongly among hatemongers and within echo chambers (Goel et al., 2023). The relevance of such social media habits has recently been highlighted by Ceylan et al. (2023) for (mis)information content, documenting that habit-driven users are more likely to share false news beyond their political beliefs without considering accuracy or informational consequences. Gray-area violations of interpersonal and democratic norms have been established as highly engaging in terms of social media metrics within certain communities (Su et al., 2021). Such metrics may initially act as rewards and later as instigation cues, making it plausible that firm social media habits may also apply to posting and sharing incivility and intolerance once users are internally or externally cued. Alternatively, it is also plausible that users who tend to post and share content on social media automatically, without much thought, are generally less likely to reflect on what kind of speech they disseminate. Thus, they may also be less likely to inhibit their habitual impulses. Based on both explanations, we hypothesized:

Method

This study was conducted as part of a more comprehensive two-wave panel survey on Austrians’ experiences with and perceptions of different kinds of digital hate. Notably, Austria, a mid-sized Central European country, represents a particularly interesting context for investigating digital hate perpetration because it combines a polarized political environment, in which the major far-right Freedom Party is increasingly successful not only at elections but also in shaping (online) discourse, with firm antihate legislation (i.e., the Hass-im-Netz-Bekämpfungsgesetz) that facilitates the removal of harmful online content and the legal prosecution of perpetrators.

We aimed for a 2-month interval between waves, which seemed suited to meaningfully examining a variable of interest with presumably moderate stability while accounting for both the possibility of disruptive real-world events and recent experiences with panel mortality. Data were collected between July 27 and August 5, 2023, for Wave 1 (W1) and between September 27 and October 6, 2023, for Wave 2 (W2). The Institutional Review Board of the Faculty of Social Sciences at the University of Vienna screened the entire survey (ID: 20230705_029) and classified it as minimal ethical risk. Supplemental material, including German item wordings, item-level descriptive statistics, datasets, cleaning and analysis scripts, and detailed outcome files, is available at https://osf.io/gycjr.

Participants

A professional market research institute was commissioned to recruit an Austrian sample based on representative quotas for gender identification, age, and education. Invitees needed to be at least 16 years old and had to provide informed consent to participate, which included their willingness to answer questions about their experiences with hostile online content in different roles (i.e., as perpetrator, bystander, and target). Data from participants who (a) dropped out during survey completion, (b) skipped items concerning their incivility or intolerance perpetration, or (c) answered any attention check incorrectly at W1 were excluded. Because their small number precluded meaningful analysis, participants who (d) identified as nonbinary/diverse (n = 3) were also excluded.

After exclusions, the final sample for W1 consisted of N = 1,521 participants (age M = 48.45, standard deviation [SD] = 15.28, range: 16–89 years), including n = 780 (51.3%) female- and n = 741 (48.7%) male-identifying individuals. Concerning education, n = 1,160 (76.3%) did not have a university degree, while n = 361 (23.7%) had successfully completed postsecondary education. For W2, the final sample size was N = 1,032 (age M = 50.25, SD = 14.84, range: 17–89 years) and comprised n = 520 (50.4%) participants who identified as female and n = 512 (49.6%) who identified as male, as well as n = 814 (78.9%) without and n = 218 (21.1%) with a university degree. A dropout analysis comparing participants who completed both waves with those who completed only W1 (n = 489) indicated small effect sizes with minimal practical relevance for a few variables (see Supplemental Appendix: Table SA1 for detailed results).

Procedure

Participants first received detailed information about the survey content (including a content warning and self-help resources) and their rights, after which they were asked for consent. The complete survey involved several subprojects with distinct research questions. The survey first asked about demographics and social media use (including sharing habits), followed by blocks about (a) general digital hate experiences (across perpetrator, bystander, and target roles), (b) personality traits (including the dark triad, social approval motivation, and identity centrality), (c) experiences with digital hate against specifically defined targets, and (d) personal attitudes. Participants were then debriefed. Scales within each block and items within each scale were presented in random order. Both waves were identical except that personality traits were included only in W1 and that attitudes were positioned between the two digital hate blocks (to minimize response fatigue).

Measures

To reduce participants’ effort and further prevent response fatigue, we either favored available short scales (e.g., for habits or the dark triad) over longer versions or, if no short versions existed, adapted existing scales by selecting high-loading items (as is common practice in multi-wave survey research). Unless indicated otherwise (e.g., when we self-constructed items for lack of existing scales), German-language items from systematically validated translations were used where possible; where no such translations existed, we (being proficient in both German and English) translated the original items in an iterative process (see Supplemental Appendix: Table SA2 for original and translated item wordings and item-level statistics). For descriptive purposes, unit-weighted sum scores and internal reliability coefficients for all constructs are presented in Supplemental Appendix: Table SA3.
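As a minimal sketch of the descriptive scoring mentioned above, the Python below computes unit-weighted sum scores (every item weighted 1) and Cronbach’s alpha, one common internal reliability coefficient. The supplement may report a different coefficient (e.g., McDonald’s ω), and the response matrix is hypothetical, not study data.

```python
from statistics import pvariance


def sum_scores(responses):
    """Unit-weighted sum score per respondent: every item is weighted 1."""
    return [sum(row) for row in responses]


def cronbach_alpha(responses):
    """Cronbach's alpha = k / (k - 1) * (1 - sum of item variances / variance of sum scores)."""
    k = len(responses[0])
    items = list(zip(*responses))  # transpose: one tuple of answers per item
    item_variance = sum(pvariance(col) for col in items)
    total_variance = pvariance(sum_scores(responses))
    return k / (k - 1) * (1 - item_variance / total_variance)


# Hypothetical answers of five respondents to a three-item, 5-point scale.
responses = [[1, 2, 1], [2, 2, 3], [3, 4, 3], [4, 4, 5], [5, 5, 4]]
print(sum_scores(responses))                # -> [4, 7, 10, 13, 14]
print(round(cronbach_alpha(responses), 2))  # -> 0.94
```

Population variances are used for both the items and the total; because alpha depends only on their ratio, sample variances would give the same result.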

Antisocial Personality Traits: Dark Triad

Psychopathy, Machiavellianism, and narcissism were measured with nine items from Jonason and Webster (2010; German items by Küfner et al., 2015). We also measured sadism via a self-translated version of Plouffe et al. (2017); however, given its very high correlation with psychopathy (r = 0.84), sadism was dropped. Participants were asked to specify how much statements apply to them on 5-point Likert scales (1 = not at all, 5 = completely). A confirmatory factor analysis (CFA) showed good fit for the three-factor solution, χ2(24) = 90.78, p < .001, comparative fit index [CFI] = 0.978, root mean square error of approximation [RMSEA] = 0.043 (90% confidence interval [CI] [0.035, 0.051]), standardized root mean square residual [SRMR] = 0.030.

Prosocial Personality Traits: Need for Social Approval

We operationalized the need for social approval via four items adapted from Martin (1984; German items self-translated), for which participants indicated how much statements apply to them on 5-point Likert scales (1 = not at all, 5 = completely). CFA demonstrated acceptable fit for the one-factor solution except for RMSEA, χ2(2) = 53.88, p < .001, CFI = 0.960, RMSEA = 0.131 (90% CI [0.106, 0.157]), SRMR = 0.036.
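The RMSEA values reported for these CFAs can be reproduced from χ2, df, and sample size with the standard point-estimate formula, sketched below under the assumption of n = 1,521 (the W1 sample) and the N − 1 convention (some software uses N instead). The formula also shows why RMSEA is known to be inflated in models with very few degrees of freedom: the excess χ2 is divided by df, so a two-df model such as the need-for-approval CFA yields a large RMSEA even when CFI and SRMR look acceptable.

```python
import math


def rmsea(chi2: float, df: int, n: int) -> float:
    """RMSEA point estimate: sqrt(max(chi2 - df, 0) / (df * (n - 1)))."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


# Reported fit statistics, assuming n = 1,521 (the W1 sample).
print(round(rmsea(90.78, 24, 1521), 3))  # three-factor dark triad CFA -> 0.043
print(round(rmsea(53.88, 2, 1521), 3))   # one-factor need-for-approval CFA -> 0.131
```

Robust estimators may report a scaled RMSEA, so values from other software can differ slightly from this unscaled version.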

Intergroup Identity Conflict: Identity Centrality

As a comprehensive measure of identity centrality, we used three sets of four adapted items from Leach et al. (2008; German items by Roth & Mazziotta, 2015), asking participants to state how much statements referring to their (a) gender identity, (b) nationality, and (c) sexual orientation apply to them on 5-point Likert scales (1 = not at all, 5 = completely). Out of arguably countless alternatives, we opted for these facets because we considered them among the most basic for one’s personal identity and, thus, more stable and less individualized than age, class, profession, education, political affiliation, hobby, or fandom, particularly since our primary goal was to capture participants’ identity centrality per se rather than the strength of distinct identities. A second-order CFA revealed acceptable fit, χ2(51) = 636.66, p < .001, CFI = 0.934, RMSEA = 0.087 (90% CI [0.082, 0.093]), SRMR = 0.051.
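The degrees of freedom reported for these CFAs follow from counting unique (co)variances against free parameters. The sketch below assumes simple structure, marker-variable identification (one loading per factor fixed to 1), uncorrelated residuals, and no mean structure; under these assumptions it reproduces df = 24 for the three-factor dark triad model, df = 2 for four-item one-factor models, and df = 51 for the second-order identity centrality model. The function names are illustrative.

```python
def n_moments(p: int) -> int:
    """Unique variances and covariances among p observed items."""
    return p * (p + 1) // 2


def cfa_df(n_items: int, n_factors: int) -> int:
    """df of a simple-structure CFA with freely correlated factors,
    identified by fixing one loading per factor to 1."""
    free = (
        (n_items - n_factors)               # free loadings
        + n_items                           # residual variances
        + n_factors                         # factor variances
        + n_factors * (n_factors - 1) // 2  # factor covariances
    )
    return n_moments(n_items) - free


def second_order_df(n_items: int, n_first_order: int) -> int:
    """df when all first-order factors load on a single second-order factor
    (one second-order loading fixed to 1)."""
    free = (
        (n_items - n_first_order)  # free first-order loadings
        + n_items                  # residual variances
        + (n_first_order - 1)      # free second-order loadings
        + 1                        # second-order factor variance
        + n_first_order            # first-order factor disturbances
    )
    return n_moments(n_items) - free


print(cfa_df(9, 3), cfa_df(4, 1), second_order_df(12, 3))  # -> 24 2 51
```

With exactly three first-order factors, the second-order structure is just-identified, so it has the same df (51) as a model with three freely correlated factors and cannot be distinguished from it by fit alone.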

Recent Victimization Experience

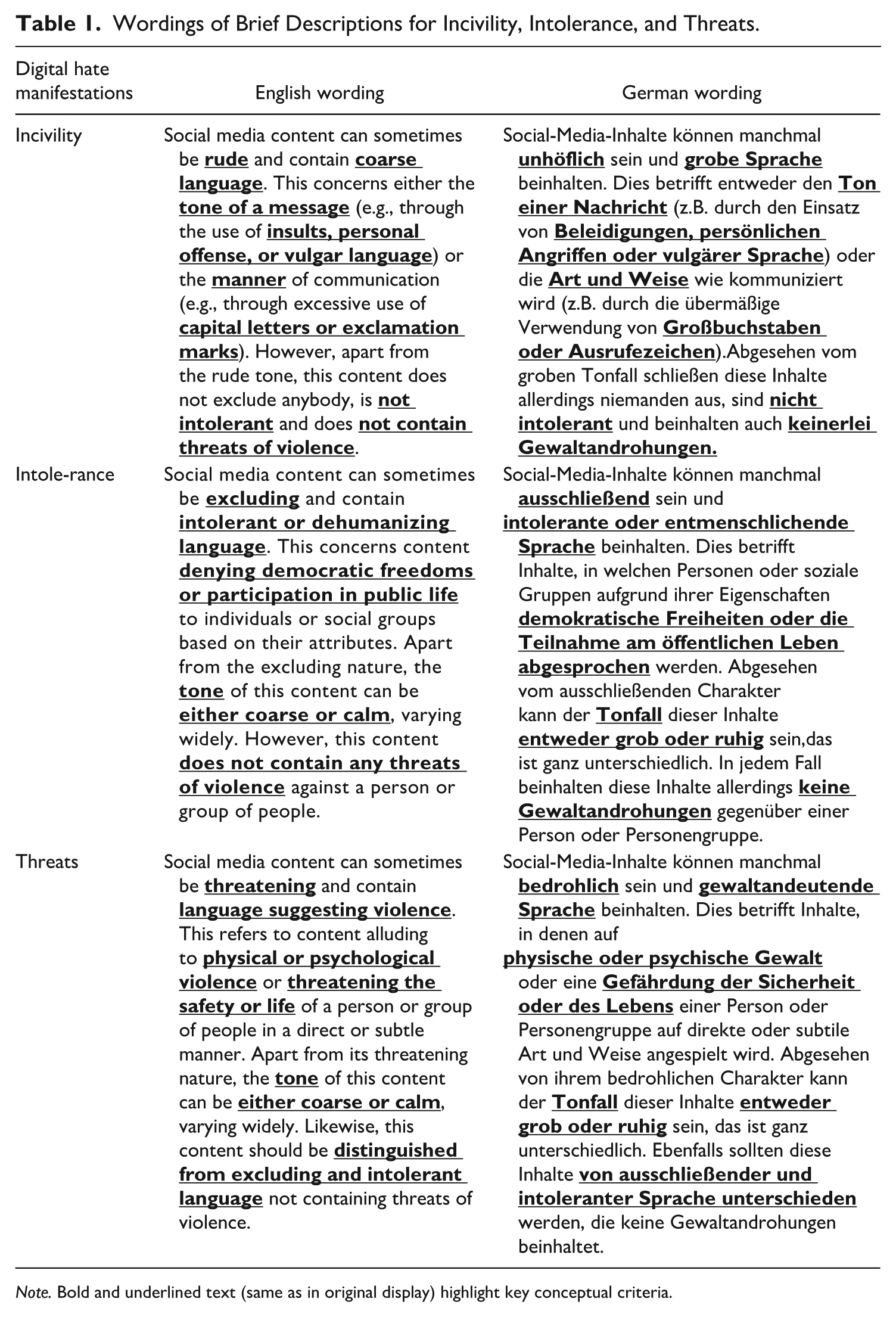

After presenting participants with brief, self-constructed definitions of uncivil, intolerant, and threatening social media communication based on Rossini (2022) and Pradel et al. (2024) (see Table 1 for concrete wordings), we used three items asking how frequently they had been personally attacked by similar posts or comments during the last 4 weeks, answered on 5-point Likert scales (1 = never, 5 = all the time).

Table 1. Wordings of Brief Descriptions for Incivility, Intolerance, and Threats.

Note. Bold and underlined text (as in the original display) highlights key conceptual criteria.

Social Media Habits

Habitual posting and sharing of social media content was measured with an adaptation of Ceylan et al.’s (2023) four-item scale (itself a domain-specific variant of an established version of the self-report habit index [SRHI]; Gardner et al., 2016; German items self-translated). Participants were asked to indicate how much statements apply to them on 5-point Likert scales (1 = not at all, 5 = completely). CFA showed good model fit for the one-factor solution, χ2(2) = 15.16, p = .001, CFI = 0.986, RMSEA = 0.066 (90% CI [0.052, 0.081]), SRMR = 0.017.

Online Incivility and Intolerance Perpetration

To capture digital hate perpetration, we constructed two parallel three-item scales addressing public, semipublic, and private communication channels, one each for incivility and intolerance (for which the same definitions were provided as for recent victimization). Unlike for victimization, we did not ask about threats because of possible legal repercussions. For both constructs, participants specified how frequently they had sent, used, or shared similar content within the respective channels during the last 4 weeks, answering on 5-point Likert scales (1 = never, 5 = all the time). CFA revealed good fit for the two-factor solution in both waves, χ2(8) = 27.08, p = .001, CFI = 0.992, RMSEA = 0.040 (90% CI [0.029, 0.051]), SRMR = 0.014 in W1 and χ2(8) = 37.23, p < .001, CFI = 0.978, RMSEA = 0.060 (90% CI [0.049, 0.071]), SRMR = 0.026 in W2. Because online incivility and intolerance perpetration correlated highly in both waves (r = 0.75 in W1 and r = 0.76 in W2), we compared the proposed two-factor solutions with more parsimonious one-factor solutions. In both waves, the one-factor solution fit significantly worse than the proposed two-factor solution, Δχ2(1) = 364.19, p < .001 in W1 and Δχ2(1) = 176.55, p < .001 in W2. Because the subsequent analysis accounted for autocorrelation, we tested measurement invariance by comparing a model in which the factor loadings of matching items were constrained to be equal across waves with a freely estimated model. Because the model fits did not differ significantly, Δχ2(4) = 1.12, p = .891, we accepted metric invariance.
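For readers who want to check the nested-model comparisons above, the sketch below computes an unscaled chi-square difference test using only the Python standard library (closed forms for 1 and 2 df plus the standard recurrence for the chi-square upper tail). Two assumptions apply: the metric-invariance comparison imposes four constraints (two free loadings per factor, two factors, equated across waves), and the test shown is the naive one; with a robust estimator, a scaled difference test (e.g., Satorra–Bentler) would normally be used, so exact p-values may differ.

```python
import math


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability P(X > x) of a chi-square variable with integer df >= 1.

    Starts from the closed forms Q(x | 1) = erfc(sqrt(x / 2)) and
    Q(x | 2) = exp(-x / 2), then applies the recurrence
    Q(x | k + 2) = Q(x | k) + (x / 2) ** (k / 2) * exp(-x / 2) / Gamma(k / 2 + 1).
    """
    if df < 1:
        raise ValueError("df must be a positive integer")
    if df % 2 == 1:
        q, k = math.erfc(math.sqrt(x / 2)), 1
    else:
        q, k = math.exp(-x / 2), 2
    while k < df:
        q += (x / 2) ** (k / 2) * math.exp(-x / 2) / math.gamma(k / 2 + 1)
        k += 2
    return q


def chi2_difference_test(chi2_restricted, df_restricted, chi2_free, df_free):
    """Naive (unscaled) likelihood-ratio test of two nested models."""
    d_chi2 = chi2_restricted - chi2_free
    d_df = df_restricted - df_free
    return d_chi2, d_df, chi2_sf(d_chi2, d_df)


# Metric invariance: 2 factors x 2 free loadings equated across waves = 4 constraints.
print(round(chi2_sf(1.12, 4), 3))  # -> 0.891
print(round(chi2_sf(1.12, 1), 2))  # -> 0.29
```

Under these assumptions, a Δχ2 of 1.12 on 4 df gives p ≈ .891, whereas the same value on 1 df would give p ≈ .29.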

Covariates

Demographics were entered as covariates. Gender identification was measured by asking participants which gender they identify with (1 = male, 2 = female, 3 = diverse). Education was assessed by asking for the highest completed level of education (1 = no completed education, 2 = compulsory school, 3 = lower vocational school, 4 = medium-level vocational school, 5 = general education, 6 = higher vocational school, 7 = college or university) and collapsed into two categories: participants with and without a completed college or university degree. Additionally, we assessed participants’ age via a slider and their self-ascribed political leaning via a semantic differential (1 = very much left, 7 = very much right).
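The education recode described above can be sketched as follows. The group codes (1 = no college/university degree, 2 = with college/university degree) follow the note to Table 3; treating only response option 7 as a completed degree is our reading of the text, and the names `EDUCATION_LABELS` and `education_group` are hypothetical.

```python
# Response options of the education item, as listed in the text.
EDUCATION_LABELS = {
    1: "no completed education",
    2: "compulsory school",
    3: "lower vocational school",
    4: "medium-level vocational school",
    5: "general education",
    6: "higher vocational school",
    7: "college or university",
}


def education_group(level: int) -> int:
    """Collapse the 7-level item into the binary covariate.

    Returns 1 = no college/university degree, 2 = college/university degree.
    Assumption: only code 7 counts as a completed college/university degree.
    """
    if level not in EDUCATION_LABELS:
        raise ValueError(f"unexpected education code: {level}")
    return 2 if level == 7 else 1


print([education_group(level) for level in sorted(EDUCATION_LABELS)])
```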

Statistical Analysis

For statistical analysis, we conducted structural equation modeling (SEM) using a robust maximum-likelihood estimator and the full-information maximum-likelihood procedure to estimate how strongly several distal factors may facilitate digital hate perpetration (or the mechanisms leading to it) over time when entered simultaneously into one model. In addition to this primary approach, we conducted robustness checks using Satorra-Bentler corrected maximum likelihood with complete data only. Aside from the abovementioned covariates, we controlled for the autoregressive paths of our outcome variables of interest (i.e., incivility and intolerance perpetration), whose indicators’ residuals were allowed to covary across waves.
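A compact way to write the structural and measurement parts of this model is shown below as a sketch under stated assumptions, not as the authors’ model syntax. Here, ξ₁ to ξ₇ stand for the seven predictors (psychopathy, Machiavellianism, narcissism, need for social approval, identity centrality, recent victimization, and social media habits), c₁ to c₄ for the covariates (gender identification, education group, age, and political leaning), η for latent perpetration with superscripts INC (incivility) and INT (intolerance), and subscripts 1 and 2 for the waves. That all predictors enter from W1 and that no cross-lagged paths between the two outcomes are included are our assumptions.

```latex
\begin{aligned}
\eta^{\mathrm{INC}}_{2} &= \beta^{\mathrm{INC}}\,\eta^{\mathrm{INC}}_{1}
  + \sum_{k=1}^{7} \gamma^{\mathrm{INC}}_{k}\,\xi_{k}
  + \sum_{j=1}^{4} \delta^{\mathrm{INC}}_{j}\,c_{j}
  + \zeta^{\mathrm{INC}}, \\
\eta^{\mathrm{INT}}_{2} &= \beta^{\mathrm{INT}}\,\eta^{\mathrm{INT}}_{1}
  + \sum_{k=1}^{7} \gamma^{\mathrm{INT}}_{k}\,\xi_{k}
  + \sum_{j=1}^{4} \delta^{\mathrm{INT}}_{j}\,c_{j}
  + \zeta^{\mathrm{INT}}, \\
y_{i,t} &= \lambda_{i,t}\,\eta_{t} + \varepsilon_{i,t},
  \qquad \operatorname{Cov}(\varepsilon_{i,1}, \varepsilon_{i,2}) = \theta_{i}\ (\text{freely estimated}).
\end{aligned}
```

In this notation, each γ is a predictor’s over-time association with W2 perpetration net of the autoregressive path β, and θᵢ captures the item-specific residual covariance between the same indicator measured in both waves.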

Results

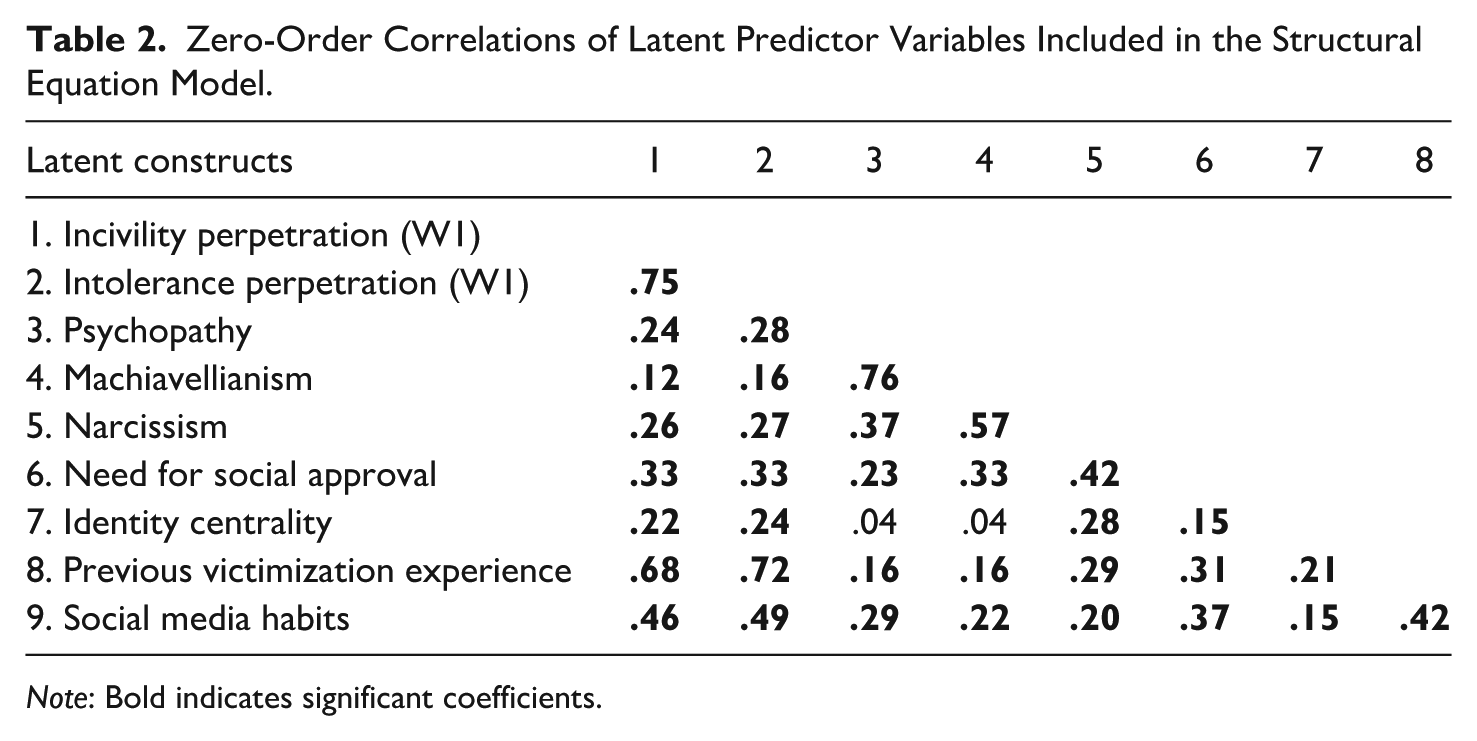

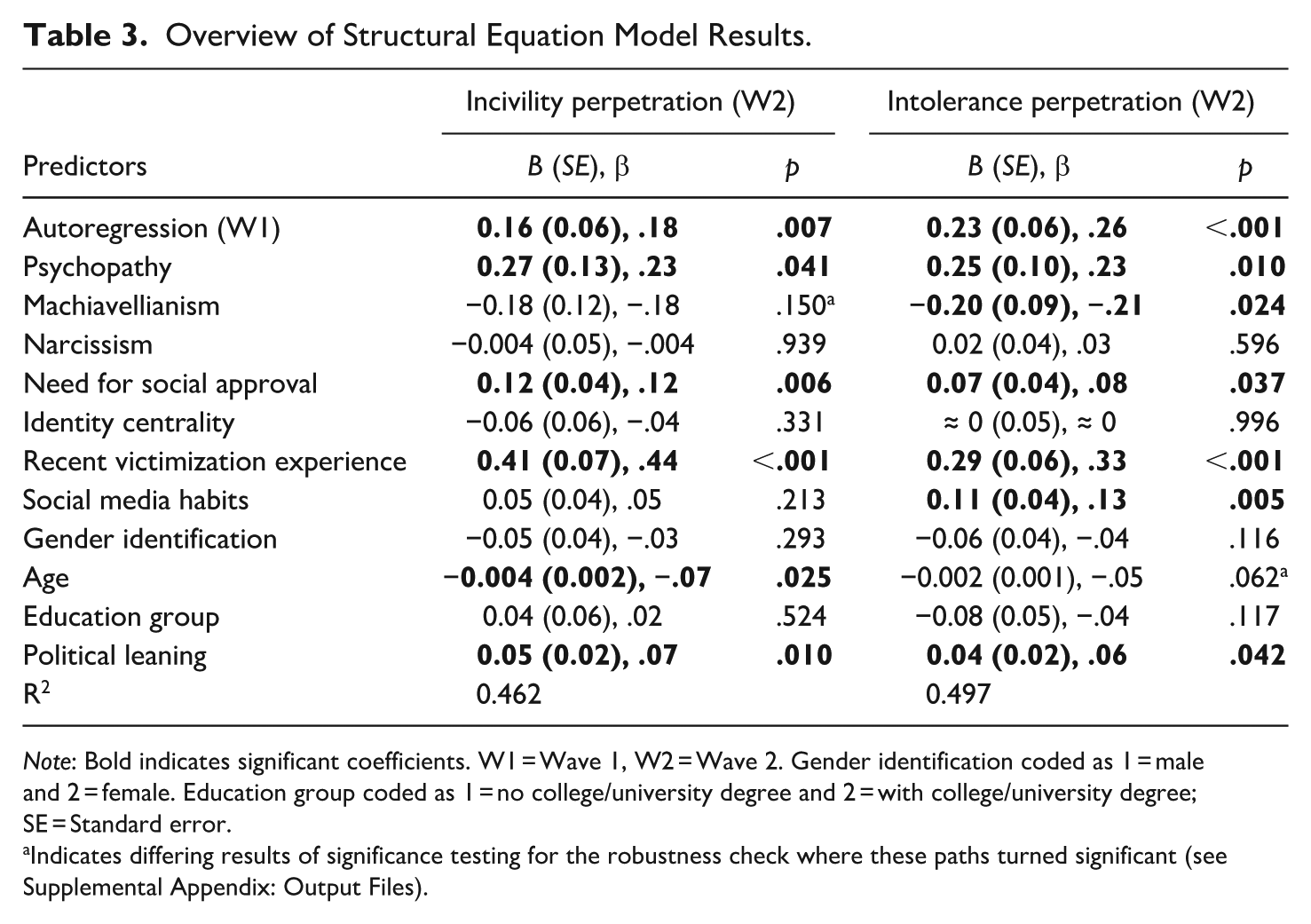

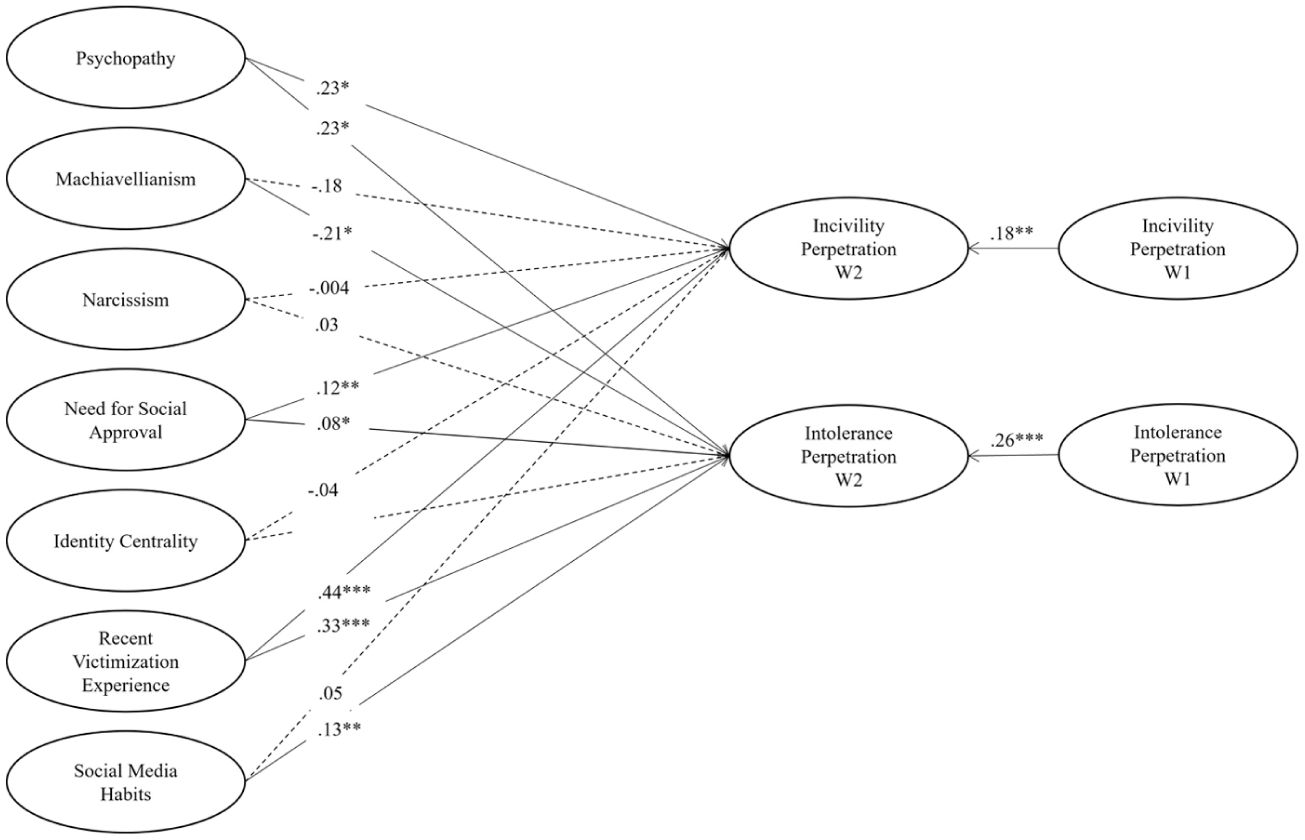

Zero-order correlations are reported in Table 2. Main results are summarized in Table 3 and Figure 1. Overall, both the measurement model and the autoregressive SEM revealed good model fit, χ2(838) = 2,328.22, p < .001, CFI = 0.953, RMSEA = 0.034 (90% CI [0.033, 0.036]), SRMR = 0.056 and χ2(1,008) = 3,306.33, p < .001, CFI = 0.931, RMSEA = 0.039 (90% CI [0.037, 0.040]), SRMR = 0.071, respectively. We then tested the measurement model against common convergent and discriminant validity criteria. Regarding convergent validity, all indicators were significantly related to their respective constructs (ps < .001), with standardized factor loadings consistently above .5 (λs ≥ .54) and, for the most part (i.e., 36 out of 47), above .7, which is typically considered ideal (Hair et al., 2019). Each construct’s average variance extracted (AVE) exceeded the conventional threshold of .5 (AVEs ≥ 0.53), except for psychopathy (AVE = 0.41). According to the seminal work by Fornell and Larcker (1981), convergent validity can still be assumed on the basis of reliability alone in such cases; however, psychopathy’s reliability was slightly below the commonly accepted threshold (McDonald’s ω = .68). Altogether, these results point to questionable convergent validity for psychopathy, which needs to be considered when interpreting subsequent findings. Regarding discriminant validity, we checked both whether the AVE values of any two constructs were greater than their shared variance (i.e., the Fornell–Larcker criterion) and whether heterotrait-monotrait (HTMT) ratios were below 0.85. Psychopathy and Machiavellianism did not meet the Fornell–Larcker criterion, meaning that they do not appear distinct enough to be differentiated reliably. This apparent overlap is also reflected in a high correlation between the two latent variables (r = 0.76).
Although their HTMT ratio did not indicate a discriminant validity problem, the respective coefficients need to be interpreted very cautiously. Finally, a robustness check with an alternative estimator that uses only complete data showed no substantial differences in model fit and only minor deviations in path coefficients (see Table 3 and Supplemental Appendix: Output Files).
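The convergent and discriminant validity criteria used above reduce to simple formulas on standardized loadings and correlations. The sketch below is illustrative only: the composite reliability is an omega-type coefficient that assumes uncorrelated residuals (software estimates of McDonald’s ω may differ), the HTMT example uses a hypothetical item correlation matrix, and the Fornell–Larcker call plugs in psychopathy’s reported AVE (0.41), the lowest reported AVE of the remaining constructs (0.53) as a stand-in for Machiavellianism, and the reported latent correlation (r = 0.76).

```python
import math
from itertools import combinations


def _mean(values):
    values = list(values)
    return sum(values) / len(values)


def ave(loadings):
    """Average variance extracted: mean of the squared standardized loadings."""
    return _mean(lam * lam for lam in loadings)


def composite_reliability(loadings):
    """Omega-type reliability, assuming residual variances of 1 - loading**2."""
    s = sum(loadings)
    error = sum(1 - lam * lam for lam in loadings)
    return s * s / (s * s + error)


def fornell_larcker_ok(ave_a, ave_b, r_ab):
    """Both AVEs must exceed the squared latent correlation (shared variance)."""
    shared = r_ab ** 2
    return ave_a > shared and ave_b > shared


def htmt(r, items_a, items_b):
    """Heterotrait-monotrait ratio from an item correlation matrix r."""
    hetero = _mean(r[i][j] for i in items_a for j in items_b)
    mono_a = _mean(r[i][j] for i, j in combinations(items_a, 2))
    mono_b = _mean(r[i][j] for i, j in combinations(items_b, 2))
    return hetero / math.sqrt(mono_a * mono_b)


# Reported AVE for psychopathy, a stand-in AVE, and the reported latent r.
print(fornell_larcker_ok(0.41, 0.53, 0.76))  # False: 0.76**2 = 0.5776 exceeds both

# Hypothetical 6-item matrix: items 0-2 vs. 3-5, within r = .50, between r = .40.
r = [[1.0 if i == j else (0.5 if (i < 3) == (j < 3) else 0.4) for j in range(6)]
     for i in range(6)]
print(round(htmt(r, [0, 1, 2], [3, 4, 5]), 2))  # 0.8, below the 0.85 cutoff
```

The two criteria can disagree, as they did here: the Fornell–Larcker check compares latent shared variance with each construct’s AVE, whereas HTMT works from observed item correlations and only flags overlap when between-construct correlations approach within-construct ones.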

Zero-Order Correlations of Latent Predictor Variables Included in the Structural Equation Model.

Note: Bold indicates significant coefficients.

Overview of Structural Equation Model Results.

Note: Bold indicates significant coefficients. W1 = Wave 1, W2 = Wave 2. Gender identification coded as 1 = male and 2 = female. Education group coded as 1 = no college/university degree and 2 = with college/university degree; SE = Standard error.

Indicates differing results of significance testing for the robustness check where these paths turned significant (see Supplemental Appendix: Output Files).

Overview plot for structural equation modeling results.

Incivility Perpetration

After controlling for the autoregressive path, B = 0.16, SE = 0.06, β = .18, p = .007, results indicated that psychopathy, B = 0.27, SE = 0.13, β = .23, p = .041, need for social approval, B = 0.12, SE = 0.05, β = .12, p = .006, and recent victimization experience, B = 0.41, SE = 0.07, β = .44, p < .001, were significantly associated with incivility perpetration over time. In other words, participants whose answers suggested a profound lack of empathy and remorse (i.e., psychopathy) or a strong desire to please others and avoid rejection (i.e., need for social approval), and who had been on the receiving end of hateful communication during the last 4 weeks before W1 (i.e., recent victimization experience), engaged more frequently in incivility toward others on social media at W2. Accordingly, H1a, H3a, and H5a were supported. Conversely, the over-time associations with Machiavellianism, narcissism, identity centrality, and social media habits were not statistically significant (see Table 3). Thus, H2a, H4a, and H6a were not supported, and RQ1a yielded a null finding and remains inconclusive. Among the covariates, only age, B = −0.004, SE = 0.002, β = −.07, p = .025, and political leaning, B = 0.05, SE = 0.02, β = .07, p = .010, were significant, indicating that younger and more right-leaning participants were more likely to engage in uncivil communication during the last 4 weeks before W2. Neither gender identification (male vs. female) nor having completed a college or university degree made much of a difference (see Table 3).
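The coefficients above can be read with two standard relations: the Wald statistic z = B/SE, whose two-sided normal p-value tests whether a path differs from zero, and β = B × SD(x)/SD(y), which rescales an unstandardized slope into standard-deviation units. The sketch below illustrates both. Because the reported B and SE values are rounded to two decimals, recomputed p-values only approximate the reported ones; the standard deviations in the standardization example are hypothetical (for latent variables, they would be the model-implied latent SDs).

```python
import math


def wald_p(b: float, se: float) -> float:
    """Two-sided p-value for H0: coefficient = 0, using a normal reference."""
    z = b / se
    return math.erfc(abs(z) / math.sqrt(2))


def standardize(b: float, sd_x: float, sd_y: float) -> float:
    """Convert an unstandardized slope B into a standardized beta."""
    return b * sd_x / sd_y


# Autoregressive incivility path from the text (rounded B and SE).
print(round(wald_p(0.16, 0.06), 4))

# Hypothetical standard deviations, for illustration only.
print(standardize(0.5, sd_x=2.0, sd_y=4.0))
```

This also explains why B and β can rank paths differently: a predictor with a small B but a large spread relative to the outcome can still have a sizable standardized coefficient.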

Intolerance Perpetration

Concerning participants’ intolerance perpetration on social media during the last 4 weeks before W2, our analysis revealed that psychopathy, B = 0.25, SE = 0.10, β = .23, p = .010, Machiavellianism, B = −0.20, SE = 0.09, β = −.21, p = .024, need for social approval, B = 0.07, SE = 0.04, β = .08, p = .037, recent victimization experience, B = 0.29, SE = 0.06, β = .33, p < .001, and social media habits, B = 0.11, SE = 0.04, β = .13, p = .005, were significantly associated with it after controlling for the autoregressive path, B = 0.23, SE = 0.06, β = .26, p < .001. This means that participants who scored high on nonclinical psychopathy, strongly sought the social approval of others, had been targeted by digital hate before W1, and tended to post and share social media content automatically without much conscious thought (i.e., social media habits) were more likely to engage in intolerant communication on social media during the last 4 weeks before W2, whereas participants with a stronger tendency to manipulate and exploit others for their own gain (i.e., Machiavellianism) were less likely to do so. These results support H1b, H3b, H5b, and H6b but contradict H2b, which predicted a positive relationship. Narcissism and identity centrality were not significantly associated with intolerance perpetration (see Table 3); thus, RQ1b remains inconclusive and H4b is not supported. Among the covariates, only political leaning, B = 0.04, SE = 0.02, β = .06, p = .042, was significantly linked to intolerance perpetration, again indicating more engagement among more right-leaning participants (see Table 3).

Discussion

Digital hate perpetration has emerged as a pressing societal concern. Yet, our understanding of what drives different kinds of malicious social media communication remains limited. We addressed this gap by simultaneously investigating five pathways plausibly leading to online incivility (i.e., violations of interpersonal norms) and intolerance (i.e., violations of democratic norms; Rossini, 2022). Specifically, using a two-wave panel with a quota-based Austrian general population sample, we explored over-time relationships with (a) antisocial personality (operationalized via psychopathy, Machiavellianism, and narcissism), (b) prosocial personality (operationalized via need for social approval), (c) intergroup identity conflict (operationalized as trait-like, higher-order social identity centrality), (d) recent victimization experiences, and (e) social media habits. Results revealed that incivility and intolerance perpetration were both positively predicted by psychopathy, need for social approval, and recent victimization experiences. Additionally, engagement in intolerance was negatively associated with Machiavellianism and positively linked to social media habits. Guided by the GAM as an integrative theoretical framework that situates distinct nonepisodic drivers within a situationally relevant process model of aggressive thoughts and behaviors, the present study thus highlights the content-agnostic role of recent victimization experiences and of antisocial and prosocial personality traits, as well as the more content-specific role of social media habits, in motivating interpersonal aggression in digital realms.

Antisocial Personality Traits: Psychopathy as a Robust Driver Across Contents

Regarding antisocial personality traits, we found that (subclinical) psychopathy predicted both incivility and intolerance. Thus, participants who tend to lack impulse control, empathy, and remorse appeared to be more prone to digital hate perpetration. However, despite their widespread use worldwide (see Vize et al., 2018 for numbers on the English version alone), short-form operationalizations of psychopathy are notorious for their inadequate convergent validity (Knitter et al., 2025; Miller et al., 2012), a problem that also emerged in the present study. This suggests that even validated and frequently used short-form psychopathy items may elicit self-appraisals that are too heterogeneous to converge adequately on a well-defined latent construct, thereby limiting clear conceptual interpretation. In other words, our findings are based on loosely rather than closely related representations of (subclinical) psychopathy. Machiavellianism did not emerge as hypothesized; instead, it was negatively associated with intolerance, suggesting that stronger Machiavellian tendencies went along with less perpetration. Several reasons may explain this unexpected result. First, psychopathy and Machiavellianism were so highly correlated that they failed a key discriminant validity criterion, fueling existing concerns about their conceptual distinguishability (Miller et al., 2017). Although traits can be highly correlated and yet low in collinearity (Hamilton, 1987), their shared variance likely changed the sign of the regression coefficient (given their positive zero-order correlations) and increased the probability of inferential error (even though an additional exploratory analysis without psychopathy instead showed nonsignificant relationships).
Apart from that, it needs to be noted that by controlling for the shared variance between psychopathy and Machiavellianism, we partialed out their conceptual overlap (i.e., their socially aversive nature). This may leave the remaining Machiavellianism variance to reflect primarily a charming manipulativeness suited to reaching personal goals (see Jonason & Luévano, 2013), which would be incompatible with incivility and could explain the inhibition of intolerance. Machiavellianism may instead be more conducive to other phenomena, such as those facilitating short-term sexual exchanges, as seen in abusive sexting (Clancy et al., 2019). Additionally, Mungall et al. (2024) found that the planfulness dimension of Machiavellianism was linked to reduced online political incivility, suggesting that some facets may be more essential than others for understanding its role in digital hate perpetration. Lastly, narcissism failed to predict incivility and intolerance, in line with prior findings (Frischlich et al., 2021; Koban et al., 2018). A more nuanced measurement of vulnerable and grandiose narcissism, with the former manifesting in aggressive and the latter in defensive ways of masking self-perceived inadequacies (Carrotte & Anderson, 2019), might have provided clearer insights.
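The sign reversal discussed for Machiavellianism is a textbook consequence of entering two strongly correlated predictors together. With standardized variables and two predictors, the partial coefficients are β₁ = (r_y1 − r_y2·r_12)/(1 − r_12²) and β₂ = (r_y2 − r_y1·r_12)/(1 − r_12²), so a predictor with a positive zero-order correlation receives a negative partial coefficient whenever r_y2 < r_y1·r_12. In the sketch below, only r_12 = .76 is taken from the reported latent correlation; the correlations with the outcome are hypothetical, and the two-predictor formula ignores the other predictors in the full model. The accompanying variance inflation factor (about 2.4 here) also illustrates how a high correlation can coexist with collinearity that is modest by common rules of thumb (e.g., VIF cutoffs of 5 or 10).

```python
def standardized_betas(r_y1, r_y2, r_12):
    """Standardized partial slopes for two predictors, computed from correlations."""
    denom = 1.0 - r_12 ** 2
    beta_1 = (r_y1 - r_y2 * r_12) / denom
    beta_2 = (r_y2 - r_y1 * r_12) / denom
    return beta_1, beta_2


def vif_two_predictors(r_12):
    """Variance inflation factor for either predictor in a two-predictor model."""
    return 1.0 / (1.0 - r_12 ** 2)


# Hypothetical zero-order correlations with intolerance (both positive);
# only r_12 = .76 mirrors the reported psychopathy-Machiavellianism correlation.
beta_psy, beta_mach = standardized_betas(r_y1=0.40, r_y2=0.25, r_12=0.76)
print(round(beta_psy, 2), round(beta_mach, 2))  # positive and negative, respectively
print(round(vif_two_predictors(0.76), 2))
```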

Prosocial Personality Traits: Social Approval Matters (More) for Incivility

Concerning prosocial personality, results confirmed the recently emphasized role of social approval as a positive predictor of digital hate perpetration (Walther, 2024). Given that our trait-level operationalization involves a tendency to please others and follow social norms, it can be inferred that dispositional desires to fit in with certain audiences facilitate both incivility and, albeit to a weaker degree, intolerance, thus generalizing what has been observed in peer-driven digital hate phenomena like cyberbullying (Piccoli et al., 2020).

A plausible explanation for why intolerance perpetration appears to be less strongly driven by social approval needs than uncivil language may be that intolerant speech carries a greater risk of intense disapproval from others, at least in heterogeneous social media communities. Accordingly, it might be counterproductive for satisfying one’s approval needs outside of homogeneously intolerant groups. Put differently, if users were to weigh the potential risks and benefits of different ways of gaining social approval and avoiding social disapproval, intolerant speech might not seem worthwhile outside the right social environment. Alternatively, people may simply be more reluctant to express intolerant thoughts, even if only feigned for social acceptance, because doing so might violate their personal beliefs. Given that incivility is primarily a matter of tone rather than substance (Sydnor, 2018), it might produce less reactance. In other words, seeking social approval via social media may not trump all other needs, particularly not identity needs like upholding one’s core principles.

Consequently, hostility might be reduced considerably if communities disapproved of such behavior, either bottom-up via individual or collective action or top-down via moderation and platform management (Jiang et al., 2024). As communication shifts from in-person to online settings, people may increasingly seek social acceptance on social media, which may lead those craving social approval to form strong bonds with affirming communities (Walther, 2024), especially if they lack offline approval. Particularly with respect to intolerance, these dynamics raise concerns, as they may gradually lure individuals into radicalization (Burston, 2024).

Intergroup Identity Conflict: Identity Centrality versus Identity Threat

Our analysis of identity centrality revealed no significant effects on either incivility or intolerance. This null finding could be attributed to several factors. First, while social identity theory may suggest that strong centrality across social identities (i.e., trait-like identity centrality) is associated with perceptions of ingroup vulnerability and outgroup antagonism, it does not directly imply a perceived need to defend one’s identity and, more specifically, endorse attitudes conducive to spreading digital hate (Chua & Wilson, 2023). Thus, future research may benefit from measuring identity threat (Ma et al., 2023) rather than identity centrality given that it is closer to the presumably effective mechanism in question. Second, previous studies have indicated that high identity centrality could lead to greater acceptance of group-based stressors (Crane et al., 2018), potentially mitigating tendencies to engage in uncivil or intolerant behavior, as possibly offensive content by others might not be taken too seriously. Third, it is also possible that perpetrators’ online communities form around attributes other than gender, nationality, or sexual orientation (or more specific meanings, which we did not fully cover with our items). Such communities can create homogeneous identity bubbles where group-confirming behavior like digital hate perpetration is reinforced (Zych et al., 2023), akin to the role of identification with deviant peers in cyberbullying (Piccoli et al., 2020).

Recent Victimization Experience: Potential Victimization-Perpetration Cycles

In line with correlational evidence (Frischlich et al., 2021), recently experienced victimization positively predicted both incivility and intolerance perpetration, extending support for victim-perpetrator links to two-wave data. While our design may not allow for decisive confirmation of a transition from being targeted to targeting others (given the relatively high co-occurrence of victimization and perpetration in W1), it suggests that prior victimization may be uniquely associated with digital hate perpetration even when previous perpetration is controlled. Accordingly, future research should delve into underlying mechanisms to elucidate why some targets become perpetrators while others do not. Specifically, exploring social-cognitive and emotional processes, such as moral disengagement and revenge, could provide insights, as their relevance for victim-perpetrator cycles has been observed in other hateful phenomena (Wachs et al., 2022).

Social Media Habits: Intolerance May Come with Social Rewards, Incivility Not

Partially replicating Ceylan et al. (2023), we found that participants with firm habits of posting and sharing social media content more frequently engaged in intolerance but not in incivility perpetration. In other words, social media users who generally tend to disseminate messages and content automatically without much additional thought were not significantly more likely to state that they have used foul language and insults on social media; however, they were more inclined to post or share discriminatory and dehumanizing content. Given that habits are based on cue-driven activation and, unless inhibited, execution of internalized actions, these differential findings suggest a firmer (or less inhibitable) mental cue-behavior representation underlying intolerance compared to incivility.

Why this might be the case is subject to speculation. It could be that intolerance, unlike incivility, has yielded enough social rewards, whether in the form of visible attention (e.g., views, shares, or reposts) or feedback from others (e.g., reactions or follow-up commentary), for its dissemination to become thoroughly habitualized. In particular when it comes to habitual sharing, it might also be important to consider that much intolerant content is intentionally designed to be somewhat ambiguous and, thus, subtle (Schmid et al., 2024). Because of that, existing habits may not be inhibited as easily as for arguably more saliently expressed incivility. It needs to be highlighted, however, that it is unclear whether habitual posting or sharing of intolerant social media content reflects support of such expressions or merely serves to start or prompt debates about what is wrong with them. Alternatively, such behavior may simply result from not noticing the often more subtly expressed intolerance during reception. That is, habitual dissemination of intolerance is not necessarily grounded in harmful intentions but could also have had a prosocial original meaning. Exploring both the social rewards and the original intentions behind such social media habits (before they become fully habitualized) may be just as promising for future research as examining whether prosocial intentions ultimately lead to the desired (or rather undesired) consequences.

Limitations

Several limitations should be considered. First, while the two-wave panel design and autoregressive SEM may be appropriate for testing temporal predictions with advanced statistical control, they do not permit causal claims. Second, reliance on self-reports entails risks of response tendencies such as social desirability bias, particularly for sensitive topics like digital hate perpetration, as well as common method bias.

Third, while using short scales minimizes response fatigue and, by implication, panel mortality, it may reduce constructs to their conceptual core, sacrificing multidimensionality. Despite its popularity, the short-form measure of the dark triad proved particularly problematic in this respect.

Fourth, our operationalizations of incivility and intolerance perpetration relied on brief descriptions and aggregated frequency ratings for a period of 4 weeks. Alternative operationalizations, such as, on a smaller scale, presenting stimulus examples or, on a larger scale, using more tangible intensive longitudinal and/or objective web-tracking methods (see Maier et al., 2025), may yield different results but come with their own ethical challenges. Relatedly, participants’ engagement in incivility and intolerance correlated highly, indicating behavioral co-occurrence and partially explaining the similar findings for both outcomes.

Fifth, we did not ask whom participants had targeted, why they had engaged in such behavior, or why they themselves had presumably been victimized; instead, we focused on target- and intention-agnostic operationalizations. Nevertheless, we acknowledge that further specification could have implications for some of the tested mechanisms, particularly social identity, need for approval, and recently experienced victimization.

Sixth, we recruited a sample in Austria, a mid-sized Central European country whose sociopolitical circumstances resemble developments across and beyond Europe. These include highly polarized political discourse, especially concerning hate-prone issues such as immigration; growing political and discursive power of far-right actors and institutions; and broader wealth inequality and economic struggles. Nevertheless, single-country research is inherently limited in generalizability, which may be most plausible for regions with similar sociopolitical profiles (e.g., parts of Germany or, more generally, Central Europe) but quickly becomes questionable for less similar countries (particularly in the Global South).

Seventh, while the 2-month interval may be suitable for moderately stable behaviors and outcomes, it cannot capture short- and long-term dynamics underlying digital hate perpetration. Lastly, while predictors were carefully selected without claiming exhaustiveness, other variables, such as trait aggression or life history parameters (including less recent instances of victimization online and also offline), could also play significant roles.

Conclusion

Digital hate perpetration has emerged as a key challenge in digitized societies. Differentiating between uncivil and intolerant online speech, this study indicates shared drivers (i.e., psychopathy, need for social approval, and recent victimization experiences) but also notable differences in what may determine users’ engagement in such norm-violating communication. Recent victimization emerged as a particularly strong driver, especially for incivility perpetration, emphasizing the need to consider role fluidity and cyclical victimization-perpetration dynamics. Interestingly, digital hate perpetration was not solely driven by antisocial personality traits but, counterintuitively, also by participants’ desire for social approval, which opens up an alternative perspective for explaining (and counteracting) hostile speech. Intolerance was additionally predicted by habitual sharing and posting of social media content, suggesting either some sort of social rewards underlying the engagement in and dissemination of intolerant speech or an alarming effectiveness of common mainstreaming strategies that platform providers need to address immediately. Overall, this study contributes to digital hate perpetration scholarship by laying bare how (much) different roads lead to uncivil and intolerant online engagement, each warranting distinct attention in order to be countered effectively. While future research may need to dive deeper into how these mechanisms interact with social and environmental factors (i.e., meso- and macro-level conditions), this study provides essential insights for developing effective countermeasures and recommendations for stakeholders.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the European Research Council (ERC) as part of the ERC Advanced Grant project Digital Hate: Perpetrators, Audiences, and (Dis)Empowered Targets (DIGIHATE; Grant Agreement ID: 101055073).

Data Availability Statement

Supplemental Material

Supplemental material for this article is available online.