Abstract

This meta-analysis examines 24 experimental studies on the effects of deepfakes on credibility, emotions, and sharing intention, comprising 20,685 participants from 10 countries. The moderating effects of media literacy, control type, video topic, literacy type, gender, age, and country on individual responses were also analyzed. Effect sizes indicated that deepfake exposure elevated emotions. Media literacy moderated the effects of deepfake exposure, diminishing credibility and sharing intention. In the absence of media literacy, the effect of exposure on emotions was positive. The results suggest that critical media consumers with media literacy, depending on the topic and type of literacy, can mitigate the adverse effects of deepfakes.

In the generative artificial intelligence (AI) era, deepfakes have become ubiquitous and easy to create, and the public often falls for them. Deepfakes are defined as a form of disinformation created to purposively deceive recipients and influence their beliefs, attitudes, and behaviors (Hameleers et al., 2024). Because advanced technology makes them sophisticated and indistinguishable from real audio, images, and videos, deepfakes pose threats to human discourse, trustworthiness, and democracy (Diakopoulos & Johnson, 2021). From another perspective, deepfakes do not always have adverse implications. When used for prosocial purposes, they can convey authentic messages in specific settings to support learning (Eberl et al., 2022).

The effects of deepfakes on individual responses vary across conditions. Individual responses refer to differences between individuals in the changes (e.g., media use) that occur following a stimulus (e.g., exposure or experience; Hopkins, 2018). Related research has evaluated audience experiences with deepfakes in terms of political interest and news skepticism (Ahmed, 2021), information believability (Hameleers & Marquart, 2023), audience engagement (Lee & Shin, 2022), and mental health (Yu et al., 2021). However, these findings are inconsistent and vary with study conditions, which calls for a systematic review and meta-analysis to better understand the effects of deepfakes. Meta-analytical studies enhance theory development and clarification by analyzing divergent effects and relationships (Han & Balabanis, 2023). A systematic review and meta-analysis can offer a quantitative synthesis and a coherent framework for examining the social influences of deepfakes on human attitudes and behaviors.

This study aims to review the effects of deepfakes on receivers’ cognitions, emotions, and behavioral intentions through a systematic review and meta-analysis. Although a meta-analysis has examined the persuasive effects of AI relative to humans (Huang & Wang, 2023) and a meta-synthesis of qualitative studies on deepfakes has identified motivations, uncertainties, and repercussions (Vasist & Krishnan, 2023), the effects of deepfakes on individual responses in today’s versatile and diverse AI ecosystem remain unexamined. To focus on the effects of deepfakes on individual responses relative to other media modalities, this study selects experimental studies that report differences in cognitive, emotional, and behavioral responses to deepfake experiences. Considering the rapidly spreading usage, increasing research attention, and societal concerns regarding deepfake consumption, a summary and review of extant empirical findings can yield reliable conclusions about the relationship between deepfake exposure and individual responses. The results may offer constructive suggestions for deepfake researchers, educational institutions, and media professionals.

Literature Review

Exposure to Deepfakes and Individual Responses

Regarding the effects of deepfakes, multiple theories support the claim that deepfakes are a powerful technology influencing societal and individual responses (Vasist & Krishnan, 2023). Theoretical explanations of the relationship between deepfakes and individual responses operate at two levels: macro and micro. At the macro level, the social shaping of technology (SST) accounts for technological change as a social process. SST assumes that technology and society are mutually constitutive, evolving together interdependently; technologies are thus social products shaped by social factors. As deepfakes evolve, their rapid dissemination shapes society’s experiences and networks by altering individuals’ cognition, emotion, and behavior (Armstrong, 2025).

At the micro level, deepfakes directly construct perceptions of reality, invoking a realism heuristic (Sundar et al., 2021). According to dual-process models of information processing (Petty & Cacioppo, 1986) and the heuristic-systematic model (Chaiken & Trope, 1999), systematic processing is an effortful route to judgment in which message recipients focus on the arguments presented in the message. Heuristic processing is a less effortful route in which recipients attend to surface cues, such as the source or style of a message. Compared with text-based modalities, audiovisual deepfakes can stimulate more heuristic than systematic processing by bypassing careful scrutiny of the message content. Related to the dual-process models, the MAIN (modality, agency, interactivity, and navigability) model postulates that richer modalities with audiovisual cues trigger cognitive heuristics, resulting in higher perceived realism of conveyed messages (Sundar, 2008) and influencing cognition (Lee & Hameleers, 2024), emotional engagement (Ahmed & Chua, 2023), and sharing intention (Ahmed, 2021).

Another micro-level theory, priming, suggests that exposure to information stimuli can influence individuals’ subsequent evaluations (Tulving, 1983). When a stimulus activates tags in recipients’ minds, it triggers heuristic evaluations of closely related information. Exposure to deepfakes is assumed to create mental associations with malicious information, which can cloud recipients’ cognitive responses (Ahmed et al., 2023), emotions (Iacobucci et al., 2021), and intentions (Sharma et al., 2023). Combining priming and heuristic processing, truth default theory (TDT) posits that individuals passively presume that others are honest (Levine, 2014). TDT presumes that others are regarded as truthful unless message recipients systematically process the information cues and become conscious of the possibility of deceit. A truth default in information exchange activates priming and truth bias, an inclination to believe messages regardless of their truthfulness. In a truth-default state, people tend to follow the heuristic rather than the systematic route. This good faith in truth-default communication invokes heuristic processing that shapes truth-bias judgments (Hameleers, 2023; Street & Masip, 2015), cognition (Hameleers et al., 2024), and emotion and sharing intention (Akinyemi et al., 2024).

A cohesive concept emerges from integrating the above theories and models of deepfake communication: media richness, the media’s ability to transmit needed information (Daft & Lengel, 1986). Richer audiovisual modalities of disinformation tend to deceive recipients by activating the heuristic route and priming closely related associations, leading recipients to accept content through truth bias. Heuristic processing can bias systematic processing when information is ambiguous and open to different interpretations (Chaiken & Maheswaran, 1994). When fabricated information carries rich media features, individuals are likely to accept its honesty by default and heuristically, and are thus prone to deception. Richer media has been linked to information credibility, cognitive trust, emotion, and behavior, both positively (Malhotra & Ramalingam, 2025) and negatively (Chen et al., 2024).

Most studies on the effects of deepfakes are grounded in these theories, which suggest that exposure affects individual responses cognitively, affectively, or behaviorally (Lee & Hameleers, 2024; Lu & Chu, 2023). The current systematic review and meta-analysis assumes that media-rich audiovisual deepfakes affect information evaluations under various conditions. Through truth defaults and heuristics, the rich media format of deepfakes influences individual responses differently than other narrative modalities do. Accordingly, individual responses are classified into three prominent forms: cognitive, emotional, and behavioral.

Cognitive Responses

According to Weikmann et al. (2025), information heuristics influence cognitive responses to deepfakes, depending on stimulus conditions. Richer media can generate more engaged evaluations of deepfake exposure. Cognitive responses capture the extent to which individuals understand, learn, and know about stimuli. One study used a video with three conditions: (a) a misinformation accusation, (b) a disinformation accusation (deepfake), and (c) no fact-check label. Participants rated the deepfake condition as less credible and authentic than the misinformation condition and the no fact-check condition (Hameleers & Marquart, 2023). Lee and Shin (2022) found that participants exposed to deepfake news perceived higher source vividness than those who encountered fake news in text-only and text-photo formats. Increased source vividness enhanced the credibility of, and engagement intentions toward, the fake news. Hameleers et al. (2024) found that deepfakes containing blatant lies (outright falsehoods) were generally perceived as less credible, regardless of whether they concerned domestic or foreign political figures. In the same study, subtle distortions (mild manipulations) in deepfakes were often deemed more credible, particularly when they featured leaders with whom participants identified, felt connected to, or found relevant.

Health-related deepfakes can cause misperceptions. Deepfakes of health information have significantly increased misperceptions about cold water drinking and cancer (Lee & Hameleers, 2024) as well as the flu vaccine (Lee et al., 2024). A theoretical assumption holds that people develop counter-attitudes toward corrective information and reaffirm their belief in misinformation if the pre-exposure to misinformation remains in their memory, a phenomenon known as the boomerang effect (Tseng et al., 2022). However, in that study, an experiment testing exposure to text, image, and video deepfakes, followed by corrective information in the same modalities, found no boomerang effect after correction in any modality. Corrective information successfully reduced participants’ perceived credibility of, and potential action on, misinformation about coronavirus disease (COVID-19). These findings imply that while deepfake videos can be influential in spreading misinformation, corrective information can effectively reduce their impact on credibility.

The concept of information credibility encompasses perceived accuracy, reliability, validity, and objectivity (Metzger et al., 2003; Thon & Jucks, 2017). Regarding accuracy as a component of credibility, Ahmed and Chua (2023) found that perceived accuracy of Vladimir Putin’s controversial statements was higher among individuals exposed to video deepfakes than among those exposed to cheapfakes (crudely manipulated media made with low-cost tools and editing techniques). People with higher cognitive abilities were less likely to perceive deepfakes as accurate, regardless of format. Studies show that deepfakes are generally perceived as less credible than other forms of information (Clayton et al., 2020). In summary, deepfakes have a significant impact on individuals’ cognitive responses, including perceived credibility.

Emotional Responses

Emotional responses indicate how individuals react to situations or stimuli by expressing feelings (Niedenthal & Halberstadt, 1995). As deepfake content becomes increasingly realistic with AI-based technology, individuals can become emotionally engaged with it. For example, people’s engagement with deepfake human faces tends to be low, especially for positive emotional expressions such as smiling and for likability, suggesting that deepfake faces matter less than real faces (Eiserbeck et al., 2023). Deepfake resurrection narratives, in which deceased individuals are digitally recreated to deliver messages in public service announcements on issues such as drunk driving and domestic violence, elicited strong compassion and surprise (Lu & Chu, 2023). These narratives effectively raised awareness and promoted prosocial behaviors, supporting policy changes and activism. Facial expressiveness manipulated in deepfakes through gazing, nodding, and smiling led to more favorable evaluations (Renier et al., 2024). When deepfakes are created for social causes, they can positively shape individuals’ perceptions of the depicted person’s competence, warmth, and likability. Under microtargeted conditions, deepfake content can shape people’s attitudes. For instance, after deepfake exposure, highly religious Christians showed much more unfavorable attitudes toward politicians and political parties than the non-religious group (Dobber et al., 2021).

Eberl et al. (2022) found that students rated real and deepfake videos similarly, although ratings were slightly higher for the deepfake videos regarding the effects of physical attractiveness. Specifically, teachers who were more attractive in real videos received lower ratings than those who were less attractive in deepfake videos. Additionally, self-enhancement through deepfakes led to positive assessments of facial expression, body shape, and self-esteem (Wu et al., 2021). Overall, the effects of deepfakes on emotional responses appear to be positive.

Behavioral Responses

In related studies, researchers have found that individuals translate their experience of deepfake exposure into behavioral intention, defined as an individual’s readiness to act. For example, those trained in media literacy were less likely to intend to share deepfake videos (Hwang et al., 2021). Awareness of deepfakes as harmful applications can increase users’ ability to recognize them, which in turn reduces their sharing intention (Iacobucci et al., 2021). Those who recognize deepfakes develop lower susceptibility to believing willfully misleading claims of disinformation. These results suggest that society could defend against deepfakes through reasoned interventions.

Motivational factors, namely ideological incompatibility with a political party, brand hatred, and moral consciousness, affect the intention to share political deepfake videos (Sharma et al., 2023). In addition, users’ increased intention to verify political deepfake videos reduces their sharing intention. Thus, the effect of deepfake exposure on sharing intention depends on viewing conditions. In summary, deepfakes tend to have adverse effects on behaviors. Notably, recognizing deepfakes reduces sharing intention because individuals realize that such behavior can harm others.

Hypotheses and Research Questions

Effects of Deepfakes on Cognitive Responses

Research indicates that study participants rate deepfake content in political messages as less credible and authentic than other media modalities (Hameleers, 2024; Hameleers et al., 2022). When participants were forewarned about deepfakes through media literacy, their credibility evaluations of the information were lower than when they were not (Dobber et al., 2021). Moreover, deepfakes containing lies and detectable deception (Hameleers et al., 2024; Weikmann et al., 2025) were perceived as less credible than other modalities (Hameleers & Marquart, 2023). Following the review, a hypothesis is posed:

Effects of Deepfakes on Emotional Responses

From a prosocial perspective, the effects of deepfakes on emotions are relatively positive. Deepfakes have elicited compassion in resurrection narratives (Lu & Chu, 2023), satisfaction (Eberl et al., 2022), and warmth (Lee & Shin, 2022). Vividness was higher in the deepfake group than in the control conditions (Hwang et al., 2021). This meta-analysis examined affective evaluations of deepfake exposure as emotional responses, including empathy, immersion, vividness, surprise, compassion, impression, warmth, identification, likability, open-mindedness, enthusiasm, and satisfaction. Drawing on the review, this study proposes the following hypothesis:

Effects of Deepfakes on Behavioral Responses

Available studies on the sharing intention of deepfake videos primarily focus on the dissemination of political information (Ahmed, 2022, 2023). Fear of missing out, cognitive abilities, prior information to recognize the video’s artificiality, and labels on deepfakes influence sharing intentions (Soto-Sanfiel & Saha, 2024). Experiencing deepfakes discourages individuals from sharing information with others (Hwang et al., 2021; Iacobucci et al., 2021). Viewers of deepfakes tend to exhibit a reduced intention to share content. The hypothesis reflecting this prediction is:

Moderators for the Effects of Deepfakes on Individual Responses

As moderators, this meta-analysis examined how differences in the characteristics of selected studies explained heterogeneity in the effects of deepfake exposure on individual responses: perceived credibility, emotional responses, and sharing intention. Most deepfake studies either include or omit a media literacy condition, with media literacy defined as the ability to critically analyze media content to determine its personal and social outcomes (Ahmed, 2021; Hameleers, 2024). In this analysis, media literacy refers to literacy related to disinformation. Given the role of media literacy in shaping individual responses, the literacy condition is likely to moderate the effects of deepfake exposure. Deepfake information is viewed as less credible when accompanied by media literacy than when it is not (Hameleers & Marquart, 2023; Hwang et al., 2021).

Varying moderating conditions regarding media literacy determine the effects. For example, participants who were indirectly led to infer that they were viewing a deepfake, because a known politician voiced apparently incorrect information, perceived it as less credible than the same information in textual format (Hameleers, 2024). When participants were not informed of the deepfake condition, they tended to detect it and evaluate it accordingly (Hameleers et al., 2022). A false tag (a label stating that the video is false) on deepfake news reduced news engagement among those who perceived the video as highly vivid. In the same study, political ideology and media literacy moderated how participants reacted to deepfakes. Those with higher media literacy were more likely to critically assess and reject misleading content, regardless of its source (Hameleers et al., 2024).

For emotional responses, media literacy moderated the influence of exposure on positive outcomes. In studies without media literacy, some deepfakes were viewed as less emotionally engaging than other modalities (Eberl et al., 2022). In studies with media literacy, exposure had positive effects on attractiveness and satisfaction (Wu et al., 2021).

Realizing through literacy training that a video narrative is a fabricated deepfake moderates exposure effects, reducing sharing intention (Ahmed, 2023). Media literacy activates cognitive abilities and self-regulation, which lower sharing tendencies (Ahmed, 2022). A false tag, as a form of media literacy, reduced individuals’ intentions to engage with disinformation news (Lee & Shin, 2022). Based on these findings, this study poses the following hypotheses.

Meta-analyses of media effects have analyzed types of control groups as moderators through subgroup differences (Siew et al., 2023). Depending on the type of control group, such as video or text, estimated media effects can carry distinct implications. Deepfake video topics may also moderate the relationship between exposure and outcomes. For example, media content moderators have been shown to affect emotional responses on a digital health platform (Petrakaki & Kornelakis, 2025). Content literacy (about topics presented in the media message) increased skepticism and critical understanding of media portrayals (Jeong et al., 2012).

Beyond the presence or absence of media literacy, the type of literacy or the level of experience can make a difference (Canet & Sánchez-Castillo, 2024). Even when a pre-exposure warning, as a form of media literacy, informed participants that a magician performed the psychic demonstration without real psychic abilities, their beliefs in such abilities still increased significantly (Kuhn et al., 2023). However, in another experiment, participants were shown how the psychic demonstration was staged after they had witnessed it, and this debunking significantly reduced their beliefs in psychic abilities. These findings suggest that clear explanations of how mis(dis)information is created can be a more powerful tool than a simple pre-exposure warning in mitigating its impact (Shin & Lee, 2022). Therefore, this study takes an additional step by categorizing media literacy moderators into types according to their complexity (Huang et al., 2024). To this end, treating experimental settings (control group types, video topics, and literacy types) as moderators, the current study asks the following research question.

Prior research on deepfake effects has used gender and age as potential moderators, as both have been associated with information evaluation and social outcomes (Renier et al., 2024). In addition, given cultural differences in individual responses to deepfakes across countries, the country where participants are sampled may affect the magnitude of the effects of deepfake exposure on individual responses. Prior research has used national differences as a moderator of the effects of media exposure on social outcomes (Chu et al., 2022). Based on these considerations, the following research question is asked:

Method

Search Strategy

Given the nature of deepfakes as a media modality and their public availability, all studies included in the analysis were experimental in design. In these experiments, participants were exposed to various media modalities (e.g., control, text, image, and audiovisual deepfakes) and responded to the experience. Following the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines (Moher et al., 2010; Page et al., 2021), this study searched for experimental studies on deepfake effects on individual responses using a 27-item checklist and a four-phase flow diagram (see Appendix for complete search strategies). The database search took place on October 23, 2024. With library service assistance, the researchers searched the following databases with no date restrictions: Association for Computing Machinery (ACM) Digital Library, Communication & Mass Media Complete, Academic Search Complete, ProQuest, PsycINFO, PubMed, Sage Communication Journals, and Web of Science. The following search terms were used: (“deepfake” OR “deep fake” OR “deepfakes” OR “deep fakes”) AND “experiment” AND “effect.”

Eligibility Criteria

Two researchers reviewed each study to determine whether it met the eligibility criteria. Studies were included if they met the following criteria: (a) an experimental design comparing treatment groups (deepfake) with control groups (no deepfake) on individual responses, (b) a measure of perceived credibility, emotional responses, and sharing intention, and (c) means and standard deviations available for effect size calculation. Studies were excluded if they (a) did not meet all the inclusion criteria, (b) were experimental studies that did not include baseline data for deepfake exposure and individual response outcomes, (c) did not measure deepfake video effects, or (d) were not written in English. Search results were limited with the following filters: “academic journals,” “research articles,” “peer-reviewed journals,” “full texts,” and “all dates.” As this analysis focused on primary research results, books and chapters were excluded.

Study Screening

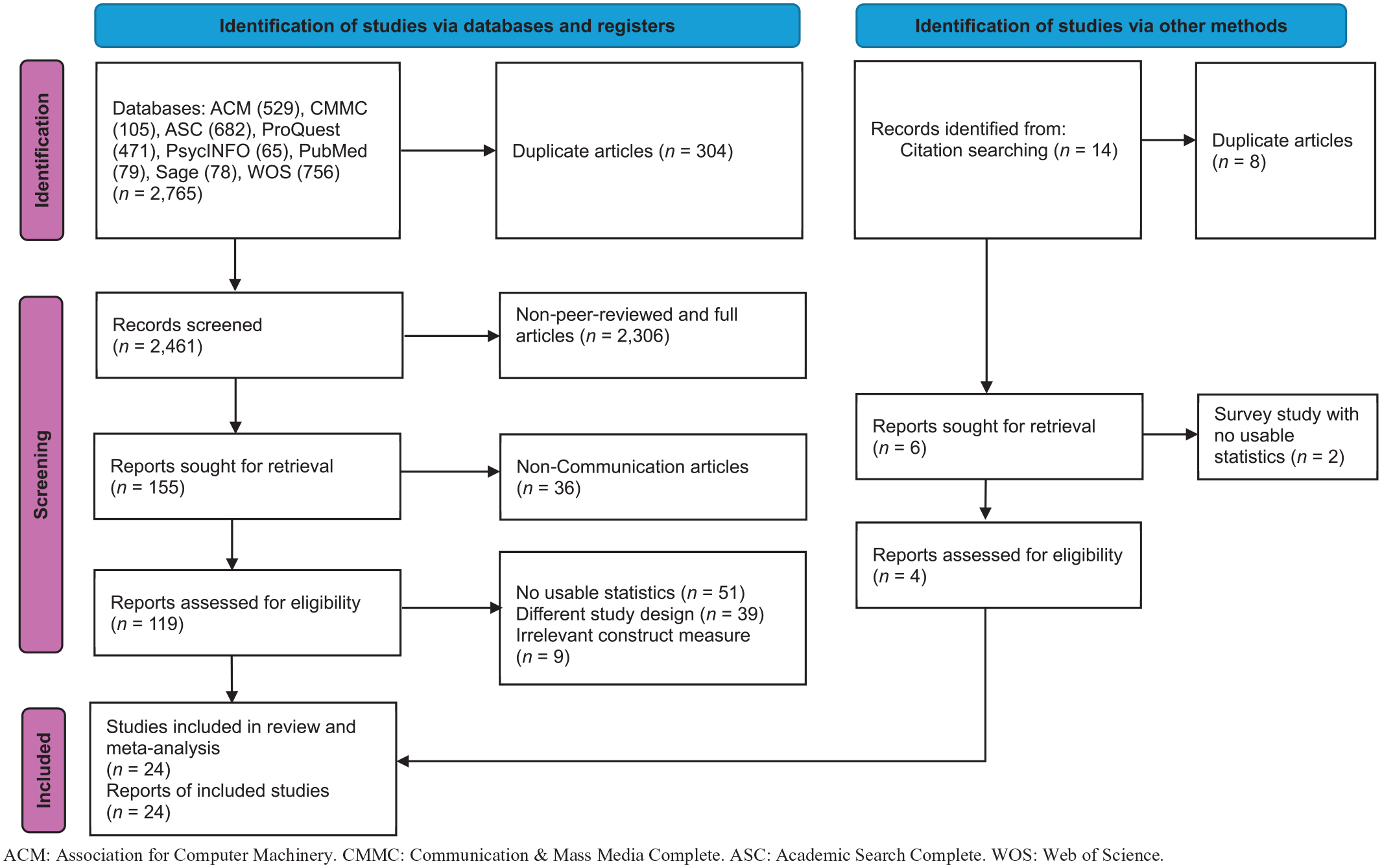

The PRISMA flow diagram for this study is presented in Figure 1, illustrating the review and exclusion process. Two reviewers systematically reviewed the studies obtained from all databases and reached a consensus on the final list of studies for meta-analysis. Of the initial search records (N = 2,765), 304 duplicates were removed, leaving 2,461 studies for title and abstract review. The two reviewers screened the title and abstract of each study and, based on the eligibility criteria, either excluded it as irrelevant or advanced it to full-text review. In cases of disagreement, further discussion followed until consensus was reached, and the two reviewers continued to clarify the inclusion and exclusion criteria throughout. The researchers contacted the authors of some deepfake experimental studies that did not report standard deviations to request this information; only studies whose authors responded were included.

PRISMA Flow Diagram of Study Selection Process.

Data Extraction and Coding

Full-text articles were obtained for each study through downloads or library requests. A total of 119 studies were included in the full-text review. The two reviewers read the full-text articles to verify the studies’ eligibility for analysis. In particular, studies were excluded if they did not examine deepfake videos (i.e., they measured only mis(dis)information). When a study did not specify a control condition, the text-only, authentic information, low-definition deepfake, or cheapfake group was used as the control group, as these served as reference groups for comparison with deepfakes. In the final process, with the addition of four studies identified through citation searching, 24 studies met the inclusion criteria and were selected for data extraction and coding. The two coders cross-checked the coding of each study’s statistical data, which were compiled into a CSV (comma-separated values) file.

Individual Response Measures

Experimental and control conditions captured participants’ exposure to deepfake videos (experimental) or to other modalities that the studies set as the control condition. Participants’ responses in post-experimental surveys were recorded as means and standard deviations. The individual response variables assessed were perceived credibility, emotional responses, and sharing intention. Perceived credibility was measured as the degree of agreement that the information was trustworthy and accurate, ranging from strongly disagree to strongly agree (Hameleers & Marquart, 2023). Emotional responses were overall assessments of the deepfake video for empathy, immersion, vividness, surprise, compassion, impression, warmth, identification, likability, open-mindedness, enthusiasm, and satisfaction, ranging from does not apply at all to fully applies (Eberl et al., 2022). Sharing intention measured the likelihood that participants would share the video on social media or other channels, ranging from not at all likely to very likely (Ahmed & Chua, 2023).

Moderators

For media literacy, studies were coded into two subgroups: media literacy present and absent. If a study provided media literacy training (e.g., prewarnings, deepfake indications, or debriefs about deepfakes), it was coded as present; studies without such training were coded as absent. The type of control group was coded into three categories: text, audio, and video. News articles are an example of a text control group. When the control group received only an audio story, it was coded as audio. Authentic, cheapfake, and low-definition deepfake videos were coded as video control groups. Deepfake video topics were coded as entertainment (e.g., Kim Kardashian), commercial (e.g., Mark Zuckerberg for business), education (e.g., teachers), politics (e.g., politicians), health (e.g., vaccines), or persona (an AI-generated deepfake person). The type of literacy was coded into three categories: none, simple warning, and detailed correction. Studies with no literacy were coded as none, studies that provided a simple sign (e.g., this information is false) were coded as simple warning, and studies with detailed correction information (i.e., longer explanations of the deepfake video or instructions) were coded as detailed correction. Gender was operationalized as the percentage of female participants, and age as the mean age of the sample. Country was coded as either Western or Eastern: North American and European countries were coded as Western, and all others as Eastern.
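The coding rules above can be expressed as a small lookup. The following Python sketch is illustrative only: the function names are hypothetical, and the country sets are deliberately incomplete (they list the countries named in this review plus a few assumed examples), so any sampled country missing from them, including an unlisted European one, would fall through to Eastern.

```python
# Hypothetical, incomplete lookup sets: the review states only that North American
# and European countries were coded as Western and all others as Eastern.
NORTH_AMERICA = {"United States", "Canada"}
EUROPE = {"Netherlands", "Germany", "United Kingdom"}


def code_country(country: str) -> str:
    """Return 'Western' for North American or European samples, else 'Eastern'."""
    return "Western" if country in NORTH_AMERICA | EUROPE else "Eastern"


def code_media_literacy(has_training: bool) -> str:
    """Two-level media literacy moderator: present vs. absent."""
    return "present" if has_training else "absent"


def code_literacy_type(has_training: bool, detailed: bool) -> str:
    """Three-level literacy type: none, simple warning, or detailed correction."""
    if not has_training:
        return "none"
    return "detailed correction" if detailed else "simple warning"


# A hypothetical study sampled in China that showed a one-line "false" label
print(code_country("China"), code_media_literacy(True), code_literacy_type(True, False))
```

Keeping each moderator in its own function mirrors the separate subgroup analyses, so a coding decision for one moderator cannot silently change another.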

Data Analysis: Effect Size Calculation

Statistical analyses, including effect size calculation, assessment of publication bias, examination of heterogeneity, and moderator analyses, were performed using the R package “metafor.” Subgroup analyses were conducted with the R package “meta.” Effect sizes (Hedges’ g) were calculated from the means, standard deviations, and sample sizes of the experimental and control groups. Because all outcome measures were continuous variables, effects were expressed as mean differences (Hedges’ g). All effect sizes are reported with 95% confidence intervals (CIs) from a random effects model, which assumes that true effects vary across studies and therefore yields more generalizable results than a fixed effects model. Hedges’ g is related to Cohen’s d and can be interpreted using the same conventions (Lakens, 2013): values of 0.2, 0.5, and 0.8 or above indicate small, medium, and large effects, respectively.
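To make the effect size step concrete, here is a minimal Python sketch of Hedges’ g computed from group means, standard deviations, and sample sizes, following the textbook formulas in Borenstein et al. (2009). It uses the common approximation J = 1 − 3/(4df − 1) for the small-sample correction; metafor’s escalc() may use an exact correction and a different variance estimator, so values can differ in later decimals. The function name and the example numbers are hypothetical.

```python
import math


def hedges_g(m1, sd1, n1, m2, sd2, n2):
    """Return (g, variance of g) for experimental group 1 vs. control group 2."""
    df = n1 + n2 - 2
    # Pooled within-group standard deviation
    sp = math.sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df)
    d = (m1 - m2) / sp  # Cohen's d
    j = 1 - 3 / (4 * df - 1)  # approximate small-sample correction factor J
    var_d = (n1 + n2) / (n1 * n2) + d ** 2 / (2 * (n1 + n2))
    return j * d, j ** 2 * var_d


# Hypothetical deepfake vs. control means on a credibility scale
g, var_g = hedges_g(3.6, 1.2, 100, 3.2, 1.1, 100)
print(round(g, 3), round(var_g, 4))  # g is about 0.35, a small-to-medium effect
```

The correction factor J shrinks d slightly toward zero; with the 200 participants in this example the adjustment is under 0.5%, but it matters more for the small samples common in experiments.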

Risk of bias was assessed through intercoder consensus as part of the study quality assessment. Heterogeneity between studies was examined with the Q statistic and I² and further explored using subgroup analyses. A significant Q suggests that the effect sizes are heterogeneous, as does an I² exceeding 50%. The differences between endpoint and baseline data were calculated as the effect size in the meta-analysis. Publication bias was assessed by examining funnel plots and Egger’s regression test. Publication bias is unlikely when a funnel plot is symmetric and Egger’s test is nonsignificant (Borenstein et al., 2009).
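The pooling, heterogeneity, and publication bias statistics described above can be illustrated with the following minimal pure-Python sketch. It assumes the DerSimonian-Laird estimator of the between-study variance (τ²); metafor’s rma() defaults to restricted maximum likelihood (REML), so its estimates may differ. Q, I², and the classic Egger regression (standardized effect regressed on precision) follow their textbook definitions, the p-value for the pooled estimate uses a normal approximation, and the function names are hypothetical.

```python
import math


def random_effects(g, v):
    """DerSimonian-Laird random effects pooling of effect sizes g with variances v."""
    k = len(g)
    w = [1 / vi for vi in v]  # inverse-variance (fixed effect) weights
    sw = sum(w)
    g_fe = sum(wi * gi for wi, gi in zip(w, g)) / sw
    q = sum(wi * (gi - g_fe) ** 2 for wi, gi in zip(w, g))  # Cochran's Q
    df = k - 1
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - df) / c) if k > 1 else 0.0  # between-study variance
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0  # I^2 in percent
    w_re = [1 / (vi + tau2) for vi in v]  # random effects weights
    est = sum(wi * gi for wi, gi in zip(w_re, g)) / sum(w_re)
    se = math.sqrt(1 / sum(w_re))
    z = est / se
    return {
        "estimate": est,
        "ci": (est - 1.96 * se, est + 1.96 * se),
        "p": math.erfc(abs(z) / math.sqrt(2)),  # two-sided, normal approximation
        "Q": q, "df": df, "tau2": tau2, "I2": i2,
    }


def egger_test(g, v):
    """Classic Egger regression of g/se on 1/se; needs at least 3 studies.

    Returns (intercept, SE of intercept, t); compare t with a t distribution
    on k - 2 degrees of freedom. An intercept far from 0 suggests asymmetry.
    """
    se = [math.sqrt(vi) for vi in v]
    y = [gi / si for gi, si in zip(g, se)]  # standardized effects
    x = [1 / si for si in se]  # precision
    n = len(y)
    xm, ym = sum(x) / n, sum(y) / n
    sxx = sum((xi - xm) ** 2 for xi in x)
    slope = sum((xi - xm) * (yi - ym) for xi, yi in zip(x, y)) / sxx
    intercept = ym - slope * xm
    resid = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    s2 = sum(r ** 2 for r in resid) / (n - 2)  # residual variance
    se_int = math.sqrt(s2 * (1 / n + xm ** 2 / sxx))
    return intercept, se_int, intercept / se_int


# Hypothetical effect sizes and variances for three studies
print(random_effects([0.1, 0.5, 0.9], [0.01, 0.01, 0.01]))
```

In this hypothetical example Q = 32 on 2 degrees of freedom and I² is about 94%, which would count as heterogeneous under the 50% rule above.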

To assess whether moderators affected the relationship between deepfake exposure and individual responses, subgroup analyses were conducted for the categorical moderators (i.e., media literacy, control group type, video topic, literacy type, and country), and random effects meta-regressions were conducted for the continuous moderators (i.e., gender and age). Studies that did not report the individual responses of interest were excluded from the corresponding moderator analysis.
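A subgroup comparison of this kind can be sketched in Python as follows: pool each subgroup separately with a random effects model, then compute a between-subgroup Q from the subgroup estimates and compare it with a chi-square distribution on (number of subgroups − 1) degrees of freedom. This is a simplified illustration that assumes DerSimonian-Laird pooling with a separate τ² per subgroup; the meta package offers other settings, the helper names are hypothetical, and meta-regression for continuous moderators such as age is not shown.

```python
import math
from collections import defaultdict


def dl_pool(g, v):
    """Return (estimate, variance) from DerSimonian-Laird random effects pooling."""
    k = len(g)
    w = [1 / vi for vi in v]
    sw = sum(w)
    g_fe = sum(wi * gi for wi, gi in zip(w, g)) / sw
    q = sum(wi * (gi - g_fe) ** 2 for wi, gi in zip(w, g))
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - (k - 1)) / c) if k > 1 else 0.0
    w_re = [1 / (vi + tau2) for vi in v]
    return sum(wi * gi for wi, gi in zip(w_re, g)) / sum(w_re), 1 / sum(w_re)


def subgroup_test(g, v, labels):
    """Pool each subgroup, then test whether the subgroup estimates differ."""
    groups = defaultdict(lambda: ([], []))
    for gi, vi, lab in zip(g, v, labels):
        groups[lab][0].append(gi)
        groups[lab][1].append(vi)
    pooled = {lab: dl_pool(gs, vs) for lab, (gs, vs) in groups.items()}
    weights = {lab: 1 / var for lab, (_, var) in pooled.items()}
    overall = sum(weights[lab] * est for lab, (est, _) in pooled.items())
    overall /= sum(weights.values())
    q_between = sum(weights[lab] * (est - overall) ** 2
                    for lab, (est, _) in pooled.items())
    df = len(pooled) - 1
    # Chi-square tail probability; closed form via erfc only when df = 1
    p = math.erfc(math.sqrt(q_between / 2)) if df == 1 else None
    return pooled, q_between, df, p


# Hypothetical studies split by a two-level media literacy moderator
print(subgroup_test([0.2, 0.25, 0.8, 0.7], [0.01, 0.02, 0.01, 0.02],
                    ["present", "present", "absent", "absent"]))
```

A significant between-subgroup Q indicates that the moderator (here, media literacy) accounts for part of the heterogeneity in effect sizes.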

Results

Study Characteristics and Assessment of Risk of Bias

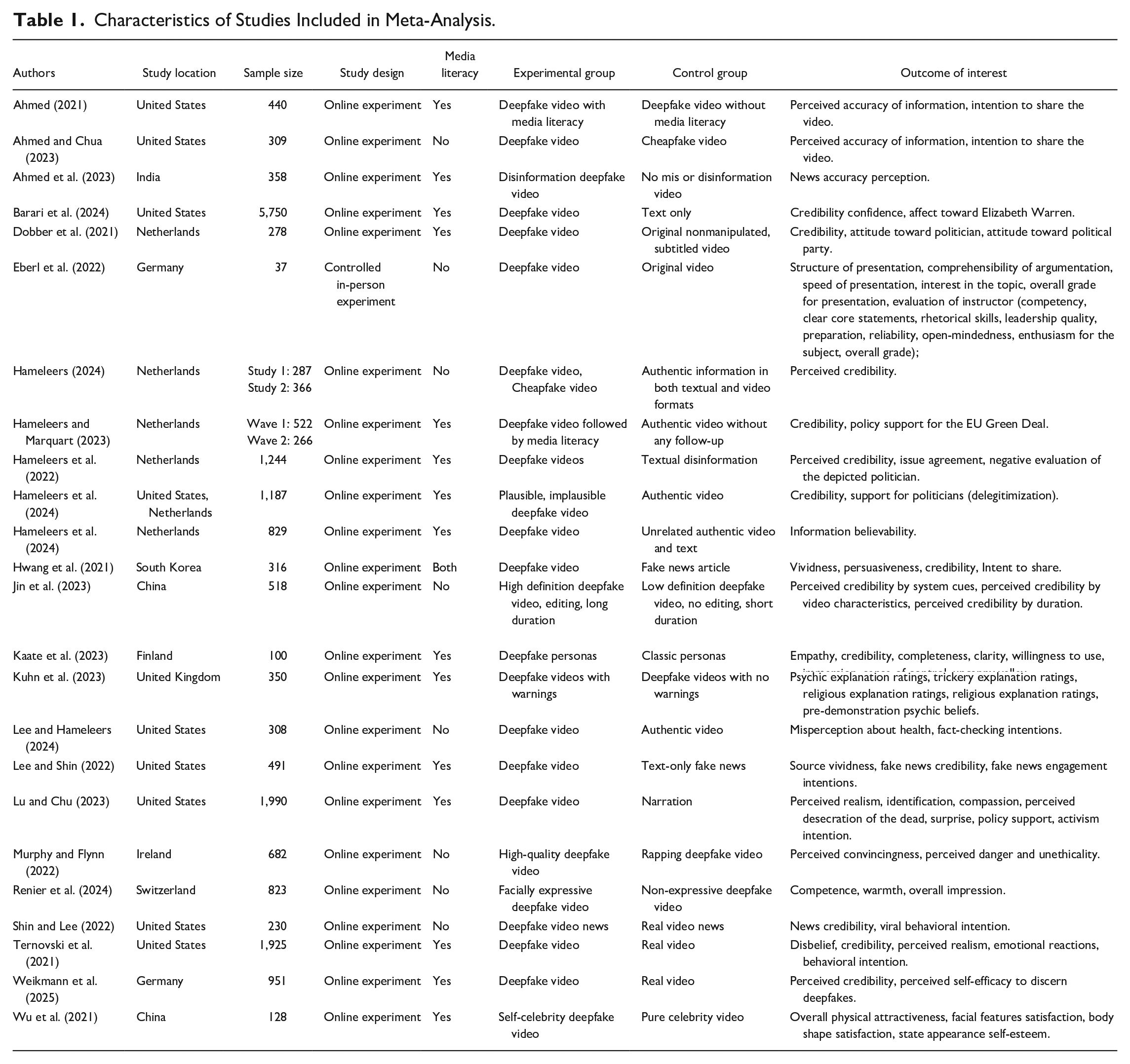

Twenty-four studies were included in the final analyses, totaling 20,685 participants (Table 1). The largest number of studies was conducted in the United States (n = 8, 33.33%), followed by the Netherlands (n = 5, 20.83%), Germany (n = 2, 8.33%), and China (n = 2, 8.33%). Fifteen studies (62.50%) provided participants with media literacy, eight (33.33%) did not provide media literacy before the experiments, and one (4.17%) included both conditions. Female participants made up 59.3% of the sample, and the mean age was 31.4 years. Twenty studies (83.3%) examined participants from Western countries and four (16.7%) from Eastern countries.

Table 1. Characteristics of Studies Included in the Meta-Analysis.

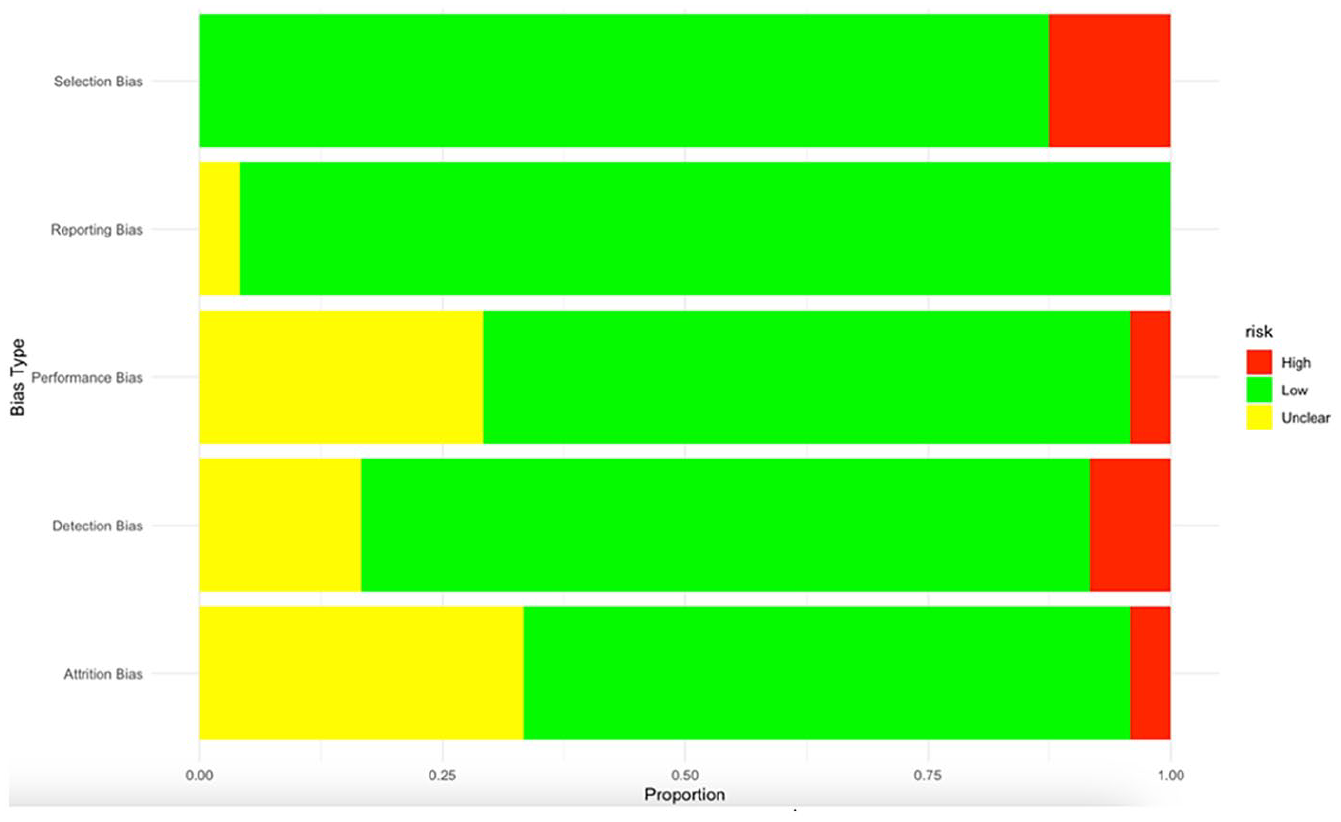

The quality of the selected studies was assessed using a risk of bias analysis (Shuster, 2011), in which each study was judged as having low, high, or unclear risk of bias in five domains: (a) selection bias, (b) reporting bias, (c) performance bias, (d) detection bias, and (e) attrition bias. The two coders cross-checked the entered data for reliability. After discussion, Cohen’s kappa was .83 for selection bias, .91 for reporting bias, .91 for performance bias, .89 for detection bias, and 1.00 for attrition bias, with an average of .908, indicating acceptable reliability.
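As an illustration of the reliability statistic, the Python sketch below computes Cohen’s kappa, κ = (p_o − p_e)/(1 − p_e), for two coders’ categorical risk-of-bias judgments, where p_o is the observed agreement and p_e is the agreement expected by chance from each coder’s marginal frequencies. The ratings are hypothetical; the last lines only reproduce the average of the five domain-level kappas reported above.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders rating the same items on a categorical scale."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must rate the same, non-empty set of items")
    n = len(coder_a)
    p_o = sum(a == b for a, b in zip(coder_a, coder_b)) / n  # observed agreement
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    categories = set(freq_a) | set(freq_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in categories) / n ** 2  # chance agreement
    if p_e == 1:  # both coders used one identical category throughout
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Hypothetical judgments (low / unclear / high) for ten studies in one domain
coder_1 = ["low"] * 7 + ["unclear"] * 2 + ["high"]
coder_2 = ["low"] * 6 + ["unclear"] * 3 + ["high"]
print(round(cohens_kappa(coder_1, coder_2), 3))

# Average of the five domain-level kappas reported in the text
reported = [0.83, 0.91, 0.91, 0.89, 1.00]
print(round(sum(reported) / len(reported), 3))
```

Because kappa discounts chance agreement, it is lower than raw percentage agreement: in this example the coders agree on 90% of studies, but kappa is about .80.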

Most studies had low selection bias (87.5%), indicating that participants were representative of the target population. Nearly all studies had low reporting bias (95.3%), reporting all measured outcomes and the results of tested hypotheses. Several studies (29.1%) were unclear on performance bias because they did not provide detailed information about assignment to groups. Some studies were unclear regarding attrition bias (33.3%) because they did not report dropout rates or address missing data (Figure 2).

Figure 2. Risk of Bias Graph.

Effect Size Estimates

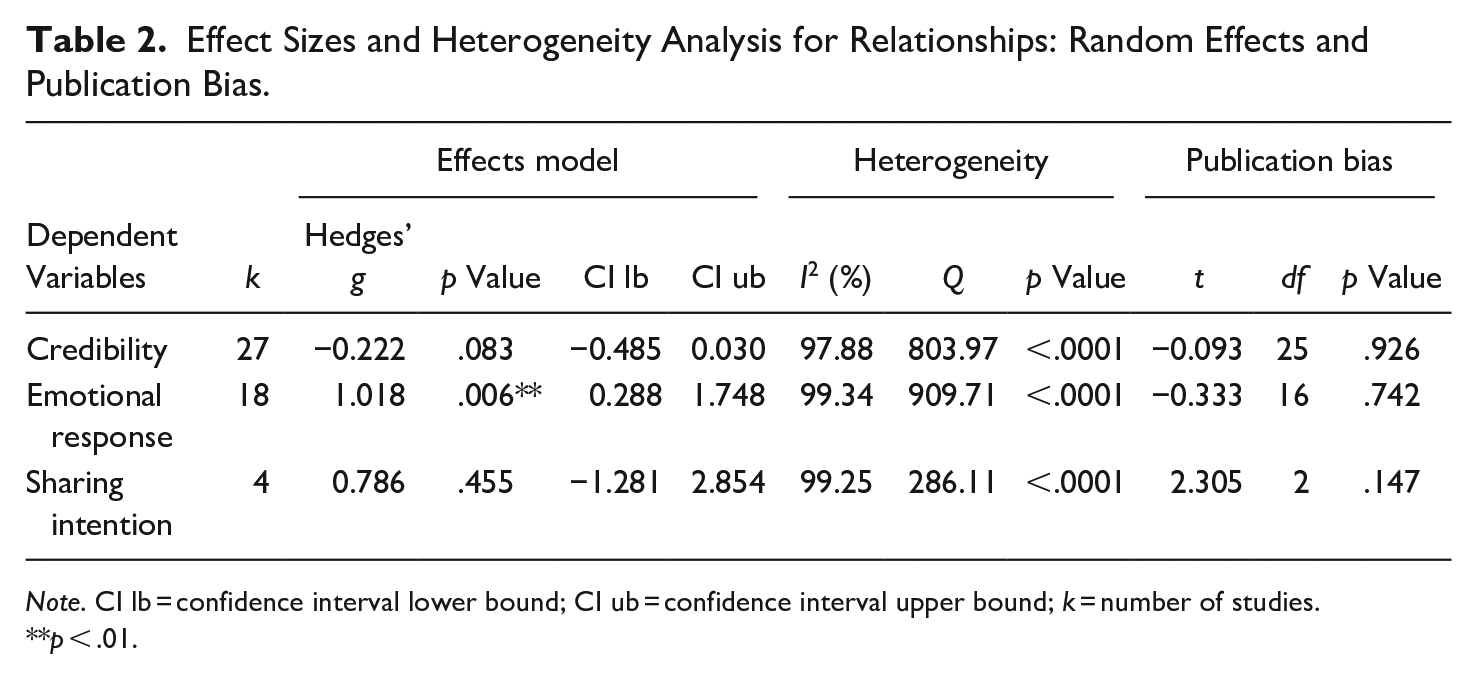

A meta-analysis was conducted for individual response indicators: perceived credibility, emotional responses, and sharing intention (Table 2).

Table 2. Effect Sizes and Heterogeneity Analysis for Relationships: Random Effects and Publication Bias.

Note. CI lb = confidence interval lower bound; CI ub = confidence interval upper bound; k = number of studies.

**p < .01.

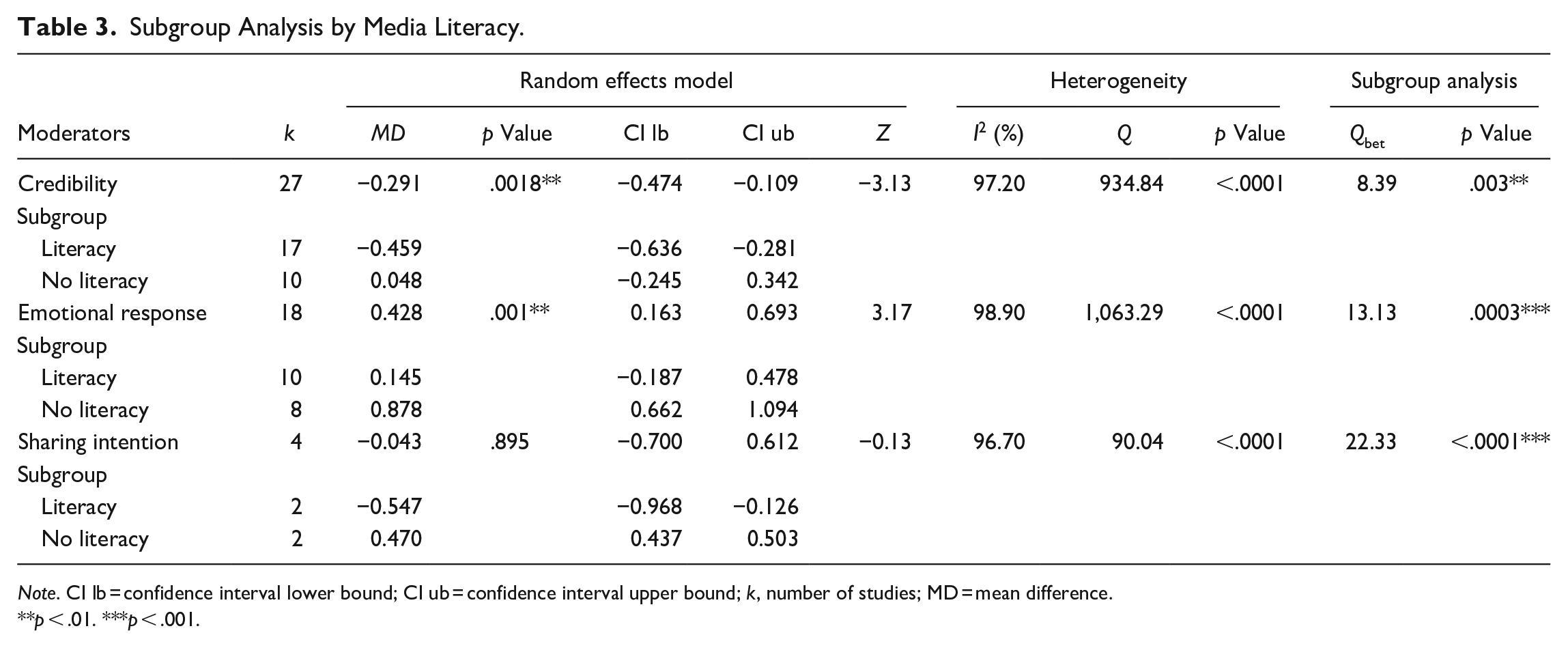

Moderator Analysis

Table 3. Subgroup Analysis by Media Literacy.

Note. CI lb = confidence interval lower bound; CI ub = confidence interval upper bound; k = number of studies; MD = mean difference.

**p < .01. ***p < .001.

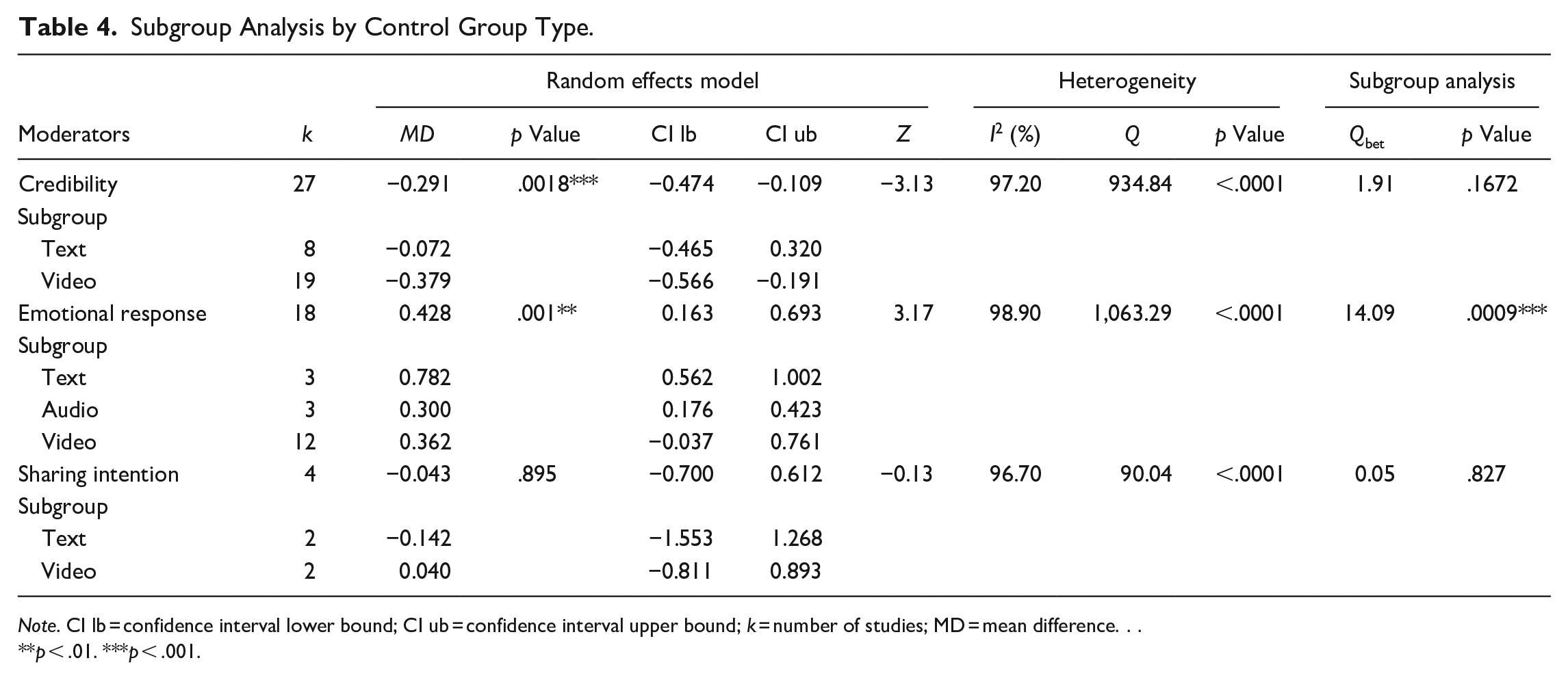

Table 4. Subgroup Analysis by Control Group Type.

Note. CI lb = confidence interval lower bound; CI ub = confidence interval upper bound; k = number of studies; MD = mean difference.

**p < .01. ***p < .001.

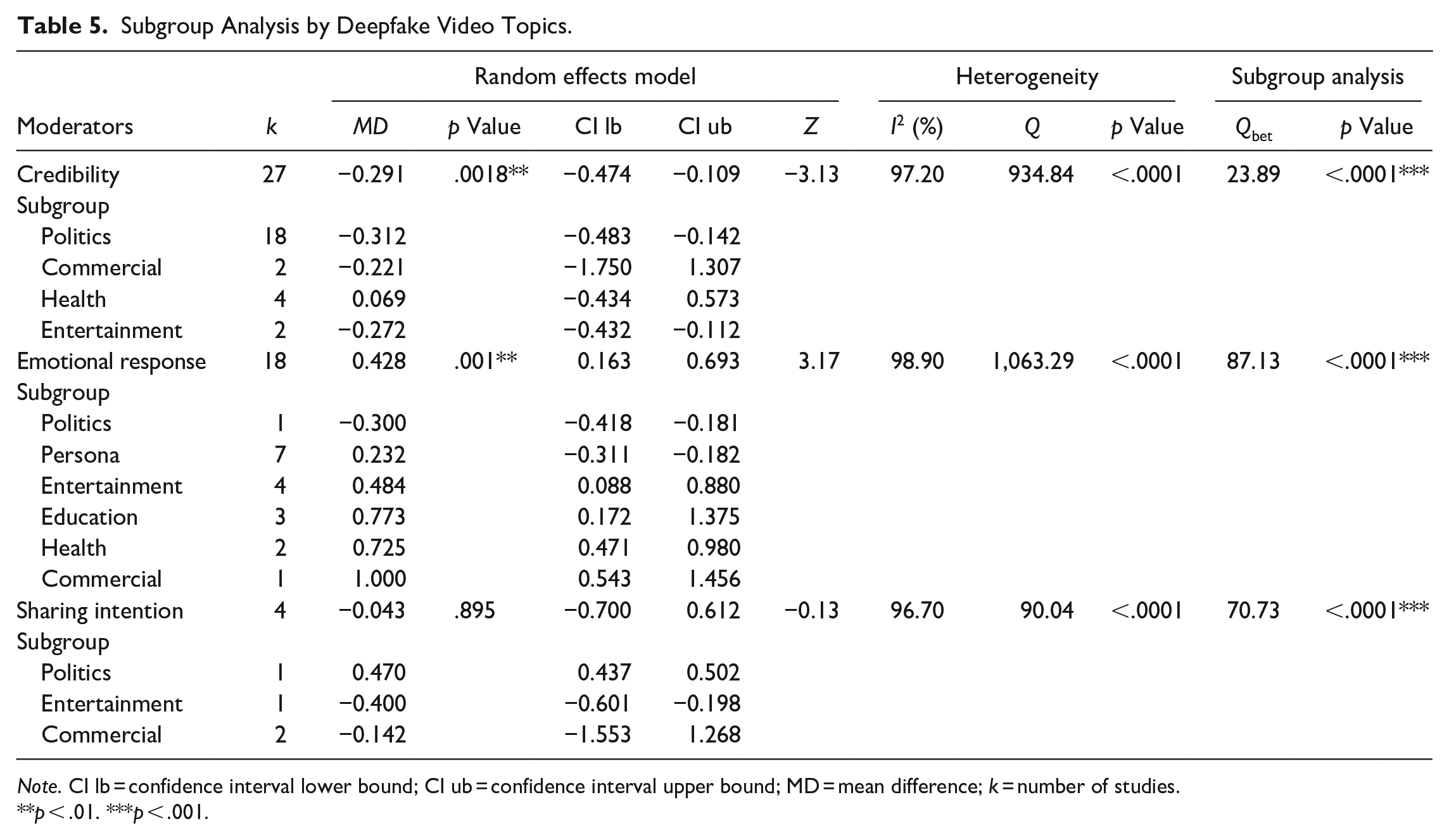

Regarding deepfake video topics, political videos yielded a robust subgroup effect on reduced credibility (MD = −0.312; Qbet = 23.89, p < .001; Table 5). Education (MD = 0.773) and health deepfake videos (MD = 0.725) elicited stronger positive emotional responses than other topics (Qbet = 87.13, p < .001). Political videos (MD = 0.470; Qbet = 22.33, p < .001) elicited greater sharing intention than entertainment videos.

Table 5. Subgroup Analysis by Deepfake Video Topics.

Note. CI lb = confidence interval lower bound; CI ub = confidence interval upper bound; MD = mean difference; k = number of studies.

**p < .01. ***p < .001.

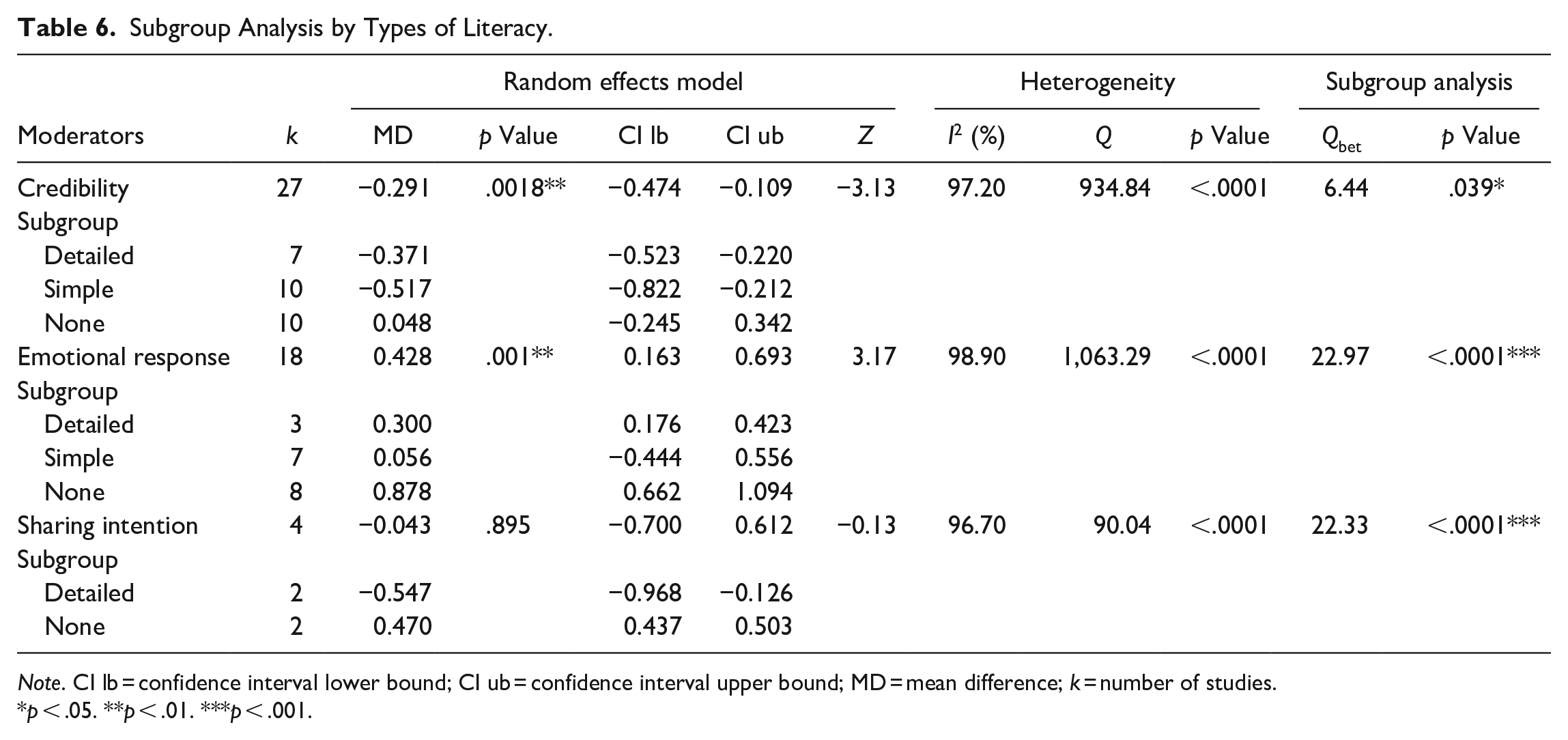

Regarding the type of media literacy, both detailed corrections (MD = −0.371) and simple information (MD = −0.517) were more effective than no literacy in lowering credibility evaluations (Qbet = 6.44, p < .05; Table 6). The no-literacy condition (MD = 0.878) produced stronger positive emotional responses than simple literacy training (MD = 0.056; Qbet = 22.97, p < .001). Detailed corrections were more effective than no literacy in diminishing sharing intention (MD = −0.547; Qbet = 22.33, p < .001).

Table 6. Subgroup Analysis by Types of Literacy.

Note. CI lb = confidence interval lower bound; CI ub = confidence interval upper bound; MD = mean difference; k = number of studies.

*p < .05. **p < .01. ***p < .001.

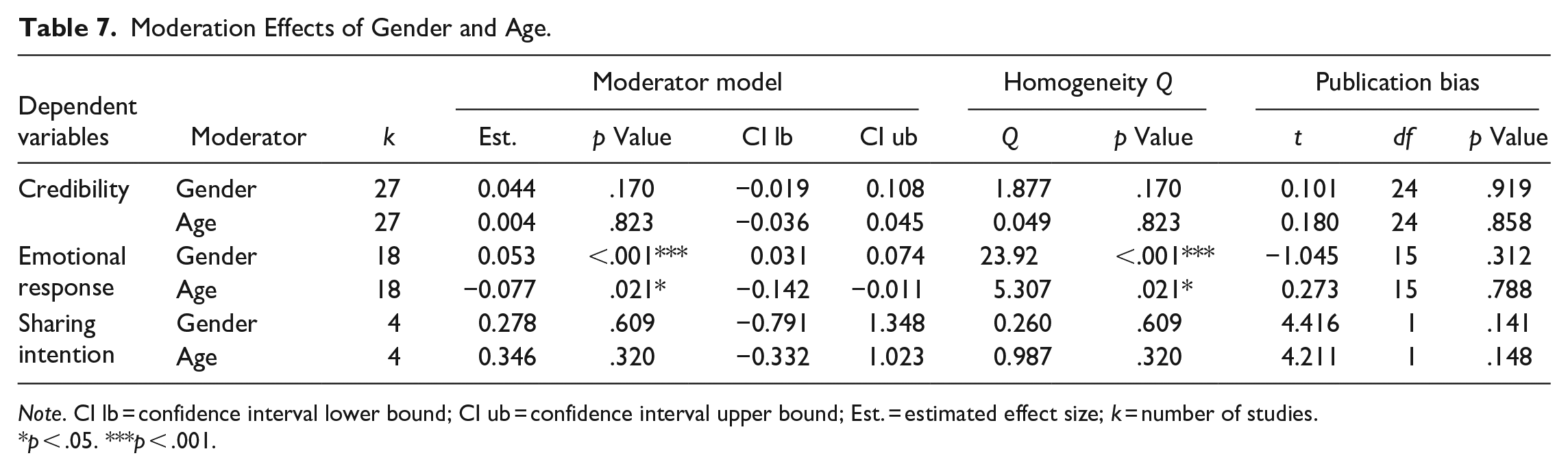

Moderation Effects of Gender and Age.

Note. CI lb = confidence interval lower bound; CI ub = confidence interval upper bound; Est. = estimated effect size; k = number of studies.

*p < .05. ***p < .001.

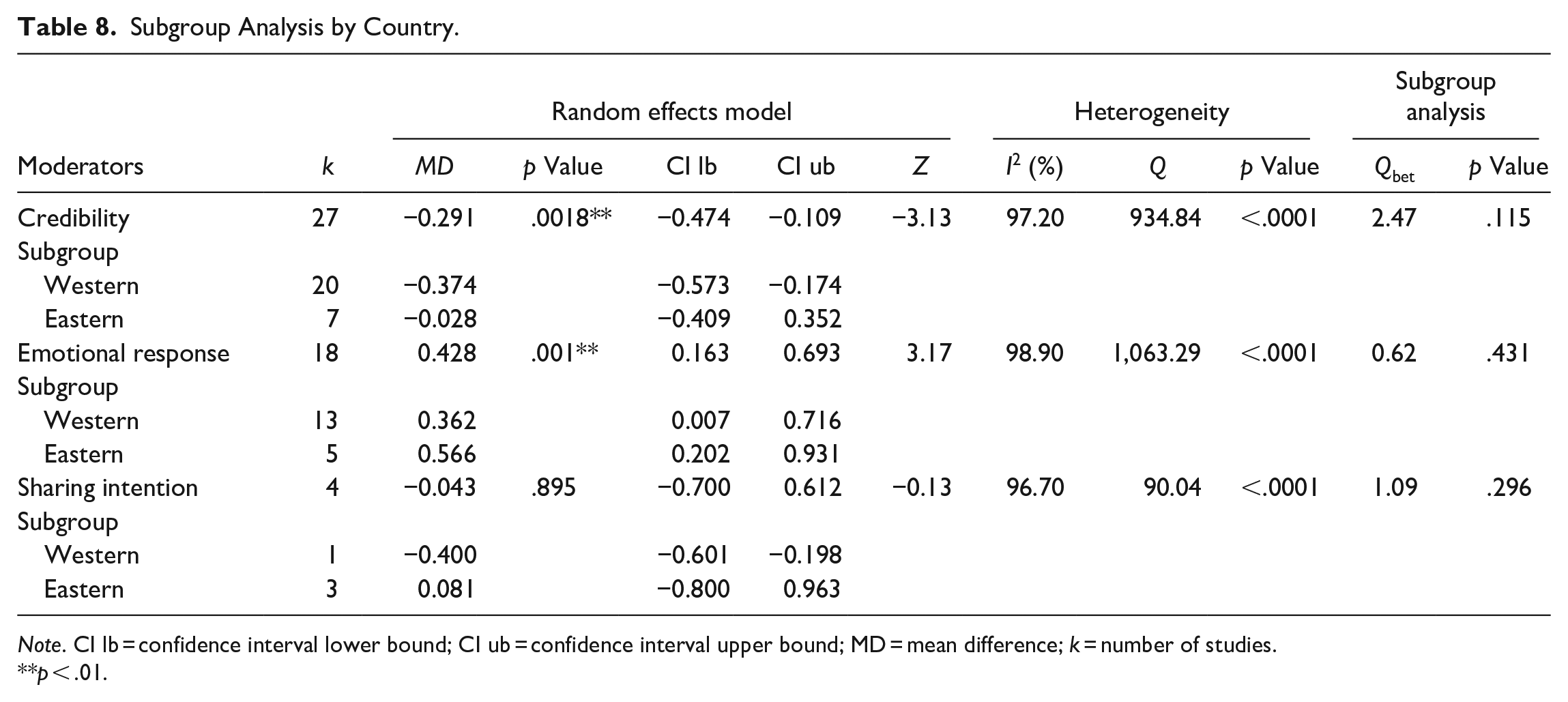

Subgroup Analysis by Country.

Note. CI lb = confidence interval lower bound; CI ub = confidence interval upper bound; MD = mean difference; k = number of studies.

**p < .01.

Publication Bias

Potential publication bias was assessed using funnel plots and Egger’s regression tests of asymmetry (Tables 2 and 7). No publication bias was detected: the funnel plots were symmetric, and Egger’s regression tests were statistically nonsignificant. These results suggest that the effect sizes of the selected studies are not skewed by selective publication but are relatively balanced.
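For readers unfamiliar with Egger’s test, a minimal Python sketch of the classic formulation follows: each study’s standardized effect (gᵢ/SEᵢ) is regressed on its precision (1/SEᵢ) by ordinary least squares, and an intercept that differs from zero signals funnel-plot asymmetry; its t statistic has k − 2 degrees of freedom. This is an illustration under those assumptions, not the study’s code; metafor’s regtest() by default fits a related meta-regression variant with the standard error as the predictor, so its output need not match. The function name and data are hypothetical, and the data were constructed so that smaller studies (larger SEs) show larger effects.

```python
import math


def egger_test(effects, ses):
    """Classic Egger regression test for funnel-plot asymmetry.

    Regresses the standardized effect (effect / SE) on precision (1 / SE) by OLS.
    Returns (intercept, SE of intercept, t statistic, degrees of freedom).
    """
    k = len(effects)
    if k < 3:
        raise ValueError("Egger's test needs at least three studies")
    x = [1 / s for s in ses]  # precision
    y = [e / s for e, s in zip(effects, ses)]  # standardized effect
    mx, my = sum(x) / k, sum(y) / k
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    intercept = my - slope * mx
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    sigma2 = sum(r ** 2 for r in residuals) / (k - 2)  # residual variance
    se_intercept = math.sqrt(sigma2 * (1 / k + mx ** 2 / sxx))
    return intercept, se_intercept, intercept / se_intercept, k - 2


# Hypothetical data in which smaller studies (larger SEs) report larger effects
ses = [0.10, 0.15, 0.20, 0.25, 0.30, 0.35]
effects = [0.32, 0.45, 0.55, 0.70, 0.78, 0.95]
b0, se_b0, t, df = egger_test(effects, ses)
print(f"intercept = {b0:.2f}, t({df}) = {t:.2f}")
```

In a symmetric funnel, the intercept stays near zero and the test is nonsignificant, which is the pattern the text reports for the included studies; the contrived data here produce a clearly positive intercept instead.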

Discussion

The effects of deepfakes on perceived information credibility, emotional responses, and sharing intention offer instructive insights for communication scholarship and practice regarding disinformation and audience responses. This study’s findings attest to both micro- and macro-level influences of deepfakes. Building on the premise that audiences process the rich media of deepfakes heuristically, this meta-analysis focused on studies examining how audiences accept manipulated information.

First, exposure to deepfakes did not significantly reduce perceived credibility overall, although the effect approached significance. Likewise, deepfakes had no significant overall effect on sharing intention. These results suggest that the effects of deepfake exposure on message reception and interpretation are conditional. High variability across studies of deepfake exposure yielded inconsistent overall effects (Hameleers et al., 2024; Lee & Shin, 2022). Given the significant moderating effect of media literacy, which lowered credibility following deepfake exposure in this study, the overall effect size may have masked this impact because media literacy varied across the studies. By contrast, the meta-analysis revealed positive effects on emotional responses when deepfakes were used for prosocial purposes (Eiserbeck et al., 2023). When deepfakes are used constructively for social causes, compassion, and likability, media practices involving them can enhance emotional engagement and play a meaningful practical role in shaping emotional responses.

Second, media literacy moderated individual responses: within the media literacy subgroup, the effects of deepfake exposure on reduced credibility and sharing intention were apparent. Those with media literacy are more likely to detect deepfakes, rate them as less credible, and report a lower intention to share them than those with no literacy. When groups with and without media literacy are combined in the overall analysis, these effects tend to be diluted, obscuring the impact of media literacy. This moderation explains why the relationship is significant in the subgroup analysis but not in the overall analysis. The results suggest that media training, including training on deepfakes, can counteract the truth default. This meta-analysis thus highlights the crucial role of media literacy. In a previous study of political deepfakes, respondents with prior knowledge or literacy training rated deepfakes as less credible (Hameleers et al., 2022). Direct or indirect deepfake detection can mitigate the negative impact of deepfakes, and the results suggest that diverse literacy-based approaches to detection can help audiences resist deepfakes (Iacobucci et al., 2021). For example, media literacy programs that enhance discernment by teaching people to recognize deepfake creation techniques and identify inconsistencies in videos are recommended (Huang et al., 2024). The results call for researchers to pay greater attention to developing literacy programs that help audiences discount deepfake content. Pertinent media literacy is crucial for mitigating the adverse effects of manipulative deepfakes on audience perceptions and behaviors.

On the positive side, the subgroup analysis of literacy in emotional responses revealed that participants were more emotionally engaged by deepfake exposure when they received no literacy training. Researchers should therefore explore the varying effects of deepfakes on compassion, immersion, and likability in social campaigns, health, education, and community engagement (Lu & Chu, 2023). In particular, presenting deepfakes without literacy training may be more effective when the purpose is prosocial.

Third, notable findings from the subgroup analyses of control group type, video topic, and literacy type suggest that political deepfake videos have the most visible impact on reducing credibility, followed by entertainment videos. Setting media literacy aside, individuals who struggle to identify deepfakes tend to share political deepfakes more readily than entertainment deepfakes (Momeni, 2024). Therefore, although media literacy can reduce the credibility of deepfakes and the intention to share them, a risk remains that people will share political deepfakes, highlighting the significant role of detailed media literacy. One positive sign is that viewers with sufficient cognitive ability and prior knowledge are likely to notice disinformation in videos (Barari et al., 2024).

In line with Luitse (2019), the results show that detailed corrections were more effective than no literacy in shaping cognitive and behavioral responses. Strategic media literacy can yield effective outcomes in countering disinformation videos. Literacy programs tailored to topics such as politics, entertainment, and health, and targeting credibility, emotional responses, and sharing intention, can provide relevant education for mitigating adverse effects. Based on the moderator analyses in this study, topic-specific media literacy (politics for reducing credibility; education and health for positive emotions), detailed corrections for reducing credibility and sharing intention, and the absence of literacy training for favorable emotions may build resilience to deepfakes and increase positive consequences.

Fourth, in the moderation analyses, gender significantly moderated the effect of deepfake exposure on emotional responses. Notably, female participants responded to deepfakes more emotionally than male participants, suggesting that females engage more strongly with deepfake content. Past research supports these findings, showing that women are more compassionate and understanding toward deepfakes, whereas men tend to express distrust (Herring et al., 2022). Females’ emotional engagement with deepfakes suggests that deepfake technology can be used for positive interventions (Wu et al., 2021). In the age moderation analysis, older adults were less likely than younger adults to show emotional engagement with deepfakes that could yield positive consequences. Targeted literacy programs for older adults that emphasize media analysis, introduce media production with prosocial purposes in mind, and provide hands-on activities for learning about deepfakes can help make them deepfake literate (Ying et al., 2024).

Theoretical and Practical Implications

As deepfakes permeate audiences’ daily media consumption, they can affect information interpretation and public opinion. At the individual level, audiences process deepfakes heuristically, and richer modalities with audiovisual cues can trigger cognitive, emotional, and behavioral heuristics, resulting in distorted perceived realism and trust bias (Hameleers, 2024). At the societal level, deepfakes may become part of how audiences’ social information is shaped (MacKenzie & Wajcman, 1999). While deepfakes may enhance communication, media richness with overloaded or manipulated sensory cues makes messages less trustworthy. Individuals may associate immersive media with potential deception, particularly when media literacy informs their judgments of authenticity. Based on this study’s findings, a conceptual model can be proposed. Drawing on inoculation theory, which builds resistance to persuasive messages through preemptive measures (Huang et al., 2024), the proposed conditional media richness model can examine how information cues accompanied by forewarning and counterarguing literacy enhance cognitive and behavioral responses through conscious assessment of the content. More specifically, when deception detection is engaged, the rich media of deepfakes provide sensory cues that lead to critical cognitive and behavioral evaluations (Ahmed, 2023; Chen et al., 2024).

Media literacy programs deserve emphasis across diverse social sectors. Because this meta-analysis demonstrates that media literacy produces significant differences in individual responses, programs that emphasize awareness of credibility, information reception, and actions may help prevent audiences from being deceived by deepfakes. Organizations in these sectors can invest in media literacy that includes a wide range of detailed disinformation simulations and critical media assessments to enhance the public’s resilience to deepfakes (Hwang et al., 2021). Media literacy that covers disinformation and deepfakes, with multiple sessions and detailed corrections, can provide the awareness and skills needed to access and evaluate fake media (Huang et al., 2024).

As Ternovski et al. (2021) point out, however, indiscriminate deepfake warnings can erode trust in the information audiences view, causing a deception default. Media literacy therefore requires a careful approach, because literacy training can lead audiences to mistake authentic information for deepfakes. As part of strategic planning, news organizations can promote media literacy through public campaigns that raise awareness. Educational institutions can incorporate media literacy and the use of AI for fact-checking (Lelo, 2024) into their AI literacy curricula. With respect to positive deepfakes, health campaigners, educators, and advertisers may use deepfakes without accompanying literacy interventions for health messages, learning, entertainment, and social causes to prompt emotional responses and actions. However, caution is necessary to prevent malicious uses of deepfakes that exploit emotional responses (e.g., fake donation requests).

Limitations and Future Research

Although communication researchers have used systematic reviews and meta-analyses to aggregate the results of empirical studies on media effects, some inherent limitations of this study might affect its integrity and the implications of its findings. Despite thorough searches and intercoder agreement, relevant studies might have been inadvertently missed, which could limit the comprehensiveness of this analysis. Future research can replicate this study to update its results. The relatively small number of included studies may also limit the evaluation of the full picture of deepfake effects; future research can include newly published studies for comprehensive measures of cognitive, emotional, and behavioral responses. Another challenge was the diversity of measures of emotional responses, which may have resulted in some emotion measures being omitted.

In addition, the individual response outcomes examined in the studies do not fully capture the consequences of deepfake exposure. For example, few studies included in this systematic review and meta-analysis examined more direct outcomes, such as appraisals, attitudes, self-efficacy, and social participation. As the number of survey studies on deepfakes grows, a meta-analysis of survey studies measuring the antecedents and outcomes of deepfake exposure is suggested. Beyond the cognitive, emotional, and behavioral responses covered in this study, audiences’ social engagement and participation can be considered integral outcomes of deepfake exposure.

Conclusion

This systematic review and meta-analysis found that exposure to deepfakes led to decreased credibility assessments and sharing intention in the context of media literacy, as well as increased emotional engagement. Media literacy, acting as a moderator, made significant differences in some individual responses: with the literacy education they received, study participants critically evaluated the information’s credibility and had less intention to share it. Educational initiatives that teach people to critically evaluate information through media or social literacy can reduce audiences’ susceptibility to dis/misinformation (Ahmed et al., 2025). Taken together, the random-effects models, subgroup analyses, and moderation analyses indicate that deepfakes can produce their intended effects depending on the conditions. An optimistic perspective is that critical media consumers can mitigate deepfakes’ negative impact.

This systematic review and meta-analysis on deepfake effects and the role of literacy in mitigating adverse effects on audience cognition, emotion, and behavior points to several forward-looking agendas. First, deepfake detection and recognition through literacy training alone are not sufficient. Leveraging deep learning models and sophisticated AI algorithms may improve the identification of disinformation in audio–visual content (Kyianytsia & Fayvishenko, 2025). Second, blockchain is another technology that can help create authentic media records. Blockchain-based systems can verify the authenticity of media content, and some approaches examine video frames for anomalies to detect deepfake manipulations (Jain et al., 2025). The effects of these detection technologies on individual responses (e.g., comparing detected with undetected videos and with media literacy experiences) can be further examined in deepfake research.

Third, alongside the research topics covered here, the effects of deepfake exposure on social trust, opinion polarization, and social participation emerge as key research agendas on disinformation, given their significant social implications (Alanazi et al., 2025). Fourth, divergent and expanded media literacy programs can be tested for their effects on resilience and critical evaluation of deepfake content (Kyianytsia & Fayvishenko, 2025). For example, media literacy programs tailored by topic, type, gender, and age can be offered for more effective outcomes. Fifth, because this study found positive effects of deepfakes on emotional responses among those with limited literacy, their impacts on sectors such as entertainment, education, and health can be further explored (Alanazi et al., 2025). Moreover, global comparisons of deepfake effects and interdisciplinary collaborations between communication scholars and detection scientists can yield productive outcomes. In conclusion, research that addresses emerging deepfake agendas can help mitigate adverse effects and amplify positive impacts on audiences.


Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.