Abstract

Effective science communication (SciComm) is crucial, but training scalability remains challenging. We explored whether generative AI (GenAI) could provide feedback to enhance SciComm strategies. In an online iterative distillation exercise, SciComm trainees (

Keywords

Introduction

We live in a science- and technology-driven world, where scientific developments increasingly influence policy decisions, societal norms, and individual behaviors. Therefore, effective science communication (SciComm) is critical in bridging the gap between scientific communities and the public (Burns et al., 2003; National Academies of Sciences, Engineering, and Medicine, 2017).

However, most scientists are not trained to communicate their research beyond the academic sphere (Cerrato et al., 2018; Mercer-Mapstone & Kuchel, 2017). While academic writing and discipline-specific communication are core components of scientific training, SciComm to general audiences often requires an entirely different set of skills, such as storytelling, simplification, and audience adaptation (Baram-Tsabari & Lewenstein, 2017).

As a result, specialized SciComm training programs have emerged to foster clarity, audience awareness, framing, and the use of metaphors and narratives as foundational competencies (Dahlstrom, 2014; Illingworth & Allen, 2016). Nevertheless, the distribution, accessibility, and institutional integration of such training remain uneven globally. Existing programs vary significantly in the time and resources they demand from participants, ranging from brief workshops to year-long curricula (Aurbach et al., 2019; Massarani et al., 2023). The GlobalSCAPE project identified 122 science communication teaching programs in 31 countries, predominantly located in the United States and Europe, highlighting an international disparity (Massarani et al., 2023). Access is particularly limited outside major research institutions and in developing regions (Massarani et al., 2023).

Scientists therefore face an availability barrier: SciComm training is unevenly distributed, with limited access to skilled trainers and high-quality training programs. Many institutions lack instructors with specialized SciComm expertise, and the number of available programs does not meet the growing demand (Bankston & McDowell, 2018; Washburn et al., 2022). A second barrier concerns resources. Scientists and faculty frequently cite time constraints and limited resources as significant obstacles: the demands of teaching, research, and administrative responsibilities leave little bandwidth for additional training or engagement efforts (Mannino et al., 2021; Washburn et al., 2022). A third barrier is institutional. Effective SciComm development requires sustained institutional commitment, including integrating training into university strategies and developing shared infrastructure (Greig et al., 2024), and the absence of incentives such as academic credit or formal recognition in promotion and tenure decisions further discourages participation (Washburn et al., 2022).

These barriers introduce a scalability challenge: how can we support the communication skill development of large numbers of scientists without significantly increasing resource demands?

One potential solution lies in emerging technologies—specifically, generative artificial intelligence (GenAI). GenAI has already demonstrated promise in helping users draft and refine content. Could it also assist scientists in improving their SciComm outputs?

GenAI in Academic and Scientific Contexts: An Emerging Landscape

Generative AI is rapidly reshaping academic and scientific writing practices. Lin (2023, 2025) provides a comprehensive framework for understanding how scientists can productively integrate AI tools across the research life cycle, from literature review through manuscript preparation, while maintaining critical oversight. Khalifa and Albadawy (2024) similarly argue that GenAI represents an essential productivity tool for academic writing and research, capable of reducing time burdens when used appropriately. Across communication domains, patterns suggest GenAI offers efficiency gains while raising important questions about quality, attribution, and trust. In journalism, for instance, AI tools have demonstrated value for routine tasks but also prompted concerns about editorial standards (Dijkstra et al., 2024; Lewis et al., 2025). Computational and linguistics studies attempting to detect AI-generated text have revealed challenges in distinguishing AI-assisted from human writing, particularly after iterative revision (Weber-Wulff et al., 2023; Yildiz Durak et al., 2025), underscoring broader questions about authenticity and accountability.

GenAI in Educational Writing Contexts: Feedback and Skill Development

More directly relevant to training contexts, emerging research examines GenAI’s role in supporting writing pedagogy. Steiss et al. (2024) compared ChatGPT-generated feedback to human feedback on student essays, finding that AI feedback could support revision processes, particularly for structural and clarity improvements, though with limitations in addressing complex rhetorical dimensions. Banihashem et al. (2024) compared peer-generated and AI-generated feedback in essay writing, revealing that both facilitate improvements but prompt different types of revisions. These findings suggest GenAI may serve as a complementary feedback source in educational settings, raising questions about its effectiveness for developing specific writing competencies.

GenAI and Science Communication: Opportunities, Challenges, and Gaps

In the science communication domain, preliminary work has begun addressing GenAI’s role and implications (Kessler et al., 2025). Alvarez et al. (2024) outline both opportunities (enhanced accessibility and efficiency in communicating science to public audiences) and risks, including potential trust erosion, attribution concerns, and the challenge of maintaining scientific nuance in AI-mediated messages. Kessler et al. (2025) suggested different ways in which scientists can use GenAI, along with working habits that support its ethical use for SciComm, while Kwon (2025a, 2025b) examines scientists’ attitudes toward AI in academic writing, revealing cautious optimism mixed with concerns about appropriate integration.

Together, these strands of research stress the need for an empirical, evidence-based understanding of the practical use of GenAI in communicating science. To date, empirical evaluation of content generated with GenAI support in the field of SciComm is scarce. Such evidence is essential for guiding both research and practice in science communication. It will enable the development of frameworks that are sensitive to different contexts and that address both the promise and the perils of GenAI-mediated communication, preparing researchers and practitioners to navigate this rapidly changing reality.

Overall, the high demand for SciComm training programs and their limited accessibility create a scalability challenge. At the same time, the rapid integration of GenAI into communication practices is reshaping both the methodology and content of SciComm training and its potential for supporting scientists on a larger scale. But does GenAI support the production of better-quality SciComm products? To answer this, we must first empirically evaluate the utilization of SciComm strategies in texts produced with GenAI feedback. This article aims to provide empirical evidence for this question.

In this study, we evaluate the use of science communication strategies in texts from a SciComm research perspective. We build upon established rubrics developed to assess the use of core SciComm training concepts (e.g., clarity, relevance, emotional engagement), providing a systematic framework to judge the effectiveness of GenAI-assisted communication products. The research questions guiding this study are:

RQ1: What are SciComm training participants’ assessment preferences regarding SciComm products supported by GenAI?

RQ2: Does feedback provided by GenAI support better SciComm products?

RQ3: In what ways does GenAI feedback support the use of SciComm skills?

Methodology

Research Context and Participants

Participants were drawn from five SciComm training programs conducted at three international technological universities between February and April 2024. These programs, led by the first author, aimed to strengthen participants’ ability to communicate science clearly and engagingly to non-expert audiences. The curriculum focused on essential SciComm principles, including reducing jargon, recognizing the “curse of knowledge,” and making science relatable through everyday language, narrative, humor, and analogies. Emphasis was placed on message distillation, inspired by journalistic practices, and on empathy-driven communication informed by improvisational theater methods (Alda, 2010; Bass et al., 2016; Bernstein, 2014). Each session began with an overview of science communication, including relevant research-based content tailored to participant backgrounds. The pedagogy was team-taught, informal, and interactive, designed to foster practical skills, effective strategies, and confidence. Participants ranged in academic level from undergraduates (20%) to postgraduate students and postdoctoral researchers (80%) and came from a wide range of STEM fields, such as life sciences, mathematics, physics, and science education. About half of the participants were female (48.7%).

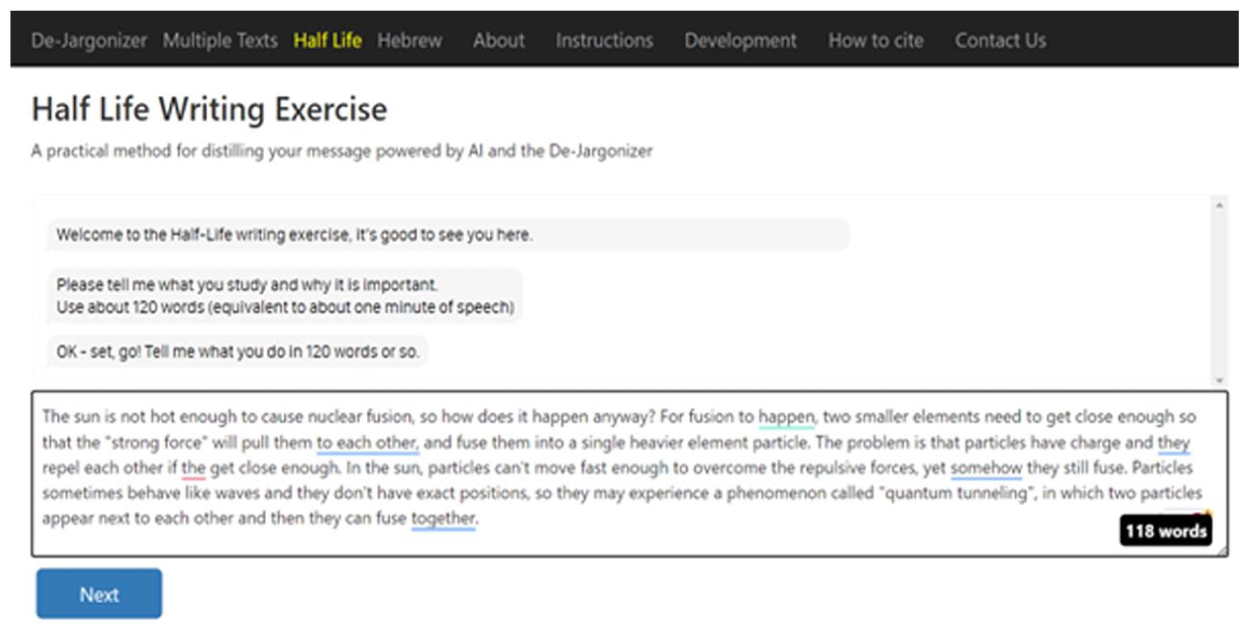

A central activity in the training was the “Half-life” exercise, which guides participants through successive reductions of their message length to sharpen clarity and focus (Aurbach et al., 2018). Traditionally delivered face-to-face with peer feedback, the exercise was adapted for this study into a writing-based format via an online interactive platform. Participants were asked to explain their science to a non-scientific audience (“Please tell me what you study and why it is important. Use about 120 words [equivalent to about one minute of speech]”). They then revised their descriptions over several iterations, receiving feedback from a computerized tool that replaced the original peer feedback. A screenshot of the interface is shown in Figure 1.

A Snapshot of the Online Computerized Tool Used During the Training and Its Interface, Asking Training Participants to Write About What They Study and Its Importance in 120 Words.

A total of 81 participants initially engaged in the training. Three were excluded from analysis at their request (

The De-Jargonizer is an automated tool for assessing and improving vocabulary clarity (Rakedzon et al., 2017). It analyzes the frequency of each word in a text using a large reference corpus of over 90 million words drawn from approximately 250,000 BBC articles (2020–2023), representing contemporary general and science-related English. Each unique word (“word type”) is matched against this corpus, which includes both British and American spellings, to determine its frequency.

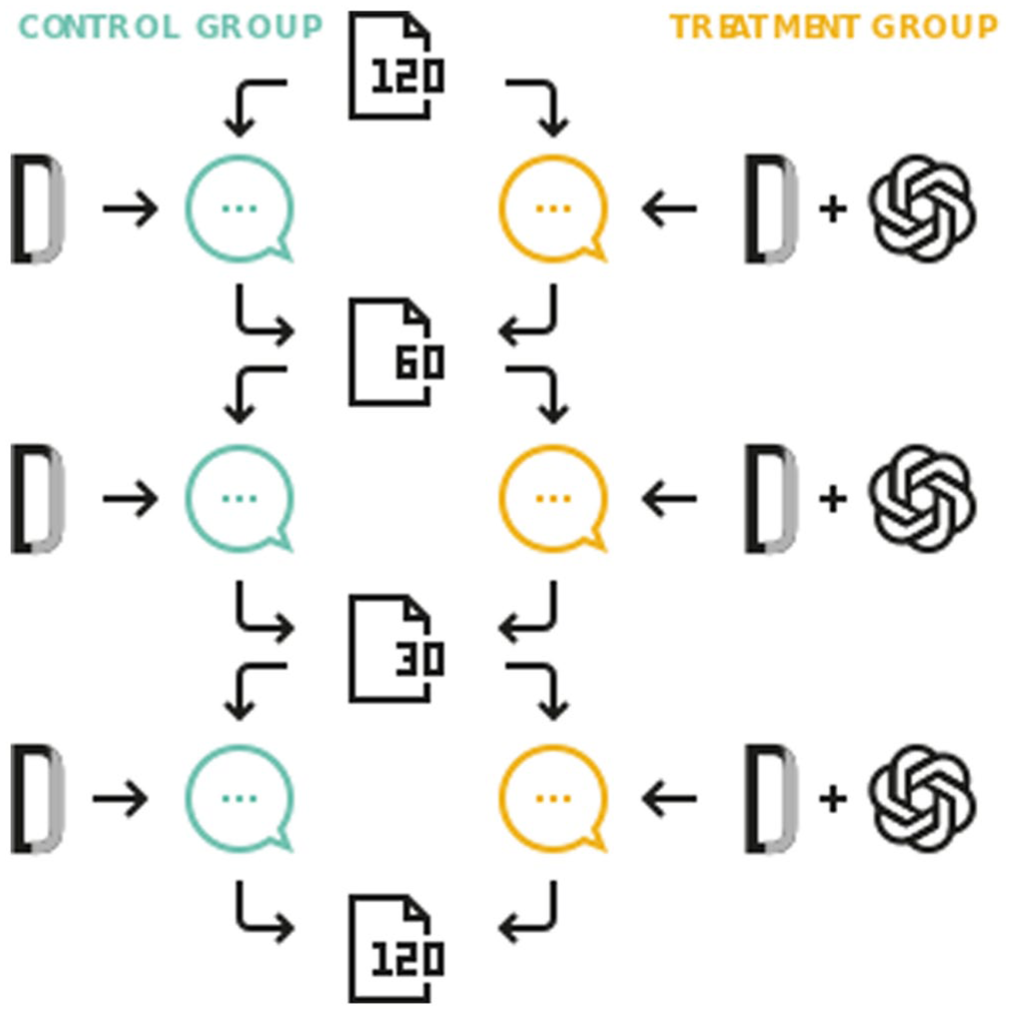

Based on this dictionary-based word recognition and frequency matching, the tool categorizes words into three levels: high-frequency/common (words appearing more than 1,000 times in the corpus [e.g., behavior], shown in black), mid-frequency (words appearing 50–1,000 times [e.g., protein], shown in orange), and jargon/technical (words appearing fewer than 50 times [e.g., dendritic], shown in red). This color-coding visually highlights technical terms and helps users identify and reduce jargon to improve text accessibility. Effective public communication is expected to contain less than 2% jargon. The tool also provides a numerical “De-Jargonizer score” ranging from 0 to 100, where higher scores indicate greater clarity and accessibility. If all words are common, the score equals 100; the presence of mid-frequency and jargon words proportionally reduces this value. The calculation of the score is based on the following equation:

The De-Jargonizer can analyze text files in multiple formats (.txt, .htm, .html, .docx) and presents results interactively, displaying word frequencies, category percentages, and overall scores beside the text. Its word list and frequency data are updated annually to ensure continued linguistic relevance.
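The frequency thresholds described above can be illustrated with a minimal Python sketch. It is not the De-Jargonizer's actual implementation: the toy `corpus_counts` dictionary, the function names, and in particular the penalty weights (0.5 per mid-frequency word, 1.0 per jargon word) are assumptions made for illustration, since the scoring equation is not reproduced in this text. The sketch also counts every word occurrence rather than unique word types.

```python
import re
from collections import Counter

# Thresholds taken from the description above.
COMMON_MIN = 1000  # more than 1,000 corpus occurrences -> common
JARGON_MAX = 50    # fewer than 50 corpus occurrences -> jargon


def classify(word, corpus_counts):
    """Return 'common', 'mid', or 'jargon' for a single word."""
    freq = corpus_counts.get(word.lower(), 0)
    if freq > COMMON_MIN:
        return "common"
    if freq < JARGON_MAX:
        return "jargon"
    return "mid"


def dejargonizer_style_score(text, corpus_counts, mid_weight=0.5, jargon_weight=1.0):
    """Score a text from 0 to 100; the weights are illustrative assumptions."""
    words = re.findall(r"[A-Za-z']+", text)
    if not words:
        raise ValueError("text contains no words")
    levels = Counter(classify(w, corpus_counts) for w in words)
    penalty = mid_weight * levels["mid"] + jargon_weight * levels["jargon"]
    score = 100.0 * (1.0 - penalty / len(words))
    jargon_share = 100.0 * levels["jargon"] / len(words)
    return score, jargon_share, levels


# Hypothetical corpus frequencies, invented for this example only.
corpus_counts = {
    "we": 50000, "study": 8000, "how": 40000, "cells": 3000,
    "use": 20000, "protein": 600, "dendritic": 12,
}

score, jargon_share, levels = dejargonizer_style_score(
    "We study how dendritic cells use protein", corpus_counts
)
print(f"score={score:.1f}, jargon={jargon_share:.1f}%, levels={dict(levels)}")
```

With weights like these, a text made up only of common words scores 100, and each mid-frequency or jargon word lowers the score in proportion to its share of the text, matching the behavior described above.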

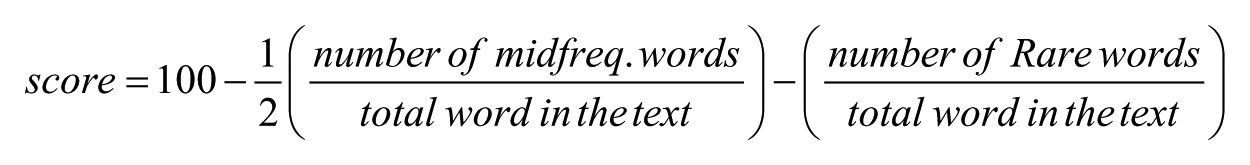

Participation in the study was limited to individuals who provided signed informed consent, in accordance with ethical guidelines approved by the institutional review board (IRB approval number: 2023-002). The first and final iterations of each participant’s text were used to comprise the data set, representing the original version (“Pre,” 120 words, written before any feedback) and the revised version (“Post,” 120 words, revised after receiving three rounds of feedback on reduced-length texts). This pre-post comparison formed the basis for assessing the impact of the feedback intervention. The overall study structure is illustrated in Figure 2.

Study Design.

Data Collection and Management

The adapted exercise was conducted online using a digital platform that automatically recorded each iteration of participants’ texts, the De-Jargonizer feedback, and, for the experimental group, the GenAI feedback provided. Data were stored in a secure (accessed only by the researchers) Google Sheet with timestamps and anonymized participant IDs. Any identifying personal information was removed prior to analysis in accordance with data privacy protocols. Texts containing confidential research findings (as indicated by participants during consent) were excluded from public datasets but included in aggregate analyses.

The feedback provided by GenAI was generated using the OpenAI API model gpt-3.5-turbo-instruct with the following settings: a temperature of 0.7, to balance creativity and focus in the response; a maximum of 150 tokens, to keep responses short and concise and to prevent overly long or rambling text; and a top-p value of 1.0, so that the full range of possible words was considered when generating text. Frequency and presence penalties were set to 0.0, so the model was neither discouraged from repeating itself nor pushed toward introducing new topics. That is, the model might repeat words or phrases if it thinks that’s relevant, and it won’

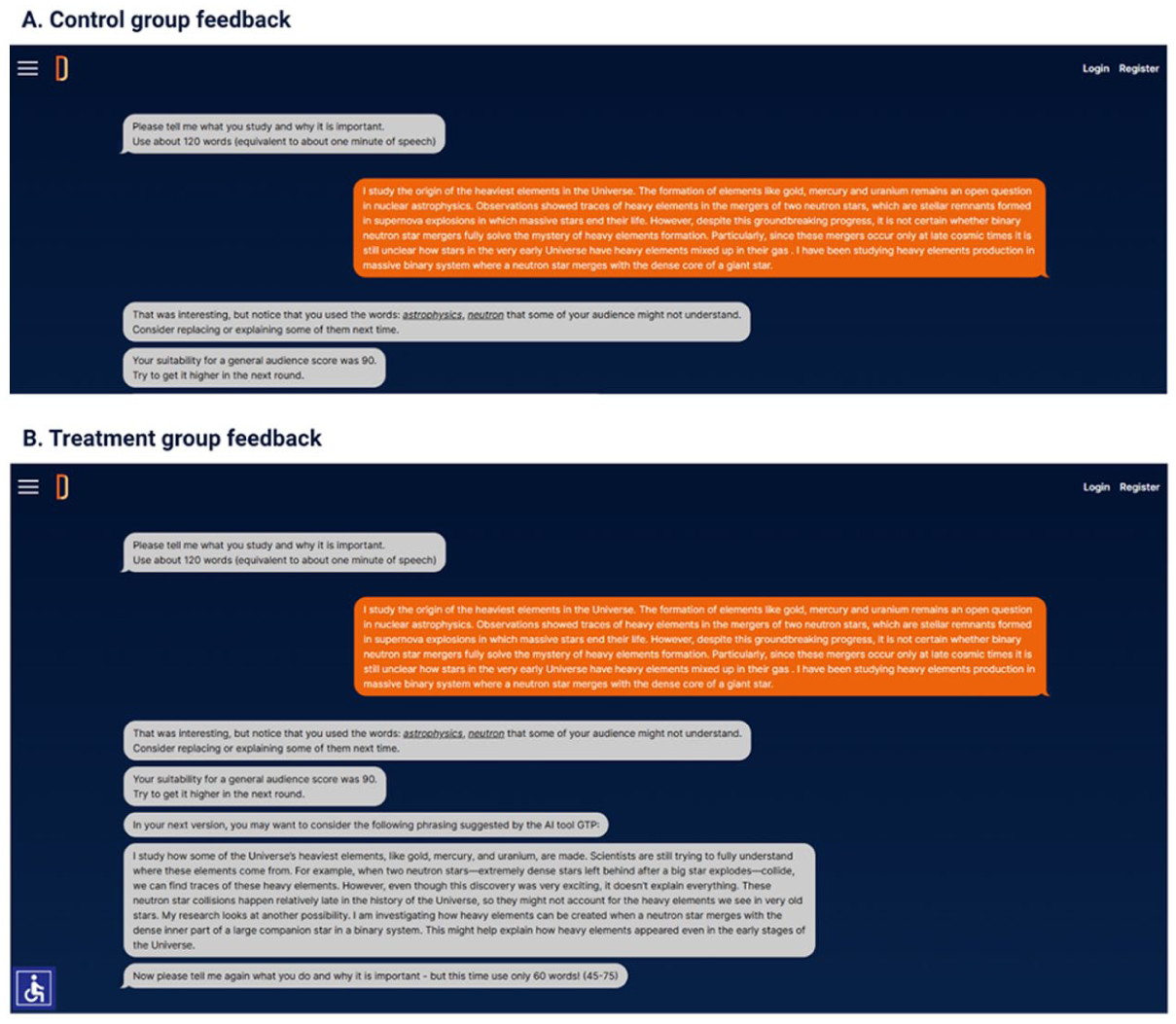

An example of the two types of feedback is shown in Figure 3.

Screenshot of the Feedback Provided by the Online Platform to Participants. (A) Control group feedback. (B) Treatment group feedback.
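For readers who want to reproduce the generation settings reported above, the following Python sketch collects them into a request dictionary. The function name and the placeholder prompt are hypothetical; the study's actual prompt is not reproduced in this text, so none is supplied here. The sketch only assembles parameters and does not contact any API.

```python
def build_feedback_request(prompt_text):
    """Assemble completion parameters matching the reported settings."""
    return {
        "model": "gpt-3.5-turbo-instruct",
        "prompt": prompt_text,     # placeholder: the study's prompt is not given here
        "temperature": 0.7,        # balance between creativity and focus
        "max_tokens": 150,         # keeps the feedback short
        "top_p": 1.0,              # consider the full range of candidate words
        "frequency_penalty": 0.0,  # repetition is not discouraged
        "presence_penalty": 0.0,   # new topics are not encouraged
    }


request = build_feedback_request("<feedback prompt followed by the participant's text>")
for name, value in request.items():
    print(f"{name}: {value}")
```

A dictionary of this shape could be passed as keyword arguments to a completions call, for instance `client.completions.create(**request)` in the OpenAI Python client, assuming a configured client and API key.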

Participants were free to adopt any part of the suggested feedback, or none at all. A descriptive comparison of word retention suggested that participants kept, on average, about 62.7% of their original words in their final version. This moderate level of textual change highlights the influence, but also the selectivity, of GenAI feedback. We acknowledge that the specific GenAI prompt could shape the nature and scope of the feedback provided, potentially influencing which SciComm strategies are emphasized. In this study, the prompt was intentionally designed not to instruct the AI to focus on rhetorical devices or SciComm strategies such as humor or metaphor. Our aim was to observe the kind of “organic” feedback and suggestions GenAI would provide in an unguided, real-world context, thereby reflecting how scientists might use such tools independently. Ultimately, participants had full agency over the revision process, deciding which AI-generated suggestions to incorporate, modify, or disregard.
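One way to compute a word-retention figure of the kind mentioned above is sketched below in Python. The exact procedure used in the study is not described, so this is an assumption: retention is taken here as the share of unique words in the original text that reappear in the final text, ignoring case and punctuation. The function name and example sentences are invented for illustration.

```python
import re


def word_retention(original, final):
    """Percentage of unique words in `original` that also occur in `final`."""
    def unique_words(text):
        return set(re.findall(r"[a-z']+", text.lower()))

    original_words = unique_words(original)
    if not original_words:
        raise ValueError("original text contains no words")
    kept = original_words & unique_words(final)
    return 100.0 * len(kept) / len(original_words)


pre = "We study how light moves through opaque materials"
post = "We study how light travels through cloudy things"
print(word_retention(pre, post))  # 5 of 8 original words are kept -> 62.5
```

Averaging this value over all participants would give a single descriptive figure; other definitions, such as counting repeated words or matching word stems, would produce somewhat different numbers.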

Analysis Framework

To evaluate the impact of the intervention on the use of SciComm strategies, this study employed a quantitative approach combining participant self-assessment with expert textual analysis. For the self-assessment, participants reflected on their revised texts and indicated (from a given set of options) which version, original or final, they preferred in terms of clarity and impact. This reflection was used to answer the first research question.

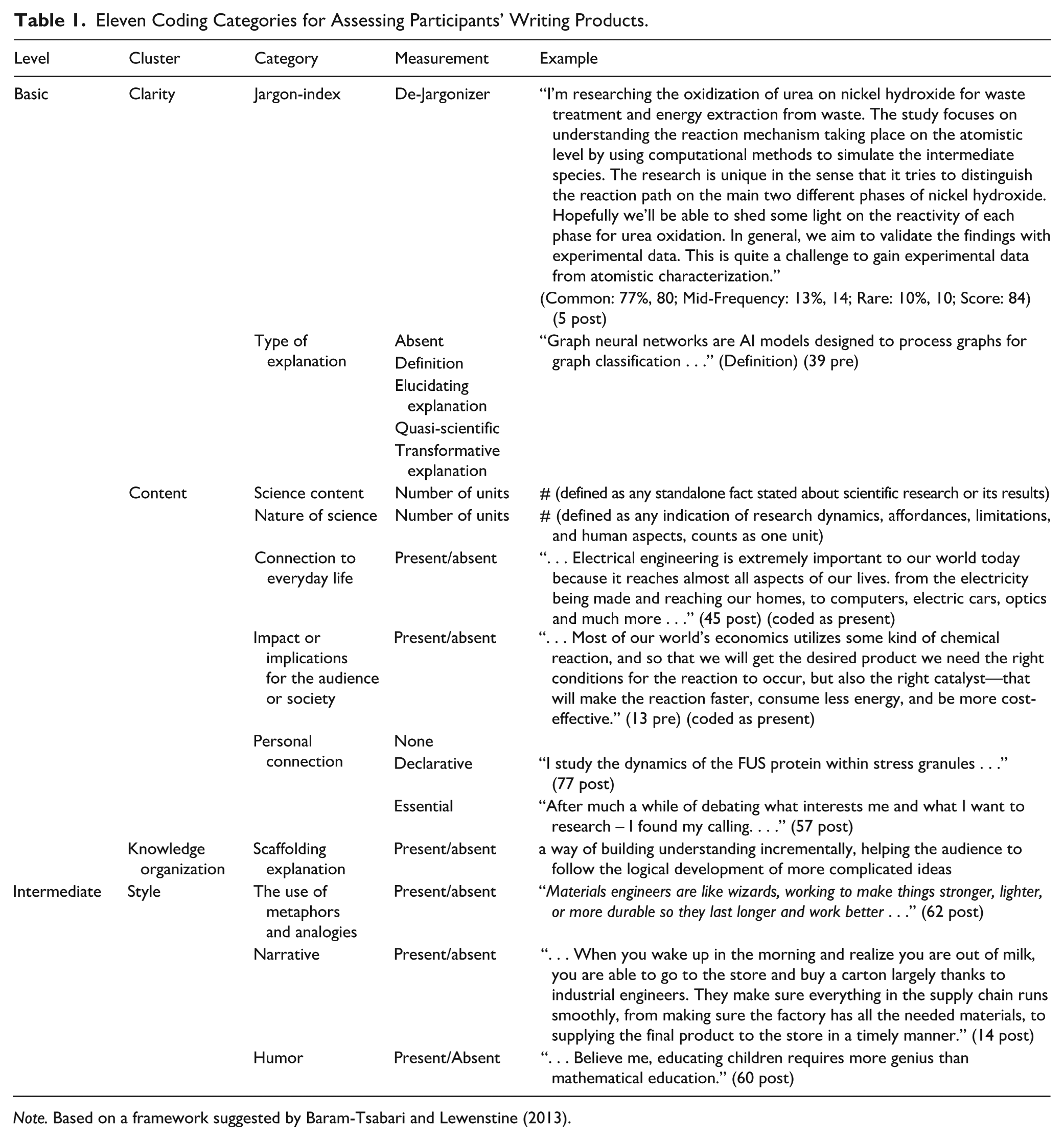

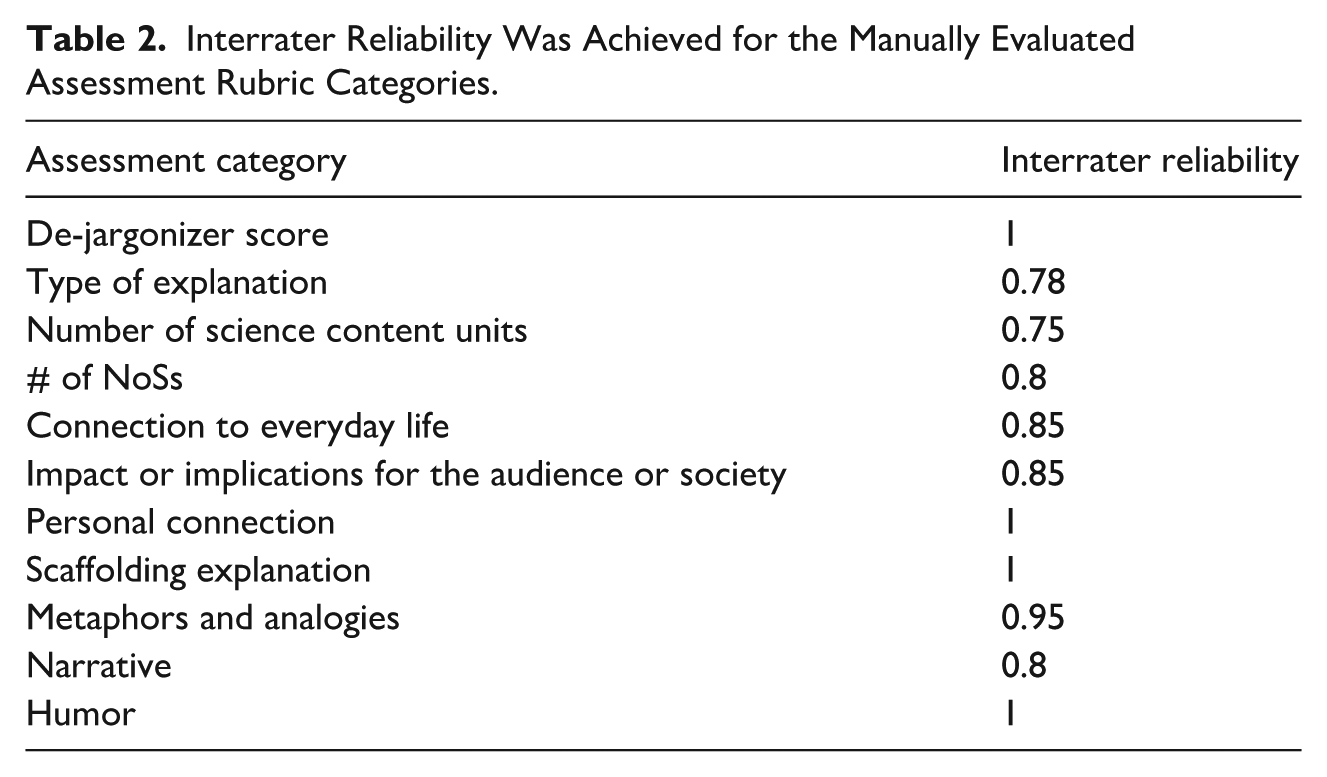

Textual Content Analysis: In parallel, a single-blinded, independent evaluation of the texts was conducted by SciComm researchers. That is, the researchers (who did not take part as participants in the training programs) were given anonymized texts in random order, without knowing which training program each text came from, whether it originated from a participant in the treatment or control group, or whether it was a pre (original) or post (final version after iterations) text. Apart from the lead researcher, the SciComm researchers were not aware of the research hypothesis during the coding phase. The analysis followed a deductive framework using an adapted rubric based on Baram-Tsabari and Lewenstein (2013) and Barel-Ben David and Baram-Tsabari (2021), which was developed to assess scientists’ writing for diverse publics. This rubric captures key SciComm dimensions such as clarity (e.g., jargon reduction), narrative coherence, and personal or emotional engagement. Comparison of scores across groups and over time (pre- vs. post-feedback) provided empirical insight into the efficacy of the GenAI-enhanced intervention and answered the second research question. Table 1 presents the full adapted 11-category rubric used to answer our third research question.

Eleven Coding Categories for Assessing Participants’ Writing Products.

An intercoder reliability process was conducted with two peer coders in four consecutive rounds. In each round of coding, 5% of the database was randomly selected using the Excel randomizer. Each selected text was independently coded and then compared with the researcher’s coding. Following each round, deliberation sessions were held to refine the categories and update the codebook accordingly to achieve acceptable reliability. In the fourth round, 10% were coded. Interrater reliability results are shown in Table 2.

Interrater Reliability Was Achieved for the Manually Evaluated Assessment Rubric Categories.

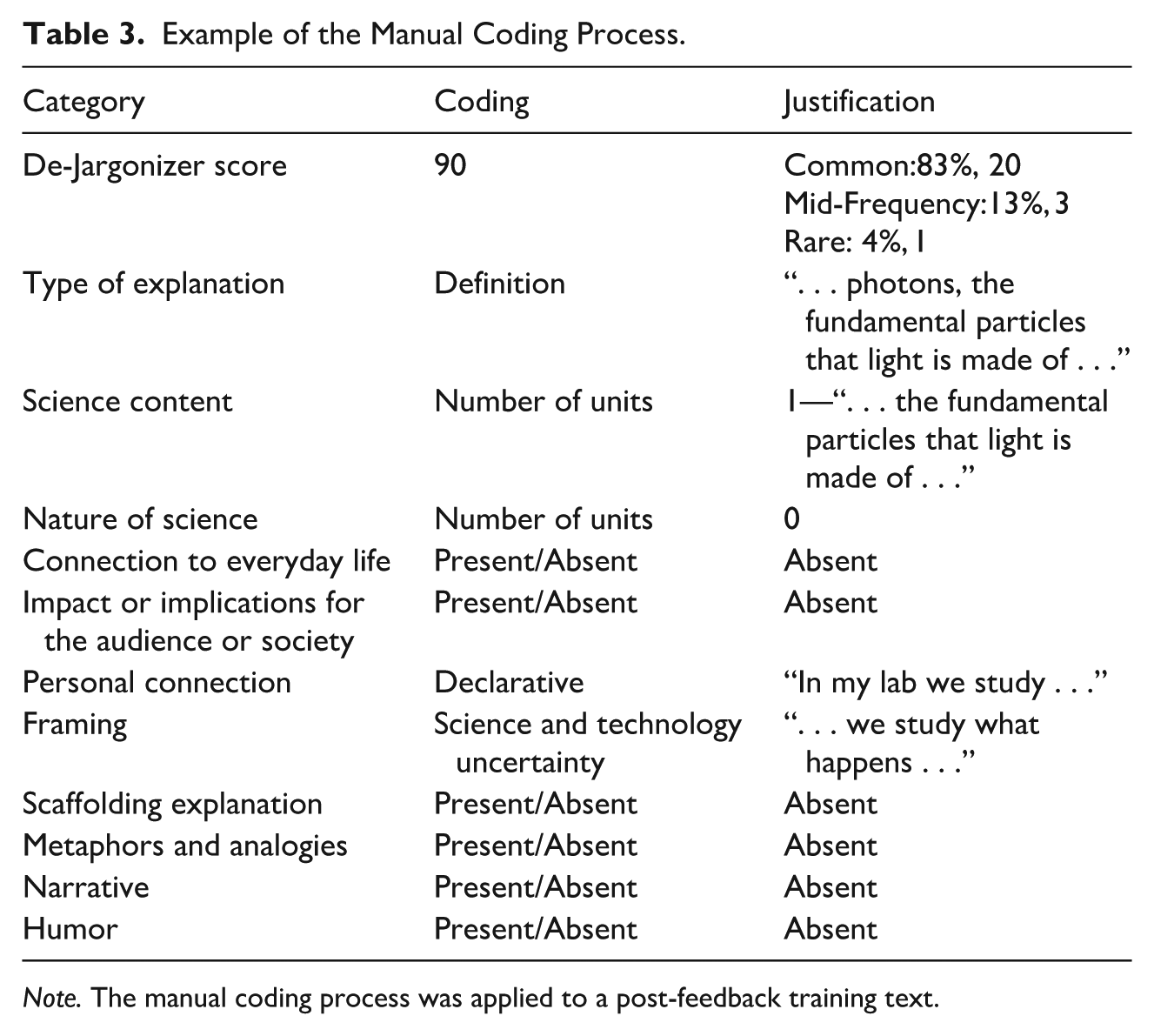
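The intercoder comparison described above can be illustrated with a short Python sketch. The text does not name the reliability statistic that was used, so the choice of simple percent agreement and Cohen's kappa here is an assumption, and the two coders' binary codes are invented example data.

```python
from collections import Counter


def percent_agreement(codes_a, codes_b):
    """Share of items on which two coders assigned the same code."""
    return sum(a == b for a, b in zip(codes_a, codes_b)) / len(codes_a)


def cohens_kappa(codes_a, codes_b):
    """Cohen's kappa: agreement between two coders, corrected for chance."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("both coders must code the same non-empty set of items")
    n = len(codes_a)
    observed = percent_agreement(codes_a, codes_b)
    counts_a, counts_b = Counter(codes_a), Counter(codes_b)
    # Chance agreement: probability that both coders pick the same code at random.
    expected = sum(
        counts_a[code] * counts_b[code] for code in set(codes_a) | set(codes_b)
    ) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used one identical code throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical codes for one binary category (1 = strategy present, 0 = absent).
researcher = [1, 1, 0, 0, 1, 0, 1, 1, 0, 0]
peer_coder = [1, 1, 0, 0, 1, 0, 1, 0, 0, 0]

print(f"agreement={percent_agreement(researcher, peer_coder):.2f}")
print(f"kappa={cohens_kappa(researcher, peer_coder):.2f}")
```

In this invented example the coders agree on 9 of 10 texts, and kappa is lower than raw agreement because part of that agreement would be expected by chance.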

An example of the full coding process is illustrated in Table 3 using the following text:

“In my lab we study what happens to photons, the fundamental particles that light is made of, when we send them through opaque materials.”

Example of the Manual Coding Process.

Using the codebook, this text was manually assessed into the 11 categories as follows:

As a rule of thumb, using narrative, scaffolding information, and reducing the use of jargon make a text easier to read for non-scientists.

Statistical Analysis

Descriptive statistics were used to evaluate the difference in participants’ preferences between the two experimental groups. As a first step, we measured the change in the overall quality of the control and treatment texts. To do so, we normalized the scores between 0 and 1 and calculated the overall normalized difference (Delta = post − pre), treating a higher score as indicating better SciComm quality and expecting higher scores for the treatment group. To evaluate changes over time and across conditions in the individual categories used to assess participants’ science communication texts, we combined two types of tests. For ordinal categories (e.g., De-Jargonizer score, Number of science content units, and Number of NoS references), we conducted t-tests. For binary categories (Connection to everyday life, Impact or implications for the audience or society, Scaffolding explanation, Metaphors and analogies, Narrative, and Humor), we performed a χ2 test. This mixed analytic approach was selected to accommodate both numeric and binary category types.
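Two of the steps described above, the normalized pre-post difference and the χ2 test for a binary category, can be sketched in plain Python as follows. The normalization method is not specified in the text, so min-max scaling against the lowest and highest possible rubric scores is an assumption. The counts in the example table are invented, and the test is computed without a continuity correction.

```python
import math


def min_max_normalize(score, lowest, highest):
    """Rescale a raw score to the 0-1 range (assumed normalization method)."""
    return (score - lowest) / (highest - lowest)


def normalized_delta(pre, post, lowest, highest):
    """Delta = normalized post score minus normalized pre score."""
    return min_max_normalize(post, lowest, highest) - min_max_normalize(pre, lowest, highest)


def chi_square_2x2(table):
    """Pearson chi-square test for a 2x2 table, without continuity correction.

    `table` is ((a, b), (c, d)), e.g. rows = groups and columns = strategy
    present / absent. Returns (statistic, p_value) for 1 degree of freedom.
    """
    (a, b), (c, d) = table
    n = a + b + c + d
    row_totals = (a + b, c + d)
    col_totals = (a + c, b + d)
    if 0 in row_totals or 0 in col_totals:
        raise ValueError("every row and column needs at least one observation")
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            statistic += (observed - expected) ** 2 / expected
    # With 1 degree of freedom the chi-square tail probability is erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(statistic / 2.0))
    return statistic, p_value


# Hypothetical example: a text scoring 4 before and 7 after, on a 0-10 scale.
print(f"delta={normalized_delta(4, 7, 0, 10):.2f}")

# Hypothetical counts of post-texts with / without narrative in each group.
statistic, p_value = chi_square_2x2(((20, 10), (10, 20)))
print(f"chi2={statistic:.2f}, p={p_value:.4f}")
```

For small expected counts, an exact test or a continuity correction may be more appropriate than this uncorrected statistic.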

Results

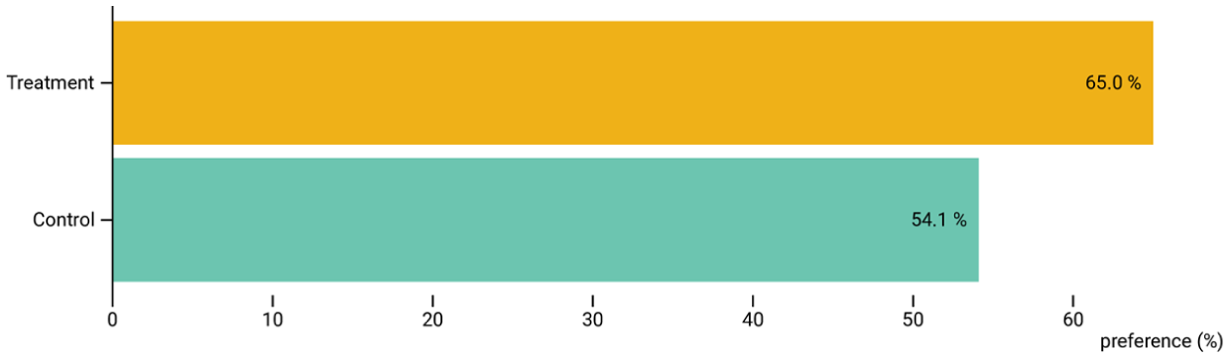

RQ1 focused on SciComm training participants’ assessment preferences regarding SciComm products supported by GenAI. An independent samples

Participants’ Preferences for Texts.

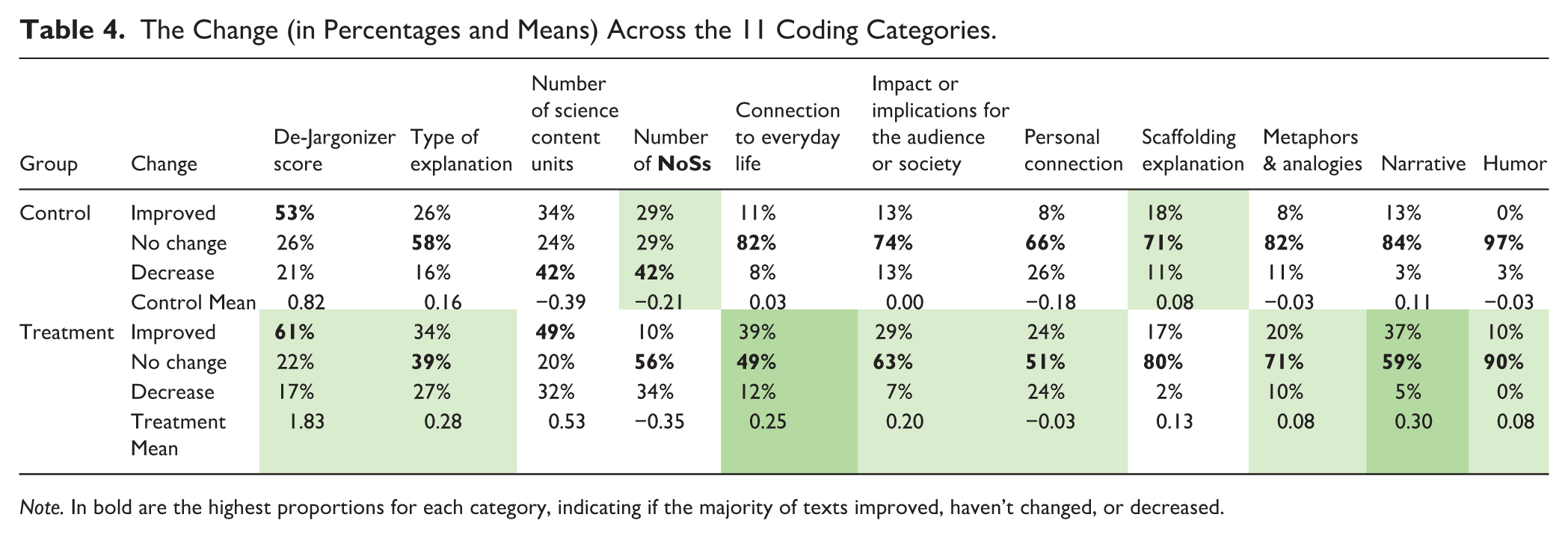

RQ2 aimed to measure whether the GenAI feedback intervention resulted in an overall improvement in the texts’ use of SciComm strategies; this evaluation was done by the researchers. The overall text scores showed a significant improvement in the treatment group (

The Change (in Percentages and Means) Across the 11 Coding Categories.

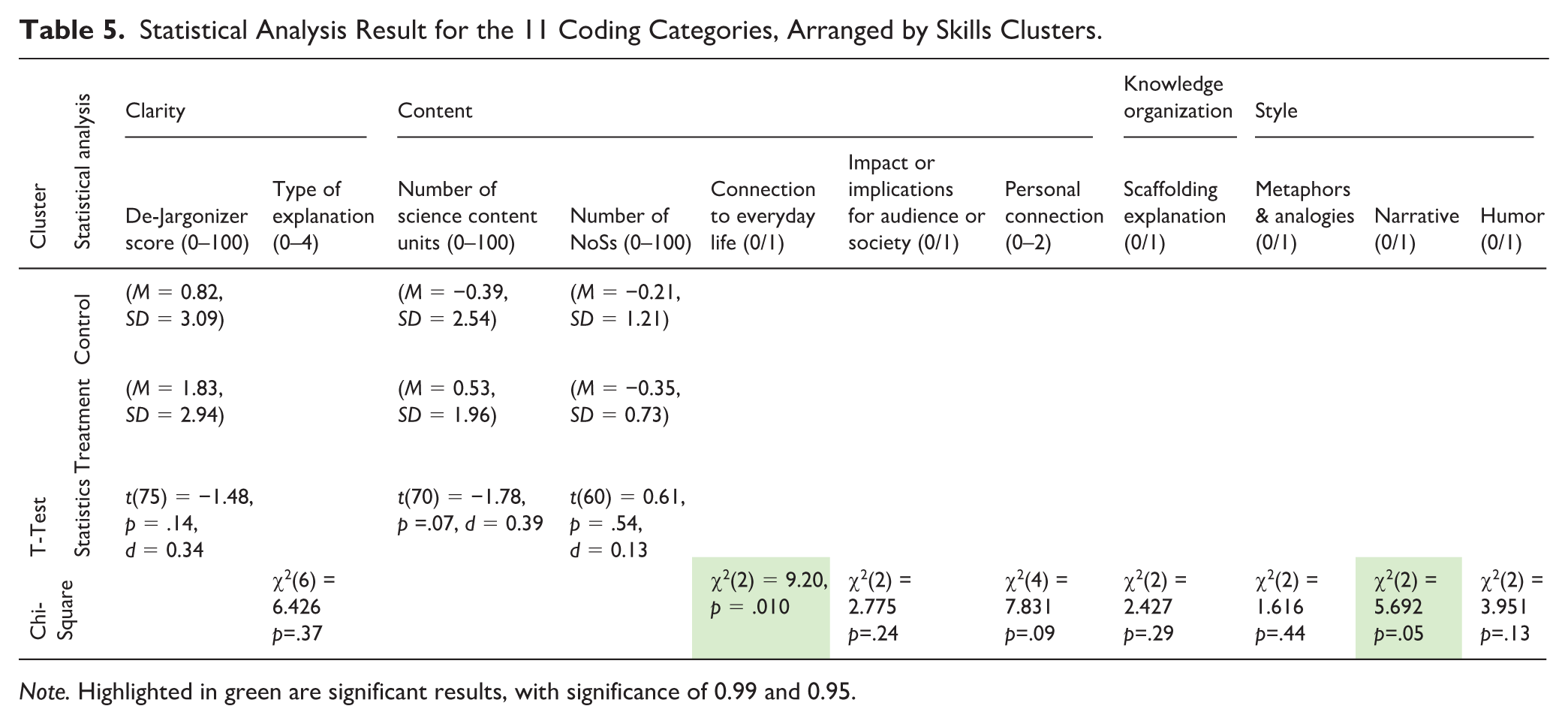

RQ3 examined which SciComm skills, represented by the different coding categories, were affected by the GenAI feedback, to support our understanding of GenAI's potential to support SciComm practice. The results of the statistical tests for each category show that only two categories improved significantly between the pre- and post-texts and across groups: “Connection to everyday life” and “Narrative” (Table 5).

Statistical Analysis Result for the 11 Coding Categories, Arranged by Skills Clusters.

Following is an in-depth description of the results for each category, collated by their clusters:

Clarity

Content

Knowledge Organization

Style

Discussion

The increasing influence of scientific and technological advancements on policy, societal norms, and daily life underscores the critical importance of communicating complex scientific concepts clearly and compellingly to nonexpert audiences. Despite this growing necessity, formal science communication training is largely absent from most academic curricula. This gap has led to the development of specialized SciComm programs designed to equip scientists with essential tools for clarity, engagement, and audience awareness. However, issues of accessibility and limited resources mean such training is not universally available. Simultaneously, the rapid emergence of GenAI presents new possibilities for enhancing accessibility and improving communication products. This dual landscape—limited access to training and the potential of GenAI—creates a fertile ground for exploring GenAI as a tool to support scientists’ SciComm endeavors. It also highlights the urgent need for empirical research into whether and how GenAI can effectively support these efforts.

This study’s primary contribution is an empirical examination of GenAI’s potential to enhance SciComm products by providing targeted feedback. Analyzing texts written by scientists before and after receiving computerized feedback during a training program, using a validated SciComm assessment rubric, allowed us to measure the change in the texts’ use of SciComm principles and strategies. Building on previous work that demonstrated gains in foundational SciComm competencies like clarity and audience awareness by scientists participating in conventional face-to-face training programs (Barel-Ben David & Baram-Tsabari, 2021; Rakedzon & Baram-Tsabari, 2017), this study expands the lens to evaluate whether and how AI-assisted feedback can facilitate these improvements.

Our findings indicate that participants in the treatment group showed a higher tendency to prefer the revised versions of their texts created with GenAI assistance. This observation aligns with current research highlighting users’ heuristics and perceptions regarding the quality of GenAI-generated texts (Arce-Urriza et al., 2025). It also highlights the need to foster “good working habits” (Hendriks et al., 2025) that promote critical and responsible use of GenAI in SciComm and mitigate aura effects.

From a SciComm assessment perspective, participants in both groups significantly reduced their overall use of jargon following feedback, as evidenced by improved De-Jargonizer scores. This reflects a concrete gain in clarity-related skills, consistent with previous findings that simplifying technical language is among the more accessible outcomes of SciComm interventions (Barel-Ben David & Baram-Tsabari, 2021; Rakedzon & Baram-Tsabari, 2017).

Notably, the most significant findings, especially in light of previous research, were the marked increases in participants’ assertion of relevance (connecting scientific concepts to everyday life) and their use of narrative structures within the treatment group. These improvements indicate enhanced audience awareness and storytelling capabilities—skills central to effective science communication (Dahlstrom, 2014; Illingworth & Allen, 2016; Mercer-Mapstone & Kuchel, 2017; National Academies of Sciences, Engineering, and Medicine, 2017).

When combining all assessment categories into overall text scores, the outcome demonstrates a clear and significant improvement in the SciComm strategies applied in the treatment group’s post-texts, suggesting that our GenAI-enhanced intervention evoked more use of SciComm strategies. However, a nuanced evaluation revealed that not all outcomes improved equally. Consistent with previous work, “clarity” remained the primary cluster showing significant improvement, while more advanced clusters, such as “knowledge organization,” did not exhibit similar changes. Interestingly, we observed a positive change in the “content” and “style” clusters within the treatment group, a shift not previously noted in SciComm training evaluation studies. This improvement in what Baram-Tsabari and Lewenstein (2017) referred to as “intermediate and advanced” SciComm skills suggests the added value GenAI could contribute to communicating science in a more accessible and effective way.

Despite being essential components of SciComm training curricula, these advanced elements demand more than just conceptual understanding; they require creative application and sustained practice. For instance, while the use of everyday-life connections increased in the treatment group, the incorporation of humor and metaphor showed minimal to no gains. As noted in the Data Collection section, our prompt design did not instruct the AI to enhance specific rhetorical or SciComm strategies. This open-ended approach may partially explain the limited observed changes in elements like humor or metaphor, highlighting how prompt specificity can shape GenAI-mediated feedback. Nonetheless, these findings suggest that while participants were exposed to the importance of these strategies, integrating them into writing remains a higher-order skill. Such skills were not readily invoked by GenAI feedback alone and likely require more iterative learning and deeper engagement. This pattern aligns with earlier observations (Baram-Tsabari & Lewenstein, 2017) that SciComm skills exist along a spectrum of complexity. Mechanical aspects of communication—such as avoiding passive voice or reducing jargon—are often easier to adopt than abstract or creative elements like audience framing or storytelling (Rakedzon & Baram-Tsabari, 2017; Barel-Ben David & Baram-Tsabari, 2021). The use of assessment rubrics and coding categories was crucial in capturing these higher-order competencies, distinguishing them from the more technical, easily quantifiable features analyzed via automated tools.

Our study builds on a powerful exercise used in SciComm training, the “Half-life” exercise. Although this exercise is originally done orally, in pairs, we adapted it to a written format to address the scalability challenge SciComm training programs face. Focusing on written communication is justified by its accessibility and scalability for scientists. Whether through social media, news media, or other platforms, writing enables researchers to reach a far larger audience than face-to-face interactions. Therefore, writing remains a critical skill for public engagement, particularly in digital spaces. Previous work on SciComm pedagogy suggests that while oral formats promote immediacy and adaptability in audience interaction, written formats enhance opportunities for reflection and precision in framing (Baram-Tsabari & Lewenstein, 2017; Illingworth & Allen, 2016). Thus, the written version retains the exercise’s focus on audience-centered simplification but emphasizes clarity and narrative structure over real-time delivery. This study’s focus on written outputs acknowledges this and highlights the need for future research on skill transferability across different communication modalities.

To gain a deeper understanding of the observed shift in the SciComm strategies used and their actual impact on the texts’ SciComm quality, future research should involve non-scientists in rating the pre- and post-texts. This would show how the changes in the deployment of SciComm strategies made a text more engaging, interesting, and clear from the authentic target audience’s perspective. While this study provides empirical evidence for the improvement of SciComm products as assessed by scientists (self-assessment) and SciComm researchers, it lacks the direct perspective of the target audience. Such future examination would complement the current study design and offer insight into the impact of applying different SciComm strategies from the audience’s own perspective.

Although this study focuses on the practical effects of GenAI feedback on the use of SciComm strategies, the broader ethical implications of GenAI use warrant attention. These include concerns about authorship and transparency in AI-assisted writing (van Dis et al., 2023), the reproduction of biases embedded in training data (Bender et al., 2021), and the substantial environmental costs associated with training and operating large language models (Strubell et al., 2020). Acknowledging these issues is essential for the responsible integration of GenAI into science communication research and practice. Ultimately, integrating these tools requires critical and responsible use by scientists, as noted by Lin (2023, 2025), and scientists need to cultivate “good working habits” regarding when and how they use GenAI in their workflows (Hendriks et al., 2025). Future work should therefore complement empirical evaluations with ethical analyses that address fairness, accountability, sustainability, and the behavioral norms shaping effective GenAI-mediated communication.

Limitations

While our triangulation approach enhanced the robustness of this study, several limitations must be acknowledged. First, despite high intercoder reliability, subjective judgments inherent in manual coding categories remain a potential source of variability. Second, automated readability tools like the De-Jargonizer cannot fully account for semantic nuance or contextual meaning, which may lead to inaccurate assessments of clarity. For instance, words like “values” or “positive trend” may be technically common but carry field-specific interpretations that could confuse lay readers (Somerville & Hassol, 2011). To mitigate these limitations, we used both manual and automated methods to enhance validity. Third, the low rate of significant results suggests a potential sample size challenge. Given the significant overall results, a larger sample might reveal more significant or more nuanced results. Finally, the long-term retention of the observed improvements remains unknown and warrants further investigation.

Conclusions

Our results indicate solid potential for integrating GenAI support into SciComm training and content production, offering more accessible ways to provide feedback to scientists. When compared to previous work using the same rubric that showed improvement primarily in “basic” SciComm skills like jargon reduction (Barel-Ben David & Baram-Tsabari, 2021), GenAI feedback in this study appears to support the increased deployment of more advanced SciComm skills, such as the use of narrative structure, thereby increasing the potential of making texts more engaging for readers. In conclusion, SciComm training programs should acknowledge the new reality of GenAI and strategically incorporate evidence-based AI tools, while also managing participants’ expectations and concerns about their use. Ultimately, a strategic blend of foundational instruction and advanced skill-building, potentially augmented by GenAI, may best equip scientists to meet the growing demand for effective public engagement and also address the training scalability challenge. That is, because GenAI has the potential to support the application of a larger variety of SciComm strategies when writing for non-scientists, scientists who have undergone training can get this support at hand and on demand, which will hopefully support their communication efforts and may increase how often they communicate.

Footnotes

Ethical Approval

This study was reviewed and approved by the Behavioral Sciences Research Ethics Committee of the Technion—Israel Institute of Technology (IRB approval number: 2023-002) on 09/01/2023.

Informed Consent

Participation in the study was limited to individuals who provided signed informed consent, in accordance with ethical guidelines approved by the Behavioral Sciences Research Ethics Committee.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Minerva Stiftung and the VW Foundation (Az. 99994).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statements

Data were stored in a secure (accessed only by the researchers) Google Sheet with timestamps and anonymized participant IDs. Any identifying personal information was removed prior to analysis in accordance with data privacy protocols. Nonetheless, the data set contains confidential research findings (as indicated by participants during consent); these texts were therefore excluded from public datasets but included in aggregate analyses.