Abstract

Scientists increasingly use generative artificial intelligence (GenAI) to aid in science communication tasks due to limited time and resources. GenAI can function as a writing assistant, idea generator, or collaborative tool. While it offers promising support, it is crucial to address potential pitfalls, such as misinformation due to its stochastic nature, dependency on prompt specificity, and the risk of reproducing biases and stereotypes. To mitigate these issues, scientists should be trained in competencies that foster ethical and effective interactions with GenAI, ensuring they develop what we call “Good Working Habits” for science communication with GenAI.


Scientists, as agents in science communication, are expected to carry a substantial load and responsibility in communication efforts. While scientists are authentic informants about scientific issues and scientific methods, and are highly trusted by members of the broader public (Fiske & Dupree, 2014), communication is usually not part of their professional training. Science communication training for scientists aims to foster scientists’ practical skills in and reflexivity about communicating science and, beyond this, their knowledge of and critical reflection on tools that can support their communication endeavors (Lewenstein & Baram-Tsabari, 2022). In this paper, we focus on generative artificial intelligence (GenAI) as a tool in scientists’ science communication endeavors and discuss how science communication training can support scientists in working with and reflecting on GenAI to communicate (their) science.

The potential for using GenAI in communication lies in its ease and accessibility. Anyone with an internet connection can now generate human-like text and speech, images, music, video, and even code. GenAI tools based on large language models (LLMs) and AI text-to-image generators are trained on vast datasets of either natural-language text or images and are usually fine-tuned using human feedback (Dwivedi et al., 2023; Riley & Mason-Wilkes, 2023). Uses of GenAI reach far beyond what is publicly available through chatbot-based applications. Within science, GenAI is already widely used, both for doing science (Fecher et al., 2025; Hosseini et al., 2023; Pividori & Greene, 2024) and for communicating it (Biyela et al., 2024; Henke, 2024; Holford et al., 2023; Schäfer, 2023; Tatalovic, 2018). However, the extent to which GenAI is used to support scientists’ science communication should be carefully weighed (Biyela et al., 2024), and scientists should be trained in using GenAI responsibly (Alvarez et al., 2024). This is important because quality standards such as accuracy, accessibility, or transparency (Fähnrich et al., 2023) apply to any science communication, regardless of how it was generated.

In this paper, we explore the double-edged nature of GenAI in science communication, addressing both its unique benefits for scientists’ communication and its pitfalls. We argue that while GenAI can serve as a valuable tool, its effective use requires specific competencies and critical awareness among scientists. We therefore introduce “Good Working Habits” of using GenAI, meaning both practical skills in using the technology to achieve high-quality results (Ways of Doing) and reflective, critical thinking about whether GenAI is appropriate for achieving communication aims and about potential shortcomings in its outputs (Ways of Thinking). By “Good Working Habits,” we do not imply a normative goal; rather, we refer to practices that help scientists reach their communication goals while using supportive tools such as GenAI. In the following sections, we discuss what the two facets of Good Working Habits might entail and how science communication training can support scientists in using GenAI.

Good Working Habits As Ways of Thinking

Being Aware of Inaccuracies Produced by GenAI

Although AI-generated outputs are often highly comprehensible and thus credible to many audiences (Spitale et al., 2023), the way they are generated is incompatible with the epistemic values of science (such as accuracy, objectivity, or reproducibility; see Allchin, 1999), for two reasons:

First, GenAI output is highly dependent on training data, and current tools are mostly trained on digitally available text, of which scientific literature (and, even less so, science communication) likely makes up only a tiny fraction. Furthermore, quality criteria inherent to science are not yet applied (e.g., a preprint is treated the same as a peer-reviewed paper). As a result, GenAI tools may inaccurately report on new scientific knowledge in cases in which (a) a previously established theory has recently been refuted, (b) new research has called a previously established scientific claim into question, or (c) a phenomenon has only recently come under scientific scrutiny.

Second, GenAI does not possess knowledge. It generates outputs based on linguistic likelihood, which may lead LLMs to reproduce widely circulated scientific myths (the “stochastic parrot”; Schäfer, 2023). LLMs may also create misinformation by “hallucinating” knowledge when there is little basis in the training data for generating an answer (Löser & Tresp, 2023; Teubner et al., 2023). Moreover, ChatGPT seems to display a tendency to produce balanced accounts (Teubner et al., 2023), which may present a scientific consensus and marginal positions as equally weighted and thus bias people’s understanding of science (Boykoff & Boykoff, 2004). Such inaccuracies can result in misinformation that echoes conspiracy theories or strengthens prevalent misconceptions.

Consequently, scientists must learn to critically reflect on the scientific accuracy of AI-generated answers (Chavanayarn, 2023) and on their adherence to quality criteria for science communication. One strategy is to work with at least two different AI tools for each task, so that each tool’s output can be checked against the other’s under the scientist’s supervision. Another Good Working Habit could be to supply most of the content oneself and use GenAI only for the rhetorical problem space (Cress & Kimmerle, 2023), that is, for improving comprehensibility or adapting a text to target groups. This strategy also avoids the danger of relying solely on AI-generated information based on unknown training data. GenAI could even take on a rudimentary role as a personalized press office for a scientist or lab: Customized chatbots can be trained with research articles to answer an inquiring user’s questions about them (Valle & Barone, 2024). However, their output must be consistently checked for accuracy.

Being Aware of Misunderstandings Produced by GenAI

In many science communication training programs, a leading concept is “knowing your audience” and adapting your message accordingly (Baram-Tsabari & Lewenstein, 2017). Central to this is de-jargonizing language, that is, translating highly specialized terminology (essential for communication within science) into easy-to-understand language for people outside academia. There have been several approaches to identifying jargon and measuring its occurrence in science communication texts (Rakedzon et al., 2017; Sharon & Baram-Tsabari, 2014). GenAI can effectively help scientists revise texts to reduce their difficulty and jargon (Pividori & Greene, 2024).

However, this raises several problems. First, it is unclear how GenAI decides which words are jargon and which are not. For example, “theory” might mean something different in scientific and everyday language. Second, some jargon has a highly discipline-specific meaning. For example, “energy” and “hypothesis” are conceptualized differently in biology, physics, and psychology (Donovan et al., 2015). Because GenAI relies only on the likelihood of words occurring in a text, it may not correctly identify the disciplinary meaning of a word it flags as jargon. Third, reducing jargon requires careful consideration. Dropping jargon in favor of language more familiar to public audiences might lead to oversimplification, as the specificity and exactness with which jargon refers to underlying concepts is lost when a technical term such as “osmium” is replaced with “a very dense metal.”

Moreover, while reducing jargon may increase comprehensibility, it may also lower audiences’ perceptions of expertise (Thon & Jucks, 2017; Zimmermann & Jucks, 2018). It is important for researchers, especially but not exclusively female researchers, to establish themselves as experts, and being perceived as a trustworthy topic expert matters even more for topics that are contested in societal discourse (Bromme & Hendriks, 2024). Therefore, eliminating all jargon from science communication might not be a successful strategy in every situation.

One strategy for improving the comprehensibility of science communication while retaining (but explaining and enriching) some jargon is the use of examples, comparisons, or metaphors. However, an inadequate example or metaphor might evoke common misconceptions that hinder the adoption of scientific explanations (Shtulman & Legare, 2020). For example, comparing genes with blueprints or brains with computers does not foster an adequate understanding of the underlying scientific concepts; instead, it fosters mechanistic thinking or even associations with intelligent design (Pigliucci & Boudry, 2011). When suggesting examples or metaphors, GenAI will likely fall back on the analogies and metaphors most often used in everyday speech. In addition, because GenAI lacks a conceptual understanding of the communicated idea, it is not yet able to identify the underlying principles and structures of a problem in order to choose a more adequate and novel metaphor. This problem also applies to AI-generated images of scientific phenomena (e.g., bacteria).

To account for these problems, science communication training should address how the use of jargon, examples, and metaphors affects audiences. For example, scientists should reflect on prioritizing conceptual correctness over comprehensibility and be aware of possible negative side effects of simplification, such as evoking misconceptions or endangering their trustworthiness as experts. Training should discuss the ambiguity of AI’s defaults regarding clarity and readability, and examples and metaphors suggested by GenAI should be subjected to critical scrutiny and reflection. A conceptual Good Working Habit might be to ask GenAI tools for feedback on jargon, identify the essential terms, and practice different ways of explaining them rather than omitting them.

Being Aware That GenAI May Reproduce Bias and Harmful Stereotypes

Beyond providing an “engaging and customized experience” for users (Biyela et al., 2024), GenAI has the potential to promote fairness in science communication by providing tools that lower barriers to access (Malone, 2024). One user might eliminate language barriers by translating text into their audience’s first language; another might adapt engagement for an audience with a visual or hearing impairment by using GenAI that can translate between modalities (text, speech, and visuals). GenAI can thus help scientists make their science communication more accessible to people with diverse backgrounds, and thereby fairer, by increasing epistemic justice.

Despite this potential for fair access to science communication through GenAI, there are some ethical concerns. First, the training data of current LLMs in languages other than English (e.g., Hebrew or German) are most likely much smaller than the English training data (Löser & Tresp, 2023), which may affect the accuracy and quality of results. Second, scientists who use GenAI to learn about specific target audiences and adapt their communication to them must be aware that GenAI is prone to reproducing language, cultural, ability, or gender bias, again owing to the composition of its training data (Biyela et al., 2024; Chavanayarn, 2023; Chen et al., 2024; Dwivedi et al., 2023). While the former issue may be solved with time, the latter has ethical implications. For example, if a scientist addresses a specific target group based on superficial or biased characteristics that GenAI had previously suggested, this may not only reflect negatively on the communicating scientist but also evoke distrust in science communication.

This makes it even more important to include ethics and justice issues in science communication training (Lewenstein & Baram-Tsabari, 2022). Using GenAI as an interaction partner, despite its limitations, might support training on these ethical issues. Having GenAI take on the roles of different target persons, asking it for feedback on text versions from these perspectives, or asking it to produce several versions of a text in different styles and critically reviewing them might help reveal our own biases. Furthermore, training programs should increase scientists’ awareness that comprehensibility is not all there is to accessibility and, ideally, encourage trainees to actively listen to and learn from their audiences instead of relying on unoriginal or even harmful stereotypes.

Good Working Habits As Ways of Doing

Learning How to Prompt

At the core of Good Working Habits as Ways of Doing is knowing how to use GenAI to achieve satisfactory results for science communication, and prompts are central to achieving this: Prompt engineering is a way to improve the outcomes of GenAI by adjusting one’s language input to increase the correctness and efficiency of answers (Teubner et al., 2023). While prompting is a skill that must be acquired to use GenAI in any domain (and several guides exist; Giray, 2023; White et al., 2023), science communication poses some additional challenges.

Most fundamentally, users must be aware that prompts can be directed at the content problem space (what to communicate) or the rhetorical problem space (how to communicate) (Cress & Kimmerle, 2023). Importantly, prompt engineering is an iterative process. For example, science communication trainers could recommend testing prompts iteratively, first addressing the content problem space (e.g., “summarize a central theory that informs my research”) and then the rhetorical problem space, by adding a style descriptor (e.g., “make it funny”) or a genre (e.g., “in the form of a poem”). Once simple prompts are mastered, more advanced strategies could be taught, such as including examples of science communication strategies or quality criteria in the prompt (Fähnrich et al., 2023; Taddicken et al., 2024). Another option is formulating “priming prompts,” that is, entering examples into the chat and asking GenAI to generate answers in exactly that style (or with a specific person as speaker or target person, etc.). These prompting techniques are useful not only for generating text or visuals; they can also be incorporated when prompting GenAI to give feedback or engage in conversation with the user.
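To make the iterative sequence concrete, the Python sketch below only assembles the prompts a scientist might enter one after another; it does not call any GenAI service. The class name `PromptPlan` and the wording of the follow-up prompts are hypothetical illustrations, not a recommended template.

```python
from dataclasses import dataclass, field


@dataclass
class PromptPlan:
    """Hypothetical helper: orders prompts from content to rhetoric."""

    content_prompt: str  # content problem space: what to communicate
    rhetorical_refinements: list[str] = field(default_factory=list)  # how to communicate

    def steps(self) -> list[str]:
        # Step 1 addresses the content problem space only.
        prompts = [self.content_prompt]
        # Each later step adds one rhetorical descriptor (style or genre),
        # so the scientist can check each intermediate answer before refining it.
        for refinement in self.rhetorical_refinements:
            prompts.append(f"Revise your previous answer: {refinement}.")
        return prompts


plan = PromptPlan(
    content_prompt="Summarize a central theory that informs my research",
    rhetorical_refinements=["make it funny", "write it in the form of a poem"],
)
for number, prompt in enumerate(plan.steps(), start=1):
    print(f"Step {number}: {prompt}")
```

Keeping each rhetorical refinement as a separate step mirrors the iterative advice above: the content of each intermediate answer can be checked before its style is changed.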

Learning how to prompt is a vital basis for scientists who want to use GenAI for communicating science. However, it requires critical reflection on both the content problem space and the rhetorical problem space: GenAI may echo others’ styles (especially when priming prompts are used) to the point of homogenizing communication styles. As “LLMs are algorithms that compress the input data and reproduce it in an approximate manner” (Teubner et al., 2023, p. 99), AI-generated outputs may, for now, lack innovation, novelty, and creativity (Dwivedi et al., 2023) and produce effects similar to “groupthink.” Science communication training should, therefore, reflect with scientists on their personal viewpoints about a topic and the degree to which they aim to include personal authenticity and style in their science communication, which may also benefit their perceived reliability and trustworthiness from an audience perspective (Seidel et al., 2023; Zhang & Lu, 2024).

GenAI As Co-Trainer

Beyond formal science communication training, scientists can also employ GenAI as a training aid in their own communication practice. Scientists can ask GenAI to provide personalized feedback (Ruwe & Mayweg-Paus, 2024) or to incorporate metacognitive prompts (Stadtler & Bromme, 2008), such as reminders to speak slowly, to leave out technical terms, or to ask the audience questions during a talk.

Moreover, science communication trainers can design lessons that incorporate GenAI, either by providing predetermined prompts geared to specific science communication tasks or by including tasks that use GenAI in chatbot form (Rakedzon et al., 2023). Another method would be to reflect collectively on the quality of AI-based science communication, with the aim of developing classroom standards for good-quality science communication. In doing so, teaching Good Working Habits as Ways of Doing and Ways of Thinking can easily be combined.

Using GenAI as a Science Communication Toolbox

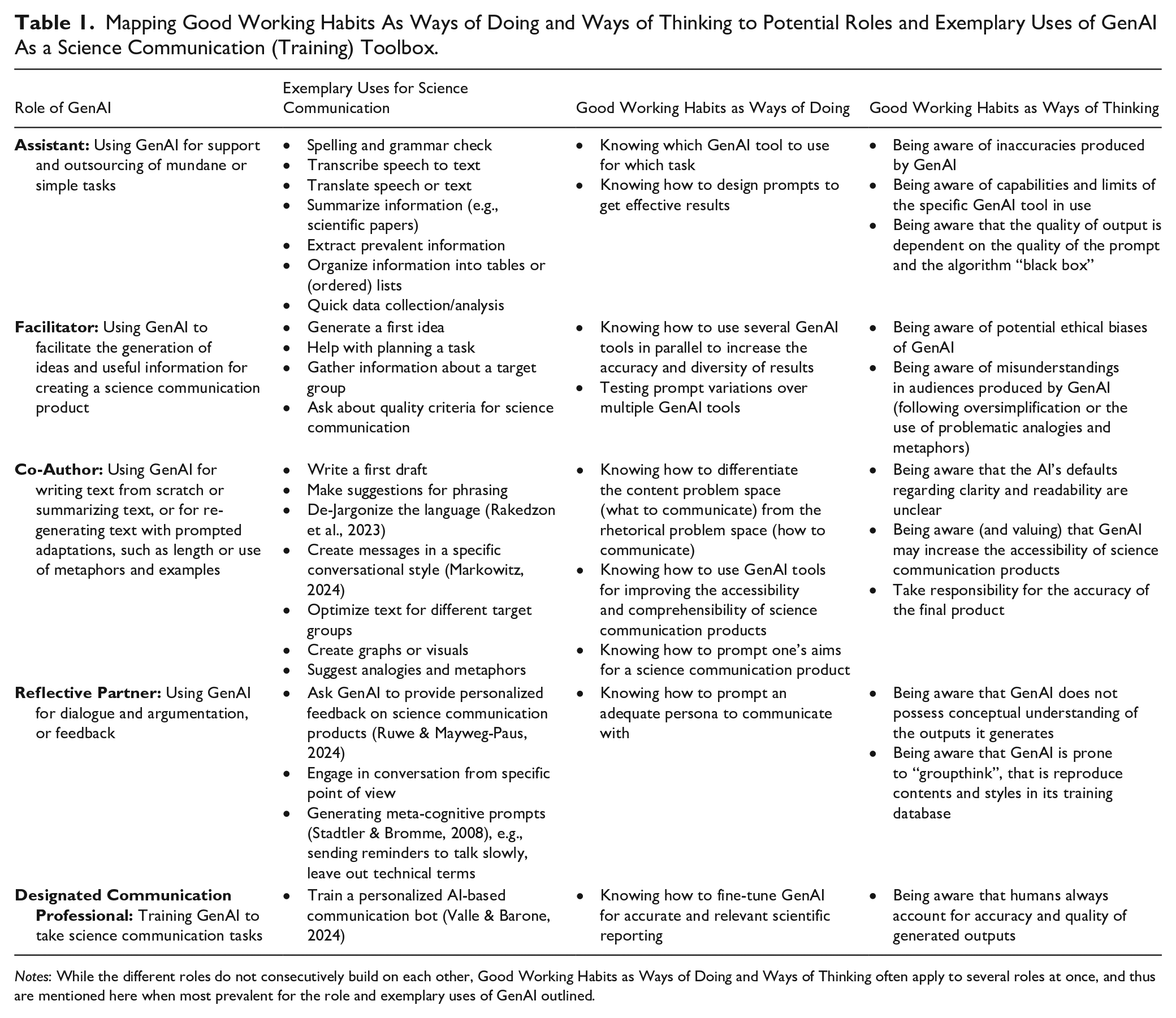

Despite the limitations discussed above, GenAI is, first and foremost, a tool that can support communication tasks. LLMs can be used to summarize or translate text, produce lists and tables, write code, and even engage in conversation (Henke, 2024). First, GenAI can relieve users of tedious tasks, such as producing lists and tables, allowing them to focus on more difficult and creative tasks (Löser & Tresp, 2023); in this role, GenAI acts as an Assistant in creating science communication products. Second, a scientist may use GenAI as a Facilitator for generating and testing new ideas, for example, to get past the proverbial blank page. Third, a researcher could choose to form a “hybrid team” (Dwivedi et al., 2023, p. 8) with GenAI, which then acts as a Co-Author of their science communication efforts. For example, when explaining scientific concepts, GenAI can be prompted to create messages in a specific conversational style and to follow explanatory sequences (Li et al., 2024; Markowitz, 2024). Fourth, GenAI can be consulted as a Reflective Partner: it lends itself well to conversation, whether for gathering feedback as one would from a personalized tutor (Ruwe & Mayweg-Paus, 2023) or for gathering information about target audiences. Finally, scientists may train GenAI-based chatbots as Designated Communication Professionals that answer questions based on their scientific papers (Valle & Barone, 2024).

In sum, GenAI holds potential for scientists’ science communication endeavors in its roles as assistant, facilitator, co-author, and reflective partner. Table 1 provides an overview of these roles and the associated Good Working Habits, based on other work that elaborates on the potential of GenAI for science communication in general (Biyela et al., 2024; Henke, 2024; Holford et al., 2023; Schäfer, 2023; Tatalovic, 2018).

Table 1. Mapping Good Working Habits As Ways of Doing and Ways of Thinking to Potential Roles and Exemplary Uses of GenAI As a Science Communication (Training) Toolbox.

Note: The different roles do not build on each other consecutively. Because Good Working Habits as Ways of Doing and Ways of Thinking often apply to several roles at once, each habit is listed where it is most relevant to the role and exemplary uses of GenAI outlined.

Discussion

As scientists increasingly participate in public engagement activities but are usually short on resources, GenAI may seem a great tool to help with communication. In this paper, we have explored GenAI as a versatile science communication tool. Because GenAI can handle tasks such as summarizing, translating, and creating lists, as well as support idea generation, collaborative writing, conversation, and tailored feedback, users may focus on the creative and complex content work of science communication. However, GenAI also has drawbacks. These drawbacks lie at the core of GenAI as a technology, which is stochastic rather than epistemic by design: it reproduces text or images based on probabilities derived from its training data but cannot tell truth from lies, let alone scientific consensus from pseudoscientific myth. Some of these issues may be solved in the future with evolving models and algorithms. It may become possible for scientists to fine-tune a model on scientific databases (research papers and previous science communication products) or to use retrieval-augmented generation (RAG), which combines retrieval of relevant documents at query time with text generation, to improve the quality and accuracy of its outputs.
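As a rough illustration of the retrieval step in RAG, the Python sketch below ranks a scientist’s own texts by simple word overlap with a question and builds a prompt that restricts the model to those sources. It is a toy stand-in under stated assumptions: real RAG systems typically use embedding-based search, the function names and placeholder texts are hypothetical, and no model is actually called.

```python
import re


def tokenize(text: str) -> set[str]:
    # Lowercased alphabetic word tokens (toy stand-in for embedding-based matching).
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question: str, passages: list[str], k: int = 1) -> list[str]:
    # Rank passages by the number of words they share with the question;
    # sorted() is stable, so passages with equal scores keep their original order.
    q_words = tokenize(question)
    ranked = sorted(passages, key=lambda p: len(q_words & tokenize(p)), reverse=True)
    return ranked[:k]


def build_grounded_prompt(question: str, passages: list[str], k: int = 1) -> str:
    # Insert the retrieved passages into the prompt and tell the model to stay within them.
    sources = "\n".join(f"- {p}" for p in retrieve(question, passages, k))
    return (
        "Answer using only the sources below. "
        "If they do not cover the question, say so.\n"
        f"Sources:\n{sources}\n"
        f"Question: {question}"
    )


# Hypothetical placeholder texts standing in for a scientist's own abstracts.
passages = [
    "Hypothetical abstract on jargon and perceived expertise in online science texts.",
    "Hypothetical abstract on metaphors in biology teaching.",
]
question = "How does jargon affect perceived expertise?"
print(build_grounded_prompt(question, passages))
```

Grounding the prompt in the scientist’s own texts narrows what the model draws on, but it does not guarantee accuracy; the output still has to be checked.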

For now, we argue that science communication should follow an epistemic rationale and that it fundamentally relies on scientists’ knowledge and expertise as a unique form of quality control. Scientists therefore need “Good Working Habits” to use GenAI effectively for science communication. That is, beyond knowing how to use prompts to improve outputs, scientists need competencies specific to science communication (that is, attitudes, knowledge, practical skills, and a reflexive mindset) that give them the reflective and critical perspective needed to evaluate GenAI outputs and suggestions. At their core, these competencies include centering communication on target audiences instead of adhering to a deficit model, viewing science communication as co-constructive (and sometimes even co-productive), and being transparent about the scientific process and the value judgments within it to build trust (Lewenstein & Baram-Tsabari, 2022).

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the following grants—Junior Research Group “Communicating Scientists: Challenges, Competencies, Contexts (fourC),” funded by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) under Germany’s Excellence Strategy—UP 8/1; Minerva Fellowship Program funded by the German Federal Ministry for Education and Research; Niedersächsisches Vorab, Research Cooperation Lower Saxony—Israel. Lower Saxony Ministry for Science and Culture (MWK), Germany [grant no. 11-76251-2345/2021 (ZN 3854)].

AI Statement

Initial brainstorming on ways to incorporate GenAI in science communication training, as well as on the title, was done with GPT-4o. Parts of this manuscript were edited for language and clarity using GenAI (GPT-4o and Grammarly). All conceptual and theoretical ideas were developed entirely by the authors, who take full responsibility for the contents of this manuscript.