Abstract

Few studies have explored how science communication projects are evaluated and what impact they have. This study aims to fill this gap by analyzing the results of science communication projects carried out by academics. Drawing on the theory of change and evaluation models, possible results of science communication projects are conceptually distinguished at the levels of outputs, outcomes, and impacts. The study draws on a dataset of 128 science communication projects funded by the Swiss National Science Foundation from 2012 to 2022. Quantitative content analysis reveals few rigorous evaluation designs and a focus on reporting outputs, while outcomes and societal impacts are often neglected.

Keywords

Introduction

Recently, there has been increasing interest in measuring the impact of science communication in both research and practice (Jensen, 2014; Weitkamp, 2015). As science communication continues to play an essential role at the interface between science and society, understanding its effects and societal impact becomes more important for policymakers, researchers, and science communicators alike (Fecher & Hebing, 2021; Fu et al., 2016). Science communication is often associated with the goal of stimulating interest in science, promoting public engagement, or improving scientific literacy (Metcalfe, 2019; Weingart & Joubert, 2019). However, it can also have unintended and unexpected negative consequences, such as diminishing interest or trust in science (Watermeyer, 2012). Therefore, it is important to examine empirically whether science communication activities achieve their intended effects in practice (Jensen, 2019).

As governments around the world come under growing pressure to demonstrate that investments of public money in research have positive impacts on society, funding agencies increasingly expect academics to engage in science communication with the public and sometimes allocate their own budgets for this purpose (Hill, 2016; Palmer & Schibeci, 2014). Along with this, expectations for evaluation of public outreach and engagement are increasing (Fogg-Rogers et al., 2015). Although the importance of evaluating and measuring the effects of science communication has been emphasized, it is often neglected in practice (Jensen, 2019). Large-scale assessments of science communication projects based on project evaluations are relatively rare (for exceptions see, e.g., Fu et al., 2016; Ziegler et al., 2021), especially with regard to outreach activities carried out by academics.

This study aims to fill this gap by examining how academics evaluate their own science communication projects, if at all, and what exactly the effects of their projects are. Drawing on the theory of change and the idea of logic models, the effects of science communication are distinguished at the levels of outputs, outcomes, and impacts. Empirically, this study relies on a unique dataset of all 128 science communication projects funded by the Swiss National Science Foundation (SNSF) between 2012 and 2022. For a comprehensive meta-analysis of these projects, the SNSF granted access to academics’ project applications, final reports, and project data. A standardized quantitative content analysis was conducted to uncover gaps in evaluation practices and between promised and realized results.

Literature Review

The increasing importance of communicating about science and engaging the public has led funding agencies to invest significantly in science communication programs (Hill, 2016). More and more government funding agencies require academics to develop an outreach plan for communicating with lay audiences in addition to conducting excellent research (Brenninkmeijer, 2022; Palmer & Schibeci, 2014). As a result, academics are increasingly incentivized to communicate their research, for example, by appearing as experts in mass media or at public forums (Peters, 2021; Scheu et al., 2014). However, relatively few of them are trained in science communication (Ho et al., 2020; Rauchfleisch et al., 2021). As the science communication funding landscape expands and budgets rise, so do funders' expectations for demonstrating tangible impacts and accumulating evidence of effective science communication practices (Fogg-Rogers et al., 2015; Jensen & Gerber, 2020; King et al., 2015). One of the leading countries in this development is the United Kingdom, which introduced a standardized evaluation framework, the Research Excellence Framework (REF), in 2014 to guide a nationwide assessment of the societal impact of research (Hill, 2016). The San Francisco Declaration on Research Assessment (DORA), launched a decade ago, has stimulated numerous efforts around the globe to improve research evaluation and measure the real-world impact of research on society (Gagliardi et al., 2023). Consequently, policymakers, institutions, and funding agencies in various countries, including the Netherlands, Sweden, Italy, Australia, and the United States, require academics to assess the impact of their research not only in scientific terms but also in terms of societal impact (Fogg-Rogers et al., 2015; Jensen et al., 2022).

Academics as Science Communicators

Given the important role of individual academics in the science-society nexus, there is a growing body of research on academics as science communicators (Calice et al., 2023). Numerous studies have investigated academics’ motivations for communicating with lay audiences (e.g., Kessler et al., 2022; Rauchfleisch et al., 2021) or their understanding of public engagement (e.g., Davies, 2013; Watermeyer, 2012). Other studies have explored the need for and effectiveness of science communication trainings for academics (e.g., Ho et al., 2020; Rodgers et al., 2018). Research shows that academics have a rather broad understanding of public engagement (Davies, 2013; Watermeyer, 2012), ranging from “one-way” activities that focus on information sharing and education such as public lectures, representing a knowledge-deficit mind-set (e.g., Besley & Nisbet, 2013; Metcalfe, 2019), to “two-way” activities such as deliberative forums, open days, or science festivals (Calice et al., 2023; Ho et al., 2020). However, little is known about how academics subsequently self-evaluate the results and report on the impact of their outreach formats.

In the field of research evaluation, a distinction is often made between two kinds of impact:

Since funders increasingly require academics to assess and report both on the scientific and societal impact of their research (Brenninkmeijer, 2022), there is growing criticism that funders impose unrealistic expectations for societal impact, which may lead academics to “overclaim” (potential) impact in order to improve their chances of success in the fierce competition for third-party funding (King et al., 2015). This goes hand in hand with a development that some refer to as impact “inflation” or “utopianism” in grant applications (Chubb & Watermeyer, 2017) driven by increased pressures on academics to “marketize” impact. When it comes to reporting on the impact achieved, there is also a growing risk that self-reported evaluations of research—especially when disclosed to funders—may degenerate into a mere “success story,” while unachieved results are omitted or glossed over (King et al., 2015; Ziegler et al., 2021).

Evaluation of Science Communication

There is a long tradition in science communication research of studying the short- or medium-term effects of science communication messages, formats, or visuals on audiences (Besley et al., 2018), demonstrating a breadth of cognitive, attitudinal, emotional, behavioral, or physiological changes (e.g., König et al., 2023; Mede, 2022). Effects studies are often based on experiments or experimental surveys and attempt to isolate the effects of science communication on audiences (Mede, 2022), which often has the disadvantage of low external validity and generalizability to other contexts. In real-world science communication scenarios, the use of such controlled study designs is often not feasible. For assessing science communication in field settings,

Empirical studies regarding science communication evaluations in practice are scarce. Three types of studies can be found: First,

Two common findings stand out in these different studies: First, evaluation of science communication is considered important, but still not widespread, and is often based on self-reports (see Jensen, 2014; Phillips et al., 2018; Watermeyer, 2012). Typically, evaluations focus on easily countable indicators such as media coverage or visitor numbers (e.g., Bühler et al., 2007; Höhn, 2011; Weingart & Joubert, 2019). The emphasis on media attention is partly driven by funding bodies that attach importance to mass media coverage (Scheu et al., 2014; Weingart, 2022). Measurement of effects is often not robust, as sophisticated designs capable of capturing changes are rare (Ziegler et al., 2021). Second, the hoped-for effects on participants’ attitudes or behavior are often small, possibly indicating that the intended goals were too ambitious (Pellegrini, 2021).

Evaluation Stages

Since evaluations aim to attribute effects to specific science communication projects or interventions, they can draw on “logic models” that distinguish different stages of effects and make assumptions about logical pathways between the stages to achieve these effects (Friedman, 2008). Such models reflect assumptions from program theory and the theory of change (Clark & Taplin, 2012; Funnell & Rogers, 2011; see also Macnamara, 2018), which posit that a specific project or intervention will lead to certain anticipated and desired changes through a series of stages, at each of which data can be collected to measure change. This idea, widely used in public administration and research evaluation, has also proven fruitful for the evaluation of science communication, as it helps to disentangle and measure the communication effects of outreach activities at different stages (Friedman, 2008; Fu et al., 2016; Weitkamp, 2015).
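To make the idea of stages and pathways concrete, the following Python sketch represents a logic model as planned indicators per stage and checks which stages lack reported evidence. The three stage names follow the output/outcome/impact distinction used in this study; the class `LogicModel`, its method, and the example indicators are hypothetical illustrations, not a model used by the SNSF or by the projects analyzed.

```python
from dataclasses import dataclass, field

# Stage labels follow the output/outcome/impact distinction used in this study.
# The indicators below are hypothetical examples, not a fixed taxonomy.
STAGES = ("output", "outcome", "impact")


@dataclass
class LogicModel:
    """A minimal logic model: planned indicators per stage, plus reported evidence."""

    planned: dict[str, list[str]]
    reported: dict[str, list[str]] = field(default_factory=dict)

    def unevidenced_stages(self) -> list[str]:
        """Stages with planned indicators but no reported evidence, in pathway order."""
        return [
            stage
            for stage in STAGES
            if self.planned.get(stage) and not self.reported.get(stage)
        ]


# Hypothetical project: outputs are documented, later stages are not.
project = LogicModel(
    planned={
        "output": ["number of public lectures", "media coverage"],
        "outcome": ["knowledge gain among participants"],
        "impact": ["increased public engagement with the topic"],
    },
    reported={
        "output": ["number of public lectures", "media coverage"],
    },
)

print(project.unevidenced_stages())  # ['outcome', 'impact']
```

Walking the stages in order mirrors the assumption of a logical pathway: a gap at the outcome stage also leaves any claimed impact without an evidential link back to the activities.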

Compared with evaluation in other fields, the evaluation of communication arguably faces fundamental difficulties in capturing "change": Measuring communication effects is difficult, and scholars are often unable to establish direct links between exposure to communication and cognitive, attitudinal, or behavioral changes (Brinberg & Lydon-Staley, 2023). This is because communication or media effects are often indirect, conditional, transactional, and person-specific, and may occur with a delay (Valkenburg et al., 2016); hence, it is difficult to attribute causality to exposure to communication (Mede, 2022). Moreover, compared with other subfields of communication such as advertising, science communication is distinctive because it is characterized by the uncertainty and complexity inherent in science-related topics and is often directed toward a general public rather than a specific target group (e.g., Metag, 2017). Studies show that individuals differ strongly in their predispositions toward science (e.g., Schäfer et al., 2018) and that such predispositions are quite stable (Metag, 2017) and difficult to change through communication. Overall, these characteristics make evaluating science communication and measuring change inherently challenging. The challenge is greater still when responsibility for evaluating a specific, temporary science communication project lies with academics, who are not specifically trained in science communication or its evaluation and may not be familiar with evaluation models, methods, and metrics.

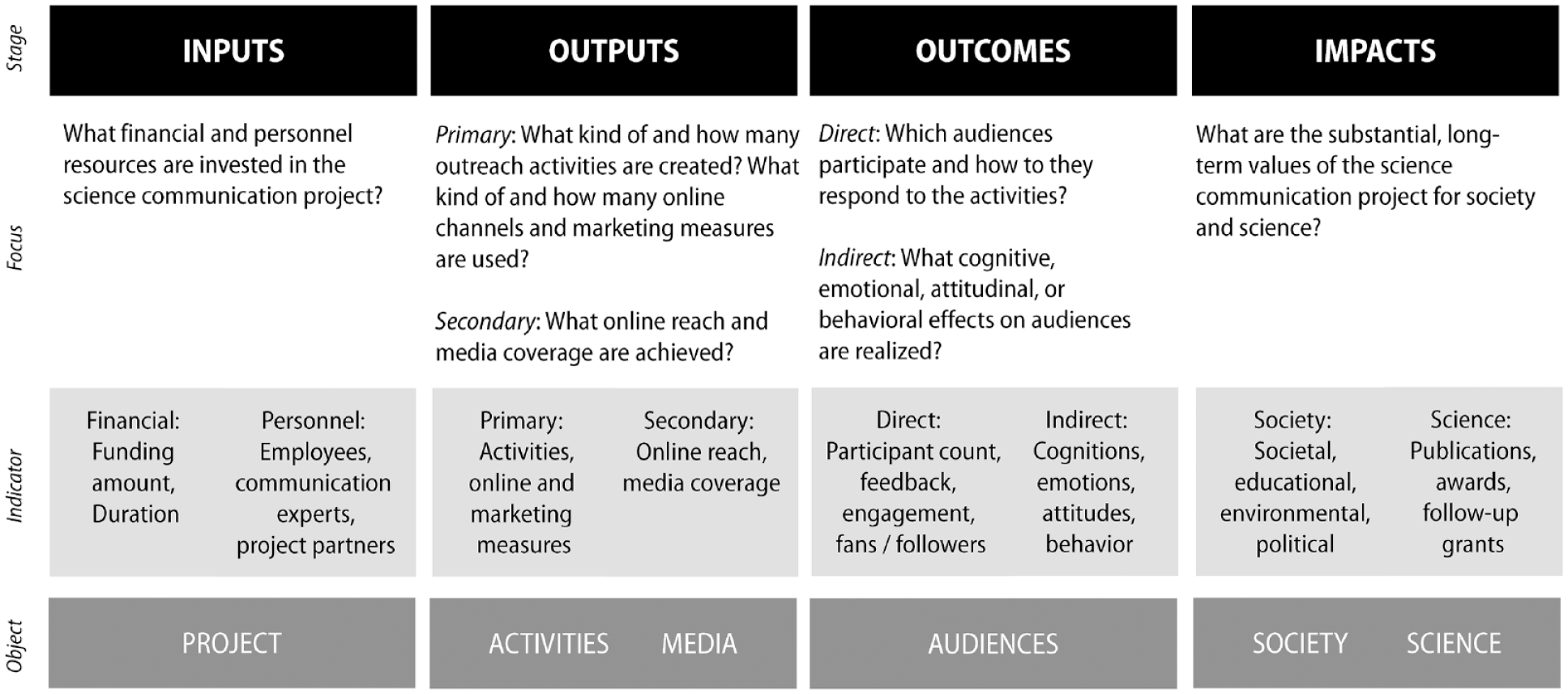

At their core, science communication evaluation models distinguish between four stages (Raupp & Osterheider, 2019; Volk, 2023; similarly Pellegrini, 2021):

Conceptual model for evaluation of science communication projects.

For each stage, the model presents the focus of evaluation and illustrates possible indicators and the object of evaluation (inspired by DPRG & ICV, 2011):

Evaluation Methods and Designs

Sound evaluation methods and designs are essential to record the effects of science communication projects along the above-mentioned stages (Jensen, 2019). The entire spectrum of qualitative and quantitative social science research methods can be used, including standardized surveys, semi-structured interviews or focus groups, observation of participants, experiments, eye tracking, or knowledge tests (e.g., Fu et al., 2016; Niemann et al., 2023; Pellegrini, 2021). Especially for evaluating interactive activities such as science festivals, a number of self-administered and informal feedback methods can be used, such as graffiti walls, snapshot interviews, before-and-after drawings, guestbooks, or feedback cards (Campos, 2022; Grand & Sardo, 2017). Moreover, content analyses of media coverage, as well as website or social media analyses, can be used to evaluate mediated formats of science communication. Different evaluation methods are typically combined in multimethod designs to measure science communication projects along different stages (Grand & Sardo, 2017).

Evaluation can take place before the outreach activity ("formative evaluation"), during the activity ("processual evaluation"), after the activity ("summative evaluation"), or both before and after it (pre- and post-test design) (Fu et al., 2016; Grand & Sardo, 2017). To reliably measure changes in participants' knowledge or attitudes, a pre- and post-test design that compares measurements taken before and after the activity is required. In practice, however, evaluation is more often carried out "summatively" (Ziegler et al., 2021); that is, visitors report after the activity whether they have learned something and must assess their own knowledge gain. Control groups, which would allow comparisons between people who have and have not been exposed to an outreach activity, are very rarely used in science communication evaluations (Pellegrini, 2021). That elaborate evaluation designs remain rare in practice is often attributed to a lack of time and budget or a lack of expertise among evaluators (Grand & Sardo, 2017; Jensen, 2019; Pellegrini, 2021). Lack of support for and interest in evaluation, as well as concerns about possible negative results, are further barriers (Sörensen et al., 2024; Volk, 2023).
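The difference between a summative self-report and a pre- and post-test design can be sketched in a few lines of Python. With measurements taken before and after the activity, each participant serves as their own baseline, so change can be computed rather than self-assessed. The scores below are invented for illustration, and the paired t statistic is only one common way to summarize such data; a full analysis would also report a p-value and an effect size and, ideally, include a control group.

```python
import math
from statistics import mean, stdev

# Hypothetical knowledge-test scores (0-10) for the same six participants,
# measured before and after an outreach activity (pre- and post-test design).
pre = [4, 5, 3, 6, 5, 4]
post = [6, 6, 5, 7, 5, 6]

# Paired differences: each participant serves as their own baseline.
diffs = [after - before for before, after in zip(pre, post)]
mean_change = mean(diffs)

# Paired t statistic: mean difference divided by its standard error.
t_stat = mean_change / (stdev(diffs) / math.sqrt(len(diffs)))

print(f"mean change = {mean_change:.2f}, t = {t_stat:.2f}, df = {len(diffs) - 1}")
# mean change = 1.33, t = 4.00, df = 5
```

A summative design that asks participants only afterwards whether they learned something yields no `pre` list at all, so neither quantity above can be computed.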

While there is no large-scale empirical evidence on the uptake of evaluation models for science communication, there are indications that several funding agencies (e.g., the Commonwealth Scientific and Industrial Research Organisation (CSIRO) in Australia or Science Foundation Ireland (SFI)) as well as some university communication departments (Sörensen et al., 2024) have adopted the idea of logic models for science communication evaluation. However, it remains unclear whether and how academics engaged in science communication projects evaluate their own efforts, whether they draw on such models, and what methods they adopt. Moreover, it is not known what evidence such evaluations generate about the effects of science communication at different stages. Thus, two research questions (RQs) were formulated:

Data and Method

To answer the research questions, this study analyzed a dataset comprising the full population of 128 science communication projects funded by the SNSF between 2012 and 2022. Switzerland has a competitive higher education system with world-renowned universities, and Swiss academics are quite active in the field of science communication (Rauchfleisch et al., 2021). Since 2012, the SNSF has funded science communication projects that promote dialog between science and society with up to 200,000 Swiss francs (CHF) for a maximum of 36 months as part of the "Agora" funding program. The Agora scheme is open to researchers from all disciplines who hold a Ph.D. and work at Swiss institutions, regardless of their experience in science communication. On the recommendation of the SNSF, the projects often involve not only researchers but also communication experts and external partners who have the expertise (e.g., pedagogical and artistic qualifications) or networks (e.g., with associations) necessary for the successful implementation of outreach projects. Funding applications are reviewed by external reviewers, and funding decisions are made by a commission of academics and professional science communicators.

Dataset

The SNSF made available data from its archives for all 128 science communication projects funded since 2012 and concluded by May 2022. Access to non-public data archives of funding organizations is rare, and although ex-post assessments of funding lines are often commissioned externally by funders, the results of such assessments are rarely published (Bornmann et al., 2007), so there are few studies examining patterns in funding schemes (for exceptions, see, e.g., van der Lee & Ellemers, 2015; Witteman et al., 2019). The SNSF provided the author with access to three document sources for each project: (a)

Several limitations must, of course, be considered when interpreting such data: Academics can be assumed to have an interest in presenting their projects to funders as impactful or successful (King et al., 2015). Because the analysis is based on self-reports by the grant holders rather than an independent assessment by the external review committee, it is not possible to ascertain whether the reported results are valid or presented in an overly positive light. Nonetheless, the dataset is a valuable source for analyzing whether robust self-evaluations occur, what metrics academics use to signal success, whether they also report failures, and whether there are gaps between promised and realized results.

A complete sample description of the 128 science communication projects can be found in Supplementary Material 1. All data protection and privacy regulations for handling confidential data and project information were adhered to (see Supplementary Material 2). The total page count for each project averaged 40.4 pages (

Operationalization and Data Analysis

Data analysis took place from July to October 2022. Three student coders coded the material after completing an intensive 2-week coding training led by the author; the training covered roughly 20% of the material, and coding inconsistencies were discussed in depth and jointly resolved. The material was provided in English, but owing to Switzerland's multilingualism, a few sources were in French, German, or Italian; these were coded by coders with bilingual backgrounds. The entire material for each project, including the (a)

Overall intercoder reliability was satisfactory given the extensive length of the project descriptions and the high complexity of the material, with an average percent agreement rate of 82% between two coders. All inconsistencies between the double codings were checked and resolved by the author by reviewing the original material in a final step and deciding on the definitive coding to ensure a high standard of analytical quality.
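For readers unfamiliar with the measure, percent agreement is simply the share of coded units to which two coders assigned the same value. The Python sketch below computes it per variable on invented double codings and then averages across variables; treating the overall figure as an unweighted mean across variables is an assumption for illustration, since the text does not specify how the 82% average was aggregated. Note that percent agreement does not correct for agreement expected by chance.

```python
def percent_agreement(coder_a: list[str], coder_b: list[str]) -> float:
    """Share of units (in %) to which two coders assigned the same code."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must code the same, non-empty set of units")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100 * matches / len(coder_a)


# Hypothetical double codings of ten projects for two variables.
codings = {
    "evaluation conducted": (
        ["yes", "yes", "no", "yes", "no", "yes", "yes", "no", "yes", "yes"],
        ["yes", "no", "no", "yes", "no", "yes", "yes", "yes", "yes", "yes"],
    ),
    "evaluation design": (
        ["qual", "quant", "mixed", "qual", "unclear", "quant", "qual", "qual", "mixed", "quant"],
        ["qual", "quant", "qual", "qual", "unclear", "mixed", "qual", "quant", "mixed", "quant"],
    ),
}

per_variable = {name: percent_agreement(a, b) for name, (a, b) in codings.items()}

# Assumption: the overall figure is the unweighted mean across variables.
overall = sum(per_variable.values()) / len(per_variable)

print(per_variable)  # {'evaluation conducted': 80.0, 'evaluation design': 70.0}
print(overall)  # 75.0
```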

Evaluation Methods and Designs

To answer RQ1, it was coded whether an evaluation was conducted (agreement: 81%), and what type (agreement: 78%; e.g., summative), what design (agreement: 64%; e.g., qualitative), and what methods (agreement: 89%; e.g., feedback and survey) were used (following, e.g., Fu et al., 2016; Grand & Sardo, 2017; Pellegrini, 2021; Phillips et al., 2018; Ziegler et al., 2021).

Outputs, Outcomes, and Impacts

To answer RQ2, the planned and achieved outputs, outcomes, and impacts of the science communication projects were coded.

For

For

For

The type of

The data analysis relied on descriptive statistics and was carried out in SPSS.
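Several result tables note that multiple answers were possible. For such multiple-response variables, descriptive percentages are usually computed relative to the number of cases (here, projects) rather than the number of responses, which is why shares can sum to more than 100%. The Python sketch below illustrates this convention with invented data; it is not the author's SPSS procedure.

```python
from collections import Counter

# Hypothetical multiple-response data: each project lists every evaluation
# method it used (an empty list means no evaluation was reported).
projects = [
    ["feedback", "survey"],
    ["feedback"],
    [],
    ["survey", "user research"],
    ["feedback"],
]

n_cases = len(projects)
# set() ensures a method is counted at most once per project.
counts = Counter(method for methods in projects for method in set(methods))

# Percent of cases: each method's share of all projects. Because a project can
# name several methods, these percentages may sum to more than 100%.
for method, count in counts.most_common():
    print(f"{method}: {count} of {n_cases} projects ({100 * count / n_cases:.1f}%)")
```

Here the three shares (60%, 40%, 20%) add up to 120%, even though one of the five projects reported no method at all.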

Results

The analysis of the 128 science communication projects shows how such projects are evaluated, if at all, and with what methods (RQ1), and what results these projects have achieved in terms of outputs, outcomes, and impacts (RQ2). As expected, almost all projects were described by grantees as successful in achieving their goals. Quite often, however, these claims were unsubstantiated: Almost one-third of projects had not conducted evaluations, and the outputs, outcomes, and impacts achieved were often not transparently reported.

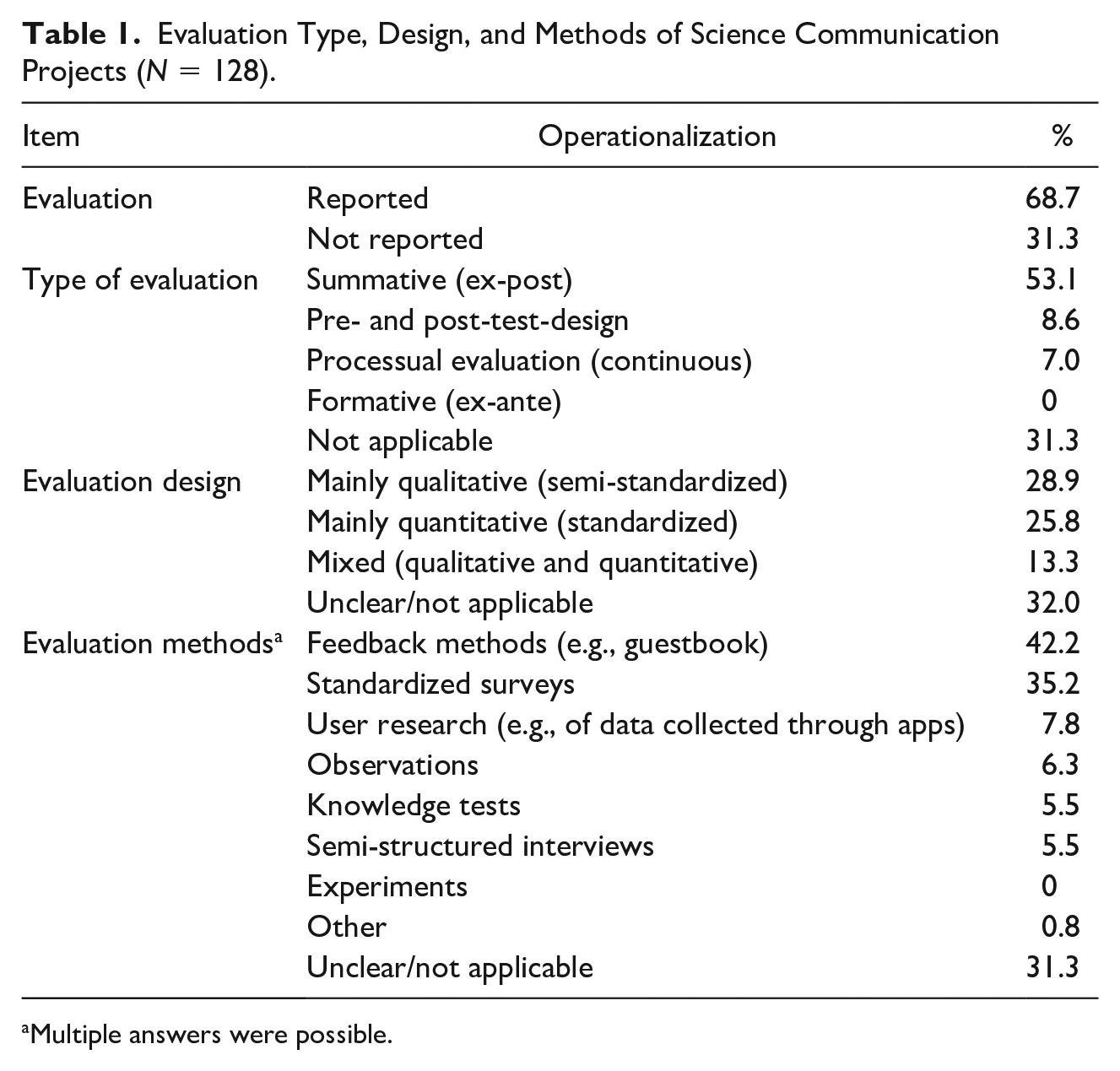

Evaluation Methods and Designs

The results for RQ1 show that 68.7% of the 128 projects conducted an evaluation, while 31.3% did not (see Table 1). Mixed-methods approaches and rigorous evaluation designs were rare: 43% of projects used a single evaluation method, 19.5% combined two, and 8 projects (6.3%) triangulated three or more. The most frequently used methods were feedback methods (42.2%) and standardized surveys (35.2%), followed by user research (7.8%; e.g., analysis of user data collected via citizen science apps). The shares of projects using a mainly qualitative (28.9%) or a mainly quantitative evaluation design (25.8%) were almost equal; a combination of qualitative and quantitative methods was found in 13.3% of projects. In the remaining projects, the design remained unclear. Summative evaluation at the end of a project was the most common type (53.1%). Pre- and post-test designs relying on before-and-after measurements were used in only 8.6% of projects, even though precisely such designs are needed to reliably record changes in audiences' knowledge, attitudes, or behavioral intentions. In 7% of projects, a processual evaluation was reported.
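As a consistency check, the shares of projects using one, two, or three or more evaluation methods add up to the share of projects that conducted an evaluation. The single- and two-method counts below (55 and 25 projects) are back-calculated from the rounded percentages and are therefore an assumption; they are the only integer counts out of 128 that round to 43% and 19.5%.

```latex
\underbrace{\tfrac{55}{128}}_{\approx 43.0\%}
+ \underbrace{\tfrac{25}{128}}_{\approx 19.5\%}
+ \underbrace{\tfrac{8}{128}}_{= 6.25\%}
= \tfrac{88}{128} = 68.75\%,
\qquad
\tfrac{128 - 88}{128} = \tfrac{40}{128} = 31.25\%
```

The reported 68.7% and 31.3% thus correspond to 88 and 40 projects, rounded so that they sum to 100%. The same counts give the share of projects combining at least two methods: (25 + 8)/128 = 33/128, or about 25.8%.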

Evaluation Type, Design, and Methods of Science Communication Projects (

Multiple answers were possible.

In most projects, it was not identifiable that a specific evaluation model was used for the purpose of evaluation. Only in a handful of projects did academics explicitly base their evaluation on a logic model that distinguished outputs, outcomes, and impacts and described the logical pathways leading to intended results.

Outputs, Outcomes, and Impacts of Science Communication Projects

The findings for RQ2 reveal what kind of activities were created in the science communication projects and how academics report on the results of their projects.

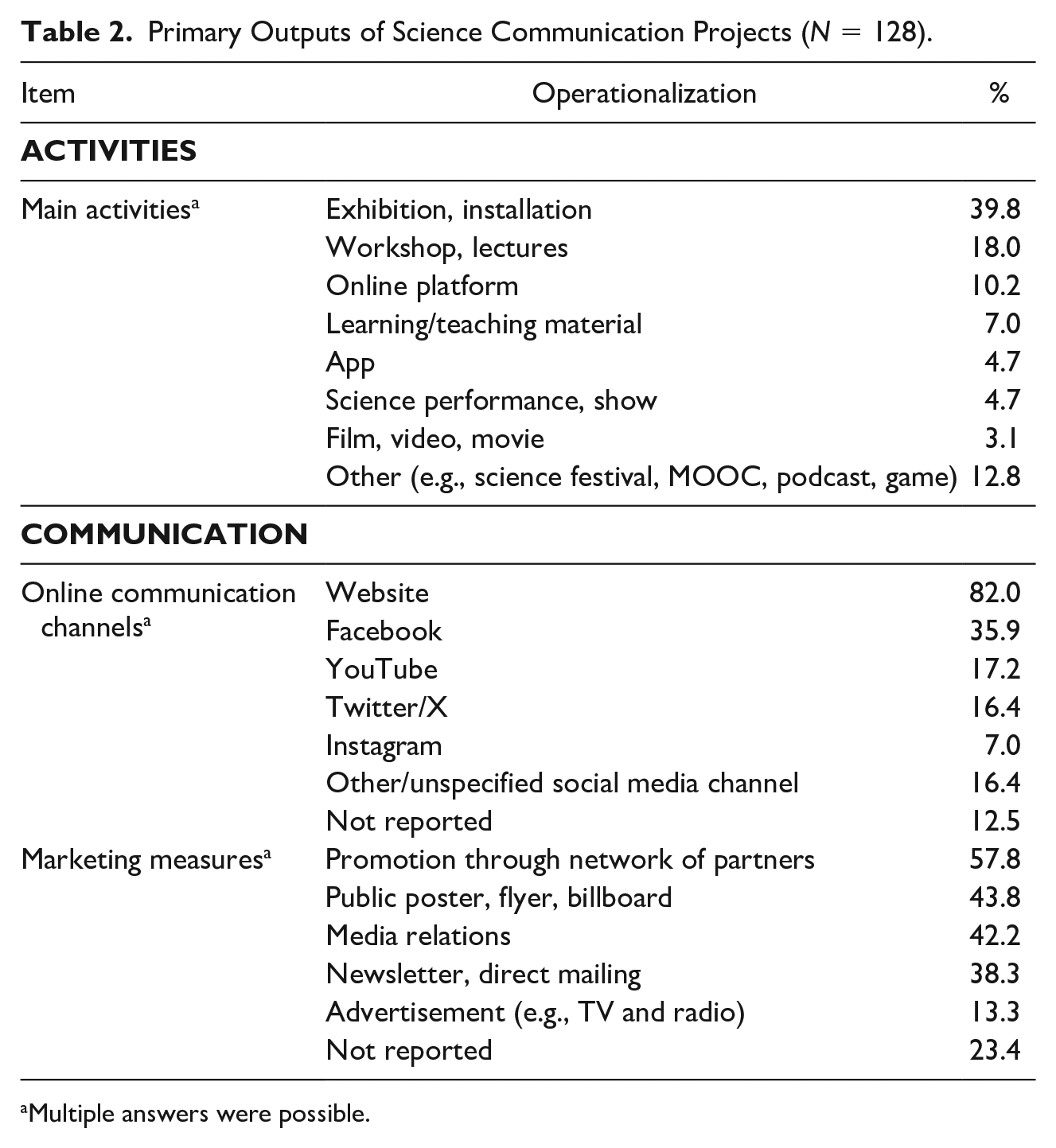

Outputs

For

Primary Outputs of Science Communication Projects (

Multiple answers were possible.

For online communication, most projects used a website (82.0%), whereas social media use was comparatively low, led by Facebook (35.9%), YouTube (17.2%), and Twitter/X (16.4%). Overall, 61.7% used more than one online channel, while 12.5% did not report using online media to address their target audiences. To increase public visibility, projects also used traditional marketing measures: Most often, they relied on partners to promote outreach activities through their networks (57.8%), created posters or flyers (43.8%), used media relations (42.2%), or sent direct mailings or newsletters to target groups such as teachers (38.3%).

Notably, about one-third of the projects (31%) reported that they had not been able to carry out all planned activities and had to deviate from their plans, developing or implementing fewer activities than intended because of various obstacles. In these cases, the final reports gave detailed justifications for not fulfilling certain project goals set out in the grant application. Most obstacles were related to COVID-19 pandemic constraints, but a few reports also mentioned difficulties within the project team, unrealistic goal setting, or unforeseen problems with project planning and staffing.

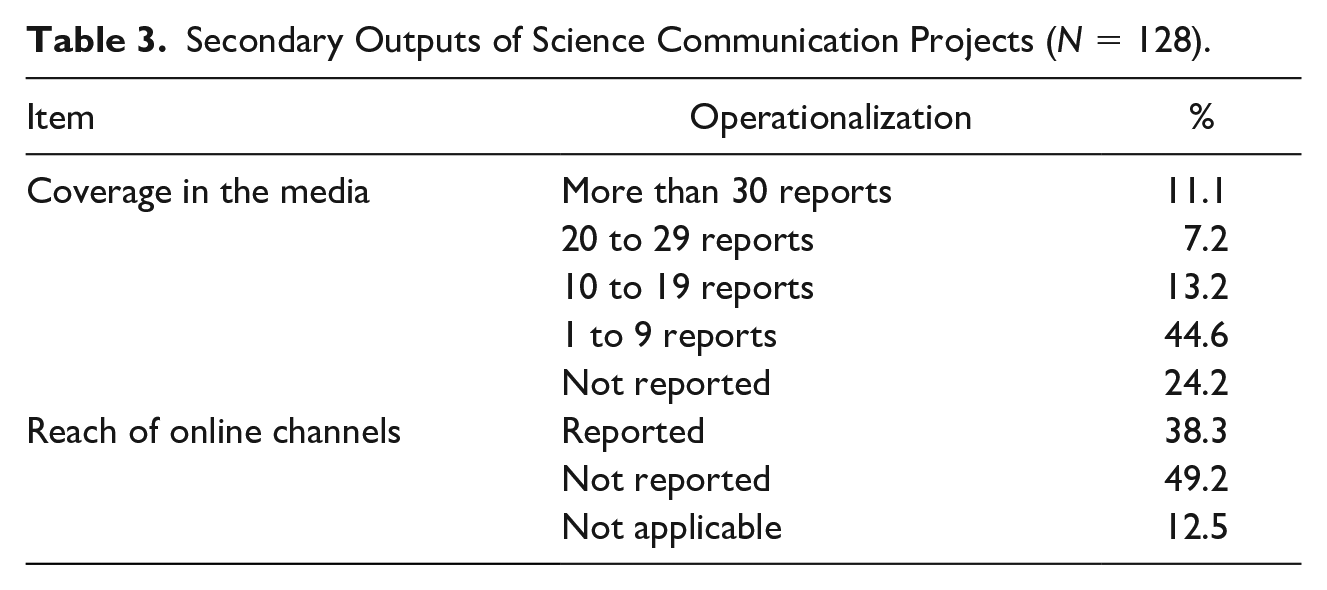

For

Secondary Outputs of Science Communication Projects (

In terms of online communication, while most projects used some type of online channel, only 38.3% reported on the reach of their content, that is, how many people had potentially seen it. Online reach was most commonly reported for websites (30.4%). However, the metrics and time periods reported varied widely, making it impossible to compare the data or to accumulate evidence of effective online science communication.

Outcomes

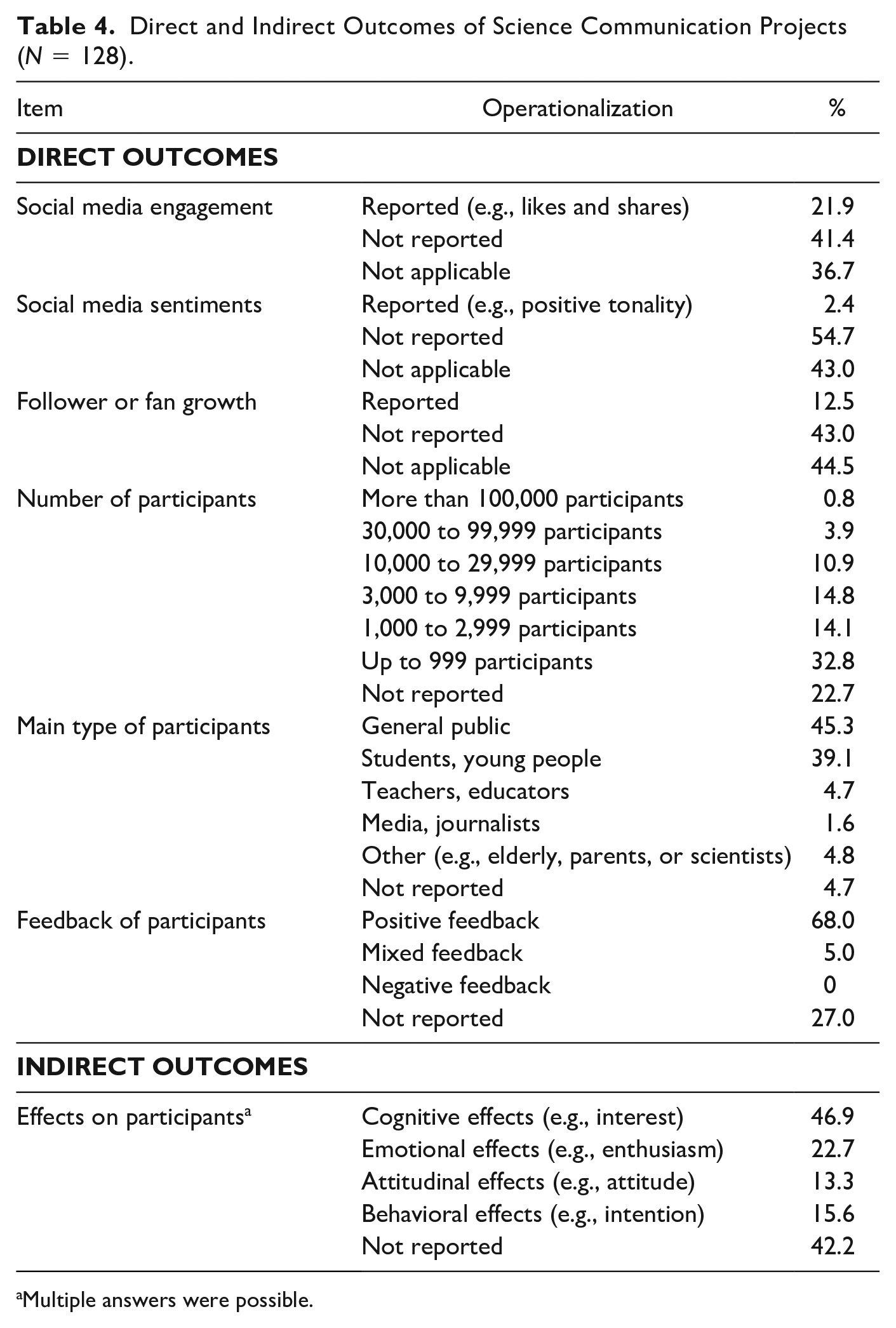

For

Direct and Indirect Outcomes of Science Communication Projects (

Multiple answers were possible.

Relatively few projects analyzed people's initial responses to social media or online content: 21.9% reported on social media engagement, focusing on the number of likes, shares, or uploads (e.g., in a citizen science app). However, only 2.4% analyzed user comments to determine the sentiment of social media responses. The number of new fans, followers, or subscribers was reported by 12.5%. Again, such results were reported using different metrics and time periods, such as absolute versus relative follower growth, or followers per platform versus followers aggregated across platforms. Therefore, the data presented were not comparable.
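Why mixed metrics undermine comparison can be illustrated with hypothetical numbers. The Python sketch below computes both absolute and relative follower growth for two invented projects over the same reporting period: by absolute growth, project A looks more successful; by relative growth, project B does. The function name and all figures are illustrative only and do not come from the analyzed reports.

```python
def follower_growth(start: int, end: int) -> tuple[int, float]:
    """Return (absolute growth, relative growth in %) for one reporting period."""
    if start <= 0:
        raise ValueError("relative growth is undefined for a non-positive starting count")
    absolute = end - start
    return absolute, 100 * absolute / start


# Hypothetical follower counts at the start and end of the same reporting period.
projects = {"project A": (5000, 5600), "project B": (200, 500)}
growth = {name: follower_growth(*counts) for name, counts in projects.items()}

# The two metrics rank the projects in opposite order.
by_absolute = max(growth, key=lambda name: growth[name][0])
by_relative = max(growth, key=lambda name: growth[name][1])

print(growth)  # {'project A': (600, 12.0), 'project B': (300, 150.0)}
print(by_absolute, "|", by_relative)  # project A | project B
```

Reporting both values together with the starting count and the length of the reporting period would allow readers to convert between the two metrics.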

For

Impacts

Finally, the results show that long-term, substantial impact is rarely reported, and when it is, such narrative statements focus more on

Discussion

The analysis of 128 science communication projects carried out by academics between 2012 and 2022 provides valuable insights into the evaluation practices and the outputs, outcomes, and impacts achieved by such projects.

First, the results show that most projects were evaluated (RQ1), but in one-third of projects, the academics carried out no evaluation. The study points to a paucity of robust evaluation practices: The findings suggest that evaluations are hardly guided by logic models or by theory-of-change assumptions about how planned science communication activities lead to logically anticipated outcomes, which in turn lead to certain impacts. The projects often capture participants' self-reported shifts in knowledge or attitudes using summative evaluations based on cross-sectional data, rather than pre- and post-test designs (e.g., knowledge tests). In line with previous research (Fu et al., 2016; Metcalfe et al., 2012), feedback forms and standardized surveys dominate, and only about a quarter of projects combine different evaluation methods, although this would be necessary to track results along different stages. Overall, the analysis confirms previous studies showing that the evaluation of science communication is still fairly immature (e.g., Jensen, 2014; Metcalfe et al., 2012; Pellegrini, 2021; Sörensen et al., 2024; Ziegler et al., 2021).

Second, the analysis highlights the emphasis on demonstrating secondary outputs and direct outcomes (RQ2), that is, media coverage (75.8%), participant count (77.3%), and immediate feedback (72.0%). While nearly half of the projects reported changes in participants’ cognitions (46.9%), other indirect outcomes such as effects on attitudes, emotions, or behaviors, as well as long-term impact on society were often overlooked in reporting. Again, these results underline earlier research that shows a dominance of relatively simple output and outcome measurements in science communication (e.g., Bühler et al., 2007; Höhn, 2011; Sörensen et al., 2024; Weitkamp, 2015). 42.2% of projects did not mention any indirect outcomes for target groups and twice as many projects were silent on long-term impact, pointing to a more general lack of transparent reporting that has been problematized in previous research (Fu et al., 2016). Aside from the gap in omitting certain results, such as online reach (missing in 49.2% of projects) or online engagement (missing in 41.4% of projects), the projects that did track such outcomes often used different metrics to signal success. This renders evaluation outcomes incomparable and hinders a holistic understanding of science communication effectiveness on digital platforms.

There could be several reasons for the lack of sound evaluation: One explanation is that academics preferred to invest time or budget in outreach activities rather than devote resources to rigorous evaluations of one-off, temporary projects. Another plausible explanation is that academics lacked knowledge of evaluation models and methods, or interest in self-evaluation (Ho et al., 2020; Metcalfe et al., 2012). Perhaps the communication experts involved in the projects provided too little advice on designing robust evaluations, or they themselves lacked the necessary time, resources, or methodological expertise (Jensen, 2014). Further reasons could lie with the target audiences, who may have been reluctant to participate in surveys, as noted in several reports; this underlines the need for more unobtrusive and informal evaluation methods such as feedback walls (e.g., Grand & Sardo, 2017).

The fact that final project reports focused more on outputs and direct outcomes might be related to the easy access to such (mostly digital) data (Volk & Buhmann, 2023) or to the funder specifically requesting data on the volume of media output. An additional explanation could lie in the project goals, which emphasized providing access to or raising awareness of scientific topics (and therefore called for output-related metrics such as reach, visits, or likes) rather than outcome-related goals such as changing attitudes toward certain scientific topics. This focus on one-way knowledge transfer may also reflect a deficit-model understanding of science communication (Besley & Nisbet, 2013). Notably, with regard to indirect outcomes and impacts, the comparison of grant applications and final reports reveals that the results achieved often fall short of the promises made at the application stage. Given a trend toward inflated impact statements driven by increasingly fierce competition for funding (Chubb & Watermeyer, 2017), it was anticipated that grant applications would attempt to portray projects as potentially impactful. Indeed, grant applications appear to have over-promised effects and impacts. This may have been intentional, to improve the chances of securing the grant (King et al., 2015). However, it is also plausible that academics were overly optimistic in their goal setting because they lacked training and experience with science communication projects (Ho et al., 2020; Phillips et al., 2018; Rodgers et al., 2018). The formulation of unrealistic goals might also be related to a systemic lack of a broader evidence base about what kind of changes can realistically be achieved through (often short or one-time) exposure to science communication, particularly given that scientific topics are inherently complex and attitudes toward science are quite stable (Metag, 2017). In addition, the fact that communication effects are often indirect, conditional, person-specific, or delayed may explain why some projects did not find the direct effects they expected.

In line with expectations, the projects were presented as successful in almost all final reports. Yet the claims of success (e.g., positive feedback and changed attitudes) were rarely backed up with empirical evidence from evaluations, making them neither particularly compelling nor credible. Since the hoped-for effects and impacts did not always materialize to the desired extent, one might expect the grantees to justify why this was the case. A quarter to a third of the projects reflected on unrealized activities or target groups. In the remaining reports, the discrepancy between planned and achieved goals was not explained. Such a lack of transparency and full disclosure of project results (e.g., omitting the visitor count) may be interpreted as a strategy to gloss over unachieved project goals or to sweep unfulfilled promises under the rug. Some final reports even gave the impression that grantees made little effort to demonstrate accountability. Particularly surprising is that the societal impact achieved was hardly "marketized," and most final reports were silent on the subject. One explanation could be that statements about long-term societal impact naturally clash with the timing of reporting, as such impact may not surface until months or years after a project ends (Weitkamp, 2015). The emphasis on reporting scientific impact, in turn, may be due to the fact that academics are accustomed to signaling success to funders in scientific currencies in final reports (Brenninkmeijer, 2022).

Conclusion

This study builds on a unique dataset of all 128 science communication projects funded by the SNSF from 2012 to 2022 and presents the first analysis of its kind in science communication research. The standardized content analysis of grant applications, final reports, and project data reveals that academics have developed a broad range of outreach activities to inform, educate, or engage the public in science, which are often participatory, interactive, and dialogical in nature. However, a systematic assessment of the effectiveness of these activities is rare, as few projects apply rigorous evaluation designs or combine multiple evaluation methods. Furthermore, many projects emphasize media attention and participant counts but neglect to report on the effects on audiences and on societal impact.

This finding is all the more noteworthy in view of the limitations of the dataset discussed earlier: like many studies in the field of evaluation (e.g., Fu et al., 2016), the data sources analyzed are self-reports rather than independent external assessments. Academics very likely had an interest in presenting their projects as “success stories” to the funding body (Jensen et al., 2022). Indeed, the majority of projects were described as successful, but evidence for the claimed success was often vague or missing. Future studies could use in-depth interviews or surveys of academics and funders to explore (lack of) motivation, competencies, and perceived challenges related to self-evaluation and impact assessment. In particular, studies should further illuminate the pressures on academics to oversell societal impact and analyze impact statements from a critical perspective (Chubb & Watermeyer, 2017; King et al., 2015).

To accelerate progress and enhance the effectiveness of science communication, a scientifically rigorous evaluation covering outputs, outcomes, and impacts is essential. This study has made both a conceptual and an empirical contribution in this regard. The conceptual model presented in Figure 1 can inform future research and guide academics and practitioners in developing logic models for science communication evaluation, which describe the intended outcomes and impacts and define the logical pathways to achieve them. Drawing on the theory of change and communication effects research can help systematize the different effects of science communication in a logical causal sequence and justify how certain science communication activities ultimately lead to societal impact. Future measurements need to consider the unique characteristics of science communication, especially the challenge of validly measuring change at the outcome stage, and address these with adequate pre- and post-test designs. The study’s empirical evidence can stimulate reflection not only on evaluation requirements but also on realistic expectations about the effects of science communication in the context of short-term exposure. This is crucial, as unfounded expectations can lead to disappointment for academics, funders, and policymakers alike (King et al., 2015). Furthermore, the findings raise questions about the usefulness of academics’ self-evaluations, which risk becoming success stories rather than transparent reflections of project results and failures. This complicates meta-evaluations and conclusions about the societal impact of funding schemes.

Importantly, the responsibility for better science communication evaluation should not be assigned primarily to academics (Palmer & Schibeci, 2014). Rather, greater collaboration should be incentivized between academics and professional science communicators, who can advise researchers on the strategic use of media relations and social media (Besley et al., 2021) and take responsibility for the (quasi-external) evaluation of project results. At the same time, training academics in science communication and its evaluation would still be desirable (Ho et al., 2020). Such training should also be offered by funding organizations (King et al., 2015). Several funders have developed toolboxes or guidelines for evaluating public engagement (e.g., UK Research and Innovation [2020] in the United Kingdom, the SFI [2015] in Ireland, and CSIRO [2020] in Australia), including possible indicators, customizable evaluation templates (e.g., surveys and feedback forms), and best-practice evaluations from previous projects. However, to make evaluation data comparable, clear reporting guidelines and standards, or even selected mandatory success metrics, are needed. Moreover, new approaches to impact assessment are needed that account for the long-term nature of impact and for disciplinary differences (Brenninkmeijer, 2022). Funders need to address these issues if they require academics to conduct evaluations and intend to draw insights from such evaluation data (Fu et al., 2016; Jensen & Gerber, 2020), and they should also make these data available for meta-analytical research. Addressing the identified challenges can contribute to a more robust evaluation of science communication, enhancing the potential for impactful and evidence-based science communication in the future.

Supplemental Material

sj-docx-1-scx-10.1177_10755470241253858 – Supplemental material for Assessing the Outputs, Outcomes, and Impacts of Science Communication: A Quantitative Content Analysis of 128 Science Communication Projects

Supplemental material, sj-docx-1-scx-10.1177_10755470241253858 for Assessing the Outputs, Outcomes, and Impacts of Science Communication: A Quantitative Content Analysis of 128 Science Communication Projects by Sophia Charlotte Volk in Science Communication

Footnotes

Acknowledgements

The author thanks the SNSF for access to the data and the three student assistants Talita Bernasconi, Damiano Lombardi, and Stefanie Thai from the University of Zurich for their help in coding the material. The author also thanks Damiano Lombardi and Mike S. Schäfer for their thoughtful comments on the first draft of the manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research was funded by the Swiss National Science Foundation (SNSF).

References
