Abstract

This study examines how informal caregivers of older adults form expectations of artificial intelligence-based chatbots (AI chatbots) for caregiving support, drawing on the human–machine communication framework. Findings from six focus group discussions in Singapore showed that informal caregivers’ expectations were multidimensional—encompassing the functional, relational, and sociotechnical aspects. While participants generally expected AI chatbots to assist with informational and practical caregiving tasks, they remained skeptical regarding their capabilities to provide accurate medical advice, emotional support, and complex health care decision-making. Expectations were shaped by machine heuristics, prior technology experiences, caregiving experiences, and sociotechnical contexts. Theoretical and practical implications were discussed.

The rapid development of communication technologies, particularly artificial intelligence-based chatbots (AI chatbots), is transforming the landscape of health care. AI chatbots are computer programs that simulate human-like interactions by performing text- or voice-based communication tasks (Agarwal & Wadhwa, 2020; Natale, 2019). In health care, AI chatbots have been used to provide personalized guidance, emotional support, and chronic disease management (Chew & Achananuparp, 2022), holding promise for improving health care accessibility and efficiency (Vo et al., 2023). However, the adoption of AI chatbots in health care remains challenging given the widely documented expectation violation experiences and negative perceptions among users (Chew & Achananuparp, 2022; Luger & Sellen, 2016; Vo et al., 2023). Research shows that users’ expectations of emerging technologies like AI and automation (i.e., beliefs about what these technologies could and should do) are critical to shaping user experience and trust, which in turn impact technology adoption (Chen & Sundar, 2024; Lew & Walther, 2023; Rheu et al., 2024; Sartori & Bocca, 2023). Understanding user expectations is therefore essential, not only for designing more effective AI health tools but also for communicating their capabilities and limitations in ways that foster realistic expectations and trust in the technology.

While a growing number of studies have examined patient or public expectations of AI in health care (Nong & Ji, 2025; Vo et al., 2023; Xu et al., 2021), little is known about the expectations of informal caregivers—those who provide unpaid care to family members or friends with health or functional challenges. Informal caregivers of older adults present a key user group for AI chatbots, with distinct needs arising from their caregiving burden and lack of support. When caring for older adults, these caregivers often assume multiple roles: they provide physical assistance, coordinate medical appointments, make health care decisions, and address social isolation among older adults (Schulz et al., 2016, 2020). Managing these duties imposes significant stress and burden, exacerbated by insufficient support such as timely information, guidance, and empathy from existing health care systems and social networks (Schulz et al., 2020; Wittenberg-Lyles et al., 2014). AI chatbots present an opportunity to help fill these support gaps by providing on-demand access to health information, empathetic communication, and decision-making support. If implemented effectively, such chatbots could lighten caregivers’ workload and improve their perceived social support, ultimately enhancing health outcomes for both them and care recipients (Chiou et al., 2009; Ferraris et al., 2022; Kelley et al., 2017). Given the high stakes of caregiving, it is critical to understand what informal caregivers expect from AI chatbots as a baseline for developing technologies that truly meet their support needs.

Notably, user expectations are not formed in a vacuum. People develop their initial understanding of technologies through a mix of personal beliefs, prior experiences, and contextual factors (Bonito et al., 1999; Kaplan et al., 2023; Olsson, 2014; Rosén et al., 2024). For example, science communication research shows that public trust in emerging communication technologies like AI and automation evolves with exposure and direct interaction (National Science Board & National Science Foundation, 2024). This suggests that caregivers’ prior experiences with AI or chatbot technologies may play a key role in shaping their expectations of AI chatbots for caregiving support. In health care, contextual factors such as people’s awareness of health care landscapes and trust in health care systems also influence expectations for AI applications (Lysø et al., 2024; Nong & Ji, 2025). In short, informal caregivers’ expectations are likely shaped by multiple, intertwined considerations. It remains unclear, however, which considerations are most salient when informal caregivers form their expectations. Without this nuanced understanding, it will be difficult to design tailored communication strategies that effectively introduce AI chatbots to informal caregivers.

To address the research gaps, this study draws on the Human–Machine Communication (HMC) perspective and human–technology interaction and caregiving literature to examine how informal caregivers form their expectations of AI chatbots. This study has twofold research aims. First, we aim to understand what informal caregivers expect from AI chatbots in the context of caregiving support. Second, we aim to reveal how these expectations are formed. To achieve these aims, we conducted six focus group discussions with 54 informal caregivers of older adults in Singapore—a context chosen for its rapidly aging population and strong reliance on informal caregiving (Chan et al., 2013), as well as its government investment in digital health innovation (Yi et al., 2024). Singapore’s multicultural environment and high public trust in government health care initiatives also provide a rich setting to explore how sociocultural environments might shape expectations of AI (Goh & Ho, 2024; Yi et al., 2024). By presenting our qualitative findings, we contribute to an in-depth understanding of human–AI communication in caregiving. The study also offers practical insights for designing AI tools that align with caregivers’ needs and for communicating about these technologies in ways that build user trust.

AI Chatbots for Social Support in Informal Caregiving

AI chatbots are increasingly employed to deliver social support in health care settings. Social support refers to the availability of supportive resources within one’s social network (Gottlieb & Bergen, 2010), with action-facilitating and nurturant support as two main categories. Action-facilitating support can foster behaviors that mitigate a stressor and includes informational support (i.e., information or advice) and tangible support (i.e., services and goods); nurturant support, in contrast, helps people cope with the emotional strain resulting from a stressor and encompasses emotional support (i.e., empathy and understanding), esteem support (i.e., validation and compliments), and network support (i.e., companionship and belonging) (Cutrona & Suhr, 1992). In clinical contexts, AI chatbots are designed to provide these forms of social support, such as offering cognitive and behavioral interventions, helping schedule appointments, providing general health information, and sending medication reminders (Aggarwal et al., 2023; Altamimi et al., 2023; Xu et al., 2021). By providing such social support, AI chatbots can promote positive health behaviors in patients and alleviate the burden on health care systems (Altamimi et al., 2023).

Despite these promising developments, the use of AI chatbots to support informal caregivers remains underdeveloped (J. Li et al., 2020; Ruggiano et al., 2021). This is a notable gap, given the unique challenges faced by informal caregivers, who are under immense pressure to provide high-quality care for their loved ones in the context of an increasingly aging population (Roth et al., 2015; Schulz et al., 2020). The responsibilities of caregiving extend beyond managing daily activities to include ensuring medication compliance, arranging medical appointments, and offering emotional support to older adults, leading to significant physical, psychological, and emotional strain (Schulz et al., 2020). Compounding these challenges, informal caregivers often reported insufficient social support from their existing social networks, such as family, friends, and health care professionals (Culberson et al., 2023). Given these unmet needs, AI chatbots could serve as a supplementary support resource by offering informal caregivers health care information, emotional support, and caregiving assistance. Prior research has shown that informal caregivers’ received and perceived social support can influence not only their own subjective burden and well-being (Chiou et al., 2009; Ferraris et al., 2022) but also the health outcomes and well-being of care recipients (Ferraris et al., 2022; Kelley et al., 2017). For example, Kelley et al. (2017) found that higher support perceived by caregivers predicted subsequent health improvements in care recipients. These findings suggest the potential of AI chatbots to strengthen caregiver support systems.

However, realizing this promise requires first understanding informal caregivers’ perspectives: how do they perceive an AI supporter, and what do they expect such chatbots to do or not do for them? Focusing on informal caregivers’ expectations is important, as such knowledge can guide the design of chatbot technologies that truly meet their support needs. From a science communication standpoint, it can also help set realistic expectations when introducing AI chatbots to informal caregivers and inform targeted communication strategies to improve user trust in this technology (Hong et al., 2021).

User Expectations and Human–Machine Communication Perspective

Users often approach emerging technologies with pre-existing expectations, which serve as mental benchmarks for evaluating a system’s capabilities and behaviors (Bonito et al., 1999; Rosén et al., 2022, 2024). These expectations, either positive or negative, reflect what people believe a technology could and should do (Nong & Ji, 2025; Olsson, 2014). Empirical evidence shows that initial expectations of AI and robotic systems affect actual user experience and the development of trust over time (Glikson & Woolley, 2020; Jokinen & Wilcock, 2017). Similarly, Expectancy Violation Theory posits that people hold shared expectations about how communicators should behave, and these expectations can be violated during interactions. Positive violations occur when behavior is more favorable than expected, while negative violations occur when it is less favorable (Burgoon et al., 2002). Such violations draw heightened attention and trigger evaluative processing, with outcomes influenced by both the valence of the violation and the communicator’s reward value (Burgoon et al., 2002). In the context of AI chatbots, unmet expectations, especially negative expectation violations, can lead to reduced trust and diminished effectiveness in support delivery (Chen & Sundar, 2024; Rheu et al., 2024). Therefore, it is critical to understand informal caregivers’ initial expectations of AI chatbots to avoid negative expectation violation experiences and foster user trust and adoption (Lew & Walther, 2023).

To investigate informal caregivers’ expectations, this study draws on the human–machine communication (HMC) framework (Guzman & Lewis, 2020). HMC suggests people create meaning in interactions with communicative technologies, such as AI chatbots (Guzman, 2018). Communicative technologies are not only designed to function as communicators but are also interpreted by people as such. The framework thus provides a perspective to understand how individuals interpret an AI chatbot’s role and capabilities, the factors contributing to such interpretations, and how such conceptualization informs their interactions with the AI technology (Guzman & Lewis, 2020). Specifically, HMC highlights three intertwined aspects of AI as a communicator: (1) the functional dimensions of how AI technologies are designed as communicators and how people perceive them within the role; (2) the relational aspects of how people understand the relationship between AI technologies and themselves; and (3) the metaphysical aspects of how people understand the boundary between human and AI and ethical and legal considerations. This multidimensional perspective moves beyond a purely utilitarian account to include relational, social, and ontological considerations, offering a nuanced perspective to understand user expectations.

Existing research on AI chatbots has used the HMC framework to investigate users’ perceptions and expectations of the technology (e.g., Abendschein et al., 2021; Lew & Walther, 2023; Meng et al., 2023). Functional concerns, particularly the human and machine-like characteristics of AI systems, appear to be a salient factor driving how people evaluate AI chatbots in health care (Lew & Walther, 2023; Su et al., 2021). For instance, users expect AI technologies to be more responsive to feedback and to offer personalized, customized recommendations. Users also expected AI chatbots to act as empathetic listeners, providing users with a sense of being understood and emotional support (Su et al., 2021). In addition to expectations that enhance the benefits of chatbot use, users also seek to minimize potential harms, such as concerns about privacy breaches (Chew & Achananuparp, 2022). There are also concerns about the accuracy of information provided by AI technologies, particularly in unexpected situations, and whether that information is vetted by experts in the field (Chew & Achananuparp, 2022).

In contrast, the relational and metaphysical aspects of user expectations have received comparatively less empirical attention. HMC posits that people’s interactions with AI are embedded in social contexts where they make sense of their relationship with AI (Sundar, 2020). People often perceive AI as socially situated actors and may assign them roles based on existing human analogues (e.g., Edwards et al., 2016; Reeves & Nass, 1996). For instance, chatbots may be seen as assistants, coaches, or companions—roles that carry specific social expectations (Nißen et al., 2022; Xygkou et al., 2024). Regarding the metaphysical aspect, people often speculate about the ontological boundaries between humans and machines, as well as the ethical and legal questions surrounding the development and use of AI (Guzman, 2020). As Guzman and Lewis (2020) argue, users’ judgment about what AI should be allowed to do is central to shaping their interaction with these technologies.

While the HMC framework has advanced understanding of user interpretations of AI chatbots, its application to the health care domain remains limited. The informational and emotional complexity of caregiving, coupled with informal caregivers’ multifaceted support needs, likely shapes unique expectations of AI chatbots across functional, relational, and metaphysical dimensions. Existing research has found that users’ expectations are context-dependent and influenced by their motivations for deploying the technology (Greiner & Lemoine, 2025; Lew & Walther, 2023). Thus, there is a critical need to investigate informal caregivers’ specific expectations within the caregiving contexts to inform more socially and ethically responsive chatbot design in health care.

Formation of Expectations for AI-Based Caregiving Support

Moreover, it is equally important to understand how informal caregivers’ expectations are formed. While some scholars have attempted to understand the considerations contributing to user expectations of health care AI technologies (Lysø et al., 2024), few studies have systematically examined how various considerations come together in forming user expectations of communicative AI. Such knowledge can not only help advance theorization within HMC (Guzman & Lewis, 2020), but also inform targeted science communication strategies that clearly convey chatbot capabilities and roles in caregiving contexts.

Research in human–technology interaction has suggested that expectations of emerging technologies do not develop in isolation. Instead, users’ early perceptions are shaped by an interplay of human, technological, and contextual influences (Bonito et al., 1999; Kaplan et al., 2023; Olsson, 2014; Rosén et al., 2024). One critical human factor is users’ beliefs about how technologies work, also referred to as folk theories (Butler, 2000; Dogruel, 2021). These folk beliefs are not always correct, but they serve as cognitive shortcuts that shape individuals’ behaviors and expectations of these systems (Sharabi, 2021). Similarly, the machine heuristics perspective argues that people rely on mental shortcuts to form their expectations of technologies (Sundar & Kim, 2019). Users tend to attribute qualities such as accuracy, objectivity, and reliability to machines (i.e., positive machine heuristic)—but also assume limitations in empathy, creativity, or social dexterity (i.e., negative machine heuristic) (Sundar, 2020; Yang & Sundar, 2024). Furthermore, machine heuristics can influence how people assess the appropriateness of different tasks for AI. For instance, users were more accepting of AI in mechanical tasks instead of tasks that require human traits, such as emotional labor or moral judgment (Yang & Sundar, 2024). These machine heuristics can thus shape people’s expectations of AI chatbots in different support tasks (Sundar, 2020; Yang & Sundar, 2024).

Another human factor is direct experience with technology, which can modify or override initial heuristics. While global survey data show uncertainty and variation in how people view AI and automation (3M, 2022; Zhang & Dafoe, 2019), these perceptions and expectations often shift with direct interaction and personal experience (National Science Board & National Science Foundation, 2024). Studies on automated parking features and robots likewise showed that familiarity with these technologies can increase users’ trust, perceived importance, and willingness to use them over time (Sanders et al., 2017; Tenhundfeld et al., 2020). The HMC framework similarly argues that people’s interactions with early AI technologies shape their expectations of AI chatbots (Guzman & Lewis, 2020; Richardson et al., 2021). Therefore, it is plausible to assume that informal caregivers will consider their personal experience with AI applications when forming expectations of AI chatbots.

When informal caregivers consider relying on AI chatbots for caregiving support, contextual and sociocultural environments may also play a role in forming their expectations. Prior research found that space- and time-related factors, such as different usage contexts and people’s interactions with early AI technologies, shape their expectations of AI chatbots (Guzman & Lewis, 2020; Richardson et al., 2021). In the health care context, prior research suggested that people’s awareness of the medical landscape and their own health-related experiences influence their expectations of the use of AI technologies (Lysø et al., 2024; Nong & Ji, 2025). For informal caregivers, their lived caregiving challenges and embedded sociocultural contexts may further inform their expectations of AI chatbots for various forms of caregiving support. Together, these examples suggest the need to explore how informal caregivers form expectations by taking into account cognitive, experiential, contextual, and sociocultural considerations (Lysø et al., 2024).

As the above discussion shows, user expectations of AI chatbots for caregiving support remain underexplored. To fill these research gaps, this study aims to provide a comprehensive picture of informal caregivers’ expectations of various aspects of AI chatbots for caregiving support. Further, we aim to examine how different considerations play a role in the formation of these expectations. We propose the following two research questions:

Research Question 1 (RQ1): What are informal caregivers’ expectations of AI chatbots for caregiving support?

Research Question 2 (RQ2): What shapes informal caregivers’ expectations of AI chatbots for caregiving support?

Method

To understand informal caregivers’ expectations of AI chatbots for social support, this study conducted six focus group discussions with 54 participants in Singapore between May and June 2024. We adopted the focus group discussion method because the approach provides an in-depth understanding of public perceptions and expectations about unfamiliar topics, such as novel technologies (Lysø et al., 2024), by facilitating open disclosures among people sharing similar experiences (Tracy, 2024).

Recruitment and Participants

Eligible participants met two criteria: (1) Singaporean citizens or permanent residents aged 21 years or older and (2) current or former informal caregivers for senior family members (aged 65 years or older) with chronic diseases. We recruited participants through purposive and snowball sampling. Recruitment advertisements were posted through various channels that informal caregivers often access (e.g., elderly daycares and nursing homes). Participants from earlier focus groups were also asked to recommend other informal caregivers.

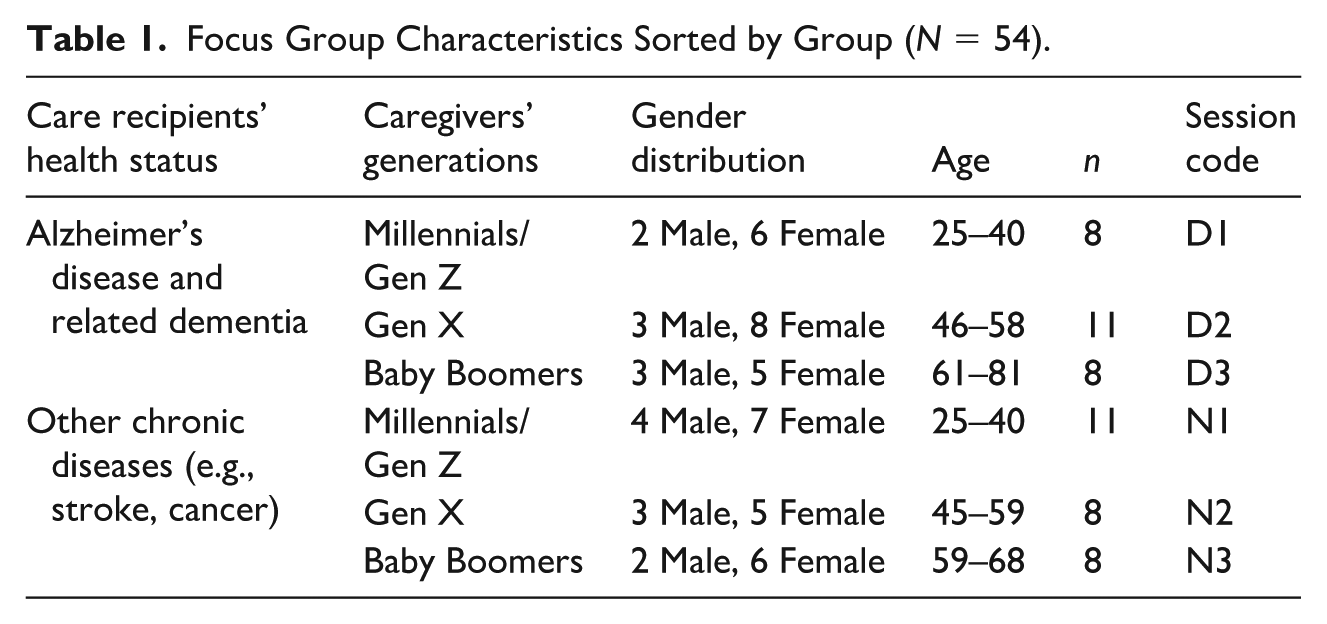

To capture diversified insights from informal caregivers, we adopted a multi-group design by purposively recruiting participants from different age groups and caregiving contexts. Participants were grouped by generation—Baby Boomers, Generation X, and Millennials and Generation Z (Pew Research Center, 2020)—because prior research showed that different generations exhibited varying perceptions and usage preferences of novel health care technologies (Fox & Connolly, 2018). We also categorized informal caregivers of older adults with different health statuses since research suggests that informal caregivers of patients with Alzheimer’s and related dementia (ADRD) faced unique stressors and had distinct support needs compared with informal caregivers taking care of people with other chronic diseases (Karg et al., 2018). Table 1 outlines the demographic characteristics of participants.

Table 1. Focus Group Characteristics Sorted by Group (N = 54).

Data Collection

After obtaining Institutional Review Board approval from the authors’ institution, six focus group discussions were conducted with 54 informal caregivers in a conference room at a large university in Singapore. Each session lasted approximately two hours. All sessions were audio- and video-recorded after obtaining participants’ consent. Participants were compensated SG$100 for their participation in the discussion upon completion. All focus group discussions were conducted in English, the lingua franca in Singapore. Each focus group was moderated by a doctoral student majoring in social science with the help of assistant moderators. A semi-structured moderator’s guide, including questions and prompts aligned with the research aim, was developed for this study.

In each session, the moderator began by introducing participants to the definition, capabilities, and use context of AI chatbots through a video of the technology. The video had been reviewed by experts in conversational AI to ensure the accuracy and trustworthiness of the content presented. Following the video, participants were first asked to share their existing social support networks related to their caregiving experiences and use experiences of rule-based or AI-powered chatbots. To investigate caregivers’ expectations of AI chatbots for caregiving support, the moderator employed a semi-structured question guide with open-ended questions, allowing participants to elaborate freely on their perspectives. The moderator probed three key areas: (1) participants’ expectations of AI chatbots in providing action-facilitating and nurturant support, (2) the reasons behind these expectations, and (3) participants’ perceived advantages and limitations of AI chatbots compared with human sources of support. A sample open-ended question included: “How do you think an AI chatbot should perform when providing caregiving-related information?”

Data Analysis

After all focus group sessions were completed, a student assistant whose native language was English transcribed the video and audio recordings verbatim, including both verbal and nonverbal expressions of the participants. To protect participant confidentiality, identifiable information was removed from the final transcripts, with each participant’s name replaced by an alphanumeric code (e.g., D1P1 stands for participant 1 from the Millennials/Generation Z-ADRD group).

A constant comparative approach (Glaser et al., 1968) was used to analyze the transcripts using NVivo software. Two coders, a doctoral student and a research assistant in social science, engaged in a three-step analysis: open coding, axial coding, and theme development (Tracy, 2024). In the open coding stage, both coders read the transcripts line-by-line and independently generated initial codes. They constantly compared all initial codes to identify similarities and differences and then discussed the individual codes to resolve any disagreements. During the axial coding stage, both coders categorized the initial codes into second-level codes, which served to theorize the transcripts. This process was guided by theoretical concepts examined in the literature review. For example, the HMC framework helped structure the data around different dimensions of user expectations. In addition, the concept of machine heuristics informed the organization of codes related to the cognitive shortcuts and assumptions participants made when forming expectations of AI chatbots. In the final stage, the coders collaboratively refined and merged the second-level codes into higher-level themes to address the two research questions (Tracy, 2024). Throughout the analysis, we adopted an abductive analytic approach (Thompson, 2022), moving iteratively between the empirical data and theoretical frameworks to allow for both emergent insights and theory-informed interpretation.

Results

In this study, all participants were current or former caregivers of senior family members with chronic health conditions. Most were caring for parents or parents-in-law, with a smaller number caring for grandparents, spouses, or siblings. Their caregiving responsibilities were wide-ranging, covering both care recipients’ daily needs (e.g., bathing, feeding) and complex independent-living tasks (e.g., medical appointments, medication management, and decision-making). Participants frequently highlighted challenges and unmet needs in caregiving, including the heavy scheduling of hospital visits and logistical burdens, difficulties in accessing timely and reliable health care information, emotional strain, and social isolation. While support was available from family members, paid helpers, health care professionals, peer groups, and online resources, many felt these were insufficient. For example, several reported being unable to obtain genuine understanding and empathy from family members and friends and noted that caregiver-specific training was lacking within the health care system. In addition, most participants had prior experiences with AI chatbots, such as ChatGPT and Google Gemini, or with rule-based chatbots on shopping platforms or government websites.

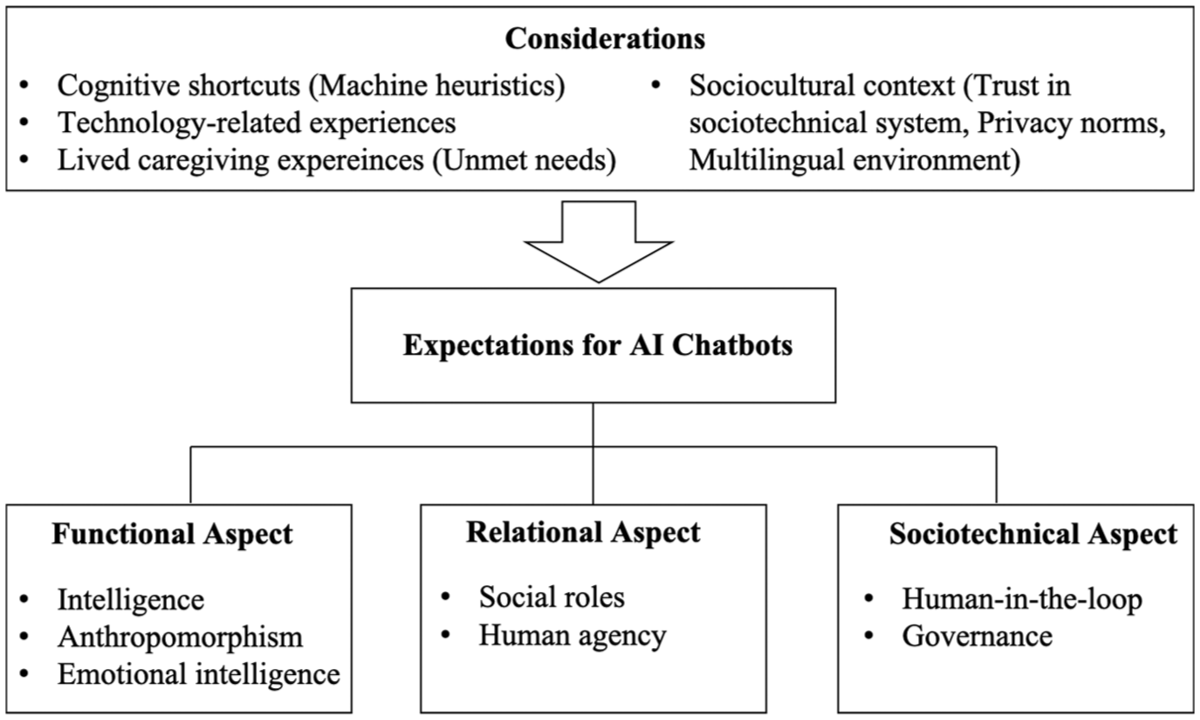

Overall, participants held multidimensional expectations of AI chatbots, including functional, relational, and sociotechnical aspects, largely reflecting their mental models. Mental models refer to individuals’ cognitive representations of external reality (Johnson-Laird, 1983; Jones et al., 2011), often informing how they envision unfamiliar topics (Grimes et al., 2021; Ho & Tan, 2023; Southwell et al., 2018). In this study, mental models are participants’ understanding of how AI chatbots work and their capabilities and limitations in the context of caregiving support. These mental models shaped expectations and drew upon different sources, including personal beliefs, prior experiences with related technologies, and cultural knowledge shared within a community (Jones et al., 2011; Southwell et al., 2018). Correspondingly, we found that participants’ mental models and expectations of AI chatbots stemmed from their machine heuristics, prior interaction experiences with AI or chatbot technologies, lived caregiving experiences, and the sociocultural context in Singapore.

Across the sample, most expectations and mental models were consistent across participant groups, with one notable exception: perceptions of human agency across caregiver generations. Younger caregivers were more open to relying on AI chatbots for a wide range of caregiving tasks, whereas older caregivers placed greater trust in their own subjective experiences when making health care decisions.

To present the findings, we first outline the subthemes related to participants’ expectations and mental models of AI chatbots across functional, relational, and sociotechnical aspects (RQ1). We then summarize how cognitive, experiential, contextual, and sociocultural considerations interplayed to shape these expectations and mental models (RQ2). Figure 1 illustrates a conceptual framework of how participants form their expectations of AI chatbots in the context of caregiving support. A detailed account of participants’ expectations and related considerations is provided in the Supplemental Appendix.

Figure 1. An Overview of User Expectations of AI Chatbots and Their Considerations in the Caregiving Context.

RQ1: Multidimensional Expectations

Functional Aspect: Intelligence, Anthropomorphism, and Emotional Intelligence

Participants widely expressed their expectations of functional aspects of AI chatbots, including intelligence, anthropomorphism, and emotional intelligence. Intelligence represents AI chatbots’ machine-like attributes, while anthropomorphism and emotional intelligence represent human-like qualities in AI chatbots. Specifically, anthropomorphism refers to interface-driven heuristics, such as voice, tone, and culturally relevant language use, while emotional intelligence encompasses deeper content-level evaluations of AI chatbots’ capabilities in detecting, interpreting, and responding appropriately to human emotions.

Intelligence

Participants across groups expected AI chatbots to be intelligent, that is, able to provide accurate and relevant information and personalized solutions to meet their informational and practical needs in caregiving. They expected that AI chatbots should “really understand my problems and offer really tailored, pinpointed solutions” (D2P4). In particular, some participants emphasized the importance of AI chatbots providing localized health care information, such as hospital information and support groups for caregivers, given its relevance to their caregiving experiences. Participants also envisioned chatbots creating “personalized profiles” for both caregivers and care recipients based on user information such as older adults’ health conditions, physiological indicators, and daily routines (N1P7). Building on this information, AI chatbots could make tailored caregiving plans for caregivers, covering older adults’ disease progression, medical appointment schedules, and reminders.

However, participants held contradictory expectations about AI’s capabilities to provide medical information. While some believed that AI chatbots should provide up-to-date and accurate information regarding diagnosis, medication, and treatment, others argued that the technology should not be used for medical advice. The failure of existing AI chatbots, such as ChatGPT, to mention medication side effects made participants skeptical about their capabilities to provide reliable medical advice (D2P3). As one participant mentioned, “AI chatbots can also be dangerous and misleading and mislead caregivers into giving the wrong care for the patient” (N1P8).

Overall, participants had positive expectations of the intelligence of AI chatbots, except for their capabilities for providing accurate medical advice. These perspectives reflect a mental model of AI chatbots as an “information engine”: generally capable of processing data and generating localized, tailored health care guidance, but unreliable for complex medical decision-making.

Anthropomorphism

Participants expected AI chatbots to exhibit anthropomorphic qualities through human-like interface design and communication modalities. They emphasized that AI chatbots should communicate with caregivers through voice-based interaction using a human-like voice so that interaction would “feel like talking with a real person” (N2P1). To achieve voice-based interaction, AI chatbots were expected to recognize and understand users’ multiple languages, dialects, and accents. In particular, participants expected that AI chatbots should understand Singaporean caregivers’ diverse languages, including Singlish, Hokkien, and Cantonese (N3P5). These features were viewed as enhancing engagement in human–AI interaction, especially in a multilingual caregiving context.

This human-like attribute can also be used to support older adults. Some participants mentioned that older adults experience loneliness and seek emotional support from caregivers. In these situations, AI chatbots were expected to “chat” with older adults to meet their social and emotional needs when caregivers are unavailable, which in turn “helps the caregiving journey” (D1P4).

These preferences reflect participants’ consistent and positive expectations for anthropomorphic qualities of AI chatbots. They suggest a mental model of AI chatbots as human-like social partners, in which a natural voice and culturally relevant language recognition enhance human–AI interaction.

Emotional intelligence

Unlike anthropomorphism, participants expressed mixed expectations of AI chatbots’ emotional intelligence. Several participants were optimistic that AI chatbots could detect users’ emotional states and express empathy. For example, they envisioned the technologies could “listen to your situation and have a feeling for you” (N3P4) or “recognize our caregiver burnout” (D1P5) and then give emotional support during emotional discussions.

However, a larger group of participants believed that AI chatbots could not be relied upon for emotional support. They argued that chatbots cannot sensitively detect human emotions or truly understand their feelings, thus failing to provide authentic emotional support. This negative expectation reflects participants’ mental model of AI chatbots—AI chatbots could not achieve emotional intelligence comparable to humans, and they believed that emotional support should still mainly come from human touch.

Together, these contrasting expectations on emotional intelligence revealed a tension between the desire for emotionally responsive AI and discomfort with AI becoming too human-like in providing emotional support.

Relational Aspect: Social Roles and Human Agency

Participants also expressed expectations of their relationship with AI chatbots. They assigned social roles to AI chatbots for caregiving support based on existing human analogues in health care systems and their social networks. They also had expectations for their own agency in relation to AI chatbots during interactions, termed human agency in this study.

Social Roles

Participants expected that AI chatbots would assume multiple social roles—AI as a health care advisor, personal assistant, therapist, and companion. Several participants from Generation X and the Baby Boomer generation emphasized the health care advisor role, expecting AI to be “smarter” (N2P1) and “more professional” (N2P7) than informal caregivers in health care expertise and knowledge. This role was especially salient when mentioning AI chatbots’ capability in providing health care information. As one participant explained, “We don’t know what the treatment is or what causes certain things. So, we will trust that the AI will be giving us professional advice.” (N2P7).

Several participants from the Millennial and Generation Z generations also viewed the personal assistant as another social role of AI chatbots. They expected AI chatbots to arrange diverse practical caregiving tasks for them, such as logistics issues and medical appointments, as discussed earlier. One participant captured this expectation: “AI chatbots are almost like a helper, but not physically, that is arranging everything for you” (N1P4). In this assistant role, participants also expected AI chatbots to help check their bias in decision-making, like “an objective partner” (N1P4).

In the domain of nurturant support, participants across groups expected AI chatbots to act as a therapist that could facilitate users’ introspection and “provide new perspectives” when they were stuck with negative emotions (N1P2). Those who had positive expectations of AI’s emotional intelligence expected AI chatbots to serve as a companion, “a listening ear” that could accept venting unconditionally and accompany caregivers in times of need or when they felt stigmatized talking about sensitive topics with other people (D3P5).

These role assignments reflect a mental model of AI chatbots as analogues to existing caregiving actors rather than as entirely novel entities. Participants interpreted AI chatbots through familiar social roles in the health care system. Specifically, preferences for certain roles demonstrate their diverse caregiving challenges, including uncertainty in health care, difficulties in accessing trustworthy information and resources (health care advisor), heavy logistical burdens (personal assistant), and emotional strain and stigma in caregiving (therapist and companion).

Human agency

Participants emphasized the importance of human agency in interactions with AI chatbots, including informal caregivers’ ability to customize the attributes of AI chatbots and to make informed decisions based on the AI’s output. Participants across groups expected to determine AI chatbots’ appearance and communication style in interactions. Some envisioned embodied chatbots with customizable avatars, such as ones simulating their family members, while others preferred to choose AI chatbots’ communication styles based on their personal preferences.

In addition, participants from the Generation X and Baby Boomer groups emphasized that caregivers should make health care decisions by themselves based on AI chatbots’ assistance. AI chatbots were considered useful in providing health care information and suggestions for reference, but “when something happened, the decision still fell back on caregivers” (D3P8). As one participant explained,

I feel caregiving is still on a personal basis, and I can’t rely on everything based on the chatbot and tell me what I need to do. . . I can live without chatbot because I’ve been doing that for a few years now on how to take care of my dad. (N2P3)

These expectations reflect a mental model of AI chatbots as tools or advanced machines rather than autonomous agents. This view was particularly salient among older caregivers with stronger self-efficacy and extensive caregiving experience, who emphasized their responsibility in decision-making and relied on their own judgment. Rather than delegating authority to AI, they positioned chatbots as supportive tools that empower—rather than replace—informal caregivers.

Sociotechnical Aspect: Human-in-the-Loop and Governance

An emerging theme from this study was participants’ expectations about the sociotechnical aspects of AI chatbots, referring to how AI chatbots should be developed and managed within the broader social and technical context. This includes expectations for human-in-the-loop systems, where health care professionals remain involved in chatbot operations, and for governance, where regulatory bodies oversee the ethical use of AI.

Human-in-the-loop

Participants across groups expected an AI-human collaboration mode where AI chatbots and different health care professionals complement each other to create an integrated approach to caregiving support. This “multidisciplinary” support system requires AI capabilities and human expertise to jointly address caregiving needs (D1P4).

In the development phase of AI chatbots, health care professionals should be involved in authenticating AI-generated information to ensure its accuracy. During human–AI interaction, participants expected to involve human sources of support, such as doctors, nurses, pharmacists, caregivers, and social workers when situations exceeded the AI chatbots’ capabilities. For instance, with complex medical issues beyond the chatbot’s capabilities, participants expected AI chatbots to seamlessly transfer cases to health professionals for more professional support (D1P4). This may help effectively reduce their hospital visits as well as relieve the health care burden. Similarly, in the nurturant support domain, informal caregivers desired immediate interventions by social workers and therapists when AI chatbots detected caregiver burnout or distress in communication.

This expectation reflects a mental model of AI chatbots as collaborators within a human-in-the-loop system, where human expertise complements machine efficiency. Participants believed that AI chatbots lack judgment compared to human health care professionals, especially when dealing with emergent and complex problems that are prevalent in the caregiving context.

Governance

Participants across groups had high expectations for the government and health care institutions to establish and enforce guidelines to ensure the ethical and responsible use of AI chatbots in health care. They expected AI chatbots to be developed and operated by legitimate providers, supported by laws and regulations. Many participants suggested local health care institutions host AI chatbots, such as “SingHealth” (D1P2).

Furthermore, they expected the government to regulate the operation of AI chatbots to enhance data security and protect user privacy. They proposed using Singapore’s Personal Data Protection Act (PDPA) to govern AI chatbot operations (D3P7). The PDPA could govern the collection, use, disclosure, and protection of personal data. These government efforts can ensure AI chatbots’ authority and credibility, improving participants’ willingness to adopt these emerging technologies.

These expectations reflect informal caregivers’ desire for responsible and well-regulated AI to protect their privacy in health care. Many participants considered caregiving-related issues disclosed to AI chatbots, such as their emotional problems and older adults’ health conditions, to be private. They worried that their personal information could be leaked to “various groups automatically” (D3P1), exploited for other purposes such as advertising or scams, and used in ways that threaten them. As one participant mentioned, “At the end of the day, can I have the assurance that it will not be used against me?” (D2P7).

RQ2: Considerations in Expectation Formation

Across the above-mentioned expectations and mental models of AI chatbots, four themes emerged as key considerations—machine heuristics, technology-related experiences, lived caregiving experiences, and sociocultural contexts—in the formation of these expectations.

Machine Heuristics

Participants often drew on intuitive beliefs about machines when articulating their understanding and expectations of AI chatbots. These heuristics served as a baseline reference point in how participants described their mental models of whether AI was envisioned as a reliable “information engine” or dismissed as incapable of handling complex health care decisions.

Specifically, positive heuristics that AI is accurate and efficient were associated with expectations for AI chatbots to handle logistics, scheduling, or information retrieval. By contrast, negative heuristics that AI is inherently unemotional and lacking judgment limited trust in AI for emotional support or complex medical advice. As one participant explained, “it can only provide very basic, minimum support. . .the AI chatbot might not be able to read our mind and solve our problem” (D2P5). Instead, participants believed caregivers and health care professionals should remain responsible for emergent and complex problems, which was reflected in their expectations for human agency and human-in-the-loop.

Technology-Related Experiences

Another key consideration was participants’ prior interaction experiences with AI systems, such as ChatGPT, Google Gemini, and rule-based service chatbots in banking or e-commerce. These experiences—whether disappointing or encouraging—informed how participants described mental models of what AI could and could not do, which in turn were evident in the multidimensional expectations they expressed for AI chatbots.

Negative experiences with inaccurate or irrelevant responses were linked to doubts about AI’s reliability in medical advice, despite general trust in its accuracy, and to expectations for human-in-the-loop safeguards. Similarly, difficulties with accent or dialect recognition were connected to demands for culturally adaptive communication. For example, one participant explained, “Every time the chatbot doesn’t understand me, I got to repeat myself. . .It really adds to my frustration. So, recognizing different accents is a must-have ability” (N1P6). However, some positive experiences recalibrated earlier machine heuristics in the context of emotional support. A few participants described surprisingly empathetic conversations with AI, which encouraged them to imagine chatbots as potential companions or therapists.

Lived Caregiving Experiences

Caregivers’ expectations were grounded in the realities of daily responsibilities, reflecting their unmet informational, logistical, emotional, and social caregiving needs. They envisioned AI chatbots’ potential to compensate for support gaps in the existing health care system.

Participants’ uncertainty about medical information was connected to expectations for chatbots to act as health care advisors, while heavy logistical burdens contributed to optimism about AI as a personal assistant. As one participant explained, “We are trained in terms of loss, morals, skill set, money making, family, and friends, but we are not trained as caregivers.” (N1P9). In addition, participants’ openness to emotionally responsive chatbots often arose from social isolation and unmet emotional needs. Limited understanding from family and friends in coping with stress and burnout led some participants to hope for empathy from AI chatbots, even while recognizing the technology’s limitations in providing social support.

Sociocultural Context

Beyond cognitive, experiential, and contextual considerations, expectations were also embedded in the sociocultural contexts. Singapore’s unique multilingual cultures, privacy norms, and institutional trust were integral to how participants described their expectations on the functional and sociotechnical aspects of AI. For example, the country’s multilingual landscape was associated with participants’ expectations for chatbots capable of handling diverse languages, accents, and dialects.

In addition, strong privacy norms among Singaporeans heightened concerns about disclosure of health and emotional information, reinforcing expectations for governance and data protection. High institutional trust also aligned with participants’ imaginings of AI embedded in a human-in-the-loop system, overseen by health care professionals and regulated by government agencies. As one participant put it, “Definitely we would trust government bodies” (D1P6). Thus, privacy norms and institutional trust were described as important in participants’ conceptualizations of AI chatbots as a legitimate source of support only when institutional safeguards and credibility were in place.

Taken together, the multidimensional expectations were not static preferences but rather were constructed through these considerations. By grounding their mental models in the interplay among heuristics, prior technology experiences, lived caregiving needs, and the sociocultural environment, participants articulated their understandings of what AI chatbots could—and could not—do in caregiving support.

Discussion

This study explored how informal caregivers of older adults formed their expectations of AI chatbots for caregiving support. Through focus group discussions, we developed a conceptual framework about informal caregivers’ multidimensional expectations—encompassing AI chatbots’ functionality, AI-human relationships, and the sociotechnical system in which AI chatbots operate. Overall, participants have nuanced, context-dependent expectations of AI chatbots for caregiving support. While they have high expectations of AI chatbots to assist with informational and practical tasks in caregiving, they remain skeptical regarding the provision of accurate medical advice or genuine emotional support. These expectations are not static; rather, they are dynamically formed by the interplay of machine heuristics, prior technology experiences, lived caregiving experiences, and the broader sociocultural contexts.

Sociotechnical Dimensions of Expectations

The recognition of sociotechnical aspects as a dimension of expectations marks a point of departure for research on HMC and human–technology interaction. While prior work has examined perceptions of AI’s capabilities and human–AI relationships (e.g., Abendschein et al., 2021; Lew & Walther, 2023; Meng et al., 2023), far less is known about users’ expectations for how AI technologies should be developed, managed, and regulated in health care. Our findings show that caregivers expect both health care professionals and government institutions to play central roles in ensuring responsible use of AI in health care. This expands HMC scholarship by positioning institutional actors—not only individual users or technologies—as crucial to expectation formation. Future research should investigate sociotechnical dimensions more explicitly, examining how users envision institutional roles in human–AI interactions across contexts.

Task-Based Differentiation in Expectations

Another contribution is the identification of task-based differentiation in informal caregivers’ expectations, providing a nuanced understanding of mental models of AI chatbots in caregiving contexts. Unlike prior research that treats user expectations of communicative AI as static (Chew & Achananuparp, 2022; Su et al., 2021), our findings reveal that participants actively adjust their expectations depending on the specific caregiving support needed. They reported higher expectations for chatbots’ intelligence in logistical and informational tasks, conceptualizing chatbot technologies as information engines. In contrast, they expressed lower expectations for AI in domains requiring accurate medical advice, complex judgment, or emotional sensitivity, viewing such capabilities as uniquely human. This distinction suggests informal caregivers’ awareness of the boundaries of AI—what AI could and could not do based on the realities of caregiving. It also extends Yang and Sundar’s (2024) conceptualization of positive and negative machine heuristics by showing how caregivers apply and navigate these heuristics across different caregiving tasks. These findings point to promising directions for future research on how users perceive and evaluate AI technologies across different support domains.

Human–AI Boundary in Emotional Intelligence

The contrast between expectations of AI and humans in providing emotional support adds a new perspective to the prevailing argument in HMC research that users viewed AI chatbots as less socially attractive than human supporters (Bae Brandtzæg et al., 2021; Lew & Walther, 2023). Participants consistently described humans as having more advanced emotional capabilities than AI chatbots, echoing Lysø et al.’s (2024) observation of human preference for emotional support in health care. Extending this, our findings reveal caregivers’ nuanced definitions of emotional intelligence in caregiving contexts, including recognizing caregiver burnout, understanding emotional needs, offering empathetic responses, and providing fresh perspectives. These nuanced expectations reinforce a boundary that distinguishes authentic emotional support from mechanized substitutes. This suggests an important direction for future research on people’s understanding of AI’s emotional intelligence in emotionally and socially sensitive contexts.

Notably, our findings point to a clear ambivalence in participants’ expectations regarding intelligence, anthropomorphism, and emotional intelligence. On the one hand, they wanted AI that could demonstrate intelligence, speak in natural-sounding voices, and respond with a degree of emotional sensitivity. On the other hand, they were uneasy when chatbots seemed too human-like or attempted to mimic authentic emotional support in domains they regarded as uniquely human. This paradox aligns with the uncanny valley effect (Mori et al., 2012; Stein & Ohler, 2017), which describes the unease people feel when machines approximate—but never fully achieve—human realism. Similar patterns have been reported in other contexts, where users judged AI chatbot emotional expressions as “forced” or “insincere” (Krauter, 2024; H. Li & Zhang, 2024). These findings refine the functional and metaphysical dimensions of the HMC framework by showing that the appropriate degree of human-likeness in communicative AI is context-specific; greater human-likeness does not automatically translate into greater trust. Future work should examine how chatbot design can balance expertise, supportive communication, and subtle empathy to mitigate uncanny valley effects in caregiving contexts.

Human–AI Collaboration in Complex Caregiving Tasks

Another contribution concerns the irreplaceable role of human expertise in complex caregiving. Earlier literature has found that users were aware of the strengths and weaknesses of AI in clinical settings, recognizing that while AI can facilitate more efficient and personalized medication, health care professionals are still important for communication and decision-making (Amann et al., 2023; Lysø et al., 2024). Similarly, our participants stressed that AI would not replace humans in caregiving support and emphasized the importance of involving health care professionals in the implementation of AI chatbots, especially in emergent and complex medical situations. This suggests users’ trust in health care professionals in the sociotechnical system of AI and reinforces the “human-in-the-loop” perspective in health care. This finding also suggests the importance of collaborative intelligence highlighted in a growing body of HMC research: effective support arises not from AI autonomy alone but from meaningful human–AI collaboration (Altamimi et al., 2023; Holzinger, 2016; Lysø et al., 2024).

Dynamics of Expectation Formation

Our attempt at identifying the interplay between cognitive, experiential, contextual, and sociocultural considerations in expectation formation advances theorization of the HMC and provides insights into science communication. While machine heuristics—such as the belief that “machines are accurate” or “machines are unemotional”—may inform initial perceptions (Sundar, 2020; Yang & Sundar, 2024), caregivers’ past experiences with AI or chatbots often play a more critical role in shaping their expectations. Negative interaction experiences, such as encountering inaccurate information and breaches of data privacy, heightened skepticism and lowered expectations of AI chatbots’ capabilities. In contrast, positive experiences with AI’s intelligence led to greater optimism about AI chatbots’ potential in providing social support. These “expectation violation” experiences, either with positive or negative valence, highlight that users’ direct interactions with earlier generations of AI can reshape later expectations (Guzman & Lewis, 2020; Richardson et al., 2021). This finding aligns with broader research on trust in AI, which demonstrates that trust perceptions are not static but evolve through user experience (National Science Board & National Science Foundation, 2024; Sanders et al., 2017; Tenhundfeld et al., 2020).

Beyond experiences with AI technologies, caregivers’ subjective caregiving experiences, a key contextual consideration, could also influence their expectations. The daily realities of caregiving, ranging from the need for accurate and timely information to the desire for personalized support plans and even emotional reassurance, inform what caregivers hope for and expect from AI. For many, the intensity and diversity of caregiving responsibilities do not simply reinforce existing beliefs about AI; instead, these needs often complicate or challenge reliance on simple machine heuristics. For example, caregivers struggling with limited support networks may wish for AI chatbots that can recognize distress and provide empathetic responses, even as they remain skeptical of current technological capabilities. This dynamic reflects the complexity of caregiving, as documented in prior research (Roth et al., 2015; Schulz et al., 2020), and highlights the risk of treating user expectations as uniform. Our findings suggest that meaningful evaluation or design of AI chatbots must be grounded in a thorough understanding of users’ lived experiences and support needs (H. Li & Zhang, 2024). Attending to these challenges is not only essential for developing AI systems that are relevant and responsive but may also help alleviate caregiver burden and enhance care quality.

Sociocultural Contexts and Trust in AI

The identification of sociocultural considerations such as multilingual contexts and trust in the government and health care institutions brings an additional perspective to the bodies of literature on human–technology interaction and science communication. Participants expected chatbots to recognize multiple languages, dialects, and accents, which is a unique expectation rooted in Singapore’s well-established multilingual and multicultural environment (Cavallaro & Chin, 2020). In addition, participants preferred chatbots developed, operated, and regulated by government and health care institutions, challenging the findings from Western contexts where industry-driven development is often emphasized. For example, Zhang and Dafoe (2019) found that Americans placed more trust in technology companies and non-governmental organizations than in the government to manage AI. In contrast, our findings suggest Singaporeans place general trust in the government in the sociotechnical system (Goh & Ho, 2024), including its capability to manage and regulate AI chatbots. Specifically, Singaporeans consider AI chatbots developed by the government and reputable organizations to be more credible and demonstrate a higher level of trust in them compared to other AI chatbots (Ho et al., 2025; Ou et al., 2025). Therefore, these findings show that user trust in AI is embedded not only in design features but also in sociocultural values and governance structures. Thus, we recommend a context-sensitive approach to studying and implementing AI chatbots (Liu et al., 2024). Future research should also examine trust perceptions and expectations of health care AI across diverse sociocultural environments.

Theoretical Implications

This study makes several theoretical contributions. It extends the human–machine communication (HMC) perspective by introducing a conceptual framework of users’ multidimensional expectations of AI chatbots in caregiving support. In particular, the emergence of sociotechnical considerations as a new dimension underscores the need to examine chatbots not only as communicative partners but also as components embedded within broader health care and governance systems. We also refine the machine heuristics perspective by showing that caregivers do not uniformly apply positive or negative heuristics; rather, expectations vary by task type. In addition, we contribute to the theorization of emotional intelligence in HMC by revealing the boundaries caregivers draw between “authentic” human empathy and chatbot emotional responses, highlighting how inappropriate anthropomorphic design may trigger uncanny valley effects.

Furthermore, this study advances a dynamic perspective of expectation formation, demonstrating that expectations of communicative AI are not fixed at first encounter but evolve through the interplay of cognitive, experiential, contextual, and sociocultural considerations. This insight invites future research to develop measurable components of these factors and test their predictive power in caregiving and cross-cultural settings. Finally, to our knowledge, this study provides the first in-depth account of informal caregivers’ nuanced expectations of AI chatbots for support. Our findings show how task demands, emotional labor, and lived caregiving experiences shape caregivers’ unique expectations of AI in health care. Future research could build on this work to assess the real-world impact of integrating AI chatbots into caregiving systems.

Practical Implications

By identifying informal caregivers’ expectations, this study has practical implications for different stakeholders in AI chatbot development, management, and communication. For technology developers, our findings highlight the need to enhance chatbot intelligence for accurate health information while tailoring systems to context. Capabilities such as multilingual support and access to localized resources are essential in diverse societies. To reduce user discomfort and avoid expectation violations, developers should balance technical expertise with supportive communication and subtle expressions of empathy. Clearly defined social roles—such as health care advisor, personal assistant, or companion—can further align chatbot functions with caregivers’ needs and foster positive perceptions.

Health care practitioners should promote effective collaboration between AI and human experts. By leveraging the strengths of both AI and health care professionals, a more integrated support system can be achieved to address the multifaceted needs of informal caregivers. Policymakers should also govern the implementation of AI chatbots to address the public’s privacy and security concerns by establishing data protection laws and regulations. These approaches can contribute to ethical and legal AI in health care.

The findings also offer guidance for science communication professionals in engaging the public on the role of AI chatbots in caregiving. First, given informal caregivers’ mental models of these tools, it is important to frame AI chatbots as complementary support. Communicators should emphasize chatbots’ supportive roles while acknowledging the irreplaceable value of human empathy and judgment in caregiving. Second, communicators should carefully articulate AI’s actual emotional capabilities in ways that resonate with informal caregivers’ own understandings of emotional support while minimizing expectation violations. Third, because some concerns about misinformation—such as omissions of side effects—may arise from limited digital or prompt literacy, communication professionals should develop educational interventions that include onboarding resources to help caregivers frame queries effectively and interpret responses critically. Such strategies can strengthen both caregivers’ trust in AI chatbots and their perceptions of the technology’s usefulness in caregiving contexts.

Limitations of Study and Direction for Future Research

This study has several limitations. First, it focused on informal caregivers’ expectations of AI chatbots without measuring their AI literacy or AI use behaviors. Because AI literacy and usage may influence people’s perceptions of AI chatbots in health care, future research should examine these factors to provide evidence of individual differences in expectations of AI chatbots. Second, our findings offer an in-depth understanding of caregivers’ expectations in Singapore, particularly among English-speaking and tech-aware populations, but they may not capture the perspectives of caregivers from other linguistic, cultural, or socio-economic backgrounds. As such, participants’ positive and negative expectations of AI chatbots should be viewed as exploratory and context-specific rather than universally representative. Future research with more diverse samples, including caregivers from rural areas, lower-income groups, and different national contexts, is needed to validate and extend these insights. Third, because individuals’ expectations may change with the rapid development of AI chatbots and with new user experiences, focus group discussions may not capture the dynamics of caregivers’ sociotechnical expectations. Future research would benefit from longitudinal studies that track how people’s expectations evolve over time and across different health care contexts.

Conclusion

This study offers valuable insights into informal caregivers’ expectations of AI chatbots for social support. While participants expect AI chatbots to facilitate caregiving support through improved intelligence and anthropomorphism and by fulfilling a range of social roles, they emphasize the irreplaceable roles of human expertise and human touch in the development and implementation of AI-based support. Human actors in health care systems should predominate in providing medical advice, emotional support, and decision-making in caregiving, with AI chatbots serving as complementary tools. These sociotechnical expectations are shaped not only by users’ understanding of technologies but also by their prior technology experiences, lived caregiving experiences, and sociocultural context. Future research should further investigate how these factors interact to shape both positive and negative user expectations of AI in health care.

Supplemental Material

Supplemental material, sj-docx-1-scx-10.1177_10755470251398722 for The Future of AI Support in Health Care: Understanding Informal Caregivers’ Multidimensional Expectations of AI Chatbots by Nova M. Huang and Shirley S. Ho in Science Communication

Footnotes

Acknowledgements

We would like to express our gratitude to Dr. Chan Guo Wei Alvin, Dr. Wen Rong, and Dr. Simon Mingyan Lin for their valuable input in reviewing our video material on AI chatbots. We also thank Mr. Bryan for his assistance in conducting the focus group discussions and data analysis. Large language models were utilized for copy-editing purposes in the preparation of this manuscript.

Ethical Considerations

This study obtained approval from the Institutional Review Board (IRB) of Nanyang Technological University (IRB-2023-258). All participants read and signed consent forms before participating in the study.

Funding

The authors disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work is supported by the Singapore Ministry of Education AcRF Tier 1 Award No. RG111/22. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the Ministry of Education.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data for this study can be obtained by submitting a request to the corresponding author.
