Abstract

This study conducted a 2 (chatbot expertise cues: high vs. low) × 2 (correction sidedness: one-sided vs. two-sided) between-subjects experiment to investigate the effects of chatbot features and output framing on misinformation correction and public perceptions of cultivated meat. Results highlighted the importance of chatbot expertise cues in shaping credibility perceptions of misinformation correction, with two-sided corrections from high-expertise chatbots perceived as more credible than those from low-expertise chatbots. Credibility perceptions mediated the interaction effect between chatbot expertise cues and correction sidedness on individuals’ attitudes and consumption intentions towards cultivated meat. Both theoretical and practical implications of these findings were discussed.

The evolution of artificial intelligence (AI) has significantly impacted the creation and dissemination of information, introducing both challenges and opportunities within the information environment (Wolfe & Mitra, 2024). This development has been particularly influential in the field of science communication, where AI exhibits a dual role. On one hand, the misuse of AI exacerbates the spread of scientifically relevant misinformation by simplifying and accelerating the production of deceptive content, a phenomenon prominently observed during the COVID-19 pandemic (Monteith et al., 2024). On the other hand, AI offers advanced algorithmic solutions and computational tools that significantly enhance fact-checking initiatives and reduce the spread of scientific misinformation (Demartini et al., 2020; Moon et al., 2023; Sun & Pan, 2024). This dual role of AI—its capacity to both hinder and aid science communication—necessitates a deeper investigation into how AI tools can be optimized to enhance the accuracy and impact of science-related messaging.

Research has demonstrated the efficacy of AI tools, such as conversational chatbots, in achieving persuasive objectives across various contexts in science communication (e.g., Wang & Peng, 2023). For instance, Altay et al. (2022) indicated that AI-enabled chatbots could foster positive attitudes toward novel food technologies (e.g., genetically modified [GM] foods) by enhancing human-AI interactive argumentation. Similarly, Moon et al. (2023) observed that fact-checking COVID-related misinformation using AI sources led to less motivated reasoning than fact-checking attributed to human experts. Despite the evident potential of AI in persuasive science communication, its effectiveness in combating science-related misinformation remains unclear. Further examination is needed to understand how the characteristics of AI agents and the features of the misinformation corrections they deliver might affect people’s resistance to scientifically relevant misinformation and influence their attitudes and behavioral intentions toward scientific issues and technological innovations.

Recent studies on persuasion have highlighted the significant impact of AI features on individuals’ attitudes and behaviors (e.g., Cheung & Ho, 2024; Ho & Cheung, 2024; Wu et al., 2024). For example, Wu et al. (2024) found that the specialization or expertise of AI agents substantially affects persuasion outcomes, with specialized applications more likely to engender greater trust and behavioral intentions than their generalist counterparts. Furthermore, the framing of AI-generated content shapes individuals’ attitudinal and behavioral responses; for instance, products recommended by AI as vices (negatively framed) elicit stronger adverse reactions than those framed as virtues (Yang et al., 2024). While the individual effects of AI expertise and content framing are well-documented, the interaction between these two factors in AI-mediated communication remains underexplored. Therefore, when deploying AI chatbots to address science-related misinformation, it is essential to examine how chatbot characteristics (e.g., expertise) and the construction of the misinformation corrections they provide (e.g., correction sidedness) jointly influence individuals’ cognitive, attitudinal, and behavioral responses. This calls for further integration of the theoretical frameworks from human-computer interaction and media framing to enhance the effectiveness of AI-facilitated misinformation correction and foster public understanding of scientific issues.

Informed by the theoretical perspectives of the Computers as Social Actors (CASA) paradigm (Nass & Moon, 2000) and message sidedness theory (e.g., Allen, 1991), this study examines the role of chatbot expertise and the sidedness of misinformation correction provided by the chatbot in combating scientific misinformation and shaping both attitudinal and behavioral responses toward emerging technologies. The CASA paradigm suggests that individuals interact with computers similarly to how they interact with humans, applying comparable heuristics to both human-computer and human-human interactions (Nass & Moon, 2000). Message sidedness theory posits that a message’s persuasive impact varies by its framing: One-sided messages present a single viewpoint, while two-sided messages include both supporting and opposing viewpoints (Allen, 1991).

Using an experimental design, this study explores how chatbot expertise cues (high vs. low) and correction sidedness (one-sided vs. two-sided) influence individuals’ credibility perceptions of the corrections provided by the chatbot, as well as their attitudes, support, and consumption intentions toward cultivated meat—an emerging and controversial technology particularly susceptible to misinformation. Theoretically, this study advances our understanding of how the source characteristics and output framing of AI chatbots individually and jointly shape public perceptions of scientifically relevant issues, contributing to the literature on human-AI interaction, science communication, and misinformation correction. Practically, these findings could inform strategies for employing advanced communication technologies, such as AI chatbots, to effectively counter misinformation in scientific domains.

Literature Review

Study Context: Scientific Misinformation on Cultivated Meat

This study evaluates the effectiveness of AI-powered chatbots in correcting misinformation and shaping public attitudes and behavioral intentions toward cultivated meat, a novel food technology involving the cultivation of animal cells outside an animal’s body (Bryant & Barnett, 2020). Singapore, which in 2020 became the first country to commercialize cultivated meat as part of its efforts to strengthen food security (Ives, 2020), serves as the context for this research. Despite the potential of cultivated meat to provide a sustainable alternative to conventional livestock farming, addressing environmental, health, and ethical concerns (Treich, 2021), low familiarity among the Singaporean public increases its susceptibility to misinformation (Bryant & Barnett, 2020; Ou & Ho, 2024a, 2024b). Prevailing misinformation on cultivated meat includes unfounded claims that cultivated meat is derived from cancerous cells (AP News, n.d.), lacks proper labeling when sold (Mueller, n.d.), and is developed with hidden agendas (Reuters, n.d.). Without effective interventions, such misinformation may persistently skew public perception. This study therefore explores how AI chatbots can be strategically deployed to correct such misinformation and enhance public understanding, ultimately supporting informed discourse on this innovative food technology.

AI Chatbot for Misinformation Correction

Scientific misinformation, defined as information that is misleading or false relative to the best scientific evidence and contradicts statements from entities adhering to scientific principles (Southwell et al., 2022), remains a persistent challenge. Numerous institutions struggle with employing fact-checkers to identify and correct problematic online content, often facing issues related to scalability, quality, and timeliness of corrections (Zhou et al., 2024). In addition, laypersons frequently encounter difficulties in finding reliable sources for fact-checking. A recent study by Aslett et al. (2024) indicated that individuals attempting to fact-check recent misinformation via search engines often failed to locate credible sources, frequently encountering a lack of reliable information to debunk false claims. Consequently, there is a critical need for robust, technology-powered platforms capable of scaling up and accelerating misinformation correction.

In science communication, AI tools such as conversational chatbots have become essential for increasing public engagement and understanding of complex topics (Altay et al., 2022; Wang & Peng, 2023). These tools employ advanced algorithms not only to present information but also to facilitate interactive dialogues, enhancing the dynamism of communication. AI chatbots also serve as effective platforms for information verification and fact-checking, providing accessible and convenient solutions (Zhao et al., 2023). For instance, a fact-checking chatbot developed by Zhou et al. (2024) uses a large language model to efficiently produce high-quality debunking messages, substantially reducing users’ misconceptions. Similarly, Costello et al. (2024) found that dialogs with AI chatbots could reduce belief in conspiracy theories. During the COVID-19 pandemic, various chatbots were deployed globally for fact-checking and misinformation correction, demonstrating their efficacy in combating misinformation (e.g., Amiri & Karahanna, 2022). With features like instant fact-checking, personalization, and interactivity, conversational AI chatbots show considerable potential for addressing scientific misinformation more effectively and efficiently.

Recent studies have shown how chatbot design elements affect their persuasive efficacy in science communication. Specifically, integrating social cues that signal a chatbot’s competence or human-like qualities significantly enhances users’ trust and influences their attitudes and behavioral intentions regarding issues such as biodiversity conservation (Wang & Peng, 2023) and healthcare consultations (Liu et al., 2022). While extensive research has explored the impact of these social cues as source factors influencing user responses, the role of content factors—such as the structure and presentation of chatbot-generated content—remains underexplored. Previous research has demonstrated significant interactions between source factors (e.g., source expertise) and content factors (e.g., message sidedness) in driving attitudinal and behavioral changes in the digital media context (Flanagin et al., 2020), yet their interplay in AI-driven communication remains understudied. Thus, this study aims to explore how chatbot source characteristics and content features of their corrections may jointly influence individuals’ attitudinal and behavioral responses to scientific issues, with a particular focus on chatbot-mediated corrections of scientific misinformation about cultivated meat.

Chatbot Expertise Cues

Expertise cues in a chatbot refer to elements signaling its specialized knowledge, skills, or abilities in a particular domain (Liew & Tan, 2021). These cues are designed to enhance the chatbot’s credibility and trustworthiness by conveying competence, which is crucial for increasing persuasive effectiveness in human-computer interaction (Sundar, 2008). The CASA paradigm suggests that individuals apply similar social heuristics when interacting with computers as they would with humans (Nass & Moon, 2000). According to CASA, users engaging with chatbots for misinformation correction expect the chatbot to demonstrate expertise in the relevant field, interpreting expertise cues as social signals that reflect the chatbot’s competence and specialization (Liew & Tan, 2021). In the context of virtual agents, Liew and Tan (2021) identified various cues that convey agent expertise, including appearance, social prestige, communication style, and specialization labels. For example, specialization cues in web agents that highlight their specific (vs. generalized) functions have been shown to foster greater trust and positive attitudes toward both the agents and the information they present (Koh & Sundar, 2010).

Extensive reviews and empirical studies consistently show that perceived source expertise is a pivotal factor in judging information credibility (Go et al., 2014; Metzger et al., 2003; Ou & Ho, 2024a). In science-related misinformation correction, source expertise is essential for effectively addressing misinformation on complex topics, such as GM foods (Wang, 2021) and the Zika virus (Vraga & Bode, 2017). For instance, Vraga and Bode (2017) found that health misinformation corrections from high-expertise sources, like the Centers for Disease Control and Prevention, were considered more credible than those from laypersons. Similarly, Wang (2021) demonstrated that individuals were more likely to accept a corrective message from an expert source than from a social peer.

High-expertise cues also positively influence user attitudes and behavioral intentions by enhancing the credibility and professionalism of the correction source (e.g., Vraga & Bode, 2017; Yuan et al., 2019). Research shows that perceived expertise directly impacts attitude formation, particularly when users engage with novel or scientifically complex information (Wang & Peng, 2023). Given that cultivated meat is a relatively new and sometimes controversial topic, individuals are likely to form more favorable attitudes if the correction comes from a source perceived as knowledgeable and professional. For example, studies in science communication and food technology (e.g., Yuan et al., 2019) have shown that expert-authored content on novel foods, such as GM foods, is more persuasive, leading to more positive public attitudes.

In addition, support for novel technologies, especially in food science, often hinges on the perceived reliability of the information source (e.g., Chambers et al., 2023). Expertise cues not only imply knowledge but also suggest that the chatbot’s corrections and recommendations are trustworthy and well-informed. This can foster greater confidence in such technologies, thereby increasing support for cultivated meat. Behavioral intentions, such as the intention to consume novel food products, could also be strongly influenced by the perceived expertise of the correction source. When users perceive the source as highly knowledgeable, they are more likely to trust its guidance, leading to increased openness to recommendations (Go et al., 2014). In the case of cultivated meat, users who see the chatbot as an expert in correcting related misinformation will be more likely to consider consuming it, trusting that the corrections are based on accurate, research-backed insights. Studies in health communication (Vraga & Bode, 2017) and food science (Yuan et al., 2019) have demonstrated that high-expertise sources significantly increase individuals’ willingness to adopt behaviors aligned with expert recommendations.

Taken together, given the significant impact of source expertise on credibility perceptions, as well as on attitudinal and behavioral responses to emerging technologies, we propose the following hypothesis:

Effects of Correction Sidedness

Message sidedness refers to the framing of a message based on the number of perspectives it includes, which significantly influences its persuasiveness (Allen, 1991). Specifically, a two-sided message presents arguments both for and against an issue, whereas a one-sided message supports only one perspective (Winter & Krämer, 2012). Two-sided messages can be further classified based on whether opposing arguments are refuted: two-sided non-refutational messages present both positive and negative information without countering the negative, while two-sided refutational messages provide both sides but immediately counter the negative argument to strengthen the positive perspective (Allen, 1991). This study focuses on the two-sided refutational approach, where misinformation is initially acknowledged but quickly countered with corrective information, thereby enhancing the effectiveness of the correction.

Originally explored in advertising and marketing, message sidedness has also been found to influence the selection of science-related articles (Winter & Krämer, 2012) and to enhance the credibility and persuasiveness of misinformation corrections (Wang & Huang, 2021; Xiao & Su, 2021). Two-sided refutational messages, in particular, are considered more persuasive and credible for correcting misinformation, as supported by inoculation theory (McGuire, 1964). According to this theory, presenting a weaker version of an opposing argument within a two-sided message strengthens the persuasiveness of the primary argument by preemptively addressing potential misinformation. This approach not only alerts individuals to misleading information but also bolsters the resilience of their understanding through proactive refutation (Lewandowsky & Van Der Linden, 2021). Research has demonstrated the effectiveness of two-sided correction messages in addressing misinformation on topics such as vaping (Wang & Huang, 2021) and human papillomavirus (HPV) vaccination (Xiao & Su, 2021). Given these findings, we expect that two-sided misinformation correction is more likely to enhance people’s trust in correction messages than one-sided correction.

Two-sided refutational corrective messages can also contribute to positive attitudinal and behavioral shifts toward the subject of correction. When users see that a chatbot presents and refutes opposing views on cultivated meat, they are more likely to view the chatbot as competent, fair, and objective. This perception fosters trust in the message, leading to more positive attitudes toward cultivated meat. Prior research in science communication (Lin & Kim, 2022; Xiao & Su, 2021) has shown that individuals tend to develop more favorable attitudes toward scientific topics when exposed to two-sided messages. Two-sided corrections against misinformation about cultivated meat also foster greater support by acknowledging and addressing users’ potential doubts or misconceptions, thereby enhancing their confidence in this novel food. Studies on controversial topics, such as vaping and HPV vaccination, indicate that two-sided refutational messages increase support by reinforcing the credibility of the message (Wang & Huang, 2021; Xiao & Su, 2021).

Furthermore, the balanced nature of a two-sided correction can positively influence behavioral intentions, such as intentions to consume cultivated meat. When an AI chatbot openly discusses and counters misinformation through a two-sided correction, users are more likely to trust the information provided, which increases the likelihood of adopting the recommended behavior. Inoculation theory supports this effect, positing that refutational messages can preemptively inoculate individuals against misinformation, strengthening their intention to act in accordance with the corrected information (Lewandowsky & Van Der Linden, 2021). Empirical studies in health and food science (Chambers et al., 2023; Vraga & Bode, 2017) show that individuals are more inclined to follow recommendations from credible, two-sided sources, suggesting that a two-sided correction from a chatbot could significantly enhance intentions to try cultivated meat. Therefore, we propose the following hypothesis:

The Interaction Between Chatbot Expertise Cues and Correction Sidedness

The relationship between source expertise and message sidedness in shaping credibility perceptions is well-documented in traditional digital environments, indicating a synergistic effect of these two factors on credibility perceptions. For example, Chen (2016) found that two-sided online product reviews written by experts are perceived as more useful than those authored by novices. Similarly, Flanagin et al. (2020) showed that two-sided messages on online encyclopedia sites enhance users’ perceptions of content credibility, particularly on platforms that blend expert and user-generated content. However, the interplay between expertise cues and sidedness framing in shaping credibility perceptions within AI-mediated misinformation correction has not been thoroughly investigated, highlighting the need for a more detailed exploration of how these factors interact in the context of AI-driven communication.

Moreover, the interplay between message sidedness and source expertise has been shown to significantly influence attitudes and behavioral intentions, albeit not specifically within AI-mediated contexts. Uribe et al. (2016) found that two-sided consumer reviews from high-expertise sources significantly boost purchase intentions compared to one-sided reviews from low-expertise sources. Kamins et al. (1989) found that two-sided advertisements with celebrity endorsements more effectively foster positive attitudes and increase purchase intentions than one-sided advertisements from non-celebrity endorsers. Collectively, these studies highlight the crucial role of message sidedness and communicator expertise in boosting message credibility and shaping recipient responses, suggesting a fertile area for exploration in AI-driven communication strategies.

Building upon established research demonstrating the synergistic interaction effects between source expertise and message sidedness on credibility perceptions, attitudes, and behaviors, this study posits that within AI-mediated communication, the impact of a chatbot’s expertise level may be significantly enhanced by two-sided messaging compared to one-sided messaging. This hypothesis is supported by research indicating that information perceived as unbalanced or from less reputable sources is generally considered of lower quality (e.g., Flanagin et al., 2020). Accordingly, we contend that misinformation corrections delivered by chatbots with higher (vs. lower) expertise cues are likely to be more effective in shaping credibility perception, attitudes, and behavioral intention, especially when incorporating two-sided (vs. one-sided) corrections. Therefore, we propose:

The Mediating Role of Correction Credibility

Existing research has established that perceptions of message credibility serve as a vital mediator between information source or message attributes and changes in individuals’ attitudes and behaviors across various communication contexts, including advertising (Jäger & Weber, 2020) and science communication (Wang & Huang, 2021). For example, Jäger and Weber (2020) demonstrated that the way green product benefits are framed (through high or low construal levels) affects purchase intentions by influencing perceived message credibility. Similarly, Wang and Huang (2021) showed that the perceived believability of a message positively mediates the relationship between message sidedness and support for prohibiting e-cigarette use, particularly when the message is presented narratively rather than as factual information. These studies highlight that attitudinal and subsequent behavioral changes are contingent upon the credibility of the information presented.

In the context of emerging scientific issues like cultivated meat, where the product is not widely known or accessible and claims about it are difficult to verify, perceptions of relevant message credibility play a pivotal role in shaping public attitudes and behavioral intentions (Jäger & Weber, 2020). As such, credibility perceptions serve as a vital link between chatbot-delivered messages about cultivated meat and public responses to this novel food. Consequently, we argue that the impact of chatbot expertise (source characteristics) and correction sidedness (message features) on people’s attitudes and consumption intentions toward cultivated meat is mediated by their perceptions of credibility. Given the aforementioned reasoning, corrective messages that present two-sided arguments from a high-expertise chatbot are likely to be perceived as more credible than one-sided corrections from a low-expertise chatbot, leading to more favorable attitudinal and behavioral outcomes. Accordingly, we posit:

Method

After receiving ethical approval (IRB No.: IRB-2021-998) from Nanyang Technological University, we conducted a 2 (chatbot expertise cues: high vs. low) × 2 (correction sidedness: one-sided vs. two-sided) between-subjects online experiment. The experiment was conducted from 3 April to 23 April 2024. We engaged a market research firm to recruit participants who were Singaporeans or permanent residents aged 21 years or older.

Participants

Among the 261 completed responses, seven were excluded due to straight-lining and speeding, leaving a final sample of 254 participants. The average age was 41.30 years (SD = 11.63). The sample was almost evenly split by gender, with 48.8% male and 51.2% female participants. Ethnically, 69.7% were Chinese, 25.2% Malay, 1.2% Indian, 2.4% Eurasian, and 1.8% from other ethnic groups. The most common education level was a bachelor’s degree or equivalent. The median household income fell within the SGD 11,000 to SGD 11,999 bracket.

Experiment Materials and Manipulations

The experimental materials include an introductory description of an AI-powered chatbot and a screenshot showing a conversation between the chatbot and a user, illustrating the process in which the AI chatbot corrects a piece of misinformation about cultivated meat.

Material Selection

To select the most appropriate piece of misinformation about cultivated meat for our main experiment, we conducted a pilot test involving 99 participants from a local university, including both students and staff. Participants were asked to evaluate seven circulating misinformation statements about cultivated meat on their familiarity and credibility, using a scale from 1 to 5. These false statements were sourced from reputable fact-checking sites and news websites (e.g., Reuters and The Associated Press). The statement claiming that “cultivated meat production contains a high quantity of antibiotics” was deemed the least familiar (M = 2.22) but most credible (M = 3.12). Therefore, it was chosen for further investigation in the main experiment.

The correction message designed to counter the selected misinformation about cultivated meat was based on research from Hanga et al. (2020) and Saied et al. (2023). These sources suggest that, unlike conventional meat, cultivated meat does not use large amounts of antibiotics. The message refuted the claim that “cultivated meat production contains a high quantity of antibiotics” by emphasizing three key points: (a) cultivated meat reduces the risk of antibiotic misuse prevalent in traditional farming, (b) the production process maintains antibiotic-free environments through strict sterility and control measures, and (c) cultivated meat is subject to thorough regulatory oversight to ensure adherence to stringent food safety standards and prevent antibiotic misuse. To ensure the scientific accuracy of the correction, two experts in bioengineering and cultivated meat were consulted to review, refine, and confirm the content’s accuracy.

Experimental Manipulations

We manipulated the chatbot’s expertise cues by altering its introductory description, following the approach of previous research (e.g., Wu et al., 2024). In the high-expertise cues condition, the chatbot was introduced as a fact-checking specialist focused on debunking misinformation about novel foods, developed with the expertise of the Singapore Food Agency and a local university. It sourced information from professional organizations, such as the World Health Organization, the Food and Drug Administration, the Centers for Disease Control and Prevention, and established fact-checking sites like Snopes.com. In contrast, the low-expertise cues condition presented the chatbot as a general AI tool for various tasks, including food recommendations, weather updates, and news, drawing information from a range of online sources, including social media platforms (e.g., Facebook, Twitter, TikTok), personal blogs, commercial sites, and search engines.

We also built upon existing studies (e.g., Wang & Huang, 2021; Xiao & Su, 2021) to manipulate the sidedness of the corrections. In the two-sided correction condition, the chatbot first acknowledged and then refuted the misinformation claim. It started by acknowledging the assertion that cultivated meat requires certain quantities of antibiotics for uncontaminated cell growth due to the lack of an immune system in the cells extracted for cultivating meat. This was followed by a strong refutation, arguing that such claims are false and misleading, and using the aforementioned three key arguments to support this refutation. In contrast, in the one-sided correction condition, the chatbot directly refuted the misinformation without acknowledging any aspect of the misleading claim, providing three supportive points in its rebuttal. To ensure consistency and avoid confounding variables, the introductory descriptions and correction messages were standardized in length across conditions, with introductions set at 102 words, and correction messages between 402 and 404 words. Further details on the materials used are available in the Appendix.

Procedure

In the main experiment, all participants who accessed our online study were first directed to read the study information sheet and provide electronic consent. They then reviewed brief definitions of cultivated meat, antibiotics, and AI-powered chatbots to ensure a basic understanding of the terms used in the experiment. Next, participants completed pre-test questions assessing their knowledge of AI, chatbots, and cultivated meat, as well as their trust in and prior attitudes toward AI and chatbots. Following this, they were randomly assigned to one of four conditions, each varying in chatbot expertise cues and correction sidedness. Each condition presented a static screenshot of an interaction between a user and the chatbot, demonstrating how the chatbot addresses a piece of misinformation about cultivated meat. Participants then completed attention-check questions, manipulation-check questions, and measures of the dependent variables. Finally, they provided demographic information and were debriefed upon completing the experiment.

Measures

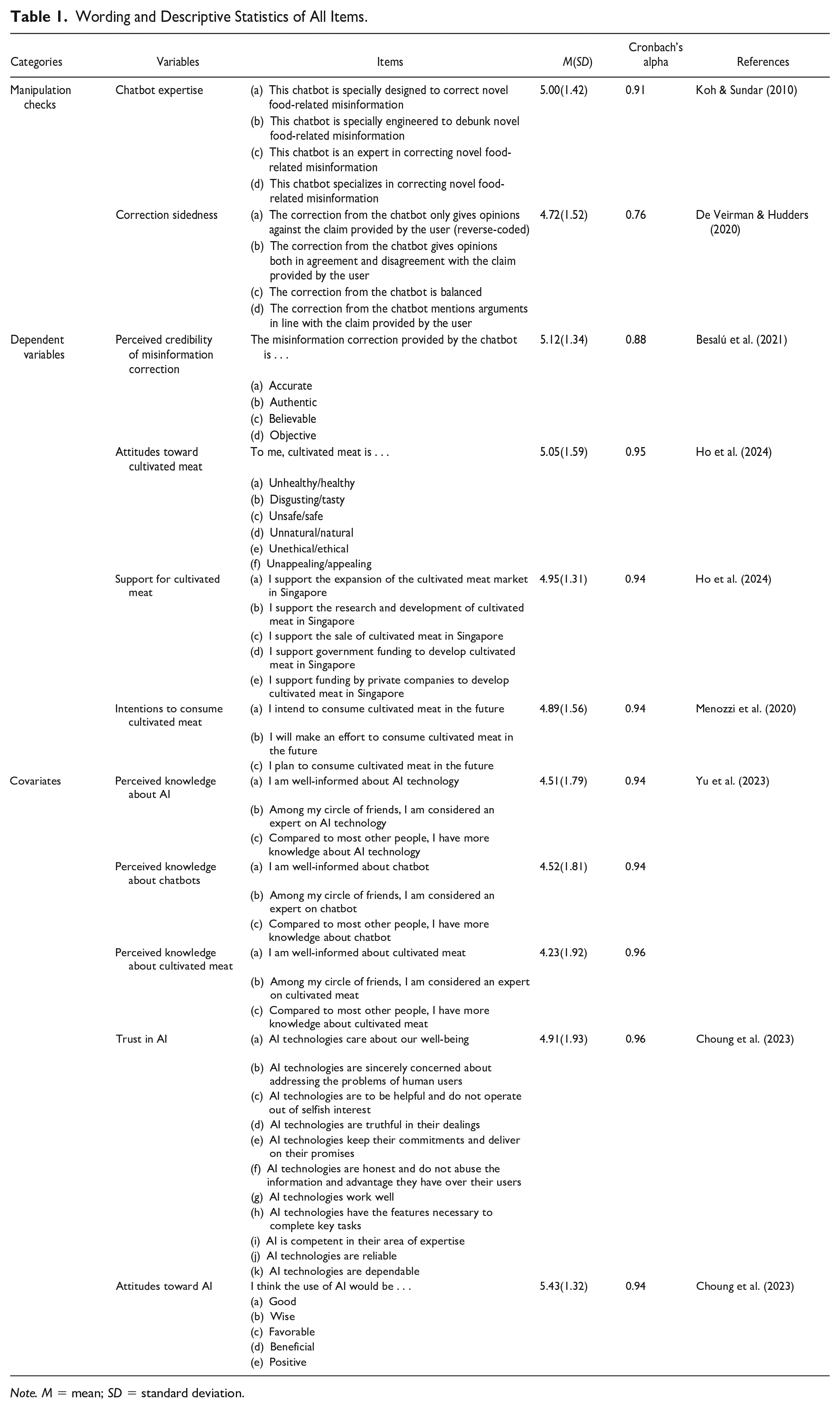

Unless otherwise stated, all variables were measured using a scale from 1 (strongly disagree) to 7 (strongly agree). All measurements were adapted from previous research. Minor adjustments were made to tailor the items specifically for the context of this study. All items used for manipulation checks, dependent variables, and control variables, along with their references and descriptive statistics, are listed in Table 1.

Wording and Descriptive Statistics of All Items.

Note. M = mean; SD = standard deviation.

Data Analysis

We conducted a two-way univariate analysis of covariance (ANCOVA) to test H1–H3, examining the main and interaction effects of chatbot expertise and correction sidedness. For H4, which examined the mediation effects of perceived credibility of misinformation correction, we employed a moderated mediation analysis using Model 8 from the PROCESS macro of Hayes et al. (2017) in SPSS version 27.0. This analysis treated chatbot expertise as the independent variable, correction sidedness as the moderator, and perceived credibility of the correction as the mediator. Attitudes toward cultivated meat, support for cultivated meat, and intentions to consume cultivated meat were included as dependent variables. We performed the analysis with 95% confidence intervals and 5,000 bootstrapped samples. In addition, we controlled for age; education; knowledge about AI, chatbots, and cultivated meat; attitudes toward AI; and trust in AI in both the ANCOVA and the moderated mediation analysis.
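To make the logic of the moderated mediation analysis concrete, the following is a minimal sketch in Python rather than a reproduction of the PROCESS macro. It assumes dummy-coded factors (x = expertise cues, w = sidedness), omits the covariates listed above, and runs on simulated data; the function names, the simulated effect sizes, and the seeds are all hypothetical. In a fully crossed 2 × 2 design without covariates, the interaction path a3 on the mediator is a difference of differences in cell means, and the path b from the mediator to the outcome equals the pooled within-cell slope. Their product is the index of moderated mediation, for which a percentile bootstrap interval is computed.

```python
import random
from statistics import fmean


def cell_means(rows, key):
    """Mean of `key` within each (x, w) cell of the 2 x 2 design."""
    cells = {}
    for r in rows:
        cells.setdefault((r["x"], r["w"]), []).append(r[key])
    return {cell: fmean(values) for cell, values in cells.items()}


def index_of_moderated_mediation(rows):
    """Return a3 * b for a Model 8 style analysis WITHOUT covariates."""
    m_bar = cell_means(rows, "m")
    y_bar = cell_means(rows, "y")
    # a3: X-by-W interaction on the mediator (difference of differences).
    a3 = (m_bar[1, 1] - m_bar[0, 1]) - (m_bar[1, 0] - m_bar[0, 0])
    # b: slope of Y on M controlling for X, W, and X*W, which in a
    # saturated 2 x 2 design is the pooled within-cell slope.
    s_my = s_mm = 0.0
    for r in rows:
        cell = (r["x"], r["w"])
        dm = r["m"] - m_bar[cell]
        s_my += dm * (r["y"] - y_bar[cell])
        s_mm += dm * dm
    return a3 * (s_my / s_mm)


def bootstrap_ci(rows, n_boot=5000, alpha=0.05, seed=1):
    """Percentile bootstrap interval, resampling cases with replacement."""
    rng = random.Random(seed)
    n = len(rows)
    stats = sorted(
        index_of_moderated_mediation(rng.choices(rows, k=n))
        for _ in range(n_boot)
    )
    low = stats[round(n_boot * alpha / 2)]
    high = stats[round(n_boot * (1 - alpha / 2)) - 1]
    return low, high


def simulate(n=254, seed=0):
    """Hypothetical data; the coefficients are illustrative, not estimates."""
    rng = random.Random(seed)
    rows = []
    for i in range(n):
        x, w = i % 2, (i // 2) % 2
        m = 4.8 + 0.2 * x + 0.1 * w + 1.0 * x * w + rng.gauss(0, 1)
        y = 2.0 + 0.5 * m + rng.gauss(0, 1)
        rows.append({"x": x, "w": w, "m": m, "y": y})
    return rows


rows = simulate()
estimate = index_of_moderated_mediation(rows)
ci_low, ci_high = bootstrap_ci(rows)
print(f"index = {estimate:.2f}, 95% CI [{ci_low:.2f}, {ci_high:.2f}]")
```

A bootstrap interval that excludes zero would indicate that the indirect effect of expertise cues through perceived credibility differs between the one-sided and two-sided conditions. Adding covariates would require a full regression for each path instead of the cell-mean shortcut used here.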

Results

Manipulation Checks

Results from two independent-samples t-tests indicated that chatbots with higher expertise cues were perceived as more professional (M = 5.30, SD = 1.01) in correcting misinformation on cultivated meat than chatbots with lower expertise cues (M = 4.70, SD = 1.39), t(252) = 15.705, p < .001. This indicated that participants exposed to the chatbot described as having greater expertise in correcting novel food-related misinformation perceived it as more professional in this role. In addition, participants who read a two-sided correction felt more strongly that the correction both agreed with and refuted the argument in the misinformation (M = 5.10, SD = .95) than those who read a one-sided correction (M = 4.11, SD = 1.62), t(252) = 69.23, p < .001. Therefore, the manipulations of chatbot expertise cues and correction sidedness were successful. Participants received points as compensation that they could redeem in the form of gift vouchers.

Test of Hypotheses

Results from the ANCOVA tests revealed that the main effect of chatbot expertise cues on credibility perceptions of misinformation correction was significant, F(1, 242) = 32.89, p < .001, ηp2 = .12, thereby supporting H1(a). The chatbot with higher expertise cues (M = 5.38, SD = 0.88) elicited greater credibility perceptions of the correction than the chatbot with lower expertise cues (M = 4.86, SD = 1.24). However, the main effects of chatbot expertise cues on attitudes toward cultivated meat (Mlow-expertise cues = 5.15, Mhigh-expertise cues = 4.94, F(1, 242) = .01, p = .98, ηp2 = .00), support for cultivated meat (Mlow-expertise cues = 5.00, Mhigh-expertise cues = 4.90, F(1, 242) = .18, p = .67, ηp2 = .00), and intentions to consume cultivated meat (Mlow-expertise cues = 4.92, Mhigh-expertise cues = 4.84, F(1, 242) = .86, p = .36, ηp2 = .00) were not significant. Therefore, H1(b), (c), and (d) were not supported.
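The effect sizes reported above can be related to the F statistics through the standard identity ηp2 = (F × df_effect) / (F × df_effect + df_error). The following minimal sketch applies that identity to the reported values. The function name and the dictionary are illustrative and not part of the authors' analysis, which was run in SPSS.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Recover partial eta squared from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# F values reported in the text for the main effect of expertise cues,
# each with degrees of freedom (1, 242).
reported_f = {
    "credibility perceptions": 32.89,
    "attitudes": 0.01,
    "support": 0.18,
    "consumption intentions": 0.86,
}

for outcome, f_value in reported_f.items():
    eta = partial_eta_squared(f_value, df_effect=1, df_error=242)
    print(f"{outcome}: partial eta squared = {eta:.2f}")
```

For the credibility outcome this gives a value that rounds to .12, matching the reported effect size, while the three non-significant effects round to .00.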

In addition, the main effects of correction sidedness on credibility perceptions of misinformation correction (Mone-sided = 5.20, Mtwo-sided = 5.03, F(1, 242) = .01, p = .93, ηp2 = .00), attitudes toward cultivated meat (Mone-sided = 5.25, Mtwo-sided = 4.84, F(1, 242) = .48, p = .49, ηp2 = .00), support for cultivated meat (Mone-sided = 5.12, Mtwo-sided = 4.77, F(1, 242) = .43, p = .51, ηp2 = .00), and intentions to consume cultivated meat (Mone-sided = 4.98, Mtwo-sided = 4.77, F(1, 242) = 1.09, p = .30, ηp2 = .00) were not significant. Therefore, H2(a)–H2(d) were not supported.

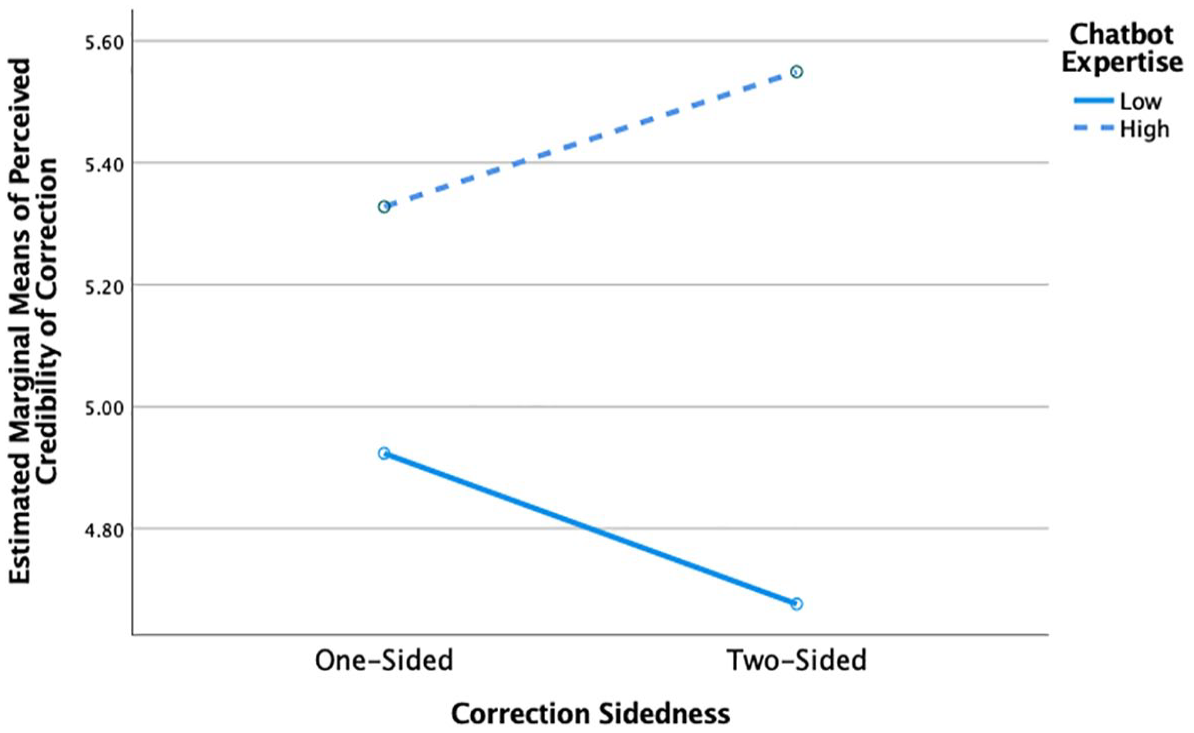

Moreover, there was a significant interaction effect between chatbot expertise cues and correction sidedness on credibility perceptions of misinformation correction, F(1, 242) = 4.30, p = .039, ηp2 = .02. A simple-effects analysis suggested a significant difference in credibility perceptions between high- and low-expertise chatbot conditions when the misinformation corrections employed one-sided framing (Mlow-expertise cues = 5.03, Mhigh-expertise cues = 5.39, F(1, 242) = 6.84, p < .01, ηp2 = .03). This difference was further amplified when the corrections featured two-sided framing (Mhigh-expertise cues = 5.37, Mlow-expertise cues = 4.68, F(1, 242) = 29.18, p < .001, ηp2 = .11), supporting H3(a). This synergistic interaction effect demonstrates that while high-expertise cues enhance credibility perceptions of misinformation correction in both one-sided and two-sided conditions, the effect is stronger with two-sided framing. This suggests that the combination of high expertise and two-sided framing is most effective for maximizing credibility perceptions in misinformation-correction contexts.
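The size of this amplification can be illustrated with a simple difference-of-differences on the cell means reported above. This is a descriptive sketch only: the text does not state whether these means are covariate-adjusted, and the Δ notation is introduced here for illustration.

```latex
\begin{align}
\Delta_{\text{one-sided}} &= 5.39 - 5.03 = 0.36 \\
\Delta_{\text{two-sided}} &= 5.37 - 4.68 = 0.69 \\
\Delta_{\text{two-sided}} - \Delta_{\text{one-sided}} &= 0.69 - 0.36 = 0.33
\end{align}
```

On the 7-point scale, the credibility advantage of the high-expertise chatbot is thus roughly a third of a scale point larger under two-sided framing. The widening gap comes mainly from the lower credibility of the low-expertise chatbot in the two-sided condition (4.68 versus 5.03), since the high-expertise means are nearly identical across framings.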

This significant synergistic interaction effect between chatbot expertise cues and correction sidedness is plotted in Figure 1. However, the interaction effects between chatbot expertise cues and correction sidedness on attitudes toward cultivated meat (F(1, 242) = .03, p = .86, ηp2 = .00), support for cultivated meat (F(1, 242) = .01, p = .94, ηp2 = .00), and intentions to consume cultivated meat (Mone-sided = 4.98, Mtwo-sided = 4.77, F(1, 242) = .02, p = .88, ηp2 = .00) were not significant. Therefore, H3(b) and (c) were not supported.

Figure 1. The Significant Interaction Between Chatbot Expertise and Message Sidedness on Perceived Credibility of Misinformation Correction.

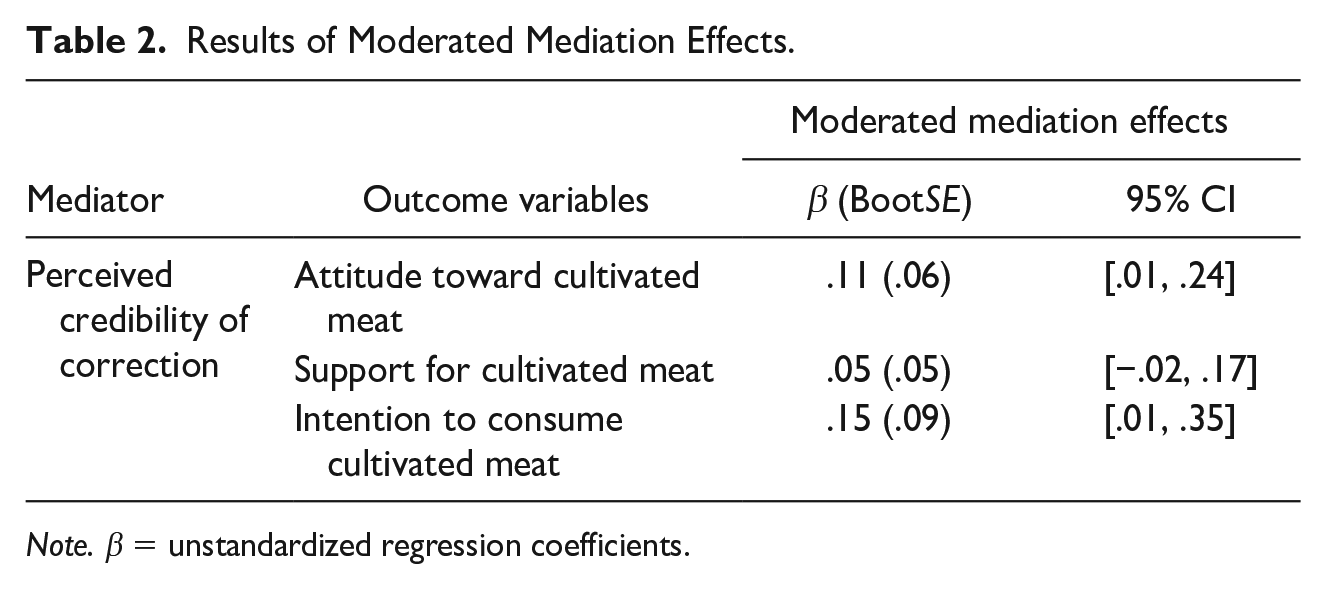

Finally, the moderated mediation analysis demonstrated that the moderated mediation on attitudes (β = .11, BootSE = .06, 95% CI [.01, .24]) and consumption intentions (β = .15, BootSE = .09, 95% CI [.01, .35]) through credibility perceptions was statistically significant. Specifically, credibility perceptions of the correction mediated the influence of chatbot expertise cues on attitudes (β = .08, BootSE = .02, 95% CI [.02, .16]) and consumption intentions (β = .11, BootSE = .06, 95% CI [.01, .25]) when the correction was one-sided. This mediation effect was stronger for attitudes (β = .16, SE = .04, 95% CI [.02, .29]) and consumption intentions (β = .25, BootSE = .11, 95% CI [.06, .50]) when the correction was two-sided. Hence, H4(a) and H4(c) were supported. However, the moderated mediation effect on support for cultivated meat through credibility perceptions of the correction was not significant (β = .05, BootSE = .05, 95% CI [−.02, .17]), so H4(b) was not supported. Table 2 shows the results of the moderated mediation analysis.

Table 2. Results of Moderated Mediation Effects.

Note. β = unstandardized regression coefficients.
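The percentile-bootstrap logic behind intervals of this kind can be sketched in a few lines. The example below is not the PROCESS macro and does not use the study's data: it runs on synthetic data with made-up coefficients, omits the covariates, assumes both factors are coded 0/1, and uses 500 resamples for speed where the study used 5,000. All function names are hypothetical.

```python
import random


def ols(X, y):
    """Least-squares coefficients via the normal equations (Gauss-Jordan elimination)."""
    k = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [[sum(r[i] * r[j] for r in X) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(X, y))] for i in range(k)]
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(A[r][c]))  # partial pivoting
        A[c], A[p] = A[p], A[c]
        for r in range(k):
            if r != c:
                f = A[r][c] / A[c][c]
                A[r] = [u - f * v for u, v in zip(A[r], A[c])]
    return [A[i][k] / A[i][i] for i in range(k)]


def index_of_moderated_mediation(rows):
    """rows: (x, w, m, y) tuples. Returns a3 * b from the two regressions."""
    # Mediator model: m ~ 1 + x + w + x*w
    a = ols([[1, x, w, x * w] for x, w, m, y in rows], [m for x, w, m, y in rows])
    # Outcome model: y ~ 1 + x + w + x*w + m
    b = ols([[1, x, w, x * w, m] for x, w, m, y in rows], [y for x, w, m, y in rows])
    return a[3] * b[4]


def percentile_bootstrap_ci(rows, n_boot=500, alpha=0.05, seed=1):
    """Resample cases with replacement and take percentile bounds of the index."""
    rng = random.Random(seed)
    stats = sorted(
        index_of_moderated_mediation([rng.choice(rows) for _ in rows])
        for _ in range(n_boot)
    )
    return stats[int(n_boot * alpha / 2)], stats[int(n_boot * (1 - alpha / 2)) - 1]


def simulate(n=240, seed=0):
    """Synthetic balanced 2 x 2 design; every coefficient here is made up."""
    rng = random.Random(seed)
    rows = []
    for i in range(n):
        x, w = i % 2, (i // 2) % 2
        m = 5.0 + 0.3 * x - 0.2 * w + 0.4 * x * w + rng.gauss(0, 1)
        y = 2.0 + 0.5 * m + rng.gauss(0, 1)
        rows.append((x, w, m, y))
    return rows


rows = simulate()
point = index_of_moderated_mediation(rows)
lo, hi = percentile_bootstrap_ci(rows)
print(f"index = {point:.3f}, 95% CI [{lo:.3f}, {hi:.3f}]")
```

As in the analysis reported above, an interval that excludes zero would be read as evidence that the indirect effect through the mediator depends on the moderator.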

Discussion

Widespread misinformation calls for robust technology-driven tools for effective debunking. Recent advancements in AI have significantly improved AI-powered chatbots, making them viable tools for information verification and fact-checking (Xiao et al., 2023). Informed by the CASA paradigm and message sidedness theory, this study examines how chatbot features like expertise cues and the framing of outputs (i.e., correction sidedness) influence the effectiveness of misinformation correction and individuals’ attitudinal and behavioral responses to emerging technologies such as cultivated meat. We found that chatbot expertise cues, more than the sidedness of the corrective content, significantly enhance credibility perceptions of misinformation correction. In addition, the interplay between chatbot expertise cues and correction sidedness affects credibility perceptions and, in turn, shapes attitudes and consumption intentions toward cultivated meat.

Corrections from chatbots with high-expertise cues in addressing novel-food misinformation were perceived as more credible than those from chatbots with low-expertise cues, aligning with research on source expertise and credibility (Flanagin et al., 2020; Ou & Ho, 2024a). However, unlike prior studies linking expertise to attitudinal and behavioral changes (Rashedi & Seyed Siahi, 2020; Yoon et al., 1998), chatbot expertise did not significantly affect attitudes or intentions to consume cultivated meat. This discrepancy may stem from the complexity and novelty of cultivated meat, prompting individuals to rely more on pre-existing beliefs or skepticism. Factors like personal food safety beliefs (Bryant & Barnett, 2020), perceptions of naturalness (Fidder & Graça, 2023), and media habits (Ho et al., 2024) likely play stronger roles in shaping attitudes and intentions.

The findings show that correction sidedness (whether one-sided or two-sided) did not significantly affect participants’ credibility perceptions of the correction, attitudes, or intentions regarding cultivated meat. This aligns with Wang and Huang’s (2021) findings in the context of e-cigarettes. The lack of effect suggests that participants may prioritize source expertise over message structure, particularly for novel or complex topics where they lack domain knowledge (Lucassen et al., 2013; Wang & Huang, 2021). For misinformation correction on such topics, framing corrections as two-sided alone is insufficient to enhance credibility; the chatbot’s perceived expertise remains critical. Users also need the assurance of the chatbot’s expertise to view the two-sided correction as trustworthy. The complexity of two-sided corrections, which present both sides of an issue, may lead to cognitive overload, requiring substantial mental effort to process (Deck & Jahedi, 2015; Sweller, 2023). For less invested or knowledgeable participants, the absence of expertise cues can hinder efficient information processing, reducing engagement and perceived credibility. This highlights that the cognitive load from two-sided corrections can limit their effectiveness, emphasizing the need for both message framing and clear expertise cues to enhance correction credibility.

Our findings reveal a synergistic interaction effect between correction sidedness and chatbot expertise cues on the credibility perceptions of corrections provided by the chatbot. A significant difference in credibility perceptions was observed between high- and low-expertise chatbot conditions when corrections employed one-sided framing. This difference was further amplified when the corrections featured two-sided framing. This suggests that the high congruence between source expertise and the comprehensive nature of the message content (i.e., two-sidedness) can amplify credibility perceptions in human-AI interactions. This may be because users typically expect expert sources to provide comprehensive and balanced information (e.g., Manninen, 2020). Chatbots with low-expertise cues might not be expected to handle complex, two-sided arguments as effectively, which can lead to reduced credibility in both one-sided and two-sided conditions. This aligns with previous findings that people place greater trust in two-sided messages provided by experts than in one-sided messages from novices (Flanagin et al., 2020).

Credibility perceptions of misinformation corrections delivered by chatbots act as a crucial mediator, linking chatbot expertise cues and correction sidedness to people’s attitudes and consumption intentions toward cultivated meat. This effect can be explained by cognitive dissonance theory, which suggests that individuals strive for consistency in their beliefs and attitudes (Cooper, 2012). Consequently, individuals may adjust their attitudes and behaviors to better align with their credibility perceptions of information provided by the chatbot, potentially enhancing their view of and willingness to consume cultivated meat (Singh & Banerjee, 2018). This indicates that effective AI communication, which presents expert sources and balanced messages, can significantly influence individuals’ attitudes and behaviors through their credibility perceptions.

Theoretical Implications

This study integrates the CASA paradigm and message sidedness theory to examine how AI features and output framing shape cognitive, attitudinal, and behavioral responses, bridging science communication, human-AI interaction, and misinformation correction. CASA highlights the role of chatbot expertise in enhancing credibility, while message sidedness theory explains the persuasive value of two-sided messages. The findings reveal that combining expertise cues with two-sided corrections enhances credibility perceptions and indirectly influences attitudes and behaviors toward cultivated meat. This underscores how AI chatbots can emulate effective human communication strategies to establish credibility, especially in misinformation correction.

We offer new insights into how the features of misinformation correction and AI agency attributes influence persuasive outcomes through credibility judgments rather than through direct effects. By revealing that credibility judgments mediate the relationship between chatbot features (source attributes and message structure) and audience responses, we highlight the complex role that credibility perceptions play in the persuasion process. This contributes to existing literature by suggesting that the effectiveness of misinformation correction is not just about the direct transmission of facts but also about how these facts are framed and by whom. Existing research predominantly focuses on how corrections reduce belief in and sharing of misinformation (e.g., Walter et al., 2020). Our study broadens this by exploring how AI-facilitated misinformation correction affects persuasion, specifically impacting people’s attitudes and behavioral intentions through trust in AI-provided corrections. This enhances the literature on misinformation correction, AI-mediated communication, and persuasion, highlighting the importance of designing AI-driven communication tools that effectively convey information and boost perceived credibility to significantly influence audience attitudes and behaviors.

The results also highlight that message sidedness amplifies the impact of expertise cues on credibility perceptions in human-AI interactions. Nonetheless, message sidedness alone does not substantially affect credibility judgments or persuasive outcomes. While traditionally effective in enhancing credibility and persuasion, applying message sidedness directly to AI communications proves challenging, highlighting the complexities of adapting this theory from human-human to human-AI interactions. Moreover, unlike prior studies such as Cheng et al. (2024), which relied on surveys to assess attitudes toward COVID-19 misinformation correction by chatbots, our experimental approach enhances robustness, providing a stronger foundation for causal conclusions regarding the effects of chatbot features on the effectiveness of misinformation correction.

Practical Implications

This study offers practical insights for designing AI chatbots to combat scientific misinformation. Enhancing a chatbot’s specialization and sourcing information from credible, authoritative sources can increase the effectiveness of misinformation correction. For example, including clickable sources for easy verification and clarifying the origins of the correction can strengthen AI-driven debunking efforts. The observed synergistic effects between chatbot expertise and correction sidedness suggest that two-sided corrections from specialized chatbots foster higher trust, more favorable attitudes, and positive behavioral intentions toward scientific topics. Therefore, misinformation-correction chatbots could benefit from integrating expertise cues with two-sided correction framing in a complementary manner. Specifically, the chatbot should clearly communicate its expertise in a field related to the misinformation topic while simultaneously implementing two-sided correction framing. For instance, when addressing misinformation about cultivated meat, the chatbot could introduce itself as an expert developed in collaboration with reputable food science organizations or research institutions. It should then present the misinformation or common misconceptions first, signaling its understanding of opposing perspectives, followed by a balanced, research-based correction.

Limitations and Future Directions

This study has several limitations that suggest directions for future research. First, it focused solely on chatbot expertise cues and correction sidedness within the context of cultivated meat. Future research could extend to other areas of science affected by misinformation to determine if similar effects occur. Second, despite providing an introduction to cultivated meat and controlling for the effects of knowledge and attitudes toward it, individual responses to misinformation and corrections may vary, especially between those who have and have not tried cultivated meat. Further studies should investigate these differences and adjust for their influence. Third, our method involved using static screenshots of interactions between a user and the chatbot, which did not allow participants to interact with the chatbot in real time and may affect the generalizability of our findings to actual interactions. Building on prior research examining the effectiveness of misinformation correction (Aikin et al., 2017; Paquin et al., 2022), as well as its impact on user engagement (Giombi et al., 2022) and behavioral responses (e.g., Aikin et al., 2019), future studies could incorporate live interactions to enhance external validity. Finally, this study selected cultivated meat as a representative case of emerging food technology, given its significant public impact in Singapore and susceptibility to misinformation. However, this focus may limit the broader applicability of the findings. Future research could extend this work to other emerging technologies, such as plant-based proteins, GM foods, or other novel food innovations.

Acknowledgements

The authors would like to express their appreciation to Dr. Larry Loo and Mr. Yuxuan Tang for their professional suggestions and insightful comments on our misinformation correction materials.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by the Ministry of Education Academic Research Fund Tier 1 Grant (grant number: RT16/20).