Abstract

High compliance is a priority for successful ecological momentary assessment (EMA) research, but meta-analyses of between-study differences show that reasons for missed prompts remain unclear. We examined compliance data from a 14-week, 182-survey EMA study of undergraduate alcohol use to test differences over time and across survey types between participants with better and worse compliance rates, and to evaluate the impact of incentives on ongoing participation. Participants were

Ecological momentary assessment (EMA), also known as experience sampling or ambulatory assessment, is an approach to study design that collects real-time data on participant behaviors, emotions, and experiences in their natural environments (Shiffman et al., 2008) and in ways that intrude minimally on participants’ daily lives (Burke et al., 2017). In the earliest studies in the 1980s, participants carried pagers and completed paper surveys whenever they were “beeped” by the study team (Csikszentmihalyi & Larson, 1987). Technological advances in recent years mean that EMA studies can take place on participants’ personal smartphones with great ease and little expense. Unsurprisingly, EMA has grown in popularity: The share of published scientific articles using these methods was over 6 times higher in 2021 than in 2008, when Shiffman and colleagues published their summative annual review on EMA.

Compared with in-lab experimental methods and even with longer-term diary and longitudinal studies, the short-term windows of data collection throughout the day result in many missed assessments that threaten the validity of inferences drawn from EMA studies (Sun et al., 2020). Understanding

Between-Study Similarities in Compliance

Meta-analyses show that, on average, participants in EMA studies respond to 75% to 82% of the prompts they receive over the course of their study involvement (Jones et al., 2019; Ottenstein & Werner, 2022; Williams et al., 2021; Wrzus & Neubauer, 2022). Studies with fewer prompts per day and shorter surveys generally did not have better compliance rates than studies with more prompts per day and longer surveys, consistent with experimental evidence (Eisele et al., 2022; Hasselhorn et al., 2022). Other design factors showing no effect on compliance included type of device (e.g., personal smartphone vs. study-loaned device), whether prompts were at fixed versus random times of day, and whether studies included event-contingent responding. Paid samples had slightly better compliance than unpaid samples, but different payment strategies (such as bonuses for completing more surveys) were not related to better compliance rates. Gender, age, and sample source (clinical vs. community) were also unrelated to compliance.

For researchers designing EMA studies, it is tempting to conclude that a high compliance rate is achievable irrespective of design factors in the study. However, studies reviewed across meta-analyses had compliance rates ranging from as low as 9.8% to as high as 100%. Compliance rates below 80%, a heuristic benchmark minimum for EMA studies (Jones et al., 2019), were reported in nearly half of the studies reviewed by Wrzus and Neubauer (2022). One conclusion from their study was that self-selection is a key reason for the lack of observed between-study differences: The reasons that some people might not adhere to a study protocol likely discourage them from agreeing to participate in the first place. Tests of

Clues pointing to potential sources of differences in compliance and attrition over time are scarce. One EMA study explored this question intensively by equipping student participants with passive audio listening devices and coding their behavior and context in proximity to when EMA survey responses were requested (Sun et al., 2020). Simply being a participant who completed many versus few surveys over the course of the study allowed for nearly all completed reports to be correctly classified as complete. However, just 21% of

Compliance in Longer Duration Studies

A surprising null finding from meta-analyses of EMA compliance is that longer duration studies do not have consistently poorer compliance than shorter studies. Two meta-analyses classified study durations as <1 week, 1 to 2 weeks, and 2 or more weeks in length and found no mean differences in compliance rates (Jones et al., 2019; Williams et al., 2021). One meta-analysis found a small negative correlation between compliance rate and the total number of study days (Ottenstein & Werner, 2022). The largest meta-analysis included just seven studies (out of several hundred) that reported total study lengths exceeding 30 days, and compliance rate was unrelated to study length (Wrzus & Neubauer, 2022). These results are difficult to reconcile with the universally acknowledged problems of attrition and missing data in longitudinal survey research and underscore the limits of a between-study approach to understanding EMA compliance. In an integrative data analysis of 10 EMA studies lasting 4 to 6 days, an overall compliance of 78% was close to the average rate reported in each meta-analysis, but compliance declined from a high of 83% on the first day to 73% on the fifth day (Rintala et al., 2019). Overall rates mask patterns of attrition and offer no insight into who drops out, when and why they drop out, and how we might best encourage retention, especially for EMA studies lasting weeks or months.

The longer duration design of the present study offers a unique opportunity to explore compliance trends. We issued surveys on 56 days spread out over 14 weekends. At 13 surveys per weekend, participants had the opportunity to contribute as many as 182 responses. The longer the study, however, the more opportunities for attrition. In one 7-day EMA study of students participating in exchange for course credit, compliance above 80% on Day 1 dropped to around 65% on Day 4 (Silvia et al., 2013). A paid sample of first-year undergraduates had even worse compliance, with 70% missing 3 or more days’ worth of surveys over the course of a 7-day protocol (Bedard et al., 2017). As shown in meta-analyses, there were no reliable differences in compliance between studies using different designs, but these tests do not rule out within-study differences in compliance

Financial Incentives

The sole factor that predicted higher compliance in between-study meta-analyses was the use of incentives. Studies offering monetary incentives averaged compliance rates of 82.2%, significantly higher than studies offering nonmonetary incentives (77.5%) or no incentives (76.2%; Wrzus & Neubauer, 2022). However, considerable variability remains: Half the studies in Wrzus and Neubauer’s meta-analysis with no incentives achieved a compliance rate above 80%, although none of these studies exceeded 28 days and most lasted for 1 week or less. In a three-sample study with long tracking periods ranging from 35 to 62 days, compliance rates were low when student participants received only course credit (32.8%) and higher when they received money (76.7%) or a prize (71.4%; Harari et al., 2017). Compliance rates also waned more rapidly in the credit-only versus compensation samples. Participants in the present study were all compensated at a rate of $1 per completed survey, with payments issued as gift cards on a weekly basis. However, our design also included two additional forms of compensation that allow us to test for potential effects of incentives on compliance. The first was a “surprise” bonus payment: After about 5 and 10 weeks of participation, participants received unannounced $5 gift cards thanking them for their ongoing commitment to our study. The second was a daily prize draw: On Fridays, Saturdays, and Sundays, we randomly selected a $25 gift card winner from among the students who participated the previous day. Taking advantage of these supplemental forms of compensation, the third aim of our exploratory study was to test for differences in compliance rates before and after receiving prizes and bonuses to determine whether these strategies boosted compliance.

The Current Study

We use data drawn from a 14-week ecological momentary assessment study of undergraduate alcohol drinkers to accomplish three aims: First, we classify participants into groups based on their overall study compliance rates and compare them on several demographic, personality, mental health, and substance use measures. Second, we visualize trends over time in compliance and model rates of change in week-to-week compliance, within-week compliance, and need for reminders across respondent categories and survey types. Third, we test whether compliance rates differ on weeks before and after participants received surprise bonuses and prizes. Our design is exploratory and no part of this study was preregistered. Sample size was restricted to the number available from our existing data. All data decisions and measures in the study are reported below. Supplemental materials, raw data, and analysis code are available on the Open Science Framework (OSF) page for this project (https://osf.io/d4ea8).

Method

Participants and Procedure

Participants were

Interested students were directed to complete an eligibility survey. Eligibility criteria included (a) being enrolled as an undergraduate student at our university, (b) aged 25 or younger, and (c) planning to drink at least twice a month during the Fall semester. Ineligible students who completed our screening survey were thanked for their time. Eligible students were invited to provide their name and university-assigned email address. A

Participants who initiated the intake survey received a $10

The EMA study phase consisted of 13 surveys per week for 14 weeks. Participants received text message alerts at the same times each day and had 2 to 4 hours to complete each survey. The first alert arrived in the

Students were compensated $1 in Amazon cash for each survey they completed, with payments sent out every Tuesday. To boost retention and promote student engagement, we conducted daily draws awarding $25 in Amazon cash to one winner per day, Friday through Sunday. Participants who completed at least one survey on the previous day (i.e., Thursday through Saturday) were entered into the draw. After Weeks 5 and 10, we also distributed two unannounced $5 bonuses to students who remained enrolled in the study.

Measures

Demographic Characteristics

On our intake survey, students provided their age, gender identity, racial/ethnic background, parents’ education and income, frequency of cannabis and alcohol use in the past year, and indicators of food insecurity (details available in a data dictionary included on our OSF project page; https://osf.io/d4ea8).

Personality, Mental Health, and Substance Use

We include a detailed data dictionary of measures of personality, mental health, and substance use on our OSF project page. Briefly, we calculated average scores for depression (Center for Epidemiological Studies—Depression; CES-D; Andresen et al., 1994), anxiety (Generalized Anxiety Disorder; GAD; Spitzer et al., 2006), and life satisfaction (Satisfaction with Life Scale; Diener et al., 1985). Subscale scores were created for personality (i.e., extraversion, agreeableness, conscientiousness, negative emotionality, and open-mindedness; BFI-2-S; Soto & John, 2017) and impulsivity measures (i.e., negative urgency, perseverance, premeditation, sensation seeking, and positive urgency; Whiteside & Lynam, 2001). Participants also reported on their past-year frequencies of alcohol and cannabis use.

Weekly Overall Compliance

For each person, we summed the number of surveys they completed in a given week (i.e., 0–13), divided by the number of possible surveys (i.e., 13), and multiplied by 100 to arrive at a

Weekly Compliance by Survey Type

We separately calculated compliance rates for each person on each of the morning, midday, early, and late evening surveys. Participants could complete up to four morning surveys and three midday, early, and late evening surveys per week. For each person, we summed the number of surveys completed that week for a given survey type (i.e., 0 to 3 or 4), divided by the number of possible surveys (3 or 4), and multiplied by 100 to arrive at
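The overall and survey-type compliance calculations described above can be sketched as follows. This is a minimal illustration in Python rather than the study's own R code (which is available on the OSF page); the function name, the survey-type labels, and the input format (one label per completed survey in a participant-week) are assumptions made for the example.

```python
from collections import Counter

# Surveys offered per week, by type, as described in the Measures section:
# four morning surveys and three each of the midday, early evening, and
# late evening surveys. The label strings are hypothetical.
SURVEYS_PER_WEEK = {
    "morning": 4,
    "midday": 3,
    "early_evening": 3,
    "late_evening": 3,
}
TOTAL_PER_WEEK = sum(SURVEYS_PER_WEEK.values())  # 13 surveys per week
N_WEEKS = 14                                     # 13 * 14 = 182 surveys in total


def weekly_compliance(completed_types):
    """Return (overall %, {survey type: %}) for one participant-week.

    `completed_types` is an assumed input format: a list holding one
    survey-type label for each survey the participant completed that week.
    """
    counts = Counter(completed_types)
    for survey_type, n_done in counts.items():
        if survey_type not in SURVEYS_PER_WEEK:
            raise ValueError(f"unknown survey type: {survey_type}")
        if n_done > SURVEYS_PER_WEEK[survey_type]:
            raise ValueError(f"too many completions for {survey_type}")
    # Completed surveys divided by possible surveys, multiplied by 100.
    overall = 100 * sum(counts.values()) / TOTAL_PER_WEEK
    by_type = {t: 100 * counts[t] / n for t, n in SURVEYS_PER_WEEK.items()}
    return overall, by_type


# Example week: all four morning surveys, one midday survey, three early
# evening surveys, and two late evening surveys (10 of 13 completed).
example_week = (["morning"] * 4 + ["midday"] * 1
                + ["early_evening"] * 3 + ["late_evening"] * 2)
overall, by_type = weekly_compliance(example_week)
```

In this example the participant completed 10 of 13 surveys, so overall compliance is about 76.9%, while midday compliance is about 33.3% and morning compliance is 100%.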

Reminders

We sent up to two text message reminders for each survey to encourage completion, delivered at the same time each day for each survey type and only to participants who had not yet completed their survey. For a given survey, anyone who started a survey after the first or second reminder was sent was recorded as needing one or two reminders, respectively, before completing the survey. We calculated mean numbers of reminders (overall and by survey) each week to examine trends over time in the need for reminders.
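The reminder coding rule above can be sketched as a small function. This is an illustrative Python sketch, not the study's analysis code; the function names, the timestamp inputs, and the example reminder times are hypothetical, and treating a survey started at the exact moment a reminder was sent as not needing that reminder is an assumption.

```python
from datetime import datetime
from statistics import mean


def reminders_needed(started_at, first_reminder_at, second_reminder_at):
    """Return 0, 1, or 2: the number of reminders sent before a completed
    survey was started."""
    if started_at > second_reminder_at:
        return 2
    if started_at > first_reminder_at:
        return 1
    return 0


def mean_reminders(completed_surveys):
    """Average reminders needed across completed surveys.

    `completed_surveys` is an assumed input format: an iterable of
    (started_at, first_reminder_at, second_reminder_at) tuples.
    """
    return mean(reminders_needed(*row) for row in completed_surveys)


# Hypothetical reminder times for one survey on one day.
first = datetime(2021, 9, 10, 10, 0)
second = datetime(2021, 9, 10, 11, 0)
rows = [
    (datetime(2021, 9, 10, 9, 30), first, second),   # started before any reminder
    (datetime(2021, 9, 10, 10, 15), first, second),  # started after the first reminder
    (datetime(2021, 9, 10, 11, 40), first, second),  # started after the second reminder
]
average = mean_reminders(rows)
```

Only completed surveys enter the average, which matches the description of reminders needed "before completing the survey"; missed surveys would be handled by the compliance measures instead.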

Responder Category

Because of our interest in using compliance rates to predict compliance trends—effectively “double-counting” our outcome variable—we devised a categorical measure of participants’ overall levels of compliance across the duration of our study. Cut points were selected based on heuristic benchmarks for acceptable levels of compliance balanced against the need to have comparable numbers of people in each category. We designated participants as

Surprise Bonuses

We distributed surprise (unannounced) $5 gift card bonuses twice over the course of the study. These were not mentioned in the consent form and were not disclosed in advance to participants. Surprise bonuses were issued in Weeks 5 and 10 (for participants who began the study in Weeks 1 to 3) or Weeks 6 and 11 (for participants who began the study in Weeks 4 and 5). We recorded each participant’s weekly compliance for the weeks immediately preceding and following each bonus.

Daily Draws

Participants who completed at least one survey on a given Thursday, Friday, or Saturday were entered into a daily draw for a $25 gift card the next day. Over the course of the study, we distributed 42 gift cards to 38 people (four people won a gift card more than once). For each draw winner, we recorded their compliance rate from the week preceding their win, the week of their win, and the week following their win.
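The draw eligibility rule can be sketched as follows. This is a hedged Python illustration only: the function name, the participant IDs, and the input format are hypothetical, and giving each eligible participant a single entry regardless of how many surveys they completed is an assumption, since the text states only that completing at least one survey entered a participant into the draw.

```python
import random

DRAWS_PER_WEEK = 3   # draws held on Friday, Saturday, and Sunday
N_WEEKS = 14
TOTAL_DRAWS = DRAWS_PER_WEEK * N_WEEKS  # matches the 42 gift cards distributed


def draw_winner(surveys_completed_yesterday, rng):
    """Pick one winner among participants with at least one completed
    survey on the previous day; return None if nobody is eligible.

    `surveys_completed_yesterday` is an assumed input format mapping a
    participant ID to the number of surveys completed the previous day.
    """
    eligible = sorted(
        pid for pid, n_done in surveys_completed_yesterday.items() if n_done >= 1
    )
    if not eligible:
        return None
    # Assumption: one entry per eligible participant, each equally likely.
    return rng.choice(eligible)


# Hypothetical Thursday completion counts for a Friday draw.
thursday_counts = {"p01": 3, "p02": 0, "p03": 1}
winner = draw_winner(thursday_counts, random.Random(2024))
```

Passing a seeded `random.Random` instance keeps a draw reproducible for auditing, which is a design choice of this sketch rather than something the study reports.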

Analysis Strategy

Descriptive and inferential analyses for this study were performed using R software (R Core Team, 2021) and packages

Results

Compliance Summary

The final sample size for the EMA study was

Demographic Characteristics

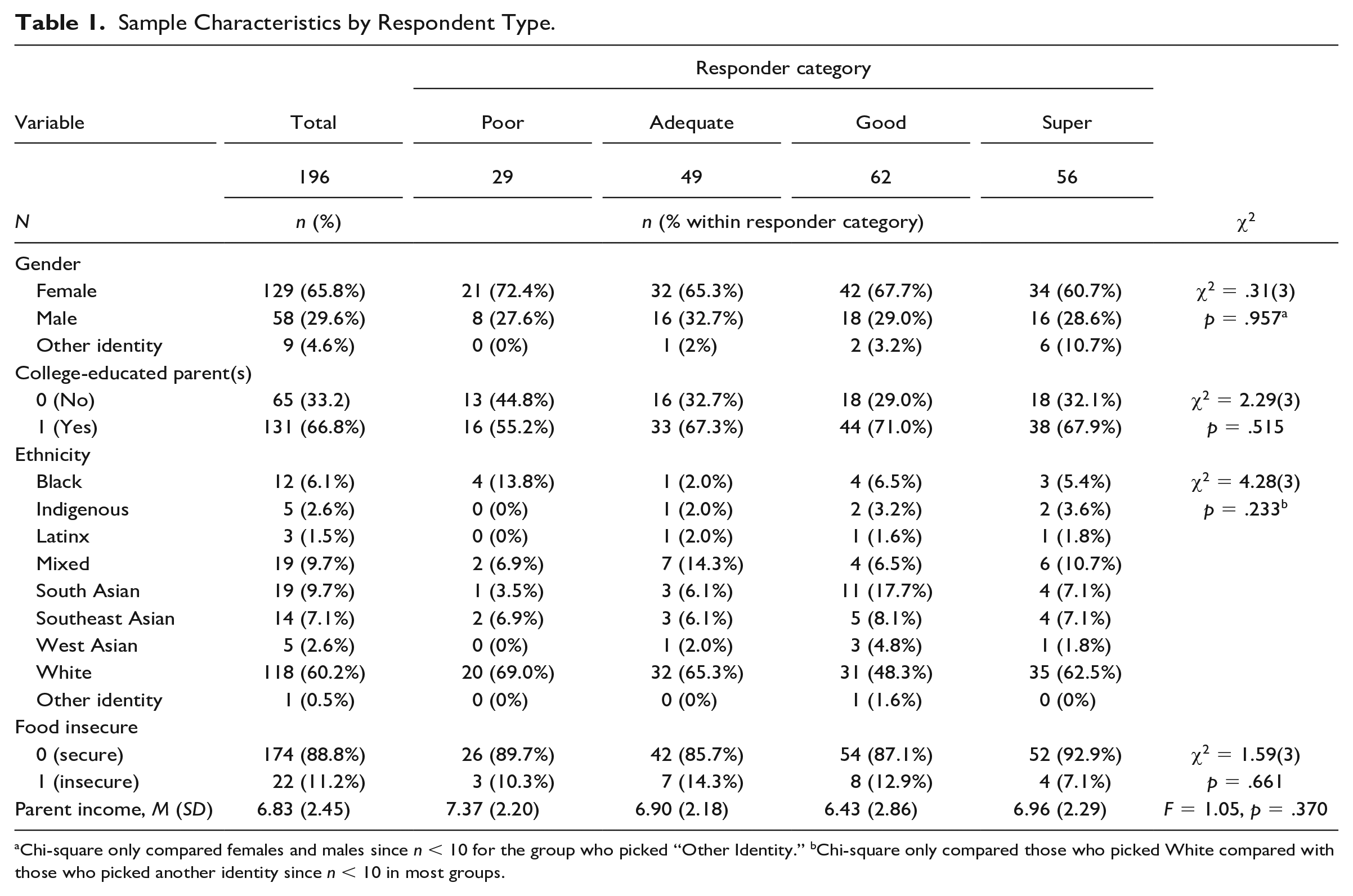

Participants identified as female (65.8%;

On average, participants were 20.61 (

Sample Characteristics by Respondent Type.

Chi-square only compared females and males since

Personality, Mental Health, and Substance Use

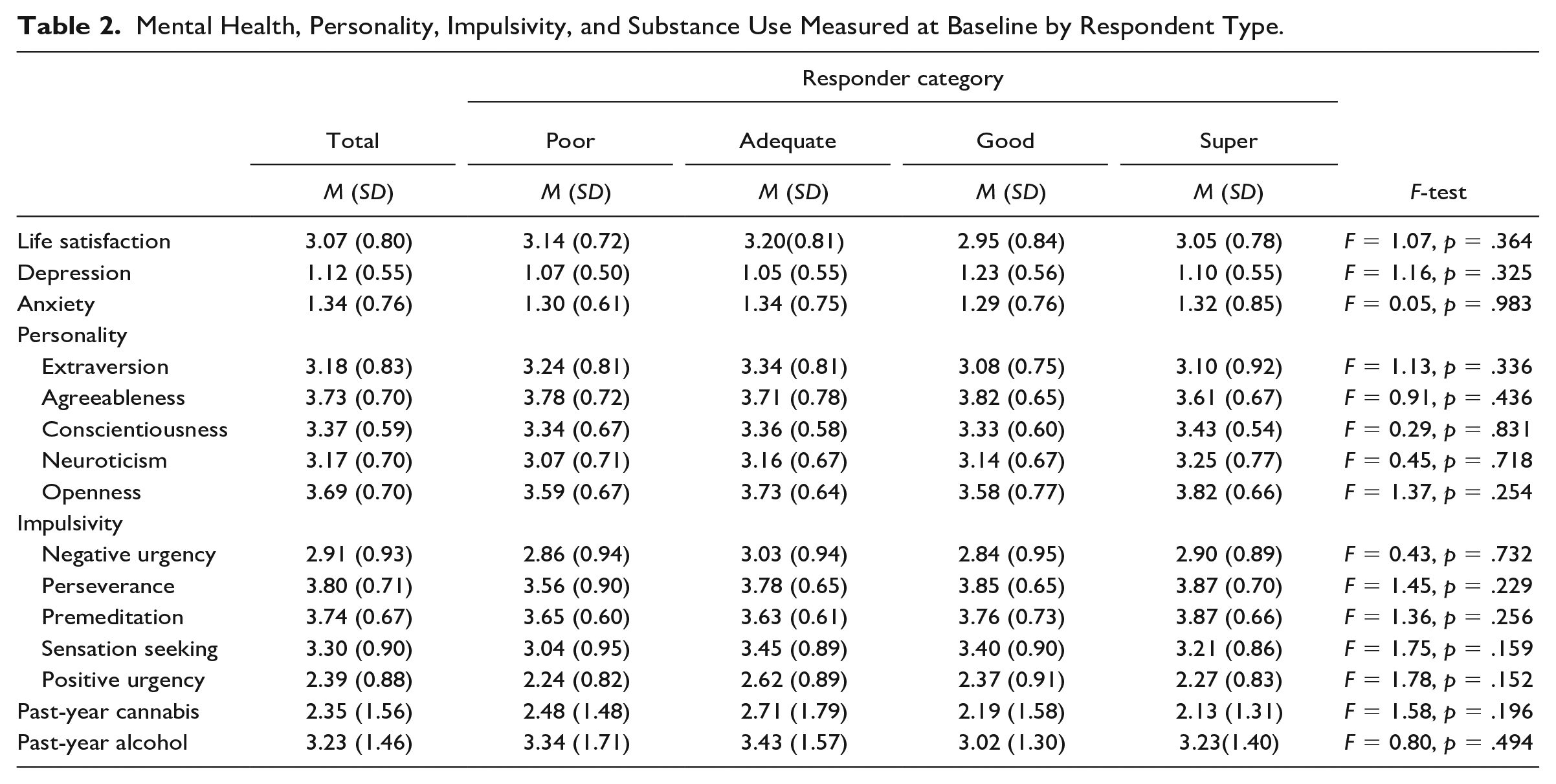

Table 2 shows average personality, mental health, and substance use scores for the total sample and by respondent category. Depression (1.12) and anxiety (1.34) scores suggest that, on average, participants were feeling anxious and depressed between “less than 2 days” and “2-5 days” in the past 2 weeks. Personality scores fell slightly above the neutral midpoint of the scale for all traits (extraversion, negative emotionality, agreeableness, conscientiousness, and open-mindedness). Impulsivity subscales showed average scores for negative and positive urgency between “disagree” and “neutral,” while perseverance, premeditation, and sensation seeking scores were slightly higher, falling between “neutral” and “agree.” Participants reported using cannabis between “once a month” and “2-3 times per month” in the past year, on average. Average alcohol use in the past year fell between “once a week” and “2-6 times per week.” None of the tests of personality and mental health variables showed significant differences between responder types.

Mental Health, Personality, Impulsivity, and Substance Use Measured at Baseline by Respondent Type.

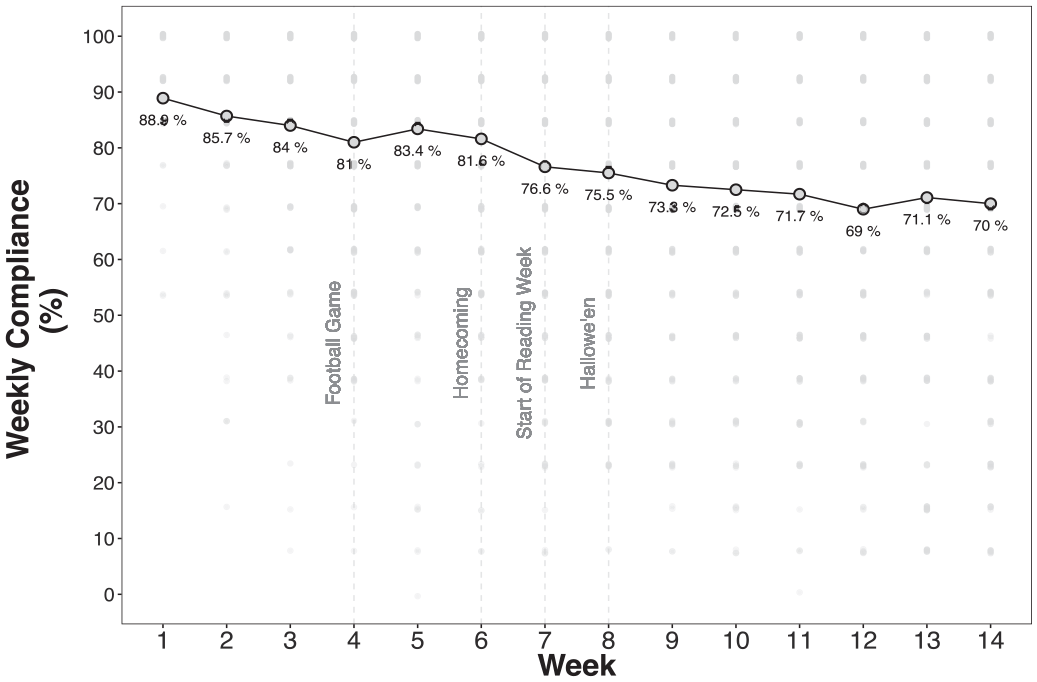

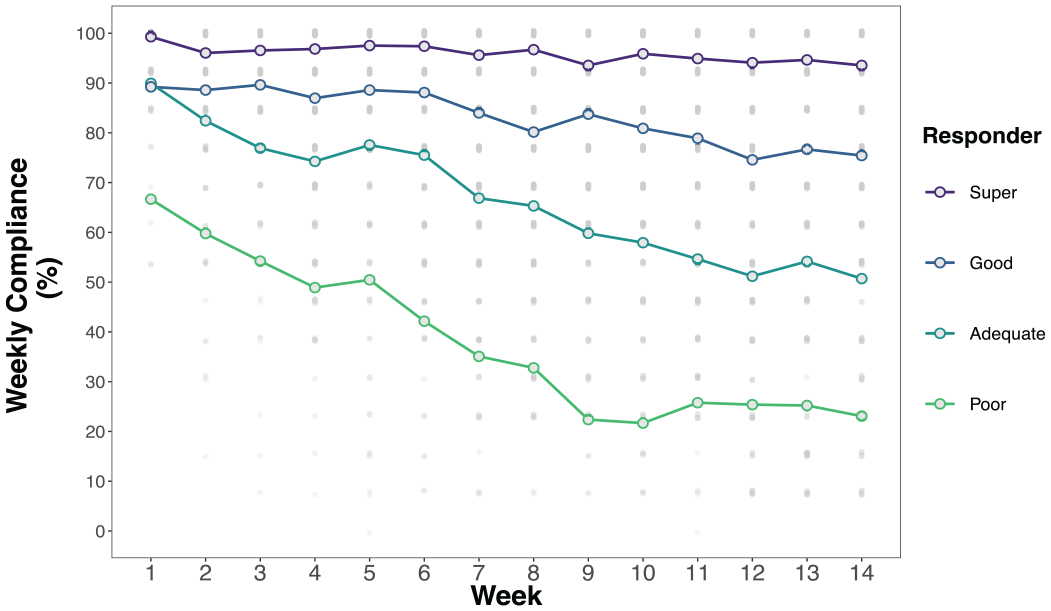

Trends in Compliance

Figure 1 shows that average overall compliance gradually declined over the 14 weeks of data collection, from a high of 88.9% in Week 1 to 70% in Week 14. Mixed effects regression results predicting compliance rates showed significant main effects of Week (i.e., time), Responder Type, and Survey Type (complete model results in Supplement 2). As suggested by Figures 1, 2, and 3, two-way interactions between responder type, survey type, and survey week confirm that compliance rates and trends varied (a nonsignificant three-way interaction was removed to simplify the model). Simple slopes and contrast analyses are summarized in Tables 3, 4, and 5.

Weekly Study Compliance Rates.

Weekly Compliance Rates by Responder Type.

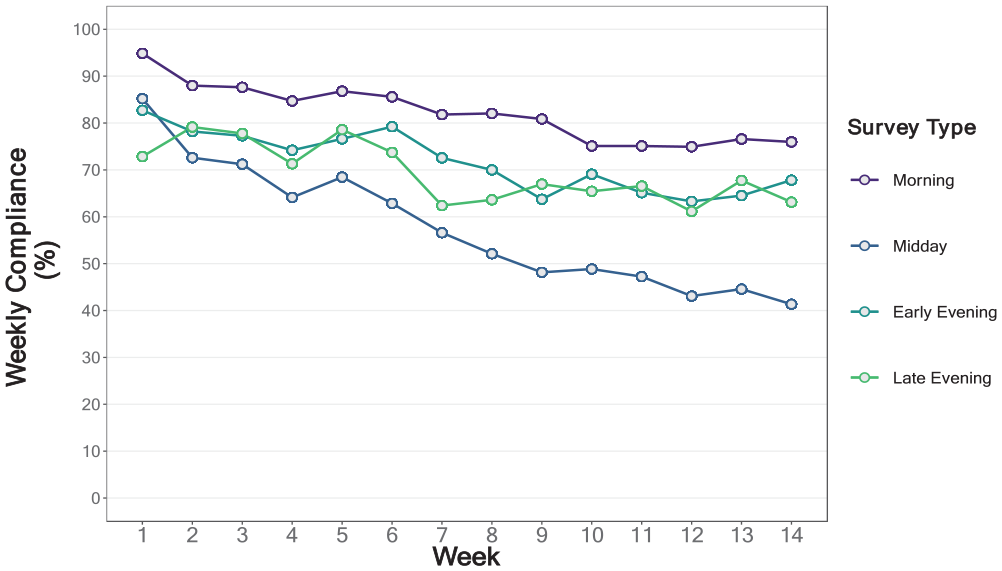

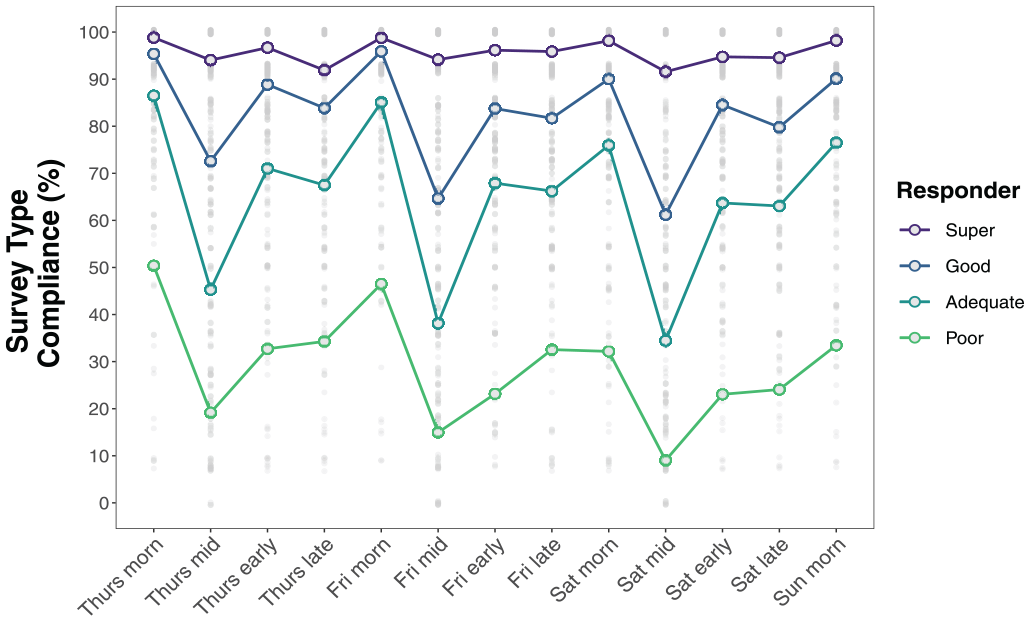

Weekly Compliance by Survey Type.

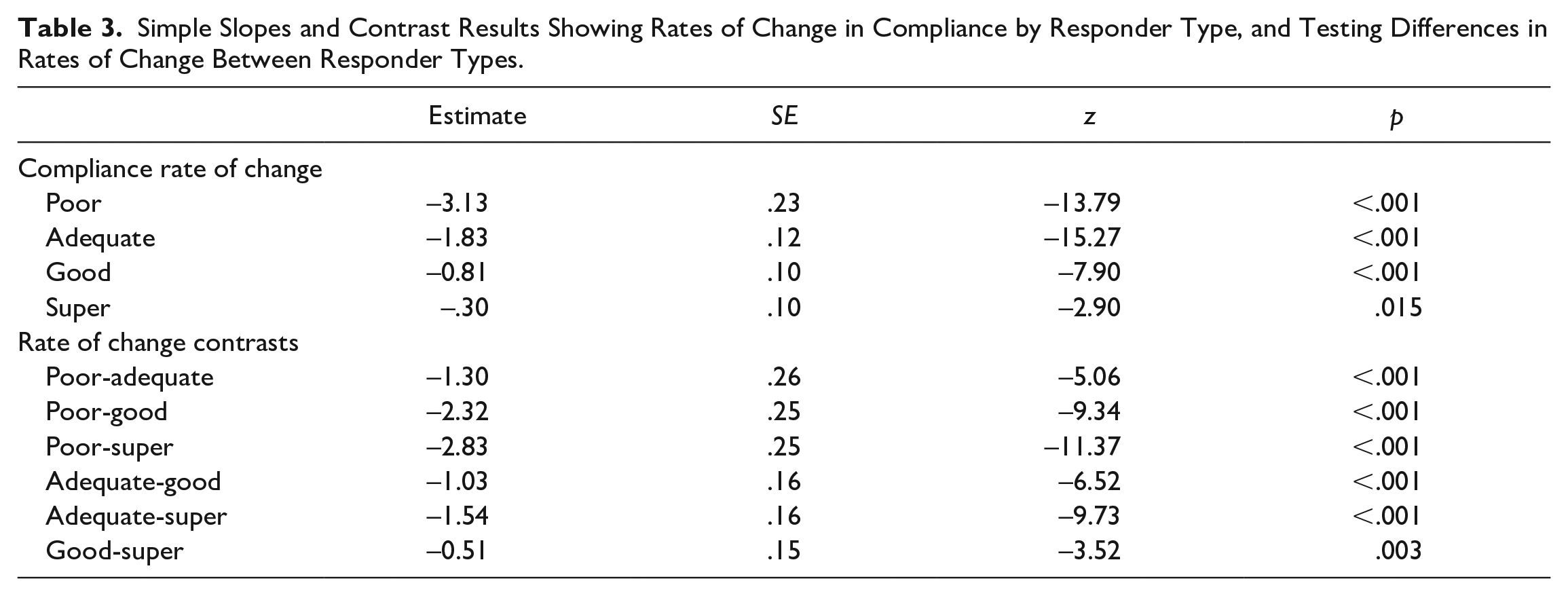

Simple Slopes and Contrast Results Showing Rates of Change in Compliance by Responder Type, and Testing Differences in Rates of Change Between Responder Types.

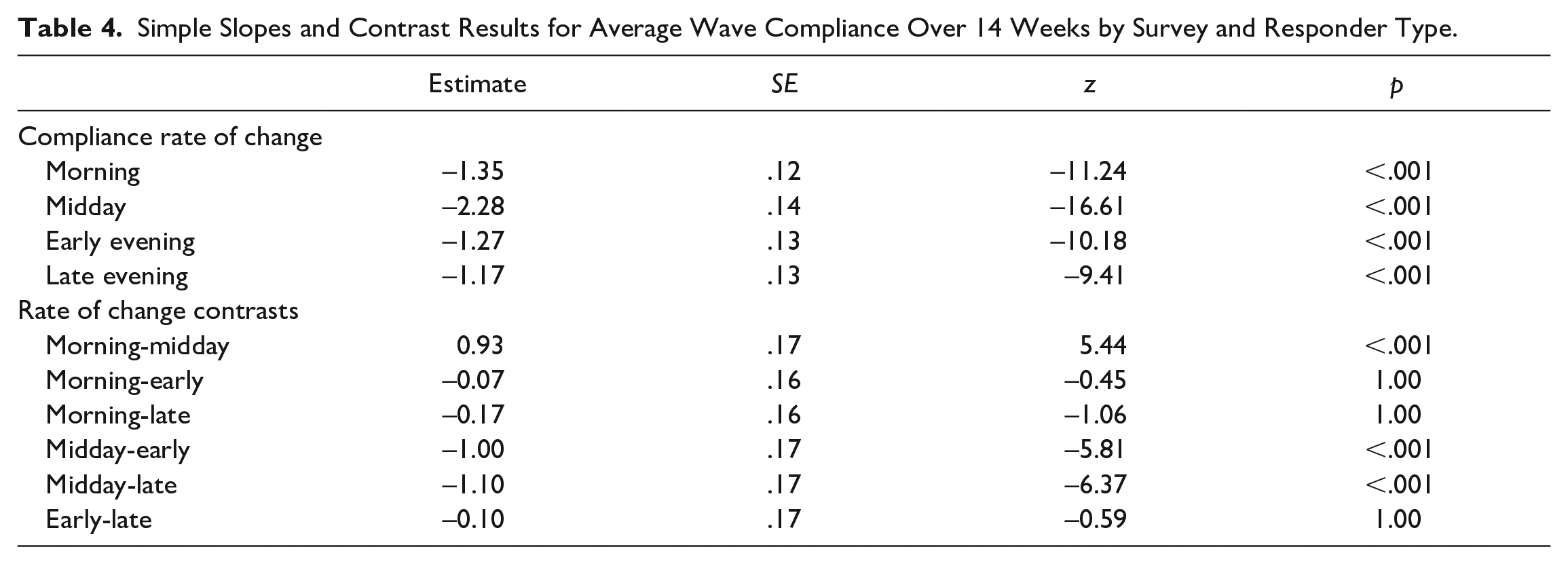

Simple Slopes and Contrast Results for Average Wave Compliance Over 14 Weeks by Survey and Responder Type.

Table 3 shows that all responder categories had significant reductions in compliance over the 14 weeks, but reductions were steepest among less compliant participants (see Figure 2). For example, compliance rates among

Table 4 shows that all survey types had significant reductions in compliance over the 14 weeks, with no significant differences in reduction rates between the morning, early evening, and late evening surveys (on average, we observed a reduction of 1.17% to 1.35% per week for these surveys). In contrast, reductions over time in midday survey compliance were twice as large, 2.28% per week, and significantly larger than any other survey type (see Figure 3).

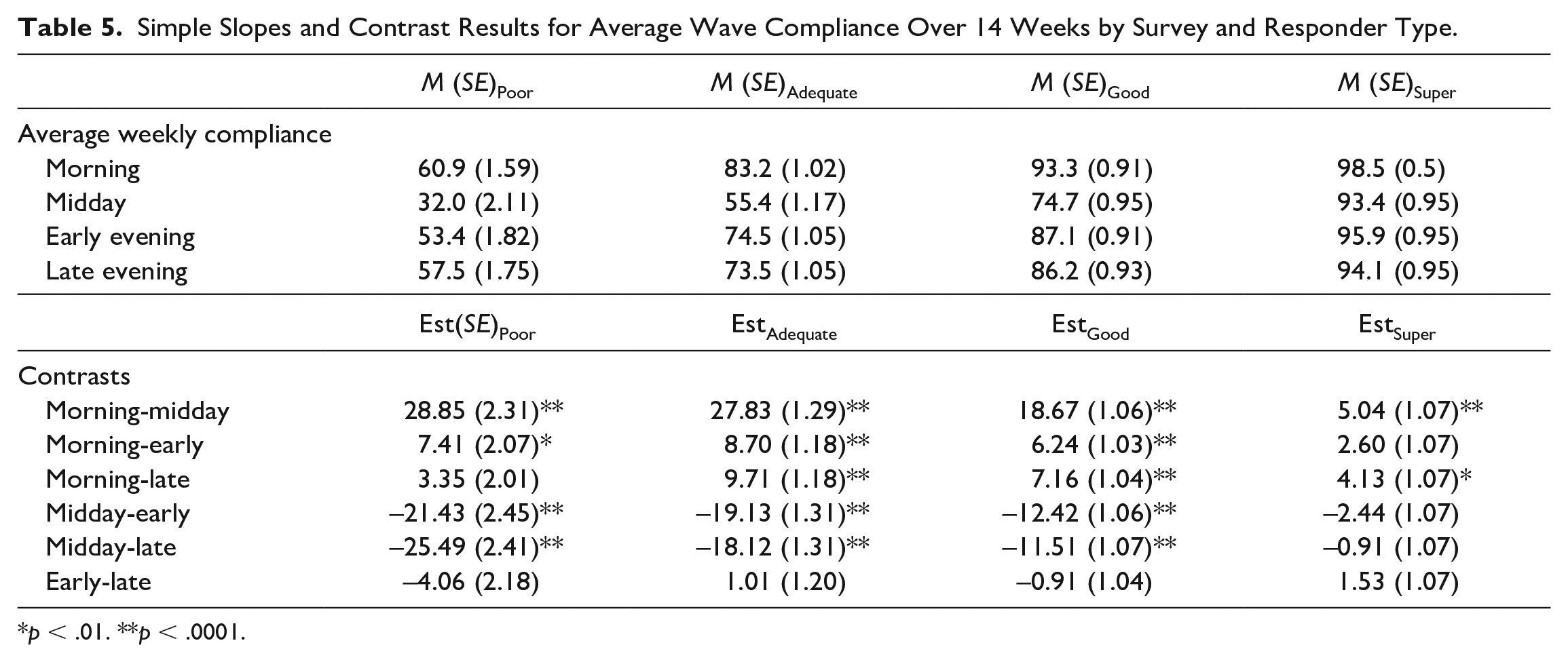

Finally, Table 5 shows that compliance rates were significantly higher for morning surveys than for most other surveys in all responder groups. For example, poor responders completed 28% more morning surveys than midday surveys and 7% more morning surveys than early evening surveys. Among adequate and good responders, morning compliance was significantly higher than for all other survey types and midday compliance was significantly lower than for all other survey types. Early and late evening surveys had similar compliance rates in all responder groups. Super responders stood out as having fairly consistent compliance rates across surveys, showing just two statistically significant differences in compliance: Morning survey compliance was 5% higher than midday and 4% higher than late evening compliance in this group. Survey Type × Responder Compliance differences are illustrated in Figure 4.

Simple Slopes and Contrast Results for Average Wave Compliance Over 14 Weeks by Survey and Responder Type.

Within-Week Compliance Rates by Survey and Responder Type.

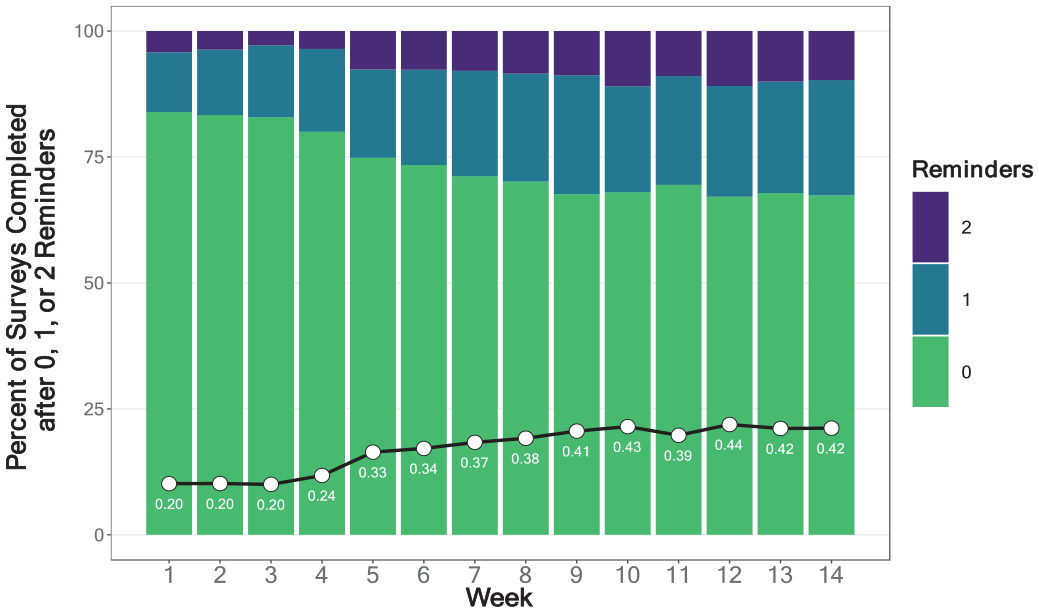

Trends Over Time in Reminders Needed to Achieve Survey Completion

Figure 5 shows that among participants who completed surveys, the average number of reminders needed to achieve survey completion increased over time, from 0.20 in Week 1 (83.9% of surveys were submitted without a reminder) to 0.42 in Week 14 (67.4% of surveys were submitted without a reminder). Complete model results predicting reminders are available in Supplement 3 on our OSF project page (https://osf.io/d4ea8). We found significant effects of Week, Responder Type, Survey Type, and their two-way interactions (a nonsignificant three-way interaction was removed to simplify the model). Participants in all responder categories needed more reminders over time, and the need for reminders was higher in less-compliant versus more-compliant responder groups. There were no differences in need for reminders across survey types.

Need for Reminders: Percent of Completed Surveys Returned After 0, 1, or 2 Reminders by Week.

Effects of Monetary Incentives

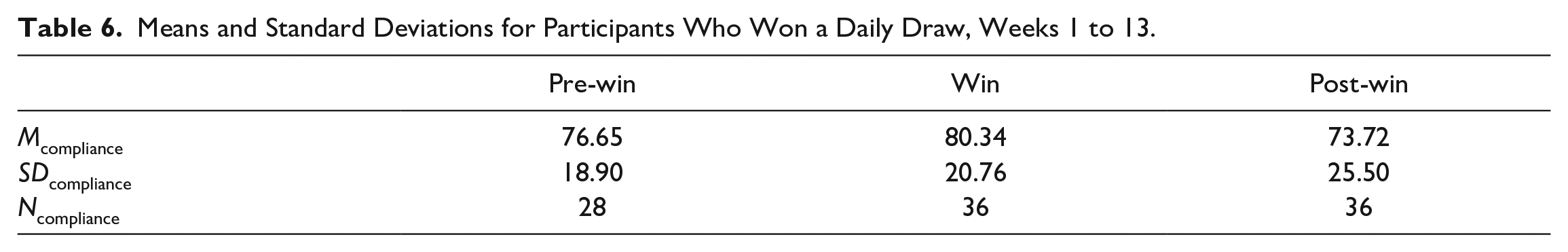

Table 6 shows summary statistics for rates of compliance on weeks preceding, during, and following a $25 daily draw win for the subset of

Means and Standard Deviations for Participants Who Won a Daily Draw, Weeks 1 to 13.

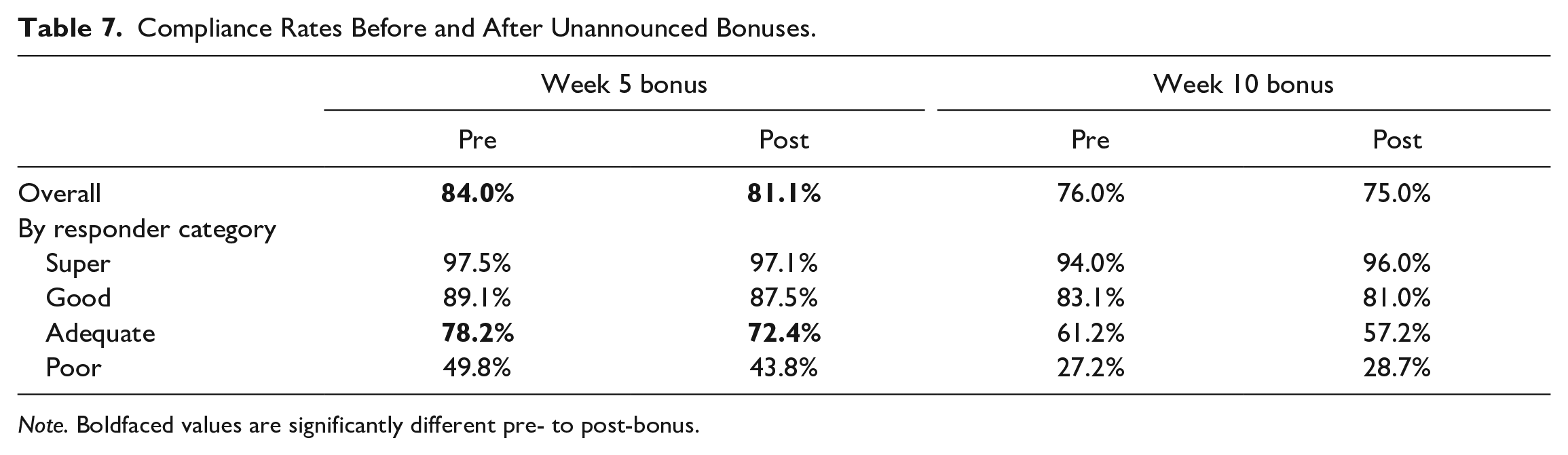

Table 7 shows summary statistics for rates of compliance on weeks before and after participants received a surprise bonus of $5, separated by responder type. A random-effects ANOVA of pre- to post-bonus compliance in Week 5 showed a significant reduction in compliance,

Compliance Rates Before and After Unannounced Bonuses.

Discussion

This study drew on data gathered from undergraduate alcohol drinkers to comprehensively examine variation in compliance with a long-term (14-weekend, 182-survey) ecological momentary assessment (EMA) protocol. Prior research focusing on study-level differences in compliance yielded few insights, leading us to focus on individual differences in the context of a single study. Three key findings emerged from our study. First, we found that compliance over time with our EMA protocol was not uniform. There was tremendous individual variability in rates of responding week-to-week, but variability was not explained by any sociodemographic, personality, mental health, or substance use measures collected at intake. Second, we found that compliance varied across our survey types and times of day, suggesting that different characteristics of our measurement contributed to better or worse compliance. Finally, we found no evidence that incentives enhanced or boosted compliance and draws may have

Compliance Over Time

Across all surveys administered, we achieved a compliance rate of 76.5%, declining from a high of 88.9% in the first week to 70% in the final week of surveys. Declines in compliance over time are consistent with other EMA and diary studies (Ono et al., 2019; Rintala et al., 2019; Silvia et al., 2013), but our mixed effects regression models show that rates of decline differ dramatically between people who are more versus less compliant, overall, with the EMA protocol. For example,

Reasons for these differences remain frustratingly elusive. We found no differences across responder categories on demographic measures (gender, ethnicity, educated parents, food insecurity), nor on any personality or well-being variables measured at study intake. Average

We took several steps to ensure that participants enrolled into our EMA study phase were interested and engaged at the outset of the study. Everyone who enrolled had to first decide to complete our eligibility screen, provide contact information, respond to an invitation to complete our intake survey, express interest in continuing to participate, complete a secondary consent form, provide their phone number, and respond to a confirmation text message. We assume that participants willing to complete these numerous steps anticipated a favorable cost-benefit trade-off to participating and found our team to be trustworthy (see Dillman et al., 2014, Chapter 2). Thereafter, participants likely had mixed reactions to the surveys and the protocol itself. In follow-up interviews to their EMA protocol, Eisele and colleagues (2022) found that some participants described the protocol becoming burdensome as initial excitement wore off and questions became boring. Others reported getting used to survey prompts as part of their daily routine. Participants in one study who had lower overall compliance were more likely to respond to morning surveys, if they were

Survey Type Differences

A key finding was that participants were selective in their survey completion, with some surveys apparently easier or more desirable to complete. Consistent with other research (van Berkel et al., 2020),

The midday survey was available for just 2 hours. The same 2-hour window was adequate for the early evening survey, but the midday survey’s presentation during the transitional part of the day, when many people are commuting home and preparing for evening social activities, might have been a disadvantage. Perhaps more importantly, however, the midday survey involved a tedious cognitive task with a median completion time of just over 4 minutes. We suspect that large numbers of participants regularly chose to skip this survey, on which they were asked to complete a response inhibition (Stop Signal) task in 5 blocks, with 16 trials per block. Our initial expectation was that participants would treat this task, which included accuracy feedback after each block, as a game. In other research, a word-ranking task with “gamified” elements (e.g., score, player leaderboard, time challenge) achieved a participation rate nearly double that of a nongamified version of the same task (van Berkel et al., 2017). Unfortunately, our midday task was likely more burdensome than fun, leading to reduced compliance when combined with the potential challenge of capturing participants’ attention at a busy time of day.

Incentives May Delay Attrition

At the outset of this study, we anticipated that regularly delivered incentives would help to overcome feelings of burden associated with continued EMA participation, including at times when surveys felt uninteresting or intrusive. Our design included $1 compensation per survey, with gift card payments issued by email 2 days after each weekend survey burst (most participants redeemed or uploaded their payments to Amazon immediately). Regular incentives are a feature that likely contributed to willingness to participate and self-selection into our study (Ludwigs et al., 2020), but our unannounced bonuses and gift card draws allowed us to directly test the impact of incentives on compliance. For both types of incentive, we found no evidence that compliance was “boosted” the week following a bonus or a draw win. On the contrary, compliance rates were significantly lower in the weeks after students won a gift card draw.

Extrapolating from principles of economic exchange, we assume that people will be more willing to complete work (responding to surveys) if they are compensated for that work (cash or gift cards). Meta-analytic work bears this out, showing that compliance rates are higher in studies offering financial incentives (e.g., Church, 1993; Wrzus & Neubauer, 2022), and people who feel adequately compensated tend to complete more surveys (Martinez et al., 2021). In contrast, unannounced bonuses and prize winnings ought to evoke participants’ sense of social exchange obligation (Dillman et al., 2014, p. 370) to boost compliance. In paper-based mail surveys, including a small “pre-incentive” with the initial survey invitation increased participation rates by 11% to 25% compared with control conditions in which invitations included no pre-incentive (Lesser et al., 2001). We found no evidence that participants completed more surveys in the weeks following receipt of a surprise $5 bonus. However, compliance rates also did not

Strengths and Limitations

A key strength of the present study was our use of detailed information about compliance in a longitudinal EMA design that permitted a contrasting perspective to the almost exclusively between-study effects that have been tested to date. We were able to evaluate compliance trends over a 14-week, 182-survey period; test for sociodemographic, personality, mental health, and substance use differences in compliance over time; identify differences across survey types and times of day; and evaluate different incentive techniques to maintain compliance. Our overall compliance rate of 76.5% across 14 weeks is comparable with what numerous other studies managed with just 1 or 2 weeks of data collection. In other words, we were able to leverage a strong sample with diverse design features to test exploratory questions about within-study variation in EMA protocol compliance.

At the same time, compliance data were a by-product of this sample, not the primary goal. We did not randomly assign participants to receive different kinds of surveys or different incentive structures, and so are limited in some of the causal conclusions we can draw from this work. An unanticipated limitation was participants’ apparent dislike for the Stop-Signal task that figured heavily on the midday survey. We presume, rather than confirm, that low compliance on this survey was due to the nature of the task, but we cannot rule out that time of day alone (4:00 p.m.–6:00 p.m.) may have driven low compliance.

Conclusion and Recommendations

In sum, this 14-week, 182-survey EMA protocol showed gradually waning compliance over time that differed by type of respondent and type of survey. Demographics, personality, mental health, and substance use measures did not distinguish between participants with higher and lower rates of compliance across the study. From these findings, we offer the following recommendations for future EMA research:

Develop Study Enrolment Strategies to Maximize the Share of Highly Engaged Participants

Our

Administer Short Surveys and Tasks That Are Fun (or at Least not Tedious) to Complete

We dispute the conclusion from other work that survey length or depth does not affect protocol compliance. Although meta-analyses and experimental data preceding our work failed to find any between-study differences in compliance related to surveys with more questions (Hasselhorn et al., 2022; Ottenstein & Werner, 2022; Williams et al., 2021), evidence suggests that perceived burden is higher and data quality may be poorer when surveys are longer (Eisele et al., 2022; Hasselhorn et al., 2022). Our midday survey cognitive task was distinct from our other surveys and achieved much poorer compliance rates for all but the most engaged

Pay Participants and Consider Low-Cost Supplemental Incentives to Delay Attrition

Incentives are an important tool for engaging participants in an EMA protocol. The high overall compliance rate in our study is likely attributable in part to its incentive structure and frequent payment schedule. We did not see a boost to compliance rates in weeks following our supplemental surprise bonuses, but we nonetheless recommend this approach as a potential strategy to forestall some attrition. Over the course of the study, we spent over $25,000 on weekly incentives and just $3,000 in bonuses and draws. Given their potential to build goodwill, enhance trust, and maintain participant engagement (if not enhance it), the cost-benefit trade-off still favors the use of supplemental incentives, although we are cautious about the use of higher-value, lottery-like prizes that showed short-term compliance reductions in this study. We encourage research teams to think of creative ways to incorporate supplements into their own study designs.

Participant engagement in EMA research is undoubtedly difficult to sustain over time, and every new survey brings an opportunity for more people to drop out or ignore prompts. Kaurin and colleagues (2022) offer recommendations for balancing the density, depth, and duration of EMA assessments. We further encourage research teams to carefully build into their study design opportunities to enhance engagement. Reminder messages, feedback after completing surveys, supplemental incentives, and gamified elements are promising tools to enhance data quantity and quality.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by funding from a Project Grant awarded to A. Howard, E. Barker, M. Milyavskaya, and M. Patrick by the Canadian Institutes of Health Research.