Abstract

This study sought to understand the factors that influence whether voters verify the paper ballots produced by an electronic Ballot Marking Device (BMD). Sixty undergraduate students from Rice University participated in mock elections in which the electronic BMD displayed their selections accurately, but a subset of their votes on the printed ballot was altered. After the system printed the paper ballot, two user interface (UI) interventions aimed at improving ballot verification rates were presented in the following sequence: (1) a digital-based prompt that instructed voters to “Carefully check your printed ballot. Take your time. Make sure everything is correct” and asked, “Are your printed selections correct?”, requiring an on-screen response of “Yes” or “No,” and (2) a paper-based prompt requiring voters to handwrite a signature, a checkmark, or a sentence on the paper ballot. The results revealed that the paper-based intervention did not influence ballot verification performance, as all participants who detected at least one anomaly destroyed their compromised ballots after encountering the digital-based intervention. The overall detection rate with the digital-based intervention was high, with 88% of voters detecting changes in their ballots, suggesting that this ballot verification intervention was an effective countermeasure for encouraging voters to check their paper ballots. The findings from this study can inform voting system design guidelines that motivate voters to independently audit their own ballots for anomalies.

Introduction

Ballot Marking Devices (BMDs) are increasingly used in elections to produce verifiable paper ballots based on voters’ digital selections. They combine the broadened usability and accessibility of electronic voting with the security of a paper trail that can be checked by voters and leveraged for auditing and recounting purposes. BMDs also help overcome challenges associated with traditional paper ballots, such as stray or ambiguous marks, limited accessibility for voters with visual or motor impairments, and logistical difficulties in managing large volumes of ballots.

However, there are growing concerns that malfunctioning or malicious software could alter votes on the printed ballots while displaying accurate selections on screen, thereby undermining election integrity. This raises a critical question: Can voters detect anomalies on their printed ballots?

Previous research reports relatively low error detection rates, ranging from 23% to 50%, without distinguishing between voters who failed to notice discrepancies despite checking their ballots and those who never checked their ballots at all (Acemyan et al., 2013; Bernhard et al., 2020; Campbell & Byrne, 2009; Everett, 2007). More recent studies have clarified this distinction, finding that voters who actively check their printed ballots can detect errors at rates as high as 76% to 100% (Kortum et al., 2021; Kortum et al., 2022; Diokno et al., 2024). These findings suggest that low error detection rates stem from motivational factors rather than fundamental cognitive or perceptual limitations.

This study thus aims to examine the effectiveness of digital-based and paper-based interventions in promoting paper ballot verification and enhancing error detection among voters.

Method

Participants

The participants were 60 undergraduate students at a university in Houston, Texas. Participants were recruited using the university’s SONA system and compensated with course credit. Eligibility criteria required participants to be at least 18 years old and fluent in English. Of the participants, 42 (70%) were female and 18 (30%) were male. Ages ranged from 18 to 22 years, with a mean age of 19 (SD = 1). Twenty-one participants (35%) identified as white, five (8%) as African American, 26 (43%) as Asian, and five (8%) as multiracial; three (5%) preferred not to say. Four participants (7%) were Hispanic. Most participants (65%) had previously voted in one election, 17% had never voted before, and 18% had voted in more than one election.

Design

The study evaluated the effectiveness of two interventions: digital-based and paper-based. The digital-based intervention, which was modeled after a previous study by Kim et al. (2024), included an on-screen prompt that asked, “Are all your printed selections correct?” with two selectable options: “Yes” and “No.” The UI for this intervention was modified from the original design to incorporate three lines of instruction: “Carefully check your printed ballot. Take your time. Make sure everything is correct.” The digital-based intervention was held constant for all participants. For the paper-based intervention, a between-subjects design was employed to investigate the effects of different paper-based conditions on ballot verification performance. The three ballot-verification intervention conditions are described as follows:

Checkmark: On-screen instructions that read, “Check mark the bottom of your ballot,” and a prompt on the bottom of the paper ballot that stated, “By checking this box, I confirm that all the printed selections are correct.”

Signature: On-screen instructions that read, “Sign the bottom of your ballot,” and a prompt on the bottom of the paper ballot that stated, “By signing below, I confirm that all the printed selections are correct.”

Sentence: On-screen instructions that read, “Rewrite the statement at the bottom of your ballot,” and a prompt on the bottom of the paper ballot that stated, “Rewrite this statement below: I confirm that all the printed selections are correct.”

Block randomization was used to assign participants to one of three paper-based verification conditions, controlling for potential time-of-day effects.
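The assignment scheme can be illustrated with a minimal sketch of block randomization. It assumes blocks of three (one slot per condition) that are shuffled independently; the block size, the seed, and the function name are illustrative assumptions rather than details reported in the study.

```python
import random

CONDITIONS = ["checkmark", "signature", "sentence"]


def block_randomize(n_participants, conditions=CONDITIONS, seed=None):
    """Assign participants to conditions in independently shuffled blocks.

    Every block contains each condition exactly once, so group sizes stay
    balanced as sessions accumulate across the day.
    """
    rng = random.Random(seed)
    assignments = []
    while len(assignments) < n_participants:
        block = list(conditions)
        rng.shuffle(block)  # random order within this block only
        assignments.extend(block)
    return assignments[:n_participants]


# Hypothetical usage: 60 participants, arbitrary seed for reproducibility.
assignments = block_randomize(60, seed=0)
counts = {c: assignments.count(c) for c in CONDITIONS}
print(counts)
```

Because 60 is a multiple of the block size, each condition receives exactly 20 participants regardless of the seed.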

The Wizard of Oz (WoZ) protocol was central to the study design, allowing researchers to simulate system functionality and ensure a realistic voter experience. Participants believed the BMD printed their selections, but in reality, the experimenter (the “wizard”) printed the paper ballots. This allowed researchers to control the printing process and introduce ballots with anomalies.

The system’s usability was evaluated using ISO 9241-11 performance metrics: effectiveness, efficiency, and satisfaction. Effectiveness was assessed by the number of voters who detected an anomaly, the number of errors, the number of help requests, and subjective workload via the NASA-Task Load Index (NASA-TLX; Hart & Staveland, 1988). Efficiency was measured separately for three discrete tasks within the same experimental session: (1) time to print, the elapsed time from when participants pressed “Start Voting” to the printing of the initial compromised ballot; (2) time to reprint, the elapsed time from the printing of the initial compromised ballot to the printing of the second, uncompromised ballot; and (3) time to cast, the elapsed time from the printing of the last ballot to its submission into the ballot box. User satisfaction was measured using the modified System Usability Scale (SUS; Brooke, 1995) with the added Adjective Rating Scale (ARS; Bangor, Kortum & Miller, 2009).
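The three efficiency measures are differences between session timestamps. The sketch below shows one way they could be derived; the field names and the example timestamps are hypothetical, and the study does not describe how its timing data were recorded.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SessionLog:
    """Timestamps in seconds from session start (hypothetical field names)."""
    start_voting: float            # participant presses "Start Voting"
    first_print: float             # initial (compromised) ballot is printed
    second_print: Optional[float]  # uncompromised reprint; None if no reprint
    cast: float                    # ballot is placed in the ballot box


def efficiency_metrics(log: SessionLog) -> dict:
    """Compute time to print, time to reprint, and time to cast."""
    reprinted = log.second_print is not None
    last_print = log.second_print if reprinted else log.first_print
    return {
        "time_to_print": log.first_print - log.start_voting,
        # Undefined for voters who never reprinted (Path B).
        "time_to_reprint": (log.second_print - log.first_print) if reprinted else None,
        "time_to_cast": log.cast - last_print,
    }


# Made-up timestamps for illustration only.
example = SessionLog(start_voting=0.0, first_print=240.0, second_print=370.0, cast=430.0)
metrics = efficiency_metrics(example)
print(metrics)
```

Treating time to reprint as undefined for voters who never reprinted keeps those sessions from being counted as zero-second reprints.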

Materials

To simulate an electronic voting experience, the mock election interface was prototyped in Figma, a browser-based design platform. The experimental setup included a ballot printer, a vertically oriented ballot-marking device (BMD), and a makeshift ballot box, from left to right on a table.

All participants completed the same 26-race mock election. The ballot featured 19 single-seat races (one selection per race), a multi-candidate race allowing up to four out of five selections, and six propositions with binary “Yes” or “No” choices. To minimize bias, the ballot used fictitious candidate names and nonpartisan party labels (e.g., Party A, B). This prevented participants from deviating from the provided slate based on political affiliation.

Each participant received the same printed slate and was instructed to reference it throughout the voting process. Two versions of the printed ballot were used: a compromised version and an uncompromised version. The compromised ballot contained six alterations in the middle 50% of the ballot (specifically races 8, 10, 13, 15, 19, and 20). The uncompromised ballot aligned exactly with the slate. Depending on the experimental condition, the bottom of each printed ballot included one of three verification prompts: a checkbox, a signature line, or a sentence to be rewritten.
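The relationship between the slate and the two printed ballot versions can be sketched as follows. The altered race numbers come from the description above; the candidate labels are placeholders, and treating every race as a single selection is a simplifying assumption.

```python
ALTERED_RACES = (8, 10, 13, 15, 19, 20)  # race numbers reported in the study
N_RACES = 26


def make_slate():
    """Build a placeholder slate with one hypothetical selection per race."""
    return {race: f"Candidate {race}A" for race in range(1, N_RACES + 1)}


def compromise(slate, altered=ALTERED_RACES):
    """Return a copy of the slate with the selected races changed."""
    ballot = dict(slate)
    for race in altered:
        ballot[race] = f"Candidate {race}B"  # hypothetical substituted choice
    return ballot


def find_discrepancies(slate, printed):
    """List the race numbers where the printed ballot differs from the slate."""
    return sorted(race for race in slate if slate[race] != printed[race])


slate = make_slate()
uncompromised = dict(slate)        # aligns exactly with the slate
compromised = compromise(slate)

print(find_discrepancies(slate, uncompromised))
print(find_discrepancies(slate, compromised))
```

A voter who compares the printed ballot to the slate race by race is performing the same comparison as `find_discrepancies`.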

Procedures

Following completion of an IRB-approved consent form and demographics survey, participants voted in a mock 26-race election while referencing the provided slate of candidates. After the 26th race, they were directed to a digital review screen displaying all of their selections. Participants then pressed the “Print my Ballot” button, after which the experimenters covertly printed out the compromised ballot.

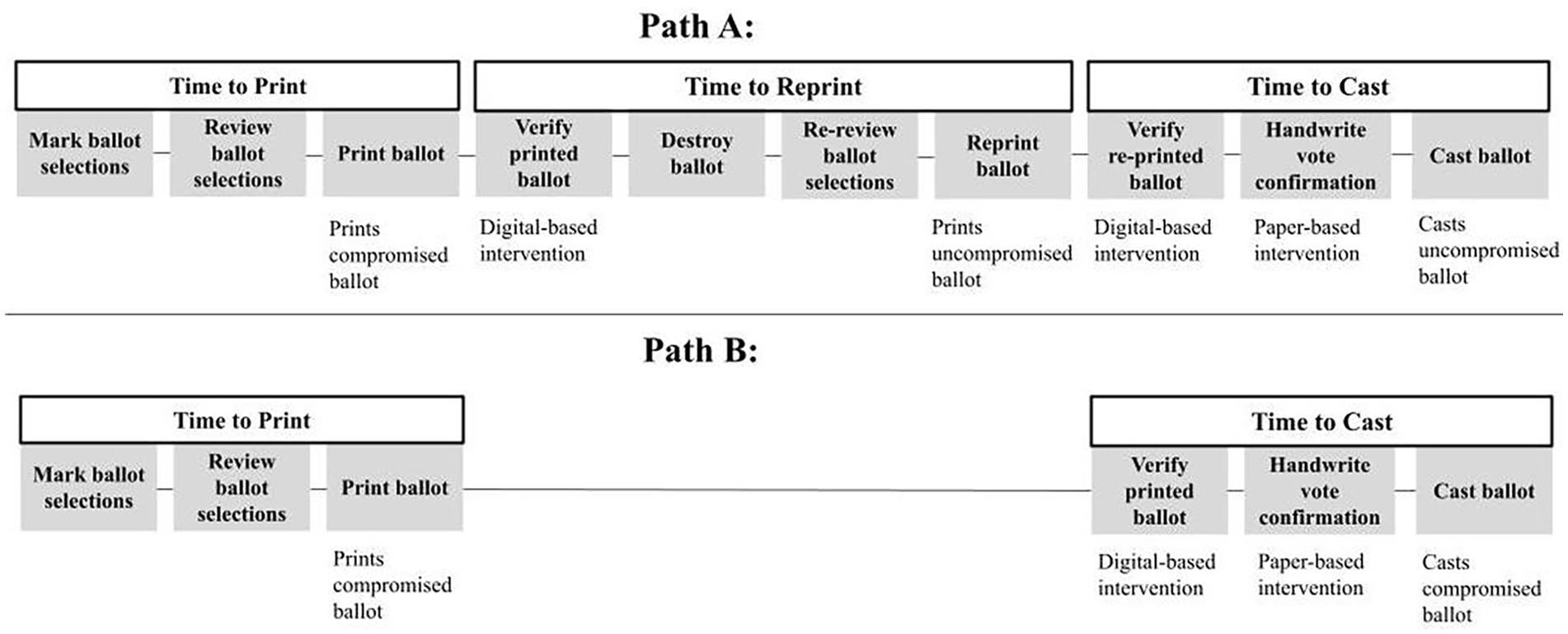

After the initial ballot printing, subjects encountered the digital-based intervention. This screen served as a branching point for two distinct task flows: Path A and Path B. Those who answered “No” to the question followed Path A, completing the steps of destroying the compromised ballot, re-reviewing their electronic votes, and printing an uncompromised ballot before ultimately encountering the paper-based intervention and casting their ballot. Participants who selected “Yes” followed Path B, proceeding directly to the paper-based intervention and then casting their compromised ballots in the ballot box. These two task flows are illustrated in Figure 1. Finally, participants completed the SUS, ARS, and NASA-TLX surveys.

Figure 1. Two possible task flows. Path A illustrates participants who identified an anomaly in their printed ballot. This is characterized by the destruction of the compromised ballot, reprinting of a new ballot, and casting of the uncompromised version. Path B represents participants who skipped reprinting and proceeded directly from print to cast, ultimately casting the compromised ballot.
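The branching between the two paths depends only on the voter's answer to the on-screen question. A minimal sketch of that branching is given below; the step labels paraphrase the procedure, and the function name is illustrative.

```python
def task_flow(answered_yes: bool) -> list:
    """Return the ordered steps that follow the digital-based intervention.

    answered_yes is the voter's response to the on-screen question asking
    whether the printed selections are correct.
    """
    if answered_yes:
        # Path B: no anomaly reported, so the compromised ballot is cast.
        return ["paper-based intervention", "cast compromised ballot"]
    # Path A: anomaly reported, so the ballot is destroyed and reprinted.
    return [
        "destroy compromised ballot",
        "re-review electronic votes",
        "print uncompromised ballot",
        "paper-based intervention",
        "cast uncompromised ballot",
    ]


path_a = task_flow(answered_yes=False)
path_b = task_flow(answered_yes=True)
print(len(path_a), len(path_b))
```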

Results

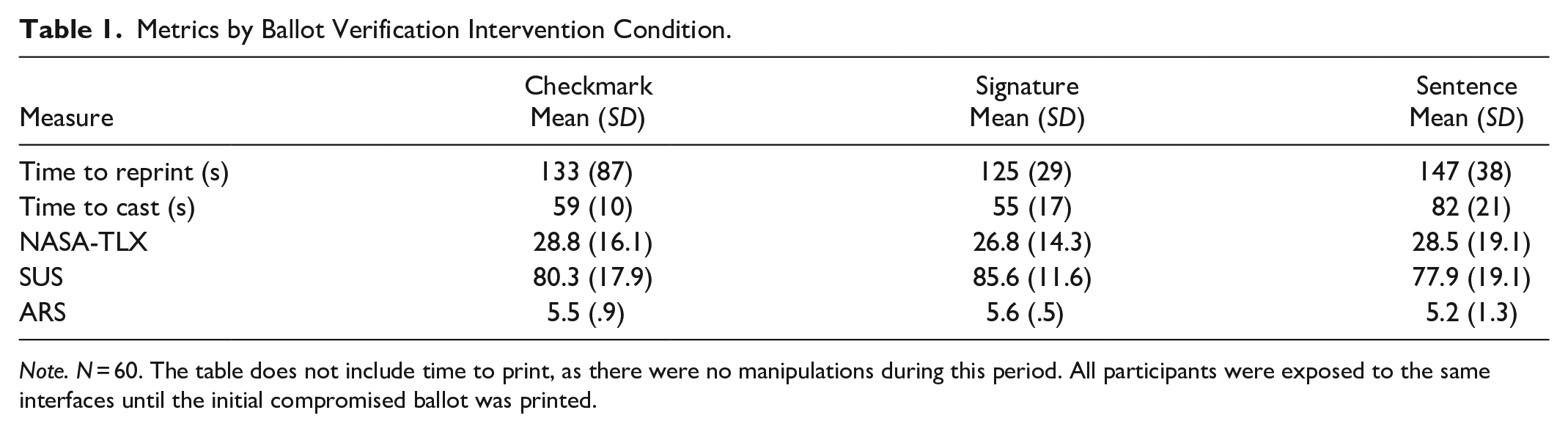

A total of 20 participants were assigned to each condition. Table 1 provides a detailed summary of the means and standard deviations of the metrics to assess the usability of the ballot verification intervention. One-way analyses of variance (ANOVAs) were performed on the quantitative data. The qualitative data on errors and assist requests were analyzed for patterns, categorized, and tallied into frequency counts. The quantitative and qualitative results are described in further detail in the following sections.

Table 1. Metrics by Ballot Verification Intervention Condition.

Note. N = 60. The table does not include time to print, as there were no manipulations during this period. All participants were exposed to the same interfaces until the initial compromised ballot was printed.

Effectiveness

Error Rate

Fifty-nine voters (98%) successfully cast their votes. The one voter (2%) who failed to cast their ballot detected the anomalies in their compromised ballot but did not destroy it, ultimately handing both the compromised and uncompromised ballots to the experimenters instead of depositing the uncompromised ballot into the ballot box. During the experimental sessions, 21 errors were made. The largest share of these errors (n = 9) occurred during the “Destroy Your Ballot” step. Of these nine, five voters skipped destroying their ballots altogether. The step with the second highest number of errors (n = 8) was the “Review Your Ballot” step, following the printing of the compromised ballot. Of these eight, seven failed to detect anomalies and erroneously indicated that their printed ballots were correct. Notably, six of these eight voters also made ballot errors when voting, deviating from the slate in at least one race.

Assist Rate

During the experimental sessions, participants made a total of 17 requests for assistance. The step with the most assistance requests was the “Destroy Your Ballot” step, where nine requests concerned the location of the trash can. The step with the second most assistance requests was the “Review Your Ballot” step (i.e., the digital-based intervention), after the compromised ballot had been printed; five requests for help were made at this step. Two of these voters were about to drop their printed ballots into the ballot box prematurely and asked, “Ballot box, or?” (ID 43) and “Just put it in?” (ID 60). On that same step, one voter (ID 38) asked, “So if they’re wrong, what do you want me to do? Click on here?” referring to the “No” button. The step with the third most assistance requests was reviewing the electronic votes on the review screen right before printing (n = 2).

Cognitive Workload

A one-way ANOVA was conducted to examine the effect of ballot verification intervention (checkmark, signature, and sentence conditions) on NASA-TLX scores. The analysis showed no statistically significant effect of ballot verification intervention on NASA-TLX scores, F(2,57) = 0.09, p = .92, η² = .003. The mean NASA-TLX scores were as follows: checkmark (M = 28.8, SD = 16.1), signature (M = 26.8, SD = 14.3), and sentence (M = 28.5, SD = 19.1). These results suggest that the type of ballot verification intervention does not affect subjective workload ratings.
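Because group means, standard deviations, and sizes are reported, the F statistic and η² can be approximately recomputed from those summaries alone. The sketch below assumes 20 participants per condition, as stated at the start of the Results; the function name is illustrative and this is not the authors' analysis script. Since the reported means and SDs are rounded, the output only approximates the reported F(2,57) = 0.09 and η² = .003.

```python
def anova_from_summary(means, sds, ns):
    """One-way ANOVA F statistic and eta-squared from group summaries."""
    k = len(means)
    n_total = sum(ns)
    grand_mean = sum(n * m for n, m in zip(ns, means)) / n_total
    # Between-groups sum of squares: spread of group means around the grand mean.
    ss_between = sum(n * (m - grand_mean) ** 2 for n, m in zip(ns, means))
    # Within-groups sum of squares: pooled from each group's variance.
    ss_within = sum((n - 1) * sd ** 2 for n, sd in zip(ns, sds))
    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    eta_squared = ss_between / (ss_between + ss_within)
    return f_stat, df_between, df_within, eta_squared


# NASA-TLX summaries: checkmark, signature, sentence.
f_stat, df1, df2, eta_sq = anova_from_summary(
    means=[28.8, 26.8, 28.5],
    sds=[16.1, 14.3, 19.1],
    ns=[20, 20, 20],
)
print(f"F({df1},{df2}) = {f_stat:.2f}, eta squared = {eta_sq:.3f}")
```

The same function applies to the SUS and ARS summaries reported under Satisfaction, with the same caveat about rounding.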

Choice to Examine and Detection Performance

All participants who checked their paper ballots found at least one anomaly and destroyed their ballots. The paper ballot examination rate was high, with 53 out of 60 participants (88%) choosing to check their paper ballots for anomalies. The seven participants (12%) who failed to detect errors in their ballots did so by bypassing the paper examination altogether.

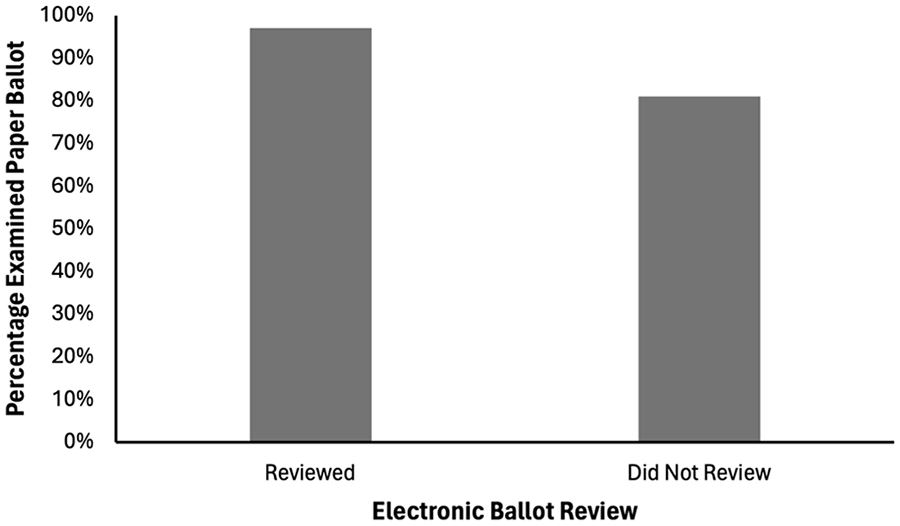

To determine whether examining the digital review screen influences voters’ likelihood of verifying their paper ballot, descriptive statistics for ballot verification rates were compared between those who reviewed the digital screen and those who did not. This analysis addresses the question: Does reviewing the digital screen impact the likelihood of verifying the paper ballot? Overall, 29 participants (48%) reviewed their votes on the digital review screen. As illustrated in Figure 2, 28 of these 29 participants (97%) also verified their paper ballots.

Figure 2. Percentage of voters who examined their ballots as a function of whether or not they reviewed their electronic ballot on the review screen.

Efficiency

Time to reprint refers to the time elapsed from printing the initial compromised ballot to the second uncompromised ballot. Among the conditions tested, the average reprinting time was the shortest in the signature condition (125 s) and the longest in the sentence condition (147 s).

An ANOVA was conducted to examine the effect of ballot verification intervention (checkmark, signature, and sentence conditions) on the time to reprint. The analysis showed no statistically significant effect of ballot verification intervention on reprinting times, F(2,46) = 0.81, p = .45, η² = .03. The mean reprinting times were as follows: checkmark (M = 133, SD = 87), signature (M = 125, SD = 29), and sentence (M = 147, SD = 38). These results suggest that the type of ballot verification intervention does not affect reprinting times.

Time to cast is the time from printing the last ballot to its submission into the ballot box. The average casting time was the shortest in the signature condition (55 s) and the longest in the sentence condition (82 s).

An ANOVA was conducted to examine the effect of ballot verification intervention (checkmark, signature, and sentence conditions) on time to cast. The analysis showed a statistically significant effect of ballot verification intervention on casting times, F(2,46) = 13.5, p < .001, η² = .34. Post hoc comparisons using Tukey's HSD test indicated that participants in the checkmark condition (M = 59, SD = 10, p < .001) and signature condition (M = 55, SD = 17, p < .001) were significantly faster to cast their ballot than those in the sentence condition (M = 82, SD = 21, p = .005). However, no significant difference was found between the checkmark and signature conditions (p = .73). These results suggest that the type of ballot verification intervention influences casting times, with participants in the sentence condition taking longer to cast than those in the checkmark and signature conditions.

Satisfaction

Overall, user satisfaction with the voting system was rated “good,” with a mean SUS score of 81.3 out of 100 and individual scores ranging from 30 to 100. The mean ARS score was 5.4 out of 7, which corresponds most closely to the adjective rating of “Good.”
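For readers unfamiliar with how a 0 to 100 SUS score arises, the sketch below applies the standard SUS scoring rule (Brooke) to one respondent's ten item ratings. It assumes the modified SUS used here keeps the standard ten-item, five-point format and scoring; the example responses are made up.

```python
def sus_score(responses):
    """Score one SUS questionnaire (ten items rated 1 to 5) on a 0-100 scale."""
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 item responses")
    total = 0
    for item_number, rating in enumerate(responses, start=1):
        if not 1 <= rating <= 5:
            raise ValueError("each rating must be between 1 and 5")
        if item_number % 2 == 1:
            total += rating - 1   # odd items are positively worded
        else:
            total += 5 - rating   # even items are negatively worded
    return total * 2.5


# Made-up responses for illustration only.
best_case = sus_score([5, 1, 5, 1, 5, 1, 5, 1, 5, 1])
neutral = sus_score([3] * 10)
print(best_case, neutral)
```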

An ANOVA was conducted to examine the effect of ballot verification intervention (checkmark, signature, and sentence conditions) on SUS scores. The analysis showed no statistically significant effect of ballot verification intervention on SUS scores, F(2,57) = 1.16, p = .32, η² = .04. The mean SUS scores were as follows: checkmark (M = 80.3, SD = 17.9), signature (M = 85.6, SD = 11.6), and sentence (M = 77.9, SD = 19.1). These results suggest that the type of ballot verification intervention does not affect subjective usability.

An ANOVA was conducted to examine the effect of ballot verification intervention (checkmark, signature, and sentence conditions) on ARS scores. The analysis showed no statistically significant effect of ballot verification intervention on ARS scores, F(2,57) = 0.91, p = .41, η² = .03. The mean ARS scores were as follows: checkmark (M = 5.5, SD = .9), signature (M = 5.6, SD = .5), and sentence (M = 5.2, SD = 1.3). These results suggest that the type of ballot verification intervention does not affect adjective ratings.

Discussion and Conclusion

Adding the three sentences in the digital-based intervention substantially improved examination rates. With the additional sentences, 88% of voters examined their ballots. In comparison, only 53% of voters examined their ballots in a previous study that did not include the added instructions (Kim et al., 2024). This 69% relative increase in ballot examination rates suggests that the redesigned digital-based intervention was effective in motivating voters to examine their ballots.
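The comparison can be expressed either as an absolute difference in percentage points or as a relative change. The sketch below uses the rounded rates quoted above (88% and 53%); with rounded inputs the relative change comes out near 66%, so the 69% figure presumably reflects unrounded rates, which is an assumption here. The function name is illustrative.

```python
def rate_change(baseline_pct, new_pct):
    """Return (absolute change in percentage points, relative change in %)."""
    absolute_points = new_pct - baseline_pct
    relative_pct = 100 * absolute_points / baseline_pct
    return absolute_points, relative_pct


# Rounded examination rates: prior study vs. this study.
points, relative = rate_change(baseline_pct=53, new_pct=88)
print(f"{points} percentage points, {relative:.1f}% relative increase")
```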

This study discovered that 97% of voters who reviewed their electronic votes on the review screen also examined their paper ballots while encountering the digital-based intervention screen. Voters can indeed review their ballots twice, contradicting the findings from the study by Diokno and Farmer (2024) that none of the participants who reviewed their electronic votes on the review screen examined their paper ballots.

Furthermore, in analyzing the behavioral trends among participants who failed to detect any anomalies, six out of seven (86%) did not refer to the slate while voting. This suggests voters who were initially careless or lacked motivation while voting were also disinclined to audit their paper ballots for errors. In other words, initial carelessness during voting correlates with continued carelessness during ballot review.

It is also important to recognize that time-to-cast data are susceptible to confounds arising from individual variability, such as differences in reading or handwriting speed. However, the consistently longer completion times in the sentence condition are likely attributable to the increased motor effort required to handwrite a complete sentence (i.e., “I confirm that all the printed selections are correct”), as opposed to handwriting a checkmark or signature, which demands relatively minimal effort.

Overall, the results of this study showed that adding the three sentences to the digital-based intervention effectively increased the ballot verification rate while maintaining high usability and low workload. Notably, the redesigned digital-based intervention can effectively motivate voters to verify their votes not just once but twice, by reviewing both their electronic and paper votes. These findings offer actionable insights that voting system developers and designers can use to facilitate voter self-auditing, strengthening the security and integrity of the system.

Footnotes

Acknowledgements

The authors would like to thank Ellie Jung, Caitlin Claxton, Hayley Jue, and Grace Kwon for their assistance with the data collection.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported in part by a grant from DARPA via VotingWorks Award 205017.