Abstract

This preliminary study presents HPM-NL, a natural-language-based cognitive modeling tool developed to estimate task completion time (TCT) for upper-limb prosthesis tasks, specifically the clothespin relocation test (CRT). HPM-NL integrates logic from established human performance modeling frameworks (GOMS, CPM-GOMS, ACT-R, QN-MHP, and SOAR) and returns citation-anchored predictions based on user-input task descriptions. A Wilcoxon signed-rank test revealed no statistically significant difference between HPM-NL and Cogulator estimates for a single CRT cycle, suggesting comparable TCT outputs for this specific task. However, HPM-NL’s current scope is limited to single-task modeling in a structured experimental setting, with no assessment of its predictions across diverse tasks, real users, or broader cognitive measures such as workload. Further limitations include reliance on a proprietary large language model, potential citation errors, lack of empirical validation against human-subject performance data, and uncertainty about generalizability. Despite these constraints, HPM-NL provides an early-stage tool for exploring task modeling in prosthesis research.

Keywords

Introduction

The prevalence of limb loss and limb difference has risen significantly, with over 5.6 million individuals in North America living with amputations (Caruso & Harrington, 2024) and more than 185,000 new cases reported annually. Given the increasing reliance on advanced prosthetic technologies, accurately modeling user interactions is crucial for improving design and usability. Human performance modeling (HPM) plays a key role here by predicting how users will perform, providing a computational alternative to costly human-subject studies (Wickens et al., 2021; Zahabi & Park, 2023). Five major HPM frameworks have been used and validated across a large number of studies: Goals, Operators, Methods, and Selection Rules (GOMS) (Card, 2018); Cognitive, Perceptual, Motor-GOMS (CPM-GOMS) (John & Kieras, 1996); Adaptive Control of Thought-Rational (ACT-R) (Anderson & Lebiere, 2014); Queueing Network-Model Human Processor (QN-MHP) (Liu et al., 2006); and State, Operator, And Result (SOAR) (Laird, 2019; Park & Zahabi, 2024; Zahabi & Park, 2023).

Some of these models, while valuable and well studied, are still difficult to learn and not easily applied by end users in real-world or clinical settings. To address these limitations, we introduce a generative pre-trained transformer (GPT)-based HPM methodology and tool, HPM-Natural Language (HPM-NL), to predict task completion time (TCT) and workload for an upper-limb prosthesis task. We pursued a scientifically grounded HPM approach that remains accessible to clinicians and other researchers while minimizing hallucinations. Because chatbots are well known to generate hallucinations, we do not pursue generalization; instead, we aim to provide clear modeling logic for HPM-NL and narrow the scope of validation to TCT and a single task, the clothespin relocation test (CRT), by comparing HPM-NL-generated results with Cogulator-generated results (Estes, 2021).

Method

To develop HPM-NL, we implemented a multi-step process designed to systematically gather empirical evidence from the prosthesis domain and integrate it with established HPM principles (e.g., Fitts’ law).

Once these inputs are gathered, HPM-NL simulates the task using each of the five architectures according to their distinct computational rules. GOMS provides a baseline estimate of task time by applying Keystroke-Level Modeling, assuming expert behavior in routine, predictable tasks. CPM-GOMS extends this by identifying paths of overlapping cognitive and motor activity, allowing for parallel processing and more refined time predictions. ACT-R simulates behavior through its modular architecture, processing perceptual, manual, goal setting, and memory-related activities in cycles. It calculates task time by summing these cycles, and it evaluates cognitive workload by incorporating factors such as base mental effort, how frequently production rules fire, how interruptible the task is, and any skill-related penalties. Physical workload in ACT-R is modeled through the degree of motor involvement and biomechanical strain.
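The difference between a serial GOMS-style estimate and a CPM-GOMS critical-path estimate can be illustrated with a minimal sketch. The operator names, durations, and dependencies below are hypothetical placeholders for one clothespin move; they are not values from HPM-NL, Cogulator, or the cited literature.

```python
from functools import lru_cache

# Hypothetical operators: name -> (duration in seconds, prerequisite operators).
# All numbers are illustrative placeholders, not published parameters.
OPERATORS = {
    "look_at_pin":    (0.30, []),
    "decide_grasp":   (0.05, ["look_at_pin"]),
    "reach_to_pin":   (0.60, ["look_at_pin"]),
    "close_hand":     (0.80, ["decide_grasp", "reach_to_pin"]),
    "look_at_target": (0.30, ["decide_grasp"]),
    "move_to_target": (0.70, ["close_hand", "look_at_target"]),
    "open_hand":      (0.60, ["move_to_target"]),
}


def serial_time(ops):
    """Serial estimate: every operator runs one after another."""
    return sum(duration for duration, _ in ops.values())


def critical_path_time(ops):
    """Critical-path estimate: an operator starts once all prerequisites finish."""

    @lru_cache(maxsize=None)
    def finish(name):
        duration, prereqs = ops[name]
        start = max((finish(p) for p in prereqs), default=0.0)
        return start + duration

    return max(finish(name) for name in ops)


if __name__ == "__main__":
    print(f"serial estimate:        {serial_time(OPERATORS):.2f} s")
    print(f"critical-path estimate: {critical_path_time(OPERATORS):.2f} s")
```

In this sketch the critical-path estimate is shorter than the serial sum because the cognitive and perceptual operators overlap with the reach and grasp; the size of that gap depends entirely on the assumed dependencies.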

QN-MHP takes a different approach by modeling the user as a system of queuing processors. It measures time based on how long tasks wait in cognitive, perceptual, and motor queues, especially under multitasking conditions. This architecture captures dynamic aspects of human performance, and workload is inferred from the length of queues and competition for limited processing resources. The SOAR method models decision making as a series of cognitive cycles used to select and apply operators toward a goal. It tracks how often impasses arise, how complex operator choices are, and how demanding physical actions are to estimate both time and workload. Notably, only ACT-R, QN-MHP, and SOAR provide estimates for cognitive and physical workload. GOMS and CPM-GOMS focus strictly on the timing of expert performance and do not account for workload directly.
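The idea that time accumulates while items wait for a busy processor can be shown with a deliberately simplified sketch: a single first-in, first-out server. QN-MHP itself is a network of perceptual, cognitive, and motor servers, so this is only an illustration of the queuing principle; the arrival and service times are hypothetical.

```python
def fifo_waits(arrivals, service_times):
    """Return each item's waiting time at a single first-in, first-out server.

    arrivals      -- arrival times in seconds, in non-decreasing order
    service_times -- processing time in seconds for each item
    """
    waits = []
    server_free_at = 0.0
    for arrive, service in zip(arrivals, service_times):
        start = max(arrive, server_free_at)  # wait if the server is still busy
        waits.append(start - arrive)
        server_free_at = start + service
    return waits


if __name__ == "__main__":
    # Three items arrive in quick succession, a fourth arrives after the queue clears.
    arrivals = [0.0, 0.1, 0.2, 1.0]
    service = [0.3, 0.3, 0.3, 0.3]
    for t, w in zip(arrivals, fifo_waits(arrivals, service)):
        print(f"arrived at {t:.1f} s, waited {w:.2f} s")
```

Items that arrive while the server is busy accumulate waiting time, which is the kind of quantity a queuing-based model can read as a sign of competition for limited processing resources.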

For every task, HPM-NL simulates performance across a virtual population of 30 to 50 participants, using either Monte Carlo methods (Speagle, 2019) or Bayesian sampling (Qian et al., 2003; Rasmussen & Ghahramani, 2003) to account for variability. The results include step-by-step breakdowns, comparisons across models, visual graphs, and tabular summaries. Every output is backed by citations in APA 7th edition style, and the system offers a clear explanation of how each estimate was derived, ensuring complete transparency and traceability. By aligning its outputs with peer-reviewed literature and rigorous modeling logic, HPM-NL provides a scientifically grounded framework for understanding how humans perform tasks under a variety of conditions.
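A virtual population of this kind can be sketched with a simple Monte Carlo loop. The sketch assumes Gaussian operator-level variability with a fixed coefficient of variation, truncated at zero; the source does not specify the sampling distributions HPM-NL uses, and the operator means here are hypothetical placeholders.

```python
import random
import statistics


def simulate_population(operator_means, n_participants=30, cv=0.15, seed=0):
    """Sample one total task time per virtual participant.

    operator_means -- mean duration in seconds of each operator (placeholders)
    cv             -- assumed coefficient of variation for every operator
    seed           -- fixed seed so the run is reproducible
    """
    rng = random.Random(seed)
    totals = []
    for _ in range(n_participants):
        total = 0.0
        for mean in operator_means:
            # Gaussian noise around the mean, truncated so durations stay non-negative.
            total += max(0.0, rng.gauss(mean, cv * mean))
        totals.append(total)
    return totals


if __name__ == "__main__":
    totals = simulate_population([0.30, 0.60, 0.80, 0.70, 0.60])
    print(f"n = {len(totals)}")
    print(f"mean TCT = {statistics.mean(totals):.2f} s")
    print(f"SD       = {statistics.stdev(totals):.2f} s")
```

Fixing the seed makes a given run repeatable, which matters when simulated outputs are compared across models or reported in tables.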

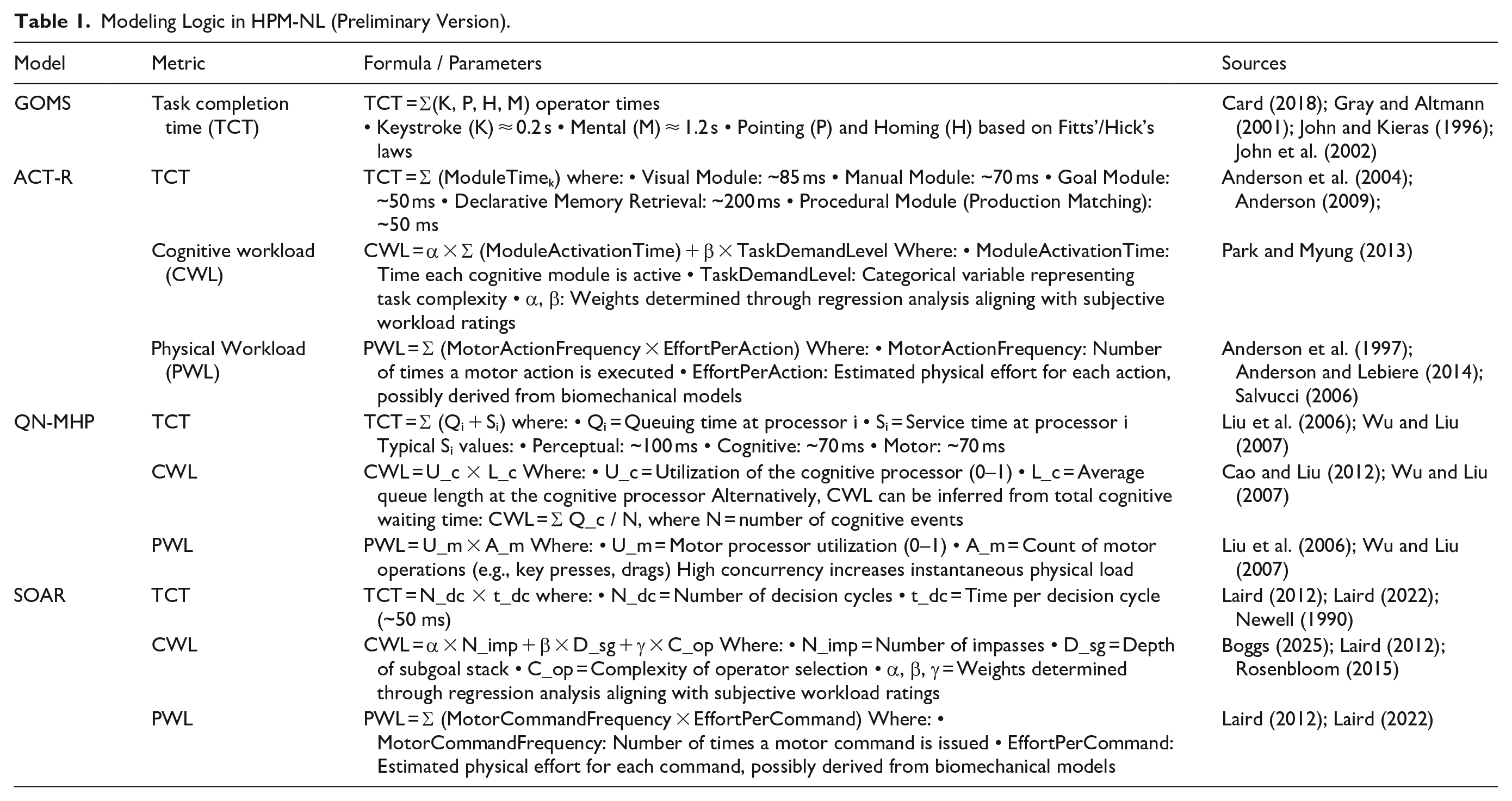

Modeling Logic in HPM-NL (Preliminary Version).

A human subject experiment was conducted in early 2025 to validate the performance of HPM-NL (IRB number: REB25-0361). Validation of HPM-NL was conducted through a between-subjects experimental design comparing two sets of performance outcomes: (1) HPM-NL’s generated TCT predictions (Link) and (2) Cogulator-based TCT predictions (Estes, 2021) (Link) (used only for CPM-GOMS). Twenty-five graduate students (17 male, 8 female; mean age = 25.71 years, SD = 2.45) enrolled in a human factors engineering and user-centered design course (BMEN 631) participated in the validation study, which involved modeling one cycle of the clothespin relocation test (CRT) as performed by users of electromyography (EMG)-based upper-limb prosthetic devices (Park et al., 2023; Park & Zahabi, 2020). All students received training on HPM, Cogulator, and HPM-NL throughout the course to ensure familiarity and consistency in their model-building approaches. We hypothesized that TCT from HPM-NL (CPM-GOMS) would exhibit no significant differences from Cogulator-generated TCT. We did not include plain GPT (4o, o1, or other extensions) as an alternative condition because it generated too many fabricated outcomes.

Result

The modeling logic implemented in HPM-NL for the five HPMs is summarized in Table 1. The five models and papers in Table 1 were chosen primarily based on a recent systematic literature review: Park and Zahabi (2024). HPM-NL is free and can be found and used from the Explore GPTs menu (Link).

A Wilcoxon signed-rank test (Wilcoxon, 1992) was conducted using R to evaluate differences between HPM-NL and Cogulator across four paired observations. Results indicated no statistically significant difference between the two conditions (
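For readers who want to see how a paired comparison of this kind is computed, the following is a minimal sketch of an exact two-sided Wilcoxon signed-rank test written in Python rather than R. It is not the authors' analysis script, and the four pairs of TCT values are hypothetical placeholders, not the study data.

```python
from itertools import product


def signed_rank_exact(x, y):
    """Exact two-sided Wilcoxon signed-rank test for small paired samples.

    Zero differences are dropped and tied absolute differences get average ranks.
    Returns (W+, p), where W+ is the sum of ranks of the positive differences.
    """
    diffs = [a - b for a, b in zip(x, y) if a != b]
    n = len(diffs)
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1

    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    total = sum(ranks)
    observed = min(w_plus, total - w_plus)

    # Enumerate all 2^n sign assignments, which are equally likely under the null.
    extreme = 0
    for signs in product((0, 1), repeat=n):
        w = sum(r for r, s in zip(ranks, signs) if s)
        if min(w, total - w) <= observed:
            extreme += 1
    return w_plus, extreme / 2 ** n


if __name__ == "__main__":
    hpm_nl = [3.10, 2.90, 3.45, 3.00]      # hypothetical TCTs in seconds
    cogulator = [3.00, 3.10, 3.15, 3.05]   # hypothetical TCTs in seconds
    w_plus, p = signed_rank_exact(hpm_nl, cogulator)
    print(f"W+ = {w_plus}, exact two-sided p = {p}")
```

One property of the exact test is worth keeping in mind when reading results based on four pairs: there are only 2^4 = 16 sign assignments, so the smallest attainable two-sided p-value is 2/16 = 0.125.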

Discussion

First, the authors emphasize once again that the current version of HPM-NL is not generalizable, but intended only for preliminary use on a particular task (CRT). The small validation study indicated that the HPM-NL workflow can produce timing estimates that do not differ significantly from the Cogulator benchmark for this task while substantially reducing modelling effort; users obtain estimates in seconds and without specialised software training. This result supports the use of HPM-NL as a rapid first-pass estimator during early design or iterative usability studies.

Beyond this initial empirical result, HPM-NL contributes a new “

In the near future, HPM-NL could offer several potential benefits for the HPM community. By embedding the formal logic of five well-established frameworks behind a natural-language interface, it reduces the steep learning curve that often limits the practical uptake of model-based evaluation. Clinicians and engineers can describe a task in everyday language and immediately receive transparent, fully referenced estimates of TCT and workload. Because every numeric output is tied to a peer-reviewed source and generated under a strict hallucination-minimisation policy, the tool retains scientific traceability while delivering the rapid turnaround that LLM pipelines make possible. Its free availability through the “Explore GPTs” catalogue further lowers barriers to entry and provides an easily deployable teaching and prototyping platform. Additionally, HPM-NL supports on-demand visualization of model components, giving users immediate feedback regarding potential bottlenecks or conflicts within the model. The broad reliance on established human factors literature undergirds these features with both theoretical consistency and empirical credibility. Lastly, speed and accessibility are further merits of HPM-NL. Previously, modelers reported spending substantially more time aligning model assumptions and searching for relevant parameters, whereas HPM-NL required only brief textual prompts and provided ready-to-use output in under a minute per task. Coupled with its free availability and the absence of proprietary software requirements, this efficiency makes HPM-NL a practical and cost-effective option for clinicians, engineers, and researchers.

While HPM-NL is delivered through an LLM interface, it does not rely on distributional text regularities alone. Every numeric prediction is produced after the LLM (Step 1) retrieves empirically reported constants from 30 years of prosthesis and HPM literature and (Step 2) instantiates the explicit causal algorithms of five classical frameworks. Consequently, the path from prompt to output can be traced from the user’s natural-language task description, through model-specific equations (e.g., ACT-R module cycle times [Anderson & Lebiere, 2014] or QN-MHP queue-service dynamics [Liu et al., 2006]), to the final TCT or workload estimate. Because the intermediate values and their sources are exposed in the output pane, clinicians and engineers can audit or override any assumption, providing a level of transparency comparable to hand-built CPM-GOMS spreadsheets yet orders of magnitude faster.

We nevertheless acknowledge that the generative layer can introduce bias when the input scenario strays beyond the CRT task. To guard against this, our roadmap adopts a hybrid validation strategy that alternates between analytic prediction and empirical calibration. Future work will (i) benchmark HPM-NL against observed TCT and NASA-TLX scores collected from able-bodied and prosthesis users across multi-step, multitasking protocols, (ii) quantify agreement using root-mean-square error and Bland-Altman analysis, and (iii) expose model provenance by listing the specific operators and parameters that most strongly influenced each prediction. By combining token-level traceability with established explanatory constructs—goal stacks in SOAR or processor-queue lengths in QN-MHP—we aim to deliver interpretations that are meaningful to both cognitive scientists and design practitioners while avoiding the “black-box” critiques often levelled at LLMs (Park et al., 2020; Park & Zahabi, 2024).
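The two agreement measures named in this roadmap can be sketched briefly. The code below assumes the conventional 95% limits of agreement (bias ± 1.96 SD of the paired differences); the predicted and observed TCT values are hypothetical placeholders, since no human-subject data are reported here.

```python
import math
import statistics


def rmse(predicted, observed):
    """Root-mean-square error between paired predictions and observations."""
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared) / len(squared))


def bland_altman(predicted, observed):
    """Return (bias, lower limit, upper limit) of agreement for paired values.

    Bias is the mean of (predicted - observed); the limits are
    bias -/+ 1.96 * sample SD of the differences.
    """
    diffs = [p - o for p, o in zip(predicted, observed)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


if __name__ == "__main__":
    predicted = [3.1, 2.9, 3.4, 3.0, 3.3]  # hypothetical model TCTs in seconds
    observed = [3.4, 3.0, 3.9, 3.1, 3.2]   # hypothetical measured TCTs in seconds
    bias, lower, upper = bland_altman(predicted, observed)
    print(f"RMSE = {rmse(predicted, observed):.3f} s")
    print(f"bias = {bias:.3f} s, limits of agreement = [{lower:.3f}, {upper:.3f}] s")
```

RMSE summarizes the overall size of the prediction error, while the Bland-Altman bias and limits show whether the model systematically over- or under-predicts and how widely individual predictions scatter around that bias.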

Practically, we advise treating HPM-NL as a rapid, first-pass hypothesis generator situated within a progressive-fidelity workflow (Wickens et al., 2021). Early in design, its citation-anchored outputs can highlight potential performance bottlenecks within seconds; subsequent high-fidelity simulations or instrumented user tests should then confirm or refine these estimates. In this layered role, the tool complements rather than replaces traditional HPM practice, balancing the accessibility benefits of LLM technology with the empirical rigour demanded in safety-critical healthcare applications.

In addition to the issues above, several limitations must be acknowledged.

First, we should further validate that each computational logic (other than GOMS) in Table 1 is well established and functions as described in the original references. The original and recent modelers of each of the five HPMs should be involved and invited to comment on them. Second, we did not test how HPM-NL’s outputs vary with different query styles. Future studies need to standardize query styles to stabilize the results. Third, the study’s validation was limited to a single, highly structured task (the clothespin relocation test) and focused solely on TCT. Other important performance dimensions such as cognitive workload (CWL), multitasking, or fatigue effects were not assessed, and HPM-NL’s utility in modeling complex, real-world tasks remains to be demonstrated. Fourth, the participant pool, which did not include actual prosthesis users, constrains the generalizability of the findings, particularly for assistive technology design contexts where user variability is critical.

Another limitation arises from the technical reliance on a proprietary LLM, specifically a fixed version of ChatGPT (o1 pro). This dependency introduces a risk of version drift, where future changes in the model’s architecture or behavior could affect HPM-NL’s outputs and compromise reproducibility. Additionally, although HPM-NL enforces strict citation traceability, its automated literature-mining and parameter extraction processes may still propagate errors or inherit biases from the source materials, potentially affecting the reliability of its predictions.

The study also lacks a baseline comparison with general-purpose GPT prompting. Without this comparison, it is difficult to isolate the added value of HPM-NL’s curated modeling framework over ad-hoc use of LLMs for performance prediction. Furthermore, since the study participants had prior exposure to HPM-NL through classroom training, expectation effects may have influenced how they used the tool or interpreted its outputs, possibly biasing convergence with Cogulator.

Lastly, while hallucination mitigation strategies were applied, the risk of subtle inaccuracies remains inherent to any LLM-based system. Continued validation against empirical human-subject data, alongside community peer review, will be necessary to ensure the robustness and long-term credibility of HPM-NL in applied human performance modeling.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Funding for this research was provided by 2025 Schulich Momentum Fund (University of Calgary). The views and opinions expressed are those of the authors.