Abstract

As human-agent teaming (HAT) research continues to proliferate, it becomes more difficult for both HAT researchers and agent developers to stay abreast of the literature. To address this, we developed an analysis of alternatives tool for the Contextual Labeling of Analytical Information into Relevant Valuable Options to Yield Alternatives for Novel Techniques (CLAIRVOYANT). Powered by a multiple criteria decision analysis (MCDA) framework, CLAIRVOYANT aids hypothesis generation and experimental design by displaying summarized relationships observed in the HAT literature according to user needs. After iteratively designing literature-informed frameworks, generating an initial literature database, and instantiating the MCDA, we conducted a validation study that demonstrated CLAIRVOYANT’s potential. Current limitations and future directions are discussed.


Introduction

Human-agent teams (HATs), in which people and technological agents interact to accomplish feats surpassing what either can achieve alone, are on the horizon. The advent of HATs stems from joint advances in agent design paradigms (e.g., large language models) and empirical research on the socio-cognitive dynamics of human-agent interaction. However, the multidisciplinary expanse of the literature increasingly impedes researchers from using consistent terminology for key HAT constructs, identifying empirical gaps, and creating informed experimental designs. To illustrate, a recent literature review (Hidalgo et al., 2023) identified over 150 HAT measures and 130 experimental manipulations across 110 empirical HAT studies.

Analysis of alternatives tools have been successfully employed to support decision making in the military, healthcare, and business sectors to address similar concerns (Hofstetter et al., 2002). Ironically, despite being the subject of some HAT research (Wang, 2016), such tools have yet to be harnessed to break down disciplinary silos within the HAT research community. To fill this gap, we developed a tool for the Contextual Labeling of Analytical Information into Relevant Valuable Options to Yield Alternatives for Novel Techniques (CLAIRVOYANT).

CLAIRVOYANT is an open-source analysis of alternatives tool that integrates empirical findings reported in the HAT literature. Our goal was to provide robust support for hypothesis generation, gap identification, and design decisions in HAT experiments and agent development efforts. This involved three objectives. First, we documented studies from each construct category in our manipulation and measure frameworks (Hidalgo et al., 2023) to capture as comprehensive a representation of HAT constructs as possible. Second, using the data entered under the first objective, we aimed to develop a multiple criteria decision analysis (MCDA) database module that systematically accommodates the various statistical analysis techniques used in HAT studies. Doing so enables comparisons across studies, so that users are presented with a summary of the research landscape tailored to their needs. Third, we aimed to test and evaluate the utility of CLAIRVOYANT by comparing the tool’s predictions against empirical outcomes from the most recent study of the now-concluded DARPA Artificial Social Intelligence for Successful Teams (ASIST) program. In the sections below, we describe our approach to these objectives and discuss the tool’s viability and future directions.

Method

Literature Search

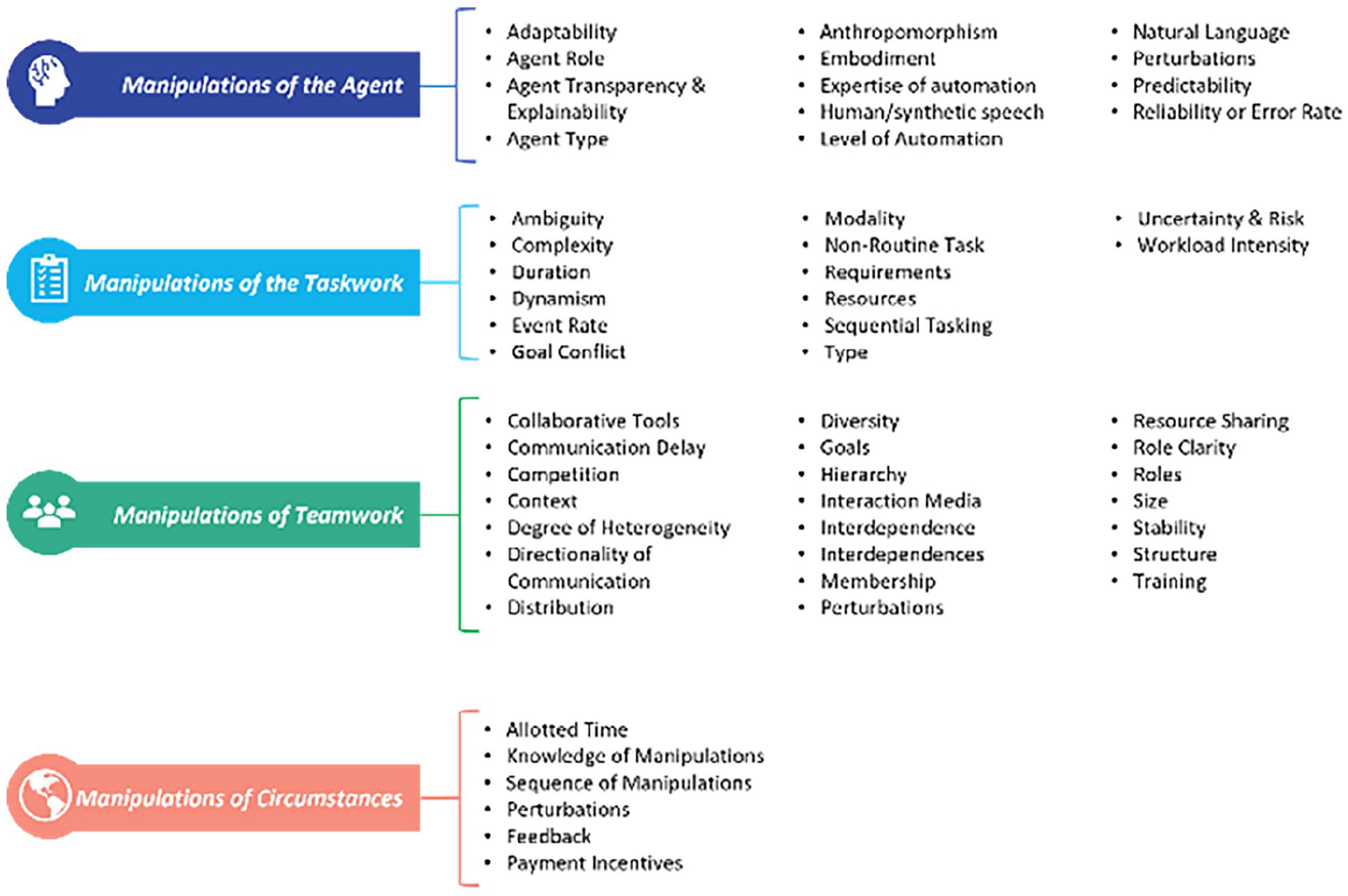

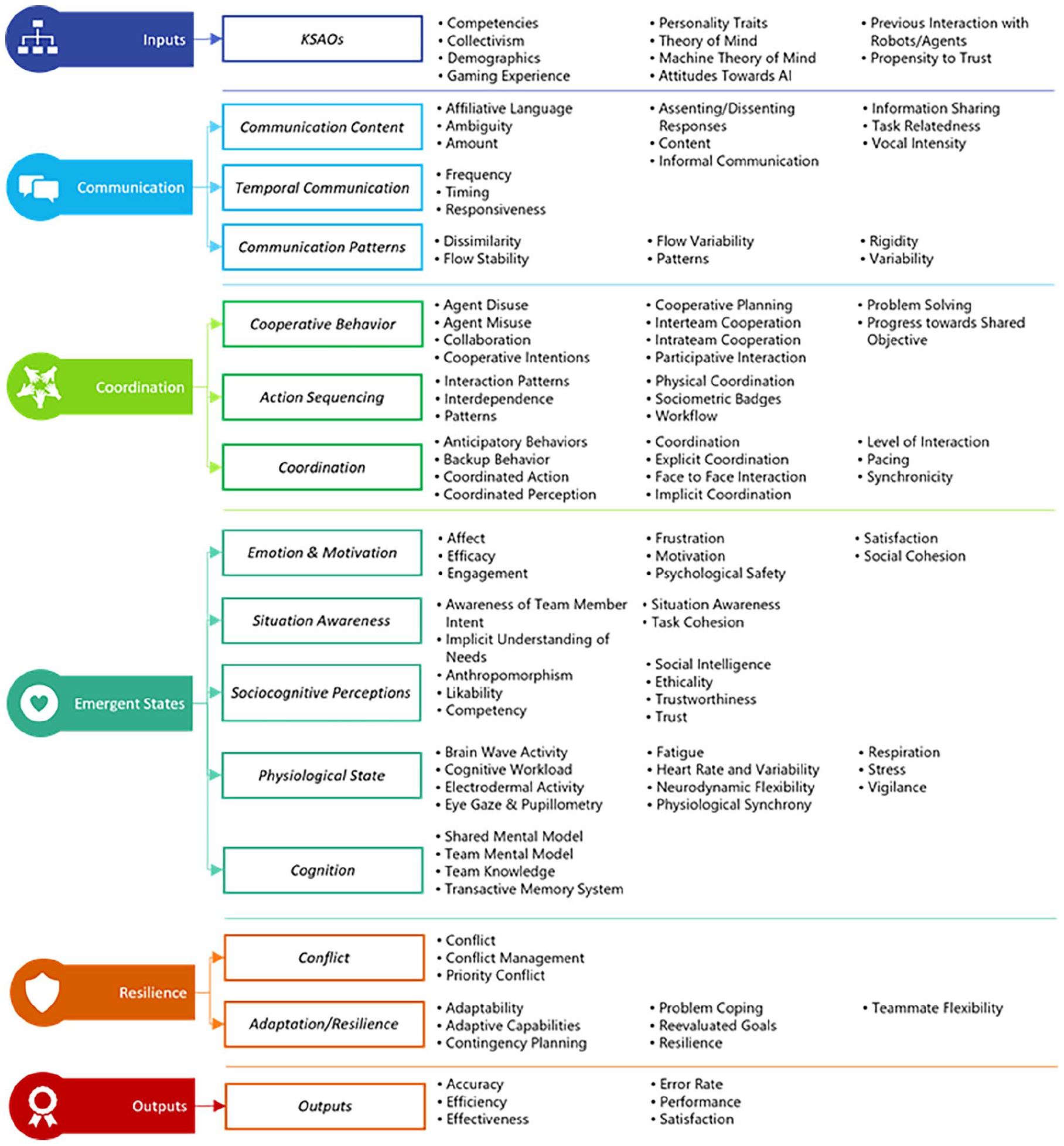

Development of this tool began with a broad sweep of the HAT and organizational team literatures to produce a comprehensive list of studied measures and manipulations. First, all members of the research team went through an iterative sorting process, categorizing constructs from empirical HAT studies to create frameworks for HAT manipulations and measures (see Figures 1 and 2, respectively). The framework categories were initially synthesized from the researchers’ combined expertise and then adjusted based on feedback from peers and early research dissemination (e.g., conference presentations; Hidalgo et al., 2023).

Figure 1. Human-agent teaming manipulation framework.

Figure 2. Human-agent teaming measure framework.

These frameworks then guided the data entry effort, which prioritized covering at least one instance of every category in both frameworks (i.e., the entered datapoints cover all four manipulation categories and all 15 measure categories). We then developed inclusion criteria to select studies for entry: only studies with (a) experimental manipulations, (b) measured outcomes, (c) human-agent interaction, and (d) reported statistics were included. Next, we identified studies meeting these criteria for each manipulation and measure category. For each study, we entered manipulation attributes (e.g., name, conditions), measure attributes (e.g., name, type), and statistics (e.g., sample size, test type, and test statistics) into the database. This effort yielded an initial database of 55 datapoints reflecting experimentally studied relationships between HAT manipulations and measures.
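A minimal Python sketch of how one datapoint and the four inclusion criteria might be represented is shown below. The `Datapoint` class, its field names, and the `meets_inclusion_criteria` and `uncovered_categories` helpers are hypothetical illustrations of the attributes listed above, not CLAIRVOYANT’s actual schema. Treating an experimental manipulation as requiring at least two conditions is likewise an assumption.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Set


@dataclass
class Datapoint:
    """One manipulation-measure result from one study (hypothetical schema)."""
    study_id: str
    manipulation_name: str
    manipulation_category: str          # one of the 4 manipulation framework categories
    conditions: List[str]               # e.g., the levels of the manipulation
    measure_name: str
    measure_type: str
    measure_category: str               # one of the 15 measure framework categories
    has_human_agent_interaction: bool
    sample_size: Optional[int] = None
    test_type: Optional[str] = None     # e.g., "t", "F", "r"
    test_statistic: Optional[float] = None


def meets_inclusion_criteria(dp: Datapoint) -> bool:
    """Apply criteria (a)-(d): manipulation, measured outcome, HAI, reported statistics."""
    # Assumption: a manipulation needs at least two conditions to compare.
    has_manipulation = bool(dp.manipulation_name) and len(dp.conditions) >= 2
    has_measure = bool(dp.measure_name)
    has_stats = (dp.sample_size is not None
                 and dp.test_type is not None
                 and dp.test_statistic is not None)
    return has_manipulation and has_measure and dp.has_human_agent_interaction and has_stats


def uncovered_categories(datapoints: Iterable[Datapoint],
                         all_categories: Iterable[str],
                         field: str) -> Set[str]:
    """Return framework categories (for the given field) that no datapoint covers yet."""
    covered = {getattr(dp, field) for dp in datapoints}
    return set(all_categories) - covered
```

Calling `uncovered_categories(db, categories, "measure_category")` during entry would flag which of the measure categories still lack a datapoint, mirroring the coverage priority described above.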

Tool Design

Back End: Multiple Criteria Decision Analysis

With the study statistics from the 55 datapoints entered into the database, we implemented an MCDA that converts the reported statistics into a common standardized effect size (Cohen’s d). Because all results are expressed in one metric, CLAIRVOYANT can meaningfully compare effects reported across different HAT studies and summarize insights about the studied relationships. In short, the MCDA translates study results into comparable effect sizes, which allows the tool to display meaningful results to the user (i.e., which manipulations and measures are most strongly related; see Supplemental Appendix B for user interface mockups).
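The exact conversion formulas used by the MCDA are not reported here, so the following is only a sketch using standard meta-analytic conversions to Cohen’s d for the three elementary test types the tool currently supports. The function names and the `"t"`/`"F"`/`"r"` labels are hypothetical. The F conversion assumes a two-group comparison (numerator df = 1), and the r conversion assumes roughly equal group sizes.

```python
import math
from typing import Optional


def d_from_t(t: float, n1: int, n2: int) -> float:
    """Independent-samples t statistic -> Cohen's d: d = t * sqrt(1/n1 + 1/n2)."""
    return t * math.sqrt(1 / n1 + 1 / n2)


def d_from_f(f: float, n1: int, n2: int) -> float:
    """Two-group one-way ANOVA (numerator df = 1), where F = t**2.

    Only the magnitude is recoverable; the sign must come from the reported means.
    """
    if f < 0:
        raise ValueError("F cannot be negative")
    return d_from_t(math.sqrt(f), n1, n2)


def d_from_r(r: float) -> float:
    """Pearson or point-biserial r -> d = 2r / sqrt(1 - r^2) (assumes equal group sizes)."""
    if not -1 < r < 1:
        raise ValueError("r must lie strictly between -1 and 1")
    return 2 * r / math.sqrt(1 - r ** 2)


def to_cohens_d(test_type: str, value: float,
                n1: Optional[int] = None, n2: Optional[int] = None) -> float:
    """Dispatch on the reported test type (hypothetical labels 't', 'F', 'r')."""
    if test_type in ("t", "F"):
        if n1 is None or n2 is None:
            raise ValueError("group sizes are required for t and F conversions")
        return d_from_t(value, n1, n2) if test_type == "t" else d_from_f(value, n1, n2)
    if test_type == "r":
        return d_from_r(value)
    raise ValueError(f"unsupported test type: {test_type!r}")
```

Routing every datapoint through a single dispatcher like this is one way to keep the conversion logic in one place as new statistical techniques are added.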

Additionally, the MCDA can weight study characteristics to provide users with a prioritized list of studies based on their interests. Currently, the MCDA orders study results according to the weights users place on sample size, relationship strength, and the number of studies in which a relationship is observed. Taken together, the MCDA serves as the cornerstone of CLAIRVOYANT: it provides a standardized effect size for comparing relationships and presents those relationships according to the user’s priorities.
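The text does not name the specific MCDA method, so the sketch below assumes simple additive weighting, a common MCDA technique: each of the three criteria is min-max normalized to [0, 1], and relationships are ranked by the weighted sum. The `Relationship` class, the use of mean absolute d as "relationship strength", and `rank_relationships` are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass
class Relationship:
    pair: Tuple[str, str]      # (manipulation, measure)
    total_sample_size: int
    mean_abs_d: float          # assumed proxy for relationship strength
    n_studies: int


def _min_max(values: Sequence[float]) -> List[float]:
    """Scale values to [0, 1]; if all are equal, give every item full credit."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [1.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def rank_relationships(rels: List[Relationship], w_sample: float,
                       w_strength: float, w_count: float) -> List[Tuple[float, Relationship]]:
    """Simple additive weighting: normalize each criterion, then sort by weighted sum."""
    if not rels:
        return []
    total = w_sample + w_strength + w_count
    if total <= 0:
        raise ValueError("at least one weight must be positive")
    ws, wd, wc = w_sample / total, w_strength / total, w_count / total
    ns = _min_max([r.total_sample_size for r in rels])
    nd = _min_max([r.mean_abs_d for r in rels])
    nc = _min_max([r.n_studies for r in rels])
    scored = [(ws * a + wd * b + wc * c, r) for a, b, c, r in zip(ns, nd, nc, rels)]
    scored.sort(key=lambda item: item[0], reverse=True)  # highest priority first
    return scored
```

Normalizing before weighting keeps a criterion with large raw values (sample size) from swamping the others, so the user’s weights alone determine the trade-off.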

Front End: User Interface Design

On the user side, CLAIRVOYANT is accessed through an open-source software application hosted on the ASIST website. Users extract information from the database through a search query interface, selecting clickable icons for the manipulations, measures, and relevant study details they are interested in spotlighting (see Supplemental Appendix A for interface mockups). The search results show a comprehensive list of measure-manipulation pairs, including the number of datapoints for each pair (i.e., the number of experiments containing it) and box-and-whisker plots of their respective effect sizes. Further details about the underlying studies can be accessed by clicking the Details icon. With this application, users can observe three facets of the HAT literature: (a) how many and which manipulations have been implemented, (b) the measures affected by each manipulation, and (c) the strength of the relationship (i.e., effect size) for each measure-manipulation pair.
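The per-pair summary behind such a results list can be sketched as grouping datapoints by (manipulation, measure) and computing the count plus the five numbers a box-and-whisker plot needs. The `summarize_pairs` function and its tuple input format are hypothetical, and the choice of the "inclusive" quartile method is an assumption; pairs with a single datapoint are handled separately because older Python versions reject one-element input to `statistics.quantiles`.

```python
import statistics
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def summarize_pairs(
    datapoints: Iterable[Tuple[str, str, float]],
) -> Dict[Tuple[str, str], Dict[str, float]]:
    """Group (manipulation, measure, cohens_d) tuples and summarize each pair."""
    groups = defaultdict(list)
    for manipulation, measure, d in datapoints:
        groups[(manipulation, measure)].append(d)

    summary = {}
    for pair, ds in groups.items():
        ds = sorted(ds)
        if len(ds) == 1:
            # A single datapoint collapses the box plot to one value.
            q1 = median = q3 = ds[0]
        else:
            q1, median, q3 = statistics.quantiles(ds, n=4, method="inclusive")
        summary[pair] = {
            "n": len(ds),          # number of experiments containing this pair
            "min": ds[0],
            "q1": q1,
            "median": median,
            "q3": q3,
            "max": ds[-1],
        }
    return summary
```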

Validation Study

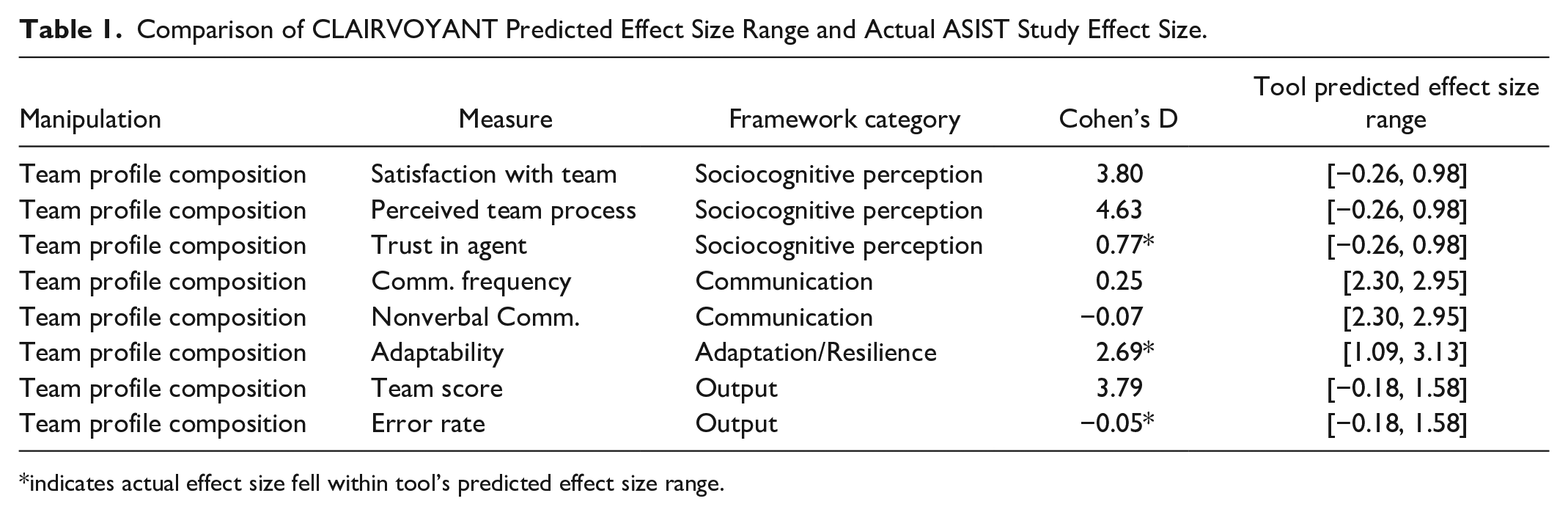

To examine the accuracy of CLAIRVOYANT’s predictions, we conducted a validation study based on the initial set of 55 datapoints. We identified the most recent ASIST study as a suitable dataset for testing CLAIRVOYANT’s predictive ability. Because the experiment included HAT manipulations and measures but its results had not yet been formally published, it offered a highly relevant dataset with no established findings that could already have been included in CLAIRVOYANT’s initial database. After examining the dataset, we selected one manipulation (team profile composition) and several measures (see Table 1), then ran predictions in CLAIRVOYANT by selecting the corresponding categories in the manipulation framework (Manipulations of Teamwork) and the measure framework (Sociocognitive Responses, Communication, Adaptation/Resilience, Outputs).

Table 1. Comparison of CLAIRVOYANT Predicted Effect Size Range and Actual ASIST Study Effect Size.

Note. A marked entry indicates that the actual effect size fell within the tool’s predicted effect size range.

After obtaining predicted effect size ranges from CLAIRVOYANT, we conducted linear regressions to calculate effect sizes for the relationships between team profile characteristics and the selected measures. Table 1 displays the results of the validation study. Of the observed effect sizes, three manipulation-measure pairings (trust, adaptability, and error rate) fell within the predicted range, while the remaining pairings fell outside it. In the following section, we discuss limitations of the tool in its current state that might account for these discrepancies.
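How the predicted range is derived is not specified; one plausible reading, assumed here, is that it spans the minimum to maximum Cohen’s d among the tool’s datapoints for a pair, and that the observed regression results have already been expressed as d. Under those assumptions, the validation check reduces to an interval containment test, sketched below with hypothetical function names. The sketch also makes one consequence of sparse data concrete: a pair backed by a single datapoint yields a zero-width range, so only an exact match would count as a hit.

```python
from typing import Dict, List, Tuple


def predicted_range(tool_ds: List[float]) -> Tuple[float, float]:
    """Assumed min-max range of the tool's effect sizes for one pair."""
    if not tool_ds:
        raise ValueError("no datapoints for this manipulation-measure pair")
    return min(tool_ds), max(tool_ds)


def validate(results: Dict[str, Tuple[float, List[float]]]) -> Tuple[Dict[str, bool], float]:
    """Map measure -> (observed d, tool's d values) to per-measure hits and a hit rate."""
    hits = {}
    for measure, (observed, tool_ds) in results.items():
        lo, hi = predicted_range(tool_ds)
        hits[measure] = lo <= observed <= hi   # inclusive containment check
    rate = sum(hits.values()) / len(hits) if hits else 0.0
    return hits, rate
```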

Discussion

Limitations

CLAIRVOYANT shows potential as a decision support system for HAT research and design. At present, it generates meta-analytic predictions to support hypothesis generation and gap identification, and initial testing suggests that its predictions can be accurate when it is supplied with sufficient datapoints. However, CLAIRVOYANT has two notable limitations in its present state. First, it is currently limited to predicting outcomes from traditional hypothesis-testing research designs that use elementary inferential statistics (i.e., t-tests, ANOVAs, regressions). Further work is needed to incorporate the newer analytical methods increasingly used in HAT research (e.g., recurrence quantification analyses, Eloy et al., 2023; Bayesian analyses, Guo & Yang, 2021).

Second, as the validation study showed, CLAIRVOYANT’s predictions depend on the quality and completeness of the statistical data reported in published empirical studies. The accuracy of the tool’s predictions appeared to increase with the number of datapoints it could draw upon. Although we prioritized coverage across manipulation and measure categories, the HAT literature is abundant with studies examining trust (Glikson & Woolley, 2020), adaptability (Zhao et al., 2022), and performance (O’Neill et al., 2022). Consequently, we obtained 4 to 6 datapoints for trust and performance, compared with 1 to 2 datapoints for most other measures. Divergent data reporting practices in the literature, together with resource limitations, thus restricted the number of studies we could include in this initial validation. While several predicted effect size ranges were inaccurate, the validation results also suggest that the tool can provide an accurate prediction range when supplied with enough datapoints (e.g., trust, adaptability, and performance).

Future Directions

Because CLAIRVOYANT is intended to support all types of HAT-related users, we hope to increase its usability and compatibility across use cases beyond academia (e.g., helping practitioners identify priority capabilities for agent teammates and helping agent developers test agent capabilities). We envision the long-term administration and maintenance of CLAIRVOYANT following the open science frameworks of HAT research paradigms, building on the success of similar efforts (e.g., the DARPA ASIST program). Achieving this will require active input from HAT communities to further develop the tool, including identifying ways to harness AI advancements to empower research and development efforts in the long run.

Additionally, to address limitations concerning the number of datapoints, we envision leveraging current advances in AI to assist with high-volume data entry and reporting. Automating the literature search and data entry processes would alleviate the resource limitations of completing these tasks manually and yield more datapoints for a more representative and comprehensive reflection of the HAT literature. Adding to the viability of this endeavor, the existing database could serve as a training dataset to guide these automated processes toward more accurate study retrieval and entry.

Ultimately, CLAIRVOYANT serves as an initial step toward helping all HAT-related researchers and developers push the envelope when it comes to designing human-agent teams and the ways they are studied and implemented.

Supplemental Material

sj-docx-1-pro-10.1177_10711813241279793 – Supplemental material for Development of an Analysis of Alternatives Tool for Human-Agent Teaming Research

Supplemental material, sj-docx-1-pro-10.1177_10711813241279793 for Development of an Analysis of Alternatives Tool for Human-Agent Teaming Research by Daniel Nguyen, Myke C. Cohen, Summer Rebensky, Ramisha Knight, Cherrise Ficke, Lauren Fortier and Brent Fegley in Proceedings of the Human Factors and Ergonomics Society Annual Meeting

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research reported in this document was performed in connection with contract number W912CG-22-C-0001 with the U.S. Army Contracting Command - Aberdeen Proving Ground (ACC-APG) and the Defense Advanced Research Projects Agency (DARPA). The views and conclusions contained in this document/presentation are those of the authors and should not be interpreted as presenting the official policies or position, either expressed or implied, of ACC-APG, DARPA, or the U.S. Government unless so designated by other authorized documents. Citation of manufacturer or trade names does not constitute an official endorsement or approval of the use thereof. The U.S. Government is authorized to reproduce and distribute reprints for Government purposes notwithstanding any copyright notation hereon. Distribution Statement “A” (Approved for Public Release, Distribution Unlimited).


References
