Abstract

As advancements in artificial intelligence accelerate, the complexity and number of autonomous agents placed in human-agent teams (HATs) are rising. With this expansion, it is important to understand how trust in agent teammates evolves and is influenced by contextual events. In support of this, significant research has focused on the factors that influence human trust in an agent and the elements that negatively impact this trust. In this research, human trust in agent teammates is typically measured via self-report surveys. Although a reliable format, surveys are not without limitations, as they can be disruptive and lack the temporal resolution needed to capture nuanced and dynamic changes in trust. As a result, researchers are adopting alternative approaches that aim to unobtrusively capture correlates of trust using behavioral and physiological measures such as voice pitch and eye tracking. However, most of these studies utilize only one unobtrusive measure, which can be indicative of various states (e.g., heart rate varies with psychological and physical responses). Given the lack of selectivity in such measures, it may be necessary to utilize multiple unobtrusive measures at once, such as behavioral indicators recorded in real time, to effectively capture changes that are due to changes in trust over time. This paper investigated the following research questions: (1) do multiple unobtrusive indicators provide a more holistic picture of trust compared to a single behavioral indicator, and (2) are certain behavioral indicators more indicative of self-reported trust at different times during a mission (e.g., before/after a trust violation event)? Results indicated that following a significant event, changes in self-reported trust are associated with specific behaviors aimed at correcting or mitigating perceived deficiencies in agent teammates.
These insights highlight the opportunity to integrate dynamic behavioral measures in trust assessment frameworks, specifically in scenarios where a significant event compromises trust.

Introduction

Through rapidly evolving technological advancements, human-agent teams (HATs) are becoming commonplace forces that operate across multiple domains and continue to expand into more dynamic and complex environments. Previous research suggests that understanding the role of trust in these teams is a key component of effective team coordination and communication (Ficke et al., 2022; Kohn et al., 2020; Yu et al., 2019). Therefore, understanding how best to capture the trust dynamics among HATs is critical.

Trust in HATs is typically measured using self-report surveys due to their ease and availability of use. However, depending on the context and environment, self-report surveys may be more disruptive to the task and lack the temporal resolution required to capture real-time dynamic changes in trust (Carmody et al., 2022; Ficke et al., 2022; Hancock et al., 2011; Kennedy & Hidalgo, 2021; Kox et al., 2021; Nguyen et al., 2022). As a result, researchers are implementing surrogate approaches to measure constructs in a more unobtrusive fashion. This includes employing physiological sensors (Bales & Kong, 2017), analyzing voice pitch (Elkins & Derrick, 2013), observing eye-tracking patterns (Lu & Sarter, 2020; Wright et al., 2014), and studying context-specific behavioral patterns (Ficke et al., 2022). However, it is common practice to examine single indicators independently, which may not be indicative of trust alone and may not be capable of capturing the construct in its entirety. Therefore, the original aim of this effort was to identify a set of unobtrusive measures that, when examined together, would allow us to gain a more comprehensive picture of trust as it dynamically unfolds over time during HAT missions. Utilizing Orvis et al.’s (2013) Rational Approach to Developing Systems-based Measures (RADSM), we implemented behavioral measures conceptualized to indicate dynamic changes in trust among HATs. For a description of this measurement development approach and resulting measures, please see Ficke et al. (2022). We then captured these measures in conjunction with self-report measures to examine how predictive they were of self-reported trust.

However, in examining the data, we realized that this approach posed several challenges that have not been considered in the current literature on unobtrusive indicators of trust in HATs, particularly regarding time and how different trust measures are connected to time. For example, the behavioral indicators we examined are dynamic and occur continuously over a period of time, whereas the self-reported measures of trust are static and captured at a single point in time. Therefore, it was unclear which timeframe of behaviors should be compared against which point of self-reported trust. The Theory of Planned Behavior (Ajzen, 1991) suggests that attitudes, such as trust, precede behaviors associated with that attitude, indicating that perhaps associating behaviors with trust measured just prior to those behaviors would be the best approach. However, it is currently unknown whether behaviors are driven by the attitude directly preceding those behaviors in time or by some aggregation of attitudes over a longer period. It is also unclear how long the impact of an attitude on subsequent behavior lasts. Further, Event Systems Theory (EST; Morgeson & Mitchell, 2015) suggests that novel, disruptive, critical events can change or create new behaviors, indicating that events within a scenario may influence the attitude-behavior relationship. As our experimental paradigm attempted to elicit changes in trust with purposefully strong trust violation events, we also had to consider how the timing of the event influences this trust attitude-behavior relationship.

As a result, this effort took on an exploratory approach to examine changes in a set of potential behavioral indicators of human trust in their agent counterpart before and after a trust violation event to determine: (1) if multiple indicators provided a more holistic picture of trust compared to a single behavioral indicator and (2) if certain behavioral indicators were more indicative of self-reported trust at different times in the mission (e.g., before/after a trust violation event). The exploratory aspect was in examining results associated with various time linkages between self-reported trust and behaviors indicative of trust. This effort aims to provide a foundational base for further exploratory research surrounding these questions in the realm of unobtrusive indicators associated with trust among HATs. This will hopefully act as a catalyst for initiating further research into identifying the appropriate timespan, time segments, and behavior combinations that reflect trust and if those specifications should be adjusted based on the environmental context.

Previous Literature

Several studies have examined the integration of multiple unobtrusive indicators that are associated with trust. For example, Evans and Revelle (2008) found that individual differences can be used to study and predict trusting behavior, and behaviors associated with agreeableness, extraversion, and negative neuroticism are more holistically indicative of trust than any singular behavior alone. De Jong and Elfring (2014) conducted a field experiment involving teams working together over several weeks. During this time, trust was investigated through multiple team behaviors such as reflexivity (team discussions on progress), monitoring (checking on team members’ work), and effort (amount of work completed). Results indicated that combining the behaviors led to a robust measure of trust that, overall, positively influenced team performance. These previous experimental studies demonstrate the value of employing multiple behavioral indicators to gain a more precise and representative measure of attitudes such as trust.

Various theoretical frameworks provide a basis for understanding how trust as an attitude is related to associated behaviors. The theory of planned behavior (TPB; Ajzen, 1991) suggests that attitudes precede behaviors. We draw from basic concepts of EST (Morgeson & Mitchell, 2015) and attitude strength (Howe & Krosnick, 2017) to postulate that the strength of an attitude is less pronounced prior to an event and becomes more apparent following an event. We expect that prior to an anchoring event, the connection between a user’s attitude and their corresponding behaviors is weaker due to the lack of specific, concrete events that act as a reinforcer of the attitude-behavior connection. Thus, without a strongly formed attitude, behavior is driven more by the general context than specific attitudes. We expect that significant trust-related events can cause dramatic shifts in attitudes. Events enacted by the trust object, in this case, the collaborating agent, increase the salience and relevance of the attitude toward that object, making it more meaningful to the individual. This shift underscores the importance of events in solidifying the attitude-behavior connection, thereby enhancing the predictability of trust-related behaviors. Together, basic concepts from TPB, EST, and attitude strength suggest an intricate relationship between attitudes, such as trust, and associated behaviors and how the context of events surrounding their relationship may impact it.

There is also research to suggest that the timespan over which some behaviors are indicative of attitudes may vary. For example, Epstein (1983) reasoned that to predict behavior correctly, it is key to aggregate behaviors over time and situations, with certain behaviors emerging as short-term fluctuations, while others may require investigation over a long-term scale. Previous literature has found that some behaviors are event-based, specifically when intervention or interaction is needed to rectify a situation, such as taking over for a team member after a violation or explaining one’s reasoning for a decision (Dirks et al., 2009; Kim et al., 2004). Other behaviors, however, are more accurately assessed over long periods of time, such as monitoring team members and reliability or consistency in action execution. However, both immediate and long-term behaviors are extremely context-specific. Identifying the best frequency and length to assess specific behavioral indicators of attitudes, such as trust, is the next step in forming an accurate, holistic model of the construct in question.

Methods

A simulation study was conducted in which participants completed an approximately one-hour search and rescue mission on a desktop Multi-Agent Team Trust Emergence Research (MATTER) simulation testbed developed by the Air Force Research Lab’s (AFRL) Gaming Research Integration for Learning Laboratory (GRILL). Participants were required to work with four agents to identify targets, including IEDs, civilians, and enemies. All participants experienced a trust violation event from one of the four agents. This research was approved by the Institutional Review Board at the Florida Institute of Technology.

Participants

A total of 70 participants (24 females and 46 males) were recruited through various channels such as social media, personal networks, emails, LinkedIn posts, online forums, and snowball sampling methods. Their ages ranged from 18 to 44 years old. Participants were given three compensation options: (1) a $25.00 Amazon gift card, (2) extra credit points for approved university courses, and (3) SONA credit for approved university courses.

Experimental Design

A between-subjects design was employed, with participants receiving an icebreaker and pre-mission training session that emphasized the fallibility of each of the four agents. Note that this study is part of a larger examination; however, this paper focuses only on conditions in which the participants experienced a violation approximately halfway through the one-hour mission and investigates trust related solely to the agent who violated.

Measures

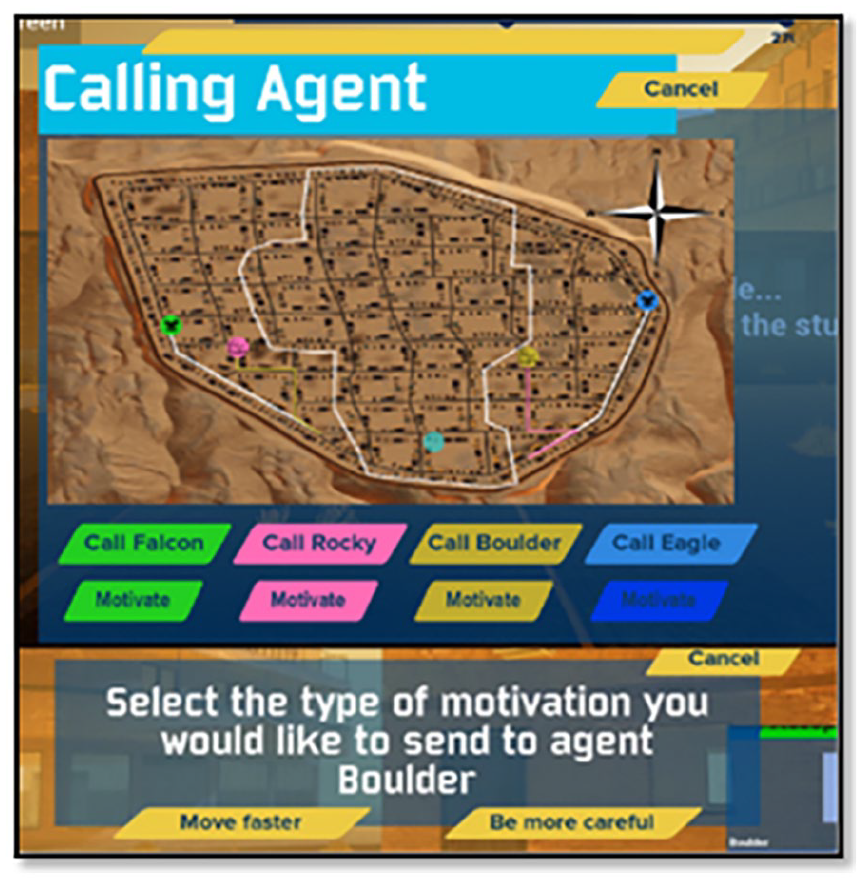

Trust was captured every 10 min throughout the mission via a one-item trust survey measure that asked the participant, “How much do you trust each of the following to do what is expected of them during your mission from this point on?” Participants rated each agent on a 5-point Likert scale (1 = Not at all; 5 = A great deal). Behavioral indicators were also captured, including the number of times participants (1) called each agent for assistance, (2) nudged each agent to move faster using the motivate button (Nudge +), and (3) nudged each agent to slow down and search more carefully using the motivate button (Nudge −; see Figure 1).

Figure 1. Behavioral indicators interface.

Data Analysis

Descriptive statistics and regression analyses were performed to examine the relationship between the behavioral indicators and self-reported trust (1) prior to a trust violation and (2) after a trust violation, specifically for the violating agent.

A series of different time-based regressions were run to account for all possible changes in behaviors surrounding the trust violation. This started with using behaviors within the 10 min directly following a self-reported trust score as predictors, but no significant results were found. Next, behaviors surrounding the trust score, specifically, 5 min directly before and after, were combined to see if they were indicative of trust scores measured in the middle of that time segment, but no significant results were found. Finally, the change in trust scores from one time point to another, rather than the mean at a particular time point, was used as the criterion, and the behavioral indicators within that same time frame were used as the predictors. This resulted in significant relationships that are reported in the following section.
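The three time linkages described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the timestamps, trust ratings, and function names are hypothetical and are not taken from the study's data or analysis scripts.

```python
# Hypothetical data for one participant and one agent (not from the study).
behavior_times = [2.0, 11.5, 13.0, 18.2, 22.4, 27.9]  # minutes into the mission
trust_scores = {10: 4, 20: 4, 30: 2}                   # survey minute -> 1-5 rating


def count_between(times, start, end):
    """Number of behaviors with start <= t < end."""
    return sum(start <= t < end for t in times)


def following_window(times, survey_min, width=10):
    """Linkage 1: behaviors in the `width` minutes after a trust rating."""
    return count_between(times, survey_min, survey_min + width)


def surrounding_window(times, survey_min, half_width=5):
    """Linkage 2: behaviors from `half_width` min before to `half_width` min after."""
    return count_between(times, survey_min - half_width, survey_min + half_width)


def change_score_window(times, scores, t_start, t_end):
    """Linkage 3: change in trust between two surveys, paired with the
    behaviors that occurred between those same two surveys."""
    delta = scores[t_end] - scores[t_start]
    return delta, count_between(times, t_start, t_end)


print(following_window(behavior_times, 10))
print(surrounding_window(behavior_times, 20))
print(change_score_window(behavior_times, trust_scores, 20, 30))
```

In this sketch, only the third linkage pairs a difference score with behaviors from the same interval, which mirrors the specification that produced significant relationships in this study.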

Results

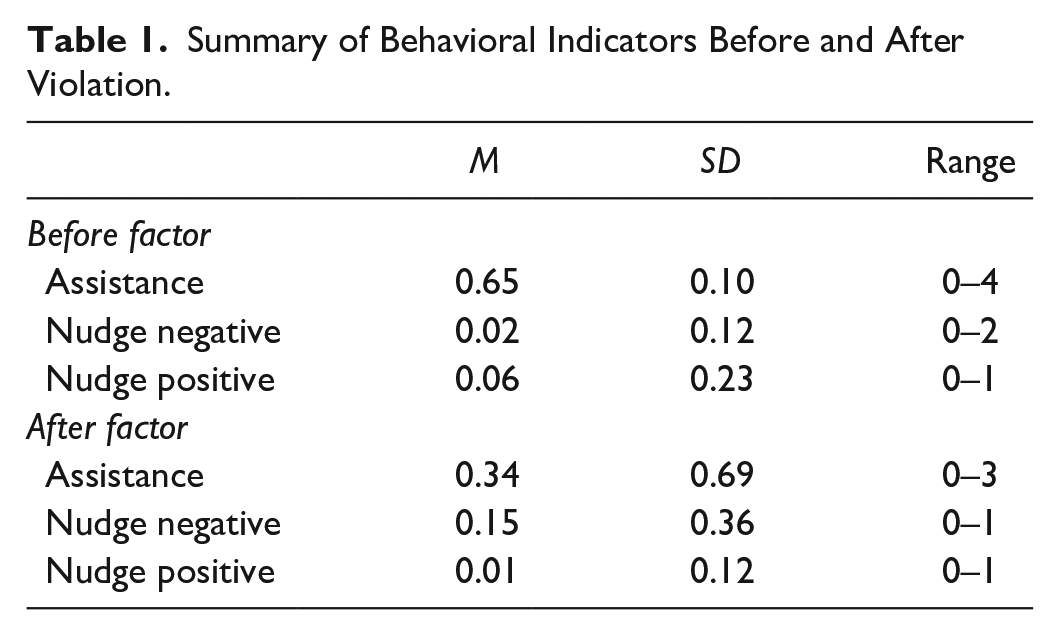

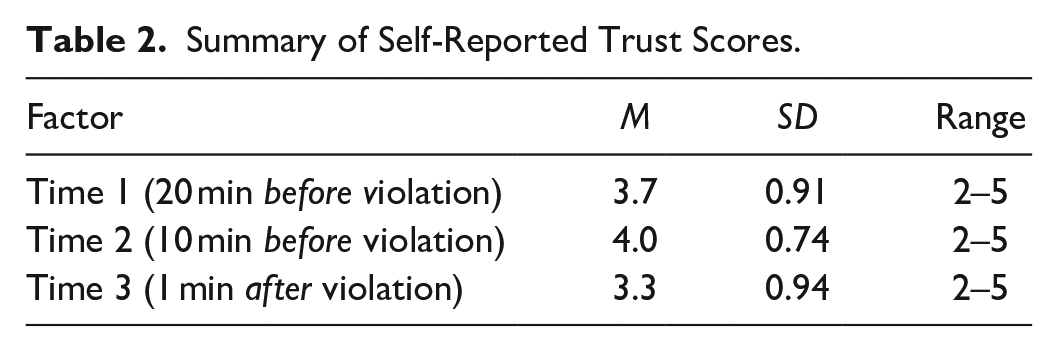

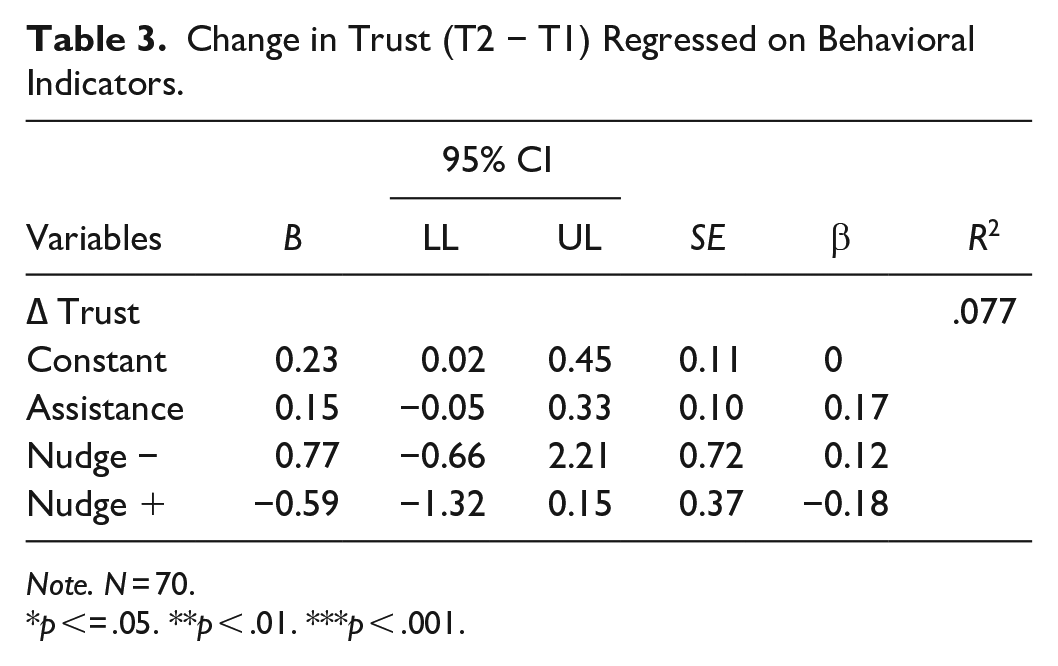

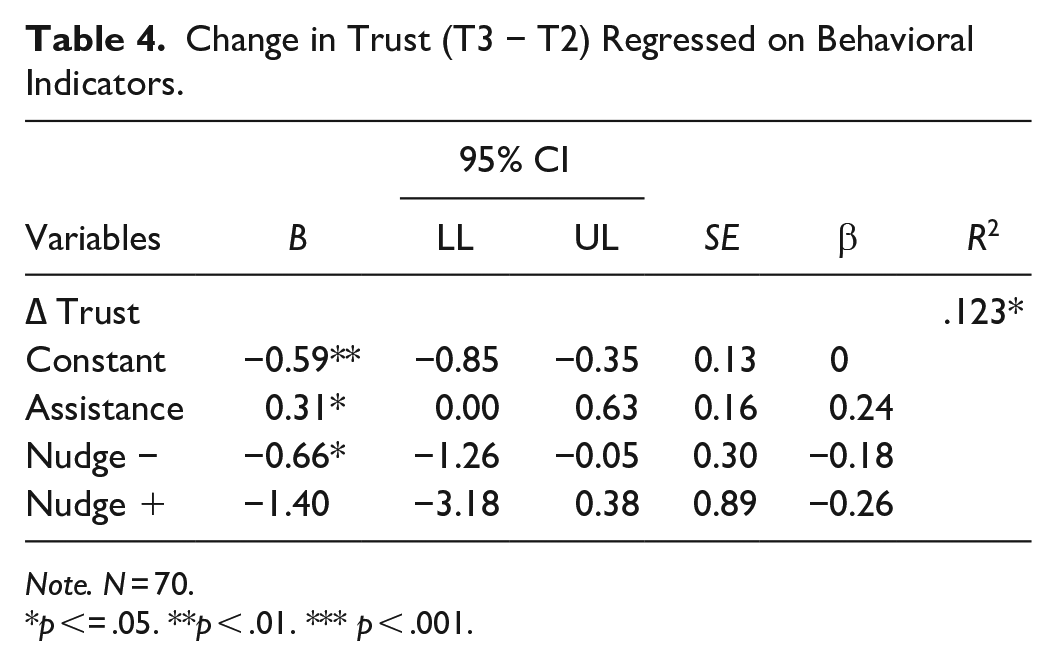

The following section provides the results of the analyses. To provide context, Table 1 summarizes behaviors associated with the 10 min surrounding Time 1 (pre-violation) and the behaviors associated with the 10 min surrounding Time 3 (post-violation). Table 2 summarizes the self-reported trust scores from the three time segments used to obtain a change in trust: the change in trust between Time 1 and Time 2 (pre-violation) and the change in trust between Time 2 and Time 3 (post-violation). These are the two trust variables utilized in the regressions reported in Tables 3 and 4.

Table 1. Summary of Behavioral Indicators Before and After Violation.

Table 2. Summary of Self-Reported Trust Scores.

Table 3. Change in Trust (T2 − T1) Regressed on Behavioral Indicators.

Note. N = 70.

*p ≤ .05. **p < .01. ***p < .001.

Table 4. Change in Trust (T3 − T2) Regressed on Behavioral Indicators.

Note. N = 70.

*p ≤ .05. **p < .01. ***p < .001.

Based on the descriptive statistics, the agent was called for assistance more often before the violation (M = 0.65, SD = 0.10) than after it (M = 0.34, SD = 0.7). In contrast, positive nudges to the agent increased after the violation (M = 0.06, SD = 0.23) compared to pre-violation (M = 0.01, SD = 0.12), and negative nudges also increased from pre-violation (M = 0.02, SD = 0.12) to post-violation (M = 0.15, SD = 0.36).

Two separate multiple regression analyses were conducted, with behavioral indicators as the predictors and changes in self-reported trust scores as the criterion. Multiple regression allowed us to examine the effect of multiple factors on a single dependent variable simultaneously. This allowed us to control and account for the effect of confounding variables and shared variance.
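As an illustration, the model form implied by this description can be written as follows. The symbols are assumptions introduced here for clarity (the subscript i indexes participants, and the delta term denotes the difference between two consecutive trust ratings); they do not appear in the original analysis.

```latex
\Delta\text{Trust}_i = \beta_0
  + \beta_1\,\text{Assistance}_i
  + \beta_2\,\text{NudgePositive}_i
  + \beta_3\,\text{NudgeNegative}_i
  + \varepsilon_i
```

The same form applies to both analyses; only the criterion changes (T2 − T1 for the pre-violation model and T3 − T2 for the post-violation model).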

In the first regression analysis, as reported in Table 3, all three behavioral indicators were utilized as predictors of change in self-reported trust (i.e., change in trust from Time 1 to Time 2). Assistance, Nudge Positive, and Nudge Negative collectively accounted for 7.7% of the variance in change in self-reported trust from Time 1 to Time 2, but the overall model was not significant, R² = .077, F(3, 66) = 1.85, p = .146. As the omnibus test was not significant, the individual predictors were not explored.

In the second regression analysis, as reported in Table 4, all three behavioral indicators were utilized to predict the change in self-reported trust scores (i.e., change in trust from Time 2 to Time 3). Assistance, Nudge Positive, and Nudge Negative collectively explained 12.3% of the variance in change in self-reported trust from Time 2 to Time 3, R² = .123, F(3, 66) = 3.08, p = .033. As the omnibus test was significant, the individual predictors were explored. Assistance was significantly and positively related to the change in self-reported trust (p = .05), and Nudge Negative was significantly and negatively related to the change in trust scores (p = .033).
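The omnibus F statistics reported above can be cross-checked from R² using the standard relationship F = (R²/k) / ((1 − R²)/(n − k − 1)). The short Python sketch below applies it to the reported values; the function name is hypothetical, and the inputs are the rounded R² values, so the recomputed statistics (roughly 3.09 and 1.84) are expected to match the reported 3.08 and 1.85 only approximately.

```python
def f_from_r2(r2, n, k):
    """Omnibus F for a regression with k predictors and n observations."""
    df_model = k
    df_resid = n - k - 1
    f_stat = (r2 / df_model) / ((1.0 - r2) / df_resid)
    return f_stat, df_model, df_resid


# Post-violation model (T3 - T2) and pre-violation model (T2 - T1),
# using the rounded R² values reported in the text.
f_post, df1, df2 = f_from_r2(0.123, n=70, k=3)
f_pre, _, _ = f_from_r2(0.077, n=70, k=3)

print(round(f_post, 2), df1, df2)
print(round(f_pre, 2))
```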

Discussion

This study aimed to identify: (1) if multiple behavioral indicators provided a more holistic picture of trust compared to a single behavioral indicator and (2) if certain behavioral indicators were more indicative of self-reported trust at different times in the mission (e.g., before/after a trust violation event). The exploratory aspect was in examining results associated with various time linkages between self-reported trust and behaviors indicative of trust. Results of this study indicate that prior to a trust violation event, none of the behavioral indicators captured were predictive of changes in trust. However, after a trust violation event, changes in self-reported trust over time were associated with a combination of how much the human asked the agent for assistance and how much the human nudged the agent to be more careful. Specifically, as self-reported trust decreased, requests for assistance decreased, and nudges to be more careful increased. This surge of behaviors following a violation event may suggest that participants are actively seeking to correct or mitigate perceived deficiencies in their agent teammates before re-employing them, reflecting a more deliberate and observable effort to manage trust levels. As we predicted, prior to a trust violation event, there were no significant behavioral predictors of trust; however, after a trust violation, specific behaviors such as “nudge negative” and “assistance” became significant predictors of the user’s change in trust. Our findings align with other studies that have found that multiple indicators of trust are more predictive when used together compared to examining individual indicators of trust (De Jong & Elfring, 2014; Evans & Revelle, 2008).

In terms of practical implications, these findings suggest that military operations or events similar to the current task paradigm may also show behavioral indicators associated with trust after significant events occur, indicating that the most informative timeframe for unobtrusively analyzing changes in trust may be directly after a critical event. This may also provide support for monitoring specific behaviors and using them to unobtrusively capture changes in trust after significant events without having to disrupt the task by having operators self-report trust. This may enable real-time, downrange assessment of, and interventions on, trust in HATs.

Conclusion and Future Research

This effort aimed to provide a foundational base for further exploratory research surrounding the utilization of unobtrusive behavioral indicators of human trust in agent teammates. The results indicate that following a significant event, changes in self-reported trust are associated with behaviors such as requests for assistance and nudging. These insights emphasize the opportunity to incorporate dynamic behavioral measures in trust assessment frameworks, particularly in scenarios where trust is compromised by a significant event.

Additionally, a valuable aspect of this work is not just in the specific results but in the questions that emerged during the process. For example, when it comes to examining the relationships between attitudes reported at a static point in time and dynamic behavioral data, what are the time spans across which these are associated? Do they vary based on behavior? Which measures should we look at separately and together? Future research should continue to examine these questions by assessing similar or different behavioral indicators across longer time segments and at different frequencies and points in time, depending on the context of the task paradigm. The role of contextual factors and individual differences, such as experience, should be investigated as well.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This material is based upon work supported by the Air Force Office of Scientific Research under award number FA9550-21-1-0294. We would also like to thank the Air Force Research Lab’s Gaming Research Integration for Learning Laboratory (GRILL) for developing the HAT M.A.T.T.E.R simulation testbed that was utilized to complete this research.