Abstract

Trust is imperative for safe and effective human-automation relationships, especially in complex systems. Transparency, the communication of the automation’s behavior and intent to the operator, can be used to build trust in human-automation teams. The present study investigated the impact of transparency across the Human-Automation Teaming (HAT) lifecycle (pre-, during, and post-task) on human-automation trust, communication, and reliance. Participants engaged in a counter-small unmanned air systems (C-sUAS) simulation and were randomly assigned to one of six conditions with different configurations of transparency (or lack thereof) across the lifecycle phases. Overall, automation transparency across the HAT lifecycle had modest effects on trust, situation awareness, workload, and task performance. Trust did not differ significantly across lifecycle configurations, but there were notable effects on situation awareness, task performance, and workload, particularly when transparency was absent during critical phases such as training and the after-action review.

Introduction

Automation, along with the rapidly evolving field of autonomous technologies, plays an ever-greater role in most complex, modern systems. Consequently, understanding and effectively managing the interactions between humans and these systems is increasingly important (Hancock, 2022).

Trust is imperative for safe and effective human-automation relationships, especially in complex systems (Jian et al., 1998; Parasuraman & Wickens, 2017; Sheridan, 1988). A lack of trust in, or understanding of, the automation can diminish performance and operator situation awareness (SA; Lee & See, 2004). Transparency, the communication of the automation’s behavior and intent to the operator, can be used to build trust in human-autonomy teams (HATs; Lyons, 2013). The present research draws on two prominent models of transparency: Lyons’ (2013) robot-to-human model and Chen et al.’s (2014) situation awareness-based transparency (SAT) model.

Models of Human-Automation Transparency

The robot-to-human model of transparency (Lyons, 2013) emphasizes the importance of supporting the system’s intentional, task, and analytic models. Information supporting the intentional model helps the user understand the automation’s design, purpose, and intent. Information supporting the task model facilitates users’ understanding of how the automation executes tasks and progresses toward its goals. Finally, information supporting the analytic model enhances users’ comprehension of the automation’s decision-making processes, such as the underlying principles of its algorithms. Integrating information that supports these models helps build appropriately calibrated trust and reliance.

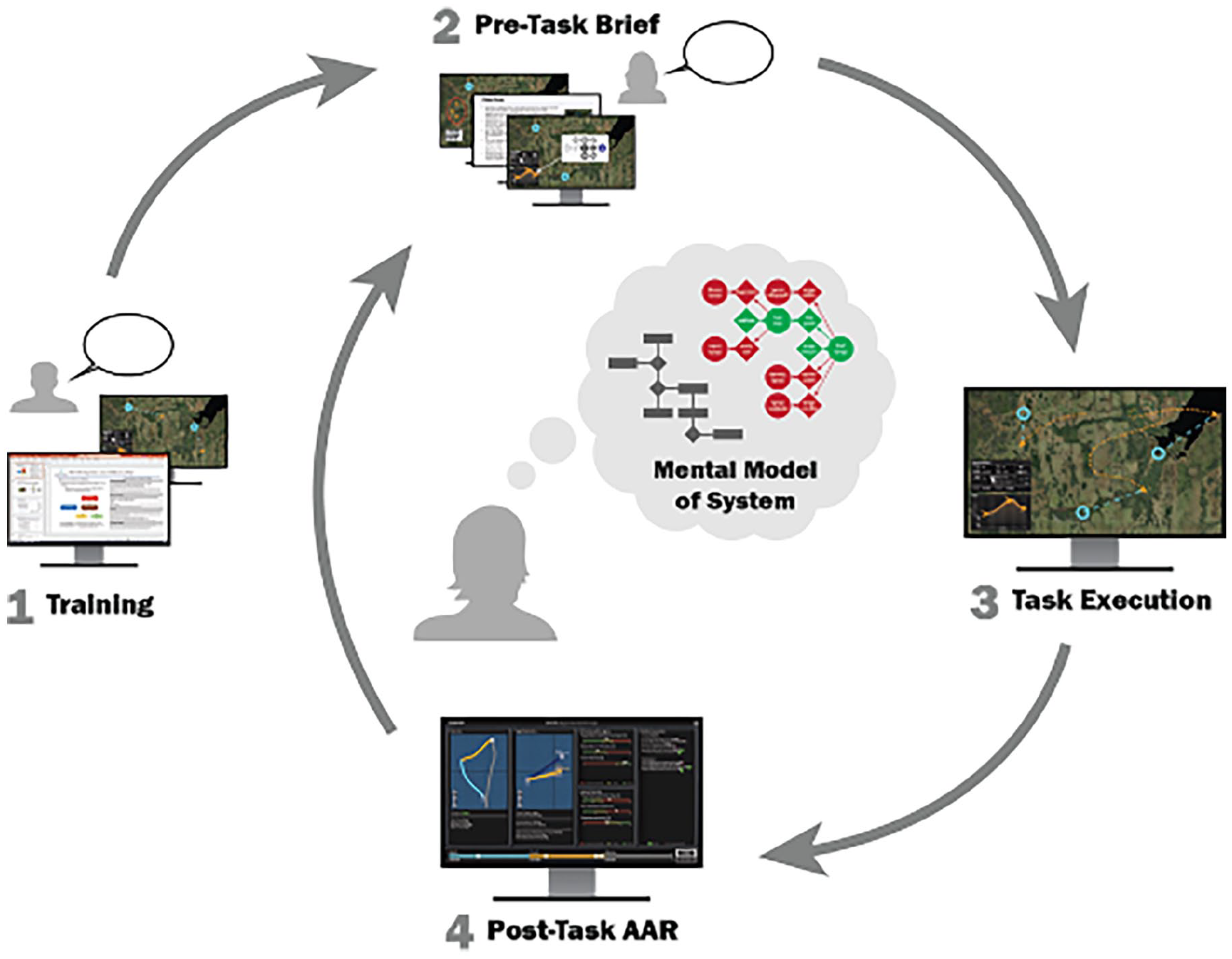

The situation awareness-based transparency (SAT) model (Chen et al., 2014) delineates three levels of information aimed at supporting SA. SAT Level 1 entails information that enhances the user’s understanding of the automation’s status and intentions. SAT Level 2 provides insights into the rationale behind the automation’s actions, while SAT Level 3 provides foresight into its future states. In this study, we applied these models to establish transparency across the HAT lifecycle (see Figure 1).

Figure 1. The human-autonomy teaming (HAT) lifecycle.

Transparency Across the HAT Lifecycle

The HAT lifecycle consists of operator training prior to task execution, pre-task briefings, task execution, and post-task after-action reviews (AARs). Existing research predominantly addresses transparency during task execution (e.g., Loft et al., 2023; Skraaning & Jamieson, 2021; Yang et al., 2017). Transparency during this phase is typically communicated through real-time details of the automation’s behaviors and rationales for its actions.

Although this information may be valuable, rapid streams of information can easily be missed during complex tasks or can draw the operator’s attention away from the task itself, diminishing performance (Knight, 2021; Miller, 2021). Thus, it is imperative to consider implementing transparency during all phases of the HAT lifecycle. Transparency during these other phases allows the automation to communicate information beyond real-time details of its behavior. Transparency during training, for instance, can convey essential information about the automation’s design, purpose, and intentions (Lyons, 2013). Similarly, transparency during AARs can offer insights into the automation’s behaviors during the task, helping operators comprehend its decisions.

Present Study

The primary aim of the present study was to investigate the impact of analytic transparency throughout the HAT lifecycle on human-automation trust, communication, and reliance. To achieve this, we employed Lyons’ (2013) robot-to-human model and Chen et al.’s (2014) SAT model, focusing primarily on transparency information that supports the analytic model (Lyons, 2013). We designed a testbed to simulate a counter-small unmanned air systems (C-sUAS) task performed with assistance from an intelligent agent, and we implemented transparency opportunities at four phases of the scenario: training, pre-mission, during mission, and post-mission.

Hypotheses:

H1: Participants will report higher levels of trust in autonomy when transparency is applied to all phases of the HAT lifecycle.

H2: Participants will have higher SA when transparency is applied to all phases of the HAT lifecycle.

H3: Participants will demonstrate higher task performance when transparency is applied to all phases of the HAT lifecycle.

H4: Participants will report lower workload levels when transparency is applied to all phases of the HAT lifecycle.

H5: Participants will require less information from autonomy over time when transparency is applied to all phases of the HAT lifecycle.

Method

Participants

Participants (N = 97) were recruited from an online research participation program at the University of Central Florida. Slightly more than half of the participants were female (52.6%), and the average age was 20.54 years (SD = 3.16). The university’s Institutional Review Board and the U.S. Air Force Human Research Protections Office reviewed and approved the study.

Design

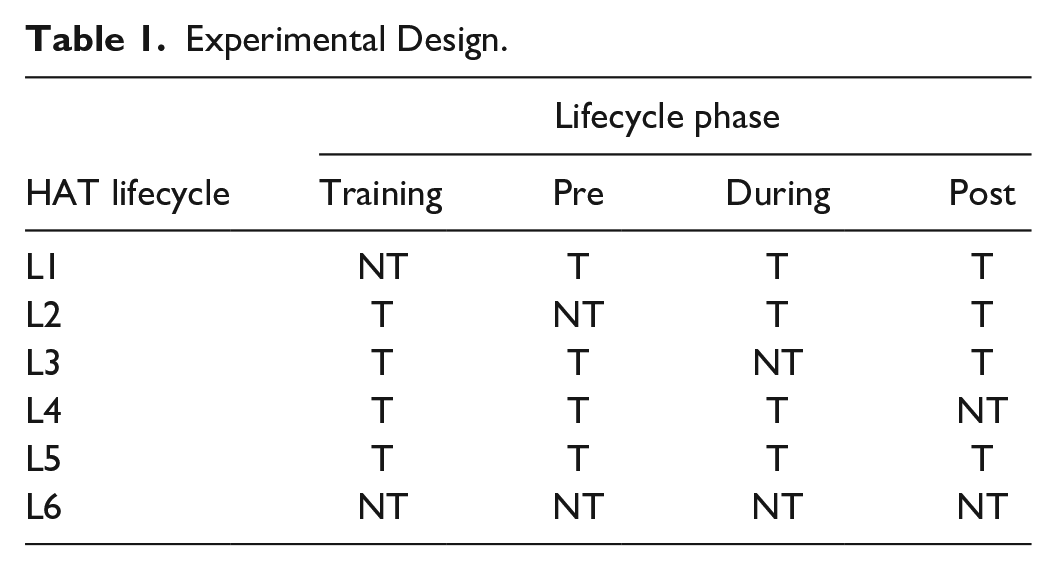

Analytic transparency across the phases of the HAT lifecycle was manipulated using a between-subjects experimental design. Participants were randomly assigned to one of six conditions, each with a different configuration of transparency (T) or no transparency (NT) across the four lifecycle phases (see Table 1). Each participant completed four iterations of the lifecycle, enabling us to assess the impact of each experimental condition over time.

Table 1. Experimental design.

Materials

Experimental Task

Participants engaged in a C-sUAS simulation with assistance from an intelligent agent capable of proposing and executing courses of action (COAs) against UAS events. Four scenarios were developed to counterbalance event types; all elicited similar variations in workload, SA, and trust.

Questionnaires

Following each lifecycle, participants completed a questionnaire containing the short-form Human-Robot Trust Scale (Schaefer, 2016), the Situation Awareness Rating Technique (SART; Taylor, 1990), and the NASA Task Load Index (NASA-TLX; Hart & Staveland, 1988). These measures assess trust, SA, and workload, respectively. After all lifecycles had been completed, the Trust in Automation (TiA) survey (Körber, 2019) was administered.
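The paper does not state how SART responses were scored. As a minimal sketch, assuming the common three-dimensional combined score, SA = Understanding − (Demand − Supply), with each dimension already aggregated from its items, the calculation looks like this (the function and argument names are illustrative):

```python
def sart_combined(understanding: float, demand: float, supply: float) -> float:
    """Return the 3-D SART combined score, SA = U - (D - S).

    understanding: rating of understanding of the situation (U)
    demand: rating of demand on attentional resources (D)
    supply: rating of supply of attentional resources (S)
    """
    return understanding - (demand - supply)


# Hypothetical ratings on a 1-7 scale for one participant after one lifecycle.
print(sart_combined(understanding=6, demand=4, supply=5))  # 6 - (4 - 5) = 7
```

Under this scoring, higher demand lowers the SA estimate, while greater supply of attentional resources and greater understanding raise it.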

Performance Metrics

Various metrics were used to evaluate task performance: the number of COAs run during each scenario, the number of UAS events successfully mitigated, and reaction time (RT), defined as the time taken to select a COA.
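The analyses below use the median COA reaction time per lifecycle. A minimal sketch of that aggregation follows. It assumes each RT is the interval in seconds between an event’s onset and the participant’s COA selection; the log format and field order are hypothetical.

```python
from collections import defaultdict
from statistics import median


def median_rt_by_lifecycle(records):
    """Median COA reaction time (s) per (participant, lifecycle).

    records: iterable of (participant_id, lifecycle, event_onset_s, coa_selected_s)
    tuples (a hypothetical log format).
    """
    rts = defaultdict(list)
    for pid, lifecycle, onset, selected in records:
        rts[(pid, lifecycle)].append(selected - onset)  # RT = time to select a COA
    return {key: median(values) for key, values in rts.items()}


log = [
    ("P01", 1, 10.0, 22.5),
    ("P01", 1, 40.0, 53.0),
    ("P01", 1, 70.0, 110.0),  # one very slow selection
    ("P01", 2, 15.0, 27.0),
]
print(median_rt_by_lifecycle(log))  # {('P01', 1): 13.0, ('P01', 2): 12.0}
```

The single 40 s selection pulls the lifecycle 1 mean up to about 21.8 s but leaves the median at 13.0 s. This robustness to occasional slow responses is one plausible reason to prefer the median.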

Procedure

Participants first completed the training phase. Training consisted of a PowerPoint presentation explaining the task and how to use the system. Those assigned to configurations with training transparency received additional information regarding the automation’s underlying algorithm. Following the presentation, participants completed a practice exercise to familiarize themselves with the task. After training, participants completed four iterations of their designated HAT lifecycle.

The pre-task brief outlined mission objectives and available resources. Transparency during this phase consisted of information regarding the automation’s current and anticipated states.

During task execution, participants with transparency had access to additional widgets conveying information about the automation’s future status and were able to compare COAs. Moreover, they could utilize speech queries to obtain information regarding the mission and the automation’s behavior. Participants with transparency during task execution were afforded the option to adjust their level of transparency (i.e., SAT level changes).
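The testbed’s implementation of SAT-level changes is not described. The sketch below is purely hypothetical. It models the three SAT levels (Chen et al., 2014) as an ordered enumeration, clamps adjustments to levels 1 through 3, and assumes, as in several SAT-based interface designs, that each level adds to the information shown at the levels below it. All names are illustrative.

```python
from enum import IntEnum


class SATLevel(IntEnum):
    """SAT levels (Chen et al., 2014); member names are illustrative."""
    STATUS = 1      # Level 1: current status and intentions
    RATIONALE = 2   # Level 2: reasoning behind the actions
    PROJECTION = 3  # Level 3: projected future states


def adjust_sat_level(current: SATLevel, step: int) -> SATLevel:
    """Raise (step > 0) or lower (step < 0) the SAT level, clamped to 1..3."""
    return SATLevel(max(SATLevel.STATUS, min(SATLevel.PROJECTION, current + step)))


def visible_information(level: SATLevel) -> list[str]:
    """Information categories shown at a level, assuming levels are cumulative."""
    return [lvl.name for lvl in SATLevel if lvl <= level]


level = adjust_sat_level(SATLevel.STATUS, +1)
print(level.name, visible_information(level))  # RATIONALE ['STATUS', 'RATIONALE']
```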

After task completion, participants engaged in an AAR to review the mission. Those with transparency in this phase (post-mission) could utilize the previously mentioned widgets and speech functions to glean additional insight into the COAs during the AAR. Participants could also adjust their SAT level. After the AAR, participants completed the subjective measures before proceeding to the next phase.

Results

Trust in Automation

We analyzed the effects of time (i.e., lifecycle) and lifecycle configuration on Human-Robot Trust Scale scores using a 2-factor mixed-model analysis of covariance (ANCOVA), with propensity to trust (Reeves & Nass, 1996) included as a covariate. Results showed no significant interaction between time and lifecycle configuration (p = .344), nor were there significant main effects of time or lifecycle configuration (ps > .05).

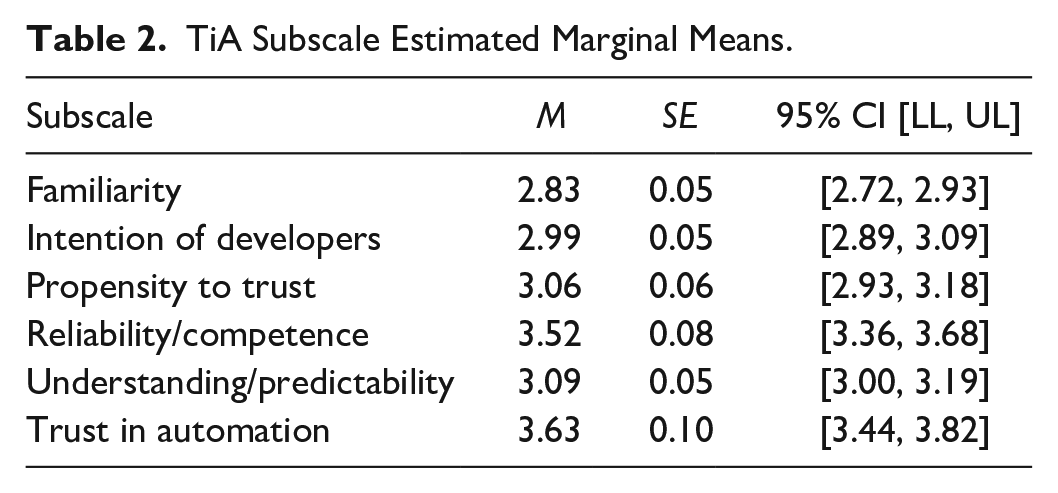

To examine the effects of lifecycle configuration on dimensions of trust (TiA), we conducted a two-way mixed-model ANOVA. No interaction was found between TiA subscale and lifecycle configuration (p = .258), and there was no main effect of lifecycle configuration (p = .666).

We did, however, observe a significant within-subjects effect of TiA subscale, F(3.37, 307.03) = 27.40, p < .001, ηp² = .23. Bonferroni post hoc comparisons revealed that scores on the Trust in Automation subscale were higher than those on all other subscales (ps < .001) except Reliability/Competence (p = 1.00), which had the second-highest mean score. Understanding/Predictability was also significantly higher than Familiarity (p = .016). See Table 2 for descriptive statistics.

Table 2. TiA subscale estimated marginal means.
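Bonferroni correction multiplies each p-value by the number of comparisons in the family and caps the result at 1. This cap is why an adjusted value of exactly p = 1.00 can be reported. The following is a minimal sketch of the adjustment; the exact family size used in these analyses is not stated, and the example p-values are hypothetical.

```python
def bonferroni(p_values):
    """Bonferroni-adjust a family of p-values: p_adj = min(1, p * m)."""
    m = len(p_values)  # number of comparisons in the family
    return [min(1.0, p * m) for p in p_values]


# Hypothetical unadjusted p-values for a family of three pairwise comparisons.
print(bonferroni([0.004, 0.02, 0.40]))
```

The third comparison (0.40 × 3 = 1.2) is reported as 1.0 after capping.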

Situation Awareness

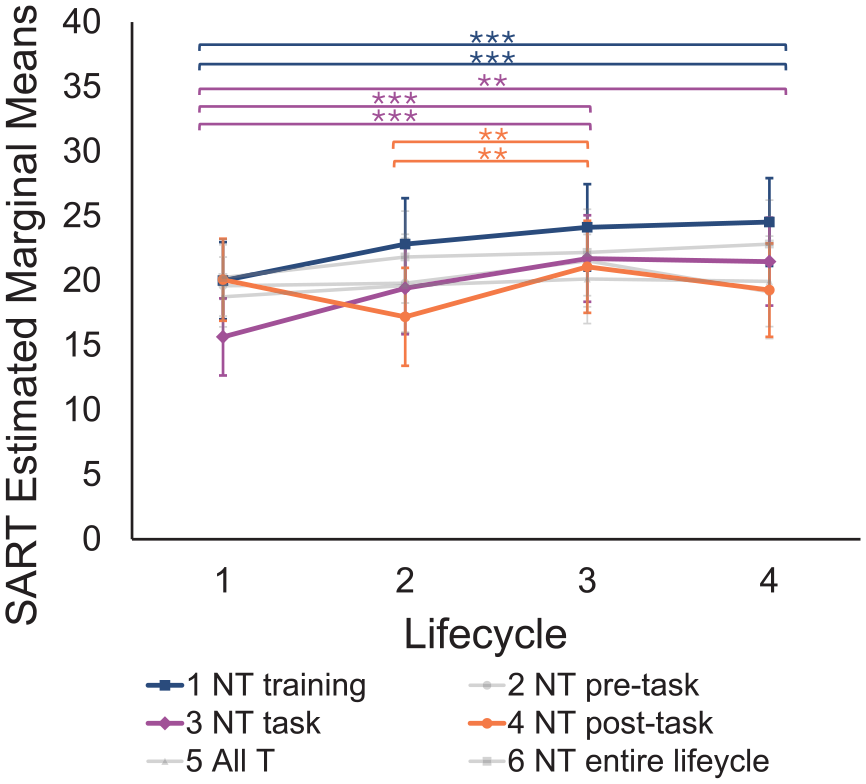

We examined the effects of time and lifecycle configuration on SART scores with a 2-factor mixed-model ANOVA. The interaction between time and lifecycle configuration approached significance and exhibited a moderately large effect size, F(14.31, 260.45) = 1.70, p = .054, ηp² = .09.

Bonferroni post hoc tests were conducted to further explore this interaction (see Figure 2). When analytic transparency was absent during training, SA increased from the first to the last lifecycle (p = .035). Similarly, when analytic transparency was absent during task execution, SA increased from the first lifecycle to the third (p < .001) and fourth lifecycles (p = .004). When analytic transparency was absent during the post-task AAR, SA increased from the second to the third lifecycle (p = .006).

Figure 2. SART scores by lifecycle configuration and time.
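Partial eta squared can be recovered from any reported F test as ηp² = (F × df_effect) / (F × df_effect + df_error), because F × df_effect / df_error equals SS_effect / SS_error. The sketch below applies this identity to the F tests reported in this paper as a quick consistency check. The values near .09 fall between Cohen’s conventional benchmarks for medium (.06) and large (.14) effects, which is what “moderately large” refers to.

```python
def partial_eta_squared(f: float, df_effect: float, df_error: float) -> float:
    """Partial eta squared recovered from an F statistic and its degrees of freedom."""
    return (f * df_effect) / (f * df_effect + df_error)


# F tests as reported: (F, df_effect, df_error, reported partial eta squared)
reported = {
    "TiA subscale": (27.40, 3.37, 307.03, 0.23),
    "SART time x configuration": (1.70, 14.31, 260.45, 0.09),
    "Median RT time x configuration": (1.71, 12.69, 208.81, 0.09),
    "NASA-TLX time x configuration": (1.63, 14.15, 257.56, 0.08),
}
for name, (f, df1, df2, eta) in reported.items():
    print(f"{name}: {partial_eta_squared(f, df1, df2):.3f} (reported {eta:.2f})")
```

Each recomputed value rounds to the reported one. The identity also holds for the fractional (sphericity-corrected) degrees of freedom, because the correction scales df_effect and df_error by the same factor.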

Task Performance

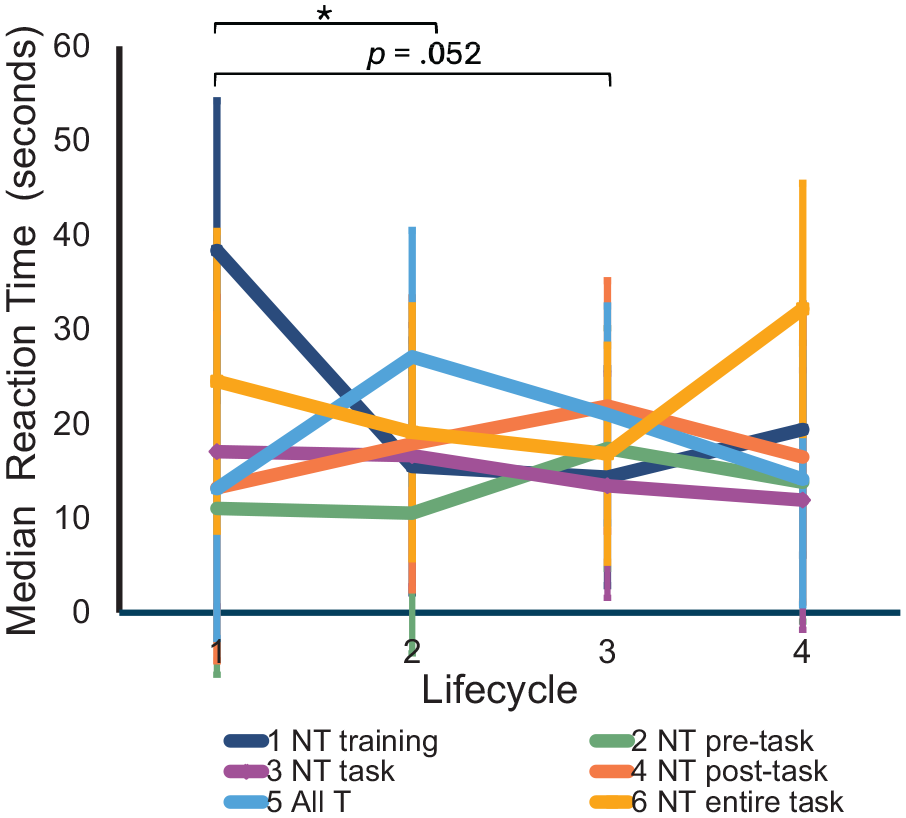

We investigated the effects of time and lifecycle configuration on median COA RT using a two-factor mixed-model ANOVA. The interaction between time and lifecycle configuration approached significance, F(12.69, 208.81) = 1.71, p = .060, ηp² = .09. Given the moderately large effect size, Bonferroni post hoc tests were conducted to further explore this interaction (see Figure 3).

Figure 3. Median reaction time by lifecycle configuration and time.

When analytic transparency was absent during training, median RT was significantly higher in the first lifecycle compared to the second (p = .042) and approached significance when comparing the first to the third lifecycle (p = .052). No other comparisons were significant (ps > .05). On average across the lifecycles, the median RT ranged from 12.43 to 14.22 s. No significant interactions or main effects were observed for the number of COAs executed during each scenario or the number of UAS events successfully mitigated.

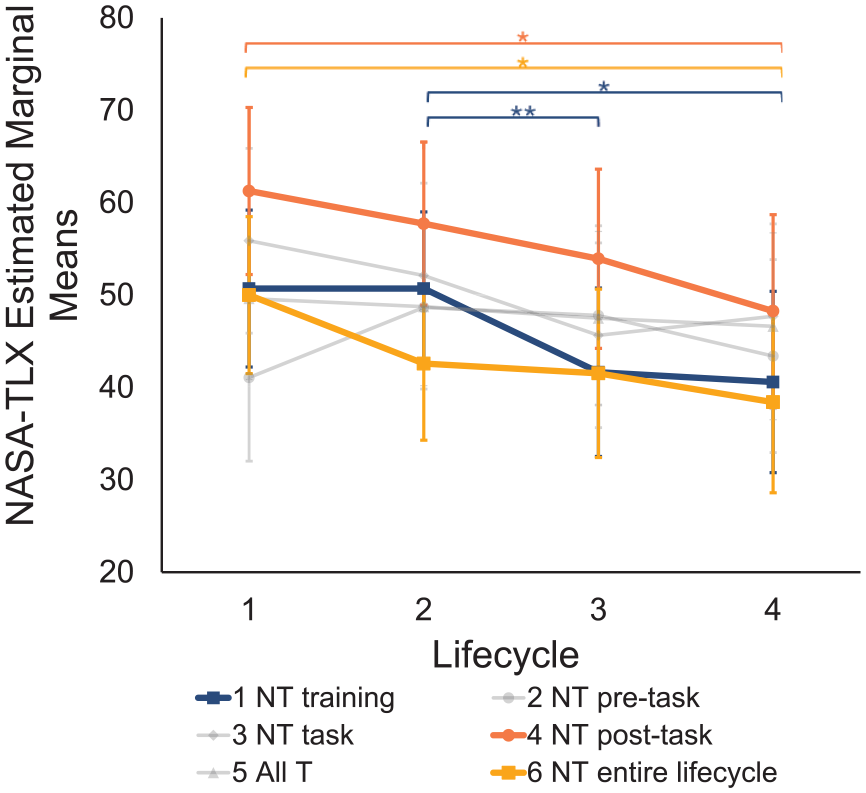

Workload

We examined the effects of time and lifecycle configuration on global NASA-TLX scores using a 3-factor mixed-model ANOVA. Physical workload was excluded from the analysis. The interaction between lifecycle configuration and time approached significance and exhibited a moderately large effect size, F(14.15, 257.56) = 1.63, p = .071, ηp² = .08. Bonferroni post hoc tests were used to explore this interaction (see Figure 4). Configurations 1 (NT during training), 4 (NT post-lifecycle), and 6 (NT entire lifecycle) showed a decrease in workload over time.

Figure 4. NASA-TLX scores by lifecycle configuration and time.
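The paper does not say whether the NASA-TLX subscales were weighted. The sketch below assumes the unweighted (“raw TLX”) variant, in which the global score is the mean of the retained subscale ratings. It excludes physical demand, as in the analysis above, and assumes 0–100 ratings where higher always means more workload. The subscale keys are illustrative names.

```python
from statistics import mean

TLX_SUBSCALES = ("mental", "physical", "temporal", "performance", "effort", "frustration")


def raw_tlx(ratings: dict, exclude=("physical",)) -> float:
    """Unweighted (raw) NASA-TLX global score: mean of the retained subscale ratings.

    ratings: subscale name -> rating on a 0-100 scale, higher = more workload
    (the performance subscale is assumed to be scored so that higher = worse).
    """
    kept = [ratings[name] for name in TLX_SUBSCALES if name not in exclude]
    return mean(kept)


example = {"mental": 60, "physical": 10, "temporal": 50,
           "performance": 30, "effort": 55, "frustration": 25}
print(raw_tlx(example))  # (60 + 50 + 30 + 55 + 25) / 5 = 44
```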

Autonomy Information

Participants with analytic transparency during task execution and the post-task AAR could adjust their SAT level (i.e., increase or decrease it). Participants seldom used the SAT-level change widget, averaging fewer than one SAT-level change per lifecycle. An independent-samples Kruskal-Wallis test indicated that the average number of SAT-level changes did not differ between configurations, H(3) = 1.43, p = .699.

Similarly, participants with transparency during task execution and the post-task AAR could use the autonomy’s speech query function. Like the SAT-level widget, this feature was used infrequently, averaging fewer than 0.5 queries per lifecycle. An independent-samples Kruskal-Wallis test indicated no significant differences in the average number of speech queries between configurations, H(3) = 3.69, p = .297.
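The following is a minimal standard-library sketch of the Kruskal-Wallis test. It assumes average ranks for tied values, the usual tie correction, and the chi-square approximation with k − 1 degrees of freedom; four configurations therefore give df = 3, matching H(3). This is not the statistical package the authors used (which is not stated), and the function names are illustrative.

```python
import math


def kruskal_wallis_h(groups):
    """Kruskal-Wallis H with the standard correction for ties.

    groups: list of lists of observations (e.g., SAT-level changes per participant).
    """
    pooled = sorted((x, g) for g, values in enumerate(groups) for x in values)
    n = len(pooled)
    rank_sums = [0.0] * len(groups)
    tie_term = 0.0
    i = 0
    while i < n:
        j = i
        while j < n and pooled[j][0] == pooled[i][0]:
            j += 1
        avg_rank = (i + 1 + j) / 2  # mean of ranks i+1 .. j for a run of ties
        for k in range(i, j):
            rank_sums[pooled[k][1]] += avg_rank
        t = j - i
        tie_term += t**3 - t
        i = j
    if tie_term == n**3 - n:
        raise ValueError("H is undefined when all observations are tied")
    h = 12 / (n * (n + 1)) * sum(r**2 / len(v) for r, v in zip(rank_sums, groups)) - 3 * (n + 1)
    return h / (1 - tie_term / (n**3 - n))


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail chi-square probability for integer df (closed-form series)."""
    if df % 2 == 0:
        return math.exp(-x / 2) * sum((x / 2) ** k / math.factorial(k) for k in range(df // 2))
    tail = math.erfc(math.sqrt(x / 2))
    return tail + math.exp(-x / 2) * sum(
        (x / 2) ** (k - 0.5) / math.gamma(k + 0.5) for k in range(1, (df - 1) // 2 + 1)
    )


# Reported tests compared four configurations, so df = 4 - 1 = 3.
print(chi2_sf(1.43, 3))  # SAT-level changes, H(3) = 1.43
print(chi2_sf(3.69, 3))  # speech queries, H(3) = 3.69
```

The two p-values come to roughly .70 and .30, in line with the reported p = .699 and p = .297.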

Discussion

Trust

Contrary to our prediction (H1), participants did not report higher levels of trust when analytic transparency was present throughout all phases of the HAT lifecycle. Schaefer’s (2016) measure of human-robot trust was originally designed to assess trust in embodied robots, which may explain the null findings. Additionally, the short-form version of the scale may not have been sensitive enough to capture nuanced differences in trust (Schaefer, 2016). TiA scores likewise did not differ between lifecycle configurations, although participants rated the Trust in Automation and Reliability/Competence subscales higher than the other subscales, suggesting that they generally found the system trustworthy and reliable. Despite the absence of trust differences across configurations, we observed several differences in variables conceptually related to trust (Hancock et al., 2011).

Situation Awareness and Workload

We observed a marginally significant interaction between time and lifecycle configuration on SA. When analytic transparency was absent during training or during task execution, SA increased from the first to the last lifecycle. This trend may reflect a loss of critical information during these phases: without transparency, participants may have needed more experience with the task to build SA. Interestingly, the absence of transparency during the pre-task briefing did not similarly hinder SA. Although these findings are not fully in line with our hypothesis (H2), they suggest that analytic transparency may be more critical during certain phases of the HAT lifecycle than others.

Our hypothesis that analytic transparency would reduce workload (H4) was not consistently supported. However, configurations lacking transparency during training, during the post-task AAR, or throughout the lifecycle showed workload that decreased over time, indicating higher workload in early lifecycles. This observation further underscores the importance of analytic transparency during specific phases of the HAT lifecycle.

Task Performance

We hypothesized that analytic transparency would improve performance when applied to all phases of the HAT lifecycle (H3). This hypothesis was not consistently supported. Participants lacking analytic transparency during training exhibited longer reaction times in the first lifecycle than in subsequent lifecycles. Participants in this configuration may have had less understanding of the automation’s decision-making process (Lyons, 2013) and, consequently, taken longer to react to UAS events. However, they appeared to recover from this initial loss of information. Moreover, we did not observe significant differences in the other performance metrics, suggesting that analytic transparency may not be required for all aspects of task performance.

Autonomy Information

Finally, we predicted that participants would require less information from the automation over time when analytic transparency was present in all HAT phases. This hypothesis was not supported. Participants infrequently used the speech query function and SAT-level change widget. During the post-study debriefings, some participants reported forgetting about the availability of the SAT-level change widget. Additionally, others reported that they understood the task sufficiently and did not perceive a need to utilize the speech query function.

Limitations and Future Directions

The present study demonstrates that analytic transparency can be manipulated across the HAT lifecycle and verifies the viability of the testbed. The modest outcomes may be attributable to the use of undergraduate students as participants and to the complexity of the task (Walker et al., 2010). Analytic transparency may produce stronger effects for participants with domain expertise, such as individuals with military training (Lyons et al., 2024). Additionally, the paradigm did not include manipulations that contradicted participants’ beliefs or expectations about the system, so participants did not have to rely on analytic transparency to execute the task. Larger effects may be observed in tasks that necessitate dependence on analytic transparency. Although we demonstrated that analytic transparency can be systematically presented in an appropriate task context, more research is needed to provide comprehensive evidence for the effect of the individual transparency manipulations on human-automation trust, communication, and reliance. Future research should address these limitations to elicit larger effects and further demonstrate the viability of the analytic transparency manipulation.

Conclusion

The findings of this study emphasize the significance of incorporating automation transparency across the HAT lifecycle, highlighting the nuanced relationship between transparency and human-automation interaction. The results suggest that analytic transparency during certain phases of the HAT lifecycle is crucial for establishing system comprehension and preserving initial task performance and SA. This study lays the groundwork for future research to refine transparency strategies and advance our understanding of human-automation teams. Ultimately, this line of research contributes to the design and implementation of safer and more efficient automated systems across various domains.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by the Air Force Research Laboratory (FA865022C6417). The views expressed are those of the authors and do not necessarily reflect the official policy or position of the Department of the Air Force, the Department of Defense, or the U.S. government. Distribution A. Approved for public release: distribution is unlimited.