Abstract

Trust in automation and team communication are crucial factors in human-agent teaming. While research has examined how trust in automation and team dynamics impact performance separately, less is known about how they combine to influence team dynamics. This study investigated how team speech dynamics relate to trust in automation, team perceptions, and workload in a longitudinal, multi-participant, computer-simulated military mission with active-duty military personnel. Results showed that participants with lower trust in automation spoke more than their teammates with higher trust in automation, even after controlling for perceptions of team trust, cooperation, workload, role on the team, and team performance. A common finding in the team literature is that more team communication and more trust in automation are, separately, better for team performance. Thus, this research is an initial step toward demonstrating how automation alters the team dynamics typically considered essential to team success.

Within an increasingly interconnected 21st-century workforce, many next-generation modernization efforts leverage autonomous systems to improve human team performance, whether by supporting group decision-making in the boardroom or by assisting soldiers on the battlefield. Because research in this area is developing rapidly, much of its focus has been on the immediate performance outcomes of autonomous technologies. However, autonomous technologies do not merely alter overall performance. They necessarily change individual and group dynamics (e.g., Flathmann et al., 2024), with the potential for unintended side effects on, for example, mission-critical team communication styles, relationships, and schemas. While there is a rich portfolio of research demonstrating how trust in automation and team dynamics impact performance separately (e.g., Lee & See, 2004; Schaefer et al., 2019), less is known about the degree to which trust in automation and team perceptions combine to influence team dynamics and resulting performance.

Among the complex array of factors and processes that shape human team performance, team communication is a critical element that may be impacted by the integration of autonomous systems into human teams. Human team communication ultimately reflects the shared ability to coordinate information and build higher levels of shared understanding (e.g., Dickinson & McIntyre, 1997; J. E. Mathieu et al., 2000). Generally, more team communication has been shown to be better for team performance (e.g., J. E. Mathieu et al., 2000; McNeese et al., 2021).

Team communication is complex, however, and findings vary with a number of contextual factors. Meta-analytic results show that more communication tends to be associated with better performance (Marlow et al., 2018). Others have found situational effects in which high-performing teams communicate less when they are more experienced (Toups Dugas et al., 2011), have a better organizational structure (MacMillan et al., 2004), or use tools to support their collaboration (e.g., Gao et al., 2016; Penta & Harman, 2007). In these cases, teams are able to engage in more implicit forms of communication and coordination.

Communication during team tasks has also been shown to indicate psychological states, like trust and feelings of team cohesion (e.g., Khawaja et al., 2012). Therefore, changes to team communication reflect changes in overarching team dynamics.

Trust in automation is another key variable studied in the context of sociotechnical systems and their performance, with trust in automation linked to autonomous system usage (e.g., Lee & See, 2004). For example, when people mistrust automation and feel confident in their own abilities, they often forgo relying on automation, opting instead to complete the task independently (e.g., Kantowitz et al., 1997a). In other situations, people may mistrust automation but ultimately rely on it, for instance when task workload is high (e.g., Molloy & Parasuraman, 1996; Thackray & Touchstone, 1989). If autonomy is unreliable and trust in the autonomy degrades, team performance is also reduced (Parasuraman et al., 1993).

Complicating this line of research, though, is the varied way trust can manifest itself in complex and real-world settings. Krausman et al. (2022) note that trust within teams “is a dynamic state that fluctuates over the lifecycle of the team and is further influenced by the social, task, and environmental contexts” (p. 2). To illustrate task factors, recent research suggests that intelligent automation can improve trust when it is more transparent about its actions (Lakhmani et al., 2016; Stowers et al., 2016). Generally, researchers note that understanding trust in human-agent teaming contexts requires multiple measurement approaches (for a review, see Krausman et al., 2022), and that it is important to consider multiple approaches for studying communication (Baker et al., 2021).

Although the multifactorial relationship between team communication, trust in automation, and team performance will, to some extent, be context dependent, researchers have yet to build this knowledge base. To bridge this gap in the literature, the current study investigated team speech dynamics and trust in automation, team trust, and workload in the context of a longitudinal, multi-participant, computer-simulated military mission.

This work expands the literature on human teaming in the following ways. We leverage a longitudinal methodology, a participant sample of active-duty military personnel, and a large team structure, each of which is rare in the teamwork literature. The high-visual fidelity environment and combat missions were developed by experts from DEVCOM Army Research Laboratory (ARL), providing a relatively ecologically valid simulation. Finally, where much of the prior literature has emphasized how team communication and trust in automation affect team performance separately, we investigate the associations between these variables. We adopted a non-directional hypothesis that trust in automation would significantly alter team communication. On the one hand, we considered it possible that trust in automation is associated with increased coordination and, in turn, more communication among team members. On the other hand, greater trust in automation may lead teams to offload aspects of both individual and team tasks, thereby reducing the need to verbally discuss task details.

Method

Participants

Seven active-duty U.S. Army soldiers were recruited. Participation was voluntary and participants did not receive compensation. All procedures were approved by ARL’s Institutional Review Board. Written informed consent was obtained from all participants.

Simulation

The team completed 18 missions over 2 weeks in a computer-simulated environment where they interacted with autonomous technologies to operate combat vehicles. Following a day-long training session, the team completed two 90-min missions daily for 9 days, navigating varied terrains to reach a pre-determined location while combatting enemy forces. Each mission occurred in a high-visual fidelity outdoor terrain, with unique starting points, objective locations, and opposition configurations.

Six participants were assigned as crew members, with three drivers and three gunners. The seventh participant was the section commander, responsible for coordinating each mission and directing the crew. Together, the team coordinated one manned vehicle and two robotic combat vehicles (RCVs). One driver-gunner pair controlled the manned vehicle. The other two driver-gunner pairs each controlled one of the two RCVs.

To control the manned vehicle, the driver used a steering yoke and pedals. Drivers of the RCVs selected waypoints by placing markers using a touchscreen map interface. RCVs were then capable of navigating and avoiding local obstacles independently. Gunners were responsible for firing upon oppositional forces. Gunners monitored an autonomous target recognition system that placed a highlight mask over oppositional forces upon detection. Gunners could also use the touchscreen map interface to place markers for key areas of interest (e.g., bombed buildings). Commanders interacted with an interface to monitor soldier and vehicle status and guide crew actions through predefined “plays.” Plays were specific patterns of two types: movement formations, which were used to move the team in a specific formation toward an objective, and battle drills, which were used to engage with oppositional forces.

Variables

Outcome variable

Real-time speech during each mission was transcribed using Dragon Software. Transcriptions were verified after collection by two members of the research team. Instances of unreliable utterances (e.g., “huh”) were removed. The number of words spoken by each participant was then totaled for each mission.
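The tallying step described above can be sketched as follows. This is a minimal illustration rather than the study's actual pipeline: the tuple layout, the `count_words` helper, and the contents of `UNRELIABLE` are assumptions (the text names only “huh” as an example of a removed utterance).

```python
from collections import Counter

# Hypothetical set of unreliable tokens; the study gives only "huh" as an example.
UNRELIABLE = {"huh"}

def count_words(utterances):
    """Total words per (participant, mission), skipping unreliable tokens.

    `utterances` is an iterable of (participant, mission, text) tuples,
    an assumed layout for verified transcript lines.
    """
    totals = Counter()
    for participant, mission, text in utterances:
        # Lowercase each token and trim surrounding punctuation before filtering.
        words = [w.strip(".,!?\"'").lower() for w in text.split()]
        totals[(participant, mission)] += sum(
            1 for w in words if w and w not in UNRELIABLE
        )
    return totals

sample = [
    ("gunner_1", 1, "Target spotted, two o'clock."),
    ("gunner_1", 1, "Huh?"),
    ("commander", 1, "Copy, engage the target."),
]
totals = count_words(sample)
```

Here `totals` holds one count per participant per mission, which corresponds to the unit of analysis used for the outcome variable.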

Predictors

Trust in automation, team trust, and workload were captured immediately following each mission. Trust in automation was captured using the Trust in Automation scale (Jian et al., 2000). We also controlled for three additional team processes to better clarify the relationship between team communication and trust in automation:

(1) Participants reported team trust by completing the Team Trust Scale (Costa, 2003). The Team Trust Scale was divided into individual factors of team cooperation and team trust. We controlled for team trust because it has been shown to influence team performance and subsequent perceptions of teammates’ behavior (e.g., J. Mathieu et al., 2008; Salas et al., 2005). In short, teams lacking in trust often spend more time protecting their own work and checking and inspecting each other as opposed to collaborating effectively (e.g., Cooper & Sawaf, 1997; Salas et al., 2005). In low trust conditions, team members may be unwilling to share information out of fear of judgment, or because they think their information will not be used appropriately (e.g., Bandow, 2001). Individuals with low trust in their team members may withdraw from collective team activities and instead choose to work independently, subsequently lowering their communication with the team (e.g., Bandow, 2001; Salas et al., 2005).

(2) Cognitive workload, another variable commonly connected to team performance, was captured using the NASA-TLX (Hart & Staveland, 1988). If workload is too low, attention may lapse, resulting in errors and subsequently poor performance (e.g., Frazier et al., 2022). If workload is too high, team members may be unable to meet the task demands and perform poorly (e.g., Frazier et al., 2022). Previous research has also shown that team communication decreases when workload is too high (e.g., Marlow et al., 2018; Rosero et al., 2021). Although the aim of autonomous systems is often to decrease human cognitive workload, use of autonomous systems can exacerbate workload challenges by adding an extra layer of monitoring responsibilities (Onnasch et al., 2014), as team members must divide their efforts between task execution and ensuring the autonomous system is functioning appropriately.

(3) Mission performance was evaluated using the team’s average time needed to neutralize opposing forces. In this simulation, oppositional forces spawned into the environment as the team approached their location. The time needed to destroy each enemy vehicle was calculated as the difference between the time the vehicle spawned and the time it was destroyed. These times were then averaged per scenario.
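As a rough illustration of the performance metric in (3), the sketch below averages spawn-to-destruction times within each mission. The event layout, the use of seconds, and the function name `mean_time_to_neutralize` are assumptions made for the example. It also assumes every listed vehicle was eventually destroyed, since the text does not say how surviving vehicles were handled.

```python
from statistics import mean

def mean_time_to_neutralize(events):
    """Average (destroyed - spawned) time per mission.

    `events` is an iterable of (mission, spawn_time_s, destroy_time_s)
    tuples, a hypothetical log format with times in seconds.
    """
    per_mission = {}
    for mission, spawned, destroyed in events:
        # Time to neutralize one enemy vehicle.
        per_mission.setdefault(mission, []).append(destroyed - spawned)
    # One average per mission (scenario).
    return {m: mean(durations) for m, durations in per_mission.items()}

events = [
    (1, 100.0, 160.0),
    (1, 300.0, 390.0),
    (2, 50.0, 80.0),
]
result = mean_time_to_neutralize(events)
```

With these made-up events, mission 1 averages 75 s and mission 2 averages 30 s. Lower values indicate faster neutralization.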

Results

From the original set of 18 missions, one mission was removed because its data were inaccessible and two additional missions were removed because participants did not complete the questionnaires, leaving 15 missions for analysis.

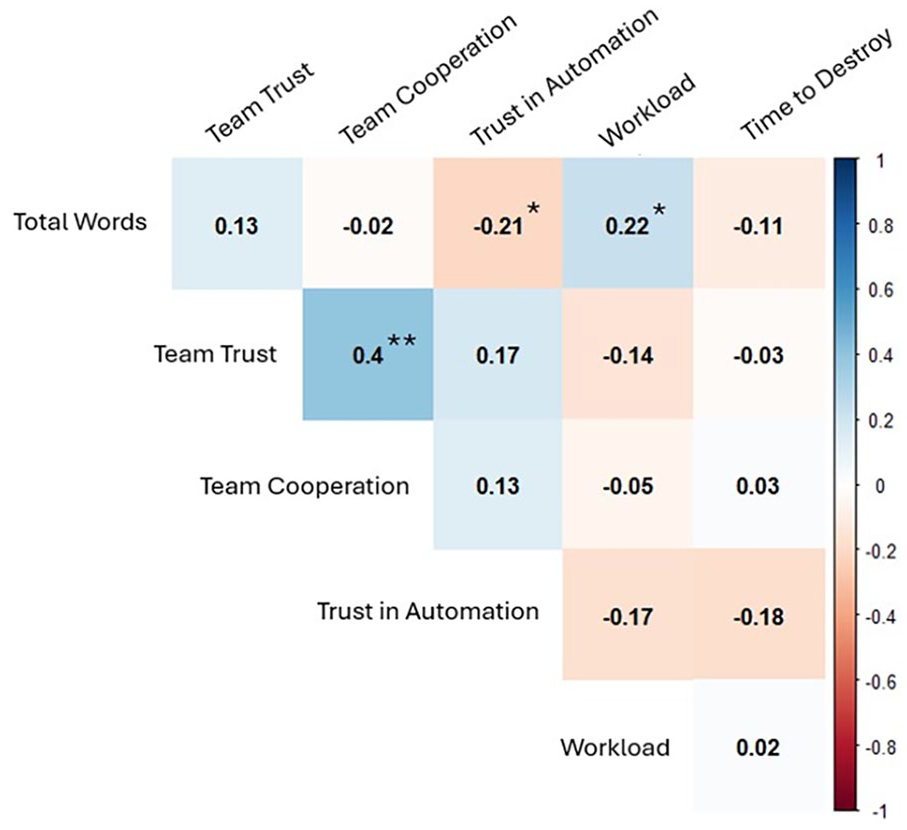

First, we calculated a repeated measures correlation matrix using the rmcorr library (Bakdash & Marusich, 2019) in R (R Core Team, 2021). Results can be found in Figure 1. Two significant relationships emerged. The total number of words spoken was positively associated with workload (p = .04) and negatively associated with trust in automation (p = .02). That is, greater numbers of words spoken were associated with higher ratings of workload and with lower ratings of trust in automation.
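For readers unfamiliar with repeated measures correlation, the sketch below illustrates the idea behind the coefficient. It is not the rmcorr package itself. Each participant's values are centered on that participant's own means, so stable between-participant differences drop out and only the shared within-participant association remains. The function name and toy data are hypothetical, and the sketch omits the p values and confidence intervals that rmcorr also reports.

```python
from collections import defaultdict
from math import sqrt

def rm_correlation(rows):
    """Within-participant correlation of x and y.

    `rows` is an iterable of (participant, x, y) tuples. Each participant's
    x and y are centered on that participant's means before pooling.
    """
    by_participant = defaultdict(list)
    for participant, x, y in rows:
        by_participant[participant].append((x, y))

    sxy = sxx = syy = 0.0
    for pairs in by_participant.values():
        mx = sum(x for x, _ in pairs) / len(pairs)
        my = sum(y for _, y in pairs) / len(pairs)
        for x, y in pairs:
            sxy += (x - mx) * (y - my)
            sxx += (x - mx) ** 2
            syy += (y - my) ** 2
    return sxy / sqrt(sxx * syy)

# Toy data: within each participant, y falls as x rises,
# even though participant p2 is higher on both variables overall.
rows = [
    ("p1", 1.0, 10.0), ("p1", 2.0, 8.0), ("p1", 3.0, 6.0),
    ("p2", 4.0, 20.0), ("p2", 5.0, 18.0), ("p2", 6.0, 16.0),
]
r = rm_correlation(rows)
```

In this toy example the within-participant relationship is perfectly negative, whereas a simple correlation pooled across all six rows would be positive. This is why a repeated measures approach suits data in which the same people are measured across many missions.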

Figure 1. Repeated measures correlation matrix between total words spoken and team perceptions and performance.

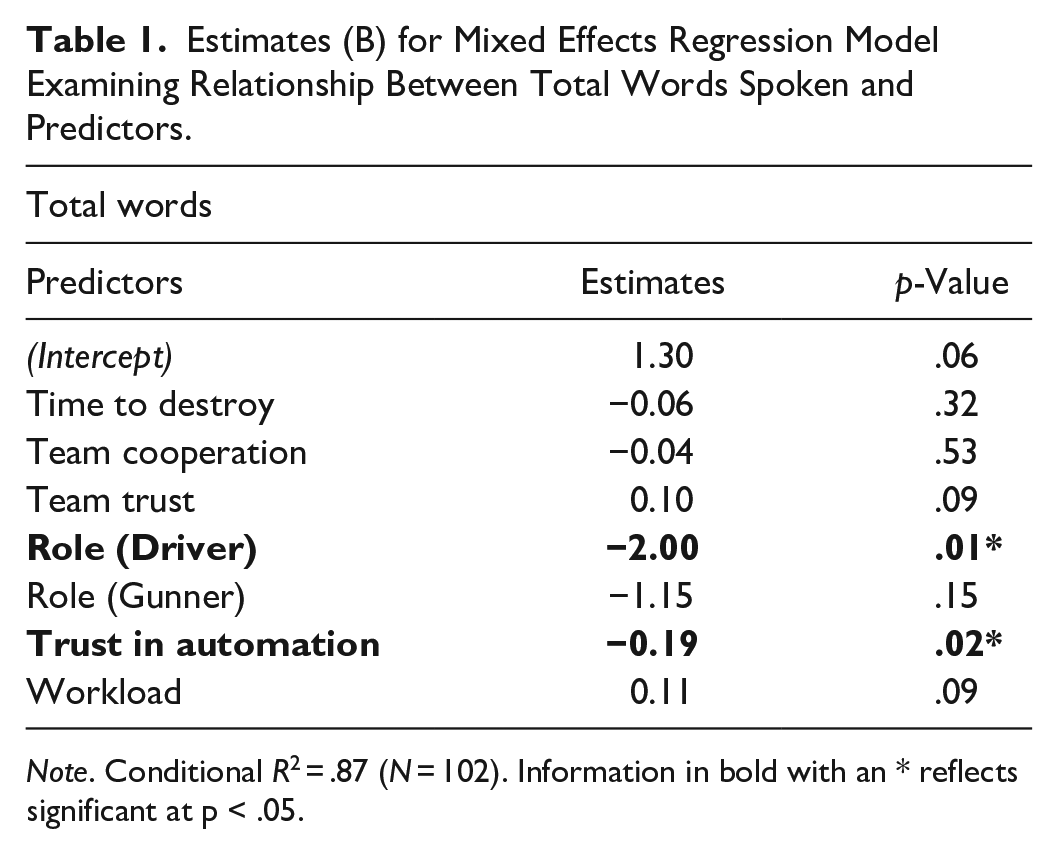

Next, we examined the degree to which an individual’s total words spoken throughout each mission was predicted by perceptions of trust in automation, team trust, team cooperation, perceived workload, and mission performance using a mixed-effects regression from the lme4 R library (Bates et al., 2015). Participant role (i.e., driver, gunner, or commander) and mission scenario were used as random intercepts to control for differences that arose due to assigned role (e.g., commanders spoke more since their role was to organize the entire team) and mission (e.g., participants may have spoken more as they became comfortable with the experiment), respectively. Additionally, all variables were scaled prior to running the regression model to standardize the resulting beta coefficients. Trust in automation significantly predicted speech dynamics (B = −0.19, p = .02), such that participants who had lower trust in automation spoke more than their teammates with higher trust in automation, even when controlling for the participant’s perception of their team trust, workload, their role on the team, and how well the team performed in the mission. Drivers tended to speak less than commanders (B = −2.00, p = .01). Team trust, workload, and mission performance were not significant predictors of speech dynamics. Results can be found in Table 1.
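The scaling step mentioned above can be illustrated with a short sketch. It assumes conventional z-scoring (subtract the mean, divide by the sample standard deviation), which is what R's `scale()` does by default. The text does not state which function was used, and the ratings below are made up.

```python
from statistics import mean, stdev

def standardize(values):
    """Return z-scores: (value - mean) / sample standard deviation."""
    m = mean(values)
    s = stdev(values)  # sample SD (n - 1 denominator)
    return [(v - m) / s for v in values]

# Hypothetical trust-in-automation ratings for three observations.
trust = [2.0, 4.0, 6.0]
z = standardize(trust)
```

If the outcome was scaled along with the predictors, as “all variables” suggests, a coefficient such as B = −0.19 can be read as a change of about 0.19 standard deviations in words spoken per one standard deviation of trust in automation, holding the other predictors constant.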

Table 1. Estimates (B) for Mixed Effects Regression Model Examining Relationship Between Total Words Spoken and Predictors.

Note. Conditional R² = .87 (N = 102). Information in bold with an * reflects significance at p < .05.

Discussion

This study examined the relationships among team attitudes, trust, team processes, and performance. A key strength of this study is its rare longitudinal, large-team design, which allowed us to examine how team dynamics unfolded over an extended period. In contrast, much prior research on human teaming has relied on cross-sectional designs. Another key strength is that the high-visual fidelity simulation was designed by experts to mirror authentic combat scenarios. This offered a level of ecological validity that is often lacking in laboratory studies while maintaining experimental control.

Human-agent teams are increasingly operating within complex socio-technical environments, where the interaction between trust and performance is mission critical. As automation becomes more prevalent, it is essential to examine the attitudinal, behavioral, and cognitive effects of these technologies on teams. We showed that trust in automation is related to a core component of teamwork: communication. A common finding and intuition in the team literature is that more team communication and more trust in automation are, separately, better for team performance (e.g., J. E. Mathieu et al., 2000; McNeese et al., 2021). However, our research highlights that the overall level of team communication is negatively associated with trust in automation. Additionally, neither trust in automation nor communication was correlated with mission performance. These findings serve as a call to researchers to examine higher-order relationships among teams that involve both human and non-human companions. Thus, this research is an initial step toward demonstrating the ways in which automation alters the team dynamics typically considered essential to team success. The present findings highlight the need to consider long-term and higher-level relationships beyond performance metrics in both applied and research settings.

Limitations

This study has two main limitations. First, while the sampled population reflects the community that will likely use the autonomous features described here (i.e., U.S. Army personnel), the sample may not be representative of other military branches or the public. Therefore, the results from this study may not generalize to other samples within or outside the U.S. military. Second, because the sample was small and relied on a single team performing repeated missions over time, results should be interpreted with caution, as they may reflect the unique dynamics of that team.

Future Directions

There remain a number of gaps in our knowledge around how the addition of autonomous systems impacts human team dynamics. A promising next step is to replicate the study outlined here with additional teams. This would allow us to examine the validity of these preliminary results. Additionally, the human-agent teaming literature would benefit from investigating the dynamic relationship between communication, team perceptions, and team processes under different circumstances (e.g., outside of the context of military combat missions) and with varying team configurations (e.g., teams without a defined leader). It is plausible that the relationship between trust in automation and team speech dynamics uncovered in this study is context dependent.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research reported herein was sponsored by the U.S. Army Research Laboratory and was accomplished under Cooperative Agreement W911NF-21-2-0279. The views and conclusions contained in this document are those of the authors and should not be interpreted as representing the official policies or positions, either expressed or implied, of the U.S. Army Research Laboratory or the U.S. Government. The U.S. Government is authorized to reproduce and distribute reprints for government purposes notwithstanding any copyright notation herein.