Abstract

Technological advances that seek to address future operational challenges abound. While advanced capabilities are being developed, there is an important space for human design considerations, including cognitive workload. One proposed solution to improve cognitive workload management is adaptive automation (AA). This research used a novel, model-based approach to assess the impacts of AA on cognitive workload. This assessment modeled the tasks in NASA’s Multi-attribute Task Battery-II (MATB-II) using the Improved Performance Research Integration Tool (IMPRINT). The effort sought to investigate the relationship of cognitive workload, situation awareness, and performance through three human-in-the-loop studies with 120 participants using MATB-II. The research also attempted to validate cognitive workload models from IMPRINT. The IMPRINT models were representative of the MATB-II tasks: the studies found statistically significant differences between workload conditions, and these differences mirrored the models’ predictions. The results demonstrated that AA system task models can be developed using IMPRINT to provide design recommendations.

Systems being developed for future implementation are prevalent in today’s evolving technological landscape. An example of this evolution is seen in future aviation programs. Current aviation development programs are seeking to modernize air assets to fly faster and farther than ever before (Ernst et al., 2020; Skelley, 2024). While these assets will incorporate numerous capabilities, some of which are not yet realized, there is an important space for human design considerations. One human operator design consideration worthy of investigation is cognitive workload. In predictive models, modern aviation systems raise operator workload values above accepted levels (Militello et al., 2019).

One solution that has sought to improve cognitive workload management is adaptive automation (AA), or the ability to dynamically change the level of automation in response to varying system demands (Sheridan, 2011). The implementation of AA seeks to keep operator cognitive workload within an optimal range by increasing or decreasing it as needed (Inagaki, 2003; Scerbo, 1996). While AA has the potential to achieve these ends, it can also introduce unintended negative consequences into a system (de Visser & Parasuraman, 2011; Kaber & Endsley, 2004; Smith & Baumann, 2020).

Cognitive workload, situation awareness (SA), and performance are three constructs that have been shown to be inextricably linked in various settings (Endsley, 2021; Ernst et al., 2020; Wickens, 2008). All three constructs can be impacted by the introduction of AA into a system. The current effort sought to investigate the relationships among these three constructs over the course of three studies using MATB-II, while also attempting to validate cognitive workload modeling predictions in IMPRINT. Predictive human performance modeling techniques such as IMPRINT do not provide particular measures to allow for specific workload predictions with AA.

The two research questions addressed were:

1. Can cognitive workload modeling inform design decisions in AA systems?

2. Can psychophysiological and subjective workload measures serve to validate operator cognitive workload model predictions for adaptive automation systems?

Methods

Participants

Three human-in-the-loop model validation studies were conducted with governing body IRB approval. A total of 133 participants volunteered for the three studies. After consideration for emergent behaviors, incomplete data sets, and other data collection issues, 40 participants were included in the analysis of each of the three studies. Each participant took part in only one study to mitigate learning effects. Studies 1 to 3 included 34 males and 6 females (mean age in years = 35.5, SD = 6.53), 33 males and 7 females (mean age in years = 36.0, SD = 8.34), and 29 males and 11 females (mean age in years = 34.2, SD = 4.90), respectively.

Materials

This research was conducted by modeling tasks from NASA’s MATB-II version 3.5 (Santiago-Espada et al., 2011). These tasks were modeled using IMPRINT, version 4.6.60.0 (Department of the Army, 2019). An MSI GE66 Raider gaming laptop computer running Windows 10 with an Intel 11th Generation Core i9 processor at 3.30 GHz and 32.0 GB RAM was used along with a 27-in. LCD flat panel display at a resolution of 1,400 × 900 pixels. The MATB-II simulation served to replicate aspects of a flight task and used a Logitech G-Extreme 3D Pro USB joystick, a standard USB keyboard, and an optical mouse. The four tasks included in MATB-II are system monitoring, tracking, communications, and resource management.

Objective cognitive workload measures were collected with three devices: functional near-infrared spectroscopy (fNIRS) using the NIRx NIRSport system with a preconfigured NIRx prefrontal cortex headband, pupil diameter using the Pupil Labs Core system, and heart rate variability (HRV) using a Polar H10 heart rate monitor. Data from these devices were collected synchronously using Lab Streaming Layer (LSL), version 1.14. The experimental setup is shown in Figure 1.

Figure 1. Participant station with MATB-II and physiological measurement devices connected.

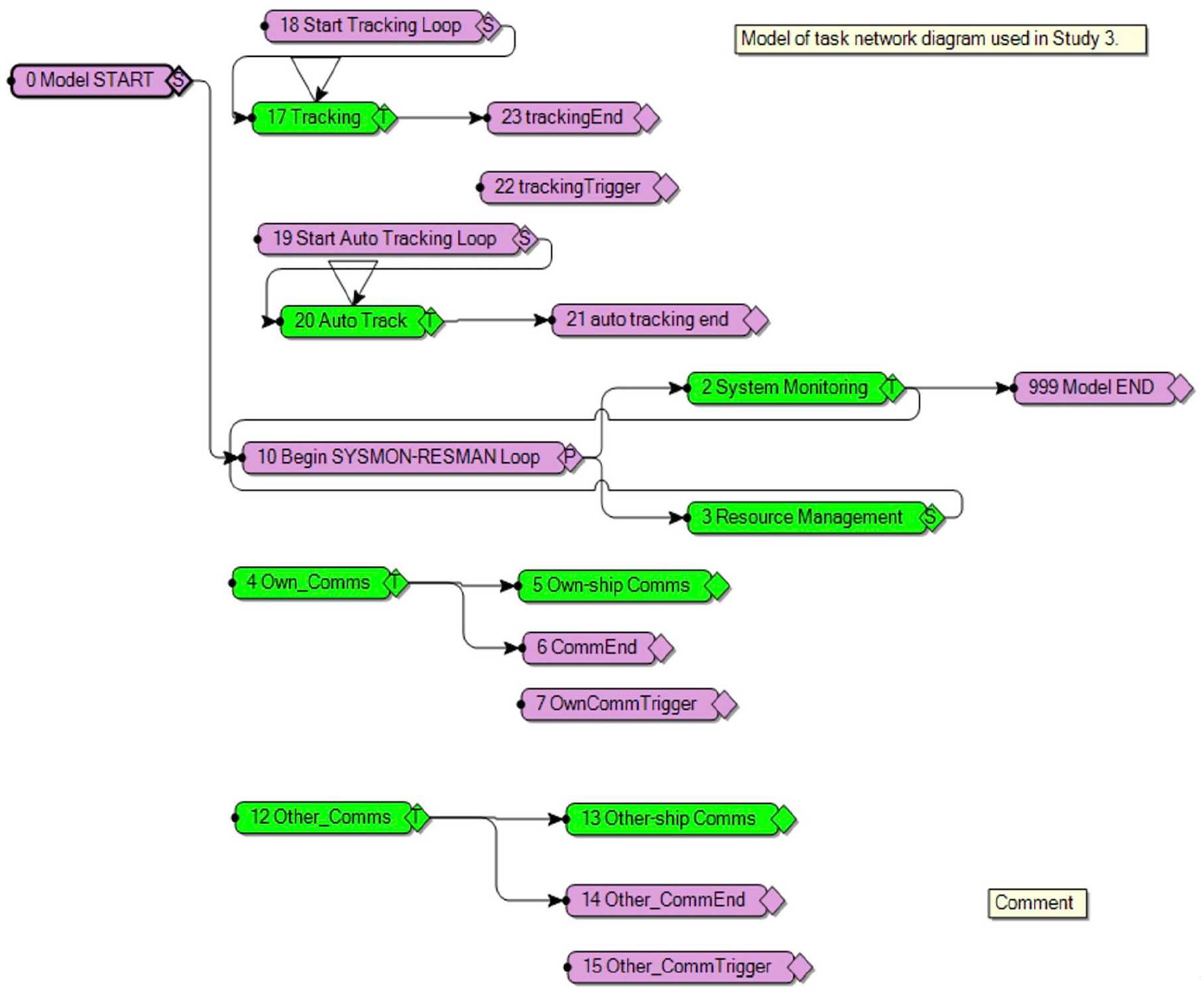

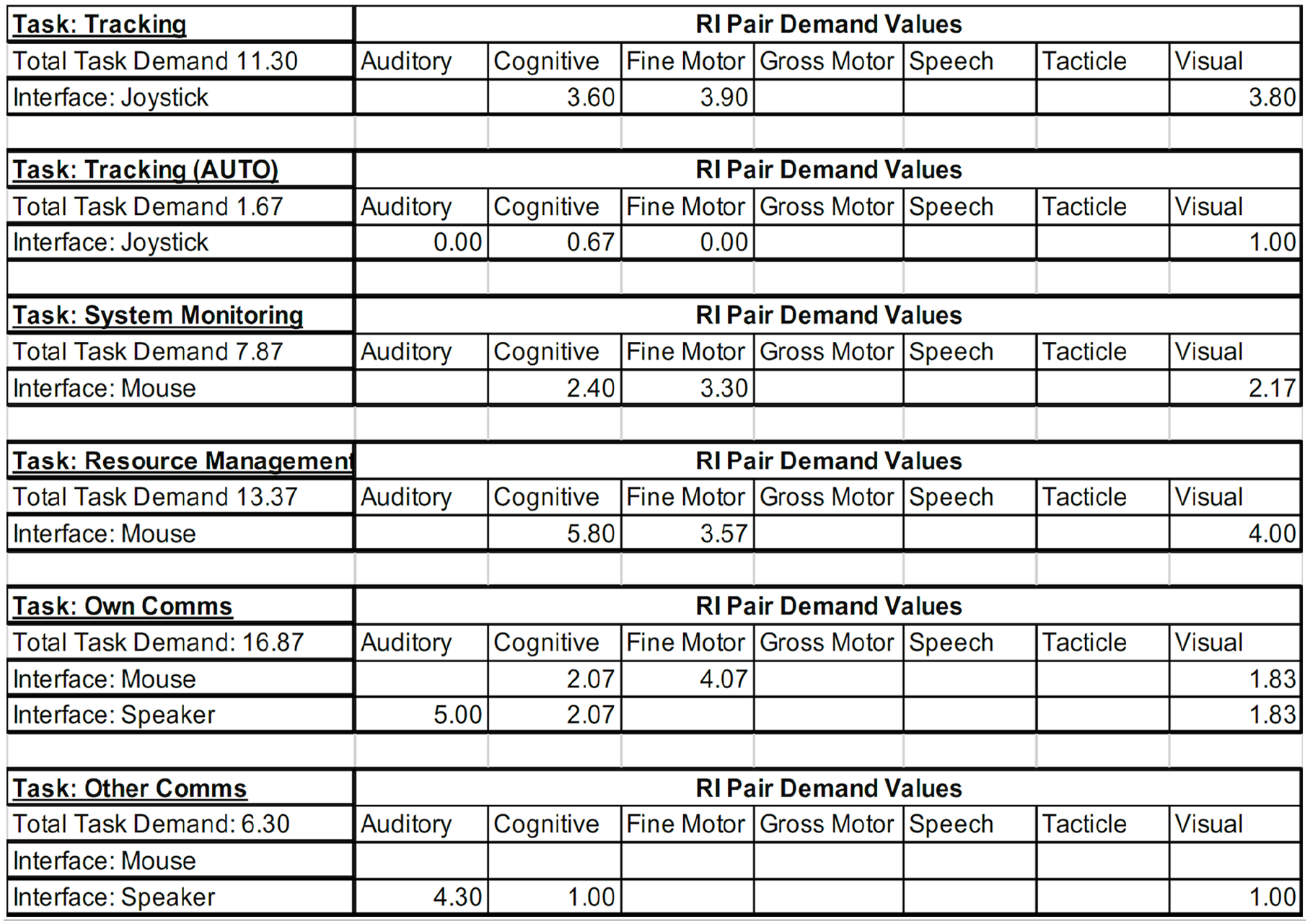
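To make the HRV measure described above concrete, the following minimal sketch computes the mean R-R interval, the quantity reported in the Results, from a series of R-R intervals. It also computes RMSSD, a common time-domain HRV statistic that is included here only for illustration. The function name and the sample values are hypothetical, and the source does not describe the processing pipeline that was actually applied.

```python
import math

def hrv_summary(rr_ms):
    """Return (mean R-R interval, RMSSD), both in ms, for a list of R-R intervals."""
    if len(rr_ms) < 2:
        raise ValueError("need at least two R-R intervals")
    mean_rr = sum(rr_ms) / len(rr_ms)
    # Successive differences between adjacent R-R intervals
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    rmssd = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    return mean_rr, rmssd

# Hypothetical R-R intervals in milliseconds (not study data)
sample_rr = [800, 810, 790, 805, 795]
mean_rr, rmssd = hrv_summary(sample_rr)
```

Longer mean R-R intervals correspond to a slower heart rate, which is consistent with the lower-workload pattern reported later in the paper.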

The researcher built cognitive workload prediction models in IMPRINT for every MATB-II scenario presented in the three studies. The researcher used the default workload demand values provided in IMPRINT to build and verify the initial workload models. Additional models were constructed after conducting cognitive walk-throughs with three MATB expert users. The expert users had each operated an adapted version of the MATB for at least 20 hours. This approach yielded models that followed the researcher-derived models in workload demand, suggesting that the validated metrics provided in IMPRINT serve as reliable anchors to adjust baseline workload predictions. Examples of the task network model and IMPRINT workload values used in the studies are depicted in Figures 2 and 3.

Figure 2. IMPRINT task network diagram.

Figure 3. Resource-interface (RI) pair demand values.
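The idea of building a workload prediction from per-task demand values can be illustrated with a simplified additive sketch. It sums the demand values of all tasks that are active at the same time. The task demand numbers, channel names, and function name are hypothetical and are not the values used in the studies. The sketch also omits any conflict terms between resource-interface pairs that a full IMPRINT model may apply.

```python
# Hypothetical per-task demand values by resource channel (not the study's values)
TASK_DEMANDS = {
    "system_monitoring":   {"visual": 4.0, "cognitive": 1.2, "psychomotor": 2.2},
    "tracking":            {"visual": 4.4, "cognitive": 1.0, "psychomotor": 2.6},
    "communications":      {"auditory": 4.3, "cognitive": 5.0, "psychomotor": 2.2},
    "resource_management": {"visual": 5.0, "cognitive": 6.8, "psychomotor": 2.2},
}

def total_workload(active_tasks, demands=TASK_DEMANDS):
    """Sum demand values over all channels of all concurrently active tasks."""
    return sum(sum(demands[task].values()) for task in active_tasks)

# Manual tracking: the operator performs the tracking task
manual = total_workload(["system_monitoring", "tracking", "communications"])
# Higher automation: tracking is handed to the automation and drops out
automated = total_workload(["system_monitoring", "communications"])
```

Under these assumptions, automating the tracking task lowers the predicted workload by exactly the tracking task's summed demand, which is the kind of difference the human-in-the-loop studies were designed to check.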

Procedures

The first study examined how objective and subjective surrogate cognitive workload measures related to cognitive demand. To investigate different experience levels, two training progressions were used to create a novice group and an experienced group of MATB-II operators. After completing their respective training progressions, all participants were instrumented with the physiological measurement devices to collect data during their trial runs. The trial runs consisted of a 10-min low workload condition and a 10-min high workload condition, with presentation order counterbalanced across participants. There was a break between the 10-min trial runs to recalibrate the eye tracker and change MATB-II scenarios.
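The counterbalanced presentation described above can be sketched as a simple alternating assignment. The source states only that presentation was counterbalanced, so the alternating scheme, the function name, and the participant numbering here are assumptions for illustration.

```python
def counterbalanced_orders(participant_ids, conditions=("low", "high")):
    """Alternate the condition order (AB, BA, AB, ...) across participants."""
    forward = tuple(conditions)
    backward = tuple(reversed(conditions))
    orders = {}
    for index, pid in enumerate(participant_ids):
        orders[pid] = forward if index % 2 == 0 else backward
    return orders

# Hypothetical participant numbering 1..40 for one study
orders = counterbalanced_orders(range(1, 41))
low_first = sum(1 for order in orders.values() if order[0] == "low")
```

With 40 participants, this assignment gives 20 participants each order, so that order effects are balanced across the two workload conditions.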

The results of Study 1 informed Study 2, where a higher level of automation was introduced in the tracking task of MATB-II. This task can be likened to flying an aircraft in manual or in autopilot. Study 2’s participants used the same procedures and experimental setup as those in Study 1, except for only completing one level of workload with two levels of automation.

Study 3 incorporated insights from Studies 1 and 2. First, fNIRS use was omitted in this study because no differences were found across experience groups or experimental conditions in Studies 1 and 2. There were also no significant differences in subjective or objective cognitive workload surrogate measures between novice and experienced participants in the first two studies. Therefore, the decision was made to proceed with only the experienced training progression in Study 3 to account for any learning effects and to focus more on the impacts of dynamically changing levels of automation on cognitive workload.

After completing their training progressions, participants completed a continuous 20-min trial with low and high workload levels and low and high levels of automation presented in 5-min, counterbalanced segments. This a priori triggering of adaptive automation followed the critical event strategy (Hilburn et al., 1997). Every minute during the trial, participants also rated their cognitive workload as a percentage of their maximum workload using the Continuous Subjective Workload Assessment Graph (CSWAG) approach (Shattuck & Miller, 2006).
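The Study 3 trial structure described above can be sketched as a fixed schedule of four 5-min segments, with per-minute ratings averaged within each segment. The cyclic rotation used for counterbalancing, the function names, and the rating values are all assumptions; the source says only that the segments were counterbalanced and that ratings were given every minute.

```python
from itertools import product

SEGMENT_MIN = 5
# (workload level, automation level) pairs: four conditions in total
CONDITIONS = list(product(("low", "high"), ("low", "high")))

def segment_order(participant_index):
    """Rotate the four conditions so each appears in each position equally often."""
    k = participant_index % len(CONDITIONS)
    return CONDITIONS[k:] + CONDITIONS[:k]

def condition_at(minute, order):
    """Return the (workload, automation) pair active during a given minute (0-19)."""
    if not 0 <= minute < SEGMENT_MIN * len(order):
        raise ValueError("minute outside the trial")
    return order[minute // SEGMENT_MIN]

def mean_rating_by_condition(ratings, order):
    """Average per-minute ratings (percent of maximum workload) within each segment."""
    means = {}
    for seg, cond in enumerate(order):
        chunk = ratings[seg * SEGMENT_MIN:(seg + 1) * SEGMENT_MIN]
        means[cond] = sum(chunk) / len(chunk)
    return means

order = segment_order(1)
# Hypothetical per-minute ratings for one participant (not study data)
ratings = [40] * 5 + [70] * 5 + [55] * 5 + [30] * 5
means = mean_rating_by_condition(ratings, order)
```

Because the automation changes are tied to the clock rather than to operator state, the schedule is known in advance, which matches the a priori triggering the paper describes.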

Results

After processing, the collected measures were analyzed using a mixed-effects model approach. In the first two studies, dependent measures for the low and high workload conditions were analyzed against the fixed effects of experience level and workload level. In Study 3, fixed effects included workload level and automation condition. Random effects were modeled using each participant with their experience level nested. All statistical tests were conducted using JMP version 16.0.0.
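One plausible way to write the mixed-effects structure described above for the first two studies is given below. The notation is an assumption, as is the inclusion of an interaction term, which the source does not mention. Here $y_{ijk}$ is a dependent measure for participant $i$ in experience group $j$ under workload level $k$, $\alpha_j$ and $\beta_k$ are the fixed effects, and $u_{i(j)}$ is the random effect of participant nested within experience level.

```latex
y_{ijk} = \mu + \alpha_j + \beta_k + (\alpha\beta)_{jk} + u_{i(j)} + \varepsilon_{ijk},
\qquad u_{i(j)} \sim \mathcal{N}(0, \sigma_u^2),
\quad \varepsilon_{ijk} \sim \mathcal{N}(0, \sigma^2)
```

For Study 3, the same form would apply with the fixed effects replaced by workload level and automation condition.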

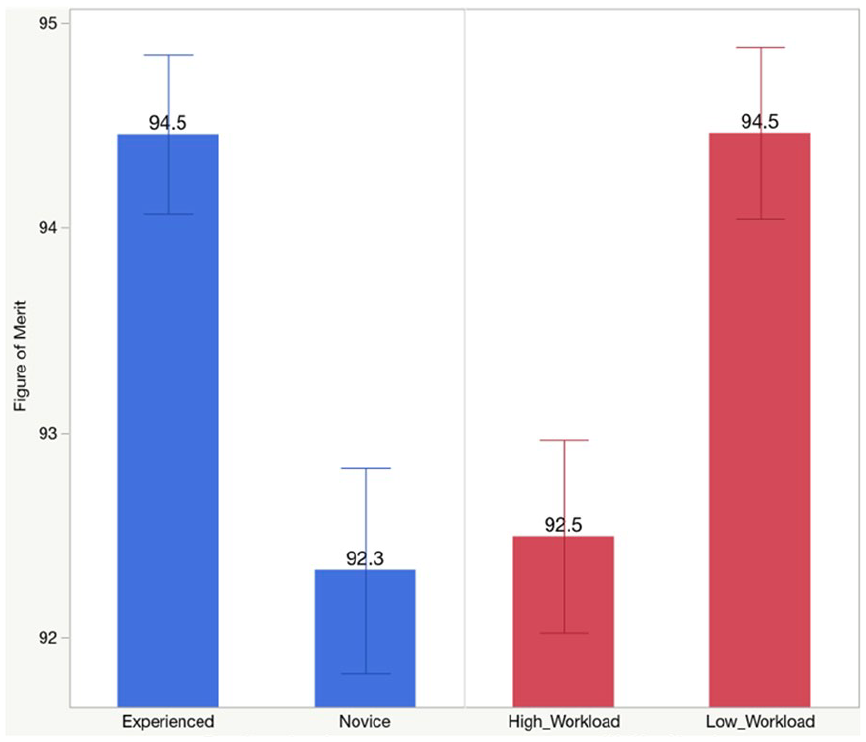

Results from the first study indicated that there were significant differences between workload conditions when assessed against mean R-R HRV intervals, pupil diameter, and CSWAG results (all p < .05). Performance data also supported differences in experience levels and workload conditions (p < .01) and are depicted in Figure 4.

Figure 4. Study 1 mean performance figure of merit versus experience level and workload level. Error bars denote the standard error.

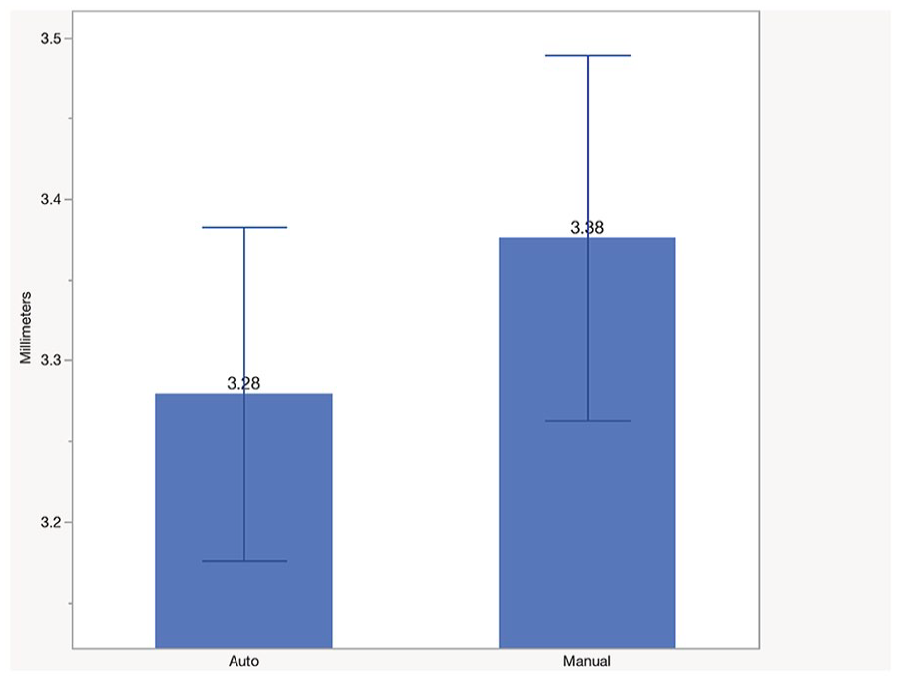

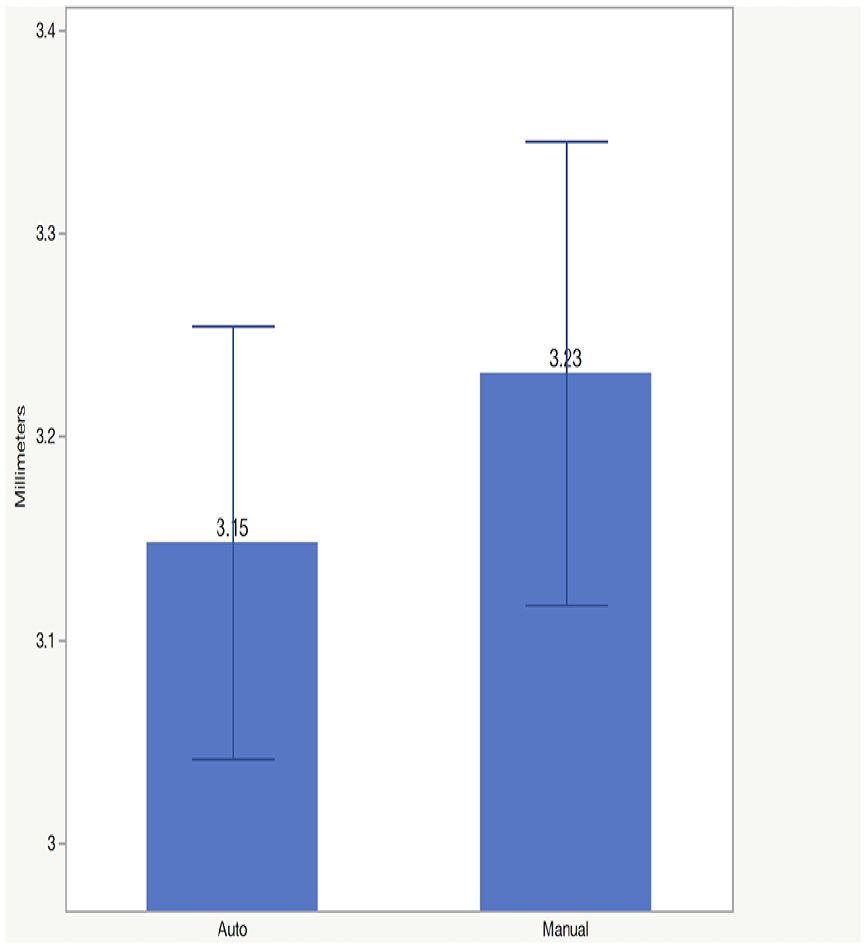

Data from Study 2 indicated increased MATB-II performance scores, increased HRV, and decreased pupil diameter during higher levels of automation. Figures 5 and 6 show mean pupil diameters during both levels of automation. Further, CSWAG ratings were lower in the lower workload and higher automation conditions (all p < .05). These results followed the same pattern as Study 1.

Figure 5. Study 2 mean left pupil diameter by tracking condition. Error bars denote the standard error.

Figure 6. Study 2 mean right pupil diameter by tracking condition. Error bars denote the standard error.

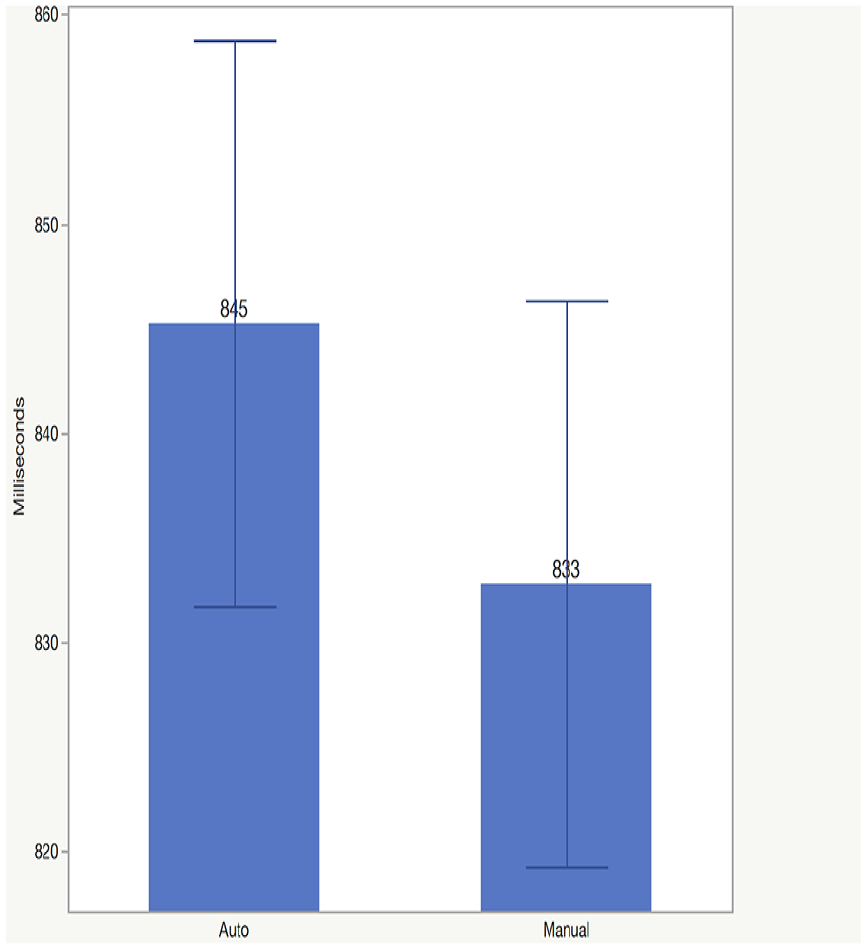

Study 3 results followed the patterns seen in the first two studies with respect to significant differences in performance, mean HRV, mean pupil diameter, and CSWAG percentages between workload and tracking conditions (all p < .05). Figure 7 depicts the differences in HRV R-R intervals in Study 3.

Figure 7. Study 3 mean HRV R-R intervals by tracking condition. Error bars denote the standard error.

Discussion

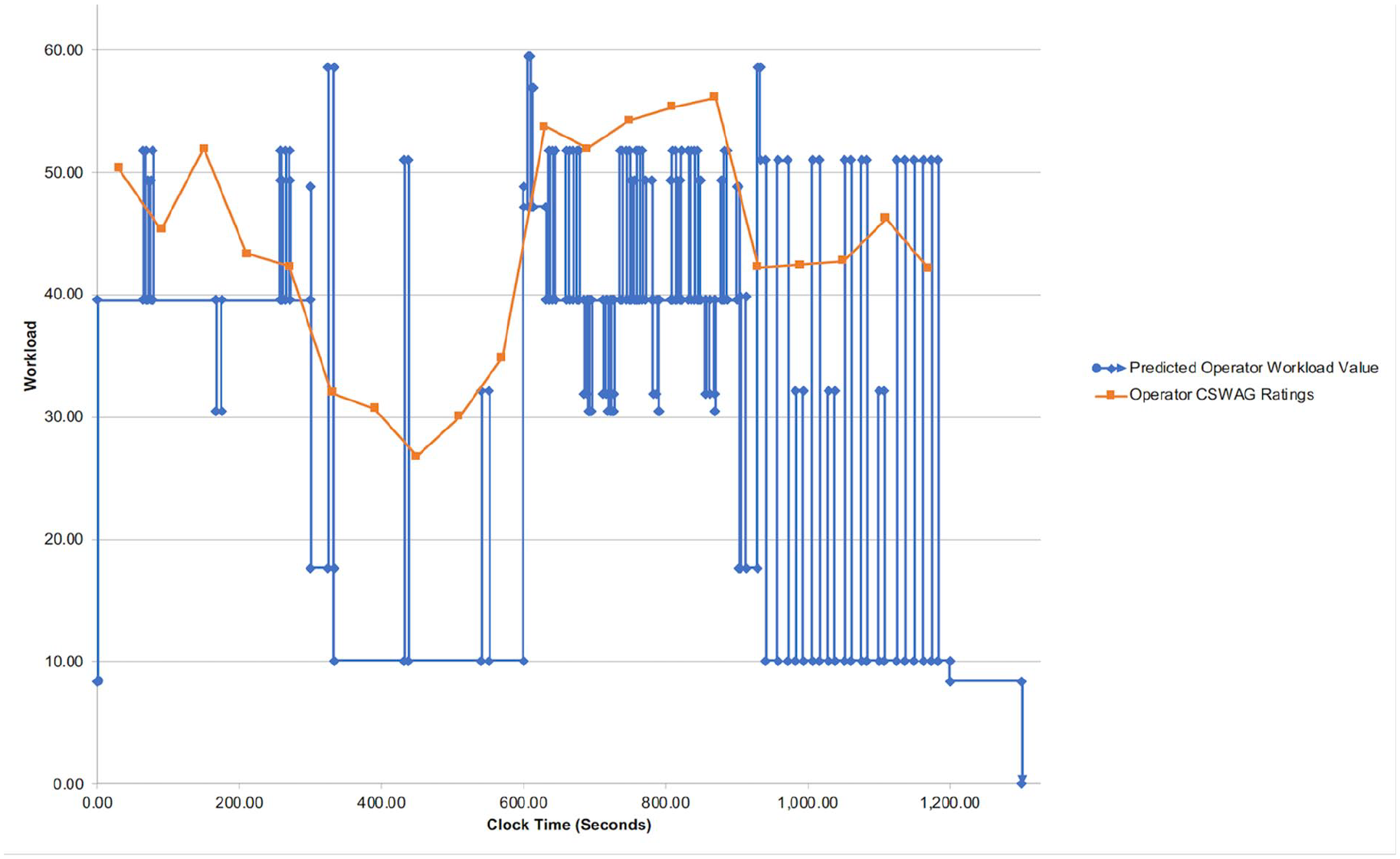

The models proved representative of the real-world target when the predicted workload values were assessed against the results of the experimental studies. Results in the higher workload conditions followed the associated IMPRINT predictions, as did results in the lower workload conditions, as evidenced by pupil diameter and HRV (lower workload resulted in smaller pupil diameters and greater HRV). Figure 8 shows Study 3’s IMPRINT model cognitive workload predictions with participant continuous subjective ratings included.

Figure 8. Study 3 IMPRINT prediction model with composite CSWAG values overlaid.
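One simple way to quantify how closely a predicted workload timeline tracks composite subjective ratings is a Pearson correlation between the two series. This is an illustrative sketch only: the source does not state that a correlation was computed, and the function name and both data series are hypothetical.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length numeric series."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("series must have equal length of at least two")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return sxy / math.sqrt(sxx * syy)

# Hypothetical model workload values and composite CSWAG percentages (not study data)
predicted = [20, 20, 45, 45, 30, 30]
rated = [35, 38, 62, 65, 48, 50]
r = pearson_r(predicted, rated)
```

A correlation captures whether the two series rise and fall together, not whether they share a scale, which suits a comparison between model workload units and percentage ratings.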

Additionally, task workload values in the model were adjusted in response to expert feedback, subjective cognitive workload ratings, and statistically significant differences in objective measures. The IMPRINT models showed utility by being able to incorporate multiple workload value updates. This research demonstrated that representative AA system task models can be developed using IMPRINT to provide design recommendations.

While the results are limited to the sampled population and controlled laboratory setting, the approach of pairing cognitive workload model predictions with human-in-the-loop validation studies provided insights into the impacts of AA on workload. These research efforts can help inform human performance modeling and system design considerations early in a system’s life cycle. While we develop technologies to address future operational environments, we should address human cognitive workload considerations in parallel with those efforts.

Footnotes

Disclaimer

The views expressed in this writing are those of the author and do not reflect the official policy or position of the Department of Defense or the U.S. Government.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded by the US Army DEVCOM Aviation and Missile Center Agency, Redstone Arsenal, Alabama, 35898-5000, JON RNK76, as part of the author’s doctoral research conducted at the Naval Postgraduate School in Monterey, CA. This research is Distribution A, approved for public release; distribution is unlimited.