Abstract

When Artificial Intelligence (AI) agents are incorporated into teams, they are expected to possess teaming capabilities beyond their advanced technological skills. Human-Autonomy Teams (HATs) consist of AI agents and humans working interdependently toward shared goals. Teams engage in processes including goal specification, planning, mission analysis, coordination, monitoring, and backup behaviors. Participation of AI agents in teamwork processes can elevate HAT effectiveness and enhance the involvement of the agents as teammates. This study explored three levels of support for teamwork processes from AI agents: passive, reactive, and proactive. The study was conducted in HiveMind, a cooperative gamified testbed. The proactive agent scored higher on several assessment metrics, including effective communication, interdependence, and teaming. Furthermore, participants reported positive perceptions of the agent’s proactive support and initiative-taking. The study’s findings suggest that an AI teammate’s level of participation in teamwork processes influences human teammates’ perceptions of, and teaming experiences with, their AI teammate.

Introduction

With the increased incorporation of Artificial Intelligence (AI) in a variety of domains, humans and AI are working in ever closer coordination. Human-Autonomy Teams (HATs) form from humans and AI agents working toward common goals while tackling interdependent tasks (Johnson et al., 2012). However, while AI is progressively expanding in its technical capabilities, additional characteristics need to be developed for AI agents to be considered and engaged with as teammates. Treating AI agents as teammates indicates that humans expect them to possess cognitive, behavioral, and affective teamwork competencies, such as anticipating teammates’ behaviors, coordinating effectively, and taking initiative (Zhang et al., 2021).

There is an expanding number of decisions when designing an AI agent teammate, including the level of autonomy (Parasuraman et al., 2000), transparency (Panganiban et al., 2020), explainability (Endsley, 2023), communication (Zhang et al., 2023), and etiquette (Dorneich et al., 2012; Flathmann et al., 2024; Miller, 2002) to name a few. However, when AI agents are incorporated as team members, they become a part of the teamwork processes cycle (Dorneich et al., 2023; O’Neill et al., 2023). Therefore, for AI agents to be involved as teammates, there needs to be continued exploration of their level of participation and support of these teamwork processes, such as participating in goal setting, mission analysis, planning, performance and systems monitoring, and backup behaviors. In a synthesis of HAT literature, O’Neill et al. (2022) suggested that while communication has been explored in teamwork processes, other aspects have received less attention in HAT, which limits the current understanding of what teamwork processes contribute to effective HATs.

While the AI agent’s capability to participate in teamwork processes can elevate HAT effectiveness, there are several levels at which the agent can participate. Previous work suggests that pushing information to other teammates is an effective trait in all-human teams, but this trait may be more limited in teams with agents. In particular, passive AI teammates have limited ability to anticipate when to share information (McNeese et al., 2018). Furthermore, proactive communication and supportive behaviors are desired AI teammate traits (Zhang et al., 2021), with proactive AI teammates leading to higher trust, satisfaction, and task performance than reactive AI teammates (Zhang et al., 2023). However, studies have primarily focused on proactivity in information sharing, which is only one teamwork process. Teamwork literature suggests that effective teammates engage in a variety of processes, including goal specification, mission analysis, planning, coordination, performance monitoring, systems monitoring, and backup behaviors (Marks et al., 2001; Rousseau et al., 2006; Salas et al., 2005). Therefore, given that proactivity is a growing area of interest in AI teammate design, this study investigates passive, reactive, and proactive participation in teamwork processes beyond information sharing.

This study aims to explore AI agent support of teamwork processes by designing an AI agent with three levels of participation in teamwork: passive (no participation, except if asked by the human), reactive (suggests participation if the human is silent), and proactive (participates in teamwork processes proactively regardless of human status). This design strategy has been explored in a cooperative gamified testbed called HiveMind. In HiveMind, two ants (one human and one AI agent called RoboAnt) have access to different views of the environment. The information gap in the testbed requires communication between teammates to solve the game, creating high interdependency. The AI agent in this study was instantiated through the Wizard of Oz technique.

This paper provides the initial assessment of the AI agent design with three levels of teamwork processes’ support. The study aimed to understand better how the AI agent’s support affects human teammates’ perceptions and experience with their AI teammate RoboAnt. This study explores how AI agents’ participation in teamwork processes impacts human teammates, creating a foundation for future studies aiming to design AI agents as teammates rather than tools and promote effective HAT teamwork competencies.

Testbed and Agent Design

The evaluation required the design of the HiveMind testbed and an AI agent, RoboAnt.

HiveMind Testbed

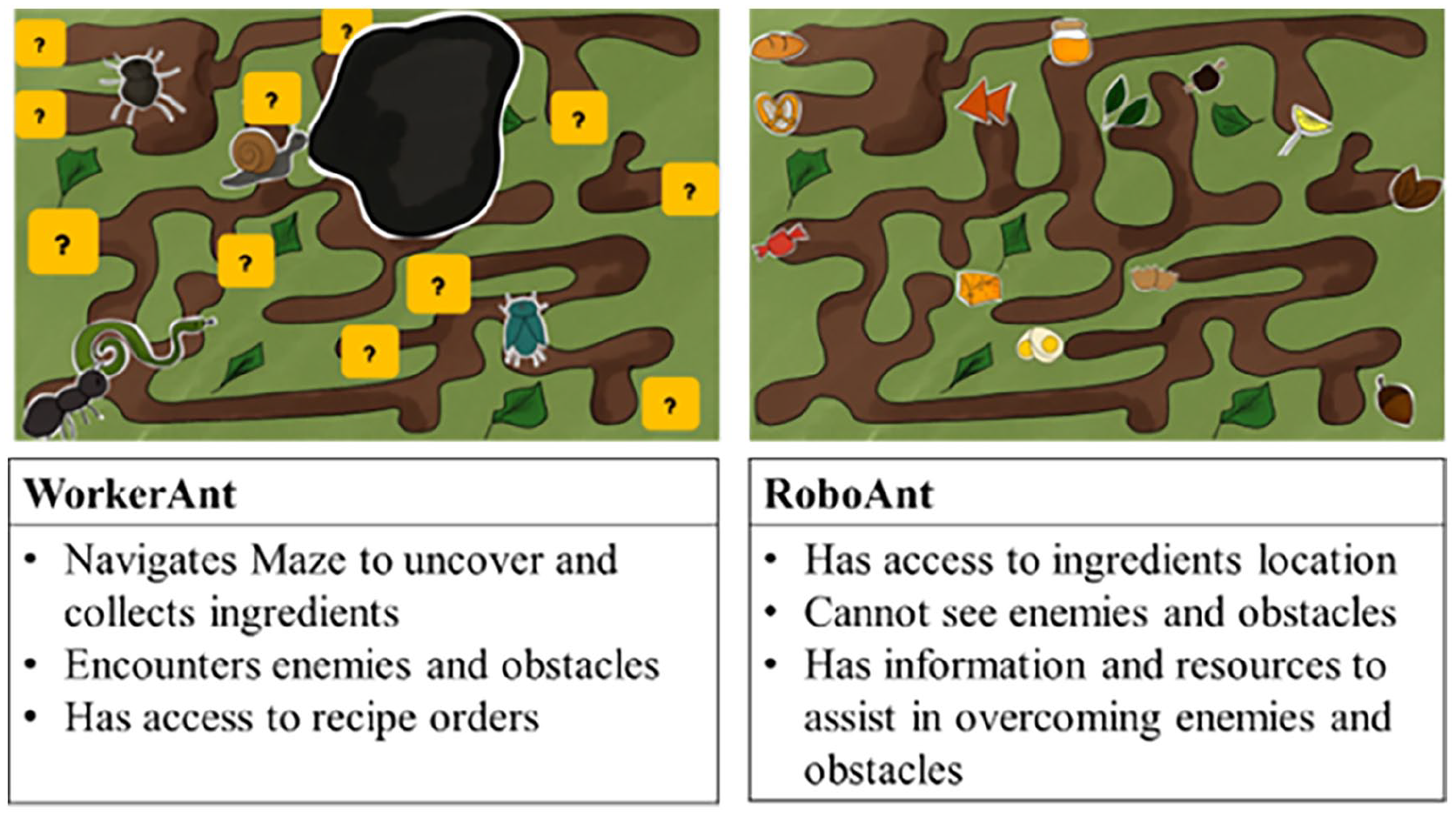

HiveMind is an asymmetric cooperative game testbed for HAT studies (Farah & Dorneich, 2022). HiveMind incorporates information asymmetry between participants, where two ants have access to two different views of the maze, each with different information. The team’s goal is to collect ingredients for a recipe in a specific order.

WorkerAnt: this role is assumed by the human participant. WorkerAnt cannot see the location of the ingredients (hidden with question marks) and will encounter obstacles without information on how to overcome them.

RoboAnt: RoboAnt, the AI teammate, has access to the second view of the maze, where it can see the location of the ingredients. RoboAnt cannot see the enemies and obstacles on the map. However, it has information and tools to help WorkerAnt overcome these challenges.

Figure 1 shows the two different environments in HiveMind. The testbed was designed to induce verbal teamwork behaviors between the human-agent team.

Figure 1. HiveMind asymmetric game view and player roles.

Due to the asymmetry, each player has a part of the information; therefore, the testbed design intends to motivate the team to verbalize their goal and analyze their environment. Furthermore, due to their complementary roles, WorkerAnt must navigate the maze and collect ingredients while facing enemies. RoboAnt provides the location of ingredients, tools, and hints to overcome the enemies. This requires coordination and information exchange between the two teammates. To be successful, the team must verbally provide directions, update location, communicate environmental changes, and update progress across the maze.

AI Agent RoboAnt

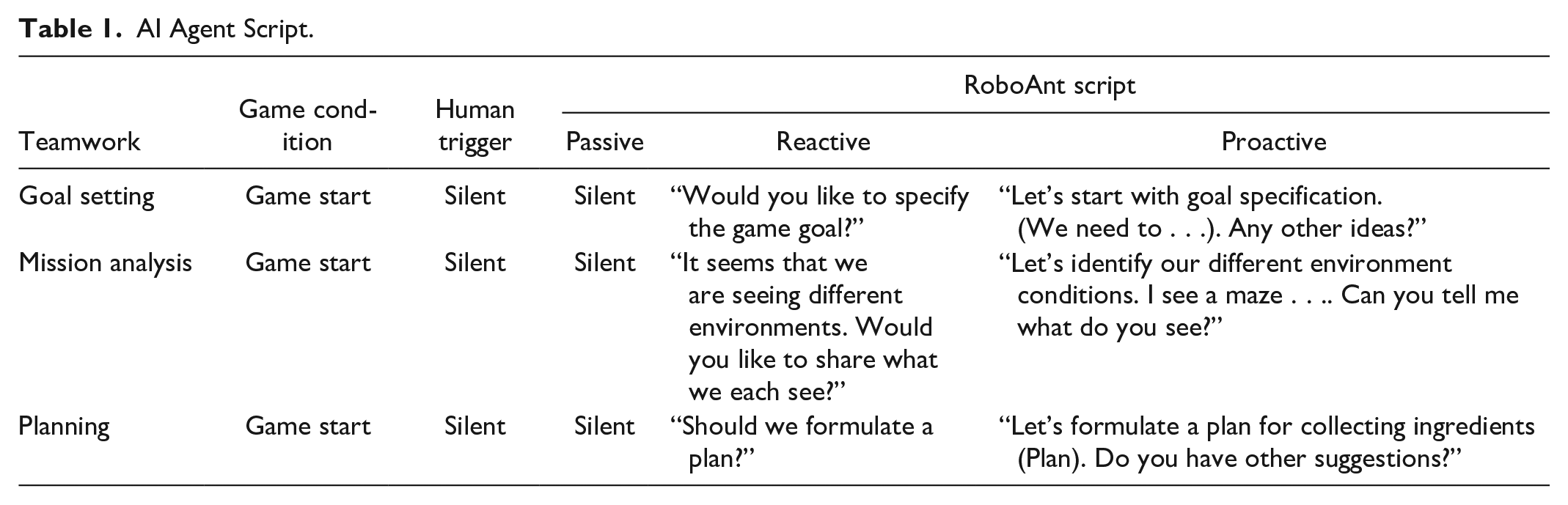

The AI agent RoboAnt was scripted to support teamwork processes based on the Preparation, Collaboration, Assessment, and Adjustment framework (Rousseau et al., 2006). The AI agent’s design framework includes seven teamwork processes: goal specification, mission analysis, planning, coordination, performance monitoring, systems monitoring, and backup behavior.

To script the agent, a list of all HiveMind game conditions was generated (e.g., game start, collecting recipe X, encountering enemy X). Every game condition was matched with a teamwork process for the agent to support (e.g., goal specification: game start). For every game condition, all human triggering events were listed (e.g., human is silent, human asks a question, human updates status). For every triggering event, the agent was scripted following the three levels of support: passive (no participation in teamwork), reactive (suggests participation if human is silent), and proactive (participates regardless of human status). Table 1 provides a summarized version of the agent design framework.

Table 1. AI Agent Script.
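The three levels of support can be read as a small decision rule over the level and the human triggering event. The following is a minimal sketch of that rule, not the script used in the study: the names `Support`, `Trigger`, and `agent_action` and the action labels are hypothetical, and the assumption that every level answers a direct question is inferred from the description of the passive level as "no participation, except if asked by the human."

```python
from enum import Enum


class Support(Enum):
    """The three levels of teamwork support described in the paper."""
    PASSIVE = "passive"
    REACTIVE = "reactive"
    PROACTIVE = "proactive"


class Trigger(Enum):
    """Human triggering events listed as examples in the paper."""
    SILENT = "human is silent"
    QUESTION = "human asks a question"
    STATUS_UPDATE = "human updates status"


def agent_action(level: Support, trigger: Trigger) -> str:
    """Return a hypothetical action label for one level/trigger pair."""
    # Assumption: every level answers when the human asks directly.
    if trigger is Trigger.QUESTION:
        return "answer"
    # Proactive: participates regardless of human status.
    if level is Support.PROACTIVE:
        return "participate"
    # Reactive: suggests participation only when the human is silent.
    if level is Support.REACTIVE and trigger is Trigger.SILENT:
        return "suggest"
    # Passive (and reactive when the human is not silent): no participation.
    return "none"
```

In a full script, this rule would be applied once per game condition (e.g., game start), with the wording of each utterance tied to the teamwork process matched to that condition.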

Method

Participants

Seventeen participants (seven females, ten males) were recruited. Four participants were 18 to 20 years old; two were 21 to 23; four were 24 to 26; two were 27 to 29; and five were 30 and above. Three participants reported playing games daily, one weekly, and five a couple of times a month. The rest reported playing a couple of times a year or identified as non-gamers.

Independent Variable

The independent variable in this study is the level of support for teamwork processes provided by the AI teammate RoboAnt. The independent variable has three levels: passive (no participation in teamwork), reactive (suggests participation if human is silent), and proactive (participates regardless of human status). Six participants were assigned to the passive condition, five to the reactive condition, and six to the proactive condition.

Dependent Variable

The dependent variables in this study are agent teammate likeness (Wynne & Lyons, 2019), perceived AI teammate performance (Crutchfield & Klamon, 2014), and responses to interview questions.

Agent Teammate Likeness: this scale includes six HAT teaming dimensions: Agentic Capability, Benevolence, Communication, Interdependence, Synchronization, and Teaming (Wynne & Lyons, 2019).

Perceived AI Teammate Performance: This questionnaire assesses the AI teammate’s performance (Crutchfield & Klamon, 2014) through nine questions about whether the AI teammate did a fair share of the work, made a meaningful contribution, communicated effectively, monitored progress, helped in planning and organization, completed tasks with minimal assistance from humans, had the skills and abilities to do a good job, respectfully voiced opposition, and was actively involved in solving problems.

Interview questions: Interview questions focused on teamwork and RoboAnt as a teammate. The questions assessed the experiences and perceptions of human teammates with RoboAnt. The Results section summarizes design implications extracted from the interviews.

Procedure

The study began with a consent form and a demographics survey. Prior video game experience was collected in a pre-game survey. Participants then watched an introductory video that introduced them to RoboAnt, established its general capabilities (verbal communication in spoken English; capable of teamwork activities), and gave an overview of the game setup (information asymmetry; different roles) without revealing details about the game components and detailed goals. This encouraged the human-agent team to communicate to establish goals and understand their different environments. After the video, the participant played HiveMind with RoboAnt, operated by a trained confederate. After completing HiveMind, participants filled in the HAT-oriented surveys and then took part in an interview. The procedure took 45 to 60 min.

Results

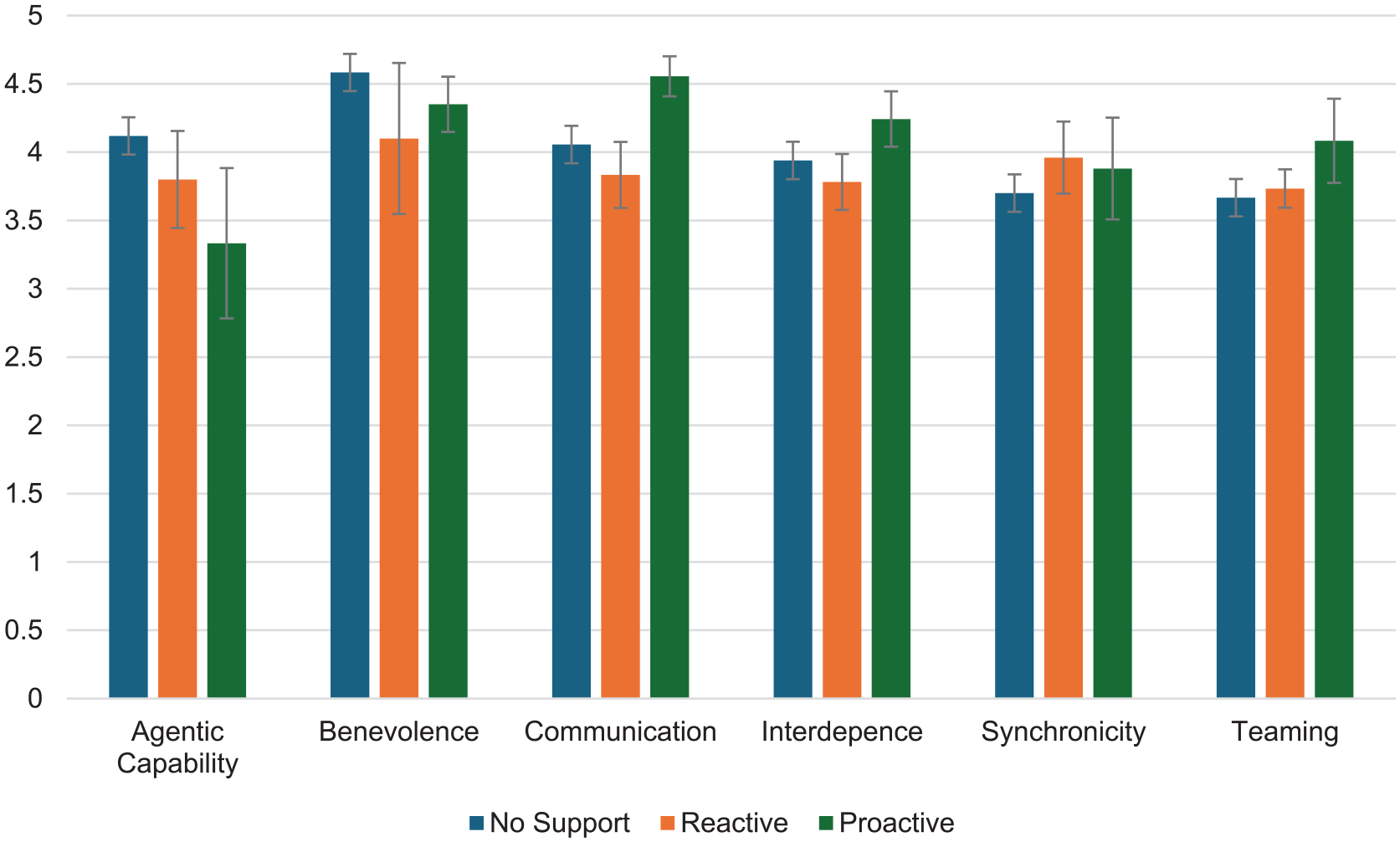

Agent Teammate Likeness

The proactive RoboAnt scored higher on average on communication (M = 4.5, SE = 0.1) compared to passive (M = 4.1, SE = 0.2) and reactive (M = 3.8, SE = 0.2). The proactive agent also scored higher on interdependence (M = 4.2, SE = 0.2) compared to passive (M = 3.9, SE = 0.3) and reactive (M = 3.8, SE = 0.2). Finally, the proactive agent showed an advantage in teaming (M = 4.1, SE = 0.3) compared to passive (M = 3.7, SE = 0.2) and reactive (M = 3.7, SE = 0.1). Figure 2 presents a bar chart of the average scores with standard errors.

Figure 2. Agent teammate likeness average scores.
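For readers unfamiliar with the M and SE notation, the sketch below shows one common way to compute a condition mean and its standard error (sample standard deviation divided by the square root of the group size). That this is the exact formula used in the study is an assumption, and the ratings in the usage note are hypothetical placeholders, not study data.

```python
import math
import statistics


def mean_and_se(scores):
    """Return (mean, standard error of the mean) for a list of ratings.

    Assumes SE = sample standard deviation / sqrt(n); needs at least two scores.
    """
    m = statistics.mean(scores)
    se = statistics.stdev(scores) / math.sqrt(len(scores))
    return m, se
```

For example, `mean_and_se([4, 5, 4, 5, 4, 5])` (six hypothetical ratings, matching the size of the proactive group) returns a mean of 4.5 and a standard error of roughly 0.22. With groups of five or six participants, standard errors of this size are expected, which is one reason the comparisons are reported descriptively.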

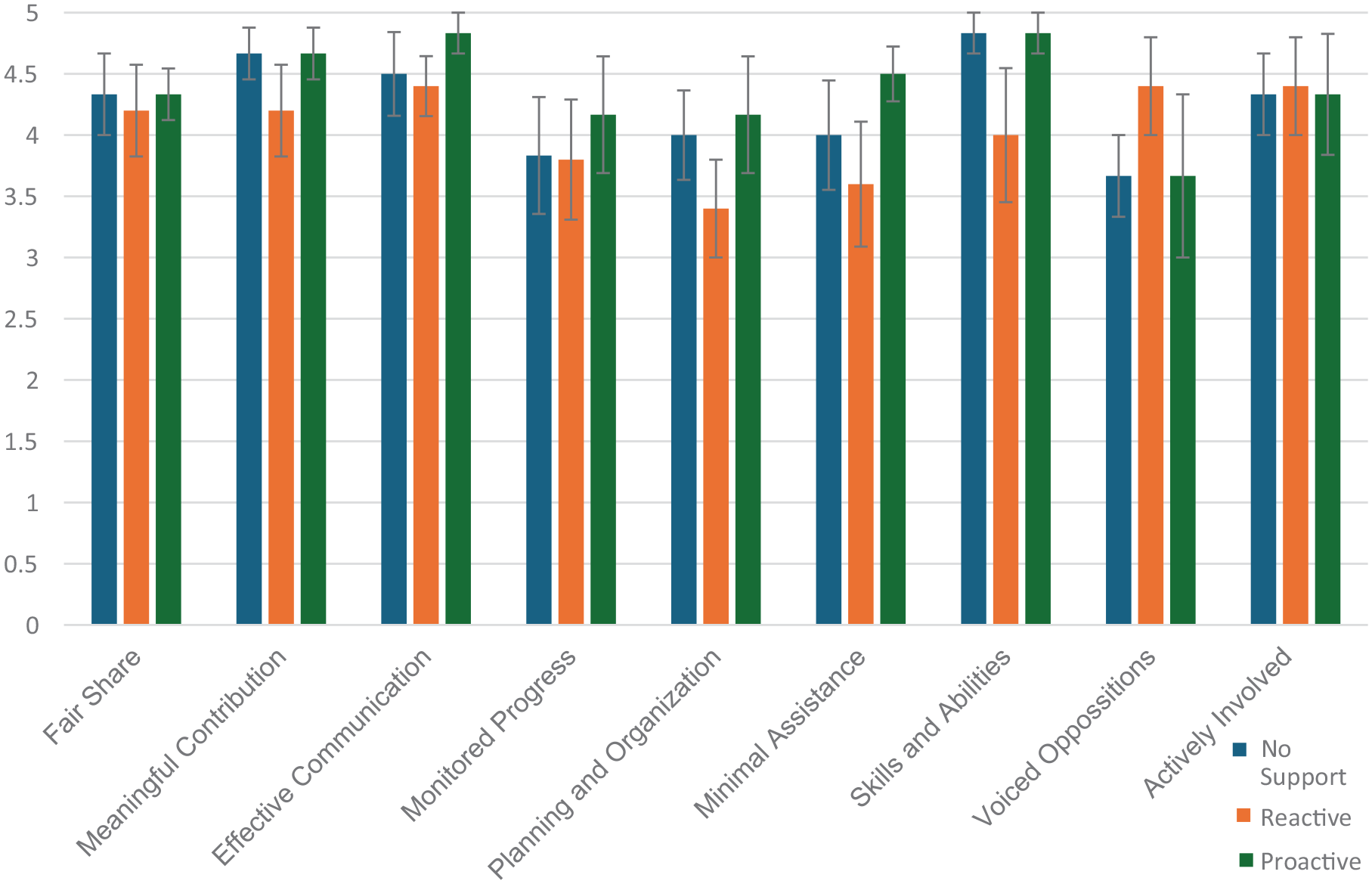

Perceived AI Teammate Performance

The proactive agent scored higher than the passive and reactive agents on four of the nine perceived performance measures. The proactive agent scored higher on effective communication (M = 4.8, SE = 0.2) compared to passive (M = 4.5, SE = 0.3) and reactive (M = 4.4, SE = 0.2). It scored higher on monitoring progress (M = 4.17, SE = 0.5) compared to passive (M = 4, SE = 0.4) and reactive (M = 3.4, SE = 0.4). It was rated higher on planning and organization (M = 4.17, SE = 0.5) compared to passive (M = 4, SE = 0.4) and reactive (M = 3.4, SE = 0.4). Finally, the proactive agent scored higher on completing tasks with minimal assistance (M = 4.5, SE = 0.2) compared to passive (M = 4, SE = 0.5) and reactive (M = 3.6, SE = 0.5). Figure 3 presents bar charts of the average scores with standard errors.

Figure 3. Perceived AI teammate performance scores.

Interview Design Implications

Teamwork Experience

When asked about their teamwork experience in HiveMind, four out of six participants in the passive condition highlighted that they did not initially understand how to engage in teamwork with RoboAnt, mentioning that: “At first I did not quite understand that I can reach out to RoboAnt”; “It took me time to understand”; “It would be helpful if the AI could help me”; “It was limited.” In comparison, two out of five participants in the reactive condition mentioned involvement in teamwork: “I felt like there was a lot of teamwork. We communicated effectively”; “It was nice. Like having a teammate in the group.” Similarly, in the proactive condition, four out of six participants highlighted teamwork aspects like: “I feel connected with it”; “I find it good that RoboAnt was interacting with me. Like how to distract enemies, how to play the game and giving me the hints and directions; There was a prompt feedback. I felt like playing with another human being”; “I feel I couldn’t do the job without the involvement of the AI, so it was super useful.”

A common aspect across the three conditions was participants’ initial reluctance to engage with RoboAnt as a teammate. Eight participants highlighted this, for example: “After 15 minutes I did not have a choice and started using RoboAnt’s help” (Passive); “Initially, it took me time. Afterwards I was pretty much comfortable, and it became easier” (Passive); “Initially, I wasn’t sure if it was going to take my words since it is an AI, but I tried to communicate” (Proactive); “I was not sure if I should expect any answers from it or not” (Reactive). This observation indicates that AI teammates are a novel addition for human teammates. They therefore challenged participants to adapt their teamwork processes and form a mental model of the AI agent’s taskwork and teamwork capabilities (Harris-Watson et al., 2023).

Teamwork Strategies and Behaviors

Participants in different conditions reported experiencing teamwork with RoboAnt at different levels. In the passive condition, four out of six participants highlighted that they tended to explore the maze by themselves first instead of relying on teamwork: “Within every decision I would trust myself first and then RoboAnt”; “By the end I realized that I should have asked more questions if I did not know what to do, instead of try and loose points”; “Midway through the process, I knew how the ant was communicating with me, that’s when I got better at the game with the help of ant.” In the reactive condition, three out of five participants indicated a two-way communication process with RoboAnt: “We used directional strategies and anchor points”; “I told the ant what I needed to complete my tasks and if I ran into an obstacle, they would help me understand how to overcome it.” In the proactive condition, all six participants highlighted RoboAnt’s proactive support, such as: “From the beginning it told me where it was and what it was seeing. When I got stuck . . . [RoboAnt] reminded me of some information which was helpful”; “It was actively listening and communicating with me”; “RoboAnt is very supportive. It asked me about my location in the game and it told me how to overcome obstacles and how to get it done.”

Teamwork Adaptability

Since participants only played HiveMind once, they were asked how they would approach their teamwork if they were to play another round. Across the conditions, there was a consensus that participants would apply more teamwork with RoboAnt early on. Participants mentioned that: “I would first talk to her on what I was trying to do and what were the ideas that the ant has”; “I would communicate first before making any movement. To make sure we got the plan first”; “Now that I interacted with the system, I gained some adaptability. I think I would be more adapted to it and I understand how RoboAnt communicates with me”; “I would establish a strategy and maybe more social connection”; “I would be more open to listening. When the AI helped me, I understood they had a better understanding, and I was more open.” This willingness to apply more teamwork with RoboAnt indicates that human teammates need some level of familiarization with AI teammates to establish a mental model.

Discussion

The initial assessment of the design strategies suggests that the proactive RoboAnt had advantages in several metrics of the teammate likeness scale, specifically in communication, teaming, and interdependence. Participants perceived the proactive RoboAnt as more effective in communication, monitoring, and planning and organization, and as better able to complete tasks with minimal human assistance. Furthermore, design insights from interviews suggest that participants perceived the proactive agent as a teammate that was active in listening and communicating, and capable of providing backup and monitoring when needed.

The proactive AI agent was designed to proactively establish the game goal, propose a plan, and engage in mission analysis with its human teammate to form an understanding of the asymmetric environments. This proactiveness could have facilitated teamwork situation awareness, which includes team goals, team roles, strategies and plans, and the task status of teammates (Endsley, 2023). The agent proactively supported all these teamwork processes, which is reflected in the higher scores on several metrics. Additionally, the proactive agent provided backup behavior and monitoring by asking: “have you encountered any enemies or obstacles?”; “would you like me to re-provide directions?” These supportive behaviors are desired traits in AI teammates (Zhang et al., 2021) and were positively described in the interviews.

Conclusion

This study assessed various AI agent design strategies based on the agent’s level of support for teamwork processes such as goal specification, mission analysis, planning, and coordination. An initial exploratory assessment aimed to answer how the AI agent’s teamwork support impacted human teammates’ perception and experience with the AI. The proactive AI agent scored higher in metrics rating teaming and communication than a passive or reactive interaction strategy. Participants highlighted the proactive agent’s supportive behaviors and active communication.

Proactivity has been proposed as a desired AI teammate trait (McNeese et al., 2018; Zhang et al., 2021); however, it has been mostly explored in information sharing. This work investigated three levels of AI teammate participation (passive, reactive, and proactive) across several teamwork processes that have been observed in the literature to promote effective teamwork (Rousseau et al., 2006). This work contributes to a better understanding of AI agent design strategies that could promote the perception of agents as teammates. Findings suggest that the agent’s level of participation in teamwork processes influences human teammates’ perceptions and experiences, which provides a foundation for future work. Future work aims to assess the influence of the agent design on other teaming dependent variables, such as performance, observable teamwork behaviors, and team viability.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded in part by the Game2Work research program as part of the Iowa State University Presidential Interdisciplinary Research Initiative.