Abstract

Many factors that influence Human Autonomy Team (HAT) performance have been studied. However, how HATs manage team errors is not yet understood. Using previously collected data, this paper explores how HATs manage team errors, specifically after automation errors and after receiving different forms of team training. Three-member teams of two humans and one AI teammate completed reconnaissance tasks using Remotely Piloted Aircraft Systems (RPAS). The findings indicate that training methods may influence the effectiveness of team error management, and that earlier detection and communication of errors do not necessarily mean a more efficient error management process. Future research is needed to better understand this process within HATs.

Introduction

As autonomous technologies have advanced and become more widespread in human-machine interaction (e.g., autonomous vehicles, drones), Human Autonomy Teams (HATs) have become prevalent. In a HAT, one or more humans collaborate toward a common goal with autonomous agents, which are computer entities possessing varying degrees of self-governance (McNeese et al., 2018; O’Neill et al., 2022). Many factors that affect HAT performance, such as communication and trust, have been studied (Bogg et al., 2021; McNeese et al., 2021). However, there is limited research on how HATs handle errors made by automated systems and on the factors that affect their error management processes.

Within HATs, automated operation systems can make different errors, which affects their reliability (Chen et al., 2021). The impact of these automation errors on users’ performance varies. Different types of errors can lead users to make improper decisions and decrease performance, and even minor failures can have a negative impact (Chen et al., 2021; Guznov et al., 2016; Mosier & Skitka, 1999). Dong and Li (2024) studied automation function and malfunction in 2-person teams and found that automation malfunctions impair elements such as situation awareness and trust, as well as team performance, in different ways. How automation errors are communicated also plays a vital role in user performance (Chen et al., 2021).

To mitigate the consequences of automation errors, it is necessary to understand how HATs manage them. In this paper, error management refers to the approach that separates the occurrence of errors from their outcomes, as elaborated by Horvath et al. (2023), citing Frese et al. (1991). When automation is involved, error management is generally considered to consist of detection, explanation, and correction. Detection entails noticing that an error has occurred or may occur soon; explanation is the process of analyzing and grasping the nature of the error; and correction includes the measures taken either to mitigate the error’s impact or to prevent similar issues from arising in subsequent situations (McBride et al., 2014).

Regarding HATs, we should consider error management in the context of team errors. Team errors, or errors made in team processes (Reason, 1990, p. 9), include individual and shared errors. Individual errors are made by individual teammates. Shared errors are errors distributed across teammates regardless of whether direct communication is engaged (Sasou & Reason, 1999). Sasou and Reason’s framework of team error, including failure to detect, indicate, and correct individual errors and shared errors, reflects the “detection, explanation, and correction” components in error management when automation is involved.

Researchers have identified many helpful techniques to prevent human errors, and training has been one of the most essential (Van Cott, 2018). Some training methods have demonstrated better outcomes within HATs in the face of different types of automation and autonomy failures, as measured using traditional performance and efficiency metrics (Johnson et al., 2023) or tools from dynamic systems (Gorman et al., 2010a).

Interactive Team Cognition Theory views team cognition as a changing activity that should be studied at the team level rather than as a set of static properties of individual cognition (Cooke et al., 2013). The theory also underscores the context of the team’s task environment. Because error management and team cognition are both dynamic, Interactive Team Cognition Theory and team coordination dynamics (Gorman et al., 2010b; Gorman & Wiltshire, 2024) can help characterize the interactions among team members, team cognition, and performance during the error management process.

This study aimed to better understand how Human Autonomy Teams manage automation errors and how training influences this process. Interactive Team Cognition Theory and team coordination dynamics suggest that effective communication should be the key element of a successful team-based error management process. Effective communication has been found to be important for managing errors in different types of teams, especially medical teams (Brown, 2004; Green et al., 2018), and for HATs overcoming failures (Harrison et al., 2024). We hypothesize that when facing automation errors, teams that initiate communication about the errors faster (detection of the error as a team) should reach error correction more effectively because their communication is more effective. Thus, teams receiving training that focuses on effective communication and better coordination should be more effective at error explanation and error correction.

Methods

This project utilized data from a previously conducted research project (Johnson et al., 2023). The experiment was conducted in a simulated task environment designed for team reconnaissance (CERTT-RPAS-STE; Cooke & Shope, 2004). The three task roles (navigator, photographer, and pilot) were interdependent and required communication to complete the missions. The navigator’s tasks were to plan the most effective and safest route for the Remotely Piloted Aircraft (RPA) and to provide target waypoint information to the pilot and photographer. The pilot’s responsibility was to fly the RPA, considering the constraints provided by the navigator and the airspeed and altitude requested by the photographer. The photographer’s role required communication with the navigator and negotiation with the pilot in order to take a good-quality picture of the target. Each team role had a station containing three monitors, one keyboard, and one mouse.

Sixty participants, forming 30 teams, were recruited from a major university in the southwestern United States. For each team, two participants were randomly assigned to the navigator and photographer roles, and one research confederate served as the pilot. Upon arrival, teams were evenly distributed across three training conditions: control training, trust calibration training, and coordination training (Johnson et al., 2023). Each team completed a 7-hr experimental session consisting of multiple missions. Participants were compensated $10 per hour.

The Wizard of Oz (WoZ) paradigm (Kelley, 1984) was employed: the pilot was controlled by a confederate following pre-written scripts, so participants were unaware of the confederate and believed they were interacting with an autonomous agent. All communication was text-based and was recorded through the chat message system built into the CERTT-RPAS-STE.

Participants in each training condition underwent a two-part training process: an instructional slide presentation followed by a hands-on training mission. The control training provided essential task-related knowledge and skills through both the slides and the hands-on training mission. During the slide presentation, participants learned that their pilot teammate was a program, referred to as a “synthetic teammate.” They were instructed to use standardized communication when interacting with this agent teammate and to anticipate certain limitations in its language processing abilities. Trust calibration training covered the information provided in control training and additionally aimed to calibrate participants’ trust in the agent teammate (pilot): the slide training informed them that the agent can make mistakes, and participants experienced delays during the hands-on training mission. Coordination training also contained the content of control training. Additionally, it focused on refining interpersonal communication and collective responses to system malfunctions. This was achieved by exposing team members to the information available to each teammate while actively promoting information exchange among team members. During the training mission in this condition, the agent requested essential information and followed up if it was not provided in a timely manner, with a shorter tolerance for delays in sending or requesting essential information (Johnson et al., 2023).

Participants completed five missions. The first was a control mission without any failures, and the other four each included different types of failures. The first failure each team encountered after training was a 300-s Photographer Data Failure during the second mission. During this failure, the photographer lost access to flight information, the display of waypoint information, and RPA status for 300 s to simulate an automation error within a team context. The agent teammate (pilot), the navigator’s displays, and the communication system remained unaffected. To overcome the failure, teammates had to communicate the necessary information, read from a different team member’s station, to the photographer, who then had to take a good picture based on the shared information. Each team was exposed to other types of failures in later missions. However, because the focus here is on automation error, the current study examined only this first failure in Mission 2.

Data Analysis

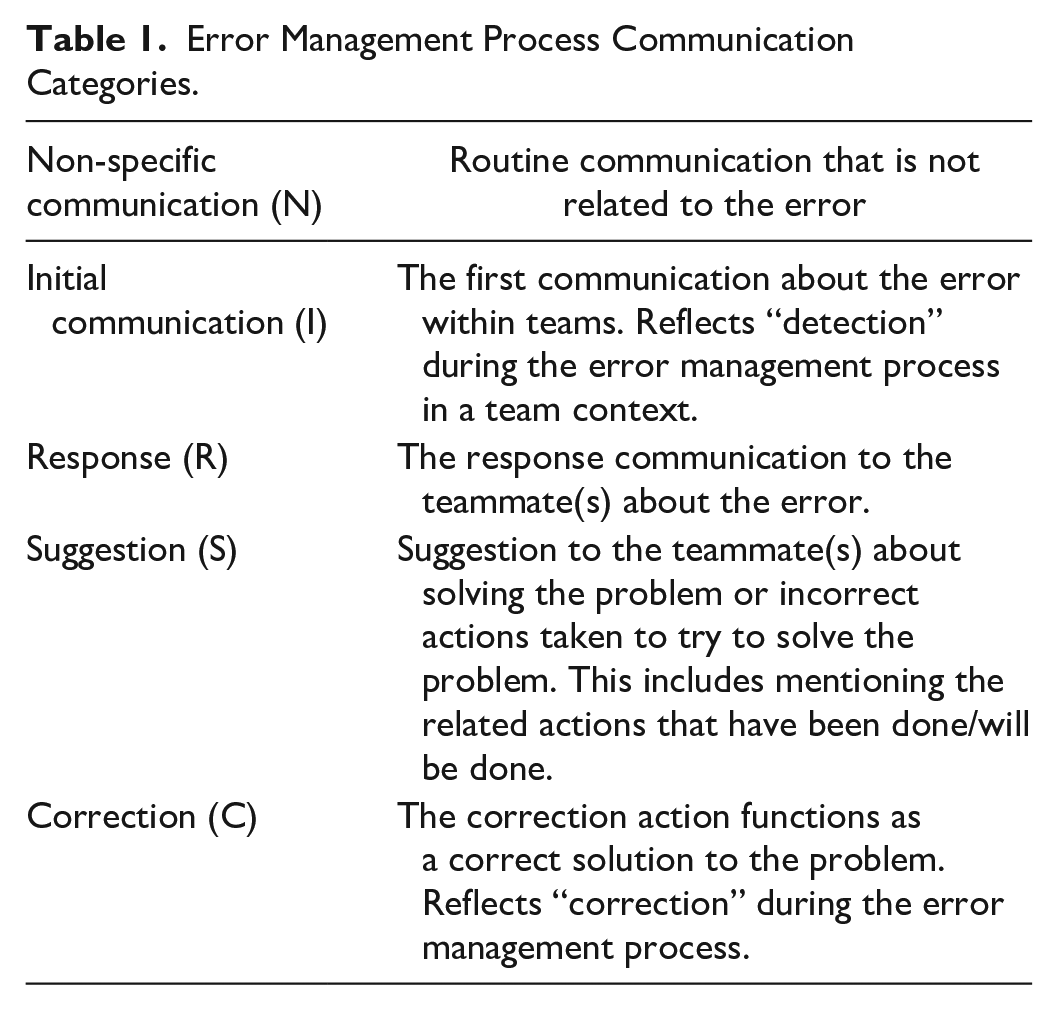

In line with the central concept of Interactive Team Cognition (Cooke et al., 2013; Gorman et al., 2017), we analyzed error management at the level of the team rather than at the level of its elements (e.g., individual team members). Inspired by Sasou and Reason’s (1999) framework on team error, we developed a behavioral coding scheme to capture each team’s detection and correction of the error through text communication during the automation error. Each chat message was coded as at least one of the following stages of the team error management process: Non-specific (routine) communication; Initial communication; or Correction, as explained in Table 1.

Table 1. Error Management Process Communication Categories.

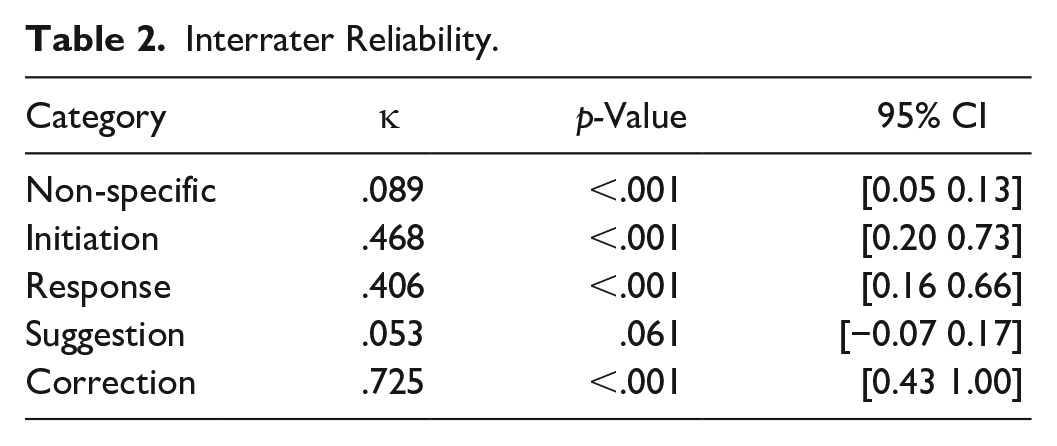

Missions 1 and 2 from all teams were initially coded by one coder. Interrater reliability was then calculated using Cohen’s Kappa with two additional raters (Table 2). These two raters were unaware of the hypotheses and reviewed a randomly selected 40% of the missions from each experimental condition, for a total of 24 of the 60 missions. The two raters coded these 24 missions based on the initial category instructions and then discussed and finalized the rules for each category, as shown in Table 1. Cohen’s Kappa for the category “Suggestion” was smaller than 0.2, indicating that the raters disagreed about which communications fit that category. However, the Response and Suggestion categories were not used in the final analysis because our research questions focused specifically on the initial detection and final correction of errors rather than on the intermediate problem-solving steps. Although Response and Suggestion offer valuable insight into how teams develop solutions, they were beyond the scope of the current analysis. We plan to explore these categories in future studies to gain a more comprehensive understanding of team error management dynamics.
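As an illustration of the reliability statistic, the following is a minimal sketch of Cohen's Kappa for two raters. It assumes, since messages could receive more than one code, that agreement was assessed per category on present/absent judgments; the function name and the example codes are hypothetical and are not the study data.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labeled the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same non-empty set of items.")
    n = len(rater_a)
    # Observed agreement: proportion of items given identical labels.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement, from each rater's marginal label frequencies.
    freq_a = Counter(rater_a)
    freq_b = Counter(rater_b)
    p_expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (p_observed - p_expected) / (1.0 - p_expected)


# Hypothetical present (1) / absent (0) judgments for one category
# across eight chat messages; illustrative values only.
rater_1 = [1, 0, 0, 1, 0, 0, 1, 0]
rater_2 = [1, 0, 0, 0, 0, 0, 1, 1]
kappa = cohens_kappa(rater_1, rater_2)
```

In this made-up example the raters agree on six of eight messages, but half of that agreement is expected by chance, so kappa is noticeably lower than the raw agreement rate.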

Table 2. Interrater Reliability.

Detection of the error at the team level was time-stamped at a team member’s first communication with teammate(s) about the error (Initiation time). A team’s correction of the error was time-stamped when any team member successfully implemented the correct action to solve the problem (Correction time). The time between initiation and correction was interpreted as the time the team needed to coordinate and identify a correct solution in response to the automation error (Coordination time).
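The three timing measures can be sketched as follows. This is an illustrative reconstruction rather than the analysis code used in the study: the `CodedMessage` record, the single-letter codes, the time base (seconds since failure onset), and the treatment of correction as a coded event are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class CodedMessage:
    time_s: float  # seconds since failure onset (assumed time base)
    code: str      # "N" routine, "I" initial communication, "C" correction


def error_management_times(messages, failure_duration_s=300.0):
    """Return (initiation, correction, coordination) in seconds,
    or None when the team did not both initiate and correct in the window."""
    in_window = [m for m in messages if 0.0 <= m.time_s <= failure_duration_s]
    # Initiation time: first message about the error.
    initiation = min((m.time_s for m in in_window if m.code == "I"), default=None)
    if initiation is None:
        return None
    # Correction time: first correct action at or after initiation.
    correction = min(
        (m.time_s for m in in_window if m.code == "C" and m.time_s >= initiation),
        default=None,
    )
    if correction is None:
        return None
    # Coordination time: interval between initiation and correction.
    return initiation, correction, correction - initiation


# Hypothetical coded chat log for one team; illustrative values only.
team_log = [
    CodedMessage(12.0, "N"),
    CodedMessage(40.0, "I"),
    CodedMessage(75.0, "N"),
    CodedMessage(130.0, "C"),
]
times = error_management_times(team_log)
```

Because Coordination time is defined as Correction time minus Initiation time, the three measures are not independent, which is worth keeping in mind when reading correlations among them.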

Teams that demonstrated Initial Communication (I) and Correction (C) within the error time frame were coded as “successful,” and teams that failed to come up with the correct solution were coded as “unsuccessful.” Bivariate correlational analysis and MANOVA were conducted on teams’ Initiation time, Correction time, and Coordination time.

Results

Seventeen out of 30 teams successfully overcame the error (seven Coordination Training teams, five Trust Calibration teams, and five Control teams). Bivariate correlations for the successful teams revealed that Initiation time and Coordination time were significantly negatively correlated, r(15) = −.552, p = .022, and that Correction time and Coordination time were significantly positively correlated, r(15) = .615, p = .009. Taken together, these correlations indicate that earlier communication about the error does not necessarily yield a more effective error management process.
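As a consistency check on the reported correlations, a Pearson r can be converted to a t statistic with df = n − 2 (here 17 successful teams, so df = 15). The sketch below applies the standard conversion to the reported r values; the function name is hypothetical.

```python
import math


def t_from_r(r, df):
    """t statistic for testing a Pearson correlation against zero (df = n - 2)."""
    return r * math.sqrt(df / (1.0 - r * r))


# Reported correlations among the 17 successful teams (df = 15).
t_initiation_coordination = t_from_r(-0.552, 15)
t_correction_coordination = t_from_r(0.615, 15)
```

The resulting values are approximately −2.56 and 3.02. Both exceed in magnitude the two-tailed .05 critical value of about 2.13 for 15 degrees of freedom, in line with the significance reported above.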

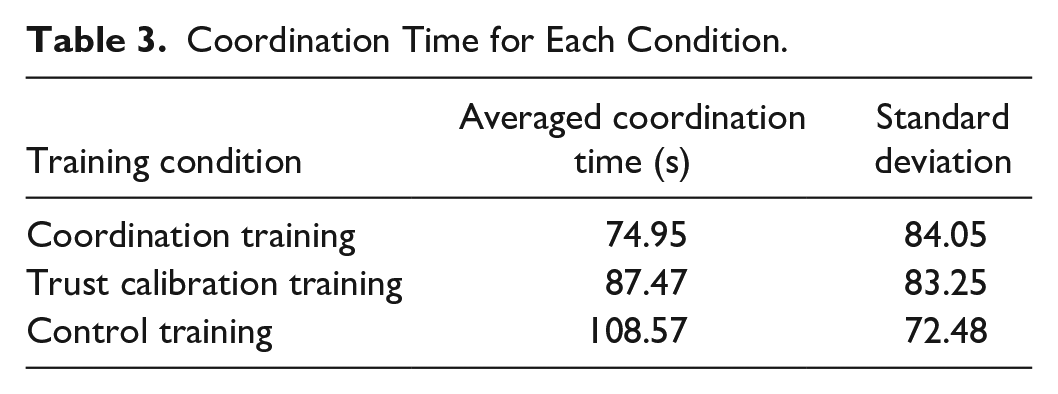

The MANOVA revealed no significant differences between training conditions for Initiation time ($\eta_p^2 = 0.232$), Coordination time ($\eta_p^2 = 0.035$), or Correction time ($\eta_p^2 = 0.059$). However, as Table 3 shows, Coordination time showed a trend: teams that received coordination training or trust calibration training appeared to coordinate and manage this error more effectively than those that received control training. No significant results were found among unsuccessful teams. These teams either did not communicate about the error at all or did not arrive at the correct solution before the failure ended. Further research is needed to understand the obstacles these unsuccessful teams encountered.
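For readers unfamiliar with the effect size reported here, partial eta squared is the effect sum of squares divided by the sum of the effect and error sums of squares. The sketch below computes it for a single dependent variable in a one-way design with three groups; the function name and the group values are hypothetical and are not the study data.

```python
def partial_eta_squared(groups):
    """Partial eta squared for one dependent variable in a one-way design:
    SS_effect / (SS_effect + SS_error)."""
    all_values = [v for g in groups for v in g]
    grand_mean = sum(all_values) / len(all_values)
    group_means = [sum(g) / len(g) for g in groups]
    # Between-groups (effect) sum of squares.
    ss_effect = sum(
        len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means)
    )
    # Within-groups (error) sum of squares.
    ss_error = sum(
        (v - m) ** 2 for g, m in zip(groups, group_means) for v in g
    )
    return ss_effect / (ss_effect + ss_error)


# Hypothetical coordination times in seconds for three training
# conditions; illustrative values only.
example_groups = [
    [60.0, 80.0, 100.0],
    [90.0, 110.0, 130.0],
    [120.0, 140.0, 160.0],
]
effect_size = partial_eta_squared(example_groups)
```

With a single factor, as in this sketch, partial eta squared coincides with ordinary eta squared, since no other effects are removed from the denominator.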

Table 3. Coordination Time for Each Condition.

Discussion

This research contributes to understanding how HATs respond to automation errors. Although the differences between training conditions were not statistically significant, the findings suggest that training methods may influence the effectiveness of team error management. Teams that underwent coordination training or trust calibration training showed a trend toward handling errors more effectively than those with control training, which is consistent with prior results using other indicators of team effectiveness (Johnson et al., 2023).

One unexpected finding is the negative correlation between the time teams took to initiate communication about the error (Initiation time) and the time they then took to coordinate a correct solution (Coordination time). This result does not support the hypothesis that the faster HATs initiate communication about an error, the faster they arrive at a correct solution. Future research is needed to explore the factors that influence this relationship and to determine whether there is a critical range of time within which humans and autonomy should communicate errors and/or suggestions for improvement. Effective communication during the error management process should also be encouraged within HATs, as it can play an essential role (Harrison et al., 2024). This study has several limitations. One significant limitation is that it examined only a single type of automation error, which does not provide a comprehensive view of how HATs manage all potential automation errors.

Currently, there is a lack of team research that focuses on error communication timing (e.g., when and how soon error communications need to happen to be effective) and appropriate channels of team error communication (e.g., human-to-human vs. human-to-machine) for error management in HATs. The insights from this study can inform the development of training techniques that better support effective communication utilizing different strategies in diverse fields where HATs are becoming prevalent (e.g., robotic-assisted surgical teams, air traffic controllers and pilots).

Footnotes

Acknowledgements

We acknowledge Dr. Craig J. Johnson, Dr. Mustafa Demir, Dr. Nathan J. McNeese, Alexandra T. Wolff, Dr. Steven M. Shope, Garrett M. Zabala, Sophie He, Cody Radigan, Tanvi G. Tendoklar, David A. Grimm, Ye Li, and Srihari R. Sangaraju for their contributions to this research.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.