Abstract

Trust remains a critical focus of human-robot interaction research, with the benefits of trust including increased task performance. A growing body of literature suggests that the perceived characteristics of robots play a role in trust; however, less is known about the relationship between trust and the perceived characteristics of an autonomous robot teammate in an applied military setting. We investigated the relationship between the perceived characteristics of a robot teammate and the level of trust in the robot by equipping United States Military Academy (USMA) cadets with a pseudo-autonomous quadrupedal robot teammate during field training. We found that the likability, perceived intelligence, and perceived safety of the robot were positively correlated with trust, whereas anthropomorphism and animacy were not.

Introduction

Robots are becoming increasingly capable as teammates across a range of applications, and autonomous robots offer substantial benefits. Previous research (E. de Visser & Parasuraman, 2011) showed that autonomous robots can increase task performance while lowering the subjective cognitive load of human teammates. Trust remains an important factor in the interaction between humans and autonomous robots because, without appropriate trust, misuse, abuse, and disuse can arise (Parasuraman & Riley, 1997). Trust in automation develops from a variety of sources, including the performance of the automation (Schaefer et al., 2016), how well the automation matches the expectations of the human teammate (Schaefer et al., 2016), and trust repair (Pak & Rovira, 2023). Understanding these factors is particularly important when designing robots for the high-stress, high-complexity environments of the military domain. Robots are common within the military, ranging from bomb-defusing robots to reconnaissance drones (U.S. Army DEVCOM Army Research Laboratory, 2021). These robots are not fully autonomous and require a human operator, which draws the operator’s attention away from other tasks. Robots could function as teammates that relieve some of the cognitive burden of manual operation, but this change in the dynamic between human and robot brings its own challenges. Research on human-autonomy teaming (HAT) has found that human-robot teams become more effective with increased levels of automation but still do not perform at the level of all-human teams (O’Neill et al., 2022). If trust is considered a mediator of team outcomes (O’Neill et al., 2022), then perceptions of the robot teammate that shape trust may partly limit team performance.

A number of perceptual factors have been linked to trust. Anthropomorphism has been positively associated with trust. E. J. de Visser et al. (2012) established this positive relationship by identifying trust resilience, while Culley and Madhavan (2013) identified the risk of over-trusting automation as it takes on increasingly anthropomorphic characteristics. These findings suggest that anthropomorphic traits will be beneficial when designers want to increase appropriate trust from operators. However, some robots may not be easily anthropomorphized. Animacy has received far less attention than anthropomorphism. Past research found that neither animacy nor trust differed significantly between interacting with a robot that had an inner monologue and one that did not (Pipitone et al., 2023). Likability also deserves greater attention in relation to trust. Research has established that likable robots are generally used more (Nass & Moon, 2000); however, the trustworthiness of likable robots has yet to be examined. Perceived safety is related to trust, but the relationship depends on context (Akalin et al., 2023): the perceived safety of a robot can shift with variations in the social environment, physical environment, and task. Closely related to perceived safety is perceived intelligence. Bartneck et al. (2008) consider intelligence a function of competence, though competence is not well defined. Akalin et al. (2023) defined competence as how well a robot performs a task, which aligns with established research linking performance to trust (Hancock et al., 2011).

Study Overview

As part of a larger study, we investigated how perceptions of a robot teammate related to trust by equipping United States Military Academy (USMA) cadets with a pseudo-autonomous quadrupedal robot during two summer training exercises: a practice raid on a target in an urban environment and an attack on a target from dense woods. The task gave researchers a unique opportunity to observe users in an applied military setting, with researchers entering the field alongside cadets. Past military field research on HAT failed to capture important design factors, which led O’Neill et al. (2022) to exclude all military field HAT studies from their literature review and leaves a need for improved field studies. We believe our study overcame past challenges with field HAT research by partially meeting the inclusion criteria of O’Neill et al. (2022): it was empirical, the robot had an identifiable level of automation, and it involved at least one person and one robot. The final criterion, working interdependently on a task toward a common goal, was fulfilled when cadets elected to use the robot. We anticipated that more desirable perceptions of the robot teammate (higher anthropomorphism, animacy, likability, perceived intelligence, and perceived safety) would correspond with higher levels of trust.

Method

Participants

We present data from 55 cadets who consented to participate in the study and completed the post-robot-interaction assessments. The mean age of participants was 21 years (78.2% male, 21.8% female). Cadets’ experience with military robots, measured with a lab-developed questionnaire, varied: 67.3% had no experience, 21.8% had very little experience, 9.1% had some experience, and 1.8% had a lot of experience.

Materials

The field team was equipped with one Boston Dynamics “Spot” robot, one backup battery for the robot, and a tablet controller. A second team, responsible for data collection, was equipped with paper surveys, clipboards, and writing utensils. Completed surveys were digitized with a document scanner and scored automatically with an optical mark reader (OMR) (Deshmukh, 2019).

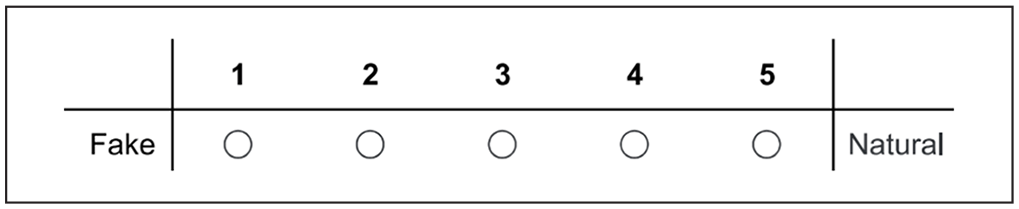

This study focused on the Godspeed Questionnaire Series (GQS) and the Trust Perception Scale-HRI (HRI scale). The GQS measures perceptions of robots on five subscales, each made up of five-point semantic differential items, as illustrated in Figure 1 (Bartneck et al., 2008). The subscales are anthropomorphism, animacy, likeability, perceived intelligence, and perceived safety (Bartneck et al., 2008). Anthropomorphism measured how human-like the robot seemed in appearance or behavior. Animacy measured how much the robot seemed like a living creature. Likeability measured how likable cadets found the robot. Perceived intelligence measured how intelligent and aware the robot appeared. Perceived safety measured how much of a danger cadets perceived the robot to pose to themselves.

Figure 1. Example item from the Anthropomorphism subscale of the GQS.
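For readers reproducing the subscale scores, the sketch below shows one way to compute them for a single participant. It assumes each subscale score is the mean of its 1 to 5 item ratings (the text does not state the aggregation) and drops the “Quiescent” item, as described in the Results. The item pairs are listed as we understand them from Bartneck et al. (2008) and should be checked against the published questionnaire; the dictionary keys and the function name `score_gqs` are illustrative, not part of the instrument.

```python
from statistics import mean

# Semantic-differential item pairs per GQS subscale, as we understand them from
# Bartneck et al. (2008). The string keys are our own labels, not official IDs.
GQS_ITEMS = {
    "anthropomorphism": ["fake/natural", "machinelike/humanlike", "unconscious/conscious",
                         "artificial/lifelike", "moving rigidly/moving elegantly"],
    "animacy": ["dead/alive", "stagnant/lively", "mechanical/organic",
                "artificial/lifelike", "inert/interactive", "apathetic/responsive"],
    "likeability": ["dislike/like", "unfriendly/friendly", "unkind/kind",
                    "unpleasant/pleasant", "awful/nice"],
    "perceived_intelligence": ["incompetent/competent", "ignorant/knowledgeable",
                               "irresponsible/responsible", "unintelligent/intelligent",
                               "foolish/sensible"],
    "perceived_safety": ["anxious/relaxed", "agitated/calm", "quiescent/surprised"],
}

# Items dropped from scoring; in this study, the poorly understood "Quiescent" item.
EXCLUDED = {("perceived_safety", "quiescent/surprised")}


def score_gqs(responses):
    """Return the mean rating per subscale for one participant.

    `responses` maps subscale -> {item: rating from 1 to 5}.
    Excluded items are skipped, so they need not be present.
    """
    scores = {}
    for subscale, items in GQS_ITEMS.items():
        ratings = []
        for item in items:
            if (subscale, item) in EXCLUDED:
                continue
            rating = responses[subscale][item]
            if not 1 <= rating <= 5:
                raise ValueError(f"{subscale}/{item}: rating {rating} is outside 1-5")
            ratings.append(rating)
        scores[subscale] = mean(ratings)
    return scores
```

Keying responses by subscale keeps the two “artificial/lifelike” items (one in anthropomorphism, one in animacy) distinct.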

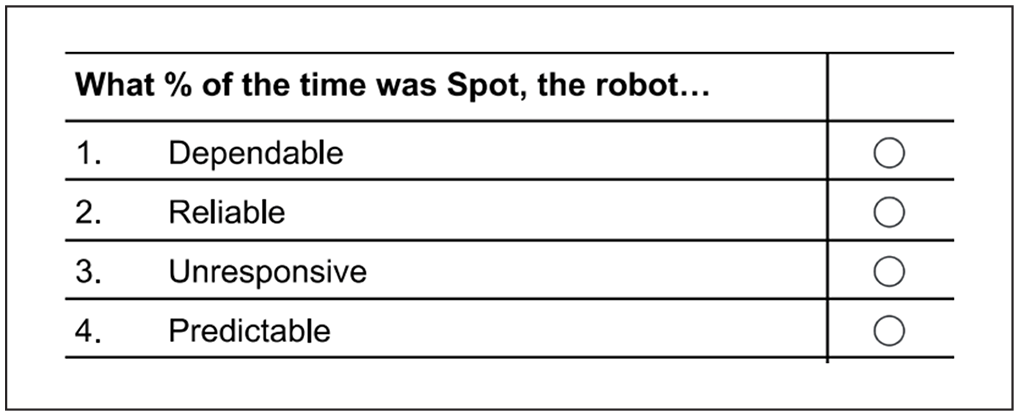

The second scale used in this analysis was the abbreviated Trust Perception Scale-HRI, illustrated in Figure 2 (Schaefer, 2016). Participants indicated how much they trusted the robot by rating statements as percentages, ranging from 0% to 100% in 10% increments.

Figure 2. Example items from the HRI scale. The response scale runs horizontally from 0% to 100% in 10% increments; only the 0% point is depicted. Participants filled in the bubbles to the right of each item.
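A minimal sketch of scoring one participant’s HRI scale responses follows, assuming the overall trust score is the mean of the item percentages. Negatively worded items, if the scoring key includes any, are flipped as 100 minus the response; which items are reverse-coded is an assumption to verify against Schaefer (2016), and the item labels in the tests are hypothetical.

```python
from statistics import mean

VALID_RESPONSES = range(0, 101, 10)  # 0%, 10%, ..., 100%


def trust_score(item_percentages, reverse_items=()):
    """Overall trust (0-100) as the mean of item responses.

    item_percentages: mapping of item label -> response in 10% steps.
    reverse_items: labels of negatively worded items, scored as 100 - response.
    (Which items are reverse-coded is an assumption; check the scale's key.)
    """
    if not item_percentages:
        raise ValueError("no items to score")
    scored = []
    for item, pct in item_percentages.items():
        if pct not in VALID_RESPONSES:
            raise ValueError(f"{item}: {pct} is not a 10% step between 0 and 100")
        scored.append(100 - pct if item in reverse_items else pct)
    return mean(scored)
```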

Procedure

The robot was pseudo-autonomous: a human operator controlled it and interpreted the cadets’ verbal commands. Robot operators were members of the research team who had been trained to operate the robot and were well acquainted with its capabilities and limitations before entering the field. The robot was equipped with 360° camera vision, a live video feed, collision avoidance, stair walking, stabilization, and a self-righting feature; if self-righting failed, operators righted the robot manually. Safety was the foremost concern in the field, so operators kept the robot at least six feet away from themselves and the cadets at all times. Before any task involving the robot, cadets were briefed on it and given a demonstration of its features.

The research team split into two teams: a field team that ran the robot with participants and a data collection team that collected survey data from cadets. Two researchers met cadets at the training location with the robot and necessary equipment before the exercise. The field team contacted the cadet serving as platoon sergeant to learn which squad had been granted access to the robot. The robot operator then followed that squad through its exercise, sending commands to the robot as instructed. After the exercise, cadets left the field. Members of the data collection team, staged outside the training location, identified which cadets had been in the squad that used the robot and collected their post-experience surveys. Once the surveys were completed, the full team identified poorly marked surveys (those with stray markings, poorly erased markings, light bubbles, or two bubbles filled for one item) and clarified the responses. Surveys were scanned upon return to NC State and scored with the OMR.
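The manual screening for poorly marked surveys could in principle be approximated in software. The sketch below is a hypothetical rule, not the procedure used in this study or the behavior of the OMR tool: it takes the darkness of each bubble for an item on a 0 to 1 scale and flags items with more than one filled bubble, a faint or partly erased mark, or no mark. The thresholds `filled` and `faint` are placeholder values that would need calibration on real scans.

```python
def screen_item(fill_levels, filled=0.6, faint=0.25):
    """Classify one survey item from the darkness (0-1) of each of its bubbles.

    Returns (index of the chosen bubble or None, flag). Thresholds are
    hypothetical placeholders, not values from any particular OMR tool.
    """
    marked = [i for i, v in enumerate(fill_levels) if v >= filled]
    ambiguous = [i for i, v in enumerate(fill_levels) if faint <= v < filled]
    if len(marked) == 1 and not ambiguous:
        return marked[0], "ok"
    if len(marked) > 1:
        return None, "multiple marks"
    if ambiguous:
        # A faint mark alone, or a faint mark beside a filled one (e.g., a poor erasure).
        return None, "light, stray, or partly erased mark"
    return None, "no mark"


def items_needing_review(survey):
    """Return 1-based numbers of items whose marks need human clarification."""
    return [n for n, levels in enumerate(survey, start=1)
            if screen_item(levels)[1] != "ok"]
```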

Results

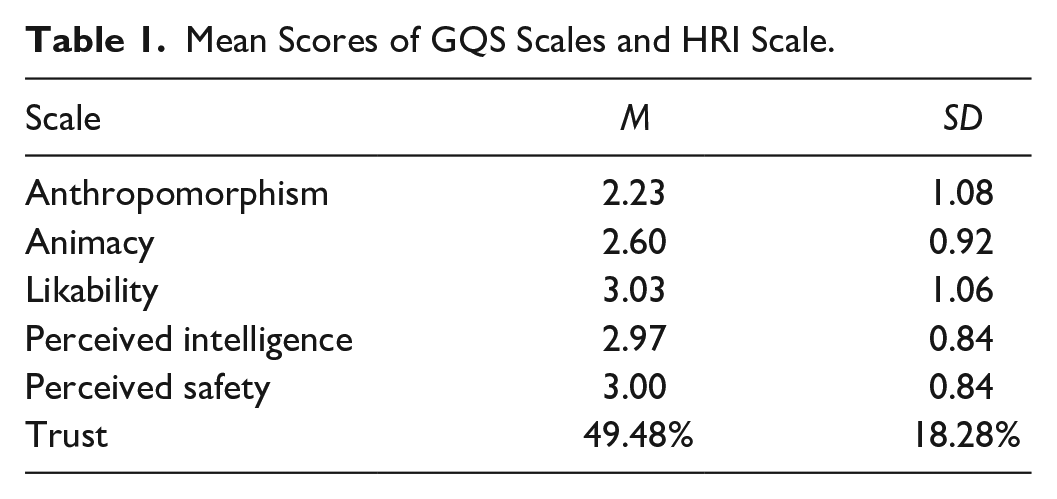

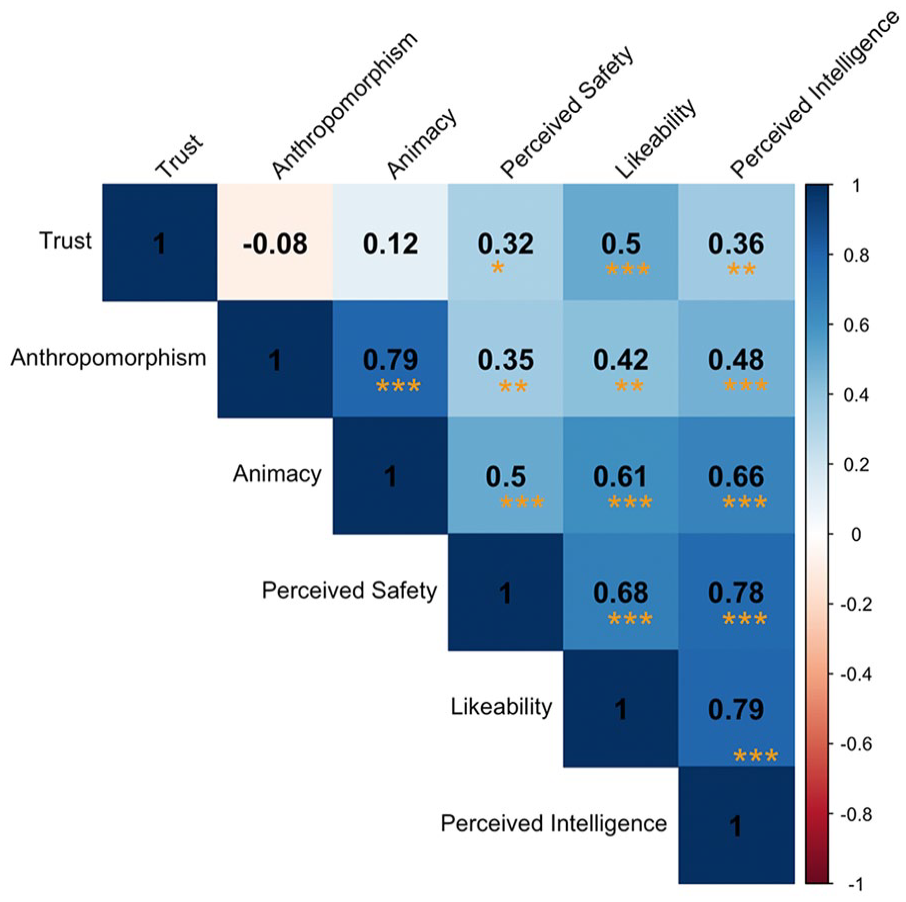

A total of 55 cadets answered all items of the GQS and HRI surveys. We excluded the final GQS item (“Quiescent”) from all subscale calculations because its terminology was poorly understood. Mean scores for the GQS subscales and the HRI scale are reported in Table 1. We computed Pearson correlations between each GQS subscale and the HRI scale (Figure 3). Anthropomorphism was not significantly correlated with trust, r(53) = −.08, p = .54, nor was animacy, r(53) = .12, p = .39. Likability was significantly correlated with trust, r(53) = .50, p < .001, as were perceived intelligence, r(53) = .36, p = .007, and perceived safety, r(53) = .32, p = .016.

Table 1. Mean Scores of GQS Subscales and HRI Scale.

Figure 3. Relationship between trust in the robot and each GQS subscale, with correlation coefficients shown.
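The reported statistics follow the standard significance test for a Pearson correlation: with N paired observations, t = r·sqrt((N − 2)/(1 − r²)) follows a Student’s t distribution with N − 2 degrees of freedom when there is no true correlation, so N = 55 gives 53 degrees of freedom. The sketch below uses only the Python standard library to compute r from raw scores and a two-sided p-value by integrating the t density with Simpson’s rule. It is intended for checking results; in practice a statistics package such as SciPy would normally be used, and the function names here are our own.

```python
import math


def pearson_r(x, y):
    """Pearson product-moment correlation between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need two sequences of equal length >= 3")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def t_pdf(s, df):
    """Density of Student's t distribution with df degrees of freedom."""
    log_c = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
             - 0.5 * math.log(df * math.pi))
    return math.exp(log_c) * (1 + s * s / df) ** (-(df + 1) / 2)


def two_sided_p(t, df, steps=2000):
    """Two-sided p-value: 1 - 2 * (area under the t density from 0 to |t|).

    The area is computed with composite Simpson's rule; `steps` must be even.
    """
    b = abs(t)
    h = b / steps
    total = t_pdf(0.0, df) + t_pdf(b, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    area = total * h / 3
    return max(0.0, 1.0 - 2.0 * area)


def correlation_test(r, n):
    """Return (df, t, p) for testing zero correlation given r from n pairs."""
    if not -1 < r < 1:
        raise ValueError("r must lie strictly between -1 and 1")
    if n < 3:
        raise ValueError("need at least 3 pairs")
    df = n - 2
    t = r * math.sqrt(df / (1 - r * r))
    return df, t, two_sided_p(t, df)
```

For example, `correlation_test(0.36, 55)` gives t of about 2.81 and a two-sided p of about .007, in line with the value reported for perceived intelligence. With raw data, `correlation_test(pearson_r(subscale_scores, trust_scores), len(trust_scores))` would run the whole test, where the two score lists are hypothetical per-participant inputs.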

Discussion

Our findings suggest a relationship between trust and perceptions of robot teammates. Most GQS subscales were significantly correlated with trust; the exceptions were anthropomorphism and animacy. The relatively low mean anthropomorphism and animacy scores resemble those of a previous study that used the GQS to examine how older adults perceived a social robot (TIAGo) designed to support aging in place (Tobis et al., 2023). Tobis et al. (2023) found that anthropomorphism and animacy scores were significantly lower than the other subscale scores and attributed this to participants not recognizing the social presence of the robot. Because anthropomorphism has been linked to greater trust (E. J. de Visser et al., 2012), a robot that is not perceived as anthropomorphic would not be expected to benefit from this effect, which may explain the lack of relationship between anthropomorphism and trust. Importantly, machine-like perceptions of the robot teammate were not associated with lower trust.

Likability was the subscale most strongly correlated with trust, which extends the established finding that likable robots are used more (Nass & Moon, 2000). The correlation between perceived intelligence and trust supports past literature linking greater perceived intelligence to greater trust, with more competent robots garnering higher levels of trust (Akalin et al., 2023; Hancock et al., 2011). The positive correlation between trust and perceived safety also aligns with past research that treated trust as a factor in a taxonomy of the perceived safety of robots (Akalin et al., 2023).

Limitations

Our primary challenge was managing how much exposure cadets received to the robot. The exercise encouraged cadets to make their own plans and decisions, so the robot was occasionally left out of a platoon’s plan and kept on standby until called for. The use of paper surveys was another challenge: these surveys were vulnerable to damage, input mistakes, and skipped items. However, the OMR greatly reduced the chance of scoring items incorrectly. Our OMR was more reliable than a human scoring the surveys by hand, with a false alarm rate of .02%.

Conclusion

Testing the robot in the field during a training exercise gave us a rare opportunity to study how cadets interacted with a robot in an applied setting. We found that trust correlated with perceptions of the robot teammate across multiple dimensions: greater trust in the robot corresponded with higher perceived likeability, perceived intelligence, and perceived safety. Anthropomorphism, however, was not significantly correlated with trust, which raises the question of why, given that past studies have established a positive relationship between anthropomorphism and trust. We believe the disconnect between trust in autonomous quadrupedal robots and perceived anthropomorphism warrants further investigation.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by the ONR award N00014-22-1-2813.