Abstract

This study explores the application of agent transparency in the context of autonomous ships. Four levels of transparency were developed depicting decisions, planned actions, reasoning, and input parameters of a collision and grounding avoidance system in a realistic navigational context. Thirty-four licensed navigators were provided with Human Machine Interface concepts depicting four levels of transparency. Qualitative feedback was obtained through semi-structured interviews about which information they felt is needed to supervise autonomous ships safely and effectively. In addition, the participants ranked the HMIs according to their preferences. The results indicate the need for depicting the outcomes of the system’s collision risk analysis for supervisory control. Furthermore, the results illustrate the variations in supervisory strategies and the resulting dilemma for the amount and type of information required to support supervisors. Finally, this study highlights the importance of expert knowledge in the design of safety critical systems.

Introduction

The maritime industry is investing in advanced technologies to reduce its environmental footprint, increase personnel recruitment and retention, improve its safety, and enhance its resilience against adverse conditions, whilst maintaining profitability. To contribute to achieving these goals, Artificial Intelligence (AI) is anticipated to play a central role in supporting navigators in critical decision making and possibly even allowing ships to sail without direct human involvement (Kretschmann et al., 2017; Kurt & Aymelek, 2022). For example, developments point toward the application of collision and grounding avoidance (CAGA) systems for autonomous navigation purposes (Zhang et al., 2021). Considering the safety-critical nature of maritime navigation, this means that AI-enabled systems need to demonstrate a high degree of reliability and robustness across a wide range of situations. However, given the limitations of such systems in novel and complex situations, careful design, implementation, management, and operation are required when deploying these in real-world environments (Littman et al., 2021; National Academies of Sciences, Engineering and Medicine, 2022). Therefore, proposed autonomous ship concepts typically employ human operators to monitor, supervise, and potentially intervene in the system to ensure the required performance and safety levels are achieved (IMO, 2018; Massterly, 2023).

Decades of research have demonstrated that there are significant human performance challenges associated with assigning humans a supervisory role of highly automated systems (Bainbridge, 1983; Endsley, 2017). Since operators are tasked with supervising systems that make their own decisions and take their own actions, they are typically removed from much of the information- and decision-making loop, and their ability to intervene in the system may be affected (Endsley, 2017). Nevertheless, recent research has suggested that by disclosing the system’s decisions, planned actions, and internal reasoning to the operator, that is, by making the system “transparent,” situation awareness (SA) is supported, and some of these challenges may be alleviated (van de Merwe, Mallam, & Nazir, 2024; van de Merwe, Mallam, Nazir, & Engelhardtsen, 2024). However, considering the novelty of the application of AI-enabled systems in safety-critical domains, there is limited experience with the effect of transparency in these settings (National Academies of Sciences, Engineering and Medicine, 2022). Also, although previous studies have advocated the need for autonomous systems to be transparent about their decisions, actions, and analytical processes (Chen et al., 2014; Endsley et al., 2003; Hoff & Bashir, 2015), the operator’s view on which type of information is supportive of adequate supervision is less explored. Therefore, the aim of this study is to explore feedback and experiences from licensed navigators on transparent Human Machine Interface (HMI) concepts designed to provide the information needed to oversee the decisions and planned actions of CAGA systems.

Method

Participants

Data was gathered from 34 participants (32 males, 2 females). The participants were between 25 and 67 years of age (

HMI Concepts

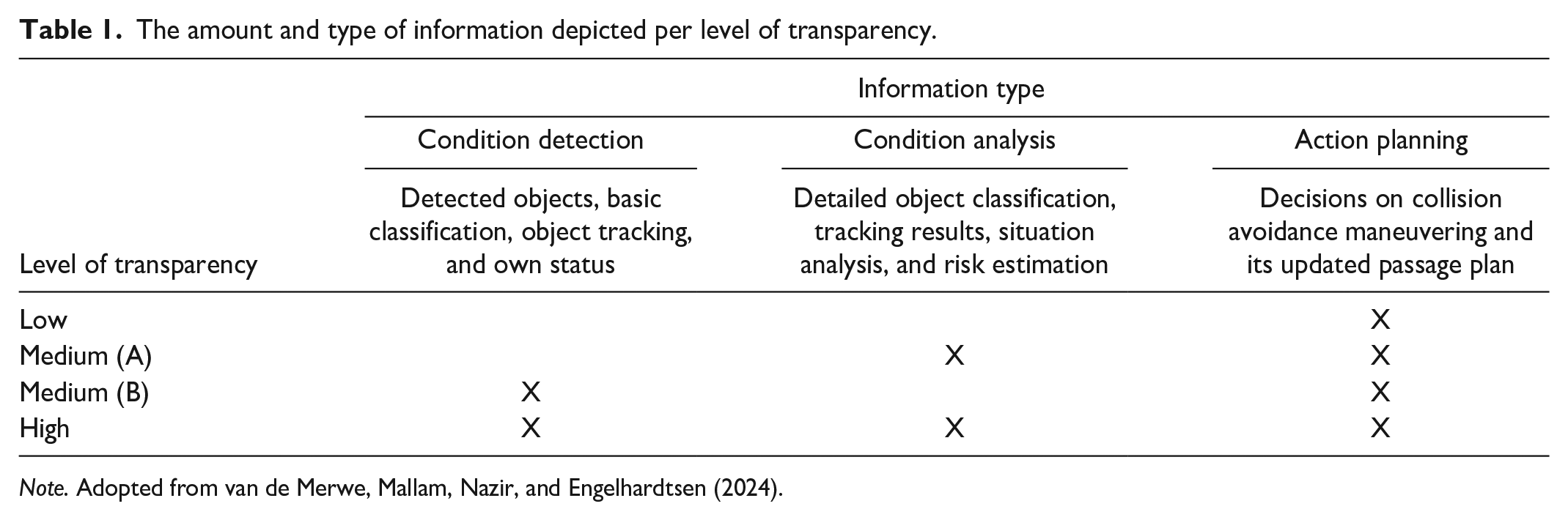

This study is part of an ongoing research project addressing transparency in an autonomous shipping context. In an earlier phase of the project, HMI concepts were developed to support human supervision of autonomous CAGA systems. In addition, realistic traffic situations were created by a navy-certified navigator depicting own ship in a navigational conflict with other vessels (van de Merwe et al., 2023a). Variations to the HMI concepts were developed to represent levels of transparency and depicted own ship’s perception of the traffic situation, its analysis, decisions, and planned actions. The amount and type of information constituting each level of transparency was varied according to a model of information processing (van de Merwe et al., 2023b) (see Table 1). Detailed examples of the HMI concepts and their variations in level of transparency can be found on the journal’s website as supplemental material to an experimental study reported in van de Merwe, Mallam, Nazir, and Engelhardtsen (2024).

Table 1. The amount and type of information depicted per level of transparency.

Semi-structured Interviews

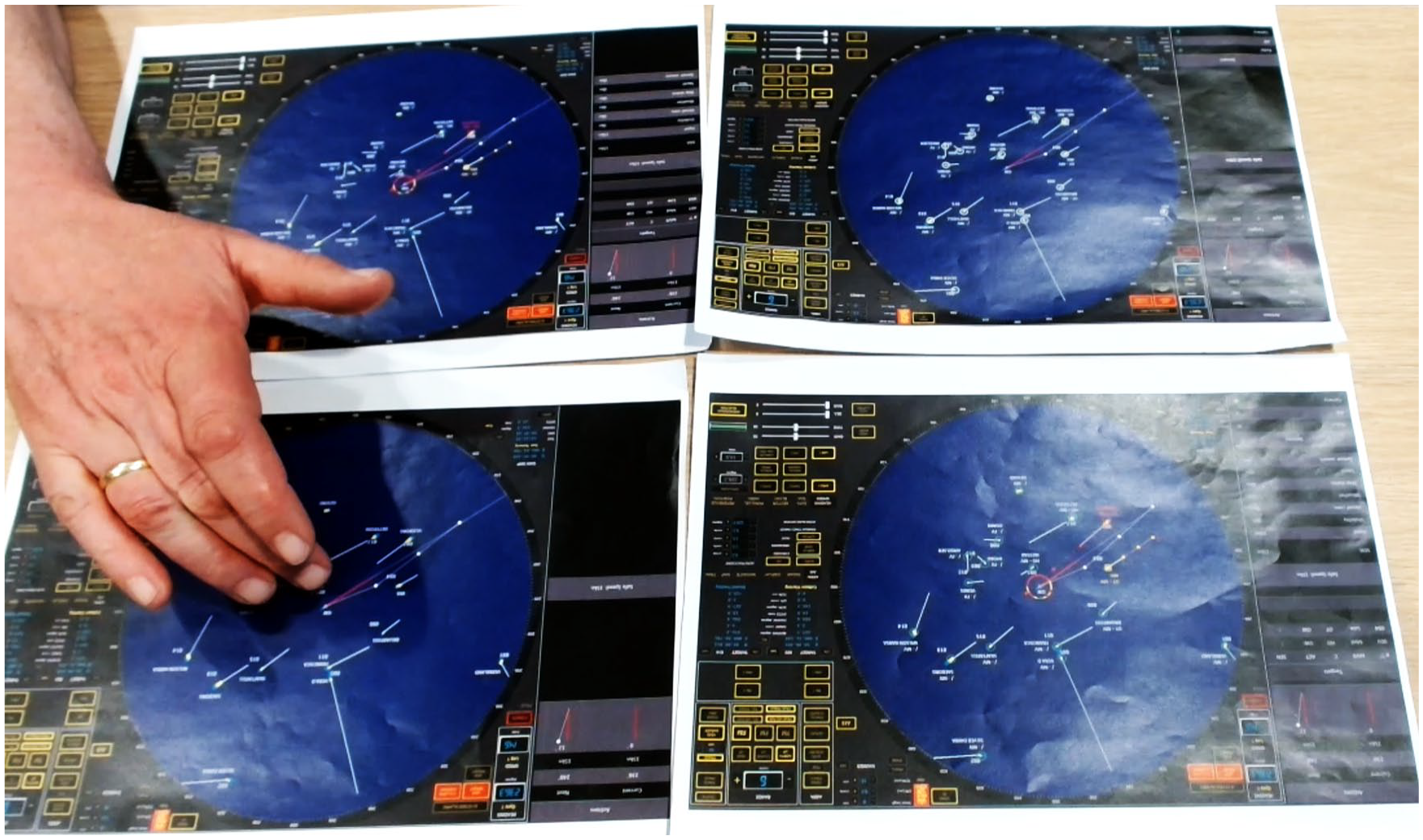

Semi-structured interviews were performed with each participant individually and lasted approximately 10 minutes on average. A think-aloud protocol was used to capture and understand how navigators assessed the information provided by the CAGA system (Eccles & Arsal, 2017). Voice data from the participants were automatically transcribed using a speech-to-text transcription function in Microsoft Word. The transcription was manually checked against the recordings to validate the result. Video data supported the audio recordings in cases where participants were gesticulating when answering, for example, pointing toward specific symbols or areas on the HMIs (see Figure 1).

Figure 1. Screenshot from the ranking and interview from one of the participants.

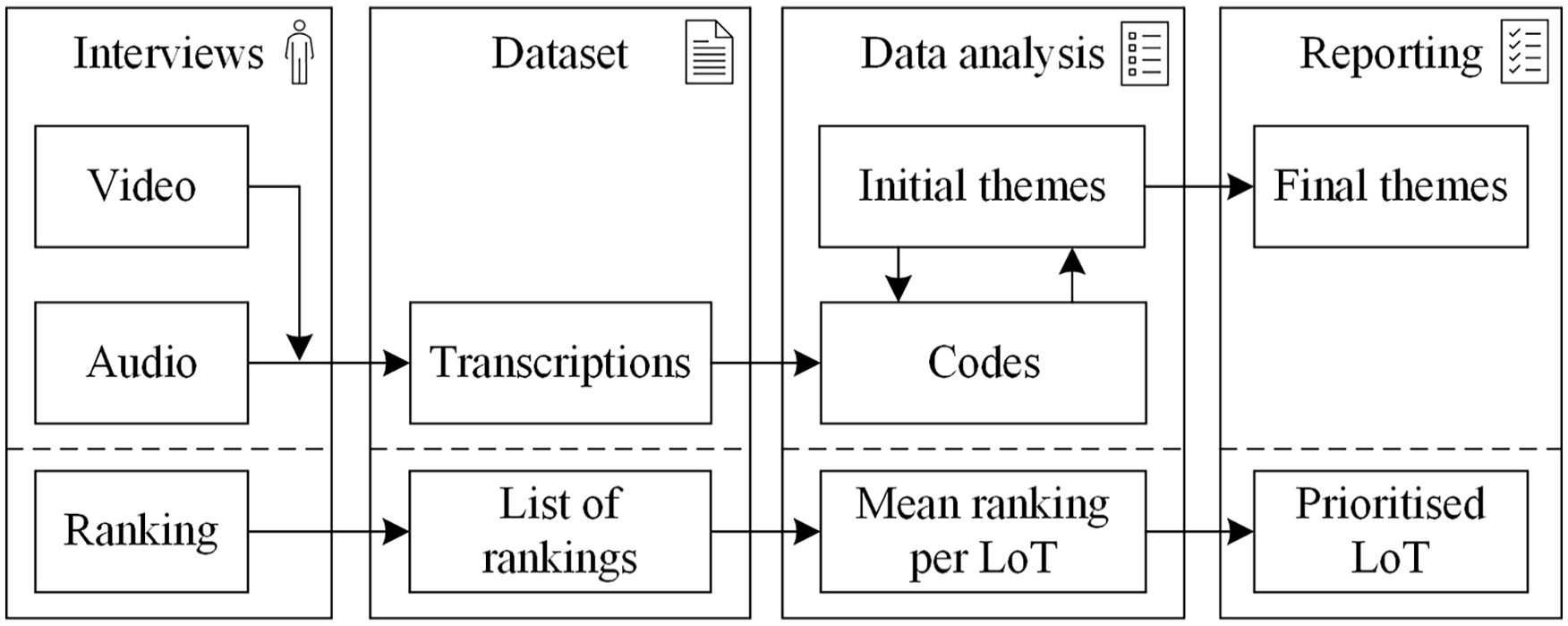

Thematic Analysis

Based on the transcribed dataset, a thematic analysis was performed to explore themes and identify which information elements best supported supervision (Braun & Clarke, 2006). Thematic analysis is a method to analyze and structure qualitative data. It aims to identify patterns in narrative data and provide interpretations of these in the form of themes. In this process, the researcher plays an active role in the interpretation and extraction of meaning in relation to the research question. Thematic analysis uses an iterative process in which codes (basic semantic content of interest; Braun & Clarke, 2021) are generated and combined to form initial themes. By iteratively analyzing the transcribed data, codes, and potential themes, a concluding set of themes is created. Finally, the themes can be illustrated with representative extracts from the transcribed dataset (see Figure 2).

Figure 2. Thematic and ranking analysis process as applied in this study.

Ranking Transparency Levels

The participants individually ranked four HMI concepts in terms of how well these allowed for observability and predictability of the CAGA system’s interpretation of the traffic situation, decisions, and planned actions (Endsley, 2017; MITRE, 2018). Definitions of these terms were read verbatim to the participants prior to ranking. The participants were given a print-out with an identical traffic situation for which only the level of transparency was varied in four levels, that is, low, medium (A), medium (B), and high (see Table 1 and Figure 1). The traffic situation was available continuously throughout the ranking task.
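The ranking task described above yields, for each participant, one permutation of the ranks 1 to 4 over the four HMI concepts. This section does not state which statistic was applied to these rankings, so the following is only a minimal sketch under an assumption: that a rank-based test such as the Friedman test is a suitable way to compare the concepts. The function names and the toy rankings are hypothetical and are not the study's data or results.

```python
# Sketch: mean ranks and the Friedman chi-square statistic for complete
# rankings without ties. Assumption: each row is one participant's ranking
# of the same k items (1 = most preferred). The data below are made up.

def mean_ranks(rankings):
    """Average rank per item across participants."""
    n = len(rankings)
    k = len(rankings[0])
    return [sum(row[j] for row in rankings) / n for j in range(k)]


def friedman_statistic(rankings):
    """Friedman chi-square for n participants ranking k items (no ties).

    chi2 = 12 / (n * k * (k + 1)) * sum(R_j ** 2) - 3 * n * (k + 1),
    where R_j is the sum of ranks given to item j.
    """
    n = len(rankings)
    k = len(rankings[0])
    rank_sums = [sum(row[j] for row in rankings) for j in range(k)]
    return 12.0 / (n * k * (k + 1)) * sum(s * s for s in rank_sums) - 3.0 * n * (k + 1)


if __name__ == "__main__":
    # Hypothetical rankings of four items by three participants.
    toy = [
        [1, 2, 3, 4],
        [1, 3, 2, 4],
        [2, 1, 3, 4],
    ]
    print("mean ranks:", mean_ranks(toy))
    print("Friedman chi-square:", friedman_statistic(toy))
```

The statistic is compared against a chi-square distribution with k - 1 degrees of freedom (three for four concepts). A library routine such as `scipy.stats.friedmanchisquare` can be used instead when a p-value and tie handling are needed.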

Data Recording

The interviews were captured using a video and audio recording device, and the rankings were recorded using pen and paper. Due to technical difficulties with the recording equipment, interview data could not be obtained for one participant. Ethical approval was obtained from the Norwegian Centre for Research Data (reference number 986652), and this research complied with the American Psychological Association Code of Ethics (APA, 2017).

Results

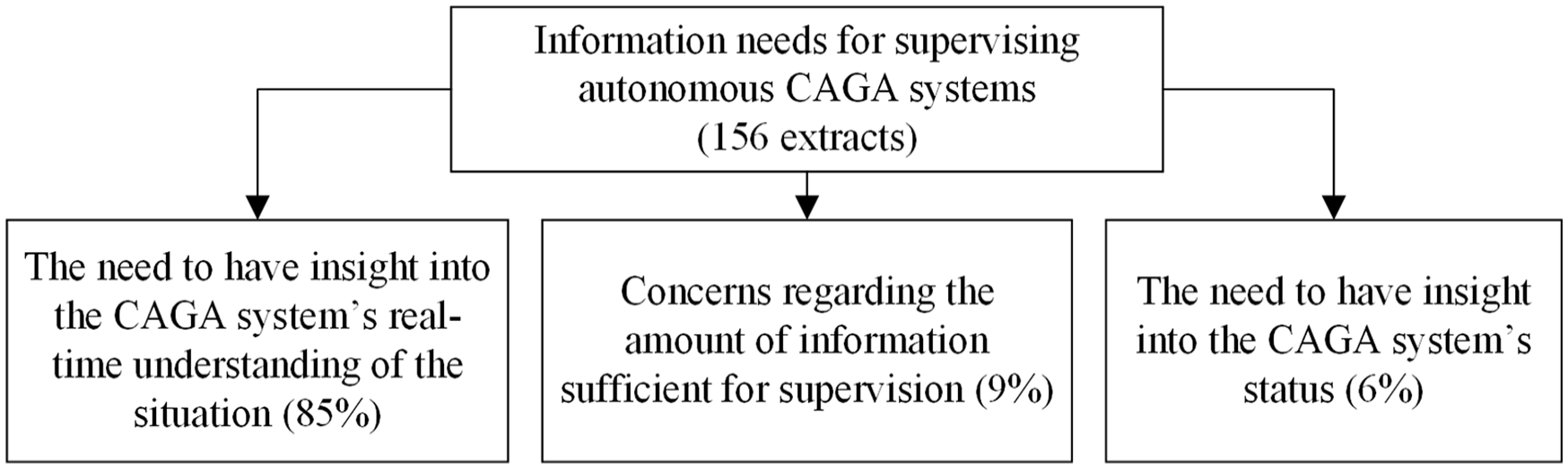

Supervising the CAGA System

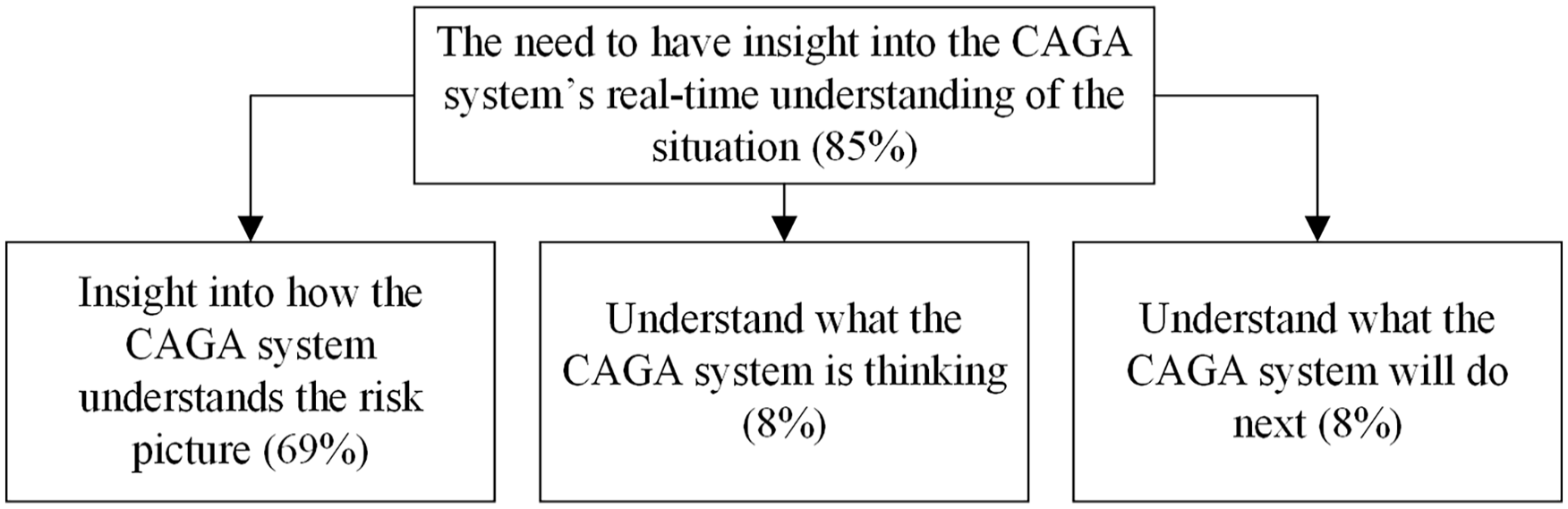

One hundred fifty-six individual narrative extracts were captured from the transcribed interview data. Within the overarching context of the information needs for supervising autonomous CAGA systems, three main themes were defined. The results indicate the navigators were concerned with the need for insight into the CAGA system’s real-time understanding of the traffic situation. As depicted in Figure 3, approximately 85% of the extracts revolved around this theme. Furthermore, 9% of the extracts expressed concerns and general comments about the amount of information needed for supervision. Finally, 6% of the extracts reflected the need for the CAGA system to indicate its status, that is, information about the state and use of its sensors.

Figure 3. Overarching themes.

When considering the dominating theme in more detail, that is, “the CAGA system’s real-time understanding,” three sub-themes were defined (see Figure 4). First, participants were concerned with the need to obtain insight into how the CAGA system understands the risk picture (69% of the total number of extracts). Second, they were concerned with understanding what the CAGA system is thinking (8%), and third, they wanted to know what the CAGA system will do next (8%).

Figure 4. Sub-theme “CAGA’s real-time understanding.”

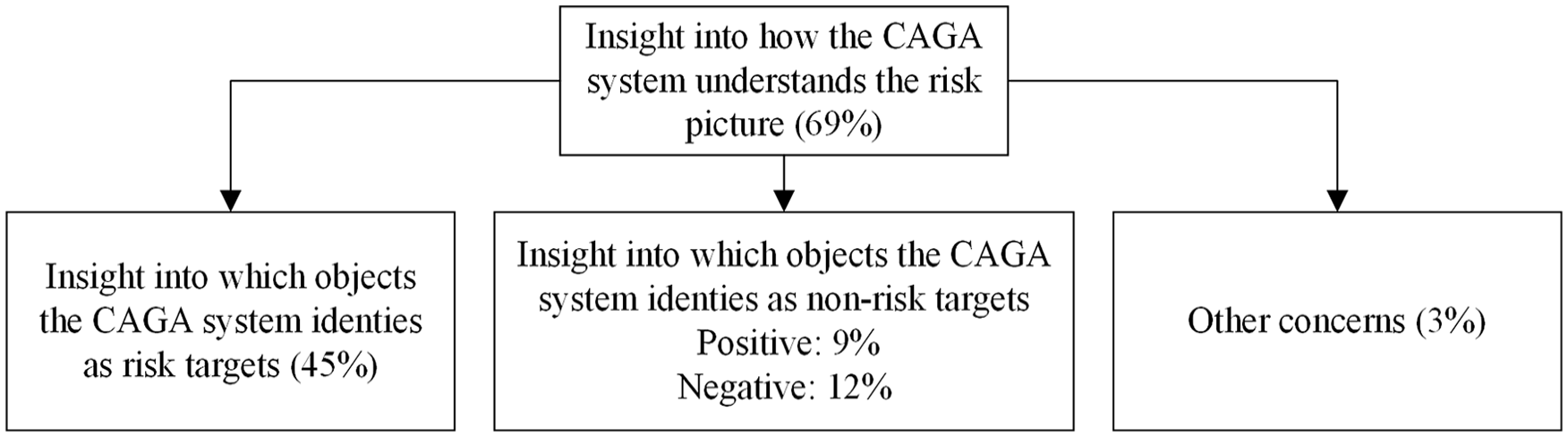

When again considering the dominating theme, that is, “how the CAGA system understands the risk picture,” it was found that 45% of all extracts indicate the need for depicting risk targets (see Figure 5). Furthermore, approximately 21% of the extracts concern the depiction of non-risk targets on the HMI. However, opinions were divided on this topic: 9% of the extracts conveyed a positive attitude toward including non-risk targets when supervising CAGA systems, that is, these are needed, whereas 12% were negative, that is, these are not needed. Three percent of the extracts for this sub-theme concern other information needs, such as depicting target ship types and close-vicinity risk visualizations.

Figure 5. Sub-theme “How CAGA understands the risk picture.”
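The reported shares form a nested breakdown in which every percentage is expressed relative to all 156 extracts. As a small arithmetic check, the sketch below verifies that the shares at each level add up to the share of their parent theme. The percentages are taken from the text above; the shortened labels, the variable and function names, and the one-point rounding tolerance are assumptions made for this illustration.

```python
# Sketch: consistency check of the nested theme percentages reported above.
# Each entry is (parent label, parent share in %, {child label: share in %}).
# All shares are percentages of the total number of extracts.
BREAKDOWN = [
    ("all extracts", 100, {
        "real-time understanding": 85,
        "amount of information": 9,
        "system status": 6,
    }),
    ("real-time understanding", 85, {
        "understanding of the risk picture": 69,
        "what the system is thinking": 8,
        "what the system will do next": 8,
    }),
    ("understanding of the risk picture", 69, {
        "risk targets": 45,
        "non-risk targets": 21,
        "other information needs": 3,
    }),
    ("non-risk targets", 21, {
        "needed": 9,
        "not needed": 12,
    }),
]


def check_breakdown(breakdown, tolerance=1):
    """Return (parent, reported share, sum of child shares, consistent) rows."""
    rows = []
    for parent, share, children in breakdown:
        total = sum(children.values())
        rows.append((parent, share, total, abs(total - share) <= tolerance))
    return rows


if __name__ == "__main__":
    for parent, share, total, ok in check_breakdown(BREAKDOWN):
        print(f"{parent}: reported {share}%, children sum to {total}%, consistent={ok}")
```

Because some shares are reported as approximate, the tolerance allows for rounding at each level.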

These findings indicate the navigators’ preference for information about which ships the CAGA system had identified as risk targets (including priorities), their predicted tracks (including course and speed), and the type of conflict with target vessels (head-on, crossing, or overtaking). Supervisors require this information to be able to understand and scrutinize the CAGA system’s decisions and planned actions in context. The following comment by participant 2 illustrates the need for visualization of risk targets given the supervisory task:

As a navigator, I just think – boom, boom – forget about those [points towards targets that do not pose a risk]. That’s how I work. Then there are two target you need to keep an eye on [points towards targets adjacent to own ship], and there are two that are a danger now [points towards red and orange-colored targets]. [. . .] I can see I have Head-on on that one [points to red target on High transparency HMI]. It’s then very easy to see what its thinking with these green circles [confirmed non-risk targets on High transparency HMI], that it has actually tracked them.

However, the navigators were divided about the need for the system to convey all its information, that is, the system’s decisions and planned actions, its risk analysis, and a full disclosure of its detected objects, input parameters, and sensor status. It was argued that having “the full picture” is essential for scrutinizing the performance of the system, yet it may also lead to information overload. Although all navigators agreed on the importance of depicting critical analytical information to support their supervisory task and of avoiding irrelevant information, opinions were divided on what counts as critical information in a supervisory context. For example, opinions differed on the need for depicting all detected targets (risk or non-risk), as indicated by the following juxtaposed comments by participants 13 and 20, respectively:

If I would be able to choose, I would have chosen this one [points to Medium (A) transparency HMI]. Because these green circles? I could have done without those. Because they may show me that all is ok, but it more interesting to show me what is not ok.

Actually, I like the fact that it considers all targets. [. . .] Because now I think “those are ok” [highlighted non-risk targets in green]. So now it has done that job for me, and I can focus on the ones the system thinks are a risk [highlighted risk targets in yellow and red]. It is maybe a bit more demanding when there are a lot of ships, but if it is shown that I can trust it over time, then that will be really nice.

Ranking Transparency Levels

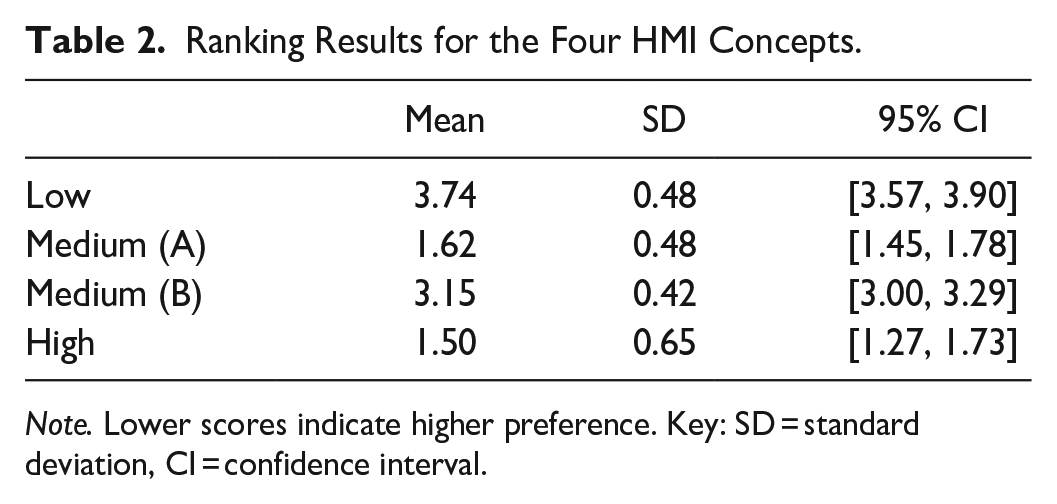

The results of the quantitative ranking analysis clearly indicate a difference between the levels of transparency when supervising autonomous CAGA systems (

Ranking Results for the Four HMI Concepts.

Discussion

Considering the participant population consisted of licensed navigators, the collective comments clearly illustrate their professional interest and responsibility in ensuring the safety of their ship. This was indicated by the fact that when the CAGA system’s analytical information was depicted on the HMIs (Medium (A)- and High transparency), arguably the most safety-critical information the system can depict, they clearly preferred these transparency levels. Based on the comments from the interviews, they argued that, when asked to supervise an autonomous CAGA system, they needed this information to understand its decisions and proposed avoidance actions. In a similar study performed by Aylward et al. (2022), it was argued that CAGA systems may have visualization capabilities that conventional navigation equipment does not have, in that they can provide insight into the collision avoidance problem space. That is, they have the potential to graphically depict the predicted behavior of own- and other ships in a collision avoidance context and therefore support the navigator’s SA. Considering that safe navigation is the primary responsibility of navigators, it is understandable that information providing insight into collision risk is considered essential in a supervisory context.

Similar results were found when the participants were asked to rank their preferences for the level of transparency.

The unique commonality between Medium (A)- and High transparency is the depiction of analytical information. In these levels, the CAGA system depicts the results of its risk analysis, that is, detailed object classification, tracking results, situation analysis, and risk estimation. In addition, for the High transparency level only, the system also depicts which objects it has detected, their basic classification, object tracking, and information about its own sensor status. However, considering that these elements are also depicted in the Medium (B) transparency level and that this level is significantly less preferred than the Medium (A) transparency level, it can be deduced that these information elements did not contribute to the higher ranking. Similarly, transparency levels that show the system’s decisions and planned actions

Whether to display “the full picture,” or to curate the information to include time-sensitive decisions, planned actions, and analytical information only, illustrates the dilemma when developing HMIs for supervision of autonomous systems: the need to balance the amount and type of information. Although more information may be preferred by some operators, this desire needs to be weighed against the potential for performance impacts, for example, the time needed to comprehend the information (van de Merwe, Mallam, Nazir, & Engelhardtsen, 2024). Consequently, other studies have argued for a demand-driven form of transparency and found benefits in terms of the possibility to increase transparency levels without increasing response times to situations (Vered et al., 2020). A dynamic approach to transparency may therefore be beneficial, in which the display of essential and non-essential information elements can be adjusted to the information needs of the supervisor. Further work should address this idea and assess its viability in a dynamic collision avoidance context.

Finally, this study highlights the value of including operational experience in the design and evaluation process. As prescribed in various industry standards and guidance materials, end-users should be involved throughout the design process when developing interactive systems (Endsley et al., 2003; ISO, 2019). In this research project, professional and licensed navigators contributed to the initial task analysis, the development of HMIs, the traffic situations, and evaluations in a controlled experiment. The qualitative evaluation reported in this study further contributes to interpreting the results from the viewpoint of potential end-users of autonomous CAGA systems.

Conclusion

The aim of this study was to explore feedback and experiences from licensed navigators on HMIs designed to provide the information needed to oversee the decisions and planned actions of CAGA systems. Four levels of transparency depicted the CAGA system’s input parameters, its risk analysis, and its decisions and planned actions. This study assumed that transparency may support operator SA when supervising autonomous CAGA systems by keeping operators “in the information loop.” In this context, this meant that the CAGA system provides the operator with its decisions, planned actions, reasoning, and input parameters such that a sufficient information basis is obtained to evaluate the performance of the system and decide whether manual adjustment or intervention is needed.

Considering the foreseen supervisory role of human operators in overseeing the performance of autonomous systems, the provision of information to the operator should be carefully planned and managed. This means that the information that is

Acknowledgements

The authors would like to express their sincere gratitude to the navigators for their participation in this study. We would also like to express our sincere gratitude to Koen Houweling, MSc. for his contribution in developing the traffic situations and the transparency symbology.

This paper supports and expands on our results published in The Journal of Cognitive Engineering and Decision Making.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research is sponsored by the Research Council of Norway, project nr. 311365.