Abstract

Reports of artificial intelligence (AI) applications across industries have drawn attention to the digital flight decks of airliners, including single pilot operations, distributed crewing, and other forms of reduced crew operations. Pilots, understandably, have concerns about the reliability of AI and its intrusion into crew operational procedures. To better understand the extent of automation malfunctions and potential advanced roles for AI, data from the NTSB and ASRS databases were examined, and the results were assessed for applications of AI that could resolve known human or system limitations. To evaluate trust and confidence related to AI, a survey of 42 company pilots provided baseline findings. Results revealed a substantial level of distrust in AI to make important decisions, and pilots believe they are necessary to prevent critical mistakes. To better understand the potential role of AI on the flight deck, parallel development tracks are presented that distinguish domain-specific applications from more complex human-automation teaming and shared cognition operations.


Overview

With nearly ubiquitous mentions of artificial intelligence (AI) being applied in various industries, one might reasonably expect a progression that involves the digital flight deck of airliners. This could encompass single pilot operations (SPO), distributed crewing operations (DCO), or reduced crew operations (RCO) (Xu, 2023). Pilots, understandably, have concerns about the reliability of AI and its intrusion into crew operational procedures and performance. We propose that, rather than a linear, all-encompassing progression, there might actually be two tracks for aviation applications. The first track has evolved with automation and domain-specific or simple AI features. A second, parallel track contemplates more complex and shared aspects of AI. While some crossover might occur, making SPO and similar operations mainstream in commercial passenger air transport faces multiple challenges of significant magnitude, which may take decades to resolve. To investigate the extent to which these challenges affect both tracks of AI integration, we surveyed pilots; the results reveal substantial concern and limited trust in AI as applied on the digital flight deck, albeit with generally high regard for the promise of AI in selected systems.

Automation on the Digital Flight Deck

As early as 1980 there were as many as 13 different technologies in crewed cockpits, mostly analog gauges along with the fledgling CDTI (cockpit display of traffic information) (Brighton & Klaus, 2023). The CDTI, with substantially evolved capabilities, survives today in ADS-B and traffic collision avoidance technologies. As functions performed by human operators were replaced with automated features, pilots soon learned to rely on automation and attend to other matters. Trust in automation grew, and as flight decks became increasingly sophisticated, more systems were introduced and pilots took on the role of monitoring and adjusting them. Automated systems then began to incorporate simple (basic) AI that could predict problematic situations, such as terrain closure rates, and provide alerts or, in some cases, command actions independently. In the following decades, machine learning features and fly-by-wire technology developed. These substantially increased the role of assistive automation and also introduced autonomous operations in the flight computers of Airbus and various military aircraft (Myers & Starr, 2021).

Efforts to develop effective and safe DCO and SPO for commercial aircraft have met natural resistance from some quarters where job security is perceived to be threatened. These fears, along with limitations of and concerns about autonomous operations, are expressed by DePete (2019), who cites public disapproval. The progress to date with DCO and SPO has shown some success and potential for further development. This progress has been accompanied by the inclusion of more AI applications on the flight deck, albeit of more complex designs. There is wide agreement that complex AI operations for commercial flights will be introduced with cargo aircraft and that passenger flights are not the focus in the near future (Dormoy et al., 2021).

Today, it appears that simple AI will continue to evolve in flight deck systems and expand assistive automation features. Simultaneously, more complex AI is rapidly proliferating and capturing interest with its potential for extraordinary accomplishments. Within the more complex applications lie the versions of SPO and RCO, which may be of greater concern to pilots. One issue is the reliability of advanced AI, which goes hand in hand with concerns about how trustworthy human operators consider these enhancements to be. The extent to which pilots trust AI is important to assess when moving forward with more complex systems, machine interactions, and collaborative teaming. To begin, a general sense of pilots' trust in AI will help to identify areas for closer attention and development (Kreps et al., 2023). Our effort to explore this dimension included surveying pilots and examining National Transportation Safety Board (NTSB) and Aviation Safety Reporting System (ASRS) data regarding automation errors and related human factors. By assessing where concerns about AI on the flight deck lie, a more defined area for attention can be identified, and efforts to resolve some of the reluctance among pilots might be addressed more effectively. We believe it would be useful to demonstrate that the more basic functions and automation advancements carry forward the current trend and present less threat than more complex AI that emulates decision making and attempts to replicate human cognition. Since AI will likely be increasingly applied to flight deck systems along domain-specific parameters, the magnitude and complexity of these issues are of potential significance for human factors engineers and system developers.

AI-based decision aids are present in fault management systems and information processes and are often applied for situation assessment and to suggest potential alternative actions. Typical systems alert the human via sensor information, and AI then informs the operator what actions to take (Kistan et al., 2018). This is an example of early collaboration efforts. Previously, Abbott (2013) identified 25 types of information management problems that could represent major areas for AI intervention or assistance. These illustrate the variety and complexity of issues confronting humans and where AI might provide meaningful aid.

Growth of Artificial Intelligence in Aviation

When considering teaming humans with AI, the issue of social integration becomes primary with regard to establishing trust (Abbass, 2019). This further underscores the need for humans to actively participate in the decision loop. Two mainstream applications for AI today are analytics, for interpreting and transforming data, and autonomy, which in part enables AI to produce actions that achieve generated objectives. Teaming intelligences are interdependent, which requires shared mental models and mutual trust. Machine intelligence will require more time to accumulate the experience needed to integrate with human practices. Areas of importance include common ground for situational awareness, transparency, and trust (Nørgaard & Linden-Vørnle, 2021). Teaming will require a mutual understanding of the relevance and value of information. The NASA Mars Rover missions made notable strides in this direction (Johnson & Vera, 2019). The importance of success in these efforts is underscored by a study by the Flight Deck Automation Working Group (Federal Aviation Administration, 2013), which revealed that commercial pilots reported addressing and resolving a critical safety situation on one of every five flights.

Endsley (2023) describes AI as having advanced from manually encoded rules to machine learning and neural networks, which detect patterns and could be considered advanced automation. Further, the speed at which AI can operate creates a situation awareness gap for humans and limits their ability to maintain an accurate and reliable cognitive map. Three ironies of AI are observed: (1) AI still is not markedly intelligent, (2) the more adaptive AI becomes, the less able human operators will be to comprehend the system, and (3) the more capable AI becomes, the less able human operators will be to compensate for its shortcomings. These issues feature prominently in the complex AI arena, where teaming and autonomous operations reside.

In automation development, descriptions of the degree of automation have advanced through levels that grant the machine more authority, including more authority over action selection and execution. Little empirical evidence was available until 1980, when the U.S. Navy created the Adaptive Function Allocation for Intelligent Cockpits (AFAIC) program (Parasuraman et al., 1992). As early as 1988, the issue of adaptation in human-machine systems was identified, along with situations where conflicting intelligences could become cooperative (Hancock et al., 2013). Along the automation continuum, adaptive aiding was introduced to provide automation on demand by an operator, which required monitoring the operator's cognitive state to prevent overload. Following up on the AFAIC initiative, the CHARM (Cockpit Human-Automation Resource Management) system began an evolution toward integrating human operators and computational support (Hancock, 2023). This area has focused on strategies for decision making in unknown-unknown situations, beginning with known patterns and progressing to areas where something is not right but the reason is unknown (e.g., Black Swan events). Current versions of AI rely mostly on previous patterns and only recently are approaching the human capacity to use prospective memory to project potential future states with some precision.

Hancock (2023) describes approaches for resolving function allocation concerns for teaming between human operators and AI agents. Function allocation addresses three key points: (1) how are functions allocated between humans and machines, (2) who does the allocating, and (3) who can authorize an allocation. The initial function of adaptive allocation is best suited for a human-centered design and has evolved with related concepts for augmented cognition. A newer concept known as cognitive-cyber symbiosis (Abbass, 2019) allows for autonomous relationships between machine learning and humans. This interchange is significant in creating a means for AI systems to communicate with humans, assess trustworthiness, and present information to humans in a form and at a pace suitable for humans to comprehend and act, with simultaneous reciprocal processing.
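Hancock's three function-allocation questions can be made concrete as a small data structure. The sketch below is illustrative only and is not drawn from Hancock (2023), CHARM, or AFAIC: the `Agent`, `Allocation`, and `propose_allocation` names, the normalized workload estimate, and the 0.8 threshold are all hypothetical. It pairs the three questions with the adaptive-aiding idea, in which automation is offered when the operator's estimated cognitive load suggests overload.

```python
from dataclasses import dataclass
from enum import Enum


class Agent(Enum):
    PILOT = "pilot"
    AI = "ai"


@dataclass(frozen=True)
class Allocation:
    function: str         # (1) how: which function is assigned...
    performer: Agent      # ...and to which agent
    allocator: Agent      # (2) who does the allocating
    authorized_by: Agent  # (3) who can authorize the allocation


# Hypothetical normalized workload level above which the AI offers to take over.
WORKLOAD_LIMIT = 0.8


def propose_allocation(function: str, pilot_workload: float) -> Allocation:
    """Adaptive-aiding sketch: the AI proposes to perform a function only when
    estimated pilot workload exceeds WORKLOAD_LIMIT; in every case the pilot,
    not the AI, holds authorization authority (a human-centered choice)."""
    performer = Agent.AI if pilot_workload > WORKLOAD_LIMIT else Agent.PILOT
    return Allocation(function, performer, allocator=Agent.AI, authorized_by=Agent.PILOT)


print(propose_allocation("monitor fuel balance", pilot_workload=0.9).performer)  # Agent.AI
```

Fixing `authorized_by` to the pilot reflects the human-centered framing above; a cognitive-cyber symbiosis design would presumably let that field change with context.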

As automation continues to advance on the flight deck, efforts such as the Cockpit Automated Procedures System (CAPS), developed under the European Union Horizon 2020 program, address the progression from pilot control and decision making to full autonomy, with particular focus on procedures for abnormal or emergency actions. EASA has identified several challenges for AI to integrate successfully into crew assistance or augmentation processes. Of particular focus are Electronic Centralized Aircraft Monitor (ECAM) and Engine-Indicating and Crew-Alerting System (EICAS) functions. Both systems require a human crew command for any action to occur (Alvarez et al., 2023). This system has been in use by Airbus and various defense organizations and is currently in the certification process with EASA. These actions involve the propulsion system and do not currently apply to other flight deck systems.
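The requirement that ECAM and similar crew-alerting functions act only on crew command amounts to a human-in-the-loop gate. A minimal sketch, assuming a hypothetical `CrewCommandGate` class and free-text step descriptions (neither reflects any actual Airbus, Boeing, or CAPS interface), queues AI-proposed steps and executes them only after explicit crew approval:

```python
from dataclasses import dataclass, field


@dataclass(eq=False)  # identity comparison, so two identical steps stay distinct
class ProposedAction:
    description: str
    executed: bool = False


@dataclass
class CrewCommandGate:
    """Hypothetical gate: AI-proposed procedure steps wait in a queue and run
    only after an explicit crew command; nothing executes autonomously."""
    pending: list = field(default_factory=list)
    log: list = field(default_factory=list)

    def propose(self, description: str) -> ProposedAction:
        # The AI may prepare a step, but proposing it does not execute it.
        action = ProposedAction(description)
        self.pending.append(action)
        self.log.append(f"PROPOSED: {description}")
        return action

    def crew_command(self, action: ProposedAction, approve: bool) -> None:
        # Only the crew can release (or reject) a pending step.
        if action not in self.pending:
            raise ValueError("unknown or already-handled action")
        self.pending.remove(action)
        if approve:
            action.executed = True
            self.log.append(f"EXECUTED: {action.description}")
        else:
            self.log.append(f"REJECTED: {action.description}")
```

Keeping `propose` and `crew_command` separate lets the AI prepare a procedure while execution authority stays with the crew.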

Artificial Intelligence and Pilot Perceptions of Trust and Reliability

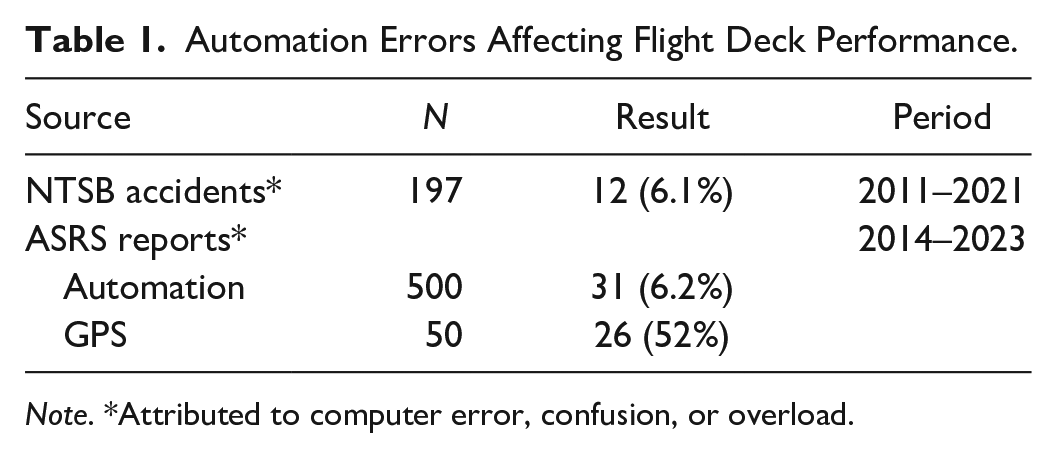

AI applications for flight deck systems will continue to evolve with the objective of reducing accidents and errors attributed to automation. To better understand the extent of accidents related to automation malfunctions, events reported in the NTSB database over a 10-year period were assessed. To identify non-accident events related to automation malfunctions, ASRS data for commercial operations were examined, limited to automation anomalies and related human factors aspects.

The results shown in Table 1 were extracted by thematic analysis from the NTSB Final Accident Reports database (https://www.ntsb.gov/investigations/AccidentReports/Pages/Reports.aspx), selecting reports in which computer error was identified as a cause.

Table 1. Automation Errors Affecting Flight Deck Performance.

Note. *Attributed to computer error, confusion, or overload.

The ASRS Database Report Sets (N = 50 each) were examined for specific indications of automation malfunctions and for GPS data that were unexplained, confusing, or contradictory (https://asrs.arc.nasa.gov). Events from the NTSB database for 2011 to 2021 revealed 197 Part 121 accidents, of which 12 (6%) identified computer information and automation error as the primary cause. ASRS database inquiries revealed 31 automation malfunctions during 2014 to 2023 that resulted in confusion or overload. A third inquiry, covering 50 GPS automation-related failures on commercial flight decks during 2022, indicated that 26 (52%) were attributed to unknown causes. The ASRS events that contained material suitable for assessment were then evaluated to determine the extent to which additional applications of AI might resolve known human or system limitations. The 57 episodes examined established that automation errors affected flight operations and suggest that AI could be of significant use in diminishing disruptive effects and providing corrective actions. However, pilots would need to trust that AI would reliably make safe and effective corrective actions.
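The proportions reported above can be checked with simple arithmetic. The sketch assumes, since the text does not state it explicitly, that the 57 episodes are the 31 ASRS automation malfunctions plus the 26 GPS events of unknown cause.

```python
def pct(part: int, whole: int) -> float:
    """Share of `part` in `whole`, as a percentage."""
    return 100 * part / whole


ntsb_share = pct(12, 197)   # Part 121 accidents with automation error as primary cause
gps_unknown = pct(26, 50)   # GPS automation-related failures with unknown cause
episodes = 31 + 26          # assumption: ASRS automation events + GPS unknown-cause events

print(f"{ntsb_share:.1f}% {gps_unknown:.1f}% {episodes}")  # 6.1% 52.0% 57
```

The NTSB share is 6.1% before rounding, consistent with the 6% reported.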

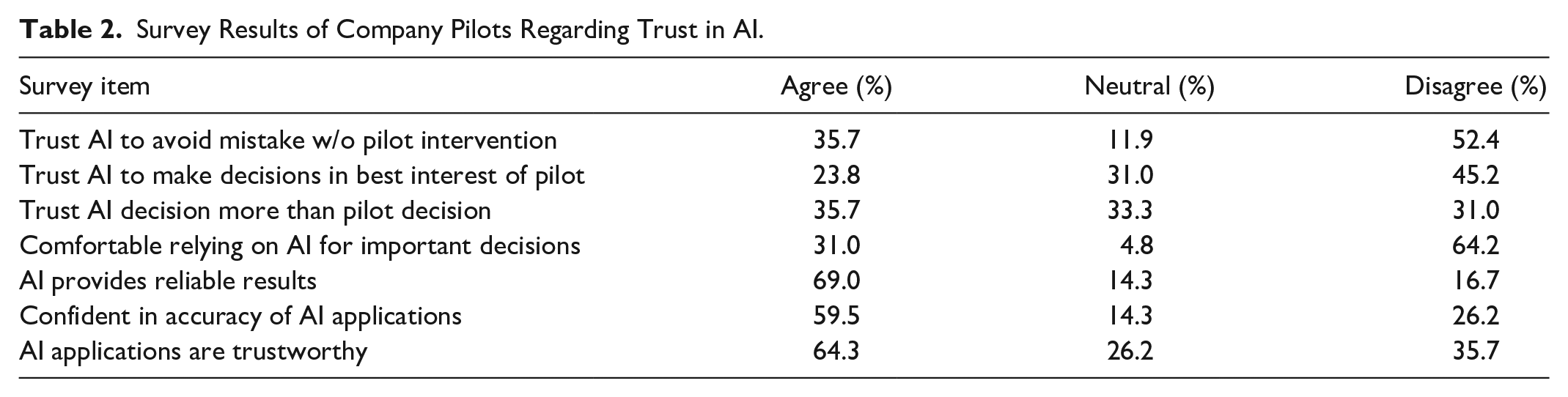

To address the trust issue in part, an online survey of U.S. aviation workers collected demographic data and responses to 33 closed-ended statements, grouped into use and trust categories and rated on a 7-point scale; the results are shown in Table 2. Among the 273 respondents, data for those identifying as commercial company pilots (N = 42) were tabulated from the Trust category (8 items) relevant to the current subject of AI use in aviation systems. In general, pilots reported that they trust AI to provide reliable results (69%), think AI applications are trustworthy (64.3%), and have confidence in AI applications (59.5%). Conversely, regarding flight operations, pilots did not trust AI to make important decisions (64.2%) or to avoid mistakes without pilot intervention (52.4%).
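Because every percentage in the pilot subsample is a share of N = 42, each should correspond to a whole number of respondents. The sketch below back-calculates the implied counts (these counts are inferred here, not reported in the source) and checks that each recomputed percentage lies within 0.1 percentage point of the reported value. The 64.2% figure, for instance, corresponds to 27 of 42 (64.29%), the same count as the 64.3% item, so the two appear to differ only in rounding.

```python
N_PILOTS = 42

# Reported percentages for the five Trust items discussed in the text.
reported = {
    "trust AI to provide reliable results": 69.0,
    "think AI applications are trustworthy": 64.3,
    "have confidence in AI applications": 59.5,
    "do not trust AI to make important decisions": 64.2,
    "do not trust AI to avoid mistakes without pilot intervention": 52.4,
}


def implied_count(percent: float, n: int = N_PILOTS) -> int:
    """Nearest whole number of respondents consistent with a reported percentage."""
    return round(percent * n / 100)


for item, p in reported.items():
    k = implied_count(p)
    print(f"{item}: {k}/{N_PILOTS} = {100 * k / N_PILOTS:.1f}% (reported {p}%)")
```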

Table 2. Survey Results of Company Pilots Regarding Trust in AI.

The survey results indicate that pilots have a fair degree of trust in AI in general but marked reservations about AI on the flight deck. A more targeted inquiry is needed to determine which systems would be of greater concern if more advanced AI were integrated. Nonetheless, the survey results on commercial pilots' levels of confidence or concern related to AI mark a baseline that could help guide AI implementation going forward. A key intention is to determine whether AI developments on the flight deck can be presented in a manner that partially assuages concerns about replacing human operators or substantially revising processes for interpreting and transforming data. One element of trust in a relationship is that each party is willing to be vulnerable to the actions of the other (Mayer et al., 1995). This concept is explored at length in the risk assessment literature; applied to human-AI trust, it raises the question of how much vulnerability or risk each party can accept, and in what circumstances. Collectively, the incidence of automation and computer errors on the flight deck, the low level of trust in AI decision making, and the challenges of cognitive teaming elevate the importance of keeping the pilot in the loop for decisions and risk assessment.

The NTSB and FAA data findings over the period 2011 to 2021 indicated that flight deck errors attributed to automation malfunctions are on the rise. Although AI is becoming more integrated into flight deck systems and pilot functions, the pilot trust survey suggests a substantial level of distrust in AI to make important decisions. Pilots also believe they are necessary to prevent AI from making critical mistakes. Notably, a linear trajectory of AI development and integration on the flight deck does not clearly differentiate among potential changes in pilot roles or functions, and this bears further examination.

Parallel Trajectories of Artificial Intelligence Development

A comprehensive timeline for AI application and integration in commercial aviation has been developed by EASA and affects AI applications for all industries. The accompanying regulation establishes the world's first comprehensive guidelines and timetable for AI development and implementation. The roadmap encompasses three levels: Level 1 focuses on human assistance and augmentation, with expected implementation by 2025; Level 2 encompasses human-AI teaming, with implementation through 2035; and Level 3 shifts to advanced automation and autonomous AI through 2050, although these end dates may change as the state of the art develops (European Union Aviation Safety Agency [EASA], 2023). Overall, the EASA roadmap is linear and combines various deliverables in organic phases. Because of this linear evolution across the three levels, the roadmap does not clearly distinguish where more complex AI begins to affect pilot roles.

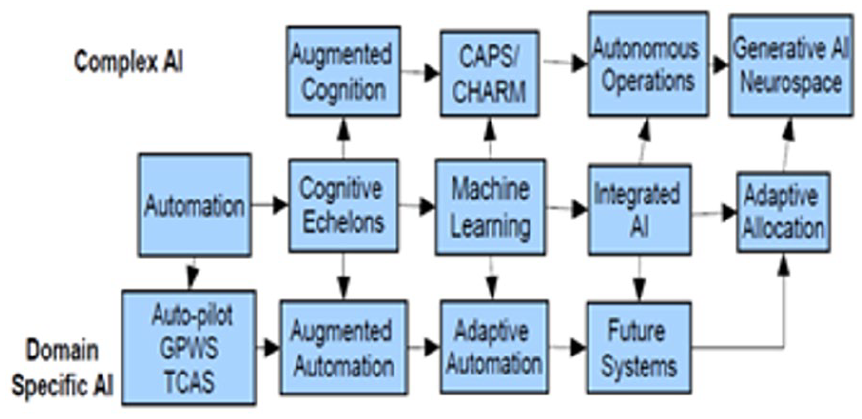

Consequently, the authors considered that, rather than a linear, all-encompassing progression, there might be parallel tracks for aviation applications. By separating the linear evolution into two trajectories that share the automation and AI continuum, it becomes possible to illustrate how domain-specific AI differs from advanced/complex AI in applications affecting the flight deck. The distinction becomes more pronounced when considering future versions that employ more interactive teaming of AI with human operators. In part, we expect that creating parallel tracks may ease some concerns over the rapid proliferation of AI developments in the current culture as they might apply to aircraft systems.

Our initial approach examined the EASA AI Roadmap 2.0 (EASA, 2023) to identify development levels, followed by assessing the potential for parallel trajectories that separate existing and near-future application levels of flight deck systems from more complex human-cognition types of AI functions, such as distributed or reduced-crew operations. With a better sense of the extent of automation errors and trust levels of pilots regarding potential AI assistance or intervention, attention now can be directed to the progression of AI in near future flight deck systems as distinct from more autonomous or distributed cognition networks.

Figure 1 shows the two envisioned tracks that separate the evolution of AI. Both tracks of AI development on the digital flight deck began with automation. The first track, which we term the Domain-Specific AI Track, started with devices intended to reduce pilot workload and enhance safety. It moved into forms of assistive functions and later to alternative actions implemented without requiring pilot concurrence. As fly-by-wire technology evolved, more actions were mediated via aircraft computers and algorithms using sensor data and other sources to assess flight and engine parameters. The second track, which we term the Complex AI Track, is oriented toward more interactions and potential teaming with machine intelligence and learning systems (e.g., cognitive-cyber symbiosis). Herein lie the versions of SPO and reduced crewing for the digital flight deck.

Figure 1. Parallel trajectories for AI development on the flight deck.

Applications of domain-specific AI on the digital flight decks of modern airliners have been a mainstay for decades, in the form of GPWS, TCAS, and other systems. More recent advances in AI technology and its potential have heightened interest, as well as concerns, among human factors specialists, pilots, manufacturers, operating airlines, and regulators. Objections have been raised to RCO, SPO, and similar proposed practices (Myers & Starr, 2021). As the complexity of AI applications grows, so do questions of reliability, trust, and transparency. Development along the Domain-Specific Track is likely to remain within dedicated applications, such as ADS-B technology. Applications can develop substantially while incorporating aspects that pertain to flight deck operations within the domains serviced aboard the aircraft. At the future-systems end of the track, the limitations will become more profound when fusion with integrated AI is involved, as with digital flight rules (Wing et al., 2022). When interactions with air traffic control and company operations centers are added, the combined issues multiply and will likely require more complex AI for reliability (trustworthiness) and safe resolution.

Teaming and generative cognition separate development into the Complex Track, which is oriented toward more cognitive interactions and potential teaming with machine intelligence and learning systems; it begins further along the automation continuum and establishes a parallel line of development. When teaming humans with AI is considered, trust becomes the primary issue, as does function allocation, and this underscores the need for humans to actively participate in the decision loop (Nørgaard & Linden-Vørnle, 2021). As our survey results revealed, there is a substantial gap in trust in this regard. Since we don't know what we don't know, the learning curve for development will evolve formatively as innovative achievements advance the applications of complex AI. If historical patterns are any guide, as the AI learning curve advances, unforeseen issues will arise that complicate progress toward the transparent and comprehensive human-like cognition that AI portends.

Finally, when AI applications are viewed as parallel trajectories with limited crossover in the foreseeable future, commercial airline pilots might have a more secure understanding of the limitations and developmental timelines affecting the digital flight deck.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.