Abstract

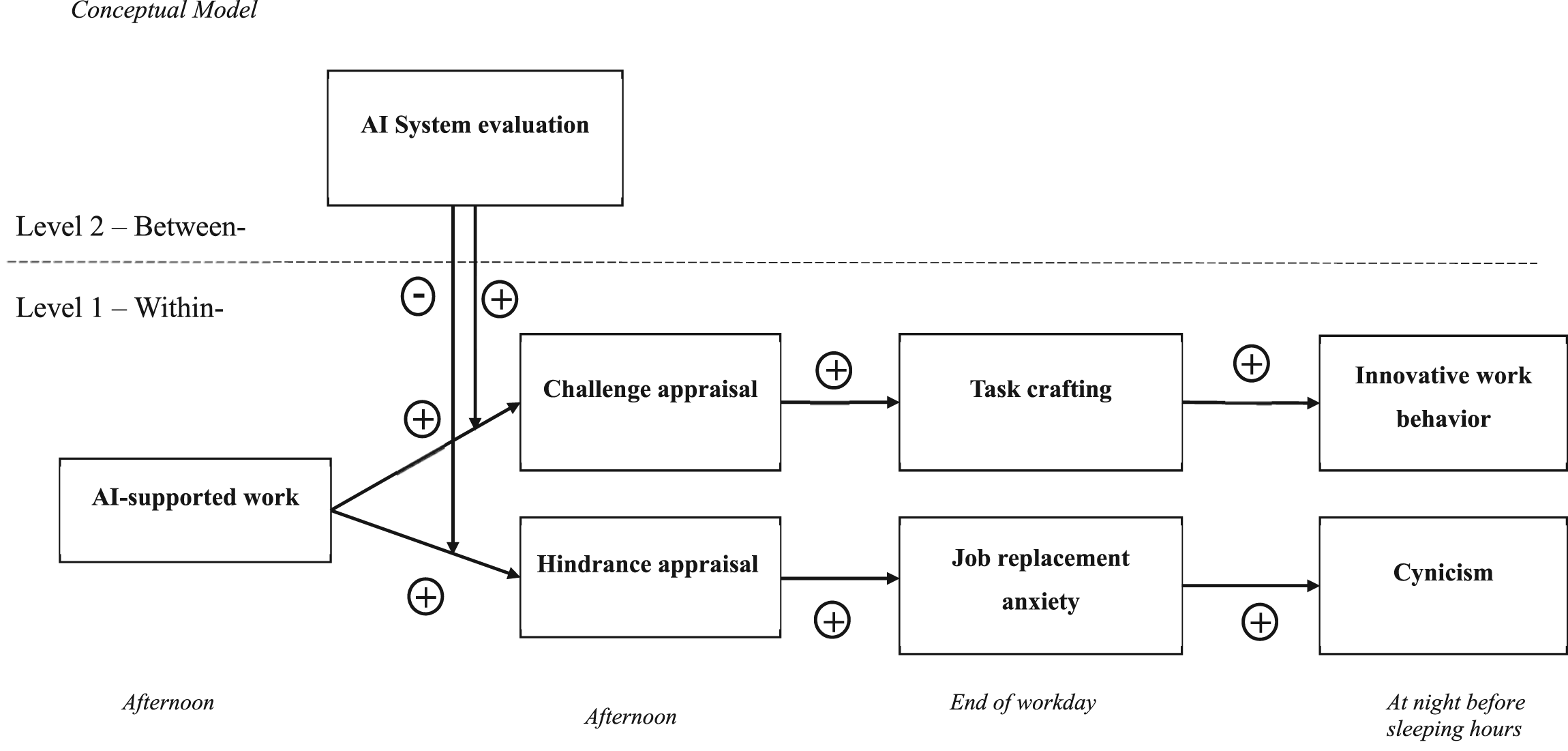

Drawing on the Challenge–Hindrance Stressor Framework (CHSF), we examined how AI-supported work functions as a daily stressor that can elicit both engagement and disengagement responses depending on individual challenge and hindrance appraisals. Using a 10-day diary study design conducted in the US (N = 173), we tested dual pathways through which daily AI-supported work influenced employee behavioral and attitudinal responses. On days when employees perceived having more AI-supported work, they were more likely to appraise it as a challenge, which in turn was associated with higher levels of task crafting and innovative work behavior. At the same time, AI-supported work was also appraised as a hindrance, triggering job replacement anxiety and resulting in higher levels of organizational cynicism. Furthermore, AI system evaluation, a general attitude toward AI systems, moderated the hindrance pathway such that individuals with more favorable AI evaluations were less likely to perceive AI-supported work as a hindrance. Our findings extend the CHSF to the context of AI, highlighting that the same AI stimulus can evoke distinct psychological and behavioral responses depending on appraisal and attitude. We discuss implications for theory and for organizations seeking to implement AI in ways that enhance positive employee outcomes while mitigating adverse effects, thereby contributing to a more human-centric approach to AI integration at work.

Introduction

Artificial intelligence (AI) technologies are reshaping industries, creating new employment opportunities and altering work conditions (Haipeter, 2020). AI encompasses self-learning technologies capable of human-like performance (Batin et al., 2017) and has become a technological priority across organizations (Davenport & Ronanki, 2018). Since 2023, the share of employees using AI in their role a few times a year has nearly doubled from 21% to 40%, while the share using it a few times a week or more has increased from 11% to 19%, and daily AI use has doubled from 4% to 8% since 2024 (Pendell, 2025). During the same period, the proportion of organizations using AI in at least one business function increased from 78% to 88% (Singla et al., 2025).

This dramatic growth raises critical questions regarding the potential effects of AI on individuals’ work-related outcomes. On the one hand, AI can optimize work by automating routine tasks, freeing time and cognitive resources for more challenging and meaningful activities and thereby fostering initiative and creativity (Jia et al., 2024). On the other hand, it can trigger concerns about personal growth and individual development: when investment in human potential appears to diminish in favour of technology-centric work, employees may fear for their job security and, in turn, develop negative work attitudes.

Despite the growing integration of AI into everyday work, our understanding of AI’s complex effects remains limited, particularly regarding the daily experience of receiving AI support. Existing research has largely focused on AI readiness (Holmström, 2022), optimizing human-AI collaboration (Jia et al., 2024), trust in AI (Solberg et al., 2022), and human-AI task allocation (Chowdhury et al., 2023). Yet, these studies overlook the proximal, recurring nature of AI-supported work. As AI becomes embedded in routine processes, providing suggestions, automating decisions, or assisting execution, individuals are increasingly supported by it. These interactions likely vary day-to-day, and their appraisal and impact may shift accordingly. Still, the psychological impact of such interactions remains poorly understood, despite potentially exerting a stronger and more dynamic influence than distal constructs like AI awareness or organizational AI adoption.

To address this gap, we build on the revised challenge-hindrance stressor framework (CHSF, LePine, 2022; Podsakoff et al., 2023). We conceptualise AI-supported work as a job demand and, accordingly, a stressor because it entails changes in work requirements that necessitate adaptation. Consistent with Lazarus and Folkman (1984), stressors are not inherently positive or negative; rather, their effects depend on individuals’ subjective appraisal of the situation. Thus, AI-supported work may simultaneously be experienced as both facilitating and constraining, depending on how employees interpret its relevance to their work goals. Furthermore, empirical evidence shows that individuals can experience substantial intraindividual fluctuation in both challenge and hindrance appraisals across days (e.g., Ma et al., 2021), indicating that individuals do not consistently appraise the same demand in the same way over time.

Based on this, we propose two appraisal-based pathways through which daily AI-supported work influences employee outcomes. From a challenge standpoint, AI-supported work may be perceived as facilitating more efficient task completion, freeing time and cognitive resources, and thereby eliciting an engagement response (LePine, 2022). These resources can be directed towards redefining one’s core activities through task crafting, defined as the proactive modification of the number, scope, or type of work tasks to better align with personal strengths and interests (Wrzesniewski & Dutton, 2001). Such adjustments can stimulate innovative work behavior, defined as the creation, introduction, and application of new ideas within a work role to enhance role performance (Janssen, 2000). In contrast, from a hindrance perspective, AI-supported work can be viewed as an obstacle to growth, signalling reduced need for human input, hindering development and triggering a disengagement response (LePine, 2022). In turn, this may lead to anxiety tied to job replacement (Wang & Wang, 2022), which refers to fears that AI may take over human roles, increase dependency or directly affect job continuity. Experiencing job replacement anxiety may result in individuals becoming psychologically withdrawn, fostering employee cynicism, a dimension of burnout characterized by disengagement, negative attitudes toward the organization, and a reduced sense of one’s perceived value and importance (Dean et al., 1998).

To account for this variation in appraisal, we draw on recent developments in CHSF that emphasize the role of individual and contextual factors in shaping the appraisal process (e.g., LePine, 2022), as well as on research on human-AI interaction showing that AI’s effects depend on perceptions of usefulness, trustworthiness, adaptability, and transparency (e.g., Glikson & Woolley, 2020; Lee & Cha, 2025). Integrating these perspectives, we propose that AI system evaluation, a job-related attitude reflecting the extent to which individuals perceive the AI system as beneficial, supportive, and appropriate for their work goals (Berretta et al., 2023), influences the extent to which AI-supported work is appraised as a challenge and a hindrance stressor. This reflects a general evaluative stance, rather than a task-specific judgement, and is aligned with attitude theory (Ajzen & Fishbein, 1980), positing that general attitudes shape how individuals interpret and respond to specific experiences.

Although a few studies have applied the CHSF to AI-related constructs, key gaps remain. He et al. (2023) and Ding (2021) examined appraisals of AI awareness, but not how direct interaction with AI shapes these appraisals. Liang et al. (2022) linked AI awareness to innovation outcomes without modelling appraisal. Cheng et al. (2023) focused on organizational AI adoption and its effects on job crafting, but did not take a daily perspective, nor did they consider its impact on other work-related outcomes. By contrast, our study examines the daily, proximal experience of AI-supported work, showing how momentary appraisals influence both positive (task crafting, innovative work behavior) and negative (job replacement anxiety, cynicism) outcomes.

This study offers several key contributions. First, it extends the conceptual map of AI by introducing the concept of AI-supported work, capturing the perceived supportive value of AI interaction rather than awareness or organizational adoption. This responds to recent calls for a more fine-grained understanding of how AI influences employee attitudes (Pereira et al., 2023). Second, it adds to research on CHSF by examining within-person, day-to-day variation in the appraisal of AI-supported work. Although early work in the transactional model of stress has predominantly assumed that ‘challenge stressors’ would elicit challenge appraisals and ‘hindrance stressors’ would elicit hindrance appraisals (Cavanaugh et al., 2000), more recent scholarship highlights that this assumption is overly simplistic (Pindek et al., 2024). Our study extends this view by demonstrating that AI-supported work, a single, ongoing job demand, can elicit varying appraisals within individuals on a daily basis. Third, it identifies AI system evaluation as an individual job-related attitude that can influence the impact of AI-supported work, addressing LePine’s (2022) call to clarify the conditions under which stressors are appraised. Fourth, it advances the job crafting literature by theorizing AI-supported work as an antecedent of task crafting and its role in fostering innovative work behavior. Finally, through experience sampling, the study captures day-to-day fluctuations in AI-supported work, responding to recent calls for daily-level analysis (Pereira et al., 2023).

In line with the Special Issue’s emphasis on shaping a human-centric future of work, we adopt a perspective that places employees’ subjective experiences at the core of AI integration. The human-centric approach is defined as the way individuals interpret, adapt to, and are affected by AI support, recognizing that the value of emerging technologies cannot be assessed solely through efficiency or performance gains. Instead, it requires understanding how AI reshapes employees’ sense of autonomy, opportunities for growth, and emotional connection to their work. By examining the daily appraisal processes that determine the impact of AI-supported work when experienced as enabling and constraining, and by focusing on behavioral and attitudinal responses, our study helps clarify how AI can be integrated in ways that support rather than constrain meaningful participation in the evolving workplace. The conceptual framework is outlined in Figure 1 and explained in detail in the following section.

Figure 1. Conceptual model

Literature Review and Theoretical Framework

AI has mixed effects on individual work outcomes, with both positive and negative effects documented (Pereira et al., 2023). Frequent interaction with AI systems has been linked to reduced quality of life (Soffia et al., 2024) and increased stress when AI controls work (Klonek & Parker, 2025). Yet, AI can also reduce workloads and automate routine tasks, creating opportunities for growth. As AI becomes embedded in daily tasks, employees increasingly receive AI support, yet its daily impact remains poorly understood.

As indicated earlier, we conceptualize AI-supported work as a job demand and, accordingly, a stressor that entails changes in work requirements to which individuals must adapt. Specifically, AI-supported work involves shifts in how tasks are performed, requiring individuals to adapt their cognitions and behaviours in response to evolving work practices. Given the fast pace at which AI tools evolve, this need for cognitive and behavioral adjustment is ongoing rather than episodic. Such adjustment requirements constitute a defining feature of stressors (Lazarus & Folkman, 1984; LePine et al., 2005).

To better understand the effects of AI-supported work, we draw on the revised CHSF, grounded in the transactional model of stress and coping (TMS). This perspective emphasizes that cognitive appraisals play a central role in determining whether a situation is experienced as stressful and in shaping subsequent responses (Lazarus & Folkman, 1984; LePine, 2022). Accordingly, appraisal functions as the key mechanism linking a stressor to its outcomes (Webster et al., 2011). While seminal CHSF work acknowledged the role of appraisal (Cavanaugh et al., 2000), early studies largely categorised stressors by their content (e.g., LePine et al., 2005). Subsequent research, however, has demonstrated that the same stressor can simultaneously elicit both challenge and hindrance appraisals (e.g., Kim & Beehr, 2020; Webster et al., 2011), prompting theoretical refinements that place greater emphasis on the appraisal process (LePine, 2022). Thus, although due to economic rationality certain stressors are more likely to be appraised as challenges or hindrances, the CHSF recognizes that appraisals are not universal and do not follow a fixed or uniform sequence (Podsakoff et al., 2007). Rather, they are shaped by individuals’ evaluation of how a situation relates to their goals, availability of resources, and prior experiences (Podsakoff et al., 2023), implying that what is considered a stressor for one individual may not be perceived similarly by another (O'Brien & Beehr, 2019). Moreover, because these evaluative processes are sensitive to psychological states, such as mood, appraisals may vary not only between individuals but also within the same individual across time (O'Brien & Beehr, 2019).

This dynamic and subjective nature of appraisal is particularly relevant in the context of AI-supported work, where shifts in the nature of work open space for contrasting appraisals. Although using AI to support work has the potential to alleviate workload and enhance productivity by automating tasks and providing employees with efficient tools, it can also introduce new requirements, such as the need to acquire new skills, heightened performance expectations, or increased obligation to demonstrate continued value and indispensability at work. These shifts in work demands and ways of working can trigger mixed appraisals, encompassing both challenge appraisals (reflecting potential for growth, learning, and achievement) and hindrance appraisals (reflecting perceived constraints or obstacles to goal attainment) (Cavanaugh et al., 2000; Searle & Tuckey, 2017).

The proposition that support can be appraised as either challenging or hindering is also reflected in prior research on task-focused co-worker support. While such support has been generally associated with positive outcomes, such as higher job satisfaction, involvement and performance (Ducharme & Martin, 2000; Jolly et al., 2021; Mathieu et al., 2019), receiving excessive support can also have detrimental effects, as it may complicate employees’ workflow and reduce their sense of control in deciding how tasks are completed, thereby weakening engagement and performance (Brock & Lawrence, 2014; Rafaeli & Gleason, 2009; Yun & Beehr, 2023). Similarly, AI-supported work can be appraised both as a challenge and a hindrance, as it can both enable and thwart personal growth. In what follows, we apply this framework to theorize its dual impact on employee outcomes.

The Challenge Pathway: AI-Supported Work, Challenge Appraisal, Job Crafting and Innovative Work Behavior

In her conceptual revision of the CHSF model, LePine (2022) draws on two theories to explain the mechanisms through which challenge and hindrance appraisals are translated into behavior: motivation theory and Conservation of Resources (COR; Hobfoll, 1989) theory, which posits that individuals strive to obtain, retain, and protect resources, and that stress occurs when these resources are lost. She proposes that appraisals initiate affective-motivational responses of engagement or disengagement, which in turn lead to resource gains or losses.

In the context of AI-supported work, on days when individuals perceive this support as a challenge, they are likely to first attend to the resources that can be gained, such as relief from routine tasks (Malik et al., 2021), greater confidence in the output produced, or relief over sharing responsibility for the outcomes. This positive appraisal serves as a basis for increased motivational engagement with one’s role, which can lead to further resource gains.

We propose that one key manifestation of this engagement in response to AI-supported work is the day-level rearrangement of job tasks to extend or compensate for tasks taken over by AI. With AI managing repetitive tasks that would normally consume cognitive and temporal resources, individuals gain resources they can use to focus on unstructured, high-level tasks that were not initially part of their job description. This behavioral adaptation is referred to as job crafting. Job crafting involves job redesign (Wrzesniewski & Dutton, 2001), allowing employees to independently adjust job aspects to better align with their personal needs, preferences, and abilities (Berg et al., 2008). Its three forms include relational (adjusting the relational aspects of a job, such as the nature and intensity of workplace interactions), cognitive (reframing how the job is perceived), and task crafting (modifying task boundaries, such as the type, amount, or scope of job tasks; Wrzesniewski & Dutton, 2001). We focus specifically on task crafting as the most proximal behavioral response to daily AI-supported work, as it primarily influences how tasks are carried out, giving employees opportunities to alter how they allocate, expand, or refine their task activities. For example, employees can automate routine components or adopt new AI-enabled approaches to completing their work, thereby freeing time and cognitive resources to take on more complex or varied tasks, in turn reshaping their task boundaries. By contrast, relational crafting is less immediately affected by AI-supported work, as AI primarily affects task execution rather than the structure of interpersonal relationships, providing less immediate impetus for individuals to alter how they relate to others at work. 
Similarly, cognitive crafting concerns employees’ reflections on the meaning or purpose of their work, and although AI support may give rise to such reflections, these are likely to represent a more distal response that may follow more proximal changes in task crafting, given that individuals experience the impact of AI support through changes in how they organize and execute their tasks.

As employees engage in task crafting to redefine and enlarge their roles on a given day, we argue that this, in turn, increases their individual innovative work behavior on that same day. Although empirical studies linking job crafting and innovative work behavior are still limited (see Afsar et al., 2019; Lee & Lee, 2018), there are compelling reasons to expect a positive impact of task crafting on innovative work behavior. Actively crafting tasks enhances person-environment fit (Tims et al., 2012), which has been shown to boost creativity (Livingstone et al., 1997). Moreover, the adaptive and exploratory features of task crafting closely align with innovative work behavior. As Lin et al. (2017) argue, task crafting and innovative work behavior are akin behaviors. Task crafting typically involves numerous changes, such as moving task boundaries, altering the nature and number of tasks, and reconfiguring work materials, areas, and procedures. In the same way, the development of ideas associated with innovative work behavior also requires generation and exploration. Daily crafting may help employees perceive their work from new perspectives, potentially identifying new applications for AI, and consequently identifying and introducing innovative work behaviors that enable doing the work more efficiently.

In sum, on days when AI-supported work is more strongly appraised as a challenge, it directs individuals’ attention to resource gains, leading to an engagement-oriented response that is manifested behaviorally through task crafting. This proactive reshaping of their daily tasks facilitates the emergence of innovative work behavior (Hu et al., 2020). Therefore, the following hypothesis is proposed:

Daily challenge appraisal and daily task crafting sequentially mediate the positive daily relationship between AI-supported work and innovative work behavior.

The Hindrance Pathway: AI-Supported Work, Hindrance Appraisal, Job Replacement Anxiety and Employee Cynicism

Following the revised CHSF, another possible outcome of the appraisal process is perceiving AI-supported work as a hindrance, thereby eliciting disengagement as the likely proximal affective/motivational response. Disengagement is expected to negatively impact performance, growth, and well-being, and deplete psychological resources through the experience of frustration, anxiety and anger (LePine, 2022).

In the context of AI-supported work, on days when employees are confronted with AI’s advanced capabilities, they may perceive this as a hindrance to their personal growth and career continuity. Specifically, AI support may be interpreted as constraining opportunities to demonstrate and develop their skills, maintain autonomy, or signal personal value, paralleling evidence that excessive co-worker support can disrupt task ownership, reduce autonomy, and limit personal value (Brock & Lawrence, 2014; Rafaeli & Gleason, 2009). Such hindrance appraisals reflect individuals’ interpretations of AI support as an obstacle to growth, insofar as it may impose unwelcome changes in work patterns, undermining preferred ways of working, and diminishing individual control. AI-supported work may thus be perceived to signal a reduced need for human contribution, rendering self-development efforts less worthwhile as investment becomes increasingly technocentric rather than human-centric, and therefore appraised as a hindrance to development and goal achievement.

This day-specific hindrance appraisal, in line with COR, is likely to trigger a disengagement response (LePine, 2022), depleting motivational and emotional resources and giving rise to affective states such as unease and anxiety (LePine, 2022). In this context, such anxiety is likely to centre on concerns about being replaced or rendered obsolete by AI, manifesting as heightened job replacement anxiety (Nam, 2019). Job replacement anxiety serves as a cognitive and emotional precursor that shapes employees’ attitudes toward their organization. On days when individuals experience higher job replacement anxiety, they are more likely to develop negative attitudes towards their work and organization. Such perceptions may be seen as a breach of the psychological contract (Andersson, 1996), which entailed security in exchange for contribution. As a result, individuals will start experiencing organizational cynicism, characterized by frustration, hopelessness, and disillusionment, alongside contempt toward and distrust of the organization (Guastello et al., 1992). Cynicism, rooted in the Greek Cynic tradition of moral idealism, is contemporarily characterized by apathy and resignation (Kanter & Mirvis, 1989). It is also a key component of burnout, representing an interpersonal distancing from work, where individuals become excessively detached and lose emotional and cognitive engagement with their work (Maslach et al., 2001). Thus, cynicism reflects estrangement from the organization, with individuals experiencing a negative attitude towards work and distancing themselves from it. This is also consistent with the CHSF’s prediction that hindrance appraisals prompt a disengagement response.

In sum, drawing on the CHSF, we propose a mediation model in which, on days when AI-supported work is more strongly appraised as a hindrance, it triggers job replacement anxiety, which in turn results in heightened employee cynicism. Therefore, the following hypothesis is proposed:

Daily hindrance appraisal and daily job replacement anxiety sequentially mediate the positive daily relationship between AI-supported work and employee cynicism.

The Moderating Role of AI System Evaluation

According to CHSF and its underpinning TMS, individuals’ appraisal of stressors depends on their perceived relevance or meaning, which in turn is shaped by individual and contextual characteristics (LePine, 2022). Parallel research in human-AI interaction has shown that the effects of AI systems largely depend on whether they are perceived as useful, trustworthy, adaptive, and transparent (e.g., Glikson & Woolley, 2020; Lee & Cha, 2025). Building on this convergence, we focus on individuals’ AI system evaluation, a job-related attitude that captures the extent to which individuals perceive the AI system as beneficial, supportive, and appropriate for their work goals (Berretta et al., 2023). While AI-supported work is a daily task-specific judgment, AI system evaluation reflects a general evaluative stance on the value of the AI system. This reasoning is also aligned with attitude theory (Ajzen & Fishbein, 1980), which posits that stable attitudes shape how individuals interpret and respond to specific experiences.

In line with CHSF, we propose that AI system evaluation influences the relationship between AI-supported work and stressor appraisal. Specifically, we propose that individuals’ overall evaluation of the AI system (a Level 2, between-person construct) moderates how they appraise daily fluctuations in AI-supported work. On days when AI-supported work is more prominent, individuals with more favorable attitudes towards the AI system are more likely to interpret the same daily AI support as a challenge, viewing it as an opportunity for growth and reallocating time and cognitive resources to task crafting. In contrast, individuals with more negative attitudes towards the AI system are more likely to appraise it as a hindrance, focusing on the potential for role devaluation or autonomy loss. Thus, the impact of perceived variability in momentary support is filtered through the stable evaluative lens of overall AI system evaluation. Consequently, we expect that AI system evaluation will moderate the within-person indirect effects of AI-supported work. Specifically, higher AI system evaluation will strengthen its positive indirect effect on innovative work behavior (via challenge appraisal and task crafting) and weaken its positive indirect effect on employee cynicism (via hindrance appraisal and job replacement anxiety).

Person-level AI system evaluation moderates the day-specific indirect relationship between AI-supported work and innovative work behavior through challenge appraisal and task crafting. This daily relationship is stronger for individuals who evaluate the AI system more positively compared to those with lower evaluations.

Person-level AI system evaluation moderates the day-specific indirect relationship between AI-supported work and cynicism through hindrance appraisal and job replacement anxiety. This daily relationship is weaker for individuals who evaluate the AI system more positively compared to those with lower evaluations.
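One common way to formalize the two moderated sequential mediation hypotheses above is sketched here. The notation is ours and purely illustrative (it does not appear in the source): $X_{ij}$ is AI-supported work for person $j$ on day $i$, $W_j$ is person-level AI system evaluation, $a_0$ and $a_1$ describe the first-stage path from AI-supported work to the appraisal, $d$ is the path from the appraisal to the second mediator, and $b$ is the path from the second mediator to the outcome. The sketch assumes that only the first stage is moderated, in line with the argument that AI system evaluation shapes how AI-supported work is appraised, and that the later paths are fixed across persons.

```latex
% Conditional first-stage slope for person j (appraisal regressed on AI-supported work)
a_j = a_0 + a_1 W_j

% Conditional indirect effect of AI-supported work on the outcome at a given W_j
\omega(W_j) = (a_0 + a_1 W_j)\, d\, b

% Index of moderated mediation: change in the indirect effect per unit of W_j
\frac{\partial\, \omega(W_j)}{\partial W_j} = a_1\, d\, b
```

Under these assumptions, the challenge-pathway hypothesis corresponds to $a_1 d b > 0$ (the positive indirect effect grows with more favorable evaluations), whereas the hindrance-pathway hypothesis corresponds to $a_1 d b < 0$ for the analogous hindrance coefficients (the positive indirect effect shrinks).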

Methodology

Design

Recent research highlights that job characteristics and employee behaviors vary not only between individuals (between-person), but also within individuals over time (within-person) (De Gieter et al., 2018). To capture these dynamics, this study employs a daily diary design, aiming to examine the effects of AI-supported work on work-related outcomes at the daily level. Diary designs with repeated measures are known to have methodological advantages over cross-sectional and multi-wave designs, such as reducing retrospective bias (Ohly et al., 2010). This approach is particularly effective for capturing short-term dynamics, providing insights regarding the impact of AI-supported work (Bolger et al., 2003).
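The between-person versus within-person distinction described above can be illustrated with a minimal sketch. It splits each daily score into a person mean (the between-person part) and a deviation from that mean (the within-person part). The record layout, function name, and data values are hypothetical and are not taken from the study.

```python
from collections import defaultdict


def split_within_between(records):
    """Split diary scores into person means and daily deviations.

    records: iterable of (person_id, day, score) tuples (hypothetical layout).
    Returns (person_means, deviations), where deviations maps
    (person_id, day) to the score minus that person's own mean.
    """
    records = list(records)  # allow generators; we iterate twice
    scores_by_person = defaultdict(list)
    for person_id, _day, score in records:
        scores_by_person[person_id].append(score)

    # Between-person component: one mean per participant.
    person_means = {
        pid: sum(scores) / len(scores)
        for pid, scores in scores_by_person.items()
    }
    # Within-person component: how far each day departs from the person's mean.
    deviations = {
        (pid, day): score - person_means[pid]
        for pid, day, score in records
    }
    return person_means, deviations


# Made-up illustrative data: two participants, three days each.
diary = [
    ("p1", 1, 2.0), ("p1", 2, 4.0), ("p1", 3, 3.0),
    ("p2", 1, 4.0), ("p2", 2, 5.0), ("p2", 3, 4.5),
]
person_means, deviations = split_within_between(diary)
```

In this toy data, the two participants differ in their average level (between-person), while each also fluctuates around their own average from day to day (within-person), which is the variation a diary design is meant to capture.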

Participants and Procedure

Prior to data collection, ethical approval was obtained from the ethics committee of the corresponding author’s research institution. The study employed a daily diary design. Data were collected three times per day over 10 consecutive working days (Monday to Friday). Participants were recruited from the United States via Prolific, a widely used online recruitment platform that offers high-quality participant recruitment at a reasonable cost (Palan & Schitter, 2018). Participants were employed across various sectors such as finance, insurance, construction, energy and water supply, education, hospitality, healthcare, craftsmanship, IT & communications, art & entertainment, agriculture & forestry, public administration, production & industry, traffic, and science. Job roles included, for example, data analysts, data scientists, chief information officers, creative directors, heads of operations, and HR managers. To ensure appropriate participant selection, we initially used Prolific’s internal screeners. Only participants who were employed full-time, worked more than 30 hours per week, and used AI every day or multiple times per day were automatically sent the study details by the Prolific system. An online screening questionnaire, hosted on the SoSci Survey platform (https://www.soscisurvey.de/en/index), was then distributed via Prolific to those participants who signed up for the research. This questionnaire included a participant information sheet, consent form, and screening questions. We only selected participants who met the following additional screening criteria: (a) had a consistent daily work schedule (i.e., a regular and stable daily work schedule with similar start and end times each day), so that daily surveys reflected comparable portions of the workday, and (b) interacted with AI in their daily work activities. They were invited to complete the baseline survey on the Friday of the preceding week. 
The baseline survey collected demographic information (e.g., gender, age), typical work start time, time zone, three attention checks, and the measure of AI system evaluation. Only those participants who passed all three attention checks were invited to the daily surveys. Daily surveys were automatically distributed starting the following Monday. Based on their reported start of workday, each participant received the surveys containing measures of AI-supported work, challenge and hindrance appraisals 5 hours after starting work; the surveys containing measures of job replacement anxiety and task crafting 8 hours after starting work (end of working day); and the surveys containing measures of cynicism and innovative work behavior 11 hours after starting work (at night before sleeping hours) (see Gochmann et al., 2022). A reminder was sent 1 hour after each survey became available, and participants had a two-hour window to complete it before it expired. Participants received compensation of $0.39 for screening, $1.31 for the baseline survey, and $0.99 for each daily survey completed. They also received a bonus of $6.57 if they completed all daily surveys on at least seven out of ten days.
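The survey timing and compensation rules described above can be sketched as follows. The offsets (5, 8, and 11 hours after the start of work), the one-hour reminder, the two-hour completion window, and the payment amounts come from the procedure. The function names, survey labels, and example date are hypothetical. The bonus rule is read here as "at least seven days on which all three daily surveys were completed", which is our interpretation of the text.

```python
from datetime import datetime, timedelta

# Hours after the reported start of work at which each daily survey opens.
SURVEY_OFFSETS_H = {"midday": 5, "end_of_work": 8, "evening": 11}
REMINDER_AFTER = timedelta(hours=1)   # reminder sent 1 hour after opening
WINDOW = timedelta(hours=2)           # survey expires 2 hours after opening

# Payments in cents, to avoid floating-point rounding.
SCREENING, BASELINE, PER_DAILY_SURVEY, BONUS = 39, 131, 99, 657
SURVEYS_PER_DAY = 3
BONUS_MIN_COMPLETE_DAYS = 7  # assumed reading of the bonus rule


def daily_schedule(work_start):
    """Return opening, reminder, and expiry times for one workday."""
    schedule = {}
    for name, hours in SURVEY_OFFSETS_H.items():
        opens = work_start + timedelta(hours=hours)
        schedule[name] = {
            "opens": opens,
            "reminder": opens + REMINDER_AFTER,
            "expires": opens + WINDOW,
        }
    return schedule


def payment_cents(surveys_per_day):
    """Total payment for a participant who passed screening and baseline.

    surveys_per_day: list with the number of daily surveys (0 to 3)
    completed on each study day.
    """
    total = SCREENING + BASELINE + PER_DAILY_SURVEY * sum(surveys_per_day)
    complete_days = sum(1 for n in surveys_per_day if n == SURVEYS_PER_DAY)
    if complete_days >= BONUS_MIN_COMPLETE_DAYS:
        total += BONUS
    return total


# Hypothetical participant who starts work at 09:00 on a Monday.
schedule = daily_schedule(datetime(2024, 1, 8, 9, 0))
full_payment = payment_cents([3] * 10)
```

Under this reading, a participant who completes every survey would receive 3,797 cents ($37.97) in total.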

Of the 188 participants who completed the baseline survey, 15 were excluded for not completing any daily surveys, resulting in a final sample of 173 participants. This sample size aligns with recommendations for daily diary studies (Bolger et al., 2003), and the retention rate is comparable to response rates in similar research (see Fisher & To, 2012). The sample included 59% men and 41% women with a mean age of 38.57 (SD = 11.01). In terms of work patterns, 41.1% of participants worked shifts, while 58.9% worked regular hours. Participants reported using AI for work-related activities from 30 minutes to 8 hours per day.

Measures

All items used in the study were assessed on a 5-point scale, ranging from 1 “Strongly disagree” to 5 “Strongly agree”. All study measures, including adapted daily items, are reported in Appendix A.

AI-Supported Work was assessed using a 6-item scale adapted from a measure developed by Doll and Torkzadeh (1998), initially designed to capture the perceived impact of IT on work. This scale was also previously adapted by Sasidharan (2022) to capture the perceived impact of a specific IT system. To fit the purposes of the study, we adapted the items to reflect daily measurement of perceived AI-supported work. A sample item is “Today, AI saved my time”.

Challenge and hindrance appraisal were assessed using the 8-item scale (4 items for each appraisal) developed by Searle and Auton (2015), adapted for daily AI-related measurement. A sample challenge item is “Using AI in my work today makes me think it will keep me focused on doing well”; a sample hindrance item is “Using AI in my work today makes me think it will limit how well I can do”.

Task crafting was captured using a 5-item scale adapted from Slemp and Vella-Brodrick (2013) with items adapted for daily measurement. A sample item is “Today, I introduced new work tasks that I think better suited my skills or interests”.

Innovative work behavior was assessed using a 9-item scale from Janssen (2000) with items adapted for daily measurement. A sample item is “Today, I transformed innovative ideas into useful applications”.

Job replacement anxiety was measured using four items from the job replacement subscale of the broader AI Anxiety scale developed by Wang and Wang (2022), with items selected to align with the study aim and adapted for daily measurement. A sample item is “Today, I felt fear that AI will replace someone’s job”.

Cynicism was measured using the 5 items from the cynicism subscale of the Maslach Burnout Inventory – General Survey (Schaufeli et al., 1996) with items adapted for daily measurement. A sample item is, “Today, I have become less enthusiastic about my work”.

AI System Evaluation was captured using the 5 items used by Berretta et al. (2023) based on Ajzen and Fishbein’s (1980) work. The (adapted) iterations of all five items are similar: “I perceive the AI system as…”, with a 5-point Likert scale ranging from (1) “very bad” to “very good”, (2) “very foolish” to “very wise”, (3) “very unfavorable” to “very favorable”, (4) “very harmful” to “very beneficial”, (5) “very negative” to “very positive”.

Analytic Strategy

Because of the nested structure of our data (i.e., days nested in individuals), we used multilevel modelling to examine the hypothesized relationships. All models were specified in Mplus 8.5 (Muthén & Muthén, 2017), using Bayesian estimation with default non-informative priors. We chose Bayesian estimation because we hypothesized indirect effects, whose estimates are products of coefficients and are therefore typically not normally distributed (Bauer et al., 2006). In such cases, Bayesian estimators produce accurate estimates because they do not rely on the assumption of normality (Muthén & Asparouhov, 2012). In Mplus, the Bayesian estimator also handles missing data on dependent variables automatically by imputing them as part of the estimation process (Asparouhov & Muthén, 2010).

To examine the hypothesized moderated mediation model, we specified first a 1-1-1-1 multilevel mediation model (see Preacher et al., 2010). At the within-person level, we first modelled the relationships between AI-supported work and challenge as well as hindrance appraisal as random slopes to account for the proposed cross-level moderating effect of positive AI system evaluation. We further specified challenge appraisal to predict task crafting. We also modelled hindrance appraisal to predict job replacement anxiety. Subsequently, task crafting was modelled as a predictor of innovative work behavior to reflect the positive consequences of AI-supported work. Furthermore, we specified the relationship between job replacement anxiety and cynicism to reflect the adverse consequences of AI-supported work. We also specified direct effects from AI-supported work to our second-stage mediators (task crafting and job replacement anxiety) and both outcomes (innovative work behavior and cynicism), as well as direct paths of our first-stage mediators (challenge and hindrance appraisal) to innovative work behavior and cynicism, allowing us to test both direct and indirect paths. All relationships besides those between AI-supported work and challenge, as well as hindrance appraisal, respectively, were specified as fixed slopes. To examine the proposed cross-level moderation effect of AI system evaluation in the between-person part of our model, we specified AI system evaluation to predict the random slopes linking daily AI-supported work to challenge and hindrance appraisal, respectively. We also specified direct relationships between challenge and hindrance appraisal and AI-supported work at the between-person level.
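The model described in this paragraph can be summarised in equation form. The following is a sketch in notation introduced here, not the authors' own specification: $X$ denotes daily AI-supported work, $C$ and $H$ challenge and hindrance appraisal, $TC$ task crafting, $JRA$ job replacement anxiety, $IWB$ innovative work behavior, $CYN$ cynicism, and $W$ person-level AI system evaluation. It assumes that each appraisal has a direct path only to the outcome on its own pathway (the text could also be read as including the cross paths), and it omits the between-person relationships among the person means.

```latex
% Within-person level (day t nested in person i)
\begin{align}
C_{ti}   &= \beta^{C}_{0i}   + a^{C}_{i} X_{ti} + e^{C}_{ti} \\
H_{ti}   &= \beta^{H}_{0i}   + a^{H}_{i} X_{ti} + e^{H}_{ti} \\
TC_{ti}  &= \beta^{TC}_{0i}  + b^{C} C_{ti} + c^{TC} X_{ti} + e^{TC}_{ti} \\
JRA_{ti} &= \beta^{JRA}_{0i} + b^{H} H_{ti} + c^{JRA} X_{ti} + e^{JRA}_{ti} \\
IWB_{ti} &= \beta^{IWB}_{0i} + d^{TC} TC_{ti} + f^{C} C_{ti} + c^{IWB} X_{ti} + e^{IWB}_{ti} \\
CYN_{ti} &= \beta^{CYN}_{0i} + d^{JRA} JRA_{ti} + f^{H} H_{ti} + c^{CYN} X_{ti} + e^{CYN}_{ti}
\end{align}

% Between-person level: random slopes predicted by AI system evaluation
\begin{align}
a^{C}_{i} &= \gamma^{C}_{0} + \gamma^{C}_{1} W_{i} + u^{C}_{i} \\
a^{H}_{i} &= \gamma^{H}_{0} + \gamma^{H}_{1} W_{i} + u^{H}_{i}
\end{align}

% Conditional indirect effects at moderator value W
\begin{align}
\text{challenge pathway:} \quad & (\gamma^{C}_{0} + \gamma^{C}_{1} W)\, b^{C}\, d^{TC} \\
\text{hindrance pathway:} \quad & (\gamma^{H}_{0} + \gamma^{H}_{1} W)\, b^{H}\, d^{JRA}
\end{align}
```

Only the first-stage slopes $a^{C}_{i}$ and $a^{H}_{i}$ vary across persons; all other slopes are fixed, so the indirect effects contain no covariance term between random slopes.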

For the assessment of moderated indirect effects, we followed the approach recommended by Hayes and Preacher (2010) by calculating conditional indirect effects at +1 SD and −1 SD of the cross-level moderator (AI system evaluation). An indirect or conditional indirect effect was considered present when the 95% credibility interval of the estimate did not include zero (Preacher et al., 2007).
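The logic of evaluating a conditional indirect effect against its credibility interval can be sketched as follows. This is an illustration only: the draws are simulated placeholders rather than the study's posterior, and all names (`conditional_indirect`, `g0`, `g1`, `b`, `d`, `sd_w`) are hypothetical. The sketch assumes that the moderator is grand-mean centred and that only the first-stage slope depends on the moderator.

```python
import random
import statistics


def percentile(sorted_values, q):
    """Linear-interpolation percentile of an already sorted list, q in [0, 1]."""
    pos = q * (len(sorted_values) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(sorted_values) - 1)
    return sorted_values[lo] + (pos - lo) * (sorted_values[hi] - sorted_values[lo])


def conditional_indirect(draws, w, level=0.95):
    """Summarise (g0 + g1 * w) * b * d across posterior draws.

    draws: iterable of (g0, g1, b, d) tuples, one per posterior draw, where
           g0 is the average first-stage slope, g1 the cross-level interaction,
           and b and d the second- and third-stage fixed slopes.
    w:     moderator value at which the indirect effect is evaluated.
    """
    effects = sorted((g0 + g1 * w) * b * d for g0, g1, b, d in draws)
    tail = (1.0 - level) / 2.0
    lower = percentile(effects, tail)
    upper = percentile(effects, 1.0 - tail)
    return {
        "median": statistics.median(effects),
        "lower": lower,
        "upper": upper,
        "excludes_zero": lower > 0 or upper < 0,
    }


# Placeholder draws, NOT the study's posterior distribution.
rng = random.Random(0)
draws = [
    (rng.gauss(0.10, 0.03), rng.gauss(-0.15, 0.05),
     rng.gauss(0.20, 0.04), rng.gauss(0.15, 0.04))
    for _ in range(5000)
]
sd_w = 0.8  # hypothetical standard deviation of the moderator

results = {
    label: conditional_indirect(draws, w)
    for label, w in [("low", -sd_w), ("mean", 0.0), ("high", sd_w)]
}

if __name__ == "__main__":
    for label, summary in results.items():
        print(label, summary)
```

Computing the product within each draw and then taking percentiles respects the non-normal distribution of the indirect effect, which is the reason given above for preferring Bayesian estimation.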

Results

Measurement Models

We conducted Multilevel Confirmatory Factor Analyses (MCFAs) to assess the psychometric distinctness of our variables. For these analyses, we used the Maximum Likelihood estimator with robust standard errors within a frequentist framework, as Bayesian estimators do not produce model fits that are conventionally used to assess measurement models. In line with suggestions by Dyer et al. (2005), we specified all daily variables (AI-supported work, challenge and hindrance appraisal, task crafting, job replacement anxiety, innovative work behavior and cynicism) in our model at the within-person level and AI system evaluation at the between-person level. To evaluate the goodness-of-fit of our models, we used cut-off values as recommended by Hu and Bentler (1999).

First, we specified our theorized model, in which each variable loaded on a separate factor, with seven factors at the within-person level and one factor at the between-person level. This model yielded a satisfactory fit (χ²[613] = 1708.178, p < .001, RMSEA = .038, CFI = .923, SRMRwithin [SRMRw] = .037, SRMRbetween [SRMRb] = .015). Next, we specified a model with six factors at the within-person level and one factor at the between-person level, in which challenge and hindrance appraisal were combined into a single factor. This model fit the data worse (χ²[619] = 2944.441, p < .001, RMSEA = .055, CFI = .836, SRMRw = .062, SRMRb = .015) than the theorized model, as indicated by the S-B scaled Δχ² test (S-B scaled Δχ² = 516.787, Δdf = 6, p < .001). Additional models in which we combined our second-stage mediators, task crafting and job replacement anxiety (χ²[619] = 2659.210, p < .001, RMSEA = .052, CFI = .856, SRMRw = .092, SRMRb = .015), or the outcomes, innovative work behavior and cynicism (χ²[619] = 2433.383, p < .001, RMSEA = .049, CFI = .872, SRMRw = .059, SRMRb = .015), also fit the data worse than the theorized model, as indicated by the respective S-B scaled Δχ² tests (job replacement anxiety and task crafting as one factor: S-B scaled Δχ² = 937.116, Δdf = 6, p < .001; innovative work behavior and cynicism as one factor: S-B scaled Δχ² = 451.049, Δdf = 6, p < .001).
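For readers unfamiliar with the S-B scaled Δχ² test, the computation can be sketched as below. The scaling correction factors of the models are not reported in the text, so the example values are entirely hypothetical and do not reproduce the statistics above. The function names are illustrative, and the p-value helper uses a closed form that is valid only for even degrees of freedom.

```python
import math


def sb_scaled_difference(t0, df0, c0, t1, df1, c1):
    """Satorra-Bentler scaled chi-square difference test statistic.

    Model 0 is the nested (more restricted) model, model 1 the comparison model.
    t0, t1:   scaled chi-square values of the two models
    df0, df1: their degrees of freedom
    c0, c1:   their scaling correction factors
    Returns the scaled difference statistic and its degrees of freedom.
    """
    ddf = df0 - df1
    if ddf <= 0:
        raise ValueError("model 0 must have more degrees of freedom than model 1")
    cd = (df0 * c0 - df1 * c1) / ddf  # scaling correction for the difference
    if cd <= 0:
        raise ValueError("non-positive difference scaling; the test is undefined")
    return (t0 * c0 - t1 * c1) / cd, ddf


def chi2_sf_even_df(x, df):
    """Upper-tail chi-square probability; closed form for even df only."""
    if df <= 0 or df % 2:
        raise ValueError("closed form requires a positive even df")
    half = x / 2.0
    return math.exp(-half) * sum(half ** k / math.factorial(k) for k in range(df // 2))


# Hypothetical inputs chosen for illustration only.
stat, ddf = sb_scaled_difference(t0=300.0, df0=50, c0=1.20, t1=250.0, df1=44, c1=1.15)
p_value = chi2_sf_even_df(stat, ddf)

if __name__ == "__main__":
    print(round(stat, 3), ddf, p_value)
```

The scaled difference is not simply the difference between the two scaled χ² values, which is why the Δχ² statistics reported above differ from a plain subtraction of the model χ² values.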

Hypotheses Testing

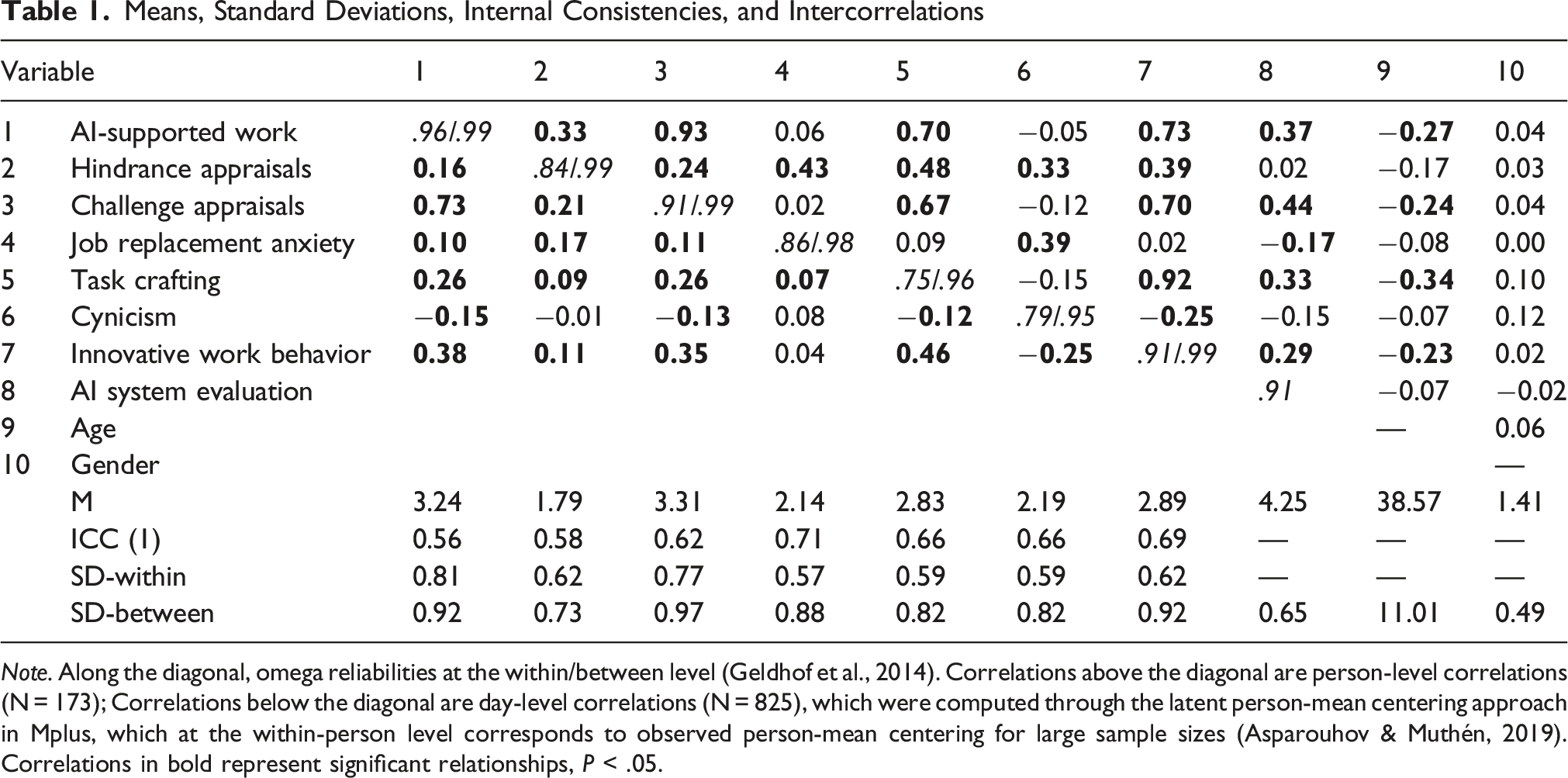

Means, Standard Deviations, Internal Consistencies, and Intercorrelations

Note. Omega reliabilities at the within/between level are shown along the diagonal (Geldhof et al., 2014). Correlations above the diagonal are person-level correlations (N = 173); correlations below the diagonal are day-level correlations (N = 825), computed with the latent person-mean centering approach in Mplus, which at the within-person level corresponds to observed person-mean centering for large sample sizes (Asparouhov & Muthén, 2019). Correlations in bold represent significant relationships, P < .05.

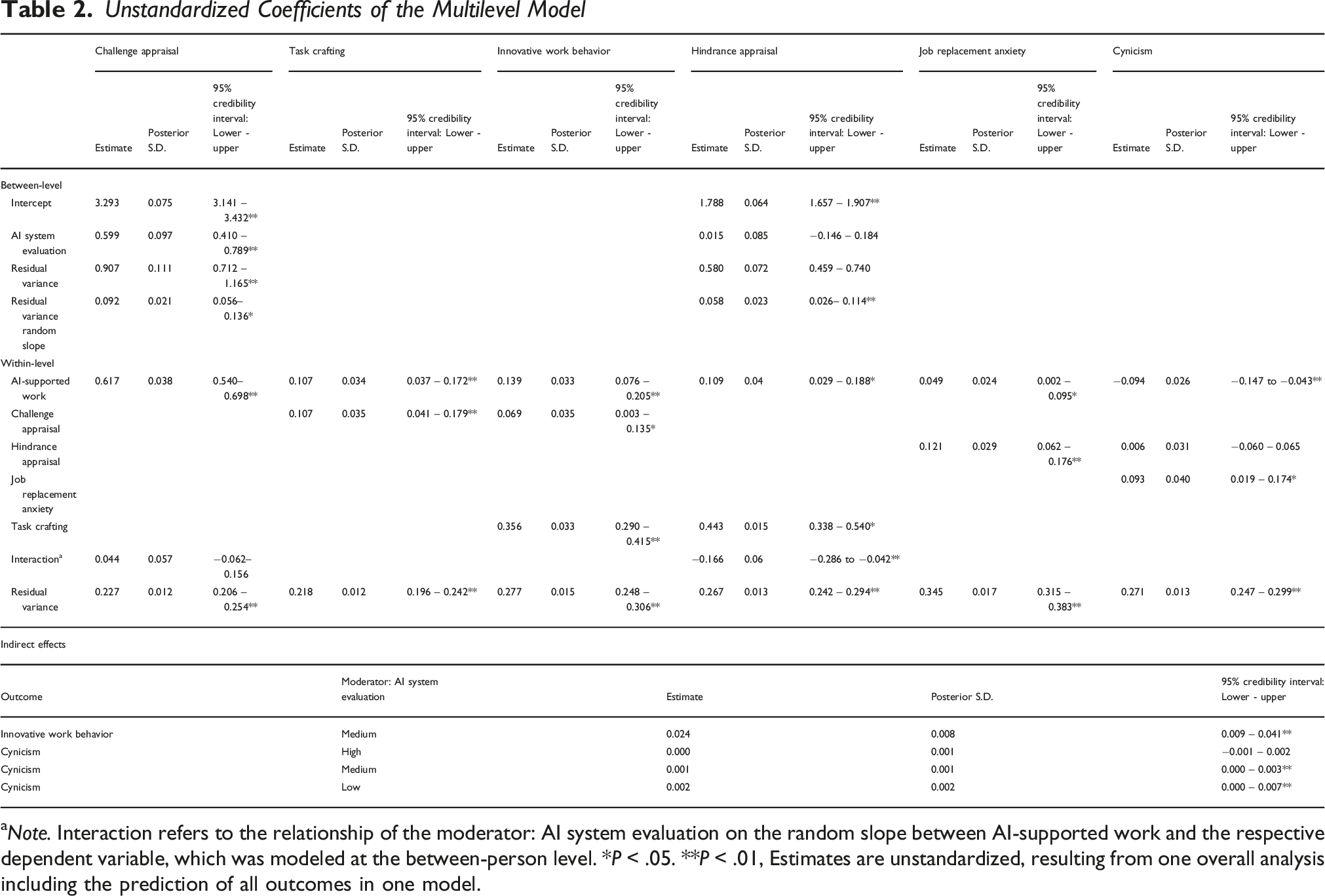

Unstandardized Coefficients of the Multilevel Model

Note. Interaction refers to the effect of the moderator (AI system evaluation) on the random slope between AI-supported work and the respective dependent variable, which was modeled at the between-person level. Estimates are unstandardized and result from one overall analysis that included the prediction of all outcomes in one model. *P < .05. **P < .01.

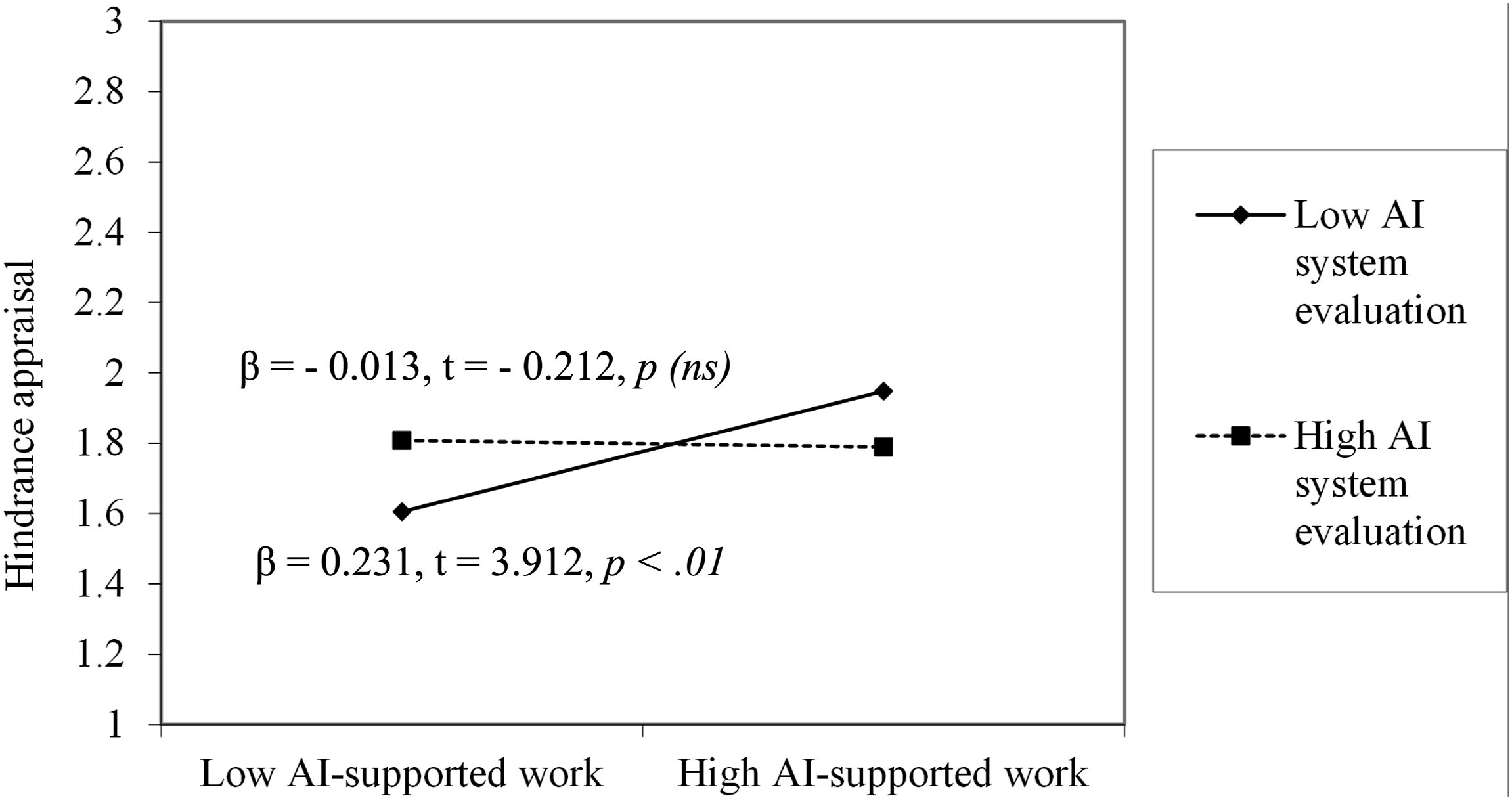

Next, we examined whether AI system evaluation moderated the within-person relationship between AI-supported work and innovative work behavior via challenge appraisal and task crafting (Hypothesis 3). The interaction term between AI-supported work and AI system evaluation on challenge appraisal was not statistically significant (γ = 0.044, P = .410), suggesting no evidence for a cross-level moderation effect. Accordingly, our data did not support the proposition under Hypothesis 3 that the indirect effect of daily AI-supported work on innovative work behavior via challenge appraisal and task crafting is moderated by an individual’s evaluation of the AI system. We also examined whether AI system evaluation moderated the within-person relationship between AI-supported work and cynicism via hindrance appraisal and job replacement anxiety (Hypothesis 4). The interaction term between AI-supported work and AI system evaluation on hindrance appraisal was significant (γ = −0.166, P = .008), indicating a cross-level moderation effect. We plotted this relationship at conditional values of person-level AI system evaluation (±1 SD; Cohen et al., 2003). As depicted in Figure 2, the daily relationship between AI-supported work and hindrance appraisal was weaker for individuals who evaluated the AI system more positively than for those who evaluated it less positively. In particular, for individuals with higher evaluations of the AI system, our results suggest that there is no daily relationship between AI-supported work and hindrance appraisal (γ = −0.013, P = .832). In addition, the indirect effect of day-specific AI-supported work on daily cynicism via hindrance appraisal and job replacement anxiety was weaker for individuals who evaluated the AI system more positively. The conditional indirect effects suggest that the proposed indirect effect on cynicism was significant for individuals with lower (γ = 0.002, P = .01; 95% CI [0.000, 0.007]) and medium (γ = 0.001, P = .01; 95% CI [0.000, 0.003]), but not higher (γ = 0.000, P = .450; 95% CI [−0.001, 0.002]), evaluation of the AI system. Furthermore, the slopes for individuals with lower and higher evaluation of the AI system differed significantly (γ = 0.244, p = .003; 95% CI [0.060, 0.419]). This pattern of conditional indirect effects supports Hypothesis 4.

Cross-level interaction of AI system evaluation on the daily relationship between AI-supported work and hindrance appraisal
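The relationship between the interaction coefficient and the conditional slopes for hindrance appraisal can be written out explicitly. This is a sketch in notation introduced here ($\gamma_{0}$ for the average daily slope, $\gamma_{1}$ for the cross-level interaction, $SD_{W}$ for the standard deviation of AI system evaluation). It assumes a grand-mean-centred moderator and treats point estimates as combining linearly, which posterior summaries need not do exactly.

```latex
\begin{align}
\text{slope}(W) &= \gamma_{0} + \gamma_{1} W \\
\text{slope}(-SD_{W}) - \text{slope}(+SD_{W}) &= -2\,\gamma_{1}\,SD_{W}
\end{align}

% Rough consistency check, under the assumption that the reported slope
% difference (0.244) is the -1 SD slope minus the +1 SD slope:
\begin{equation}
SD_{W} \approx \frac{0.244}{2 \times 0.166} \approx 0.73
\end{equation}
```

The implied value of roughly 0.73 for $SD_{W}$ is not reported in the text and holds only under these assumptions. Because $\gamma_{1}$ is negative, the slope becomes smaller as AI system evaluation increases, which matches the weaker daily relationship described for individuals with more positive evaluations.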

Discussion

The burgeoning literature on AI has often overlooked how employees perceive the support offered by AI in their daily work and its effects. This is a significant omission, as increasing numbers of employees are exposed to AI systems that provide task-related support. While there is substantial literature on the impact of co-worker support (Chiaburu & Harrison, 2008; Ng & Sorensen, 2008), we know relatively little about receiving support from a new type of co-worker, an AI system (Zercher et al., 2025). Building on the revised CHSF (LePine, 2022), we theorise and test two parallel pathways through which AI-supported work, depending on its daily appraisal, may lead to innovative work behavior via task crafting, or cynicism via job replacement anxiety, as well as the moderating role of AI system evaluations.

Our findings provide support for both pathways. On days when AI-supported work was appraised as a challenge, it predicted task crafting and, in turn, innovative work behavior. At the same time, when appraised as a hindrance, it predicted job replacement anxiety and subsequent organizational cynicism. In addition, AI system evaluation moderated the hindrance pathway, such that more favourable attitudes towards the AI system reduced the strength of the relationship between AI-supported work and hindrance appraisal.

Theoretical Implications

This research contributes to the literature in several ways. While much existing work has examined the general opportunities and risks of AI adoption (Malik et al., 2021), our study narrows the focus to the experience of AI-supported work, how it is appraised on a day-to-day basis, and the affective and behavioral consequences that follow from it. By centering on daily appraisal processes, we provide a theoretically grounded account of how AI-supported work translates into employee outcomes.

Specifically, by applying the revised CHSF (LePine, 2022) to investigate the dual appraisal of AI-supported work, we extend the framework to the domain of human-AI collaboration, thereby advancing its relevance to technology adoption. Our findings clarify how this type of support can elicit both challenge and hindrance appraisals, reaffirming that the same stressor can trigger opposing mechanisms depending on individual perceptions and contextual cues. The results revealed that on days when individuals perceived higher AI-supported work, they were more likely to appraise it as a challenge, resulting in task crafting. This suggests that employees may interpret AI-supported work as a challenge and respond with an engagement-oriented approach, rearranging their tasks to complement what has been automated or to make productive use of time freed up by AI. This finding aligns with prior research highlighting that AI-supported work systems facilitate opportunities for skill development (Wisskirchen et al., 2017) and motivate job redesign (Jia et al., 2024). The study also confirmed that task crafting, in turn, predicted innovative work behavior, supporting our proposition that employees’ engagement-oriented responses to AI-supported work foster innovation (Kuijpers et al., 2020). Broadening task boundaries increases employees’ resources (e.g., time), which enables further resource investment (Hobfoll et al., 2018). These results echo the literature on augmented intelligence, where employees leverage skills developed through AI interaction to improve task performance and engage in innovative behavior (Jia et al., 2024).

At the same time, the hindrance pathway also received support: on days when individuals perceived having more AI-supported work, they were more likely to appraise it as a hindrance, which, in turn, predicted job replacement anxiety and, ultimately, employee cynicism. This demonstrates that AI-supported work can be interpreted as both a challenge and a hindrance, leading to distinct positive and negative work-related outcomes. This finding mirrors prior research indicating that the same stressor can evoke both challenge and hindrance appraisals depending on individual or contextual factors (e.g., Searle & Auton, 2015; Webster et al., 2011).

Importantly, the positive relationship between AI-supported work and hindrance appraisal reinforces the notion that AI-supported work can hinder individuals’ perceptions of growth and development. This aligns with research on interpersonal relations, showing that receiving support from co-workers can negatively affect individual performance by affecting their preferred way of completing a task and their sense of autonomy (Brock & Lawrence, 2014; Rafaeli & Gleason, 2009). Indeed, while the majority of studies highlight the positive effects of social support (e.g., Ng & Sorensen, 2008), a substantial body of research has documented negative associations with received support, including heightened negative affect (Buunk et al., 1993), increased absenteeism (Rael et al., 1995), and accentuated effects of workplace exclusion (Cruz et al., 2022). Similarly, AI-supported work may be experienced as creating constraints on how employees can carry out their tasks or demonstrate their skills, limiting perceived opportunities for growth and thereby being appraised as a hindrance. Thus, this finding challenges the prevailing assumption that receiving AI support is inherently beneficial and extends ambivalent support dynamics to technologically mediated contexts.

Moreover, by demonstrating that AI system evaluation moderates the hindrance pathway, we further contribute to the CHSF literature by addressing LePine’s (2022) call to identify the moderating conditions that shape the stressor–appraisal relationship. The finding that, on days with higher AI-supported work, individuals were less likely to perceive it as a hindrance when they held a more favourable attitude toward the AI system suggests that individuals make separate evaluations about the system itself (more stable) and the daily support received (more dynamic), with the former shaping how the latter is interpreted. This dynamic echoes findings from interpersonal support research, where support from trusted co-workers elicits more positive reactions, while support from those held in low regard may evoke discomfort or feelings of incompetence (Fisher et al., 1982). This finding reinforces the importance of carefully managing the implementation and integration of AI systems into workplace practices, taking a human-centric perspective and focusing on how they impact and are perceived by individuals. It also aligns with previous research showing that employees’ attitudes toward technology shape how they respond to its use (Venkatesh et al., 2003), underscoring the relevance of fostering positive attitudes as part of effective AI adoption.

Our findings further extend the job crafting literature by identifying AI-supported work as a new antecedent of task crafting, thereby expanding its nomological network (Lee & Lee, 2018), and examining its role as a mediator of the relationship between AI-supported work and innovative work behavior. Prior work has focused primarily on autonomy and relational factors, while our findings suggest that AI can serve as a trigger for crafting by altering task structures.

The use of a diary design further strengthens these contributions by capturing within-person fluctuations in AI-supported work and its outcomes. This complements research that relies on cross-sectional designs and advances a more dynamic understanding of how employees engage with AI over time (Man Tang et al., 2022; Pereira et al., 2023).

Finally, our findings also contribute to the broader conversation on building a human-centric future of work. Human-centric perspectives on AI emphasize designing and implementing AI systems that enhance, rather than erode, employees’ sense of autonomy, and meaningful human involvement in work processes (Jarrahi, 2018; Schmager et al., 2025). By highlighting that AI-supported work can elicit both challenge and hindrance appraisals on a daily basis, our study extends this literature by providing an appraisal-based account of how such human-centric outcomes emerge in everyday experience. Specifically, it underscores that employees are not passive recipients of technological change but active interpreters whose well-being depends on how AI is embedded in their work context. Consistent with research showing that employee perceptions and organizational practices critically shape AI outcomes (Bankins et al., 2024), the dual pathways identified reinforce the importance of designing AI systems and implementation practices that centre human needs, preserve autonomy, and create opportunities for employees to meaningfully shape how AI is integrated into their daily work.

Practical Implications and Recommendations

Our research offers timely and practical insights for organizations implementing AI-supported work. On the positive side, we find that when AI-supported work is appraised as a challenge, it becomes a catalyst for task crafting, enabling employees to respond constructively to AI-supported work by reorganizing tasks, adapting their roles, making productive use of their time, and increasing engagement in innovative work behavior. This is a significant finding in the context of rapid digital transformation, as it highlights how AI-supported work can enhance individuals’ agency, skill use, and innovation when positively framed.

At the same time, our results reveal a critical risk: AI-supported work can be appraised as a hindrance and elicit job replacement anxiety. Employees may perceive AI as a constraint on their preferred way of completing tasks, autonomy, and personal growth, triggering disengagement and organizational cynicism.

To maximise the positive outcomes of AI-supported work, organizations must focus on ensuring that AI systems are perceived as supportive, valuable, and aligned with employees’ goals. This includes integrating socio-technical systems effectively (Trist & Bamforth, 1951), providing clear communication about AI’s role, and fostering opportunities for task crafting in response to new technology. Critically, promoting favourable attitudes towards AI by ensuring it is seen as trustworthy, useful, and transparent can make AI support less likely to be interpreted as a hindrance. To minimise the risks, organizations should avoid implementing AI in a top-down, imposed manner. Just as support from a co-worker can be detrimental when unsolicited or poorly timed, AI interventions can exacerbate insecurity and resistance if employees do not have a favourable view of the AI system. Implementation strategies should therefore prioritise participation, training, and the preservation of employee autonomy to reduce hindrance appraisals and foster a sense of control and of the value of human work.

Limitations and Future Research

Although this study has several important strengths, including the use of a daily diary design conducted over 10 days, with multiple daily measurement points, it is important to acknowledge several limitations that future research should address. While both hypothesized pathways were supported, and we found that higher AI system evaluation attenuated the relationship between AI-supported work and hindrance appraisal, we did not observe a moderating effect on the challenge pathway. It is therefore important to identify additional factors that may shape this relationship. Future research could examine both contextual factors, such as industry type (e.g., people-oriented vs. technology-intensive sectors), and individual characteristics, such as personality traits (e.g., openness; neuroticism; McCrae & Costa, 2008), which may amplify or buffer the relationship between AI-supported work and challenge appraisal. Similarly, personal resources like self-efficacy, or contextual elements like training and implementation practices, may moderate the appraisal of AI-supported work and should be explored further.

In addition, although our theoretical framing treats AI support broadly as assistance provided by AI for task-related activities, the actual nature of this support is likely to differ across individuals and organizations, ranging, for example, from simple information retrieval to automated task execution or decision support. These differences mean that employees may not be responding to a single, uniform form of ‘AI support’, but rather to diverse AI-enabled functions embedded in their daily work. This may shape how employees evaluate the usefulness and implications of AI-supported work on a given day, potentially influencing their challenge and hindrance appraisals. Future research would benefit from examining whether distinct forms of AI-supported work (e.g., augmentation versus automation, routine assistance versus strategic decision support) lead to systematically different appraisal patterns and outcomes.

It is also important to note that given our focus on appraisals as central mechanisms, we approached the impact of AI-supported work from a CHSF perspective. However, alternative frameworks such as work events or emotion-focused models (Weiss & Cropanzano, 1996) may also offer useful lenses to examine the impact of AI interaction.

Our focus on task crafting was informed by the conceptual affinity between AI-supported work and this specific form of job crafting. However, future research could also explore how AI influences other types of job crafting, such as relational and cognitive crafting (Wrzesniewski & Dutton, 2001), particularly as AI agents become increasingly human-like. These developments may further substitute interactions with human co-workers and impact employees’ sense of meaningfulness at work.

Our emphasis on daily, within-person effects and cross-level interactions is a key strength, as we are among the first to examine the day-to-day implications of interactions with AI (cf. Shao et al., 2024). However, we did not theorize or test for homologous effects across levels. Given that AI research has rarely addressed such temporal dynamics, we believe it is important to first establish how AI-supported work unfolds at the daily level before examining whether these patterns generalise across individuals. Future research could advance AI research by examining its effects at both the between-person and within-person levels (Chan, 1998).

Finally, the study relied entirely on self-reported data, which, while appropriate for capturing subjective appraisals such as stress and job replacement anxiety, does raise concerns about common method bias. We implemented procedural controls (e.g., randomization, anonymity) and statistical remedies (Podsakoff et al., 2003), but future research should aim to include external or behavioral indicators, particularly for outcomes like task crafting and innovative work behavior. Furthermore, as the sample was drawn exclusively from a Western population, caution is warranted in generalising the findings. Cross-cultural replication and larger samples over extended periods would help strengthen external validity.

Conclusion

The study of AI remains a moving target (Stollberger et al., 2025), with all the complexities associated with examining a fast-evolving and multifaceted phenomenon. This research contributes to the growing field of human–AI interaction by demonstrating that daily AI-supported work can be appraised as both a challenge and a hindrance stressor, eliciting corresponding engagement or disengagement responses, such as task crafting or job replacement anxiety. These responses, in turn, shape key outcomes like innovative work behavior and organizational cynicism. Crucially, how individuals interpret AI-supported work is influenced by their general attitude towards the AI system itself. This underscores the pivotal role of managers in guiding successful AI integration by shaping positive employee attitudes and ensuring the implementation of systems that are not only effective, but also perceived as supportive, fair, and aligned with human needs.

Supplemental Material

Supplemental Material for Examining the Impact of Daily AI-Supported Work on Employee Outcomes: A Challenge-Hindrance Approach by Kanimozhi Narayanan, Claudia Sacramento, Wladislaw Rivkin, Mohsen Joshanloo, Matthew Carter, Anitha Chinnaswamy in Group & Organization Management.

Footnotes

Ethical Considerations

The research received ethical approval from Aston Ethics committee. All participants were above 18 years of age and provided consent before taking part in the research.

Author Contributions

Kanimozhi Narayanan: Conceptualization, Data curation, Formal analysis, Methodology, Project administration, Writing - original draft preparation, Writing - review & editing. Claudia Sacramento: Conceptualization, Writing - original draft preparation, Writing - review & editing. Wladislaw Rivkin: Data curation, Formal analysis, Writing - original draft preparation, Writing - review & editing. Mohsen Joshanloo: Writing - original draft preparation. Matthew Carter: Supervision, Validation. Anitha Chinnaswamy: Funding acquisition, Writing-editing.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: this work was supported by Aston University.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data is available from the corresponding author upon reasonable request.

Supplemental Material

Supplemental material for this article is available online.