Abstract

Stocks of some food products, such as whiskey, cheese, or port wine, ameliorate during storage, facilitating product differentiation according to age. This induces a trade-off between immediate revenues and further maturation. Inventory management decisions include purchasing volumes of agricultural produce and production volumes for age-differentiated products. Because products can be blended from stocks of different ages, issuance decisions offer operational flexibility. However, whereas some industries (port wine, sherry) only request that the product labels refer to the average age of issued stocks, others (whiskey, rum) have stricter blending regulations, requiring that the product labels represent the minimum age of all components. Further, producers must deal with multiple uncertainties. Purchase prices of agricultural commodities depend on volatile climate-dependent harvest seasons, stocks decay during maturation, and sales market conditions fluctuate. We solve this inventory management problem using a deep reinforcement learning algorithm with three key innovations: (i) A novel actor pipeline that decomposes the action space and flexibly partitions decision dimensions between a neural network and a lookahead optimization model, (ii) an algorithm explicitly maximizing average rewards, and (iii) reward-handling techniques that exploit structural problem insights. Our approach yields near-optimal policies that consistently outperform benchmark heuristics. Beyond the algorithmic contributions, our results offer new managerial insights into the value of blending under uncertainty. Minimum-age blending substantially enhances the profits of firms as compared to no blending because companies can adjust their purchasing policy in response to price fluctuations. The more flexible average-age regime further improves profits by

Introduction

Inventory systems of ameliorating food such as spirits (whiskey, rum, grappa), fortified wine, ripened cheese, and ham (Dimson et al., 2015) consist of stock volumes in different age classes, that is, different accumulated maturation times. This work considers ameliorating products characterized and marketed by their maturation time, such as three-year-old or seven-year-old rum. Unlike fine wines (e.g., Hekimoğlu et al., 2017), the value of such products is independent of the vintage and primarily driven by the aging process, with older products yielding larger sales revenues.

The management of such inventory systems needs to align purchasing, production, and issuance decisions. Purchasing decisions determine the acquired volume of agricultural raw materials, which undergo an initial processing step, transforming them into ameliorating stocks that start the maturation process. Production decisions specify the volumes of various age-differentiated products originating from the same inventory system, which are packaged and placed in the sales market. The age indication, that is, the target age, is typically printed on the packaging and serves as the primary characteristic feature of the product. In concurrence with production decisions, issuance decisions determine the allocation of stock volumes from different age classes to different products. Thereby, producers have flexibility by blending stocks from different age classes. An example from port wine inventory management illustrates the interplay of production and issuance decisions. In a given year, managers want to produce

This contrasts with perishables, for which issuing older stocks first (FIFO) is optimal in many problems (e.g., Chen et al., 2021). In ameliorating food inventory systems, however, simple issuance rules fail because they do not account for blending options, and producers must serve multiple age-differentiated products, whose value increases with age, from a shared inventory system. This creates specific trade-offs in production and issuance decisions. Stocks of young ages can either be used for production or be matured and used later for older products. By offering young products to the market, we obtain immediate revenues, but we may lose larger profits in later periods. Thus, a key management objective is to maintain a balanced structure of inventory volumes in different age classes. With more consumers turning towards luxury foods, such aging products gain importance (IWSR, 2024). In the following, we characterize ameliorating food inventory systems in more detail.

Food inventory managers face various sources of uncertainty. Globally, crop yields and quality are largely influenced by climate variability (Ray et al., 2015). As producers can only use stocks that meet the specific quality requirements for amelioration, this harvest variability is reflected in volatile purchase prices. For Protected Designation of Origin products, geographical restrictions on sourcing natural resources amplify the price variability. Examples are port wines, exclusively made from grapes from the Douro Valley in Portugal, and Parmigiano Reggiano cheese, produced and matured solely in selected Italian regions. In their purchasing decisions, managers must deal with regional price fluctuations. Directly after acquiring the agricultural commodities, the processing step transforms them into storable (not yet matured) products. Typically, producers have capacity limitations at this stage. For instance, whiskey (made from barley and water) is distilled using specific equipment before it is filled into wooden (preferably former port wine) casks for maturation. Further, the maturation process of stocks is subject to the risk of decay. When spirits decay during maturation, they are sold under a white label, generating substantially lower revenue than those used for brand products. In contrast, cheese is more stable but typically cannot be sold in case of decay. Apart from decay, the stored volume is also reduced due to evaporation. For spirits, the fixed proportion that evaporates each period is typically called the “angel’s share.” After turning stocks into final products, no further maturation is possible. Therefore, final products are placed in the market, generating stochastic revenues. However, because agricultural commodities need to be sourced locally, whereas final products are marketed worldwide, purchase prices fluctuate substantially more strongly than the revenues for final products.

To summarize, the decision problem is defined by purchasing decisions for the youngest age class and integrated production and issuance decisions for various age-differentiated products. To align these sequential decisions with the dynamics of aging, blending, and the involved uncertainties, we introduce a generic Markov decision process (MDP) formulation for the ameliorating food inventory management problem. Due to the long maturation times and the cross-generational perspective of predominantly family-owned producers, future profitability is as important as short-term financial success. Hence, we use the average reward criterion in the value function. Because inventory age enters the state, action, and transition spaces, the curse of dimensionality makes finding optimal solutions impracticable. Recently, several researchers have proposed deep reinforcement learning (DRL) algorithms for inventory management. Using an actor–critic architecture, these typically approximate both the value function and the policy through neural networks (NNs). However, state-of-the-art algorithms such as proximal policy optimization (PPO) all rely on discounting future rewards for convergence, which conflicts with our objective of maximizing average rewards. Further, finding good policies when dealing with large, multidimensional action spaces remains difficult for DRL approaches. Additionally, delays between actions and the associated rewards may impede the learning progress (Dulac-Arnold et al., 2021). These challenges are inherent in ameliorating inventory management. Due to flexibility in issuance decisions, the number of action dimensions increases with the number of age classes in the inventory system. Further, due to the time required for maturation, revenues associated with purchased volumes are delayed at least by the products’ target ages.

Our methodological contributions are twofold. First, we develop an actor–critic DRL approach with a new type of actor pipeline that integrates the actor NN with a lookahead optimization model. Action dimensions can be assigned to either actor pipeline component. This entails a trade-off in its design. On the one hand, coordinating too many action dimensions in the NN may impair policy learning. On the other hand, allocating action dimensions to the lookahead model introduces approximation errors. We achieve the best performance when the NN handles only a few key decisions with long-term impact, that is, purchasing volumes and production volumes for younger products, while the remaining production volumes for older products and complex issuance decisions are delegated to the lookahead model. Second, we transfer and adapt the average policy optimization (APO) algorithm, developed for average reward optimization in computer game environments, to the inventory management domain. To this end, we introduce the following reward-handling techniques. (i) We develop a new reward-shaping method that exploits domain knowledge about the inventory problem. (ii) We scale the rewards observed during training by an upper bound on the optimal average reward derived from an analysis of the average inventory age structure. Across a range of ameliorating inventory problems, our DRL approach consistently and significantly outperforms benchmark heuristics. Compared to a solution inspired by industry practice, our algorithm improves profits by

In addition, we provide managerial insights on ameliorating food inventory systems. We quantify the value of blending under different regulatory regimes. Compared with a setting that only allows issuance from the target ages, the profit increases by

The remainder of this paper is structured as follows. We discuss relevant literature in Section 2. Section 3 introduces the MDP formulation for ameliorating food inventory management and provides a linear program (LP) that yields an upper bound on the average reward per period. Section 4 presents our solution approach, including the novel actor pipeline, the APO algorithm, and the new reward-handling techniques. Section 5 presents a port wine industry case. In Section 6, we evaluate our methodology, analyze the value of blending, and demonstrate our policy mining approach. Section 7 provides concluding remarks.

Literature Review

We structure the literature review as follows. Section 2.1 investigates research addressing the value of blending. Section 2.2 discusses extant research on multiage inventory systems, focusing on age-differentiated and ameliorating products. Section 2.3 reviews applications of DRL in inventory management. We summarize the identified research gap in Section 2.4.

Blending

Blending operations are predominantly used in the process industries, with various applications including crude oil refining (Mendez et al., 2006; Papageorgiou et al., 2012), chemical product formulation (Karmarkar and Rajaram, 2001), mining (Chen and Maravelias, 2022), and donor milk pooling (Chan et al., 2023). In these problems, companies blend raw materials with variable component mixes during production while blending constraints restrict the proportion of input components in the final products.

Although a large body of literature considers blending, only a few studies have quantified the value of the associated flexibility. Dong et al. (2014) address a crude oil refining problem where producers can convert heavy to light components before the blending stage, where final products are blended from these components according to fixed recipes. Their findings indicate that the added value of conversion flexibility is influenced by the variability of purchase prices and the processing capacity of producers. In a generalized capacity investment and production problem involving multiple raw materials and final products, Kulkarni and Francas (2017) examine the value of flexibility in designing blending recipes. They compare fully flexible blending networks with blending chains, illustrating that the relative advantage of full flexibility over chaining increases with blending costs.

Inventory Management for Age-Differentiated Products

Extant research on inventory problems involving age-differentiated products largely stems from the blood inventory management literature, as some medical treatments require fresher blood than others. Goh et al. (1993) evaluate different issuance rules under stochastic supply and age-differentiated demand. Deniz et al. (2010) jointly address replenishment and issuance rules. They analytically show that when substitution between the products is possible, different parameter settings favor different rules. Finally, Chen et al. (2019) consider the joint optimization of blood platelet collection, fulfillment, and issuance. They analytically show that FIFO issuance is optimal in their problem setting. In a successor paper, the authors generalize their results to perishable inventory models with age-differentiated demands and additionally show that younger products with higher shortage costs must be given priority in fulfillment (Chen et al., 2021). In this previous research, action spaces are kept small by focusing on individual decisions or are designed to represent straightforward decision rules.

Whereas the aforementioned works consider perishables, we have recently observed an increasing research interest in the effects of inventory amelioration. Early contributions are from the field of forest management. Lin and Buongiorno (1998) target the optimal stopping decision to determine the cutting time for trees under environmental and financial risks. Recently, Kouvelis et al. (2023) investigate inventory management at hog farms. Instead of putting underweight hogs on the market, farmers can feed them further to achieve higher revenues in later periods. For the inventory management of ameliorating food such as cheese and whiskey, Buisman and Rohmer (2022) propose a rolling-horizon optimization approach for dealing with demand uncertainty. Jahandideh et al. (2023) analyze the optimal allocation of a fixed production capacity to age-differentiated ameliorating products. They show that a stationary policy is optimal in their stylized problem setting. For ameliorating port wine inventory, Pahr et al. (2025) derive management policies for intractable practical problems by mining and scaling the optimal policies for aggregated problems. They rely on a state space discretization to solve the aggregated problems using value iteration.

DRL for Inventory Management with Multidimensional Action Spaces

Recently, many researchers have approached inventory problems using DRL algorithms. Boute et al. (2022) provide an early review and a comprehensive overview of different algorithms and the design choices relevant to different inventory management problems. Foundational works apply state-of-the-art algorithms to standard inventory problems to illustrate the general applicability of DRL. Oroojlooyjadid et al. (2022) provide a multiagent deep Q networks (DQNs) approach for the well-known beer game. Gijsbrechts et al. (2022) investigate DRL as a general-purpose method and report promising results for well-researched lost-sales, dual-sourcing, and multiechelon inventory problems using the A3C algorithm.

However, the performance of DRL approaches deteriorates when dealing with multidimensional action spaces (Dulac-Arnold et al., 2021). Hence, our review focuses on inventory research that explicitly tackles this challenge. Park et al. (2023) propose an intuitive approach that reduces the dimensionality of the action space. They exploit the near-optimality of a base-stock policy structure for multi-item inventory systems and learn the base-stock parameters instead of the state-dependent order quantities. Naturally, this kind of approach only works for problems with simple near-optimal policy structures. Kouvelis et al. (2024) exclude state-dependent infeasible actions by adding a hidden constraint layer to the NN determining the policy. Other researchers use well-performing heuristics to guide their DRL algorithm. For a perishable inventory problem, De Moor et al. (2022) develop a reward-shaping approach for their DQN algorithm that penalizes deviations from a heuristic policy. Liu et al. (2025) pre-train the policy network to mimic the actions suggested by an established heuristic before initiating the actual PPO algorithm for multiechelon, multiproduct inventory management. A few recent works enhance DRL algorithms for inventory management with optimization techniques. Harsha et al. (2025) develop a novel algorithm that estimates state values using an NN and uses sample average approximation and mixed-integer linear programming (MILP) to optimize the action selection based on the predicted state values. However, their approach does not scale well if the transition space is complex. An alternative approach is the implementation of an actor pipeline, which decomposes the action space dimensions. Only a subset of action dimensions is handled by an actor NN while the others are determined using other optimization techniques. Akkerman et al. (2025) propose an actor pipeline for the multi-item inventory record inaccuracy problem, where the actor NN decides whether to reorder and a MILP model determines the inventory inspection route.

Research Gap

Our research addresses open questions in all of the outlined fields. We are the first to analyze the value of age-based blending. In this setting, the operational flexibility lies in consolidating a variable inventory age structure. Further, we contribute to the emerging ameliorating inventory management literature by analyzing different blending regimes in issuance decisions. Lastly, we provide several contributions to the literature on DRL for inventory management. For handling the multidimensional action space in the ameliorating food problem, we develop a novel actor pipeline that integrates an actor NN and a lookahead optimization model. In contrast to existing actor pipeline applications, our approach enables control over the allocation of action dimensions to either of the two components. Hence, we analyze the trade-off between a (suboptimal) policy approximation using the lookahead model and the coordination of many action dimensions in the actor NN, which may also impair performance. Moreover, existing state-of-the-art DRL algorithms rely on discounting future rewards. Because the ameliorating food inventory problem is naturally modeled as an average-reward MDP, we transfer an algorithm that specifically optimizes the long-run average reward to the inventory domain and thereby substantially outperform state-of-the-art algorithms. Finally, Boute et al. (2022) point out that integrating structural problem insights into DRL approaches remains an open avenue for future research. To that end, we suggest two novel reward-handling techniques that exploit the problem structure.

Modeling Ameliorating Food Inventory Management

This section develops a generic model for ameliorating food inventory systems. We first provide the MDP formulation in Section 3.1. We represent the specific characteristics of amelioration, evaporation, blending flexibility, and the various uncertainties in the MDP constituents, that is, decision epochs, states, actions, transitions, and rewards. In Section 3.2, we develop an analytical upper bound on the average reward, used for benchmarking and for enhancing our solution approach.

Model Formulation

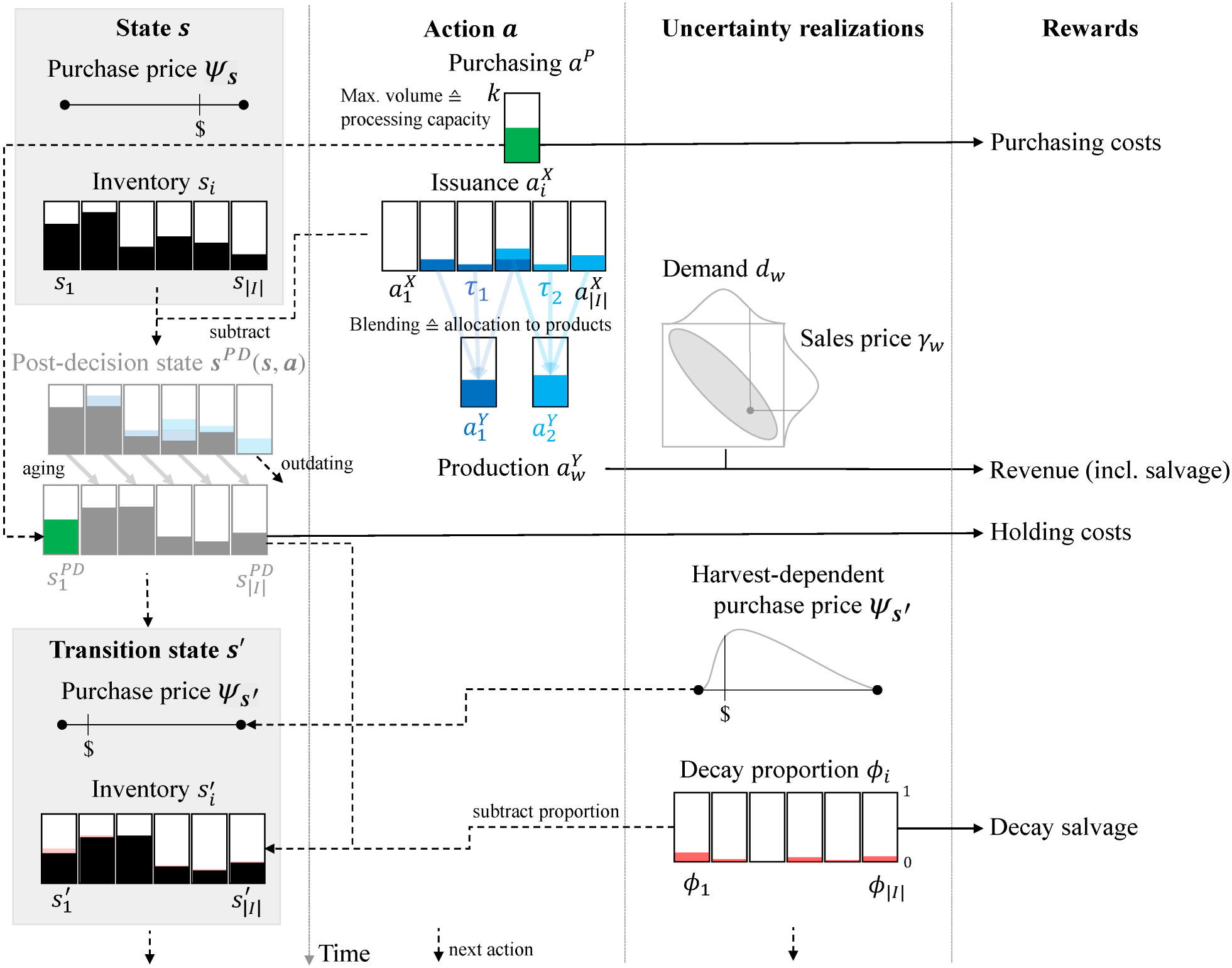

Ameliorating food inventory systems are composed of age classes

Because ameliorating food stocks can be processed in any volume, we use a continuous-value MDP formulation. Aging spirits are stored in wooden casks, whereas cheese and ham age in designated ripening chambers. For all aging products, a small proportion of

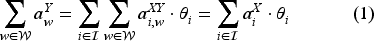

For a problem with

Figure 1. Exemplary state transition in the ameliorating food inventory problem.

Production actions

Note that the example in Figure 1 shows the average-age blending regime, as both products’ blends include stocks from age classes younger than the target ages. We characterize a complete action

We consider two types of uncertainty affecting the state transition (third column of Figure 1). First, a new purchase price

Typically, demand for food products cannot be backlogged. Unmet demand is therefore lost. Further, bottled or packaged stocks have lost their maturation potential and cannot be returned to inventory. However, leftover products may be salvaged, with

We exclude the option to store finished products. With regard to cheese and ham, this is due to the limited shelf life after packaging. With regard to spirits and fortified wines produced from harvested crops, the interval between decision epochs is one year. Due to the large storage costs of bottled products, producers and retailers avoid shelving finished products for such an extended period.

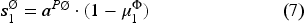

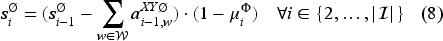

Decayed units from each age class

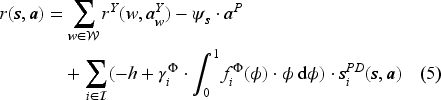

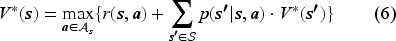

The value function concludes the infinite-horizon MDP formulation. Because predominantly family-owned producers assume a cross-generational perspective and stocks gain rather than lose value over time, the average reward criterion naturally applies to the ameliorating food inventory problem.

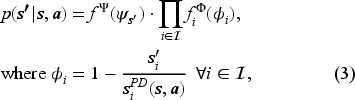

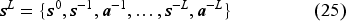

We develop an upper bound on the optimal average reward of the MDP described in Section 3.1 based on the analysis of the average inventory structure. This bound provides a conservative yet informative benchmark for evaluating the performance of inventory policies. Further, we use this analytical result to scale rewards in our solution approach (see Section 4.3). For the average-reward infinite-horizon MDP, we denote

To obtain a tractable approximation of the non-linear expected revenue function in Equation (4), we employ a piece-wise affine upper approximation function

We also discretize the continuous probability distribution of purchase prices by introducing a finite set of indices

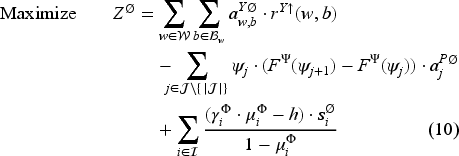

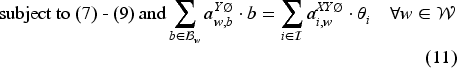

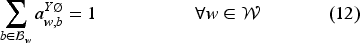

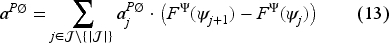

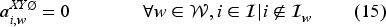

Equation (10) represents the objective function that maximizes the average reward per period based on Equation (5). Constraints (11) link the production volumes to the issuance volumes for individual products. Constraints (12) ensure that production proportions add up. Constraints (13) relate the total average purchasing volumes used in Constraints (7) to the price-level dependent purchasing volumes. Constraints (14) implement the target age adherence in issuance decisions. Constraints (15) implement the problem-specific blending regime characterized by

For identical parameter settings, the optimal objective value for the optimization model defined by Equations (7) to (19) is at least as large as the average reward

We provide the proof in Electronic Companion EC.1. The generation of an upper bound through an LP problem relaxation is also found in the fluid approach proposed by Bertsimas and Mišić (2016). In contrast with their approach, however, we cannot decompose the state space dimensions as inventory levels in different age classes are interdependent. Hence, we directly model the average system structure, including the interdependencies between average purchasing and issuance volumes and the resulting average inventory in different age classes. Note that the resulting upper bound is conservative, as it does not account for the sequential MDP dynamics. For example, the upper bound LP can allocate average purchasing volumes entirely to low purchase price level intervals. In contrast, the optimal purchasing policy in the MDP also depends on the current inventory volumes, which result from the historical sequence of actions, purchase prices, and decay volumes.

The curse of dimensionality and the continuous-value MDP formulation rule out the use of exact solution algorithms for the ameliorating food inventory problem introduced in Section 3.1. Therefore, we develop a DRL solution approach. We present the algorithm design, including a novel actor pipeline, in Section 4.1. Moreover, we employ an algorithm that specifically maximizes average rewards and, therefore, conforms with our value function formulation in Equation (6). Section 4.2 discusses average-reward DRL algorithms and justifies our choice of APO. Section 4.3 introduces new reward-handling methods to further improve the algorithm’s performance.

Actor Pipeline

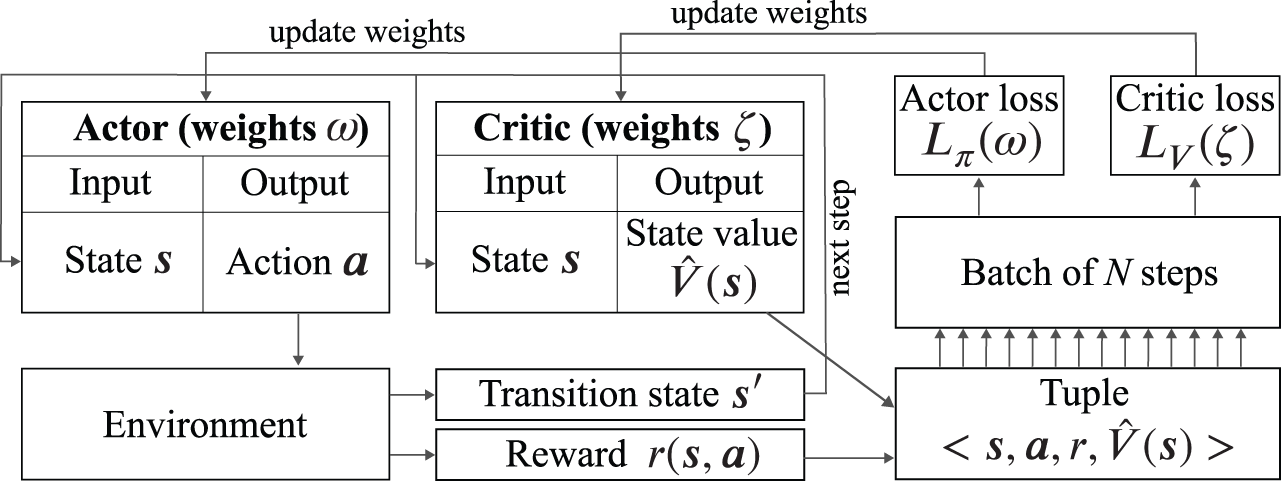

Recently, actor–critic DRL algorithms have been considered the state of the art in inventory research (e.g., Boute et al., 2022; Gijsbrechts et al., 2022; Liu et al., 2025). Figure 2 provides an overview of their general architecture.

Figure 2. Actor–critic architecture.

The actor implements the MDP policy using an NN. In each step of the algorithm, it maps the current state variables to an action. The action is handed to the environment, which implements the dynamics of the MDP. The environment outputs a reward based on the provided state-action pair and derives the transition state using Monte Carlo sampling from the uncertainty distributions.
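The role of the environment can be illustrated with a minimal sketch. It is not the model of Section 3.1: it assumes a single product with a linear revenue, omits decay and demand uncertainty, and draws the next purchase price from a hypothetical uniform distribution. All function and parameter names are illustrative assumptions.

```python
import random


def step(inventory, price, purchase, issuance, revenue_per_unit,
         evaporation=0.02, rng=random):
    """One transition of a toy ameliorating-inventory environment.

    inventory[a] : volume currently stored in age class a (0 = youngest)
    issuance[a]  : volume withdrawn from age class a for production
    The linear revenue and the uniform price model are simplifying assumptions.
    """
    if any(x > inv for x, inv in zip(issuance, inventory)):
        raise ValueError("cannot issue more than is in stock")
    # Reward: sales revenue minus purchasing cost in this period.
    reward = revenue_per_unit * sum(issuance) - price * purchase
    # Stock that was not issued ages by one class and loses a fixed share
    # to evaporation; anything left in the oldest class is lost (outdating).
    remaining = [inv - x for inv, x in zip(inventory, issuance)]
    aged = [(1.0 - evaporation) * v for v in remaining[:-1]]
    next_inventory = [purchase] + aged
    # Monte Carlo sample of the next purchase price (hypothetical distribution).
    next_price = rng.uniform(0.5, 1.5)
    return next_inventory, next_price, reward


rng = random.Random(0)
next_inv, next_price, reward = step(
    inventory=[10.0, 8.0, 6.0], price=1.0, purchase=5.0,
    issuance=[0.0, 2.0, 6.0], revenue_per_unit=3.0,
    evaporation=0.1, rng=rng)
print(next_inv, reward)
```

In a training loop, the returned state would be passed back to the actor and the critic, and the tuple of state, action, and reward would be appended to the buffer.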

The critic also includes an NN, which likewise receives the current state as input but outputs an estimate of the state value as formulated in the value function in Equation (6). In each algorithm step, the tuple of state, action, reward, and state evaluation is stored in a buffer. The transition state is passed on to the actor and the critic to initiate the next algorithm step. Once a batch of

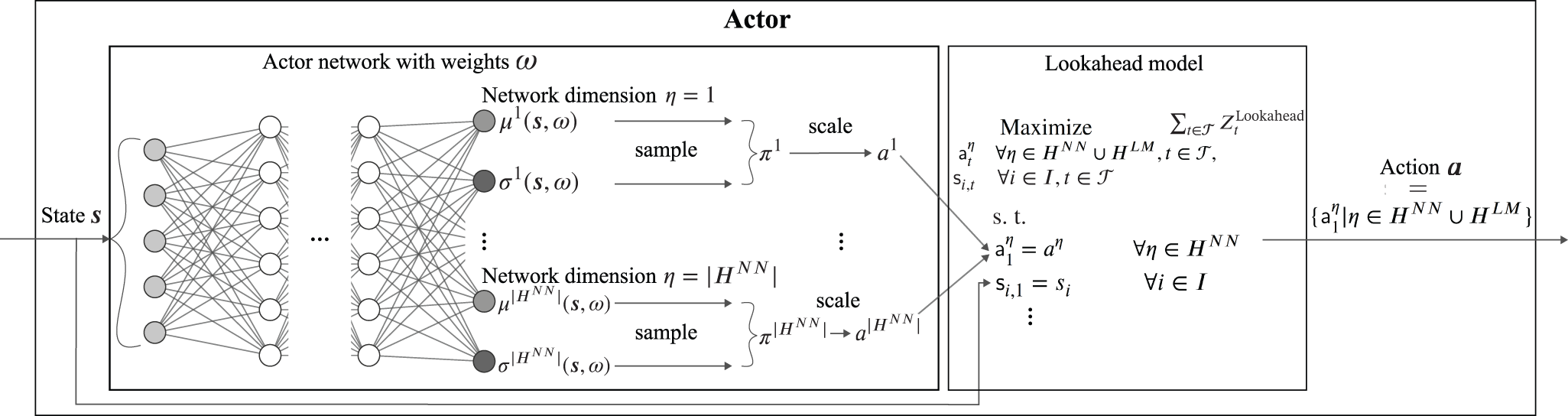

Actor–critic DRL approaches have successfully been applied to inventory problems with low-dimensional action spaces. For instance, Gijsbrechts et al. (2022) consider up to two action dimensions. Because it integrates purchasing, production, and flexible issuance decisions, the action space in the ameliorating food inventory problem has a large dimensionality and high combinatorial complexity. Both represent major challenges for practical reinforcement learning and may compromise the quality of the trained policy (Dulac-Arnold et al., 2021). In contrast to DRL, lookahead optimization models readily integrate multiple decision dimensions when solving inventory problems (e.g., Buisman and Rohmer, 2022). However, such models fail to represent the ability to react to uncertainty in sequential decision-making. Therefore, we develop an actor pipeline that combines the strengths of both approaches by decomposing the action space, allowing the flexible allocation of action dimensions to either an NN or a lookahead optimization model. The pipeline is illustrated in Figure 3, which zooms into the actor in Figure 2, with state variables as input and action variables as output.

Figure 3. Actor pipeline.
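The decomposition idea can be sketched in a few lines. The sketch below is only a structural illustration: the "NN" is a stub returning fixed values, and the lookahead model is replaced by a simple greedy rule under a minimum-age restriction rather than an LP. The two-product setup, the target ages, and all names are assumptions made for the example.

```python
def nn_actions(state):
    """Stand-in for the actor NN: returns the few long-horizon decisions.
    A trained network would map the state to these values; here they are fixed."""
    return {"purchase": 4.0, "young_production": 3.0}


def lookahead_actions(inventory, young_production, young_target, old_target):
    """Stand-in for the lookahead model (greedy rule, not an LP).

    Completes the action under a minimum-age rule: the young product draws
    only on age classes from its target age up to (excluding) the old
    product's target age; the old product takes the oldest class.
    """
    stock = list(inventory)
    issue_young = [0.0] * len(stock)
    need = young_production
    for age in range(young_target, old_target):
        take = min(stock[age], need)
        issue_young[age] = take
        stock[age] -= take
        need -= take
    # Issue everything in the oldest class to avoid outdating.
    issue_old = [0.0] * len(stock)
    issue_old[-1] = stock[-1]
    return {
        # Production is cut back to what could actually be issued.
        "young_production": sum(issue_young),
        "old_production": sum(issue_old),
        "issue_young": issue_young,
        "issue_old": issue_old,
    }


def actor_pipeline(state, young_target=1, old_target=3):
    """Combine both components into one complete action."""
    partial = nn_actions(state)
    rest = lookahead_actions(state["inventory"], partial["young_production"],
                             young_target, old_target)
    return {**partial, **rest}


action = actor_pipeline({"inventory": [5.0, 2.0, 2.0, 1.5], "price": 1.0})
print(action)
```

Moving a decision such as `old_production` from `lookahead_actions` into `nn_actions` changes which component controls it, which is the design trade-off discussed above.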

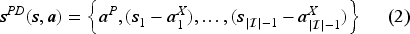

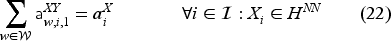

We formalize the generic design as follows. Let

For each action dimension, the actor NN outputs a mean and a standard deviation, which together form a normal distribution from which action values are sampled (see left side of Figure 3). In the initial training iterations, this sampling ensures the exploration of the solution space. However, as the training progresses, the actor ideally decreases the standard deviation, exploiting the benefits of near-optimal actions.
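A minimal sketch of this sampling step, using only the Python standard library, is given below. The means and standard deviations would come from the actor NN; here they are passed in directly. Clipping the samples to a bounded interval is an assumption made for the example.

```python
import random


def sample_action(means, stds, low=0.0, high=1.0, rng=random):
    """Draw one value per action dimension from N(mean, std) and clip it
    to [low, high]. The bounds are illustrative assumptions."""
    return [min(high, max(low, rng.gauss(m, s))) for m, s in zip(means, stds)]


rng = random.Random(1)
# Early training: a large standard deviation spreads samples widely (exploration).
wide = [sample_action([0.5], [0.3], rng=rng)[0] for _ in range(200)]
# Late training: a small standard deviation concentrates samples near the mean.
narrow = [sample_action([0.5], [0.01], rng=rng)[0] for _ in range(200)]
print(max(wide) - min(wide), max(narrow) - min(narrow))
```

With a standard deviation of zero, the sampled action equals the mean, which corresponds to evaluating the policy deterministically.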

The lookahead model derives actions for those dimensions

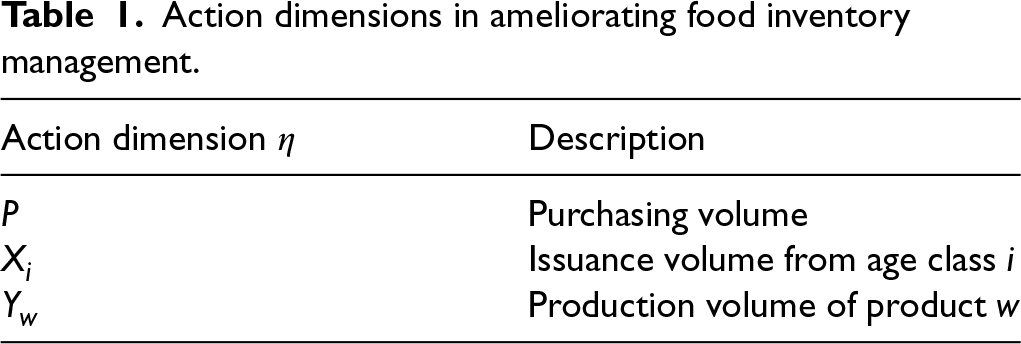

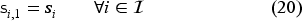

For the ameliorating inventory management problem introduced in Section 3.1, Table 1 summarizes the action dimensions that must be partitioned between the actor NN and the lookahead model. To guarantee fast computation, we use an LP formulation for the lookahead model. For each period

Table 1. Action dimensions in ameliorating food inventory management.

However, we illustrate how the LP interacts with the DRL environment and the actor NN. Constraints (20) initialize the inventory decision variables in Period 1 with the values

We use the mean values of the underlying probability distributions when modeling state transitions across the lookahead horizon to formulate a deterministic model (e.g.,

State-of-the-art actor–critic algorithms such as PPO (Schulman et al., 2017), soft actor–critic (Haarnoja et al., 2019), and twin-delayed deep deterministic policy gradient (TD3) (Fujimoto et al., 2018) rely on discounting future rewards. The discount factor is frequently treated as a hyperparameter and tuned to achieve better performance. However, in Operations Research problems, only the problem environment can justify discounting future rewards. Furthermore, if the discount factor is close to 1, state-of-the-art algorithms tend to perform worse (Zhang and Ross, 2021). As our value function in Equation (6) maximizes the average reward per period, we employ an algorithm specifically designed for this objective.
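A small numerical illustration, with made-up numbers, shows why a tuned discount factor can distort decisions when rewards are delayed by maturation. A batch costs 1.0 to purchase and yields 1.5 after ten periods; these values and the function names are assumptions for the example only.

```python
def discounted_value(rewards, gamma):
    """Discounted sum of a reward sequence starting at t = 0."""
    return sum(r * gamma ** t for t, r in enumerate(rewards))


def average_reward(rewards):
    """Average reward per period of a reward sequence."""
    return sum(rewards) / len(rewards)


maturation = 10
# One batch viewed in isolation: pay now, earn after maturation.
single_batch = [-1.0] + [0.0] * (maturation - 1) + [1.5]
# Steady state: every period one batch is purchased and one matured batch is sold.
steady_state = [-1.0 + 1.5] * 50

print(discounted_value(single_batch, 0.95))  # negative: the purchase looks unprofitable
print(discounted_value(single_batch, 0.99))  # positive: the purchase looks profitable
print(average_reward(steady_state))          # 0.5 per period, independent of any gamma
```

The sign of the discounted value depends on the chosen discount factor, whereas the average reward of the steady-state policy does not involve one.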

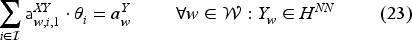

Recently, several actor–critic algorithms for the average reward criterion have been introduced, which all build on existing approaches for discounted rewards and are evaluated on computer game environments. These algorithms typically calculate state values relative to an estimate for the average reward

Reward-Handling Techniques

To enhance the learning performance of our algorithm, we introduce two novel reward-handling techniques that build on specific problem insights.

It is common in DRL research to normalize the state variables, action variables, and rewards to a pre-defined range. This practice prevents adjusting the network’s weights to differences in the numerical scales between the NN’s input and output layers during training (van Hasselt et al., 2016). We normalize state and action variables in the interval

For rewards, however, strict clipping in the interval

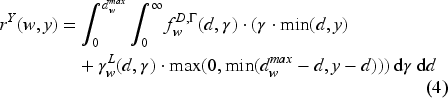

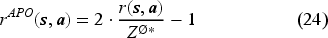

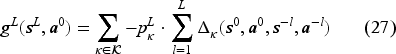

Apart from reward scaling, reward shaping, that is, modifying the observed reward by adding a term

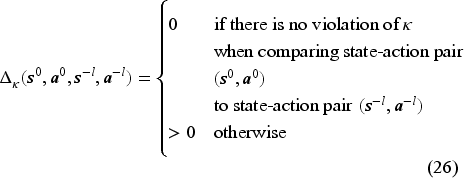

For a given policy property

Note that including the history in the state may also support the NNs in learning the structure of reward delays incurred by maturation times (Hester and Stone, 2012). We provide a more detailed description and a theoretical analysis of the history-based reward-shaping approach in Electronic Companion EC.4. Moreover, we show the performance impact of both reward-handling techniques in Electronic Companion EC.5.

We apply our solution approach to a large-scale port wine industry case. Graham’s 10-year-old and 20-year-old Tawny are high-volume trademark products from the Symington Family Estates (Symington Family Estates, 2025). The company uses stocks matured up to 25 years in issuance decisions to account for flexible blending under the average-age regime. We summarize further problem parameters derived from company data and the algorithmic settings used in Electronic Companion EC.6.

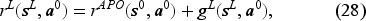

Figure 4 shows the training progress of the APO algorithm. The left subfigure shows that the explained variance of the value targets

Figure 4. Training progress of the average policy optimization (APO) algorithm for the port wine industry case.

We extract the policy from the set of training iterations that achieves the largest average profit on an evaluation data set. In a large-scale simulation of 50,000 periods, the gap to the upper bound of this policy is only

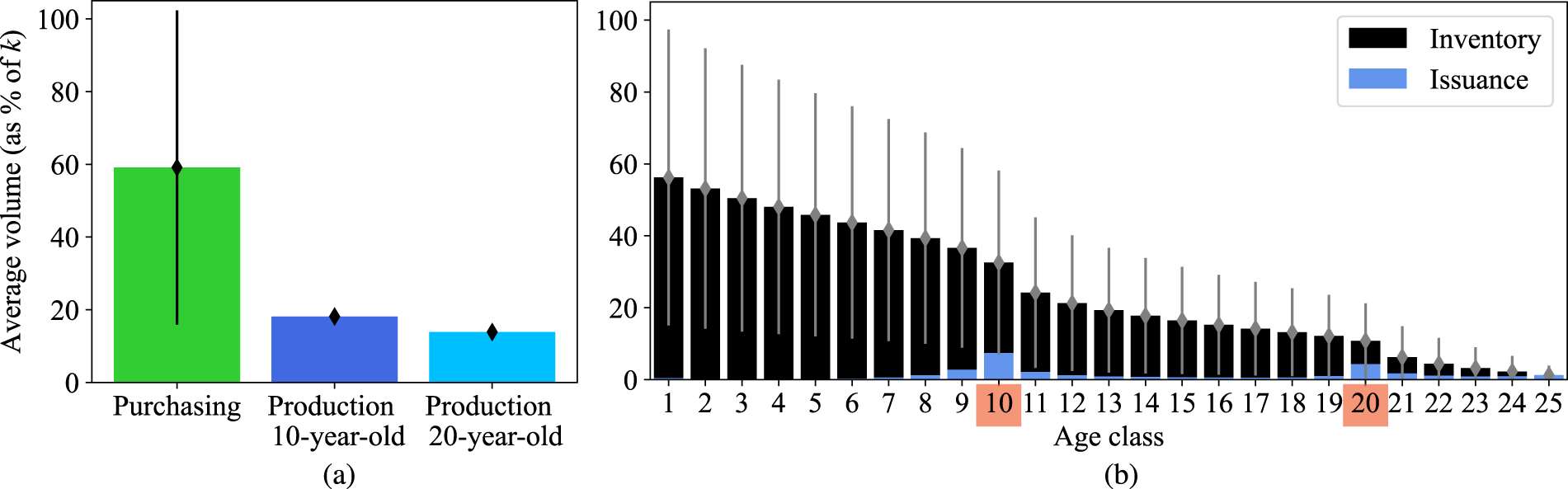

Average analysis of port wine inventory system. (a) Purchasing and production actions. (b) Inventory levels and issuance actions.

The average purchasing volume is substantially larger than the accumulated production volumes. This difference is entirely attributed to evaporation and decay losses. The policy prevents outdating in the last age class by issuing all remaining stocks. Further, the distribution of issuance volumes provides insights into the blending strategy. Despite the large flexibility under the average-age regime, issuance volumes are centered around the products’ target ages. Blending from distant age classes leads to increased evaporation losses, even if the average age equals the target age. We analytically characterize this relationship between blending and evaporation in Electronic Companion EC.7.
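One way to see why blending from distant age classes increases evaporation losses is a simplified calculation. It assumes a constant fractional evaporation rate per period, which is an assumption made here for illustration and not the analysis of Electronic Companion EC.7. Under that assumption, the purchase volume needed per issued unit grows convexly in age, so a blend that spreads around the target age needs more raw material than issuing the target age class directly. The rate and the blends below are hypothetical.

```python
def purchase_per_unit_issued(age: int, evaporation_rate: float) -> float:
    """Volume to purchase so that one unit survives `age` periods of maturation."""
    return 1.0 / (1.0 - evaporation_rate) ** age


def purchase_for_blend(blend: dict, evaporation_rate: float) -> float:
    """Purchase requirement of a blend given as {age: share}; shares sum to one."""
    return sum(share * purchase_per_unit_issued(age, evaporation_rate)
               for age, share in blend.items())


def average_age(blend: dict) -> float:
    """Volume-weighted average age of a blend given as {age: share}."""
    return sum(age * share for age, share in blend.items())


RATE = 0.02                    # hypothetical evaporation rate per period
on_target = {10: 1.0}          # issue only the target age class
spread = {5: 0.5, 15: 0.5}     # same average age, distant age classes

need_on_target = purchase_for_blend(on_target, RATE)
need_spread = purchase_for_blend(spread, RATE)
print(f"on target: {need_on_target:.4f}, spread blend: {need_spread:.4f}")
```

Both blends have an average age of ten periods, yet the spread blend requires the larger purchase volume, in line with the observation that issuance volumes concentrate around the target ages.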

Note that the production volumes have a very low standard deviation, demonstrating that our policy allows Symington to offer their products reliably on the sales market (see Figure 5(a)). In contrast, the purchasing volumes and, consequently, the inventory volumes (see Figure 5(b)) are highly volatile. Enabled by the blending flexibility, Symington can purchase less in high-price periods and more in low-price periods. To summarize, average-age blending helps address Symington’s two main challenges: (i) adapting the purchase volume to the price level while maintaining stable production volumes and (ii) blending on-target with a narrow spread of age classes while dealing with volatile inventory volumes across age classes. The following section comprehensively characterizes the value of blending in ameliorating inventory management. Further, because blending permits flexible inventory management, we provide managers with explanations and insights for the volatile policy resulting from NN training.

Our numerical experiments provide insights into the performance of our actor pipeline, the value of blending in issuance decisions, and important decision drivers in ameliorating inventory management. We structure our analyses as follows. Section 6.1 presents the experimental design. We summarize benchmark approaches in Section 6.2. In Section 6.3, we evaluate our DRL approach and different configurations of the actor pipeline introduced in Section 4.1. Section 6.4 investigates the impact of different blending regimes on the average reward and the resulting policies. We mine the trained policies to elicit key factors behind specific decisions and generic managerial insights in Section 6.5.

Design of Experiments and Algorithm Configuration

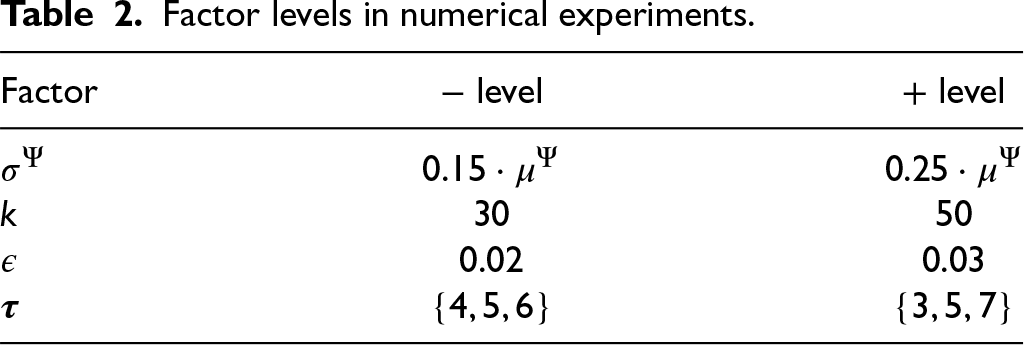

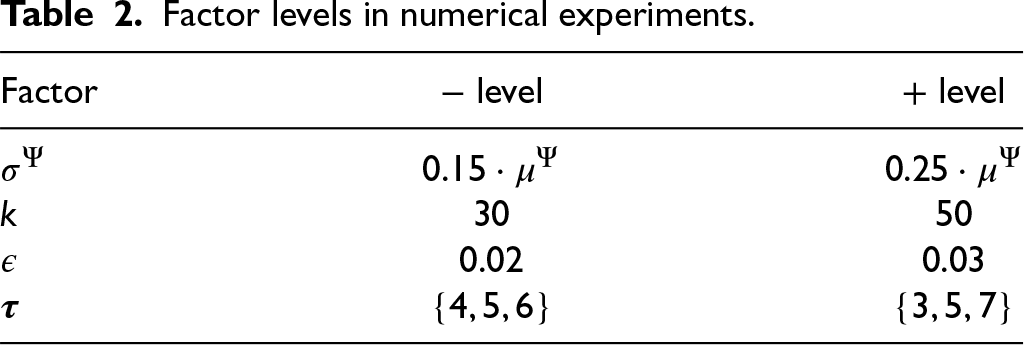

We assess configurations of the novel actor pipeline as well as different blending regimes in a generic ameliorating food inventory system comprising ten age classes (

Factor levels in numerical experiments.


We implement the APO algorithm in Python within the Ray RLlib ecosystem (release 2.3.0) (Liang et al., 2017). It is noteworthy that, unlike other DRL approaches, where hyperparameter sensitivity can lead to substantial performance variations (e.g., Gijsbrechts et al., 2022), our APO implementation yields robust performance across problem instances with limited tuning effort. Nevertheless, we carefully tune the hyperparameters within ranges conventionally used for inventory management. Because we observe stable training performance and algorithmic convergence, we use the same APO hyperparameter settings throughout our experiments. We provide these settings as well as details of our hyperparameter tuning approach in Electronic Companion EC.3. Our source code and all experiment data are available in the following repository: https://github.com/amelioratinginventory/ameliorating_inventory.

Unlike, for example, the conventional lost sales model studied by Gijsbrechts et al. (2022), well-established heuristics for benchmarking our DRL approach have not yet been developed for the ameliorating food inventory problem introduced in Section 3.1. Therefore, we develop heuristics based on classic inventory models to demonstrate our algorithm’s effectiveness. The details of their implementation are provided in Electronic Companion EC.9.

For the purchasing decisions, we propose two heuristics. First, we adapt the popular newsvendor model for each product individually, accounting for the expected accumulated costs during maturation. This is also inspired by industry practice, where products are managed separately in spreadsheet-based planning tools. We label this newsvendor-based approach to purchasing

For production and issuance decisions, the classic FIFO policies used in perishable inventory management fail in our problem as they neglect amelioration effects as well as blending flexibility. We hence develop two new benchmarks. The first approach again resembles spreadsheet-based planning in industry. We define each product’s overall production volume target through the newsvendor model. Then, in any given period, we use a simple LP to issue the stocks, maximizing adherence to the production volume targets while minimizing target age excess in blending. We label this approach

A further intuitive benchmark approach is derived from the actor pipeline presented in Section 4.1. When delegating all action dimensions to the lookahead model (

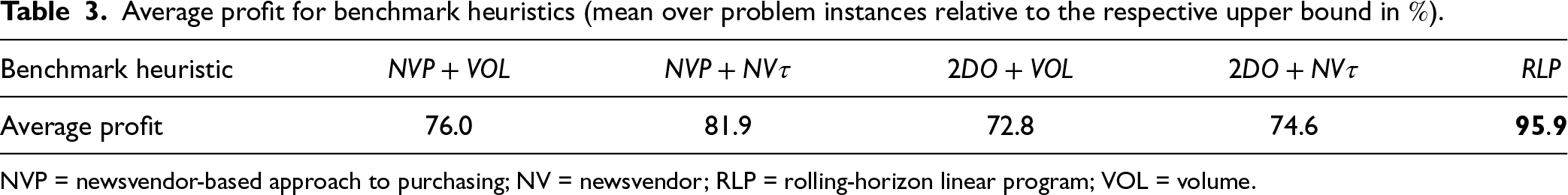

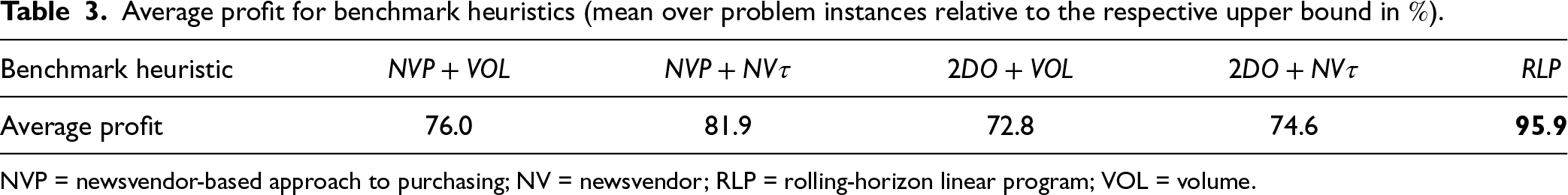

For all problem instances discussed in Section 6.1, we implement all possible combinations of

Average profit for benchmark heuristics (mean over problem instances relative to the respective upper bound in

).


NVP = newsvendor-based approach to purchasing; NV = newsvendor; RLP = rolling-horizon linear program; VOL = volume.
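The newsvendor logic underlying the NV and NVP benchmarks can be sketched with the classic critical-ratio rule. This is a minimal textbook sketch, not the adapted heuristic of Electronic Companion EC.9: normally distributed demand is an assumption, the function name and all numbers are hypothetical, and the accumulated maturation costs mentioned in the text would enter through the underage and overage cost parameters in a way the source does not detail here.

```python
from statistics import NormalDist


def newsvendor_quantity(mean_demand: float, std_demand: float,
                        underage_cost: float, overage_cost: float) -> float:
    """Order quantity that balances expected underage and overage costs.

    The optimal quantity is the demand quantile at the critical ratio
    underage_cost / (underage_cost + overage_cost).
    """
    critical_ratio = underage_cost / (underage_cost + overage_cost)
    return NormalDist(mean_demand, std_demand).inv_cdf(critical_ratio)


# Hypothetical product: lost margin of 8 per unit short, cost of 2 per unit left over.
target_volume = newsvendor_quantity(mean_demand=100.0, std_demand=20.0,
                                    underage_cost=8.0, overage_cost=2.0)
# Equal costs give a critical ratio of 0.5, so the target equals the mean demand.
balanced_volume = newsvendor_quantity(100.0, 20.0, 5.0, 5.0)
```

A higher underage cost relative to the overage cost pushes the target volume above mean demand, which is why longer maturation times, with their larger accumulated holding costs, would tend to lower the targets for older products.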

We utilize the benchmarks developed in the previous section to assess the performance of our DRL approach across different configurations of our novel actor pipeline. In our analyses, we focus on the average-age blending regime because it involves the largest problem complexity. Since purchasing decisions predefine the feasible space for future production and issuance decisions, we expect the most significant impact on the average reward when handing this action dimension to the NN. Similarly, production decisions for younger products predefine the feasible space for future production decisions for older products. Therefore, we investigate the following configurations of

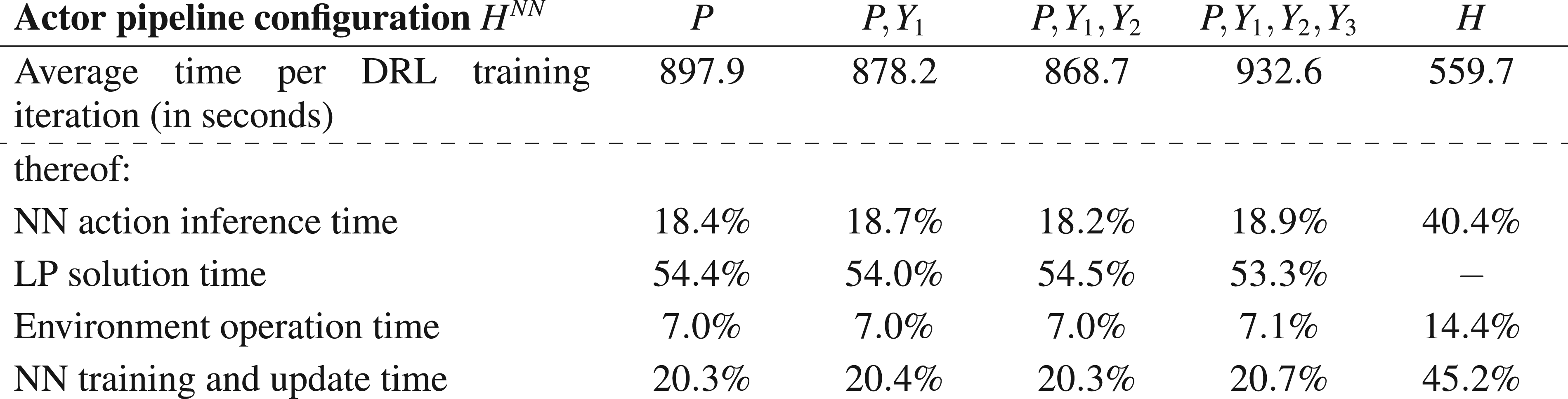

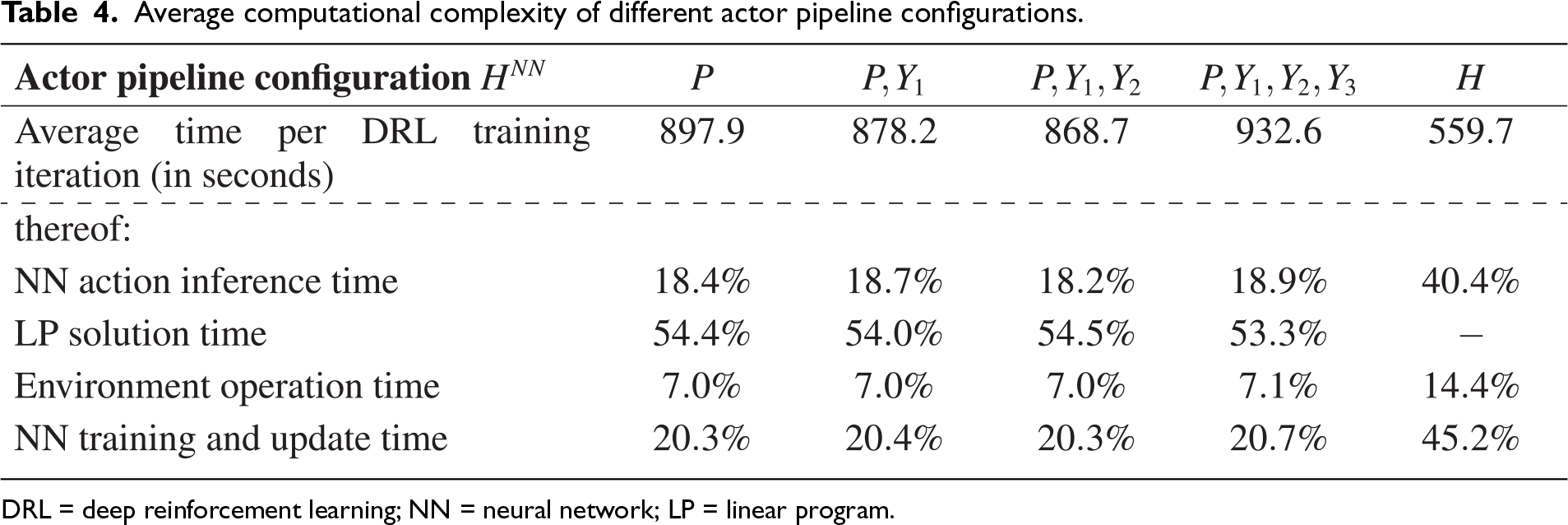

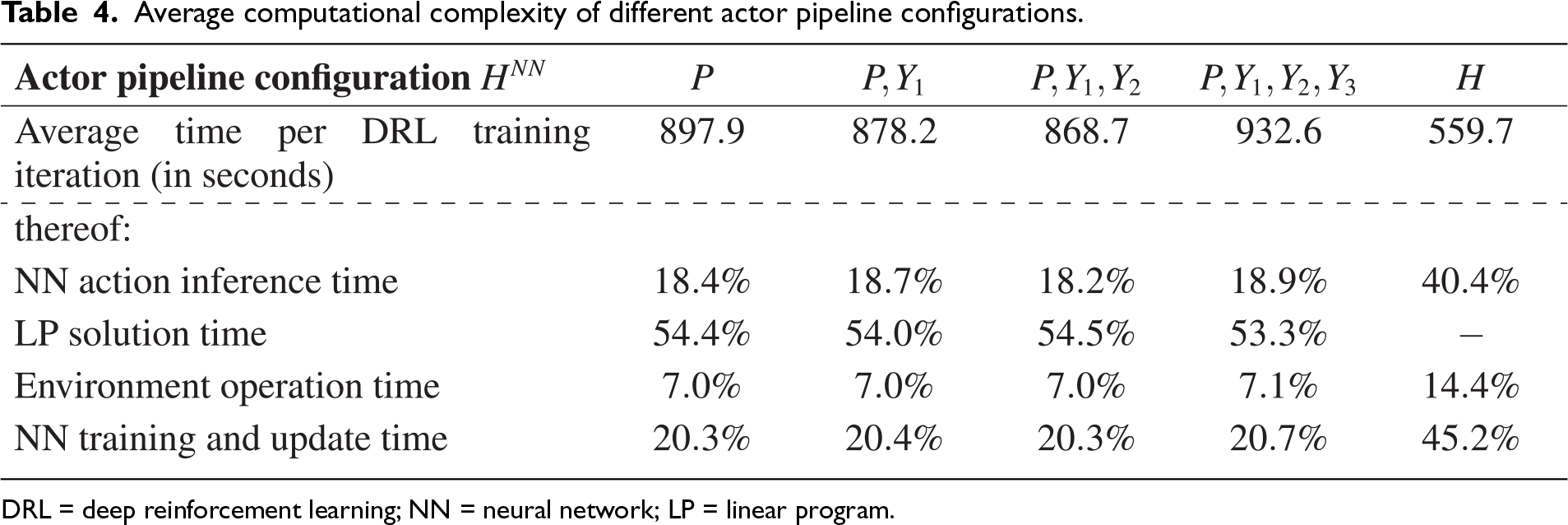

Table 4 reports the average training time per DRL iteration on a local 16-core Intel Xeon Platinum 8280L CPU (2.7 GHz, 32 GB RAM), using the algorithmic setup described in Section 6.1 and Electronic Companion EC.3. For the hybrid actor pipeline configurations, which combine an NN and a lookahead LP model, we observe comparable total training times (Columns 2–5 in Table 4). In all these configurations, the LP solution time is the main bottleneck, consistently accounting for more than half of the total iteration time. This underlines the importance of a computationally efficient LP formulation within the actor pipeline. By contrast, the configuration

Average computational complexity of different actor pipeline configurations.


DRL = deep reinforcement learning; NN = neural network; LP = linear program.

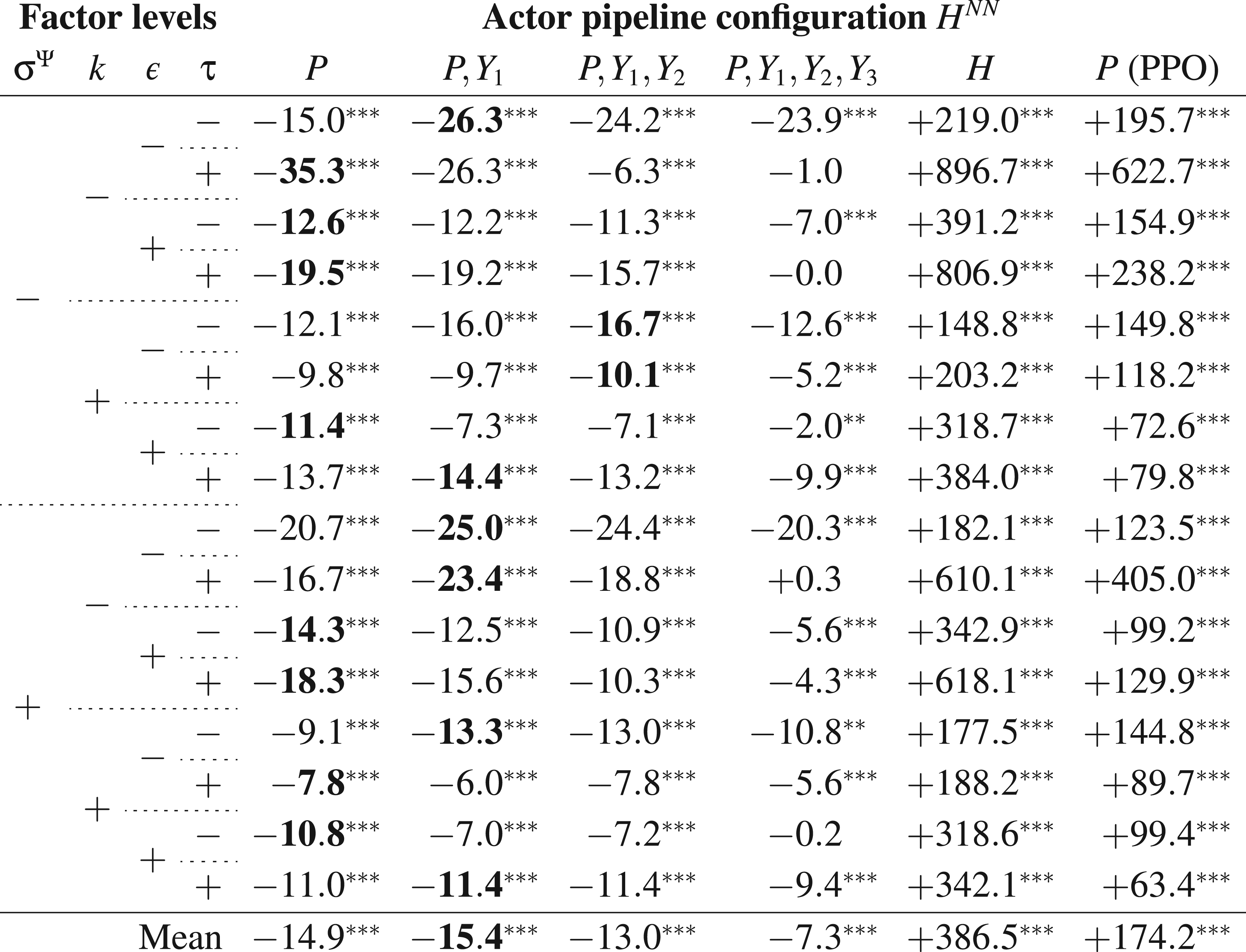

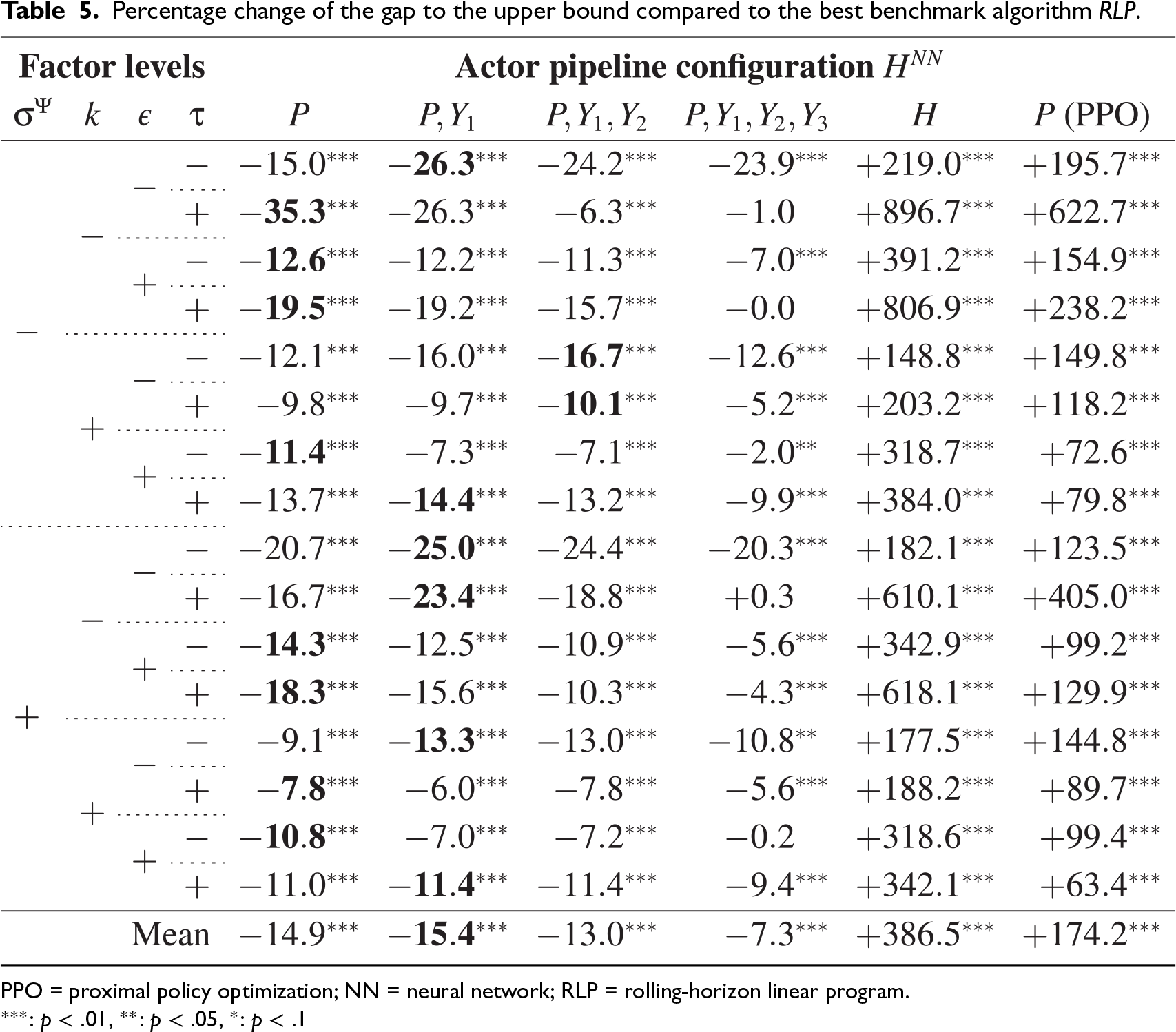

We evaluate the performance of each configuration with 30 independent simulation runs of 2,000 decision epochs each. To allow for rigorous statistical analysis, we use equal initial state variables across all runs and common random numbers across all configurations, including the benchmark cases. From each training run, we extract the policy from the training iteration that achieved the best performance on a separate test dataset. Table 5 reports the percentage change of the gap between the best benchmark (

Percentage change of the gap to the upper bound compared to the best benchmark algorithm

PPO = proximal policy optimization; NN = neural network; RLP = rolling-horizon linear program.
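The evaluation protocol with common random numbers can be illustrated with a toy simulation. The sketch below is not the paper's environment: the price distribution, the profit function, and both policies are hypothetical. It only shows the mechanism, namely that seeding each run identically for every policy exposes all policies to the same price path, so that per-run profit differences can be compared pairwise.

```python
import random
from statistics import mean, stdev


def simulate(policy, seed: int, epochs: int = 2000) -> float:
    """Average per-epoch profit of `policy` on the price path generated by `seed`."""
    rng = random.Random(seed)            # same seed -> same price path for every policy
    total = 0.0
    for _ in range(epochs):
        price = rng.uniform(0.5, 1.5)    # hypothetical purchase price
        quantity = policy(price)
        total += quantity * (2.0 - price)  # toy profit with a fixed sales price of 2.0
    return total / epochs


def fixed_policy(price: float) -> float:
    """Buys the same volume regardless of the price."""
    return 1.0


def responsive_policy(price: float) -> float:
    """Buys more when the price is low and less when it is high."""
    return 2.0 - price


seeds = range(30)                        # 30 runs, as in the evaluation described above
paired_gain = [simulate(responsive_policy, s) - simulate(fixed_policy, s) for s in seeds]
print(f"mean gain: {mean(paired_gain):.4f}, std of paired gain: {stdev(paired_gain):.4f}")
```

Because both policies face identical randomness within a run, the paired differences filter out the noise that is common to both, which tightens the comparison relative to using independent random streams.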

The rightmost column of Table 5 reports results for the actor pipeline configuration

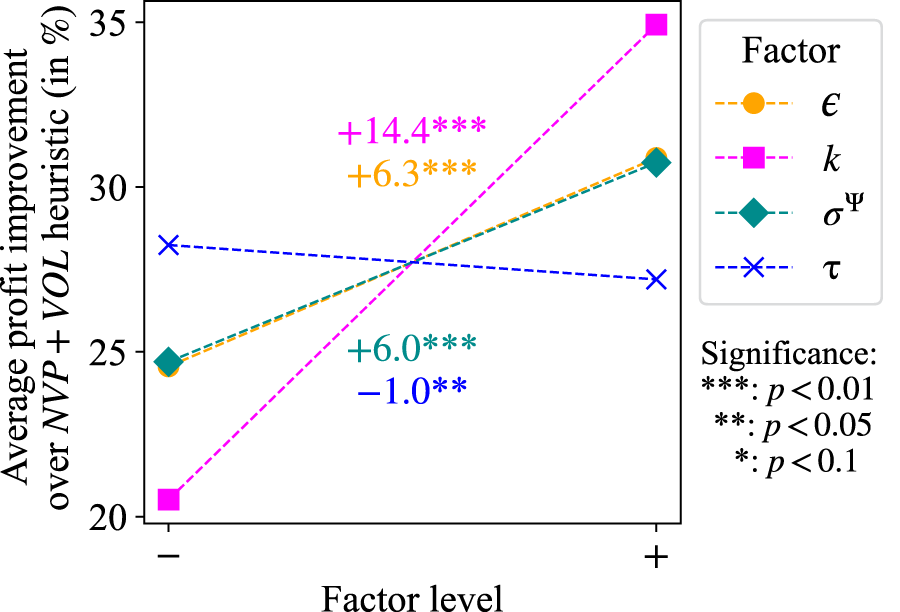

For all ensuing analyses, we use the policy derived from the best-performing actor pipeline configuration in each instance. On average, our actor pipeline DRL approach outperforms the benchmark inspired by industry practice (

Main effects of factors on the percentage profit gain compared to the heuristic inspired by industry practice.

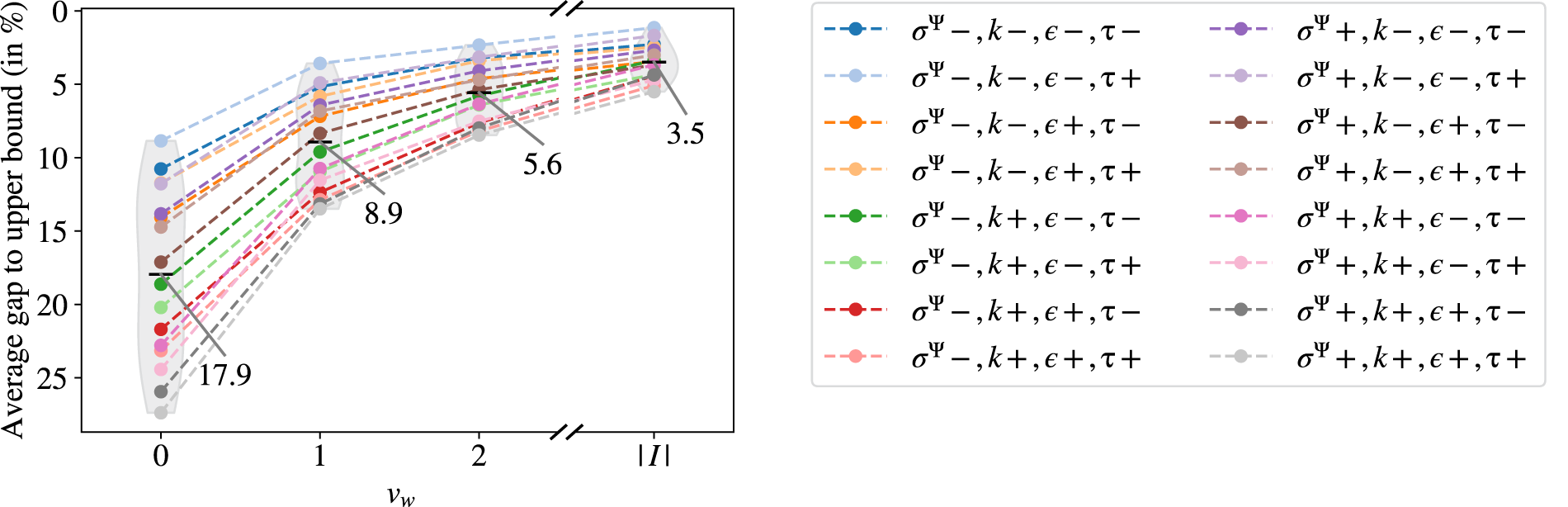

In this section, we analyze the value of blending using the same experimental design with common random numbers as in Section 6.3. In the first part of our analysis, we investigate the degree of blending flexibility under the average-age regime expressed by the parameter

Average profits under the average-age regime for different levels of blending flexibility, expressed through

In the second part of our analysis of the value of blending, we compare the average-age blending regime (

Average profits under different blending regimes.

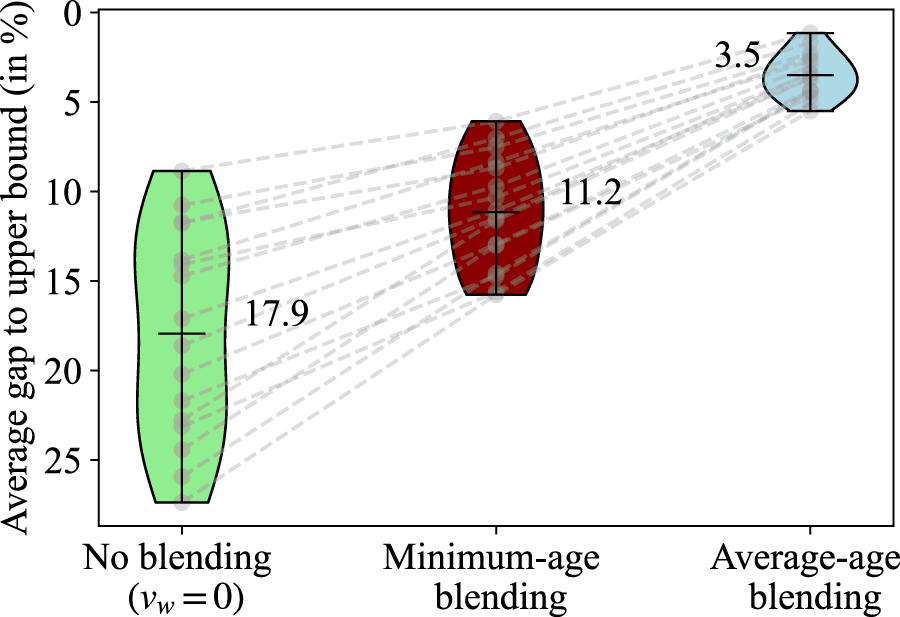

Figure 8 summarizes the average profits from the different blending regimes across problem instances, again expressed as the percentage gap to the upper bound. For reference, we also include the setting in which blending is prohibited. Minimum-age blending substantially improves the average profits, especially in those problem instances with the largest gaps under the no blending regime. On average, the gap to the upper bound decreases by

Issuance decisions across different blending regimes. (a) Usage of target age in issuance. (b) Target age excess in issuance.

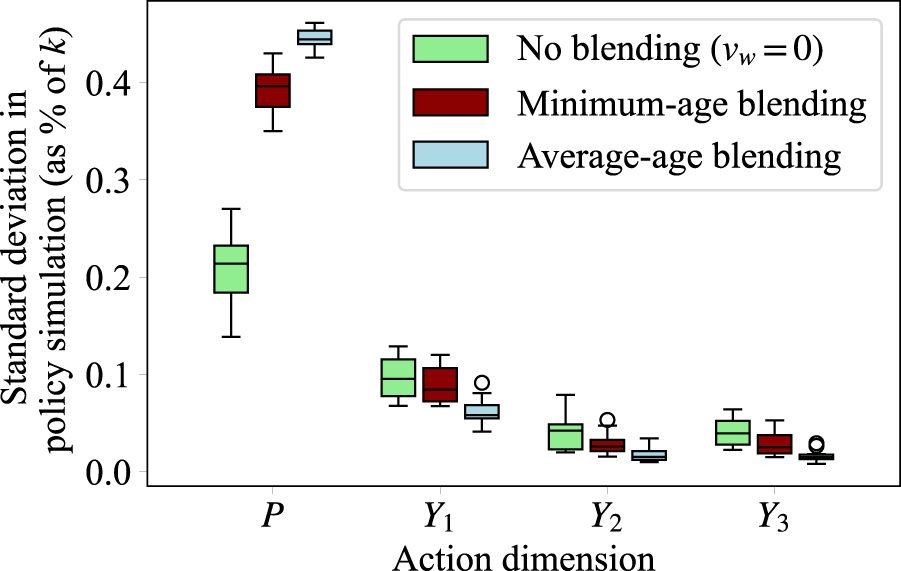

To reveal further drivers of profit enhancements through blending, Figure 10 shows the policy variability for the remaining action dimensions during simulation. We observe that with increasing blending flexibility, the volatility in the purchasing policy increases substantially. On the other hand, despite the more variable inventory structure resulting from the more variable purchasing policy, the production volumes fluctuate less across all products. This entails two effects on average profits. First, adjusting the purchasing policy to price fluctuations decreases the average purchasing costs. Second, due to the concavity of Equation 4, more stable production volumes lead to larger average revenues (see Electronic Companion EC.1). Note that across all instances, the production volumes of older products (

Policy variability across different problem instances.

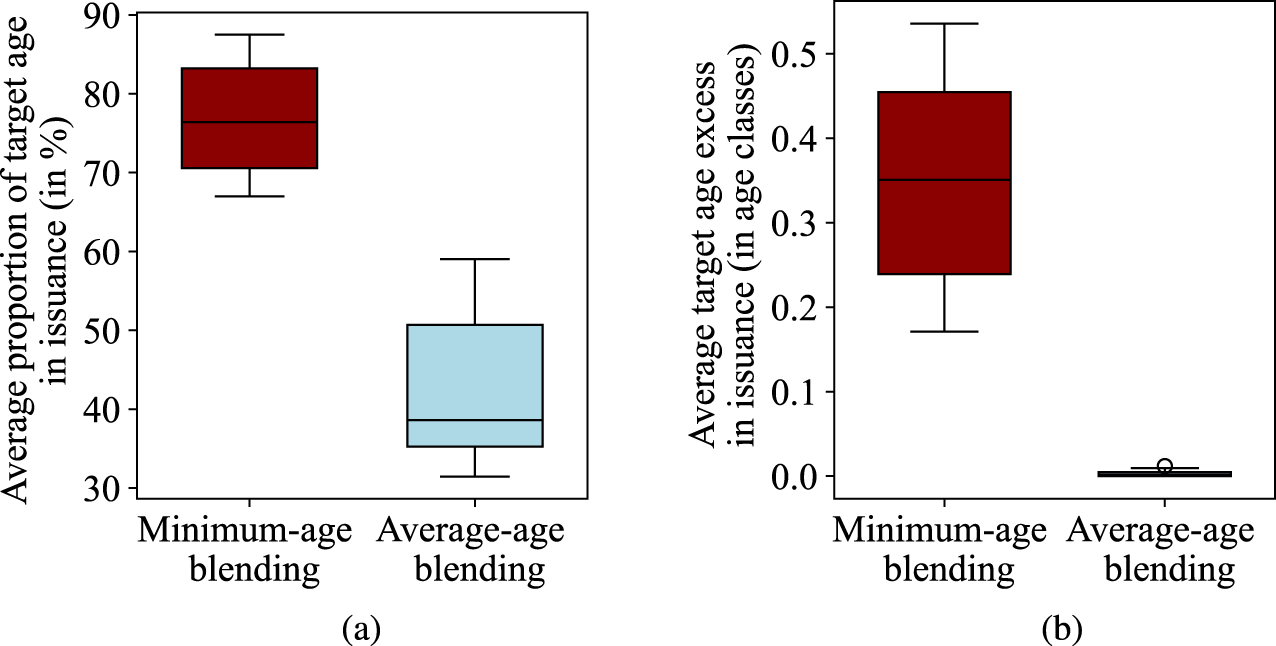

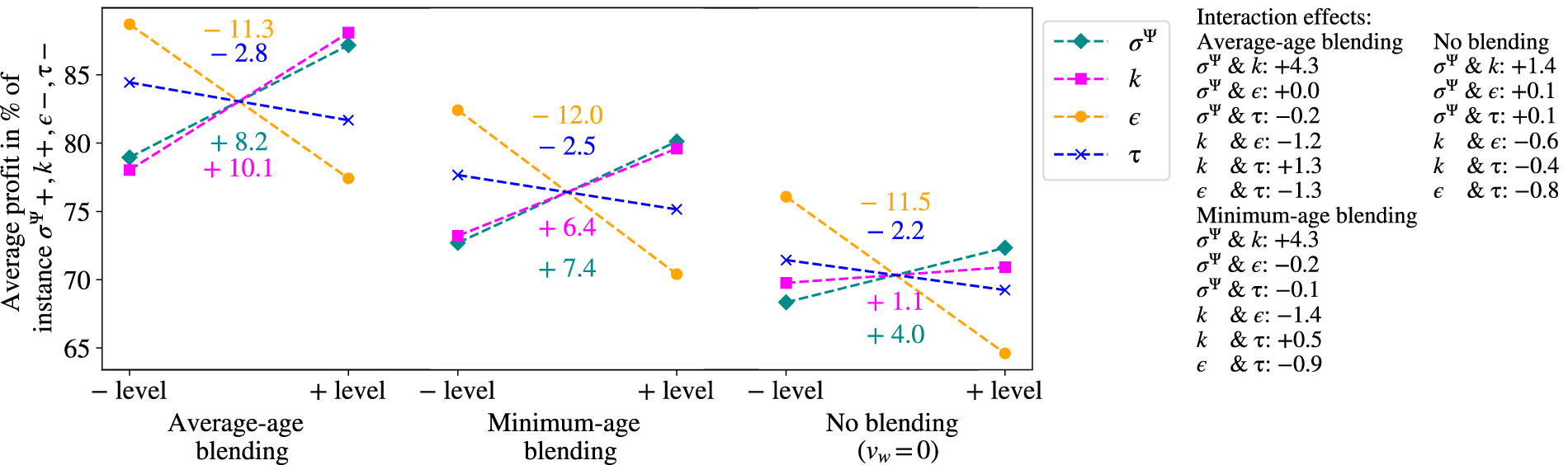

Finally, we investigate the effect of the factors

Main effects of factor levels on average profits under different blending regimes.

We develop a two-step policy mining approach to analyze the drivers behind near-optimal decisions. In the first step, we map the inventory management policy using machine learning, similar to Bravo and Shaposhnik (2020), who mine optimal policies to obtain structural insights, and Kouvelis et al. (2024), who use classification trees to interpret near-optimal DRL policies. We train regression trees for each action dimension that use state variables as features and the corresponding policy output as the label. We provide the full details of this first step in Electronic Companion EC.10.

Trees are interpretable by following the feature evaluations from the root node to the leaf node. However, this path analysis does not quantify the impact of state variables on the eventual action in a given state. Therefore, we use Shapley values to derive such local explanations. Shapley values originate from game theory and determine how much each feature contributes to the deviation of the model output for the current observation from the mean model output. For the details of the computation of exact Shapley values for regression trees, we refer to Lundberg et al. (2020). When applied to MDP policies, these values can be used to explain the factors behind actions in specific states (Beechey et al., 2023).
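The definition of Shapley values given above can be made concrete with a brute-force computation on a toy policy. The sketch enumerates all feature coalitions, which is feasible only for a handful of features and is not the tree-specific algorithm of Lundberg et al. (2020). The two-feature toy policy, the background states, and the convention of averaging over background observations for features outside a coalition are assumptions made for illustration.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, background):
    """Exact Shapley values of `model` at `x` by enumerating all feature coalitions.

    Features outside a coalition take their values from each background
    observation, and the model output is averaged over the background set.
    """
    n = len(x)

    def coalition_value(coalition):
        outputs = [model([x[j] if j in coalition else row[j] for j in range(n)])
                   for row in background]
        return sum(outputs) / len(outputs)

    values = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi += weight * (coalition_value(s | {i}) - coalition_value(s))
        values.append(phi)
    return values


def toy_policy(state):
    """Hypothetical tree-like purchasing rule over (price, inventory)."""
    price, inventory = state
    if price > 1.0:
        return 2.0
    return 6.0 if inventory < 5.0 else 4.0


background = [[0.8, 3.0], [1.2, 3.0], [0.8, 7.0], [1.2, 7.0]]
state = [0.8, 3.0]
phi = shapley_values(toy_policy, state, background)
mean_output = sum(toy_policy(row) for row in background) / len(background)
```

For this state, the contributions in `phi` sum to the difference between the action taken and the mean action over the background states, which is the property that makes the values usable as local explanations.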

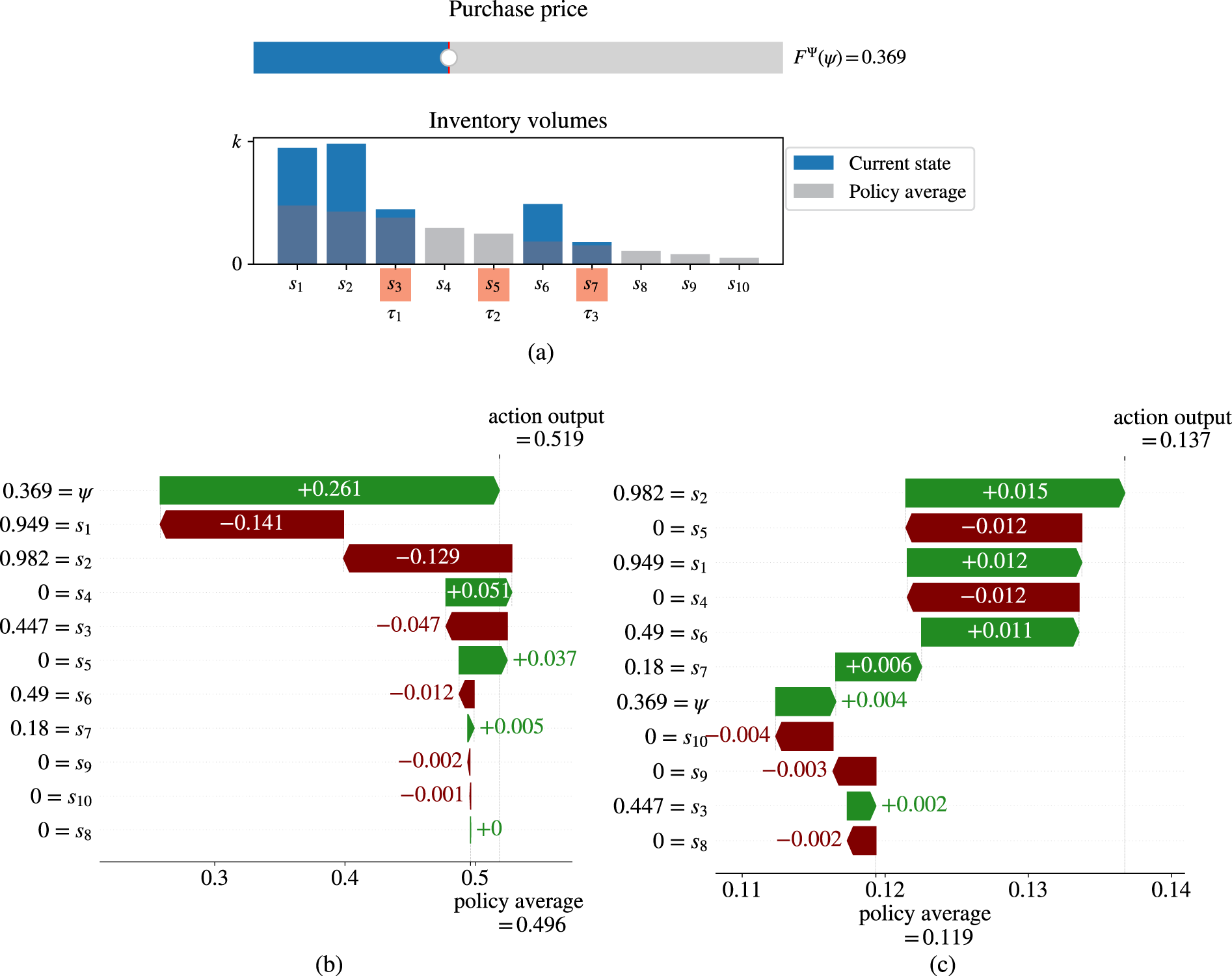

Figure 12 illustrates local explanations of the purchasing action (

Exemplary Shapley values (problem instance:

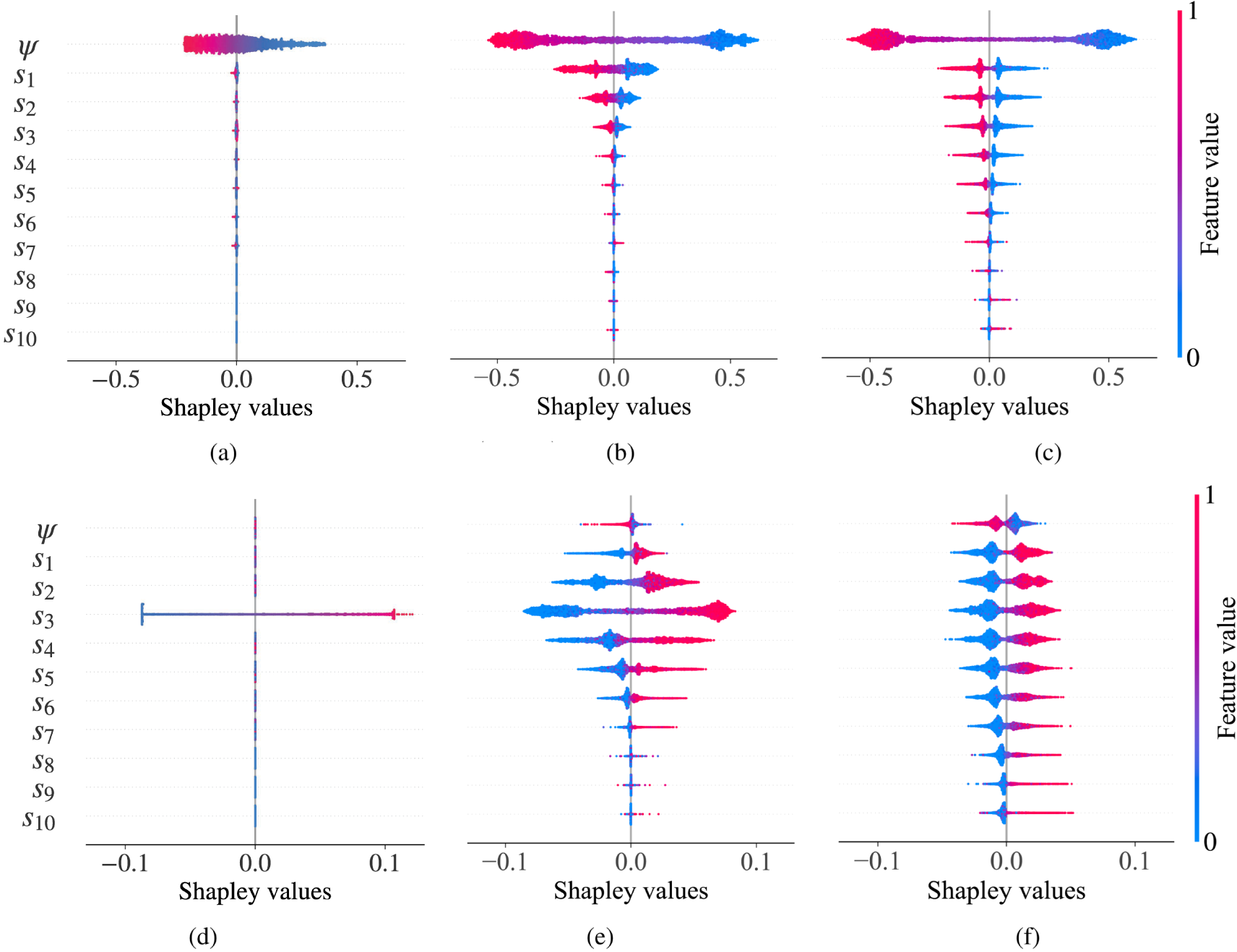

Generally, policy mining, in combination with Shapley values, helps managers better understand why certain actions are taken in specific states, building trust in the solution approach. We further generate global policy insights by aggregating the local explanations for a set of recurrent states. Figure 13 shows the distribution of Shapley values for action dimensions

Global analysis of Shapley values for different blending regimes (problem instance:

Naturally, there is a negative correlation between the purchasing actions and the purchase prices (see Figures 13(a–c)). When blending is prohibited (see Figure 13(a)), the price is the only relevant feature due to the lack of interaction between age classes in issuance decisions. The absolute impact of the purchase price

For the production volume of the youngest product, we also observe strong differences between the blending regimes (see Figures 13(d–f)). If blending is prohibited, the inventory volume in the target age (

Overall, Figure 13 shows that blending complicates the inventory problem, as decision-makers must consider various influencing factors to arrive at near-optimal decisions. For companies, this implies that fully reaping the profit improvement potential from more flexible blending strategies requires investing in sophisticated algorithmic support. To enhance the practical usability for inventory managers, a subsequent step could translate the policy mining insights into a set of “if-then” rules (Pahr et al., 2025).

This paper tackles the inventory management of ameliorating food products such as whiskey, rum, and port wine using a DRL approach. Our methodology introduces several innovations, including a novel actor pipeline, an average reward algorithm, and new reward-handling techniques that utilize the underlying MDP structure. Our managerial results demonstrate that blending substantially enhances profitability, suggesting that sectors such as whiskey and rum may benefit from re-evaluating their stringent blending regulations. Through policy mining, we identify the key drivers behind the value of blending and foster trust in the derived policy. Increased blending flexibility requires the consideration of an increased number of relevant decision factors, leading to more complexity in inventory management. We see several possibilities for future research. First, innovative actor pipelines and exploiting structural problem insights are promising avenues to enhance the applicability of DRL in the Operations Management domain. Future work could investigate adaptive actor pipelining that dynamically allocates action dimensions across actor components. Second, while this paper provides general insights into the specific characteristics of ameliorating inventory management, the modeling framework can be readily extended to integrate industry case-specific modifications, such as non-stationary environments or correlated supply-side and demand-side uncertainties. Moreover, subsequent research could investigate how endogenous sales price dynamics through pricing decisions or the market influence of large producers interact with the value of blending.

Supplemental Material

sj-pdf-1-pao-10.1177_10591478251387795 - Supplemental material for The Value of Blending—Managing Ameliorating Inventory Using Deep Reinforcement Learning

Supplemental material, sj-pdf-1-pao-10.1177_10591478251387795 for The Value of Blending—Managing Ameliorating Inventory Using Deep Reinforcement Learning by Alexander Pahr and Martin Grunow in Production and Operations Management

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

How to cite this article

Pahr A and Grunow M (2025) The Value of Blending–Managing Ameliorating Inventory Using Deep Reinforcement Learning. Production and Operations Management XX(XX): 1–22.

References
