Abstract

The decarbonization of power systems facilitates the electrification of appliances, many of which can be operated in a flexible way. Demand response (DR) programs can exploit this flexibility with retail price adjustment, thereby addressing several operational challenges. In this paper, we address the welfare optimization problem of local utilities that procure electricity for their customers at the wholesale market. We demonstrate how DR programs can be designed for local electricity systems where electricity demand and its response to temporary price changes are unknown. For this purpose, we address a novel and complex pricing problem (pricing under unknown, time-interdependent, and discontinuous demand) by leveraging Deep Reinforcement Learning. Using a numerical case study calibrated on Californian electricity market data, we show that such a “Deep DR program” helps to identify effective prices that improve social welfare. The performance of the program is consistently positive across a variety of system conditions. We further demonstrate that our approach outperforms Time-of-Use tariff-based benchmarks after only five simulation days and a parametric benchmark after 19 simulation days, on average. Furthermore, we provide novel insights regarding an important but frequently overlooked aspect of DR program design: the length of the notification interval, that is, the timespan for which future prices must be set in advance. We find that the timing of price information is important and that longer notification intervals can improve social welfare. Finally, we provide insights into DR price setting and find that DR prices co-move with wholesale market prices but are lower for longer notification intervals and shorter event sequences. The presented Deep DR program provides an example of how advances in machine learning-based algorithms can help to meet the complex operational requirements of future local electricity systems.

Introduction

The decarbonization of the economy requires the electrification of many applications and gives rise to a large number of new loads in local electricity systems, such as electric vehicles and heat pumps. Electricity consumption of these loads is, to some degree, flexible in time. Similarly, many pre-existing loads, such as production facilities, have a large potential for flexible operations (e.g. European Commission, 2022; US Department of Energy, 2022). This temporal flexibility in local electricity systems can be exploited via demand response (DR) programs. Such programs temporarily change prices to incentivize customers to adjust their load. Thereby, operational challenges, such as short-term imbalances of supply and demand due to fluctuating renewable energies, can be addressed more effectively.

While DR programs have generally received considerable attention from the research community (Boßmann and Eser, 2016; Wu et al., 2025), this is not the case for their application in local electricity systems. The majority of contributions to research on DR programs investigate applications at high levels of aggregation, such as electricity procurement on wholesale markets (Märkle-Huß et al., 2018; Yousefi et al., 2011) or reduction of peak generation (Blonz, 2016). At the local level, research into DR programs mostly targets one specific type of load, such as electric vehicles (Valogianni et al., 2020) or heating, ventilation, and air conditioning (HVAC) systems (Adelman and Uçkun, 2019). In contrast, we address local electricity systems, such as electricity distribution systems, with an unknown combination of different load types.

Determining effective DR prices for local electricity systems, however, is challenging. Due to missing communication infrastructure at customer premises and privacy considerations (Haider et al., 2016), the composition of loads and their individual responses to price changes are unknown. Instead, it is only possible to observe an aggregate response. Determining effective prices when observing only aggregate load behavior is difficult. First, the loads in distribution systems exhibit different kinds of temporal flexibility and thus respond differently to price changes: for instance, during periods of high prices, some production processes can be paused, while others must not be interrupted, and local electricity storage facilities may even feed electricity back into the grid. Thus, the reaction of the system to price changes depends on the composition of the loads. Second, many electrical applications, such as production processes, have on-off properties. This introduces discontinuities into the demand function and makes the estimation of effective prices methodologically challenging.

In this paper, we overcome these challenges and thereby provide three contributions. First, we contribute to research on DR programs by demonstrating how DR programs can be designed for local electricity systems. Specifically, we address the welfare optimization problem of local utilities (such as energy cooperatives and regulated utilities in the United States or utilities owned by municipalities in Europe) that procure electricity at the wholesale market. We show that DR programs can perform effectively even if the properties of electricity demand are unknown and wholesale market prices are highly variable. The performance remains robust under a variety of system characteristics such as different load type combinations, wholesale price variability, and forecasting quality. Moreover, the underlying DR prices can be identified quickly: welfare improvements exceed those achieved by a Time-of-Use (ToU) tariff, that is, a pricing structure where the cost of electricity varies depending on the time of day, after a number of training steps corresponding to only five days in the real world. The performance of a parametric benchmark, which optimizes prices using a linear model of demand, is exceeded after 19 days. This underlines the practical applicability of the program.

As the second contribution, to design the DR program, we solve a novel, complex pricing problem: pricing under unknown, time-interdependent, and discontinuous demand. First, the response of customers to prices is unknown ex-ante. Second, customers’ decisions depend on the state of their loads, such as the state-of-charge of an electric vehicle or the state-of-completion of a production job. These states render the demand function time-interdependent, so that not only current prices but also past and future prices affect the consumption decisions (resulting in rebound and prebound effects). Third, the on-off property of devices introduces discontinuities into the demand function. This problem of finding prices under unknown, time-interdependent, and discontinuous demand also occurs in other contexts, such as marketing, and has not yet been solved optimally (cf., Bertsimas and Vayanos, 2017; den Boer and Keskin, 2020). We show how machine learning-enabled pricing based on Deep Reinforcement Learning (RL) is generally able to solve this problem quickly and effectively.

Third, we provide novel insights regarding an important, but frequently overlooked aspect of DR program design: The length of the notification interval, that is the timespan for which future prices must be set in advance. When participating in a DR program, load operators decide upon the dispatch of their appliances based on current and upcoming prices. Depending on the constraints of the load, such a decision may lock in demand for the upcoming hours and reduce future load flexibility. Due to such reductions in load flexibility, the length of the notification interval affects the performance of DR programs (Kienscherf et al., 2020). However, this mechanism has not yet been studied systematically. We show how effective choices of notification intervals depend on the forecasting capabilities of the DR program operator, customers’ expectations, and the participating loads.

We proceed as follows. In Section 2, we explain the gaps in research on DR programs and pricing under unknown demand. In Section 3, we present the structure of the DR program that we analyze, including the optimization problems of the DR program operator and the flexible load operators. We characterize the optimal decisions of the load operators in Section 4. In Section 5, we describe the solution of the DR program operator’s decision problem using Deep RL (“Deep DR program”). In Section 6, we evaluate the performance of the Deep DR program and present results with regard to learning speed, load portfolios, and other important characteristics. We conclude and discuss our findings in Section 7.

Related Work

Time-variable prices are an important lever for coordinating supply and demand in electricity systems. On the supply side, the importance of pricing for investment in and operations of generation resources has been studied for a long time (e.g., Bohn et al., 1984). The advent of variable renewable energy sources, such as solar and wind energy, has made pricing choices more complex. As a result, the literature has revisited pricing questions in the context of renewable energies (e.g., Gambardella and Pahle, 2018; Sunar and Swaminathan, 2021; Zhou et al., 2016). These include, on the one hand, the impact of renewable energies on prices and revenues of market participants (e.g., Al-Gwaiz et al., 2017; Sunar and Birge, 2019) and, on the other hand, the impact of pricing on renewable energy investments (e.g., Comello and Reichelstein, 2017; Kök et al., 2020).

Another important lever for managing more volatile electricity systems lies at the demand side. By adjusting electricity prices during critical times, demand can be incentivized to deviate from original consumption patterns, which is the purpose of DR programs.

DR Programs

DR programs are an active field of research (see e.g., Faruqui and Sergici, 2010; Haider et al., 2016; Shoreh et al., 2016; Vardakas et al., 2015; Yan et al., 2018 for reviews). DR programs are usually characterized by a temporary increase of retail prices from a baseline (US Department of Energy, 2006). The number of price increases is typically restricted by the regulator in order to provide price insurance for customers and limit their exposure to price risks (Borenstein, 2007). The limitation of price increases differentiates DR programs from real-time pricing (Borenstein, 2005). The effectiveness of DR programs has been studied for a variety of applications at different levels of aggregation, which we summarize in the following.

One large stream of research studies applications of DR programs at high levels of aggregation, such as national electricity systems. At this level, DR programs can help to shift load into times of low supply cost as well as reduce demand peaks and congestion. As a result, DR programs can reduce supply costs (e.g., Feuerriegel and Neumann, 2016; Gils, 2016; Märkle-Huß et al., 2018) and save on expensive investments in generation (e.g., Blonz, 2016) and transmission networks (e.g., Nikzad et al., 2012; Zhou and Mai, 2021).

Another part of the literature considers the level of individual customers. These studies investigate how DR programs should be designed for optimal load response, for instance with regard to the role of baselines (Agrawal and Yücel, 2022), suitable combinations of long-term contracts and real-time adjustment (Wang et al., 2017), or optimal contracts considering both demand reduction and volatility (Aïd et al., 2022). Webb et al. (2016) further consider the interaction between DR programs and energy efficiency measures. Based on the assumption that customer models are known to the utility, these papers are able to determine the optimal DR price and other relevant design parameters.

Our work lies between these two extremes, since we consider groups of customers. Other contributions at this level include Anees et al. (2021), Grimm et al. (2021), Liang et al. (2019), and Sarfarazi et al. (2022). In these studies, the DR program operator faces a group of available flexible loads whose constraints are known. Therefore, the authors are able to identify the effective price by solving a complex optimization problem, either iteratively or by using linear programming. In our study, however, we are interested in situations in which the population of customers and their constraints are unknown to the DR program operator and their individual responses are not observable.

A few papers also consider groups of customers and provide approaches to designing DR programs under such less well-defined conditions. Adelman and Uçkun (2019) design a social welfare-maximizing program for a population of automated HVAC systems, while Valogianni et al. (2020) address electric vehicles for a system under grid constraints. In both cases, the DR program operator requires an algorithm to approximate the optimal price, but the programs address homogeneous groups of loads. As a result, the operator knows the structure of the customers’ operational problem. However, in local electricity systems, there are heterogeneous electric load types (e.g., HVAC systems, electric storage, electric vehicles, and interruptible and noninterruptible loads), which have different degrees of flexibility and thus respond differently to prices. DR programs to manage such local electricity systems with heterogeneous loads have not been researched. We follow the setup of Adelman and Uçkun (2019) and Valogianni et al. (2020) in designing a DR program for a population of customers with unknown preferences. In addition, we assume that the DR program operator does not know the demand structure of the customers but instead faces an unknown portfolio of heterogeneous load types in the system. Accordingly, a key methodological challenge within the design of the local DR program is the identification of prices under unknown demand.

Pricing Under Unknown Demand

Vardakas et al. (2015) review optimization approaches for DR programs where customer preferences and operational constraints are known. Under such conditions, optimal DR prices can be identified by solving a linear programming problem (e.g., Anees et al., 2021; Grimm et al., 2021) or iteratively (e.g., Sarfarazi et al., 2022). However, DR program operators usually have only limited knowledge of the existing load flexibility of their portfolio, due to a lack of communication infrastructure and privacy considerations (Haider et al., 2016). Moreover, the pricing problem faced in local DR programs is particularly challenging as demand can be time-interdependent and discontinuous. The demand function is therefore a piecewise function, for which both the limits of the subdomains and the functional form on each subdomain are unknown.

In the context of DR programs and electricity markets, several suggestions have been made to identify effective prices under unknown demand. In most cases, the existing literature approaches the problem by assuming a reasonable parametric form of demand—usually a demand function linear in price—which is subsequently parameterized. For the problem of DR pricing for continuous demand with no time-interdependency, Khezeli et al. (2017) propose least squares and quantile estimation for optimizing incentive payments in a DR program. They define a linear demand function, derive a truncated least squares estimator, and evaluate a myopic and a perturbed myopic price policy for DR contracts. For a similar demand model and solution approach, Mieth and Dvorkin (2020) suggest a pricing strategy for a distribution grid with grid constraints. Other approaches additionally assume time-interdependency of demand. Dong et al. (2017) empirically estimate a cross-price elasticity between peak and off-peak periods and then analytically derive optimal ToU tariffs. Nikzad et al. (2012) instead numerically solve a stochastic linear model to optimize grid reliability, although for known system parameters. However, the suggested approaches to DR price estimation cannot be easily transferred to a local electricity system where demand can be discontinuous.

Other contributions have therefore suggested parameterized pricing approaches that go beyond linear demand functions. Adelman and Uçkun (2019) and Valogianni et al. (2020) suggest adaptive pricing algorithms under unknown preferences for major appliances in the distribution grid—HVAC systems and electric vehicles. However, their approaches are specific to the appliances considered as they leverage the operational constraints of these appliances. Such model-based learning approaches have also been used in other fields where agents face unknown demand. For example, Cohen et al. (2020) suggest a multidimensional version of binary search to approximate the optimal price of products whose market value linearly depends on a set of features, such as those found in online flash sales. Moazeni et al. (2020) optimize marketing efforts in a multiplicative advertising exposure model with Poisson arrivals.

As assumptions of parametric models on demand are strong and may lead to inconsistent results, nonparametric approaches can help to alleviate the restrictions imposed by a target demand model. Vallés et al. (2018) use quantile regressions to derive distribution functions of flexibility conditional on socio-economic and physical parameters, allowing them to classify multiple customer groups with different response behavior. However, they do not consider time-interdependencies. As a hybrid of parametric and nonparametric approaches, Yousefi et al. (2011) consider that electricity demand can be composed of different types of aggregate demand functions and suggest a Q learning-based approach to identifying the weights and parameters of a linear combination of four demand models. More generally, Harsha et al. (2021) further develop a quantile-based method to address the newsvendor problem and apply it to energy consumption data of residential households. Such an approach allows for a wider variety of demand functions as it does not require assumptions about the specific shape of demand. However, while they consider past demand as a potential parameter in their model, their approach does not allow for inter-temporal optimization.

RL is one specific approach to optimize prices in a nonparametric way while considering time-interdependencies. Recently, RL has been used to address a variety of operational problems in the smart grid (e.g., Li et al., 2023; Ponse et al., 2024; Vázquez-Canteli and Nagy, 2019; Zhang et al., 2020). In the context of DR programs, these include the control problem of consumer loads (e.g., Bahrami et al., 2021), as well as the price setting problem. While this literature on pricing in DR considers differently detailed load models—from the retail pricing problem (e.g., Lu et al., 2018; Qian et al., 2024; Tang et al., 2024), via the joint pricing and bidding problem at retail and wholesale markets (e.g., Xu et al., 2019, 2022b), to the consideration of market power in wholesale market bidding (Xu et al., 2024)—its models of the consumer side are often simplified and do not incorporate key aspects of load flexibility: The on-off property of devices is ignored and demand flexibility follows the same continuous functional form for all consumers (e.g., Kim et al., 2015; Liu et al., 2021; Xu et al., 2022a). While the functions commonly take into account price elasticities (Liu et al., 2021), that may also be time-dependent and vary over the course of the day (Lu et al., 2018), storability, shiftability and interruptibility are commonly not modeled, even though these are key properties of flexible load (Barth et al., 2018)—and also pose methodological challenges to pricing. Moreover, all these contributions assume homogeneous customer models, such that the behavior of a consumer portfolio composed of several types of flexibility has not been explored.

To summarize, we extend the literature on DR design by considering more complex and heterogeneous types of consumer flexibility. In particular, we investigate settings where the DR program operator interacts with a portfolio of consumers of unknown flexibility types, whose behavior varies over the course of the day and whose load response exhibits time-interdependencies and discontinuities.

By providing an effective way to solve pricing problems under unknown, time-interdependent, and discontinuous demand, our approach also relates to the theoretical literature on pricing under unknown demand (see e.g. den Boer, 2015, for an overview). Most notable contributions include Bertsimas and Vayanos (2017), who suggest an adaptive optimization approach for price setting under a linear demand model with extensions for time-interdependent or polynomial demand; den Boer and Zwart (2015), who generalize learning for non-time-interdependent demand; and den Boer and Keskin (2020), who consider discontinuous demand functions. In contrast to these contributions, our setting is more general, since it is characterized by both time-interdependencies and discontinuities. Despite this complex setting, we later show that we still find effective solutions to the pricing problem.

The Local DR Program

In local electricity systems, small regulated utilities or publicly-owned cooperatives procure electricity on behalf of their retail customers. Part of this electricity is bought at the wholesale market, such as the Western Energy Imbalance Market in the case of California (CAISO, 2023b). While prices at the wholesale market vary in real-time, utilities and cooperatives typically sell the electricity to their customers at a retail tariff that is either entirely constant or takes multiple price levels, depending on the hour of the day (so-called “Time-of-Use” tariffs). Given the monopolistic position of these utilities, retail tariffs are subject to the approval of the regulator or the members of the cooperative (e.g., California, 2021; CPUC, 2019).

Customers in local electricity systems are residential households as well as small commercial and industrial customers. The roll-out of smart meters is still limited in some countries (pwc, 2022). In this case, utilities cannot observe individual customers’ response to time-specific prices in real-time but only ex post when billing their customers (typically at the end of the calendar month or year). The utilities and cooperatives can, however, measure electricity consumption in their local electricity system in real-time, e.g. through information and communication technology-equipped metering infrastructure at transformer stations.

We now assume that utilities and cooperatives are price takers at the wholesale market and operate a DR program (in the following, we refer to them as “DR program operators”). For instance, the peak load of Palo Alto Utilities, an example of such a utility and potential DR program operator, is 159 MW as compared to the overall peak load of 52 GW in California/CAISO (CAISO, 2023a; ORNL, 2023). Because of these differences in magnitude, we consider the modeling as price takers reasonable. Under the DR program, the operator can temporarily raise retail prices during periods of high wholesale market prices and thereby incentivize its customers (in the following, we refer to them as “load operators”) to shift consumption to periods of lower wholesale market prices. As a result, electricity procurement costs at the wholesale market can be decreased. The price increases are, however, only permitted during times of high wholesale market prices, in order to limit the exposure of customers to price risks. Such limiting conditions for price deviations are common in related DR programs, such as the Critical Peak Pricing (CPP) program in California (Blonz, 2016).
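The rule that price increases are only permitted when wholesale prices are high can be sketched in a few lines. This is a minimal illustration only: the function names, the numeric values, and the use of a single threshold on the forecasted wholesale price are assumptions made for the example, not the paper's formal constraint set.

```python
from typing import Sequence


def dr_event_allowed(wholesale_forecast: float, threshold: float) -> bool:
    """A DR event may be called only if the forecast exceeds the threshold (assumed rule)."""
    return wholesale_forecast > threshold


def retail_price(base_price: float, proposed_dr_price: float,
                 wholesale_forecast: float, threshold: float) -> float:
    """Return the price charged to customers in one period.

    Outside of permitted DR events the base price applies; during an event
    the price can be raised above the base price but never lowered below it.
    """
    if dr_event_allowed(wholesale_forecast, threshold):
        return max(base_price, proposed_dr_price)
    return base_price


def share_of_eligible_hours(forecasts: Sequence[float], threshold: float) -> float:
    """Fraction of periods in which a DR event could be called."""
    if not forecasts:
        raise ValueError("forecasts must not be empty")
    return sum(dr_event_allowed(f, threshold) for f in forecasts) / len(forecasts)


# Hypothetical numbers: retail prices in $/kWh, wholesale forecasts in $/MWh.
high_price_hour = retail_price(0.20, 0.35, wholesale_forecast=80.0, threshold=60.0)
low_price_hour = retail_price(0.20, 0.35, wholesale_forecast=40.0, threshold=60.0)
eligible_share = share_of_eligible_hours([40.0, 80.0, 70.0, 50.0], threshold=60.0)
```

Raising the threshold in this sketch reduces the share of eligible hours, which is the lever a regulator would use to limit customers' exposure to price risk.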

Temporal Structure of the DR Program

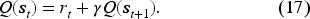

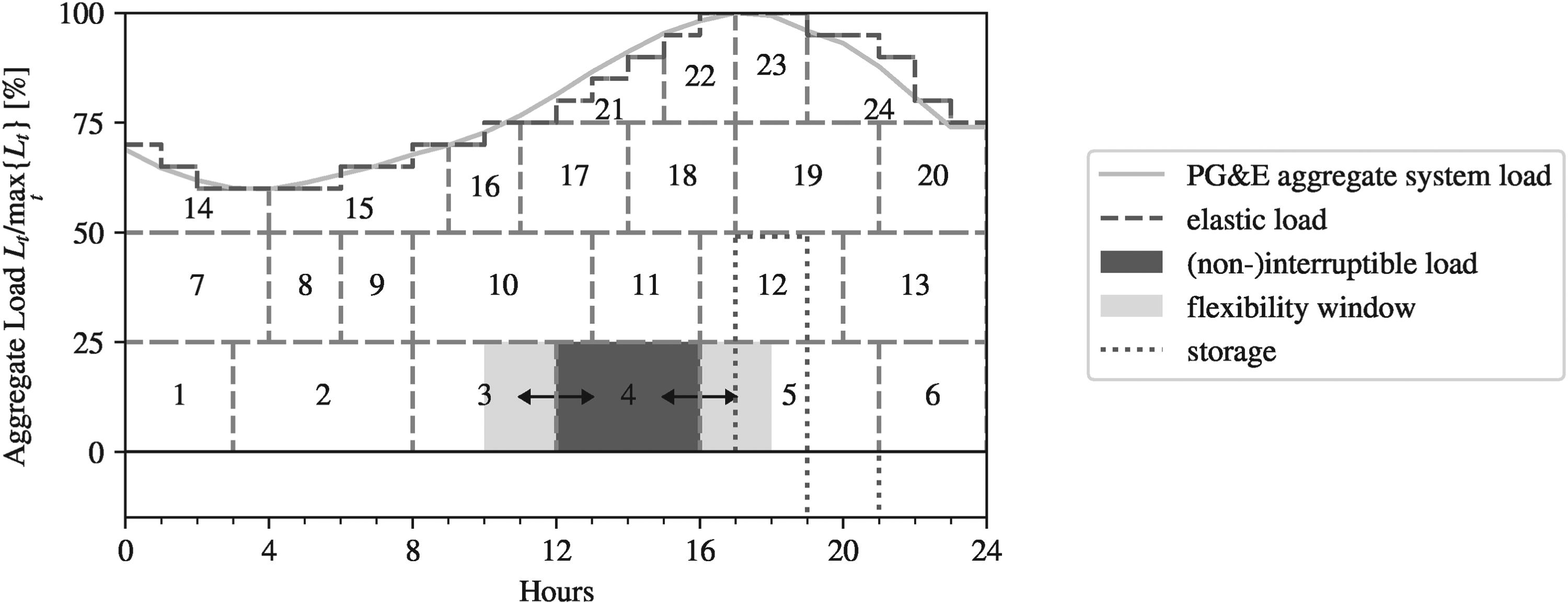

Figure 1 illustrates the temporal structure of the DR program and the sequential decision-making of the DR program operator and the load operators: In the first stage of time period

Sequence of actions in period

In the second stage of the time period

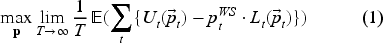

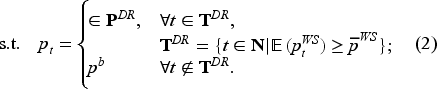

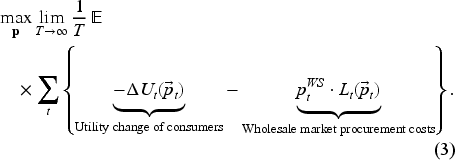

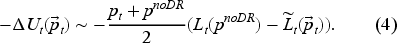

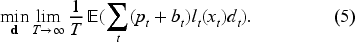

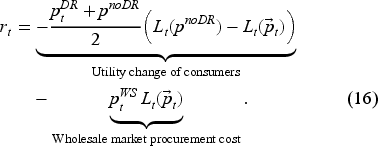

Since utilities and cooperatives are owned by regional authorities and customers, we assume that the DR program operator pursues welfare maximization (cf. Adelman and Uçkun, 2019). The DR program operator seeks to maximize expected social welfare by the choice of retail prices

The DR program operator’s pricing is subject to two constraints. First, a DR event can only be called if the forecasted wholesale market price

The objective function as described in Equation (1) cannot be operationalized directly, since the utility

Second, we approximate demand

The given expression estimates the welfare change at time

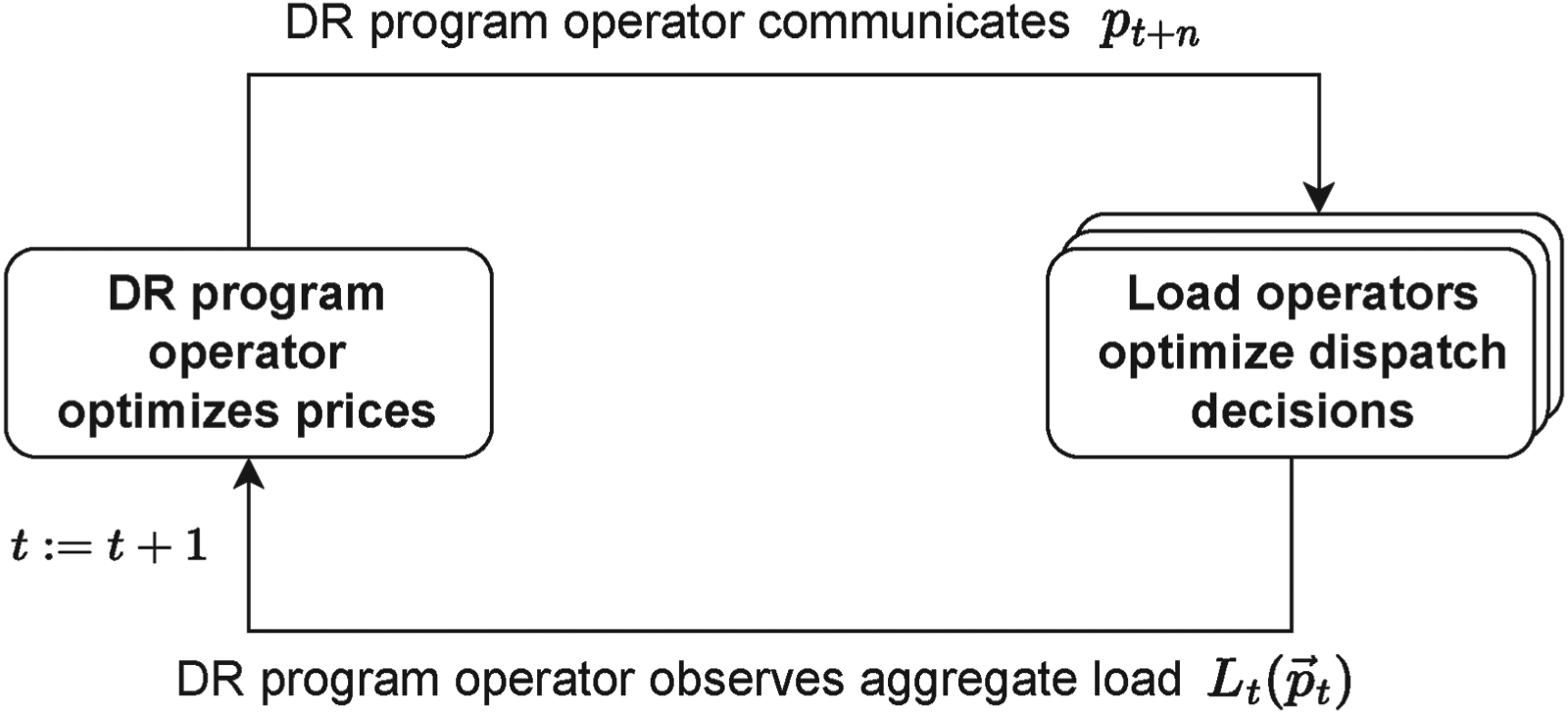

After defining the optimization of the DR program operator, we now focus on the load operators. We characterize the aggregate flexible load

Figure 2 illustrates the four types and their ability to respond to variable prices. Storage is able to charge and discharge and thereby shift load into other time periods. Examples are a residential battery storage or an electric vehicle. Interruptible and noninterruptible loads both have predefined load profiles that they can reschedule. While the former allows for intermittent switching on and off, the latter needs to follow a continuously running load profile once started. Examples of interruptible loads are a washing machine or a large-scale printing job; examples of noninterruptible loads are an oven for baking or an industrial melting process. For an elastic load, such as the level of room heating or cooling, scheduling is not time-interdependent.

Figure 2. Flexible load types. Note. The subfigures illustrate the main flexibility characteristics of each load type. The grey line represents a customer’s original load profile over time. With a flexible load, the load profile can be modified to the dashed profile. Explanations by load type: A customer with storage can modify their load profile by charging (increasing net load in one period) and discharging in the next period (decreasing net load in that period). A customer with an interruptible load can anticipate part of their load, interrupt, and then run the rest of the load profile. In addition or alternatively, part of the load can also be postponed. A customer with a noninterruptible load can anticipate or postpone the entirety of the load profile, but not only a part of it. Finally, a customer with an elastic load can increase or decrease load in a given period in response to price, without changes to the load profile in other time periods.
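The difference between interruptible and noninterruptible loads can be made concrete with a small scheduling sketch. It rests on strong simplifying assumptions that are not part of the paper's model: prices are known with certainty over the whole horizon, and the job draws one unit of energy in each hour it runs. All function names and prices are hypothetical.

```python
from typing import List, Sequence


def schedule_interruptible(prices: Sequence[float], duration: int) -> List[int]:
    """Pick the `duration` cheapest hours; the job may pause in between."""
    if not 0 < duration <= len(prices):
        raise ValueError("duration must be between 1 and len(prices)")
    # Sort hour indices by price (ties broken by the earlier hour).
    cheapest = sorted(range(len(prices)), key=lambda t: (prices[t], t))[:duration]
    return sorted(cheapest)


def schedule_noninterruptible(prices: Sequence[float], duration: int) -> List[int]:
    """Pick the cheapest block of `duration` consecutive hours."""
    if not 0 < duration <= len(prices):
        raise ValueError("duration must be between 1 and len(prices)")
    starts = range(len(prices) - duration + 1)
    best = min(starts, key=lambda s: (sum(prices[s:s + duration]), s))
    return list(range(best, best + duration))


def cost(prices: Sequence[float], hours: Sequence[int]) -> float:
    """Total cost of running one unit of load in each scheduled hour."""
    return sum(prices[t] for t in hours)


prices = [30.0, 10.0, 50.0, 12.0, 11.0, 40.0]  # hypothetical hourly prices
flexible_hours = schedule_interruptible(prices, duration=3)
block_hours = schedule_noninterruptible(prices, duration=3)
print(flexible_hours, cost(prices, flexible_hours))
print(block_hours, cost(prices, block_hours))
```

Because the noninterruptible job must run in one contiguous block, its cost can never be lower than that of the interruptible job under the same prices. The discrete jump between candidate start times is one source of the discontinuities in aggregate demand discussed above.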

Operators of time-interdependent load types—that is storage as well as interruptible and noninterruptible loads—minimize expected stage-wise electricity costs while facing an intertemporal optimization problem. We generalize the objective function of a load operator with a single time-interdependent flexible load of type

Load operation costs are driven by two components: the retail price

While all load types share the same objective function, their load type-specific operational characteristics are reflected by different sets of constraints. These constraints importantly include the intertemporal transition function

In Supplement C.1, we provide a table summarizing the load type-specific optimization problems. We explain the type-specific model components in the next section, when deriving the solutions to the load operator’s decision problems.

We describe the scheduling problem as an optimal control problem under uncertainty to capture the dynamic decision structure of the rolling time horizon. As the DR program operator sequentially communicates future prices, the load operators take new information on prices up to

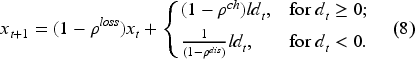

Storage is characterized by the ability to charge electricity and discharge it at a later point in time.

Additional constraints include the minimum and the maximum state-of-charge,

Let

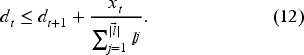

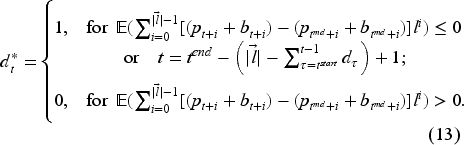

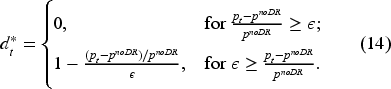

The optimal charging policy is,

The proof of Proposition 4.1 as well as a detailed explanation of

An interruptible load follows a specified load profile

Let

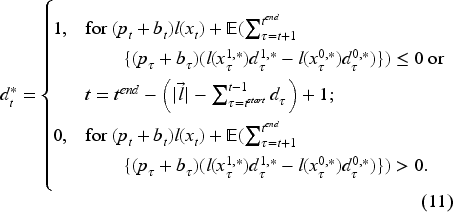

The optimal dispatch policy is,

The proof can be found in Supplement C.3. The policy distinguishes two cases. First, the next load component of the interruptible load will be dispatched in

In contrast to interruptible loads, noninterruptible loads cannot be stopped once they have been started. The definition of variables and parameters as well as the transition function Equation (6) of the noninterruptible load is identical to the previously described interruptible load. In addition, the constraint set includes an additional noninterruptibility constraint,

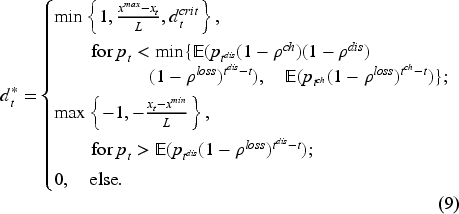

Let

The optimal dispatch policy for noninterruptible loads is,

This is a special case of Proposition 4.2. The proof can be found in Supplement C.4. In contrast to interruptible loads, noninterruptible loads cannot be interrupted and a dispatch in

In addition to the time-interdependent loads, we consider elastic loads. Elastic loads respond only to the price signal of period

The dispatch policy of an elastic load is,

The price sensitivity parameter

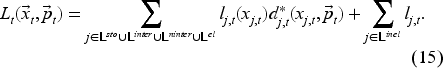

The aggregate load behavior

In addition, aggregate load includes inelastic loads

We can use Equation (15) to derive some simple comparative statics: Ceteris paribus, if the price in

Finally, as pricing is linear and only depends on the amount of energy consumed, the optimal dispatch of each load is independent of whether load operators hold a single load or combinations thereof. In both cases, aggregate load and the response to the DR program will be identical.

Given the responses of the load operators described in the previous section, the DR program operator has to optimize prices (recall Equation (1)). This corresponds to an optimal control problem under uncertainty with the following unknowns: First, the upcoming wholesale market price is nondeterministic and may deviate from the DR program operator’s forecast. Second, the DR program operator does not know the dispatch optimization problems of the individual load operators and cannot compute their load response to DR prices. After the DR price has been announced, the DR program operator only observes the actual aggregate load realization.

Moreover, the dispatch behavior of a single load operator can be described as a Markov decision process: the optimal decision to dispatch in

Definition of States, Actions, and Rewards

Deep RL aims to approximate an optimal policy function (that is, a function that maps a given state to the optimal action) by systematic exploration of an environment. For this purpose, at each time step, the RL agent observes the state of the system, picks an action, and uses the resulting reward to continuously update the estimated value of an action in a given state and improve the policy.
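The idea of updating the estimated value of an action in a given state can be illustrated with a tabular Q-learning step. This is a deliberately simplified stand-in: it uses discrete states and actions and a lookup table, whereas the DR pricing problem requires neural-network function approximation. The state and action labels, the learning rate, and the discount factor are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Hashable, Sequence, Tuple

QTable = Dict[Tuple[Hashable, Hashable], float]


def q_update(q: QTable, state: Hashable, action: Hashable, reward: float,
             next_state: Hashable, actions: Sequence[Hashable],
             alpha: float = 0.1, gamma: float = 0.9) -> float:
    """Move Q(state, action) toward the one-step temporal-difference target."""
    best_next = max(q[(next_state, a)] for a in actions)
    td_target = reward + gamma * best_next
    q[(state, action)] += alpha * (td_target - q[(state, action)])
    return q[(state, action)]


def greedy_action(q: QTable, state: Hashable, actions: Sequence[Hashable]) -> Hashable:
    """The policy implied by the table: pick the highest-valued action."""
    return max(actions, key=lambda a: q[(state, a)])


q: QTable = defaultdict(float)           # all values start at zero
actions = ["base_price", "dr_price"]     # hypothetical discrete actions

# One observed transition: calling a DR event in a high-price state paid off.
q_update(q, "high_wholesale", "dr_price", reward=1.0,
         next_state="low_wholesale", actions=actions)
best = greedy_action(q, "high_wholesale", actions)
```

After this single update the table already prefers the DR price in the high-wholesale state; repeated updates over many transitions refine the estimates.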

For our setup, we choose the following specifications. We characterize the state

Finally, the DR program operator maximizes social welfare over time, as represented by the objective function Equation (1). In Deep RL, the original objective function is substituted by the value function

The discount factor is described by

The field of Deep RL has developed a variety of algorithms for approximating optimal policies. In this paper, we use the Deep Deterministic Policy Gradient (DDPG) algorithm as proposed by Lillicrap et al. (2015). DDPG is a policy gradient method and uses two neural networks to represent the policy (actor) and the value function (critic). The actor network corresponds to a function mapping states to actions. At each step, the actor network is used to predict the presumably optimal action, the DR price. This action, or a modified version of it during exploration, is then implemented, and the system subsequently produces a reward and transitions into the next state. At the end of the time step, the tuple of state, action, reward, and next state is used to re-train the value function, and the gradients of the value function are used to update the policy.
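How the tuple of state, action, reward, and next state feeds into critic training can be sketched as follows. In the DDPG algorithm of Lillicrap et al. (2015), transitions are stored in a replay buffer and the critic is regressed onto targets computed with slowly updated target networks. The sketch below replaces the neural networks with simple hypothetical functions and uses scalar states, so it shows only the data flow, not the implementation described in Supplement D.

```python
import random
from collections import deque
from typing import Callable, Deque, List, Tuple

# (state, action, reward, next_state); scalars keep the sketch short.
Transition = Tuple[float, float, float, float]


class ReplayBuffer:
    """Fixed-size store of past transitions; the oldest entries are dropped first."""

    def __init__(self, capacity: int) -> None:
        self._items: Deque[Transition] = deque(maxlen=capacity)

    def add(self, transition: Transition) -> None:
        self._items.append(transition)

    def sample(self, batch_size: int, rng: random.Random) -> List[Transition]:
        """Draw a random minibatch without replacement."""
        k = min(batch_size, len(self._items))
        return rng.sample(list(self._items), k)

    def __len__(self) -> int:
        return len(self._items)


def critic_targets(batch: List[Transition],
                   target_actor: Callable[[float], float],
                   target_critic: Callable[[float, float], float],
                   gamma: float) -> List[float]:
    """Targets y = r + gamma * Q'(s', mu'(s')) used to re-train the critic."""
    return [r + gamma * target_critic(s_next, target_actor(s_next))
            for (_, _, r, s_next) in batch]


# Hypothetical stand-ins for the target actor and target critic networks.
target_actor = lambda s: 0.5 * s
target_critic = lambda s, a: s + a

buffer = ReplayBuffer(capacity=2)
buffer.add((0.0, 0.1, 1.0, 1.0))
buffer.add((1.0, 0.2, 2.0, 4.0))
buffer.add((4.0, 0.3, 0.5, 2.0))   # evicts the first transition

targets = critic_targets([(1.0, 0.2, 2.0, 4.0)], target_actor, target_critic, gamma=0.9)
minibatch = buffer.sample(2, random.Random(0))
```

Sampling minibatches from the buffer, rather than training on consecutive time steps, reduces the correlation between training samples.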

We base our implementation of the DDPG on the parameterization suggested by Lillicrap et al. (2015) and adjust selected hyperparameters. Further details can be found in Supplement D.
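To make the ingredients of DDPG more tangible, the following sketch shows three building blocks that the algorithm of Lillicrap et al. (2015) relies on: a replay buffer of (state, action, reward, next state) tuples, temporally correlated exploration noise (an Ornstein-Uhlenbeck process), and soft updates of target-network weights. It is a simplified, library-free illustration. The class and function names are hypothetical, weights are plain lists of floats rather than neural networks, and the default values are placeholders rather than the hyperparameters of this paper (see Supplement D).

```python
import random
from collections import deque


class ReplayBuffer:
    """Stores transitions and samples random mini-batches for re-training."""

    def __init__(self, capacity=10000, seed=0):
        self.data = deque(maxlen=capacity)  # oldest transitions are dropped
        self.rng = random.Random(seed)

    def add(self, state, action, reward, next_state):
        self.data.append((state, action, reward, next_state))

    def sample(self, batch_size):
        k = min(batch_size, len(self.data))
        return self.rng.sample(list(self.data), k)


class OUNoise:
    """Ornstein-Uhlenbeck noise with mean zero, added to actions in exploration."""

    def __init__(self, theta=0.15, sigma=0.2, seed=0):
        self.theta, self.sigma = theta, sigma
        self.rng = random.Random(seed)
        self.x = 0.0

    def sample(self):
        # Mean-reverting step towards zero plus a Gaussian shock.
        self.x += -self.theta * self.x + self.sigma * self.rng.gauss(0.0, 1.0)
        return self.x


def td_target(reward, next_q, gamma=0.99):
    """Critic regression target: reward plus discounted next-state value."""
    return reward + gamma * next_q


def soft_update(target_weights, online_weights, tau=0.001):
    """Move target weights a small step (tau) towards the online weights."""
    return [(1.0 - tau) * t + tau * w
            for t, w in zip(target_weights, online_weights)]
```

During exploration, the implemented action would be the actor's output plus `OUNoise().sample()`, which corresponds to the "modified action" mentioned above.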

Numerical Experiments

In this section, we evaluate the performance of the DR program that we parametrized using Deep RL (Deep DR program).

Setup

Wholesale Market Prices

First, we represent the wholesale market using an auto-regressive time series model with a seasonal (hourly) component and a noise term of variance

Forecasts of Wholesale Market Prices

For notification periods

Instead of endogenously modeling forecasts, whose accuracy depends on local characteristics of the electricity system (such as the stability and predictability of weather conditions), modeling synthetic forecasting errors allows us to generate insights beyond specific local conditions. For instance, we later model the deterioration of the forecasting quality in the notification interval by increasing the variance

Retail Prices

We define the base price

Frequency of DR Events

The frequency of DR events depends on the choice of the threshold

Load Data

Electricity systems typically exhibit a pronounced seasonality. In our study, we use average hourly Californian demand data as the basis for the parametrization of all four load types (i.e., aggregate demand data of the entire PG&E service territory, for different months of the year 2020 (CAISO, 2022)).

The procedure for creating the load portfolio is illustrated in Figure 3 and described as follows. For elastic loads, we use the PG&E 24-hours load profile directly and randomly assign price sensitivities

Illustration of the load portfolio for August 2020.

Further details on load composition, the calibration of load types, as well as individual load parametrizations are presented in Supplements E.4 and E.6. Furthermore, we present a study of the behavior of the different load types when exposed to the DR program in Supplement E.5.

For each parameter configuration, we perform 20 runs. For each run, we stochastically generate a new training and test time series and randomly initialize the Deep RL algorithm. We first train our DR pricing policy on a training set of 2,160 training time steps (corresponding to 2,160 hours or approximately three months in the real world), which was generated following the procedure described in Section 6.1.1. During training, the pricing policy is optimized using the DDPG algorithm described in Section 5.

We then apply the resulting pricing policy to a test time series of 2,160 test time steps (corresponding to 2,160 hours or approximately three months in the real world), which was also generated using the procedure described in Section 6.1.1. We evaluate the improvement in social welfare as compared to a fixed retail price

Benchmarks

We further compare the results of the Deep DR program to the following three benchmarks: A parametric benchmark as well as an ex ante and an ex post ToU tariff. For the parametric benchmark, we estimate a time-of-the-day (hour)-specific demand function where demand in

Results

Cost-Effectiveness of the Deep DR Program

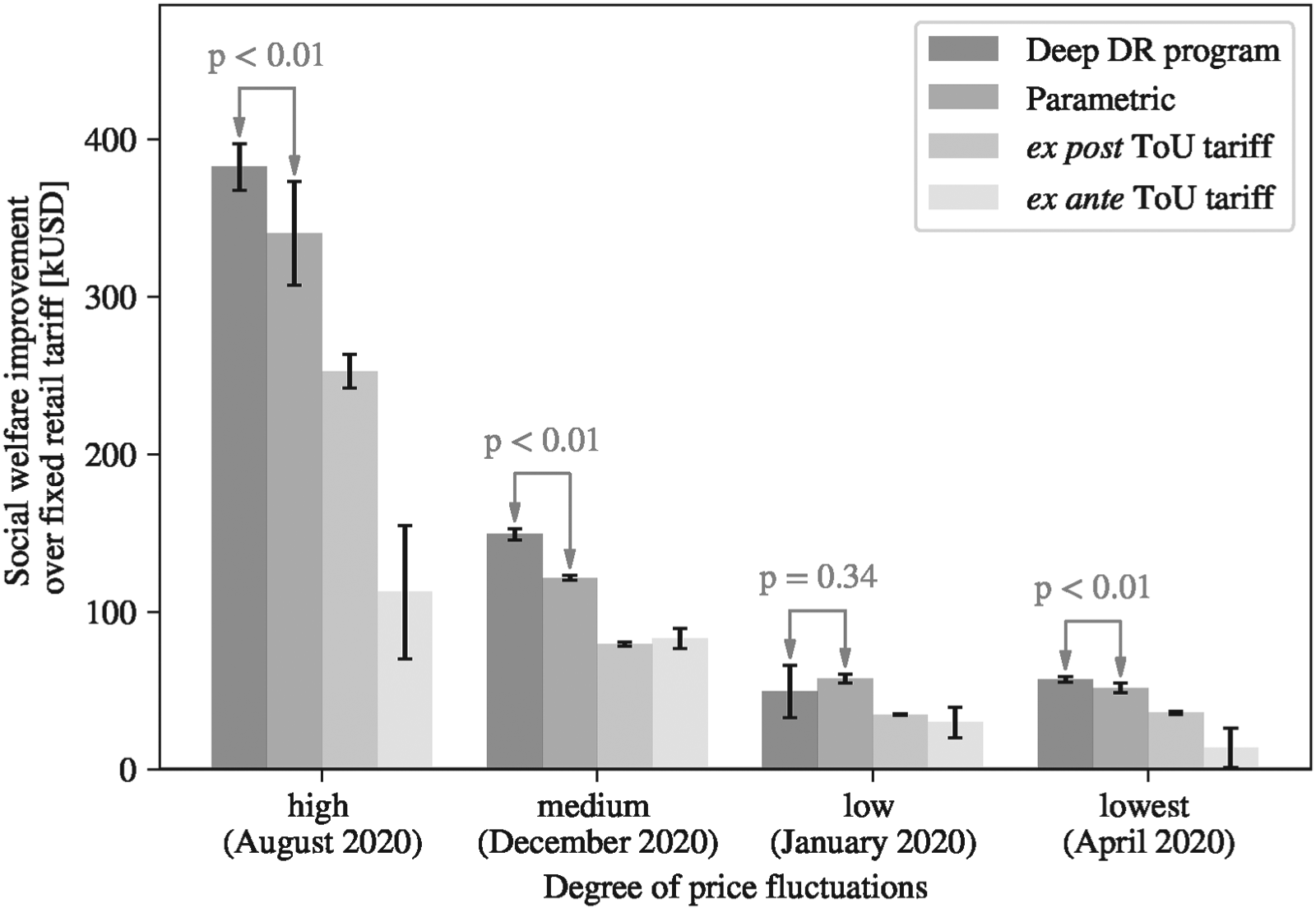

Using the previously described parametrization as a starting point, Figure 4 illustrates the results for each pricing approach for different months of the year, as compared to a fixed retail rate. We specifically selected these months to represent different degrees of wholesale market price fluctuations (defined as the difference between the 5th and 95th percentiles of the monthly price distribution), as they affect the potential for social welfare improvement from time-dependent load adjustments.

Comparison of pricing approaches for selected months of the year. Note. Performance (averages over 20 runs) of the Deep demand response (DR) program for different levels of wholesale market price fluctuations. Bars represent the 95% confidence interval.
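The fluctuation measure used to select the months (the difference between the 5th and 95th percentiles of a price distribution) is easy to compute. The sketch below is illustrative only: `synthetic_prices` generates a hypothetical hourly series with a sinusoidal daily pattern and first-order autoregressive noise, and its parameters `phi` and `sigma` are assumptions, not the calibrated wholesale price model of this paper.

```python
import math
import random
import statistics


def synthetic_prices(n_hours, phi=0.8, sigma=5.0, seed=0):
    """Hypothetical hourly prices: daily sinusoid plus AR(1) deviations."""
    rng = random.Random(seed)
    deviation = 0.0
    prices = []
    for t in range(n_hours):
        seasonal = 40.0 + 10.0 * math.sin(2.0 * math.pi * (t % 24) / 24.0)
        deviation = phi * deviation + rng.gauss(0.0, sigma)
        prices.append(seasonal + deviation)
    return prices


def price_fluctuation(prices):
    """Difference between the 95th and the 5th percentile of the prices."""
    # quantiles(..., n=100) returns the 99 cut points between percentiles,
    # so index 4 is the 5th percentile and index 94 is the 95th percentile.
    cuts = statistics.quantiles(prices, n=100, method="inclusive")
    return cuts[94] - cuts[4]


calm = price_fluctuation(synthetic_prices(720, sigma=1.0))
volatile = price_fluctuation(synthetic_prices(720, sigma=20.0))
print(f"calm month: {calm:.1f}, volatile month: {volatile:.1f}")
```

A larger noise standard deviation widens the percentile spread, which is the sense in which months with stronger price fluctuations are distinguished in the comparison.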

We find that the Deep DR program outperforms all benchmarks for the majority of the months evaluated. In the month with the highest price fluctuations, August, the Deep DR approach achieves its largest advantage, with social welfare improvements of 382 kUSD on average, as compared to 340 kUSD (parametric), 253 kUSD (ex post ToU tariff), and 110 kUSD (ex ante ToU tariff). The performance of the Deep DR program corresponds to a 12.5% improvement over the parametric benchmark. For December and April, our approach achieves savings of 149 kUSD (versus 121 kUSD, 79 kUSD, and 83 kUSD under the benchmarks) and 57 kUSD (versus 51 kUSD, 36 kUSD, and 13 kUSD under the benchmarks). Relative savings compared to the parametric benchmark are 22.8% and 10.8%, respectively. For the month of January, the social welfare improvement by the Deep DR program is 49 kUSD, but it does not differ significantly from the parametric benchmark. In an additional analysis, we further systematically vary price volatility around the seasonal components and find that the social welfare improvement increases in price volatility (see Supplement F.5.1).

In summary, while the magnitude of the advantage varies, the results clearly show the superior performance of the Deep DR program. Our estimation of social welfare changes during operations aligns well with actual welfare changes (see Supplement F.1). Our results are further robust to different specifications of the setup, such as the load portfolio, forecasting quality, noise in inelastic load, the choice of the price threshold, and load operators’ expectations (see Supplements F.3 to F.8), and other system characteristics (see following sections).

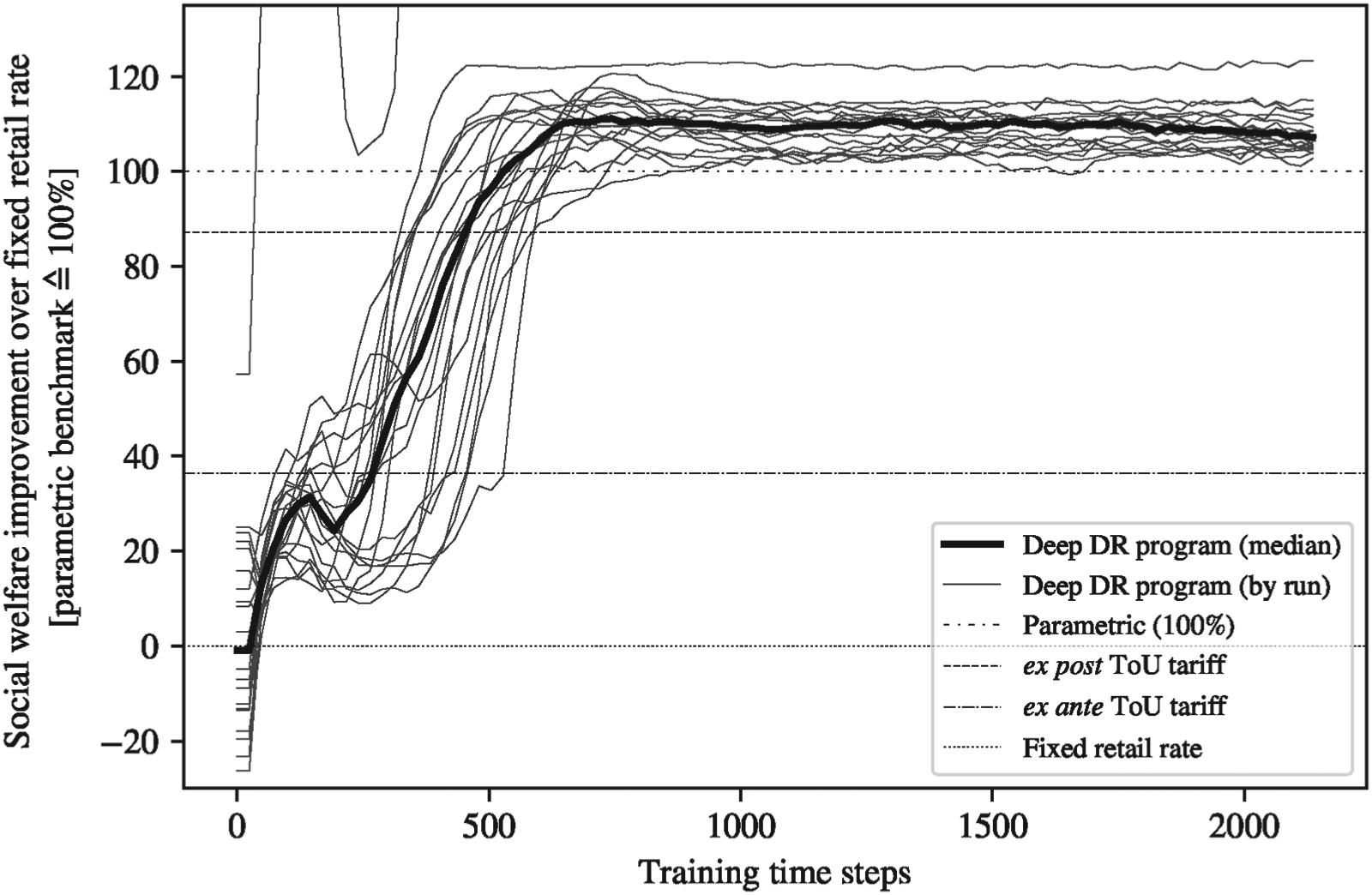

We furthermore analyze the learning behavior of the Deep RL algorithm, since convergence and convergence speed are important for real-world applicability. Figure 5 illustrates the learning behavior of the algorithm for 20 runs, using the default parameters of the case study for the month of August with a storage share of 50%.

Convergence speed of the Deep RL algorithm. Note. Training and test data are stochastically generated and differ for all 20 runs. Improvements over the fixed retail rate are scaled to the performance of the best benchmark, that is parametric benchmark

We find that, on average, we can achieve persistent savings across different runs of the stochastic price time series, as represented by the bold line. On average, the two ToU benchmarks are beaten after 120 training time steps or five days in the real world (ex ante ToU tariff) and 18 days (ex post ToU tariff), respectively. The parametric benchmark is beaten after 19 days, on average, and after 23 days for the median run. We report both values because one parametric run performs particularly poorly. Learning saturates after about 575 training time steps (24 days), that is, the program reaches 95% of its final performance. Analyzing individual runs (thin lines in the figure), we find that the best exploration run provides results better than the parametric benchmark after a learning period corresponding to 48 hours (2 days), the second best after 384 hours (16 days), and the worst one after 864 hours (36 days). This additionally demonstrates the robustness of our approach for individual runs. In Supplement F.4, we further provide details on the computational resources used as well as the necessary training time.
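The quantities reported above (time until a benchmark is beaten, and the saturation point at 95% of final performance) can be read off a learning curve as sketched below. The helper names are hypothetical, and the sketch assumes one training time step per hour and a positive final performance. The paper's exact evaluation procedure may differ, for example in how curves are smoothed or averaged over runs.

```python
def steps_to_days(steps, hours_per_step=1.0):
    """Convert training time steps to real-world days (one step = one hour)."""
    return steps * hours_per_step / 24.0


def first_step_at_or_above(curve, level):
    """First time step at which the learning curve reaches a given level."""
    for step, value in enumerate(curve):
        if value >= level:
            return step
    return None  # the level is never reached


def saturation_step(curve, fraction=0.95):
    """First step reaching a fraction of the final performance (assumed > 0)."""
    return first_step_at_or_above(curve, fraction * curve[-1])


# Step counts taken from the text above.
print(steps_to_days(120))  # ex ante ToU tariff beaten after 5.0 days
print(steps_to_days(864))  # slowest individual run: 36.0 days
```

With a learning curve scaled to the best benchmark, `first_step_at_or_above(curve, 1.0)` gives the step at which that benchmark is first beaten.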

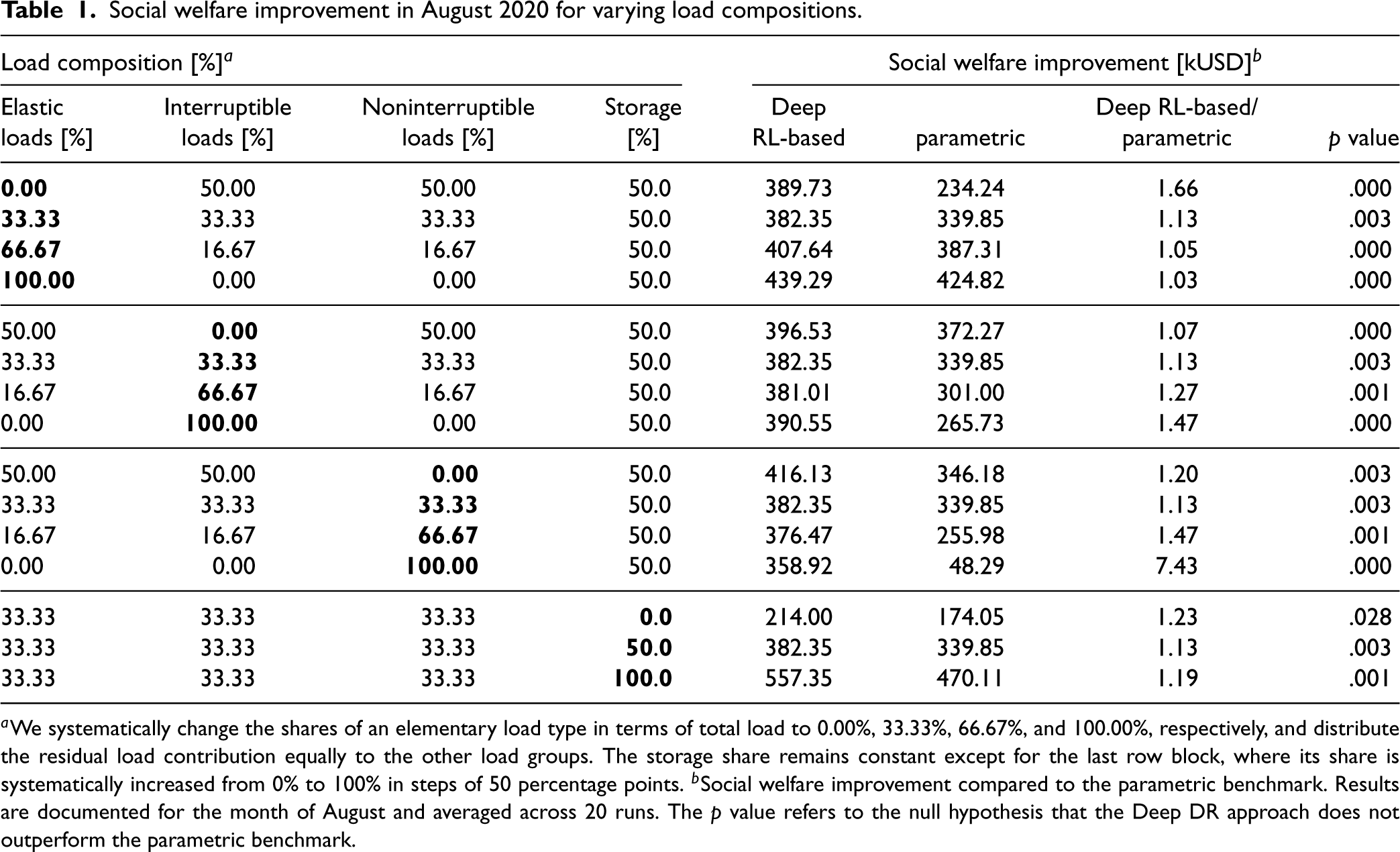

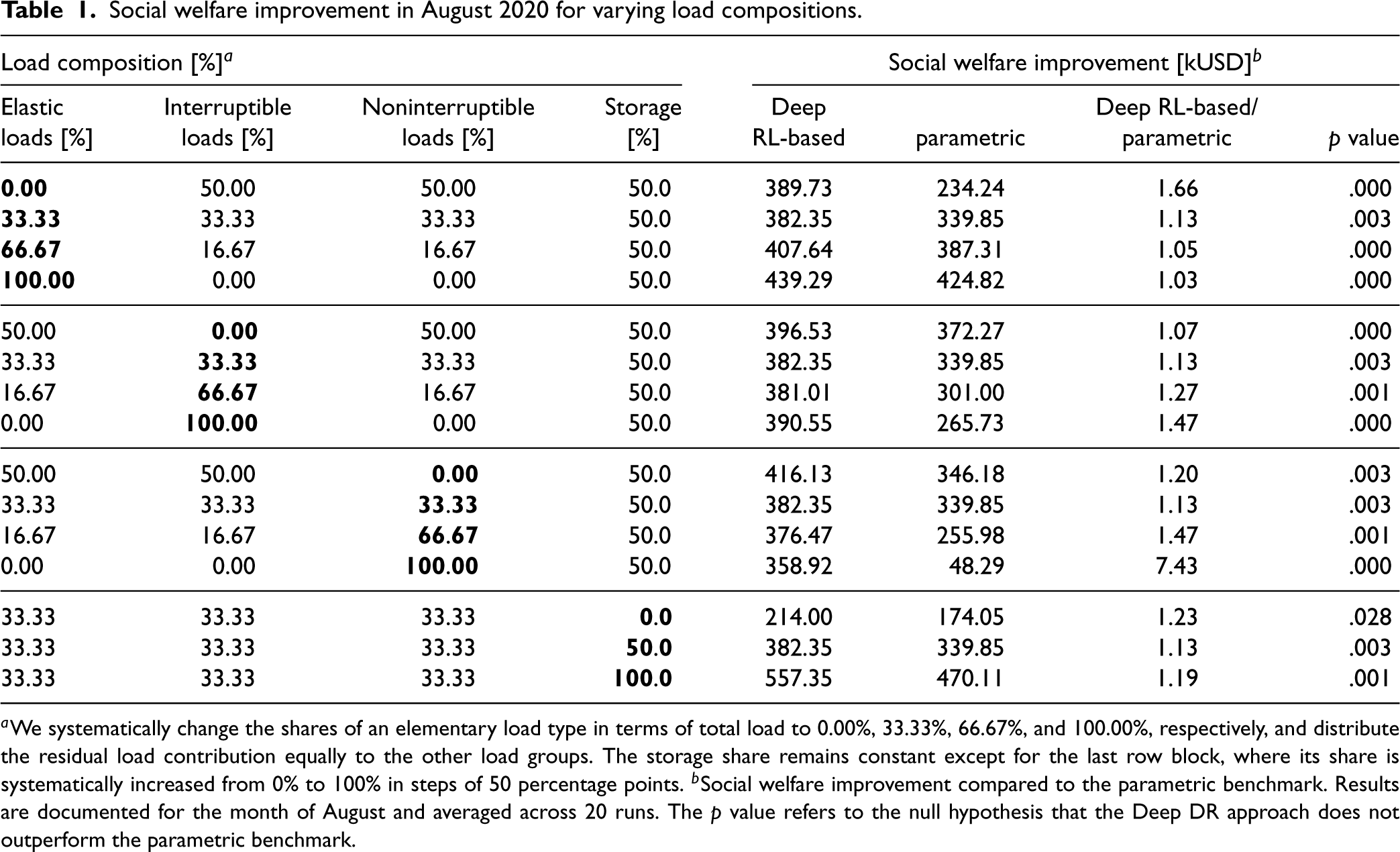

We furthermore evaluate the Deep DR program for different load compositions, as documented in Table 1. The first four columns list different compositions of load types. Each compartment is dedicated to one load type (indicated in bold) which we systematically vary. Note that the shares of elastic, interruptible, and noninterruptible loads add up to 100% of demand under a fixed retail rate, while storage is measured as a share of peak demand. The second half of the columns documents the social welfare improvement in [kUSD], as achieved by the Deep DR program, and compares it to the performance of the parametric benchmark.

Social welfare improvement in August 2020 for varying load compositions.

We find that the Deep DR program is able to realize consistent improvements across load portfolios, as compared to a fixed retail rate. For instance, for a 0% share of elastic load types in the portfolio, our program achieves an improvement of 389.73 kUSD, as compared to a situation with no DR. If the load portfolio is entirely composed of elastic loads (100%) as well as the default share of storage, an improvement of 439.29 kUSD can be achieved. Comparing our results for different load types, we find that improvements are higher for load portfolios with higher shares of elastic loads and higher participation of storage. This can be explained by a larger flexibility potential of elastic loads and additional storage. For interruptible loads, the performance stays relatively constant. Finally, the deterioration for higher shares of noninterruptible loads could be caused by the increasing relevance of discontinuities in the response of noninterruptible loads. Such discontinuities could negatively impact the learning process.

We further compare the Deep DR program and the parametric benchmark. We find that the advantage of the Deep DR program over the parametric benchmark decreases for higher shares of elastic loads and increases for load portfolios where the other load types dominate. For instance, the advantage over the parametric benchmark is 66% if the load portfolio contains no elastic loads, but shrinks to 3% if it consists only of elastic loads and storage. The advantage further generally increases for higher shares of storage. If no storage is present, the difference in performance of the Deep DR program and the parametric benchmark is insignificant, but the advantage of the Deep DR program increases to 19% when storage reaches 100% of peak load. We explain this finding as follows: the demand model used for the parametric benchmark works best for elastic loads but cannot represent the discontinuities and nonlinearities exhibited by the other load types.
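The explanation above can be illustrated with a small numerical sketch. It assumes, purely for illustration, that the parametric demand model is linear in price; the functional form, the two synthetic load responses, and all numbers below are hypothetical and not taken from this paper. A linear fit reproduces a smooth elastic response exactly but leaves a large error for a load that switches off completely above a price threshold.

```python
import math


def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def rmse(x, y, intercept, slope):
    """Root mean squared error of the fitted line."""
    errors = [(intercept + slope * xi - yi) ** 2 for xi, yi in zip(x, y)]
    return math.sqrt(sum(errors) / len(errors))


prices = [10.0 + i for i in range(41)]  # hypothetical prices from 10 to 50

# Elastic load: demand falls smoothly with price.
elastic = [100.0 - 1.5 * p for p in prices]
# Threshold load: runs at full power below a price of 30, otherwise not at all.
threshold = [60.0 if p < 30.0 else 0.0 for p in prices]

rmse_elastic = rmse(prices, elastic, *fit_line(prices, elastic))
rmse_threshold = rmse(prices, threshold, *fit_line(prices, threshold))
print(f"elastic: {rmse_elastic:.2f}, threshold: {rmse_threshold:.2f}")
```

The fit error for the threshold load stays large regardless of how much data is collected, because the misspecification lies in the functional form rather than in the estimation.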

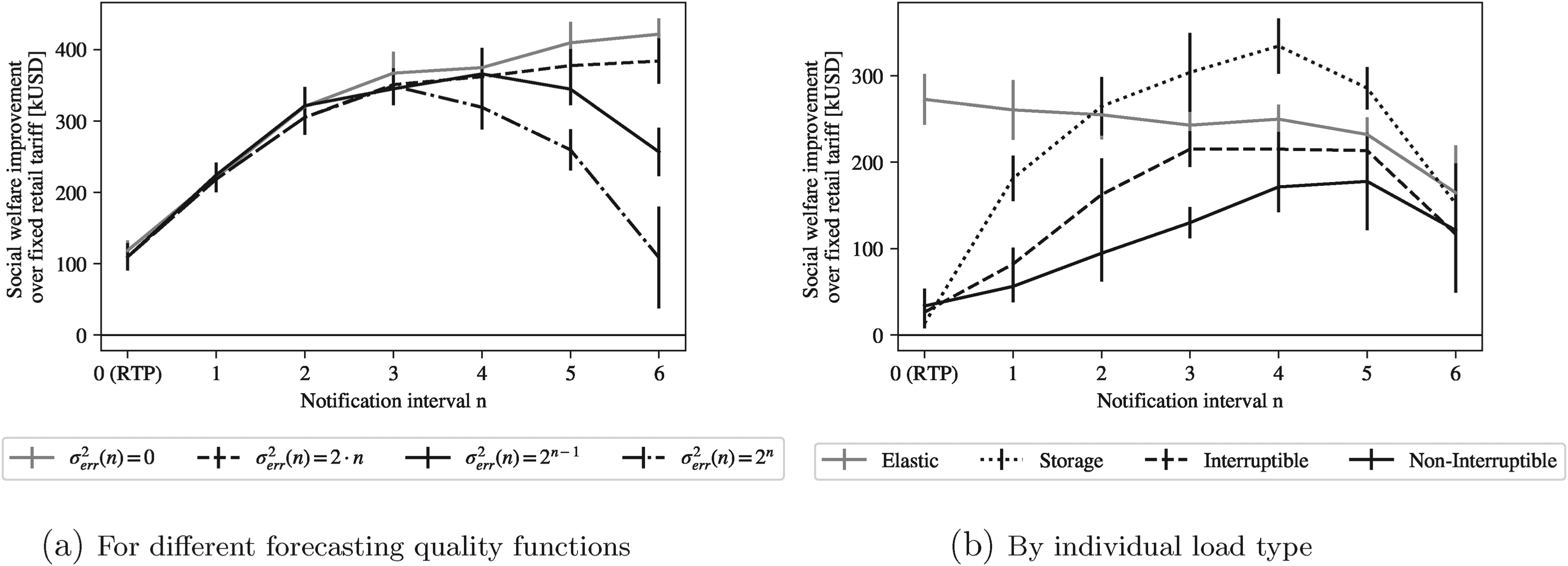

We further investigate the impact of the notification interval on social welfare. A notification interval of zero corresponds to a real-time price (RTP). For a notification interval larger than zero, load operators are notified in advance and can plan their dispatch in a cost-minimal way.

In practice, longer notification intervals are associated with deteriorating forecasts with regard to future wholesale market prices. We therefore investigate the optimal choice of the notification interval when increasing notification intervals are associated with deteriorating forecasting quality, modeled by an increasing forecasting error variance

Figure 6(a) illustrates social welfare changes for different relationships of notification interval

Welfare changes by notification interval. Note. Results for the month of August. Bars represent the 95% confidence interval over 20 runs. (a) Forecasting error variance

The relation between social welfare and the notification interval is more heterogeneous when differentiated along load types. Figure 6(b) shows that, if the load composition is entirely composed of elastic loads, short notification intervals lead to the highest social welfare improvements. The reason is that elastic loads do not face time-interdependent constraints. For the other load types, in contrast, a notification in real-time produces sub-optimal welfare changes. For storage, optimal welfare improvements can be reached for a notification interval of four hours. This corresponds to the time needed to fully precharge and prepare for increased DR prices. For any further increases of the notification interval, the forecasting quality deterioration dominates any possible further improvements. Finally, interruptible and noninterruptible loads are the least flexible load types. Compared to elastic loads and storage, they generally show low levels of social welfare contributions. For interruptible loads, a notification interval of three to five hours is optimal under the given specifications of the system; for noninterruptible loads, a notification interval of four to five hours produces the largest improvement.

In summary, the analyses reveal that ex ante information provided by the DR program operator through early notification of load operators is important. The optimal notification interval depends on the forecasting quality and load composition, both of which can vary considerably between real-world local electricity systems. Therefore, the notification interval should be tailored to the characteristics of the system of interest. We additionally include our results in comparison to the parametric benchmark in Supplement F.9. Our approach generally outperforms the parametric benchmark, except for very long notification intervals and elastic loads, as already reported in Table 1.
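One side of this trade-off, the deterioration of forecasts with longer notification intervals, can be sketched with synthetic forecasting errors. The example assumes that the error variance grows linearly with the notification interval; this relationship and the parameters `base_var` and `var_per_hour` are hypothetical placeholders and do not reproduce the specifications examined in this paper.

```python
import math
import random


def synthetic_forecast(actual_price, interval_hours, rng,
                       base_var=1.0, var_per_hour=2.0):
    """Actual price plus Gaussian error whose variance grows with the interval."""
    variance = base_var + var_per_hour * interval_hours  # assumed linear growth
    return actual_price + rng.gauss(0.0, math.sqrt(variance))


def forecast_rmse(interval_hours, n=20000, seed=0):
    """Empirical root mean squared forecasting error for a given interval."""
    rng = random.Random(seed)
    actual = 50.0  # hypothetical wholesale price
    squared = [(synthetic_forecast(actual, interval_hours, rng) - actual) ** 2
               for _ in range(n)]
    return math.sqrt(sum(squared) / n)


for hours in (0, 4, 12):
    print(hours, round(forecast_rmse(hours), 2))
```

Under these assumptions, the error standard deviation rises from about 1 at real-time notification to about 3 at four hours and 5 at twelve hours, so prices announced far in advance are based on increasingly noisy cost information.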

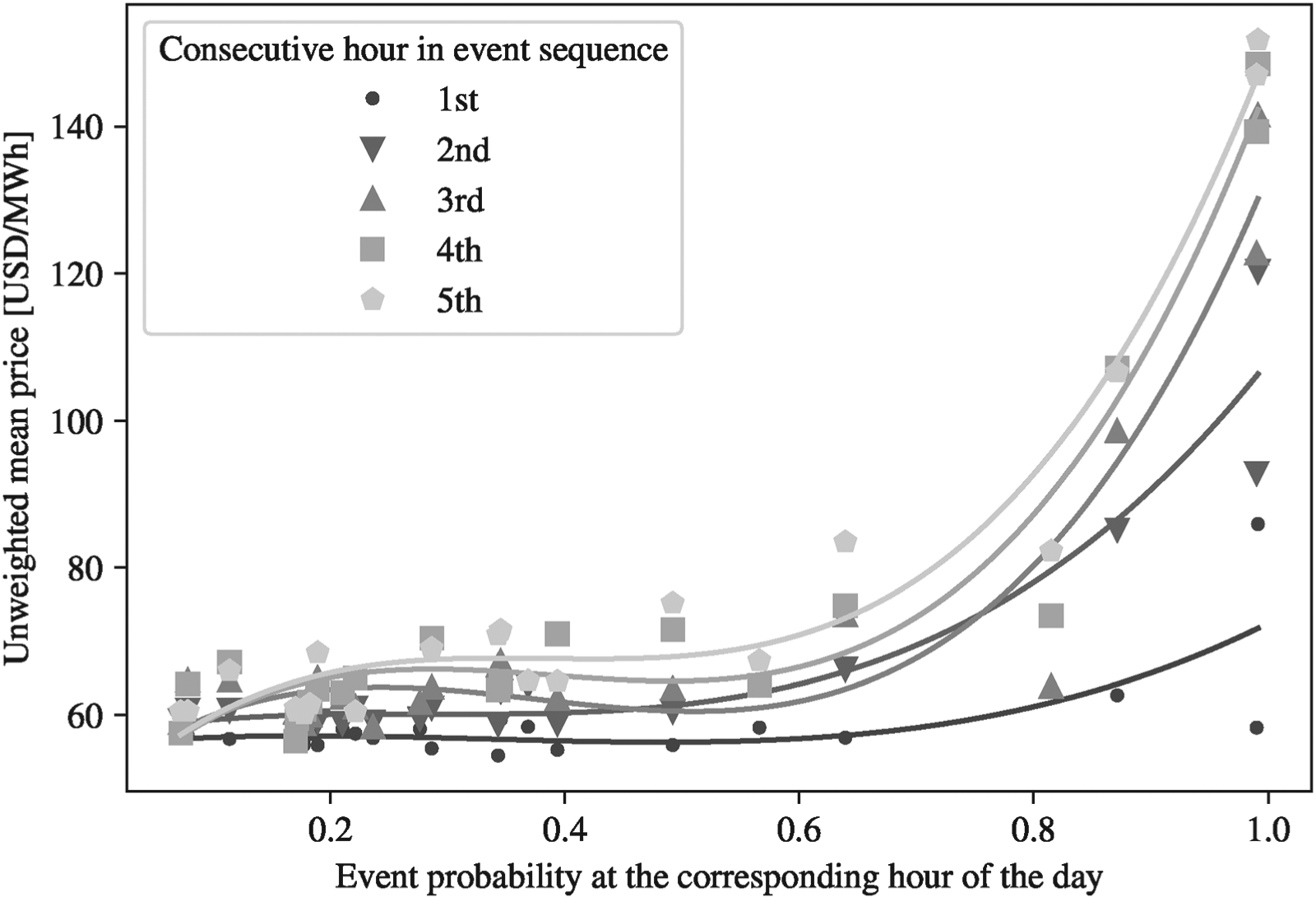

We finally leverage our numerical study to provide insights on the pricing policy of the DR program operator. Such insights can also be considered by regulators when investigating whether a DR program is effective and presumably social welfare maximizing.

First, we aim to understand how the state of the system influences price setting. We hypothesize that the longer a DR event lasts, the more of the loads’ flexibility is exploited, so that higher price incentives are needed to achieve a response of the loads. Figure 7 presents the average price setting (vertical axis) as a function of each hour’s position in a consecutive DR event sequence, that is, a set of DR hours that occur back-to-back without interruption. Specifically, DR prices charged in the first hour of a consecutive event are labeled as “1st,” the second hour as “2nd,” and so on. The figure clearly demonstrates the hypothesized pattern: as the DR event window spans more consecutive hours, prices tend to rise. For instance, comparing the fitted lines of the first hour of the event sequence with the fifth hour shows that prices are generally higher the longer the event persists.

DR prices depending on the hour into an event sequence. Note. Results for the month of August. Event probability in a given hour is computed as the share of events in a given hour of the day. The fitted functions correspond to polynomials of degree four.
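The two quantities on which this analysis is based, an hour's position within a consecutive event sequence and the event probability per hour of the day, can be derived from a series of hourly event flags as sketched below. The function names are hypothetical, and the sketch assumes that the flag series starts at hour 0 of a day.

```python
def position_in_sequence(is_event):
    """1-based position of each hour within its consecutive DR event run.

    Hours without a DR event get position 0.
    """
    positions = []
    run_length = 0
    for flag in is_event:
        run_length = run_length + 1 if flag else 0
        positions.append(run_length)
    return positions


def event_probability_by_hour(is_event):
    """Share of days on which each hour of the day (0-23) is a DR event."""
    counts = [0] * 24
    totals = [0] * 24
    for t, flag in enumerate(is_event):
        hour = t % 24  # assumes the series starts at hour 0
        totals[hour] += 1
        counts[hour] += 1 if flag else 0
    return [c / n if n else 0.0 for c, n in zip(counts, totals)]


# Two days of hourly flags: an event from 17:00 to 19:00 on day one,
# and a single event hour at 18:00 on day two.
flags = [False] * 48
for t in (17, 18, 19, 24 + 18):
    flags[t] = True

print(position_in_sequence(flags)[17:20])    # [1, 2, 3]
print(event_probability_by_hour(flags)[18])  # 1.0
```

Averaging the DR prices over all hours that share the same position and a similar event probability would then yield the points underlying the fitted curves.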

In addition, we investigate how this behavior varies with the event probability (horizontal axis), defined here as the percentage of hours within a specific hour of the day that experience a DR event. We hypothesize that hours with higher event probabilities are more likely to coincide with higher wholesale market prices, and therefore also see higher DR prices. Indeed, comparing price levels between low event probability hours (left end of the

We pursue several additional analyses on how the price setting depends on the notification interval and the load portfolio in Supplement F.10. First, we find that for very short notification intervals, DR prices amplify wholesale market price fluctuations to overcome load operators’ intertemporal constraints and elicit a sufficiently strong response. For instance, when notification is in real time, DR prices increase wholesale market price changes by a factor of about 1.15 (see Figure F11). Second, we demonstrate that mean DR prices decrease as notification intervals lengthen, primarily because of increased load flexibility and the potential to shift consumption to lower-priced hours (see Figure F12). Third, we observe that the composition of the load portfolio impacts price fluctuations (see Table F4). For example, higher shares of elastic loads and storage lead to reduced DR price fluctuations.

Finally, we discuss how these insights inform DR price setting. First, DR prices should positively correlate with wholesale market prices. If the DR price does not change or converges to the maximum of the set of possible DR prices (as defined in Equation (2)), the DR program operator might deviate from social welfare maximization for the sake of maximizing private profits. Second, DR prices tend to rise with longer event durations, reflecting the need to realign intertemporal dispatch with the most valuable periods for load reductions. Third, longer notification intervals not only boost social welfare but also lower prices for customers and increase consumer surplus—provided forecasting remains sufficiently accurate. Policy makers can thus support welfare redistribution to customers by supporting longer notification intervals. Lastly, optimal DR prices should adapt over time as the load portfolio evolves. For instance, as storage-type loads (e.g., batteries, home storage) proliferate, both DR prices and their co-movement with wholesale market prices should decrease.

In the following, we discuss our contributions, limitations and potential challenges in real-world implementation, as well as future research directions to address these challenges.

Theoretical Contributions

First, we contribute to research by demonstrating how DR programs can be designed for groups of consumers in local electricity systems, for which constraints are unknown and individual responses are not observable to the DR program operator. Despite this limited information, we find that the Deep RL-based DR program can be effectively applied under a variety of system conditions and customers. The performance of the Deep DR program is particularly advantageous over previous parametric approaches for load portfolios with discontinuities and for systems that are subject to high wholesale market price fluctuations, as caused by intermittent renewable energies (McKinsey, 2024). Under high fluctuations, the Deep DR program can identify prices that are better aligned with the actual costs of electricity and outperforms the benchmarks. Similarly, the Deep DR program is particularly valuable if the DR program operator has access to price forecasts of high quality and can, therefore, pass on cost-reflective price signals to customers early on, using long notification intervals.

Second, the length of the notification interval for future prices is another important, but often overlooked aspect of DR program design. So far, empirical evidence on the dependence of the load response on the notification interval mostly originates from surveys (e.g., Buber et al., 2013; Fridgen et al., 2018; Taylor and Schwarz, 2000). DR program design commonly assumes that DR events are either announced day-ahead (e.g., Valogianni et al., 2020; Webb et al., 2016), in real-time (Khezeli et al., 2017), or it does not explicitly model DR events (e.g., Agrawal and Yücel, 2022; Aïd et al., 2022). We find that effective notification intervals are the result of a trade-off between time-interdependent constraints of load operators and deteriorating forecasting accuracies. Specifically, if forecasting accuracy is limited, the DR program operator cannot correct already announced DR prices when system conditions become clearer. In this case, load operators react to prices that do not reflect actual wholesale market costs and social welfare decreases. For research and practice, it is therefore relevant to assess the DR potential as a function of information availability. Many studies of load flexibility potential consider complete information (e.g., Gerke et al., 2020; Gils, 2016) and might, therefore, overestimate the ability of loads to adjust to DR events.

Finally, to design the presented DR program, we solve a novel, complex pricing problem under unknown, time-interdependent, and discontinuous demand. Within the theoretical pricing literature, current contributions consider time-interdependencies and discontinuities separately, but not in combination. Within the literature on DR program design, the majority of approaches assume a parametric form of aggregate demand or focus on specific appliances. Our results suggest that such models might impose unnecessary restrictions. Except for extreme scenarios with strong discontinuities (that result from high shares of noninterruptible loads or storage), our Deep RL-based approach is effective at solving such challenging pricing problems. It is therefore worthwhile to explore it for similar settings in other domains as well.

Practical Contributions and Potential Challenges Towards Real-World Implementation

DR programs are already highly relevant for today’s operations of distribution systems. The design of these local DR programs is, however, based on general experience and insights from pilots rather than on a systematic methodological approach. In our numerical experiments, Deep RL has been shown to identify effective prices within short learning periods from aggregate demand only, with minimal requirements for communication hardware, positioning it as a promising candidate to overcome the shortage of metering infrastructure in practice.

For DR program operators, a direct requirement for an implementation in practice is to gather insights about the predictability of prices at their respective wholesale market, e.g., by evaluating external forecasting services (such as AleaSoft, 2024; DNV, 2024). The analyses of the effects of forecasting accuracies (see Supplement F.5.2) can provide them with guidance on whether or not the forecasting quality is sufficient for a successful practical implementation of the DR program.

However, some challenges remain to be solved for successful real-world implementation. First of all, guardrails need to be provided to avoid undesired outcomes. In rather rare cases, a DR policy may not converge during training or may produce inferior results. This can be noticed through unusual periods of constant prices. In this case, human experts may be included in the loop to decide on reinitializing training. Moreover, exploration and pricing policies could be informed by experts and their domain knowledge (cf. Mark et al., 2022). For instance, selected prices could be restricted to move within a certain band around the wholesale market price.
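The two guardrails mentioned above could, for example, take the following form. This is a sketch of one possible design rather than a tested safeguard: the function names, the absolute band width, and the threshold of 24 constant hours are hypothetical choices that would need to be set by domain experts.

```python
def clip_to_band(dr_price, wholesale_price, band_width=20.0):
    """Restrict the DR price to wholesale price +/- band_width (same units)."""
    lower = wholesale_price - band_width
    upper = wholesale_price + band_width
    return min(max(dr_price, lower), upper)


def longest_constant_run(prices, tol=1e-6):
    """Length of the longest stretch of (nearly) unchanged consecutive prices."""
    longest = current = 0
    previous = None
    for price in prices:
        if previous is not None and abs(price - previous) <= tol:
            current += 1
        else:
            current = 1
        longest = max(longest, current)
        previous = price
    return longest


def needs_expert_review(prices, max_constant_hours=24):
    """Flag a policy whose prices stay constant for an unusually long period."""
    return longest_constant_run(prices) >= max_constant_hours


print(clip_to_band(100.0, 40.0))         # 60.0, capped at the upper band limit
print(needs_expert_review([35.0] * 30))  # True, 30 identical prices in a row
```

An absolute band is used here instead of a percentage band so that the rule remains well defined when wholesale prices are zero or negative.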

Future Research Directions

Based on our results, we see several promising directions for future research. First, the DR program could be extended to more complex electricity markets. While many markets are still regulated and subject to monopolistic retailers, pricing algorithms could be adjusted to consider competitive DR markets. Similarly, customers may be clustered into different tariff groups. For instance, flexible consumers could be assigned to a Deep DR program, while less flexible customers could be subscribed to ToU rates, benefiting from their predictability. Another research direction aims at accelerating the learning process, which would further increase the practical applicability of Deep DR programs. Here, strategies such as curriculum or transfer learning approaches may be explored. For instance, the pricing policy could be pretrained on synthetic and historical data (cf. Klink et al., 2020). In that case, the DR program operator could use an approximate synthetic model of the system and later adjust the Deep RL agents to the characteristics of the real-world system.

Finally, while the presented Deep DR program solves a relevant problem in the domain of DR systems, it is also an example of how general advances in machine learning-based algorithms can help to meet the complex operational requirements of future local electricity systems. We are excited to see how this promising area develops in the near future.

Supplemental Material

sj-pdf-1-pao-10.1177_10591478251363654 - Supplemental material for A Deep Demand Response Program for Local Electricity Systems

Supplemental material, sj-pdf-1-pao-10.1177_10591478251363654 for A Deep Demand Response Program for Local Electricity Systems by Marie-Louise Arlt, Gunther Gust and Dirk Neumann in Production and Operations Management

Footnotes

Acknowledgments

The authors thank the participants of the 2024 ISDAEB Workshop, 2022 MSOM Conference, 2022 OR Conference, 2019 WITS, 2019 WCBA, 2018 Meeting of the POMS, and 2018 USAEE Conference. Marie-Louise Arlt acknowledges the support of the German Academic Scholarship Foundation.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.

Notes

How to cite this article

Arlt M-L, Gust G and Neumann D (2025) A Deep Demand Response Program for Local Electricity Systems. Production and Operations Management XX(X): 1–18.

References
