Abstract

Background

Assistive technologies (ATs) that use functions based on artificial intelligence (AI) are set to become more relevant in the coming years. While research highlights the important role of professionals in social services in successfully implementing ATs, it remains unclear which factors are relevant in the context of AI-infused ATs.

Objective

With this scoping review, we aimed to discover which aspects influence the acceptance of AI-infused ATs by professionals working in social services.

Design

Online databases were used to identify papers published between 2019 and 2024, from which we selected six studies examining the viewpoints of professionals in education, (health)care, and therapy. A thematic analysis of the selected studies was conducted, and the principal findings are summarized.

Results

All six studies investigated socially assistive or care robotics. Three of the six studies used technology acceptance models in their research. The thematic analysis identified five categories as external predictors and four as contextual factors. Two studies reported results based on factors from other theories.

Conclusion

This review reveals the scarcity of research on the acceptance of AI-infused assistive technologies by professionals in social services, with results drawing exclusively from human-robot interaction research. We propose that more research is needed to determine what factors are relevant for the acceptance of AI-infused assistive technologies by professionals in social services.

Introduction

Even though artificial intelligence (AI) is not necessarily a new technological innovation, it has gained significant popularity since the release of ChatGPT in 2022. Since then, there has been widespread public discussion about potential risks and promising opportunities. However, AI is an ‘inconclusive term [that] represents a vast array of technological innovations and activities […]’. 1 It includes various technological innovations, leading to multiple definitions of AI. 2 In summary, AI refers to technologies designed to mimic or emulate human thought and behaviour. 3 Important subcategories include, for example, (natural) language processing, robotics, expert systems, machine learning, and deep learning.1,4

It is predicted that assistive technologies (AT) that use AI functions are set to become more relevant in the coming years. 5 Many authors claim that the integration of AI into assistive technologies has the potential to improve support options.6,7 Assistive technologies or products can be described as ‘external product (including devices, equipment, instruments, or software), especially produced or generally available, the primary purpose of which is to maintain or improve an individual’s functioning and independence, and thereby promote their well-being’. 8 Such assistive devices are essential tools in everyday life for people with disabilities.9,10

In a comprehensive exploration of AI-based assistive technology research for individuals with disabilities, Dange et al. identified the growing interest and active research as well as the increase in the application of AI techniques in this field. 11 Thus, different types of AI-infused assistive technologies are currently emerging, for example, AI-mediated communication tools (AI-MC), 12 artificial intelligence tools for education (AIEd), 13 AI-infused assistive devices for people with physical disabilities (e.g. AI-infused wheelchairs 14 ) or AI-infused assistive applications for deaf and hard of hearing, 15 and many more. Based on Spallazo et al., AI-infused ATs are ATs that integrate AI systems. 16

Results from literature reviews like Iannone and Giansanti et al. on integrating AI into assistive technologies for people with autism highlight the potential for specific user groups. 17 However, ‘Ethical concerns, biases, lack of transparency, insufficient explainability, and limited trustworthiness are major challenges when using generative AI in assistive technologies, particularly in systems that impact people directly’. 18

When implementing and using such technologies, different factors play an important role. Research highlights the importance of professionals in social services in successfully implementing assistive technologies.19–22 Milella & Bandini emphasize the continuing decline in the ‘caregiver-to-patient ratio’, which is anticipated to significantly increase the integration of intelligent assistance in general care. 23 At the same time, there seems to be hardly any in-depth knowledge of, or approaches to improving, acceptance among these stakeholders. 24

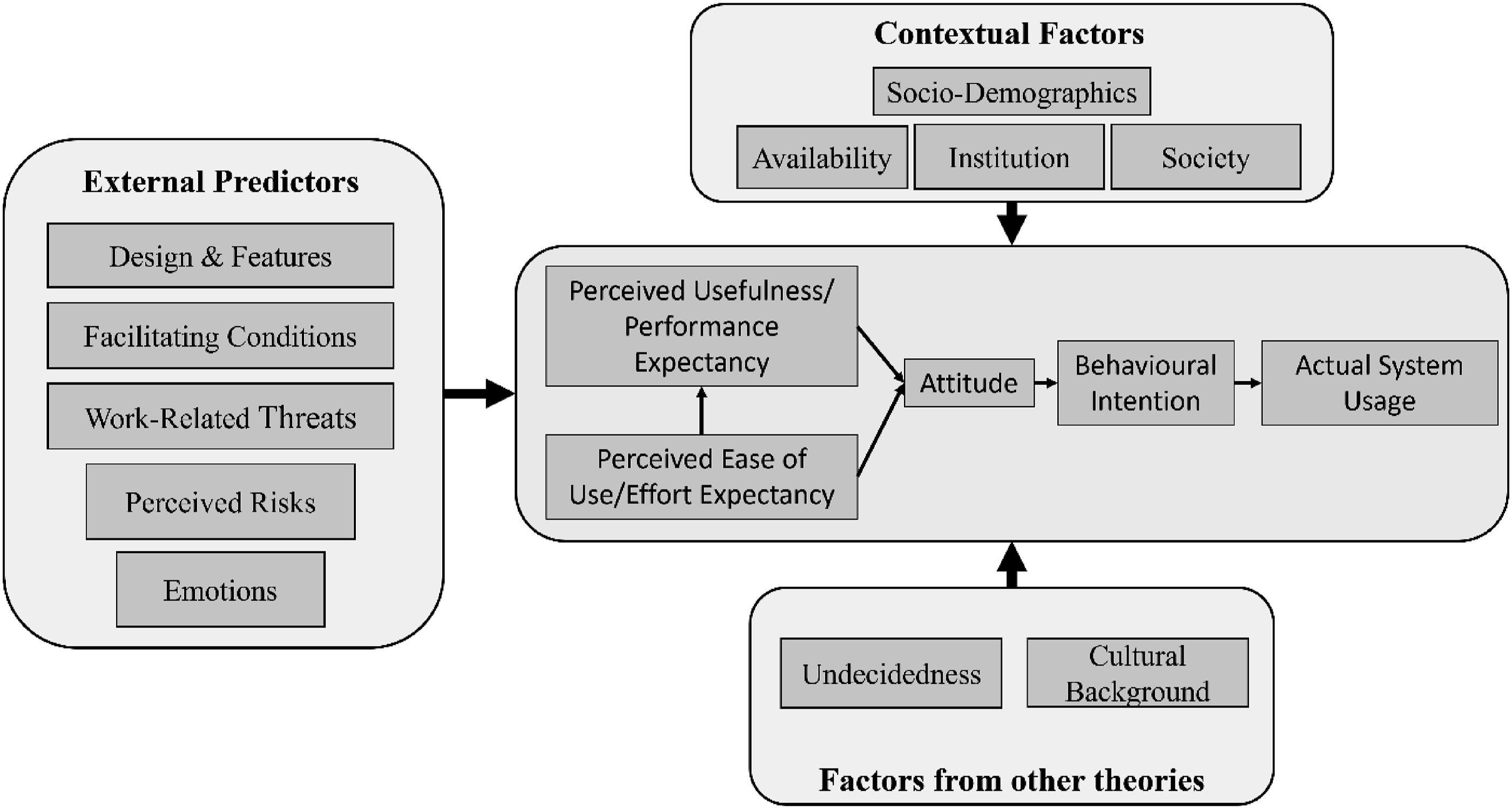

Acceptance, however, is an essential factor in the actual use of technologies. In the last decade, different technology acceptance models were developed and tested.25–31 A synthesis of the most important models and concepts can be found in Winkelkotte et al. 32 Besides the key factors that remain mostly consistent throughout all acceptance models, a deeper understanding of factors in specific contexts and regarding particular user groups and technologies is necessary. In general, modifications of technology acceptance models can be grouped into four major categories: external predictors, contextual factors, factors from other theories, and usage measures. 33 Currently, models from different research fields designed explicitly for AI technologies are emerging (e.g., Refs. 34 and 35). However, it remains unclear which factors are relevant in the context of AI-infused ATs.

Rationale

Considering the heterogeneity among vulnerable user groups, numerous factors must be taken into account when providing technology. 36 Consequently, without knowledge of relevant professionals’ perspectives on AI-infused assistive technologies, the impact, opportunities, and risks for vulnerable user groups and relevant contexts are difficult to assess. Yet, this knowledge will be crucial for successfully developing, implementing, and using AI-infused assistive technologies. Moreover, professionals in the context of social services are often underestimated as facilitators of technology. 37

Objective statement

Therefore, this paper aims to discover which aspects influence the acceptance of such technologies and to identify research gaps in assistive technologies and technology acceptance. Ultimately, this will help reflect on the use of AI-infused assistive technologies in the everyday lives of vulnerable user groups. This results in the following research question: What factors influence the acceptance of AI-infused assistive technologies by professionals working with vulnerable user groups in social services?

Eligible participants were those professionally working with or assisting vulnerable user groups in settings associated with social services, including teachers, caregivers, and healthcare professionals. As pointed out by Payne, social service is a ‘broad term (…) that usually includes education, healthcare, housing, the personal social services, and social security’. 38 That means social services can be used as an umbrella term for health and social care systems and services. Professions directly working with vulnerable user groups in these contexts include care workers, assistants, social pedagogues, therapists, and teachers, among others. A complete list and rationale are provided in Multimedia Appendix 1.

This scoping review aims to provide an overview of existing knowledge on the acceptance of AI-infused assistive technologies by professionals working in social services. In general, scoping reviews can be described as a preliminary assessment of available research literature with the goal of summarizing evidence and identifying key concepts to inform future research and to identify or address knowledge gaps.39–42

The review will include a thematic analysis42–44 based on established technology acceptance models and theories and clustered along the four major categories of the Technology Acceptance Model (TAM) modification described by Marangunić and Granić. 33

In detail, the objectives of this review are the following: (1) To scope the body of literature about factors that influence the acceptance of professionals in social services towards AI-infused assistive technologies. (2) To identify what methodologies and concepts were used to measure acceptance. (3) To identify which AI-infused assistive technologies were used in research. (4) To identify significant knowledge gaps and synthesize the knowledge about factors that influence the acceptance of professionals in social services towards AI-infused assistive technologies as a guideline for future research.

Methods

This scoping review was based on the methodological frameworks described by Newman and Gough, 45 Arksey and O'Malley, 46 Peters et al., 42 and the Preferred Reporting Items for Systematic Reviews and Meta-Analyses Extension for Scoping Reviews (PRISMA-ScR) guidelines. 47

Description of the methodology

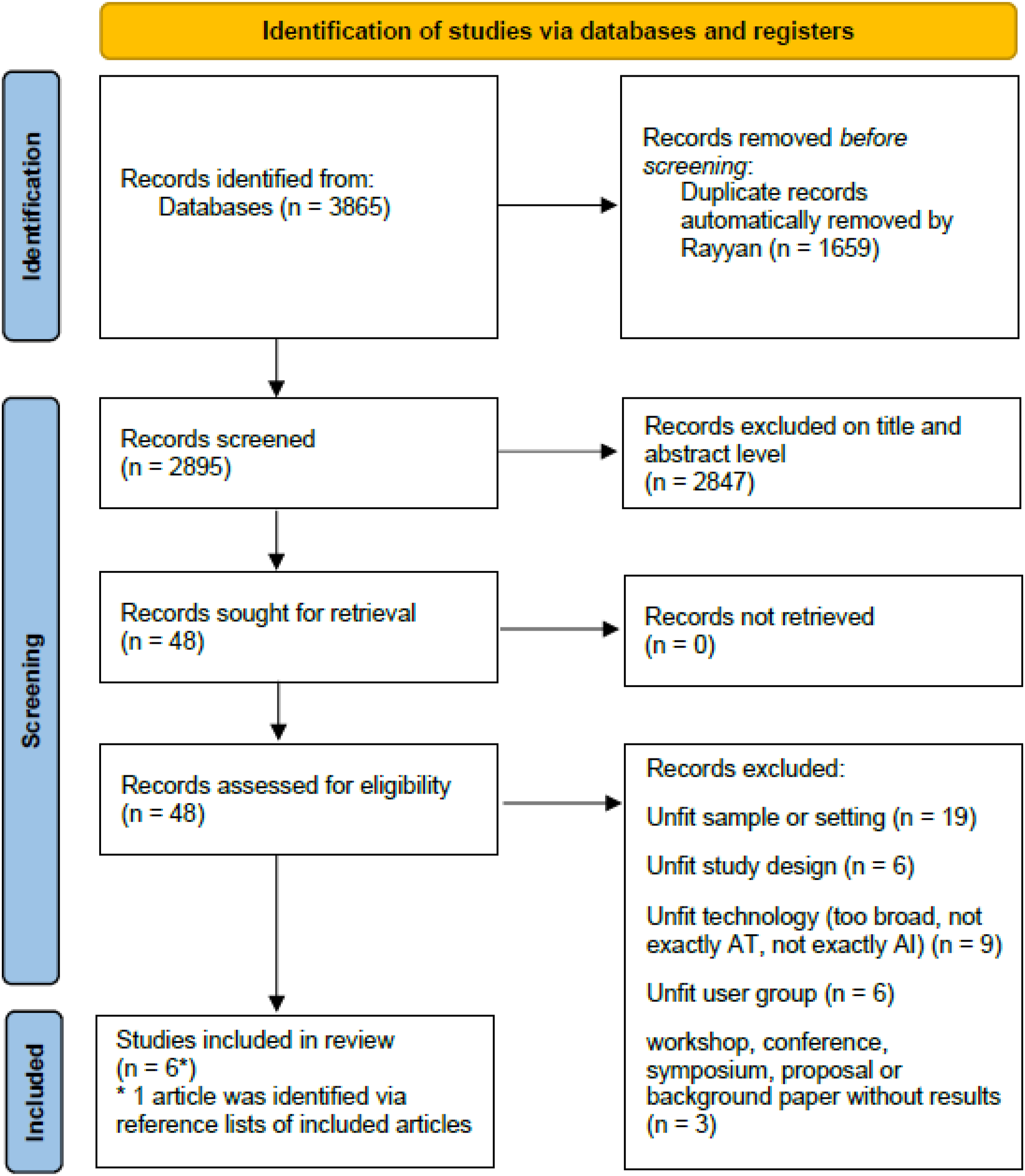

Figure 1 shows the PRISMA diagram of the study selection process.

Figure 1. PRISMA 2020 flow diagram of the study selection process.

Search strategy and study selection

The protocol for this review was prepared in September 2024 and was discussed and revised with two colleagues. Next, the search strings were tested and refined in an iterative process in September and October 2024. The final search took place in October 2024.

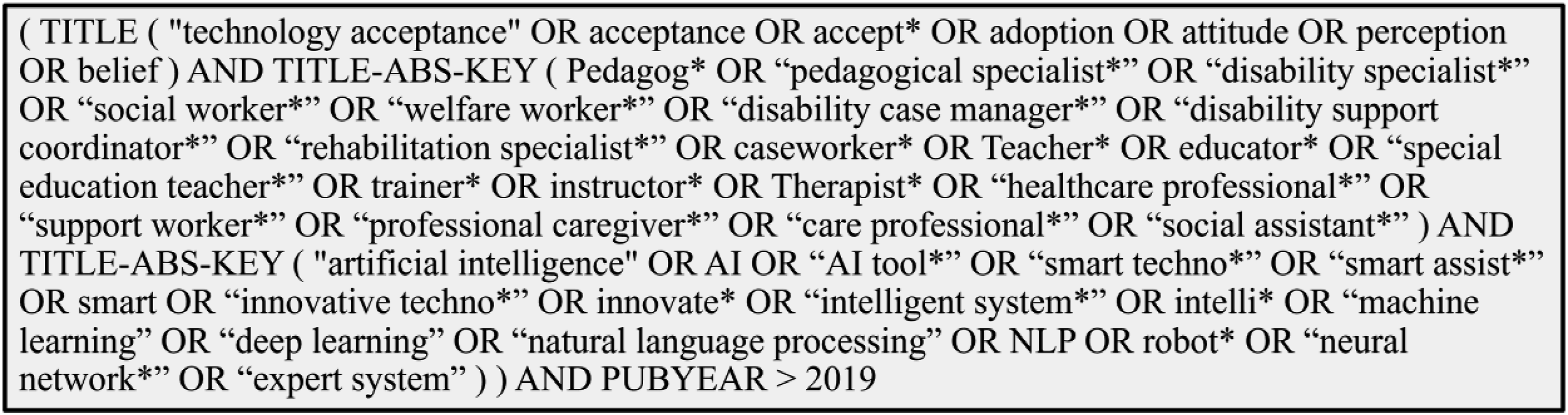

The following restrictions were applied: The publication date must not be earlier than 2019. The title must include keywords related to technology acceptance. For reasons of feasibility, the search was conducted in English only. For reference, Figure 2 displays the search conducted in the Scopus database. The search strings consist of three layers: keywords related to factors influencing acceptance, professionals in social services, and AI-infused ATs. Therefore, articles needed to meet the inclusion criteria across all three domains. The complete, reproducible final electronic search strategy for all databases searched, including inclusion and exclusion criteria, is provided in Multimedia Appendix 1.

Figure 2. Search strings for Scopus.
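The three-layer structure described above can be illustrated with a minimal sketch. It assumes Scopus-style field codes (`TITLE`, `TITLE-ABS-KEY`, `PUBYEAR`) and uses placeholder keywords that are hypothetical and are not the review's actual search terms; the helper names `or_group` and `build_query` are likewise illustrative. The real search strings are documented in Multimedia Appendix 1.

```python
def or_group(terms):
    """Join the terms of one layer with OR, quoting multi-word phrases."""
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_query(acceptance, professionals, technologies, first_year):
    """Combine the three keyword layers with AND and add the date restriction."""
    # Layer 1: acceptance-related keywords must appear in the title.
    title_layer = f"TITLE{or_group(acceptance)}"
    # Layers 2 and 3: searched in title, abstract, and keywords (an assumption).
    prof_layer = f"TITLE-ABS-KEY{or_group(professionals)}"
    tech_layer = f"TITLE-ABS-KEY{or_group(technologies)}"
    # "Not earlier than 2019" is expressed as "later than 2018".
    year_filter = f"PUBYEAR > {first_year - 1}"
    return " AND ".join([title_layer, prof_layer, tech_layer, year_filter])


# Placeholder keywords (hypothetical, NOT the review's actual search terms).
query = build_query(
    acceptance=["acceptance", "attitude"],
    professionals=["teacher", "care worker"],
    technologies=["artificial intelligence", "robot"],
    first_year=2019,
)
print(query)
```

Because the layers are joined with AND, a record is returned only if it matches at least one term in each of the three layers, which mirrors the requirement that articles meet the criteria across all three domains.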

A total of 3865 articles were identified through the database search. Of these, 1659 duplicates were removed automatically using the Rayyan software. 48 Articles were then screened at the title and abstract level. In the next phase, 48 articles were assessed for eligibility at the full-text level. Articles were excluded after the full-text screening process for several reasons. The most common reason for exclusion was an unsuitable sample or setting. For example, the sample in several studies (mainly) consisted of students, pre-service teachers or non-professional caregivers. Additionally, some studies mixed different professions inside and outside of the scope of this review. In some cases, the results were not related to professionals in social services, but rather focused on the acceptance of the technology by vulnerable user groups. Finally, some studies were excluded because they either reported on ATs that could not be clearly identified or categorized as AI-infused or emphasized AI in a general sense without specifically focussing on ATs (e.g. AI-based training programs for teachers).

A total of five articles were identified for inclusion from the databases searched. Reference lists from these publications were manually searched for any reports missed by database searches, and one extra article was found to be eligible for inclusion in the scoping review.
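The screening counts reported above can be tallied in a short sketch. Only the five input values are taken from the text; the derived values (records screened and records excluded at each stage) are not stated here and rest on the assumption that every record remaining after automatic deduplication entered title and abstract screening.

```python
# Counts reported in the text.
identified = 3865
duplicates_removed = 1659
full_text_assessed = 48
included_from_databases = 5
included_from_reference_lists = 1

# Derived values (assumption: no records were removed for other reasons
# between deduplication and title/abstract screening).
screened = identified - duplicates_removed
excluded_title_abstract = screened - full_text_assessed
excluded_full_text = full_text_assessed - included_from_databases
total_included = included_from_databases + included_from_reference_lists

print(f"Screened at title/abstract level: {screened}")
print(f"Excluded at title/abstract level: {excluded_title_abstract}")
print(f"Excluded at full-text level:      {excluded_full_text}")
print(f"Included in the review:           {total_included}")
```

Under that assumption, 2206 records were screened, 2158 were excluded at the title and abstract level, and 43 were excluded at the full-text level, leaving the six included studies.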

Data collection

The data extraction process was charted in a spreadsheet and is provided in Multimedia Appendix 2. The articles were read several times to identify key factors related to social service professionals’ acceptance of AI-infused ATs. The data was also analysed through thematic analysis, identifying and clustering relevant factors for acceptance.

Results

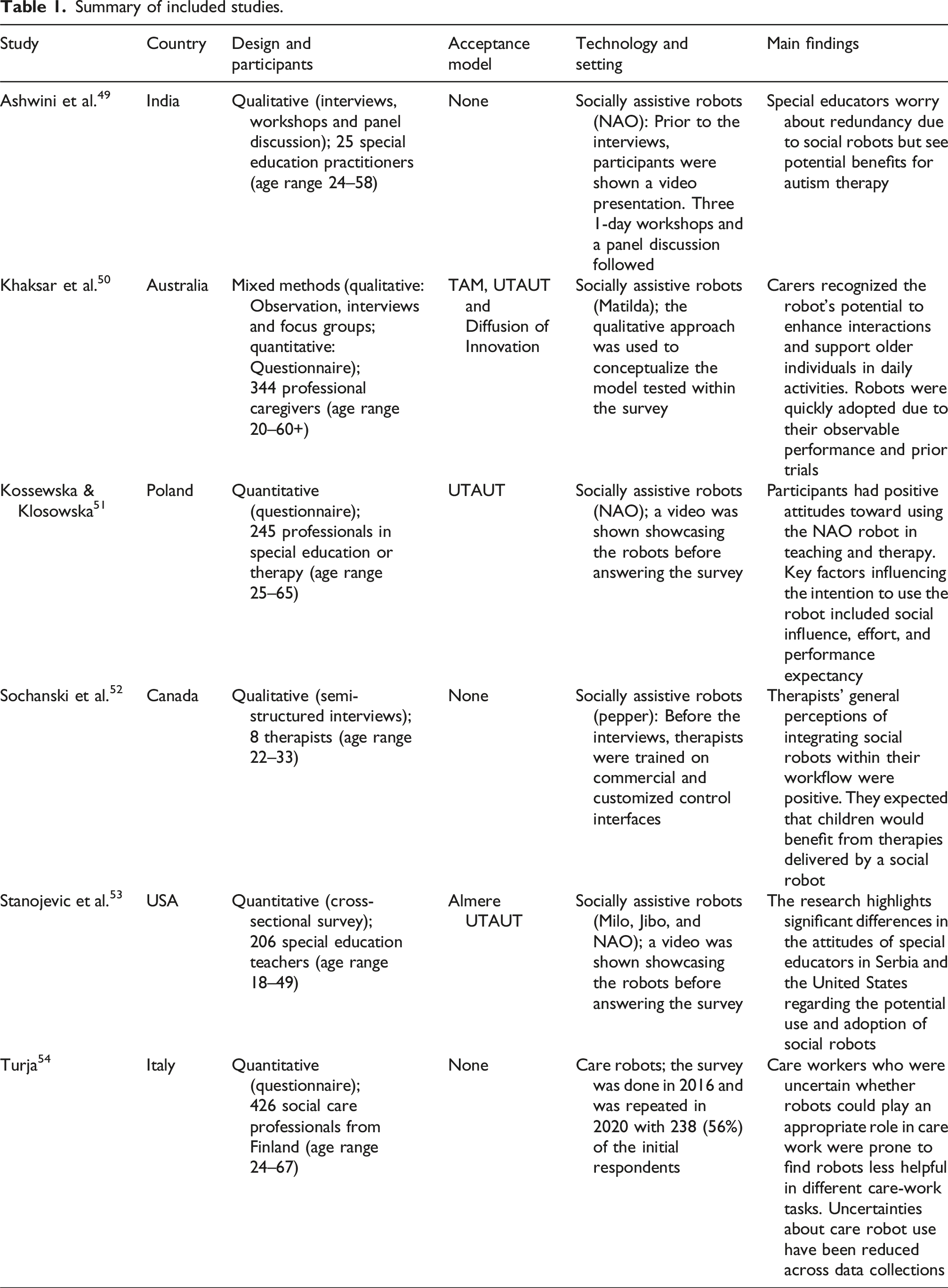

Summary of included studies.

General characteristics of included studies

The six articles included were mainly published between 2020 and 2024. The included articles comprise research from six different countries. The study design ranged from quantitative (n = 3/6) to qualitative (n = 2/6) and mixed-method (n = 1/6) studies. All quantitative studies used a questionnaire/survey distributed primarily online. The qualitative studies used interviews, focus groups and panel discussions. The mixed-methods study consisted of a qualitative part followed by a quantitative questionnaire. In total, 3 out of 6 studies referred to and/or used an established technology acceptance model for their study – all three studies referred to the Unified Theory of Acceptance and Use of Technology (UTAUT) model, with one also utilizing the TAM and Diffusion of Innovation model. The studies took place in three contexts: education, (health)care, and therapy. The sample includes 770 care professionals (2 out of 6 studies), 231 educators (2 out of 6 studies) and 253 therapists (2 out of 6 studies).

All of the included articles focused on robots as AI-infused technologies, such as socially assistive robots (SAR; 5 out of 6 studies) and care robots in general (1 out of 6 studies). AI functions of the SARs include, for example, natural language processing and gesture analysis. Although the study on care robots in general does not mention specific robots, it emphasizes the significance of AI in care robots.

The included articles mentioned the following user groups: Persons, children and students with Autism Spectrum Disorder (ASD), older adults, people with intellectual disabilities, and people with care needs.

Synthesis

To synthesize existing knowledge on the acceptance of AI-infused assistive technologies relevant to vulnerable user groups by professionals in social services, a thematic analysis across the six selected studies was performed. Based on established models of technology acceptance and the four major categories of TAM modification, 33 the categories shown in Figure 3 emerged from the thematic analysis. In addition to the core elements of the acceptance models, we identified five categories as external predictors and four as contextual factors. Two studies reported results based on factors from other theories.

Figure 3. Thematic categories that emerged in the selected studies.

Core elements

All studies reported results that relate to and/or confirm the mediating role of perceived usefulness/performance expectancy and perceived ease of use/effort expectancy for attitudes towards technology and intention to use.

External predictors

The identified external predictors were clustered into the following subcategories: design and features, facilitating conditions, work-related threats, perceived risks, and emotions.

Design and features

In the study by Ashwini et al., three key themes related to positive acceptance in technology design and features were identified: human-like attributes, language options, and reduced technical complexity. Khaksar et al. noted that carers highlighted social robots’ ability to recognize gestures and communication, which could enhance adoption in aged care services (compatibility). Stanojevic et al. noted significant differences between Serbian and U.S. participants in perceived adaptiveness and perceived sociability. In addition, Sochanski et al. mentioned adaptability (e.g. personalization) and the usability of the interface as important factors.

Facilitating conditions

Khaksar et al. mentioned that robots that are observable and triable before usage positively influence acceptance. Furthermore, social influence and its different impacts on encouraging the possible use of SARs were also notable. This is in line with Sochanski et al., who report that the perceptions of participants changed after they had the opportunity to use and control a robot. Furthermore, Ashwini et al. mentioned the necessity of adopting appropriate training programs. According to Stanojevic et al., the importance of facilitating conditions was the only domain that remained stable across culturally different backgrounds.

Work-related threats

Khaksar et al. specifically reported concerns about performance within the workplace while using social ATs. These include the potential of being tracked by others at work, the possibility of overreliance on social ATs leading to a loss of self-confidence and self-awareness, and the possibility of losing jobs to social ATs. Overall, concerns about potential job loss due to replacement by social robots emerged as a common theme. Ashwini et al. specify that concerns can be rooted in negative experiences with new technologies.

Perceived risks

Khaksar et al. adopted and tested perceived risks and summarize that ‘building trust, awareness of their performance, and secure working environment between social robots and carers may facilitate their acceptance in aged care facilities’. Trust was also mentioned by Ashwini et al. and was related to the fear that special education practitioners could become marginalized and that robots could cause further divisions. On the other hand, trust was positively affected when participants had seen best practices of successful implementation or their positive trajectory due to experiences with other technologies. According to Sochanski et al., trust was primarily influenced by the interfaces used to control the robot.

Emotions

Stanojevic et al. report that the influence of anxiety differed significantly between the two samples: anxiety seems to be less of an obstacle to the adoption and implementation of SARs in the United States than in Serbia. Perceived enjoyment also differed significantly between the two samples.

Contextual factors

Identified contextual factors were categorized into availability, institution, society, and socio-demographic data.

Availability

Ashwini et al. highlight the necessity of availability and basic internet connectivity. Also, in line with Stanojevic et al., the cost of technology is an important predictor, especially in specific regions.

Institution

Ashwini et al. mentioned specific institutional policies as hindering or benefiting factors for acceptance. In addition, Stanojevic et al. highlight the approval or guidance of authorities as an important factor.

Society

Sochanski et al. report that societal pressures due to negative stereotypes or misconceptions have a significant impact on the overall acceptance of robots.

Socio-demographic

None of the studies specifically reported socio-demographic factors. Only Stanojevic et al. mentioned age, gender, and years of experience as different influential factors between U.S. and Serbian professionals.

Other theories

Two studies highlighted the linkage between acceptance and other theories. Turja reports that undecidedness correlates with service robot acceptance (uncertainty model 55 ). Stanojevic et al. used an analysis of cultural background (Hofstede dimensions 56 ) to explain differences between the Serbian and U.S. samples.
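The clustering reported in this synthesis can be summarized as a simple nested mapping from category to subcategory to the studies cited for it. This is a sketch of one possible representation and is not the authors' coding scheme: the variable names are illustrative, the study labels are the first-author names used in the text above, and the core elements are omitted because they were reported across all studies.

```python
# Category -> subcategory -> studies cited for it in the synthesis text.
thematic_map = {
    "External predictors": {
        "Design and features": ["Ashwini", "Khaksar", "Stanojevic", "Sochanski"],
        "Facilitating conditions": ["Khaksar", "Sochanski", "Ashwini", "Stanojevic"],
        "Work-related threats": ["Khaksar", "Ashwini"],
        "Perceived risks": ["Khaksar", "Ashwini", "Sochanski"],
        "Emotions": ["Stanojevic"],
    },
    "Contextual factors": {
        "Availability": ["Ashwini", "Stanojevic"],
        "Institution": ["Ashwini", "Stanojevic"],
        "Society": ["Sochanski"],
        "Socio-demographic": ["Stanojevic"],
    },
    "Factors from other theories": {
        "Uncertainty model": ["Turja"],
        "Hofstede cultural dimensions": ["Stanojevic"],
    },
}

# Studies that are named at least once in the synthesis.
named_studies = sorted(
    {s for subs in thematic_map.values() for studies in subs.values() for s in studies}
)

for category, subs in thematic_map.items():
    print(f"{category}: {len(subs)} subcategories")
    for sub, studies in subs.items():
        print(f"  {sub}: {', '.join(studies)}")
print("Studies named in the synthesis:", ", ".join(named_studies))
```

Laid out this way, the mapping reproduces the five external predictors and four contextual factors reported above and makes visible which subcategories rest on a single study.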

Conclusion/discussion

In this scoping review, we identified six studies published between 2020 and 2024 addressing factors relevant to the acceptance of social service professionals. Our findings indicate a paucity of research focussing specifically on professionals in social service and AI-infused ATs outside of the well-established research in human-robot interaction. We also discovered that studies referring to technology acceptance models mostly mentioned the UTAUT model. Furthermore, the thematic analysis revealed different factors clustered into external predictors, contextual factors, and factors from other theories.

The results of this review show that not only purely technological factors but also factors that do not necessarily relate to the technology’s performance play an important role. This is in line with findings from Wanner et al., who state that, in the context of intelligent systems, it is necessary ‘to look beyond performance as the dominating decision factor for intelligent system efficacy’. 57 Advances in this field are essential due to the lack of ethical guidelines for interactions between people with disabilities and AI, as the emphasis is primarily on product usability. 58

In the context of perceived risks, trust seems to play a significant role in the acceptance of AI-infused assistive technologies. This finding aligns with early adaptations of acceptance models for AI technologies, such as those proposed by Scheuer. 34 Initial findings by Vorm and Comb on the relations between trust and other factors show that trust is mainly influenced by system transparency. 35 Thus, research and knowledge on AI explainability 59 can play a crucial role in theorizing AI acceptance. This indicates that ethical considerations in AI are crucial for developing inclusive and equitable AI-infused ATs. In addition to AI explainability, fairness, particularly regarding biases in data,60,61 plays a crucial role. Hence, Dieker and Zaugg emphasize the need for representation of people with disabilities in the development of AI-infused ATs. 62 Initial advances like the Guidelines for Participatory Technology Design with People with Disabilities aim to improve participatory technology development. 63

In conclusion, the trend of AI infusion should also be viewed critically. Engström notes that users of popular online platforms often lack real choices regarding AI exposure due to its integration. 64 This risk may also apply to ATs, especially outside robotics, where it might be harder to identify AI usage.

In addition, the implication that the appearance of AI-infused ATs might have an impact on acceptance is also in line with Scheuer. He argues that acceptance models like the TAM or UTAUT are only feasible if AI is primarily perceived as a technology and not as a personality. Otherwise, other models might be more suitable for analysis.

Finally, the included studies show that the key factors in technology acceptance models generally remain consistent and, thus, can be identified as crucial factors for acceptance within the scope of this review. This aligns with recent research from other fields. For example, Ismatullaev and Kim state in their review on adopting AI systems that Attitude and Perceived Usefulness have been identified in many studies as the most influential factors. 65

Limitation

This scoping review has some limitations. To keep the review feasible, only scientific papers published in English were included. Furthermore, this scoping review is set in a fast-evolving scientific landscape, and thus the results are only up to date as of October 2024. Bias in identifying primary articles and extracting data cannot be ruled out, because only one researcher was responsible for evaluating and analysing the articles. Another threat is the selection of databases or digital libraries as well as search strings, since these may not cover all studies carried out in the context of the problem. This may include different labels for AI-infused ATs and professions, user groups and questions from other areas. This becomes evident from the fact that, despite a broader goal, the sample only included studies on robotics as AI-infused ATs. As a result, the findings may not be applicable to other AI-infused ATs and require further verification.

Identification of gaps

This scoping review highlights a few research gaps that need to be addressed. While screening, it became evident that many papers could not be included because their sample consisted primarily of student volunteers or included other stakeholders (e.g. parents) or medical staff not associated with direct care (e.g. doctors). Thus, more research is necessary, including employees working in facilities related to social services and a refined research agenda that clearly labels and maps the different professions. Furthermore, the under-representation of certain professions (e.g. social workers) indicates a research gap. Additionally, the absence of a theoretical framework related to technology acceptance in three of the included studies suggests that future research needs to be based on existing theoretical knowledge. Hence, newly emerging models focussing on AI technologies must be used and tested. However, the lack of evidence, especially regarding other AI-infused ATs, means that high-quality research is needed to determine what factors are relevant to acceptance by professionals in social services. Ultimately, this includes summarizing different AI-infused AT types and categories and differentiating their acceptance factors.

Future research directions and defined research agenda

Overall, it is important to note that the body of published studies fails to consider and report relevant methodological aspects and the theoretical background on acceptance, and fails to address employees, especially in contexts related to social pedagogy and social work. Generally speaking, research in this area still seems exploratory. This is particularly evident from the many studies with samples including student volunteers. Furthermore, it is apparent that the articles that meet all the inclusion criteria mainly report on robotics. Hence, more effort is needed to move beyond this exploratory phase. This research gap could be addressed by mapping relevant AI-infused ATs tailored for specific users. Based on the methodological approach in this review, future literature reviews could focus either on specific AI-infused ATs with the exclusion of robotics, or specifically on robotics that can be characterized as AI-infused ATs. Furthermore, focussing on certain professions (including student volunteers) rather than a multitude of professions might help to gain more information on the current state of research. In this context, an update of the methodological approach (e.g. including or solely focussing on grey literature) might also be beneficial. In addition, empirical studies should be conducted to address the identified gaps. Empirical validation through survey-based studies to test identified predictors in relation to other AI-infused ATs appears particularly relevant. Furthermore, newly emerging predictors, such as trust, should be prioritized. In the long run, longitudinal studies examining how the adoption of AI-infused ATs changes over time, along with cross-cultural analyses investigating cultural differences, will be important for gaining a deeper understanding. Overall, research on AI-infused ATs will be a fast-changing and growing field. Hence, an update to this review will be possible and feasible in the coming years.

Research on the technology acceptance of AI-infused ATs by professionals in social services still has a long way to go. By synthesizing the existing knowledge, this scoping review highlights some crucial points of this process and identifies knowledge gaps and directions for further research. Considering all this information, studies of higher methodological quality targeted at specific AI-infused ATs (e.g. AI-MC, AIEd, AI-infused wheelchairs, and AI-infused assistive applications for deaf and hard of hearing) and professions are necessary to answer the research question, because the quality of work of social service professionals and the quality of life of vulnerable user groups will soon most likely depend on skills and attitudes concerning AI-infused ATs.

Supplemental Material

Supplemental Material - Acceptance of AI-Infused assistive technologies for vulnerable user groups by professionals in social services – Insights from human-robot interaction research. A scoping review

Supplemental Material for Acceptance of AI-Infused assistive technologies for vulnerable user groups by professionals in social services – Insights from human-robot interaction research. A scoping review by Lukas Baumann, Susanne Dirks, and Bastian Pelka in Technology and Disability.

Footnotes

Author contributions

Conception: Baumann, Dirks, and Pelka.

Performance of Work: Baumann.

Interpretation or Analysis of Data: Baumann.

Preparation of the Manuscript: Baumann.

Revision for Important Intellectual Content: Dirks and Pelka.

Supervision: Dirks and Pelka.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

References
