Abstract

Employers are increasingly turning to innovative artificial intelligence recruiting technologies to evaluate candidates’ online presence and make hiring decisions. Such social media screening, or social profiling, is an emerging approach to assessing candidates’ social influence, personalities, and workplace behaviors through their publicly shared data on social networking sites. This article introduces the processes, benefits, and risks of social profiling in employment decision making. The authors provide important guidance for job applicants, technical and professional communication instructors, and hiring professionals on how to strategically respond to the opportunities and challenges of automated social profiling technologies.

The hiring process has evolved with technological advancements, with an increasing number of hiring professionals turning to social media platforms to gather information about job applicants (Landers & Schmidt, 2016; Nikolaou, 2014, 2021). While traditional hiring methods such as résumé screening and in-person interviews have been used for decades, social media can provide additional insights into a candidate's personality, soft skills, and workplace behaviors (Roth et al., 2016; Tews et al., 2020). According to a CareerBuilder (2018) survey, 70% of employers screen candidates’ social media content during the hiring process to evaluate candidates’ qualifications, professional online personae, and reputation, and 57% of employers rejected candidates due to damaging content on social media.

The impact of social media on recruitment varies across different industries. Information technology (IT), manufacturing, and sales are among the top industries that are most likely to research potential job candidates’ social networking profiles (CareerBuilder, 2018), and these are industries in which a large number of technical communicators pursue a career (Carliner & Chen, 2018). In the hospitality industry, a sector that hires many business students, 50% of recruiters reported using social media to screen job applicants (Chang & Madera, 2012). In other words, students in science, technology, engineering, mathematics, and management, the primary audience of technical and professional communication (TPC) programs and service courses, are more likely to be subjected to social profiling in job searches than are students from other disciplines. The heavy use of social profiling in these sectors may be connected to the fact that IT, sales, and hospitality belong to mass-hiring, high-attrition industries (Heaslip, 2022; Tolan, n.d.; Zivkovic, 2022), which not only have huge numbers of applicants for job openings but also are more likely to lose employees due to outside career prospects and issues related to stress, workplace culture, and the lack of training and growth opportunities (Gupta, 2013).

In recent years, the emergence of artificial intelligence (AI) has revolutionized the hiring process, particularly in social media screening (Kong et al., 2021; Qamar et al., 2021; Thibodeaux, 2017). Manually examining social media profiles, such as searching Facebook for a candidate, can be time-consuming, subjective, and inefficient (Nikolaou, 2014) and a significant barrier for hiring professionals who need to evaluate a large number of job applications. In contrast, AI-driven screening tools have been shown to provide more consistent, efficient, and scalable assessments of social media profiles (Kalkat, 2019; Upadhyay & Khandelwal, 2018). These tools use machine-learning algorithms to analyze large-scale social media content in order to provide an automated evaluation of candidates’ potential and organizational fit (Bilal et al., 2019). To distinguish AI screening from manual examination of social media profiles, we use the term “social profiling” to refer to AI-assisted social media screening.

Many concerns have been raised about the use of social profiling tools, particularly regarding algorithmic biases and privacy invasion. For example, research has shown that these tools may inadvertently perpetuate discrimination against people with disabilities (Packin, 2021; Ruggs et al., 2016). Moreover, social profiling may collect and use personal data in ways that are not transparent to job applicants, potentially violating their privacy rights and harming their perceived credibility and career prospects (Tursunbayeva et al., 2022). For instance, Stevenson (2012) described how a marketer with 15 years of consulting experience failed to land a job due to a low “Klout score” (i.e., a rating that measures a user's online social influence). Harwell (2018) reported a similar case in which a babysitter could not secure a position without passing an AI scan for respect and attitude (e.g., detection of swear words in social media activities). Despite these concerns, the use of AI-driven automated screening tools in the hiring process is likely to continue to grow, so it is important to examine the processes, opportunities, and risks associated with this new technology. Such research is particularly important to TPC service classes because these classes teach job-search genres to undergraduate students across the country. Therefore, in this article, we examine three research questions:

How do social profiling tools assess job candidates’ online profiles?

What are the promises and perils of social profiling tools for employers and job candidates?

What strategies can candidates employ to perform well in AI-assisted recruiting, and how can TPC instructors equip students from all disciplines with the knowledge and skills necessary to navigate social profiling technologies?

We begin by introducing our research design and providing a definition and taxonomy of social profiling. We then delve into the functionalities of social profiling tools before exploring the potential and pitfalls of such technology. We conclude by discussing some best practices and implications for job seekers, TPC classrooms, and hiring professionals regarding strategic and ethical responses to this transformative technology.

Research Design

To explore practices and theories related to social profiling, we employed two approaches: examining social profiling tools and reviewing existing literature.

Social Profiling Tools

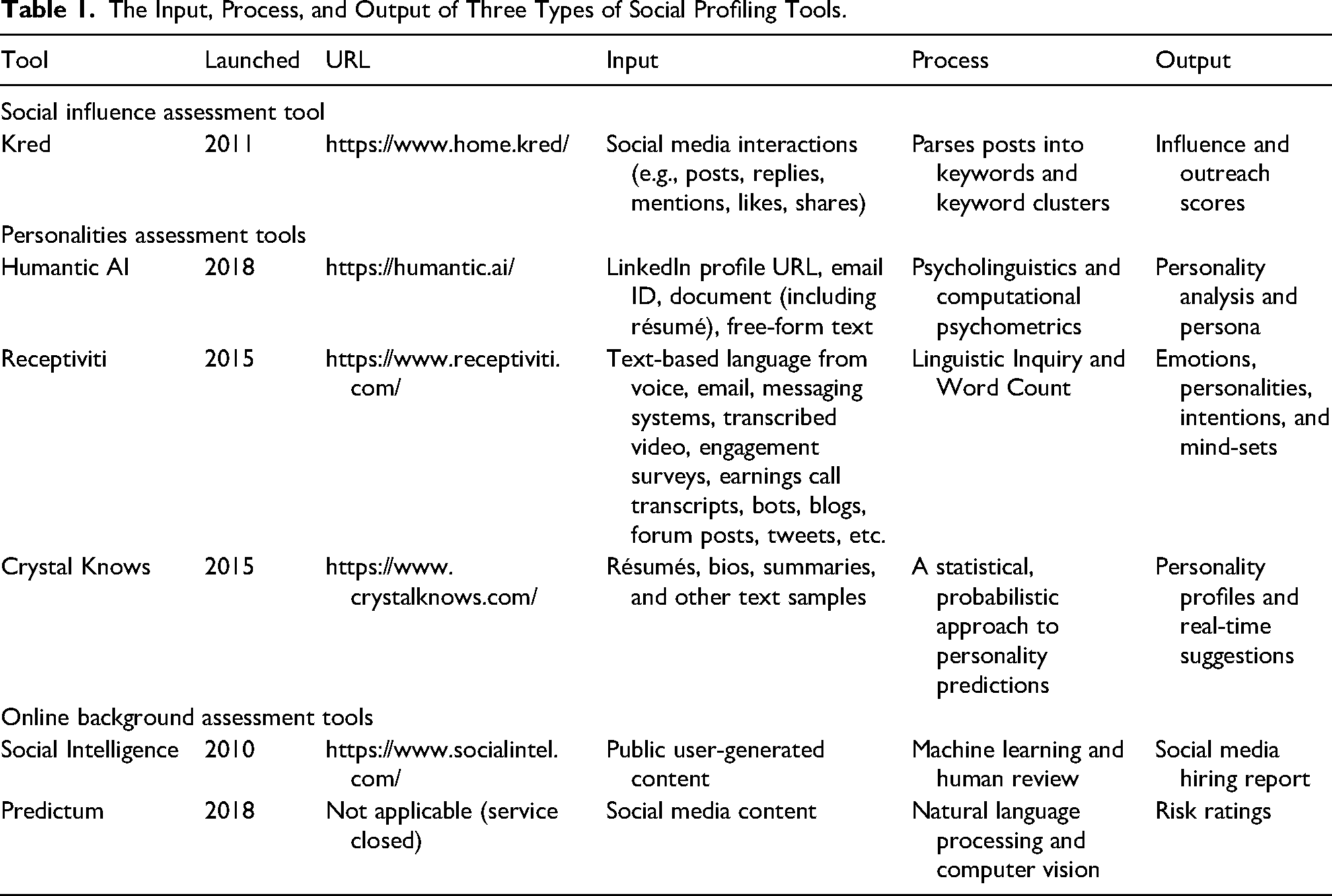

We identified prominent AI-driven profiling tools developed by technology companies and start-ups to assist with assessing candidates for employment. To understand their functionalities, we categorized their focus areas of assessment into three types: social influence, personality, and online background. Social influence assessment tools (e.g., Klout, Kred, PeerIndex, CreatorIQ, and Heepsy) measure candidates’ social influence based on “how far and how fast information is spread on the social network to influence another's action and behavior” (Ngai, 2011). Personality assessment tools (e.g., Humantic AI, Receptiviti, and Crystal Knows) evaluate candidates’ personalities and soft skills by analyzing communication records generated by candidates. Online background assessment tools (e.g., Social Intelligence and Predictum) conduct background checks on candidates’ behaviors and attitudes through their public information online.

To provide consistent coding and analysis of these tools, we evaluated them using the input–process–output model, a widely used approach in software engineering to understand information-processing programs. In the context of recruitment and hiring, we define input as the type of candidates’ information collected by potential employers for automated screening. Process refers to the technologies and procedures used to analyze candidates’ data input. Output refers to the deliverables of social profiling tools that present candidates’ characteristics and employability. Table 1 summarizes the input, process, and output of the three types of social profiling tools.

Table 1. The Input, Process, and Output of Three Types of Social Profiling Tools.

To ensure the relevance of our analysis to potential job applicants in TPC, we selected tools that (a) are currently available, (b) have publicly available material online for analysis, and (c) have an impact on TPC students. Therefore, we excluded social influence assessment tools, such as Klout, because the industries where most technical communicators work do not consider social influence a core competence. We also excluded Predictum, a background-check tool that has been blocked by Facebook and Twitter due to violations of terms of service on data harvesting and user privacy (Patterson, 2018). In our analysis, we focus on two tools with far-reaching impact: Humantic AI, a personality profiling tool, and Social Intelligence, an online background-check tool. We analyzed a wide range of documents, including company white papers, technical reports, application programming interface (API) documentation, blog posts, use cases, and marketing materials, to understand how these tools work, what roles they play in the candidate-screening process, and what ethical challenges such tools present to users and the larger public.

Integrative Literature Review

Using the ProQuest Central database, the largest multidisciplinary, full-text database in today's market, we collected articles about the use of social media in recruitment and candidate screening. We identified these articles by using the following search terms: artificial intelligence AND AI AND social media AND hiring AND recruit* AND employ*. The truncation character * helps retrieve variations of the search terms (e.g., recruiting, recruitment, employment, employers, employees). Due to the novelty of the topic, our search included two source types: scholarly journals and trade journals. We restricted our search to English-language documents published from January 1, 2017, through December 31, 2022. Our initial search yielded 794 articles, including 230 academic publications and 564 trade publications. After excluding duplicate and irrelevant articles, we retained 178 articles for our literature review, consisting of 66 academic publications and 112 trade publications. These articles came from various fields, including human resource (HR) management and talent acquisition (n = 85), computer science and data mining (n = 44), industrial and organizational psychology (n = 33), law and ethics (n = 10), and computers and composition (n = 6). We then conducted thematic analyses of these 178 articles to explore the key concepts and themes useful for understanding social profiling. We found four key themes: definition and taxonomy of social profiling, social profiling tools, potential and pitfalls of social profiling, and implications for practice. The following sections present our findings from these perspectives.
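The effect of the truncation character can be illustrated with a short sketch. It assumes that a trailing * behaves like a simple prefix wildcard; the function names `truncation_to_regex` and `find_matches` and the sample sentence are hypothetical, and the code does not reproduce the database's actual search engine.

```python
import re

def truncation_to_regex(term: str) -> re.Pattern:
    """Turn a search term into a case-insensitive regex.

    Assumption: a trailing * matches any run of word characters after the stem.
    """
    if term.endswith("*"):
        stem = re.escape(term[:-1])
        return re.compile(rf"\b{stem}\w*", re.IGNORECASE)
    # Terms without * must match as whole words.
    return re.compile(rf"\b{re.escape(term)}\b", re.IGNORECASE)

def find_matches(term: str, text: str) -> list:
    """Return every word in text that the search term would retrieve."""
    return truncation_to_regex(term).findall(text)

# Hypothetical sample sentence for illustration.
sample = ("Recruiting software changes recruitment; "
          "employers screen employees before employment.")
recruit_hits = find_matches("recruit*", sample)
employ_hits = find_matches("employ*", sample)
```

In this sketch, `recruit*` retrieves both "Recruiting" and "recruitment," while a term without the truncation character would match only the exact word.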

Definition and Taxonomy of Profile, Profiling, and Social Profiling

The articles in our literature review presented three key terms that are relevant to defining social media screening: profile, profiling, and social profiling. A profile is a collection of interrelated data that represents a category of subjects—human or nonhuman, individual or group (Council of Europe, 2010; Ferraris et al., 2013). Profiling is the application of this data collection to a subject by using technology and algorithms to construct individual profiles from large data sets in order to predict personal preferences, behaviors, and attitudes and make recommendations or decisions about them (Bilal et al., 2019; Ferraris et al., 2013; Hildebrandt, 2008). It is a form of dataveillance that produces a new type of predictive knowledge by making visible patterns that are not apparent to the naked eye (Hildebrandt, 2006, 2009). In other words, profiling is considered “the only viable technology” that deals with two problems of an information society: information overload and the blurring borders between noise, information, and knowledge (Hildebrandt, 2006, p. 548).

Social profiling, or user profiling, is the process of modeling user profiles by collecting publicly available data from online social networks, such as Facebook, Twitter, Instagram, LinkedIn, and Pinterest (Bilal et al., 2019). It has been used in both public and private sectors, including security and criminal investigations, finance, marketing (e.g., for recommendations, personalized searches, and advertisements targeted to customers), health care, education, and employment (Ferraris et al., 2013; Kanoje et al., 2014). In the employment sector, social profiling techniques can help classify and analyze candidates’ potential for recruitment or organizational management purposes. For instance, Mihuandayani et al. (2018) discussed machine-learning techniques for retrieving and categorizing candidates’ personality traits based on the Dominance, Influence, Steadiness, and Conscientiousness (DISC) Model through automatic analyses of candidates’ Facebook and Twitter activities. Ni et al. (2017) proposed a skill–knowledge–graph approach to understanding employees’ behavioral patterns through automated analyses of large amounts of real-time data generated from employees’ social media activities.

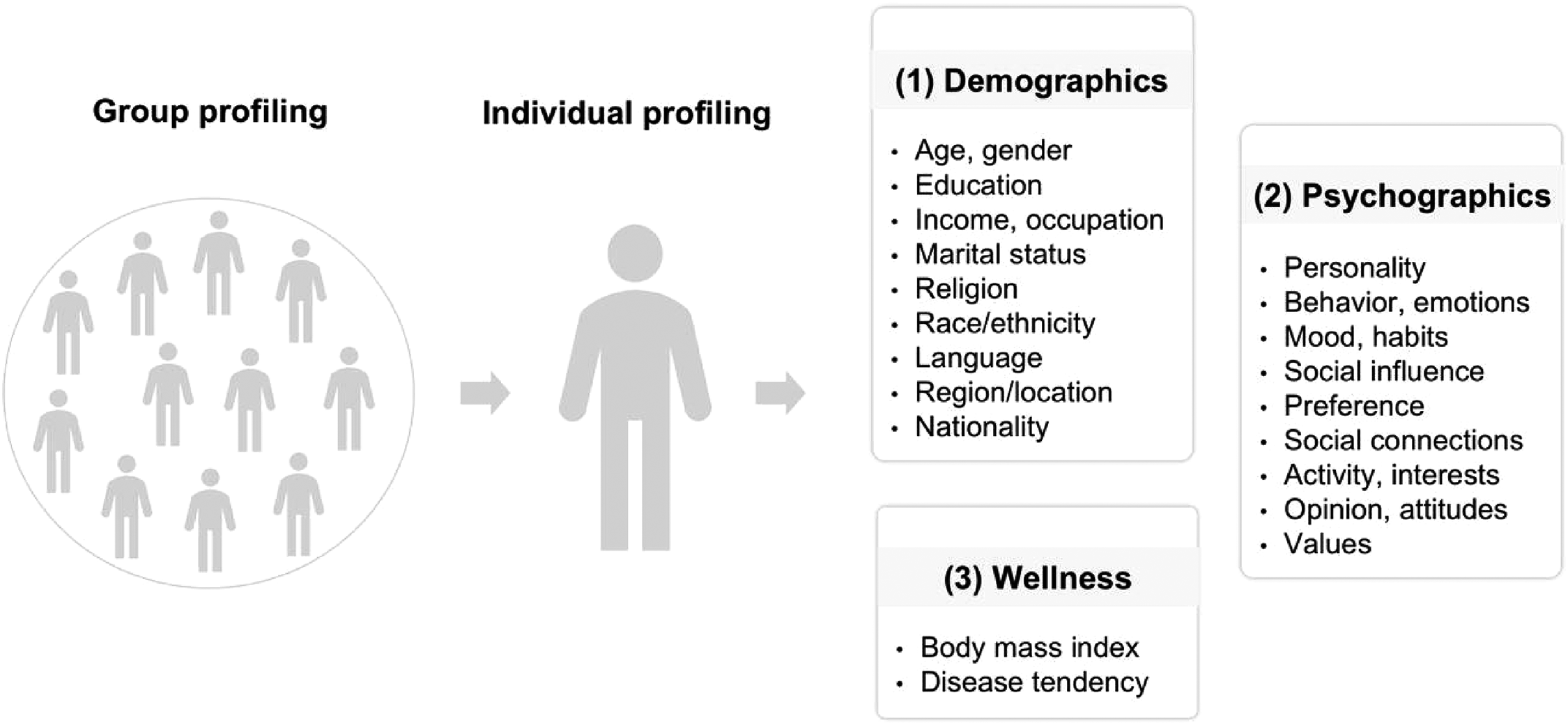

After reviewing the literature on extracting user attribute information from social network data, Bilal et al. (2019) identified a thematic taxonomy of social profiling, which includes group profiling and individual profiling. Group profiling involves collecting information from a group (e.g., a community or category) of users who share common attributes (Hildebrandt, 2006, p. 549). For example, Li et al. (2014) developed a coprofiling approach to jointly profile attributes (e.g., occupation, education) for users based on the assumption that connected users on social media are likely to share similar attributes. Individual profiling, or personalized profiling, identifies and represents the attributes of a person based on the individual's recorded data (Hildebrandt, 2006, p. 549). Bilal et al. (2019) identified three categories of individual profiling: (a) demographic profiling (e.g., age, gender, ethnicity, income, education, city), (b) psychographic profiling (e.g., personality, behavior, emotions, habits, interests, attitudes), and (c) wellness profiling (e.g., body mass index, disease tendency). Figure 1 presents the taxonomy of social profiling.

Figure 1. Taxonomy of social profiling (adapted from Bilal et al., 2019).
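For readers who find a data structure easier to scan than a diagram, the taxonomy can also be written as a nested mapping. This is only an illustrative sketch: the attribute lists repeat the examples given in the text, while the variable name `TAXONOMY` and the helper `category_of` are hypothetical and not part of Bilal et al.'s (2019) work.

```python
from typing import Optional

# Nested mapping mirroring the taxonomy described in the text.
# The attribute lists are the examples named in the text, not an exhaustive set.
TAXONOMY = {
    "group profiling": {},
    "individual profiling": {
        "demographic": ["age", "gender", "ethnicity", "income", "education", "city"],
        "psychographic": ["personality", "behavior", "emotions", "habits",
                          "interests", "attitudes"],
        "wellness": ["body mass index", "disease tendency"],
    },
}

def category_of(attribute: str) -> Optional[str]:
    """Return the individual-profiling category that lists this attribute, if any."""
    for category, attributes in TAXONOMY["individual profiling"].items():
        if attribute in attributes:
            return category
    return None
```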

Social Profiling Tools

We conducted case studies of two leading AI tools in the market of social profiling: Humantic AI and Social Intelligence. We present these tools’ theoretical foundations and data life cycles (e.g., data input, processing, analysis, and output).

Humantic AI

Humantic AI (formerly known as DeepSense) positions itself as an AI assistant for data-driven sales and talent acquisition that assesses the personality, behaviors, communication preferences, and emotional intelligence of prospective buyers and job candidates. With its proprietary AI engine that combines machine learning and natural language processing with social and industrial/organizational psychology, computational linguistics, and psycholinguistics, Humantic AI boasts of being “one of the most powerful cross-domain applied research systems in the world” (How Humantic AI Works, n.d.).

Theoretical Foundation: Psycholinguistics and Computational Psychometrics. Humantic AI leverages psycholinguistics research on correlations between linguistics and personality and computational psychometrics research on correlations between social activity and behavior to perform predictive behavioral assessments and create detailed personality profiles of buyers and job applicants.

The last two decades have witnessed the development of new methods of analyzing personal traits through unique language use (i.e., functional lexical features and machine learning; Argamon et al., 2005), the lexical-availability-based framework (Ramírez-de-la-Rosa et al., 2023), and user-defined dictionaries such as those provided by Linguistic Inquiry and Word Count (LIWC). To automate such an analysis, the LIWC software uses text analysis algorithms to detect the presence of various psychological and linguistic features in texts (Pennebaker et al., 2001). Building proprietary dictionaries based on its corpus of tens of millions of words, LIWC has gone through multiple updates. Its latest version, LIWC-22, provides over 100 different output dimensions and distinguishes between emotions (i.e., positive [emo_pos], negative [emo_neg], anxiety [emo_anx], anger [emo_ang], and sadness [emo_sad] variables) and sentiments, or tones (i.e., positive [tone_pos] and negative [tone_neg] variables). Individual words and word stems are used to categorize different language dimensions such as psychological (i.e., drives, cognition, and affect) and social processes (Boyd et al., 2022, p. 11). The LIWC2015 Development Manual, for instance, reveals that its anger scale contains 230 anger-related words and word stems (Pennebaker et al., 2015).
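The dictionary-based approach described above can be sketched in a few lines. The example assumes a tiny, made-up dictionary (the real LIWC dictionaries are proprietary and far larger), treats entries ending in * as word stems, and reports each category as a percentage of total words. The category names `anger` and `sadness` and all function names are hypothetical.

```python
import re
from collections import Counter

# Hypothetical mini-dictionary for illustration; NOT the LIWC dictionary.
# Entries ending in * are word stems that match any continuation.
CATEGORIES = {
    "anger": ["angry", "hate*", "furious", "rage*"],
    "sadness": ["sad", "cry*", "grief"],
}

def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())

def entry_matches(entry, token):
    """Match a whole word, or a prefix when the entry is a stem (ends in *)."""
    if entry.endswith("*"):
        return token.startswith(entry[:-1])
    return token == entry

def category_percentages(text):
    """Return, for each category, the share of tokens (in percent) that match it."""
    tokens = tokenize(text)
    counts = Counter()
    for token in tokens:
        for category, entries in CATEGORIES.items():
            if any(entry_matches(entry, token) for entry in entries):
                counts[category] += 1
    total = len(tokens)
    return {c: (100.0 * counts[c] / total if total else 0.0) for c in CATEGORIES}

scores = category_percentages("I hated the delay and I was furious")
```

Here two of the eight words ("hated" via the stem `hate*`, and "furious") fall into the hypothetical anger category. A counting scheme of this kind is transparent but ignores context, which is one reason parody and sarcasm are hard for such tools to handle.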

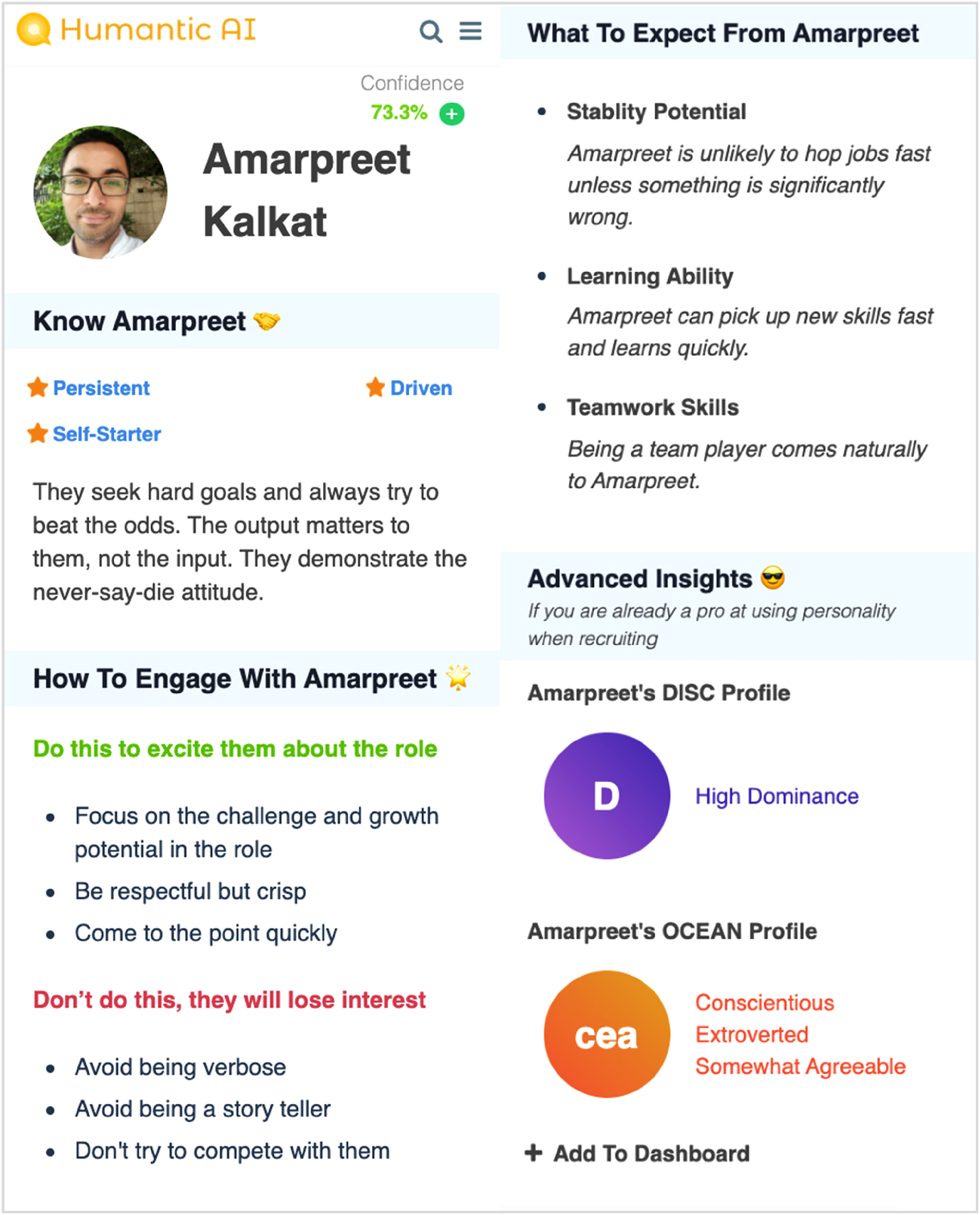

Humantic AI operates on two scientifically validated psychometric models: DISC (dominance, influence, steadiness, and calculativeness) and OCEAN, the Big Five personality traits (openness, conscientiousness, extraversion, agreeableness, and neuroticism). Instead of using standardized psychological tests or questionnaires, Humantic AI measures personality traits with computational psychometrics by combining traditional psychometrics and cognitive sciences with AI models to analyze large-scale data (Pandian, 2022). Computational psychometrics examines linguistic attributes in texts to identify data that represent personality. The results of the DISC and OCEAN assessments are then used both to identify candidates’ workplace behaviors and soft skills (e.g., learning ability, teamwork skills, attitude, needs for autonomy, and stability) and to provide personalized advice for interacting with each candidate.

Data Input: Résumé and Digital Footprints. Using a “no-test assessment,” Humantic AI taps into new data sources—the ever-growing data pool (Matz & Netzer, 2017) provided by social media platforms—or the publicly available digital footprints created in user-generated content, such as posts, likes, forwards, endorsements, and images (Cahmen, 2022; Kalkat, 2019). It measures many features, including linguistic data such as posts, comments, and recommendations as well as nonlinguistic data such as activity patterns, likes, LinkedIn profile information, and metadata (How Humantic AI Works, n.d.). According to its API documentation, it has three types of plans with different types of input (Humantic AI, n.d.): the Standard Plan, which allows input such as plain text, résumé, and other DOCX or PDF files; the Advanced Plan, which adds LinkedIn Profile to the input allowed by the Standard Plan; and the Special Plan, which adds Email ID to the input allowed by the Advanced Plan. AI tools can easily extract personality-related information from people's LinkedIn profiles by counting the number or intensity of social connections and interactions and by analyzing linguistic features related to DISC or OCEAN personality traits (Pandian, 2022). While Humantic AI does not reveal the tools it uses to build algorithms, it likely uses software similar to LIWC to perform both psycholinguistic and computational psychometric analysis in order to identify personal traits in textual data shared by users.

Data Output: Accuracy and Concerns. Humantic AI extracts from user-generated content two types of analytics: personality analysis and persona. A personality analysis provides insights into candidates’ personality traits based on their DISC and OCEAN profiles. A persona presents a candidate's soft skills, providing suggestions about how to engage with and what to expect from the candidate. Figure 2 presents a sample user output of Humantic AI, incorporating the personality analysis and persona of Amarpreet Kalkat, Humantic AI's Founder and CEO. The analysis shows that he excels in learning ability and teamwork skills, preferring a direct communication style.

Figure 2. Sample user output of Humantic AI.

To understand the accuracy of the service's approach to generating a personality profile, Humantic AI also conducted validation studies. The preliminary results suggest an accuracy level of 78%–85%, which resembles the accuracy of traditional psychological assessment tests (Janz, n.d.). And the service claims it can improve recruiters’ productivity by 20%–30% while improving their quality of hire (Humantic AI, 2020).

Tools like Humantic AI require access to postings or LinkedIn profiles to create psychological profiles of candidates in order to evaluate their career-related strengths and weaknesses (Dattner & Chamorro-Premuzic, 2020). Relying on linguistic analysis, Humantic AI requires users to submit 300 words or more for analysis in order to produce useful results. It will not provide results unless it can be at least 40% confident, and for results above 40%, it offers a confidence score based on the amount of data available (How Humantic AI Works, n.d.). Such tools may disadvantage candidates who choose to have little to no social media presence because the tools cannot generate accurate personality profiles with such limited input. Therefore, it is problematic to generalize the measurement of personality traits.
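The two thresholds reported above (at least 300 words of input and at least 40% confidence) amount to a simple gating rule, sketched below. This is an illustration under stated assumptions, not Humantic AI's implementation: the names `ProfileResult` and `gate_profile` are hypothetical, and the profile and confidence value are assumed to come from some upstream model that is not shown.

```python
from dataclasses import dataclass
from typing import Optional

MIN_WORDS = 300        # minimum input length reported in the text
MIN_CONFIDENCE = 0.40  # minimum confidence reported in the text

@dataclass
class ProfileResult:
    profile: Optional[dict]      # None when no result is released
    confidence: Optional[float]  # shown alongside released results
    reason: str

def gate_profile(text: str, profile: dict, confidence: float) -> ProfileResult:
    """Release a profile only if the input is long enough and confidence is high enough."""
    word_count = len(text.split())
    if word_count < MIN_WORDS:
        return ProfileResult(None, None, "insufficient text")
    if confidence < MIN_CONFIDENCE:
        return ProfileResult(None, None, "confidence below threshold")
    return ProfileResult(profile, confidence, "ok")

# A candidate with a sparse online presence yields no profile at all.
sparse = gate_profile("word " * 50, {"openness": 0.7}, 0.90)
enough = gate_profile("word " * 300, {"openness": 0.7}, 0.55)
```

The sketch makes the disadvantage concrete: a candidate with little public text is screened out before any assessment of personality takes place.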

Social Intelligence

Social Intelligence (acquired by Fama) is an “automated, AI-driven pre-employment smart background check” service designed to foster safer workplaces by verifying that prospective employees do not exhibit hostile or offensive behaviors that may violate company values (What Is Social Media Screening, n.d.; Social Intelligence Story, n.d.). In comparison to traditional background-check firms or personality assessment tools like Humantic AI, Social Intelligence extends the scope of prehire screening beyond criminal records or personality traits. It examines job candidates’ potential for harmful behaviors in the workplace, including racism, sexism, violence, and illegal activities. According to the Social Intelligence Blog (https://blog.socialintel.com/), this service has been used across various sectors and industries, such as health care, retail, manufacturing, entertainment, finance, and education. Supplementing traditional background checks with social media screening can assist employers in streamlining their screening processes, reducing the risk of negligent hiring, protecting their company's reputation, and building a healthier workplace culture (Social Intelligence Corp, 2017; What Is Social Media Screening, n.d.).

Data Input: Web and Social Data. The initial step in Social Intelligence's screening process is for employers to submit the personal information that they have identified about candidates from their résumés (What Is Social Media Screening, n.d.). This information is crucial in confirming the candidates’ identity, allowing Social Intelligence's proprietary software to proceed to search for user-generated data that are publicly available on the Internet. These data are derived from various sources, including popular social media platforms (e.g., Facebook, Instagram, Twitter, LinkedIn, and TikTok) and online forums (e.g., Reddit, Tumblr, GitHub). The scope of data collected for scrutiny extends beyond the content posted directly by candidates. It also encompasses their engagement activities, such as likes, shares, and follows. This comprehensive approach ensures that Social Intelligence captures a holistic view of candidates’ online presence and behaviors, enabling HR managers to understand, for instance, how candidates view and interact with a company's diversity and inclusion initiatives (Twigg, 2020).

Data Processing: Technological, Ethical, and Legal Considerations. While the exact algorithmic process used by Social Intelligence is not disclosed, it is plausible that the company uses the LIWC software, as we mentioned, to identify key words related to violence, crime, and discrimination. Additionally, it might use sentiment analysis (also called opinion mining) to analyze candidates’ opinions, attitudes, emotions, and evaluations about products, services, organizations, individuals, issues, and events. Sentiment analysis can be conducted at three levels: document level, sentence level, and entity or aspect level (Liu, 2012).
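The difference between document-level and sentence-level sentiment analysis can be shown with a minimal lexicon-based sketch. The word lists, function names, and sample post are all hypothetical, and nothing here reflects Social Intelligence's undisclosed method; entity- or aspect-level analysis, which requires identifying the target of each opinion, is left out.

```python
import re

# Hypothetical toy lexicons for illustration only.
POSITIVE = {"great", "love", "helpful"}
NEGATIVE = {"terrible", "hate", "rude"}

def polarity(text):
    """Count positive words minus negative words in the text."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)

def label(score):
    """Map a polarity score to a coarse sentiment label."""
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

def document_level(text):
    """One label for the whole text."""
    return label(polarity(text))

def sentence_level(text):
    """One label per sentence, splitting after ., !, or ?."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    return [(s, label(polarity(s))) for s in sentences]

post = "I love my team. The commute is terrible. Meetings start at nine."
doc_label = document_level(post)
sentence_labels = sentence_level(post)
```

In this example the document-level label is neutral because the positive and negative words cancel out, whereas the sentence-level pass preserves one positive, one negative, and one neutral sentence. The level of analysis therefore changes what a screener would see.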

Despite its obfuscated algorithms, Social Intelligence combines technology-driven screening and human review to ensure a thorough, ethical examination of candidates’ profiles (What Is Social Media Screening, n.d.). By leveraging machine-learning technologies, the platform automatically builds an online presence profile for candidates based on their digital footprints. Nevertheless, to prevent the misinterpretation of or erroneous attribution of human patterns to flagged content that may contain parody, sarcasm, or innuendo, human analysts review, verify, and evaluate the information before making any adverse decisions (Social Media Screening at Scale, n.d.). As Peng et al. (2019) highlighted, the presence of systematic biases in hiring recommendations originates from three primary sources: world distribution, algorithmic bias, and human cognitive bias. To mitigate bias in hiring decision making, they advocated for a “hybrid system,” “human-in-the-loop system,” or “algorithm-in-the-loop system” that incorporates both human and algorithmic judgment in candidate screening (p. 125). As the integration of automated hiring with HR management continues to evolve, Social Intelligence's dual approach strives to maintain a fair and accurate evaluation process.

In contrast to traditional background checks that have been shown to disproportionately affect minority candidates in hiring decisions (Social Intelligence Corp, 2020), Social Intelligence has implemented a Protected Class Safety feature. This feature intelligently removes protected class information from consideration, such as race, national origin, gender, age, religion, disabilities, marital status, sexual orientation, and pregnancy. By eliminating this sensitive information, the platform aims to protect job candidates from discrimination and unintentional bias (What Is Social Media Screening, n.d.).
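The general idea of withholding protected class information can be sketched as a filter applied before a record reaches a reviewer. The sketch assumes, for simplicity, that the data are already structured as labeled fields; the field names and the function `strip_protected` are hypothetical. How the actual Protected Class Safety feature detects such information in unstructured posts and images is not disclosed and is likely a much harder problem.

```python
# Protected classes listed in the text, written as hypothetical field names.
PROTECTED_FIELDS = frozenset({
    "race", "national_origin", "gender", "age", "religion",
    "disability", "marital_status", "sexual_orientation", "pregnancy",
})

def strip_protected(record: dict) -> dict:
    """Return a copy of the record without any protected class fields."""
    return {key: value for key, value in record.items()
            if key not in PROTECTED_FIELDS}

# Hypothetical candidate record for illustration.
raw = {"platform": "LinkedIn", "flag_count": 0, "age": 42, "religion": "undisclosed"}
cleaned = strip_protected(raw)
```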

Additionally, Social Intelligence operates as a consumer reporting agency (i.e., an entity that assembles or evaluates consumer credit information for the purpose of furnishing consumer reports to third parties) and is thus governed by the Fair Credit Reporting Act (FCRA, 2018). Unlike in-house background checks that rely on search engines such as Google, which may pose legal problems for employers, Social Intelligence attends to protecting users’ privacy and security (Social Intelligence Corp, 2017). As Mithal (2011) reported, Social Intelligence's policies and procedures comply with the FCRA, ensuring “the maximum possible accuracy of the information reported from social networking sites” (p. 1). According to FCRA (2018) regulations, Social Intelligence is obligated not only to obtain consent from the candidates prior to conducting the search but also to notify employers who use its consumer reports about their responsibilities to inform applicants of adverse decisions made based on those reports.

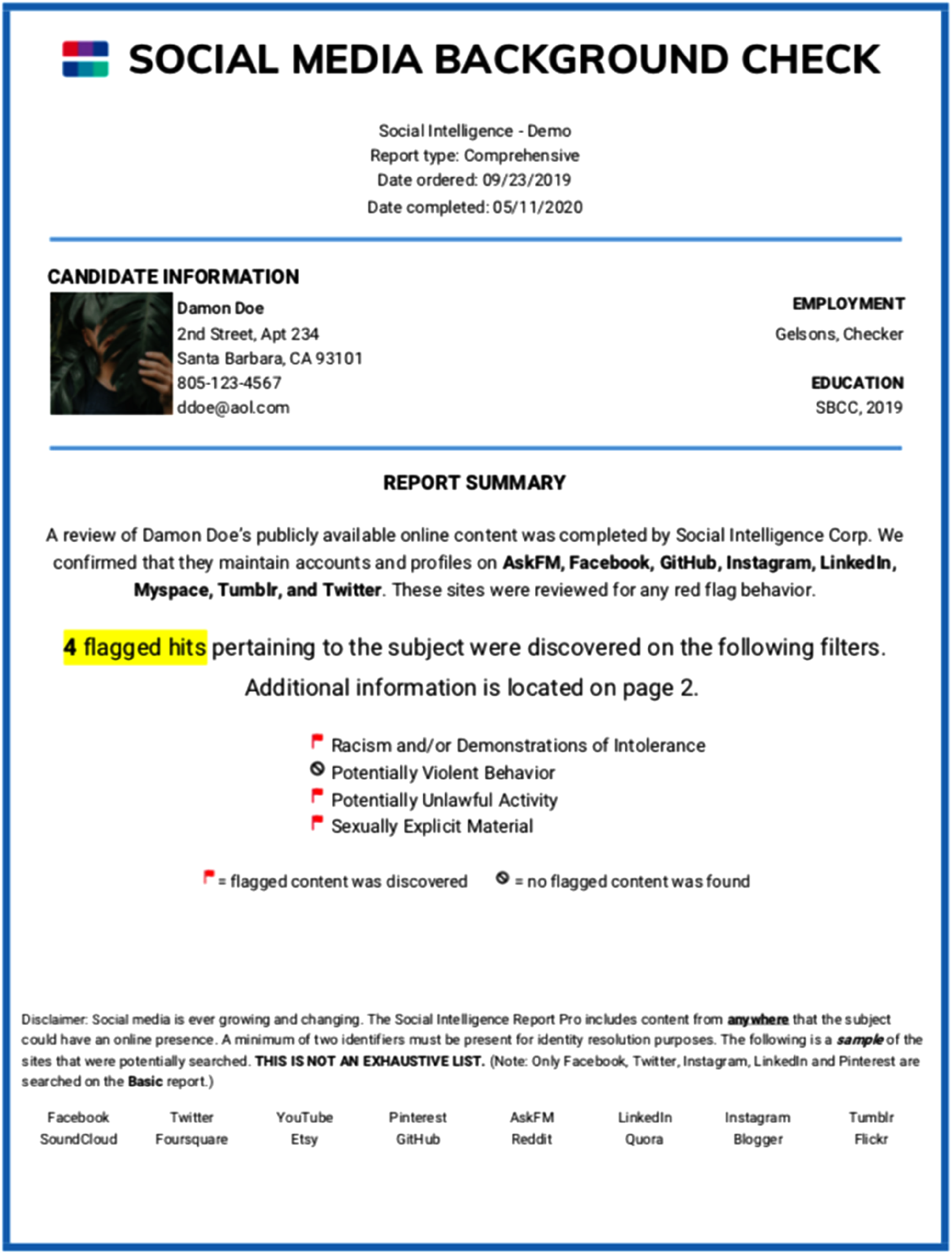

Data Output: Social Media Hiring Report. Combining technology with human review, Social Intelligence generates a comprehensive report showcasing examples of candidates’ problematic behaviors on social media. Figure 3 displays the cover page of a sample report. This fictitious report begins by providing standard background-screening information, such as contact details, occupational history, and educational background. It then identifies the social media platforms on which the candidate has a presence and that have been reviewed. Additionally, the report indicates the number of “flagged hits” found on the candidate's social media accounts within the past 7 years. A flag is assigned if the candidate's online activities suggest any (a) racism or displays of intolerance (e.g., misogyny, homophobia, ableism), (b) sexually explicit material, (c) potentially violent conduct, or (d) potentially illegal activity. The remaining sections of the report present screenshots of flagged content, organized by source, content type, content year, and personally identifiable information. Only the most recent examples are included in the screenshots when multiple instances of flagged content are discovered.

Figure 3. Cover page of a Social Intelligence report.
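The reporting rules described for the sample report (four flag categories, a 7-year window, and only the most recent examples shown) can be summarized in a short sketch. It is an illustration rather than the vendor's logic: the class `Hit`, the functions `years_ago` and `summarize`, and the category labels are hypothetical, and the sketch assumes that "most recent" is decided separately for each category.

```python
from dataclasses import dataclass
from datetime import date

# The four flag categories named in the text, as hypothetical labels.
CATEGORIES = ("intolerance", "sexually_explicit",
              "potentially_violent", "potentially_illegal")
LOOKBACK_YEARS = 7  # review window stated in the text

@dataclass(frozen=True)
class Hit:
    source: str    # platform where the content was found
    category: str  # one of CATEGORIES
    posted: date   # date the content was posted

def years_ago(day: date, years: int) -> date:
    """Return the same calendar day a number of years earlier."""
    try:
        return day.replace(year=day.year - years)
    except ValueError:  # Feb 29 when the target year is not a leap year
        return day.replace(year=day.year - years, day=28)

def summarize(hits, today: date):
    """Count flagged hits per category within the window and keep the newest of each."""
    cutoff = years_ago(today, LOOKBACK_YEARS)
    counts = {category: 0 for category in CATEGORIES}
    latest = {}
    for hit in hits:
        if hit.category not in CATEGORIES or hit.posted < cutoff:
            continue  # outside the four categories or older than the window
        counts[hit.category] += 1
        if hit.category not in latest or hit.posted > latest[hit.category].posted:
            latest[hit.category] = hit
    return counts, latest

# Hypothetical flagged content for a fictitious candidate.
hits = [
    Hit("Twitter", "intolerance", date(2021, 3, 1)),
    Hit("Facebook", "intolerance", date(2023, 6, 9)),
    Hit("Reddit", "potentially_illegal", date(2012, 1, 5)),
]
counts, latest = summarize(hits, today=date(2024, 1, 1))
```

With these inputs, the 2012 post falls outside the 7-year window and is not counted, and only the 2023 post would be shown as the example for its category.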

In summary, the rapid development of social profiling tools highlights the increasing use of advanced AI technologies to assist in the prehire assessment of job candidates. The market for automated social media screening is highly volatile and constantly evolving. While current service providers continuously refine and update their algorithms to optimize evaluation processes and outcomes, the research, development, and testing of these technologies are still in an exploratory stage and often confronted with uncertainties, legal considerations, and ethical controversies. To become “effective users,” “informed questioners,” and “reflective producers” of technology (Selber, 2004), then, all stakeholders in the hiring process must be aware of both the potential benefits and the drawbacks of social profiling technologies.

Potential and Pitfalls of Social Profiling

A growing body of academic research and trade publications discusses the advantages and challenges for both employers and job applicants that are associated with using social network data in the hiring processes. This section focuses on the promises and risks of social profiling in preemployment screening, drawing from the findings of our integrative literature review.

Social profiling provides employers with significant benefits, including a cost-effective and efficient approach to talent assessment and human capital management. Compared with the traditional manual evaluation of résumés, social profiling expedites the candidate screening and selection processes by providing quick access to unfiltered online information about candidates (Nikolaou, 2014, 2021). Using scalable data analysis techniques, automated screening of social media profiles implements quantitative evaluation parameters that introduce greater consistency in candidate screening so that hiring professionals do not have to rely on just their experience and intuition (Sakka et al., 2022).

More important, social profiling offers insights into candidates’ personalities, soft skills, and emotional intelligence. According to a report from LinkedIn Talent Solutions (2019), 91% of talent professionals think that soft skills are very important to the future of recruiting and HR. The rise of automation and AI means that in addition to hard skills, soft skills such as creativity, adaptability, and collaboration play vital roles in workplace success. The report also suggests that, despite the growing value of soft skills, employers struggle to accurately assess soft skills due to their lack of a formal, structured approach. The most common ways to measure soft skills in the past few decades involved asking behavioral questions and observing body language, approaches that are susceptible to bias and often elicit well-rehearsed answers. In contrast, by using social profiling tools to prescreen candidates, employers can assess candidates’ soft skills more systematically and consistently without relying on face-to-face encounters. As Evuleocha and Ugbah (2018) argued, social media profiles can help organizations reduce their uncertainties about candidates’ organizational fit and increase their certainties about hiring decisions and subsequent communication outcomes.

But despite these benefits, the process of social profiling can raise a range of potential ethical, legal, and privacy concerns. To start with, the use of social media in recruiting may strengthen digital inequality by excluding job seekers who are not active social media users (Karaoglu et al., 2021; Ruggs et al., 2016; Vosen, 2021). Various sociodemographic factors, such as age, race, education, and income, may lead to differential use of social media (Karaoglu et al., 2021). For example, job seekers with limited access to the Internet and lower social media literacy are less likely to engage in “capital enhancing activities” online, such as promoting self-achievement and networking with professionals, putting them at a disadvantage in the age of social media (Hargittai & Hinnant, 2008).

Second, hiring algorithms could amplify rather than reduce human bias in assessing candidates. Picardi (2019) demonstrated that demographic information (e.g., race, gender, age, ethnicity) revealed on social media could lead to discriminatory hiring practices. And Kleinberg et al. (2018) concluded that implicit bias in target variables and training data sets for machine-learning algorithms could lead to prejudice against historically marginalized communities. Such algorithmic decision making, which inherits human bias and is rooted in past discrimination, can privilege Whiteness, reinforce racism and sexism, and exacerbate oppression and social injustice (Noble, 2018; Schermer, 2011).

Third, knowing that potential employers may extract and screen candidates’ social media content may cause individuals to self-censor or self-oppress to protect their privacy. Although the U.S. Equal Employment Opportunity Commission (2014) acknowledged the pervasive role of social media in driving employment decisions, it suggested that social media profiles may reveal an individual's private information that is protected by federal and state legislation. Thus, by introducing issues of user consent and privacy invasion (Evuleocha & Ugbah, 2018), social media profiling becomes “a topos of participatory panopticism” or an “omniopticon tool” for social surveillance (Mitrou et al., 2014, n.p.), which could considerably affect an individual's well-being, freedom of expression, autonomy, and other interests and rights.

Finally, these tools result in the datafication of individuals by treating a person as belonging to a specific category or attempting to “distill a person's online presence into a single number” (Basulto, 2012; Mitrou et al., 2014). Social profiling may also suffer from the “black box problem”: because the tools are black-boxed due to proprietary concerns, they provide little insight into AI's decision-making process, so AI's outputs or decisions cannot be predicted (Bathaee, 2018).

Implications for Practice

To facilitate the informed decision making of diverse stakeholders regarding the use of emerging social profiling technologies in the hiring process, we provide specific recommendations based on a multidisciplinary literature review and case studies of social profiling tools. First, we present recommendations for job applicants—particularly TPC students who will soon start job searching—for managing their social media profiles. Next, we provide TPC educators with recommendations for preparing students for social profiling. Last, we briefly discuss how employers and hiring professionals can ethically engage with social profiling tools.

Implications for Job Applicants

With AI transforming recruiting, job applicants need to understand the increasingly important roles that social media plays in the job-seeking process. Job seekers must be mindful of every digital footprint they leave and take steps to create a professional and positive online brand. We offer five recommendations based on our case studies and integrative literature review: explore the algorithmic process, critically engage with social media, avoid problematic (“red flag”) content, separate personal and professional profiles, and check privacy settings.

Explore How Algorithms Evaluate Social Media Content. Despite the black box problem of social profiling technologies, job applicants can gain insight into how algorithms process social media content by examining the methodology sections of company white papers and technical reports. The screening of large-scale social media data primarily relies on algorithms, namely, “any well-defined computational procedure that takes some value, or set of values as input and produces some value, or set of values as output” (Cormen et al., 2022, p. 1). In social profiling, the input is user-generated social media content that makes up the “public building blocks” of an individual's online brand (e.g., photographs, videos, tone of voice, language style, postings, shares). These building blocks can be analyzed for quantity, quality, and content through techniques in natural language processing that enable computers to “understand text and spoken words in much the same way human beings can” (IBM, n.d.). Such analysis is then correlated with personality and psychological traits, beliefs, emotions, interpersonal relations, and political preferences as output (Dattner & Chamorro-Premuzic, 2020; How Humantic AI Works, n.d.; Kulkarni et al., 2018).

Although no universal formula exists for evaluating written or spoken content, psycholinguistic, computational psychometric, and machine-learning researchers have identified some consistent patterns (Boyd et al., 2022; Dattner & Chamorro-Premuzic, 2020; Ramírez-de-la-Rosa et al., 2023). For example, the use of positive words has been associated with an extroverted disposition, whereas the use of negative words has been associated with an emotionally sensitive disposition. When posting, sharing, and reacting to social media content, then, job applicants should consider how algorithms parse words as data points and exercise caution in their word choices. TPC job applicants in particular should use their social media presence to highlight their technology skills, personal characteristics, and professional competencies, such as written communication, project management, collaboration, and time management (Brumberger & Lauer, 2015).
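
To make the idea of parsing words as data points concrete, the following is a minimal sketch, not a description of how any commercial tool works. It assumes two tiny, hand-made word lists (`POSITIVE_WORDS` and `NEGATIVE_WORDS`) and a hypothetical helper, `word_category_rates`, all invented for illustration. It computes only the share of words in a set of posts that fall into each list; actual social profiling tools rely on proprietary models and far richer signals.

```python
import re
from collections import Counter

# Hypothetical mini-lexicons, invented for illustration only.
POSITIVE_WORDS = {"great", "love", "excited", "thanks", "happy"}
NEGATIVE_WORDS = {"hate", "awful", "worried", "angry", "terrible"}


def word_category_rates(posts):
    """Return the share of positive and negative lexicon words across posts."""
    tokens = []
    for post in posts:
        # Lowercase each post and keep alphabetic tokens (with apostrophes).
        tokens.extend(re.findall(r"[a-z']+", post.lower()))
    counts = Counter(tokens)
    total = sum(counts.values())
    if total == 0:
        return {"positive": 0.0, "negative": 0.0}
    positive = sum(counts[word] for word in POSITIVE_WORDS)
    negative = sum(counts[word] for word in NEGATIVE_WORDS)
    return {"positive": positive / total, "negative": negative / total}


sample_posts = [
    "Excited to share our new style guide. Thanks to the whole team!",
    "Worried about the deadline, but happy with the draft.",
]
rates = word_category_rates(sample_posts)
print(rates)
```

In this toy example, 3 of the 21 words fall into the positive list and 1 falls into the negative list. A word-rate summary of this kind is only an input feature; any mapping from such rates to personality traits would be a further modeling step that this sketch does not attempt.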

Maintain an Online Presence and Critically Engage With Social Media. One of the pitfalls of social profiling is that it excludes candidates who have minimal to no online presence, so job candidates should critically engage with social media, striking an appropriate balance in the amount of information they share on online networks. Daer and Potts (2014) warned against the notion that “simple uses of social media connote critical engagement with it” (p. 27). Employers may be more interested in prospective colleagues who do more than just consume media and who actively participate in it by engaging with others, sharing thoughtful information, and demonstrating a passion in personal or professional life (Accredited Schools Online, 2022; Jenkins et al., 2009). Sharing meaningful and thought-provoking content online can reveal candidates’ passions, personality, and interests both inside and outside of the workplace, which recruiters may see as a sign of positive engagement with social media (Accredited Schools Online, 2022).

Audit Social Media Accounts and Eliminate “Red Flag” Content. Although awareness of social profiling tools can lead to self-censorship, job seekers can benefit from auditing all their social media accounts to ensure that they are presenting a professional online brand, which is crucial for surviving in the age of automated recruiting. It is essential to ensure that job-relevant information, such as employment history, education, and certifications or training, is consistent across all platforms. Job seekers should also eliminate social media content that could be considered negative or undesirable to potential employers (Carr & Walther, 2014). Such “red flag” content may include provocative or inappropriate photos, videos, or information; photos or videos showing alcohol or drug use or any criminal behavior; lies about qualifications; information that reflects poor communication skills or that bad-mouths previous employers or colleagues; unprofessional screen names; and discriminatory comments related to race, gender, or religion (CareerBuilder, 2018).

In their analysis of 436 hiring managers’ assessments of candidates’ Facebook profiles, Tews et al. (2020) found that self-absorption had the largest detrimental effect on recruiters’ overall evaluation of candidates’ organizational fit, followed by opinionatedness, alcohol use, and drug use. To avoid self-absorption, job seekers should show interest in and be cheerleaders for others’ work rather than use social media only for self-promotion. By interacting with and responding to people on social networks, job seekers indicate that they can get along well with others.

Separate Personal and Professional Profiles. Social profiling can lead to the invasion of privacy and a blurred boundary between private and professional life (Lam, 2016). Because diverse audience groups (e.g., families, friends, prospective employers, and colleagues) may access social media users’ online profiles, job seekers might consider strategically separating their personal and their professional social media profiles depending on the platform's primary audiences. A Jobvite Recruiter Nation Survey (2020) suggested that employers were most likely to use LinkedIn (72%), Facebook (60%), Twitter (38%), and Instagram (37%) to research candidates. This survey also indicated that LinkedIn was the most common site used by recruiters to search for candidates (95%), contact candidates (95%), and vet candidates before an interview (93%). Therefore, while job seekers may post personal information on certain platforms (e.g., Snapchat, TikTok, Pinterest), they should especially take care to present a professional persona on LinkedIn.

In addition, candidates can double-check the groups they join and pages they subscribe to or follow. Such affiliation information can unintentionally provide employers with more data that can be used to evaluate the candidates’ involvement in the profession. For instance, TPC job applicants may consider following professional organizations on social media, such as the Society for Technical Communication (STC), the Special Interest Group on Design of Communication (SIGDOC), Write the Docs, IEEE ProComm, and the User Experience Professionals Association.

Check Privacy Settings to Adjust the Visibility of User Profiles. Publicly available social media content can be subject to surveillance and data mining. To prevent privacy invasion from prospective employers’ use of social profiling technologies, job seekers might consider adjusting the default privacy preferences to control the accessibility of selected content to the public (Root & McKay, 2014) instead of voluntarily exposing information to “an indefinite audience in social media” (Mitrou et al., 2014). They should use search engines to examine what content is associated with their names online. A significant disparity might exist between “the amount of privacy applicants believe they have” regarding their social networking activities and “the amount of privacy that actually exists” online (Byrnside, 2008, p. 460). As some sites regularly change their privacy features, job seekers need to check the latest privacy settings and customize the amount of information visible to different audiences.

Implications for TPC Classrooms

Daer and Potts (2014) argued that the idea of a “digital native” generation in which everyone is digitally savvy and proficient is a myth. Students have varying levels of social media literacy, and instructors can help them navigate social profiling technologies by integrating social media into their curriculum design. To prepare students in TPC and upper-level service classes for social profiling, we recommend lessons that increase students’ awareness of social profiling, discuss strategies for curating professional online identities, develop students’ algorithmic literacy, and cultivate students’ soft skills.

Increase Students’ Awareness of Social Media Profiling. Prior research has indicated a disparity between consumers and HR professionals regarding their perceptions of what social media content can reveal about job candidates (Evuleocha & Ugbah, 2018; Lam, 2016). In particular, college students often underestimate how extensively potential employers use social media information during the hiring process (Curran et al., 2014). While students acknowledge that employers frown upon posts related to drugs, alcohol, profanity, and negative comments, they fail to recognize the significance employers put on proper grammar, spelling, and effective communication skills (Root & McKay, 2014). Moreover, students are often unaware that hiring professionals consider the social media groups they join and the information they associate with friends as crucial reflections of their disposition and interpersonal abilities (Root & McKay, 2014). Therefore, instructors need to make students aware of the use of social media as a screening tool and educate them about the types of information that employers value (Blount et al., 2016).

Discuss Strategies for Curating Professional Online Identities. Professional writing classrooms for STEM and management students are ideal sites to discuss strategies for curating online presence, given their focus on written and visual communication skills, editing, online information design, user experience, content management, and digital rhetoric. It is crucial to help students understand that all digital footprints, whether passive or active, contribute to building an individual's online identity (Daer & Potts, 2014). Instructors can design social media assignments that involve composing social media posts, analyzing users’ online persona, tracing students’ own digital activities, and critiquing problematic social media content from the viewpoint of potential employers (Root & McKay, 2014). Asking students to generate a list of best and worst practices in social media use can also be a useful exercise (Daer & Potts, 2014).

Develop Students’ Algorithmic Literacy by Engaging With Algorithmic Audiences. To help students make informed decisions regarding the black box problem of social profiling, instructors should focus on enhancing students’ algorithmic literacy. Koenig (2020) defined algorithmic literacy as “a basic understanding, a critical eye, and a rhetorical connection to the algorithmic platform” (n.p.). In contrast to the traditional recruitment scenarios where hiring professionals are the audiences of job candidates’ portfolios, social profiling presents a new rhetorical situation where both hiring professionals and algorithms serve as audiences. To help students write for algorithmic audiences, then, TPC instructors can help students “unbox or demystify” algorithms by “making them objects of study” (Gallagher, 2020). Instructors can guide students to trace the mechanisms of social profiling tools through case studies or various pedagogical resources, such as Bakke's (2020) search reflection assignment, Koenig's (2020) journal entries assignment, and Gallagher's (2020) “Unboxing an Algorithm” assignment. These assignments provide a space for students to identify, record, analyze, and evaluate engagements with algorithms. Such reflective assignments can help students better understand the new ethical challenges of communicating with nonhuman audiences and develop strategies to address the new rhetorical situation.

Cultivate Students’ Soft Skills. The use of personality profiling tools, such as Humantic AI, calls for heightened attention to soft skills development. According to LinkedIn Talent Solutions (2019), soft skills in high demand in the workplace include creativity, persuasion, collaboration, adaptability, and time management. Soft skills, or human skills, are “demonstrable skills that involve engaging directly with others to achieve goals related to work” (Rose et al., 2020, p. 4). These skills are often transferable, enabling working professionals to move from one industry to another, as Huang et al. (2021) found. Scholars have demonstrated the necessity and feasibility of teaching in-demand soft skills in TPC classrooms, helping students to recognize that acquiring soft skills “may be even more important than learning technical tools or new research methods” (Anthony & Garner, 2016; Rose et al., 2020, p. 7). To develop students’ soft skills, instructors can incorporate multiple assignments into their curricula, such as cases, scenarios, self-evaluation, and interviews, to cultivate students’ positive attitudes and work ethics and develop their teamwork, leadership, empathy, oral and written communication, problem-solving, and critical thinking skills. For example, instructors can assign students regular self-evaluation exercises in which they assess their own strengths and areas for improvement in a simulated environment. Students can reflect on their personal development, set goals, and create action plans to enhance their soft skills.

Implications for Employers and Hiring Professionals

To avoid legal and ethical issues related to privacy invasion, biases, and discrimination, hiring managers, recruiters, and HR professionals who use social profiling must take precautions by establishing formal policies on social profiling, providing mandatory training for decision makers, obtaining user consent, and ensuring that humans are involved in the decision-making process (Davison et al., 2016; Evuleocha & Ugbah, 2018; Ostheimer et al., 2021; Peng et al., 2019; Picardi, 2019; Thomas et al., 2015). Further, employers should ensure that every candidate is treated equally in the hiring process, particularly those who are socially disadvantaged and may have limited publicly available online data. One possible approach to preventing discrimination and biases is to establish clear guidelines on the timing of social profiling. For example, Davison et al. (2016) suggested that hiring professionals should conduct social media screening later in the hiring process when they already know the “visible protected class memberships” of the candidates (p. 38).

Conclusion

Automated analyses of social networking content have been increasingly used by hiring professionals to assess job candidates’ workplace behaviors, personalities, soft skills, and organizational suitability. This study has presented a multidisciplinary perspective on social profiling in employment decision making, drawing on HR management, workforce assessment, computer science, business ethics, and technical communication. By examining the taxonomy, service providers, benefits, and risks of social profiling, we have offered insights into how job seekers, TPC instructors, and hiring professionals interact with innovative AI-assisted screening technologies.

These insights suggest that job seekers must be mindful of their digital footprints and maintain a positive online presence. They would benefit from producing meaningful content while eliminating problematic content. Instructors in TPC classrooms should raise students’ awareness about the use of social profiling in the hiring process and develop their algorithmic literacy and soft skills. And employers and HR professionals should develop best practices for social profiling by prioritizing legal compliance and ethical concerns. Given the black-boxed nature of AI-assisted recruiting, serious scholarly and regulatory attention should be paid to ethical concerns such as accuracy, transparency, explicability, and accountability and to the possible development of strict liability standards and regulations. In conclusion, we emphasize that all stakeholders must work collaboratively to use these emerging automated technologies in employment screening in legal and ethical ways.

Our study, however, has several limitations, including its reliance on existing literature on social media screening and social profiling tools. As many of these tools were developed in the last decade, the algorithmic processes and accuracy of the tools have yet to be closely examined by researchers and customers. Future research can use interviews, surveys, and experiments to examine the experiences of hiring professionals who use social profiling tools and of job candidates who seek employment through those professionals. Such research might examine the following underexplored questions: What role does social media screening play in the hiring processes for different occupations, ranks, and sectors? How do job applicants in different cultural contexts perceive social media screening in the hiring process? What policies and regulations are needed to ensure the legal, fair, and ethical use of social profiling services? What are professionally endorsed practices of social profiling that address issues of discrimination, biases, and inequality? And how can TPC professionals help the public to navigate the risks of social profiling in this changing sociotechnological landscape of AI, automation, and big data?

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Science Foundation (grant number 1937037).