Abstract

As algorithmic media become increasingly independent in their ability to select, organize, and create what we remember of daily life and how we remember it, this article examines the genealogical precondition of algorithmically generated “memory” through a case study of Google Photos. I argue that the algorithmic conceptualization of memory is rooted in the history of cybernetics and stands in contrast to socially constructed memory. I first investigate older phenomenological questions around “memory” in the science of cybernetics and then trace a genealogy of cybernetic memory. Finally, I illustrate how cybernetic memory is animated in Google Photos. This article historically examines what “memory” is understood to be in algorithmic media and how the science of cybernetics has integrated our current understanding of memory into algorithmic memory practices, the socio-technical imaginary of the past.

Introduction

In 2015, Google launched a new digital photo storage service, Google Photos. The application can not only store an unlimited number of digital photographs but also curate and suggest what to remember from an otherwise unmanageable number of photos. Its assistant feature automatically organizes photos into collages with particular designs and music, featuring the times, places, people, and moods detected in the photos. The service then periodically displays these algorithmically curated photos and posts as “memories” 1 on its platform with the notification “Your memories are waiting” (see Figure 1).

Figure 1. Notifications for algorithmically generated “memory” from Google Photos.

Many social networking services and applications, such as Timehop, Facebook “Memories,” and the “Memories” feature of Apple’s Photos app, offer similar memory-related features. However, Google Photos is much more sophisticated in selecting photos that evoke “positive” reactions, translating the emotions displayed in a photograph into mathematical language without human intervention. Beyond storing daily life, Google Photos actively intervenes in the way we recall the past. Photographs stored in the application continually reappear in the present, generating a new mode of remembering. Artificial intelligence (AI) embedded in contemporary digital media such as Google Photos automatically reanimates the stored past with algorithmically selected photos that evoke memories of particular people, places, and moments. Although technological involvement in memory practices is nothing new, these techniques of remembering mark a rupture in previous discussions of human memory and media.

As Stiegler (1998) argues, human memory practices are inherently bound up with technologies and techniques that exteriorize our memories. From (or even before) ancient drawing and writing, acts of remembering have been linked to various material aids or media. Human memory practices have been aided or triggered by “an external and inferior technology of recollection” that inscribes material traces outside the human body (Barnet, 2001, p. 218). Within this long relationship between materiality and memory practices, Sturken (1997) emphasizes “technologies of memory”: objects and media that are not passive containers of memory but active social and technical processes through which memories are produced, shared, and given meaning. Landsberg (2004) likewise addresses the power of objects and mass media as a “transferential space” in which objects and media transfer memories between different subjects across times, spaces, and identities. This “prosthetic memory,” facilitated by objects and media, creates affective connections to the past and shapes subjectivity (Landsberg, 2004, p. 120).

Recently, scholars have paid particular attention to media in digital memory practices (Mayer-Schönberger, 2011; Schwarz, 2014; Van Dijck, 2007; Van House & Churchill, 2008). With the emergence of digital media technologies, many scholars in memory studies acknowledge that memory has become embedded in and highly networked with digital media, and they propose new frameworks and methods for understanding the relationship between media and memory. Gane and Beer (2008) argue that we are living through the development of digital technologies that archive, reconstruct, and remediate our memories of everyday life and our identity formation. Similarly, the media scholars Garde-Hansen et al. (2009) conceptualize a “new memory ecology,” in which memory practices are bound up with new media technologies and memories are mediatized.

Twenty-first-century algorithmic media not only archive and remediate human memories but also select what to remember and suggest how to remember it. As algorithmic media suggest “memory,” our acts of recalling the past may become increasingly automated through algorithmically curated “memory.” In contemporary media cultures, with the spread of social networking applications, these techniques of remembering, driven by algorithmic operations on stored digital records, are becoming ever more pervasive.

As algorithmic media become increasingly independent in their ability to select, organize, and create what and how to remember, this article asks how algorithmic curation came to be what these platforms call “memory.” Memory is an active process of sustaining and reconstructing traces over time, made possible through human practices of recollecting or remembering. In Google Photos, however, algorithmic media operate memory and automate the process of remembering. This article examines the genealogical precondition of this new “memory,” namely the algorithmic conceptualization of memory. I trace it back to cybernetics and follow its trajectory to the contemporary algorithmic media that generate and suggest “memory.”

The term “cybernetics” was coined by the American mathematician Norbert Wiener. With it, Wiener (1965) theorizes the science of “control and communication in the animal and the machine,” which emphasizes communication within and between humans and machines in order to control future behavior. With the explicit purpose of controlling and commanding the system, Wiener predicts a future in which machines in feedback loops autonomously learn, stabilize, and adapt in the same way that, in his understanding, the human brain works. A cybernetic system receives messages, performs the prescribed actions, and waits for feedback to adjust its next performance. Through this repetitive cycle of performance and feedback, which is what communication means for Wiener, the system can be governed and controlled. This system of feedback loops is Wiener’s (1965, 1988) model of communication for all kinds of bodies, including living organisms and machines.
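The feedback cycle described above can be made concrete with a deliberately simple sketch. The Python function below is an illustrative assumption, not Wiener’s own formalism: a proportional controller in which the “message” is the gap between a goal and the observed state, and each new action is corrected by a fixed fraction (the hypothetical `gain` parameter) of that gap.

```python
def feedback_loop(target, state, gain=0.5, steps=20):
    """Toy negative-feedback loop: act, observe the result, correct the next act.

    The error (goal minus observed state) plays the role of the feedback
    message; `gain` is an arbitrary illustrative choice.
    """
    history = []
    for _ in range(steps):
        error = target - state        # feedback: compare the outcome with the goal
        state = state + gain * error  # adjust the next performance accordingly
        history.append(state)
    return history


trace = feedback_loop(target=10.0, state=0.0)
print(trace[:3])  # [5.0, 7.5, 8.75]
```

Each pass performs, receives feedback, and adjusts; the error halves on every step, so the state settles ever closer to the target, a minimal instance of the stability that control through feedback aims at.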

The ultimate goal of cybernetics was to develop communication facilities to transmit “message between man and machine, between machine and man, and between machine and machine” (Wiener, 1988, p. 8). Even though contemporary neuroscience rejects the technological metaphor of cybernetics projected onto the human brain and body (Bollmer, 2018), Wiener’s theories of cybernetics have been influential in shaping the contemporary development of social, technological, and economic systems in Western culture. Many scholars claim that cybernetic theories and understandings of communication are still repeated in today’s digital cultures (Bollmer, 2018; Dyer-Witheford, 2015; Halpern, 2015; Hayles, 1999).

While Wiener’s understanding of communication and its impact on artificial culture have been widely discussed, the concept of memory remains undertheorized both in Wiener’s original work and in recent scholarship on cybernetics and digital cultures. Accordingly, this article examines the deep and hidden legacy of cybernetics in the algorithmic understanding of memory by investigating older phenomenological questions around memory in the science of cybernetics. I first reflect on Wiener’s ideas of “memory” across his writings on cybernetics and define cybernetic memory as the technical processing of behavioral patterns. I then show how the cybernetic imagination of memory has persisted through history, even in ideas that remained unrealized. Finally, I examine the legacy of cybernetics in the technical rules of automated recall and in the conceptions of memory circulating in the archival operations of Google Photos. This article aims to reveal the underlying rules shaping “memory” in Google Photos and to show how an algorithmic conception of memory has genealogical origins in the science of cybernetics, which reduces human memory practices to the consumption of technically curated, machine-readable signals.

Cybernetic Memory: The Technical and Behavioral Memory

Across Wiener’s writings, the science of cybernetics defines and understands memory in two ways: technically and behaviorally.

First, cybernetic memory implies a technical synthesis of storage and recollection. For Wiener, the human nervous system and automatic machines are fundamentally alike because both make decisions based on decisions they have made in the past. In Wiener’s concept of cybernetic systems, records of the past are essential for making decisions in the present and for maintaining the stability of a communication system continuously animated by feedback loops. The recorded information is continuously transmitted to perform and predict actions. The cybernetic repository stores this information and enables the system to react and predict. Here, cybernetics understands memory technically, as the process of selecting, storing, and processing information.

Wiener (1965) notes that “the uses of memory are highly various” (p. 121). In The Human Use of Human Beings, Wiener (1988) explicitly describes memory in terms of combinations “of the data put in at the moment and of the records taken from the past stored data” (p. 11). Here, memory appears to be a site of storage, where information is inscribed and preserved. In his broader theory of cybernetic systems, however, memory goes beyond a static site of storage. Wiener (1965) describes memory as a core function of both the human nervous system and computing machines, namely “the ability to preserve the results of past operations for use in the future” (p. 121, emphasis added). Across its multiple uses in cybernetic systems, memory implies not only the inscription and preservation of records but also the processing of recorded information for future behavior.

Wiener locates this technical operation of memory in two separate layers of the human brain: short-term and long-term memory. Short-term memory carries out current processes and “should record quickly, be read quickly, and be erased quickly” (p. 121). It temporarily stores filtered sensations and quickly removes the information once the current process is completed. Long-term memory, on the other hand, is intended to be part of the permanent record, contributing to “the basis of all its future behavior” (p. 121).

Wiener extends this understanding of the human brain to his ideas of cybernetic machines. For Wiener, the perfect operation of both humans and machines means carrying out a chain of operations without compromising the stability of memory, where information is inscribed, recorded, and processed. Without short-term memory, information would only accumulate, and an excess of information in memory might undermine the statistical probability of prediction and the stability of the system. The system should not merely accumulate all messages but rapidly erase old records and implant new ones. The ideal memory process should therefore synthesize short- and long-term memory. Based on this neuroscientific understanding of the human brain, Wiener envisioned machinic memory not as a static accumulation and representation of information but as an active process of storing, managing, adjusting, and processing stored and incoming information for future actions. Here, memory is linked to a processual archive rather than to the storage of fixed data blocks. Cybernetic memory should respond, react, and adjust to the system instead of merely repeating stored information. In the cybernetic imagination, Wiener hopes that “the acts of recording, storing, and recalling may no longer be held separate” (Halpern, 2015, p. 71). Cybernetic memory should be both a record of signals and an act of recollection at once.
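Wiener’s distinction between a quickly erased working memory and a permanent record can be illustrated with a toy model. The class below is a hypothetical sketch, not a model Wiener specified: a bounded `collections.deque` stands in for short-term memory, a list of summarized results stands in for long-term memory, and the averaging rules are arbitrary placeholders.

```python
from collections import deque


class DualMemory:
    """Toy model: a small, quickly overwritten short-term buffer plus a
    long-term record of completed operations (illustrative only)."""

    def __init__(self, short_capacity=3):
        self.short_term = deque(maxlen=short_capacity)  # oldest items drop out when full
        self.long_term = []                              # permanent record of past results

    def perceive(self, signal):
        self.short_term.append(signal)

    def complete_operation(self):
        """Summarize the buffer, keep only the result, then erase the buffer."""
        if not self.short_term:
            return None
        result = sum(self.short_term) / len(self.short_term)
        self.long_term.append(result)
        self.short_term.clear()  # "erased quickly" once the process is done
        return result

    def predict(self):
        """Use the preserved results of past operations to anticipate the next one."""
        if not self.long_term:
            return None
        return sum(self.long_term) / len(self.long_term)


memory = DualMemory(short_capacity=3)
for signal in [1, 2, 3, 4]:
    memory.perceive(signal)              # the buffer keeps only 2, 3, 4
first = memory.complete_operation()      # 3.0 is recorded; the buffer is cleared
for signal in [5, 7]:
    memory.perceive(signal)
second = memory.complete_operation()     # 6.0
print(first, second, memory.predict())   # 3.0 6.0 4.5
```

In this sketch only processed results persist, and they are immediately reused for prediction, so recording, storing, and recalling are handled by one object rather than kept separate.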

Second, cybernetic memory implies a technical analysis of the inscribed behaviors. Another clue to understanding the legacy of cybernetics on present-day conceptualizations of memory is related to Wiener’s behaviorist perspectives. Wiener emphasized behaviors, more specifically the ever-changing patterns of behaviors, which turn into information data to be transmitted and modified in the feedback systems.

In Wiener’s design of cybernetic systems, the main goal of processual memory is to create a perfect feedback system for governing and controlling communication. The core idea of feedback relies on the cybernetic analogy between humans and machines: both can modify themselves on the basis of past experience to act more effectively in the future and achieve specific anti-entropic 2 ends. Bodies in a cybernetic system act continuously, adjusting their behavior in response to feedback. In this cycle, all behaviors are purposeful, aimed at achieving “a final condition in which the behaving object reaches a definite correlation in time or in space in respect to another object or event” (Rosenblueth et al., 1943, p. 15). Humans and machines are systems of self-referentiality and communication that generate and modify their behaviors in response to the outer world. For Wiener (1988), through the continuous modification of behavior in feedback systems, the “present is unlike its past and its future unlike its present” (p. 48).

Because Wiener emphasizes patterns of behavior, the modification of behavior becomes observable and measurable. Wiener believes that the world is made up of patterns. For Wiener (1988), the human body is “not stuff that abides but patterns that perpetuate themselves,” and the pattern is the message. The same holds for human-made machines. The physical identity of machines and living organisms is determined by patterns, which are constituted by “the order of elements of which it is made” rather than “by the intrinsic nature of these elements” (p. 3). In feedback systems, it is patterns of behavior that are transmitted and modified.

Conversely, a pattern is generated only through action and performance. The behavioristic perspective focuses only on visible, performed inputs and outputs. Cybernetic inquiry into bodies, human and machine alike, relies on this perspective: cyberneticians measure and analyze actions and reactions to find patterns. Internal intentions and thoughts matter less unless they are transmitted to others and become visible actions that can be scientifically and technically observed and analyzed. In the development of the anti-aircraft gun, 3 for example, engineers and mathematicians focused only on the behaviors of the gun(ner) and the (pilot of the) plane, not on their experiences, thoughts, or motivations. While internal intentions are unseen, unverifiable, and opaque, actions are measurable and predictable. Halpern (2015) explains that these behaviorist perspectives turn the world into one of black-boxed entities “whose behaviors or signals [are] intelligible to each other, but whose internal function or structure [is] opaque, and not of interest” (p. 44). Focusing on transmitting signals between different bodies means that the way elements are arranged matters more than their intentions, meanings, and contexts. The human mind is unknown to, or of no interest to, cybernetic systems. What matters is always action and the patterns created by successive performances.

Likewise, the science of cybernetics examines the behaviors of bodies. Behaviors are changes of an entity “with respect to its surrounding,” made to achieve or maintain the purpose of the system (Rosenblueth et al., 1943, p. 18). That purpose cannot be deduced from an entity’s internal intentions and properties but is “discernible only through analysis of that entity’s ongoing relationships with other entities and its environments” (Behrenshausen, 2016, p. 94). For Wiener, humans and machines can learn and reproduce themselves. Through automatic adjustment, they can modify their patterns of behavior and improve their performance through experience, acting more effectively or purposefully. In cybernetic systems, the modification of patterns matters most, not the materiality of the body or its internal properties.

In this action-oriented understanding, cyberneticians record and observe only actionable behaviors in order to find patterns and, ultimately, adjust behavior. Whereas the cybernetic synthesis of storage and processing understands memory technologically, here memory is understood behaviorally. Through the technological processing of stored data, memory comes to mean past patterns of behavior, which can be measured, analyzed, and later modified.

This cybernetic conception reduces memory practices to the repetition of observable patterns, without considering the contexts or internal meanings through which memory is constructed. In cybernetics, the memorable means the observable patterns of behavior that satisfy the purposes of the system. Sentimental connections and internal meanings are inessential or worthless in cybernetic behaviorism. Both humans and machines are pattern-generating devices that continuously examine previous patterns of behavior, recorded in the technical sense of memory, and modify those patterns to create better outcomes. Memory becomes the technical source of future action.

A Genealogy of Cybernetic Imaginations

This understanding, or imagination, of cybernetic memory circulated in the design of twentieth-century media, even though some of these ideas remained unrealized.

The basic idea of cybernetic memory as both storage and recollection is already suggested in Friedrich Kittler’s (1990) analysis of the transition from the “discourse network 1800” to the “discourse network 1900.” Kittler argues that this transition accounts for the obsession with recording and reproducing acoustic and optical data. In the “discourse network 1900” of recording apparatuses such as the phonograph and the camera, according to Kittler, memory is determined by the network of recording media. Human senses are exteriorized and stored in media such as photographs and sound recordings, becoming technically operable memories. Memory, now belonging to technical inscription, is transmitted and exchanged among media. When memory belongs to the “discourse network 1900” of storage apparatuses, it is the technical operation of stored information for future transmission and operation, without regard to human symbolic meaning (Kittler, 1999).

A more obvious connection appears in computing technologies. Since the mid-1900s, the technical understanding of cybernetic memory has paralleled the fundamental structure of computing technologies. John von Neumann, who took part in the Macy Conferences 4 alongside Norbert Wiener, developed the “von Neumann architecture,” which became the prototype of the first stored-program computer, the Electronic Discrete Variable Automatic Computer (EDVAC), the predecessor of all modern computing machines (Gehl, 2011, p. 1230). The architecture stores data and programs in a memory core and processes and executes them with a processor (Von Neumann, 1993). The processor took over the mathematical tasks that groups of human computers had performed since the 1700s and improved the speed and accuracy of processing. Processing was conditioned by the computer’s memory, which included short-term storage of equations and intermediate results and long-term storage of programs (Gehl, 2011, p. 1231). This synthesis of immediate and archival memory for processing and executing future actions was later realized as random access memory (RAM) and hard-drive storage.
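The stored-program principle, in which instructions and data share a single memory and a processor fetches and executes whatever is stored there, can be sketched as a tiny interpreter. The instruction set below (`LOAD`, `ADD`, `STORE`, `HALT`) is invented for illustration and is not EDVAC’s actual order code; the accumulator and program counter are generic textbook simplifications.

```python
def run(memory):
    """Minimal stored-program machine: instructions and data live in the
    same list, and the processor fetches each instruction from it."""
    acc = 0  # accumulator: the short-lived working value
    pc = 0   # program counter: address of the next instruction in memory
    while True:
        op, arg = memory[pc]  # fetch the stored instruction
        pc += 1
        if op == "LOAD":
            acc = memory[arg]
        elif op == "ADD":
            acc += memory[arg]
        elif op == "STORE":
            memory[arg] = acc  # write the result back into the same memory
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown instruction {op!r}")


program = [
    ("LOAD", 4),     # 0: acc <- memory[4]
    ("ADD", 5),      # 1: acc <- acc + memory[5]
    ("STORE", 6),    # 2: memory[6] <- acc
    ("HALT", None),  # 3: stop
    2, 3, 0,         # 4-6: data cells stored alongside the program
]
result = run(program)
print(result[6])  # 5
```

Because the program and its data occupy the same list, the value written to cell 6 is immediately available for further processing; storage and processing are not held apart.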

Moreover, the separation between transmission media and storage media, which was prominent in the nineteenth century, became obsolete after the computer revolution. In the technical synthesis of storage and processing, memory in computing refers to storage and regeneration at once. Stored information is continuously processed and comes into being anew. Following the cybernetic imagination, memory became a temporal matter of processing in the archive rather than a spatial matter of recording data in storage. Ernst (2013) argues that this temporal understanding of processual memory updates the traditional understanding of the “archive.” In his theory of the digital archive, computer memory is no longer read-only storage but a successively generated archive. Unlike nineteenth-century storage media that preserved time, the digital archive operates computer memory without an intrinsic macro-temporal index. Even though what appears on the screen seems permanent, the link locations of information change with every regeneration. Digital records are not simply stored but continuously regenerated through the “enduring ephemeral—a battle of diligence between the passing and the repetitive” (Chun, 2011), which seems analogous to the process of recollection in organic memory practices.

Around the same time, Vannevar Bush (1945), in his essay “As We May Think,” prophesied a new machine, the Memex. Bush imagined the Memex as the perfect memory machine, one that would give a user access to a total capture of information together with an automatic system for indexing it. The Memex would be a desk-like machine with two projectors (see Figures 2 and 3). Beyond the total database of information held in its massive storage, the projectors would allow users to compare and connect information, creating associative trails and personal annotations on documents. These associative trails and annotations reorganize the information so that it can be accessed easily in the future. As in the science of cybernetics, the design of associative trails derives from the neural structure of the human brain: it emulates the way brain cells carry an intricate web of trails that gives rise to the association of thoughts. The Memex’s indexing system associates discrete documents. Bush envisioned people regaining “the privilege of forgetting” through a technical supplement to memory that would preserve, manage, organize, and reactivate stored information for them (p. 121).

Figure 2. The design of the Memex (Bush, 1945, p. 123).

Figure 3. The Memex in use (Bush, 1945, p. 124).

The idea of the Memex is about not only storage but also the management and processing of the stored information. The role of associative trails is critical to managing the massive amounts of records. The records are not stored statically, but continuously reorganized for easier access and retrieval in the future. The Memex updates the cybernetic imagination and envisions the future of memory, which has permanent storage capacity with automatic and novel forms of indexing. As Halpern (2015) argues, the importance of the Memex is that “the machine would break the taxonomic and stable structure of the archive” and would work like human brains may think (p. 73).
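Bush’s associative trails can be approximated as named, ordered links between stored documents. The class below is a hypothetical sketch that borrows Bush’s vocabulary (trails, annotations) but assumes a simple Python data structure; Bush described an electromechanical, microfilm-based desk, not software, and the document contents here are placeholders.

```python
from collections import defaultdict


class Memex:
    """Toy associative store: documents plus user-built trails and notes."""

    def __init__(self):
        self.documents = {}                   # document id -> content (the store)
        self.trails = defaultdict(list)       # trail name -> ordered document ids
        self.annotations = defaultdict(list)  # document id -> the user's notes

    def add(self, doc_id, text):
        self.documents[doc_id] = text

    def link(self, trail, doc_id):
        """Append a stored document to a named trail as its next associative step."""
        if doc_id not in self.documents:
            raise KeyError(f"no stored document {doc_id!r}")
        self.trails[trail].append(doc_id)

    def annotate(self, doc_id, note):
        self.annotations[doc_id].append(note)

    def follow(self, trail):
        """Recall by association: replay a trail in the order the user built it."""
        return [(self.documents[d], self.annotations[d]) for d in self.trails[trail]]


desk = Memex()
desk.add("d1", "Encyclopedia entry on bows")
desk.add("d2", "History of a particular battle")
desk.add("d3", "Table of material elasticity")
for doc in ["d2", "d1", "d3"]:  # the trail's order follows the user's line of thought
    desk.link("archery", doc)
desk.annotate("d1", "compare with d3")
print(desk.follow("archery"))
```

Retrieval here runs along the path a user has built rather than along a fixed classification scheme, which is the break with the taxonomic archive that Halpern emphasizes.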

The emphasis on behavior also appears in some twentieth-century technological designs. As Bollmer (2018) argues, cybernetic behaviorism can also be found in the Turing test, which is often cited as a precursor of AI. In his essay “Computing Machinery and Intelligence,” the British mathematician and computer scientist Alan Turing proposes what became known as the Turing test by asking, “Can machines think?” He describes a game in which a human and a machine are hidden from a human evaluator in a separate room. The evaluator converses with both through a text-only channel and must judge which one is the machine. Turing (2004) argues that if a machine can imitate the way humans “think” and generate “human-like” responses, imaginable digital computers could do well in this imitation game.

Turing’s idea of “thinking” is, in fact, the generation of behavioral patterns. The Turing test emphasizes a performative and behavioristic identity that generates patterns that can be imitated. In this game, internal thought and the physical body matter less than performed behavior. When communication is the transmission of patterns, as Wiener argues, a smart machine can imitate intelligence by performing the way a real human might act. Following the cybernetic emphasis on behavior, the Turing test opens the possibility of communication between humans and machines “as if bodies [do] not matter” and only patterns of behavior do (Peters, 2012, p. 236).

In this behavioristic understanding, both humans and machines are acting machines that generate and modify patterns. Identity and message are behavioral and informational patterns that can be observed and can exist outside the body. Moreover, the regular patterns of bodies can even be divorced from the physical body and transmitted to a different body. Wiener (1988) claims that “telegraph[ing] the pattern of a man from one place to another” is a matter of “technical difficulties,” not of “any impossibility of the idea” (p. 110). These ideas fed a larger project of developing techniques and technologies for measuring, extracting, and transmitting the patterns of bodies. The body becomes inessential and can be replaced by any prosthesis, such as a robot or machine.

This idea has influenced science fiction film and television in Western culture. For example, the 1990 American film Total Recall depicts a company that can implant false memories into human brains, letting people have memories of experiences they never had. The 2004 film Eternal Sunshine of the Spotless Mind begins with a couple who have just had their memories of each other erased after breaking up. More recently, the British television series Black Mirror (2011–present) depicts several dystopian futures in which emerging technologies intervene in human practices of remembering through the ability to record, implant, transfer, and manipulate everything people see, do, and hear. The cybernetic understanding of the body as a prosthesis, merely one way of performing patterns, has shaped scientific and cultural imaginations of memory.

The concepts of cybernetics emphasize the analysis of the information-processing mechanisms that generate purposeful behaviors. In Wiener’s view of the subject, humans perform motor activities controlled by the internal information-processing mechanisms and feedback systems. Human brains, the core of the internal mechanisms, are viewed as complex communication and control systems that process and manipulate the transmitted information to maintain the stability of the systems, like an artificial control system.

Wiener’s structural analogy between human brains and automatic control systems has been widely used to theorize human behavior and to develop computing machines: programmable electronic devices that process numbers and words to automate complex processes. Since the twentieth century, a range of input-output devices with different information-conversion mechanisms has been developed, with each invention bringing faster processing speeds and larger memory capacities. Contemporary computing cultures reflect the cybernetic legacy, which highlights the information-processing and pattern-generating aspects of humans and machines. The cybernetic imagination has lingered in many twentieth-century technological designs, and memory has become a subject of considerable interest for scientists and engineers.

Algorithmic Uses of Cybernetic Memory: Feedback, Behavior, and Self-Learning Machines

This section situates the algorithmic rules of Google Photos within a genealogy of cybernetic memory. In the legacy of cybernetics, memory becomes a term interchangeable between humans and machines, as if computing machines simulated the way human brains remember. This conceptualization of memory, as the cybernetic archive and as behavioral patterns, circulates in the way Google Photos generates and understands “memory.” In what follows, I demonstrate the algorithmic uses of a cybernetic approach to memory, focusing on the technical rules of Google Photos.

Cybernetic Archives

The cybernetic emphasis on processual memory shifted the discourse on memory in communication technologies. The new cybernetic archives were envisioned as a synthesis of storage and processing: they process, execute, and modify documented information to create new actions and associations with the outer world. In the cybernetic imaginary, the acts of recording, storing, and recalling are synthesized by computational programming.

The application of cybernetic archives, the technical synthesis of storage and recollection, has spread to contemporary algorithmic machines that simulate neural organizational structures. In 2012, a group of Google scientists published the influential article “Building High-level Features Using Large-Scale Unsupervised Learning,” also known as the “cat paper.” The Google research team developed new approaches to large-scale machine learning that can distinguish objects in pictures. In the standard machine-learning approach, machines learn from “labeled data” (Dean, 2012): to train the machine, humans must collect tens of thousands of pictures already labeled with the names of the objects they contain. Because this approach is time-consuming, Google’s research on “self-taught learning” and “deep learning” proposed building artificial neural networks, an approach modeled on the neuronal learning processes of the human brain, 5 that might be able to learn from unlabeled data. Just as early computing machines imitated the dual architecture of short- and long-term memory, these algorithmic machines simulate the way human brains learn new information.

The Google research team developed a distributed computing infrastructure for training large-scale neural networks and connected 16,000 CPU cores in Google’s data centers (Dean, 2012). Their hypothesis was that the machine network, like a human neural network, “would learn to recognize common objects” in videos if it were exposed to YouTube videos for a week (Dean, 2012). Over 3 days, the neural network was exposed to 10 million randomly selected video thumbnails. As hypothesized, particular neurons in the network, a “cat neuron” and a “human body neuron,” responded to the corresponding stimuli in the test set: the network taught itself to recognize cat faces and human bodies against random backgrounds and other distractors. The results confirmed that the neural network learned not only the concept of faces but also the difference between cat faces and human faces, without being given labeled images. The system achieved 81.7% accuracy in detecting human faces, 76.7% in identifying human bodies, and 74.8% in classifying cat faces, and it almost doubled the accuracy of earlier approaches in recognizing objects from a list of 20,000 distinct items (Le et al., 2011).

The artificial neural network approach has been applied to a variety of Google’s intelligent products, including Google Photos, which Google introduced in 2015 as a new application of machine learning. At Google I/O 2015, Google Photos director Anil Sabharwal highlighted three major features: free, unlimited storage for automatic backup; an automatic photo-organization system; and an easier photo-sharing system. Digital copies of all photos on users’ devices are automatically stored in Google Photos, which offers unlimited storage for photos up to 16 megapixels and videos up to 1080p resolution. The stored photos from all devices synchronized with Google Photos are then automatically displayed on a single page and arranged in chronological order based on the metadata of the digital images. Users can re-view and search their photos by day, week, month, and year. Any selected photos can also be shared with others through email, text messages, and social media accounts.

The biggest innovation was in the organization system, which incorporated Google’s object-recognition algorithms and machine-learning system. Using Google’s artificial neural network, the application offers a private feature that automatically reorganizes and groups photos by people, places, and things without requiring users to label each photo. For example, if the user taps a face suggested in the application, all photos containing that face are displayed separately, from the most recent to the oldest. The names of places and things are automatically tagged in groups of photos based on the metadata of the digital images. In addition, users can label human faces with names. Once the Google Photos algorithm finishes detecting all faces in the stored images, the application sends a notification asking, “Which face is yours?” The notification shows several faces that frequently appear in the user’s photos and lets the user choose one face to “personalize Google Photos” and “help [the user’s] contacts’ Google Photos apps to recognize [the] face in photos.” Once the user identifies his or her own face, Google Photos begins tagging the user’s name on every photo in which the user appears and suggesting that the user’s contacts share any photos of the user with him or her.
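The grouping behavior described above, in which tapping a face shows every photo containing it from newest to oldest, can be illustrated with a minimal Python sketch. It assumes that some face detector has already assigned each photo a list of face-cluster IDs; the `Photo` record, its field names, and the cluster IDs are hypothetical and do not describe Google’s actual data model.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class Photo:
    # Hypothetical record: an ID, a capture time read from image metadata,
    # and the face-cluster IDs an (assumed) detector found in the photo.
    photo_id: str
    taken_at: datetime
    face_ids: list[str] = field(default_factory=list)


def group_by_face(photos: list[Photo]) -> dict[str, list[str]]:
    """Group photo IDs by face cluster, newest photo first within each group."""
    groups: dict[str, list[Photo]] = defaultdict(list)
    for photo in photos:
        for face_id in set(photo.face_ids):  # count each face once per photo
            groups[face_id].append(photo)
    return {
        face_id: [p.photo_id for p in sorted(members, key=lambda p: p.taken_at, reverse=True)]
        for face_id, members in groups.items()
    }
```

No labels are needed for the grouping itself: a user-supplied name would simply be attached to a cluster ID afterwards.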

By detecting the people, places, and things in photos, Google Photos generates metadata, which are operated on by the algorithmic rules of categorizing and organizing all photos for future retrievals. In Google Photos, the record and its recollection become more closely synonymous, as Wiener had dreamed for cybernetic memory machines.

Google Photos even concretizes and updates the designs of the Memex, following the legacy of cybernetics. Like the basic structures of cybernetic designs, Google’s machine-learning algorithms emulate the neural organizational structures and memory functions of the human brain. Google Photos develops novel forms of indexing systems that associate discrete photos. As Vannevar Bush had envisioned, humans can acquire the privilege of forgetting by relying on the machinic capacity to store and manage image-information. Once users select and inscribe the moments, acts of recalling become part of the algorithmic processes that store, organize, and retrieve them. Even without human-created labels, captions, or associative trails, the algorithms are able to generate novel forms of indexing to assemble and associate photos.

In Google Photos, there is no representational display of users’ pasts, only an algorithmic translation aimed at producing new associations and further interactions between users and Google’s algorithmic machines. Despite Google’s emphasis on unlimited storage capacity, the main goal of Google Photos is not to store and document the external world but to become a cybernetic archive that integrates storage and memory.

Feedback, Behavior, and Self-learning Machines

The role of feedback systems is critical to the proper functioning of cybernetic archives. For Wiener (1988), cybernetic archives can “modify their patterns of behavior on the basis of past experience” to act more effectively in future environments (p. 48). Memory becomes a source for the future actions of cybernetic archives, which operate within feedback cycles so that they can perform differently in response to the outer world. The goal of cybernetics is to build self-referential machines that “improve the strategy and tactics of their performance” by themselves (Wiener, 1988, p. 170).

Google Photos is a self-learning machine operating in feedback loops with human users. Even compared to the Memex’s associative trails, Google Photos’ indexing system is unique because it is automatically generated and maintained by algorithmic operations. The automatic organization system relies on Google’s algorithmic ability to learn and analyze patterns in photos and videos. Google Photos algorithms mathematically model photographs to analyze the patterns found in the images, together with the metadata embedded in them, such as time and location information, and automate the process of organizing images in feedback loops.

As the first Google Photos commercial (28 May 2015) describes it, Google Photos is meant to make “all your photos organized and easy to find” and to be “a home for all [the] moments in time that you never want to forget.” Over the last 4 years, Google algorithms have continuously updated their functions for producing memories out of stored moments. The process of suggesting memories has been automated by Google algorithms, which have taught themselves in feedback loops with users. In the first version of Google Photos in 2015, users could curate special photos through the Collage and Animation features, in addition to the auto-organization of photos by people, places, and things. The first Google Photos commercial describes these features as tools for turning “moments to memories” and “mak[ing] them come alive.” Later, these features became part of algorithmic operations that automatically detect, analyze, and learn the visible patterns of behavior found in images and videos.

In May 2016, Google introduced “smarter albums,” which are algorithmically generated albums for users’ travel photos. The algorithms assemble the best shots from a trip and generate a separate album for the photos and videos taken during the travel. The commercial for the smarter albums (22 May 2016) emphasized that Google Photos finds the best moments of the trip and automatically creates an album, shows highlights of the trip, and groups photos by the places visited. The algorithmic operations automatically generate memories of the travel, and the only thing users need to do is add captions describing the images.

The automatic album implies that the algorithms can identify not only information about photos but also information in photos. The technical rules of this algorithmic analysis are related to the cybernetic emphasis on behavioristic patterns in the feedback system. In Wired magazine’s interviews with two Google developers involved in the smart album project, Google Photos product manager Francois de Halleux explained that Google uses multiple layers of algorithms to create the automatic albums (Moynihan, 2016). After eliminating duplicate images, Google algorithms detect and evaluate the elements in a photo to decide its quality.

First, Google Photos identifies the time and location information of the photos. Google’s ability to track location and time information is no longer a surprise. Based on Google location services, Google Photos determines whether a user was traveling when a photo was taken. Google Photos product lead David Lieb explains that the distance between a user’s home and the location of the photos triggers the auto-creation of an album: when photos and videos are taken far away from a user’s home, Google assumes that the user is traveling. The system also pays attention to the number of photos the user has taken in a certain period. Google infers a trip when the user takes more photos and videos than usual at a specific location or within a specific time frame. In particular, if the photos are taken around national holidays, college breaks, or other significant days registered in Google, the chance of an automatic album being created increases.
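The three travel signals Lieb describes (distance from home, an unusually high number of photos, and proximity to a holiday) can be combined in a small scoring sketch. Everything specific here is an assumption for illustration: the 100 km cut-off, the three-day holiday window, and the rule of adding one point per signal are hypothetical, not Google’s published logic. The haversine formula is simply a standard way to measure the great-circle distance between two latitude/longitude points.

```python
import math
from datetime import date

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = math.radians(lat2 - lat1)
    d_lambda = math.radians(lon2 - lon1)
    a = math.sin(d_phi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def trip_score(
    home: tuple[float, float],
    photo_location: tuple[float, float],
    photos_that_day: int,
    usual_photos_per_day: float,
    day: date,
    holidays: list[date],
    min_distance_km: float = 100.0,  # hypothetical "far from home" cut-off
    holiday_window_days: int = 3,  # hypothetical closeness to a holiday
) -> int:
    """Count how many of the three travel signals described in the text are present."""
    score = 0
    if haversine_km(*home, *photo_location) >= min_distance_km:
        score += 1  # photo taken far from the user's home
    if photos_that_day > usual_photos_per_day:
        score += 1  # user took more photos than usual
    if any(abs((day - h).days) <= holiday_window_days for h in holidays):
        score += 1  # photo taken near a holiday or break
    return score
```

A photo taken in Paris by a New York-based user on 26 December, on a day with 40 photos against a usual 5, scores 3 here; how a score would map to creating an album is deliberately left open.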

Then, Google Photos detects additional information in the photos. If the algorithms detect a geographical landmark in a photo, the photo can be added to the smart album. To detect national and international landmarks, Google Photos uses a combination of location services, geotags, and machine learning, according to the Wired magazine interviews. Even without the assistance of location services, as of 2016, Google Photos algorithms could automatically identify 255,000 landmarks through a machine-learning system that has taught itself to detect the patterns of landmarks. Geotags and location information attached to users’ photos supplement this machine-learning identification to minimize possible misrecognition.

The most remarkable feature of Google Photos is face detection. In addition to the elements indicating the location of photos, Google detects human faces in photos. Google has been devoted to developing automatic face detection techniques since the early 2000s. Google purchased the face detection software company PittPatt in 2011 and a Ukrainian facial recognition company in 2012 to integrate face detection and recognition services into its AI products. In one of the studies on face detection and recognition published in the Google AI research database, Shah and Kwatra (2012) propose automatic photo enhancement through facial expression analysis. Their facial analysis aims at full automation: it organizes images, evaluates the “goodness” of a face by classifying facial attributes, selects the high-scoring photos, and replaces “inferior” faces with “superior” faces from other photos without any user input (p. 1).

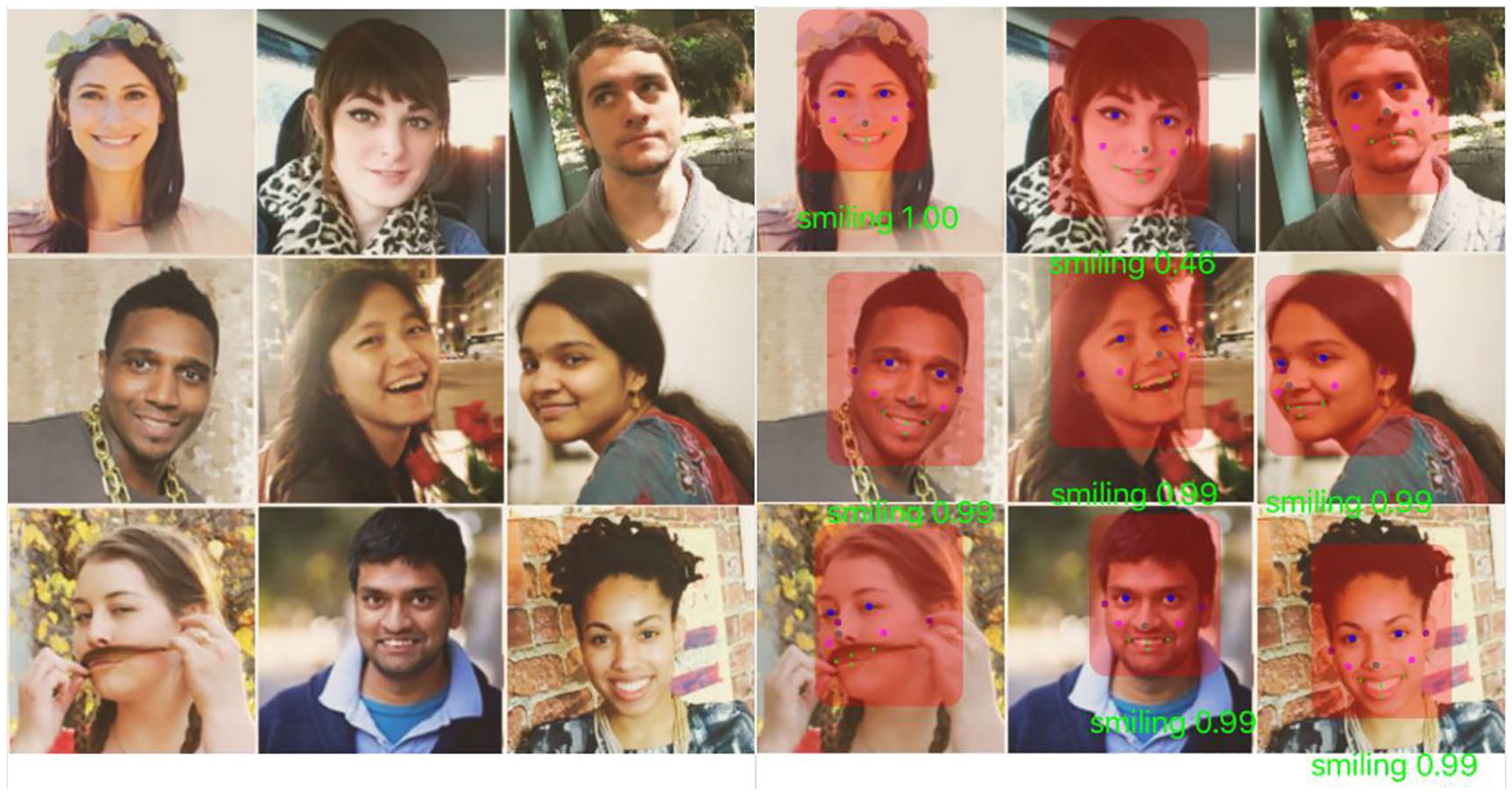

Google Photos adapts most of the techniques suggested in this research to create the smart album. According to two Google Photos developers interviewed by Wired magazine, Google algorithms look for the faces of people who frequently appear in users’ previous photos. The algorithms also detect the facial expressions of these faces. Photos in which everyone has their eyes open and is smiling are selected as the best photos for the smart album.
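Looking for “faces of people that frequently appear” can be pictured as a frequency count over earlier photos. The sketch below assumes each earlier photo has already been reduced to a list of face-cluster IDs (hypothetical input), and the minimum count of 3 is an arbitrary illustrative value.

```python
from collections import Counter


def frequent_faces(history: list[list[str]], min_count: int = 3) -> set[str]:
    """Return face-cluster IDs that appear in at least `min_count` earlier photos.

    `history` holds one list of face-cluster IDs per photo (hypothetical input).
    """
    # set(...) so a face detected twice in one photo counts once for that photo
    counts = Counter(face for photo_faces in history for face in set(photo_faces))
    return {face for face, n in counts.items() if n >= min_count}
```

Counting per photo rather than per detection keeps a single group shot with repeated detections from inflating one person’s importance.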

The detection of facial features and expressions relies on the mathematical modeling of human faces and actions. In the Google Developers “Face Detection Concepts Overview” (2016), Google introduces its “face detection” technique as “the process of automatically locating human faces” in digital images or video. Google defines the following four terms used in discussing face detection techniques:

Face recognition automatically determines whether two faces are likely to correspond to the same person. Note that at this time, Google’s face application programming interface (API) only provides functionality for face detection and not face recognition.

Face tracking extends face detection to video sequences. Any face appearing in a video for any length of time can be tracked. That is, faces that are detected in consecutive video frames can be identified as being the same person. Note that this is not a form of face recognition; this mechanism just makes inferences based on the position and motion of the face(s) in a video sequence.

A landmark is a point of interest within a face. The left eye, right eye, and nose base are all examples of landmarks. The Face API provides the ability to find landmarks on a detected face.

Classification is determining whether a certain facial characteristic is present. For example, a face can be classified with regards to whether its eyes are open or closed. Another example is whether the face is smiling or not. (“Face Detection Concepts,” Google Developers, 2016, emphasis in original)

These four concepts show how Google Photos mathematically models human faces in photos to identify, measure, analyze, and classify facial traits.
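The definition of face tracking quoted above, which links faces across consecutive frames purely by “position and motion” without recognizing who they are, can be made concrete with a common technique: matching bounding boxes by intersection-over-union (IoU). This is a generic sketch, not the Face API’s implementation; the box format and the 0.3 overlap threshold are assumptions.

```python
from itertools import count

Box = tuple[float, float, float, float]  # (left, top, right, bottom), an assumed format


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def track_faces(frames: list[list[Box]], min_iou: float = 0.3) -> list[list[int]]:
    """Give each detected face box a track ID, reusing IDs by overlap with the previous frame.

    Only positions are compared, so this links boxes over time without knowing identity.
    """
    next_id = count()
    previous: list[tuple[int, Box]] = []
    result: list[list[int]] = []
    for boxes in frames:
        current: list[tuple[int, Box]] = []
        unused = list(previous)
        for box in boxes:
            # Greedily pick the unmatched box from the previous frame that overlaps most.
            best = max(unused, key=lambda t: iou(t[1], box), default=None)
            if best is not None and iou(best[1], box) >= min_iou:
                track_id = best[0]
                unused.remove(best)
            else:
                track_id = next(next_id)  # no good overlap: start a new track
            current.append((track_id, box))
        result.append([tid for tid, _ in current])
        previous = current
    return result
```

A face that leaves the frame and later returns receives a new ID, which mirrors the quoted caveat that tracking “is not a form of face recognition.”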

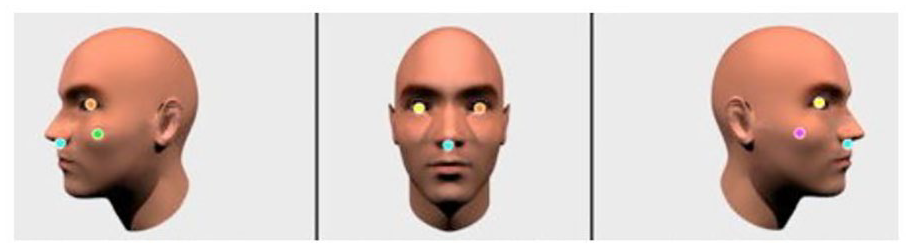

The algorithms detect all the faces in given images at a range of angles by measuring the Euler angles of the face and estimating the pose angle to characterize the face’s orientation (see Figure 4). In addition, the algorithms extract the “landmarks” of the human face, such as the eyes, nose, and mouth, which are associated with the face’s pose angle (see Figure 5). Additional landmarks can be detected to identify facial features and expressions, such as the two eye centers, the nose tip, the nose root, four eyebrow corners, and four lip corners. These technical features encode the local shape and spatial layout of human facial landmarks. For smile detection, the algorithms extract features from the mouth region and from the cheek and eye muscles, then calculate orientation angles between facial landmarks (see Figure 6). These features also encode the average intensity difference and spatial color distribution around the eyes and the mouth based on the “difference in teeth/lips color and iris/skin color respectively” (Shah & Kwatra, 2012, p. 4).

Pose angle estimation in Google Face Detection (“Face Detection Concepts Overview,” 2016).

Landmarks of human face in Google Face Detection (“Face Detection Concepts Overview,” 2016).

Additional landmarks analysis of human face (“Face Detection Concepts Overview,” 2019).
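Two of the feature types mentioned above, orientation angles between landmarks and average intensity differences between facial regions, reduce to simple arithmetic. The sketch below is a generic illustration under stated assumptions (landmarks as pixel coordinates with y growing downward, regions as lists of grey-level rows); it is not the feature definition used by Shah and Kwatra or by Google.

```python
import math
from statistics import fmean

Point = tuple[float, float]  # (x, y) in image pixels; y grows downward


def landmark_angle(p: Point, q: Point) -> float:
    """Angle in degrees of the line from landmark p to landmark q, relative to the x-axis.

    Because image y grows downward, a positive angle means q sits lower than p.
    """
    return math.degrees(math.atan2(q[1] - p[1], q[0] - p[0]))


def mean_intensity_difference(region_a: list[list[float]], region_b: list[list[float]]) -> float:
    """Difference between the average grey levels of two pixel regions (lists of rows)."""
    mean_a = fmean(v for row in region_a for v in row)
    mean_b = fmean(v for row in region_b for v in row)
    return mean_a - mean_b
```

For example, the angle between the two eye centers approximates head tilt, and the intensity difference between a lip region and a region of visible teeth is one crude cue for an open smile.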

Then, the algorithms combine all the measurements and produce a certainty value, which indicates the probability that facial attributes, such as smiling/not smiling and open/closed eyes, are present in the photo. When the score is higher than .7, the photo is judged “good” enough to be added to the smart album, in contrast to an “inferior” photo that does not measure up to the qualifying patterns (see Figure 7). The higher scores ensure that the photos evoke positive emotions in users and are of high image quality. Photos of frontal faces with open eyes and smiles become algorithmically chosen and processed memories for users’ future retrieval.

The smiling and eyes open classification in Google Face Detection (Detect Facial Features in Photos—Mobile Vision, 2017).
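The selection rule described above (keep a photo only when every detected face scores above .7 for smiling and for open eyes) can be written as a short filter. The .7 cut-off comes from the text; the `FaceScores` record, the choice to require both eyes separately, and the decision to reject photos with no detected faces are assumptions made for this sketch.

```python
from dataclasses import dataclass

THRESHOLD = 0.7  # cut-off reported in the text


@dataclass
class FaceScores:
    # Hypothetical per-face probabilities produced by a classifier.
    smiling: float
    left_eye_open: float
    right_eye_open: float


def is_good_photo(faces: list[FaceScores], threshold: float = THRESHOLD) -> bool:
    """True if every face is smiling with both eyes open, each probability above threshold."""
    if not faces:
        return False  # assumption: photos without detected faces are not scored here
    return all(min(f.smiling, f.left_eye_open, f.right_eye_open) > threshold for f in faces)


def select_for_album(photos: dict[str, list[FaceScores]]) -> list[str]:
    """Keep the IDs of photos that pass the check, in their original order."""
    return [photo_id for photo_id, faces in photos.items() if is_good_photo(faces)]
```

Because the rule uses the minimum over all faces, a single closed eye anywhere in a group shot is enough to exclude the photo.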

Like the cybernetic emphasis on patterns of behavior, the algorithmic photo album follows the behaviorist tradition by documenting, measuring, and classifying facial movements. The algorithms attribute pixels to the “landmarks” of human faces to register facial reactions and emotional behaviors. Then, the algorithms track the facial muscles and movements around the eyes, nose, and mouth. Without considering the socio-cultural context, the internal intention, or the type of body related to the facial expressions, automated facial expression recognition only measures the visible patterns of human facial movement, relying on a behavioristic understanding of human emotions.

The legacy of cybernetics can be found here, in the way the algorithms of Google Photos learn, analyze, and modify observable and measurable patterns. Because the science of cybernetics focuses on transmission between different bodies, behavioral patterns have taken on greater significance in it than the physical body or internal thoughts. Following behaviorism, cyberneticians observe, document, and analyze the visible inputs and outputs that generate modifiable patterns in feedback loops. Regardless of the physical body and internal intentions, what matters is what is visible and transferable.

Algorithmic media suggesting “memory,” such as Google Photos, become cybernetic self-learning machines that detect, document, analyze, and predict the behaviors and patterns of human users to suggest more proper and purposeful memories to them. In the genealogy of cybernetics, human users become acting machines that generate patterns of actions. Algorithmic memory practices make human emotions and memories part of the observable actions that can be mathematically modeled, measured, and analyzed in cybernetic systems.

In the algorithmic use of cybernetic memory discussed throughout this section, the algorithmic archives determine what is memorable and what is forgettable. Algorithmic media are directly involved in acts of remembering, suggesting what, when, and how to remember what we have stored on the basis of algorithmic selection and organization. As Hoskins (2009) argues, in the digital media ecology, remembering is networked, and memory is characterized by its mediatized emergence in everyday digital media. Memory practices are socio-technical: they include not only our acts of recalling but also our engagements with digital media and living archival memory (Hoskins, 2009, p. 92).

In our socio-technical practices with Google Photos, algorithmic media consistently and regularly call on us to re-view the “memories” they have generated. “Memory” comes to mean standardized digital objects created outside human bodies, which need not match what human users actually remember. These new digital objects result from standardized reproduction processes operated by cybernetic archives that identify, classify, and reassemble recognized patterns. These processes aim to create cybernetic systems between algorithmic media and nostalgic subjects, who constantly revisit the past through technologies for automated memory practices (Lee, 2019). When we become nostalgic subjects by engaging with algorithmically created digital objects called “memories,” algorithmic operations circulate the quantified pattern as memory and promote new modes of memory practice that manage how we engage with our pasts.

Conclusion

This article explores the legacy of cybernetics in algorithmic memory practices to demonstrate how contemporary socio-technical memory practices build on and modify the cybernetic heritage in the design of machines, computing, and communication. The theories of cybernetics understand memory technically and behaviorally, which makes memory an interchangeable feature of humans and machines. When both humans and machines are information-processing and pattern-generating devices, memory can be measured, produced, and evaluated by the technical operations of cybernetic archives that analyze patterns of behavior. In Wiener’s theories of cybernetics, which emphasize the efficiency of information transmission between technical systems and living organisms, our memory practices become efficient through the technical synthesis of memory and recollection.

In Google Photos, algorithmic processing is integrated into digital storage to create cybernetic archives in which the acts of recording, storing, and recalling are synthesized. The algorithmic processing of digital storage gives concrete shape to Wiener’s ambitions. The newness of algorithmic media suggesting “memory,” such as Google Photos, lies in their algorithmic management of stored data. Our memory practices engage not only with what users have stored on Google Drive but also with novel rules of automatic curation. Even if our digital practices readily configure the stored contents, we have been drawn into a system in which the algorithmic medium and the human user communicate with each other and become an integrated cybernetic system, regardless of the inner thoughts, purposes, and contexts of actual memory practices. Building on the cybernetic imagination, algorithmic media are becoming sophisticated forms of archives that can store, classify, analyze, and reanimate our memory.

This analysis does not mean that algorithmic curation of photos is the only form of contemporary memory practice. It might also be too soon to argue that ways of remembering are being transformed in response to algorithmically suggested “memory.” However, when we give up responsibility for carefully curating our digital memories and fail to critically examine our reactions to the algorithmic production of “memory,” this form might become a naturalized memory practice through algorithmic logics. Increasingly, it shapes and influences our actions, our memories, and our acts of remembering and forgetting by algorithmizing the procedures of memory practice.

The genealogical exploration encourages us to examine how we move forward with contemporary socio-technical imaginations. As cybernetic archives, algorithmic media such as Google Photos are still involved in documenting, measuring, analyzing, and predicting our actions. In endless feedback loops, the algorithms improve the strategy and tactics of their performance for a “better” outcome. Our acts of inscribing, storing, and recalling are endless sources of algorithmic prediction. While the cybernetic fantasy has defined present understandings of memory within applications such as Google Photos, the technical legacies of algorithmic media have now become the future imagination of memory, desire, and the production of subjects. Memory might mean something different in the future.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.