Abstract

During the COVID-19 pandemic, many qualitative researchers were forced to alter their data collection methods as traditional face-to-face interviews and focus groups were prohibited by social distancing requirements. While the shift to remote and digital platforms has undoubtedly provided numerous benefits, such as more flexibility and reach, it has also introduced new challenges, particularly the risk of imposter participants, or dishonest or false participants who fabricate their identities or exaggerate their experiences to join a study. Through reflection on two case studies, we identified several red flags, which we categorized according to phases of the research process—recruitment and data collection. Based on the red flags, we provide methods and recommendations for detecting and preventing imposter participants from impacting the validity and trustworthiness of qualitative research. Researchers must routinely implement these recommendations for qualitative research as technology becomes a more attractive avenue for recruiting potential participants, particularly participants belonging to populations often described as “hard to reach.” However, these problems are structural and require institutional attention. We, therefore, pose recommendations for academia, institutional review boards, publishers, and reviewers of qualitative research.

Introduction

Readers of this journal know that qualitative research has great value for analyzing participants’ experiences, perceptions, and behaviors to provide deeper insights into real-world problems, such as health inequity (Moser & Korstjens, 2017). Traditionally, qualitative research standards promote in-person data collection methods, such as face-to-face interviews, focus groups, and ethnographic observations (Tenny et al., 2024). Fieldwork is often essential for researchers to engage with participants in various contexts, ranging from their homes to public spaces, allowing them to immerse themselves in the environments of the participants (Sutton & Austin, 2015). However, the COVID-19 pandemic significantly altered qualitative data collection methods as face-to-face interviews and focus groups became nearly impossible due to lockdowns and social distancing requirements (Tremblay et al., 2021). As a result, researchers quickly adapted to new circumstances, with one notable shift being the transition to remote and digital methods for data collection (Keen et al., 2022). Platforms like Zoom, Google Meet, and Skype became the new norm for conducting virtual interviews and focus groups (Keen et al., 2022). This shift gave researchers and participants greater flexibility and the ability to reach participants globally without the need for travel or concerns about meeting social distancing requirements (Poliandri et al., 2023). Other advantages of the shift to virtual platforms included cost-effectiveness, time savings, and increased access to a more diverse range of study participants (Keen et al., 2022). While the shift to remote and digital platforms has undoubtedly provided numerous benefits, it has also introduced new challenges, particularly the risk of imposter participants.

The term “imposter participants,” first coined by Chandler and Paolacci (2017) in the context of online survey studies, has also been used in qualitative research and refers to “dishonest, fraudulent, fake, or false participants” who fabricate their identities or exaggerate their experiences to join a study (Chandler & Paolacci, 2017; Roehl & Harland, 2022). The phenomenon of imposter participants has become increasingly prevalent with virtual data collection methods. In one pilot study, 31 of 37 healthcare researchers (or 84%) surveyed reported encounters with imposter participants in their studies (Kumarasamy et al., 2024). In addition, Mistry et al. (2024) conducted a literature review that identified the low but growing prevalence of this issue across qualitative health research disciplines. These participants pose a significant threat to qualitative research by undermining the validity and reliability of the data (Roehl & Harland, 2022).

While we are not the first to encounter imposter participants (see Mistry et al., 2024), we anticipate that this problem will continue to become more frequent. In the absence of resources on how to prevent and deter imposter participants from entering studies, we argue that researchers should be proactive about ensuring the validity of qualitative research. Therefore, we share our experiences and lessons learned from two case studies involving imposter participants so that future researchers conducting virtual interviews or focus groups can identify potential imposter participants before they join the study. This paper also builds on the growing literature by (a) providing recommendations for preventing imposter participants from impacting active research and (b) highlighting potential practices for academia, institutional review boards (IRBs), publishers, and reviewers. We also interpret our experiences and recommendations in the context of health equity and early career challenges, given our perspectives and research agendas.

Case Studies of Imposter Participants

First, we provide an overview of two qualitative case studies involving imposter participants. The first case study was conducted by a postdoctoral research fellow (IRB# STUDY 00021915) while the second was conducted by a doctoral candidate as part of their dissertation (IRB# STUDY 00020313). Both studies focused on recruiting racially minoritized perspectives. Specifically, we exclusively recruited Black study participants.

Case Study 1: Building Narrative Practice to De-Stigmatize Mental Illness Among Black Youth in Minneapolis

The first case study is a multiple-phase project on local news narratives surrounding Black youth and mental health in Minneapolis, Minnesota. The first stage was a content analysis of local Minneapolis news, to assess how the local news media presented stories about Black youth and mental health issues. Next, the research team followed the content analysis with four focus group discussions (FGDs) with youth (age 13–19) who identified as Black and were currently living in Minneapolis (

Case Study 2: Postpartum Experiences Among Black Birthing People Who Had a Stillbirth: A Qualitative Study

The purpose of this study was to use FGDs to gain an in-depth qualitative understanding of social support, grief, and quality of care among Black birthing people who experienced a stillbirth. Study participants were primarily recruited through national stillbirth-related non-profit organizations. A recruitment flyer was emailed to these organizations with a request to share it on their social media platforms (e.g., Facebook and Instagram), websites, or via listservs. Interested and eligible participants were invited to a 90–120-minute FGD. The flyer noted that participants would receive a $75 electronic gift card via email as a token of appreciation for their time. Potential participants were directed to email the principal investigator

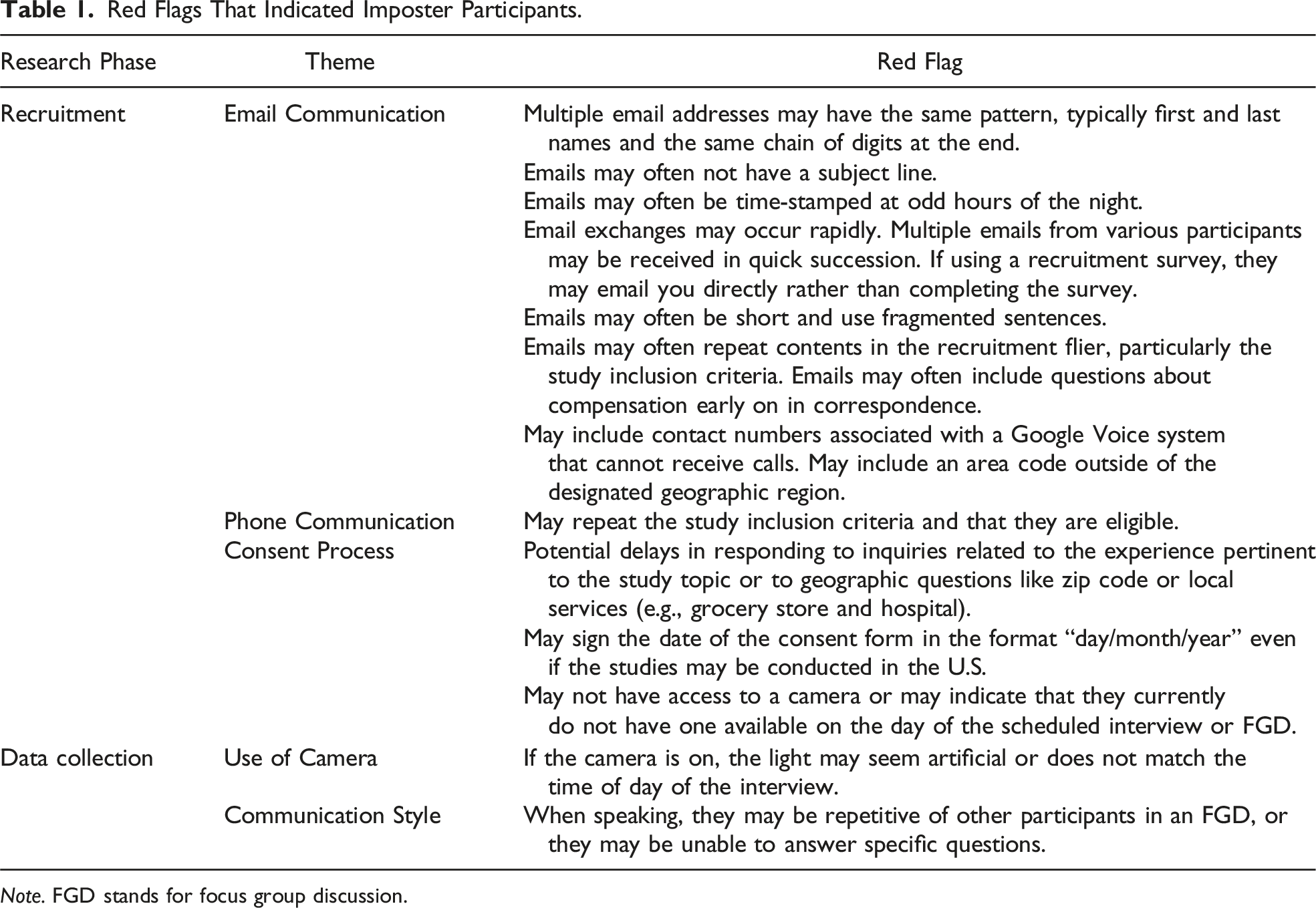

Red Flags That Indicated Imposter Participants

Recruitment: Email Communication

Whether through direct email communication (Case Study 2) or email addresses entered in an online survey (Case Study 1), the PIs noted consistent patterns in the email addresses that potential participants used as contact information. These addresses generally followed the same schema: first name, last name, followed by a series of numbers (Mistry et al., 2024; Ridge et al., 2023). Additionally, addresses from several individuals often paired a different first and last name with the same sequence of numbers. These suspicious emails often lacked a subject line and were time-stamped during regular sleeping hours for the geographic location (e.g., between 12 a.m. and 5 a.m.) (Ridge et al., 2023; Sharma et al., 2024). Within a short window of advertisement, there would frequently be a surge of emails from these suspected imposter participants (Mistry et al., 2024; Ridge et al., 2023). In Case Study 1, we experienced an influx of over 700 submissions within 1 week of posting the eligibility survey.
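For research teams facing a large volume of inquiries, the email red flags described above could be screened with a short script before manual review. The following Python sketch is illustrative only and is not the procedure used in either case study; the function names, the regular expression, and the default overnight window (12 a.m. to 5 a.m.) are assumptions chosen for the example. It groups addresses that share the same trailing number sequence and checks whether a message was time-stamped overnight.

```python
import re
from collections import defaultdict
from datetime import datetime

# Assumed schema: a name made of letters, dots, or underscores,
# followed by a trailing run of two or more digits.
NAME_THEN_DIGITS = re.compile(r"^([a-z._]+)(\d{2,})$")


def shared_digit_suffixes(emails):
    """Group addresses by trailing digit sequence.

    Returns only the digit sequences that appear in more than one
    distinct address, mapped to a sorted list of those addresses.
    """
    groups = defaultdict(set)
    for email in emails:
        local_part = email.split("@", 1)[0].lower()
        match = NAME_THEN_DIGITS.match(local_part)
        if match:
            digits = match.group(2)
            groups[digits].add(email.lower())
    return {
        digits: sorted(addresses)
        for digits, addresses in groups.items()
        if len(addresses) > 1
    }


def sent_overnight(timestamp, start_hour=0, end_hour=5):
    """Return True when the hour falls in [start_hour, end_hour).

    The timestamp is assumed to be already expressed in the local
    time of the study's geographic area.
    """
    return start_hour <= timestamp.hour < end_hour
```

A match on either check is best treated as a prompt for closer review rather than grounds for exclusion, since legitimate participants may also use numbered addresses or write late at night.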

Those suspected to be imposter participants typically responded to email inquiries within minutes but rarely completed the necessary intake survey. In terms of email content, imposter participants often sent short messages with fragmented sentences, frequently repeating information from the recruitment flyer (e.g., inclusion criteria) (Ridge et al., 2023; Santinele Martino et al., 2024). Another red flag was an almost immediate inquiry about compensation rather than the subject of the FGDs (Ridge et al., 2023). This communication style was particularly curious for Case Study 2 because of the sensitivity of the topic being discussed (i.e., pregnancy loss).

Recruitment: Telephone Communication

In addition to email communication, imposter participants would often use a contact number set up through a telecommunication service such as Google Voice (Roehl & Harland, 2022). Additionally, contact numbers frequently had an area code outside of the designated geographic region for the study, as was the case for Case Study 1. Imposter participants rarely answered phone calls: when the researcher attempted to reach them by phone, the phone either did not ring, rang only once, or offered no option to leave a voicemail.
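The area code check described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: phone numbers are U.S. numbers of 10 digits (or 11 with a leading 1), the function names are hypothetical, and the set of expected area codes is supplied by the research team for its own study region.

```python
import re


def area_code(phone):
    """Extract the three-digit area code from a U.S. phone number.

    Returns None when the number does not contain 10 digits
    (or 11 digits with a leading country code of 1).
    """
    digits = re.sub(r"\D", "", phone)  # drop spaces, dashes, parentheses
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    return digits[:3] if len(digits) == 10 else None


def outside_study_area(phone, expected_codes):
    """Return True when the area code is missing or not in expected_codes."""
    code = area_code(phone)
    return code is None or code not in expected_codes
```

For example, `outside_study_area("+1 212-555-0199", {"612"})` returns `True`. Because people often keep a mobile number after moving, a mismatch is a reason to ask a follow-up question, not proof that a participant is an imposter.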

Recruitment: Consent Process

If the imposter participants answered their phone or email during the initial recruitment process, the researchers of both case studies identified additional red flags. During the consent process, imposter participants would often repeat the study inclusion criteria to confirm their eligibility. There was often a noticeable lag in responses, particularly when it came to inquiries specific to the study topic (e.g., zip code and local services) (Ridge et al., 2023; Santinele Martino et al., 2024). Additionally, when signing the consent form, the date was often presented in the “day/month/year format,” even though the studies were conducted in the United States

Data Collection: Use of Camera and Communication

Since the PIs of both case studies identified the aforementioned red flags, only a few participants were found to be imposters during the data collection phase (Case Study 1

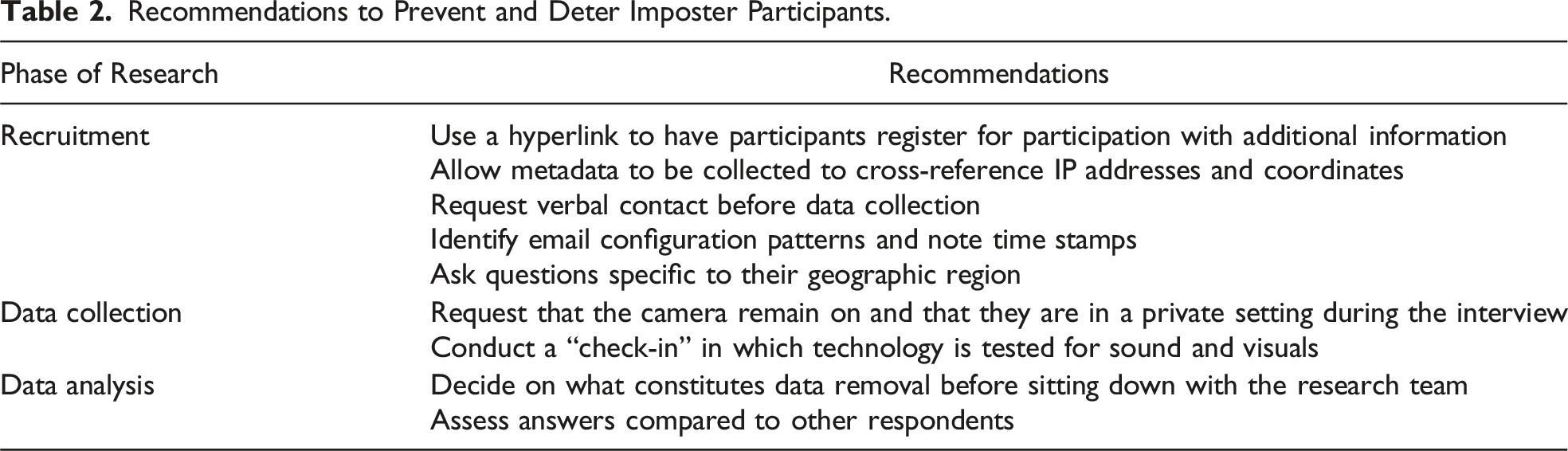

Lessons Learned and Recommendations

Recommendations to Prevent and Deter Imposter Participants.

Recruitment

The first sign that alerted us that imposter participants were attempting to join our studies was the sharp increase in emails expressing interest in our studies. Including a distinct recruitment step that asks participants to complete an eligibility survey may be an effective barrier to deter and identify imposter participants (Drysdale et al., 2023; Sharma et al., 2024). An online eligibility survey can adopt approaches from quantitative scholars who have been concerned with fraudulent survey participation (Lawlor et al., 2021; Levi et al., 2022). For example, including a CAPTCHA to identify bots may be useful. Some online survey software (e.g., Qualtrics) also allows the survey host to collect metadata. Metadata is often used as a tool for database management (Ulrich et al., 2022); here, we use the term to describe information collected in a survey that is not directly asked. Survey software, like Qualtrics, collects this data automatically and passively, so it requires no extra time from the researcher to program the survey or from the participant to answer questions. Researchers have used metadata to identify time spent on surveys to ensure participants were not completing surveys too quickly, which may indicate a lack of attention, or too slowly, which may indicate participants did not complete the study in one sitting. As a tool for identifying imposter participants, metadata may also include Internet Protocol (IP) addresses and the coordinates of participants (Drysdale et al., 2023). Paired with direct questions that collect contact information (i.e., email address and phone number), metadata can confirm that information, like area codes, matches the geographic area where participants claim to reside. Metadata also allows researchers to identify multiple names and email addresses submitted from the same IP address with back-to-back time stamps (Ridge et al., 2023). This cross-referencing strategy may be time-consuming; however, for studies that experience response surges, it can be worthwhile: in Case Study 1, it was responsible for identifying and removing most of the imposter participants.
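Part of this cross-referencing can be automated. The Python sketch below is one possible approach, not the procedure used in Case Study 1. It assumes that survey responses have been exported as a list of records with the hypothetical fields `ip`, `email`, and `submitted_at`; these names do not correspond to the export schema of any particular survey platform. The 10-minute window is likewise an arbitrary illustrative threshold. The function flags IP addresses from which different email addresses were submitted in quick succession.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def flag_back_to_back(submissions, window=timedelta(minutes=10)):
    """Find IP addresses with different emails submitted close together.

    Each submission is a dict with the (hypothetical) keys
    "ip", "email", and "submitted_at" (a datetime).
    Returns a dict mapping each flagged IP to a sorted list of emails.
    """
    by_ip = defaultdict(list)
    for row in submissions:
        by_ip[row["ip"]].append(row)

    flagged = {}
    for ip, rows in by_ip.items():
        rows.sort(key=lambda r: r["submitted_at"])
        emails = set()
        # Compare each submission with the next one from the same IP.
        for earlier, later in zip(rows, rows[1:]):
            gap = later["submitted_at"] - earlier["submitted_at"]
            if gap <= window and earlier["email"] != later["email"]:
                emails.update((earlier["email"], later["email"]))
        if emails:
            flagged[ip] = sorted(emails)
    return flagged
```

Shared IP addresses can be legitimate (e.g., members of one household, or users of a library or campus network), so flagged records are candidates for the follow-up screening steps rather than automatic removals.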

A second step in the recruitment process can include requiring verbal contact with potential participants via phone or Zoom (Ridge et al., 2023; Santinele Martino et al., 2024). If verbal contact is done via phone, researchers should prepare questions that confirm eligibility by matching responses with those reported on the online survey. Additional questions should be asked that go into detail relevant to the study. For example, the research team for Case Study 2 asked potential participants to state the hospital where they received prenatal care. While this is an opportunity to note awkward pauses that may indicate the individual is looking up the information (Ridge et al., 2023), researchers should also check the validity of answers. In Case Study 2, one participant reported a hospital that did not have a prenatal care unit. Additional geographic questions like “What county do you live in?” or “What is your area code?” can further allow researchers to identify potential imposter participants. If the verbal contact step occurs via Zoom, researchers should request that the camera be on so that they can assess whether the time of day outside matches the participant’s stated geographic region.

Data Collection

While these safeguards on recruitment should allow researchers to identify most of the potential imposter participants, additional steps during the data collection process can reaffirm participant validity for studies with no in-person component. Additional safeguards may also be important for larger teams where the same person is not conducting all the screening. In addition to making it clear that cameras must remain on during the online interview or focus group (Ridge et al., 2023; Santinele Martino et al., 2024), participants should be made aware that there will be a technology check to confirm that video and audio are working effectively. For those who fail the check, the researcher can ask to reschedule. This may be followed by an email stating that the study is no longer recruiting participants, to finally “weed out” these imposter participants from the study.

Data Analysis

Finally, some participants may make it through all the previously mentioned safeguards and fully participate in the study. These participants should still be compensated for their time if the study includes an incentive. However, researchers may remain uncertain of whether these participants are valid. During the data analysis phase, the research team should set a protocol to discuss these potential imposter participants. While reviewing any previous checks that may be questionable (e.g., a slight pause before answering questions and not having a camera that worked initially), researchers should also compare these participants’ answers to those of other respondents to identify whether (a) their answers lack reasonable detail, (b) the details they give are inconsistent within a single interview, or (c) they simply repeat what others have said within a focus group. This strategy may need to be balanced with bias assessments to be certain that researchers are making data removal choices based on imposter red flags and not personal bias toward a certain group of people whose voices may be silenced because of imposter participant concerns (Mistry et al., 2024). Of course, some participants’ validity may remain uncertain to the research team, but the protocol should clearly state whether any uncertainty results in the removal of data or whether some data will still be analyzed. These measures should be included in the final manuscript, and the existence of questionable participants should be mentioned in the limitations section. Although the authors do not think these recommendations will be foolproof in preventing imposter participants, qualitative researchers conducting online studies must take these safeguards into account when designing their studies, as they are imperative to affirming validity.

Other Considerations: Academia, Institutional Review Boards, Publishers, and Reviewers

While the recommendations above focus on how researchers can prevent and detect imposter participants from affecting the quality of data, the responsibility to address imposter participants should not solely rely on the researchers themselves (which is particularly important for early career researchers). We also propose considerations for academic institutions, IRBs, publishers, and reviewers of qualitative research to ensure this growing phenomenon is addressed at multiple levels.

The recommendations described above serve as only superficial solutions for a problem that is likely to persist in qualitative research as technology becomes a more attractive avenue for recruiting potential participants. Therefore, the potential for imposter participants must become a topic that is openly discussed in qualitative research methods classrooms early in researchers’ training. This issue never came up in the PIs’ research methods courses. Any field that utilizes qualitative methods would benefit from a module that prepares its future scholars to take these recommendations seriously, as the issue may impact early scholars’ first projects, theses, or dissertations. Such a module could follow the format of this paper. First, instructors may open class discussions with the history of imposter participants. Next, students may read case studies that highlight how this issue impacts research at various levels (e.g., recruitment, data collection, and analysis). Students can then be given the opportunity to identify strategies for preventing and detecting imposter participants before writing a protocol for their hypothetical research team. In this case, instructors can evaluate how thoroughly students’ protocols prevent imposter participants from impacting their research and probe any holes in their strategy.

Furthermore, some recommendations posed by the authors include tracking metadata through online survey software (e.g., Qualtrics). While this mechanism was a useful tool for identifying imposter participants, IRBs should be made aware of how tracking IP addresses and coordinates will be used in projects. Although other scholars have recommended that ethical review boards should be made aware of metadata being used to detect imposter participants (Mistry et al., 2024), this strategy may be inappropriate for certain groups whose information may make them vulnerable. For example, concerns about patient confidentiality or documentation status may preclude the tracking of IP addresses and coordinates. Protocols should clearly state how such data will be collected, used, and secured to protect the privacy of people, even if they are imposter participants. Pretending to be a member of a vulnerable population, such as Black birthing people who have experienced a stillbirth, to earn monetary incentives is unethical. However, we as researchers must recognize that our studies do not exist in a vacuum, uninfluenced by the injustices of the world. For both PIs, the presumed-imposter participants were predominantly from foreign countries where small monetary incentives may be considered substantial, or they may be forced to take on these roles. Regardless of individual motives to be imposter participants, academics must continue to develop ethical practices for preventing imposter participants. Thus, IRBs should receive training on this practice and be available to consult with researchers at their institutions on implementing preventative recruitment practices.

We are not the only qualitative researchers who have experienced imposter participants entering our sample. We are also not the only scholars who have identified questionable or imposter participants after data collection. Considering that publishing is a major pillar for tenure and promotion and the considerable amount of time it may take to conduct qualitative research, publishers and reviewers must also develop a set of guidelines for manuscripts that transparently discuss imposter participants. First, similar to the teaching module evaluating a protocol that was suggested above, reviewers may consider the rigor by which a research team planned to prevent and detect imposter participants. Details of the protocol may be discussed in the trustworthiness part of the method section. Editors should, therefore, be wary of desk-rejecting manuscripts that are transparent about the potential for imposter participants when the authors present an adequate protocol. After all, imposter participants may not always be so obvious. Indeed, there may be participants who the research team is unsure belong in the study. While the research team must be diligent about discussing how to handle more nuanced cases, editors and reviewers should expect these experiences to be noted in the method and limitation sections of a manuscript as well as develop guidelines for what is adequate detail for addressing potential imposter participants.

Notably, the experiences of the PIs do not include all possible red flags. Despite other strong lists of red flags and recommendations (see Mistry et al., 2024), there remains no concrete checklist for identifying imposter participants. Similarly, as technology advances, tactics to impersonate eligible participants will likely evolve. Therefore, the research community in all its facets (e.g., IRB, academia, and researchers) should continue to discuss the imposter participant phenomenon to maintain the integrity and validity of qualitative research.

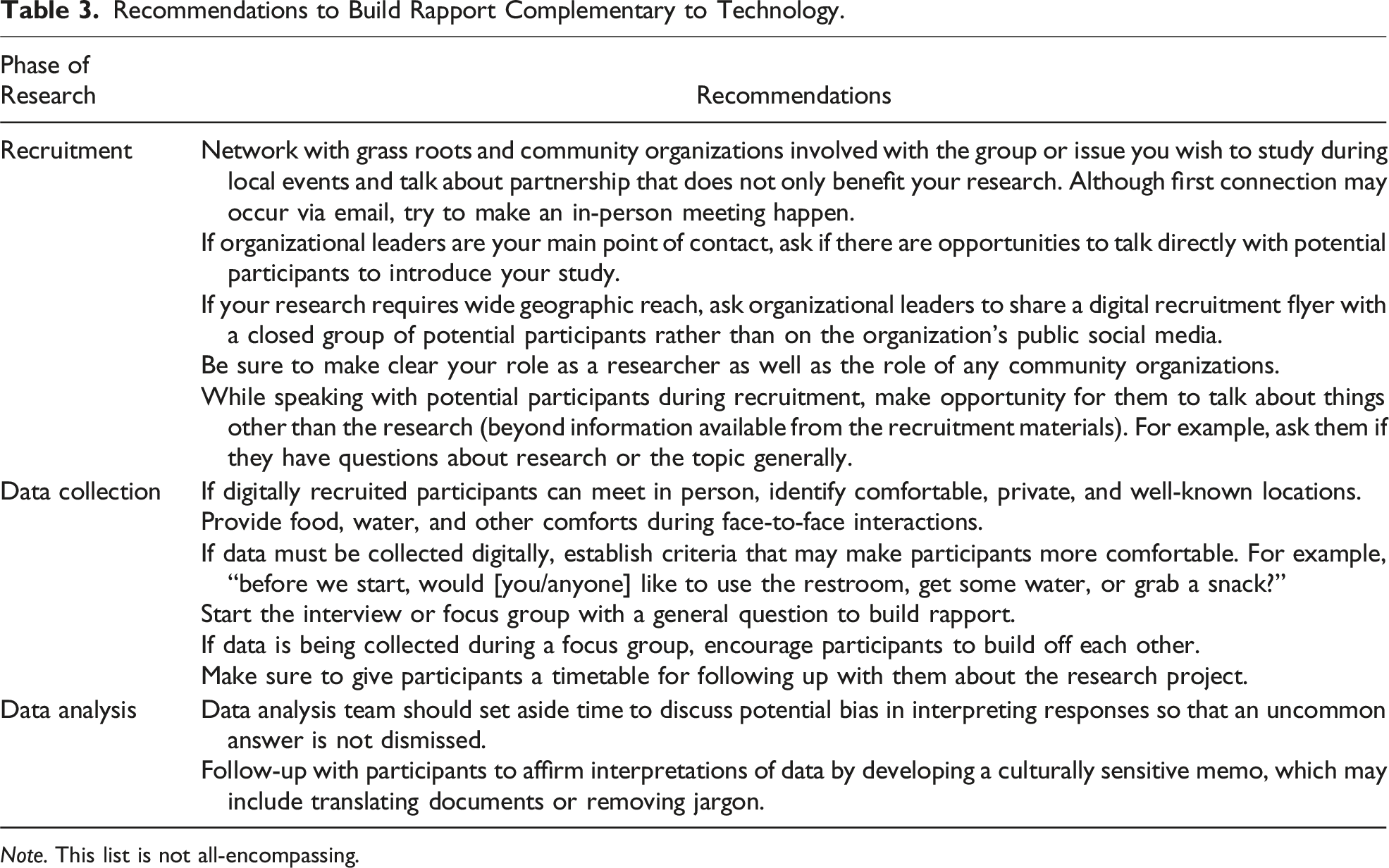

Considerations for Qualitative Research and Health Equity

Across these additional considerations for academia, IRBs, and publishers is the theme that the power and significance of qualitative research lies in the people from whom it is collected. Both case studies in this manuscript focused on issues of health equity among populations that may be described as “hard to reach” (Flanagan & Hancock, 2010), and thus, recruitment efforts must be thoughtfully implemented. The U.S. Centers for Disease Control and Prevention defines health equity as a state in which every person has the “opportunity to attain full health potential” and no one is “disadvantaged from achieving their potential because of social position or other socially determined circumstances” (Brennan-Ramirez et al., 2008). For populations who historically and currently experience inequity based on group identities, building trust is imperative to addressing issues of health equity. In both case studies, the research team first sought direct connections with organizations that had ties to the target populations in order to help identify potential participants. However, the studies became most vulnerable to imposter participants when information about the studies was posted online.

Recommendations to Build Rapport Complementary to Technology.

Conclusions

The experiences with imposter participants from two case studies, red flags across qualitative research, and recommendations to prevent imposter participants highlighted here may serve as a guide for future qualitative research as technological methods gain prominence. It is our hope that this manuscript does not deter researchers from developing trustworthy connections with populations in the pursuit of health equity but rather emphasizes the importance of building these relationships ethically and with a person-first approach before turning to the ease of technology.

Footnotes

Author Note

The views expressed here do not necessarily reflect the views of the Robert Wood Johnson Foundation.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported, in part, by the Robert Wood Johnson Foundation (grant #79754); Health Equity Working Group Grant at the University of Minnesota; School of Public Health; Minnesota Population Center, at the University of Minnesota.

Ethical Statement

Data Availability Statement

The data that support the red flags and recommendations are available from the corresponding author, upon reasonable request.