Abstract

Background

Simulation-based learning is a crucial educational tool for disciplines involving work-integrated learning and clinical practice. Though its uptake is becoming increasingly common in a range of fields, it remains less widespread in diagnostic radiography and computed tomography (CT).

Aim

This study explored whether CT simulator software may be a viable option to facilitate the development of practical clinical skills in an effective, safe and supported environment.

Methods

A cross-sectional mixed methods design was employed. Students in their third year of study undertook formal simulation CT learning using the Siemens SmartSimulator, prior to a six-week off-campus clinical experience. A pre- (n = 42, response rate = 39%) and post-clinical placement Likert scale survey was completed (n = 21, retention rate = 50%), as well as focus group interviews to gather qualitative data (n = 21). Thematic analysis was employed to explore how the simulator developed students’ knowledge of CT concepts and preparedness for clinical placement.

Results

Survey scores were high, particularly in terms of satisfaction and relevancy. Focus groups drew attention to the software’s capacity to build on foundational principles, prepare students for placement and closely emulate the clinical environment. Students highlighted the need for continual guidance and clinical relevance and maintained that interactive simulation was inferior to real-world clinical placement.

Conclusion

The integration of CT simulator software has the potential to increase knowledge, confidence, and student preparation for the clinical environment.

Background

A vital component in the development of undergraduate medical radiation professionals is clinical education, and simulation is recognized as an essential preparatory tool for work-integrated learning and clinical practice (Chamunyonga et al., 2020). To facilitate a holistic approach to student learning, universities are required to teach a solid grounding in academic knowledge, in conjunction with essential technical and patient-centred capabilities. As a consequence of the busy, outcome-driven and, most recently, pandemic-affected times we live in, there is increasing pressure on clinical sites to attend to their core healthcare obligations; unfortunately, this can negatively impact the learning outcomes of students (Ash et al., 2012). Thus, university educators must reassess how best to facilitate the development of practical clinical skills in effective, safe and supported simulated learning environments. Students not only need to be academically prepared for clinical experiences, but also require learning opportunities to develop technical skills outside the clinical learning environment.

Students value simulation training because they can see, participate in, and perform techniques/skills that may not be possible while on placement. While virtual simulation has been successfully embedded within Australian radiation therapy programs, the use of virtual simulation within computed tomography curricula is not extensively reported in the literature. This observation is of concern due to the ever-increasing use of CT in clinical practice worldwide (Australian Government, 2011; Brenner & Hall, 2007). It is well established that there is a lack of resources and options available for students to access CT simulation and interactive learning. Only two Australian studies confirm the use of simulated learning for computed tomography in a pre- and post-clinical placement setting (Liley et al., 2018; Lee et al., 2020). Our study sought to quantitatively and qualitatively assess the experiences and perspectives of medical radiation students within the simulation-based CT environment.

Intervention

The University of South Australia offers a four-year Bachelor of Medical Radiation Science (specialising in either Medical Imaging, Radiation Therapy or Nuclear Medicine). Upon completing this degree, graduates are eligible to work as diagnostic radiographers, radiation therapists or nuclear medicine technologists, healthcare professions that use medical radiation to diagnose and treat pathology. In semester one of the third year, students complete RADY3032 (CT and PET Imaging), which incorporates six weeks of theoretical learning. This is followed by third-year clinical courses, and students must gain practical competency in a range of both X-ray and CT examinations by the end of the fourth year. The fourth year of the degree consists entirely of clinical placement, where students endeavor to meet remaining competencies. The intervention component of this study was conducted within the six weeks of theoretical learning in RADY3032 as part of the students' preparation for clinical placement. The post-clinical placement assessment was delivered after the students returned to university.

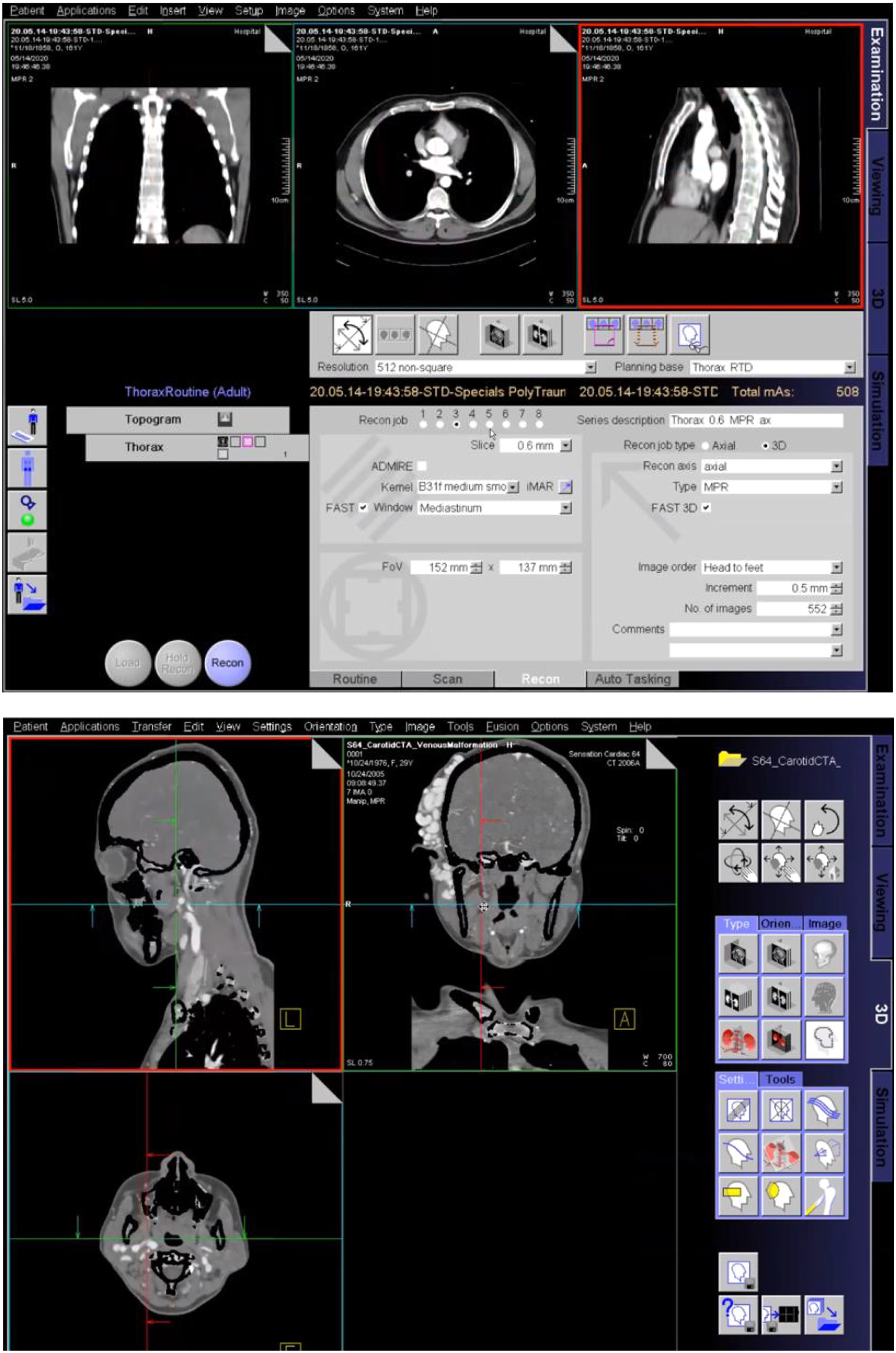

SmartSimulator is a service intended to deliver a simulated educational experience with medical devices from Siemens Healthineers, using a cloud-based technology solution on a personal computer (Siemens Healthcare, 2021). Students had access to a CT workstation simulation of common scanning protocols and a 3D post-processing simulation workstation of acquired CT datasets, as seen in Figure 1.

Figure 1. Acquisition and post-processing of simulated data sets using Siemens SmartSimulator. Siemens Healthcare (2021).

Methods

The Medical Radiation Practice Board of Australia (MRPBA) (2019) has revised their professional capabilities to ensure that all Medical Radiation graduates are competent at performing CT imaging to be accredited. This project aims to collect evidence on the efficacy of a teaching intervention by answering the following research questions:

1. What are the undergraduate medical radiation students’ experiences and insights in the simulation-based environment?
2. How well does the tool assist students to achieve success through experiential learning?
3. How can the tool be improved to achieve course learning objectives?

Sample

Ethics approval was sought from and granted by the University of South Australia Human Research Ethics Committee (protocol number: 203871). The study was conducted according to the World Medical Association Code of Ethics (Declaration of Helsinki). This study consisted of one cohort of third-year medical radiation science students. All undergraduate medical radiation students within this cohort, spanning all three disciplines (medical imaging, radiation therapy and nuclear medicine), were eligible to participate in this study (n = 108).
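The participation figures reported in this paper (108 eligible students, 42 pre-clinical survey respondents and 21 post-clinical survey respondents) can be checked with simple arithmetic. The following is a minimal sketch in Python; the function and variable names are illustrative assumptions and are not part of the study protocol.

```python
def percentage(part: int, whole: int) -> float:
    """Return part/whole as a percentage rounded to one decimal place."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return round(100 * part / whole, 1)


# Counts as reported in the paper.
eligible_students = 108      # whole third-year cohort
pre_clinical_returns = 42    # completed the pre-clinical survey
post_clinical_returns = 21   # also completed the post-clinical survey

# Response rate: pre-clinical respondents out of all eligible students.
response_rate = percentage(pre_clinical_returns, eligible_students)

# Retention rate: post-clinical respondents out of pre-clinical respondents.
retention_rate = percentage(post_clinical_returns, pre_clinical_returns)

print(f"Response rate:  {response_rate}%")
print(f"Retention rate: {retention_rate}%")
```

Under these definitions the sketch reproduces the reported response rate of 38.9% (rounded to 39% in the abstract) and retention rate of 50%.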

Students were informed that research participation (the survey and the focus group) was voluntary; however, as taking part in a simulation task was part of the assigned curriculum, students who did not wish to participate in the study would still need to complete the learning activity. If students did not wish to participate, their assessment results would not be used in the study data and their academic results would not be affected. For the focus groups, all participants were provided with a participant information sheet and gave written informed consent. The participants were informed that the focus group discussion would be audio recorded for transcription purposes.

Research protocol

This study was a cross-sectional mixed methods design, combining quantitative and qualitative approaches (Prentice et al., 2011). In the absence of a medical radiation-specific framework, the INACSL (International Nursing Association of Clinical and Simulation Learning) Standards of Best Practice were adopted to inform proper use of simulation for participants (Watts et al., 2021). These standards are based on Kolb’s Experiential Learning Theory, which has been used extensively to inform simulation-based education in healthcare fields (Demirtas et al., 2022). Our approach therefore involved facilitating a learner-centered approach driven by the learning objectives and accompanying it with prebriefing and debriefing (Watts et al., 2021). The research protocol aligning to the INACSL standards is described below.

Pre-clinical surveys were provided to assess the students’ attitudes to the SmartSimulator and their learning experience via Likert statements, along with open-ended responses to record the best and worst aspects of SmartSimulator. Following the use of the SmartSimulator in week three of the course, participants were invited to complete an online survey. This was conducted at this stage to provide students with sufficient time to form a perspective regarding their simulator experience. The survey questionnaire design and timing were informed by previous studies investigating the effectiveness of simulated learning for diagnostic radiography students (Liley et al., 2018; Bridge et al., 2014; Kong et al., 2015; Shanahan, 2016). The survey included five-point Likert-scale response options to questions about student learning experiences. The post-clinical survey was distributed to participating students once they had completed their clinical placement. This was distributed at this time to explore the relationship between simulator use and performance on clinical placement, and was primarily based on the matched-question design employed by Liley et al. (2018), which explored students’ perception of using a remote access simulation facility in CT learning.

Focus groups were conducted in which participants expressed their experiences and personal opinions. Focus group recruitment occurred once the course had been completed, which allowed approximately six weeks for students to properly understand the usefulness and relevance of the SmartSimulator to their studies. Four focus groups of 5-6 participants were conducted in person. A semi-structured interview guide was developed based on the research questions and followed a structure with introductory, flow, key and final questions (Rubin, 1996). The introductory questions were designed to provide the participants with an opportunity to speak about their experiences on a more general level and allow them to freely discuss their experience. The focus group interviews were undertaken by a staff member external to the course coordination team but specialised in medical radiations.

The focus group audio recordings were transcribed by the same researcher who conducted the focus groups. Coding was performed to generate themes and construct transparent explanations and perspectives of the emerging structures and relationships. Researchers independently read the transcripts multiple times to find patterns in the data. Student comments were manually coded for thematic analysis using Saldaña’s three pass method (Saldaña et al., 2013). The overall findings of the research were formed using the devised themes by adhering to the consolidated criteria for reporting qualitative research (COREQ) (Booth et al., 2014). Questions posed to participants are presented in Appendix 1.

Statistical analysis

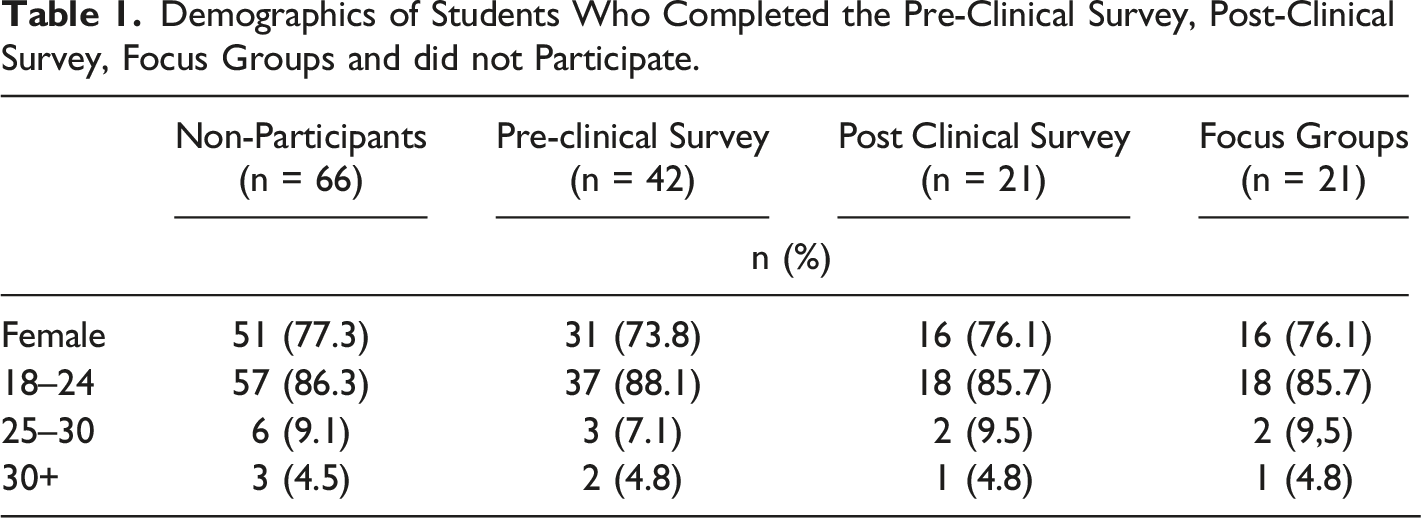

Demographics of Students Who Completed the Pre-Clinical Survey, Post-Clinical Survey, Focus Groups and did not Participate.

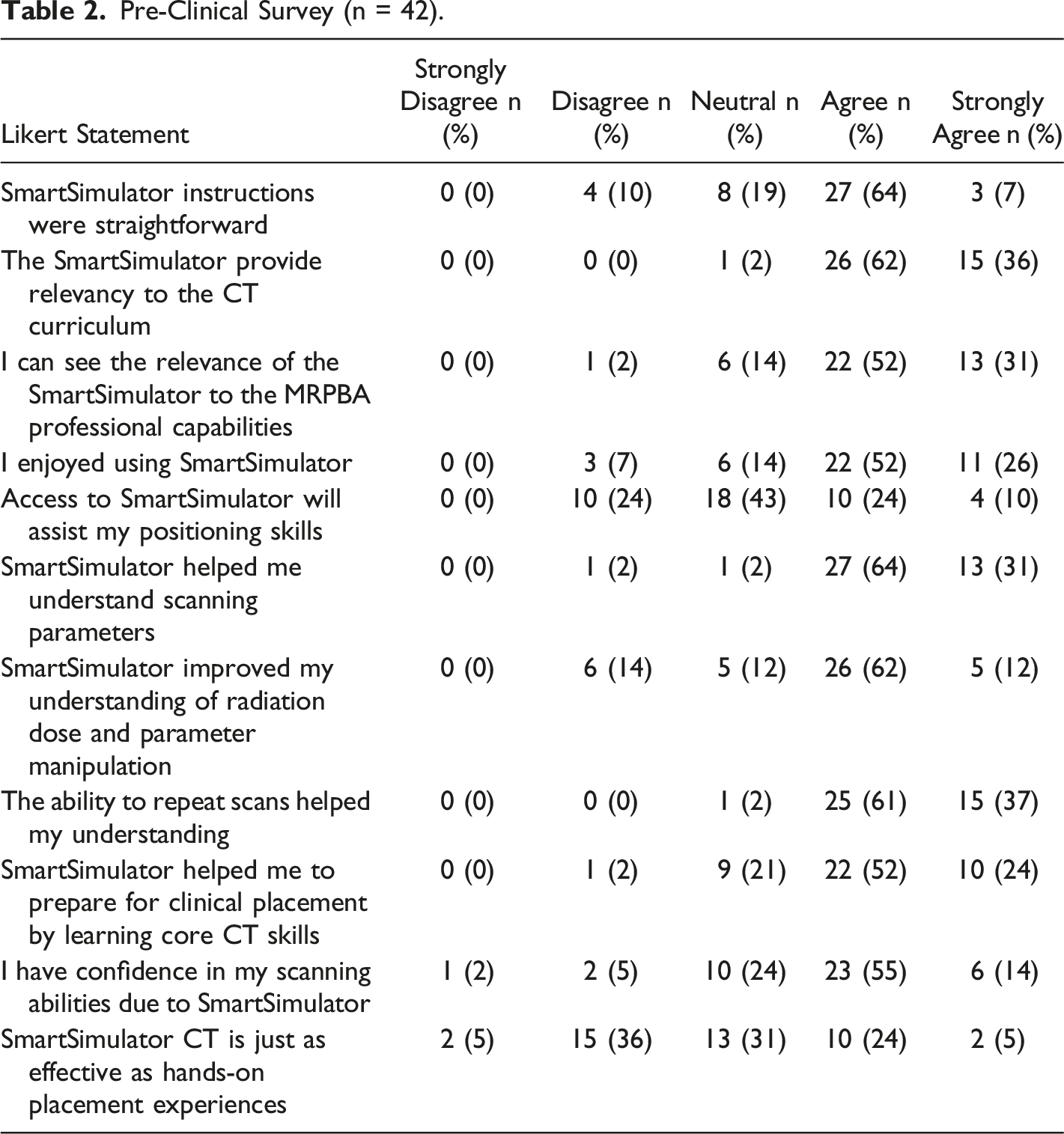

Pre-Clinical Survey (n = 42).

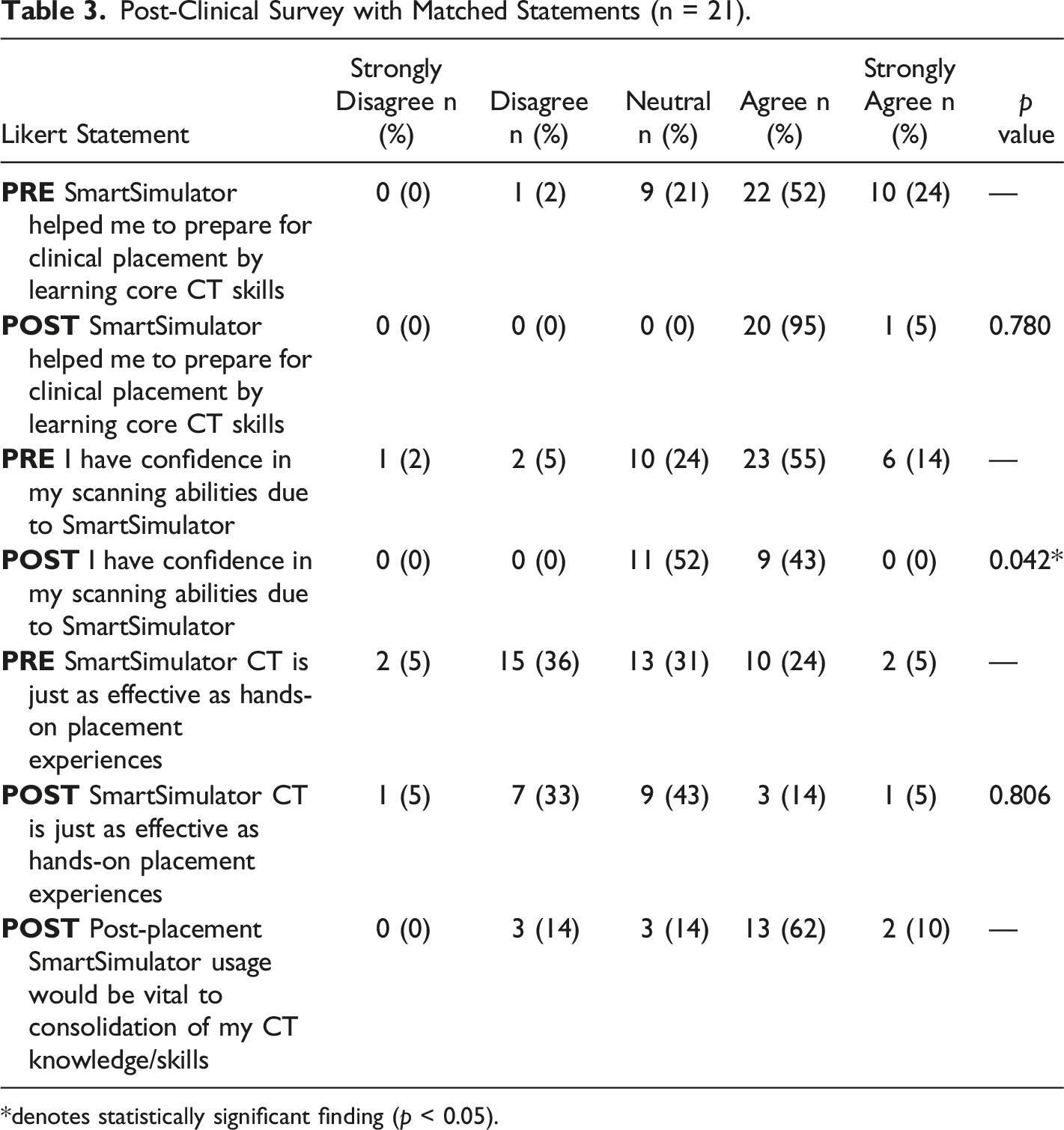

Post-Clinical Survey with Matched Statements (n = 21).

*denotes statistically significant finding (p < 0.05).

Results

Pre-clinical surveys were obtained from 42 students, and 21 of these students returned post-placement surveys (retention rate of 50%) and participated in focus group interviews (Tables 1 and 2). Mann–Whitney U-tests demonstrated no significant difference in terms of student preparedness for CT clinical placement using the simulator program, as well as no significant difference in their preference for hands-on learning after placement (p = 0.806) (Table 3). Overall, students perceived a significant decrease in skills confidence post-placement (p = 0.042). These findings are largely consistent with Liley et al. (2018), in that no significant results favouring post-placement outcomes were found in either study. Nevertheless, when viewed independently, both pre- and post-clinical Likert scores were generally considered high.
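For readers unfamiliar with the Mann–Whitney U-test, the following is a minimal, hedged sketch of how two groups of Likert scores can be compared. It is not the authors' analysis code, and the software used in the study is not stated. The function name `mann_whitney_u` and the two score lists are hypothetical and purely illustrative; the sketch uses the normal approximation with a tie correction and no continuity correction, so its p-values may differ slightly from those of a statistical package.

```python
import math
from collections import Counter


def mann_whitney_u(x, y):
    """Two-sided Mann-Whitney U test (normal approximation, tie-corrected).

    Returns (U, p), where U is the smaller of the two U statistics.
    """
    n1, n2 = len(x), len(y)
    if n1 == 0 or n2 == 0:
        raise ValueError("both samples must be non-empty")

    # Assign midranks: tied values share the average of their rank positions.
    combined = sorted(list(x) + list(y))
    midrank = {}
    i = 0
    while i < len(combined):
        j = i
        while j < len(combined) and combined[j] == combined[i]:
            j += 1
        midrank[combined[i]] = (i + 1 + j) / 2  # mean of ranks i+1 .. j
        i = j

    rank_sum_x = sum(midrank[v] for v in x)
    u1 = rank_sum_x - n1 * (n1 + 1) / 2
    u2 = n1 * n2 - u1
    u = min(u1, u2)

    # Variance of U under the null hypothesis, corrected for ties.
    n = n1 + n2
    tie_term = sum(t ** 3 - t for t in Counter(combined).values())
    variance = (n1 * n2 / 12) * ((n + 1) - tie_term / (n * (n - 1)))
    if variance == 0:  # every observation identical
        return u, 1.0

    z = (u - n1 * n2 / 2) / math.sqrt(variance)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value
    return u, p


# HYPOTHETICAL five-point Likert scores (1 = strongly disagree,
# 5 = strongly agree). These are NOT the study data.
pre_scores = [5, 4, 4, 3, 5, 4, 2, 4, 5, 3]
post_scores = [4, 3, 3, 4, 2, 3, 4, 3]

u_stat, p_value = mann_whitney_u(pre_scores, post_scores)
print(f"U = {u_stat}, p = {p_value:.3f}")
```

A rank-based test of this kind suits Likert responses because it relies only on the ordering of the scores and does not assume that the intervals between response options are equal.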

The pre-clinical survey indicated that 38% of respondents had operated a CT scanner prior to their placement in a previous clinical experience. Scores were generally favourable with regard to student enjoyment (78% agreed or strongly agreed), positioning skill development (67%), and parameter and dose knowledge acquisition (85% and 74% respectively). The majority of students found the program’s instructions to be straightforward (71%). Qualitatively, students detailed that advice from supervisors was paramount in the activity’s capacity to advance their learning. The overwhelming feeling associated with learning new technologies was diminished in students who sought help from supervisors, which was largely dependent on the student's willingness to ask for assistance. Students highlighted that a disadvantage of the simulation activity was that it was conducted without tutor supervision, meaning that assistance and feedback were often delayed until students could receive them through online communication. Although some students highlighted real-time guidance as a major advantage of real-world scanners, they also noted that the busy nature of the department meant that their interaction with their mentors was limited:

‘We weren't allowed to touch the controls and they go through it very quickly’.

With reference to the post-clinical survey, 72% of the participants agreed or strongly agreed that SmartSimulator usage was vital to consolidation of CT knowledge/skills. A major benefit of the simulators was the ability to develop knowledge previously acquired in the classroom and on clinical placement. Students who had already experienced a ‘real’ CT scanner found the simulator to be a beneficial reinforcement of their knowledge base. This perception was shared by students in the study by Lee et al. (2020), who noted that a lack of familiarity with a CT scanner prior to the activity made simulation learning time-consuming and difficult to navigate. These findings suggest simulation may be best implemented once at least a brief insight into the CT workstation is established.

The software proved useful for students who had not yet encountered clinical placement, as it offered them the most comparable experience possible to clinical practice. The liberty to make mistakes without actual patient or supervisor-related repercussions was highlighted as a benefit and reinforces the value of prior exposure to the real radiographic environment. Quantitatively, in the post-clinical survey, 29% of the participants indicated that the SmartSimulator CT was just as effective as hands-on placement experiences.

“I found it was exactly the same as when I was on placement, so found it easy to replicate what I was seeing in class. I was quite confident with the buttons, layout and the process that the radiographers took. It was really good.”

“There is nothing quite like operating the real machine, but that being said it was great to practice windowing, and for the theory side of things. Sometimes on placement you have to scan as quick as possible, but with the simulators you can take your time and practice the theory.”

The need for rapid, responsive and trustworthy technology was highlighted. Given the fast-paced and demanding nature of both modern technology and contemporary university education, it is natural for students to expect their learning devices to meet their needs accurately and quickly. Hence, technical issues with the simulation software were cited as a major drawback to students’ learning process. Students often became discouraged with the learning process and ceased their study time prematurely due to lack of interest.

“...our time was spent trying to figure out what to do (when things went wrong) and not actually on answering the questions”.

Of the respondents, 98% found the intervention to be relevant to their learning within the course, and 83% found it relevant to industry requirements. In the focus groups, students suggested the simulators could be improved by strengthening the connection between learning and clinical work, via use of clinical scenarios and case-based learning.

‘I think being able to learn in a more practical way (i.e. the simulators), put it more into perspective. On placement, even if you were not scanning, you could bring up the next patient or do reconstructions. It was beneficial knowing you could help out.’

‘I think it could be more exciting if we were given a scenario and we had to pick a protocol based on the scenario. This would have made it more ‘real-life’.’

Discussion

This study investigated undergraduate medical radiation students’ experiences and insights in the simulation-based environment. Students agreed that the simulation program had a positive impact on their understanding of theoretical and practical CT concepts. Although this was a small-scale project centred upon medical radiation students, a wide range of experiences were noted, and students considered the simulation experience a valuable educational tool. Satisfaction with the program proved high, and most students acknowledged the relevancy and importance of the intervention for their development. It assisted in the acquisition of CT knowledge prior to undergoing placement, as well as consolidation of principles for those who had previous student clinical experience.

With external accreditation standards requiring medical radiation students to become competent in CT, the implementation and utilisation of CT simulators is imperative (MRPBA, 2019). Conventional methods and actual scanning time are effective in developing theoretical and practical CT elements (Hawarihewa et al., 2021); this is reflected in the mixed responses regarding whether simulator technology has comparable effectiveness to the real CT experience. However, the use of CT simulation certainly appears to assist students' perception of CT competence within a safe learning environment (Liley et al., 2018; Lee et al., 2020). This was seen most profoundly in the high satisfaction and mistake-learning elements of the pre-clinical survey.

As seen in both qualitative commentary and surveys, the simulators achieved their role in bridging the gap between theory and practice. Allowing students to familiarize themselves with the CT control panel proved a crucial stepping stone in advancing their learning to more sophisticated concepts, such as analysing the impact of imaging parameters on radiation dose and image quality. Such development may lead to superior image quality, better time-management skills, and enhanced patient safety (Valentin, 2007). The benefits of CT simulation may extend beyond student learning; as it was successful in replicating actual CT for many students, it could relieve the heavy burden on clinical sites of meeting the educational needs of students on placement whilst simultaneously meeting patient demands and upholding their institution’s standards. CT simulation can therefore free up clinical time and resources for skills that are not feasible to learn at home or at university, such as workflow processes, patient communication and interaction with surrounding professionals.

A majority of students (69%) initially showed a high level of confidence in their scanning abilities using the simulators. However, in the post-placement survey, 52% of students indicated ‘neutral’ for their confidence in scanning after placement. These data must be interpreted with caution due to the low retention rate (50%) in the post-clinical survey. The change could be explained by students' exposure to scanners from other vendors (i.e. not Siemens CT scanners), which could reduce their confidence in scanning and in navigating an unfamiliar interface and platform. However, nearly three quarters of students also expressed that the use of simulators would be useful to consolidate their CT knowledge and skills. This finding is consistent with Liley et al. (2018) and is highly important for future planning of CT courses and preparing students for their prospective clinical placements.

In contrast to real-world placements where students receive guidance in real time, a highlighted disadvantage of the simulators was that they were used independently without a tutor. In other healthcare fields, when students are able to practice simulation under the supervision of faculty, they feel more confident and competent when entering the practice setting and being assigned to care for patients (Durham & Alden, 2008). This serves as a reminder to educators that as the capacity to teach off-site over cloud-based platforms is enhanced, the fundamental aspect of teaching must still be retained; this will entail close supervision in the preliminary stages of learning until students are able to consistently perform tasks without it. Nevertheless, when this level of competence is reached, remote access to simulation resources has been viewed positively by students, as it allows greater flexibility in when and where they engage with content and permits them to work at their own pace (Shanahan, 2016).

Limitations and suggestions for future research

In addition to the limitations of a low response rate (38.9%) and low retention rate (50%), this study did not feature a control group that did not receive the simulation intervention. This design was chosen to ensure all students had access to the most contemporary educational approaches. A prospective randomized controlled trial with a control group using traditional teaching methods would provide further insights into the benefits and efficacy of this simulation technique. Furthermore, the location and nature of clinical placements differed amongst students, with some sent to busy public hospitals and others practicing at smaller private CT institutions. In addition, students may have accessed additional learning resources to enhance their knowledge during the study period. These factors could influence the experiences and perceptions of the students after placement, which suggests that heterogeneity in our findings cannot be excluded. A baseline assessment of confidence or prior experience through focus group interviews would also have been helpful to better place the pre-clinical results in context. Future studies should explore the effect of simulator education on more objective skill outcomes. Only when research can determine whether simulation objectively enhances students’ clinical skills can the research agenda move toward determining whether the ultimate goal of superior patient outcomes is being achieved. Lastly, the cost-effectiveness of simulators was not evaluated in the study.

Conclusion

Investigating the perceptions of students learning CT principles and skills using simulation equipment is imperative to determine their preferences and the inhibitors to their development. This study used cloud-based CT simulator technology to prepare students for clinical placement, whilst providing a safe environment where mistakes could be made without real-world clinical impact. Overall, students thoroughly enjoyed this interactive learning experience. However, the perception that interactive simulation is inferior to real clinical experience was maintained. The process could be enhanced through greater guidance, technological support and scenario-based learning. This reinforces the need for continual educational and financial support, so that the full potential of CT simulators can be realised. Further studies are required to investigate whether the inclusion of simulators enhances objective skill and knowledge outcomes. Simulators are likely to prove a significant inclusion in undergraduate curricula, especially in the post-COVID-19 world.

Footnotes

Acknowledgements

The authors would like to acknowledge the assistance of Dr Claire Aitchison from the Teaching Innovation Unit at the University of South Australia.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Early Career Academic Innovation Grant, University of South Australia.

Focus Group Questions

Introductory questions

Have you previously used the real-life CT scanners during your clinical placements?

Can you share some of your experiences of using the SmartSimulators?

Follow questions

If you have used the CT scanner(s) during your clinical placements, how does the SmartSimulator compare to the real-life scanner(s)?

How does the SmartSimulator improve your understanding of the courses’ content/concepts?

Key questions

What are the advantages of the SmartSimulator?

What are the disadvantages of the SmartSimulator?

Final questions

Is there anything more you want to share about your experiences with the use of SmartSimulator?

How can the Simulator assist or prepare you for clinical placements?