Abstract

Keywords

We have seen a surge in the use of games to collect data for research questions outside games research itself, variously called

Data Collection With Games: Forms and Reasons

More systematically, one may distinguish

Looking across these varied instances, one can make out four main reasons games are used for scientific data collection. The first is

Validity of Data Collection With Games

Whenever data is collected, the question of

Beginning with Campbell (1957), researchers have developed multiple typologies of validity and validity threats, usually structured around aspects of claimed causal relations between the treatment and outcome of an experimental study. In a classic typology, Shadish, Cook, and Campbell (2002, pp. 38, 42-93) distinguish four kinds of validity:

When it comes to games research, various validity threats have been mentioned: the use of different games as experimental conditions that vary in more than the targeted aspect (Ferguson, 2015); failing to recognise differences in gaming expertise linked to different game genres (Latham, Patston, & Tippett, 2013); or the high cognitive load of games, making it difficult for players to play a game in a natural way

Structure of This Article

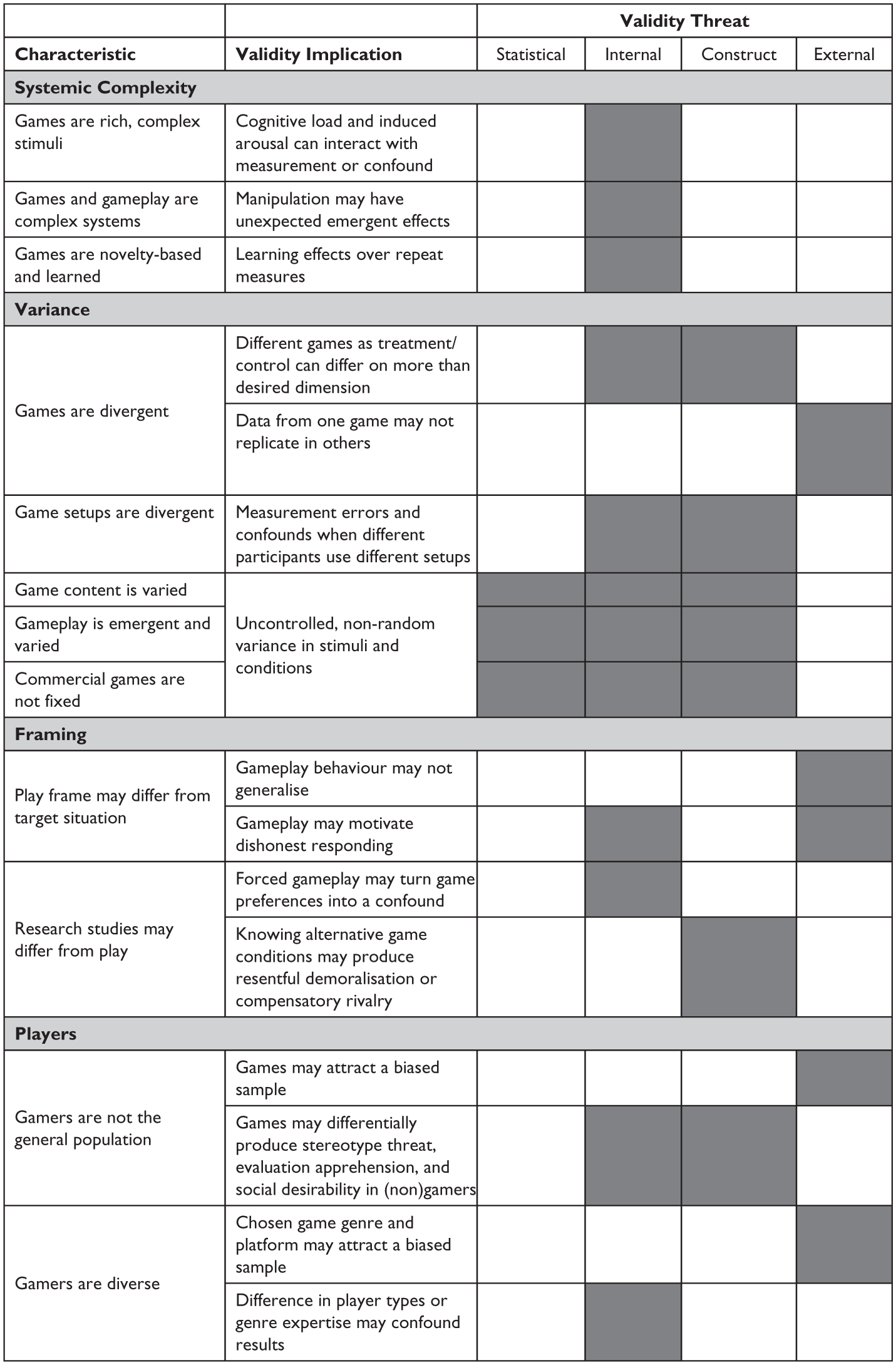

To move the field forward, this article surveys known validity threats in the use of games for data collection and highlights potential, as-yet unstudied threats where there is a compelling argument for them. In the interest of space, we constrain the discussion to well-established validity issues of quantitative research that are particularly pertinent to games. We organise our discussion along three key features of games that appear to underlie most validity issues we identified, namely

Overview of game characteristics. Shaded cells highlight relevant validity implications.

We arrived at this systematisation through a combined bottom-up and top-down approach: Bottom-up, we conducted an opportunistic literature search across major relevant databases (Web of Science, Scopus, ACM Digital Library, Google Scholar), searching for “validity” and “games”, resulting in 10 relevant papers (see References). Top-down, we used the typology by Shadish et al. (2002) to systematically ask for each validity threat they classify where and how it may manifest around digital games. Clustering reported and hypothesised validity threats, we arrived at a smaller subset of threats that we then tried to variously organise, arriving at three high-level characteristics of games that appeared responsible for them. In response to reviewer suggestions, we factored out player-related threats into a separate fourth category. We expressly view our proposed systematisation as a first draft.

For each of the four groups, we will first introduce underlying characteristics and then report observed and potential validity threats. In the discussion and conclusion, we will draw some overarching observations on the opportunities and challenges of using games and game design in data collection and outline areas for future research.

Systemic Complexity

Many even simple games form

What this means for researchers is that isolating and manipulating constituent parts – let alone psychologically “active ingredients” (Michie & Johnston, 2013, p. 469) – of a game is inherently challenging. But without isolating features, we risk confounds in our experimental manipulations: third variables that provide a competing explanation for our results. Confounds threaten internal validity, meaning that we may not be able to justify the claim that the treatment caused the observed effect.

Within this section, we identify and address three characteristics of games that are involved in their complex systemic constitution:

Games are rich, complex stimuli

Games and gameplay are complex systems

Games are novelty-based and learned

Games Are Rich, Complex Stimuli

Stimulus complexity

Games and Gameplay Are Complex Systems

The systemic interrelation and interaction of elements of games makes it hard to change one aspect of a game in isolation (Kim & Shute, 2015). In psychological parlance (Littman & Rosen, 1950), changes on the

For game-based data collection, we see two particular ramifications. First, any manipulation (e.g. imported from a standard non-game study design) may generate unforeseen interactions and emergent dynamics in addition to the causal effects it is hypothesised to produce. Therefore, researchers should prototype and pretest manipulations to check for these confounds. This means that researchers need a clear idea of what confounds to look out for. For example, Carnagey and Anderson (2005) used two modes of the same racing game to manipulate whether violence is rewarded in the game. In one condition, players were rewarded for killing pedestrians and race opponents. In the other, they were prevented from doing so. While most differences between the conditions were controlled by using the same game, the difference in the game mode used still arguably had an unintended knock-on effect on competitiveness (Adachi & Willoughby, 2011a). Concretely, there were different numbers of things to compete on in each condition. The condition where players were rewarded for destroying race opponents had two sources of competition: winning the race and surviving the free-for-all. This second source of competition was not present in the control condition, where destroying race opponents was prevented. To us, this suggests that researchers should incorporate game design expertise in pretests, as these kinds of unexpected dynamics are likely more readily apparent to experienced game designers.

Relatedly, the development process itself has a potentially significant impact on the conditions designed. In games, it is typical (and practical) to develop a first part of the game (“first playable”, “vertical slice”) in high detail to establish the game’s core mechanics and gameplay. All subsequently developed parts or levels are effectively variations on and extensions of this core gameplay. Hence, designers are constrained in the development of subsequent content (or conditions) in a way that they are not in the first, and there will often be a general difference in quality, be that in terms of fun or balance, between initial and subsequent conditions developed in this way. As McMahan et al. (2011, p. 4) assert for experimental designs in general: “in our experience, researchers may spend more time or effort implementing a condition that they subconsciously (or consciously) favor, biasing the study toward that condition.”

Games Are Novelty-Based and Learned

Novelty is the property of an experience being new (Silvia, 2006). Simply put, the first experience of a stimulus or measurement instrument is different from subsequent encounters. In situations where novelty features strongly, the validity threat of

This relates to two time-related characteristics of games. First, interest and curiosity are two major intrinsic motives and sources of enjoyment in gameplay. Games feature properties like dramatic conflicts, novel content, puzzles, or randomness to afford uncertainty that draws attention and is satisfying to resolve (Costikyan, 2013). If a part of a game entails largely the same outcomes and experience on replay, less uncertainty, curiosity, and interest are likely to arise. This is of particular relevance to games focusing on narrative: after a first play-through, the novelty and dramatic tension of their plot is largely exhausted (Roth, Vermeulen, Vorderer, & Klimmt, 2012). As a result, if players play the same section of a game twice – once as a treatment and once as a control – the diminishing uncertainty, curiosity, and interest may become major learning effects.

A second time-related game characteristic is that players learn over time to play games well. In fact, a large part of their enjoyment arises from the competence experience of learning to master the game (Deterding, 2015; Koster, 2005). Most games are therefore designed with a careful scaffolding of required and taught skills and knowledge, increasing difficulty in lock-step with growing player skill (Chen, 2007). If a player returns to replay earlier game sections they’ve already mastered, they are likely to find it easy to overcome the challenges in these sections, and will thus likely experience mastery or learning – again, a strong possible learning effect. Another potential learning-related confound is the difference between learning a new skill and performing a mastered skill, which can express itself in e.g. error rates, time taken, exploratory versus goal-oriented behaviour, and the like. Players’ game knowledge may even threaten construct validity. For example, if multiple play-throughs allow players to memorise puzzle solutions rather than solving puzzles anew, game performance may be indicative of short-term memory rather than problem-solving abilities. The amount of time participants have to learn a game before engaging with the game section that constitutes the experimental manipulation/control may also confound results. With too little time, players’ lack of skill may prevent them from effectively completing the experiment. Too much time may lead to ceiling effects or convergence upon optimal strategies. Finally, which section of the overall sequence of a game players play may significantly impact what level of difficulty and required skills they encounter.

These strong potential learning effects and other confounds are of particular concern in within-subject, repeated-measures designs where the same subject is presented with control and experimental conditions in sequence. Apart from choosing different study designs, one common mitigation is to vary the sequence of control and manipulation conditions as part of randomisation. A second mitigation strategy would be to use techniques like adaptive procedural content generation and pre-testing to ensure that game content in each condition is equally novel and difficult.
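Varying the sequence of conditions can be illustrated with a minimal sketch of counterbalanced ordering. The function name, participant identifiers, and condition labels below are hypothetical, and the sketch assumes that full counterbalancing (using every possible order) is feasible, which only holds for a small number of conditions.

```python
import itertools
import random


def counterbalanced_orders(participant_ids, conditions, seed=0):
    """Give each participant one ordering of the conditions.

    Participants are shuffled first, then orderings are handed out in
    rotation, so every ordering is used (nearly) equally often.
    """
    rng = random.Random(seed)  # seeded so the allocation can be reproduced
    orders = list(itertools.permutations(conditions))
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    return {pid: orders[i % len(orders)] for i, pid in enumerate(shuffled)}


# Hypothetical study: 8 participants, each plays both conditions.
assignment = counterbalanced_orders(range(8), ["control", "treatment"], seed=42)
for pid in sorted(assignment):
    print(pid, assignment[pid])
```

Counterbalancing of this kind does not remove learning effects. It distributes them evenly across conditions, so that order is not confounded with treatment.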

Variance

Next to complex systems, another popular way of framing games is as a possibility space (Squire, 2008). Games open a space or tree of possible states and partly relinquish control over in-game events to extraneous influences like player choice or randomness. Control, however, is the

Games are divergent

Game setups are divergent

Game content is varied

Gameplay is emergent and varied

Commercial games are not fixed

Games Are Divergent

Games are a highly diverse medium, with different interfaces and controls (e.g. desktop monitor plus mouse and keyboard versus mobile touch screen versus motion control plus VR headset), different social contexts and configurations (e.g. public competitive Esports play versus private cooperative or competitive multiplayer gaming versus solitary play), and different genres (e.g. open world exploration, casual puzzler, idle game, RPG, first person shooter), each affording different demands and experiences. No single game can therefore be taken as representative of all games. The selection of a particular game, including its interface, controls, social context, and configuration, potentially threatens internal validity if different games (or game configurations) are used for different experimental conditions, and impinges on external validity in terms of how well or widely any findings generalise. Indeed, the use of different games to operationalise different experimental conditions has been cited as a critical internal validity issue in the violence in video games literature (Adachi & Willoughby, 2011b; Ferguson, 2015). Because the games used differ on more dimensions than just violent content, these dimensions present potentially confounding variables (Elson et al., 2013).

In response, some scholars have suggested ways to match games on certain criteria (Adachi & Willoughby, 2011b), such that key features considered relevant to the investigation vary only in desired respects. For example, research on violence in video games has adopted this approach to match violent versus non-violent treatment and control games on competitiveness (Adachi & Willoughby, 2011a), difficulty of controls (Przybylski, Rigby, & Ryan, 2010), and frustration (Przybylski, Rigby, Deci, & Ryan, 2014). Two difficulties arise with this matching strategy. Firstly, games may not successfully be matched on the given factor. Secondly, games may remain divergent on unmatched factors that reveal themselves to pose confounds. For instance, Anderson and Dill (2000) selected the two games WOLFENSTEIN 3D (id Software, 1992) and MYST (Cyan, 1993) as violent treatment and nonviolent control because they matched for “blood pressure, heart rate, frustration, difficulty, action pace, enjoyment and excitement” (Adachi & Willoughby, 2011b, p. 58). Yet in this, Anderson and colleagues missed that the games also differed in competitiveness, which proved to be a significant confounding variable (Adachi & Willoughby, 2011b).

Another approach is to adapt a single game to provide treatment and control conditions, a so-called modified game paradigm (Hilgard, Engelhardt, & Rouder, 2017). This adaptation can often be achieved through modding an existing game (e.g. Elson & Quandt, 2016; Engelhardt, Hilgard, & Bartholow, 2015; Mohseni, Liebold, & Pietschmann, 2015), or developing bespoke games (e.g. Zendle et al., 2015). This allows researchers to control the experimental conditions far better, and avoids the potential confounds of different games. The challenge, as explained earlier, remains that a small manipulation within a game can have unforeseen and undesired emergent systemic effects on gameplay and player experience.

Game Setups Are Divergent

Related to the diversity of games, the way digital games are technically delivered and instrumented can vary greatly. This leads to potential measurement errors or confounds, as the means for collecting, transmitting, and recording data are subject to error, interference, or unwanted variance. This issue is particularly acute in remote/online designs, where researchers have less control over gaming hardware, controls, and networks. Variance in participants’ computers, controls, or networking bandwidth can cause issues with tasks and measures such as those involving reaction times (Hilbig, 2016; Reimers & Stewart, 2007).

Game Content Is Varied

Even within a single chosen game, the interactivity of games means that the actual content (levels, puzzles, rewards, challenges) players experience will vary between players and game sessions, often significantly and to a not fully predictable extent. This threatens construct validity, as it may be hard to ensure, or even to discern, that players experienced the desired stimulus and did not experience other, undesired variance in stimuli. If game performance is also used as a measurement instrument, such as in educational assessment, chance variation may overwhelm the meaningful information it contains. On the structural level of a game’s design, there are at least three sources of emergent variance.

Games provide player choice

Games relinquish significant control to the player (Klimmt, Vorderer, & Ritterfeld, 2007), supporting choice in what character they embody, what goals they pursue, what strategies they use and what actions they take. Not only does such meaningful choice directly fuel engaging and enjoyable autonomy experiences (Deterding, 2016b); it also regularly leads different players to perform different actions and experience different outcomes. Within an experiment, in contrast, tasks performed are usually strictly controlled, and undesired variance in outcome minimised. While almost all games offer some degree of player choice, the amount of choice offered differs markedly between game genres and games. Where some

Games often include random events

Particularly so-called games of chance relinquish control over key game events to randomness. In other games, randomness is incorporated in the design through procedurally generated content to afford replayability: a random seed is used to e.g. generate a different game map every time. Where such events are

The starting situation can vary

The starting situation of a game can be fixed or variable. For instance, many games allow players to customise their characters before start, configure controls, or set the game difficulty. These configuration options are one of the easier variables to control. For instance, a save game or starting setup can be prepared and loaded to ensure consistency across participants. The important thing is to account for this potential variance, especially in remote designs. However, some game details change separately and automatically, for instance the game-wide unlocking of new items, levels, or achievements, which affects the possibility space of all subsequent players.
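For purpose-built games with procedurally generated content, one way to hold the starting situation constant is to fix the random seed for all participants. The following is a minimal sketch under that assumption; `generate_map`, its parameters, and `STUDY_SEED` are hypothetical stand-ins for whatever generator a research game actually uses.

```python
import random


def generate_map(seed, width=8, height=4, wall_probability=0.3):
    """Hypothetical map generator: rows of '#' (wall) and '.' (floor)."""
    # A local generator keeps the map independent of any other code
    # that draws from Python's global random state.
    rng = random.Random(seed)
    return [
        "".join("#" if rng.random() < wall_probability else "." for _ in range(width))
        for _ in range(height)
    ]


STUDY_SEED = 2019  # arbitrary fixed value, to be reported with the study

map_for_participant_a = generate_map(STUDY_SEED)
map_for_participant_b = generate_map(STUDY_SEED)
print("\n".join(map_for_participant_a))
```

Both participants receive the same map because the generator is seeded identically. Reporting the seed alongside the game version would let other researchers regenerate the same content, provided the generator code itself is unchanged.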

Gameplay Is Emergent and Varied

By relinquishing control over the game state to player agency, games open the systematic possibility that players act differently with every game session. In game-theoretic terms, one can model this possibility space of actions as a decision tree (Elias, Garfield, & Gutschera, 2012). If players were fully informed and rational actors in rational choice terms, solely motivated to win the game, one could calculate and predict the strategically optimal move, and such game theoretic calculations are indeed a common tool among game designers (J. H. Smith, 2006). However, especially in games with two or more interdependent actors (human or artificial), possible choices and game states quickly compound to a point where calculating the optimal move becomes humanly impossible: three pairs of turns into chess, there are 121 million possible game states, for instance. Nevertheless, game sessions display higher-level dynamics, and player communities and expert players evolve higher-level strategies and heuristics to reduce this complexity, which again interact and change in ways that are hard to fully predict (Elias et al., 2012). In active player communities around games like LEAGUE OF LEGENDS (Riot Games, 2009), for instance, shared views about optimal high-level strategies like character choice (“the meta”) are in constant flux. Complicating the picture further, player actions are regularly shaped by more concerns than mere winning (Gundry & Deterding, 2018). Overall, this means that especially in interdependent multiplayer games and other games with so-called emergent gameplay (sic), gameplay actions and experiences are hard to control and predict on a low level and showcase emergent but again not fully predictable or controllable patterns on a higher level of organisation.

Commercial Games Are Not Fixed

Current trends in game development mean that even an individual game’s variance in content is not necessarily stable, but may change between sessions and over time.

Game updates and A/B tests

The widespread availability of high-speed internet connections has enabled a new development and business model usually called

This risk can be easily avoided in games purpose-made for research. When using existing entertainment games, researchers should decide on a canonical version of the game for the purposes of the study wherever possible, document the version of the game used, and ideally make a copy of it available for future researchers interested in replication. In some cases, a canonical version may be safeguarded by downloading a local copy, disconnecting the game from the internet, or disabling updates. Where a stable version cannot be ensured and a game is updated during an experiment, the researcher should consider and report any potential impact this may have had.
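Documenting the game version can be supported by recording a cryptographic fingerprint of the build that participants actually played. The sketch below assumes the build is available as a single local file; the file name and version label are hypothetical, and a temporary stand-in file is used so the example runs on its own.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def fingerprint_build(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def version_record(path, version_label):
    """Bundle the details a methods section or data archive might report."""
    return {
        "file": Path(path).name,
        "version_label": version_label,
        "sha256": fingerprint_build(path),
    }


# Stand-in file in place of a real game executable (assumption for the demo).
with tempfile.TemporaryDirectory() as tmp:
    build = Path(tmp) / "game_build.bin"
    build.write_bytes(b"stand-in for a game executable")
    record = version_record(build, "1.0.3 (hypothetical)")

print(json.dumps(record, indent=2))
```

Comparing the stored digest before each session is a cheap check that the game has not silently updated during data collection.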

Adaptation and content generation

Many games tailor the experience to the individual player. Single-player games commonly use techniques like dynamic difficulty adjustment (Hunicke, 2005) to give players a satisfying experience by adjusting difficulty based on their past gameplay performance, or even procedurally generate whole levels to keep content novel for players (Shaker, Togelius, & Nelson, 2016). While these systems aim to provide players with an overall evenly enjoyable experience, they reduce control and predictability of actual moment-to-moment game content for researchers. Whether or not to choose a game with dynamic difficulty adjustment or procedural content generation thus becomes an important research design consideration: if a player experience that is even at a higher level is desired, such games may be the best option (but need pretesting). If low-level control is needed, they are to be avoided.

Multiplayer games

Other players in multiplayer games are sources of variation. For example, one participant may face an easy opponent, while another faces an experienced opponent. While competitive multiplayer games use ranking and matchmaking systems to provide players with an overall even, fair experience (Sarkar, Williams, Deterding, & Cooper, 2017), individual match experiences still differ in many respects. Similarly, online player communities may differ between servers and change over time in their size, activity, demographics, norms, and practices (Bartle, 2004). Thus two players of the same multiplayer game may have different experiences based on when they played and who they played with.

This variance of multiplayer games can be somewhat controlled by using confederates or bots. However, this may in turn produce history effects (when confederates tire out over multiple plays) or threaten ecological validity, as scripted human or bot play may differ from spontaneous play. Where multiple study participants play together, it may be appropriate to use a Group Randomised Trial design to adjust for intraclass correlation (Murray, 1998).
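To illustrate why intraclass correlation matters when participants play together, the following sketch computes the standard design effect, 1 + (m − 1) × ICC, and the resulting effective sample size. It assumes equally sized play groups, and the numbers used (group size 4, ICC 0.2, 120 participants) are purely hypothetical.

```python
def design_effect(group_size, icc):
    """Variance inflation from clustering, assuming equal group sizes."""
    return 1.0 + (group_size - 1) * icc


def effective_sample_size(n_participants, group_size, icc):
    """Number of independent observations the clustered sample is worth."""
    return n_participants / design_effect(group_size, icc)


# Hypothetical study: 120 participants playing in groups of 4, ICC = 0.2.
deff = design_effect(group_size=4, icc=0.2)
n_eff = effective_sample_size(n_participants=120, group_size=4, icc=0.2)
print(f"design effect: {deff:.2f}, effective sample size: {n_eff:.1f}")
```

Under these assumed values, 120 participants carry roughly the information of 75 independent ones, which is why analyses that ignore the grouping tend to overstate precision.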

Framing

People’s everyday life is organised into different kinds or types of social situations that each come with particular roles, norms, and expectations: going to the movies, shopping at a store, giving a lecture, etc. During socialisation, people learn what kinds of situations exist in their society, and how to understand and act appropriately within them. Sociologist Erving Goffman (1986) first extensively studied these kinds of situations, calling them

Play May Differ From the Target Situation

One oft-mentioned feature of digital games is that they allow the

Games mute socio-material consequences

As mentioned, in-game events usually have lowered practical and symbolic consequences compared to their non-play-framed counterparts. While gambling and the rise of real-money trading and microtransactions around in-game items provide plenty of counterexamples, in many games, there is no bodily or economic risk involved in game outcomes. Players may therefore be more risk-taking in games than they would be in the real world. There is rich related debate in economics on how much participants need to be paid and what real-world payout consequences there need to be for participant decisions during a study for the study to count as ecologically valid (Camerer, Hogarth, Budescu, & Eckel, 1999). Several studies on economic games find differences in player choice when monetary incentives are added (Schlenker & Bonoma, 1978).

Games invite strategic action

Games are one of the few social contexts in which ruthlessly rational, strategic, self-interested action is allowed and even desired: a player who doesn’t try hard to calculate and take optimal moves in order to win would be considered a spoilsport (Deterding, 2014). This norm of gameworthiness is counterbalanced with norms of playworthiness – having fun together – which may result in suboptimal behaviour like self-handicapping. However, different game contexts and genres come with different norms about how ruthlessly one is allowed and expected to play (Deterding, 2014), and these norms may differ from the situational norms of the activity or context that one wishes to collect data on. For instance, if a game is designed to elicit people’s preferences about different flavours of ice cream, and there is a strategic in-game advantage to answer “chocolate” even if one

Research Studies May Differ From Play

Like play, research studies also constitute a social frame with norms and expectations of their own – what psychology calls demand characteristics (Orne & Whitehouse, 2000). These pose game-characteristic validity threats where they interact or clash with the norms and expectations of gameplay now being re-framed as a research study.

Experiments may force gameplay against player preference

First, in leisurely gameplay, players expect autonomy over which game they play, when, and for how long (Deterding, 2016b). However, in research studies, participants are generally assigned to predetermined gameplay conditions. This may lead to frustration as participants may be made to play games they would not usually choose to play and may not like (Ferguson et al., 2017). Certain games may be more widely acceptable than others. For example, players of first-person shooters may be happy to play a casual puzzle game, whereas the reverse may not be true. More generally, genre, controls, or required energy and time to learn may all present differential barriers to engagement with different games (Brown & Cairns, 2004). Thus, they may all interact with player dispositions (genre preference, controller familiarity, etc.) that may covary with other player features (age, gender) to produce patterned differences in play outcomes, engagement, and the like that may confound the treatment-outcome correlation under study.

Game conditions may differ

If study participants learn about the differences in treatment and control groups, they may adjust their behaviour accordingly, a validity threat that is usually discussed under the labels of compensatory rivalry and resentful demoralisation (Shadish et al., 2002). Compensatory rivalry is the phenomenon wherein a control group puts in extra effort in order to compete against a group receiving an intervention. This may be exacerbated in game-based research given the competition-embracing social norms of gameplay (Deterding, 2014).

Resentful demoralisation is the opposite effect, where one group is demoralised by being put in the inferior condition. Games are generally expected to be fun, but often two experimental conditions cannot be equally fun. Participants who view their game condition as inferior may be subject to resentful demoralisation, e.g. if their condition is excessively difficult or particularly easy. Similarly, if participants are recruited on the basis of playing a game, they may be demoralised to find the game is different to what they anticipated, or they are in a non-game control condition.

Player Factors

In the preceding sections, we discussed principled issues that arise from the constitution of games, no matter the

Gamers are not the general population

Gamers are diverse

Gamers Are Not the General Population

To draw inference from a sample to a wider population, the sample must be representative of that population. Else, the external validity of the study is threatened. This is a particular concern for games-based research in that gaming is a voluntary pursuit: individuals self-select to play games, which may lead to sampling bias. Problems arise when characteristics of being a

For some, gaming is part of their identity, while others regularly play games without self-identifying as gamers. Yet no matter if they self-identify as gamers or not, people who play games often share certain characteristics such as

It seems likely that gamers will be more interested in taking part in a study involving games compared to non-gamers. This is evidenced in online surveys, where proficient players may be over-represented (Khazaal et al., 2014). As a result, games-based research may attract a participant sample with particular skills and knowledge that deviate from the general population. Relatedly, gamer identity and gaming capital may interact with using a game for data collection. Participants who do not identify as gamers (e.g. older adults; McLaughlin, Gandy, Allaire, & Whitlock, 2012) may suffer stereotype threat: by anticipating that they will perform badly, their actual performance is decreased (J. L. Smith, 2004). Similarly, expectations about gameplay may heighten evaluation apprehension. While self-identifying gamers may find it socially desirable to perform well in a game and exert extra effort, non-gamers still often view games as a waste of time. Thus, non-gamers may want to downplay their investment in and performance at games, unless the gameplay has a socially acceptable justification (Deterding, 2017). Put differently, social desirability may produce significant performance differences between participants identifying as gamers or non-gamers.

Finally, the use of games as a research instrument may have differential effects on attrition, the drop-out of study participants over time. Some degree of attrition is common with long-running studies, and game-based interventions are in fact sometimes used to promote long-term behaviour change, such as ZOMBIES, RUN! (Six to Start, 2012) or SUPERBETTER (Johnson et al., 2016; McGonigal, 2012). However, self-identifying gamers or participants with high gaming capital might be more likely to persist with a game-based experiment or treatment, or in contrast, abandon a study earlier because they have higher expectations of game design or perceive the intervention as less novel.

Gamers Are Diverse

The gamer stereotype of a white heterosexual male teen (Shaw, 2012) doesn’t reflect the growing diversity of people who play games (Williams, Yee, & Caplan, 2008). That said, playing a game is generally seen as a voluntary activity that individuals self-select into based on their preferences (Deterding, 2016b). Existing gaming literacy, socio-economic status, and the like may also affect what kinds of gaming devices and games people access. Hence, certain player characteristics may be over- or under-represented among the users of certain games, genres, or platforms. While recruiting from a console multiplayer first-person shooter may result in a more white, male, gamer-identifying

A related problem frequently raised in the literature is that players typically show different degrees of expertise in different game genres (Latham et al., 2013). Games may differ substantially in the skills they involve: one game may train twitch skills, while another may train executive processes. Differential effects of training with different video games were identified by Subrahmanyam and Greenfield (1994). Put differently, gaming literacy is not a unitary construct. Researchers should control for genre-related expertise to ensure that differential representation across conditions doesn’t confound results.
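One way to keep genre-related expertise from being unevenly represented across conditions is stratified random assignment: participants are grouped by expertise level and randomised to conditions within each group. The sketch below is illustrative only. The expertise labels, participant identifiers, and function name are hypothetical, and it assumes expertise has already been measured, for example through a pre-study questionnaire.

```python
import random
from collections import defaultdict


def stratified_assignment(participants, conditions, seed=0):
    """Randomise to conditions separately within each expertise stratum.

    participants maps a participant id to a stratum label.
    """
    rng = random.Random(seed)  # seeded so the allocation can be reproduced
    strata = defaultdict(list)
    for pid, stratum in participants.items():
        strata[stratum].append(pid)

    assignment = {}
    for stratum in sorted(strata):  # fixed stratum order for reproducibility
        members = strata[stratum]
        rng.shuffle(members)
        # Hand out conditions in rotation so each stratum is split evenly.
        for i, pid in enumerate(members):
            assignment[pid] = conditions[i % len(conditions)]
    return assignment


# Hypothetical sample: four genre experts and four genre novices.
sample = {
    "p1": "fps_expert", "p2": "fps_expert", "p3": "fps_expert", "p4": "fps_expert",
    "p5": "fps_novice", "p6": "fps_novice", "p7": "fps_novice", "p8": "fps_novice",
}
allocation = stratified_assignment(sample, ["control", "treatment"], seed=7)
for pid in sorted(allocation):
    print(pid, sample[pid], allocation[pid])
```

Stratification handles expertise at the design stage. Where that is not possible, measured expertise could instead be entered as a covariate in the analysis.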

A third common difference among players is so-called

Discussion and Conclusion

Games are increasingly popular ways to harness research data from large (online) populations – be it as the natural exhaust of entertainment games, be it games intentionally chosen or designed as research instruments. While there has been some work on the validity of games-centred media effects and learning research, little has been done on the validity threats of using games to collect data for non-game-related research questions. We therefore offered a systematisation of potential validity threats of game-based research. While game-based research is just as susceptible to general threats to validity (Shadish et al., 2002) such as publication bias (Ferguson, 2007) or issues surfaced in the current debate on reproducible research (Munafò et al., 2017), we particularly focused on the validity issues characteristic of games and their players.

The latter are maybe the most straightforward to address. Games, and especially particular game genres, still attract particular populations with particular preferences and abilities. To some extent, this issue is self-correcting as game-playing becomes ever more prevalent and normalised across populations. Remaining bias can be controlled for with relatively standard research design measures, or simply documented as a limitation.

A less straightforward validity issue is that games are complex systems from which gameplay and player experience emerge non-linearly. This makes it fundamentally difficult to manipulate just one game parameter (as an experimental treatment) without potentially also changing many others, producing potential confounds that threaten internal validity. And because much of the enjoyment and engagement of games revolves around curiosity stoked by novelty and competence fuelled by experiences of learning, games can show strong maturation and attrition effects: playing the same content twice is simply not as fun or challenging as the first time around. The standard methodological response is larger sample sizes with between-subject designs, or within-subject designs with randomised ordering of conditions (Shadish et al., 2002). Another solution may be to pretest manipulations prior to the actual study, involving game design expertise to ensure no unexpected emergent confounds manifest.
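Randomised ordering of conditions in a within-subject design can be implemented in several ways. The following is a minimal sketch of one of them, full counterbalancing across all possible orders, under stated assumptions: the function name `assign_condition_orders` and the condition labels are hypothetical, and the approach is only practical for a small number of conditions, since the number of orders grows factorially.

```python
import random
from itertools import permutations

def assign_condition_orders(participant_ids, conditions, seed=0):
    """Give each participant one order of conditions, balanced over all orders."""
    rng = random.Random(seed)  # fixed seed so the assignment is reproducible
    orders = list(permutations(conditions))  # every possible condition order
    ids = list(participant_ids)
    rng.shuffle(ids)  # which participant gets which order is random
    # Cycle through the orders so each is used (nearly) equally often
    return {pid: orders[i % len(orders)] for i, pid in enumerate(ids)}

# Illustrative data: 12 participants, three hypothetical difficulty conditions
assignment = assign_condition_orders(range(12), ["easy", "medium", "hard"])
```

Because every order occurs equally often when the sample size is a multiple of the number of orders, novelty and learning effects are spread evenly over conditions rather than accumulating in whichever condition is played last.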

Furthermore, we found that games, game content, and gameplay are highly varied, and researchers often have relatively little control over what players do and experience in a game and in what order. This makes statistical testing more challenging (or at least often requires larger sample sizes), and threatens internal, construct, and external validity. Different games vary on many dimensions, including, crucially, genre. This cautions against operationalising different conditions of constructs as different games, or generalising findings from one game to another, let alone to other game genres. In educational research, authors like Squire (2011) have therefore called to replicate studies on the effects of particular design or instructional strategies across

Beyond such first stabs at mitigating strategies, we think the issues of systemic complexity and variance point to a more fundamental research need in games that is as much theoretical as methodological. Games and gameplay can be described on multiple levels of organisation (Klimmt et al., 2007). Developer experience suggests that some or even most of the socially and psychologically functioning mechanisms are located on higher, molar levels of organisation (Hunicke et al., 2004). For instance, providing

A final game characteristic threatening validity we identified was social framing: research studies and gameplay are both very particular types of social situations with very particular orderings, norms, roles, and expectations, which may already be triggered simply by verbally and visually labelling a situation as a game or an experiment. While some evidence suggests a close correlation of in-game and real-life behaviour, some suggests marked differences (Deterding, 2016a). Scholars like Dmitri Williams (2010) have therefore called for a systematic research programme on the mapping of real and virtual worlds. Almost ten years later, we are still dearly in need of such a concerted effort. By highlighting two characteristic features of play situations – lowered consequence and a license for strategic action – we hope to have given some starting points for it.

And in light of the preceding pages, one may add a second research programme, concerned not just with the meta-methodological preconditions of game-based research (when and where can games even function as research instruments?), but with its

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the

Author Biographies
