Abstract

The behavioral policies Nudge and Boost are often advocated as good candidates for evidence-based policy. Nudges present or “frame” options in a way that triggers people’s decision-making flaws and steers them in the direction of better choices. Nudge aims to do this without changing the options themselves. Boosts also present choices in alternative ways without changing options. However, rather than steering, Boosts aim to increase people’s competences. Nudge and Boost originated in extensive research programs: the “heuristics-and-biases program” and the “fast-and-frugal heuristics program,” respectively. How exactly do Nudge and Boost policies relate to the theories they originated from in the first place? Grüne-Yanoff and Hertwig labeled this a question of “theory-policy coherence” and proposed to use it for determining the evidence base of Nudge and Boost. I explore the question: “To what extent is theory-policy coherence in Nudge and Boost relevant for evidence-based policymaking?” I argue that the implications of (weaker or stronger) theory-policy coherence are relevant in two ways. First, Grüne-Yanoff and Hertwig show that theory-policy coherence between Nudge and Boost and the research programs is not as strong as often assumed. It is crucial for the evidence-based policymaker to realize this. Assuming theory-policy coherence while it does not exist or is weaker than assumed can lead to an incorrect assessment of evidence. Ultimately it can even lead to adoption of policies on false grounds. Second, the concept of theory-policy coherence may assist the policymaker in the search and evaluation of (mechanistic) evidence. However, in order to do so, it is important to consider the limitations of theory-policy coherence. It can neither be employed as the (sole) criterion with which to determine how well-grounded a policy is in theory, nor be the (sole) basis for making comparative evaluations between policies.

Introduction

Evidence-based policy prescribes that policymakers adopt those policies that are backed up by the “best evidence” (e.g., Davies and Nutley 2000; Sanderson 2002; Head 2010). Although there is no single widely accepted definition of evidence-based policy, it generally holds that policies should be based on extensive or high-quality experimental research, such as randomized controlled trials and meta-analyses (e.g., Cartwright, Goldfinch and Howick 2009, Cartwright and Hardie 2012, Khosrowi 2019). The behavioral policies of Nudge (Thaler and Sunstein 2008) and Boost (Grüne-Yanoff and Hertwig 2016; Hertwig and Grüne-Yanoff 2017) are often promoted as good candidates for evidence-based policy (e.g., Vlaev et al., 2016; Hertwig 2017; Schubert 2017).

A Nudge steers the chooser’s behavior away from the behavior implied by the cognitive shortcoming and towards the choice she would have made had she been fully rational (e.g., healthier food choices), without changing the set of available options.

Nudges do this by invoking people’s cognitive flaws, that is, by changing features of the choice that people would generally say they do not care about (e.g., position in a list, default, framing). Yet the chooser should be able to easily choose otherwise.

Boosts can roughly be defined as policies that aim to expand people’s competences to reach their own objectives, without presupposing what these objectives are. Three classes of Boosts can be distinguished: “Policies that (1) change the environment in which decisions are made, (2) extend the repertoire of decision-making strategies, skills, and knowledge, or (3) do both.”

See Grüne-Yanoff and Hertwig (2016, 2017).

Examples of Nudges are opt-out systems for organ donation (e.g., Johnson and Goldstein 2003; Thaler and Sunstein 2008), saving programs that ask employees to save from a future salary increase rather than their current salary (Thaler and Benartzi 2004; Thaler and Sunstein 2008), and “framing” by means of the wording of choice options in positive or negative terms (e.g., Kahneman 2003; Rothman et al., 2006; Thaler and Sunstein 2008).

Examples of Boosts are fast-and-frugal decision trees that replace lengthy diagnostic questionnaires for healthcare workers (e.g., Jenny et al., 2013), statistical training (e.g., Gigerenzer and Hoffrage 1995; Sedlmeier and Gigerenzer 2001) and changing the representation of statistical information from relative to natural frequencies (Gigerenzer et al., 2007; Kurz-Milcke et al., 2008).

Both Nudge and Boost policies originate from extensive research programs. Within these research programs, experimental evidence generally shows that contextual features heavily influence the choice behavior of individuals, and (cognitive) theories aim to explain why they do so (e.g., Thaler, 1985; Kühberger and Tanner 2010). However, the research programs tell different “stories” about people’s competences and how to correct decision-making mistakes (e.g., Katsikopoulos 2014). Nudge originates from the heuristics-and-biases research program (e.g., Tversky and Kahneman 1974; Thaler and Sunstein 2008), which holds that the limits on people’s time, knowledge and cognitive powers prevent them from making good decisions. Boost originates from the fast-and-frugal heuristics research program (e.g., Gigerenzer and Hoffrage 1995; Gigerenzer et al., 1999). The fast-and-frugal heuristics research program holds that, despite bounds on their time, knowledge and cognitive powers, people are in fact often successful decision-makers because they use heuristics in environments for which these are fit (“ecological rationality”; see for instance Goldstein and Gigerenzer 2002, Todd and Gigerenzer 2007, Todd et al., 2012, Gigerenzer 2019).

How exactly do Nudge and Boost policies relate to the research programs they originated from in the first place? Grüne-Yanoff and Hertwig (2016) labeled this question the problem of “theory-policy coherence” and proposed to use it for determining the evidence base of Nudge and Boost. In this article, I explore the following question: To what extent is the concept of theory-policy coherence relevant for evidence-based behavioral policymaking?

I argue that there are two ways in which theory-policy coherence is relevant for the evidence-based policymaker interested in Nudge and Boost. First, I issue a warning against adopting an overly simplistic view of theory-policy coherence, one in which Nudge and Boost are fully coherent with and “naturally flow out” of the research programs they originate in. Rather, the coherence between Nudge and the heuristics-and-biases research program, or Boost and the fast-and-frugal heuristics research program, is often not as strong as might seem intuitive to assume. It is crucial for the evidence-based policymaker to realize this. For instance, falsely assuming strong theory-policy coherence can lead to the consideration of evidence that is unrelated to the policy and ultimately to justification and adoption of policies on the wrong grounds. Theory-policy coherence should be used with care, or not at all. Second, I will show that policymakers can profit from analyzing theory-policy coherence, provided they do so in a sophisticated and considered manner, taking into account the complexities of the relations between the theories and policies in question. Theory-policy coherence can be of great help to the policymaker in the search and evaluation of (mechanistic) evidence, or so I will argue.

The paper is structured as follows: in the first section, entitled A First Look on Theory-Policy Coherence in Nudge and Boost, I analyze a natural, intuitive way to think about theory-policy coherence in Nudge and Boost, dubbed the “simple view.” In the second section, entitled Theory-Policy Coherence by Grüne-Yanoff and Hertwig, I discuss a more sophisticated view of theory-policy coherence as developed by Grüne-Yanoff and Hertwig (2016). I analyze how they show that Nudge and Boost are not as coherent with the heuristics-and-biases and fast-and-frugal heuristics research programs as generally thought. However, I also argue that Grüne-Yanoff and Hertwig’s account of theory-policy coherence has some challenges of its own. It is too restrictive: it does not assess coherence on policy assumptions that are not necessary for implementation but are crucial for comparing and selecting one policy over the other. It is also too permissive: it allows strong theory-policy coherence on vague theories about the mechanisms underlying people’s behavior. In the third section, entitled The Relevance of Theory-Policy Coherence for Evidence-Based Policy, I argue that the concept of theory-policy coherence may still help the policymaker in the search for and evaluation of evidence, provided that neither the simple nor Grüne-Yanoff and Hertwig’s view are adopted without due consideration of their limitations. The last section presents Conclusions.

A first look on theory-policy coherence in Nudge and Boost

A first, intuitive way to start thinking about theory-policy coherence in Nudge and Boost is to consider how they draw on the main theories and findings of the heuristics-and-biases research program and the fast-and-frugal heuristics program, respectively.

Let us start with Nudge and the heuristics-and-biases program. According to the heuristics-and-biases program, people often violate the criteria for rationality held by mainstream economics, such as consistency, transitivity, and the rules of first-order logic (e.g., Thaler and Sunstein 2008; Tversky and Kahneman 1974). Experiments conducted within this research program show that minor changes in the way the choice is presented influence the choices that people make. The heuristics-and-biases program also aims to explain these violations of rationality. Scholars argue that people “suffer” from biases and heuristics, which are “shortcuts” or “rules of thumb” in reasoning (e.g., Benartzi and Thaler 2007; Gilovich, Griffin and Kahneman 2002; Jolls et al., 1997). For instance, as Sunstein et al. (1998) state: “They suffer from certain biases, such as overoptimism and self-serving conceptions of fairness; they follow heuristics, such as availability, that lead to mistakes.”

Nudge aims to help people make better decisions, as they themselves would have judged had they been fully rational, and is often modelled and legitimated with reference to these elements of the theoretical framework of the heuristics-and-biases program. For instance, a paradigmatic Nudge focuses on the default choice option (e.g., Johnson and Goldstein 2003; Johnson and Goldstein 2004; Thaler and Sunstein 2008; Kaiser et al., 2014). In a default Nudge, there is one option that is automatically chosen if one does not choose otherwise or opt out. An example is an opt-out system for organ donation in which citizens are automatically registered as organ donors unless they actively de-register. The effect of these opt-out systems is explained by heuristics and biases such as the “endowment effect” and the “endorsement effect” and/or lack of willpower (e.g., Abadie and Gay, 2006; Dinner et al., 2011; Frederick 2002; Grüne-Yanoff and Hertwig 2016; Hertwig and Grüne-Yanoff, 2017; Sunstein and Thaler 2003; Thaler and Sunstein, 2008; Davidai et al., 2012).

In contrast, the fast-and-frugal heuristics program does not commit to mainstream economics’ criteria for rationality (e.g., Gigerenzer and Hoffrage 1995; Gigerenzer et al., 1999). Rather, its proponents argue for an entirely different concept of rationality: “ecological rationality.” Roughly, ecological rationality concerns the fit between goals, environments, and the strategies (e.g., heuristics) used. In contrast to the view of the heuristics-and-biases program, using a particular heuristic is considered rational if that heuristic is the right strategy in the right environment. In real life people do not have unlimited information, time and brainpower, and so using simple heuristics as rules of thumb is often efficient (Gigerenzer 1994, 1996, 2008). Moreover, even in situations in which full information, time, and “brainpower” are available, simple heuristics sometimes still lead to better decision outcomes. That is, despite requiring less cognitive effort and not considering all information, simple heuristics still obtain similar or higher accuracy, especially when applied to new information. One of the main reasons for this is “overfitting” of more complex decision-making processes. This roughly entails that the decision-making process is highly accurate when applied to past data, but not when applied to new data. This is for instance the case if too much meaning is attached to (noise from) past data when the model or decision-making process is applied to new information (e.g., Martignon and Hoffrage 2002).

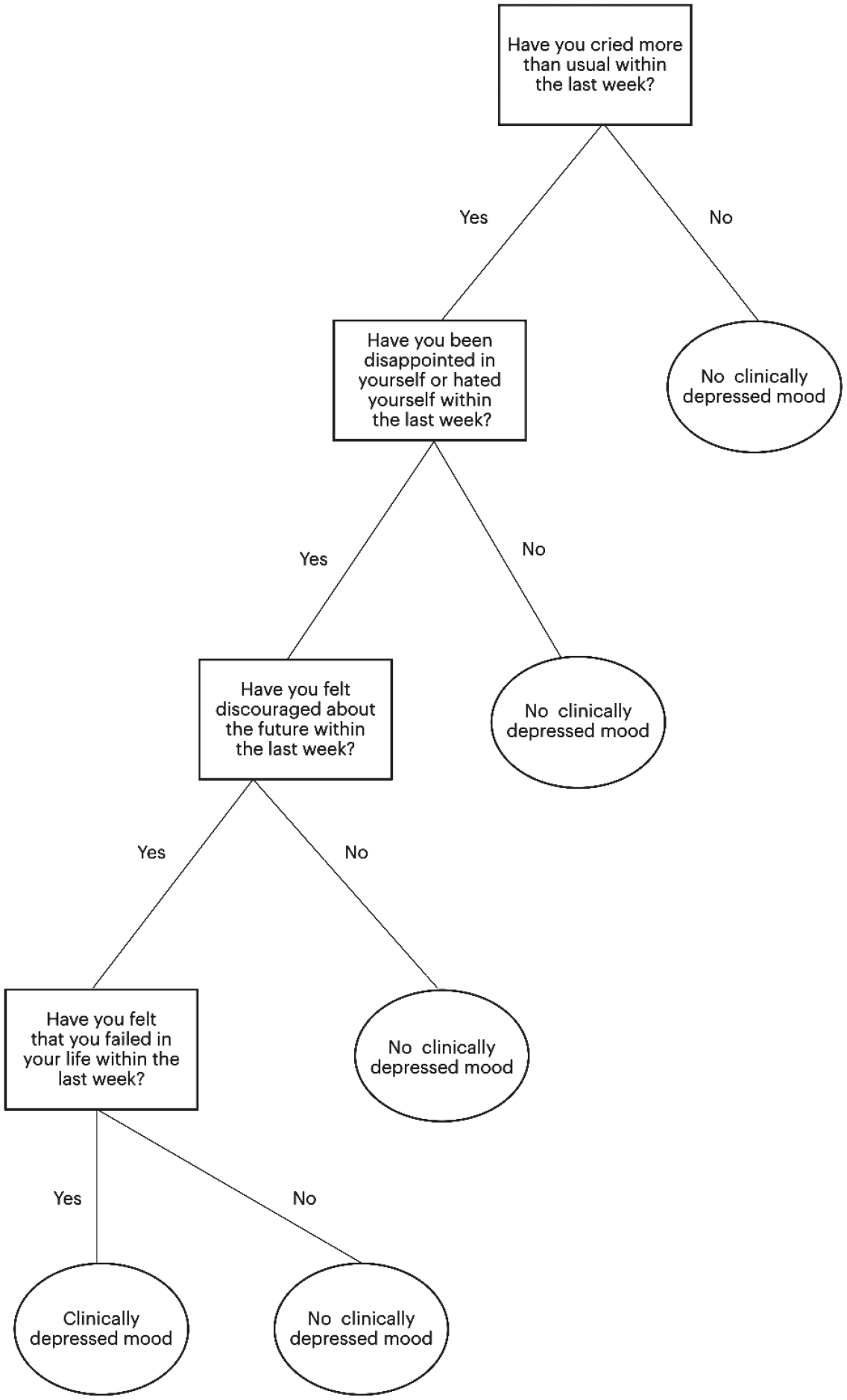

A paradigmatic example of a Boost is a so-called Fast-and-Frugal Tree (FFT) (e.g., Gigerenzer et al., 1999; Martignon et al., 2003; Gigerenzer, 2004; Katsikopoulos et al., 2008; Luan et al., 2011). FFTs are small decision trees generally used to replace long and complicated decision-making processes (which take all potentially relevant information into account to perfectly “calculate” the right answer). The FFT contains just a small number of yes-or-no questions organized in a simple tree. An example of an FFT is one that helps a psychologist to determine whether a client is clinically depressed (e.g., Martignon et al., 2008; Jenny et al., 2013). For an example of an FFT, see Figure 1.
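To make the structure of such a tree concrete, the following is a minimal sketch in Python of how an FFT might be represented and evaluated. It is an illustration only and is not taken from any of the cited studies: the cue names (`cue_1`, `cue_2`, `cue_3`), the decision labels, and the function `fft_decide` are hypothetical placeholders. The sketch assumes the common reading of an FFT in which every question offers an exit on one of its two answers, and the last question exits on both.

```python
from typing import Dict, List, NamedTuple


class Node(NamedTuple):
    cue: str        # name of a yes/no cue (hypothetical placeholder)
    exit_on: bool   # the answer that ends the tree at this node
    decision: str   # the decision returned when that answer is given


def fft_decide(tree: List[Node], default: str, answers: Dict[str, bool]) -> str:
    """Walk the cues in order and stop at the first exit that fires."""
    for node in tree:
        if answers[node.cue] == node.exit_on:
            return node.decision
    # No exit fired: the last cue's other branch yields the default decision.
    return default


# Illustrative three-cue tree; cues and labels are assumptions, not clinical content.
EXAMPLE_TREE = [
    Node("cue_1", False, "not flagged"),
    Node("cue_2", True, "flagged"),
    Node("cue_3", True, "flagged"),
]
DEFAULT = "not flagged"

if __name__ == "__main__":
    # Only the first cue is consulted here, because its exit fires immediately.
    print(fft_decide(EXAMPLE_TREE, DEFAULT, {"cue_1": False}))
    print(fft_decide(EXAMPLE_TREE, DEFAULT,
                     {"cue_1": True, "cue_2": False, "cue_3": True}))
```

The sketch shows the sense in which the tree is “frugal”: once an exit fires, the remaining cues are never looked up, so a decision can be reached on a single answer.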

Proponents of Boost generally argue with reference to the theoretical framework of the fast-and-frugal heuristics program. They argue that if the FFT succeeds in matching the informational structure of the environment, it can outperform decision-making strategies that are informationally and computationally more complex (e.g., Kozyreva and Hertwig, 2021), and that it takes into account that people do not have unlimited time, information and cognitive power (e.g., Gigerenzer et al., 1999). The idea of the FFT is that even though it does not contain a fully specified account of all nuances, it is still accurate and also more efficient than a normal questionnaire or a more comprehensive decision-making process. In other words, it does not “steer” people in the direction of the choice they would have made had they been fully rational. Rather, it aims to enhance people’s decision-making competences, such that they have more accurate skills and beliefs which help them make better choices.

The above discussions may naturally lead one to adopt what I call the “simple view”: that there is a seemingly direct and strong link between theory and policy. After all, the heuristics-and-biases and fast-and-frugal research programs draw on different concepts of rationality which seem to imply policy proposals with different causal targets. That is, whereas Nudge aims to use the biases and heuristics to steer into a particular choice direction, Boost aims to eliminate the biases and apply the heuristics to help people foster and expand their competences (knowledge and skills) (Grüne-Yanoff and Hertwig 2016; Hertwig and Grüne-Yanoff, 2017).

Considering the results of the heuristics-and-biases and fast-and-frugal heuristics research programs and the goals and designs of Nudge and Boost, the simple view is a natural first step to take. It shows the intuitive attraction of an analysis of Nudge and Boost that does assume the heuristics (and biases) researched in the heuristics-and-biases and fast-and-frugal heuristics programs. It furthermore is a useful benchmark for assessing the relevance of more sophisticated views on the topic, as we will discuss below.

Theory-policy coherence by Grüne-Yanoff and Hertwig

A more sophisticated view on theory-policy coherence

Although some authors have addressed issues concerning lack of theory-policy coherence in Nudge and Boost (e.g., Rebonato, 2012; Malecka 2021), Grüne-Yanoff and Hertwig are the first to develop a comprehensive account of theory-policy coherence. Grüne-Yanoff and Hertwig (2016) demonstrate the complexity of theory-policy coherence in Nudge and Boost by explicitly analyzing whether Nudge and Boost are coherent with the theoretical research programs. They have developed an elaborate account which specifies what theory-policy coherence in Nudge and Boost should entail. In addition, they use their account of theory-policy coherence to compare theory-policy coherence in Nudge and Boost. They argue that while neither policy is fully coherent with the research program they originated from, Boost is less problematic in that sense than Nudge is. Theory-policy coherence as a concept, however, raises many questions. For instance, it raises the question of what coherence itself entails and between which elements or features of the theory we should assess for theory-policy coherence. Not all elements of the theory or research program might be equally relevant for theory-policy coherence evaluation. Furthermore, some aspects of the policies might neither be fully implied by the theoretical research programs, nor contradict them. In the following sections, first the concept that Grüne-Yanoff and Hertwig employ will be analyzed in more detail. Subsequently, aspects of theory-policy coherence which Grüne-Yanoff and Hertwig do not discuss, will be critically assessed on their relevance to the evidence-based policymaker.

Elements for evaluation for theory-policy coherence

Grüne-Yanoff and Hertwig base their theory-policy analysis on what they call “necessary policy assumptions” of Nudge and Boost. Necessary policy assumptions are the implicit and explicit assumptions underlying Nudge and Boost policies that need to be fulfilled in order for the policies to be successfully implemented. More specifically, Grüne-Yanoff and Hertwig are concerned with the question of which cognitive theories Nudge and Boost assume if they aim to help people make better choices. Furthermore, they evaluate the implications of these theories for the decision-making competencies and flaws of both policymakers and the people subject to the policies.

Grüne-Yanoff and Hertwig (2016) categorize four domains on which they identify necessary policy assumptions in Nudge and Boost:

1) cognitive mechanisms: error awareness and controllability,

2) policymakers’ knowledge of people’s goals and their distribution in the population,

3) characteristics of the people the policies are trying to help: minimum competence and motivation on the part of the agents, and

4) characteristics of the policymaker: benevolent and less error-prone policymakers.

Grüne-Yanoff and Hertwig argue that Nudge and Boost hold contrasting necessary policy assumptions. That is, Nudge holds necessary policy assumptions that Boost typically does not hold and vice versa. For instance, according to Grüne-Yanoff and Hertwig, knowledge of people’s goals and their distribution within society are necessary policy assumptions for Nudge, but not for Boost. This is because Boosts do not aim to steer people in a particular direction. They just aim to help people understand the choice situation better and expand their skills repertoire. Often, Boosts are developed on request. Grüne-Yanoff and Hertwig state that: “boost policies, unlike nudge policies, require only minimum knowledge of goals (e.g., whether statistical literacy and shared decision making are desired goals), and no knowledge of how they are distributed in the population. This is because boost policies typically leave the choice of goals to people themselves (e.g., it remains the sole decision of the statistically literate person whether or not to participate in cancer screening) or design effective tools in response to a demand expressed by professionals” (Grüne-Yanoff and Hertwig 2016: 166).

Nudge, in turn, steers people in the direction of the best choice option, as judged by the people themselves (Grüne-Yanoff and Hertwig 2016; Rebonato, 2012; Thaler and Sunstein 2008). Therefore, policymakers should know what these options are and how they are distributed within society. Furthermore, an example of a necessary policy assumption for Boost that Nudge explicitly does not have, according to Grüne-Yanoff and Hertwig, is the competence to learn new skills and the motivation to apply them. This is a necessary policy assumption for Boost since Boost requires awareness on the part of the agents and is focused on training people to use new, better, simple heuristics. Nudge, on the other hand, does not have the ambition to teach people new heuristics and therefore does not need people to have the ability to learn new heuristics or the motivation to apply them.

Contrary to simply assuming theory-policy coherence, Grüne-Yanoff and Hertwig tell us what the relevant elements are between which coherence is to be established and why. From the above it follows that, on their account, theory-policy coherence could exist even if some elements of the theory are not assumed by the policy, as long as these elements are not necessary policy assumptions.

Coherence

Grüne-Yanoff and Hertwig’s evaluation of theory-policy coherence in Nudge and Boost is best illustrated by going through a few examples. A first example: Grüne-Yanoff and Hertwig argue that there is coherence between Boost’s necessary policy assumptions “controllability and awareness of the error” and the fast-and-frugal heuristics research program. For instance, in the case of an FFT, people need to actively swap their former decision-making process, such as a lengthy questionnaire, for the FFT. Hence, they argue, people need to be aware that the previously used decision-making process is faulty and that the FFT will generate better results. Furthermore, people need to be able to apply the FFT, so in that sense they need to control their faulty reasoning and make the swap. Research within the fast-and-frugal heuristics program indicates that agents often are aware of their mistakes, in the sense that they know that not every answer produced by a heuristic will be correct. In some cases, the answer produced by the heuristic can be overridden (e.g., Goldstein and Gigerenzer 2002; Pachur and Hertwig 2006; Volz et al., 2006; Grüne-Yanoff and Hertwig 2016). So here the research program holds the exact same assumption as the policy and even has empirical support for it.

Furthermore, Grüne-Yanoff and Hertwig seem to argue that there is weak theory-policy coherence between Boost and the fast-and-frugal heuristics research program on the necessary policy assumptions “motivation and minimum competence of the agent.” People who are being boosted need to actively apply the Boost. They thus need to have minimal competences to do this and be motivated to actually do it. Since the fast-and-frugal heuristics program does not say anything explicitly on these subjects, Grüne-Yanoff and Hertwig argue that regarding these assumptions theory-policy coherence is not present, but also not as profoundly absent as for some of the Nudge assumptions (Grüne-Yanoff and Hertwig 2016: 173–174). Hence, the policy assumption is consistent with the theoretical framework of the fast-and-frugal heuristics research program.

Lastly, and related to the previous point, Grüne-Yanoff and Hertwig argue that the necessary policy assumptions for Nudge “knowledge of people’s goals and the distribution of them within society” and “less error-prone and benevolent policymakers” are contrary to the heuristics-and-biases research program. According to them, since the heuristics-and-biases framework holds that people do not have stable preferences, it is difficult to find out what agents’ goals are. Furthermore, they point out that according to the heuristics-and-biases framework even highly educated people and other people who are supposed to be experts suffer from these decision-making flaws. Hence, based on the heuristics-and-biases research program, it is highly unlikely that policymakers would not be subject to biases and heuristics (Grüne-Yanoff and Hertwig 2016: 172–173). Grüne-Yanoff and Hertwig show that, in this case, the policy assumption is contrary to the heuristics-and-biases framework and there is no theory-policy coherence.

Looking closely at Grüne-Yanoff and Hertwig’s analysis, we can see that they use a qualified concept of consistency. Grüne-Yanoff and Hertwig consider a policy coherent with the research program when the policy assumption is implied by the theory (possibly as one of several implications). Weak theory-policy coherence exists when the policy assumption is neither implied nor contradicted by the theory. There is no theory-policy coherence when the necessary policy assumption is contrary to the findings and assumptions of the theory. With this analysis, Grüne-Yanoff and Hertwig show that the relationship of Nudge and Boost with the research programs they originated from is complex. Questions such as whether policymakers fall prey to the same decision-making flaws as the agents they are trying to help are crucial for evidence-based policy design. However, even though thinking in a more sophisticated way about theory-policy coherence is a crucial step forward in evaluating the theoretical commitments of Nudge and Boost, the concept is not without its pitfalls. The next section discusses crucial challenges for theory-policy coherence.

Challenges for theory-policy coherence

Coherence as consistency

The policy assumptions underlying Nudge and Boost draw on the existence of various cognitive mechanisms underlying people’s decision-making processes. Theory-policy coherence exists when necessary policy assumptions are also assumed within the theoretical frameworks of the research programs. However, the heuristics-and-biases and fast-and-frugal heuristics research programs do not always succeed in the identification of the operative mechanisms underlying the experimental results (e.g., Barton and Grüne-Yanoff, 2015; Grüne-Yanoff, 2016). For instance, it is unclear whether people stick with defaults because of loss aversion, lack of willpower or because of the endorsement bias. This brings about at least two challenges for concepts of theory-policy coherence. First, if a policy is coherent with a theory, it remains unclear how well grounded it is in theory at that point. For instance, a policy drawing on lack of willpower can be coherent with the heuristics-and-biases program because it has identified lack of willpower as one of the possible operative mechanisms. Hence, coherence shows that, in theory, the policy could work if willpower is the operative mechanism, but it does not offer the theoretical resources to show when this is the case. In other words, there can be strong theory-policy coherence despite, or even because of, a vague theory that cannot successfully identify the operative mechanisms. After all, the more unspecific a theory is about a certain mechanism and the conditions under which it works, the easier it is for a policy to be coherent with that theory. Second, and relatedly, it is difficult to compare theory-policy coherence in policies, because it does not distinguish between policies that are coherent with a vague theory that cannot identify operative mechanisms and policies that are coherent with a more concrete theory that can do so.
The question is thus how a policy could be meaningfully coherent with a theory if that theory fails in identifying the working cognitive mechanisms, such as certain biases and heuristics. Hence, theory-policy coherence is (too) permissive, because it leaves open the possibility that strong coherence would be established with a vague theory.

Furthermore, precisely because the theories of the heuristics-and-biases and fast-and-frugal heuristics programs are sometimes not sufficiently specific and cannot identify the operative mechanisms, they do not always imply distinct policy proposals. For example, defaults are explained differently by the fast-and-frugal heuristics framework than by the heuristics-and-biases framework. Whereas the heuristics-and-biases research program explains them by biases such as the endowment effect, the fast-and-frugal heuristics framework explains them through the endorsement effect, in which the default implicitly expresses “social information” on which option is recommended. Therefore, defaults can be considered not only Nudges but also Boosts. This depends on the causal target of the policy as well as the underlying mechanism by which it is legitimated (see for a related point Grüne-Yanoff and Hertwig 2016). Hence, theory-policy coherence cannot make sense of why one and the same policy is sometimes considered a Boost and other times a Nudge, without presupposing certain mechanistic theories and thus presupposing theory-policy coherence. This, in a related context, is also why Grüne-Yanoff argues for more mechanistic evidence for behavioral policies (Grüne-Yanoff and Hertwig, 2016).

These kinds of considerations, of how well grounded a policy is in theory, go beyond an interpretation of theory-policy coherence as (a version of) consistency. Ultimately, what is needed for an operationally meaningful concept of theory-policy coherence is that policy assumptions are “directly” implied by the research program. That is, for theory-policy coherence to exist, it should be established between policies and theoretical frameworks which succeed in identifying the working cognitive mechanisms, such as particular biases and heuristics. In other words, the theoretical framework should imply that the policy at issue indeed invokes a particular cognitive mechanism, and that this brings about behavior change.

Beyond necessary policy assumptions

The necessary policy assumptions that Grüne-Yanoff and Hertwig have identified are related to the debate between Nudge and Boost as competing behavioral policies. That is, the debate that centers around the question: “What are the conditions under which Nudge or Boost is the more effective behavioral policy?” For instance, in this debate, Nudge is often criticized for not being able to steer people in the direction of the choice they would have made had they been fully rational. The policymakers cannot, or so critics argue, determine people’s preferences, precisely because biases and heuristics prevent people from revealing their preferences through their choices (e.g., Rebonato, 2012; Hertwig 2017). Boost advocates claim to evade this challenge by aiming towards a different causal target. They aim to help people have better beliefs rather than steering into a specific choice direction. They claim that in case the goals of the target population are unknown to policymakers, the goals within the population are heterogeneous, or people have conflicting goals, Boost is a better policy than Nudge. That is, when a policy aims to help people make better decisions by their own lights, Boost is the preferred policy. For instance, Hertwig (2017, p. 151) states that: “If policy-makers are uncertain about people’s goals, if there is marked heterogeneity of goals across the population or if an individual has conflicting goals, then boosting is the less error-prone intervention.”

The necessary policy assumptions that Grüne-Yanoff and Hertwig have identified are necessary for the successful execution or implementation of the policy. Without these assumptions being fulfilled, the policy goals cannot be met. Since policies are often justified based on their alleged effectiveness, assumptions like these are thus crucial for the justification of the policy. However, there are also other policy assumptions that may not be necessary for implementation but that are nevertheless crucial for justification of these policies. This is particularly prominent when comparing (behavioral) policies for selection. In order to show this, we return to the debate on whether knowledge of the target audience’s goals is necessary to improve decision-making.

Grüne-Yanoff and Hertwig have identified that “specific knowledge of people’s goals and the distribution of these goals within a target population” is a necessary policy assumption of Nudge. By contrast, this is not a necessary policy assumption of Boost. The policy designer does not need to know what people’s specific goals are. This is because Boost has a different causal target, namely to help people form more adequate beliefs and expand their decision-making skills. As we have seen, Boost advocates also claim that it is very difficult, if not impossible, to know people’s specific goals (e.g., Hertwig 2017, Arkes and Gaissmaier, 2012). Hertwig argues that “experts may even systematically misconstrue what people want for themselves. . . . Even choosers themselves may not always be aware of their goals; sometimes they may need to work them out, and to do that, they need transparent information and the competence to process it” (Hertwig 2017: p. 152). Furthermore, as discussed, it is argued that, under particular circumstances, Boost is therefore a more effective and ethical policy than Nudge (Grüne-Yanoff and Hertwig 2016; Hertwig 2017). Hence, an assumption underlying a justification for choosing Boost over Nudge is that “it is very difficult or even impossible to know people’s specific goals and the distribution of these goals within a target population.” However, this assumption clearly is not a necessary assumption for the successful implementation of the policy. Boost could be successfully implemented even if it were easy to obtain knowledge about people’s specific goals. Yet, the very assumption just quoted is a crucial policy assumption underlying the justification for Boost. Another example of an assumption underlying the justification for a policy is the assumption underlying Nudge that people cannot learn to overcome their biases. Even if people could learn this, Nudge could be successfully implemented. It is therefore not a necessary policy assumption. However, it is an assumption underlying an important justification for choosing Nudge (over Boost). Assumptions like these take a prominent role in the debate about whether to choose one policy over another. Assessing whether these motivations are indeed justified, and whether there really is coherence between the policies and the research programs, is important for those who want to use theory-policy coherence as a tool to compare and evaluate (behavioral) policies.

Grüne-Yanoff and Hertwig’s concept of theory-policy coherence does not include policy assumptions other than those necessary for the implementation of the policy. Hence, their view of theory-policy coherence is (too) restrictive, because there can be “complete” theory-policy coherence even though there is no coherence between the research program at issue and the “comparative” policy assumptions underlying the justifications or motivations for the policy. In other words, there also exist policy assumptions that are not necessary for successful implementation but that do have a crucial role in the debates about whether to choose Nudge over Boost or vice versa.

The relevance of theory-policy coherence for evidence-based policy

The choice for the policymaker

Evidence-based policy prescribes that policymakers adopt those policies that have the best evidence that they work (e.g., Davies and Nutley 2000; Sanderson 2002; Head 2010). Evidence-based policy requires policies to be based on high-quality empirical evidence as well as meta-analyses. Although there is not one definition or paradigm of evidence-based policy, generally it works with evidence hierarchies in which quantitative evidence is placed over qualitative evidence (e.g., Cartwright, Goldfinch & Howick 2009, Khosrowi, 2019). In particular, randomized control trials (RCTs) are considered superior to other types of evidence. As discussed, Nudge and Boost designs are often modelled after RCTs conducted within the heuristics-and-biases and fast-and-frugal heuristics research programs. Because the results of these experiments are explained by high-level meta-analyses on the mechanisms (potentially) bringing about these results, Nudge and Boost are often considered good candidates for evidence-based policy. However, as many philosophers have argued, randomized control trials often tell you only that a policy works in that specific experimental setting, whereas mechanistic evidence tells you why a policy works and, consequently, why it would or would not work in the specific policy environment at issue (e.g., Russo and Williamson 2011). For instance, Grüne-Yanoff (2016) discusses a situation in which default nudges have an effect because of “weakness of will” to put in cognitive effort. In this case, installing the default nudge will not work in an environment in which very little cognitive effort was already associated with the registration. But if the mechanism behind the success of defaults is “loss aversion,” then the policy could work in that same environment. The mechanistic theories researched in the heuristics-and-biases and fast-and-frugal heuristics programs are thus key for the evidence-based policymaker interested in Nudge and Boost.

However, as discussed in the previous section, the relation between Nudge and Boost and the heuristics-and-biases program and fast-and-frugal heuristics program is not in any way straightforward. On some aspects, there is no theory-policy coherence. On others there is, in part. Neither case shows in a meaningful way how strong the coherence is between the theoretical frameworks and the policy assumptions. Assuming theory-policy coherence where there is none, or where coherence is weaker than assumed, leads to the motivation and adoption of policies on false grounds. For instance, if a policy is adopted based on coherence with a vague theory, then the policy might be grounded in a theory that has not been able to successfully identify the operative mechanisms, and the policy cannot be legitimated based on these mechanisms. In that case, the policymaker does not have evidence that the assumed mechanism is in fact the operative one.

The challenges of theory-policy coherence leave the policymaker with a choice. The first option is this: the evidence-based policymaker can leave any consideration of theory (and of theory-policy coherence) aside and judge the policy on its own merits without invoking high-level mechanistic theory. She could do this, for instance, by conducting experiments in the specific context in which the policy is to be applied and for the specific target group of that policy. However, she will then not be able to explain why the behavior occurs, nor to extrapolate (e.g., Russo and Williamson 2011; Cartwright and Hardie 2012). The other option is that she can adopt Grüne-Yanoff and Hertwig’s view while taking its limitations into account. That is, being aware of these limitations and/or employing theory-policy coherence more carefully by looking at concrete mechanisms as well as other policy assumptions that are important in selecting a policy. However, it is crucial that the evidence-based policymaker commits to one of these options, as assuming the simple view might lead her to incorrectly present evidence for a theory unrelated to the policy.

The next section shows that if the evidence-based policymaker decides to take on theory-policy coherence and takes its limitations into account, she can use the concept constructively in the search for and evaluation of evidence. In particular, there are two main challenges related to mechanisms in the evidence-based policy discussion on Nudge and Boost that theory-policy coherence can assist with.

What theory-policy coherence can do for the evidence-based policymaker

Two challenges in evidence-based behavioral policy

In the literature on the evidence-base for behavioral policies, two challenges take center stage. First, as discussed, the heuristics-and-biases and fast-and-frugal heuristics research programs both draw on many alternative mechanistic explanations. However, often there is no mechanistic evidence that tells us which of the multiple proposed mechanisms would be at play in case Nudge and Boost are applied. This is a problem for the evidence-based policymaker. In order to know whether a policy will work in a particular environment, she needs to know which specific mechanisms are underlying the erroneous behavior the policy aims to correct. Furthermore, and in the same vein, she needs to know which mechanisms bring about the targeted behavior that the policy aims to bring about (see also The Choice for the Policymaker). Furthermore, as we have seen in Coherence, mechanistic explanations are often underlying the motivations or justifications for Nudge and Boost, and more specifically, for choosing one over the other. Nudge and Boost are both trying to help people make better decisions through invoking (biases and) heuristics (Grüne-Yanoff and Hertwig 2016; Hertwig and Grüne-Yanoff, 2017). As discussed, evidence-based policy aims to select the policy with the best evidence. Therefore, mechanistic evidence is needed to assess which policy is better than the other in a particular situation in order to reach a certain goal. Moreover, the lack of mechanistic evidence raises the question if, or to what extent, the mechanisms discussed in the heuristics-and-biases and fast-and-frugal heuristics research programs are bringing about the decision-making errors at all. There might be different mechanisms underlying decision-making than the ones the heuristics-and-biases and fast-and-frugal heuristics frameworks imply, and there are different, arguably less prominent programs, referring to these different mechanisms (Reijula et al., 2018).

Second, much of the alleged (mechanistic) evidence for Nudge’s policy assumptions is contested with alleged counterevidence from the fast-and-frugal heuristics program and vice versa: evidence for policy assumptions of Boost is often contested with alleged counterevidence from the heuristics-and-biases program. For instance, many of the debates on Nudge and Boost are about questions concerning people’s cognitive constitution. An example is experiments which allegedly show that people cannot learn to overcome biases or can learn to apply new, more successful, heuristics (e.g., Gilovich, Griffin & Kahneman 2002; Thaler and Sunstein 2008; Gigerenzer 2015). Relatedly, other debates are about whether people suffer from certain biases at all and whether the experiments at issue are well set up. For instance, there is a widespread literature on loss aversion. There is an ongoing debate on whether or not the experiments conducted within the heuristics-and-biases framework indeed show that the loss aversion mechanism is operative (e.g., Pachur and Hertwig 2006). One example, among others, is the Asian Disease experiments (Kahneman and Tversky 1981). Gigerenzer claims that the Asian Disease example does not show that people suffer from loss aversion triggered by the framing of the example either as “lives saved” or “lives lost” (Gigerenzer 2015). Rather, Gigerenzer argues that in the description of the Asian Disease case one of the two alternatives is not fully specified. Due to the insufficient specification, people take the wording of the example as signaling implicit information. According to Gigerenzer, it is thus not loss aversion that is the operative mechanism but rather hidden social information. This is allegedly demonstrated by experiments in which the alternatives in the Asian Disease problem are fully specified and in which people do not suffer from loss aversion. Hence, there is, at least seemingly, conflicting evidence concerning the mechanistic theories assumed by (some) Nudges and Boosts. Relatedly, as discussed, many other debates are about questions concerning evidence for people’s cognitive constitution, such as the debate on whether people can or cannot learn to overcome biases and can or cannot learn to apply new, more successful, heuristics.

Assessing (ir)relevant (mechanistic) evidence

Grüne-Yanoff and Hertwig show that Nudge and Boost are often not (fully) coherent with the heuristics-and-biases and fast-and-frugal heuristics research programs, respectively. As a consequence, not all evidence from the heuristics-and-biases and fast-and-frugal heuristics research programs is actually relevant for Nudge and Boost. For instance, mechanistic evidence that people can learn and apply new heuristics is not evidence for representational boosts that merely change relative into absolute frequencies. An assessment of theory-policy coherence shows why: the ability to learn and apply new heuristics is not a (necessary) policy assumption of this type of Boost. Hence, the concept of theory-policy coherence can show whether the alleged evidence is indeed evidence for the policy at issue. It can therefore have a critical, debunking role: it can help show that there is no or only weak theory-policy coherence and that alleged evidence for a theory is unrelated to the policy at issue.

Guide to what kind of mechanistic evidence is needed

If theory-policy coherence can help the evidence-based policymaker to determine how far the presented evidence is relevant, then it can also guide the policymaker in her search for evidence. If there is theory-policy coherence, then evidence for a certain theory might also (but does not have to) be evidence for the policy. For instance, suppose fast-and-frugal heuristics research shows us that people often rationally select heuristics and evaluate the results they produce, and that people can furthermore learn new heuristics. Then, theory-policy coherence with Boost would tell the policymaker that evidence for that theory would be evidence for Boost. That is, as long as Boost assumes that people can learn and apply new heuristics. The policymaker could check whether such evidence is available or not. Hence, theory-policy coherence can guide the evidence-based policymaker by showing her for which theories evidence could be found. However, she needs to pay attention in situations where the theory contains multiple mechanistic explanations but is unable to identify or explain which ones are operative under which circumstances. In those situations, the “evidence” is insufficient to consider Nudge or Boost as evidence-based.

Assessing seemingly conflicting evidence

Theory-policy coherence sheds light on the extent to which seemingly conflicting evidence is indeed evidence for Nudge and Boost. Can theory-policy coherence also show to what extent the evidence is actually conflicting? Evidence from experiments that (allegedly) show that expert statisticians also use heuristics such as “anchoring” is, if empirically correct, evidence for the heuristics-and-biases framework’s claim that “experts also suffer from biases and use heuristics if they think intuitively.” No matter how hard you have trained, you keep making the same mistakes if you have to think intuitively in daily life (e.g., Thaler 1985). This is evidence for Nudge’s policy assumption that teaching people to reason correctly often does not make sense (e.g., Thaler and Sunstein 2008). The fast-and-frugal heuristics program’s experimental evidence, on the other hand, shows that people choose heuristics, evaluate the outcome, and choose another heuristic if they recognize the heuristic has produced an error (e.g., Pachur and Hertwig 2006; Volz et al., 2006). Furthermore, Sedlmeier and Gigerenzer (2001), for instance, show that people can be trained to learn new decision rules. This is coherent with Boost’s policy assumption that people can learn new heuristics and in principle apply these as well. Similar results are often presented as counterevidence for Nudge’s policy assumption that people cannot overcome biases and the incorrect use of heuristics (e.g., Kühberger, 1995; Kühberger and Tanner 2010; Gigerenzer 2015). However, theory-policy coherence shows us that the heuristics-and-biases research program’s evidence for Nudge that experts often resort to heuristics and do not reason according to the standard picture of rationality is not conflicting with evidence from the fast-and-frugal heuristics research program for Boost that people can learn new heuristics. In fact, the heuristics-and-biases research program does not say anything about learning new heuristics (arguably, because it commits to the standard picture of rationality) and the fast-and-frugal heuristics research program does not deny, but rather endorses, that experts often make use of heuristics rather than following the rules of the standard picture of rationality. Arguably, this is because the fast-and-frugal framework entails that the application of heuristics often yields better results than reasoning according to the standard picture. Therefore, the theories, and the evidence for them, are neither conflicting nor fully coherent, but consistent with one another. Hence, theory-policy coherence shows what theory the evidence is for and to what extent that theory is connected to the policy assumptions. If evidence for the theories does not conflict, then that same evidence does not conflict for the policies it is coherent with.

“Crisscross coherence”

So far, we have only discussed theory-policy coherence for conventional Nudge and heuristics-and-biases program and Boost and fast-and-frugal heuristics program combinations. However, the concept of theory-policy coherence itself does not imply that it can only be established between these combinations.

What, then, about theory-policy coherence between, say, Nudge and the fast-and-frugal heuristics program and Boost and the heuristics-and-biases program? Could it make sense to consider such “crisscross coherence”? If so, then the policymaker could, in principle, also find evidence for Boost within the heuristics-and-biases research program and for Nudge within the fast-and-frugal heuristics program. For example, take the policy assumption (in Boost) that people understand natural frequencies better than relative frequencies. That assumption is coherent with the findings of the heuristics-and-biases program that people make many mistakes with relative frequencies. Although the heuristics-and-biases program might not explain the difference in people’s performance between absolute and relative frequencies in terms of better or worse understanding, it does show that people’s cognitive mechanisms can be faulty when dealing with relative frequencies. So, one might conclude that this important (piece of) evidence for Boost can (also) be found in experiments within the heuristics-and-biases research program, for instance, in experiments that show that people give different answers to decision problems depending on whether the problem is described in absolute or relative frequencies.

Now consider a second example, this time in the opposite direction. Nudge’s policy assumption that people make mistakes if they use heuristics is coherent with the fast-and-frugal heuristics program’s theory that some heuristics are not apt for particular environments, which leads to decision-making errors. Arguably, it could even be coherent with Nudge’s assumption that framing a decision problem in a different way influences the choices people make. After all, changing the statistical representation of information is a form of framing. The heuristics-and-biases research program would typically explain the difference in behavior change between absolute and relative risks in terms of biases and errors. For instance, referring to a study by McDaniels (1988), Sunstein states that: “A striking study . . . finds that people pervasively neglect absolute numbers, and that this neglect maps onto regulatory policy. In a similar vein, it has been shown that when emotions are involved, people neglect two numbers that should plainly be relevant: the probability of harm and the extent of harm” (Sunstein, 2003, p. 766). By contrast, the fast-and-frugal heuristics program explains the behavior change in terms of better understanding (absolute frequencies) and worse understanding (relative frequencies). For instance, Gigerenzer et al. write that: “Just as absolute risks foster greater insight than relative risks do, there is a transparent representation that can achieve the same in comparison to conditional probabilities: what we call natural frequencies” (Gigerenzer et al., 2007: 55). Furthermore, they argue that “when the goal is to understand the size of the benefit and harm, always ask for absolute risks (not relative risks) of outcomes with and without treatment” (Gigerenzer et al., 2007: 60).

Crisscross coherence illustrates that while the theoretical research frameworks are, at times, very different, the policies are sometimes coherent with either research program, including the competing one. This raises the question whether Nudge and Boost are perhaps not that different. The answer to this question is nuanced. In some instances, Nudges and Boosts have the same designs (e.g., particular defaults or statistical representations). Experiments from both the heuristics-and-biases program and the fast-and-frugal heuristics program could provide evidence for the same behavior change. In that sense, there would be crisscross coherence with experiments from both research programs. However, the heuristics-and-biases program and the fast-and-frugal heuristics program would a) provide different mechanistic theories for it and b) evaluate them differently from a normative perspective. Whether Nudge and Boost are indeed different would depend on the evidence for these theories and its coherence with the policy aims. For instance, a Nudger would change the statistical representation to natural frequencies to steer people into the direction of a certain choice option. A booster would change the statistical representation to natural frequencies to increase people’s decision-making competences. Allegedly, he would do so without steering. Consequently, the same intervention (whether called a Nudge or a Boost) could indeed lead to behavior change. However, the fervent Nudger would not claim to have increased competences, whereas an equally fervent booster would. So, if the fast-and-frugal heuristics research program too shows that people are making decisions in one direction under relative frequencies, and in another direction under absolute frequencies, then it could be coherent with Nudge’s policy goals. By contrast, for the booster, coherence with the heuristics-and-biases program, which holds that people are being steered, is problematic. 
If this theory is indeed backed up with sufficient evidence, the booster’s aim of increasing competences would not be met. Arguably, in that case the alleged Boost turns out to be in fact a Nudge.

One might reply to all this that the idea of crisscross coherence ignores the debates about the underlying rivalling theoretical research programs. It does. Crisscross coherence as considered here does help us understand that there is important evidential overlap even between programs with different theoretical commitments. And that, in turn, can be a helpful perspective for the policymaker who sifts through the evidence presented in the often complex Nudge-Boost debate and in the rivalling heuristics-and-biases and fast-and-frugal heuristics research programs. Thus understood, we might conclude that crisscross coherence shows that theory-policy coherence can help the policymaker understand better which evidence might be relevant and where (and in which programs) evidence can be found without pre-formed theoretical commitments. Furthermore, theory-policy coherence could help a policymaker label a proposed intervention as either a Nudge or a Boost (or a combination). Having said that, the prospects for crisscross coherence are naturally bound by the limitations of theory-policy coherence. In many instances it will remain the case that coherence with one theory conflicts with, and thus excludes, coherence with another theory.

Other theories and policies

Related to crisscross coherence, it should be noted that theory-policy coherence does not require any specific theory for Nudge and Boost to be coherent with. Therefore, it is important to note that (crisscross) theory-policy coherence can in principle also be established between Nudge and Boost and theories beyond or outside the heuristics-and-biases and fast-and-frugal heuristics research programs. Grüne-Yanoff and Hertwig also indicate this when they discuss Boost’s necessary policy assumption “sufficient motivation of the agent to apply the Boost.” Since the fast-and-frugal heuristics framework does not say anything about the extent to which agents are motivated to learn and apply new heuristics, the evidence-based policymaker could also look for (motivational) theories outside the heuristics-and-biases and fast-and-frugal heuristics research programs that show that agents are motivated to apply Boosts or other similar newly learned tools (Grüne-Yanoff and Hertwig 2016, Hertwig and Grüne-Yanoff, 2017). In that case, Boost would be coherent with another theory and the evidence-based policymaker could search for evidence supporting that theory at issue in order to establish evidence for the necessary policy assumption that agents need to have minimal motivation to apply Boost.

Furthermore, and in the same vein, (behavioral) policies other than Nudge and Boost could be coherent with the heuristics-and-biases and fast-and-frugal heuristics programs. For instance, Reijula et al. (2018) and Ehrig et al. (2015) propose an alternative behavioral policy to Nudge and Boost that intervenes at the institutional rather than the individual level: “mechanism and norm design.” They argue that Nudges and Boosts are often employed to generate social outcomes, but that individual rationality often does not translate into social welfare, as problems such as the “tragedy of the commons” illustrate. Arguably, in this context, they implicitly make a case against theory-policy coherence of Nudge and Boost’s social goals with classic macro-economic problems. Mechanism and norm design, by contrast, take the social outcome as a starting point. Ehrig et al. (2015) argue that more organ donations are not always better because of, for instance, costs associated with the medical procedures. As an alternative policy, they refer to an organ donation matching tool as developed by Roth et al. (2004) in which recipients and donors are more efficiently matched. In addition, there are also donation options that provide higher priority. Policies like these may have a stronger coherence with macro-economic theories than with the heuristics-and-biases or fast-and-frugal heuristics program. However, it is conceivable that combinations are possible, for instance with nudges to get registrations for such a matching system. In that case, theory-policy coherence could exist with macro-economic theories as well as with the heuristics-and-biases framework. However, it should be stressed that theory-policy coherence should not be used for cherry-picking. That said, it allows policies to be evaluated against their coherence with a variety of academic disciplines and research programs.

Conclusions

Assuming a tight relation between Nudge policies and heuristics-and-biases theory and between Boost policies and fast-and-frugal heuristics theory is problematic. In various instances, Nudge and Boost assume decision-making competencies and flaws that are merely broadly consistent with, or even contrary to, the theoretical frameworks of the heuristics-and-biases and fast-and-frugal heuristics research programs. Furthermore, even in cases where they are “fully coherent” with the heuristics-and-biases and fast-and-frugal heuristics research programs, this does not imply that the policy is strongly based on theory. For instance, evidence for a vague theory that is coherent with the policy but unable to explain which of multiple possible alternative mechanisms is operative is not helpful to determine whether the policy will work in a given situation. Furthermore, the evidence-based policymaker should evaluate coherence with all policy assumptions that may justify the selection of one policy over another, rather than only with policy assumptions that are necessary for the success of its implementation. The challenges of theory-policy coherence leave the evidence-based policymaker with a choice. The choice implies that the evidence-based policymaker should be aware of where and when she brings in theory, and if she does, examine coherence with the policy. If the evidence-based policymaker does bring in theory, theory-policy coherence can aid in two major challenges she faces regarding Nudge and Boost: the search for (relevant) mechanistic evidence and the examination of seemingly conflicting evidence. Regarding the first, theory-policy coherence can guide the evidence-based policymaker by showing for which mechanistic theories evidence should be found. In addition, it can help the policymaker assess which evidence is coherent, and thus relevant for the policy, and which is not. Regarding the second, by showing which evidence is relevant and which is not, theory-policy coherence can also help the policymaker to see whether seemingly conflicting evidence is in fact conflicting. Such is the relevance of coherence between theory and policy for Nudge and Boost in evidence-based policymaking.

Acknowledgements

I thank in particular Conrad Heilmann and Jack Vromen for their thorough and helpful comments. I also thank Till Grüne-Yanoff and Marcus Widengren for their very helpful comments on a previous draft, as well as other attendees of the 2020 INEM Summer School on “Economic Behaviours: Models, Measurements, and Policies” in Como for interesting discussions and questions. Furthermore, I would like to thank the two anonymous reviewers and the editors of this journal for their constructive comments.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.