Abstract

Evaluation Capacity Building (ECB) is a critical function that can increase uptake, quality and impact of evaluation activity in large organisations. This article describes how ECB theory has been applied in the design and implementation of foundational evaluation training in a large state government department. Appraisal of the first three years (2018–21) establishing the training component of the capacity building function is explored, in light of contemporary capacity building literature. Post-training feedback indicated a high degree of participant satisfaction and met need among department staff. One-year follow-up of training workshops indicated that over two-thirds of respondents (68 percent) found evaluation training had a lasting impact on their work, and that participants continued to use evaluation support materials and apply what they had learned. The article provides practical information on this theory-informed approach, to support others who are commencing or delivering evaluation capacity initiatives within public sector agencies or other large organisations, including those who are adapting training for online delivery during COVID-19. The article identifies lessons learned, including through the experience of moving training online during the COVID-19 pandemic, and presents opportunities to increase the impact of evaluation capacity building.

Background and context

Evaluation Capacity Building (ECB) is an increasingly widespread topic of interest and relevance in evaluation literature and practice. Preskill and Boyle (2008) chart the increasing prominence of ECB from the early 2000s, citing increases in conference papers and articles, and extensive participation or interest in ECB among practitioners. Similarly, McDonald et al. (2003) note growing government interest in building evaluation capacity within departments and agencies during this period.

In Australia, several state and federal government departments have established and grown substantial evaluation units to support internal evaluation capacity, and to increase quality and use of evaluation in policy design and decision making (Tracy & Downing, 2018; Lloyd et al., 2018; Williams et al., 2019; Rintoul, 2019). This trend has also been observed internationally, where the literature documents increasing interest in ECB in both government and non-government organisation settings to improve accountability (Naccarella et al., 2007; García-Iriarte et al., 2011).

At a large state government department (the Department of Health and Human Services), a 2016 internal audit of evaluation activity examined the extent to which quality evaluation was implemented to inform decision making. It also examined whether the evaluation investment was strategic, whether robust evaluation findings were available for policy makers, and whether the infrastructure to support evaluation was effective. The audit revealed evaluations of variable quality. The Department needed clearer guidelines and standards to support improved evaluation capacity. Furthermore, evaluation reports and findings were not being used consistently to inform policy and practice. In 2017, the Department established a Centre for Evaluation and Research Evidence (the Centre) in response to the audit findings and to act upon senior executive interest in improving the quality, consistency and culture of evaluation across policies and programmes. The Centre’s mandate included developing accessible resources and processes to support and deliver evaluation, and enabling staff to conduct and/or commission evaluations (Department of Health and Human Services, 2016).

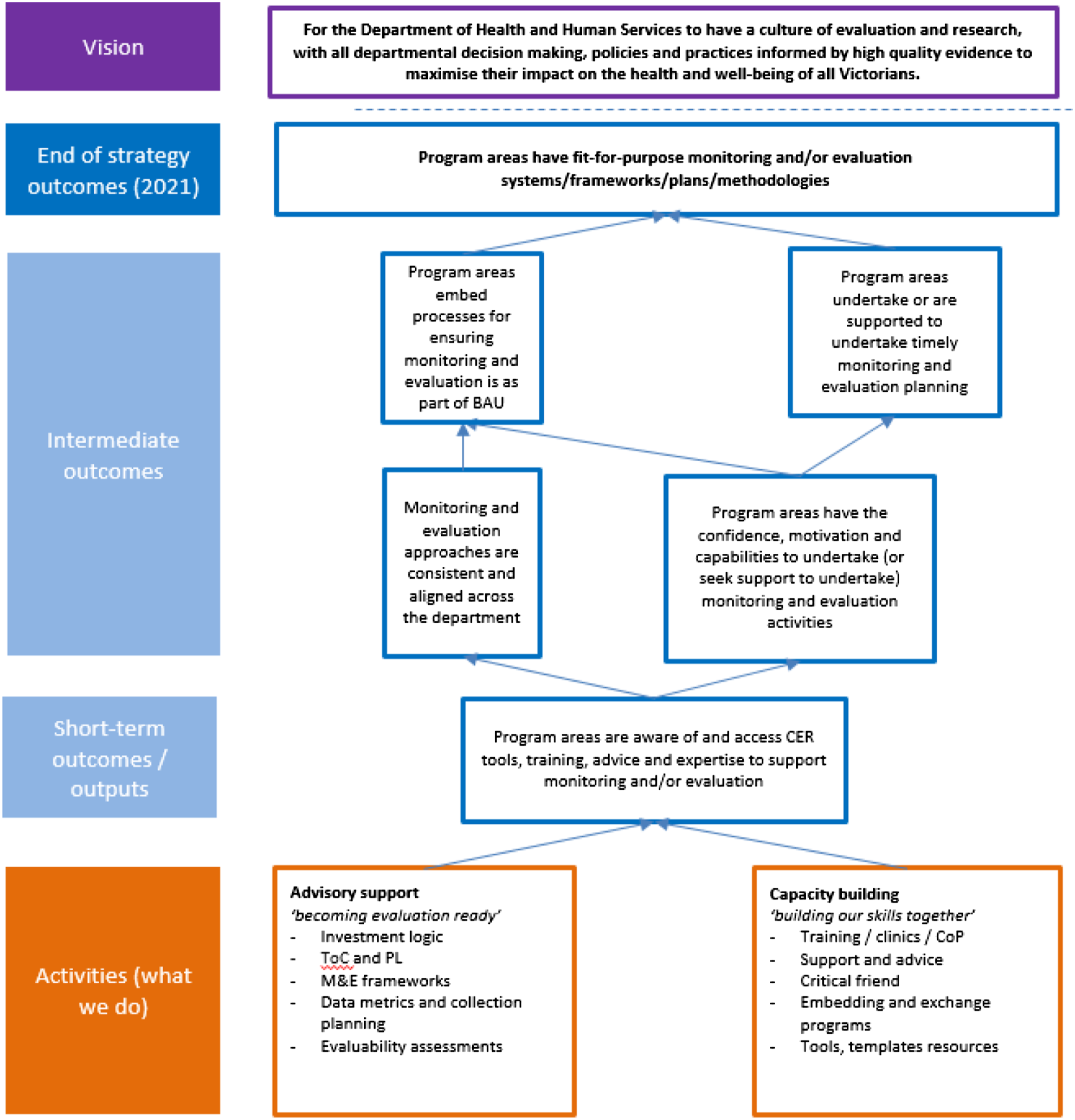

Evaluation capacity building activity has been central to the progression of the evaluation agenda within the Department. Evaluation training was particularly valued by senior executives as a demonstration of capacity building at work and was actively promoted by this cohort across the Department, contributing to high levels of participation in the first year. Training was supported by the development of a suite of evaluation resources, including an Evaluation Guide and templates for evaluation plans and reports. The Department’s Executive Board agreed to double the staffing resources for the Centre between 2017 and 2019 and establish four main pillars of evaluation activity: advisory support; evaluation delivery; knowledge translation; and capacity building. The delivery of internal strategic evaluations grew to 72 concurrent projects in 2019, and the advisory service offered over 100 consultations in the same year. The demand for evaluation began to exceed the number of evaluations that the Centre could feasibly deliver directly, and the evaluation capacity building efforts became even more important in delivering the Centre’s intended outcomes. The contribution of advisory support and capacity building to the Department’s 2021 strategic outcomes is shown in the excerpt of the Centre’s programme logic in Figure 1.

Excerpt of programme logic showing advisory support and capacity building workstreams.

The programme logic is based on two theoretical propositions. The first concerns the individual learning process, and the interaction of capability, motivation and opportunity in the context of supportive structures as described by Michie et al. (2011). Intermediate changes in the model begin with short term awareness, and subsequently confidence, motivation and capabilities enable individual behaviour change as a result of the capacity building interventions. Secondly, elements of organisational learning theory became evident as individual staff receiving capacity building and advisory support learned, transferred knowledge and modified behaviours to support organisational change in a (mostly) ‘bottom up’ fashion. Organisational learning theory stresses that it is only through the learning of individuals that organisational routines are changed (Senge, 1990).

This article seeks to illustrate the key learnings about the design, implementation and evaluation of the Centre’s capacity building function, focusing on the introductory Evaluation 101 training course. It appraises the first three years in light of contemporary capacity building literature and identifies opportunities for improvement and growth. Critical reflection following the period of innovation and organisational change is both good practice from a quality assurance perspective (Langley et al., 2009) and an evaluation competency (Australasian Evaluation Society, 2013).

Design

Capacity building approach

The Department’s evaluation capacity building approach was designed to incorporate many features which support sustainable evaluation practice, as articulated in the comprehensive and widely used Multidisciplinary Model of Evaluation Capacity Building framework (Preskill & Boyle, 2008). Key elements of the Department’s capacity building design included: development of evaluation policies and procedures; a strategic evaluation plan to provide a clear vision of when and why evaluation is undertaken; the establishment and growth of the internal evaluation unit (the Centre); and an online knowledge bank to support storage, dissemination and use of evaluation reports.

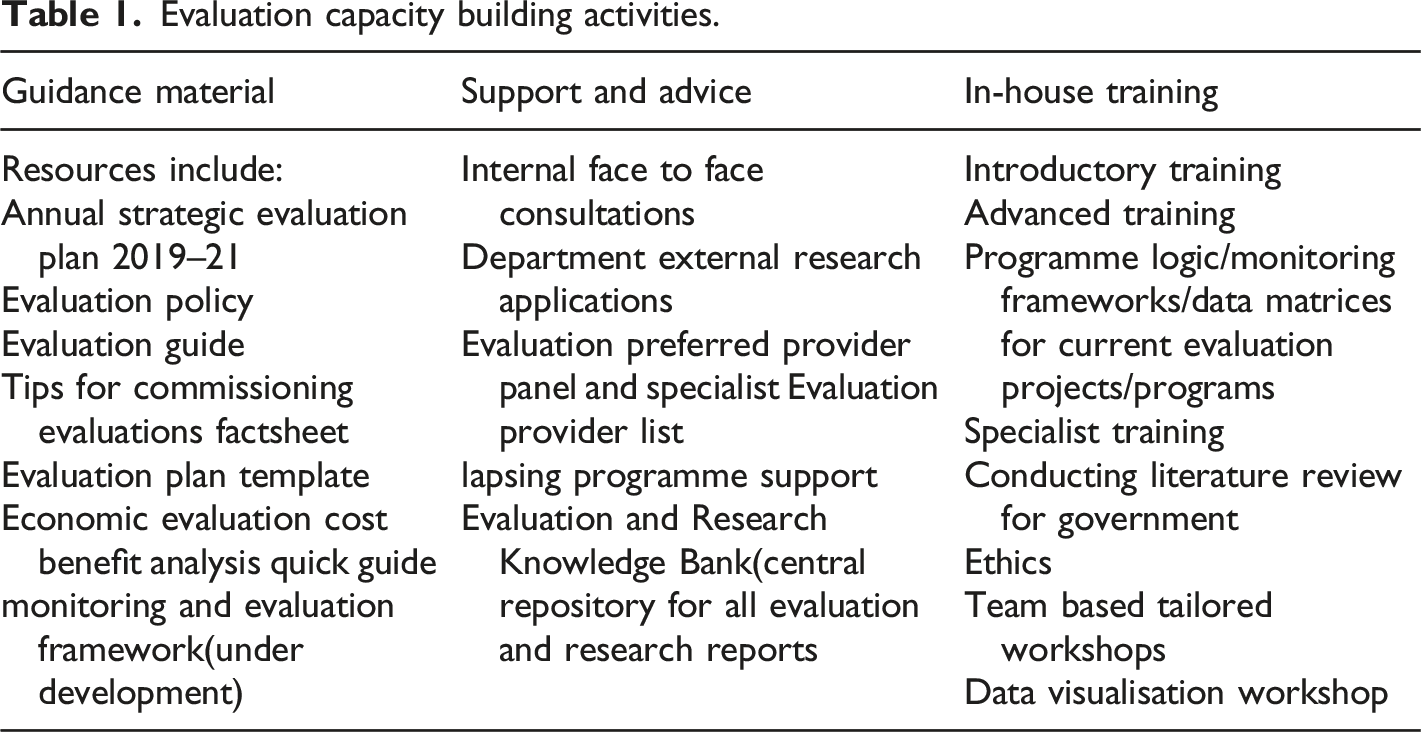

Evaluation capacity building activities.

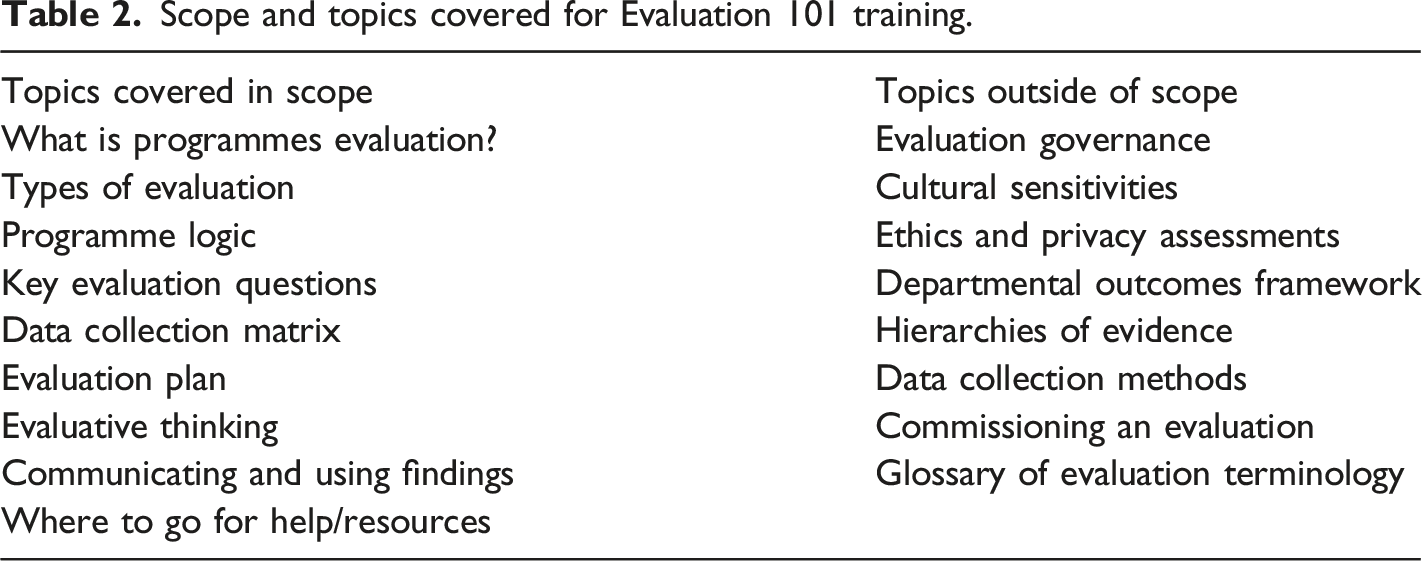

Scope and topics covered for Evaluation 101 training.

Foundational evaluation training

The most prominent and widely accessed component of the capacity building approach was the foundational Evaluation 101 training workshops, which form the focus of this article. As previously flagged, training is a well understood and tangible element of capacity building and of critical importance to executive stakeholders in demonstrating progress towards building an internal culture of evaluation. Training workshops sought both to promote the Department’s consistent evaluation approach and to help departmental staff develop the foundational understanding of evaluation that would allow them to scope evaluation in their own policies and programmes. The following section examines the design and approach of Evaluation 101 training in more detail. The training element was selected as the focus of critical analysis in this article because of the prominence of the activity, with over 600 participants in the first year, and because training is a clear-cut component of the overall strategy which lends itself to more detailed measurement and analysis.

Design justification

A range of ECB strategies were employed in the design of Evaluation 101 training. The approach aligned with four of the ten ECB teaching and learning strategies proposed by Preskill and Boyle (2008). Since individuals were the target of the intervention, training was tailored to meet the needs of individuals with a range of backgrounds, including policy officers and non-evaluators from across the Department (in areas such as child protection, housing, regulation and mental health). It used and referenced the full range of broader ECB resources on offer (see Figure 1).

Because the Department was so large (approximately 12,000 employees), capacity building strategies needed to be scalable and accessible to significant numbers of staff, as support in the form of coaching and mentoring was in extremely high demand and inevitably limited.

Evaluation 101 training component

The most prominent component (although arguably not necessarily the most important) was the development of “Evaluation 101” introduction to evaluation training workshops. The foundational Evaluation 101 training sessions were tailored as a ‘starter session’ to achieve reach by being manageable for most people who were considering undertaking an evaluation or who saw one on the horizon for their programme or operational area. These workshops were 90 minutes in duration and aimed to provide individuals with an orientation to the Department’s evaluation support resources as well as exposure to some key pillars of evaluation practice such as key evaluation questions and programme logic. Preskill and Boyle (2008) suggest that design should consider ‘whose capacity is to be developed, and to what level’ (pp. 2–3). In this regard, the course was intentionally pitched as an accessible introduction, aimed at individuals who were (or who were likely to be) required to develop an evaluation plan, and for whom evaluation was not a usual part of their everyday job.

Learning objectives

The learning objectives for Evaluation 101 training were spread across levels two to four of the SOLO taxonomy as defined by Lucander et al. (2010). SOLO, which stands for the Structure of the Observed Learning Outcome, is a means of classifying learning outcomes in terms of their complexity (Chen et al., 2020). Learning objectives spanned uni-structural or surface learning for the awareness elements, and relational level (deeper) learning for topics which had a practical workshop learning activity (Chen et al., 2020).

Learning objectives for Evaluation 101 training included building the capacity of staff to:

1. Be able to describe what evaluation is and why it is important to the Department.
2. Be able to design appropriate evaluation questions for the type of evaluation and construct a basic logic model.
3. Be able to formulate a draft evaluation plan supported by the Department’s evaluation guide.
4. Take note of the ways that the Centre can help to support evaluation work in the Department and know how to contact the Centre if required.

Training topics were drawn from the Department’s 2018 Evaluation Guide. Key topics selected for inclusion were identified by evaluation managers from the Centre as those most critical for the intended initial orientation of staff. Developing awareness and basic knowledge of evaluation concepts, and starting to influence confidence in or attitudes to evaluation, were in scope. Developing substantial skill improvements was not in scope. The topics covered are shown in Table 2.

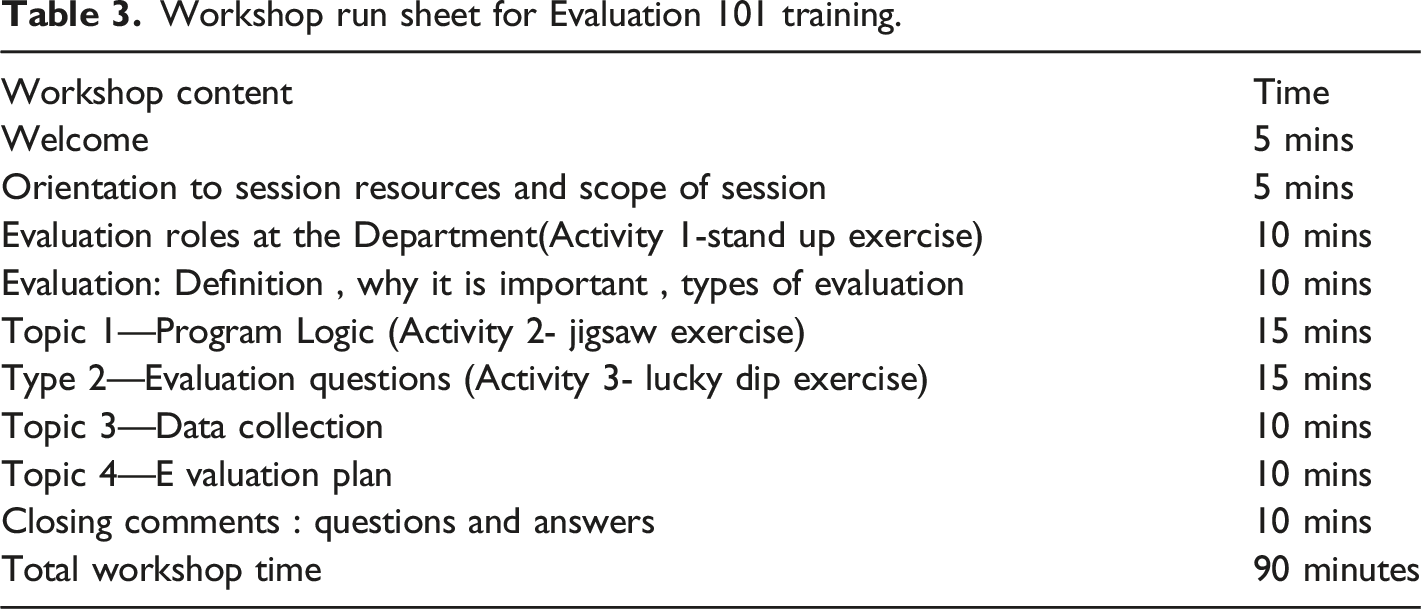

Workshop run sheet for Evaluation 101 training.

Evaluation 101 training workshop approach and trainers

A brief workshop with a large audience is necessarily fairly didactic. In addition to lecture style information conveyance, participants were provided with a resource pack (containing the Evaluation Guide, Evaluation Plan Template and Evaluation Report templates) and guided through them during the session. Elements of problem-based learning were included, and all participants were invited to volunteer an example of their own evaluation experience, or adapt an example provided by the presenter. Activities were centred on developing evaluation questions (lucky dip style activity where evaluation questions were drawn from a pool and judged for suitability) and on programme logic (jigsaw style, where a programme logic was constructed on paper).

Approximately half of the Centre’s staff had the attributes needed to deliver training workshops and the time and interest to participate as a trainer. This provided ample continuity planning in case of unforeseen absence, and reduced the training burden on individuals. Core competencies required of trainers included integrity and flexibility, professional credibility (as described in the Australasian Evaluation Society Evaluators Professional Learning Competency Framework Domain 1), ability to operate mindful of culture, stakeholders and context (Domain 3) and excellent interpersonal skills (Domain 6), in addition to the evaluation specific expertise described in Domains 2 and 4 (Australasian Evaluation Society, 2013).

Evaluation 101 training evaluation findings

A workshop satisfaction survey was conducted at the end of each capacity building workshop between January 2018 and August 2020. A follow-up online survey was emailed to all participants after 1 year of operation to understand the longer term impact of participation. This two-pronged approach attempted to assess both the immediate learning outcomes and subsequent change in behaviour and practice, in line with the concept of multiple ‘levels’ of learning outcomes (Kirkpatrick & Kirkpatrick, 2011).

As expected, participants reported high levels of workshop satisfaction in post-event surveys. Of the approximately 600 participants, 90 percent reported their experience of the workshop to be good or excellent, and 56 percent reported that they had to a large extent gained new ideas and knowledge.

Evaluation 101 workshops aimed to attract staff who were doing or were about to implement an evaluation, and who would therefore have an imminent opportunity to apply and transfer learnings to a real situation. Despite this being part of the advertised purpose, on average only 51 percent were doing an evaluation or were about to commence or contract manage an evaluation. The remainder reported a general interest in evaluation. The workshops therefore only partially succeeded in attracting the target population, and have needed to evolve over time to accommodate staff who do not bring an example from current work to the training. Alternatively, the sessions could be more targeted and/or require participants to have an active evaluation underway in order to register, rather than retaining the current open registration process.

Qualitative feedback indicated that participants found the duration and rapid pace of face-to-face Evaluation 101 training efficient and helpful. Feedback included ‘short, sharp, and to the point’ and ‘90 minutes meant I could easily schedule this into my diary amid other obligations’. However, for some participants, the content was too rushed. Consistent with the literature, which points to the need to allocate sufficient time for learning, the workshop was extended to 2 hours, which has not dampened demand.

One-year follow-up

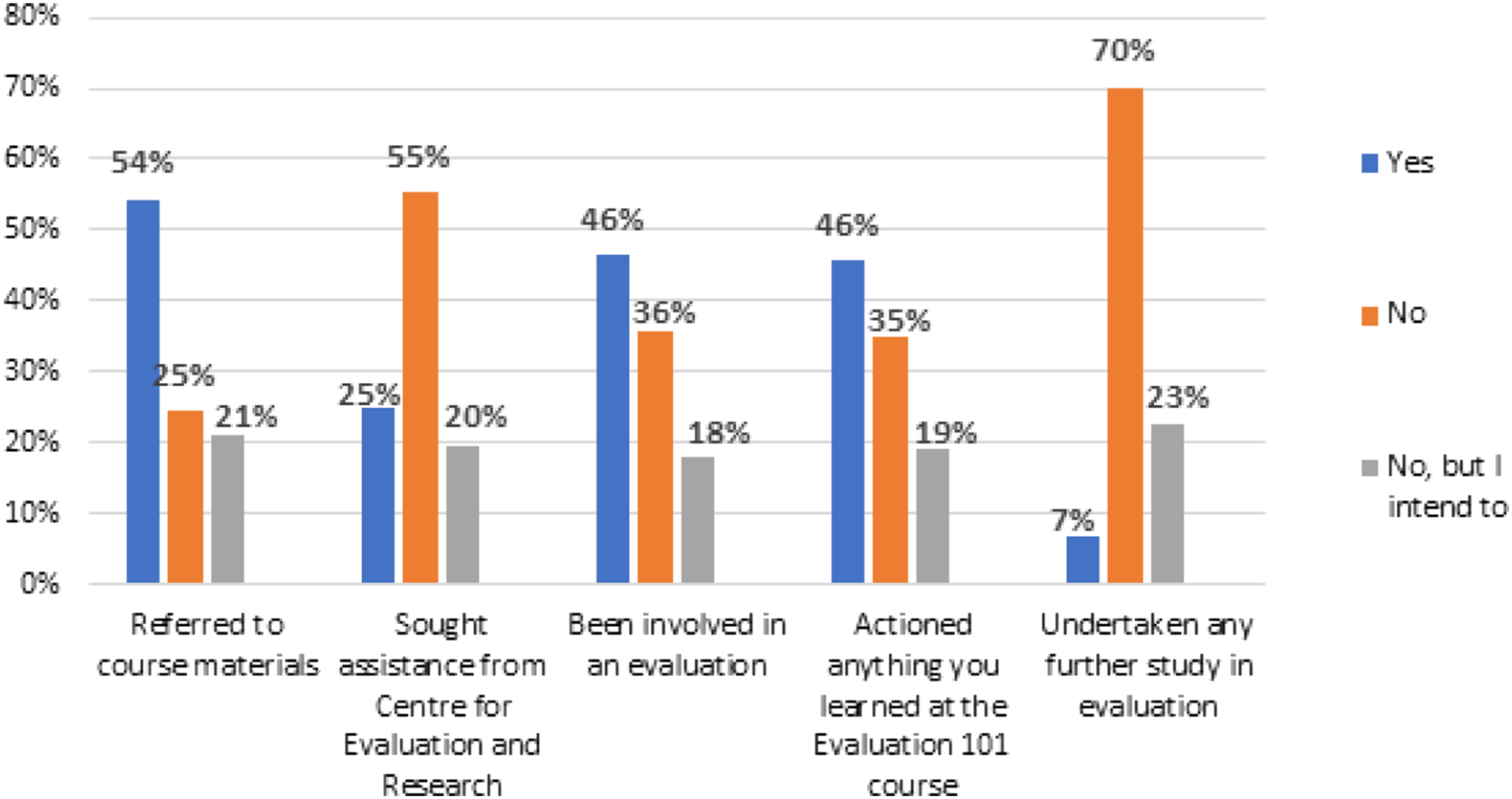

Of approximately 600 eligible attendees, 12 percent (n = 71) responded to the follow-up survey. One year on, over two-thirds of respondents (68 percent) indicated the Evaluation 101 training course had a lasting impact on their work. Figure 2 points to the actions staff reported to have undertaken in evaluation. Learning objectives 1 and 2 were awareness related (being able to describe evaluation and its importance, and being able to design key evaluation questions and construct a basic logic model) and were not expected to produce measurable changes.

Follow-up participant actions 1 year post Evaluation 101 training.

The data points to partial achievement of learning objectives 3 and 4 at one year follow-up of Evaluation 101 training as reported by participants:

• Learning objective 3 aimed for participants to use evaluation support materials such as the evaluation plan. Over half of the respondents (54 percent) had referred to the course materials, and a further 21 percent had not but intended to do so in the future. One participant reported ‘It is useful to have the Evaluation Guide as a reference – particularly for the types of evaluations’. Another referred to ‘direct use of the information when evaluating work or developing a project’.

• Learning objective 4 aimed for participants to note support options and understand how to access them. A quarter of the respondents (25 percent) had sought 1:1 assistance from the Centre since the workshop. Feedback suggested that seeking support had been a positive experience and that the Centre for Evaluation and Research Evidence is ‘a wonderful resource and very encouraging of non-experts to build knowledge and capability that stays with teams in other areas. The team is always willing to assist and provide advice’.

Figure 2 outlines a summary of follow-up survey actions reported by Evaluation 101 training respondents (n = 71), which included referral to evaluation course material, seeking assistance from the Centre, involvement in evaluation, actioning key learnings from the training course or undertaking further study in evaluation. Referral to Department course materials was the most frequently reported action following training, indicating the purpose and expected outcomes of ECB efforts were being achieved.

Source: Internal Departmental Evaluation Report (2020)

Thematic analysis of free text responses revealed three main take-aways recalled by participants. Key themes included the importance of thinking about evaluation at early stages of design and planning, evaluation knowledge and skills, and the availability of evaluation resources and support.

1. Importance of thinking about evaluation at early stages of designing and planning projects/programmes. Comments suggested that participants were more mindful about evaluation, having ‘greater awareness of the process and opportunities for evaluation’.
2. Evaluation knowledge and skills, such as skills to assist in the development of programme logic, clarifying goals, process and issues to be considered. One participant reported ‘I have always thought there was more to the theory of change as a standalone theory across all projects/services...and therefore felt that I needed to do more work. However, understanding that the theory of change are the assumptions behind the design of the service/project that need testing, was really helpful’.
3. Availability of evaluation resources and support within the department. Comments echoed the satisfaction and utility of the resources and the Centre’s support, such as those described in relation to learning objectives 3 and 4 cited above.

These evaluation findings indicate that after a relatively short period in operation, the Department’s capacity building approach is making headway toward its strategic outcomes and vision shown in Figure 1. In the short term, there is awareness of evaluation tools and supports, evaluation tools are used (with support as required) and there are early indications that they are being applied with increasing consistency and confidence by participants (Figure 1). Overall, evaluation findings suggest good progress towards the short to intermediate term intended outcomes of ECB in the Department.

The evaluation findings also highlight a number of potential challenges and barriers to achieving the end of strategy outcomes (2021) and overarching vision (Figure 1). Follow-up survey respondents who had not fully applied what they learned at the training were asked what support they needed to do so. Resourcing capacity and time have been reported among the most frequently cited barriers to capacity building (Labin et al., 2012) and were common in the training feedback. One respondent said that evaluation is ‘pushed into the background when more urgent reactive requests take precedence’, which is likely to be an enduring (and possibly appropriate) reality in a fast-paced policy or operational environment. Participants also cited limited or inconsistent appetite for evaluation at programme, policy or project level in some areas, and a lack of understanding and appreciation of evaluation at executive and manager level. Importantly, in a Department with up to 12,000 employees and 10 divisions there is the potential for evaluation capacity building to gain greater traction in some areas than others. It is certainly evident in the data that some areas utilise the Centre’s services more, and have more training attendees, than others. This may point to areas which should be targeted further in future capacity building efforts, which should include leadership engagement.

Discussion

Limitations of Evaluation 101 training design

In practice, there were some design weaknesses to the Evaluation 101 approach which reflect the practical realities of designing and delivering training in a large organisation with finite delivery capacity and resources. These were identified through discussions between team members who participated in ECB activity and met regularly for planning, debriefing and continuous improvement purposes.

Lack of executive offering: Executive sponsors and senior managers play an important role in shaping culture and providing leadership for evaluations (Preskill, 2014). Almost all the intermediate outcomes require leadership support, for example to establish and embed consistent processes and expectations about how evaluation will be conducted and used. Leaders need to be able to recognise and commission good evaluation, critique poor evaluation and champion the evaluation needs of the Department towards the desired ‘fit for purpose’ strategic outcomes. Executive leaders are unlikely to come to Evaluation 101 training and require other activities, yet to be developed, to meet their evaluation learning needs.

Limited follow-up: Progress from ‘awareness’ to ‘use’ requires substantial support. As Dickinson and Adams (2012) articulate, training alone is insufficient for this kind of behaviour change and benefits from follow-up coaching and support. While the Evaluation 101 training is scalable, the advisory support function is necessarily limited, and the Centre is continuing to develop mechanisms to meet advisory requests, including a newly piloted, fortnightly ‘drop-in evaluation clinic’.

Adjustment to content: The workshop content has evolved over time, and participant feedback and presenter preferences have shaped a shift in content. Initially the workshop included icebreaker and small group activities which encouraged attendees to recognise their own role in the Department’s vision, and their expertise (even if limited) in championing evaluation within their work groups. Champions are valued by organisations to promote evaluation to their colleagues (Rogers & Gullickson, 2018). The activities also aimed to engender peer support and networks, leveraging shared beliefs and connections which are established facilitators of behaviour change in implementation science (Groll & Wensing, 2004). However, over time the agenda has been reprioritised to focus more time on data collection and analysis in line with participant feedback, and less on scene setting and participant interactions. These adjustments to content may be a false economy if other valuable aspects of the training are minimised as a consequence.

Lack of implementation support: Structured follow-up such as implementation coaching is not consistently conducted. The value of post-training implementation support is well recognised in the implementation literature and is consistent with the findings of Dickinson and Adams (2012) that other supports are generally required to build sustainable evaluation practice post training. The tools, resources and advisory supports go some way to address this but are reliant on an individual’s motivation and actions to reach out for assistance.

Implementation experience

The Evaluation 101 training workshops were immediately met with extremely high demand. The first workshop received 37 registrants but approximately 45 people attended. The subsequent three workshops had over 100 registrants each, and registrations eventually plateaued at approximately 40 per month after 12 months. In the first year and a half, approximately 600 departmental staff attended the training. Owing to the large and diverse groups, the implementation required intensive facilitation and investment of staff time to administer the course (fielding registrations, preparing course materials, finding and setting up training rooms and so forth).

In 2020, the delivery mode changed rapidly to online delivery in the context of the COVID-19 pandemic and emergency response. The context hastened an existing intention to trial an online offering. Microsoft Teams was used prominently for online communications in the Department and became the delivery platform. This required rapid adaptation of the training course. Key changes included:

• Registration of 40–50 participants (though this limit was adjusted up over time, accommodating up to 100 participants).

• Two facilitators plus a logistics assistant (formerly only one facilitator).

• Adjustment of teaching activities to online friendly activities.

• No hard copies of Evaluation Guide, tools and resources (formerly these were handed out).

• Modified participant discussion formats (chat/breakout).

• A half hour extension so training duration increased from 90 minutes to 2 hours to allow for adequate engagement in an online environment.

• Facilitators required additional capabilities in online facilitation and technology.

The changes had a significant effect on the Evaluation 101 training offering. While the content was broadly retained in the original form, there were pros and cons of online delivery. Duration of training increased by half an hour to allow appropriate time to connect participants with their peers working in similar areas, although this connection is not the same as in a face-to-face setting (there is some evidence from chat transcripts that participants do occasionally reach out to follow up with each other). Challenges included the inevitable technological restrictions for some people, and one of the potential facilitators became unable to facilitate because of unreliable home internet. The engagement of participants was harder to assess, but satisfaction remained high (over 90 percent positive experience) based on training feedback forms. Additional benefits of the shift to online delivery included increased equity of access for the state’s regional and rural staff, and the logistical benefit of being able to schedule sessions flexibly and add additional courses as required (rather than having to make room bookings for the training sessions up to a year in advance).

Limitations to approach and analysis

The Centre’s Evaluation 101 training is aligned with the approaches and strategies proposed by Preskill and Boyle’s (2008) Multidisciplinary Model of ECB. The training does not action all strategies, and the addition of other relevant strategies such as internships at the evaluation centre, post-training coaching and communities of practice within the Department could strengthen the intervention. Nearly all of the elements of sustainable practice are fulfilled by the model. The outer elements of organisational learning capacity, such as leadership and to some extent the knowledge, skills and attitudes especially as they pertain to leaders, could be strengthened in future Evaluation 101 training content. Further executive engagement as participants in training, rather than only as champions for evaluation capacity building, is planned as a critical next step of ECB activity for the Department.

The overarching ECB programme of the Department is ambitious in seeking to change ‘culture, decision making policies and practice…’ (see ‘Vision’ in Figure 1) across a large state-wide government setting. Elements of culture and influence on decision making at a strategic level are less likely to have been influenced by the predominantly ‘bottom up’ approach to date. The next steps include refining the Evaluation 101 training programme and further building overall evaluation capacity in the Department by enhancing and targeting executive and manager level coaching and engaging less proactive divisions or branches of the Department. It could also be valuable to establish a Community of Practice to support post-evaluation training implementation.

Overall, the ECB training combined with other capacity building activities in the Centre gained traction, but further work is needed to achieve the cultural change and evidence-based decision making elements of the Department’s vision. The review of Evaluation 101 training presented in this article reflects on achievement of learning outcomes as the main criterion of merit. It potentially overlooks other important criteria such as sustainability and accessibility. This analysis examines the ECB activities predominantly in line with Preskill and Boyle’s (2008) theoretical model. Other models, such as the Integrative Capacity Building model of Labin et al. (2012), could provide alternative perspectives.

In the longer term, a more comprehensive approach to evaluating the full scope of ECB activities beyond the workshops would be valuable, particularly in terms of effects and impact (Preskill, 2014). It is important to note that findings from the 1 year follow-up survey are based on a relatively low response rate (12 percent of eligible attendees), which may affect the validity of the conclusions. Work is continuing to collect a larger sample to validate this initial data collection. Findings are also based on self-reports, and future work will consider triangulating these findings given the risk that non-respondents had a different experience than respondents.
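To give a sense of how much uncertainty the small follow-up sample carries, the sketch below recomputes the response rate and places an approximate 95 percent Wilson score interval around the 68 percent ‘lasting impact’ result. It is an illustration only, under stated assumptions: the count of 48 respondents is inferred by rounding 68 percent of 71 and is not reported in the article, the figure of 600 eligible attendees is approximate, and the interval treats respondents as a simple random sample. It therefore reflects sampling variability only and says nothing about the non-response bias discussed above.

```python
import math
from statistics import NormalDist


def wilson_interval(successes: int, n: int, confidence: float = 0.95) -> tuple[float, float]:
    """Return the Wilson score interval for a binomial proportion."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    # Two-sided critical value of the standard normal distribution.
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


ELIGIBLE = 600      # approximate number of eligible attendees (from the article)
RESPONDENTS = 71    # follow-up survey respondents (from the article)
# Assumption: the count is not reported; it is inferred from 68 percent of 71.
LASTING_IMPACT = round(0.68 * RESPONDENTS)

response_rate = RESPONDENTS / ELIGIBLE
low, high = wilson_interval(LASTING_IMPACT, RESPONDENTS)

print(f"Response rate: {response_rate:.1%}")
print(f"Lasting impact: {LASTING_IMPACT / RESPONDENTS:.1%} "
      f"(95% interval {low:.1%} to {high:.1%})")
```

Under these assumptions the interval spans roughly twenty percentage points, which is consistent with the caution expressed above about drawing firm conclusions from the initial follow-up sample.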

Conclusion and next steps

The strategic application of Evaluation 101 training and broader capacity building strategies is well embedded in systems and structures in the Department’s ECB model. Training and resources have been well utilised by staff, with early indications that they have positively guided evaluation practice. The model has transitioned to a format compatible with COVID-19 operations and has continued to be offered on a monthly to bi-monthly basis.

Future challenges and next steps include sustaining the enthusiasm and practice changes in a high-workload environment and continuing to spread and seek consistent changes to evaluation commissioning and use at executive leadership level. In particular, engaging departmental leaders to both champion ECB and directly engage with their own capacity building will be helpful in embedding good practice and motivating staff to use their enhanced capabilities (Michie et al., 2011). Finally, an important next step for evaluating the impact of ECB will be to consider how increasing use of evaluation has influenced policy and practice over time.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.