Abstract

This article describes the development of a questionnaire to assess research and evaluation capacity-building support. This support was provided to public health professionals via a capacity-building partnership, the Western Australian Sexual Health and Blood-borne Virus Applied Research and Evaluation Network (SiREN). Evaluating research and evaluation capacity-building initiatives can be challenging due to their complexity. The development of the questionnaire was informed by systems concepts that acknowledged the complex nature of capacity-building, a literature review, and consultation and pilot testing with stakeholders. The final questionnaire contains 17 quantitative and seven qualitative questions. Pilot testing found that the questionnaire was easy to understand, acceptable, and enabled service users to provide an accurate description of changes that occurred as a result of receiving support. The development of the questionnaire provides insight into how measurement tools to assess capacity-building initiatives can reflect complexity, including the influence of contextual factors, unintended consequences and the non-linear nature of the capacity-building process. The questionnaire could be adapted to evaluate similar capacity-building efforts and the findings used to strengthen capacity-building efforts.

What We Already Know

• Research and evaluation capacity is crucial to supporting effective decision-making in public health. Yet, research and evaluation capacity-building programs are not well-examined and there is a need for appropriate and evidence-based measurement tools to examine their impact.
• Evaluating capacity-building programs is challenging due to their complexity. For example, capacity-building programs can target multiple levels of change (e.g. individual, organisational and system), have multiple factors affecting their ability to create change (e.g. staffing and funding changes) and often have a long lag between program implementation and outcomes.
• Applying systems approaches can address some of the challenges associated with evaluating capacity-building programs. Systems concepts can provide new insights into how capacity is built.

The Original Contribution the Article Makes to Theory and/or Practice

• The questionnaire developed in this practice article is the first research and evaluation capacity-building questionnaire that includes process, impact and outcome questions and includes systems thinking concepts in the design.
• The application of systems concepts to the development of the questionnaire provides valuable insight for evaluators interested in incorporating systems approaches into the design of structured measurement tools.
• The questionnaire can be adapted to evaluate other research and evaluation capacity-building programs and the evidence it generates used to strengthen capacity-building efforts.

Introduction

This article describes the development of a questionnaire to assess research and evaluation capacity-building support. Public health professionals require research and evaluation capacity (Brownson et al., 2018; Cooke et al., 2018) and access to relevant evidence for effective decision-making (Edwards et al., 2016). Research and evaluation capacity is broadly defined as the motivation, knowledge, skills, and structures to engage in sustainable research and evaluation practice and apply research and evaluation evidence to decision-making (Cooke, 2005; Labin et al., 2012; Preskill & Boyle, 2008). Recognition of the importance of research and evaluation capacity is growing (Pulford et al., 2020; Schwarzman et al., 2019). Recent examples of research and evaluation capacity-building strategies include training (Ramanadhan et al., 2020), access to research and evaluation tools and resources (Bourgeois et al., 2023), for example, evidence portals, and personalised support in the form of brokers, mentoring or consultancy (Chauveron et al., 2021; Lindeman et al., 2018; Stone-Jovicich et al., 2019). One promising strategy is partnerships between researchers, evaluators and public health professionals (Lobo et al., 2016). These partnerships can strengthen research and evaluation practice and increase evidence-informed decision-making (Tobin et al., 2022b).

Evaluating capacity-building programs is challenging due to their complexity (Cooke et al., 2018; Pulford et al., 2020; Vang et al., 2021). For example, capacity-building programs can target multiple levels of change (e.g. individual, organisational and system) (Cooke et al., 2018; Norton et al., 2016), have multiple factors affecting their ability to elicit change (e.g. staffing and funding changes) (Brownson et al., 2018; Tobin et al., 2022b) and there is often a long lag between program implementation and outcomes (Bourgeois et al., 2018; Cooke et al., 2018; Tobin et al., 2022b). The evaluation of such programs needs to account for these intricacies.

Applying systems approaches can address some of the challenges associated with evaluating capacity-building programs (Klier et al., 2022). Systems thinking is a way of viewing a system that seeks to explore it as a whole, its component parts and the interactions between them (Cabrera & Cabrera, 2019; Peters, 2014). Hawe et al. (2009) have suggested that a systems perspective can improve understanding of how a program interacts with the system in which it is embedded and how the program contributes to change. In a study of evaluation capacity within a network, Grack Nelson et al. (2019) reported that using systems concepts provided a holistic understanding of, and new insight into, how capacity was built. There is value in utilising systems approaches in evaluation and a need for further studies exploring their application (McGill et al., 2021; Roche et al., 2021).

Despite numerous examples of capacity-building initiatives, it is not a well-examined field (Bourgeois et al., 2023; Bowen et al., 2021; Pulford et al., 2020). There is a need for appropriate and evidence-based measurement tools to examine the impact of these programs (Sauter et al., 2020; Schwarzman et al., 2019). Several measurement tools have been developed that assess existing evaluation capacity (Bourgeois & Cousins, 2013; Cousins et al., 2008; Gagnon et al., 2018; Mackay, 1999; Nielsen et al., 2011; Schwarzman et al., 2019; Taylor-Ritzler et al., 2013) and research capacity (Holden et al., 2012; Kothari et al., 2009; Smith et al., 2002; Van Mullem et al., 1999). Schwarzman et al. (2019) and Taylor-Ritzler et al. (2013) developed surveys to assess the evaluation capacity of an organisation, which could be used before and after implementation of a capacity-building program to assess change. However, these surveys are not designed to examine processes related to the quality of implementation of a capacity-building program, such as the presence of effective communication and trust. These processes are important for understanding how and why a capacity-building program works or does not work.

In addition to assessing existing research and evaluation capacity, there are tools to examine partnerships (Kegler et al., 2020). Relevant to this article are tools to assess partnerships between researchers, community organisations and community members. For example, the Community Impacts of Research Oriented Partnerships (CIROP) questionnaire (King et al., 2009) measures the impacts of research partnerships from the perspective of the community (individuals and organisations). While the CIROP provides a comprehensive assessment of the kinds of changes that research partnerships can achieve, it does not assess the interactions between partners or changes in evaluation capacity. Another tool that focuses on research partnerships is the Partnership Indicators Questionnaire, developed to assess the performance of knowledge creation and exchange partnerships between researchers and government that aim to generate policy-relevant research (Kothari et al., 2011). This tool includes process concepts such as clear leadership and respectful communication. Concepts or outcomes relevant to non-government organisations or evaluation capacity-building, such as increased evaluation skills, are not included. A need remains for measurement tools that are sensitive to the complexity of capacity-building programs.

This article describes the evidence-informed and consultative approach used to develop a questionnaire to evaluate the research and evaluation support delivered by a capacity-building partnership. The development of the questionnaire contributes to addressing the limitations and considerations described earlier, particularly concerning how measurement tools can reflect the complexity of capacity-building programs.

Background and Context

The questionnaire assessed a capacity-building partnership, the Western Australian Sexual Health and Blood-borne Virus Applied Research and Evaluation Network (SiREN). SiREN builds program planning, research and evaluation capacity within public health organisations working to prevent and manage sexually transmissible infections and blood-borne viruses and promote sexual health (the system). This system includes a range of research, clinical, government and non-government organisations staffed by researchers, clinicians, educators, peer-based outreach staff, health promotion practitioners and policymakers.

SiREN utilises strategies operating at the individual, organisational and system level. SiREN strategies include personalised program planning, research and evaluation support; fostering partnerships between research, government and non-government organisations; seeking grant funding; developing and participating in collaborative research and evaluation projects; and creating and sharing a wide variety of resources and services to build research, evaluation and evidence-informed decision-making capacity amongst public health professionals. Management of SiREN is undertaken by a team of five university-based academics alongside a steering group comprising stakeholders from government, research and non-government organisations. Together, they provide input into the strategic direction of SiREN. Detailed descriptions of SiREN have been published previously (Lobo et al., 2016; Tobin et al., 2019, 2022, 2022b).

Questionnaire Purpose

The purpose of the questionnaire was to evaluate the program planning, research and evaluation support provided by SiREN to service users (staff working within the system defined above). Specific examples of the kinds of support the questionnaire was required to assess include writing a manuscript for publication; planning a public health program; creating an evaluation plan; developing a new evaluation method; writing a conference abstract; presenting at conferences; preparing an ethics application; or developing a grant proposal. The provision of this support forms a partnership between SiREN and the service user, as both parties combine their skills and knowledge to develop solutions to a shared concern. SiREN staff who provide this support are university-based with expertise in service delivery, program planning, research and evaluation. The aims of the questionnaire are to:

1. Assess process factors that contribute to the achievement of outcomes and impacts, for example, expectations are met.
2. Identify changes that occurred to research and evaluation capacity, evidence-informed decision-making and program planning.
3. Determine the contribution of SiREN and external influences in achieving these changes.

Methods

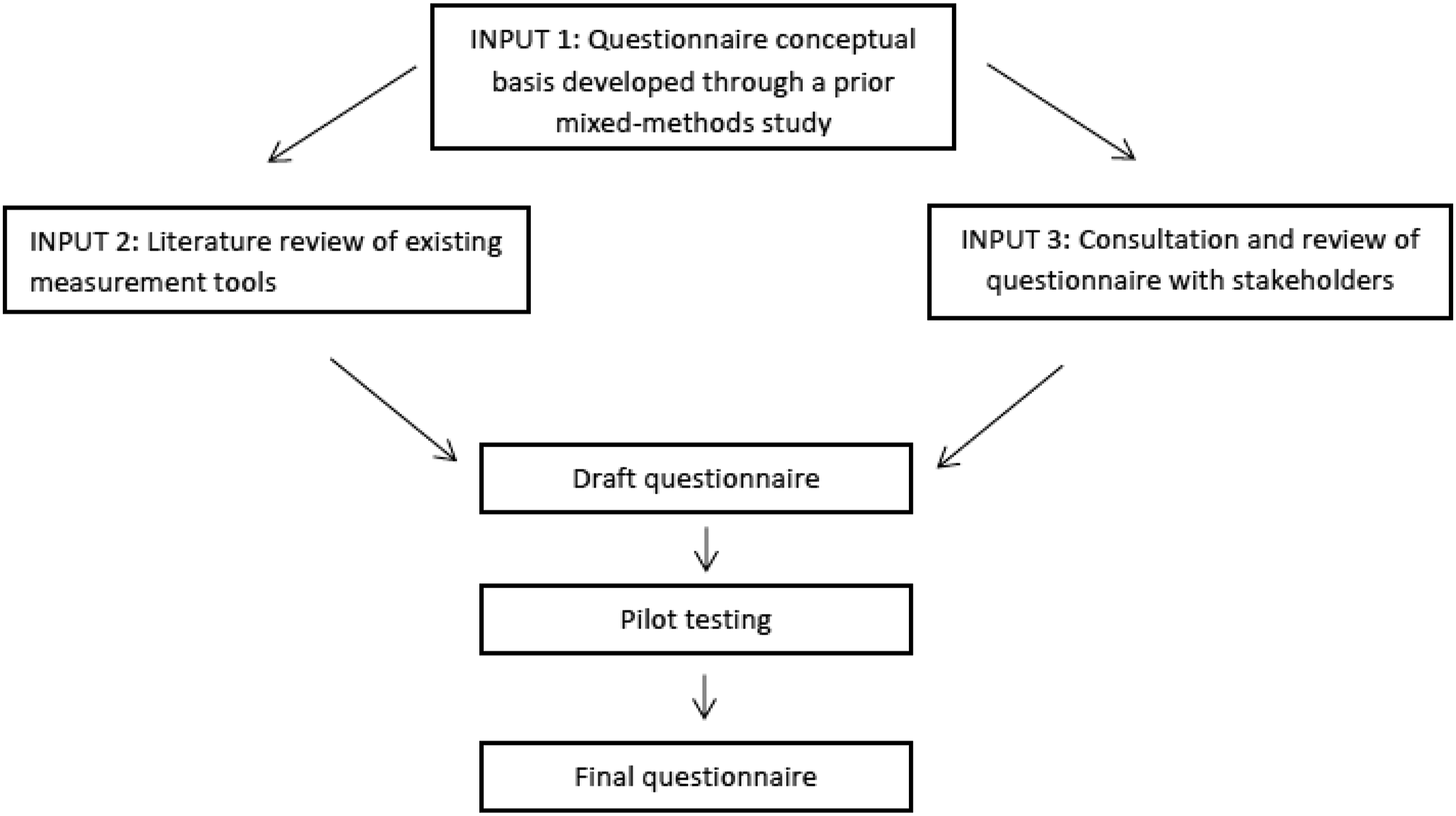

The Questionnaire Origin and Development Appraisal Tool (Hamzeh et al., 2019) guided questionnaire development and reporting. The questionnaire was constructed using processes aligned with previous questionnaire development studies (Cousins et al., 2008; Holden et al., 2012; King et al., 2009; Kothari et al., 2009; Palinkas et al., 2016; Taylor-Ritzler et al., 2013) and received ethical approval from the Curtin University Human Research Ethics Committee (approval number: HRE2017-0090). The three key inputs are described below and presented in Figure 1:

1. Findings from a previous study conducted by the authors (Tobin et al., 2022b) informed the conceptual basis of the questionnaire.
2. A literature review to identify existing tools and methods that could be used in the development of the questionnaire.
3. Consultation with the research team; SiREN management team; and SiREN steering group to establish the purpose and general structure of the questionnaire, select questions and assess face validity.

Figure 1. Questionnaire Development

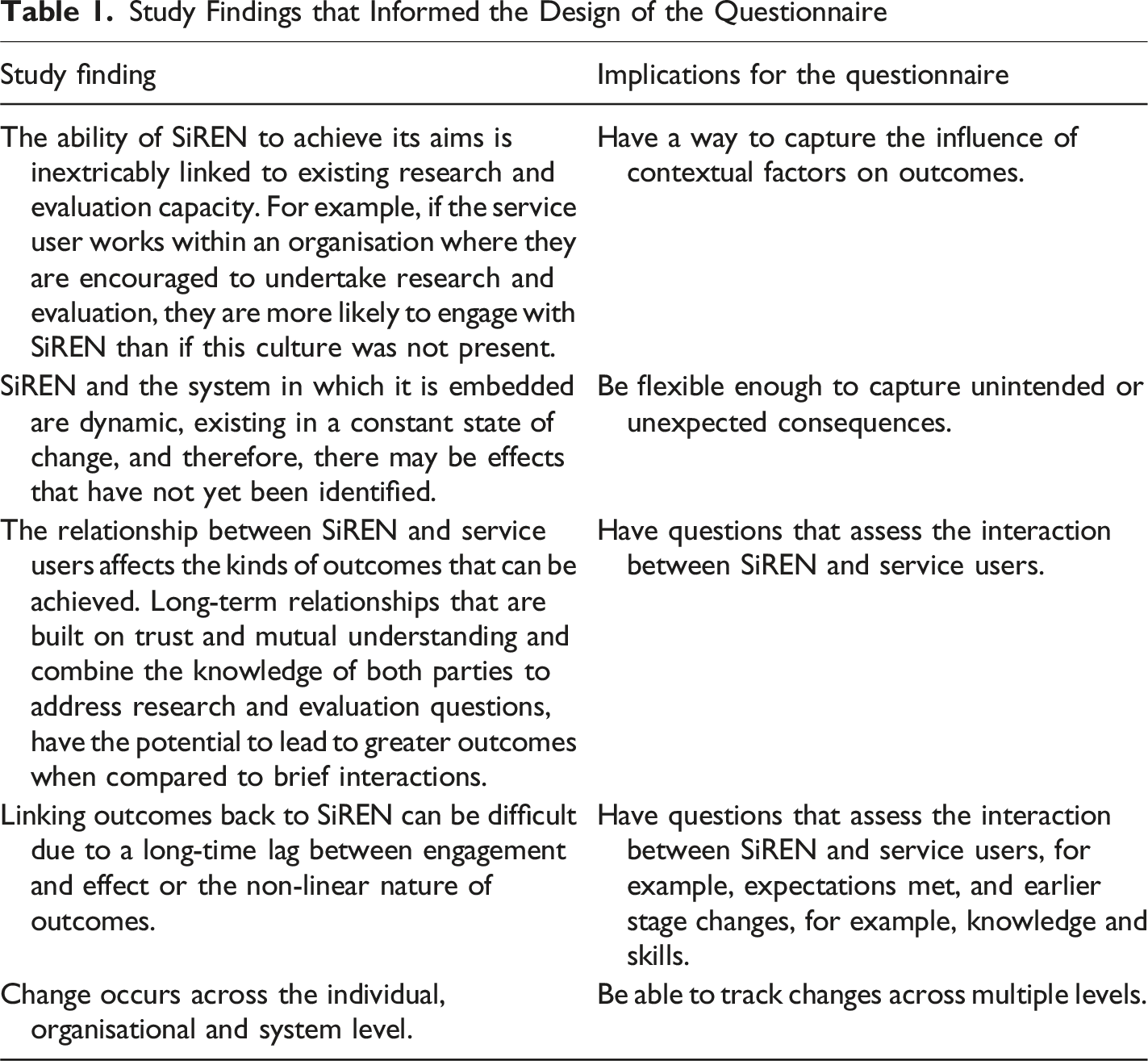

Input 1 – Conceptual Basis

Table 1. Study Findings that Informed the Design of the Questionnaire

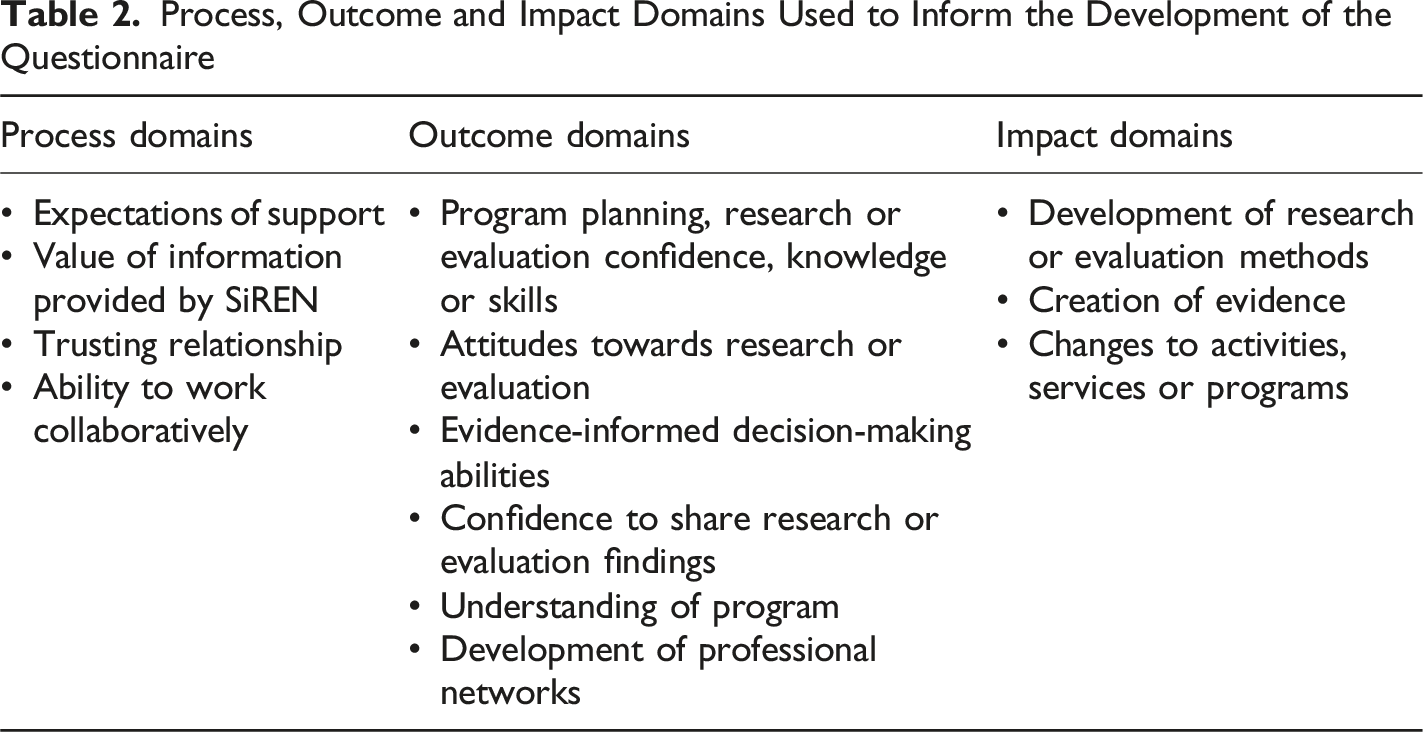

Table 2. Process, Outcome and Impact Domains Used to Inform the Development of the Questionnaire

Input 2 – Literature Review

A literature review located tools and methods to support development of the questionnaire. Specifically, the search identified studies that developed tools to assess partnership functioning, or outcomes associated with evidence-informed practice, research capacity or evaluation capacity-building programs. Search terms were developed based on the domains in Table 1 and through consultation with the research team and librarian. Databases searched included ProQuest, PsycINFO and CINAHL. Reference lists of included articles were also reviewed to identify relevant studies. Articles were excluded if the questionnaire did not include questions relevant to the domains described in Table 2, was not tested, or could not be administered at an individual level. As the database search mainly identified quantitative measures, the Better Evaluation website (Better Evaluation, 2020) was reviewed to locate relevant qualitative methods. The Better Evaluation website was chosen as it is a freely available knowledge platform that provides information on over 300 evaluation methods and processes (Better Evaluation, 2020).

The literature review identified 12 relevant questionnaires, which were used to develop a pool of questions. These questions were reviewed to determine which ones best captured the domains presented in Table 2. The final questionnaire used questions from five questionnaires (Bronstein, 2002; Cousins et al., 2008; Jones & Barry, 2011a, 2011b; King et al., 2009). In addition, two qualitative evaluation methods were identified from the Better Evaluation website (Dart & Davies, 2003; Earl et al., 2001). These qualitative methods were used to address some of the implications described in Table 1 (e.g. capturing the influence of contextual factors). Where an appropriate item could not be located, a new question was developed through consultation with the research team. From this process, a draft questionnaire was developed.

Input 3 – Consultation

Group or individual consultation with members of the research team (n = 4), SiREN management team (n = 5) and SiREN steering group (n = 7) was used to refine the draft questionnaire and assess its face validity. These groups were selected for their expertise. At the time this study was undertaken, three members of the research team were members of the SiREN management team (RL, GC and JH), and two were paid SiREN employees (RL and RT). All research and management team members had experience in public health-related evaluation, research, capacity-building and questionnaire development. All groups had an in-depth understanding of SiREN’s activities and evaluation needs. Assessing face validity with a small group of experts, numbering between 9 and 24, has been used in similar studies (Sauter et al., 2020; Schwarzman et al., 2019). Feedback from all groups was sought on the selection of measurement method and timing of administration. The SiREN steering group provided feedback on general evaluation considerations. Feedback from the research and SiREN management team included inclusion and exclusion of questions; sequencing of questions; face validity of selected questions; clarity of the questionnaire instructions and questions; and the response formats (e.g. Likert scale choices).

An online questionnaire was identified as the most practical measurement tool to enable the efficient collection and analysis of data and minimise respondent burden. Findings from the consultation suggested that the inclusion of process questions was important in case outcomes were not achieved due to influences outside the control of SiREN (e.g. service user priorities changing or staff changes). Feedback on the timing of the questionnaire was that it should be administered immediately after support had ceased. Support provided by SiREN can last from a few weeks to over a year. Therefore, it was agreed that administering the questionnaire immediately after support ceased would allow enough time for most changes to occur and reduce issues with recall.

Pilot Testing

To assess content validity, individuals who had previously engaged with SiREN were purposefully selected to pilot test the questionnaire via an online survey. Assessing content validity with experts and refining the questionnaire based on feedback is recommended practice (Haynes et al., 1995). Individuals were eligible if they were a current SiREN steering group member, had received research or evaluation support from SiREN, or had partnered with SiREN to undertake research or evaluation. Pilot testing assessed the usability and acceptability of the questionnaire. Specifically, it asked respondents to provide feedback on the clarity of the instructions and questions and the accuracy of the estimated completion time. Participants who had received support from SiREN within the past 12 months were also asked whether the questionnaire enabled them to provide an accurate description of changes that had occurred. Invitations to participate were sent by email and contained a link to review the questionnaire and a link to the pilot testing survey.

Eighteen individuals were invited to participate, and 16 responses were received (89% response rate). Respondents were from non-government (n = 11, 69%), peak body (n = 3, 19%) and government (n = 2, 12%) organisations. All participants indicated that survey instructions and questions were clear. More than half had requested research or evaluation support from SiREN in the last 12 months (n = 9, 56%). Of these participants, all (n = 9, 100%) agreed that survey questions enabled them to provide an accurate picture of support and any resultant changes. Of those who had requested support, one (11%) reported that some of the questions were irrelevant to the support received. Three respondents (19%) suggested minor amendments to question ordering and wording (e.g. moving the first question to the third question). Questionnaire completion was initially estimated at 5 minutes. The majority (n = 13, 81%) agreed that the time taken to complete the survey was acceptable. Three participants (19%) reported that more time was required; as a result, this estimate was amended to 10 minutes to capture respondent burden more accurately.
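The reported percentages can be re-derived from the counts given above. The following is a minimal sketch, not part of the original study: the helper name `pct` and the variable names are illustrative assumptions, and the counts are taken directly from the text.

```python
def pct(count, total):
    """Return count/total as a whole-number percentage.

    Python's round() rounds exact halves to the nearest even integer,
    so 2 of 16 (12.5%) is reported as 12.
    """
    return round(100 * count / total)


# Counts as reported in the pilot testing results.
invited, responded = 18, 16
sector_counts = {"non-government": 11, "peak body": 3, "government": 2}

response_rate = pct(responded, invited)
sector_pct = {sector: pct(n, responded) for sector, n in sector_counts.items()}

# Subgroup: respondents who requested support in the last 12 months.
requested_support = 9
requested_pct = pct(requested_support, responded)
irrelevant_pct = pct(1, requested_support)

# Views on the estimated completion time.
time_acceptable_pct = pct(13, responded)
time_more_needed_pct = pct(3, responded)

print(response_rate, sector_pct, requested_pct, irrelevant_pct)
```

Note that the denominator changes for the subgroup item: the single respondent who found some questions irrelevant is expressed as a share of the nine who requested support, not of all 16 respondents.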

The Questionnaire

The final Research and Evaluation Capacity-building Questionnaire (RECB-Q) contains 17 quantitative and seven qualitative questions that assess the processes, outcomes and impacts of the research and evaluation support provided by SiREN. Of the 24 questions, 14 were based on pre-existing tools with the remaining questions (n = 10) developed by the study team. The questions and their sources are described in the text below. The complete questionnaire is available in Supplemental Table 1.

Process Questions

The RECB-Q contains four process questions that assess the interactions between SiREN and the service user: expectations being met, relevance of information provided by SiREN, establishment of trust, and working together to effectively problem solve. Response options are always, often, sometimes, rarely and never. Questions relating to process factors such as the development of trust and effectively working together to problem solve were adapted from Jones and Barry’s trust and synergy scales (2011a, 2011b). These questions are shorter-term indicators that can determine if SiREN is on track to achieve change.

Quantitative Questions Assessing Outcomes and Impacts

Eleven questions assess the outcomes and impacts that occurred as a result of receiving support. Evidence-informed decision-making and research and evaluation capacity-related quantitative questions were modified from questionnaires developed by Cousins et al. (2008) and King et al. (2009) or were developed in consultation with the research team. While measured at the individual and organisational level, they can also indicate changes occurring at the system level, for example, networks, funding and culture.

Outcomes include changes to program planning, evidence-informed decision-making, research and evaluation confidence, knowledge and skills; receptiveness to new research and evaluation opportunities; confidence in sharing work (e.g. at conferences); the development of professional networks; and understanding of how their program fits or contributes to the broader response to sexual health and blood-borne virus issues. This last item reflected the increase in clarity around program purpose that is acquired from the provision of program planning and evaluation support.

Impacts include the development of research or evaluation methods; improvements in the organisation’s program planning, evaluation, or research-related processes, policies or practices; and changes in the activities, services or programs provided by the organisation. To capture the importance of SiREN in supporting change, service users are asked if the support led to outcomes that they, or their organisation, could not have achieved otherwise. This question was adapted from Bronstein (2002) and Jones and Barry (2011a).

Quantitative question response options are increased/decreased, no change or agree/disagree. These were chosen to allow service users to identify whether or not a change occurred, rather than assigning it a value (e.g. strongly agree, agree). This acknowledges that the degree of change depends on a wide variety of factors, many of which are outside SiREN’s control, including pre-existing skills and organisational capacity. The response option not relevant to the support received was also included as the support provided by SiREN is individualised; therefore, some of the changes included in the questions will not be relevant to all service users. For example, a service user who received assistance with developing an evaluation method is unlikely to see an increase in the development of their professional networks.

Qualitative Methods regarding Critical Changes

Qualitative questions aim to elicit story-based responses about important changes that occurred. The Most Significant Change (Dart & Davies, 2003) and Outcome Mapping (Earl et al., 2001) techniques informed the development of qualitative questions about outcomes that were valued by service users and SiREN’s contribution to those changes. To capture unintended or unexpected consequences, service users are asked to describe changes not listed in the previous quantitative section. They are then asked to describe the most valuable change that occurred, why the change was important to their work, how support provided by SiREN contributed to this change, and what other factors contributed to the change. These questions enable service users to describe what change they found most important and why, rather than selecting from a pre-determined list of changes. In addition, the response to these questions enables SiREN to differentiate between its influence and the effect of contextual factors on changes that occurred.

To strengthen the credibility of reported changes, supporting documentation of the changes that occurred (e.g. evaluation plan) is requested from respondents (Earl et al., 2001; Mayne, 2008). Final questions ask service users if they would have liked the support to have been different in any way, have any other feedback and would be happy to be contacted to discuss their feedback.

Analysis of Questionnaire Responses

The quantitative questions of the questionnaire generate ordinal data, which require different analytical approaches compared to continuous data (Lalla, 2017). These data can be summarised using descriptive statistics such as frequency distributions, mode, median and range. For larger sample sizes, appropriate inferential statistical tests for ordinal data may also be applied, depending on the research aims and design. Common tests include the Wilcoxon Signed-Rank Test for paired samples and the Mann-Whitney U Test for independent samples (Lalla, 2017).
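As an illustration of the descriptive summaries mentioned above, the sketch below tabulates one ordinal item using only the Python standard library. It is a hedged example rather than the authors' analysis procedure: the function name `summarise_ordinal` and the response data are hypothetical, and the scale labels are borrowed from the process questions (always, often, sometimes, rarely, never).

```python
from collections import Counter
from statistics import median_low

# Ordered response scale, lowest to highest (labels from the process questions).
SCALE = ["never", "rarely", "sometimes", "often", "always"]
RANK = {label: position for position, label in enumerate(SCALE)}


def summarise_ordinal(responses):
    """Summarise a non-empty list of ordinal responses.

    Returns the frequency distribution, mode(s), median category and range.
    median_low is used so the median is always an actual category on the
    scale, even when the number of responses is even.
    """
    ranks = sorted(RANK[r] for r in responses)
    counts = Counter(responses)
    highest = max(counts.values())
    return {
        "frequencies": {label: counts.get(label, 0) for label in SCALE},
        "modes": [label for label in SCALE if counts.get(label, 0) == highest],
        "median": SCALE[median_low(ranks)],
        "range": (SCALE[ranks[0]], SCALE[ranks[-1]]),
    }


# Hypothetical responses to a single process question (not study data).
hypothetical = ["always", "often", "often", "sometimes", "always", "often", "rarely"]
summary = summarise_ordinal(hypothetical)
print(summary)
```

The categories are summarised by rank rather than averaged, because the distances between ordinal response options are not assumed to be equal. Inferential tests such as those named above would require a statistics package and a larger sample than this sketch assumes.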

Qualitative data can be analysed by following the steps in the Most Significant Change technique described by Lennie (2011). This method involves collaboratively reviewing participant narratives within predefined domains of change (e.g. skills in evaluation methods) and selecting the stories that reflect the most meaningful changes for the organisation (Lennie, 2011).

Discussion

The RECB-Q was designed to assess the processes, outcomes and impacts of research and evaluation support provided by a capacity-building partnership (SiREN). To the authors’ knowledge, the RECB-Q is the first research and evaluation capacity-building questionnaire that includes process, impact and outcome questions and explicitly links systems concepts to design. Pilot testing demonstrated that the questionnaire was easy to understand, acceptable and enabled service users to provide an accurate description of the support they received from SiREN and any changes that occurred as a result.

To date, tools to assess capacity-building and partnerships have focused on pre-determined quantitative indicators (Cousins et al., 2008; King et al., 2009; Kothari et al., 2009, 2014). While the RECB-Q does include quantitative questions, its crucial point of difference from other tools is the inclusion of complexity-sensitive questions. These qualitative questions ask respondents about a change they found most important to their practice and why it was important. These questions were modified from the Most Significant Change technique (Dart & Davies, 2003). The inclusion of these questions adds value to the RECB-Q in two key ways. Firstly, these questions concentrate on what SiREN service users find most important rather than what SiREN values. This understanding can inform continuous improvement as activities can be refocused on how to enhance value from a service user’s perspective. Secondly, the support provided by SiREN and the sexual health and blood-borne virus system in which it operates is dynamic. Therefore, new impacts and outcomes may emerge that have not yet been identified. These qualitative questions can identify unanticipated changes which can be used to inform new directions, strengthening SiREN’s effectiveness.

Evaluation often ignores complexity through de-contextualising outcomes, limiting understanding of how change is achieved (Zappala, 2020). The RECB-Q attempted to address this limitation, seeking to understand the role of context by including questions that ask how SiREN and external factors contributed to the attainment of outcomes. This can establish credible causal links between observed changes and program strategies (Earl et al., 2001; Mayne, 2012). In addition, it builds an understanding of how contextual factors interact with SiREN to constrain or amplify outcomes (Hawe et al., 2009). This is an important consideration when evaluating capacity-building programs as external factors (e.g. organisational culture) influence their ability to elicit change (Brownson et al., 2018; Tobin et al., 2022b).

Another point of difference of the RECB-Q from other capacity-building measurement tools is that it contains process questions in addition to impact and outcome questions. The inclusion of process questions, such as the development of trust, is important as they affect the ability of SiREN to achieve its intended outcomes. While outcomes may differ depending on the kind of support requested or contextual influences, the processes of establishing trust and meeting expectations occur consistently. These are regularities or patterns in systems thinking and are beneficial points to monitor for evaluation (Dyehouse et al., 2009). Recent capacity-building studies have emphasised that capacity-building is more likely to succeed when the relationship between those building capacity and program staff is responsive, trusting and respectful. This is because a solid relationship facilitates learning through the free exchange of knowledge (Gibson & Robichaud, 2020). Therefore, process questions can act as initial indicators of success as they occur before the longer-term impacts, such as creating evidence. In this way, monitoring processes can assist with addressing challenges with the dynamic nature of capacity-building and the lag time between implementation and changes becoming evident.

The evaluation field is rapidly embracing complexity-sensitive approaches in various forms (Gates, 2016; McGill et al., 2021). At the same time, researchers increasingly acknowledge the complex nature of capacity-building (Hanlon et al., 2018; Lawrenz et al., 2018; Pulford et al., 2020). There are synergies between complexity-sensitive methods and the evaluation of capacity-building programs (Lawrenz et al., 2018). For example, there is a need for greater understanding of the mechanisms of action of capacity-building programs (Lamarre et al., 2020); complexity-sensitive methods may be able to address this by exploring context and causal relationships (McGill et al., 2020). Evaluators and researchers wishing to build a stronger evidence base of how capacity-building programs work could add systems concepts and methods to their toolkits, a call echoed in health promotion and public health practice more broadly (Gates, 2016; McGill et al., 2021).

Process questions included in the RECB-Q focused only on factors identified as essential to achieving outcomes for SiREN. There are no established process indicators for capacity-building programs that address both research and evaluation. Therefore, it is unclear if the RECB-Q process questions are reflective of similar capacity-building programs. The exploration of processes that support other research and evaluation capacity-building programs is warranted and would provide deeper insight into how to design and implement such efforts to maximise effectiveness. The relationally focused process factors included in the RECB-Q, such as the development of trust and good communication processes, align with factors previously identified in the research partnership literature (Luger et al., 2020). Several tools have been constructed to assess research partnership functioning that could be used as a starting point to expand on the measures included in the RECB-Q (Arora et al., 2015; Kothari et al., 2011; Marek et al., 2014).

A key strength of the RECB-Q development was the empirical and collaborative approach. Similar to other questionnaire development studies (King et al., 2009; Kothari et al., 2009; Taylor-Ritzler et al., 2013), the creation of the RECB-Q was based on multiple methods, which increased its conceptual and methodological quality (Hamzeh et al., 2019; Haynes et al., 1995). The collaborative expert-led approach ensured appropriateness and relevance to the needs of SiREN and its service users. Its brief, online format makes it acceptable to service users and reduces the time taken for SiREN to collect and analyse data. The RECB-Q may be adapted to evaluate other similar capacity-building projects. While many of its questions align with what is known in the literature, it was developed based on a single capacity-building project. Therefore, before evaluating other programs, it should be tested and modified as required. The number of organisations that SiREN engages with is relatively small, consisting of approximately 15 research, government and non-government agencies. The size of SiREN and the local sexual health and blood-borne virus workforce limited the sample available for pilot testing and precluded the use of tests of statistical significance. The study findings that informed the generation of the questionnaire items were also derived from a small sample (n = 17) (Tobin et al., 2022b). This may have reduced the number and type of questionnaire items generated. Further reliability and validity testing, including test-retest reliability, is recommended (Hinkin, 1995) with a larger sample size from other health-related fields.

Conclusion

The advancement of research and evaluation capacity-building in public health requires the development of evidence-informed evaluation tools. The RECB-Q is an evaluation tool that assesses processes, outcomes and impacts of research and evaluation support provided by a capacity-building partnership. It was informed by systems concepts and reflects the dynamic and complex nature of capacity-building. The RECB-Q addresses complexity through the inclusion of process indicators, consideration of contextual influences and capturing unanticipated outcomes. Applying systems concepts to the development of the RECB-Q provides insight for evaluators interested in incorporating systems approaches into the design of structured evaluation tools. The RECB-Q can be adapted to evaluate other research and evaluation capacity-building programs and the evidence it generates can be used to strengthen capacity-building efforts.

Supplemental Material

Supplemental Material - The Development of a Questionnaire to Assess Research and Evaluation Capacity-Building

Supplemental Material for The Development of a Questionnaire to Assess Research and Evaluation Capacity-Building by Tobin Rochelle, Hallett Jonathan, Crawford Gemma, Maycock Bruce Richard and Lobo Roanna in Evaluation Journal of Australasia

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by Curtin University of Technology; Australian Government Research Training Program Scholarship; and Sexual Health and Blood-borne Virus Applied Research and Evaluation Network (SiREN).

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
