Abstract

Evaluation students need a sense of how the expertise they bring, and the knowledge and skills they are learning, relate to the tasks of evaluation and to their own ongoing professional development. Within the Master of Evaluation and the Graduate Certificate in Evaluation at The University of Melbourne’s Assessment and Evaluation Research Centre (formerly the Centre for Program Evaluation), students use an evaluator competencies self-assessment to inform their coursework and practice across three subjects. In this paper, the authors – the coordinator of the subjects and several students – share practices and examples of how the self-assessment added value to their learning. Our discussion of the logistics, ethical requirements, and appropriate use of self-assessment, given its limitations, offers ideas for integrating it into the design and delivery of evaluation capacity building initiatives or formal courses, to increase ownership of learning among adults who want to expand their evaluation expertise.

Keywords

What we already know

• Self-assessment is helpful for adult learning.
• Self-assessment in evaluation is important because the profession lacks formal assessment of skills and knowledge, and evaluators come from diverse backgrounds.

The original contribution the article makes to theory and/or practice

• Description of and reasoning for using evaluator self-assessment to guide learning in graduate education.
• Logistics and ethics of implementation in formal education and publication.
• Examples of student assessments and impacts on learning based on their self-assessment.
• Implications and ideas for other organisations wanting to implement self-assessment.

Introduction

Davidson (2005) states that good evaluation delivers useful criticism. Therefore, evaluators must have an ‘evaluative attitude’ (p. 35) about their own practice and conclusions; that is, they must actively seek all criticism and use it to improve. The Australian Evaluation Society (AES) embedded evaluative attitude into its Evaluators’ Professional Learning Competency Framework (AES Professional Learning Committee, 2013; Gullickson et al., this issue) in the Evaluative Attitude and Professional Practice domain. This domain treats awareness of one’s own evaluation knowledge and skills as critically important for evaluators, and expresses it through three related competencies:

• Demonstrate self-awareness (acknowledge competencies and competency gaps, practise self-assessment/self-reflection).
• Practice within own competence, that is, decline to participate or moderate contributions in areas outside their competence.
• Seek opportunities to build their competence as evaluators.

Understanding and operating within one’s own competence is a particular challenge in evaluation. All the existing competency sets are broad, making it impossible to cover every competency within the coursework or practicums of formal evaluation training (Montrosse-Moorhead et al., 2022). Without a common assessment of knowledge or skills, it can be challenging for any adult who wants to learn evaluation to work out what they know and what they should learn next.

Self-assessment can support adult learning, and a self-assessment on evaluator competencies can give learners insight into their skills and knowledge, and their learning pathways (Wildschut et al., 2024, this issue). The authors of this paper, a professor and her students, share how self-assessment on evaluator competencies has been structured into a formal evaluation course, and examples of how that self-assessment affected learners. We hope that sharing the logistics and impacts of using the Learn Evaluation Assessment Portal (LEAP) for evaluator competency self-assessment will help others make good use of self-assessment for guiding adult learning in evaluation.

Context: The University of Melbourne

The University of Melbourne is a publicly funded university in Melbourne, Australia. The current strategic plan states: ‘Our purpose is to benefit society through the transformative impact of education and research. Our aspiration is to be a world-leading and globally connected Australian university with students at the heart of everything we do’ (University of Melbourne, 2020, p. 4).

Evaluation is core to generating, documenting, and valuing the various aspects of education and research; therefore, learning how to do evaluation is critical to the University’s mission. The University has offered graduate qualifications in evaluation since 1990. The current coursework qualifications are the Graduate Certificate in Evaluation (half-year full time) and the Master of Evaluation (one year full time). They have been in place since 2012 and fully online since 2015. Entry requirements include a four-year bachelor’s degree and at least three years of work experience. However, many students take evaluation subjects without being enrolled in these courses, either as electives in other University of Melbourne courses or through the Community Access Program (Gullickson et al., 2021, 2022).

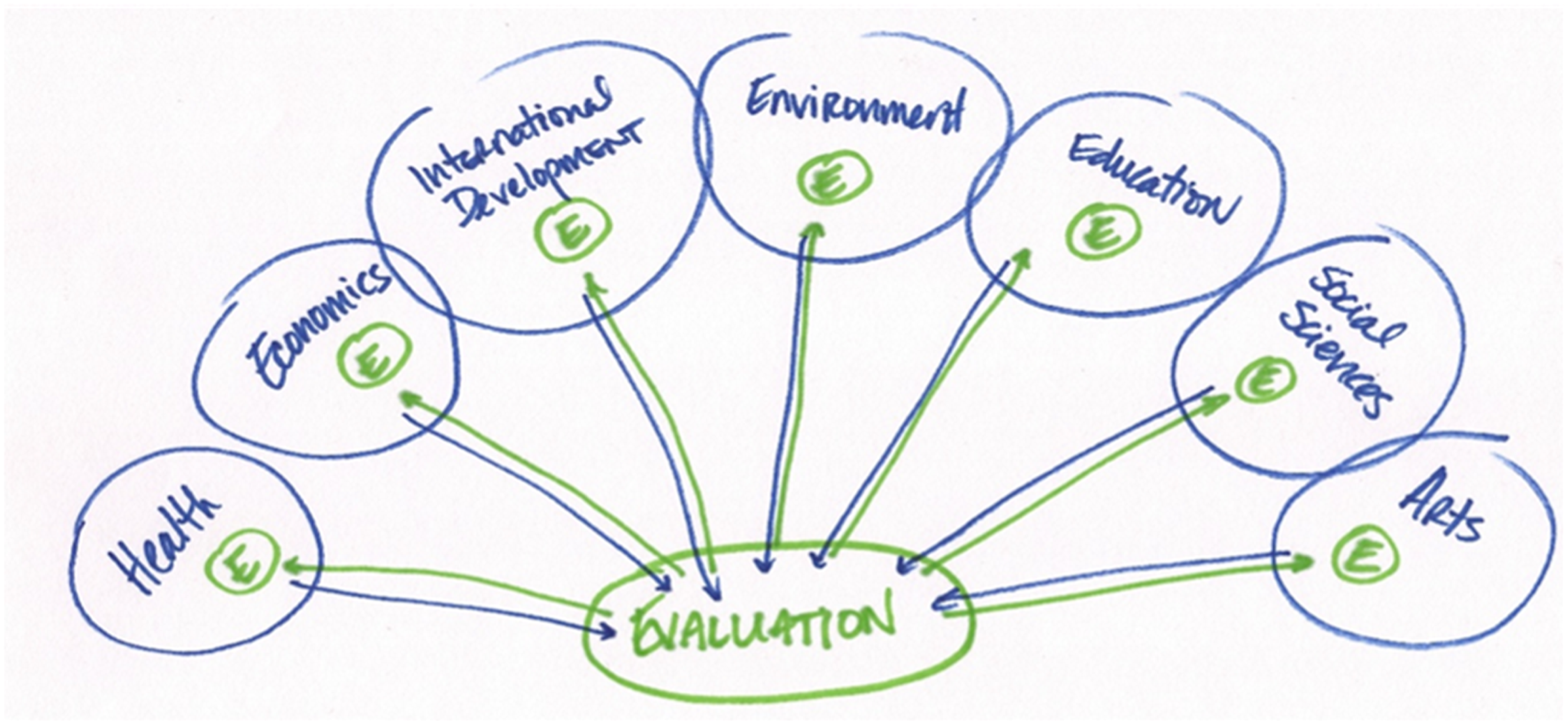

Both courses are designed to develop students’ theory, practice, and praxis in evaluation. Within these courses, evaluation is taught as a transdiscipline, that is, ‘a discipline that has standalone status and is used as a methodological or analytical tool in several other disciplines’ (Scriven, 2008, p. 65). This means that evaluation has its own knowledge base, methods, and practices, but also must rely on content and methods from many other disciplines. In Figure 1, evaluation is shown as its own discipline within the green circle. The green arrows to the small green circles in the other disciplines (health, arts, etc.) show the knowledge coming from evaluation and being used within them. The blue arrows indicate the knowledge from those disciplines coming to evaluation to be used in it. Evaluation as a transdiscipline © 2021 Amy Gullickson. This work is licensed under CC BY-NC 4.0.

Since evaluation applies across disciplines and sectors, students come from a variety of backgrounds and have varying levels of experience with evaluation. Coursework is structured to benefit senior, mid-level, and junior staff from consultancies, government, and not-for-profit organisations simultaneously. As of 2021, government and not-for-profit organisations were the most common employers of students; education, international/community development, and health care/public health were the most common sectors. Students include independent consultants who have been practising for 20+ years, leaders from all levels and sectors of government agencies and not-for-profits of all sizes, and staff members of those organisations whose careers range from just starting out in an evaluation role through to mid- and late career.

Taking the LEAP at The University of Melbourne

The lead author integrated self-assessment on evaluator competencies into the Master of Evaluation in 2013, when the capstone subject was introduced to comply with the Australian Qualifications Framework. From 2013 to 2021, students were invited to use the self-assessment form from the Essential Competencies for Program Evaluators (Ghere et al., 2006), which was available as a downloadable PDF. However, this did not allow data to be collected across students and years to understand learners and their needs. So, when the LEAP became publicly available in 2022, she switched to it. Students use the LEAP in three subjects: two offered in both the graduate certificate and the master’s, and one offered in the master’s only. These subjects are Developing Evaluation Capacity, Practice of Evaluation, and Evaluation Capstone.

Across these subjects from 2015 to 2022, all enrolled students have completed an evaluator self-assessment, but not all of them did so via the LEAP. In all cases, taking the LEAP or another evaluator competency self-assessment is not itself an assessment task for the subject; instead, students use it to inform their responses to assessment tasks. Students access the LEAP through the public portal, which allows their data to be used for research (if they give permission) and gives them ongoing access to their results after graduation and throughout their practice. The LEAP self-assessment is a rough measure that is unlikely to capture incremental learning (Wildschut et al., 2024, this issue). Therefore, students who have taken the LEAP in a previous subject are not expected to complete it again; they simply refer to their previous results in their assessments.

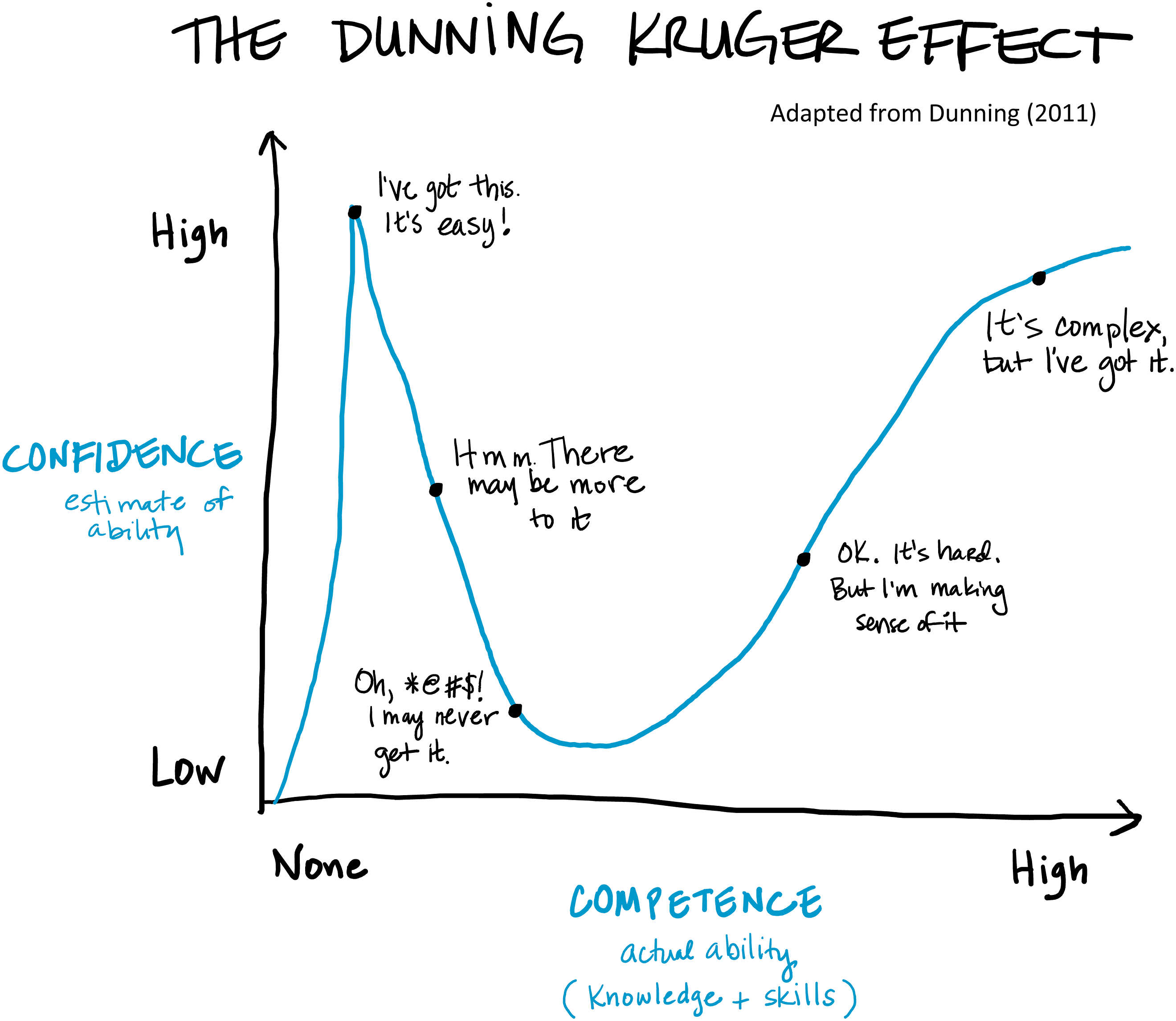

In each subject, the content and lecture related to the LEAP is accompanied with an explanation of Dunning-Kruger Effect (Dunning, 2011) (Figure 2) to help students understand the primary common failing of self-assessments. The lead author, as the designer and often coordinator for each of these subjects, asks them to frame where they are in their learning based on the Dunning Kruger swoop – and to be aware that others they encounter may be at the peak before the swoop in relation to evaluation specific knowledge. This is particularly important in evaluation where various disciplines and sectors define and practice evaluation as applied research, cost–benefit analysis, or quality assessment, rather than ‘the generation of a credible and systematic determination of merit, worth, and/or significance of an object through the application of defensible criteria and standards to demonstrably relevant empirical facts’ (Gullickson, 2020, p. 4). The Dunning-Kruger Effect © 2024 Amy Gullickson. This work is licensed under CC BY-NC 4.0.

Ethics

Ethical considerations apply in several ways in this article: the rights of the students in relation to the self-assessment platform and to their assessments, and the rights of their workplaces. On the LEAP platform, anyone who takes the self-assessment decides, based on the consent process and privacy policy, whether they want their de-identified data to be included in the ongoing research. Even if they consent to share their data, no one at the University or on the LEAP research team can see their identifiable individual ratings via the platform or know whether they have used it. Students share self-assessment ratings only in Evaluation Capstone, and only those relevant to their project. This protects their rights as both students and research participants in accordance with the National Statement on Ethical Conduct in Human Research (National Health and Medical Research Council, Australian Research Council and Universities Australia, 2018, 2023). Use of the LEAP platform also provides them with a report of their full responses, upholding research reciprocity in line with the Australian Evaluation Society’s Ethical Guidelines (Australasian Evaluation Society, 2013).

The University of Melbourne policy states that students own their assessment products. This publication honours that policy by including, as co-authors, selected students who used the LEAP. They chose and edited the excerpts presented below to illustrate the role of self-assessment in their learning.

Across all three subjects, students were invited to bring projects from their workplace or other contexts into the learning. In every case, students have the option to use a pseudonym and other methods to de-identify their workplace in conversation and assessments to preserve confidentiality. In the cases below, student authors have permission from their workplaces to name them.

The LEAP in action

In each of the three subjects, use of the LEAP is tailored to the content and context of students’ learning. Logistically, Practice of Evaluation and Developing Evaluation Capacity are eight-week subjects, with 24 contact hours and an expectation of 96 hours of study and 50 hours of assessment. Evaluation Capstone has a similar expected workload spread across 18 weeks, as students typically need longer to design and complete their independent projects. In the following sections, for each subject, we begin with an introduction to the subject, its assessment, and how the LEAP fits into the learning process. The students then briefly introduce themselves, their work roles, and their aims for learning in their evaluation courses, writing in the first person. Finally, the students share excerpts taken directly from their assessments that demonstrate how they integrated self-reflection from the LEAP into their work. Because the excerpts were written to suit the assessment, they may be in the first or third person. They have been lightly edited for clarity and, in some cases, to update citations where newer sources have been published since the assessment tasks were completed. Otherwise, they are reproduced as submitted and indented, in the spirit of quotation in qualitative research, because they are effectively quotes.

Practice of Evaluation

Practice of Evaluation is an elective for Graduate Certificate students and compulsory for master’s students. In it, students work through an evaluation from design to reporting, beginning with designing an evaluation using their own example evaluand and finishing with conducting evaluative synthesis and reporting an evaluative conclusion from existing data. They use the LEAP in their evaluation design to understand their areas of strength and opportunities for learning, and to help them think about where they will need support to deliver an evaluation whose requirements go beyond their abilities. The major assessment is an evaluation design for their example evaluand. In it, students are expected to discuss their competencies in relation to the evaluand and the evaluation design, to help them address potential threats to evaluation quality arising from their own skills (or lack thereof).

Student: Sarah Gonzalez, Phoenix Space

I am Head of Impact at Phoenix Space and a graduate student in the Master of Evaluation program. For this subject, I designed an evaluation for the Empower Program at Phoenix Space, a program that partners with non-government organisations to deliver Science, Technology, Engineering and Mathematics education courses to refugee and disadvantaged students aged 8 to 25.

Assessment excerpt: Evaluator Competencies related to delivering a specific evaluation project

The evaluator used the LEAP, which identifies seven professional competency domains for evaluators (Learn Evaluation, 2021). The evaluator is relatively new to the evaluation profession and acknowledges certain competency areas requiring further development; these were confirmed by the assessment, which is based on an updated set of the AES Evaluator Competency Framework (see Gullickson et al., this issue). In particular, the domains and sub-domains within Evaluation Theory, Culture and Context, Research Methods, and Evaluation Activities Synthesis scored the lowest and will require support from an outside independent evaluator (AES Professional Learning Committee, 2013). The domains of Evaluative Attitude and Professional Practice, Project Management, and Interpersonal Skills scored the highest, reflecting the evaluator’s previous professional experience as an external financial auditor and her experience working with clients from various sectors and contexts. Finally, given the wide range of cultures that could be affected by the design of Empower’s courses, the evaluator plans to compensate for gaps in her cultural understanding by engaging consultants who represent the various cultures in the student population.

Developing Evaluation Capacity

Developing Evaluation Capacity is an elective in both courses. In it, students explore designing and delivering evaluation capacity building initiatives for organisations and individuals. One of the areas we study is the competencies required of those who deliver evaluation capacity building: to teach evaluation to others, what do evaluation capacity building practitioners need to know about teaching and learning, and about evaluation? Students complete the LEAP to explore what they know about evaluation and to consider where they could competently offer evaluation capacity building. In both of their assessment tasks, they are asked to identify the evaluator competencies needed to deliver the training/initiative, and in the final exam, they are expected to discuss how their competencies fit with what is needed and how they will manage any mismatch.

Student: Jane Howard, Victorian Department of Health

I am a mid-career evaluator who came into evaluation after 20-plus years in scientific research. My employer supported me to build upon my competencies as an evaluator by completing a Graduate Certificate in Evaluation through The University of Melbourne.

Assessment task, players, and relation to the student

The major assignment for the Developing Evaluation Capacity subject asked us, acting as external consultants, to design an evaluation capacity building training proposal for our client. I named my consultancy EvalSkills. We had the option of basing our response on a provided case study or drawing on our own context. I chose the provided case study, which described the client: The Evaluation Collaborative. I used evaluation capacity building theory to justify the training design I chose. Critical to this assignment was understanding my own competencies in relation to the skills the EvalSkills consultant would need to deliver this training. In my day-to-day role as an internal government evaluator, I routinely deliver evaluation capacity building training to departmental staff. The EvalSkills consultant competencies I listed are also essential to my role.

Developing Evaluation Capacity Final Assessment Excerpt: Practitioner capabilities

There are many variables affecting the success of evaluation capacity building, such as individual characteristics (e.g. background of learners, motivation, ability of the evaluation capacity building practitioner to teach) and environmental factors (e.g. organisational support and resourcing, evaluation capacity building educational materials and resourcing) (Knowles et al., 2015). However, there are important evaluator competencies that EvalSkills staff must have to deliver the evaluation capacity building successfully. Some of these are:

• Reflective practice – being aware of personal evaluation preferences, strengths and limitations, and self-monitoring the impacts of your evaluation capacity building delivery to inform future delivery. This includes listening to The Evaluation Collaborative’s evaluation capacity building needs.
• Knowledge of evaluation theory and application to practice – the ability to translate theory into practice: how to choose the right evaluation design, construct program logics, determine the evaluability of an evaluand, and apply critical thinking skills.
• Ability to negotiate and address conflict – EvalSkills consultants need to be able to negotiate with The Evaluation Collaborative’s senior management, making the case for why EvalSkills’ evaluation capacity building is worth investing in.
• Situation analysis – EvalSkills consultants need to assess The Evaluation Collaborative’s organisational context and staff competencies, incorporate results from the leadership’s needs assessment and listening tour, and ensure the evaluation capacity building intervention meets their performance needs.

(The above points are taken from Stevahn et al., 2005, pp. 46–57.) In delivering the workshops, EvalSkills consultants need to have a deep understanding of evaluative practice and methodology (Stevahn et al., 2005).
EvalSkills consultants will demonstrate professional honesty, integrity, and equity in responding to the needs of The Evaluation Collaborative staff, in delivering workshops, and in providing technical assistance and mentoring. This will build trust between The Evaluation Collaborative and EvalSkills consultants, which is foundational to the success of the evaluation capacity building intervention (Buckley et al., 2021). The EvalSkills team includes individuals with diverse skills. All EvalSkills staff have a master’s-level degree in either Public Health or Evaluation and a minimum of five years’ experience as a practising evaluator. The EvalSkills staff who deliver the training have, between them, a range of relevant knowledge across the areas in which The Evaluation Collaborative delivers evaluations, including education, health, disability, mental health, and health promotion. They have experience delivering evaluation capacity building to organisations ranging from not-for-profits to universities and government. EvalSkills consultants selected to deliver the evaluation capacity building will also have experience in establishing communities of practice.

Evaluation Capstone

Capstone is the culminating experience for master’s students. It is compulsory and taken as either the last or second-last of the eight subjects in the master’s degree. Students use the LEAP to shape their capstone project. They take the self-assessment (or revisit their previous results) to reflect on their learning in the master’s and on the evaluation knowledge, skills, and practice they want to develop further or consolidate, and then design a capstone project to suit. In their project proposal, they describe how their proposed project will help them develop their chosen competencies. In their final assessment, students describe how their competencies developed throughout the project. Often, students report that the project helped them develop more competencies than listed in their proposal. Sometimes, due to changes in the project or their ongoing learning, they report development of different competencies instead. In all cases, students reflect on their learning needs and goals and relate them to projects they design at their workplace, as volunteers, or in research. This engages them in a learning process they can replicate after their formal study to continue their professional development.

Student: Shin Tanabe, World Bank

I am a knowledge management analyst at the World Bank, where I co-lead the organisation of training programs and events related to urban development and disaster management for government officials and development practitioners in developing countries. I have over ten years of professional project management and learning design experience in international education and international development. For my capstone, I volunteered on a project at the Centre for Program Evaluation.

Tanabe capstone proposal: Assessment excerpt

(1) Justification statement

In completing the AES Evaluators’ Professional Learning Competency Framework self-assessment using the LEAP, I recognised my need to improve in the areas of theoretical foundation and evaluation project management. In particular, the tool highlighted my weakness in weaving contextual understanding into analysis, synthesis, evaluative interpretation, and reporting. This result encouraged me to reconsider the evaluation skills and experience I must develop as an evaluation professional. I discussed this result with my colleagues, who suggested improving my skills in assessing evaluation literature and linking the key literature with my evaluation work. Thus, I decided to work on the Raise Our Voices project to improve my literature review skills and learn how to integrate a literature review into the entire evaluation. As the project aims to assess the process and impact of the peer-led groups, I saw that I could apply my vocational experience and knowledge of knowledge management and community development to contribute to the evaluation project. To start work on this project, I have reviewed books on conducting literature reviews (Fink, 2019; Jesson et al., 2011; Ridley, 2012) and seen that I need to be more attentive to identifying key models or theories that underpin an evaluation, synthesising diverse concepts and ideas, identifying gaps in existing knowledge, and making a specific argument to establish a framework for understanding an evaluative work. I believe that conducting a scoping review using Arksey and O’Malley’s (2005) methodological framework will hone my literature review skills and strengthen my theoretical foundation for conducting evaluation work.

Tanabe capstone final: Assessment excerpt

Evaluation Capstone Learning Reflections

My learning goal was to develop my competency in theoretical foundation and evaluation project management. In line with this goal, I joined the Raise Our Voices program conducted by the Ethnic Communities’ Council of Victoria and undertook a scoping review of studies on peer support for people with disabilities from culturally and linguistically diverse backgrounds. Through this project, I realized the importance of two things: conducting a literature review to refine the evaluation design and ensure the overall quality of the evaluation project, and communicating with stakeholders to be aware of the political context of an evaluation project.

Importance of Conducting a Literature Review

The importance of conducting a literature review has been highlighted in many research papers and textbooks (Cohen et al., 2018; Giancola, 2020). Through this project, I realized that the value of a systematic literature review lies not only in improving the overall quality of an evaluation project but also in tracking one’s project process, identifying relevant information to include in results and discussions, and synthesizing different points of view. In particular, I found it helpful to learn about Arksey and O’Malley’s (2005) methodological framework and the PRISMA diagram to enhance the quality of my scoping review, track review progress, and improve my reporting skills.

Importance of Communicating with Relevant Stakeholders

Through interactions with the staff at the Ethnic Communities’ Council of Victoria, I also recognized the value of understanding the strengths and challenges of evaluation and of confirming the use of terminology (e.g. CALD: Cultural and Linguistic Diversity) with a client. The latter was critical not only for finding relevant literature but also for identifying the criteria of merit and presenting results in a way that clients could relate to.
In addition, I realized that clarifying controversies over particular terminology and sharing my interpretation were important to demonstrate sensitivity to the political context (Fitzpatrick et al., 2022) and professionalism. Overall, this evaluation project helped me understand how to connect a literature review with empirical evaluation work, how to manage a project within the time frame, and how to be accountable to stakeholders. I would like to continue improving my evaluation skills (including literature review skills) and contributing to various projects.

Student: Erin Davis, Clear Horizon

I was a Research Fellow in the Gender and Women’s Health Unit at the Melbourne School of Population and Global Health, The University of Melbourne when I was a student in the Master of Evaluation degree. Currently, I work as a consultant in the evaluation sector. My work includes using methodologies in co-design, implementation science, and theory-based evaluation with participatory and collaborative approaches. I undertook the Master of Evaluation to further enhance my knowledge and skills in evaluation approaches and methods. My capstone project involved developing and applying a document analysis methodology to support a broader Empowerment Evaluation with a women’s organisation in the South Pacific. These documents provided several years of measurement data about the implementation and impact of the organisation’s work to prevent violence against women.

I used the LEAP to assess my knowledge and skills against the domains and competencies provided by the Australian Evaluation Society (AES Professional Learning Committee, 2013). As shown in the excerpts below, the LEAP results helped justify my capstone proposal and later supported my critical reflection for further competency development for the final assessment report.

Davis Capstone Proposal: Assessment excerpt

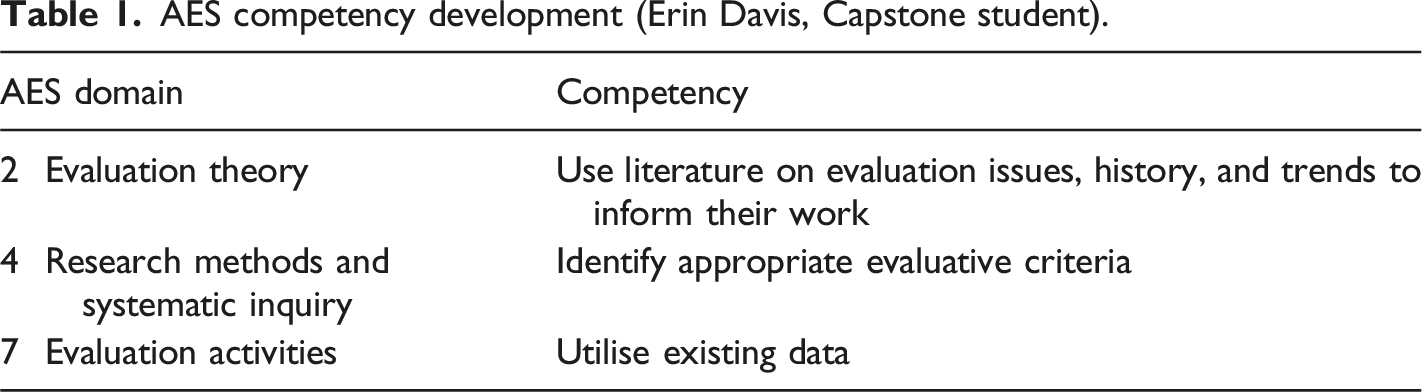

Program documents are often provided by evaluation clients as data to be analysed alongside other qualitative or quantitative methods. Within the social sciences literature, authors such as Tight (2019) and Bowen (2009) provide practical guidance for reviewing various types of documentary materials for research purposes. There is little scholarly guidance, however, for applying such procedures to support evaluative judgements about a program’s merit, worth, or significance. As such, I am uncertain about how to systematically assess documents to produce evaluative findings. I will address this gap in my capstone project by developing a document analysis methodology to support an evaluation with a South Pacific women’s organisation. This project will aid my professional development by improving AES competencies identified in the LEAP (Table 1). AES competency development (Erin Davis, Capstone student).

I will address the competency in domain seven by using program documents as existing data and subjecting them to quality appraisal using guidance from the document analysis literature (Bowen, 2009; Tight, 2019). The quality appraisal will determine which documents (or parts of documents) to include for further analysis. Selected documents will then be reviewed using the Values Identification Matrix as a guide (Roorda & Gullickson, 2019). This analytical procedure will address the competency in domain four as the matrix enables the systematic identification of evaluative criteria with supporting data found within the documents. For example, the documents may reveal the importance of applying cultural protocols in community engagement work (a criterion of merit), with supporting documentary evidence demonstrating the implementation and impact of such protocols for violence prevention.

In line with the domain two competency, the document analysis will be informed by a theoretical framework derived from the evaluation and social sciences literature. Specifically, the theoretical framework will combine elements of Empowerment Evaluation, which guides the overarching evaluation approach (Fetterman, 2017; Fetterman et al., 2015), with valuing theory (Shadish et al., 1991) and transnational feminist theory (Zerbe Enns et al., 2020). This theoretical framework will enable me to consider the documentary evidence for criteria of merit within the context of a cross-border evaluation partnership in the global women’s rights movement.

Davis capstone final: Assessment excerpt

The capstone project enabled my competency development under the AES domains identified in my proposal and further generated reflection about my continued professional capacity-building. The capstone addressed my previous uncertainty about using documents as existing data (domain seven). I now know how to appraise documents for their quality and relevance to an evaluation and how to use them as a data source to develop criteria of merit (domain four), backed by relevant evidence to support evaluative conclusions. I also understand the limitations of using documents as a sole data source and the importance of triangulating with the results of other methods, such as the qualitative data produced by the Empowerment Evaluation activities undertaken through the broader evaluation. I drew on scholarly literature (domain two) to develop and justify the theoretical framework, methodology, and results of the document analysis. Valuing theory literature provided guidance to integrate the logic of evaluation (Scriven, 1991, 1995, 2007; Shadish et al., 1991) into the methodology and to use the Values Identification Matrix (Roorda & Gullickson, 2019) to identify appropriate evaluative criteria within the documents (domain four). This produced five overarching criteria of merit with a substantial amount of supporting data evidencing the implementation and impact of the organisation’s violence prevention work. The Empowerment Evaluation literature (Fetterman, 2017, 2019) helped align the capstone with the broader evaluation approach by ensuring that the document analysis findings were subjected to scrutiny and validation by key stakeholders. This approach was informed by a transnational feminist lens (Guttenbeil-Likiliki ‘Ofa-Ki-Levuka, 2020; Falcón, 2016; Zerbe Enns et al., 2020), which involved centring the organisation’s expertise as a leader in the South Pacific women’s rights movement and critically reflecting on the potential influence of my own biases as an academic in Australia.
The capstone also offers lessons to address in my continued professional development under the AES competency framework. First, I would like to strengthen my capacity to communicate concepts about the logic of evaluation to those who are not familiar with these ideas, particularly in a cross-cultural context (domain three: culture, stakeholders, and context). Second, I hope to develop my capacity to synthesise criteria-based findings, as this can be challenging depending on the complexity of the evaluand and the client’s appetite for this level of overall judgement (domain two: evaluation theory). I will address these issues by consulting experts and the literature on cross-cultural evaluation work and synthesis methods. Finally, I will continue my evaluation capacity-building through my employment, membership in the AES, and attendance at professional learning workshops and conferences (domain one: evaluative attitude and professional practice).

Summary

The examples above show how students used the competency self-assessment to identify their skills and needs in relation to delivering an evaluation or an evaluation capacity building project, and to designing and delivering their own learning intervention in the capstone. While self-assessment is a rough measure with known challenges (Kruger & Dunning, 1999), it has been effective for these purposes. Students who use a self-assessment tool (the LEAP or others) benefit from the broad view of competencies related to evaluation, and conversations informed by the Dunning-Kruger effect help them consider how they have rated their skills and what those ratings mean for what they will encounter in their own practice. Using self-assessment to inform assessment also keeps it firmly within the boundaries of assessment for learning, which is an appropriate use of such a tool (Black & Wiliam, 1998; Stufflebeam & Wingate, 2005).

Reflections: Using the Self-Assessment in Teaching and Learning

The breadth of evaluator competencies, the variety of backgrounds that students bring to the course, the range of perspectives on evaluation, the absence of educational requirements for professional evaluation practice, and the lack of any validated measures of evaluation expertise make self-assessment and self-awareness an essential aspect of evaluation teaching and learning. Our mandate as evaluators to practise self-awareness and to seek criticism in order to improve reinforces this need (AES Professional Learning Committee, 2013; Davidson, 2005; Gullickson et al., this issue). The LEAP provides an opportunity for reflection and self-diagnosis that enables Evaluation students at The University of Melbourne, regardless of age, background, or level of experience, to get a rough sense of their strengths and of where they need help. Students’ comments on self-assessment across these three subjects over the past ten years, illustrated by the examples above, indicate that the process gives them insight into the areas where they are confident and those where they need support. The LEAP clarifies where students need to ask for help and provides a pathway to ensure that they do not inadvertently create threats to quality through gaps in their knowledge or self-knowledge. By defining the competencies related to evaluation, it also helps counter the biases associated with the Dunning-Kruger effect.

As an instrument, the LEAP met the needs of both students and the lecturer by making self-assessment feasible. The individual reports gave students an artefact of the assessment for future reference and ongoing development, which remains accessible after they complete their university course. In the longer term, it would be valuable for people to be able to link their self-assessments over time to track their progress. In the meantime, the LEAP continues to offer learning benefits to Evaluation students at The University of Melbourne.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.