Abstract

Introduction

Unicondylar knee arthroplasty (UKA) is a minimally invasive surgical technique that replaces a specific compartment of the knee joint. Patients increasingly rely on digital tools such as Google and ChatGPT for healthcare information. This study aims to compare the accuracy, reliability, and applicability of the information provided by these two platforms regarding unicondylar knee arthroplasty.

Materials and Methods

This study was conducted using a descriptive and comparative content analysis approach. 12 frequently asked questions regarding unicondylar knee arthroplasty were identified through Google’s “People Also Ask” section and then directed to ChatGPT-4. The responses were compared based on scientific accuracy, level of detail, source reliability, applicability, and consistency. Readability analysis was conducted using DISCERN, FKGL, SMOG, and FRES scores.

Results

A total of 83.3% of ChatGPT’s responses were found to be consistent with academic sources, whereas this rate was 58.3% for Google. ChatGPT’s answers of 142.8 words, compared to Google’s 85.6-word average. Regarding source reliability, 66.7% of ChatGPT’s responses were based on academic guidelines, whereas Google’s percentage was 41.7%. The DISCERN score for ChatGPT was 64.4, whereas Google’s was 48.7. Google had a higher FRES score.

Conclusion

ChatGPT provides more scientifically accurate information than Google, while Google offers simpler and more comprehensible content. However, the academic language used by ChatGPT may be challenging for some patient groups, whereas Google’s superficial information is a significant limitation. In the future, the development of Artificial Intelligence-based medical information tools could be beneficial in improving patient safety and the quality of information dissemination.

Introduction

Osteoarthrosis is the leading cause of knee pain in the elderly, with a lifetime risk of 50% for symptomatic knee osteoarthritis. 1 Knee arthroplasty is a well-established, effective surgical treatment routinely applied to appropriate patients for the management of gonarthrosis. 2 For the treatment of unicompartmental gonarthrosis, unicondylar knee prosthesis, first introduced in 1954, can be utilized. 3 When compared to total knee arthroplasty, unicondylar knee arthroplasty is considered a less invasive approach due to its smaller skin incision, reduced blood loss, and superior preservation of the knee’s native anatomy.4,5

The rapid advancements in artificial intelligence have introduced novel analytical techniques and algorithms, significantly enhancing their application within medical practices and demonstrating the potential to reshape medical disciplines, including orthopedics and traumatology.6,7 In 2018, OpenAI introduced the ChatGPT model, a language model with advanced linguistic capabilities, made publicly available as a computer program. ChatGPT is increasingly being adopted in the medical field,8,9 serving as a communication tool for patients to acquire information, share knowledge, and seek solutions to their health-related concerns. 10 Since 1998, Google, the most widely used search engine globally, has facilitated the easy access to health information. 11 However, patients frequently rely on search engines or artificial intelligence applications for obtaining health information without consulting a healthcare provider, which can sometimes lead to inaccurate or misleading results. 12

This study aims to compare the information provided by ChatGPT and Google regarding unicondylar knee prosthesis, focusing on accuracy, reliability, detail level, and applicability. The findings will assess the reliability of artificial intelligence patient education and evaluate the advantages and limitations of artificial intelligence in comparison to traditional search engine-based information sources.

Materials and Methods

This study aims to compare the responses provided by Google’s “People Also Ask” section and ChatGPT-4 regarding patient education on unicondylar knee prosthesis in terms of accuracy, scope, level of detail, and applicability. The study was conducted using a descriptive and comparative content analysis methodology. It is a preliminary study conducted between January 1st, 2025, and February 28th, 2025. Given the nature of the study, blinding was not applicable, as the data consisted of publicly accessible information retrieved from internetbased platforms. The responses from ChatGPT-4 were based on its training data, with a knowledge cut-off of April 2023.

All academic sources used in this study were carefully verified. Peer-reviewed articles were selected primarily from PubMed, Scopus, and Web of Science, and assessed for relevance, scientific quality, and publication credibility. When possible, DOIs and PMIDs were checked to confirm source authenticity.

Additionally, when appropriate, the content of responses was evaluated in light of established clinical guidelines, including those published by NICE and AAOS. The study adhered to ethical standards, including the principles outlined in the NICE guidelines and the Declaration of Helsinki.

The research was conducted in two phases. In the first phase, the 12 most frequently asked patient questions concerning unicondylar knee prosthesis were identified through the “People Also Ask” section on Google. This methodological approach was chosen due to its reflection of patient information-seeking behavior and its ability to provide analysis grounded in real-world data. These questions were selected to highlight the most commonly sought topics by patients and to facilitate a comparative analysis. Google searches were performed using keywords such as “Unicondylar Knee Prosthesis Treatment,” “Unicondylar Knee Prosthesis Recovery,” and “Unicondylar Knee Prosthesis Surgery,” and the questions suggested by the search algorithm were recorded. In the second phase, the same 12 questions were directed to ChatGPT-4, and the generated responses were documented. In cases where multiple responses were produced by ChatGPT, only the first response was considered. To ensure objectivity, all responses were independently evaluated by two medically trained reviewers, who resolved any differences by mutual agreement.

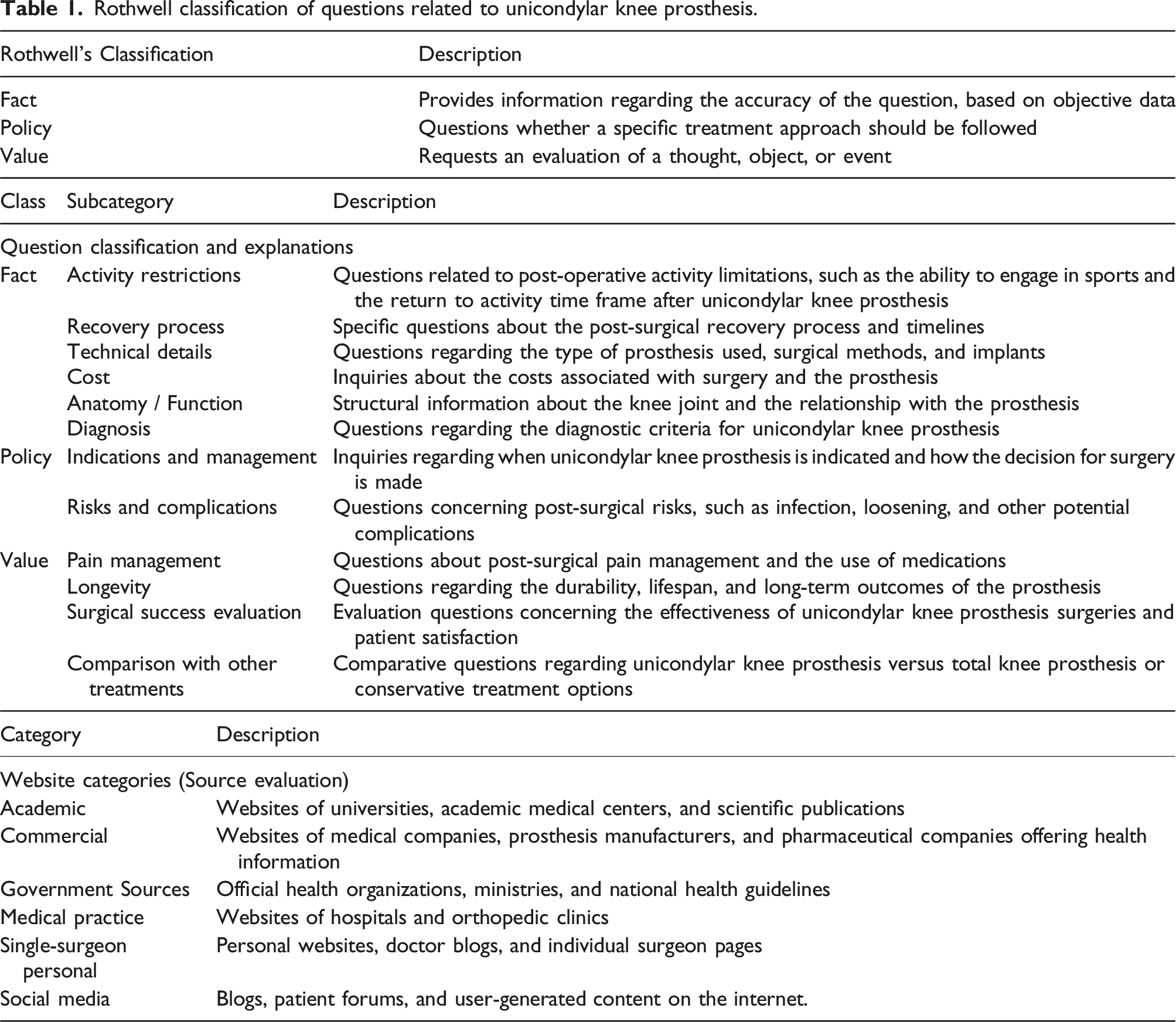

Rothwell classification of questions related to unicondylar knee prosthesis.

Cohen’s Kappa statistic was used to calculate inter-rater agreement in the statistical analysis. Differences between Google and ChatGPT responses in terms of scientific accuracy and level of detail were statistically analyzed using Fisher’s exact test or chi-square test. The lengths of the responses and the technical terms used were compared using the Mann-Whitney U test. These analyses aimed to determine the reliability of both platforms for patient education and highlight the advantages and limitations of both systems. Responses from both ChatGPT and Google were compared using the DISCERN score, FKGL, SMOG, and FRES. Parametric data were analyzed using independent samples t test, and non-parametric data were analyzed using the MannWhitney U test.

Discern score

DISCERN is a 16-item tool designed to assess the quality of written materials provided for patient education. These items are grouped into three sections: reliability of the publication (Items 1–8), presentation of treatment options (Items 9–15), and overall assessment (Item 16). Each item is scored from 1 (very poor) to 5 (very good). The total score is calculated out of 80: 63–80: Excellent; 51–62: Good; 39–50: Fair; <39: Poor.

Readability metrics

The comprehensibility of the responses was automatically calculated using the following metrics:Flesch-Kincaid Grade Level (FKGL): Indicates the average education level required to understand the text. SMOG (Simple Measure of Gobbledygook): Assesses the academic complexity of the text. FRES (Flesch Reading Ease Score): The higher the score (closer to 100), the easier the text is to read.

Results

Most frequently asked questions regarding unicondylar knee arthroplasty according to google and ChatGPT.

Comparison of google and ChatGPT responses to 12 questions regarding unicondylar knee arthroplasty.

The responses from ChatGPT were based on academic guidelines, orthopedic expert articles, and scientific sources, with 66.7% of the responses relying directly on scholarly literature. In contrast, 41.7% of Google’s responses included academic and medical content, with a greater prevalence of content derived from commercial clinical websites. While 75% of Google’s answers offered practical advice for daily life, ChatGPT’s responses included 58.3% of such content (Figure 1). Despite the higher level of technical detail in ChatGPT’s responses, some patient queries were more clearly answered by Google, offering more accessible and practical information (Table 4). Distribution of content in ChatGPT and google responses. Comparison of the first 5 frequently asked questions on unicondylar knee arthroplasty between google and ChatGPT responses.

The consistency of ChatGPT’s responses was calculated to be 8.3%, while Google’s responses showed variability of 25% across different search results, with some queries yielding different answers.

Measurement metrics.

Discussion

Artificial intelligence systems, such as ChatGPT, have inherent limitations related to their training data, as well as drawbacks such as the inaccessibility of certain referenced links.13,14 Conversely, Google offers advantages, including the continuous repetition of links, greater accessibility, and ease of readability; however, the propagation of inaccurate information, which may be mistakenly perceived as valid, can have adverse consequences for patients.13,14 Artificial intelligence methodologies should be employed interactively to compensate for each other’s shortcomings, with specialized system training required for data analysis.

In the study by Dubin et al. on arthroplasty, a comparison of Google and ChatGPT data revealed that the majority of the questions were ‘fact-based,’ with 25% of them being similar. 15 In our research, Google web searches, classified according to the Rotwell taxonomy, included questions in all three categories—‘fact,’ ‘policy,’ and ‘value,’ with 50% of questions categorized as ‘value.’ On the other hand, ChatGPT predominantly contained questions in the ‘fact’ and ‘policy’ categories, with ‘fact’-based questions accounting for over 50%. Additionally, ChatGPT did not include any questions in the ‘value’ category. There were very few overlapping questions between Google and ChatGPT within this category.

Recent literature suggests that ChatGPT’s responses are more comprehensive, with greater reliance on academic references compared to Google.16,17 Eryılmaz et al. demonstrated that ChatGPT provided more objective and comprehensive answers than Google in the context of arthroscopic meniscus surgery. 18 In the present study, we found that ChatGPT’s responses were more aligned with the scientific literature, incorporating medical terminology and evidence-based information, whereas Google’s responses were more influenced by patient experience. Moreover, when analyzing content length, Google responses were more succinct.

Mika et al. suggested that ChatGPT, when addressing frequently asked patient questions regarding total hip arthroplasty, predominantly provided evidence-based responses and could be a valuable tool for patient education. 19 In a separate study, Michael et al. noted that for numerical response queries, both Google and ChatGPT provided highly similar answers. 20

In 2016, Lawson et al. identified that the highest DISCERN score on academic websites was 51, while the general DISCERN average across all websites was 44. 21 Hurley et al. (2023) reported an average DISCERN score of 60 in their study examining AI’s knowledge on shoulder instability. 22 In studies with high-quality medical content, readability and informational quality were often suboptimal, with Lawson et al. reporting an FRES score of 50.17, corresponding to a readability level equivalent to 10.98. 21 Daraz et al., in their 2018 systematic review, found that FKGL values among orthopedic surgeons were slightly above 10. 23 In our study, the DISCERN scores exceeding 60 suggest that the content is generally appropriate for patient education. However, the elevated FKGL and SMOG scores imply that the content may be challenging for the majority of patient populations to comprehend. These findings are further supported by the FRES scores. On the other hand, Google-based responses, while having lower DISCERN scores, were more successful in terms of readability, suggesting that Google’s content is more superficial but easier for patients to understand. However, due to the lack of scientific grounding, it is not advisable for patients to base their treatment decisions solely on such information. Ethical concerns arise when patients rely solely on AI-generated information for medical decision-making. Although ChatGPT can improve access to health information, it is not a substitute for professional medical advice. Ensuring physician oversight and promoting digital health literacy are crucial for safe and effective use. 24

Conclusion

In our study, it was observed that ChatGPT responses were more likely to cite academic articles and scientific sources, whereas Google responses were predominantly focused on practical, daily-life recommendations. Concerning unicondylar knee prosthesis information, ChatGPT offered more detailed medical content, while Google provided more accessible and actionable advice relevant to daily life.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.