Abstract

Objective

Providing feedback to mammography radiologists and facilities may improve interpretive performance. We conducted a web-based survey to investigate how and why such feedback is undertaken and used in mammographic screening programmes.

Methods

The survey was sent to representatives in 30 International Cancer Screening Network member countries where mammographic screening is offered.

Results

Seventeen programmes in 14 countries responded to the survey. Audit feedback was aimed at readers in 14 programmes, and facilities in 12 programmes. Monitoring quality assurance was the most common purpose of audit feedback. Screening volume, recall rate, and rate of screen-detected cancers were typically reported performance measures. Audit reports were commonly provided annually, but more frequently when target guidelines were not reached.

Conclusion

The purpose, target audience, performance measures included, form and frequency of the audit feedback varied amongst mammographic screening programmes. These variations may provide a basis for those developing and improving such programmes.

Introduction

The effectiveness of mammographic screening depends on the performance of equipment and technologists, and the screen reader’s ability to perceive and accurately interpret mammographic abnormalities. 1 Screening programmes show substantial variation in the sensitivity and specificity of reader performance, 2 and in organization and delivery, including single versus double and independent double reading, with and without consensus, computer-aided detection (CAD), and real time versus batch reading. Higher recall rates are more common in programmes using single versus double reading, leading to increased false positive rates and decreased specificity. 3 The critical reading challenge is to obtain an optimal balance between sensitivity and specificity. Continuous education to maintain and improve interpretative skills is required, and in some countries audit feedback systems have been developed to help mammographic screen readers both assess and improve their reading skills.1,4,5

Audit feedback has been shown to improve performance in many areas of medicine, including mammography,1,5–8 but no studies have comprehensively compared audit feedback procedures and practice among mammographic screening programmes. We conducted a web-based survey among member countries of the International Cancer Screening Network (ICSN) 9 that offer mammographic screening, to investigate aspects of the reader and facility audit feedback undertaken, examining the purpose, target audience, performance measures, and form and frequency of the audit feedback, in addition to actions taken if the recommendations or requirements were not met.

Methods

An ICSN working group developed the survey and the web version was created by the United States’ National Cancer Institute. Access details were emailed in 2012 to the ICSN mammographic screening programme contact in 30 countries. Representatives from non-responding countries received a further request to participate at the ICSN Conference in Australia in October 2012, and a reminder was emailed to those still non-responding in March 2013. Representatives could delegate response to the survey. For countries with a nationwide organized screening programme, we considered the response to represent the country. For countries with opportunistic screening (e.g. the United States (US)), or a mix of organized and opportunistic screening (e.g. Switzerland), the response was considered to represent a group of screening sites. We refer to both organized screening programmes and clusters of opportunistic screening sites (e.g. the Breast Cancer Surveillance Consortium (BCSC) in the US) as screening programmes. 10

The four-part web survey consisted of 50 questions, some allowing more than one response. Part A included questions about the characteristics of the programme (e.g. standard number of views used and ratio of analogue/digital mammographic screening examinations), the reading procedure (e.g. single reading; double reading where the second reader knows the first reader’s interpretation; independent double reading where the second reader does not know the first reader’s interpretation; use of CAD), and the number of readers interpreting screening mammograms in 2010, or the most recent year in the programme. Part B sought information about recommendations and requirements to start and continue reading, the content of any such recommendations and requirements, and who had made them. Parts C and D covered audit feedback directed to the individual reader and to the facility, respectively. The same questions about audit feedback were asked for the reader and for the facility, and included general questions as well as a section focusing on which performance measures were included in the audit feedback. General information about the screening programmes (year in which the programme was implemented, age groups covered, etc.) had been collected in a general survey in 2012 developed by another ICSN working group. 9

Responses are reported using descriptive statistics (numbers and percentages), for each question and for combinations of responses. STATA, version 12.1 (StataCorp, College Station, Texas, USA) was used for the descriptive analyses, and Microsoft Excel 10 for the figures. Screening volume was defined as the number of screening examinations interpreted by one reader, recall as the number of call backs for further evaluation after a positive screening exam, and positive predictive value (PPV) as the number of screen-detected breast cancers (ductal carcinoma in situ (DCIS) or invasive cancer) divided by the number of recall examinations due to positive screening mammography. A screen-detected cancer was a breast cancer detected as a result of screening participation, and an interval cancer was a breast cancer diagnosed within a defined time span after a negative screening result. Tumour characteristics included information such as tumour size and lymph node involvement.
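The performance measures defined above can be illustrated with a minimal Python sketch. It is not part of the survey or its analysis: the class name `ReaderAudit`, its field names, and the counts in the usage lines are hypothetical, and expressing recall and screen-detected cancers as rates per screening examination is an assumption, since the definitions above give counts only.

```python
from dataclasses import dataclass


@dataclass
class ReaderAudit:
    """Hypothetical yearly counts for one reader (illustrative, not survey data)."""

    screens: int                  # screening examinations interpreted (screening volume)
    recalls: int                  # call backs for further evaluation after a positive screen
    screen_detected_cancers: int  # DCIS or invasive cancers detected through screening

    def recall_rate(self) -> float:
        """Recalls as a proportion of screening examinations (assumed denominator)."""
        if self.screens <= 0:
            raise ValueError("screens must be positive")
        return self.recalls / self.screens

    def ppv(self) -> float:
        """Screen-detected cancers divided by recall examinations, as defined in the text."""
        if self.recalls <= 0:
            raise ValueError("recalls must be positive")
        return self.screen_detected_cancers / self.recalls

    def detection_rate_per_1000(self) -> float:
        """Screen-detected cancers per 1000 screening examinations (assumed scaling)."""
        if self.screens <= 0:
            raise ValueError("screens must be positive")
        return 1000 * self.screen_detected_cancers / self.screens


# Made-up counts, chosen only to show the arithmetic.
audit = ReaderAudit(screens=4000, recalls=160, screen_detected_cancers=24)
print(f"recall rate: {audit.recall_rate():.1%}")
print(f"PPV: {audit.ppv():.1%}")
print(f"detection rate per 1000 screens: {audit.detection_rate_per_1000():.1f}")
```

The same functions would apply at facility level by summing the counts over all readers at a facility before computing the ratios.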

Results

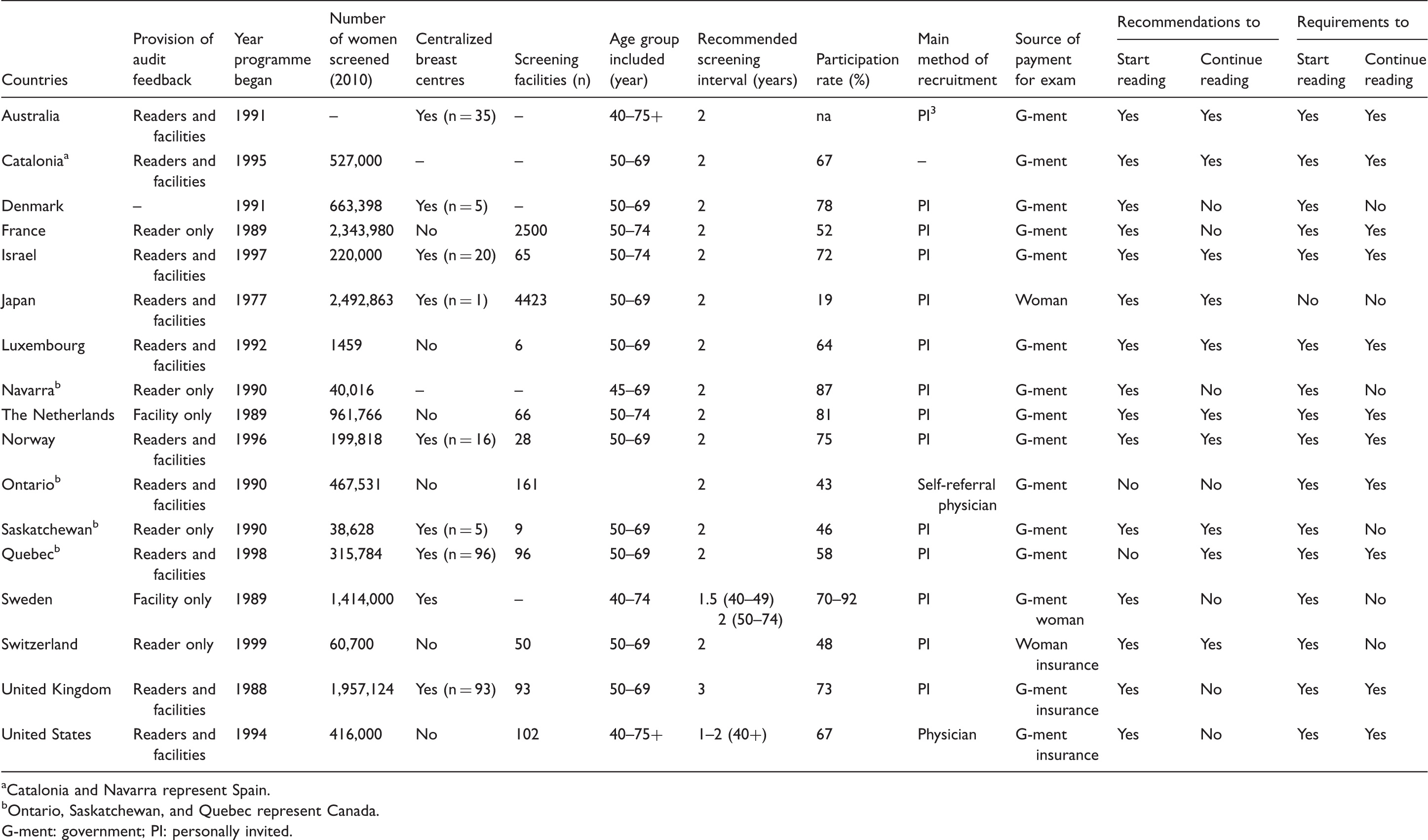

Basic characteristics of the screening programmes.

Catalonia and Navarra represent Spain.

Ontario, Saskatchewan, and Quebec represent Canada.

G-ment: government; PI: personally invited.

The number in the target group of the screening programmes varied from 55,000 (Luxembourg) to more than 38 million women (Japan) (Table 1). Participation rates varied from 19% (Japan) to 81% (the Netherlands). Seven programmes reported independent double reading (Australia, France, Luxembourg, the Netherlands, Norway, Switzerland, and UK) (data not shown), two (Sweden and Japan) reported double reading, one independent double or double (Catalonia), and one independent double reading with or without CAD/other (Denmark). We defined independent double reading in the survey as “two readers, the second reader does not know the interpretation from the first reader”. Two programmes reported single reading only (Navarra and Ontario). The other programmes (Saskatchewan, Quebec, and the US) reported a mix of different reading procedures. Data were not provided about reading procedures in Israel.

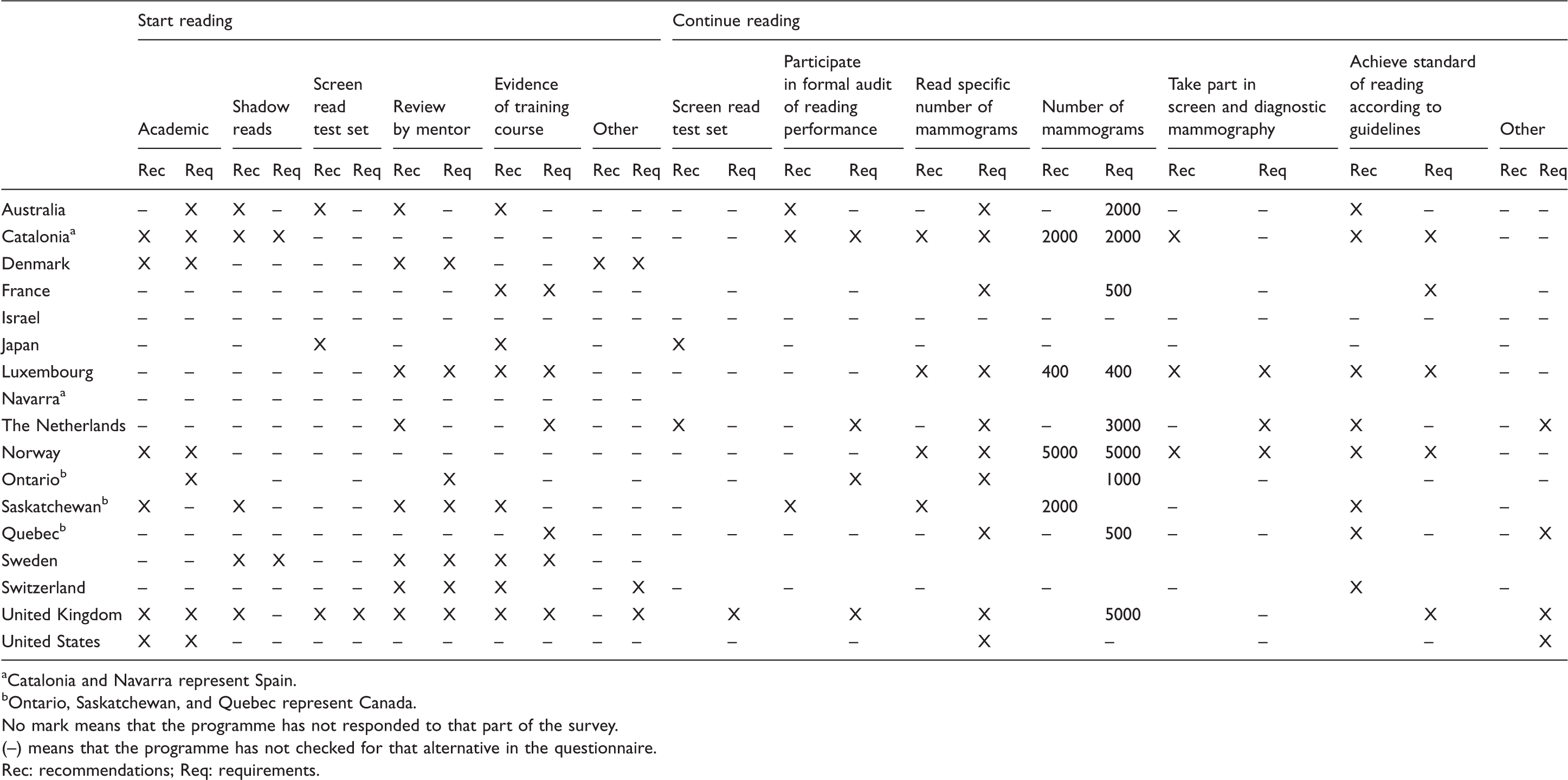

Recommendations and requirements to start reading screening mammograms.

Catalonia and Navarra represent Spain.

Ontario, Saskatchewan, and Quebec represent Canada.

No mark means that the programme has not responded to that part of the survey.

(–) means that the programme has not checked for that alternative in the questionnaire. Rec: recommendations; Req: requirements.

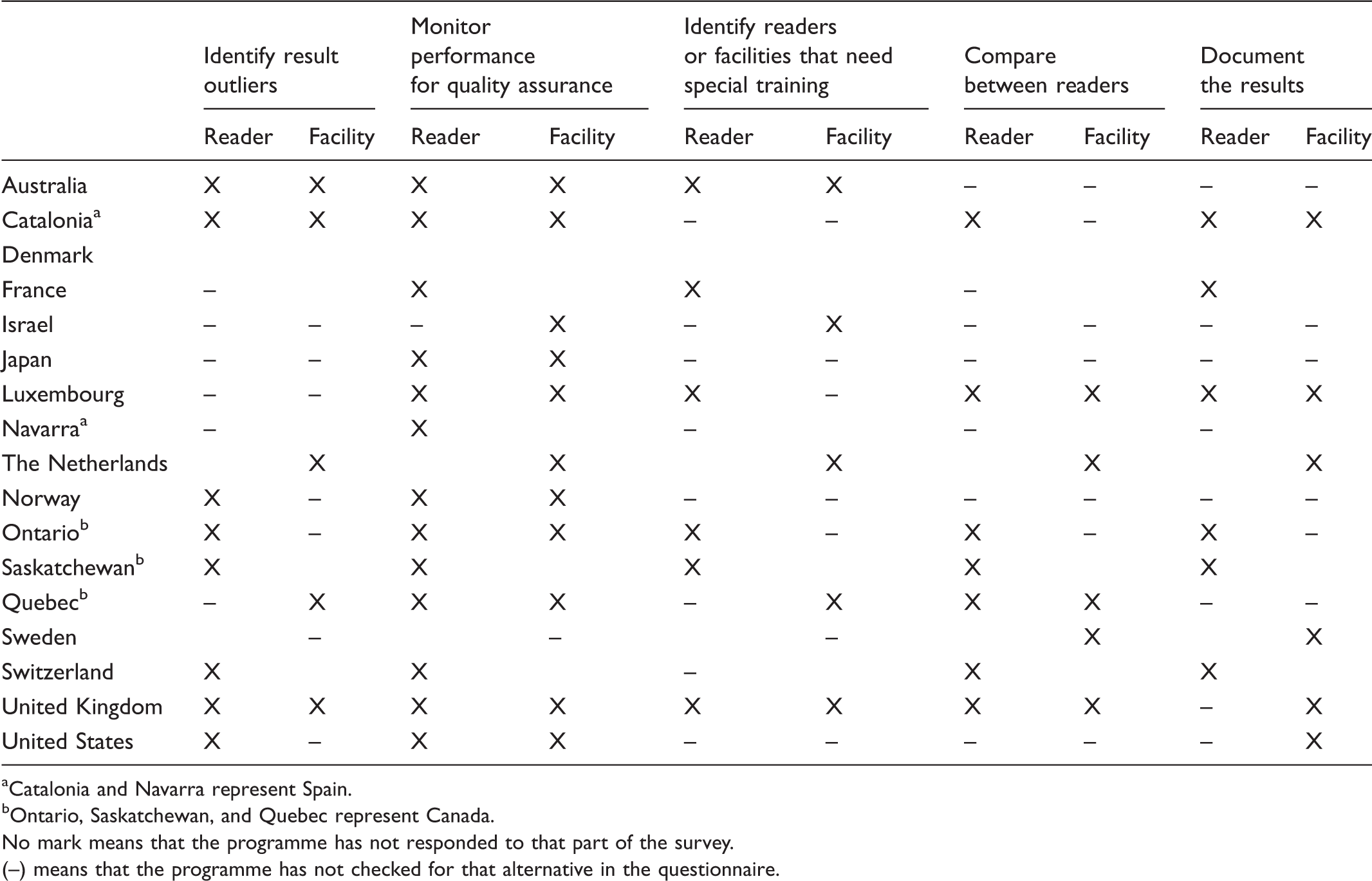

Purpose of the reader and facility audit feedback.

Catalonia and Navarra represent Spain.

Ontario, Saskatchewan, and Quebec represent Canada.

No mark means that the programme has not responded to that part of the survey.

(–) means that the programme has not checked for that alternative in the questionnaire.

The readers were the target audience in 13 of the 14 programmes that responded to part C of the survey (indicating that reader audit feedback is provided) (data not shown). Facilities and health administrators were the target audiences for Australia, Catalonia, France, and Luxembourg, while Norway and Saskatchewan reported the target audience as just the facility. The main target audience for facility level feedback was readers in eight programmes (Israel, Japan, Luxembourg, the Netherlands, Norway, Quebec, Sweden, and UK), facilities in seven programmes (Australia, Luxembourg, the Netherlands, Quebec, Sweden, US, and UK), and health administrators in four programmes (the Netherlands, Quebec, Sweden, and US).

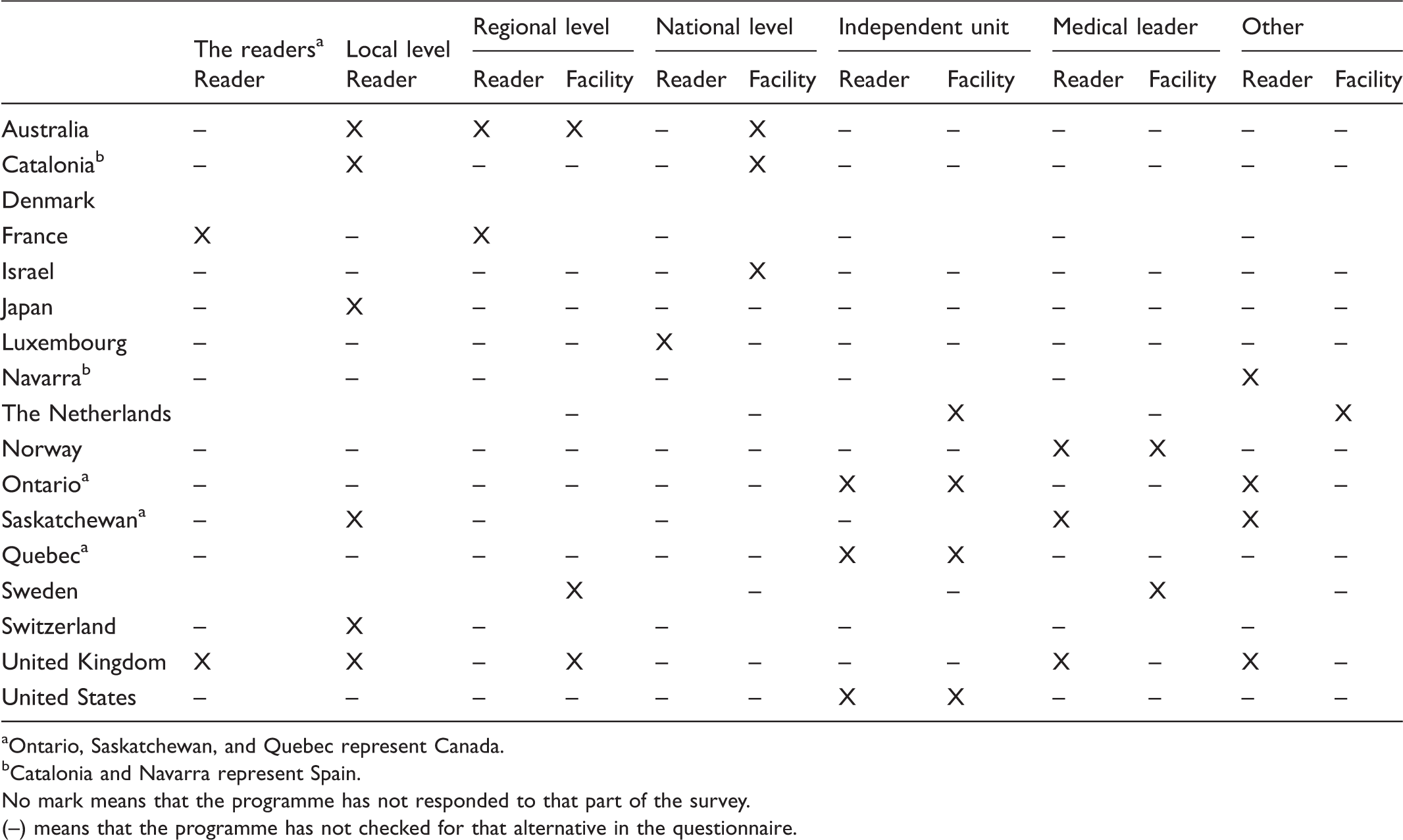

Responsible for running the analysis for reader and facility audit feedback.

Ontario, Saskatchewan, and Quebec represent Canada.

Catalonia and Navarra represent Spain.

No mark means that the programme has not responded to that part of the survey.

(–) means that the programme has not checked for that alternative in the questionnaire.

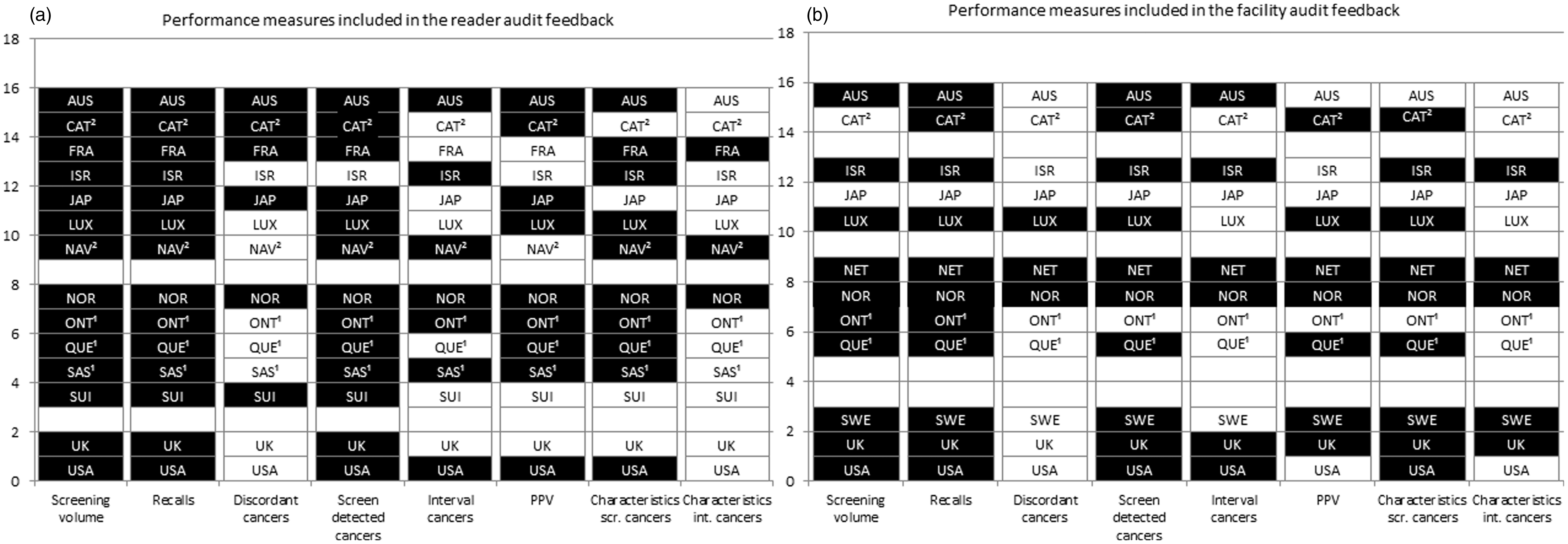

Reader audit feedback reports included various measures (Figure 1). Fourteen programmes provided screening volume and recall rate on a reader level, 13 provided screen-detected cancers (Figure 1(a)), eight included interval cancer rate, and three gave characteristics of the interval tumours. Of the 12 programmes that reported performance measures at the facility level, 11 provided information about recall rate and 10 reported screening volume and rate of screen-detected cancer (Figure 1(b)). Six programmes reported the interval cancer rate. All but Australia, Israel, Japan, Ontario, and the US reported PPV. Histopathologic tumour characteristics were given for screen-detected cancers in nine programmes and for interval cancers in five.

Performance measures included in (a) reader and (b) facility audit feedback. NB: Black box with programme name: the alternative is representative for the programme. White box with programme name: the alternative is not representative for the programme. White box without programme name: No response to that part of the survey. 1: Catalonia (CAT) and Navarra (NAV) represent Spain; 2: Ontario (ONT), Saskatchewan (SAS), and Quebec (QUE) represent Canada.

Individual audit feedback was given annually or more frequently for 10 programmes (Australia, France, Israel, Japan, Luxembourg, Ontario, Quebec, Sweden, US, and UK). Four programmes (Australia, Navarra, Norway, and UK) presented the results ad hoc. In the US, web-based data were accessible all the time. Facility audit feedback was given annually for seven programmes (Australia, Israel, Japan, Quebec, Sweden, US, and UK). Ad hoc presentation was reported by Norway, infrequently by Luxembourg, and “other” interval by the Netherlands.

Only Quebec and the US reported confidence intervals for the performance measures in the reader audit feedback. For facility audit feedback, confidence intervals were included by Australia, Sweden, and Quebec.
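To illustrate why confidence intervals matter for measures based on small counts, the following Python sketch computes a Wilson score interval for a proportion such as a recall rate. The survey did not ask which interval method the programmes use, so the choice of the Wilson method, the function name `wilson_interval`, and the counts below are assumptions made for illustration only.

```python
import math


def wilson_interval(events: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion; z = 1.96 gives roughly 95% coverage."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= events <= trials:
        raise ValueError("events must lie between 0 and trials")
    p = events / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    # Clamp to [0, 1] to guard against floating-point overshoot at the extremes.
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Two hypothetical readers with the same observed recall rate (5%)
# but different screening volumes.
for recalls, screens in [(25, 500), (250, 5000)]:
    low, high = wilson_interval(recalls, screens)
    print(f"{recalls}/{screens}: {low:.3f} to {high:.3f}")
```

With the same observed rate, the lower-volume reader gets a wider interval, which is one reason a point estimate alone can mislead when it is compared with a benchmark.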

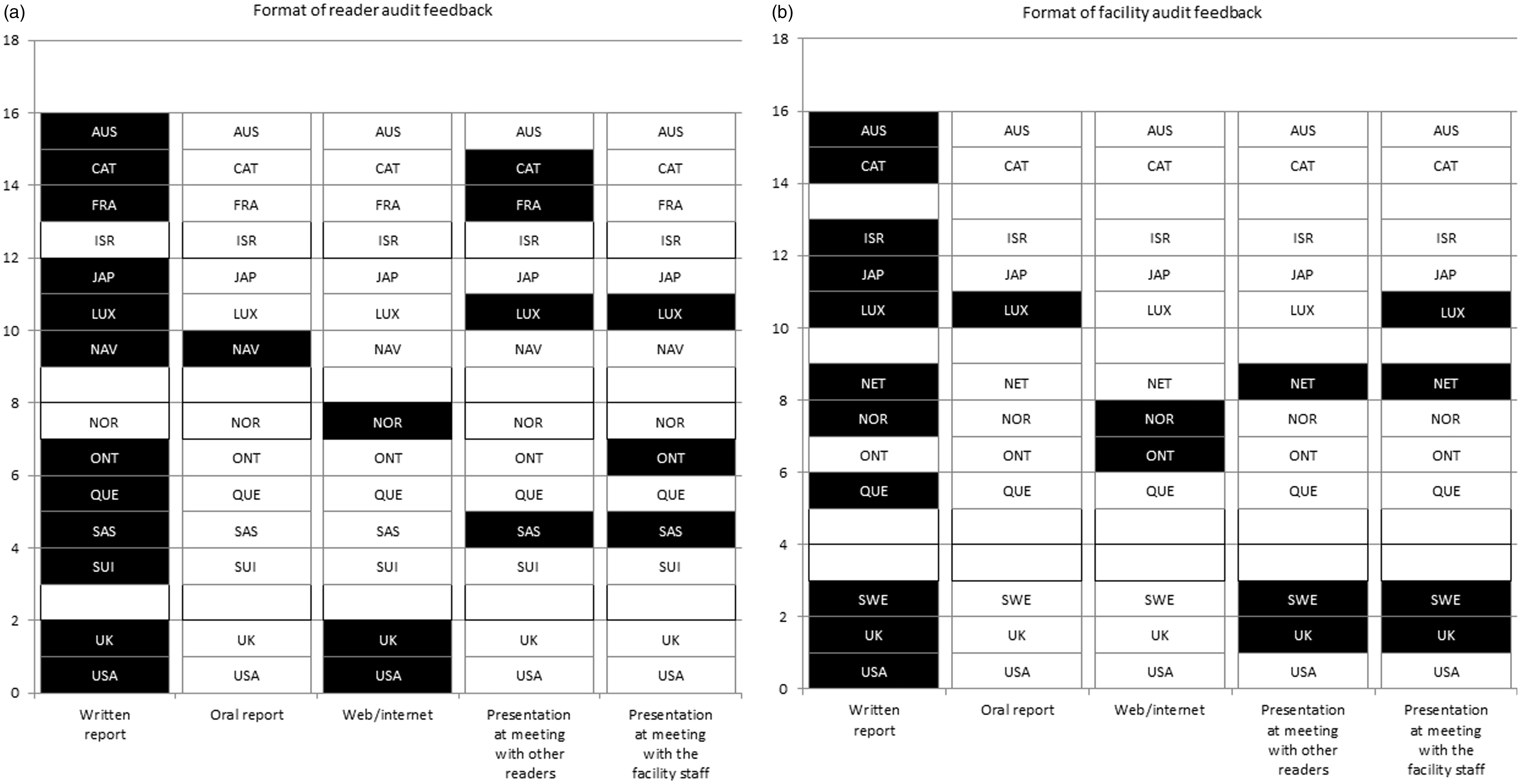

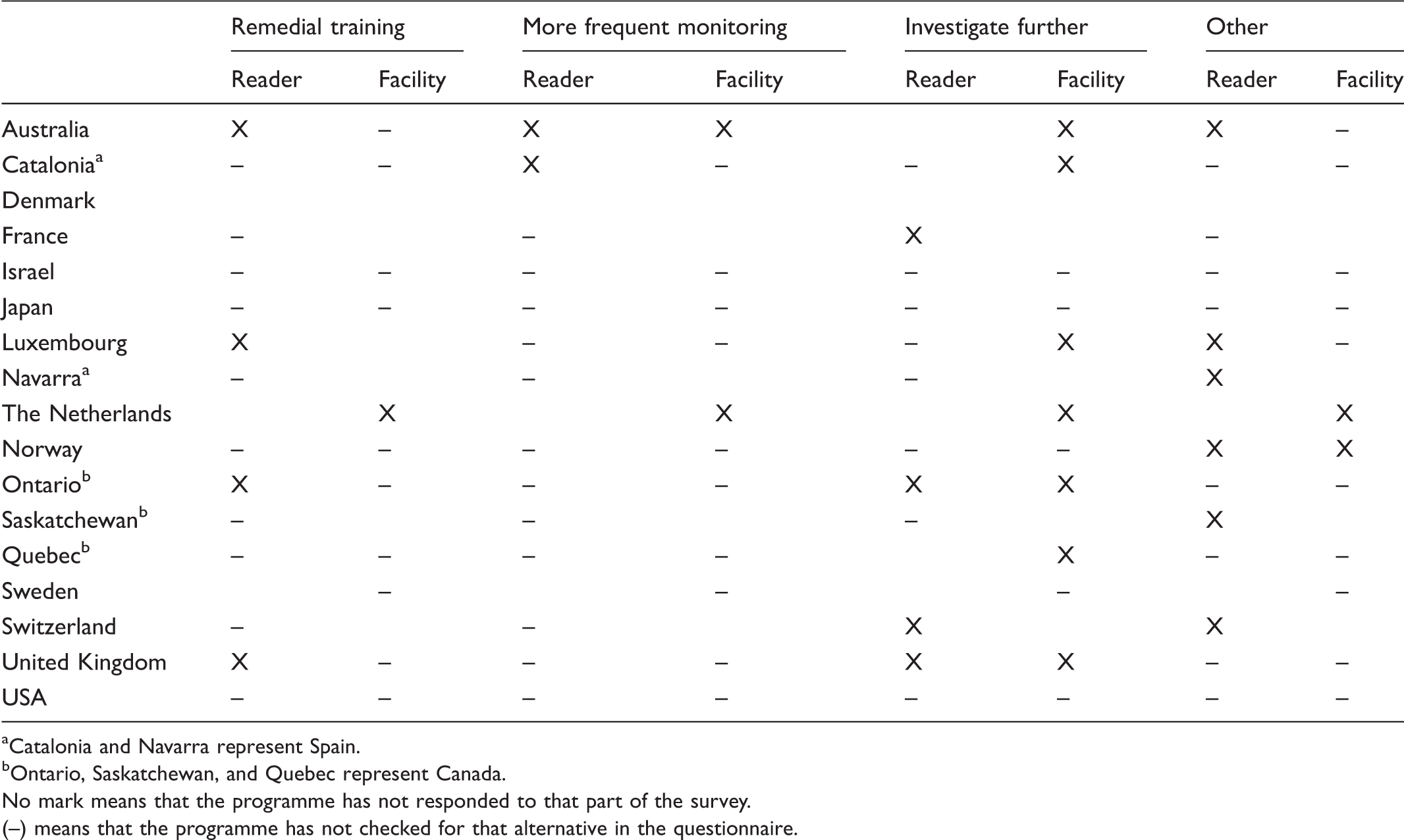

If guidelines/benchmarks were not achieved, four programmes offered remedial support/training for readers; one offered remedial training for the facility (Table 5); and Switzerland, US, and Quebec reported no action for the reader if the targeted guidelines were not met. Australia and Luxembourg removed readers from the programme (data not shown), Norway provided more skills, and Navarra offered meetings to discuss actions. Actions regarding the facility were remedial training (n = 1), more frequent monitoring (n = 2), and further investigation (n = 7). Norway reported providing more skills, and the Netherlands reported identification of facilities that need intervention with a subsequent decision on the action that should be taken by the National Institute for Health and Environment.

A written report was the most common format for reader level feedback (Figure 2). The UK and US offered web-based information in addition to a written report. Norway offered web-based information only. Eleven out of the 12 programmes responding to this question used a written report for facility level feedback.

Format of (a) reader and (b) facility level audit feedback. Black box with programme name: the alternative is representative for the programme. White box with programme name: the alternative is not representative for the programme. White box without programme name: No response to that part of the survey. 1: Catalonia (CAT) and Navarra (NAV) represent Spain; 2: Ontario (ONT), Saskatchewan (SAS), and Quebec (QUE) represent Canada.

Actions if targeted guidelines are not achieved for readers and facilities.

Catalonia and Navarra represent Spain.

Ontario, Saskatchewan, and Quebec represent Canada.

No mark means that the programme has not responded to that part of the survey.

(–) means that the programme has not checked for that alternative in the questionnaire.

Discussion

This is the first international survey to explore the provision of audit feedback to readers and facilities in mammographic screening programmes, with results from 17 programmes in 14 countries across four continents. Programmes varied substantially in organization, size, and participation rate. The most common requirement to start reading screening mammograms was reviewing mammograms with a mentor. The most frequent purpose of reader and facility audit feedback was monitoring performance for continuous quality assurance (QA). The readers were the most common target audience for the audit feedback, but facilities and health administrators were also targeted. The most common performance measures included in reader audit feedback reports were screening volume, recall rates, and rates of screen-detected and interval cancer.

Knowledge about the best ways to provide audit feedback is limited,1,4,5 and studies have shown only modest effects. A meta-analysis of audit feedback studies concluded that feedback was most effective when delivered by a supervisor or respected colleague, presented frequently, and included specific goals and actions. 1 Our survey did not identify who delivered the feedback. We only asked who was responsible for running the analyses, and at three programmes this was the medical leader. Most programmes provided audit feedback annually, but some measures that require cancer outcomes may be delayed by as much as two or more years, so annual feedback would not be timely. In the majority of the programmes, audit results were compared with benchmarks and/or guidelines, which can be considered a goal.

When audit feedback showed deficits in the interpretive scores, the three most common remedial actions for the readers were remedial training (four programmes), more frequent training (two programmes), and further investigation (four programmes). Four programmes reported other actions, including meetings to discuss possible causes, and removing readers. Several countries reported no action if results from subsequent audit feedback showed that targeted guidelines were not met. It would be interesting to understand why these programmes have no official action for underperformers. Our survey did not include questions to determine whether there was a measured effect of using audit feedback in the screening programmes. Other studies that measured the results of audit feedback showed mixed and small effects, 11 but many of them combined audit feedback with other interventions, so it is difficult to determine the extent to which the positive effects were directly related to the audit feedback.

How feedback is delivered may also be important for the learning process. Giving and receiving feedback at an individual level might be more sensitive than at facility level. However, individual feedback enables targeted actions, which in turn affect the facility. Individual feedback might be difficult in some cases due to the small number of cancer cases in a screening setting. Reporting results at both individual and facility level, to allow comparison with colleagues and other screening centres, might therefore be preferable. In a US study, radiologists perceived their performance to be better than it actually was, and at least as good as their peers, and they had particular difficulty estimating their false positive rates and PPV. 12 Radiology is practical work, and learning by mentoring may be the best way to achieve the applied skills needed to fulfil the criteria in the recommendations and requirements. In our survey, all programmes had recommendations or requirements to start screen reading, consisting of a combination of academic and practical training. Fourteen programmes also had recommendations or requirements to continue screen reading, most including reading a minimum number of mammograms.

Clinical audit in mammographic screening suffers from the low prevalence of breast cancer. Readers may see a limited number of cases from which to gain experience, and it may take over two years to obtain sufficient data from clinical audit to identify falling clinical performance. 13 To address this problem, screen reading test sets enriched with cancer cases have been used as training and QA tools. Reasonable levels of agreement between clinical performance and test set performance can be achieved. 14 UK screen readers are required to participate in PERFORMS (Personal Performance in Mammographic Screening), an educational self-assessment and training scheme. 15 In Australia (BreastScreen Australia) and New Zealand (BreastScreen Aotearoa), readers are encouraged to participate in BREAST (BreastScreen Reader Assessment Strategy). 16 Statistically significant moderate positive correlations have been demonstrated between reader performance on BREAST test sets and performance demonstrated by clinical audit. 17 In the US, a digital versatile disc (DVD) intervention with enhanced cancer cases that also provided immediate feedback to the radiologist 18 was found in a randomized controlled trial to significantly improve interpretive performance in a post-test set. The effect was not tested in clinical practice. In a test set study from the Netherlands, 19 the areas under the receiver operating characteristic curve, case sensitivity, and lesion sensitivity were satisfactory, and recall agreement was substantial, although agreement in lesion type and Breast Imaging Reporting and Data System (BI-RADS) category was not satisfactory.

A qualitative study conducted amongst American radiologists, which provided feedback on audit content and presentation, suggested that they liked seeing their audit data compared with both their peers and benchmarks. 11 A web-based audit feedback tool was developed and tested based on this feedback, 20 and radiologists who used this tool found it very useful, although there has been no investigation into whether the tool made a difference in their clinical work.

Mammography screening relies on multiple disciplines, all of which can affect interpretive accuracy, and failure to achieve targeted guidelines may not necessarily reflect screen-reading skills. QA is required for all aspects of the screening process, 21 and schemes exist for other professions involved in mammography screening, 22 as well as for other cancer screening programmes. 18

In this survey, responses were received from fewer than half of ICSN member countries. The ICSN has only one contact in each country, to whom the survey invitation was sent. We do not know whether the low response rate was a result of programmes not having audit feedback or of contacts not being able to identify an appropriate person to complete the survey. Having only one person representing a programme respond to the survey might also bias the results. Direct contact with the person responsible for the audits would have been the best way to obtain the most accurate responses to the survey questions. A check box indicating whether audit feedback is or is not performed would have assured us that the survey was received. In addition, the survey was conducted in English and some countries may not have participated because the designated responder was not fluent in English. Where parts of the survey were not completed, we cannot tell whether the programme does not provide that kind of audit feedback, whether data were not available, or whether that part was simply not filled in.

Our survey did not include questions about reviewing false negative or false positive screening exams. Such reviews are valuable for learning and are recommended in the European guidelines for breast cancer screening and treatment. 21

Most mammographic screening programmes that responded to this survey use audit feedback, which is expected to increase the quality of the performance. This study presents different methods of audit feedback and may assist programmes to develop new and improved systems.

Footnotes

Acknowledgement

Data from the NCI-funded Breast Cancer Surveillance Consortium (BCSC) co-operative agreement [U01CA63740, U01CA86076, U01CA86082, U01CA63736, U01CA70013, U01CA69976, U01CA63731, U01CA70040] was provided by a special competitive supplement to U01CA70013.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.