Abstract

This study investigates case managers’ willingness to entrust counseling tasks to decision-support systems using Artificial Intelligence (AI). We theorize three factors influencing this willingness: the discretionary nature of the task, the salience of client interaction in case managers’ professional identity, and their preconceptions of AI. To test the explanatory power of these factors, we conducted an online survey, including a priming experiment, among 1415 front-line case managers from the German Federal Employment Agency. The empirical results show that (1) task-associated discretionary power decreases case managers’ willingness to entrust counseling tasks to AI, (2) unlike the technology usage prime, the client interaction prime has no effect, and (3) attitudes toward AI have a substantive effect on case managers’ willingness to entrust counseling tasks to AI. These findings have important policy implications for public employment services, as they help prioritize which tasks in job counseling deserve special attention in digitalization strategies.

Introduction

In public employment services (PES), case management is key to jobseekers’ re-employment prospects. It requires case managers to make complex decisions such as profiling, job matching, and the allocation of active labor market policies. Algorithmic decision support (ADS) systems based on data-driven computational models have been proposed to improve the counseling process by assisting case managers in making these decisions. While advocates of ADS argue that, when tasks are clearly defined, AI-based systems can perform with greater accuracy and at lower cost than human decision-makers, the use of ADS in case management remains contested for both conceptual and empirical reasons (e.g., Ball et al., 2023; Desiere and Struyven, 2021; Sztandar-Sztanderska, 2025). Fiscal constraints, shortages of qualified case managers, and demographic shifts in the workforce place PES under growing pressure to optimize their counseling processes. Against this background, there is a growing risk that ADS will be imposed top-down, disregarding the perspectives of the case managers whose work these systems claim to enhance. This study therefore places case managers’ perspectives on ADS at its center. Specifically, it seeks to understand for which tasks case managers perceive ADS as valuable support that they would integrate into their counseling processes, and for which tasks they reject it.

Based on the user-centered design framework in e-government (e.g., Scupola and Mergel, 2022), the goal is to develop ADS in job counseling that aligns with case managers’ normative expectations and professional judgments. This requires a systematic understanding of which tasks case managers are more or less willing to entrust to AI-based systems. The term “entrust” emphasizes the dyadic relationship between case managers and clients, in which both co-produce labor market outcomes (Gottwald and Sowa, 2019). If case managers do not see the technology as trustworthy for clients, they may refuse to integrate it into service routines or work against it (Marienfeldt, 2024). Understanding for which tasks case managers consider ADS beneficial (Körtner and Bonoli, 2023) is also key for PES to prioritize which tasks in counseling should and should not be supported by AI-based systems.

While research on citizens’ preferences for AI-based systems in public administration is growing, studies of case managers’ attitudes toward ADS in PES remain scarce (e.g., Ball et al., 2023; Desiere and Struyven, 2021). Case managers exemplify Lipsky’s (2010) street-level bureaucrats (SLBs). First, their interactions with jobseekers are hierarchical and grounded in legal frameworks designed to uphold principles such as impartiality, fairness, and transparency (Maynard-Moody and Musheno, 2012). Second, because counseling is often too complex for full legal formalization (Petersen et al., 2021), case managers exercise discretion to adapt decisions to clients’ needs (Senghaas et al., 2019; Van Berkel, 2017). Third, they face significant challenges such as high caseloads, shifting agency goals driven by social policy changes, and limited resources (Gilson, 2015: 384). Digitalization can affect all three elements by delegating tasks, including discretionary decisions, to AI-powered systems. Busch and Henriksen (2018: 4) describe this as digital discretion, in which computerized routines influence or replace human judgment, shifting discretion from SLBs to algorithms.

We argue that when examining which counseling tasks case managers in PES are more or less likely to entrust to AI-based systems, it is crucial to consider whether and to what extent the task involves administrative discretion. Private sector research shows that task characteristics matter for citizens’ acceptance of AI-based advice (e.g., Castelo et al., 2019; Logg et al., 2019). Scholars in public administration likewise emphasize that task characteristics (e.g., Wenzelburger et al., 2024) and discretion (e.g., Bullock, 2019; Busch, 2023) shape acceptance of ADS in the public sector. Empirical research on how task characteristics influence case managers’ acceptance of AI is limited (e.g., Ball et al., 2023; Wang et al., 2024), though theoretically, bureaucrats are expected to be more receptive to AI-based decisions in low-complexity, low-discretion cases (Bullock, 2019: 757). Although discretion is fundamental to case managers’ work and essential to client acceptance, the relationship between digital discretion, case managers’ role perceptions, and entrusting tasks to AI-based systems in job counseling remains largely unexplored.

Extending research on digital discretion (e.g., Bullock, 2019; Busch, 2023) and AI in social welfare administration (e.g., Ball et al., 2023; Desiere and Struyven, 2021; Gräfe et al., 2024; Marienfeldt, 2024), this study tests three arguments. First, case managers’ willingness to entrust tasks to AI is task-dependent: tasks associated with higher discretion are less likely to be entrusted. Second, case managers’ professional identity is expected to shape their willingness to delegate administrative tasks. Applying the concept of professional identity (e.g., Cohn and Maréchal, 2016; Osiander and Steinke, 2016), we expect that making client interaction more salient in professional identity reduces willingness to entrust tasks to AI. Third, research on citizens’ acceptance of AI in public services suggests that prior attitudes, such as how people generally feel about AI, strongly predict acceptance (e.g., Busch, 2023; Kleizen et al., 2023). Similarly, case managers’ initial perceptions of AI are expected to matter: those reporting pessimistic or negative attitudes toward AI should be less likely to entrust tasks to ADS.

To test these arguments, we conducted an online survey with a random sample of 1415 front-line case managers from the German Federal Employment Agency (FEA). Respondents evaluated 12 common tasks in case managers’ work, including profiling jobseekers’ skills, matching them to vacancies, and allocating active labor market policies. For each task, the 90-percent sample rated on a slider scale (0–100) how much they trusted an AI-based system versus a human case manager. A smaller 10-percent sample assessed the degree of administrative discretion in these tasks. The slider task in the 90-percent sample was embedded in a priming experiment in which one closed and two open-response questions highlighted either client interaction or the use of digital technology in professional identity. A control group received the same slider scale without priming. In the post-experimental survey, we measured respondents’ General Attitudes toward AI (GAAI) using the psychometric scale developed by Schepman and Rodway (2023).

This study contributes to the literature on digital discretion (e.g., Bullock, 2019; Busch, 2023) and AI in social welfare administration (e.g., Marienfeldt, 2024) in several ways. First, it presents perceived discretion as a critical task characteristic shaping case managers’ willingness to entrust tasks to AI. Second, it examines a representative sample of case managers in PES rather than surveying citizens’ preferences for AI in administrative discretion (e.g., Busch, 2023). Third, unlike studies that exogenously induce task characteristics, this study uses a subsample to measure discretion as a perceived task characteristic. Fourth, alongside testing the effect of case managers’ subjective feelings toward AI, the priming experiment identifies the causal impact of making client interaction salient in their professional identity on their willingness to entrust tasks to AI-based systems. Finally, the empirical results help prioritize which counseling tasks deserve particular attention in PES digitalization strategies.

Theoretical framework

Advocates of AI in public administration often claim that AI-based tools enhance decision-making by making it faster, more efficient, and less prone to error (Jørgensen, 2023; Rinta-Kahila et al., 2022). However, several high-profile cases in public administration show that these expectations are not always fulfilled (see Szafran and Bach, 2024, for a review). For example, in the Netherlands, a government algorithm falsely accused citizens of welfare fraud (Peeters and Widlak, 2023); Australia’s debt algorithm significantly overestimated debts, causing severe hardship (Rinta-Kahila et al., 2022); and the COMPAS algorithm in the United States, used to predict defendants’ likelihood of re-arrest, discriminated against Black defendants (Angwin et al., 2016). These cases raise concerns about the trustworthiness of ADS in public administration (De Boer and Raaphorst, 2023; Starke et al., 2022). A rapidly growing body of literature examines ADS acceptance in welfare-to-work and employment services from the perspective of SLBs (Ball et al., 2023; Considine et al., 2022). In particular, research on SLB oversight of automated systems in welfare administration (Sztandar-Sztanderska, 2025) underscores SLBs’ role in shaping how AI is monitored and applied. Building on this literature, we theorize three factors that are likely to shape SLBs’ willingness to entrust counseling tasks to AI-based decision-support systems: administrative discretion, client interaction, and general attitudes toward AI.

Task characteristics and digital discretion

The perceived characteristics of a task an algorithm is designed to perform significantly influence its acceptance, independent of actual performance (Castelo et al., 2019). In a survey experiment, Lee (2018) manipulated decision-maker type (algorithmic or human) across four private-sector managerial decisions requiring either “mechanical” or “human” skills. Lee (2018) argues that people distinguish between tasks requiring subjective judgment and emotional capability (human skills) and those involving quantitative data processing for objective assessments (mechanical skills). She found that, for tasks requiring human skills, algorithmic decisions were perceived as less fair and trustworthy than human decisions and elicited more negative emotional responses, owing to algorithms’ perceived lack of intuition and judgment. Likewise, Castelo et al. (2019) find citizens reluctant to entrust AI systems with “subjective” tasks, such as choosing clothes or writing a poem, while supposedly “objective” tasks are more readily assigned to AI systems (also see Mahmud et al., 2022, for a review).

The impact of task characteristics on SLBs’ willingness to delegate tasks to AI-based systems has received limited attention. Drawing on Simon’s (1977) distinction between programmed and non-programmed decisions, Wang et al. (2024) examine how task characteristics affect acceptance of algorithmic decision-making in three public-sector scenarios. In a survey experiment with Chinese community staff, they find acceptance of AI-based decisions significantly higher in low-complexity cases. Similarly, survey experiments with Dutch and UK public employees show algorithmic decision-making is valued for low-complexity tasks but faces resistance when algorithms replace managers’ decisions in high-complexity contexts (Nagtegaal, 2021).

The exercise of administrative discretion represents a high-complexity task central to job counseling and a defining feature of SLBs’ daily work (Lipsky, 2010; Van Berkel, 2017). Administrative discretion can be understood in two ways: a top-down perspective, which sees it as authority delegated by rule-makers and requiring oversight to prevent misuse, and a bottom-up perspective that regards discretion as an essential tool for effective policy implementation (Maynard-Moody and Musheno, 2012). This study focuses on the latter and argues that case managers’ perceptions of discretion in a given counseling task critically shape their willingness to entrust it to an ADS.

AI may fundamentally alter discretion by shifting judgment from SLBs to algorithm designers, potentially automating decision-making (Busch, 2023). Automating discretion is both conceptually and normatively contested. As Lipsky (2010) emphasizes, discretion is not a byproduct of bureaucratic work but a constitutive element of SLB practice. Through discretion, policies are tailored to individual needs; through responsiveness, outcomes gain legitimacy; and through accountability, bureaucrats are held responsible for decisions. Automating these judgments risks reducing public administration to a depersonalized process, undermining SLBs’ competencies and legitimacy (Ball et al., 2023). Marienfeldt’s (2024) literature review shows that reducing SLBs’ discretion through algorithmic systems diminishes autonomy and often prompts workarounds, such as manual interventions, to preserve discretionary space. Similarly, Busch (2023) suggests that the perceived importance of discretion influences how SLBs assess the appropriateness of AI-based technologies.

The expectation that higher levels of perceived administrative discretion are associated with lower willingness to entrust tasks to AI-based systems may be grounded in different motives (Sztandar-Sztanderska, 2025). First, algorithmic discretion may be rejected because it removes an integral part of SLBs’ decision-making autonomy. In this view, skepticism toward AI-based decision-making in PES stems from professional identity. Second, case managers might hesitate to delegate discretionary tasks to AI due to doubts about the technology’s ability to manage complex decisions effectively. Here, reluctance is driven by concerns about performance and reliability. A third motive involves ethical considerations, particularly regarding tasks with significant consequences for beneficiaries’ lives (e.g., suspension periods). In this case, the concern is less about whether AI can perform a task than about whether it should. Analytically disentangling these motives remains a task for future research. Situated within the emerging literature on AI in welfare administration, we expect that higher perceived discretion is associated with stronger rejection of delegating tasks to AI. Specifically, we hypothesize:

H1: The more administrative discretion case managers associate with a counseling task, the less willing they are to entrust that task to an AI-based system.

Role of client interaction in professional identity

As perceptions of administrative discretion in client interactions may vary with professional identity, we examine this role more closely. Professional identities shape how SLBs perceive and use discretionary power (Lipsky, 2010). Maynard-Moody and Musheno (2000) conceptualize SLBs’ professional identity through the narratives of state agent, citizen agent, or professional agent. State agents are policy-oriented, citizen agents focus on individual circumstances, and professional agents emphasize expertise in exercising discretion. Other conceptualizations address PES case managers’ identities, focusing on how they define their role and client relationships (Senghaas et al., 2019). Early qualitative work by Eberwein and Tholen (1987) proposed four ideal types of case managers: brokers (matching jobseekers to vacancies), social workers (individual support, citizen agent narrative), social law clerks (rule-bound, state agent narrative), and service providers (flexible, citizen-oriented). Quantitative studies tested these types’ validity and stability in case managers’ self-perception (Osiander and Steinke, 2016; Sell, 1999).

Although professional identity is expected to shape how case managers exercise discretion, little is known about its causal relationship with AI acceptance. Observational comparisons of public employees’ professional identity and their willingness to entrust tasks to AI may suffer from self-selection and omitted variable bias. To address this, we use a priming experiment to selectively activate professional identities (Cohn and Maréchal, 2016), thereby identifying their causal effect (also see Fernández et al., 2023). Causality is conceptualized via the potential outcomes model (Cunningham, 2021: 9), which defines a causal effect as the difference between a unit’s potential outcomes under different conditions. Our experiment thus isolates the effect of making aspects of professional identity salient on case managers’ willingness to entrust tasks to AI.
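For readers less familiar with the potential outcomes framework, the identification logic can be sketched formally. The symbols are introduced here for illustration only and are not taken from the study: $D_i = 1$ if case manager $i$ receives a given prime and $D_i = 0$ if assigned to the control group, and $Y_i(1)$, $Y_i(0)$ denote that person's trust-in-AI score with and without the prime. Because only one of the two outcomes is observed for each respondent, individual effects are not identified, but under random assignment the difference in group means recovers the average effect. With two primes, the same comparison would be applied to each prime against the control group.

```latex
\begin{aligned}
\tau_i &= Y_i(1) - Y_i(0), \qquad
\tau_{\mathrm{ATE}} = \mathbb{E}\left[Y_i(1) - Y_i(0)\right],\\
\mathbb{E}[Y_i \mid D_i = 1] - \mathbb{E}[Y_i \mid D_i = 0]
  &= \mathbb{E}[Y_i(1)] - \mathbb{E}[Y_i(0)] = \tau_{\mathrm{ATE}}
  \quad \text{if } D_i \perp \big(Y_i(0), Y_i(1)\big).
\end{aligned}
```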

Drawing on social identity theory (Akerlof and Kranton, 2010; Tajfel, 1970), we assume case managers hold multiple identities based on socially significant categories such as gender, religiosity, ethnicity, or profession. Each identity is linked to social norms prescribing permissible behavior, and the weight individuals attach to an identity determines which norms become relevant (LeBoeuf et al., 2010). Case managers’ professional identities may emphasize client interaction (e.g., Osiander and Steinke, 2016; Sell, 1999) or technical, administrative implementation (Maynard-Moody and Musheno, 2000). We expect the relative salience of these different aspects of case managers’ professional identity to shape their willingness to entrust tasks to AI. Specifically, it should matter whether case managers evaluate AI use while focusing on client interaction or the technical aspects of counseling decisions. Thus, we hypothesize the following about priming professional identity:

Technology attitudes and algorithm appreciation

In addition to discretion as a task-specific feature and the salience of client interaction in professional identity, personal views of AI may also affect case managers’ willingness to entrust tasks to an ADS system. In a survey experiment with Belgian citizens, Kleizen et al. (2023), for example, found that information on ethical AI had no significant effect on trust or perceived trustworthiness of AI in public services. Instead, attitudes such as privacy concerns, trust in government, and trust in AI were strong predictors of trust in ADS. Wenzelburger et al. (2024) likewise find that citizens’ technology attitudes shape evaluations of AI across policy domains. Yet, little evidence exists on case managers’ AI attitudes and how these shape their willingness to entrust counseling tasks to ADS.

Individuals’ general attitudes and subjective feelings toward AI may be particularly relevant in work contexts where, unlike in private use, adoption decisions are typically made by senior management. Because this shift in control limits individual choice, Schepman and Rodway (2023: 2724) argue that how users feel about AI in general is crucial for understanding how they respond to AI implementation in their work. Following Davis’ (1989) technology acceptance model, much research focuses on consumers’ willingness to adopt technology. However, because case managers typically do not decide themselves whether AI is adopted in their work, this framework applies less well to them. We therefore focus on their emotional responses to AI, drawing on Schepman and Rodway’s (2023) conceptualization: positive attitudes include perceptions of utility (e.g., improved performance), desired use (e.g., at work), and positive emotions (e.g., excitement, being impressed); negative attitudes feature concerns about AI (e.g., unethical use, errors) and negative emotions (e.g., discomfort, finding AI sinister). This framework captures general attitudinal orientations toward AI-based technologies rather than domain-specific views on AI in social administration.

These prior perceptions of AI are expected to influence case managers’ willingness to entrust tasks to AI-based systems. If case managers have positive emotions toward AI, they may be more willing to accept algorithmic advice and incorporate it into decision-making. Positive AI attitudes could make case managers see AI-based systems as valuable tools for improving decisions, increasing their trust in algorithmic advice. Conversely, negative attitudes toward AI may reduce willingness to accept such advice, leading to resistance and reluctance to adopt AI-based tools and integrate them into daily work routines. On these grounds, we expect the following:

Methods and data

Dependent variable: Trusting human versus machine

Case managers from the German FEA were presented with sliding bar questions covering 12 common case management tasks. These tasks span a broad range of administrative and counseling activities and vary in their degree of administrative discretion. We took care to present tasks that, though hypothetical, could realistically be supported or taken over by AI-based systems (mundane realism). To ensure this, we cooperated with former case managers in selecting and wording the tasks. Respondents used a slider to indicate whether they would rather entrust each task to a case manager or to an AI system. The slider allowed 50 positions to the left (trust in case manager) and 50 positions to the right (trust in AI). The further the slider was pulled to one side, the greater the respondent’s confidence that a case manager or an AI program, respectively, should take over the task. 1 The 12 tasks were presented in two sets of six tasks (Appendix B1). The order of the two sets, and of the tasks within each set, was randomized for each respondent. The dependent variable measures case managers’ task-specific trust in the AI-based system on a scale from 0 to 100, where “0” represents high task-specific trust in case managers and “100” represents high task-specific trust in AI.
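The presentation logic and scoring described above can be illustrated with a short sketch. It is not the survey software actually used; the function names, the placeholder task labels, and the assumption that the raw slider position runs from −50 (case manager end) to +50 (AI end) are all hypothetical. The sketch randomizes the order of the two sets and the order of tasks within each set, and maps a slider position onto the 0–100 dependent variable.

```python
import random


def slider_to_score(position):
    """Map a raw slider position to the 0-100 trust score.

    Hypothetical assumption: the raw position runs from -50 (case manager
    end) through 0 (centre) to +50 (AI end).
    """
    if not -50 <= position <= 50:
        raise ValueError("slider position must lie between -50 and 50")
    return position + 50  # 0 = trust in case manager, 100 = trust in AI


def presentation_order(set_a, set_b, rng):
    """Return one respondent's task order.

    The order of the two sets is randomized, and tasks are shuffled
    within each set, so each set stays a contiguous block of six.
    """
    blocks = [list(set_a), list(set_b)]  # copy so the inputs stay unchanged
    rng.shuffle(blocks)                  # which set is shown first
    order = []
    for block in blocks:
        rng.shuffle(block)               # task order within the set
        order.extend(block)
    return order


# Usage with hypothetical placeholder task labels.
rng = random.Random(2024)
set_1 = [f"task_{i}" for i in range(1, 7)]
set_2 = [f"task_{i}" for i in range(7, 13)]
print(presentation_order(set_1, set_2, rng))
print(slider_to_score(-20))
```

Passing a seeded `random.Random` instance keeps each respondent's order reproducible while remaining independent across respondents.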

Ninety percent of respondents were presented with sliding bar questions measuring trust in case managers versus AI for specific tasks. The remaining 10% received a modified version, hereafter referred to as the calibration sliding bar questions (Appendix B2). How case managers, drawing on their professional experience, evaluate the scope of administrative discretion in these tasks is an empirical question. To measure the level of discretion case managers ascribe to the 12 tasks, respondents in the 10-percent sample rated the administrative discretion of each task on a 0–100 scale, where “0” means very little and “100” means a great deal of discretion. All other aspects of the slider task remained unchanged. Using these responses, we assign a value of task-associated discretionary power to each of the 12 tasks (Appendix Figure A4). 2
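Assigning a discretion value to each task amounts to averaging the calibration sample's ratings task by task. The following is a minimal sketch under an assumed data layout (one dictionary per calibration respondent, mapping a hypothetical task label to that respondent's 0–100 rating); the example ratings are invented and are not the study's data.

```python
from statistics import mean


def task_discretion(calibration_ratings):
    """Average the 0-100 discretion ratings of each task.

    calibration_ratings: one dict per calibration respondent, mapping a
    task label to that respondent's rating (hypothetical data layout).
    Tasks a respondent skipped are simply absent from their dict.
    """
    ratings_by_task = {}
    for respondent in calibration_ratings:
        for task, rating in respondent.items():
            if not 0 <= rating <= 100:
                raise ValueError(f"rating {rating} for {task} is outside 0-100")
            ratings_by_task.setdefault(task, []).append(rating)
    return {task: mean(values) for task, values in ratings_by_task.items()}


# Usage with invented ratings from two calibration respondents.
example = [
    {"counseling_interview": 70, "labor_market_forecast": 40},
    {"counseling_interview": 80, "labor_market_forecast": 50},
]
print(task_discretion(example))
```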

Research design: Priming experiment

To prime case managers’ professional identity, they were randomly assigned to treatment or control conditions and presented with questions either related (treatment) or unrelated (control) to aspects of their professional identity. Such identity priming designs are common in survey experiments (Sniderman, 2018) as a technique for increasing the salience of a specific identity. They have been successfully used to trigger national, ethnic, religious, and partisan identities (e.g., Levendusky, 2018), as well as the professional identity of criminals and bankers (Cohn and Maréchal, 2016).

The priming experiment comprised three conditions. Each respondent was assigned with a probability of 30% to (1) the client interaction prime, (2) the technology user prime, or (3) the control group (no prime); together, these three conditions make up the 90-percent sample. In line with previous research priming professional identity (e.g., Cohn and Maréchal, 2016), each prime consisted of three items: one closed single-choice question and two open-response questions. Respondents in the client interaction prime were asked how they perceive their role as case managers and to select one of five options: labor market broker, social worker, social law clerk, service provider, or a residual category (“other role”). The roles represent a slight modification of the typology proposed by Sell (1999). This was followed by two open-response questions asking respondents (1) to briefly describe their rationale for selecting a role and (2) what they perceive to be the major challenges in fulfilling that role. The minimum length of each response was set to 10 letters.
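The allocation to the three priming conditions and the calibration sample can be sketched as independent weighted draws. This is an illustrative assumption: the text does not state whether the survey drew each respondent independently (as below, so realized shares fluctuate around 30/30/30/10) or allocated fixed proportions, and the arm labels are hypothetical.

```python
import random
from collections import Counter

# Hypothetical arm labels; the weights follow the 30/30/30/10 split.
ARMS = ("client_interaction_prime", "technology_user_prime", "control", "calibration")
WEIGHTS = (0.3, 0.3, 0.3, 0.1)


def assign_arms(n_respondents, seed=None):
    """Draw one condition per respondent independently with the given weights."""
    rng = random.Random(seed)
    return rng.choices(ARMS, weights=WEIGHTS, k=n_respondents)


# Usage with an illustrative number of respondents: realized shares vary
# around the target probabilities rather than matching them exactly.
assignment = assign_arms(1000, seed=7)
print(Counter(assignment))
```

Independent draws keep the assignment simple and unpredictable; a design that needs exact group sizes would instead shuffle a list containing the desired number of labels per arm.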

Respondents in the technology user prime were asked what type of user they are with regard to their private use of computer technology. Possible answers were the innovative type (“first mover”), the open-minded type, the to-be-convinced type (“need to be convinced of the benefits of new tools”), the cautious type, and a residual category (“other type”). After that, respondents answered two open-response questions with a minimum length of 10 letters. They were asked to describe (1) why they assigned themselves to this user type and (2) what typical problems they experience when using newly introduced digital technologies. The third group (control) received no priming treatment. Respondents completed the slider task after receiving one of the two primes or being assigned to the control group (Appendix B3 and B4).

Attitudinal measures

Respondents’ attitudes toward AI are measured by a reduced version of the GAAI scale developed and validated by Schepman and Rodway (2023). In contrast to policy attitudes toward AI, GAAI captures psychological correlates relevant to AI acceptance; Schepman and Rodway show that GAAI scores correlate with personality traits. Its original version comprises 16 positive and 16 negative items. Owing to limited questionnaire space, we selected the three items with the highest factor loadings from each subscale. Since the GAAI items include strongly negative images of AI that could prime case managers’ perceptions, they were measured post-experimentally, after the slider task. The six GAAI items have a Cronbach’s alpha of 0.84. A principal factor analysis of the six items yields two factors, one capturing positive and one capturing negative attitudes toward AI, which we use as measures of respondents’ AI attitudes.
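Cronbach’s alpha for $k$ items is $\alpha = \frac{k}{k-1}\left(1 - \frac{\sum_i \sigma^2_i}{\sigma^2_X}\right)$, where $\sigma^2_i$ is the variance of item $i$ and $\sigma^2_X$ the variance of the summed score. The sketch below computes it from item-level responses; it assumes complete cases and items coded in the same direction (e.g., negative GAAI items reverse-coded), which the text does not specify. The ratings are invented, so the sketch cannot reproduce the reported value of 0.84.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for k items.

    items: list of k lists, each holding one item's scores for the same
    respondents in the same order (complete cases only).
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    n = len(items[0])
    if n < 2 or any(len(col) != n for col in items):
        raise ValueError("items must have equal length of at least 2")
    totals = [sum(scores) for scores in zip(*items)]  # summed score per respondent
    total_var = variance(totals)
    if total_var == 0:
        raise ValueError("summed score has zero variance")
    item_var = sum(variance(col) for col in items)
    return k / (k - 1) * (1 - item_var / total_var)


# Usage with invented 1-5 ratings on three items from five respondents.
ratings = [
    [4, 5, 3, 2, 4],
    [4, 4, 3, 1, 5],
    [5, 5, 2, 2, 4],
]
print(round(cronbach_alpha(ratings), 2))
```

Sample variances are used throughout; because the same denominator appears in every variance, using population variances instead would give the same alpha.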

In addition, we asked respondents for socioeconomic characteristics such as age, gender, and job tenure. Age and gender may also influence willingness to delegate counseling tasks to AI. These factors are regularly considered in studies examining acceptance of AI-based technologies in the public sector (Gesk and Leyer, 2022; Horvath et al., 2023), though findings remain mixed. We therefore included age and gender as controls in the regression model. We also controlled for tenure, whose association with willingness to delegate is theoretically ambiguous. On the one hand, longer tenure could be associated with lower willingness to delegate if more experienced case managers feel more confident in their professional autonomy than less-tenured colleagues, who have less ingrained routines and may therefore be more open to innovation. On the other hand, the association could be reversed: longer tenure might be linked to greater openness to delegating tasks to AI if experienced case managers face work overload and see AI as a potential source of relief. In addition, less-tenured case managers may be more likely to perceive AI as a threat to their job security or promotion prospects. Although we cannot disentangle each of these potential associations in detail, we conducted an additional analysis to further assess their plausibility. The results are presented in Appendix Table A7 and Appendix Figure A10. 3

Sample and data analysis

Case managers in the German FEA operate under Social Code Books II and III (SGB II and SGB III), which regulate unemployment benefits (e.g., ALG and ALG II at the time of the survey) and active labor market policies. These laws prescribe binding rules (e.g., eligibility for benefits) but also allow for “pflichtgemäßes Ermessen” (discretion according to duty) in some areas, such as assigning jobseekers to activation measures (e.g., training, job coaching), designing integration agreements (“Eingliederungsvereinbarungen”), and imposing periods of suspension for non-cooperation (though these are also tightly regulated). Hence, while legal norms and standardized procedures frame case managers’ decisions, case managers retain substantial discretion in their decision-making.

In recent years, the FEA has made significant progress in digitizing its processes. The efforts have primarily focused on transitioning from paper-based to online application procedures, for example through the rollout of various apps. AI-based applications are not yet employed in customer interactions. The FEA has established an AI-Competence Center within its IT System House, developing pilot AI projects, including applications for speech recognition and forecasting of labor market dynamics. Given the centralized organizational structure of the FEA, there is no regional variation in digitalization across districts. Furthermore, according to its own statements, the FEA has committed to ‘human-friendly’ automation, in which staff retain responsibility for final decisions.

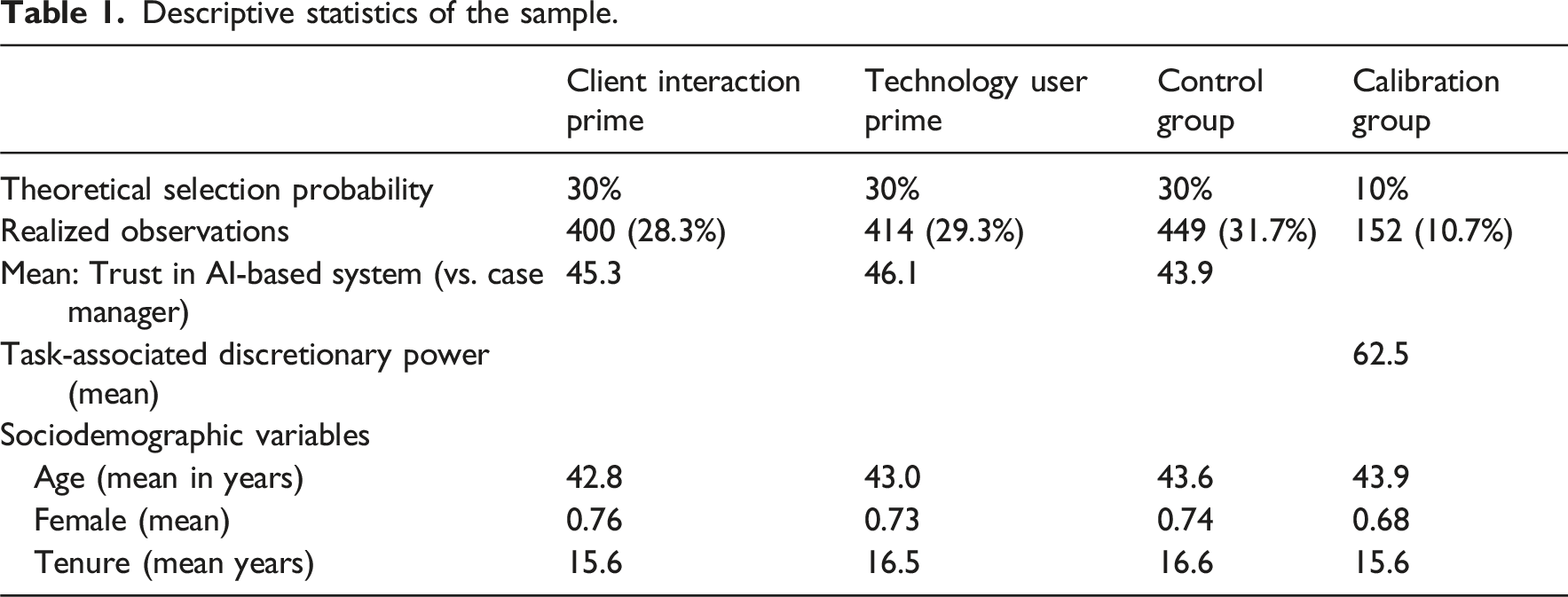

Descriptive statistics of the sample.

We also checked for sample selectivity by comparing key sociodemographic characteristics of the gross sample (5000 case managers invited to participate) and the net sample (1415 case managers who participated fully). For the gross sample, the FEA provides information on case managers’ gender, age, and the location of their employment agency (East vs West Germany). This information allows us to estimate a probit model in which the dependent variable is 1 for participation and 0 otherwise. The analysis shows that male case managers are slightly more likely to participate in our survey, while age and region have no statistically significant effects on the likelihood of participation (Appendix Table A1). Although the R² of this model is low, suggesting that unobserved characteristics are likely to matter for participation, the results indicate that, apart from a slight overrepresentation of men, the net sample is not biased with respect to these key sociodemographic factors.

We transformed the dataset from wide format into long format, with a single dependent variable (instead of 12 task-specific variables) measuring willingness to trust the AI-based system compared with human decision-makers, and 12 dummy variables capturing task type. Next, we assigned the average values of task-associated discretionary power from the calibration slider task to each of the 12 tasks. The final dataset comprises 15,156 observations from 1263 respondents. To estimate the effects of task-associated discretionary power, the priming experiment, and self-reported GAAI, we relied on a random-effects GLS model that accounts for the clustering of observations within respondents. To check robustness, we also estimated alternative models, such as pooled OLS, a random-effects ML estimator, and multilevel regression. None of these estimators altered the substantive findings (Appendix Table A3). Before the regression analysis, we checked the distribution of the dependent variable (Appendix Figures A1 and A2) and found that it can also be grouped into a categorical variable (Appendix Figure A3). We re-ran the analysis using this categorical variable in a multilevel ordered logit regression. This alternative operationalization of the dependent variable did not alter the substantive findings (Appendix Table A4). 5
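The reshaping step can be illustrated as follows: each respondent row is expanded into one row per rated task, the calibration-based discretion mean is attached, and one dummy per task is added. With complete responses this yields respondents × tasks rows, consistent with 1263 × 12 = 15,156. The field names, the dummy prefix `is_`, and the example values are hypothetical, and the study may have used statistical software rather than code of this kind.

```python
def to_long(wide_rows, discretion, tasks):
    """Turn one-row-per-respondent data into one row per respondent-task pair.

    wide_rows: dicts holding respondent-level fields (e.g. an id, the
        experimental arm) plus one 0-100 trust score per task label.
    discretion: task label -> mean discretion from the calibration sample.
    tasks: ordered list of the task labels.
    """
    long_rows = []
    for row in wide_rows:
        # Respondent-level fields are copied onto every task row.
        base = {key: value for key, value in row.items() if key not in tasks}
        for task in tasks:
            score = row.get(task)
            if score is None:  # skip tasks this respondent did not rate
                continue
            record = dict(base, task=task, trust_ai=score,
                          discretion=discretion[task])
            # One 0/1 dummy per task, exactly one of which equals 1.
            record.update({f"is_{t}": int(t == task) for t in tasks})
            long_rows.append(record)
    return long_rows


# Usage with invented values for two respondents and two tasks.
tasks = ["counseling_interview", "labor_market_forecast"]
wide = [
    {"respondent_id": 1, "arm": "control",
     "counseling_interview": 5, "labor_market_forecast": 70},
    {"respondent_id": 2, "arm": "control",
     "counseling_interview": 10, "labor_market_forecast": None},
]
rows_long = to_long(wide, {"counseling_interview": 80, "labor_market_forecast": 40}, tasks)
print(len(rows_long))  # 3
```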

Results

Task-associated discretionary power

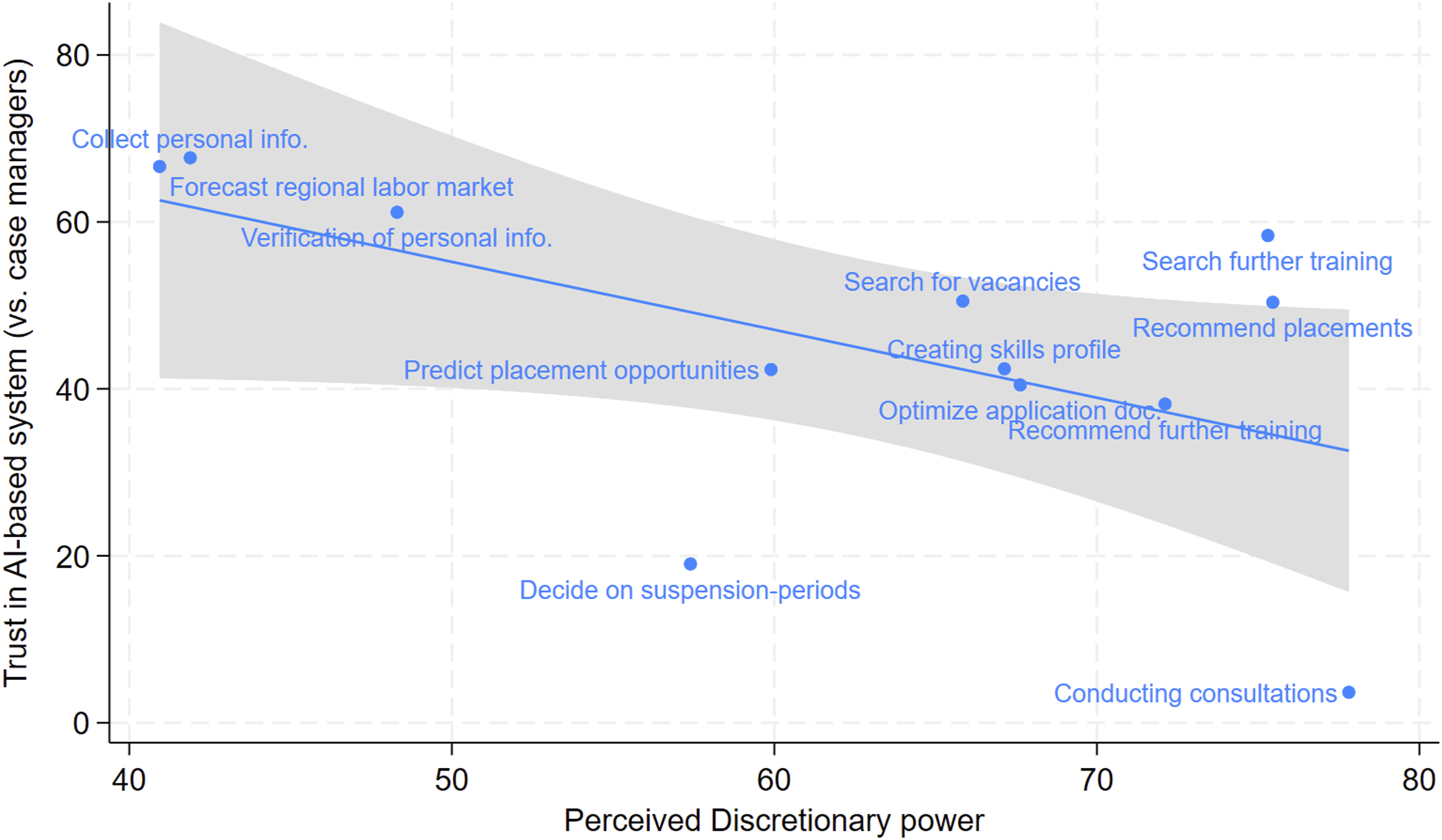

In the first step, we bring together the evaluation of the discretionary power associated with each of the 12 tasks obtained from the calibration sample with the average level of trust towards an AI-based system versus a human case manager. According to H1, we expect a negative relationship, as we argue that higher levels of task-associated discretionary power correlate with less trust in AI-based systems. This initial expectation is supported (see Figure 1). On the y-axis, Figure 1 reports the average trust in AI-based systems for each task obtained from the 90-percent sample. On the x-axis, it reports the average discretionary power associated with the 12 tasks obtained from the 10-percent sample. Figure 1 shows, for example, that the task of conducting counseling interviews combines high levels of associated discretionary power (about 77 base points) and the lowest trust in an AI-based system to conduct this task (about 4 base points). Tasks like forecasting the development of the regional labor market and capturing jobseekers’ personal information combine below-average levels of associated discretionary power (about 44 base points) and high levels of trust in an AI-based system to conduct this task (about 68 base points). Running a bivariate OLS regression confirms the negative correlation (b = −0.814; p = 0.061). Effect of task-associated discretionary power.
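A bivariate OLS slope of this kind can be computed directly from paired task-level means. The sketch below assumes the regression is run on the 12 task-level averages (discretion on the x-axis, trust in AI on the y-axis), which matches Figure 1 but is an interpretation rather than a documented detail; the example values are invented. It returns only the point estimates, so the reported p-value, which additionally requires the coefficient’s standard error, is not reproduced.

```python
from statistics import mean


def ols_slope_intercept(x, y):
    """Least-squares slope b and intercept a for y = a + b * x."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x has no variance")
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


# Usage with invented task-level means (x: discretion, y: trust in AI).
discretion = [40, 60, 80]
trust_ai = [60, 40, 20]
print(ols_slope_intercept(discretion, trust_ai))  # (-1.0, 100.0)
```

A negative slope means that each additional point of perceived discretion is associated with lower average trust in the AI-based system.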

There are also notable exceptions to this overall pattern. The task of deciding on periods of suspension, for instance, combines very low trust in an AI-based system to conduct this task (about 18 points) with moderate associated discretionary power (about 57 points). The moderate discretion rating may reflect that the decision to impose a sanction is highly discretionary (Lee, 2018), whereas the type of sanction (the amount of the transfer reduction) is prescribed by social welfare legislation. At the same time, the impact of a sanction on the client’s life could be considered so serious that case managers would not want to entrust this decision to an AI-based system. Other tasks, such as searching for further training and recommending job placements, are associated with high discretionary power (about 75 points) and moderate to high trust in AI-based systems. Case managers seem to recognize that AI-enabled systems can help them find further training and job vacancies, but that such systems should not decide which training the client should take or which job the client should apply for. These decisions, which are at the core of case managers’ daily work, are perceived as requiring a high degree of discretion. Unfortunately, we cannot assess to what extent this pattern might be explained by the fear that AI-based systems could replace case managers’ jobs; the auxiliary results on tenure (Appendix Table A7), at least, do not corroborate this notion.6 Since we lack more detailed information on case managers’ sense-making of these tasks or their fear of being replaced by AI, these interpretations remain speculative.

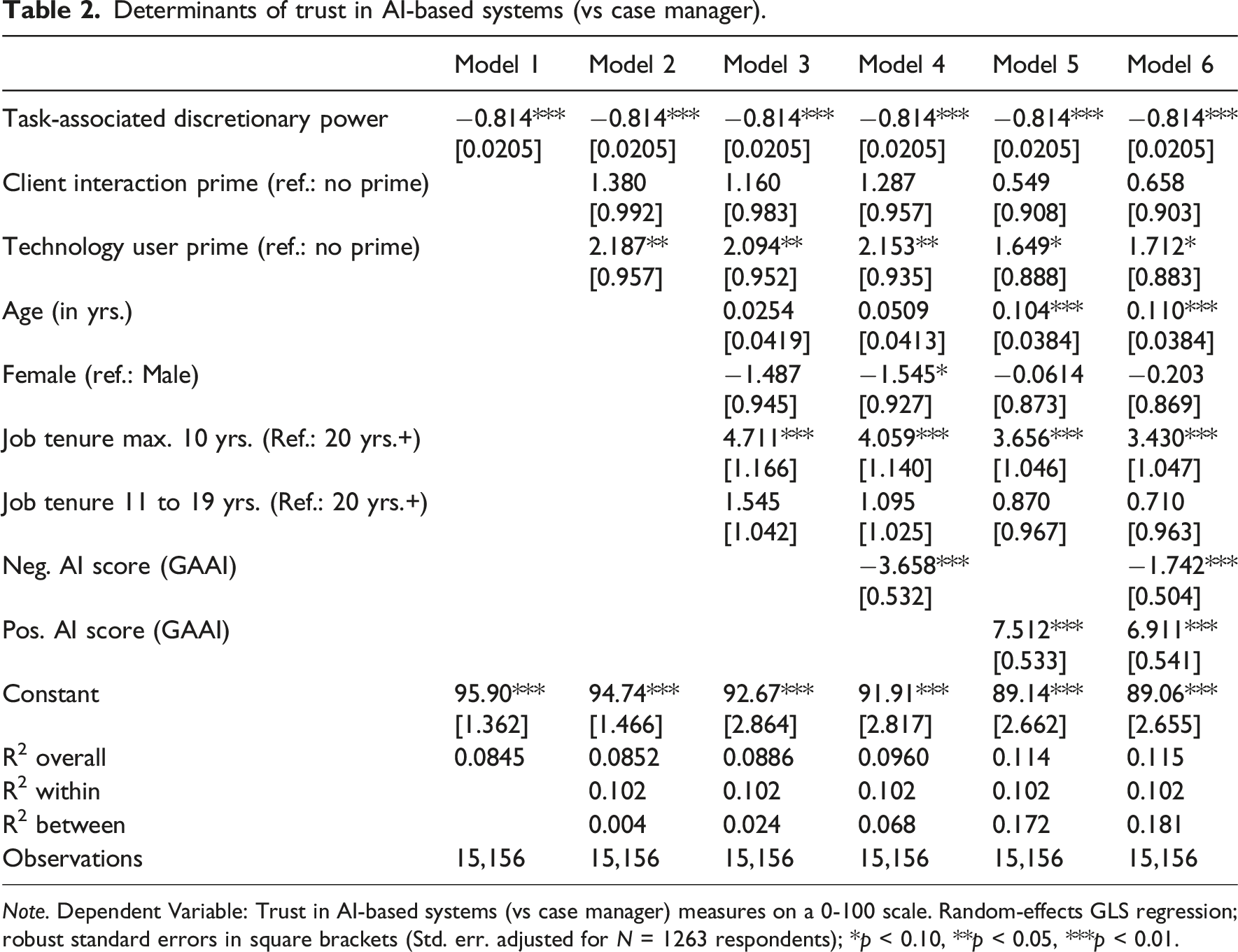

Determinants of trust in AI-based systems (vs case manager).

Note. Dependent variable: trust in AI-based systems (vs case manager), measured on a 0–100 scale. Random-effects GLS regression; robust standard errors in square brackets (adjusted for clustering on N = 1263 respondents); *p < 0.10, **p < 0.05, ***p < 0.01.

Priming case managers’ professional identity

Before discussing the findings of the priming experiment summarized in Model 2 in Table 2, we examine how well the open-response priming technique used in the survey functioned. As a first face-validity check, we conducted a word cloud analysis. For each prime, we merged the answers to the two open-response questions into a single text, yielding one text for the client interaction prime and one for the computer technology user prime. The two word clouds presented in Appendix Figures A5 and A7 show that the open answers differ systematically, an initial indication that the primes worked at least in the sense that respondents’ answers relate to the intended topic. In principle, therefore, the primes are suitable for triggering the desired effect on respondents. This impression is corroborated by the most frequently used terms in the open-response texts (Appendix Figures A6 and A8). The four most frequently used words in the client interaction prime are “customer”, “time”, “service”, and “support”; in the computer technology user prime, they are “new”, “technology”, “use”, and “work”. Appendix Table A2 reports the distribution of self-reported role perceptions in the client interaction and technology user primes.

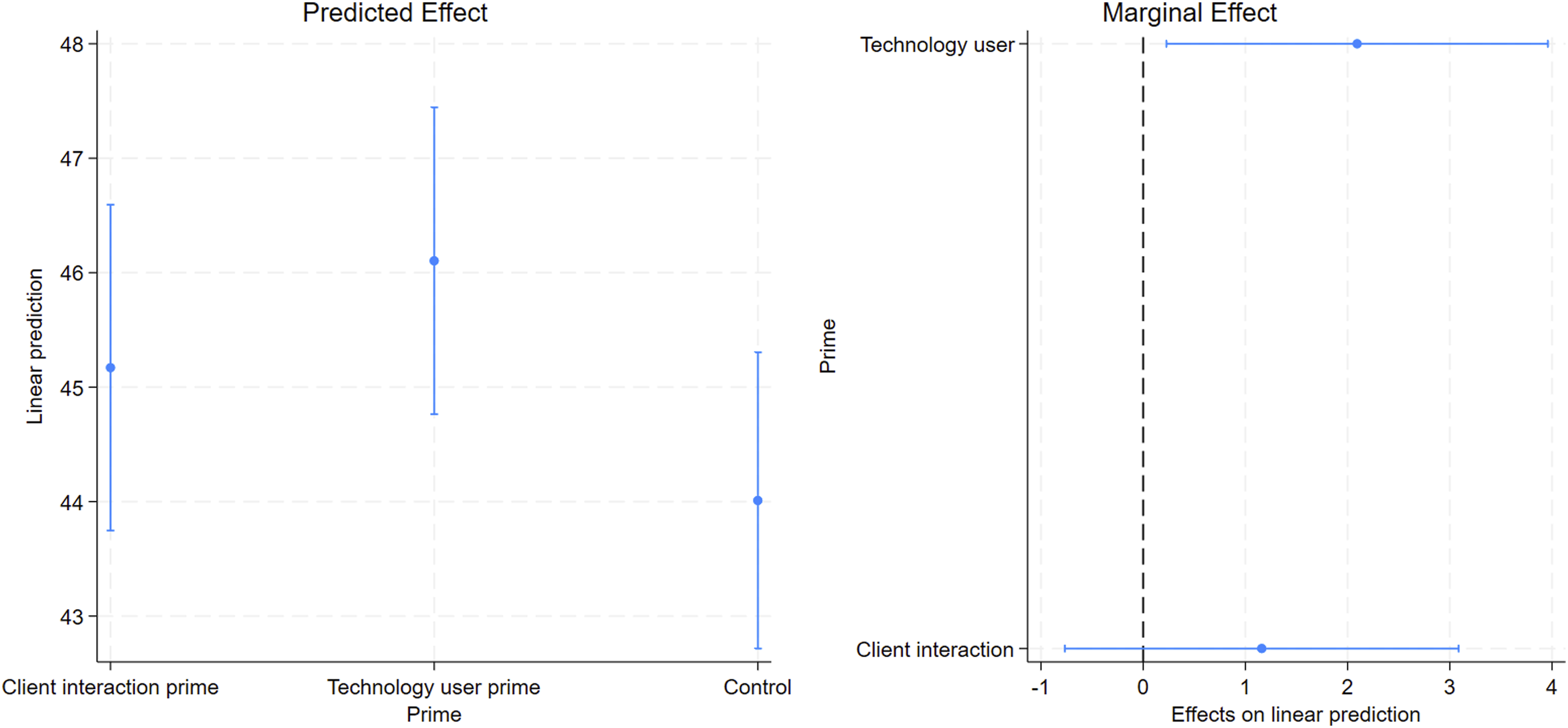

To estimate the priming effect on respondents’ willingness to trust AI-based systems, Model 2 in Table 2 includes a set of dummy variables representing whether respondents received the client interaction or computer technology user prime, whereas the control group serves as the reference group. Contradicting H2, the client interaction prime does not decrease respondents’ trust in AI-based systems, compared to the control group receiving no prime. However, the computer user prime has a substantially small but statistically significant positive effect: Compared to the control group, respondents who got the computer user prime report about two points higher trust in AI systems. The computer technology user prime, thus, does not trigger skepticism but seems to make respondents aware that they are quite comfortable with new technologies in a private context. This perception seems to be partially transferred to the trust in AI-based systems in their working environment. This statistical relationship is visualized in Figure 2, showing the predicted and the marginal effect of the priming experiment. Effect of priming on trust in AI-based system (vs case managers).

To assess whether the small but significant increase in trust in AI-based systems can be attributed to the computer technology user prime, we analyze the manipulation check questions. At the end of the survey, we asked respondents how much completing the questionnaire had made them think about three aspects: (1) their self-image as case managers, (2) their self-image as users of digital technologies, and (3) the ecological consequences of digital innovations. Responses were measured on a scale from 1 (Not at all) to 7 (Very much). We then regress each of these three variables on the treatment variable. We expect respondents receiving the client interaction prime to report higher values for question (1) and respondents receiving the computer technology user prime to report higher values for question (2). Question (3) serves as a placebo. The results of this manipulation check are summarized in Appendix Figure A9. While the client interaction prime does not affect (1), the computer technology user prime significantly affects (2), and there is no effect on the placebo question (3). Hence, the manipulation check strengthens our confidence that the small positive effect of the computer technology user prime is causal.
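When the only regressors are mutually exclusive treatment dummies plus a constant, as in the manipulation check regressions described above, OLS reduces to comparing group means: the intercept is the control-group mean, and each dummy coefficient is the difference between that treatment group’s mean and the control mean. The Python sketch below uses this identity rather than a general regression routine; the group labels and scores are hypothetical 1–7 responses, not survey data, and standard errors are not computed.

```python
from statistics import mean


def dummy_regression(scores_by_group, reference="control"):
    """Coefficients of OLS on a constant plus one dummy per non-reference group.

    With mutually exclusive group dummies as the only regressors, the OLS
    intercept equals the reference-group mean, and each dummy coefficient
    equals that group's mean minus the reference-group mean.
    """
    reference_mean = mean(scores_by_group[reference])
    coefficients = {"intercept": reference_mean}
    for group, scores in scores_by_group.items():
        if group != reference:
            coefficients[group] = mean(scores) - reference_mean
    return coefficients


# Hypothetical 1-7 answers to manipulation check question (2); not survey data.
answers = {
    "control": [3, 4, 5],
    "client_interaction": [4, 4, 4],
    "technology_user": [5, 6, 7],
}
print(dummy_regression(answers))
# {'intercept': 4, 'client_interaction': 0, 'technology_user': 2}
```

This identity holds only for models without further covariates; once task discretion or AI attitudes enter the regression, as in the models of Table 2, the dummy coefficients are in general no longer simple differences in means.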

General attitudes towards AI

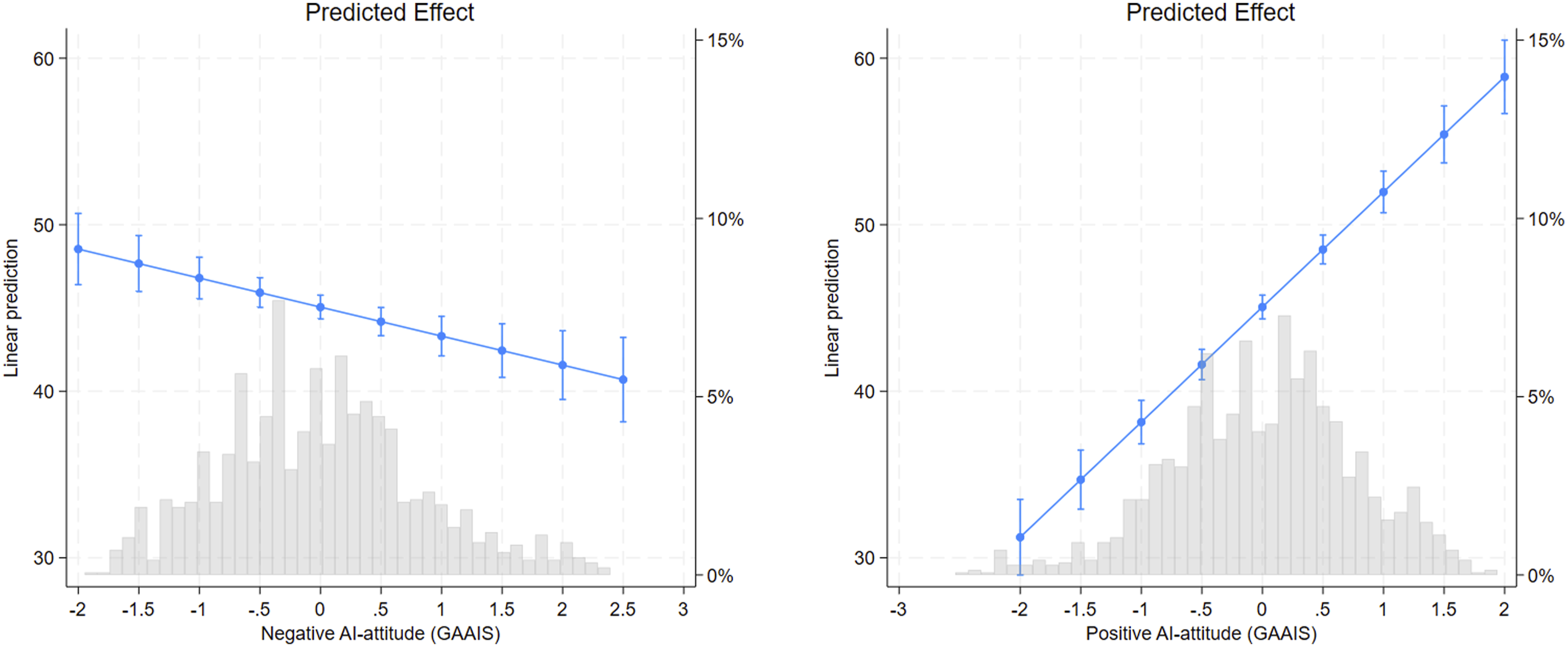

Models 4 to 6 in Table 2 summarize the relationship (observational) between respondents’ attitudes toward AI and their willingness to entrust AI-based systems in PES. In line with H3a and H3b, we find strong evidence that negative views toward AI are associated with lower willingness to entrust tasks to AI-based systems, whereas positive views are associated with greater willingness. What is even more remarkable is the relative size of these significant relationships. As visualized by predicted effects in Figure 3, positive AI attitudes are associated with up to 30 basis points higher trust when running from its minimum to its maximum. In contrast, negative AI attitudes hardly affect the predicted willingness to entrust tasks to AI-based systems. Effect of AI-attitudes on trust in AI-based system (vs case manager). Note. Based on Model 7 in Table 2. Confidence intervals at 95%. Histogram included.

These findings are robust to including respondents’ sociodemographic characteristics (age, gender, and tenure). Compared with respondents with a job tenure of 20 years or more, respondents with at most 10 years of work experience are more likely to trust AI-based systems (Model 3 in Table 2). This relationship for tenure is also robust to excluding the age variable. Hence, case managers’ willingness to entrust tasks to AI-based systems declines with their work experience. Case managers’ tenure, however, is highly correlated with their age (r = 0.604***). When tenure is excluded from Model 3 in Table 2, the coefficient for age becomes negative and statistically significant (b = −0.073**). This could reflect either a cohort effect, in which younger cohorts are more open to AI, or a life cycle effect, in which openness to AI declines as respondents age.7

Discussion and conclusions

Amid mounting fiscal pressures, shortages of qualified personnel, and demographic shifts in the current PES workforce, ADS systems are promoted for their potential to improve job counseling, but they risk a top-down implementation that overlooks the perspectives of future users. This study therefore centers on case managers’ perspectives, investigating when they entrust tasks to AI-based systems and when they do not. Theoretically, it identifies three factors expected to shape case managers’ willingness to entrust tasks to AI-based systems: the discretionary nature of the task, the salience of client interaction in their professional identity, and their preconceptions of AI-based technologies. Methodologically, whereas prior studies on algorithm acceptance typically pre-define task complexity (e.g., Lee, 2018; Wang et al., 2024), this study uses a split-sample design to measure case managers’ own perceptions of the discretionary power involved in counseling tasks. The explanatory power of the three factors is tested in an online survey among 1415 case managers from the German FEA.

The results can be summarized in three points. First, in line with H1, task-associated discretionary power is an important determinant of case managers’ algorithm appreciation. Expanding on Busch’s (2023) findings on citizens’ reluctance toward digital discretion, this study finds that case managers are less willing to entrust counseling tasks to AI-based systems when a task is perceived to involve greater discretionary responsibility. Second, contrary to H2, there is no evidence that priming client interaction in case managers’ professional identity affects their willingness to entrust tasks to AI-based systems (Maynard-Moody and Musheno, 2000; Sell, 1999). However, priming the use of computer technology in professional identity has a small but significant positive effect on trust in AI-based systems. These findings highlight the importance of an appropriate narrative for enhancing algorithm appreciation among case managers: rather than presenting AI as disrupting professional practice, the results suggest that highlighting existing experience with information technology in PES is a more promising way to foster openness toward digitalizing employment services. Third, in line with H3a/H3b, attitudes toward AI are substantially associated with case managers’ willingness to trust AI-based systems (Schepman and Rodway, 2023). Expanding on Kleizen et al.’s (2023) finding that citizens’ AI attitudes predict their acceptance of AI in government, we find that case managers’ general AI attitudes are crucial for their willingness to entrust advisory tasks to AI-based systems. These attitudes, reflecting how respondents generally feel about AI, are unlikely to change quickly. Finally, the findings on tenure and age suggest that younger case managers, and those with less job experience, are more willing to entrust tasks to AI-based systems. Although we cannot disentangle whether this is an age or a cohort effect, this pattern could argue for strengthening internal exchange formats and training across seniority levels to mitigate both technology skepticism and exaggerated optimism.

Before discussing potential policy implications, it is important to address this study’s limitations. First, although GAAI are considered relatively stable, their post-experimental measurement may have been influenced by the preceding slider tasks and the priming experiment. Since this study focuses primarily on the slider task, its calibration, and the priming experiment, these components were prioritized and presented first. In addition, the GAAI items, some of which convey strongly negative images of AI, could have biased case managers’ perceptions had they been presented first; the GAAI items were therefore measured post-experimentally. Available survey time and sample size prevented randomizing the order of components, an issue future research could address in more detail. Second, the slider task intended to measure discretion may be confounded with perceptions of a task’s relevance to client well-being. This study represents a first step in addressing discretion as a subjective task characteristic and its relation to the willingness to entrust tasks to AI-based systems in PES; future research could use in-depth qualitative analysis to examine how case managers attribute discretion to counseling tasks. Third, a general methodological remark applies to research designs examining ADS acceptance in PES. While we aim to identify causal effects in the experimental part (e.g., Cunningham, 2021; Druckman and Green, 2021), the post-experimental measure provides only correlational evidence. Further research could focus more strongly on identifying the behavioral implications of ADS for SLBs’ decision-making in the field (e.g., Alon-Barkat and Busuioc, 2023) or take a qualitative approach to investigate SLBs’ sense-making and justification of ADS use in greater depth (e.g., Heuer and Zimmermann, 2020). A multi-method approach that captures both behavioral measures and mental processes seems most fruitful for fully understanding what shapes the acceptance of algorithmic discretion in case management.

In policy terms, three key implications emerge. First, policymakers should consider case managers’ perceptions of task-related discretion and prioritize the digitalization of tasks with lower discretionary demands. This insight is particularly valuable for effectively implementing AI-based decision support systems in PES (Scupola and Mergel, 2022). Second, priming case managers’ existing experience with computer technology might help reduce skepticism toward digitalizing tasks with low discretion levels; presenting these systems as “computer software” rather than “AI” could already influence perceptions and acceptance. Third, case managers’ subjective attitudes and general feelings toward AI should be taken seriously. While these attitudes cannot easily be changed, exchange formats and training across hierarchical and experience levels could help mitigate both technology skepticism and exaggerated optimism, thereby creating leverage for a more objective assessment of the pros and cons of ADS systems in PES.

Supplemental Material

Supplemental Material - Are case managers willing to entrust counseling tasks to artificial intelligence? The role of administrative discretion, client interaction, and technology attitudes

Supplemental Material for Are case managers willing to entrust counseling tasks to artificial intelligence? The role of administrative discretion, client interaction, and technology attitudes by Martin Dietz, Christopher Osiander, Mareike Sirman-Winkler, Markus Tepe in Journal of European Social Policy.

Footnotes

Acknowledgments

Previous versions of this study have been presented, for example, at the German Political Science Association (Annual Meeting of the Public Administration Section), the Institute for Employment Research (IAB) research seminar, the Policy Lab Digital, Work & Society of the German Federal Ministry of Labor and Social Affairs (BMAS), and the European Employment & Social Rights Forum hosted by the European Commission. We want to thank all participants for their valuable comments, which contributed to the revision of the manuscript. The views expressed in this study are those of the authors. Any remaining errors are the sole responsibility of the authors.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

Notes

References
