Abstract

Image-guided therapies have been on the rise in recent years as they can achieve higher accuracy and are less invasive than traditional methods. By combining augmented reality technology with image-guided therapy, more organs and tissues can be observed by surgeons to improve surgical accuracy. In this review, 233 publications (dated from 2015 to 2020) on the design and application of augmented reality-based systems for image-guided therapy, including both research prototypes and commercial products, were considered. Based on their functions and applications, sixteen studies were selected. The engineering specifications and applications were analyzed and summarized for each study. Finally, future directions and existing challenges in the field were summarized and discussed.

Introduction

Over the last decades, image-guided therapy, including minimally invasive surgery and image-guided intervention, has been widely used in clinical practice owing to the rapid development of imaging modalities and medical devices. 1 Compared with other methods, image-guided therapy is less invasive and more accurate and has better postoperative outcomes.2,3

In order to further improve outcomes from image-guided therapy, clinicians often combine other navigation methods to locate target lesions faster and more accurately so that they can apply surgical instruments accurately and avoid delicate structures. 4 The operators of image-guided therapy have to shift their focus between the surgical field and the display screen showing the real-time information. The need for mental registration of different image modalities is challenging since it has a high-level requirement for the operator to look at the position of the target in the image and determine the corresponding location in the patient. Unfortunately, the difficulty and subjectivity of this process increase the risks to patients.4,5 Both virtual reality (VR) and augmented reality (AR) technology have been used in attempts to overcome this problem. However, VR has proved in practice to be more suitable for surgical planning and simulation since it refers to immersion within a complete virtual environment.6–10 AR is a technology that allows the user to see a real world scene but with virtual objects superimposed upon or composited with the scene.6,11

By combining AR technology with image-guided interventions, target organs and their complex surroundings can be displayed directly in the actual operating field. 12 Hidden organs inside a body can be seen by surgeons directly, and the perception of an interventional procedure can be improved. 13 In addition, a lack of specific abilities or experience among surgeons or radiologists in some complicated interventional procedures, such as video-assisted surgery and intraoperative examinations, can be compensated for through AR technology. 12

Many AR technology-based research prototypes and commercial products have been reported in the application of image-guided therapy. In this review paper, publications related to the application of AR technology-based research prototypes and commercial products in image-guided therapy are analyzed and summarized for their technical specifications and applications. Finally, in the discussion section, current challenges and directions for future work are presented.

Materials and methods

The phrases “augmented reality in medical procedure,” “augmented reality for interventional radiology,” and “image-guided procedure with augmented reality” were used as keywords to generate potential articles that could be reviewed. PubMed, 14 ProQuest, 15 Library of the University of Georgia, 16 ScienceDirect, 17 IEEE Xplore, 18 and Google Scholar 19 were used as scientific search engines in this study. The search range was from January 1, 2015, to June 15, 2020. The initial search results included 233 articles.

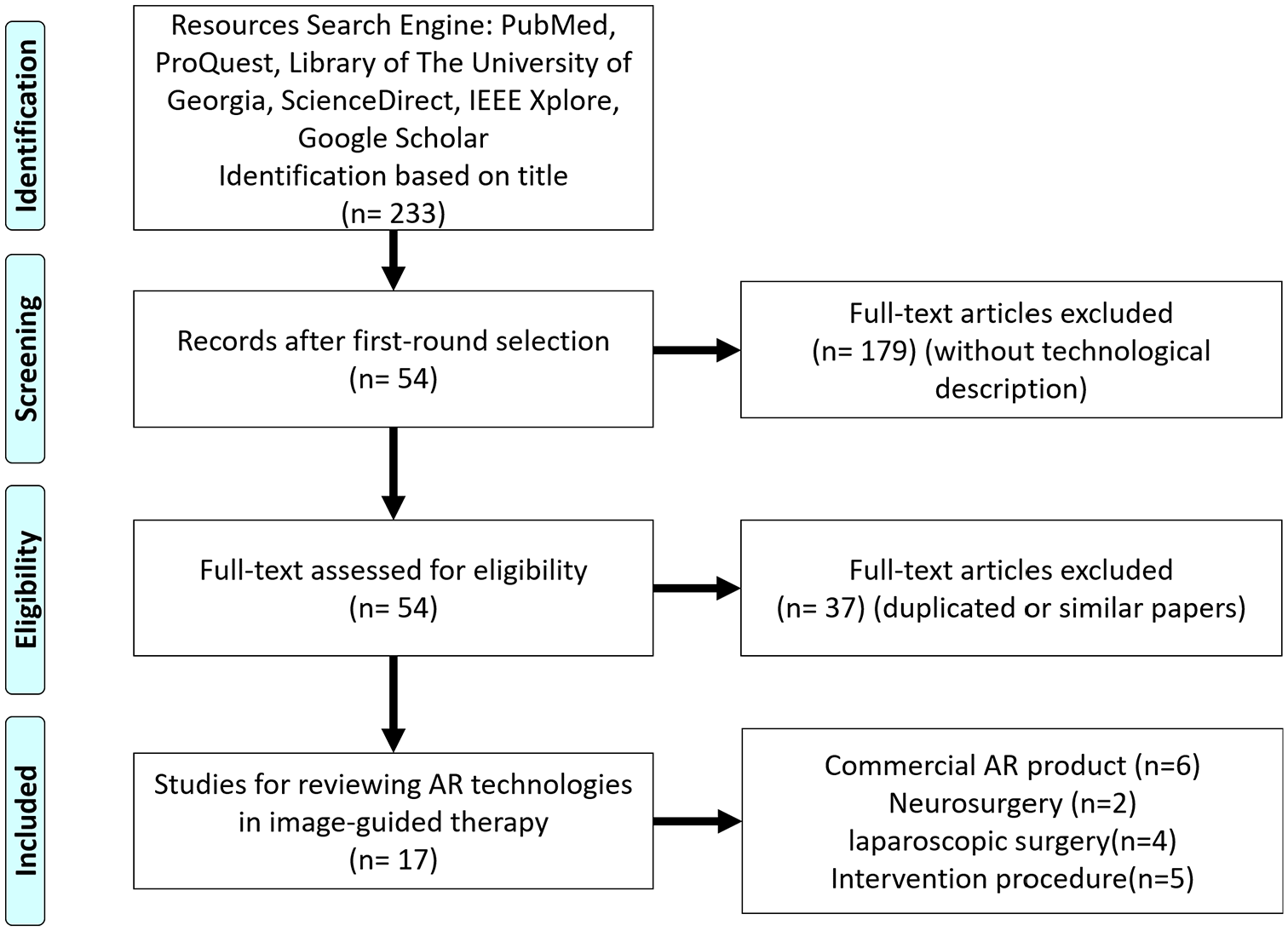

Elimination evaluations were applied to the articles from the initial search to generate the final reviewed articles for this study. In the first-round selection, initial articles were divided into two categories: those with a commercial product and those without a commercial product. For each paper with a commercial AR product, the most recent applications were selected to make sure it could reflect the current situation of the field. For each paper without a commercial AR device, its abstract was manually analyzed, and it was eliminated if it did not contain a description of AR technologies. After this selection process, 54 papers remained. Next, duplicated or similar content or applications from different papers were removed. Finally, 17 papers were selected for review. These articles were then divided into two categories: commercial AR products and AR research prototypes. Under the category of AR research prototypes, the candidates were further divided into different sections based on their surgical applications and reviewed in depth (Figure 1).

Figure 1. Research flowchart of this study.

Results

Commercial AR products in image-guided interventions

Many commercial AR products are available on the market. Among these, Microsoft HoloLens (Microsoft Corporation, Redmond, Washington, USA) is an AR product that has been used in a wide range of medical applications. The HoloLens is a head-worn display (HWD) designed to project information via three-dimensional (3D) holograms with multiple sensors.20,21 In order to determine its position in space, HoloLens is equipped with an inertial measurement unit, depth-sensing camera with infra-red (IR) sensor, and RGB camera. HoloLens is also able to receive user input via voice commands and hand gestures. 22

Besides HoloLens, other commercial AR products have been applied in image-guided therapy, such as Endosight (Endosight, Milan, Italy) and Magic Leap (Plantation, Florida, US). Endosight is specifically designed for biopsy and ablation procedures. The current version of the product includes a disposable optical sensor to be embedded in third-party probes or hardware. 23 The real-time AR image from Endosight can be viewed in color on AR goggles or tablets. 24 Magic Leap is more similar to HoloLens: it is not designed specifically for clinical use, but it has been applied in image-guided clinical procedures. The applications of commercial AR products in image-guided interventions are summarized in Table 1.

Table 1. Summary of the applications of commercial AR products in image-guided interventions.

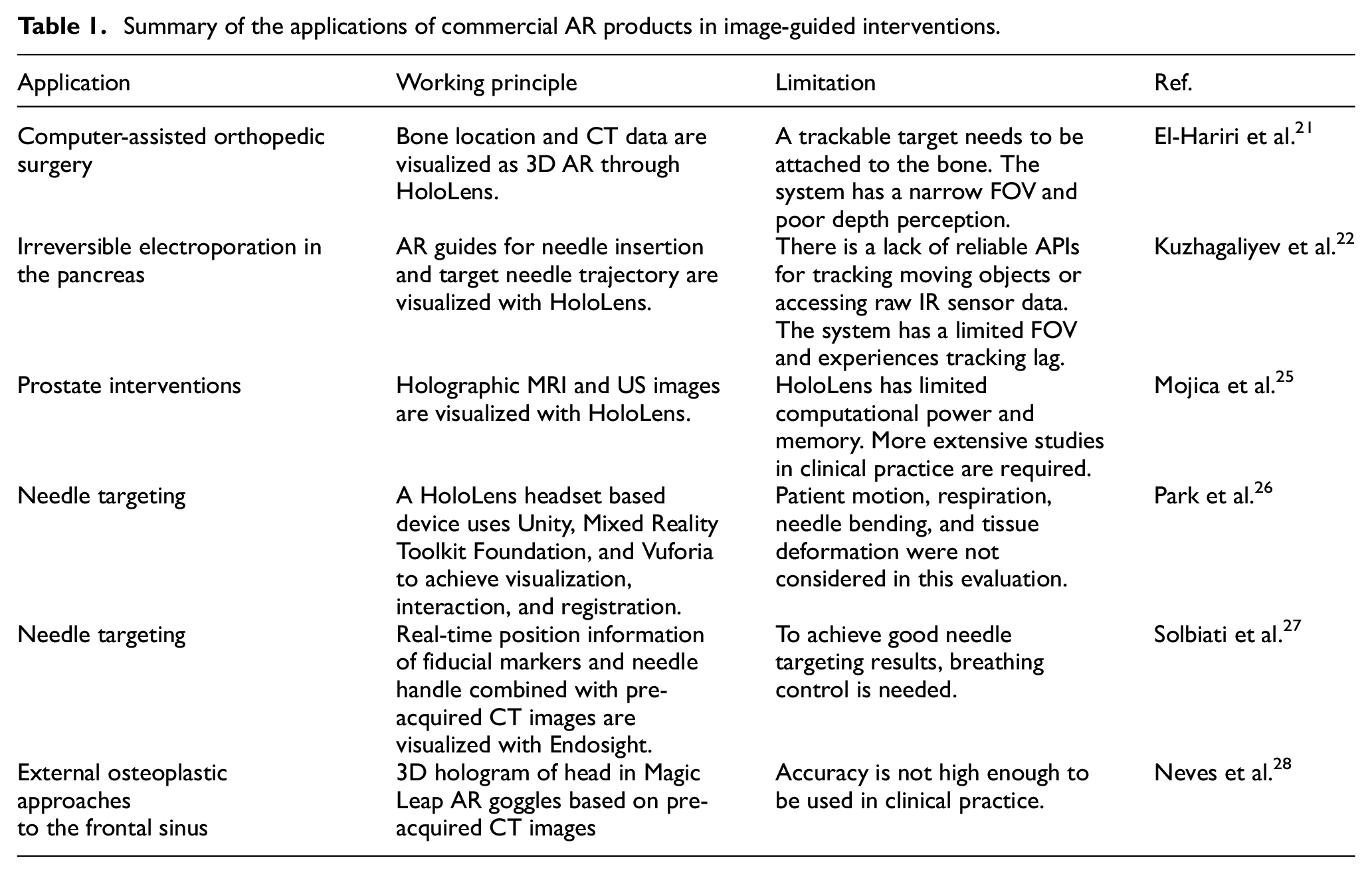

El-Hariri et al. 21 used HoloLens to generate 3D AR visualization of a computer-assisted orthopedic surgery (CAOS) with intra-operative US tracking information and preoperative CT data (Figure 2(a)). The method was demonstrated on a foam pelvis model, and the locations of real and virtual fiducials were measured to assess the system. CT data of the pelvis were acquired with a CT750HD scanner, then segmented, converted, and exported into Unity for AR visualization on the HoloLens. The segmentation and registration between US and CT data were achieved by shadow peak (SP) segmentation and normalized cross-correlation (NCC) registration. With the use of AR, the HoloLens pelvis hologram was projected onto the foam model. In experiments, the root-mean-square system error was 3.22, 22.46, and 28.30 mm in the x, y, and z directions, respectively.
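A per-axis root-mean-square error of this kind can be computed from paired real and virtual fiducial positions. The following is a minimal sketch rather than the authors' code: the function name `per_axis_rms` and the fiducial coordinates are hypothetical and chosen only so the arithmetic is easy to follow.

```python
import math


def per_axis_rms(real_points, virtual_points):
    """Return the RMS error along x, y and z between paired 3D fiducials."""
    if len(real_points) != len(virtual_points) or not real_points:
        raise ValueError("need two non-empty point lists of equal length")
    n = len(real_points)
    squared_sums = [0.0, 0.0, 0.0]
    for real, virtual in zip(real_points, virtual_points):
        for axis in range(3):
            diff = virtual[axis] - real[axis]
            squared_sums[axis] += diff * diff
    return tuple(math.sqrt(s / n) for s in squared_sums)


# Hypothetical fiducial positions in millimetres (not data from the study).
real = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0), (0.0, 10.0, 0.0)]
virtual = [(3.0, 4.0, 0.0), (13.0, -4.0, 0.0), (-3.0, 14.0, 0.0)]
rms_x, rms_y, rms_z = per_axis_rms(real, virtual)
```

Reporting the error per axis, as the study does, makes it visible when one direction (for example depth) dominates the overall misalignment.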

Figure 2. HoloLens-based image-guided interventions: (a) overview of the HoloLens-based AR system, 21 (b) projected AR image with registered US probe, 22 (c) topology (left) and photograph (right) of the holographic AR interface, showing the operator immersed in a scene that includes 3D holographic structures and an embedded 2D virtual display window, 25 and (d) left: participant inserting the needle guided by the AR system; top right: view of the needle insertion procedure without the AR system; bottom right: view of the needle insertion procedure with the AR system. 26

Kuzhagaliyev et al. 22 presented an AR system (Figure 2(b)) focused on improving the efficiency of irreversible electroporation (IRE) by indicating a point of entry to the body via a drawing of the current needle offset and the target trajectory with HoloLens holographic guides. The system was developed using the Universal Windows Platform (UWP) and the Unity3D engine. Three stereoscopic IR cameras from V120: Trio were used to track optical IR markers on the NanoKnife IRE needles as well as the US probe and the HoloLens headset. The IR marker position information was interpreted through the Motive software platform. A transmission control protocol (TCP) server was also developed in order to extract NatNet information and to act as middleware between Motive and UWP. By providing live information and superimposed visual guides, the system has the potential to reduce needle misplacement risk and to increase IRE therapy effectiveness through accurate electrode placement.

Mojica et al. 25 developed and assessed an interface for image-based planning of prostate interventions with the usage of a head-mounted display (HMD) (Figure 2(c)). Developed with Unity3D Engine and written in C#, the interface was tested on HoloLens and required the usage of hand gestures and voice commands to manipulate images and holographic scenes. The AR interface establishes a data and command pipeline that links the sensor – the MRI scanner or the US machine – and the operator. HoloLens, controlled by gestures and voice commands, acts as the interface, whereas the host PC is used as a separate processor. The host PC processes the MRI and US data and sends the data to HoloLens via a TCP/IP connection. The operators found the system easy to operate, and the AR visualization was identified to be superior to desktop-based volume rendering by all operators (two urologists, one radiologist specialized in acquisition and delineation of prostate MR images, and five engineering/scientific researchers) due to factors such as the ease of planning access paths and selecting a trajectory, manual co-registration of MRI and ultrasound, and ergonomics.

Park et al. 26 evaluated a HoloLens-based 3D AR-assisted navigation system by using CT-guided simulations. The visualization and interaction application was developed with Unity and the Mixed Reality Toolkit Foundation. The developed system could achieve automated registration between the 3D model and the CT grid through computer vision and Vuforia without any user input. The evaluation procedure, shown in Figure 2(d), was based on an abdominal phantom with an 11 mm lesion as the target and a 21G-20 cm Chiba needle. Two attendings, three interventional radiology residents, and three medical students participated in this evaluation procedure by performing CT-guided needle targeting with and without the developed AR system. Results showed that the AR system could reduce the radiation dose by 41% and the number of needle passes required to reach the target by 54.2%. With the developed AR system, all participants could perform at the same level.

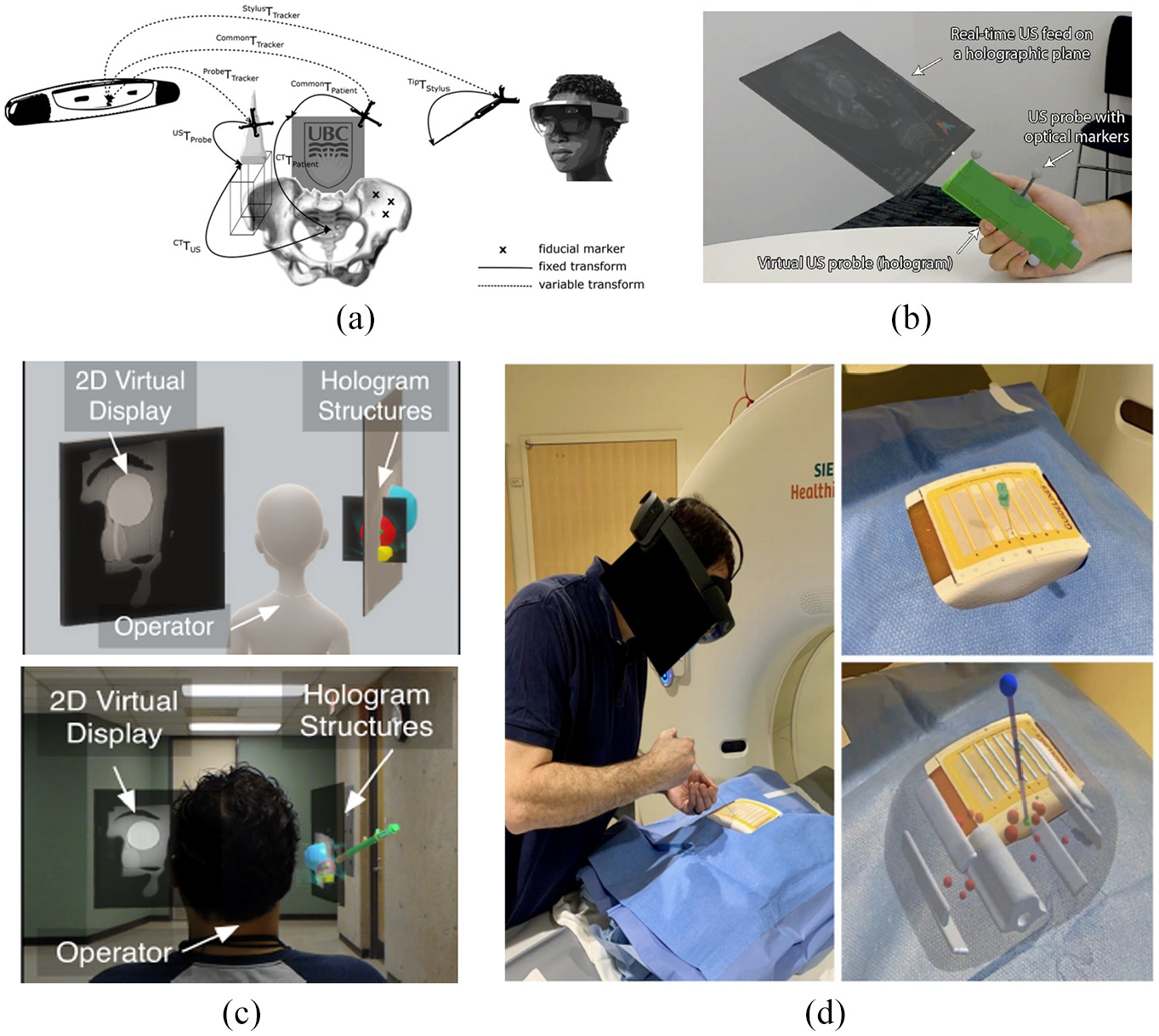

Solbiati et al. 27 designed a series of experiments to evaluate the feasibility of the novel Endosight AR system (Endosight, Milan, Italy). The Endosight system has a customized needle handle, radiopaque tags as fiducial markers, and a tablet used to run the Endosight software and display the AR images. The workflow of the Endosight system starts with fiducial markers being placed on the patient’s skin before CT scanning. Then the Endosight software finds the target tumor and fiducial markers on the CT images automatically via a segmentation algorithm. Next, during the intervention, the pre-intervention information is combined with real-time position information from the needle handle and fiducial markers to generate real-time AR images on the tablet (Figure 3(a)). Finally, radiologists finish the intervention based on the real-time AR images. The study set three evaluation goals for the Endosight system: to use a rigid phantom to test the targeting accuracy; to evaluate the feasibility of the system under the respiration effect through a porcine model with and without breathing control; and to confirm the feasibility of the system in a clinical situation by using a cadaver with liver metastases. The Endosight system performed well in all three experiments. The target error was 2.0 ± 1.5 mm and 3.9 ± 0.4 mm (mean ± standard deviation) in the phantom test and porcine test, respectively. In the cadaver test, the errors for two different metastases were 2.5 and 2.8 mm.

Figure 3. (a) Illustration of the Endosight AR system with a porcine model for needle insertion. Top: Real-time AR image, wherein the red line is the needle, the blue line is the needle handle, the yellow line is the target, and the red curved line is the outline of the porcine model. Bottom: Corresponding CT images, among which the leftmost image is the CT image before needle insertion and the rest are after needle insertion. The yellow arrow indicates the position of the target and needle. 27 (b) The 3D hologram from the Magic Leap AR system superimposed on the cadaveric head. 28

Another example is the Magic Leap AR goggles (Plantation, Florida, US). Neves et al. 28 created an AR system based on Magic Leap AR goggles for performing external osteoplastic approaches to the frontal sinus. First, key structures such as bones and the frontal sinus were segmented from CT scans of the target head based on threshold values of Hounsfield units using the 3D Slicer application. Then, these segmented structures were imported into a proprietary application and loaded onto the Magic Leap AR goggles to create and visualize a 3D hologram of these structures, as shown in Figure 3(b). Finally, after a series of registration steps, the 3D hologram could be used to guide surgical procedures. The proposed AR system was applied to six cadaveric heads with trephinations and osteoplastic flap approaches to evaluate its performance. Registration and surgical procedures were completed successfully in all six specimens. Only one surgical complication occurred among all six tests. The average registration time was around 2 min. When the contour of the osteotomy was compared with the true perimeter of the frontal sinus on postprocedure CT images, the mean difference for the proposed AR system was 1.4 ± 4.1 mm.
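Threshold-based segmentation on Hounsfield units (HU) amounts to keeping the voxels whose value falls inside a chosen range. The sketch below illustrates the idea on a tiny 2D slice; it is not the pipeline used in the study, and the function name, the sample values, and the two thresholds are assumptions picked for illustration only.

```python
def threshold_segment(hu_slice, lower, upper):
    """Return a binary mask: 1 where lower <= HU <= upper, otherwise 0."""
    return [[1 if lower <= hu <= upper else 0 for hu in row] for row in hu_slice]


# Hypothetical 3x4 CT slice in Hounsfield units. Air is about -1000 HU,
# water is 0 HU, and dense bone is several hundred HU or more.
ct_slice = [
    [-1000, -990, 40, 700],
    [-1000, 30, 900, 1200],
    [20, 50, 650, -995],
]
BONE_MIN_HU = 300  # assumed illustrative threshold, not taken from the study
AIR_MAX_HU = -900  # assumed illustrative threshold, not taken from the study

bone_mask = threshold_segment(ct_slice, BONE_MIN_HU, 3000)
air_mask = threshold_segment(ct_slice, -1100, AIR_MAX_HU)
```

In practice a thresholded mask usually needs manual clean-up or morphological post-processing before it is turned into a surface model for display.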

Research prototypes

As well as applying commercial AR products directly in image-guided interventions, many research groups have developed AR prototypes that can be used in different image-guided procedures. In this section, such prototypes are reviewed based on the surgical procedures they are designed for.

AR used for neurosurgery

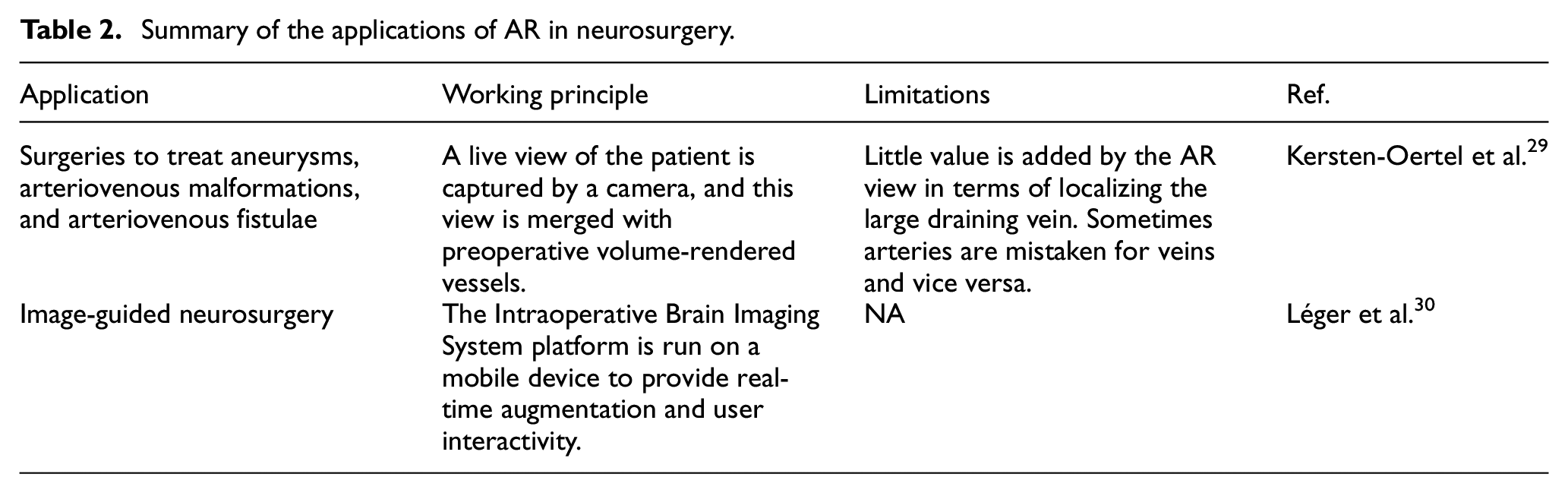

Neurosurgery is a specialty that relies heavily on imaging. AR can be used to project a 3D model of the patient’s scan onto the surgical field. This confers benefits such as not having to constantly switch views between the surgical field and computer screen and reducing cognitive load since normally the surgeon has to determine how 2D images apply to the 3D scene. In this section, some applications of AR in neurosurgery are reviewed (Table 2).

Table 2. Summary of the applications of AR in neurosurgery.

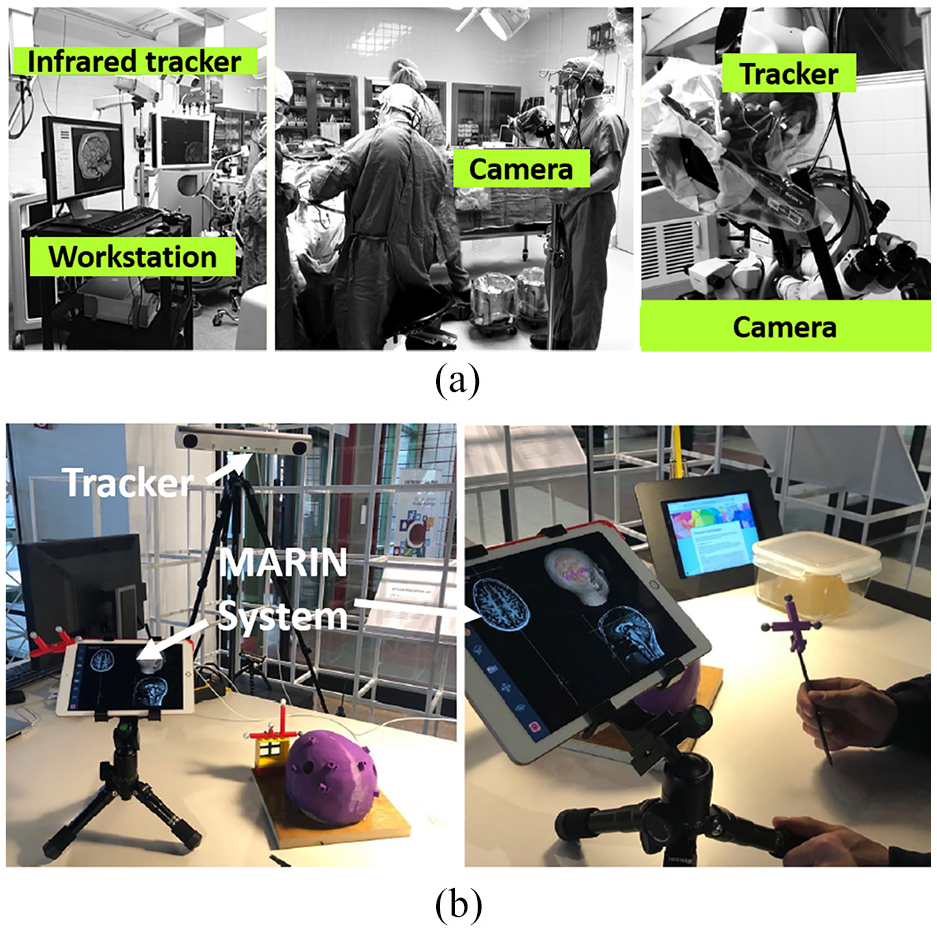

Kersten-Oertel et al. 29 performed a feasibility study on a prototype of an intra-operative brain imaging AR system (Figure 4(a)) applied to several neurovascular surgeries. The Polaris camera (Waterloo, Canada) was used for localization, and it was calibrated with extrinsic and intrinsic calibration before utilizing both visual markers and optimization software to project the corresponding 3D camera coordinates to image space. Images of arteries and veins were then produced from computed tomography angiography (CTA) data. Lastly, the camera image and the volume-rendered vessels were blended to produce a 3D AR view. According to feedback from surgeons who used the prototype, it was useful for identifying abnormal feeding arteries (feeders).
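Blending a rendered overlay with a camera image is often done by per-pixel alpha compositing. The review does not state which blending method the prototype uses, so the sketch below simply assumes linear alpha blending; the function names and pixel values are hypothetical.

```python
def blend_pixel(camera_rgb, overlay_rgb, alpha):
    """Linearly blend one overlay pixel onto one camera pixel.

    alpha is the overlay opacity in [0, 1]: 0 keeps the camera pixel,
    1 shows only the overlay.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    return tuple(
        round(alpha * o + (1.0 - alpha) * c)
        for c, o in zip(camera_rgb, overlay_rgb)
    )


def blend_image(camera, overlay, alpha_mask):
    """Blend two images of equal size using a per-pixel opacity mask."""
    return [
        [blend_pixel(c, o, a) for c, o, a in zip(c_row, o_row, a_row)]
        for c_row, o_row, a_row in zip(camera, overlay, alpha_mask)
    ]


# Hypothetical 1x2 images: a gray camera pixel and a black camera pixel,
# with a red "vessel" overlay that is half transparent on the first pixel
# and fully transparent on the second.
camera = [[(100, 100, 100), (0, 0, 0)]]
overlay = [[(200, 0, 0), (200, 0, 0)]]
alpha_mask = [[0.5, 0.0]]
augmented = blend_image(camera, overlay, alpha_mask)
```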

Léger et al. 31 investigated the impact of the use of two different AR image-guided surgery setups (mobile AR and desktop AR) and traditional navigation on surgeon attention shift for the specific task of craniotomy planning. Based on their findings, Léger et al. 30 further developed the concept of an AR mobile system into a complete system, called MARIN, that can use a mobile device to perform real-time AR video augmentation for image-guided neurosurgery (IGNS), as shown in Figure 4(b). The MARIN system includes a mobile device, a desktop computer, a tracking system, and a wireless router. A complete list of compatible hardware is included in Léger’s article on MARIN. MARIN uses the Intraoperative Brain Imaging System platform, which is extended for image augmentation onto a mobile device using OpenIGTLink. To evaluate the performance of MARIN, a phantom study was conducted to localize pre-defined targets. MARIN significantly improved the time taken to reach the target compared with standard IGNS guidance. Most of the subjects (94%) preferred MARIN over standard IGNS. While the results obtained are favorable, senior neurosurgeons have differing opinions on how they would like to use MARIN in the operating room. Such differences might need to be considered in future designs of MARIN.

AR used for laparoscopic surgery

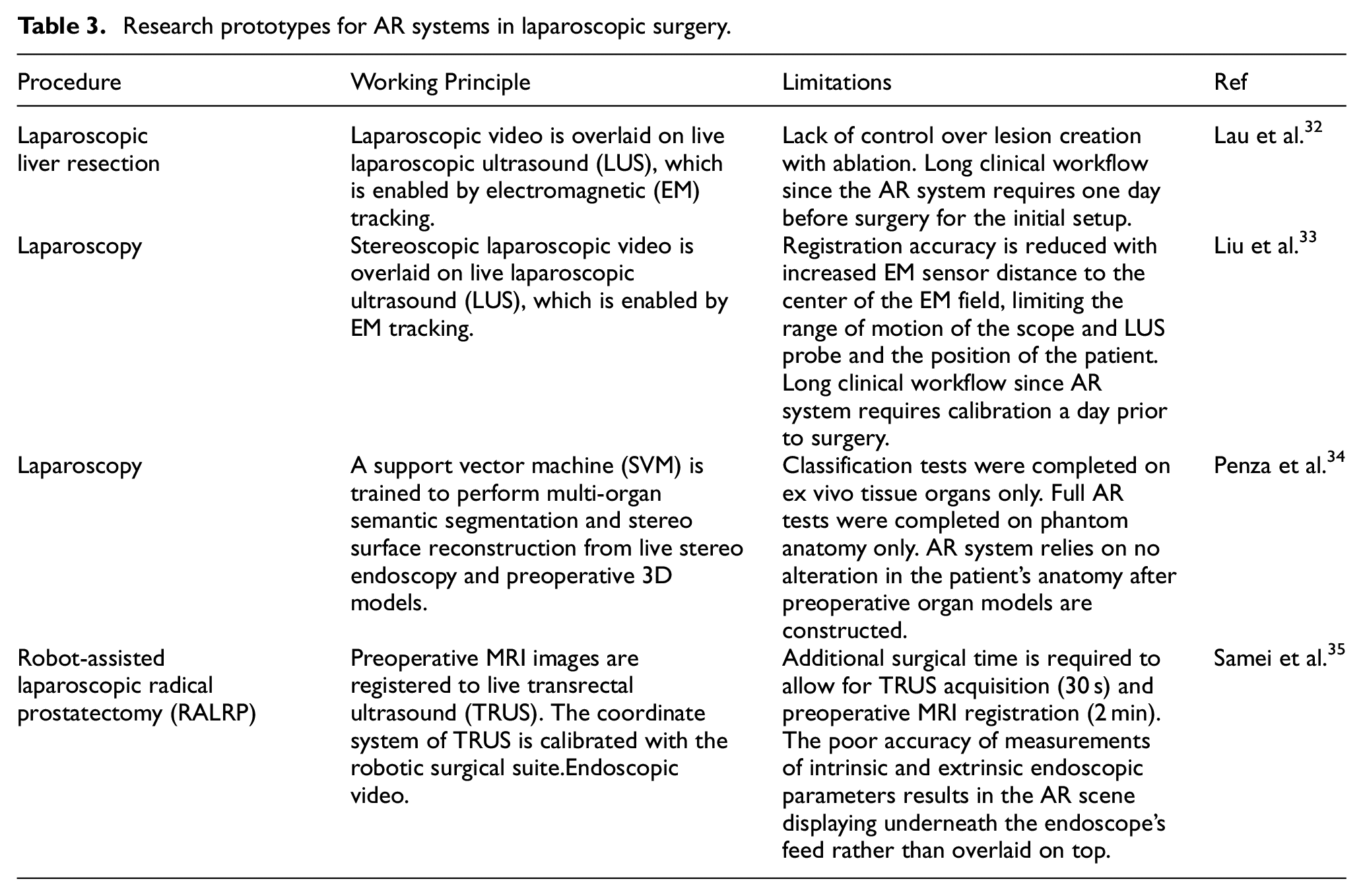

Laparoscopy has been applied to many surgical procedures recently. Applying AR systems to laparoscopy-related surgery can help overcome the limitations faced by minimally invasive procedures. Such limitations include reduced tactile feedback and maneuverability, limited field of view (FOV), and poor depth perception. The implementation of AR through various imaging and tracking techniques has been investigated by researchers for laparoscopy-based surgeries (Table 3).

Table 3. Research prototypes for AR systems in laparoscopic surgery.

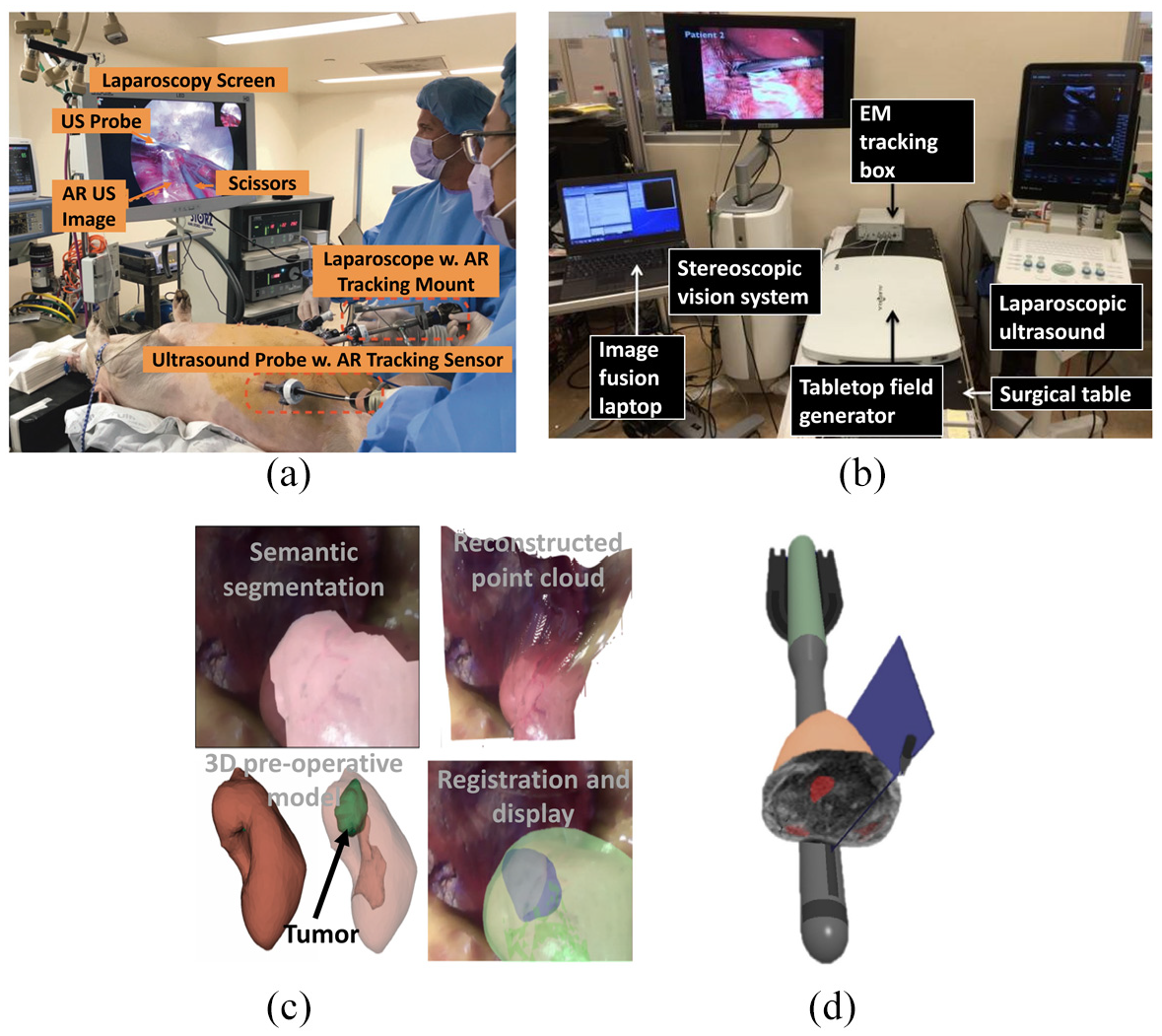

Lau et al. 32 designed and developed an AR system for laparoscopic liver resection that displays laparoscopic video (Image 1 Hub; KARL STORZ, Tuttlingen, Germany) overlaid with real-time laparoscopic ultrasound (LUS) images intra-operatively by implementing an electromagnetic (EM) tracking system. The EM tracking system consists of a tabletop EM field generator (Aurora; Northern Digital, Waterloo, Ontario, Canada) and EM sensors that are mounted on the laparoscope handle and the LUS imaging tip (4-Way Laparoscopic 8666-RF; BK Ultrasound, Peabody, Massachusetts, USA). A geometric relationship was created between the two imaging probes and the EM generator through calibration. The LUS was calibrated using the PLUS library 1 day prior to experimentation to create a coordinate transformation between the EM sensor’s 3D coordinate system and the image plane of the LUS. This generated LUS image positional coordinates within the AR system, allowing for image presentation within the EM tracking space. The laparoscope was calibrated immediately prior to experimentation by determining its intrinsic parameters and the coordinate transformation between the laparoscopic camera lens and the EM sensor’s coordinate system. The real-time LUS image was then overlaid upon the laparoscopic video image by the AR system as shown in Figure 5(a) along with the full operative setup. This was achieved by mapping the geometric 3D spatial coordinates of the LUS imaging tip to the 2D image of the laparoscopic video image. To compare successful surgical times between the AR guidance system and traditional laparoscopy, two porcine livers with previously created radiofrequency ablation (RFA) lesions were resected. Both resections were successful, with a 2.5 cm diameter lesion being resected in 7 min under AR guidance, and a 1 cm diameter lesion being resected in 3 min under traditional laparoscopy.
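Mapping a tracked 3D point into the 2D laparoscopic image can be illustrated with a rigid transform followed by a pinhole projection. This is a sketch under stated assumptions, not the system's implementation: the function names, the tracker-to-camera transform, and the intrinsic parameters (`fx`, `fy`, `cx`, `cy`) are hypothetical, and lens distortion is ignored.

```python
def apply_rigid_transform(matrix, point):
    """Apply a 4x4 homogeneous transform (nested lists) to a 3D point."""
    x, y, z = point
    return tuple(r[0] * x + r[1] * y + r[2] * z + r[3] for r in matrix[:3])


def project_pinhole(point_camera, fx, fy, cx, cy):
    """Project a 3D point in camera coordinates (z forward) to pixels."""
    x, y, z = point_camera
    if z <= 0:
        raise ValueError("point must lie in front of the camera")
    return (fx * x / z + cx, fy * y / z + cy)


# Hypothetical transform from the EM tracker frame to the camera frame:
# identity rotation and a translation of (10, -5, 0) mm.
camera_from_tracker = [
    [1.0, 0.0, 0.0, 10.0],
    [0.0, 1.0, 0.0, -5.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]
lus_point_tracker = (0.0, 5.0, 100.0)  # a point on the LUS image plane, in mm

point_camera = apply_rigid_transform(camera_from_tracker, lus_point_tracker)
pixel = project_pinhole(point_camera, fx=800.0, fy=800.0, cx=320.0, cy=240.0)
```

In a calibrated system the single transform here would be a chain (ultrasound image to probe sensor, sensor to tracker, tracker to scope sensor, scope sensor to camera lens), with each link obtained from the calibration steps described above.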

Figure 5. AR for laparoscopic surgery. (a) Laparoscopic video and LUS operational setup 32 . (b) Stereoscopic and LUS AR setup with EM tracking 33 . (c) Context-aware AR scenes of the kidney (left to right, top to bottom): semantic segmentation, point cloud reconstruction, 3D model, and final scene 34 . (d) AR scene with 3D rendered TRUS probe and instruments, TRUS plane orientation (blue), and prostate and MRI volume slice 35 .

Lui et al. 33 similarly developed an AR system for laparoscopic surgeries, implementing tabletop EM tracking (Aurora; Northern Digital, Waterloo, Ontario, Canada) for laparoscopic video overlaid intra-operatively with real-time LUS images (Flex Focus 700, BK Medical, Herlev, Denmark). In this study, however, the laparoscope was stereoscopic (VSII; Visionsense Corp., New York, USA), producing a 3D video with improved depth perception (Figure 5(b)). Two EM sensors were attached to the imaging probes in similar positions compared to Lau et al.’s 32 design, and the LUS was calibrated with a similar method to Lau et al.’s 32 method. Prior to laparoscopic calibration, the LUS image was rendered using a virtual camera mimicking the optics of the laparoscope. Both the left and right scope cameras were calibrated to the scope handle EM sensor separately using hand-eye calibration. This related the scope lens’ coordinates to the EM sensor’s coordinates, creating a transformation between them. A 3D AR scene was created by registering and overlaying the LUS image onto both the left and right video streams of the scope, which were then rendered to create the 3D display. The target registration error (TRE) was measured during element calibration and image registration to determine their respective accuracies. The LUS calibration and left and right scope calibration TREs were 1.10 ± 0.23 mm, 1.27 ± 0.25 mm, and 1.04 ± 0.23 mm, respectively, and the left and right image registration TREs were 2.59 ± 0.58 mm and 2.43 ± 0.48 mm, respectively. The system latency was also measured to establish any time delay between the display of the full AR system and the stereoscope alone. The measured latency of the full AR system and the stereoscope alone were 177 ± 12 ms and 119 ± 12 ms, respectively, showing a 58 ms time delay. The system was then utilized in an animal study and shown to visualize the abdominal organs of a swine undergoing laparoscopy successfully.
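TRE is commonly computed as the Euclidean distance between each target point after registration and its reference position, then summarized as mean ± standard deviation. The sketch below assumes that definition and uses the sample standard deviation; the study's exact protocol is not given in this review, and the points are hypothetical.

```python
import math
import statistics


def target_registration_errors(registered_points, reference_points):
    """Return the Euclidean distance for each registered/reference pair."""
    if len(registered_points) != len(reference_points):
        raise ValueError("point lists must have the same length")
    return [math.dist(p, q) for p, q in zip(registered_points, reference_points)]


# Hypothetical target positions in millimetres (not data from the study).
reference = [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0), (0.0, 0.0, 0.0)]
registered = [(1.0, 0.0, 0.0), (0.0, 2.0, 0.0), (0.0, 0.0, 3.0)]

errors = target_registration_errors(registered, reference)
tre_mean = statistics.mean(errors)
tre_sd = statistics.stdev(errors)  # sample SD; an assumption, see lead-in
```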

Penza et al. 34 proposed a context-aware AR system for laparoscopic surgeries, implementing multi-organ semantic segmentation and stereo surface reconstruction from intra-operative endoscopic (ENF-VH; Olympus, Shinjuku, Tokyo, Japan) images with preoperative 3D organ models (Figure 5(c); bottom left). Multi-organ semantic segmentation (Figure 5(c); top left) created tissue segmentation masks from the left eye of the intra-operative endoscopic video by Superpixel segmentation, Superpixel-based tissue classification, and confident Superpixel-merging. Superpixel segmentation, achieved with linear spectral clustering, provided consistent over-segmentation by extracting homogenous areas of tissue from the endoscopic image with well-adhered organ boundaries. Superpixel classification was achieved with support vector machines (SVM) and the Gaussian kernel and implemented with scikit-learn. For this, Superpixel texture-based information was extracted and encoded into a uniform rotational binary pattern, and the computation of the mean intensity value inside the Superpixel was incorporated. A measure of confidence was introduced and merged with morphology closing to obtain the multi-organ semantic segmentation masks. Dense tissue reconstruction was then performed using an algorithm to create a robust disparity map of 3D tissue surfaces from the stereo endoscopic images (Figure 5(c); top right). Stereo-pair correspondences were calculated by block-matching that utilized a modified Census Transform and Hamming Distance, and they were refined for improved point cloud density. To identify homogenous areas of similar depth, Superpixel was exploited and plane-fitted, and the disparity values of its contours were considered Dirichlet boundary conditions allowing for the smoothing of the disparity map by the Gauss-Seidel method. 
To build the context-aware AR scene, multi-organ semantic segmentation extracted point cloud portions correlating to the segmented tissue from the 3D reconstructed tissue surface, which were registered to the associated tissues from a preoperative 3D anatomical model, which in turn were projected onto the intra-operative endoscopic stereo image via perspective projection (Figure 5(c); bottom right). To evaluate the Superpixel-based tissue classification, 36 endoscopic images were taken of the ex vivo abdominal organs (kidney, liver, fat, and spleen) of three pigs. Nine images of each organ were taken at different poses, established by varying the tissue-endoscope angles (30°, 60°, and 90°) and distances (4, 5.5, and 7 cm). The SVM was trained on images from two of the pigs with a chosen dataset of 300 Superpixels and tested on images from the third. A full context-aware AR test was also completed on abdominal organ phantoms to evaluate the multi-organ semantic segmentation error and AR overlap error in terms of Dice similarity coefficients (DSC). The semantic segmentation error DSCs for the organs were found to be 0.86, 0.83, 0.95, and 0.82 for the kidney, spleen, liver, and fat, respectively, and AR overlap error DSCs were found to be 0.80 and 0.73 for the kidney and spleen, respectively.
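The Dice similarity coefficient measures the overlap of two binary masks as twice the size of their intersection divided by the sum of their sizes, so 1 means perfect overlap and 0 means none. A minimal sketch with hypothetical masks follows; treating two empty masks as a perfect match is a convention assumed here, not something stated in the study.

```python
def dice_coefficient(mask_a, mask_b):
    """Return the Dice similarity coefficient of two binary 2D masks."""
    a = [bool(v) for row in mask_a for v in row]
    b = [bool(v) for row in mask_b for v in row]
    if len(a) != len(b):
        raise ValueError("masks must have the same number of pixels")
    intersection = sum(1 for x, y in zip(a, b) if x and y)
    total = sum(a) + sum(b)
    if total == 0:
        return 1.0  # assumed convention: two empty masks agree perfectly
    return 2.0 * intersection / total


# Hypothetical 2x3 masks: a predicted segmentation and a reference one.
predicted = [[1, 1, 0], [0, 1, 0]]
reference = [[1, 0, 0], [0, 1, 1]]
dsc = dice_coefficient(predicted, reference)
```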

Samei et al. 35 proposed a partial AR system to guide robot-assisted laparoscopic radical prostatectomy (RALRP) by registering preoperative MRI images of the prostate to intra-operative transrectal ultrasound (TRUS). A robotic TRUS system consisting of an ultrasound machine (BK 3500; BK Medical, Herlev, Denmark) with its TRUS probe mounted on a 2 DOF biplane transducer was implemented to capture live 3D TRUS images of the prostate. To track and guide the surgical equipment, the TRUS coordinate system was manually calibrated to the da Vinci surgical suite (S-model, Si-model; Intuitive Surgical, Sunnyvale, California, United States) coordinate system. To allow for changes in the prostate’s position and shape between preoperative MRI and surgery, the MRI image was non-rigidly registered to the TRUS using finite-element models and tissue elasticity values of the prostate and surrounding tissues. Registration involved the segmentation of a 3D TRUS volume at the beginning of surgery, which the MRI prostate surface was non-rigidly registered upon, with any changes in prostate shape or position being manually adjusted by the surgeon. Fast, real-time rendering of the deformed MRI for projection was synthesized in 2D slices by a finite-element algorithm. The 3D scene created consisted of a 2D MRI slice projected onto the prostate axial to the surgical instrument, the 3D surface mesh of the prostate, a 2D representation of the TRUS plane for image location, and 3D virtual models of the TRUS probe, surgical instruments, and endoscope (Figure 5(d)). A phantom study was performed on a fabricated phantom prostate and its surrounding tissue to measure a combined error in MRI-TRUS registration and TRUS-da Vinci calibration, which averaged at 3.19 ± 1.30 mm. The AR system was then successfully implemented during twelve RALRP in vivo clinical procedures, and the MRI-TRUS registration and TRUS-da Vinci calibration errors were measured in eight patients. 
The average registration error and calibration error were 2.1 ± 0.8 mm and 1.4 ± 0.3 mm, respectively, with a combined error of 3.5 mm.

AR used for interventional procedures

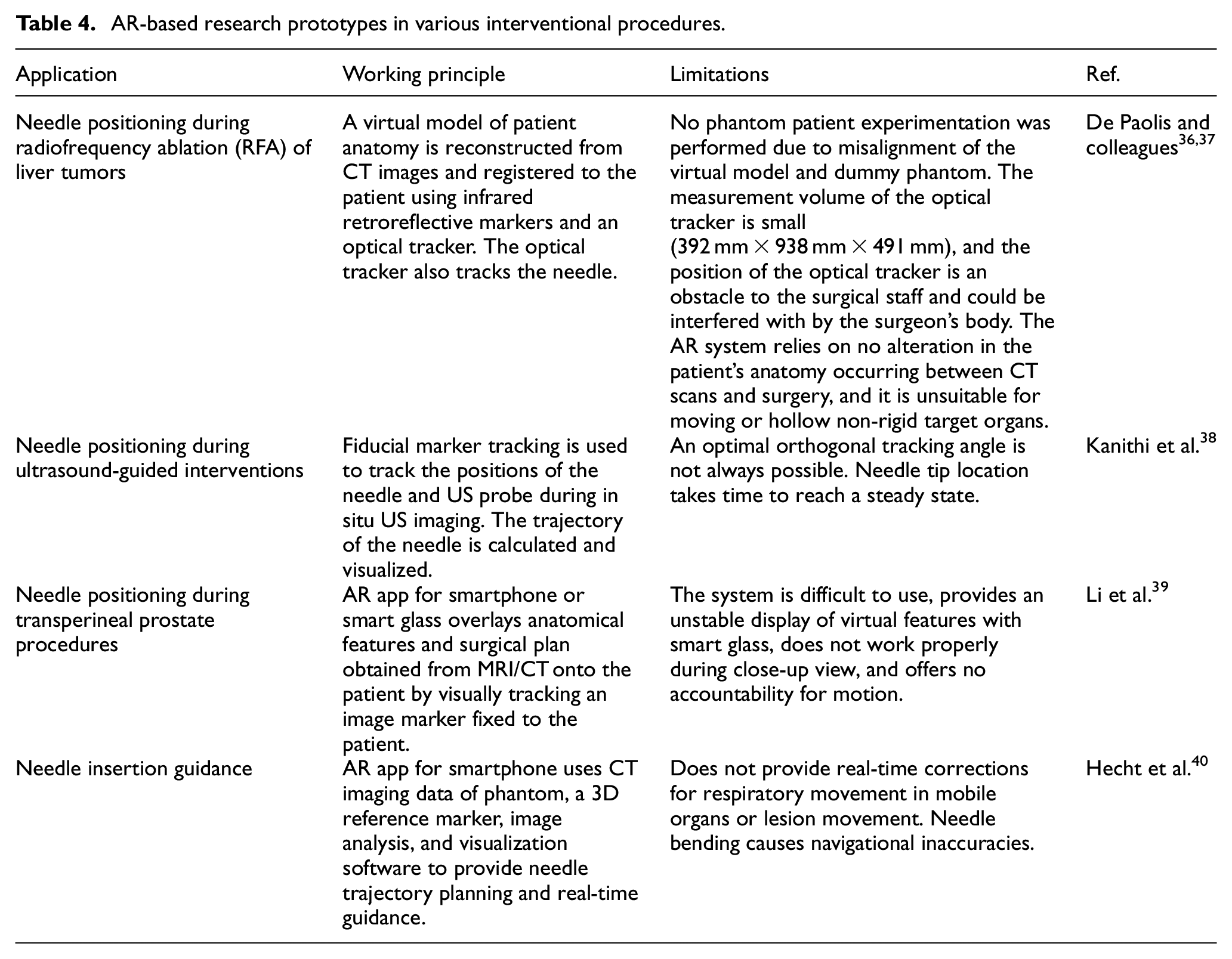

Besides the applications of AR technology in specific surgical procedures, researchers have also reported AR-based systems that can be applied in various interventional procedures, such as needle insertion. This part of the review will discuss the technical details and applications behind these systems, along with their benefits and limitations. A summary of the review in this section is provided in Table 4.

Table 4. AR-based research prototypes in various interventional procedures.

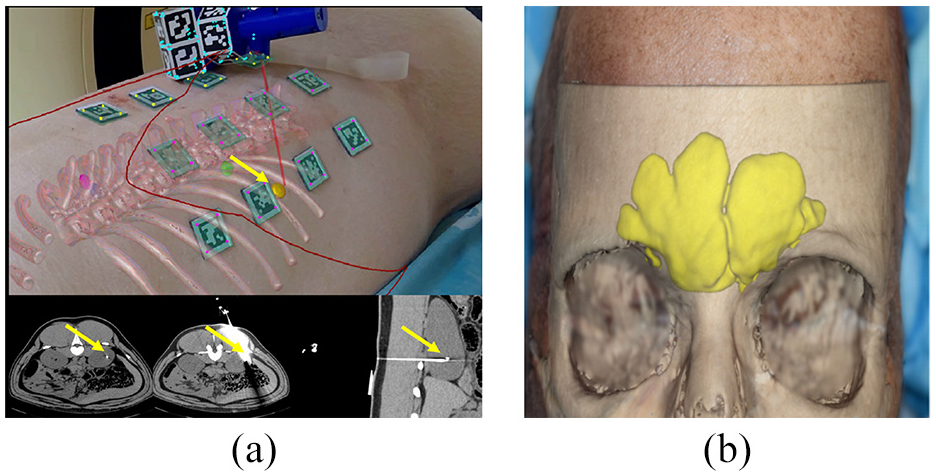

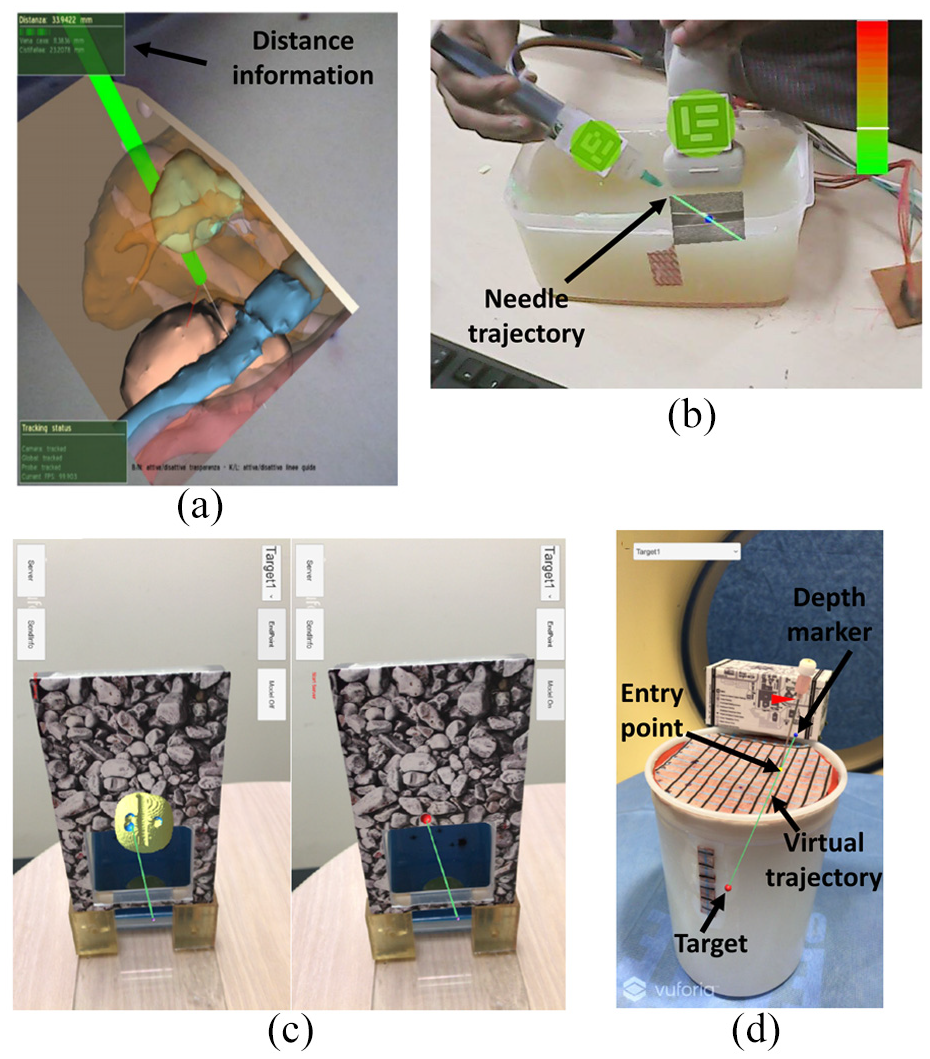

De Paolis and Ricciardi 36 and De Paolis and De Luca 37 both reported on an investigation into the use of an AR system to improve needle insertion accuracy for RFA of liver tumors. The technique implemented a reconstructive 3D model of a patient’s anatomy built from preoperative axial, coronal, and sagittal CT images traditionally used in the planning phase of surgery. To create the AR scene, the anatomy of the 3D virtual model had to be registered to that of the patient. This was achieved by defining fiducial points on the patient using radiopaque stickers prior to CT scanning and creating two corresponding coordinate reference systems: one of the patient and one of the CT reconstructed model. During the registration phase, the Horn algorithm computed a rigid transformation matrix between the two coordinate systems, and the real fiducial point positions were measured with a calibrated tracker probe to pair with their represented virtual points. The Polaris Vicra optical tracker (Northern Digital Inc., Waterloo, Ontario, Canada) with two infrared cameras was implemented to visually overlay the virtual model over the real patient anatomy in the AR scene as well as provide a system for tracking the RFA surgical tool once inside the patient. An augmented RFA tool was created by equipping the real tool with reflective spheres. To achieve realistic placement of the virtual model within the real patient, the augmented scene was occluded with a virtual window to highlight the region of interest and provide depth perception to the scene (Figure 6(a)). To provide distance and guidance information of the augmented RFA tool within the AR scene, several visual and audio cues were created to inform the surgeon of the tool’s proximity to the target organ and the tool’s current and target trajectories. The image-guided surgical toolkit (IGSTK) library was used to implement visualization and image processing within the tracker’s measurement volume. 
The application uncertainty of the AR system was tested before and after 30 min of training on a wooden box containing parallelepipeds with an aligned virtual model. The mean application uncertainties were 0.75 mm before and 0.64 mm after 30 min of training, whereas traditional guidance without AR averaged at 3 mm. A preliminary quantitative test was also completed during an open surgery on surface liver tumors to test operating room suitability and application uncertainty. The surgeon utilized recognizable anatomical landmarks less likely to cause deformation for the fiducial points during registration and confirmed the virtual anatomy correctly overlapped that of the real patient.
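The rigid transformation between paired fiducials can be illustrated with Horn's closed-form solution based on unit quaternions. The review names the Horn algorithm but not the formulation, so the sketch below assumes the quaternion version, without scaling; it relies on NumPy, and the function name and fiducial coordinates are hypothetical.

```python
import numpy as np


def horn_rigid_registration(source, target):
    """Estimate R, t such that target ≈ R @ source + t (least squares)."""
    source = np.asarray(source, dtype=float)
    target = np.asarray(target, dtype=float)
    if source.shape != target.shape or source.ndim != 2 or source.shape[1] != 3:
        raise ValueError("expected two (N, 3) arrays of paired points")
    if len(source) < 3:
        raise ValueError("at least three point pairs are required")

    src_centroid = source.mean(axis=0)
    tgt_centroid = target.mean(axis=0)
    # Cross-covariance: S[a, b] = sum over points of source_a * target_b.
    S = (source - src_centroid).T @ (target - tgt_centroid)
    Sxx, Sxy, Sxz = S[0]
    Syx, Syy, Syz = S[1]
    Szx, Szy, Szz = S[2]

    # Symmetric 4x4 matrix whose dominant eigenvector is the unit quaternion.
    N = np.array([
        [Sxx + Syy + Szz, Syz - Szy, Szx - Sxz, Sxy - Syx],
        [Syz - Szy, Sxx - Syy - Szz, Sxy + Syx, Szx + Sxz],
        [Szx - Sxz, Sxy + Syx, -Sxx + Syy - Szz, Syz + Szy],
        [Sxy - Syx, Szx + Sxz, Syz + Szy, -Sxx - Syy + Szz],
    ])
    _, eigenvectors = np.linalg.eigh(N)  # eigenvalues come back ascending
    w, x, y, z = eigenvectors[:, -1]

    R = np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ])
    t = tgt_centroid - R @ src_centroid
    return R, t


# Hypothetical fiducials in the CT model frame (mm) and the same fiducials
# measured on the patient after a 90 degree rotation about z plus a shift.
true_R = np.array([[0.0, -1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]])
true_t = np.array([5.0, -2.0, 10.0])
model_points = np.array([[0, 0, 0], [10, 0, 0], [0, 10, 0], [0, 0, 10]], float)
patient_points = model_points @ true_R.T + true_t

R_est, t_est = horn_rigid_registration(model_points, patient_points)
```

With noisy fiducial measurements the recovered transform is the least-squares fit, and the residual distances at the fiducials give a first check of registration quality.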

Kanithi et al. proposed the use of an immersive AR system to assist needle placement during US-guided interventions 38 (Figure 6(b)). The AR system is based on fiducial marker tracking, US imaging, and visualization of probe trajectory. The US probe and needle syringe were labeled with fiducial markers so that, in a captured frame, the position of the needle tip could be predicted through a transformation function derived from the fiducial markers. The in situ visualization of US images and needle trajectory was achieved by obtaining the physical dimensions of the markers and their locations on the US probe. These dimensions and locations were used to find the registration area for the US video. Evaluation by Kanithi et al. found that at a viewing angle of 90° the error was close to zero and gradually increased as the viewing angle varied. The distribution of error for needle tip localization accuracy with and without moving average filter was 1.53 and 1.57 pixels, respectively. In addition, the accuracy of needle trajectory was verified by measuring the perpendicular distance between the actual target location and predicted trajectory. The average deviation was 3.27 mm, the standard deviation was 2.95 mm, and the range was 0.9–9.6 mm.
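The perpendicular distance between a target and a predicted needle trajectory can be computed by treating the trajectory as an infinite 3D line. The following is a minimal sketch of that geometry, not the authors' evaluation code; the function name and coordinates are hypothetical.

```python
import math


def distance_point_to_line(point, line_origin, line_direction):
    """Return the perpendicular distance from a 3D point to a 3D line."""
    dx, dy, dz = line_direction
    norm = math.sqrt(dx * dx + dy * dy + dz * dz)
    if norm == 0.0:
        raise ValueError("line direction must be non-zero")
    # Vector from a point on the line to the query point.
    vx = point[0] - line_origin[0]
    vy = point[1] - line_origin[1]
    vz = point[2] - line_origin[2]
    # |v x d| / |d| is the length of the component of v perpendicular to d.
    cx = vy * dz - vz * dy
    cy = vz * dx - vx * dz
    cz = vx * dy - vy * dx
    return math.sqrt(cx * cx + cy * cy + cz * cz) / norm


# Hypothetical values in millimetres: a trajectory along the z axis through
# the origin, and a target that sits 3 mm and 4 mm off-axis in x and y.
deviation = distance_point_to_line(
    point=(3.0, 4.0, 50.0),
    line_origin=(0.0, 0.0, 0.0),
    line_direction=(0.0, 0.0, 2.0),
)
```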

Figure 6. AR technology for interventional procedures. (a) An occluded virtual window of the abdomen with surgical instrument distance information 37. (b) Projected AR view and virtual needle trajectory 38. (c) AR app interface. Left: lesion target overlaid on the prostate phantom. Right: planned needle trajectory 39. (d) Smartphone screen with intentionally off-axis needle placement. Green line: virtual needle trajectory. Red dot: target lesion. Yellow dot: entry point. Navy dot: depth marker. Proper insertion depth is achieved when the needle hub base (arrowhead) coincides with the virtual depth marker (navy dot) 40.

Li et al. 39 proposed an AR system that uses smart see-through glasses or a smartphone to guide needle placement in transperineal prostate procedures (Figure 6(c)). The system consists of image analysis and visualization software for CT/MRI images, AR hardware devices, a newly developed AR app, and a local network. Anatomical features and the surgical plan are overlaid onto the patient through the app by visually tracking a fixed image marker. The target lesion, other anatomical information, and the surgical plan are defined in the MRI/CT images and registered to the patient using the fiducial markers. By visually tracking the image marker, the AR device can display this information in real time. The AR system also allows the operator to select a pre-defined target, describe the needle plan, and view a 3D model to check the surrounding anatomy. A multi-modality interventional prostate phantom was used to evaluate the AR system. The results showed that the angular difference between the actual needle and the virtual needle was 0.58° ± 0.43° for the smartphone and 1.62° ± 1.52° for the glasses over 10 trials.
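
As a rough illustration of the angular metric reported above, the angle between the actual and planned needle axes can be computed from their direction vectors and then summarized as mean ± standard deviation over trials. This sketch makes several assumptions that are not stated in the source: each needle is represented by a 3D direction vector, the sample standard deviation (`ddof=1`) is used, and the names `angular_difference_deg` and `mean_and_std` are hypothetical.

```python
import numpy as np

def angular_difference_deg(actual_dir, planned_dir):
    """Angle in degrees between two needle direction vectors.

    Uses atan2(|a x b|, a . b), which stays accurate for the small
    angles typical of needle guidance, unlike a plain arccos.
    """
    a = np.asarray(actual_dir, dtype=float)
    b = np.asarray(planned_dir, dtype=float)
    cross_norm = np.linalg.norm(np.cross(a, b))
    return float(np.degrees(np.arctan2(cross_norm, np.dot(a, b))))

def mean_and_std(values):
    """Mean and sample standard deviation (ddof=1 is an assumption) of per-trial values."""
    values = np.asarray(values, dtype=float)
    return float(values.mean()), float(values.std(ddof=1))

# Hypothetical trial: planned axis along +z, actual needle tilted slightly toward +y.
planned = [0.0, 0.0, 1.0]
actual = [0.0, 0.02, 1.0]
print(angular_difference_deg(actual, planned))
```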

Hecht et al. 40 reported a smartphone-based AR system for needle trajectory planning and real-time guidance (Figure 6(d)). CT imaging data from a phantom were used for procedural training of the AR system. The system also included a 3D reference marker and image analysis and visualization software. The AR app was developed with Unity 2018.1 and the Vuforia SDK. Registration between the different coordinate systems was achieved by manually identifying fiducial markers and performing rigid point-to-point registration. While the app was running, the desired target could be selected from the pre-defined targets on the smartphone screen. Two phantom experiments were conducted to evaluate the system. In the first, the mean needle insertion error was 2.69 ± 2.61 mm, which was 78% lower than with the CT-guided freehand procedure. In the second, operators navigated the needle tip to within 5 mm of the target on every first attempt under AR guidance, which eliminated the need for further needle adjustments. Furthermore, the average procedural time was 4.5 ± 1.3 min, which was 66% lower than with the CT-guided freehand procedure.

Discussion

By combining AR technology with image-guided interventions, surgeons are able to see hidden organs and complex surroundings without performing invasive open surgery. This could significantly improve the success rate and efficiency of image-guided interventions. However, there are still some challenges in translating current technologies into clinical practice.

Wearable see-through glasses, such as HoloLens and Magic Leap, are used in many AR-based interventional cases. One drawback of this approach is that it tends to be inaccurate because the calibration of the eye perspective is user-specific. The calibration procedure is not easy, and the left and right eyes may need separate calibration. During an interventional or surgical procedure, even a small movement of the glasses relative to the head could change the registration completely, which may lead to large errors in the final interventional result. In addition, the FOV of see-through glasses is very limited, so the operator may not be able to respond quickly to an emergency outside the FOV. Furthermore, the accuracy and usability of this approach depend on the design of the glasses, since the built-in translational and rotational tracking combines accelerometer and gyroscope information. For these reasons, wearable see-through glasses are mainly used for surgical assistance (with voice and gesture control) rather than for navigation or guidance. This can limit the use of AR in image-guided therapy to tasks such as displaying pre-operative MR images during an ultrasound-guided needle biopsy.

The accuracy of AR-based intervention is highly dependent on the registration accuracy between the AR images and the surgical field. Inaccurate registration can mislead or confuse surgeons and put patients at risk. Since AR images are based on pre-acquired medical images, organ motion and tissue deformation are the major factors causing inaccurate registration in AR-based interventions. 12,41,42 To improve registration accuracy, many methods have been reported to compensate for organ motion and tissue deformation. One research direction is to develop advanced registration methods or mathematical models for compensation during AR reconstruction. For instance, Kong et al. 43 reported an automated AR registration process based on finite element modeling, which is coupled with optical imaging of fluorescent surface fiducials; Ng et al. 44 developed a Gaussian distribution and Tukey weight algorithm to reduce elastic deformation error; and Tonutti et al. 45 derived a patient-specific deformation model for brain pathologies by combining the results of pre-computed finite element method simulations with machine learning algorithms. However, these algorithms and models are difficult to implement widely since they are case-specific and their parameters need to be optimized for each situation. Another research direction is to achieve real-time reconstruction with intraoperative US, CT, or MRI. Real-time MRI could be a challenge to the surgical team in some situations, and real-time CT scanning could increase radiation exposure, so neither is an ideal method. Although US is better suited than the other modalities, it is still questionable whether all organs can be reconstructed from US as they can from CT. 12

The amount of time required for the preparation stage is another challenge to the clinical application of AR-based interventions. Although the registration time and operating room preparation time are acceptable, it takes a skilled surgeon about 3–4 h to fully set up AR image navigation. 12 This makes the whole intervention time-consuming. In future research, developing software that shortens the preparation time will be key to allowing AR-based interventions to be widely adopted.

Cost efficiency is also a challenge in the development of AR-based interventions. AR is a new technology that is not part of standard clinical training, so adopting it involves a learning curve and an investment of human resources. This will increase costs compared with the current situation. Reducing the learning curve and the human resources required should be one of the future research directions in this field. In addition, making AR-based intervention devices compatible with current clinical equipment would help to reduce costs. 41

Furthermore, clinical regulatory issues are major challenges for AR-based interventional devices. New medical devices must undergo strict evaluations from clinical regulatory agencies, such as the U.S. Food and Drug Administration (FDA) and European Medicines Agency (EMA), before they can be applied clinically.

Conclusion

In conclusion, image-guided interventions have become increasingly popular in recent years. AR technology is applied during image-guided interventions to help surgeons observe hidden organs and their complex surroundings and to further improve interventional accuracy. From 2015 to 2020, many papers were published on commercial AR products and AR research prototypes for image-guided interventions. These papers were analyzed, and ultimately 16 were selected for review in this article. The engineering characteristics of the AR technologies in the 16 selected papers were summarized according to the interventional procedure. Although many applications of AR technology in image-guided interventions have been reported, there are still some challenges to overcome before AR technology can be widely applied in clinical practice, such as registration, organ deformation, preparation time, cost, and clinical regulation.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is partially supported by the Royal Society Wolfson Fellowship. This study was supported in part by the National Institutes of Health (NIH) Bench-to-Bedside Award, the NIH Center for Interventional Oncology Grant, the National Science Foundation (NSF) I-Corps Team Grant (1617340), NSF REU site program 1359095, the UGA-AU Inter-Institutional Seed Funding, the American Society for Quality Dr. Richard J. Schlesinger Grant, the PHS Grant UL1TR000454 from the Clinical and Translational Science Award Program, and the NIH National Center for Advancing Translational Sciences.