Abstract

This article examines how the roles of policy actors shape preferences for the regulation of artificial intelligence (AI). We distinguish between generalists, active across multiple policy sectors, and specialists, whose engagement is concentrated mostly in a single sector. We expect that generalists tend to support horizontal, cross-sectoral AI regulation, whereas specialists favor sector-specific, vertical approaches. Our analysis draws on original elite survey data comprising over 190 respondents from France, Germany, and Switzerland, including organizations and individuals engaged in AI governance across banking, health, and social welfare subsystems, as well as actors in the emerging AI policy sector. Using Bayesian regression models, we find indicative evidence that generalist actors—such as public interest groups, or trade unions—are more likely to endorse encompassing regulation, while specialists—such as occupational interest groups or public administrations—are more inclined toward sectoral regulation. This divergence becomes particularly pronounced when actors evaluate whether their own policy field should be integrated into a broader AI regulatory framework: policy fields that have many specialist actors, such as health policy, show markedly lower support for such integration. These findings contribute to public governance scholarship by clarifying how institutional roles influence regulatory preferences in the context of complex, cross-cutting policy challenges. The study contributes to our understanding of actor roles in policy subsystems and cross-sectoral policymaking as well as to Digital Era Governance in the public sector.

Introduction

The public policy literature describes the political system as an assembly of policy subsystems, which are communities of actors collaborating or conflicting over specific policy issues, such as healthcare, immigration, taxes, or energy. When new problems arise and enter the political agenda, policymakers face a choice between responding with sector-specific regulations and adopting an encompassing regulatory regime that spans existing policies and organizations of the bureaucracy (6, Perri, 2004; Jochim and Peter 2010; Trein and Maggetti 2020).

Research in public administration and policy has pointed out that coordination between public sector organizations is essential for effective administrative action in general, but extremely difficult to achieve (6, Perri, 2004; Adelle and Russel 2013; Cejudo and Michel 2017; Daly 2005; Jordan and Lenschow 2010; Nilsson et al., 2018; Peters 2015; Underdal 1980). It can be pursued in either a bottom-up (Cejudo and Trein 2023) or a top-down fashion (Jochim and Peter 2010; Varone et al., 2013). Taking a similar perspective, public administration scholars have emphasized that organizational coordination is required to reduce the negative side effects of the administrative fragmentation created by New Public Management (NPM) reforms (6, Perri, 2004; Bouckaert et al. 2010; Christensen and Lægreid 2007; Reiter and Klenk 2019). They have also pointed to the need for administrative holism to implement digital government effectively (Dunleavy et al., 2006; Margetts and Dunleavy 2013).

This theoretical problem is highly relevant for digital government and Artificial Intelligence (AI) specifically, because they cut across different sections of the public sector (Dunleavy et al., 2006; Dunleavy and Margetts 2025; Moon 2023; Wirtz et al. 2020). More generally, the rapid development of AI tools and their deployment in the public and private sectors make it necessary to regulate AI (e.g., by defining rules, guidelines, and standards). The first step toward AI regulation is setting the agenda, and different policy actors, such as interest groups and parliamentarians, play an important role in this process (Baumgartner et al. 2019).

Against this background, we investigate why actors prefer either a sector-specific (vertical) or an encompassing (horizontal) approach to regulating AI. We are well aware that scholarship on AI governance has highlighted that, in an ideal scenario, there should be both general, encompassing regulations and specifications for various AI applications in different areas (Büthe et al., 2022; Krafft et al. 2022). Our goal is not to confirm or disconfirm this normative point. Nevertheless, since we know that public policies mostly change incrementally (Howlett et al. 2020; Weible and Sabatier 2018), it is likely that some initial AI regulation, whether sectoral or encompassing, will influence the subsequent regulatory trajectory.

To answer this question, we make three arguments, based on research from public administration, public policy, and political science. First, we hold that actors with a “generalist role”, such as peak-level business organizations, trade unions, or elected representatives, are more likely to support an encompassing approach to regulating new policy problems. By contrast, we contend that actors with a “specialist role”, such as administrative organizations, scientists, individual firms, and professional organizations, should support more sector-specific regulations. Second, we argue that if actors perceive a governance issue as a significant challenge for their own policy field, they are more inclined to prefer a sectoral rather than an encompassing regulation. Third, we expect that the configuration of actors within a subsystem affects support for a sectoral versus an encompassing approach to AI regulation.

To assess the relevance of these theoretical expectations, we collected data through an original survey on early-stage AI policy. The survey targeted organizations (and some individuals, notably parliamentarians) actively involved in AI governance within three diverse countries: France, Germany, and Switzerland. Furthermore, we focus on three well-established policy subsystems that are affected by AI developments: banking, health, and social welfare. Additionally, we examine actors who could potentially be part of an emerging AI policy sector. AI is a relevant case for investigating actors’ preferences for encompassing or sector-specific regulation, as it is a cross-cutting issue with the potential to affect banking, health, and social welfare policies (as investigated here) as well as various other policy subsystems (e.g., autonomous cars and traffic regulation, algorithms to detect fiscal fraud, facial recognition and predictive policing, search-based chat and education).

Our findings provide some empirical evidence that actors with a generalist role, e.g., unions or public interest groups, tend to support encompassing AI regulations, whilst those with a specialist role, such as occupational interest groups, prefer sectoral regulations. This finding is corroborated when we look at the number of policy sectors in which actors report being active. It is also confirmed when we look at whether generalists or specialists want their own sector to be included in encompassing AI regulations.

Object of study: Preferences for AI regulations

The object of study (or dependent variable) in this paper is actor preferences regarding the scope of AI regulation. The regulation of AI entails rules codified in law, but may also involve the gathering of information or other mechanisms to achieve behavior modification (De Almeida, dos Santos, and Farias 2021, 506–7). There is an emerging literature that deals with how different polities regulate AI, i.e., rules regarding the development and deployment of autonomous intelligent systems (Wirtz et al. 2020, 820). This work reveals that the EU takes a horizontal, risk-based approach that aims to regulate AI across sectors (Ebers 2025; Paul 2024). By contrast, in the US, the federal government pursues a vertical approach with limited regulatory action through Executive Orders that change between administrations, whilst the States often regulate AI with a focus on specific policy fields, such as labor or mobility, or on particular applications (Sloane and Wüllhorst 2025; Weerts 2025). China has several regulations that intend to complement measures to boost innovation with rules to protect national security and social values (Koenig 2025, 133). The goal of this paper is not to deepen the analysis of these regulatory instruments, but to focus on the preferences of the actors affected by these approaches.

Specifically, we are interested in actor preferences regarding the sectoral scope of AI regulation. We want to understand whether policy actors favor sector-specific regulations, such as rules focusing on particular problems (e.g., health care or mobility), as is the case in some US States, or whether they prefer encompassing approaches to regulation, as is the case in the EU. This question touches on an important dimension of public administration research and practice, notably how much “holism” (Christensen and Lægreid 2007; Dunleavy and Margetts 2025; Jochim and Peter 2010) actors prefer when it comes to designing regulations for complex problems, such as AI.

Theoretical background: Why do policy actors prefer different regulations?

To understand why actors’ policy preferences regarding AI regulations differ, we formulate theoretical expectations about the roles of actors, issue importance, and actor configurations at the subsystem level. Notably, we propose a theoretical contribution that distinguishes generalist and specialist roles for policy actors.

Distinction of specialist and generalist roles of policy actors

Our main theoretical argument builds on the assumption that, in relation to their institutional role, policy actors (e.g., interest groups, parliamentarians, public administrations) can be considered to have either a generalist role or a specialist role. The distinction captures whether actors tend to be involved in several policy fields and a large variety of issues or whether they focus more narrowly on one or two policy fields. This theoretical approach does not focus on the actual scope of an actor’s technical knowledge regarding a specific policy field but on the breadth of their engagement, without excluding that actors with a narrower focus probably tend to better understand the issues they focus on.

Elected representatives are vote-, office- and policy-seeking actors (Müller and Strøm 1999). Focusing on the last motivation (i.e., policy advocacy), the seminal study of Searing (1987) about parliamentary roles distinguishes generalists from policy specialists. The latter are Members of Parliament (MPs) who select a few issues and aim to influence policymaking in these specific domains. They gather first-hand knowledge about their topics of specialization by establishing contacts with interest organizations active on the ground (Searing 1987, 439) and rely less (than generalists do) on information-sharing among parliamentary peers and with party leaders (Searing 1987, 441). In a similar vein, previous literature proposes to distinguish generalists as “foxes” (MPs who “know many little things”) and specialists as “hedgehogs” (MPs who “know one big thing”) (Martínez-Cantó, Breunig, and Chaqués-Bonafont 2023, 869). Regarding administrative holism, research suggests that an increase in specialization of MPs on particular policy issues tends to reduce cross-sectoral integration activities (Reber et al. 2023).

For instance, policy specialists aim at being appointed as party representatives in the relevant legislative committees (Andeweg and Thomassen 2011). Having access to this policymaking venue, which reflects the division of tasks within the legislature and, thus, the specialization of elected representatives, is highly relevant to framing policy problems, collecting insider information, designing policy solutions and, eventually, claiming credit for a newly adopted policy (Fenno 1987; Peeters et al. 2021; Saalfeld and Strøm 2014). Accordingly, we expect that elected representatives who deem themselves policy specialists will not support encompassing regulations (e.g., a regulatory approach to AI that integrates many different issues at the same time), since such regulations reduce the relative importance of their own policy expertise and, consequently, their (intraparty) status and prestige and their influence on the policymaking process. Instead, policy specialists will claim that when a new policy issue (i.e., AI) reaches the political agenda, it should be addressed within their own policy community (i.e., through sector-specific regulation) (Lemke et al. 2023).

Besides elected representatives, interest groups (IGs) are the second key group of stakeholders in the policymaking process that this study examines. An interest group aims to defend the material interests of its individual or collective members and/or to promote the ideal cause of the group in the public space and political system. A rich literature has investigated the multiple advocacy strategies that privileged “insider groups” versus less powerful “outsider groups” use to influence policymaking, such as informal lobbying, litigation, direct democracy, or grassroots mobilization (Dür and Mateo 2016; Hall and Deardorff 2006; Maloney et al. 1994). While the resources, venue shopping strategies, and coalition behavior of IGs are well documented across many countries and policy domains (see Varone and Eichenberger 2023 for an overview), the empirical evidence about IGs’ support for encompassing versus sector-specific regulations is scant, if not missing altogether.

Generalist interest groups include “business or employers’ associations”, which bring together business leaders, at the level of firms or a particular economic branch (e.g., banking or health care), or even at the umbrella level of the private economy. Their counterparts are “trade unions”, which represent the interests of employees in companies, at the industry level (e.g., working conditions agreements) and in neo-corporatist negotiations with employers and the State (e.g., pension systems, minimum wage). Finally, “public” IGs have members who support an idealistic cause that goes beyond their own interests and is intended to benefit society (e.g., civil rights, environmental protection, pacifism). Since these groups represent broad, sometimes even diffuse interests, they also have a large policy portfolio and engage in advocacy activities on many issues and in several institutional venues. We expect that both peak-level economic organizations (representing either employers or employees) and public interest groups support general regulation, since they aim at defining policy rules that establish a level playing field for all economic actors and/or address the vital concerns of citizens that cut across policy domains (e.g., the defense of privacy that is challenged by many AI applications).

In sharp contrast, other interest groups are clearly specialized in a specific domain or even a narrow issue. This is notably the case for so-called “occupational” IGs, which represent people who work in the same profession, for instance as bankers or liberal professionals (e.g., doctors, lawyers). This category also includes categorical or identity-based organizations representing the interests of a particular segment of the population (e.g., consumer groups, seniors’ associations, disability associations). Occupational and categorical groups pursue two objectives: the group’s leaders should ensure the survival of their organization and defend public policies that serve the (material) interests of their members (Schmitter and Streeck 1999). We expect that specialized groups support a specific regulatory approach. Indeed, a specialized group that influences a policy domain decisively towards the clearly identifiable preferences of its members can probably maintain or even increase its membership. This not only ensures more financial resources (e.g., through membership fees) but also makes the group more representative and, therefore, more credible with elected representatives and public officials.

Decision-makers need information provided by interest groups (e.g., technical expertise, acceptability of a policy solution, or political intelligence on the positioning of potential allies; see Hall and Deardorff 2006). However, they value it differently, depending upon their own motivations. Elected representatives with a generalist role, who aim at building a political majority to pass a legislative solution, rely on information about its political acceptability as delivered by IGs with a generalist approach. By contrast, public administrations with a policy specialist role engage in exchange relationships with more specialized interest groups, since they want to gather technical policy expertise to elaborate an effective policy solution. If these political exchanges (Bouwen, 2002) occur as postulated here, then they have self-reinforcing effects on the issue (non)specialization of actors, on their respective support for encompassing regulation and, eventually, on the collectively achieved policy outputs.

Finally, we also consider the role of public administrations and scientific experts as important policy stakeholders. We assume that these actors are fundamentally policy specialists. Indeed, the common wisdom is that public administrations are highly fragmented, have narrow policy mandates, and fiercely defend their autonomy and organizational resources. The compartmentalization or “siloification” of ministries and their respective subunits, and the independence of regulatory agencies, are often criticized as main barriers to the design and implementation of general regulations. How to develop coordination between isolated (if not competing) administrative structures is one of the major topics of the literature on public administration and management. Generations of scholars have recurrently highlighted the challenges of bridging organizational divides and achieving “positive and negative coordination” (Scharpf 1997), “joined-up” government (6, Perri, 2004), “substantive policy integration” (Briassoulis 2017) within a “New Governance Arrangement” (Howlett and Rayner 2006), “boundary-spanning policy regimes” (Jochim and Peter 2010) or “functional regulatory spaces” (Varone et al., 2013).

The assumption of specialization is also plausible for scientific experts. Despite the increase in multi-, inter-, and transdisciplinary curricula and research, most academic researchers who are involved in policy debates as independent, freelance experts rather than as “state-certified” policy advisors (Dunlop 2014) remain specialists. Technical policy expertise is the access good they provide when they are invited to a parliamentary hearing, to carry out a scientific study on behalf of a public administration, or to join a governmental taskforce (Eichenberger et al., 2023).

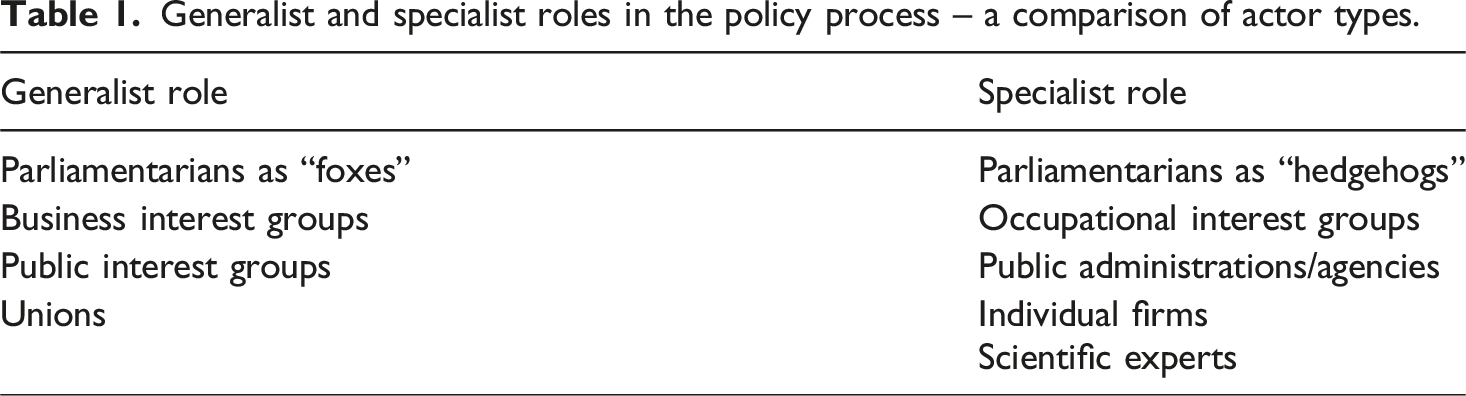

Table 1. Generalist and specialist roles in the policy process: a comparison of actor types.

By contrast, our argument posits that firms, occupational interest groups, public administrations, “hedgehog” parliamentarians (who know a lot about one thing), and scientific experts can be considered policy specialists. This distinction is particularly evident when it comes to firms and occupational interest groups, as they typically concentrate on a specific policy matter. As for public administrations and scientific experts, it is plausible to assume that while they recognize the importance of coordinating various policy issues, they often specialize in more specific domains. Against this background, we propose the following expectation (Expectation 1):

Expectation 1: Policy generalists are more likely to support encompassing AI regulation than policy specialists.

Issue importance for actors’ own subsystem

When decision-makers encounter a new challenge like AI, their preference for an encompassing regulatory approach versus a more sector-specific one is not solely determined by the actor type they represent. The public policy literature indicates that an essential determinant of actors’ policy preferences is how actors perceive the significance of the issue at stake (Baumgartner et al., 2018; Burstein 2003; Trein and Varone 2024). According to the punctuated equilibrium theory (PET), various policy subsystems compete for the scarce political attention of high-level policymakers. In the case of a new issue such as AI, if actors from specific subsystems perceive it as important, it is reasonable to assume that they would advocate for framing any policy solution in a manner that prioritizes addressing the specific concerns they are interested in. For instance, if healthcare actors believe that AI is significant for health policy, they are highly inclined to favor sector-specific regulation for AI over a more general approach. This preference stems from the expectation that sector-specific regulation is more likely to address the challenges posed by AI to the healthcare domain and that the policy goals, instruments and implementation arrangements will remain under their control (i.e., within their “policy monopoly” according to the PET).

This reasoning becomes particularly compelling if actors perceive an urgent need for swift regulation on a policy issue. In such cases, they are likely to rely on heuristics, or cognitive shortcuts, leading them to propose policy solutions aligned with (the policy legacy of) their own policy subsystem. This approach allows for quick implementation of policy solutions based on a familiar framing corresponding to (the shared beliefs of) their own policy subsystem. The literature on policy integration has previously argued that there is a tendency towards sectoralization of policy solutions when addressing emerging problems unless deliberate actions are taken to counteract this trend (Cejudo and Trein 2023). Building upon this context, we put forward the following expectation (Expectation 2):

Expectation 2: The more importance actors attribute to AI for their own policy field, the more likely they are to prefer sectoral rather than encompassing AI regulation.

Actor configuration at the subsystem level

The differentiation between generalist and specialist roles in the policy process introduced above carries implications for support for encompassing regulation across the substantive policy fields examined in this research. Differences between policy issues matter (Baumgartner et al. 2019; Hill and Varone 2021) because they sort the actors involved in the policy subsystems into generalists and specialists. In other words, some policy fields tend to comprise actors that can be considered policy generalists, whereas others tend to comprise policy specialists.

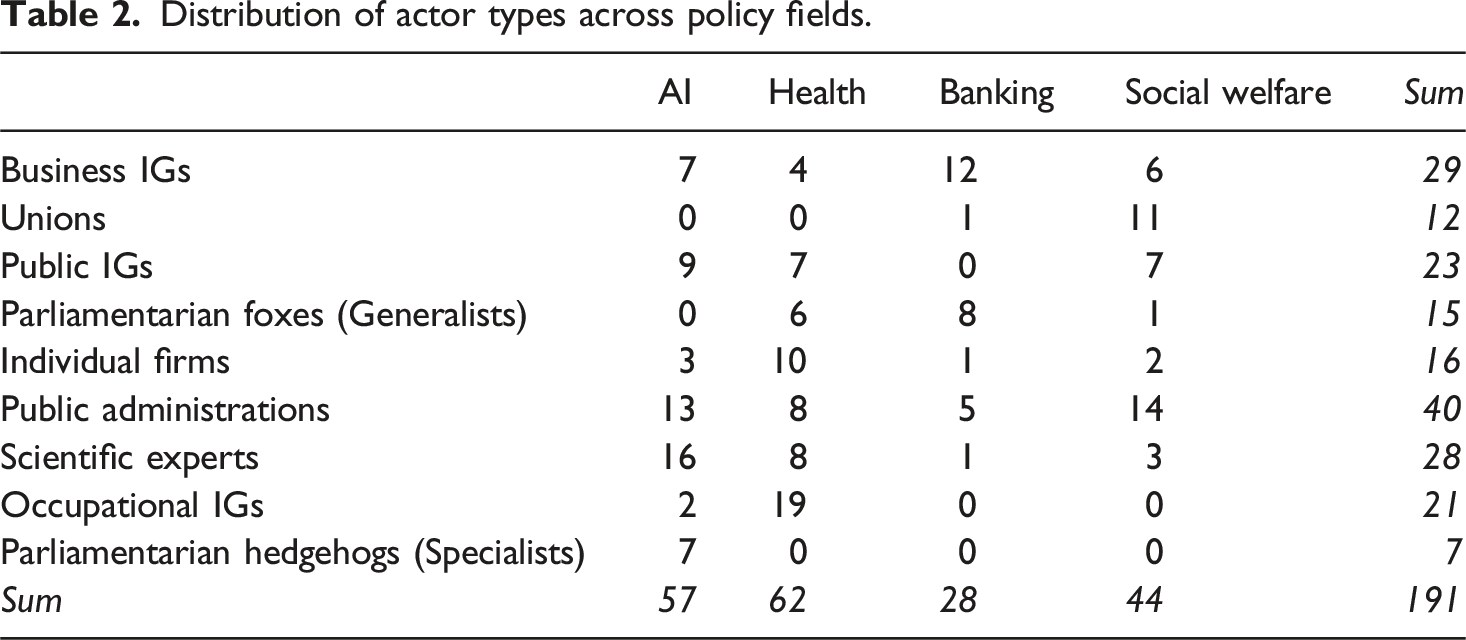

In this study, we compare four policy domains, namely banking, social welfare, health and AI. Based on previous studies about actors’ configurations in these four policy domains, it can be reasonably assumed that the predominant actor types are primarily business groups in the case of banking (Gava 2023), and unions or public interest groups in the case of social policy (Bonoli 2013). Conversely, in the domains of AI and health policy, occupational interest groups, firms, and scientific experts are known to be the more dominant actors (Criado et al. 2024; Trein 2018).

This distinction among actors’ prominence in different policy domains suggests that those involved in banking and social policy would prefer their respective fields to be included in an integrated (encompassing) approach to AI regulation, whereas this inclination does not apply to AI and health policy. In simpler terms, whether actors desire the inclusion of their own policy field in an encompassing AI policy approach is contingent upon the composition of the existing actors’ community. Based on this premise, we formulate the following expectation (Expectation 3):

Expectation 3: Actors in policy fields dominated by generalists (banking and social welfare) are more likely to want their own policy field included in an encompassing AI regulation than actors in fields dominated by specialists (health and AI).

Data and method of analysis

To empirically examine our expectations, we use data from an original online survey of French, German, and Swiss organizations and important individuals (such as parliamentarians) that are politically active in one of three established policy fields (banking and finance, health, and social welfare). Furthermore, our survey also includes actors that we identified as part of a (potential) AI policy subsystem. The three countries represent a diverse set of polities in terms of EU membership (France and Germany are EU members, Switzerland is not) and decentralization at the national level (France is a unitary state with elements of decentralization, Germany is a centralized federation, and Switzerland is a decentralized federation). The comparison of four policy domains allows us to find out whether actors in established policy subsystems behave differently from actors in a nascent subsystem.

The units of observation are collective policy actors, i.e., organizations. The only individuals we surveyed are parliamentarians. Regarding the selection of responding organizations, we paid special attention to organizations operating at the intersection between one of the policy subsystems and digital topics but included organizations with and without a specific focus on AI. For the (potential) nascent AI policy subsystem, we only included organizations with a clear focus on AI, to avoid simply selecting the entire digital policy subsystem. This corresponds to typical studies with surveys amongst actors in policy subsystems (Ingold and Varone 2012). The respondents to the survey were representatives of organizations that fall within the categories theorized in our framework. These included, for example, heads of organizations, representatives of departments responsible for AI or media, or designated spokespersons. Overall, we received 189 responses to our online survey that can be attributed to a policy field, from Germany, Switzerland, and France (Table S1, Supplementary Materials).

To operationalize our dependent variable, the support for an encompassing versus a sectoral approach to the regulation of AI, we combine two variables. First, we use a variable that measures whether actors are in favor of a sectoral versus an encompassing approach to AI regulation (on a scale from 0 to 10). Second, we use a variable measuring how many sectors respondents want to be covered by an encompassing AI regulation. To obtain this information, respondents were presented with the policy fields covered in the Comparative Agendas Project (CAP; see Baumgartner et al. 2019) and asked to indicate which sectors should be covered by an AI regulation. Using regression scores from a principal component analysis, we combined both variables into an indicator of encompassing regulation of AI that captures information about both support and substance. This variable is centered around its mean.
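
One way to read the combination step described above is sketched below. It is only an illustration under stated assumptions: the input values are invented, the function names are hypothetical, and "regression scores" is interpreted as standardized scores on the first principal component of the two standardized indicators. The article does not report the software or the exact settings used.

```python
import math
from statistics import mean, pstdev


def standardize(values):
    """Return z-scores (mean 0, population standard deviation 1)."""
    m, s = mean(values), pstdev(values)
    return [(v - m) / s for v in values]


def first_component_scores(support, n_sectors):
    """Standardized first-principal-component scores for two indicators.

    For two standardized variables the correlation matrix is [[1, r], [r, 1]],
    so the first eigenvector is (1, sign(r)) / sqrt(2) with eigenvalue 1 + |r|.
    """
    z1, z2 = standardize(support), standardize(n_sectors)
    r = mean(a * b for a, b in zip(z1, z2))  # Pearson correlation
    sign = 1.0 if r >= 0 else -1.0
    eigenvalue = 1.0 + abs(r)
    # Project onto the first eigenvector, then rescale to unit variance.
    return [
        (a + sign * b) / math.sqrt(2.0) / math.sqrt(eigenvalue)
        for a, b in zip(z1, z2)
    ]


# Hypothetical responses: support on the 0-10 scale, number of sectors named.
support = [2, 8, 5, 10, 0, 7]
n_sectors = [3, 15, 9, 20, 1, 12]
scores = first_component_scores(support, n_sectors)
```

Because both inputs are standardized before projection, the resulting scores are already centered on zero, which matches the statement that the composite variable is centered around its mean.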

To operationalize our first theoretical expectation, we use two measures. First, we created binary variables for each of the nine actor types that we defined above (see Table 1 above). The coding scheme for the interest groups was based on the codebook utilized in the INTERARENA project (Binderkrantz et al. 2015). We added the categories firms, scientific organizations, and public administrations to the codebook. To distinguish between specialists and generalists amongst parliamentarians, we code those parliamentarians who were active both in the media and in parliament regarding AI as specialists. All other parliamentarians were coded as generalists (even if they had either spoken out on the topic in the media or voted for a parliamentary resolution, but did not fulfill both conditions). The first author of this study conducted the coding of the actor types, and the other two coauthors verified the results. Second, we created a measure of the number of sectors in which actors are actively engaged, again using the CAP categories of policy issues. As the distribution exhibited a significant right skew, we decided to logarithmically transform this variable. Rather than focusing on actor types, this measure indicates the reported activity of policy actors.
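
The two coding rules in this paragraph can be illustrated with a short sketch. The function and argument names are hypothetical. The use of the natural logarithm and the requirement of at least one reported sector are assumptions, since the text states neither the base of the logarithm nor how zero counts were handled.

```python
import math


def code_parliamentarian(active_in_media: bool, active_in_parliament: bool) -> str:
    """Code a parliamentarian as specialist only if BOTH conditions hold."""
    if active_in_media and active_in_parliament:
        return "specialist"
    return "generalist"


def log_sector_count(n_sectors: int) -> float:
    """Natural-log transform of the number of sectors an actor reports.

    Assumes n_sectors >= 1; the treatment of zero counts is not described
    in the text, so this sketch simply rejects them.
    """
    if n_sectors < 1:
        raise ValueError("n_sectors must be at least 1 in this sketch")
    return math.log(n_sectors)


# Hypothetical cases: media statement only, and both media and parliament.
media_only = code_parliamentarian(True, False)
both = code_parliamentarian(True, True)
```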

To operationalize the second expectation, we use a variable that measures the importance that actors attribute to AI in their respective policy fields (on a scale from 0 to 4). To measure our third expectation, we coded a binary variable assessing whether actors want their own policy field to be incorporated into an encompassing approach to AI regulation. During the survey, we presented the participating actors with the different policy fields covered in the CAP and asked whether each sector should be included in an encompassing AI policy solution. As there is no established policy field specifically designated for AI within the CAP codebook, we use policy field 17 (Technology) for those actors whom we assigned to the AI policy subsystem, to measure whether their own policy field should be included in an encompassing regulation of AI. We verified this approach with the team that is responsible for the CAP codebook. Table S2 in the Supplementary Materials shows the descriptive statistics for all variables in the dataset.
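
The binary variable for the third expectation could be derived along the following lines. This is a sketch with hypothetical names. Only code 17 (Technology) is named in the text; the codes for health, social welfare, and banking are assumed here from the CAP master codebook and may differ from the authors' actual coding.

```python
# CAP major-topic codes. Only 17 (Technology) is stated in the article;
# the other three are an assumption based on the CAP master codebook.
OWN_FIELD_CAP_CODE = {
    "health": 3,
    "social_welfare": 13,
    "banking": 15,
    "ai": 17,
}


def own_field_included(subsystem: str, selected_cap_codes: set) -> int:
    """Return 1 if the actor wants its own policy field covered, else 0."""
    return int(OWN_FIELD_CAP_CODE[subsystem] in selected_cap_codes)


# Hypothetical respondents: an AI actor who selected Health and Technology,
# and a health actor who selected only Social Welfare and Banking.
ai_actor = own_field_included("ai", {3, 17})
health_actor = own_field_included("health", {13, 15})
```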

To analyze the data, we employ Bayesian regression models. This analytical strategy allows us to mitigate the problem of few responses and to obtain robust inferences (McElreath 2016). In accordance with the literature, we use weakly informative priors (Lemoine 2019).
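
To make the logic of a weakly informative prior concrete, the sketch below computes the closed-form posterior for a regression with one binary predictor. It is a deliberately simplified stand-in, not the estimation used in the article: it assumes independent Normal(0, prior_sd) priors on the intercept and slope and a known noise standard deviation, and all values and names are hypothetical. The article does not report the software, likelihood, or prior scales in this section.

```python
def posterior_intercept_slope(x, y, prior_sd=2.5, noise_sd=1.0):
    """Posterior mean of (intercept, slope) and posterior sd of the slope.

    Model: y = a + b * x + noise, with a, b ~ Normal(0, prior_sd) and a
    KNOWN noise_sd, so the posterior is Normal and available in closed form.
    """
    n = len(x)
    prior_precision = 1.0 / prior_sd ** 2
    w = 1.0 / noise_sd ** 2
    # Posterior precision matrix A = X'X / noise_sd^2 + I / prior_sd^2.
    a11 = w * n + prior_precision
    a12 = w * sum(x)
    a22 = w * sum(v * v for v in x) + prior_precision
    # Right-hand side b = X'y / noise_sd^2.
    b1 = w * sum(y)
    b2 = w * sum(u * v for u, v in zip(x, y))
    # Solve A * mean = b using the explicit 2x2 inverse.
    det = a11 * a22 - a12 * a12
    intercept = (a22 * b1 - a12 * b2) / det
    slope = (a11 * b2 - a12 * b1) / det
    slope_sd = (a11 / det) ** 0.5
    return intercept, slope, slope_sd


# Hypothetical data: 1 = generalist, 0 = specialist; y = centered support score.
generalist = [1, 1, 1, 0, 0, 0]
support_score = [1.0, 0.5, 1.5, -1.0, -0.5, -1.5]
intercept, slope, slope_sd = posterior_intercept_slope(generalist, support_score)
```

With few observations, the prior pulls the slope toward zero relative to the least-squares estimate, and the pull weakens as `prior_sd` grows. This is the sense in which a weakly informative prior can stabilize estimates from small samples.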

Results

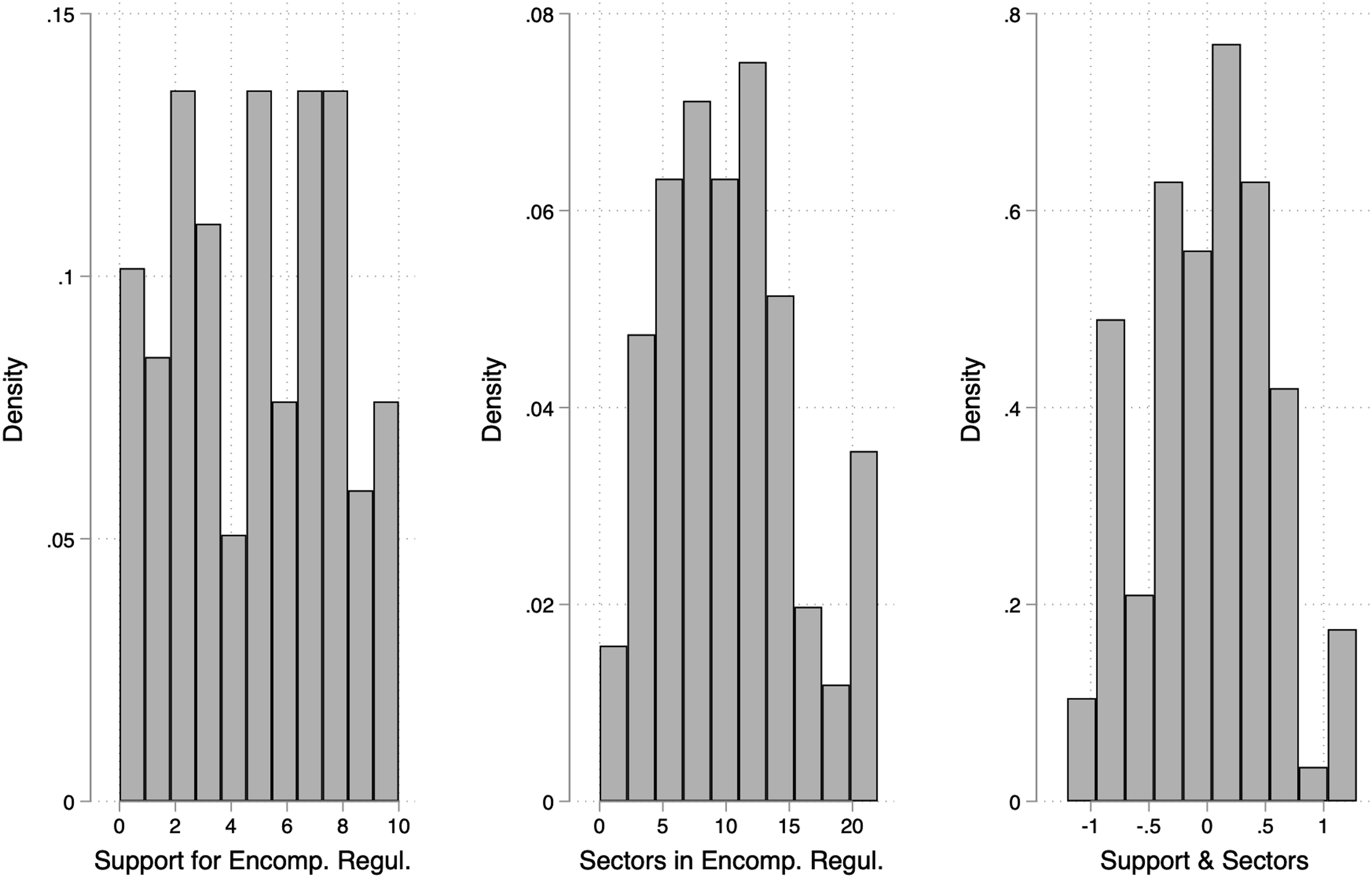

Figure 1. Support for encompassing regulations of AI.

First, we look at how actors support encompassing regulation of AI. Figure 1 displays the distribution of responses to the questions we used to construct our dependent variable, which measures whether actors support an encompassing approach to AI regulation that combines different sectors or prefer a more sectoral approach that addresses specific policy issues separately.

The left-hand graph shows responses to the question of how strongly respondents supported an encompassing solution compared to a sectoral one, rated on a scale from 0 to 10. The results suggest that actors are rather evenly distributed across the answer options. The middle graph shows the number of specific policy issues actors mentioned when asked which sectors should be included in an encompassing approach to AI regulation. The results indicate that most respondents mention between five and 15 policy sectors that should be integrated into an encompassing regulation of AI. Finally, the right-hand graph shows the combination of both variables through regression scores in principal component analysis, which results in a roughly normal distribution of support for encompassing regulations for AI.

These findings indicate that actors’ preferences regarding the scope of AI regulation are mixed. Some prefer sectoral regulations focusing on AI applications in specific sectors, whilst others prefer more encompassing rules to deal with AI that cover various fields. In the following, we explore how this distribution is linked to the type of actors and whether generalist or specialist actors have different preferences.

Differences between actor type categories

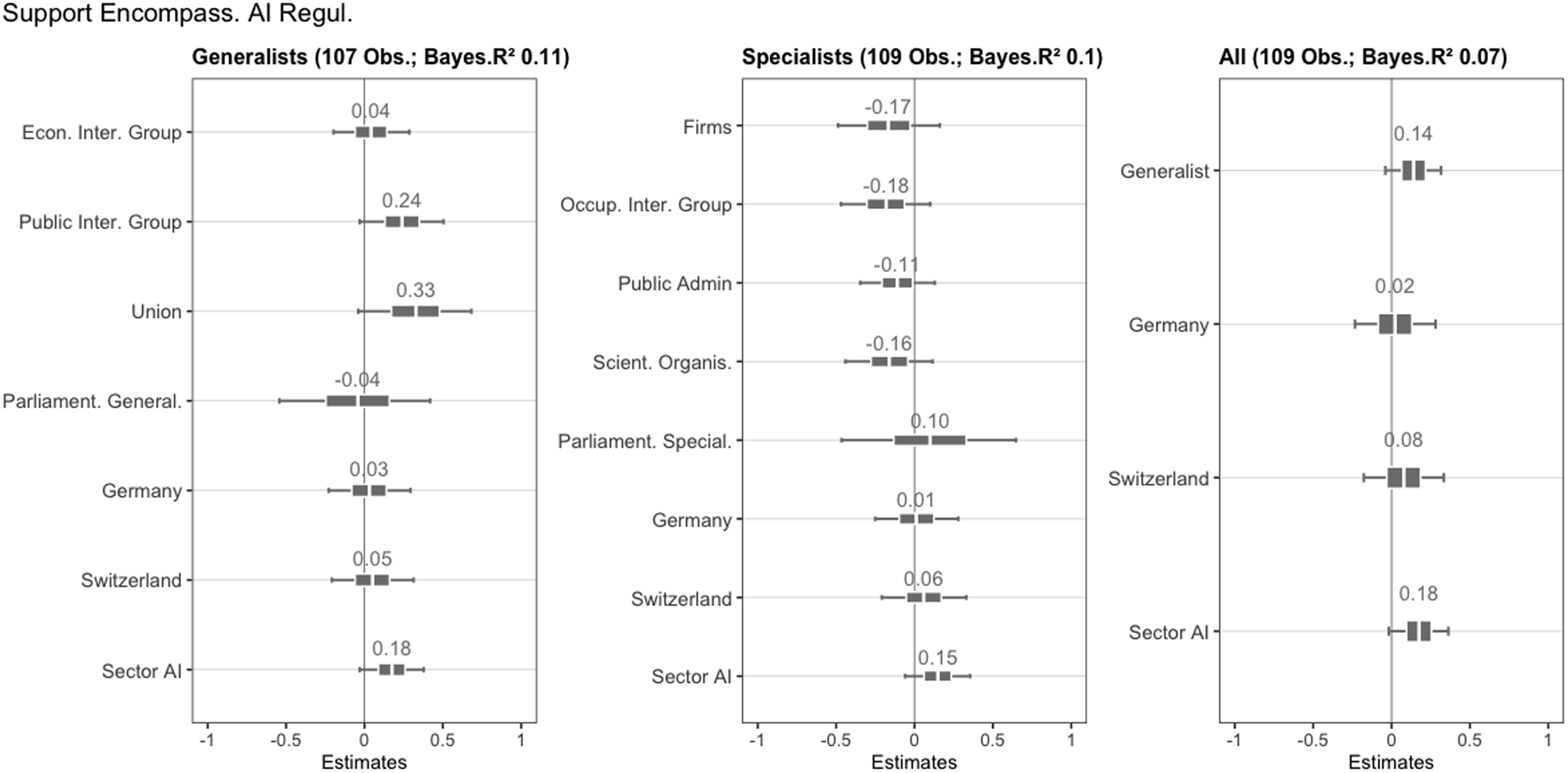

Figure 2. Coefficient plots regarding actor types and preferences for encompassing AI regulation.

We start with the analysis of how different actor types (policy generalists and policy specialists) support an encompassing AI regulation. To carry out the analysis, we estimate a linear regression model as described above. Figure 2 shows the posterior medians with 50% and 89% uncertainty intervals (highest density intervals) representing the inner and outer probability that these actors support encompassing AI regulations. The models show that the estimations using binary variables for each actor type fit the data better than a model that only includes a binary variable controlling for either a generalist or a specialist role. Regression tables with more information on the model fit can be found in the supplementary materials to the paper.
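
For readers unfamiliar with the summaries plotted in Figure 2, the sketch below shows one common way to obtain a posterior median and highest density intervals from posterior draws. The draws are simulated and purely hypothetical, and the function name is illustrative; the article does not state how its intervals were computed.

```python
import math
import random
from statistics import median


def highest_density_interval(samples, mass):
    """Narrowest interval containing a share `mass` of the posterior draws."""
    draws = sorted(samples)
    n = len(draws)
    k = max(1, math.ceil(mass * n))  # number of draws inside the interval
    # Slide a window of k consecutive draws and keep the narrowest one.
    start = min(range(n - k + 1), key=lambda i: draws[i + k - 1] - draws[i])
    return draws[start], draws[start + k - 1]


# Hypothetical posterior draws for one coefficient (not the article's data).
rng = random.Random(1)
posterior_draws = [rng.gauss(0.4, 0.3) for _ in range(4000)]

posterior_median = median(posterior_draws)
inner_interval = highest_density_interval(posterior_draws, 0.50)
outer_interval = highest_density_interval(posterior_draws, 0.89)
```

A coefficient would be read as credibly positive at a given level when the lower bound of the corresponding interval lies above zero.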

The findings indicate that public interest groups and unions tend to support encompassing AI regulation. Statistically speaking, the results are most robust for unions and public IGs, for which both credible intervals lie almost entirely beyond zero. This finding supports our first expectation, but it is not conclusive. Regarding economic IGs, the median of the posterior distribution is not credibly more positive than those of the actor types coded as specialists. The same holds for parliamentarians whom we coded as generalists.

Regarding policy specialists, the findings indicate a tendency among firms, occupational IGs, and public administrations to oppose encompassing AI regulations. These results are credible at least at the 50% uncertainty interval, which lies below zero. The findings are less clear-cut for “hedgehog” parliamentarians, who should have somewhat of a specialist role. Finally, in the third model, we include only a binary variable distinguishing generalists from specialists. The posterior distribution of this variable indicates some support for the first expectation, but the results remain somewhat inconclusive, because the 89% credible interval does not lie fully beyond zero. The model fit (Bayes R²) clearly shows that the models with several actor-type variables fit the data better.
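The reading of the 50% and 89% intervals used above can be made concrete with a short sketch. It assumes that a coefficient's posterior is available as a list of draws and computes the highest density interval as the narrowest window containing the requested share of draws. The draws here are simulated and purely hypothetical; they are not the article's estimates, and the helper names are illustrative.

```python
import math
import random
import statistics


def hdi(draws, mass):
    """Narrowest interval that contains a share `mass` of the draws."""
    ordered = sorted(draws)
    n = len(ordered)
    k = max(1, math.ceil(mass * n))  # number of draws inside the interval
    best_low, best_high = ordered[0], ordered[k - 1]
    for i in range(n - k + 1):
        low, high = ordered[i], ordered[i + k - 1]
        if high - low < best_high - best_low:
            best_low, best_high = low, high
    return best_low, best_high


def excludes_zero(interval):
    """True when the whole interval lies on one side of zero."""
    low, high = interval
    return low > 0 or high < 0


# Simulated posterior draws for one hypothetical coefficient.
rng = random.Random(42)
posterior_draws = [rng.gauss(0.4, 0.3) for _ in range(4000)]

inner = hdi(posterior_draws, 0.50)
outer = hdi(posterior_draws, 0.89)
print("posterior median:", round(statistics.median(posterior_draws), 3))
print("50% HDI:", inner, "excludes zero:", excludes_zero(inner))
print("89% HDI:", outer, "excludes zero:", excludes_zero(outer))
```

A coefficient whose 50% interval excludes zero while its 89% interval does not corresponds to the "indicative but not conclusive" pattern reported for several actor types.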

In the models, we control for Germany and Switzerland (federalist countries) compared to France (centralized country), which serves as the baseline because it has a different political system. We also control for AI as a policy field compared to health, social welfare, and banking, since those who are part of this emerging policy field might interpret our survey question (do you support an encompassing (10) versus a sectoral (0) approach to AI) differently than those from the three established policy fields.

These findings offer some support for our first expectation, which posited that policy generalists are more likely to support encompassing AI regulation than policy specialists. We base this conclusion on the observation that the coefficients point in the direction we expect, except for parliamentarians. Nevertheless, the results are not conclusive, since the statistical robustness of these findings is limited. This is unsurprising, however, since AI is still a new issue and stakeholders are uncertain about their preferences. Therefore, the case of AI is a hard test for our theoretical argument. Against this background, the results are encouraging and indicate that, except for the distinction between generalists and specialists amongst parliamentarians, the actor role is likely to be linked to preferences for regulation.

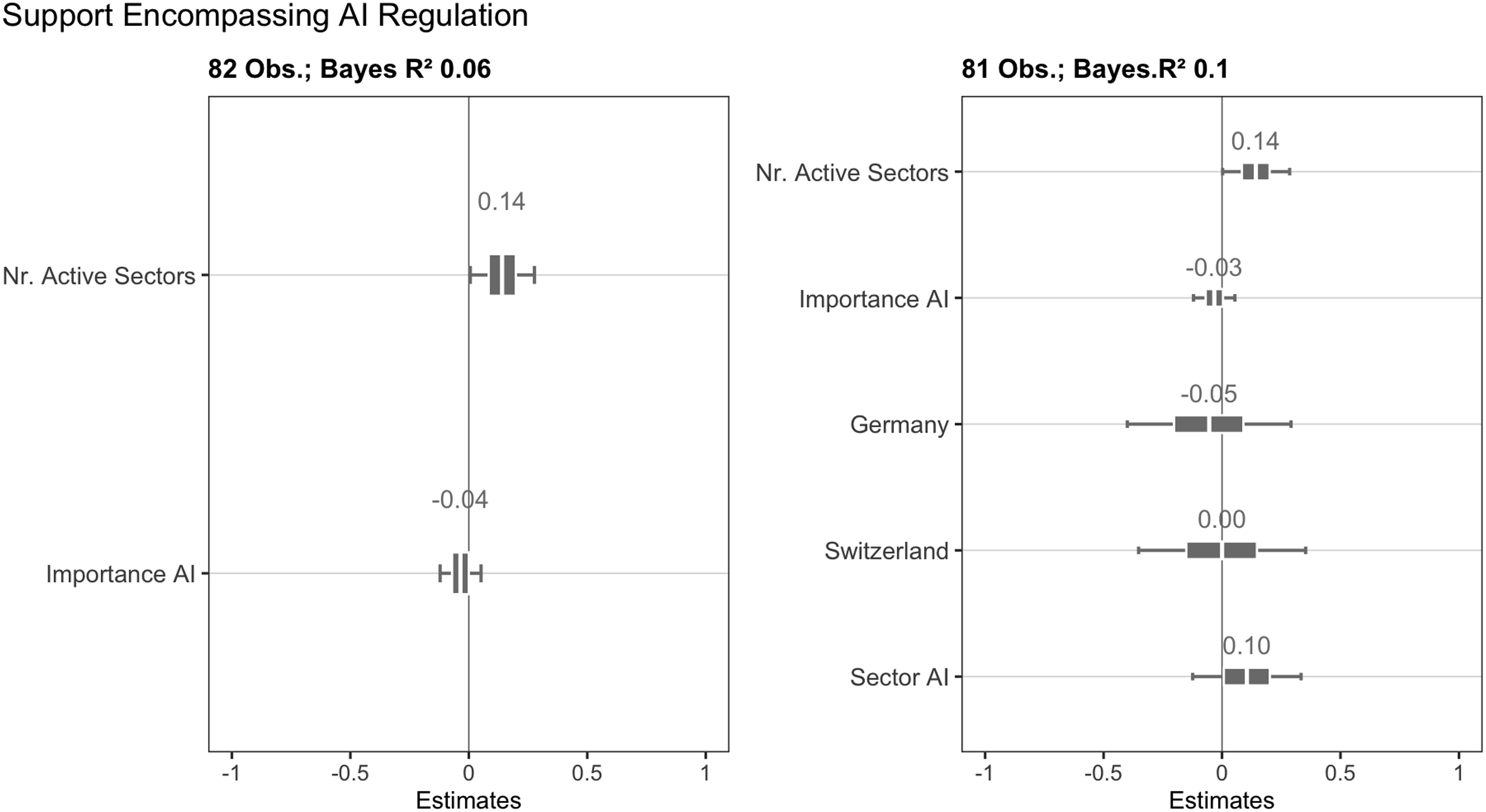

Breadth of policy activity and importance of AI

We now turn to an analysis that further probes our first expectation with a different measurement (breadth of reported activity instead of actor categories) and combines it with an analysis of our second expectation. Specifically, we estimate a model that includes the degree to which actors are active in different policy sectors. In this model, we do not use our coding of the actor category to measure the generalist-specialist distinction, but the breadth of policy activity reported by the actors who participated in the survey. Furthermore, we include the variable measuring how important actors perceive AI to be for their policy sector, which operationalizes our second expectation. Figure 3 shows the posterior medians with 50% and 89% uncertainty intervals (highest density intervals), which represent the inner and outer probability ranges for this analysis. We again control for country and the AI sector, which yields a better model fit (Figure 3). The logic of the control variables is the same as in Figure 2. The tables with more information on the models can be found in the supplementary materials to the paper.

Coefficient plots of support for encompassing AI regulation, with activity across sectors and perceived importance of AI.

The results reveal that actors who report being active across many policy sectors tend to support more encompassing AI regulations. The findings lend further support to the first expectation, according to which those who report being active in fewer policy fields should have more of a policy specialist role, whereas those reporting activity in more fields ought to be generalists.

Furthermore, the results from this analysis show that when actors perceive AI as an important issue within their sector, the likelihood of supporting encompassing regulation decreases compared to those who view AI as less important. This result lends support to our second expectation, which proposes that when actors perceive AI as an important issue within their own policy sector, they are more inclined to prefer a focused policy solution tailored to their specific sectoral interests, rather than an encompassing regulatory approach. Nevertheless, these findings are less conclusive statistically, because the effect is credible only at roughly the 50% credible interval. In summary, these results support especially our first expectation (actors with a generalist role support encompassing regulation), whilst they extend only limited confirmation to our second expectation (actors who consider AI to be important for their own policy field tend to support sectoral regulation).

Inclusion of own sector into encompassing regulation

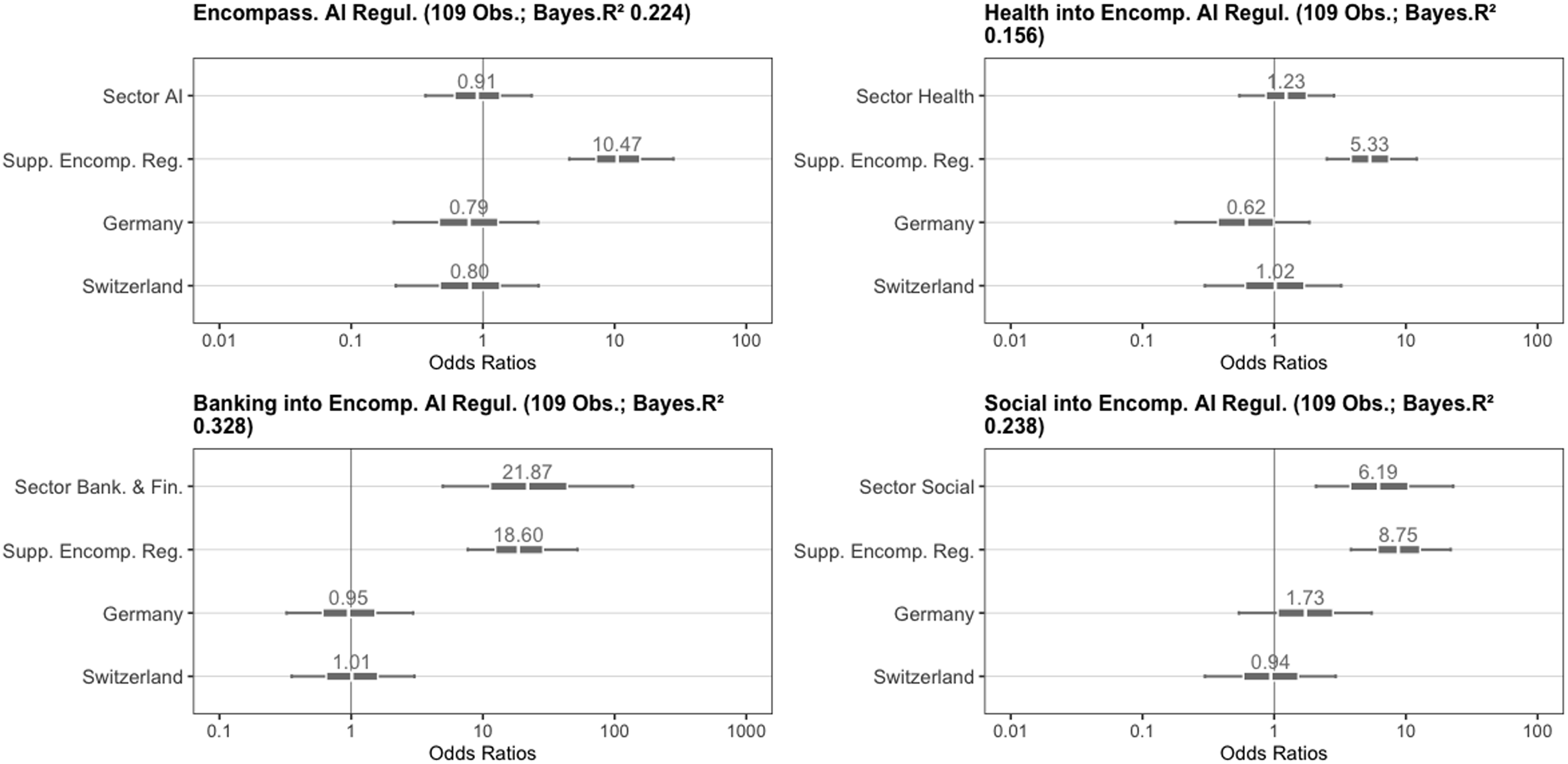

The final part of the empirical analysis comprises another empirical test related to our goal of understanding actor preferences for AI regulation. Here we investigate whether actors endorse the inclusion of their own policy field in an encompassing regulatory approach to AI. For this analysis, we examine each of the four policy fields separately. Figure 4 presents the results of these analyses, showing the posterior medians with 50% and 89% uncertainty intervals (highest density intervals), which represent the inner and outer probability ranges for this analysis, based on the actors’ policy sector affiliation and their stance on encompassing versus sectoral AI regulation. In these models, the dependent variable is a binary measurement, which is why we estimate logit models and report the coefficients as odds ratios. The selection of country control variables follows a similar logic as in the previous models. The regression tables can be found in the supplementary materials to the paper.

Coefficient plots of the inclusion of actors’ own sector into an encompassing AI regulation.

The analyses reveal notable differences across policy sectors. The estimations demonstrate that actors belonging to the social policy and banking policy fields tend to support the inclusion of their respective policy sectors into an encompassing AI regulation. Conversely, actors in health policy show less inclination to include their own policy field in an encompassing AI regulation. The same pattern appears in the emerging policy field of AI, where actors within the national AI policy subsystem tend to be hesitant about an encompassing AI regulation (Lemke et al. 2023, 2024). These findings lend support to our analysis regarding the separation of policy generalists and specialists. From a statistical point of view, the results of the analysis are conclusive and show a robust difference between policy fields.
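Because the sector models above are logit models reported as odds ratios, a brief sketch may help with their interpretation. It shows how a logit coefficient maps to an odds ratio and how an odds ratio shifts a baseline probability of endorsing inclusion. The coefficient and the baseline probability are hypothetical values chosen for illustration, not estimates from the article.

```python
import math


def odds_ratio(logit_coefficient):
    """Exponentiate a logit coefficient to obtain an odds ratio."""
    return math.exp(logit_coefficient)


def shifted_probability(baseline_probability, ratio):
    """Probability after multiplying the baseline odds by an odds ratio."""
    baseline_odds = baseline_probability / (1 - baseline_probability)
    new_odds = baseline_odds * ratio
    return new_odds / (1 + new_odds)


# Hypothetical example: a negative coefficient for one policy field.
coefficient = -0.7
ratio = odds_ratio(coefficient)
baseline = 0.60  # assumed share of the reference group endorsing inclusion

print(f"odds ratio: {ratio:.2f}")
print(f"probability moves from {baseline:.2f} "
      f"to {shifted_probability(baseline, ratio):.2f}")
```

An odds ratio below one indicates lower odds of endorsing inclusion than in the reference group, and a ratio above one indicates higher odds; a ratio of exactly one leaves the probability unchanged.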

Distribution of actor types across policy fields.

Therefore, these results support our argument that the distribution of active actor types across policy fields explains why actors tend to support the integration of their respective policy fields into an encompassing regulatory approach to AI (see our third expectation). While the number of organizations in this sample is limited, we contend that the nature of the specific policy field, particularly the emphasis on science and scientific expertise, accounts for the support of actors within the policy community for encompassing regulation compared to more specialized policy systems.

Conclusions

In this study, we analyzed whether policy actors, broadly defined, prefer an encompassing regulation or sector-specific regulations of Artificial Intelligence (AI). We proposed a general framework that distinguishes policy actors as either generalists, who adopt a broad perspective on public policy, or specialists, who focus on narrow, sector-specific issues. Based on an original survey targeting selected policy organizations in France, Germany, and Switzerland, we examined whether generalist actors tend to support broader, more encompassing AI regulations, and whether specialist actors are more inclined to favor sector-specific approaches.

The results of our analysis revealed that actors who are active in several policy fields tend to support encompassing regulations. The distinction between types of generalist and specialist actors and its relationship to regulatory preferences holds to some extent for public interest groups and unions, but to a lesser extent for other actors. Policy sectors also matter. In technically complex fields with many specialist organizations, policy actors tend to oppose integrating their fields into encompassing AI regulations. Overall, the findings indicate some support for our argument that generalists favor broader regulations, while specialists advocate for sectoral solutions.

Our research contributes to the empirical study of AI regulation and policy in two significant ways. First, we utilize original survey data collected from diverse policy organizations, rather than relying on surveys focused on the general population or specific administrative bodies only. Second, we examine the preferences of various policy actors for different styles of regulation: encompassing, such as the (risk-oriented) EU regulation, versus sector-specific, such as state-level regulations in the US. Our results imply that, on the one hand, actors are divided about how to regulate AI because of the pacing problem: regulation that comes too early might be ineffective because it is not well calibrated to the technology, whereas regulation that comes too late might fail to avoid catastrophic consequences (Almada and Petit 2025, 98). On the other hand, organizations might have mixed preferences because the state has a double role regarding AI, as Digital Era Governance implies. It must adopt AI to improve governmental services against the background of new innovations (Dunleavy and Margetts 2025, 188). At the same time, policy actors need to protect the population from the negative consequences of these new technologies, because they have a central role in this process.

Furthermore, our research contributes to the scholarship on policy integration and “administrative holism” (Christensen and Lægreid 2007; Jochim and May 2010; Trein and Maggetti 2020; Varone et al. 2013) by linking this literature to Digital Era Governance scholarship (Dunleavy et al. 2006; Dunleavy and Margetts 2025). Finally, our research contributes to public policy scholarship by proposing how actors can fit the role of a “policy generalist” or a “policy specialist.” This dimension offers an important contribution to research on public policy by showing how the actor type associated with a policy sector affects preferences for regulating cross-cutting policy issues. Future research needs to develop this idea further, notably by asking whether and how actor types can be placed on a continuum between a “full generalist” and a “full specialist” in terms of policy orientation.

The analysis opens several avenues for future research. Firstly, future research should further investigate the preferences of generalists and specialists by focusing on other policy fields with less uncertainty than AI, because the statistical robustness of some of our results is not fully conclusive. Secondly, future research could examine the preferences for horizontal and vertical regulations in security and defense policy, since this policy field is missing from our analysis. Finally, it would be valuable to match the preferences of policy actors, as explored in this paper, with results from a population survey, to understand whether elites and citizens differ regarding AI regulation.

Supplemental Material

Supplemental Material - Generalist and specialist roles of policy actors: Insights from preferences on AI regulation

Supplemental Material for Generalist and specialist roles of policy actors: Insights from preferences on AI regulation by Philipp Trein, Nicole Lemke, and Frédéric Varone in Public Policy and Administration

Footnotes

Acknowledgement

We thank the Swiss National Science Foundation for generous funding (Grant Nr: 185963).

Ethical Considerations

We obtained ethical clearance for this research from the Ethics Commission of the Faculty for Social and Political Sciences at the University of Lausanne.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Supplemental Material

Supplemental material for this article is available online.