Abstract

Governments have been putting forward various proposals to stimulate and facilitate research on Artificial Intelligence (AI), develop new solutions, and adopt these technologies within their economy and society. Despite this enthusiasm, however, the adoption and deployment of AI technologies within public administrations face many barriers, limiting administrations from drawing on the benefits of these technologies. These barriers include the lack of quality data, ethical concerns, unawareness of what AI could mean, lack of expertise, legal limitations, the need for inter-organisational collaboration, and others. AI strategy documents describe plans and goals to overcome the barriers to introducing AI in societies. Drawing on an analysis of 26 AI national strategy documents in Europe analysed through the policy instrument lens, this study shows that there is a strong focus on initiatives to improve data-related aspects and collaboration with the private sector, and that there are limited initiatives to improve internal capacity or funding.

Introduction

Artificial Intelligence (AI) technologies are gaining extraordinary momentum. After a period of relative neglect, commonly referred to as the “AI winter”, technologies such as Machine Learning, intelligent chatbots, and image and speech recognition have in the past few years reached a new peak in mainstream visibility, user expectations, and global investments. Such renewed focus is shared by governments worldwide, which are swiftly buying into a discourse on the potential of AI to achieve public sector goals. AI represents “an ideal technology to be applied to the public-sector context, where environmental settings are constantly changing, and pre-programming cannot account for all possible cases” (Sun and Medaglia, 2019: 370). AI applications can potentially increase the efficiency and effectiveness of service delivery (Mikalef et al., 2021), but also support government decision-making by simulating different policy options (Margetts and Dorobantu, 2019). Examples of government AI applications include predicting crime hotspots (Goldsmith and Crawford, 2014), supporting cancer treatment choices by doctors in public hospitals (Sun and Medaglia, 2019), recommending hygiene inspections in restaurant businesses (Kang et al., 2013), and responding to enquiries in natural language on waste sorting, taxes and parental support (Aoki, 2020), amongst many others (Pencheva et al., 2020; Van Noordt and Misuraca, 2022).

The potential of AI for enhancing social benefits and economic growth has been stressed in many research papers (Zuiderwijk et al., 2021) and policy documents (Jorge Ricart et al., 2022), with governments across the world aiming to prepare their country for the introduction of AI and be the leading country in AI (Toll et al., 2020a). In this respect, governments have been putting forward policies and strategies to stimulate and facilitate research on Artificial Intelligence, developing new solutions and adopting these technologies within their economy and society (Fatima et al., 2020; Guenduez and Mettler, 2022). Despite this intent, however, the adoption and deployment of AI technologies within public administrations face many barriers, limiting administrations in drawing on the benefits of these technologies (Mikalef et al., 2021; Schedler et al., 2019). Recent academic literature has highlighted the various barriers public administrations face in developing and using AI technologies, ranging from the lack of quality data, ethical concerns, unawareness of what AI could mean, lack of expertise, legal limitations, and the need for inter-organizational collaboration (Campion et al., 2022; Fatima et al., 2020; Medaglia et al., 2021; Van Noordt and Misuraca, 2020b; Wirtz et al., 2019). As a result, there are limited insights into implementing AI in public administrations, and the uptake thereof is still in an early phase (Mergel et al., 2023; Tangi et al., 2023). While many private sector organisations, and especially small and medium enterprises (SMEs), face similar challenges in using AI technologies within their business processes, governments are actively introducing policy initiatives and measures to make it easier for businesses to develop and use AI technologies, as many of the national AI strategies describe (Fatima et al., 2022).

In this respect, the public sector is mostly regarded only as a facilitator or regulator of AI technologies in the private sector. Far less attention is given to the government’s role as an AI user and to how governments aim to overcome public organisations’ barriers to using AI for societal benefit (Kuziemski and Misuraca, 2020). The use of AI by governments themselves is thus not often within the scope of the discussion (Guenduez and Mettler, 2022), which limits the potential transformative impact that this technology can have (Pencheva et al., 2020). Little is understood of how governments aim to facilitate and stimulate the use of AI within their own administrations, as a consequence of scarce research on the use of AI in government and on how various barriers to public sector innovation with AI have been overcome (Medaglia et al., 2021). This article thus aims to address this research gap by providing an empirical basis of the activities governments set out to stimulate the use of AI in public administration and overcome these barriers, by reviewing published AI strategies through the policy instrument lens.

These strategy documents include plans and goals in which governments describe how to overcome various issues and barriers to introducing AI in their societies. Although published AI strategies often hold a mythical discourse about the opportunities of AI (Ossewaarde and Gulenc, 2020), researchers have already started analysing them in an effort to gain new understandings of the role of governments in AI. In this emergent phase, these efforts include analyses based on general categories, such as policy areas (Fatima et al., 2020; Valle-Cruz et al., 2019), and narratives (Guenduez and Mettler, 2022). Other analyses adopted grounded approaches by looking for textual patterns (Papadopoulos and Charalabidis, 2020), focusing on ethical principles (Dexe and Franke, 2020), and on which public values are most referenced in AI strategies (Robinson, 2020; Toll et al., 2020b; Viscusi et al., 2020).

Whilst research has examined the policy instruments described in AI strategies (Djeffal et al., 2022; Fatima et al., 2020), these studies examine the AI strategies in general, without a specific focus on AI implementation in public administration. To tackle this issue, this study analyses 26 AI strategies from 25 European countries, focusing on the activities related to stimulating the development and adoption of AI within the public administration, with the research question: “What are the main policy initiatives proposed in AI strategy documents by European governments to facilitate the development and adoption of AI technologies within their public administrations, and how do these initiatives aim to address the barriers faced in the implementation of AI in government?”

The strategies analysed in this study have been published following the momentum of the Coordinated Plan on Artificial Intelligence by the European Commission, where European Member States have committed to introducing AI strategies (or other programmes) in which they specify investment plans, implementation measures and other initiatives related to AI (European Commission, 2018). We aim to understand the main policy initiatives that Member States designed to make the European public sector a “trailblazer” in the use of AI, as stated in the Coordinated Plan (European Commission, 2018).

Our previous research (Tangi et al., 2022; Van Noordt et al., 2020) showed significant differences in the extent to which national strategies address the use of AI in public administrations, and in which initiatives governments propose to overcome barriers. In this study, a systematic content analysis was conducted on 26 AI strategies to identify which initiatives administrations put forward to facilitate the development and adoption of AI in their public administrations. An inductive coding of 816 text segments describing plans to introduce AI in government across 26 documents reveals similarities and differences in the approaches that governments are taking.

This paper is structured as follows. First, we introduce the barriers public administrations face in using Artificial Intelligence. Next, we connect the barriers faced in the implementation of AI with the literature on policy instruments. Afterwards, the coding methodology and approach are described. Next, the main findings of the coding process are presented and explained. The paper concludes with the core takeaways of the study and proposals for further research on overcoming the barriers to AI adoption in government.

Literature review

Challenges to the use of AI in the public sector

The term Artificial Intelligence still holds many different interpretations (Collins et al., 2021; Noordt, 2022) and is commonly used as an umbrella term to describe software and hardware that is capable of conducting tasks which previously were thought to require human intelligence (Tangi et al., 2022). Machine Learning algorithms “find their own ways of identifying patterns, and apply what they learn to make statements about data” (Boucher, 2020: 4). For the public sector, AI holds promises of improving the internal efficiency of public administrations, improving decision-making, enhancing citizen participation, improving legitimacy, making public services more personalised, and removing redundant tasks and activities for public workers (Dwivedi et al., 2019; Eggers et al., 2017; Mehr et al., 2017; Valle-Cruz and García-Contreras, 2023; Van Noordt and Misuraca, 2022). As a result, AI technologies can provide far greater public value than other digital technologies (Li et al., 2023).

Because of this high potential of AI technologies to improve various functions of governance in the public sector, preliminary research has emerged, analysing the use of AI technologies by government authorities, which challenges they face, and which consequences occur from their deployment (Kuziemski and Misuraca, 2020; Medaglia et al., 2021; Neumann et al., 2022). In fact, despite earlier enthusiasm for the benefits of AI technologies, a substantial body of research has highlighted the risks and dangers of AI technologies, such as the risk of algorithmic bias (Bannister and Connolly, 2020), opaque decision-making (Janssen et al., 2020b), rapid loss of jobs, risks to citizens’ privacy (Yeung, 2018), radicalisation, and the spread of fake news (Dwivedi et al., 2019). While many of these findings correspond to the use of AI technologies by larger technology companies or government authorities outside the European Union, scandals and controversies regarding the use of AI by public authorities within the European Union have started to emerge, highlighting the fact that the potential benefits of AI may be offset by its drawbacks if not used appropriately.

Whilst the possible negative effects, or even dangers, of the irresponsible use of AI should not be ignored in the academic debate on the use of AI in government (Chen et al., 2023; Schiff et al., 2021), this paper dives deeper into the various challenges governmental organisations face in adopting these technologies, rather than into the consequences following the deployment of AI. As the existing digital government literature has researched extensively, public organisations often face many hurdles in using innovative technologies (Cinar et al., 2018; De Vries et al., 2016). A technology may be available on the market, be used extensively in the private sector and create expectations on how public services and governments ought to facilitate services, but government organisations may still face difficulties in adopting the technology in their organisation (Meijer and Thaens, 2020), even more so in a way that changes organisational work practices (Gieske et al., 2020; Schedler et al., 2019).

Similar barriers exist for using AI technologies within the public sector, as early research has shown (Neumann et al., 2022; Rjab et al., 2023; Van Noordt and Misuraca, 2020b). Following a review of existing studies on AI in the public sector, Wirtz et al. (2019) found four main streams of challenges that hinder the implementation and use of AI applications in the public sector: technology implementation, legal, ethical, and societal challenges. These streams have been found in other studies, highlighting that it is difficult to start with AI (Bérubé and Giannelia, 2021; Schaefer et al., 2021; Van Noordt and Misuraca, 2020b) or scale up following a successful pilot (Aaen and Nielsen, 2021; Kuguoglu et al., 2021; Tangi et al., 2023).

In particular, some technological challenges identified refer to a lack of good data (Janssen et al., 2020a). Deploying AI technologies requires public organisations to have robust data management practices, as data is the backbone of many AI applications (Valle-Cruz and García-Contreras, 2023). A lack of sufficient data, poor data quality, or difficulties in obtaining the necessary data (due to issues in sharing data between various public organisations and adherence to multiple data-related regulations, such as the GDPR) limit public organisations (Agarwal, 2018; Harrison et al., 2019; Sun and Medaglia, 2019). Data-related challenges also emerge if a significant portion of public services is not digitised and, thus, little to no data is available on these services for AI development and adoption. Some of the legal barriers public administrations face are legal restrictions hindering their use of AI technologies, such as privacy legislation or the mandate of public authorities to deploy AI technologies (Burrell, 2016; De Bruijn et al., 2022).

Public procurement regulation has been regarded as unfit for public authorities seeking to stimulate AI technologies, as acquiring AI often requires more flexible, innovative procurement processes (Madan and Ashok, 2022; Mcbride et al., 2021). Legal uncertainties may also hinder the use of AI in government, as a lack of clarity regarding the responsibility, liability and accountability for AI-enabled decisions in the public sector may lead to hesitation among civil servants to adopt AI (Alshahrani et al., 2021).

Related to legal concerns are various ethical concerns that raise barriers to using AI in government (Danaher, 2016). With AI, there are concerns about whether the development and use of AI are ethically and morally justifiable, which values are pursued during the development of AI, and whether AI follows social norms and obligations (Bannister and Connolly, 2020; Hartmann and Wenzelburger, 2021; Ju et al., 2019). Public administrations may thus be hesitant to use AI technologies because they may threaten the privacy of citizens or make decisions more opaque or biased, or because there is a general distrust towards having machines or computers play a more substantial part in the delivery of public services (Sigfrids et al., 2022).

Citizens may be hesitant to have AI play a significant role in public administration’s decisions and operations, as highlighted by the various concerns citizens have shown about the role of AI in society (Schiff et al., 2021; Wang et al., 2023). A general lack of understanding of how AI works among citizens may make them hesitant for public authorities to use AI, limiting the possibilities for authorities to use these technologies. Civil servants themselves may also not fully understand the opportunities and consequences of AI, as there is a general lack of AI-related skills in the public sector (Mergel et al., 2019; Mikalef and Gupta, 2021; Wirtz et al., 2019). This limits the opportunities to spot potential use for AI, develop new innovative AI applications and use AI in civil servants’ work.

Policy tools to overcome barriers to AI in government

Despite these known barriers to AI adoption limiting the ability to create public value from these technologies (Van Noordt and Misuraca, 2020a), little is still known about how public authorities aim to overcome them (Medaglia et al., 2021; Wirtz et al., 2021). Some public administrations may have conducted successful trials with AI, but face difficulties in scaling up the results across the organization or across organisational boundaries (Alexopoulos et al., 2019; Kuguoglu et al., 2021; Van Winden and van Den Buuse, 2017). In the discourse on AI, the role of government in AI is often only regarded as a regulator in society, or as a facilitator of AI for the private sector, and many of the policies as proposed in national strategies are linked to these two roles (Kuziemski and Misuraca, 2020; Zuiderwijk et al., 2021), leaving limited insights on what governments plan to do to support their own use. A recent analysis of AI policies found that European countries do not often highlight the potential of AI technologies to improve their services (Guenduez and Mettler, 2022). Despite these limitations, what is mentioned in AI strategies may thus provide valuable insights into what actions governments are planning or doing to tackle the existing challenges public administrations themselves face in developing and implementing AI technologies.

Such an inquiry into understanding public policy has been of interest in the public administration field for a more extended period, as research on “anything a government chooses to do or not to do” (Howlett and Cashore, 2014), and in particular, the policy instruments chosen, provides insights on which goals governments aim to achieve and through which means (Howlett and Cashore, 2014). In general terms, policy instruments are referred to as “techniques of governance which, one way or another, involve the utilization of state resources, or their conscious limitation, in order to achieve policy goals” (Howlett and Rayner, 2007). Often, these are studied in the instrument choice approach, where particular attention is given to selecting and implementing these policy instruments as the decision to choose a specific instrument from those available. In doing so, policy instruments are researched for different purposes, such as whether they are fit for their deployment, how policy issues are framed, and how social and power dynamics play a role in the selection of the instrument (Kassim and Le Galès, 2010). The choice of preferred instruments has been linked to more comprehensive governance models, as a shift from more network-styled governance models requires policy tools to follow accordingly, since the decision for specific policy instruments is constrained by the higher-level policy and governance regime (Howlett, 2009).

In this sense, policy instruments are considered as “tools of government” to give effect to public policies and the practical means of how policy is to be achieved and how governments intervene in society (Howlett, 2004). Several classifications of policy instruments have been introduced to categorise the wide variety of actions governments could take. These include: classifications based on the extent of government coerciveness, such as in the Doern continuum (Bali et al., 2021); on the resources behind each tool, such as the carrots, sticks, sermon and organization framework (Bemelmans-Videc et al., 1998; Howlett, 2004); and the NATO taxonomy by Hood (1983), where governments use the resources (Nodality, Authority, Treasure and Organization, hence the NATO acronym) either to monitor or to alter behaviour (Hood, 1983; Howlett, 2018). The work by Hood (1983) has been more recently revisited to capture the unique characteristics of the digital era (Hood and Margetts, 2007). The theoretical framework by Hood and Margetts draws on the four categories of tools of government available (Nodality, Authority, Treasure and Organization), and has been fruitfully used to analyse government strategy and policy (Acciai and Capano, 2021), although only to a limited extent in relation to digital innovation (Mukhtar-Landgren et al., 2019; Reid and Maroulis, 2017). A key exception is the analysis of policy instruments in AI strategies to identify modes of AI governance, ranging from self-regulation and market-based approaches to entrepreneurial and regulatory governance approaches (Djeffal et al., 2022).

Nodality-related instruments refer to the government’s property of “being in the middle of a social network” (Hood and Margetts, 2007: 21) and include instruments associated with such capability, primarily retrieving or sending information. Common instruments in this category include, for example, information campaigns, sending reminders to taxpayers, or receiving information on tax evasion. As such, this is the main instrument governments can implement concerning spreading information and know-how about the topic they aim to address (Hood and Margetts, 2007). Recently, attention has been given to how the internet has affected the use of nodality instruments by governments (Castelnovo, 2021; Margetts, 2009). However, nodality-based instruments are also limited as they only deal with the provision of information and are not sufficient if the government is not seen as a credible source, or when actors are not capable or willing to act on the information provided (Howlett and Mukherjee, 2018).

Alternatively, the state may provide more coercive instruments, described as Authority-related instruments. These include all instruments that draw on legal powers to require and condition behaviours, such as introducing laws and regulations to demand or forbid certain outcomes (Hood and Margetts, 2007). Authority-based tools are only effective when the government is considered legitimate and require high amounts of monitoring and enforcement to be effective (Howlett and Mukherjee, 2018).

Governments may also utilise their financial resources to achieve policy goals. Such Treasure-based instruments allow governments to influence behaviour, such as providing monetary incentives, funding, or applying taxes (Hood and Margetts, 2007). Through financial incentives and disincentives, actors can be persuaded to do certain actions. Providing grants, loans, charges, tax reliefs or funding interest groups or other organisations belong to treasure-related instruments (Howlett and Mukherjee, 2018). Such financial instruments can be based on certain conditions – increasingly allowing for more refined conditions through the advance of data gathered through digital devices.

In addition to these resources, governments may also use Organization-based instruments, which refer to the government’s ability to possess or have easy access to resources and capabilities (Hood and Margetts, 2007). These tools allow governments to act through devices or mechanisms to ensure a change of behaviour in actors. This may occur through creating new agencies, restructuring past agencies, introducing new tools, or deciding to provide services directly. However, lately, Organization-based instruments hardly refer to fully-owned government organisations anymore, as there have been several organizational policy tools which result in a combination of public and private actors (Margetts, 2009).

In practice, policies often combine these different types of instruments. With the advance of digital government studies, the policy instrument literature has been combined to include new instruments from the digital era (Waller and Weerakkody, 2016). In fact, even AI technologies are likely to be deployed by public administrations as part of their policy instrument “toolkit” by, for instance, improving Nodality-related instruments by personalizing information to citizens and thus providing more effective results (Van Noordt and Misuraca, 2022).

Policy instruments are often used to change citizens’ and businesses’ behaviour to meet governments’ policy goals. However, there is limited research on how policy instruments could be utilised to understand and explain how public administrations steer themselves to achieve digital government goals, such as AI-related policy goals. This may be because public administrations have traditionally been regarded more as an instrument themselves (as an Organization-related instrument) or as the organisations that are deciding on and implementing the instruments, rather than being at the receiving end thereof (Waller and Weerakkody, 2016). Nevertheless, considering the substantial barriers public administrations face in AI implementation and general digital government transformation, there may be much value in understanding how governments facilitate such activities through policy and strategies.

Methodology

Data collection

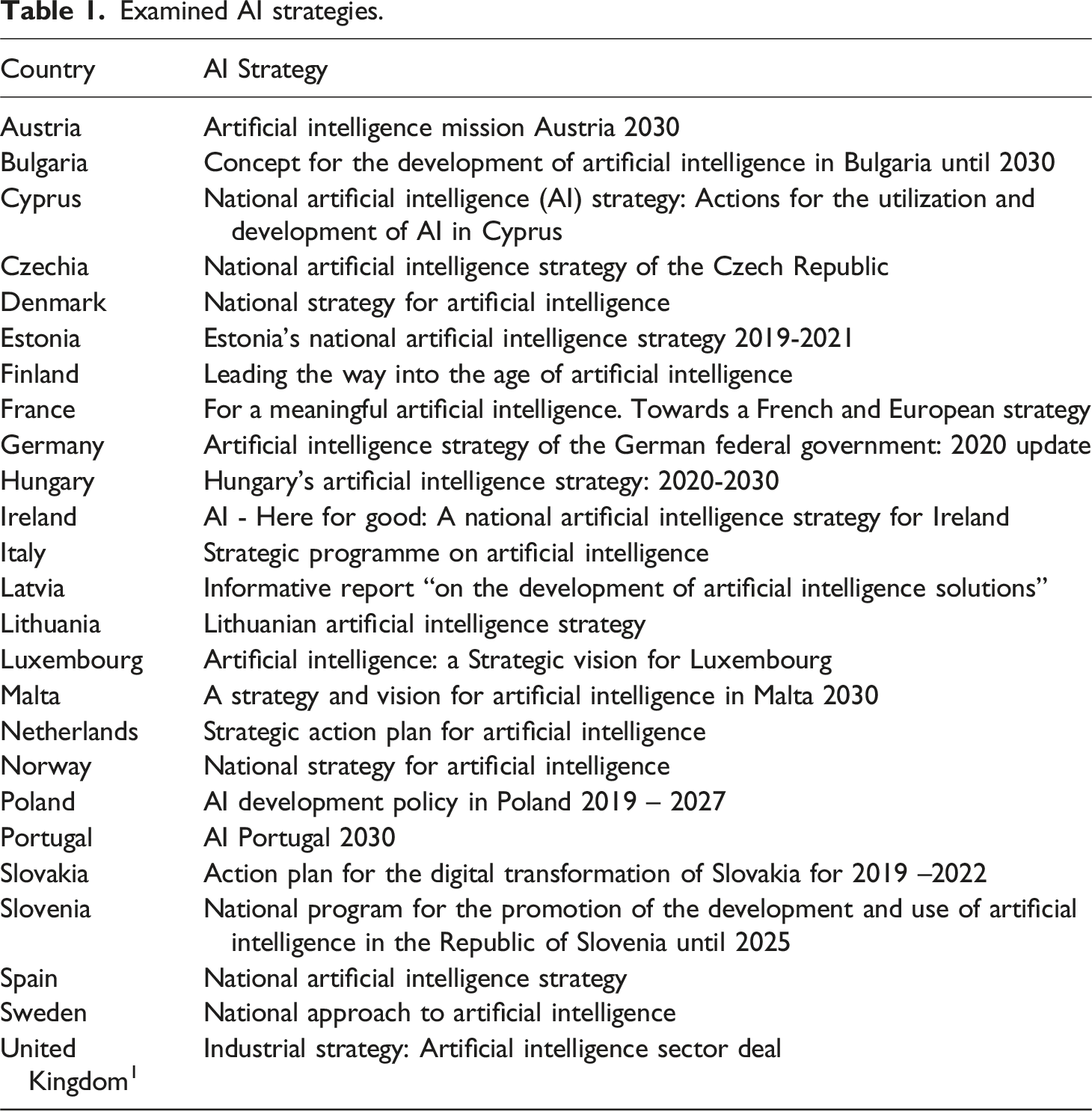

Examined AI strategies.

What counts as a national AI strategy is not always straightforward, as many policy documents, reports and other documents describe AI initiatives (e.g., the countries and initiatives overview of the OECD).

2

In some countries, influential AI documents are published by expert groups or civil society, often in collaboration with the government, which may be regarded as the national AI strategy. Governments may have released numerous AI policy documents in other countries, such as minor updates of the main AI strategy. Hence, to ensure comparability and validity of the analysis, only the AI strategies that were published by the government and can be considered formal AI strategy documents as indicated by the European Commission

3

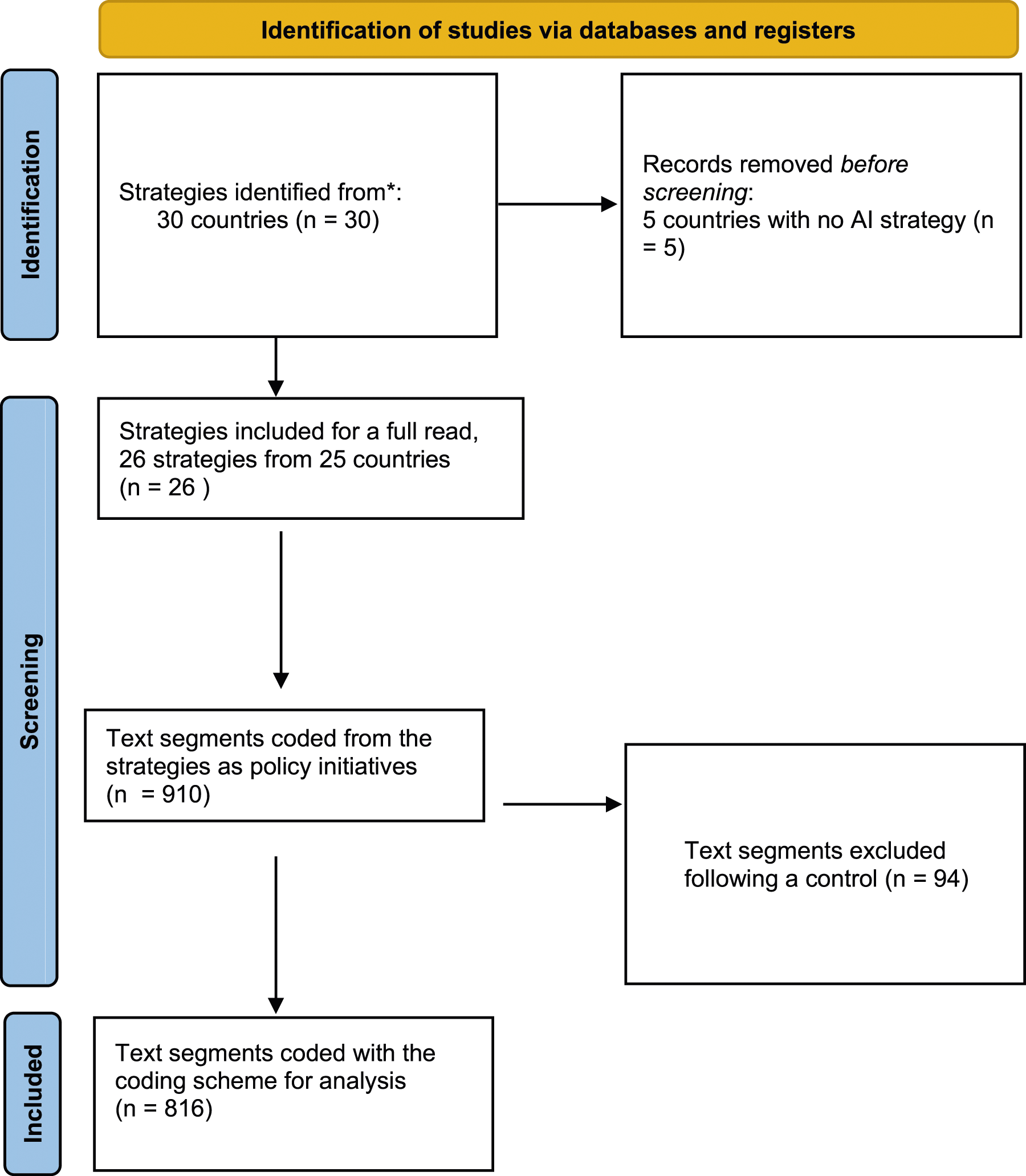

are considered for this analysis. In this respect, we thus do not include certain documents that other studies have included in their analysis of AI strategies, such as Fatima et al. (2020), consequently limiting comparability with these studies and highlighting the need to find consensus in what is considered the national AI strategy of a country. Since some of the strategies were published in languages other than English, machine translation was used to translate the text through either DeepL, Microsoft Office or Google Translate, depending on the document format and the language supported by these platforms. Figure 1 provides an overview of the steps taken in collecting and analysing the national AI strategy documents. Steps taken to collect AI strategies and analyse the policy initiatives regarding AI in public administration.

To analyse national AI strategies, we adopted a qualitative approach, given the novelty of the phenomenon (Yin, 2013). In particular, we conducted a content analysis of the published national AI strategy documents. A content analysis follows a systematic approach to extract patterns of meaning around emergent themes through an iterative process (Flick, 2014; Hsieh and Shannon, 2005) and is a well-established method adopted in a variety of studies on strategic plans in the areas of public administration (Mazzara et al., 2010) and Information Systems research (Nasir, 2005). Following the collection and identification of national AI strategies, the research team read each document in full. In the first step, any text section describing actions, initiatives, or suggestions to facilitate, stimulate or reinforce the development and uptake of AI in the public sector was coded as a “policy initiative”, using the coding software MAXQDA™ (La Pelle, 2004).

In this respect, a rather strict reading of the text was applied, and only explicit references (either in the text or through the context of the paragraph) to actions and the public administration, state administration, public services, or other related terms, were included. The strategy documents describe many activities, but most target the private and academic sectors. Other measures are more general, such as increasing the number of AI courses in education. While public administrations may indirectly benefit from these activities, these initiatives have not been included in the review, unless they explicitly referred to the public administration. In other cases, the strategies highlight the benefits or risks of AI for the public sector but do not explain how these ought to be achieved or mitigated.

Following an internal control of the coding process, 94 initiatives were excluded from the analysis because of duplications, or because they were too abstract to classify. This process resulted in the coding of 816 policy initiatives across the 26 documents, an average of 31.4 initiatives per strategy document, with the Lithuanian strategy having the fewest initiatives (14) and the French the most (77). Some strategies, such as those of Bulgaria, Czechia, Italy, Lithuania, Portugal, and the United Kingdom, feature fewer than 20 policy initiatives, whereas those of Cyprus, Denmark, France, the Netherlands, Norway, Slovenia, and Spain all have over 40. These differences should be kept in mind when comparing the relative occurrence of policy initiatives in the strategies. In addition, it is to be noted that governments may have conducted other initiatives to support the uptake of AI within their public administrations that are not highlighted in their strategy and, thus, are not within the scope of this analysis.
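The descriptive figures reported above can be checked with a few lines of arithmetic. The following is a minimal sketch that uses only the totals stated in the text; the per-country counts are not listed here, so they are not reproduced, and the variable names are illustrative.

```python
# Totals as reported in the text; variable names are illustrative.
coded_initiatives = 816   # policy initiatives retained after the internal control
documents = 26            # national AI strategy documents analysed
fewest, most = 14, 77     # Lithuanian and French strategies, respectively

# Mean number of coded initiatives per strategy document.
mean_per_document = coded_initiatives / documents
print(f"Mean initiatives per document: {mean_per_document:.1f}")

# Sanity check: the mean must lie between the reported minimum and maximum.
print("Mean within reported range:", fewest <= mean_per_document <= most)
```

Because the mean (about 31) sits much closer to the minimum than to the maximum, a few strategies with many initiatives weigh heavily in any pooled count, which is one reason to compare relative rather than absolute occurrences across strategies.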

Data analysis

In the second step, using the software MAXQDA™, the policy initiatives were assigned a first-order coding. The coding scheme development followed a process divided into phases (Dey, 2003), adopting an inductive coding strategy. Every policy initiative coded in the first step was assigned to one or more first-order codes, as the initiative categories described in the text sometimes overlapped. Because a single initiative could receive several codes, this led to 1050 coded policy initiatives, compared to the 816 initiatives identified before. Divergences in coding were discussed between the two researchers until a consensus was reached. Following the agreement, a second-order coding was conducted on the first-order codes to better identify the nature of the policy initiative within the category.
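To illustrate why the number of coded initiatives (1050) exceeds the number of identified initiatives (816), the sketch below counts code assignments in a multi-label scheme. The toy data, identifiers and code labels are invented for illustration and do not correspond to the actual MAXQDA project or code book.

```python
from collections import Counter

# Hypothetical toy data: each initiative maps to one or more first-order codes.
initiatives = {
    "initiative_a": ["data", "ethics"],
    "initiative_b": ["data"],
    "initiative_c": ["training", "funding", "data"],
}

# Count one unit per (initiative, code) assignment.
code_counts = Counter(code for codes in initiatives.values() for code in codes)
total_assignments = sum(code_counts.values())

print(len(initiatives), "initiatives,", total_assignments, "code assignments")
for code, count in code_counts.most_common():
    # Shares are expressed relative to all assignments, not to initiatives.
    print(f"{code}: {count} ({count / total_assignments:.1%})")

# The same ratio for the totals reported in the text.
codes_per_initiative = 1050 / 816
print(f"Reported codes per initiative: {codes_per_initiative:.2f}")
```

With the reported totals, each initiative carries about 1.29 first-order codes on average, so percentages computed over N = 1050 describe shares of code assignments rather than shares of distinct initiatives.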

An overview of all the policy initiatives and their corresponding first and second-order coding can be found in the Appendix.

Findings

Policy initiatives to improve the use of AI in public administration

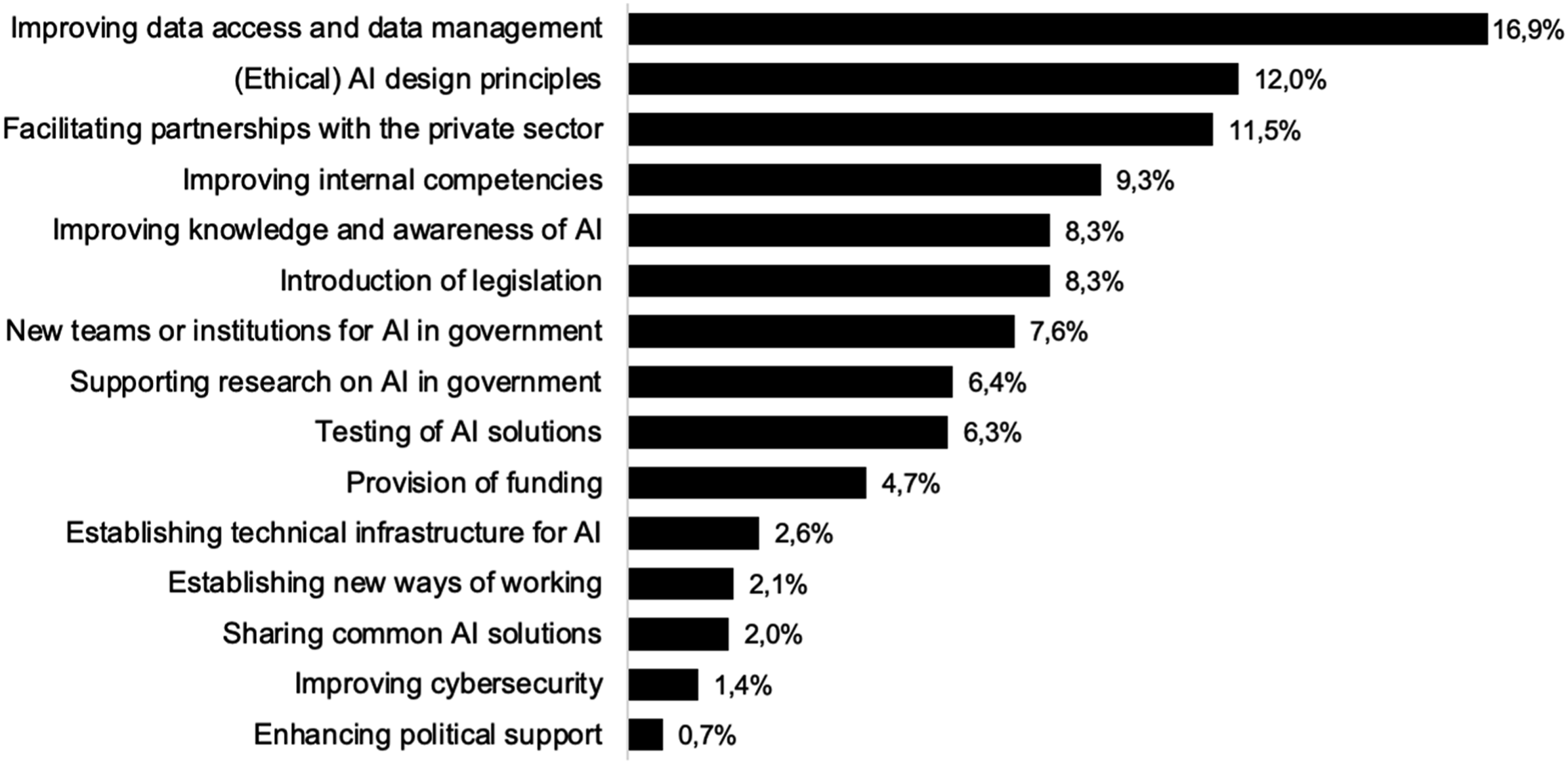

The inductive coding exercise led to identifying 15 types of policy initiatives that aim to tackle challenges to AI development and implementation in the public sector. The frequency of the initiatives in the strategy documents is illustrated in Figure 2, represented as percentage values of the total number of mentions of initiatives in all the strategy documents (N = 1050). Categories of policy initiatives – frequency of mentions in strategy documents expressed as percentages (N = 1050) of coded policy initiatives.

Improving data access and data management

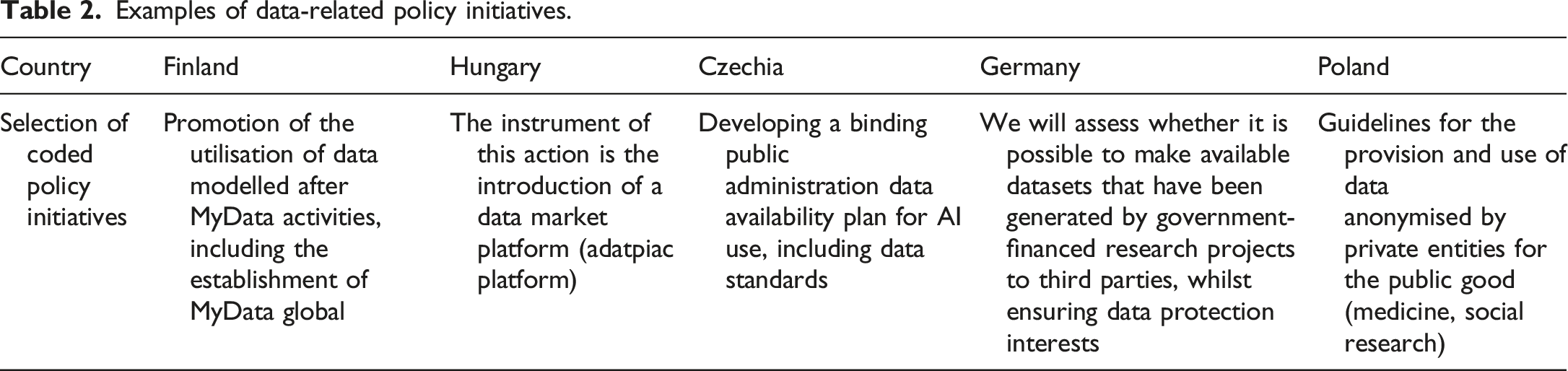

Examples of data-related policy initiatives.

Whilst, in general, data-related initiatives are frequently mentioned, there are several strategies in which a substantial share of all the initiatives described therein refer to improving data access and management. In the strategies of Germany, Italy, Hungary, Latvia, Luxembourg, Norway, and the UK, more than 25% of all the coded initiatives refer to data. Hungary, in particular, stands out, with 38.7% of all the initiatives referring to actions to improve data quality and access. From this, it becomes clear that one of the main routes to moving forward with AI in government seems to be improving the public administration’s data ecosystem. However, it is possible that overreliance on solely improving the data ecosystem, as these countries seem to do, could lead to a lack of investment to overcome other vital barriers, such as funding, expertise, legal barriers, and administrative culture.

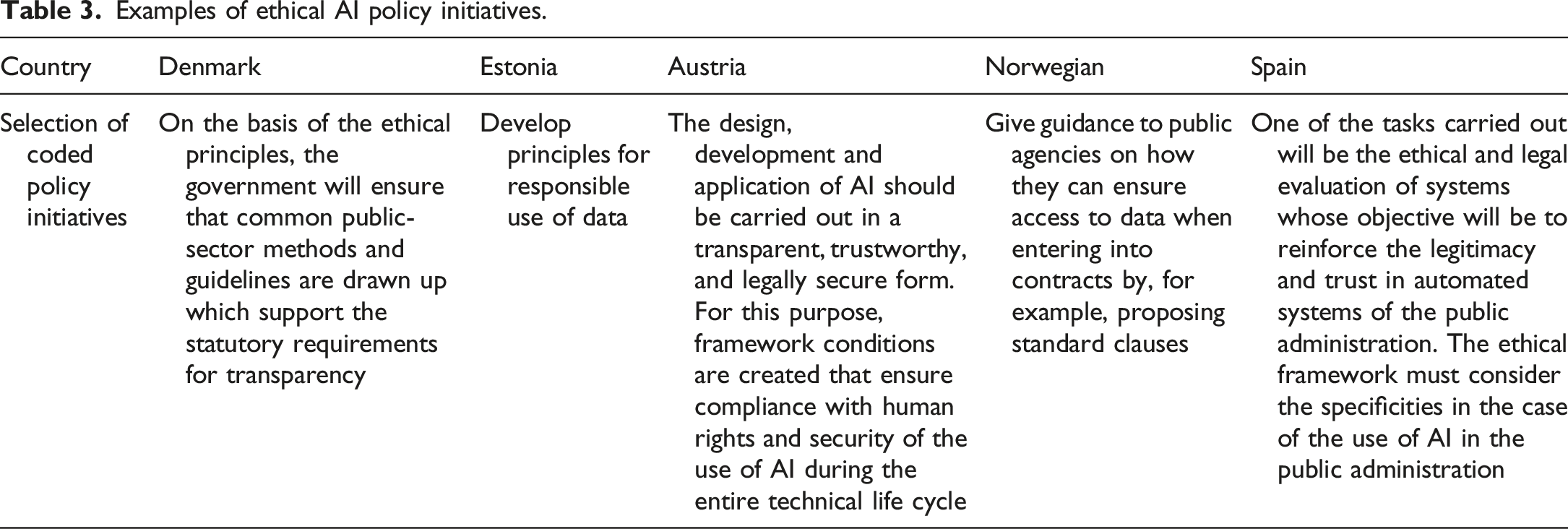

Ethical AI design principles

Another cluster of policy actions (12%, 126 out of 1050) refers to the establishment or use of various ethical AI design principles introduced to help public organizations develop and implement AI ethically. Strategies often describe actions to establish a set of ethical AI principles, often aligned with or similar to the guidelines proposed by the High-Level Expert Group on AI (AI HLEG) of the European Commission, or include the recommendation, obligation, or other actions to follow these established AI principles. Examples include an ethical framework and methods for the public sector described in the Danish strategy, supporting the implementation of the AI HLEG recommendations in the Slovakian strategy, and establishing principles for the responsible use of data in the Estonian AI strategy. The Austrian strategy states that design, development, and application (the entire life cycle of AI) should be transparent, trustworthy, legal, and secure, which is why framework conditions will be created.

Examples of ethical AI policy initiatives.

Such activities are in line with the vital role of governments in creating ethical and trustworthy AI, which is prevalent in many of the AI strategies around the world (Guenduez and Mettler, 2022). It is possible, however, that the conflicting roles of governments as regulator and as user of these technologies create tension (Kuziemski and Misuraca, 2020). This may explain why the strategies place a strong emphasis on ensuring ethical AI in general, but give less attention to the use of ethical AI by public administrations themselves.

Interestingly, almost half of all coded initiatives in this category (48.4%) were present in only four countries: the Netherlands, France, Finland, and Norway. These strategies often mention solid ethical frameworks and guidance to help civil servants overcome their ethical concerns in developing and using AI. In contrast, in the Estonian, Lithuanian, German, Bulgarian, Czech and Polish strategies, only one initiative referred to establishing and using ethical AI principles for the public sector to follow. Many strategies do mention the need to develop ethical frameworks and follow ethical guidelines. However, they often do not specify whether public organizations must do so, or only describe that private organisations should develop and use AI ethically. It may also very well be the case that most governments do not see a need to ensure the ethical use of AI in the public sector more than in other domains, which is why there is no specific focus on it.

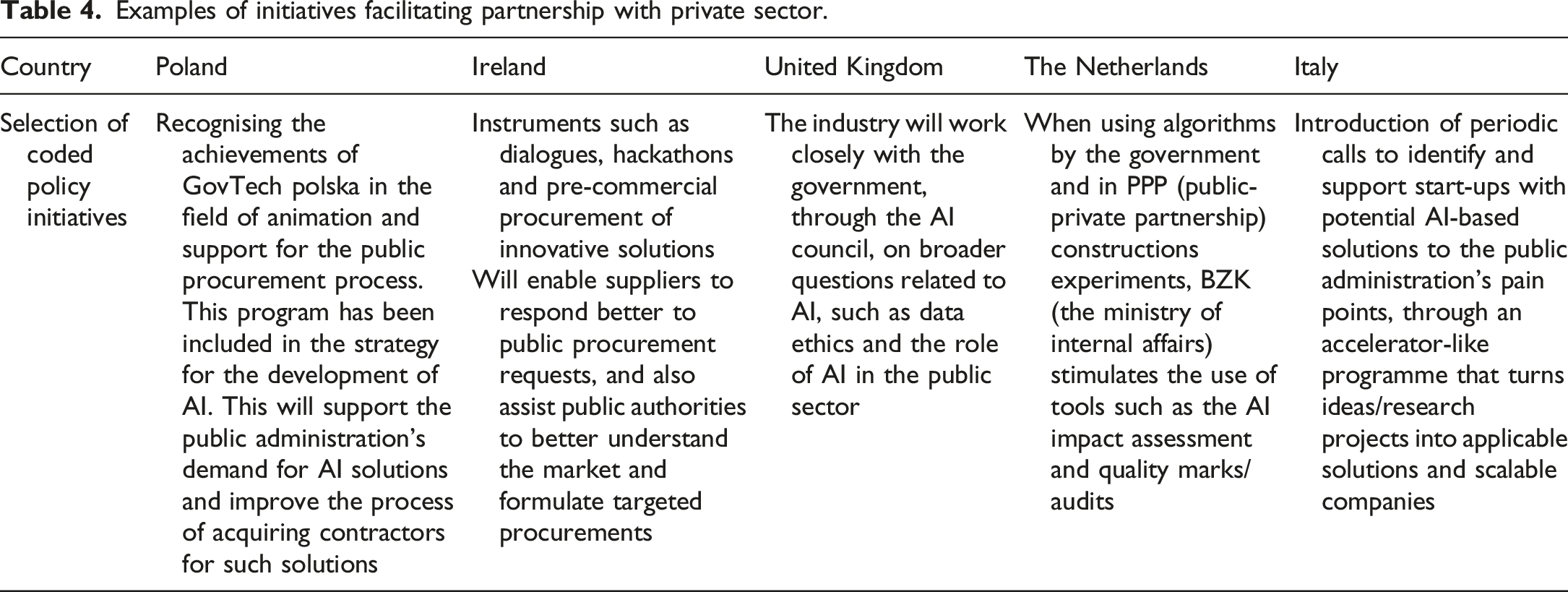

Facilitating partnerships with the private sector

Public administrations are encouraged in the AI strategies to work with the private sector to develop and adopt AI technologies for use in their own organisations: facilitating partnerships with the private sector is mentioned often (11.5%, 121 out of 1050). AI strategies often describe how innovative the private sector is in developing AI applications, which could consequently be procured by public administrations. In the Polish strategy, for example, great emphasis is given to the establishment of GovTech Polska, a new organisation which will assist public administrations in working together with the private sector on innovative AI technologies. The Irish strategy, amongst others, also mentions that mechanisms will be developed to support public procurement as a catalyst for trustworthy AI.

Examples of initiatives facilitating partnership with private sector.

Some countries emphasise facilitating partnerships with the private sector more than others. In the United Kingdom and Italian strategies, for example, 25% of the initiatives refer to actions to strengthen collaboration with the private sector. Portugal, Czechia, the Netherlands, and Poland also have over 15%. There is a risk, however, that these countries put too much emphasis on gaining innovative solutions from the private sector but do not invest enough in obtaining adequate skills to work with AI, in procuring AI effectively, or in building a general level of understanding among civil servants about the potential and dangers of AI.

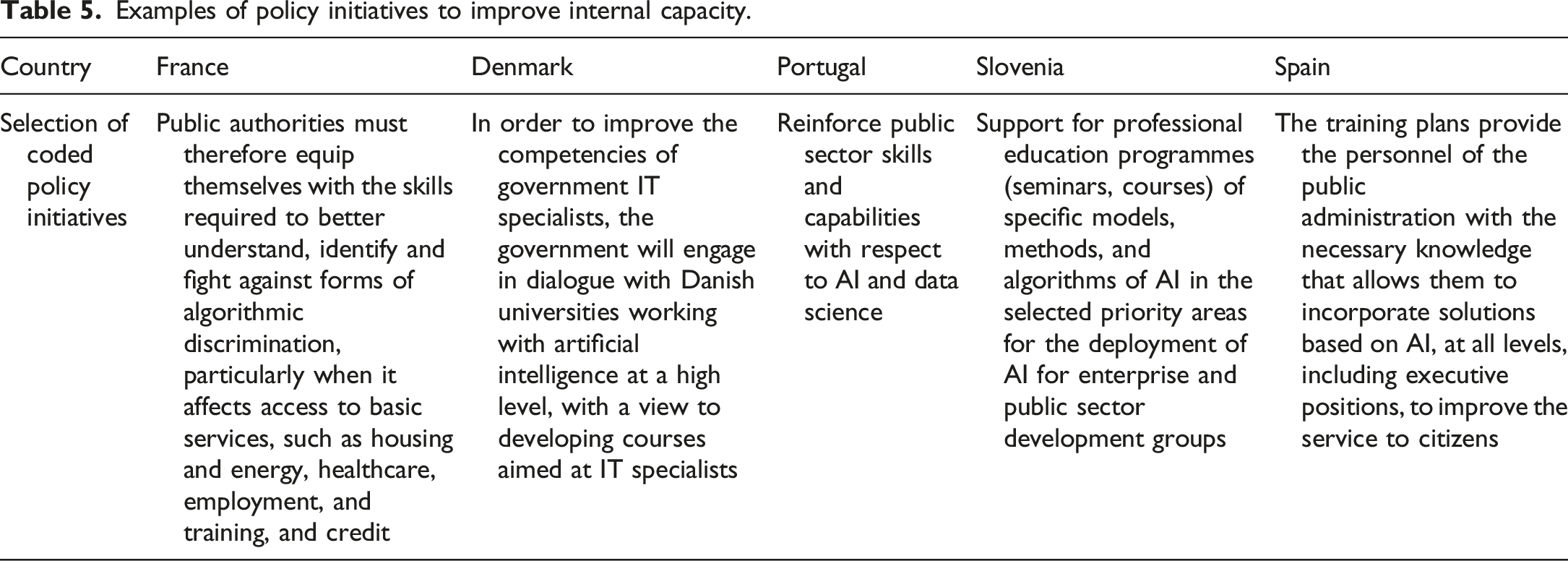

Improving internal competencies

Examples of policy initiatives to improve internal capacity.

Significant differences can be found in the frequency of policy initiatives, both in absolute and relative terms, hinting towards different approaches in boosting the uptake of AI in government. For example, the Spanish AI strategy includes the most policy initiatives to improve public administration’s internal competencies (11 out of 63, 17.5%). This is in stark contrast with other strategies, such as the German, the Bulgarian, the United Kingdom and the Swedish, with only one such initiative referring to improving internal competencies. In the Czech strategy, no such initiative was identified.

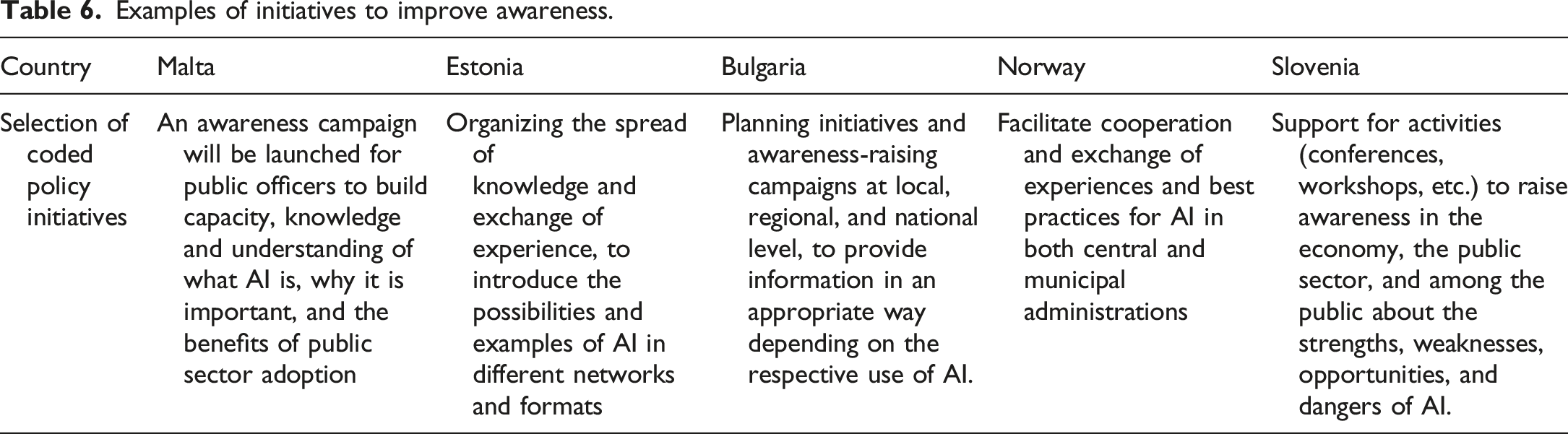

Improving knowledge and awareness of AI

Examples of initiatives to improve awareness.

In particular, the Maltese and Slovenian AI strategies stand out, with many of their policy initiatives described as improving knowledge and awareness of AI.

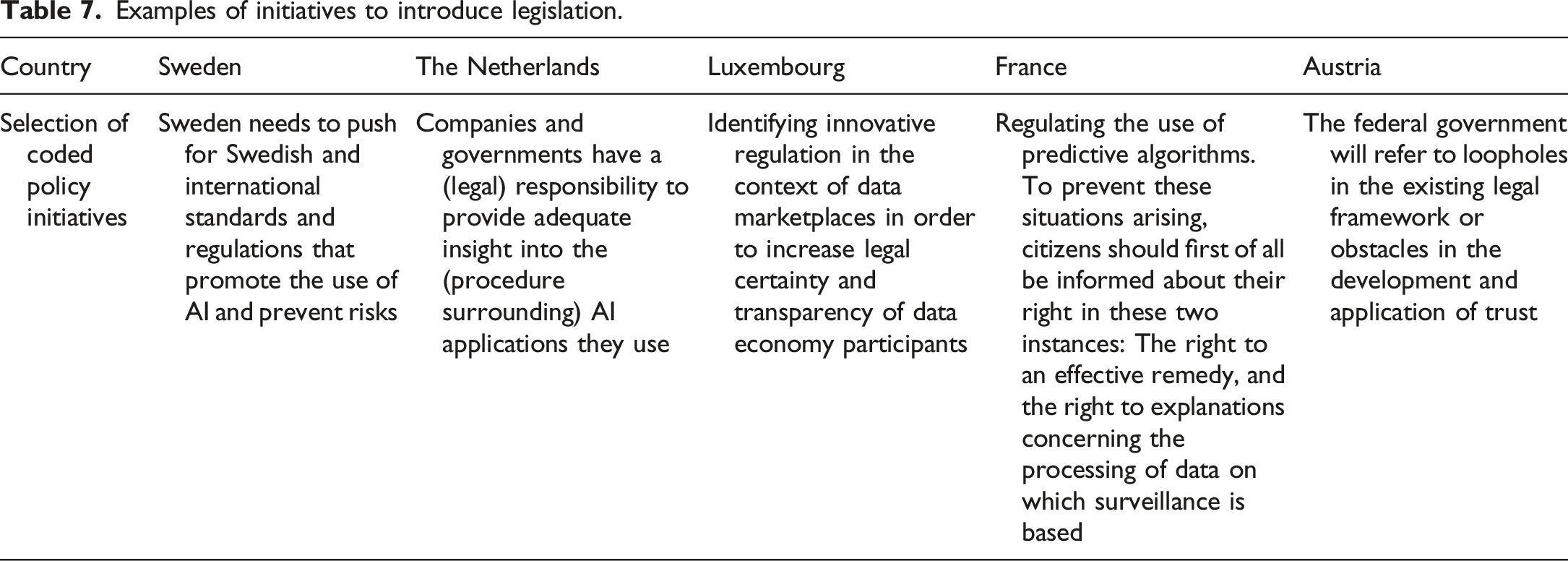

Introduction and review of legislation

To overcome some barriers to developing and using AI in the public sector, a sizable number of the coded policy initiatives describe the introduction of legislation, regulation, or certain rights to tackle legal difficulties (8.3%, 87 out of 1050). The content of the initiatives varies greatly. Some describe new legislation to follow when public authorities use AI, such as regulation that prevents risks of AI, as highlighted in the Swedish strategy, or the obligation for public administrations to provide insight into the AI applications they are using, as in Dutch public law. Several policy initiatives describing new legislation concern improving data access and sharing, overlapping strongly with the first cluster of data-related initiatives described. Another set of legislation is related to public procurement and aims to review, change, or introduce new competition or procurement law to reduce administrative barriers in procuring AI. In this way, a new form of innovative procurement contract could be stimulated to create more favourable conditions for experimentation during a procurement process.

Examples of initiatives to introduce legislation.

Within this category, France stands out with 20 related policy initiatives (17.2% of all of France's initiatives), such as the revision of the reuse directive to open additional public data, the new laws on individual data portability for local authorities to develop AI, and the concept of data of public interest, as highlighted in the Law for a Digital Republic. Other countries mention far fewer initiatives referring to introducing new legislation to support the introduction of AI in public administration.

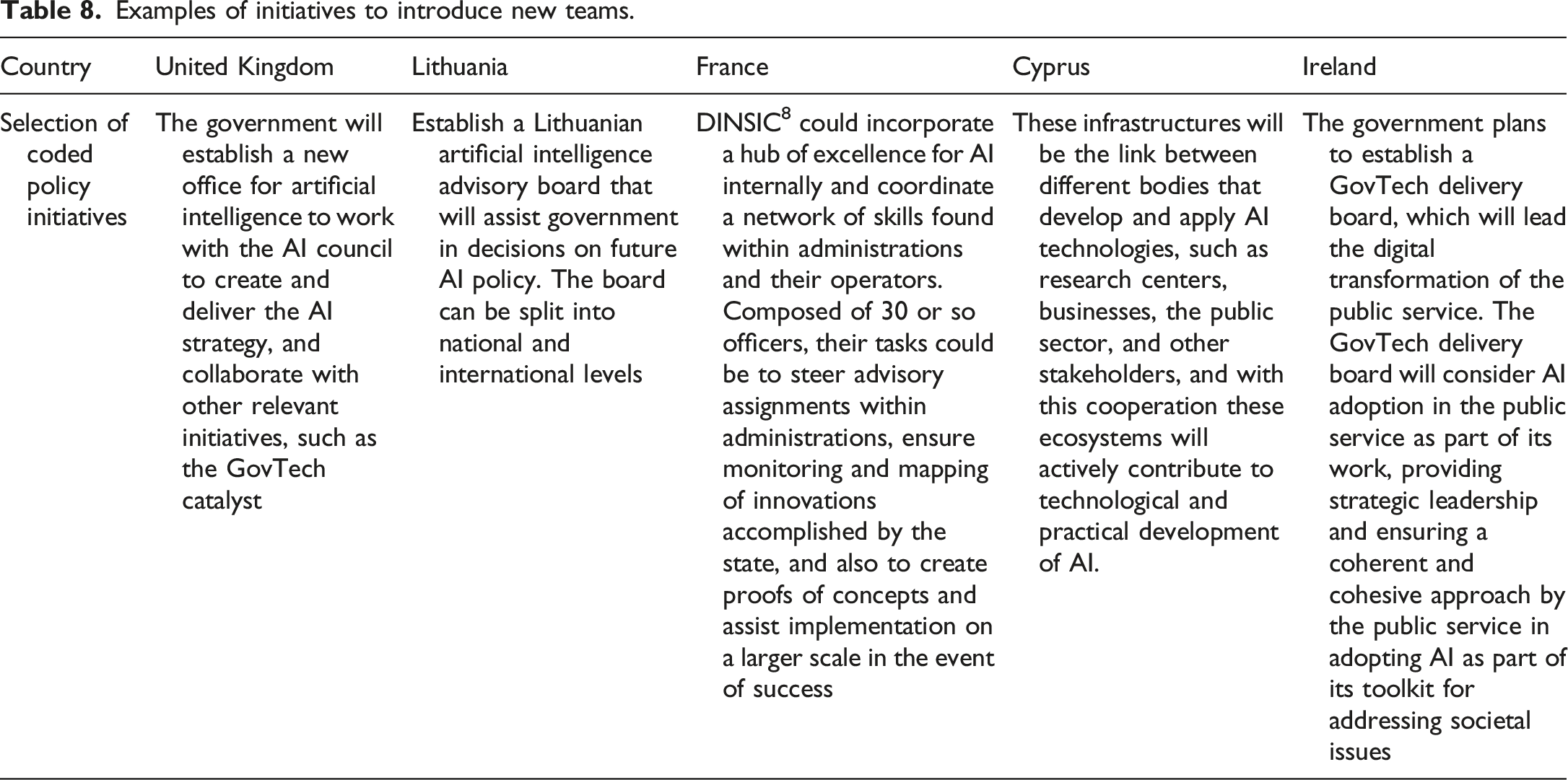

New teams or institutions for AI in government

Examples of initiatives to introduce new teams.

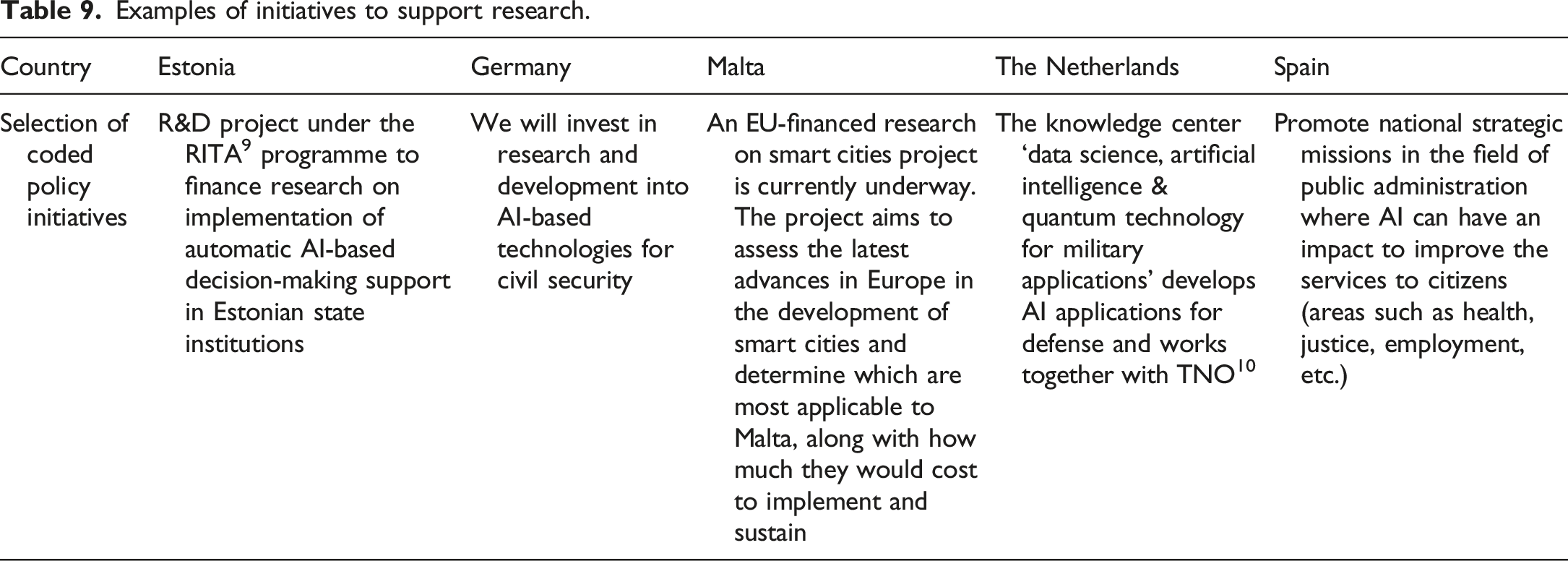

Supporting research on AI in government

Examples of initiatives to support research.

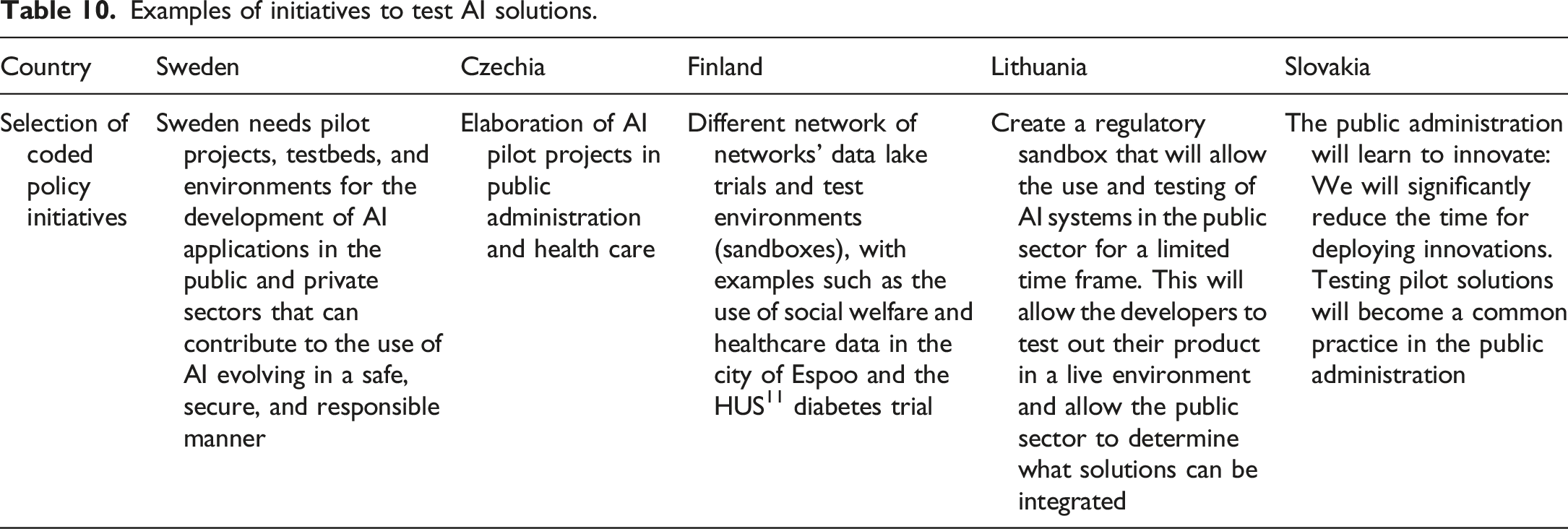

Testing of AI solutions

Examples of initiatives to test AI solutions.

Provision of funding

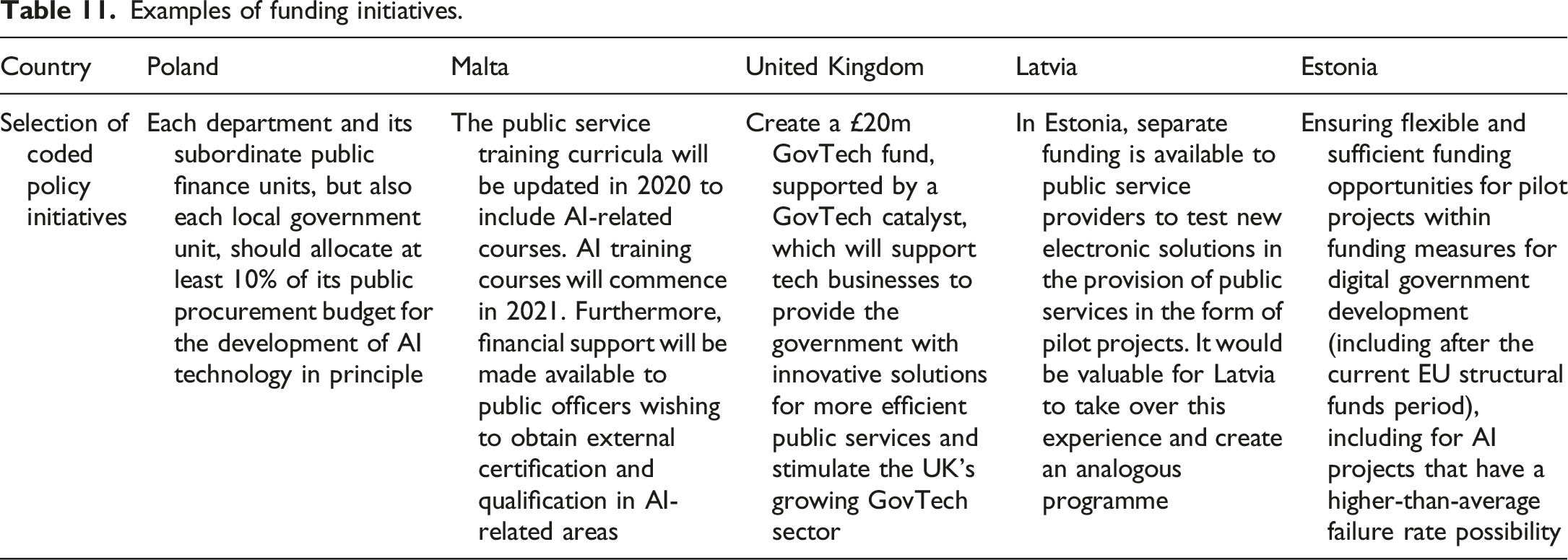

Examples of funding initiatives.

Regarding the funding of AI for use in public administration, Estonia and Latvia often refer to it within their strategies. Many others, in fact, hardly refer to the availability of funding to assist in the uptake of AI in government, or do not make clear whether this funding is for research purposes, pilots or assisting in implementing new AI solutions within the government.

Other initiatives

Lastly, a residual category includes initiatives with less than 3% representation, which are nonetheless worth mentioning. A set of initiatives (27) refers to other technical and infrastructural actions needed for public administrations to develop or adopt AI. These include preparing some form of technical, analytical layer (11) to ensure compatibility of AI, such as reviewing the architecture of AI in Malta, the BüroKratt AI concept for interoperability of public sector AI in Estonia, or creating a structured public database ecosystem to overcome technical barriers for AI in Luxembourg. They may also include initiatives to enhance or establish cloud computing for the public sector’s usage, improve the availability of high-speed internet in public administrations, or make high-performance computing available for government use. The Hungarian strategy states that public institutions should be provided with whichever hardware they require for AI R&D activities, such as supercomputers or other cloud-based software. Other initiatives to support AI within the government include actions to change the working practices and culture of public administrations (22), sharing of standard, reusable AI solutions, such as language models or datasets, across the public sector (21), enhancing cybersecurity (15) and ensuring sufficient political support (7) to advance with the plans of the strategy.

Discussion and concluding remarks

As our analysis shows, there is a fair diversity between the different strategies in how they discuss plans to facilitate the use of AI technologies within public administrations, in the kind of actions they propose to overcome various barriers to AI adoption, and in the propensity to highlight some policy initiatives more than others. It has to be noted that, following a full read of the strategy documents, many passages in the texts are rather generic: they lack concrete descriptions of the plans, mix intentions, wishes and active policy, and are unclear on whether specific initiatives target public sector organizations, private or academic organizations, or society as a whole. This lack of clarity on goals, targets, and instruments has been highlighted by other studies (for example, many strategies lack details on implementation and metrics (Fatima et al., 2020)), but it is arguably even more pronounced when it comes to the policy instruments and goals for stimulating public sector AI (Zuiderwijk et al., 2021). The strategies often highlight shortcomings or challenges that public administrations face in using AI technologies. However, concrete measures to overcome these barriers are omitted, or initiatives lack information or depth, although some exceptions exist.

Given the difficulties that public administrations face in developing and deploying AI technologies, this study analysed which activities AI strategies describe to overcome these difficulties with the research question: “What are the main policy initiatives proposed in AI strategy documents by European governments to facilitate the development and adoption of AI technologies within their public administrations, and how do these initiatives aim to address the barriers faced in the implementation of AI in government?” Many governments seem to favour an approach heavily focused on improving data and other data-related factors to overcome existing barriers to AI development and adoption within their administrations. Such a focus on data is not unexpected, as one of the essential preconditions for AI is access to adequate volumes of high-quality data (Janssen et al., 2020a; Van Noordt and Misuraca, 2020b; Wimmer et al., 2020). The focus on data might be related to the year of publication of the strategies: some strategies were published a few years ago, when data were essential as the fuel for taking the first step towards AI. However, as also highlighted by several studies, public administrations should now realize that, to foster the adoption of AI, data policies need to be complemented by policies that tackle other implementation barriers, such as organizational barriers, lack of skills, or lack of coordination (Giest and Klievink, 2022; Maragno et al., 2022). Therefore, there is a possibility that this reliance on improving data quantity, quality and access may not boost the adoption of AI as much as anticipated, if many other significant barriers remain. This risk is particularly relevant for countries with many initiatives within this category and without policy initiatives to tackle other implementation barriers.

Furthermore, there seems to be a strong focus on GovTech and the private sector to assist public administrations in overcoming development and adoption challenges. There appears to be an underlying assumption that, by supporting the private sector AI ecosystem, the innovative solutions developed will eventually find their way into public administrations. However, challenges to procurement, such as the legal use of data (Harrison and Luna-Reyes, 2022), ownership (Campion et al., 2022), and opacity and secrecy (Mulligan and Bamberger, 2019), can bear serious risks if public administrations themselves cannot manage such partnerships successfully (Tangi et al., 2022).

This requires policies that foster internal capacity, ensuring the presence of internal competencies and awareness of AI solutions, as stressed in policy documents (Kupi et al., 2022; Tangi et al., 2022) as well as in academic work (Desouza et al., 2020; Mikalef and Gupta, 2021). Ensuring that there is no dependency on the private sector in the field of AI may also further ensure that governments’ approaches are aligned with public values, such as increasing inclusion and engagement, rather than the current emphasis on the efficiency values that AI is set to achieve (Toll et al., 2020b; Wilson, 2021). In this direction, readers of the strategies could also notice many actions aimed at boosting the opportunities for the development of AI in the private sector, whereas similar initiatives aimed at boosting the public sector use of AI are lacking (Guenduez and Mettler, 2022). For instance, opening public data seems primarily a strategic goal for private organizations’ development of AI solutions and for providing economic growth, not for improving public services or policymaking through better reuse and sharing amongst administrations.

The severe lack of reference to funding programmes for public sector AI may make it challenging for public administrations, even if data is available, to move forward with initiating AI development. Overcoming implementation barriers and changing work practices beyond the scope of a single pilot require resources to implement organizational changes (Kuguoglu et al., 2021). A mix of policy initiatives that relies strongly on improving awareness and information to act upon often requires adequate financial resources to be successful (Hood and Margetts, 2007), as only introducing many “soft” instruments with a lack of financial or regulatory incentives may run the risk of having them be ineffective in overcoming the barriers faced by public administrations (Van Noordt et al., 2020).

In fact, AI strategies have been described as containing unrealistic funding strategies, despite aspirations to pour many resources into AI (Fatima et al., 2020). Whether the investment is thus aimed at research, the private sector, or the public sector remains unclear. For the public sector specifically, strategies may refer to the Digital Europe Programme or the Recovery and Resilience Facility as potential funding sources for public sector AI, but often lack specifications on what exactly the funding will be used for. Alternatively, it may also be possible that strategies are not the documents describing funding strategies and opportunities, but the noticeable absence requires further investigation.

Limitations and future research

This study features some limitations, which must be taken into account. First, some countries are not included in the analysis, as they have not published an AI strategy yet or are not members of the Coordinated Action Plan of AI. This excludes all non-European countries, which may have different approaches or plans to overcome the barriers to AI development and adoption. Future research may thus require the inclusion of other regions and countries to compare findings between regions, especially since differences between regions have already been identified (Guenduez and Mettler, 2022). Furthermore, as the only documents used were the national AI strategies, other policy documents, such as eGovernment strategies, may have been overlooked. It may very well be that countries’ eGovernment strategies hold additional information on how AI in the public sector will be facilitated. Further research may include a more comprehensive coverage of actions to describe the full spectrum of a specific country or region, as done in Sweden (Toll et al., 2020b). It is also possible that national AI strategies focus more on the apparent data-related challenges as a main priority, and that other documents could include more concrete actions on the identified areas, as these barriers become more visible after experimenting with AI for a while (Kuguoglu et al., 2021).

While this study aims to contribute to the identified research gap on implementation strategies for AI use in the public sector (Wirtz et al., 2021; Zuiderwijk et al., 2021), it still barely scratches the surface in understanding how governments perceive the use of AI, which expected benefits they aim to gain, how they overcome barriers to innovation, and whether the proposed initiatives, in fact, sufficiently tackle the implementation barriers. All these questions remain essential and require further inquiry (Medaglia et al., 2021). It may be possible that certain “styles” or approaches to stimulating AI in government are emerging, with some governments focusing strongly on improving data ecosystems, the private sector and/or internal competencies, similar to the strategic stance towards AI identified earlier (Viscusi et al., 2020), although in this study a clear distinction between countries could not be found. Furthermore, it is unclear whether previous institutional arrangements, such as historical eGovernment progress or public management reforms, influence the likelihood of proposing certain initiatives and not others. It is indeed possible that approaches followed in past eGovernment strategies will be followed with AI technologies as well, since a lot of the discourse on AI in strategy documents is in line with that of eGovernment (Toll et al., 2020b). What the wider consequences for public administration capacity and governance capabilities will be when AI becomes increasingly used in public administration processes is still an open research question.

Supplemental Material

Supplemental Material - Policy initiatives for Artificial Intelligence-enabled government: An analysis of national strategies in Europe

Supplemental Material for Policy initiatives for Artificial Intelligence-enabled government: An analysis of national strategies in Europe by Colin van Noordt, Rony Medaglia and Luca Tangi in Public Policy and Administration

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Joint Research Centre (CT-EX2019D361089-102).

Supplemental Material

Supplemental material for this article is available online.

