Abstract

This paper provides a qualitative review of research related to sexual harassment interventions employed in institutions of higher education (IHEs) and introduces a needs assessment process that IHE administrators can use to inform their choice of intervention. Additionally, this paper provides direction regarding how to assess the impact of sexual harassment interventions, as prevention programs can only be effective if they are continuously evaluated. This review may help researchers identify under-researched sexual harassment-related topics in higher education and help IHE administrators make evidence-based decisions related to the choice, implementation, and assessment of sexual harassment interventions.

Sexual harassment of faculty, staff, and students has been a topic of concern in higher education in the U.S. since the late 1970s when the term “sexual harassment” was first used by activists on the campus of Cornell University (Bellafante, 2018). Sexually harassing behavior can best be understood as falling into one of three categories: gender harassment, unwanted sexual attention, and sexual coercion (Fitzgerald & Cortina, 2018). The most widespread form of sexual harassment both in the workplace and higher education is gender harassment which includes expressing insulting, degrading, and contemptuous attitudes about women or engaging in behaviors that are hostile and exclusionary (Bondestam & Lundqvist, 2020; Fitzgerald & Cortina, 2018). Unwanted sexual attention includes sexual advances that are uninvited, unwelcome, and unreciprocated (e.g., asking for dates, unwanted touching). Finally, sexual coercion involves sexual advances in which an employee or student is offered a benefit for acquiescing or is threatened if they do not (Fitzgerald & Cortina, 2018). Despite recognition of the importance of sexual harassment, researchers have tended to study and write about dating and sexual violence more than sexual harassment and its prevention in higher education. While sexual violence is a serious issue, sexual harassment is more common. Given that the causes of these phenomena are likely to be different and therefore require different interventions, it is important to study sexual harassment in higher education in its own right.

The costs of sexual harassment are well established. Sexual harassment negatively affects victims’ mental and physical health and impairs employees’ work-related attitudes (e.g., job satisfaction, commitment) and outcomes (performance, absenteeism) as well as students’ educational outcomes (e.g., lower grades; Bondestam & Lundqvist, 2020; Fitzgerald & Cortina, 2018; Henning et al., 2017; National Academies of Sciences, Engineering, and Medicine, 2018; Shaw et al., 2018; Wood et al., 2021). Sexual harassment can also negatively impact colleagues and peers who witness it (Berdahl & Raver, 2011; Bondestam & Lundqvist, 2020) and the institutions in which it occurs. Institutions can incur costs from increased absences, turnover, and lower motivation and commitment among employees as well as legal costs if there are formal charges. Unfortunately, there is considerable evidence that formally reporting sexual harassment to their institutions is the least common response taken by students and faculty who experience it (Bondestam & Lundqvist, 2020; National Academies of Sciences, Engineering, and Medicine, 2018).

Despite its long history, and legal prohibitions against it, sexual harassment remains a pervasive problem in IHEs (Cantor et al., 2017). While estimated rates of prevalence vary as a function of the different ways in which sexual harassment is defined and measured, there is little doubt that it is a ubiquitous and pernicious problem (Fitzgerald & Cortina, 2018). This was documented recently in a large-scale study in higher education conducted by the Association of American Universities (AAU) and in systematic reviews of empirical research conducted in higher education (Bondestam & Lundqvist, 2020; National Academies of Sciences, Engineering, and Medicine, 2018). In their 2015 survey of university students, the AAU found that 62% of female and 42.9% of male undergraduates indicated they experienced sexual harassment from a student or employee, while 44.1% of female and 29.6% of male graduate/professional students did (Cantor et al., 2017). Based on their review of empirical research conducted in higher education, the National Academies of Sciences, Engineering, and Medicine (2018) concluded that greater than 50% of female faculty and staff and 20% to 50% of female students experience sexual harassment in academia. Among students, peer-perpetrated sexual harassment is more frequent than faculty/staff-perpetrated sexual harassment (Wood et al., 2021). Studies of faculty, managers, and administrative staff within higher educational institutions find that managers, administrative, and professional personnel are engaged in workplace harassment more than academic faculty (Henning et al., 2017).

The persistence of sexual harassment, the costs it incurs, and the reluctance of targets to report it make the need for academia generally, and IHE administrators more specifically, to focus on sexual harassment prevention efforts particularly urgent. This need is even more evident in the context in which sexual harassment is occurring today, a context in which there is greater social awareness of and mobilization against sexual abuse and harassment in the form of the #MeToo movement. However, despite this obvious need, organizations’ efforts to prevent sexual harassment are lacking and ineffective (Feldblum & Lipnic, 2016).

The purpose of this paper is to provide a qualitative review of the evidence basis for sexual harassment interventions that have been employed in IHEs. Relatively little higher education research has explored the effectiveness of sexual harassment interventions, and when such research is conducted it tends to focus on a limited set of interventions (Bondestam & Lundqvist, 2020). The current paper takes a systemic perspective, considering higher education institutions as a whole as well as their component parts and how they are interrelated. A systemic approach to addressing sexual harassment requires thinking about multiple interventions that target different aspects of the system. Consequently, the current paper summarizes research findings for a range of interventions that have been, and can be, used alone or in combination in the context of higher education.

The review of the literature reveals that while there is limited evidence for the effectiveness of a number of more commonly employed interventions (e.g., policies, education and training), less commonly studied potential interventions (e.g., leadership, organizational practices and systems) may effectively address sexual harassment. Additionally, this paper introduces a process (needs assessment) that IHE administrators can use to inform their choice of sexual harassment interventions. Finally, this paper provides direction on how IHE administrators can assess the impact of the interventions they employ on relevant outcomes in order to make informed decisions about the continued use of the chosen interventions. This review may not only help higher education researchers identify under-researched sexual harassment-related topics in higher education but also help IHE administrators make evidence-based decisions related to the choice, implementation, and assessment of sexual harassment interventions.

Intervention Strategies

Sexual harassment interventions have been described as taking one of three approaches: primary, secondary, or tertiary (Hunt et al., 2010; McDonald et al., 2015). Primary interventions attempt to address the root cause of the problem, preventing the problem from arising in the first place. This approach typically includes the development and communication of sexual harassment policies and the provision of sexual harassment awareness education and training (Hunt et al., 2010; McDonald et al., 2015). Secondary interventions are directed toward how institutions respond after sexual harassment has occurred. This approach focuses on developing and ensuring an effective complaint procedure, preventing further incidents, and dealing with the immediate consequences of the sexual harassment. Finally, tertiary interventions focus on longer-term restorative responses (e.g., provision of counseling) that address lasting consequences of the sexual harassment and support victims after sexual harassment has occurred.

There is a general “. . .absence of empirical evidence examining the effectiveness of many of these strategies” (Hunt et al., 2010, p. 661) with tertiary interventions, in particular, receiving the least research attention (Hunt et al., 2010; McDonald et al., 2015). While this typology is helpful, some intervention strategies span more than one of these approaches, and others do not fit neatly into this framework. Institutional factors including leadership, organizational structure, practices, and systems (not directly related to victim support or complaint procedures) and climate may also play a role in preventing sexual harassment. In practice, a combination of strategies employing multiple interventions across a variety of settings is likely to be most effective (Nation et al., 2003).

The research evidence for the intervention strategies employed by IHEs described below is based on a literature search conducted using a variety of library databases (e.g., ProQuest, ERIC, PsycInfo, Google Scholar) spanning a number of disciplines (e.g., education, psychology, sociology). The search focused on publications from the late 1990s to the present using various combinations of general terms (sexual harassment, prevention, intervention, higher education, university, college, academic, work, and organization) and more specific terms where necessary (e.g., training, policy). Additionally, the reference lists of the publications that were obtained were reviewed for relevant articles that were not identified in the initial search of the databases.

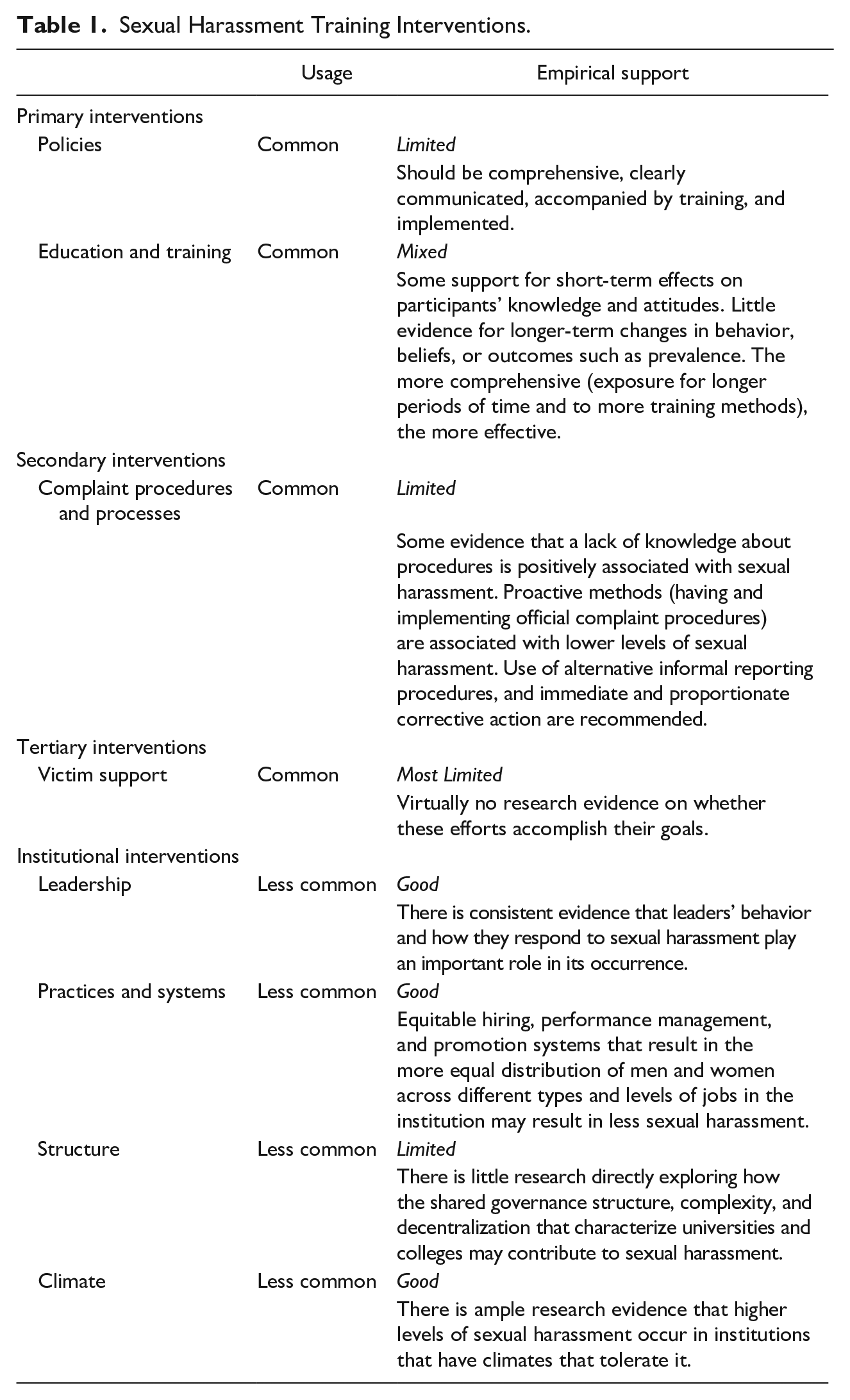

See Table 1 for a list of intervention efforts, the extent to which they are used to address sexual harassment and the extent to which they have been researched and received empirical support. This table reveals a number of interventions that are commonly used despite limited evidence for their effectiveness in preventing or reducing sexual harassment (policies, education and training, complaint procedures and processes, and victim support). These are tools that most IHEs are likely to adopt because they are widely accepted. This table also identifies factors (e.g., leadership, practices and systems, climate) that are less commonly used as prevention strategies but which research suggests can reduce the incidence of sexual harassment. These potential intervention strategies provide additional opportunities for IHEs to address sexual harassment and are discussed below.

Sexual Harassment Training Interventions.

Primary Interventions

Institutional policies

Policy and training are the primary prevention strategies discussed most frequently in the sexual harassment literature. However, while many researchers recommend policies as a primary preventive measure, there is almost no evidence-based research on their effectiveness, including whether they lead to decreased sexual harassment (Bondestam & Lundqvist, 2020; Hunt et al., 2010). A formal sexual harassment policy can establish behavioral guidelines that may serve to deter potential harassers and help victims recognize and report sexual harassment (Hunt et al., 2010). Policy may also influence the extent to which bystanders are likely to report harassment (Jacobson & Eaton, 2018). Some research finds that perceptions of the implementation of sexual harassment policy and procedures negatively affect the incidence of sexual harassment and positively affect employees’ organizational commitment and satisfaction (e.g., Williams et al., 1999). Other research suggests that sexual harassment policies are a reflection of, and can reinforce, an organizational climate that is intolerant of sexual harassment (Fusilier & Penrod, 2015).

The sexual harassment literature and the Equal Employment Opportunity Commission (EEOC) suggest that, among other things, effective policies should: clearly communicate what sexual harassment is and identify prohibited conduct; clearly describe the complaint process (outlining multiple avenues for reporting); be visible and widely disseminated; and clearly articulate the intention to enforce the policy and specify penalties for its violation (Daniel, 2003; McDonald et al., 2015; Reese & Lindenberg, 2004). Most employers and IHEs have a sexual harassment-related policy, in part because such policies can minimize legal liability (Fusilier & Penrod, 2015; Magley & Grossman, 2018). However, the extent to which these policies are publicly available online may differ by type of university, with fewer private universities posting policies (Fusilier & Penrod, 2015). Additionally, research finds that a high percentage of policies lack characteristics identified in the law as essential to policy effectiveness (Fusilier & Penrod, 2015), although features that provide legal protection may or may not be effective in reducing sexual harassment. Evidence suggests that the mere existence of a policy is insufficient to deter sexual harassment (Gruber, 1998; Reese & Lindenberg, 2004). Although they do not always occur, communicating and implementing the policy and providing training related to the policy’s content are essential to its effectiveness (Fusilier & Penrod, 2015; Joubert et al., 2011; Reese & Lindenberg, 2004). Employees tend to be less satisfied with the implementation of their institution’s sexual harassment policy than with its content (Reese & Lindenberg, 2004), and there may be backlash against sexual harassment policies depending on how they are communicated (Tinkler et al., 2007).

Simply put, most public and private not-for-profit universities have sexual harassment-related policies. However, their mere existence tells us little about their effectiveness. Critical to their success is whether these policies are comprehensive, clearly communicated, accompanied by training, and implemented in the way they are intended. Guidelines for policies can be found in the articles referenced above as well as from the U.S. Department of Education’s Office for Civil Rights (OCR; https://www2.ed.gov/about/offices/list/ocr/docs/sexhar00.html).

Education and training

Sexual harassment related training is ubiquitous (Bainbridge et al., 2018) and is a common element of sexual harassment prevention programs (Buckner et al., 2014). This may be, in part, because training can be an effective defense to charges of harassment; EEOC Guidelines call for training, and many states have an explicit policy about sexual harassment training (Buckner et al., 2014). However, some have suggested that training interventions are problematic because they conceptualize the harassment problem at the individual level, and focus on fixing people, rather than taking a more systemic approach that considers the role of the organization (Perry et al., 2019). Many IHEs provide sexual harassment related education and training tailored to different target audiences (faculty, students, and staff) on their campuses (Association of American Universities, 2017). Training programs focus on increasing knowledge of the institution’s sexual harassment policies and procedures, what constitutes prohibited conduct, understanding power dynamics, promoting cultures of respect and civility, how to report harassment, and awareness of campus resources among other topics (Magley & Grossman, 2018). Training methodologies used in these programs involve a combination of workshops, discussions, online videos as well as theater productions (Association of American Universities, 2017). A recent development is bystander intervention programs and related training (Bondestam & Lundqvist, 2020; Quick & McFadyen, 2017). The EEOC now encourages bystander training that trains third parties to recognize and report sexual harassment (Quick & McFadyen, 2017). Peers and colleagues are likely to know that harassment is occurring, to be negatively affected by it, and their willingness to report it may be greater than the target’s (Berdahl & Raver, 2011).

In a review of sexual harassment training research, Roehling and Huang (2018) found that training interventions varied greatly from less involved (using a single training method delivered during a short training period) to more involved (using multiple methods over a longer training period). Almost a third of the studies reviewed included undergraduate students, while the remaining studies relied on samples from public sector organizations including samples from the US military, US government employees and university employees. Sexual harassment training research has tended to explore its impact on participants’ immediate reactions to the training rather than longer-term changes in attitudes and behaviors (Hunt et al., 2010). While few firm conclusions can be made, evidence is accumulating that sexual harassment training has short-term effects on participants’ knowledge and attitudes, but there is little evidence of its impact on longer-term changes in behavior or beliefs or on outcomes such as prevalence (Bondestam & Lundqvist, 2020; Medeiros & Griffith, 2019; Swedish Council for Higher Education, 2020). For example, research shows that training influences one’s likelihood of perceiving any given scenario as sexually harassing (Buckner et al., 2014). Additionally, there is evidence that the more training methods participants are exposed to, and the longer time they spend in training, the more positive the impact on intended training outcomes (Roehling & Huang, 2018). Some researchers have suggested that the general training literature can provide guidance on how to develop evidence-based and effective sexual harassment training (Medeiros & Griffith, 2019; Perry et al., 2009). 
An approach based on training research evidence and theory inevitably leads to a greater consideration of what happens prior to and following the training and to a greater consideration of the institutional and group context (leadership support, climate) in which the training occurs (e.g., Cheung et al., 2017; Goldberg et al., 2019; Perry et al., 2009; Walsh et al., 2013). Evidence-based best practices are most likely to be implemented by employers when there are institutional resources and leaders support training (Perry et al., 2012).

Secondary Interventions

Complaint procedures and processes

How an organization responds to sexual harassment has important implications not only for the individual’s willingness to report sexual harassment when they experience it, but also for the climate the institution fosters (Gruber, 1998). A critical aspect of this response lies in the grievance procedures the institution has in place. There is little academic research on sexual harassment complaint handling in higher education and, as a result, little evidence of its role in prevention (Bondestam & Lundqvist, 2020; Swedish Council for Higher Education, 2020). The limited research that has been done finds that a lack of knowledge about the institution’s grievance procedures is positively associated with sexual harassment (O’Hare & O’Donohue, 1998). Research also finds that institutions that use proactive methods to address sexual harassment, including official complaint procedures, experience lower levels of sexual harassment (Gruber, 1998). Similarly, a study of armed service men and women by Williams et al. (1999) found that perceptions of the military’s efforts to implement sexual harassment procedures (e.g., providing thorough investigations, enforcing penalties against harassers) negatively affected the incidence of sexual harassment and positively affected respondents’ organizational commitment and satisfaction. EEOC recommendations include having procedures that provide complainants with multiple reporting options (e.g., written, verbal, with any representative with whom they feel comfortable). Additionally, because victims tend not to use formal complaint procedures, it is important to have informal procedures for reporting, advice, and resolution available (Berdahl & Raver, 2011).

Following a complaint, steps should be taken to immediately stop the harassment and conduct a fact-finding investigation led by an investigator who is well-trained and objective (Daniel, 2003). The speed of grievance processing is important to perceptions of its fairness (McDonald et al., 2015). The institution should take immediate corrective action including proportionate discipline when harassment has occurred. How severely organizations respond to sexual harassment is positively related to perceptions of how effective the response is in communicating the organization’s intolerance of sexual harassment (Nelson et al., 2007) and organizational intolerance is negatively related to the frequency of sexual harassment (Fitzgerald et al., 1997; Ollo-López & Nuñez, 2018). However, overly severe punishments (e.g., termination of the harasser) may encourage people to ignore harassment or cover it up to avoid the negative outcome. An effective procedure is one that has been communicated, is perceived as fair and is consistently implemented (Berdahl & Raver, 2011; McDonald et al., 2015). Despite a consistent focus on updating systems designed to manage complaints, the majority of students and faculty experiencing sexual harassment do not report it (Bondestam & Lundqvist, 2020). This has led some institutions to address individuals’ reluctance to use formal grievance procedures by providing alternatives. For example, the College of Liberal Arts at Purdue University created the Sexual Harassment Advisors Network (SHAN) to provide advice to students, faculty, and staff who have issues or concerns related to sexual harassment. While SHAN is not intended to replace more formal channels, it provides students, faculty, and staff with more options to discuss and deal with their harassment experiences (Grauerholz et al., 1999).

Tertiary Interventions

Victim support

Once sexual harassment has occurred, there are interventions that IHEs can employ to provide victims with support, rehabilitation, and potentially reparations. Sexual harassment can have devastating effects on targets’ mental and physical health and school and job-related outcomes (Bondestam & Lundqvist, 2020; Fitzgerald & Cortina, 2018; Henning et al., 2017; National Academies of Sciences, Engineering, and Medicine, 2018; Shaw et al., 2018; Wood et al., 2021). Victim support interventions are longer-term responses that aim to address the lasting effects of the harassment, restore individuals’ health and safety and prevent further incidences of harassment and victimization (McDonald et al., 2015). Unfortunately, there seems to be little clear research evidence that support efforts achieve their intended effects (Bondestam & Lundqvist, 2020; Hunt et al., 2010; McDonald et al., 2015).

Institutional Factors

In their systematic review of sexual harassment in higher education, Bondestam and Lundqvist (2020) observed that organizational factors (e.g., leadership, organizational practices and systems such as those related to recruitment, organizational structure) are often overlooked in research on sexual harassment prevention in higher education. The roles of these factors in sexual harassment prevention are considered next.

Leadership

Leaders play an important role in the occurrence of sexual harassment as well as how individuals respond to experiences of it (Perry et al., 2021). When leaders model appropriate behavior and are sensitive to and discourage sexual harassment, harassment is less likely than when they are indifferent to it (Pryor et al., 1993, 1995). Leaders’ enforcement of sexual harassment policy and their efforts to stop harassment when it occurs are negatively related to incidences of sexual harassment, and positively related to employees’ organizational commitment and satisfaction and greater likelihood of reporting harassment (e.g., Gruber, 1998; Offermann & Malamut, 2002; Williams et al., 1999). Leaders who implement practices related to sexual harassment policy and procedures demonstrate their commitment to addressing sexual harassment and contribute to a climate intolerant of it (Gruber, 1998; Offermann & Malamut, 2002). Alternatively, when leaders engage in sexually harassing behaviors, they establish social norms that suggest that it is permissible, and as a result, others are more likely to engage in similar behavior (Pryor et al., 1993). Although the case is often made that leaders shape work climates that have implications for sexual harassment, little research has directly assessed this (Glick et al., 2018; Perry et al., 2021). However, some have suggested that leadership development programs that foster leadership styles (e.g., inclusive leadership) that are incompatible with sexual harassment may be a longer-term strategy for reducing sexual harassment, in part through the more positive climates these leaders foster (Perry et al., 2021). Such programs could be targeted to faculty, staff, and students who hold leadership positions on campus.

Practices and systems

While the section on leadership focused on how leaders might lead in a way that makes sexual harassment less likely, the current section focuses on the role that equitable personnel processes, the representation of women in positions of power and the extent of gender balance in work groups play in sexual harassment. First, institutional practices and systems related to equitable student admissions, and hiring, advancement and promotion of employees generally and women more specifically have long-term implications for harassment (Bell et al., 2002; Berdahl & Raver, 2011; Kabat-Farr & Cortina, 2014; Tenbrunsel et al., 2019). Reward and promotion systems that are based on performance and are transparent and equitable are likely to foster cooperative institutional climates and to reduce sexual harassment (Berdahl & Raver, 2011). By contrast, institutional practices and systems that lack transparency, employ competitive reward systems (e.g., fail to reward group performance), and threaten individuals’ work environments encourage hostility, including sexual harassment, as a way to protect one’s job (Berdahl & Raver, 2011). There is also evidence that organizations with practices designed to help employees balance work and family (e.g., flexible hours) experience lower levels of sexual harassment perhaps because practices designed to improve employees’ well-being positively impact organizational culture (Ollo-López & Nuñez, 2018).

Second, scholars have suggested that gender-equitable systems that increase women’s representation in leadership roles will reduce sexual harassment because female leaders are more sensitive to, and less tolerant of, harassment and less likely to engage in harassment (Bell et al., 2002). A recent study found that the number of women in the upper administration of colleges and universities (presidents, provosts, and chancellors) was negatively and significantly related to harassment claims (Glass et al., 2020). However, the gender of the university president alone did not impact harassment allegations, suggesting that it is a critical mass of women who can effect change. Women’s assumption of leadership positions may foster organizational cultures that are less tolerant of, and more likely to identify and address, sexual harassment (Glass et al., 2020). Third, sexual harassment is less likely to occur in gender-balanced workgroups (e.g., Bell et al., 2002; Gruber, 1998). Women are particularly vulnerable to sexual harassment in male-dominated organizations and where they have low status, low power, and earn less than men (Bell et al., 2002).

The shortage of women in some academic fields and authority positions, and the power differentials that exist (e.g., between tenured and non-tenured faculty; between graduate students and their advisors), make sexual harassment particularly likely in academia (Tenbrunsel et al., 2019). The research reviewed above suggests the importance of equitable hiring, performance management, and promotion systems that result in the more equal distribution of men and women across different types and levels of jobs in the institution (Berdahl & Raver, 2011). Importantly, practice- and system-related changes can occur in the context of campus employment as well as the admissions process.

Structure

The impact of organizational structure on sexual harassment has received little research attention (Ollo-López & Nuñez, 2018). However, some research suggests that structural features of institutions that contribute to increased job satisfaction and commitment (e.g., opportunities for internal mobility, autonomy in decision making) result in lower incidences of sexual harassment (Mueller et al., 2001). There is also evidence that sexual harassment may be more likely in larger work groups where depersonalization is more likely and a sense of community is potentially lower (Berdahl & Raver, 2011). Additionally, sexual harassment appears to be more likely where job responsibilities are more formalized and people’s work depends on the direct supervision of their boss (Ollo-López & Nuñez, 2018).

Universities and colleges typically operate under the principle of shared governance in which oversight of, and responsibility for, the institution are shared by faculty, administrators, and trustees (Tenbrunsel et al., 2019). This governance structure diffuses authority and makes oversight of behavior difficult (Tenbrunsel et al., 2019). Because universities are complex and decentralized, addressing harassment often cuts across a number of university structures which may make it harder for the institution to address harassment systemically. Consistent with this, a recent report by the AAU found that 95% of responding institutions indicated they were developing new coordination or data sharing relationships between offices and programs to address sexual assault and misconduct on campus (Association of American Universities, 2017). This suggests the potential benefits of interventions that create new positions that are clearly vested with the responsibility of oversight related to sexual harassment or that facilitate coordination and communication between existing structures. Structural changes target the manner in which complaints are processed and managed internally by the organization behind the scenes.

Multi-pronged approaches and climate

There is ample research evidence that higher levels of sexual harassment occur in institutions that have climates that tolerate it (e.g., Fitzgerald et al., 1997; Ollo-López & Nuñez, 2018; Perry et al., 2021). In climates that tolerate sexual harassment, employees perceive there to be a greater risk of reporting harassment, a lower likelihood that allegations will be taken seriously and lowered expectations that harassers will be sanctioned. More recent research suggests that other types of climates that promote positive and respectful interactions at work (e.g., climates of civility, justice climates, inclusive climates, and ethical climates) may also be inhospitable to sexual harassment and contribute to deterring it (e.g., Lim & Cortina, 2005; Perry et al., 2021; Rubino et al., 2018). Certain cultures and climates (e.g., those that are more inclusive) may also influence the extent to which a third party observer is willing to support a woman who experiences harassment (Collazo & Kmec, 2019).

Each of the intervention strategies discussed above (e.g., training programs that promote civil behavior, strong policies, and clear procedures for filing complaints) can play a role in fostering a more positive institutional climate that does not tolerate sexual harassment. The cumulative effect of a variety of interventions on climate may in part explain why comprehensive (involving multiple interventions, stakeholders, and settings) rather than singular prevention strategies have been found to be most effective (Nation et al., 2003). In fact, many universities and colleges have developed and use multiple strategies to address sexual harassment. While many factors impact climates, leaders play a particularly important role in shaping organizational and unit-level climates (Boekhorst, 2015). Their own behavior establishes social norms about behaviors that are acceptable (Pryor et al., 1993), and their choice to implement practices related to sexual harassment policy and procedures demonstrates their level of commitment to addressing sexual harassment and contributing to a climate intolerant of it (Gruber, 1998; Offermann & Malamut, 2002).

Intersectionality

Sexual harassment research conducted in the general workplace and in higher education has generally failed to explore how multiple dimensions of difference (e.g., race, gender, sexual orientation) influence individuals’ experience of sexual harassment (Bondestam & Lundqvist, 2020; Fitzgerald & Cortina, 2018). For example, women of color may experience more and different types of harassment compared to white women, white men, and men of color (Fitzgerald & Cortina, 2018). A number of IHEs have developed programs targeting specific constituencies (Association of American Universities, 2017). However, it is unclear whether these programs address intersectionality per se, or merely include a separate program for each specific group. To the extent that individuals with different intersectional profiles have different experiences, their needs may differ, and so too might the programs needed to address them.

Choice of Intervention: Needs Assessment

This section considers how IHE administrators might choose between different intervention strategies. Few would disagree with the importance of implementing sexual harassment prevention efforts in IHEs, and not surprisingly institutions currently offer a wide variety of interventions. While it seems logical to suggest that interventions are likely to be most effective when they address the specific needs of a given institution, it is not always clear that the interventions that are developed and implemented are based on formal assessments of the needs of the institution and the people affiliated with it. A needs assessment involves diagnosing what needs to be trained, for whom, and when (Salas et al., 2012). Implementing a sexual harassment prevention program without first conducting a needs assessment is akin to giving a patient (individual, institution) medicine without fully diagnosing their illness and understanding how it is affecting them specifically.

Different stakeholder groups within higher educational institutions (e.g., faculty, students, staff) likely have different needs that require different interventions. A person analysis can help identify individuals who are most likely to benefit from an intervention and the type of intervention that is most appropriate for them. In some cases a climate survey can serve as a diagnostic tool if it provides insight into the prevalence of sexual harassment, the institution’s perceived tolerance of it, and knowledge about reporting procedures. For example, a climate survey may help identify which stakeholder groups on a given campus experience the highest levels of sexual harassment and therefore should be targeted for immediate action. Supplementary data may be required to determine which target groups have a good understanding of information related to sexual harassment (e.g., reporting channels; institutional policies) and which do not and therefore which groups should be targeted to receive this information. An intervention designed to provide individuals with information about reporting channels is most effective when it targets only those who require this information.

Additionally, an organizational assessment can help identify intervention priorities based on the institution’s goals and determine whether the institution has the resources and will to support interventions that are developed (Salas et al., 2006). For example, if an important goal of an institution is to promote female leaders into positions of authority, an intervention designed to target this specific outcome should be prioritized. It is also important to consider the institution’s organizational climate. Ideally, interventions that align with the institution’s strategic priorities and those that foster an environment that supports the intervention should be given top priority. Annual climate surveys may provide useful information about the climate in which interventions are or will be implemented, and thus help identify potential obstacles to the success of these interventions. It is also important to assess the institution’s resources and will; interventions will only be successful when the institution provides financial support, proper equipment, and necessary materials to develop and implement interventions.

An important outcome of a needs assessment is an understanding of which needs, and whose needs, the intervention is designed to address, and thus greater clarity around the outcomes that the intervention should be designed to impact (e.g., greater awareness, changed behavior, improved climate). The needs assessment should be tied directly to an evaluation plan (National Academies of Sciences, Engineering, and Medicine, 2018). For example, some institutions may identify and prioritize changing a particular group’s (e.g., members of fraternities and sororities) attitudes related to sexual harassment, while others may focus on addressing the institution’s need to limit legal vulnerabilities by reducing sexual harassment behavior.

Intervention Evaluation

Research has not evaluated the effects of major efforts designed to prevent sexual harassment (Bondestam & Lundqvist, 2020). However, to be effective, prevention programs must be continuously evaluated (Nation et al., 2003). Information learned from evaluations of interventions serves a number of purposes (Griffin, 2012). This information can be used to justify the allocation of resources and time, assess whether the intervention is having the intended impact, and consequently whether elements or the whole of the intervention need to be revised or discontinued. Research in the context of training interventions finds that the frequency of evaluations and what is evaluated (e.g., reactions, behavior, knowledge, results) have implications for whether the training intervention effectively transfers beyond the training program. For example, transfer of training beyond the training context is more likely when organizations evaluate their training more frequently, and when they assess the impact of training on trainees’ behaviors and department and organizational performance rather than on trainees’ reactions to the training and how much they learned in the training (Saks & Burke, 2012).

Despite all of the good reasons to evaluate, research on program evaluation and particularly training interventions indicates that few institutions do it. Research has identified obstacles that limit the extent to which program evaluation occurs including: lack of resources, assumption that the program works, a lack of agreement on what should be evaluated, and a lack of knowledge about how to conduct evaluations. Institutions may also be reluctant to evaluate their sexual harassment prevention programs due to concerns that learning that they are potentially ineffective may put them in legal jeopardy despite increasing evidence that employers have to establish that their interventions (e.g., sexual harassment training, antiharassment policy) are effective to obtain legal protection (Roehling & Huang, 2018). When evaluation is undertaken, there is often a disconnect between what is measured and the outcomes training is intended to impact (Medeiros & Griffith, 2019). For example, when evaluation of training is undertaken it tends to focus on the affective reactions of trainees (satisfaction with the training) which may be weak predictors of training effectiveness (Griffin, 2012; Wang & Wilcox, 2006). Next, I recommend how institutions can begin to evaluate the sexual harassment intervention programs they implement.

Choose Appropriate Program Outcome Measures

Program evaluations fall into one of two categories: formative and summative. Formative evaluations focus on improving the quality of the program, including its delivery and design. Summative evaluations are used to assess whether the program achieved its intended goals and outcomes (Griffin, 2012; Wang & Wilcox, 2006). Further, summative evaluations can focus on short-term outcomes such as reactions of and learning by participants or longer-term outcomes such as behavior change and institutional impact (Wang & Wilcox, 2006). Historically, training evaluation has been based on outcomes from these four hierarchical levels: reactions, learning, behavior, and results (Salas et al., 2012), with the majority of institutions relying on the first two levels. In other words, evaluation typically focuses on short-term outcomes that occur immediately after an intervention while ignoring its longer-term impact.

Reactions might refer to whether the participant liked the program and thought it and the person delivering the program were effective. In the context of training interventions, scholars have suggested that questions about the usefulness of the intervention may be more strongly associated with learning than questions about whether participants liked the intervention (Aguinis & Kraiger, 2009). It may also be useful to ask people how interested and motivated they are to participate in the intervention as these perceptions may have implications for its success (Wang & Wilcox, 2006). Learning outcomes indicate how well participants learned program-provided facts and information (e.g., understand the institution’s policy; know what different types of sexual harassment are; understand the institution’s reporting procedures). Behavioral evaluation pertains to the extent to which the program leads to behavioral change outside of the program. This requires direct observations of behavior outside of the program, which is often difficult, time-consuming, and costly (Tannenbaum & Woods, 1992). This might include observations that program participants are more willing to report inappropriate behavior when they are a third party. Finally, results assess whether the program impacts institutional outcomes such as a reduction in formal complaints of sexual harassment or turnover related to sexual harassment. These distinctions are important and care must be taken to collect the data that are most consistent with program goals. For example, while participant reactions can be used to understand interest, attention, and motivation to engage with a program, they say little about whether the person has successfully acquired new skills or knowledge. However, learning evaluations that measure knowledge and skills can be used together with reaction evaluations to improve a program.

Addressing whether a program is effective requires a clear idea of the purpose of the program and what the program is meant to impact and is likely to require multiple measures of different types of outcomes (e.g., reactions, learning, behavior; Salas et al., 2012). Greater clarity of purpose can be achieved by the needs assessment which can help identify who is in need (e.g., undergraduates do not have a good understanding of the institution’s reporting channels) and what is needed (provision of information about reporting channels). An intervention program designed to provide this information could then be evaluated on the basis of the extent to which undergraduate students who participate in the program understand the various reporting channels available to them (a learning outcome perhaps measured by a quiz). Collecting data on a given outcome from multiple sources where appropriate (e.g., program participants, peers) may provide evidence of convergence and thus greater confidence in results (Griffin, 2012).

Additionally, it is important to consider how quickly evaluation information is needed and how long it will take for the program to affect the outcomes of interest (McLinden, 1995; Tannenbaum & Woods, 1992). For example, a program designed to increase participants’ knowledge will likely show effects sooner, and could therefore be assessed more quickly, than a program designed to change behaviors, which will likely require a longer window. The choice of outcome measures should be based on the results of the needs assessment as well as the purpose for which the measures are being used. For example, measures needed for compliance reporting may be different from those that may be helpful for making improvements to the prevention program. Unfortunately, institutions tend to use data that are easily measured or available rather than obtaining data that align with program objectives (Funnell, 2000). Consistent with this, in their review of research on sexual harassment and assault training programs, Medeiros and Griffith (2019) observed that there is a general “. . .misalignment between the constructs being measured and the intended outcomes of training” (p. 10). Once appropriate outcomes have been identified, attention must be given to finding or developing valid measures.

Choose Appropriate Evaluation Designs

Once relevant outcomes are identified, the next step is to choose an evaluation design. Before a particular evaluation design is adopted, the level of evidence required to show program impact must be decided. Typically, greater effort (i.e., more rigorous and sophisticated designs) is required to establish greater evidence that the program is effective. More rigorous designs, which include the use of control groups and pretests, help rule out alternative explanations for an apparent program effect. More rigorous evaluation designs are also more costly and require more skill to employ. However, these designs are not always necessary depending on the purpose of the evaluation (McLinden, 1995). For example, less rigor is required to establish how open participants are to a new program, which can be assessed by collecting reaction data at the end of a program. However, if the program is large (impacting a large number of people), ongoing, and perceived as important by the institution, a more rigorous design may be appropriate. Therefore, it is important to decide up front what level of evidence is required to establish program effectiveness.

Many have suggested that the most powerful experimental designs (randomized controlled trials) are difficult, if not impossible, to implement in real world settings, particularly when they involve human program participants as is the case in sexual harassment prevention programs (Chatterji, 2007; Kraiger et al., 2004). However, some simpler quasi-experimental designs may be effective. For example, where possible, it can be useful to make comparisons between those who have been exposed to the program and a control group of individuals who have not. Logically, if both groups are impacted by the same extraneous factors (e.g., college communications), these factors are less likely to explain differences between the two groups. It may also be possible to use individuals who are scheduled to participate in the program but have not yet done so as a comparison group for those who have. Where comparison groups are not available or possible, using trend lines may be a possibility: tracking the outcome of interest (e.g., level of satisfaction with reporting sexual harassment procedures as measured in an annual campus climate survey) over time (Shadish et al., 2002). Outcome measures (level of satisfaction) collected at multiple times prior to the program can be used to forecast future trends; support for program effectiveness occurs when the post-program data fall above the forecasted trend line.
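The trend-line logic can be illustrated with a minimal sketch. The survey years, satisfaction scores, and function names below are hypothetical and are not drawn from any study cited here; the sketch simply fits a straight line to pre-program survey means and compares the post-program observation with the forecast. A real evaluation would also need to consider uncertainty around the forecast and rival explanations for any gap.

```python
def fit_trend(years, scores):
    """Fit an ordinary least-squares line through (year, score) points."""
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(scores) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, scores))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def forecast(slope, intercept, year):
    """Project the pre-program trend forward to a given year."""
    return intercept + slope * year


# Hypothetical annual climate-survey means (1-5 satisfaction scale),
# all collected before the program was introduced.
pre_years = [2017, 2018, 2019, 2020]
pre_scores = [3.0, 3.1, 3.2, 3.3]

# Hypothetical first survey after the program was introduced.
post_year, post_score = 2021, 3.9

slope, intercept = fit_trend(pre_years, pre_scores)
expected = forecast(slope, intercept, post_year)
gap = post_score - expected  # positive gap: outcome exceeds the forecast

print(f"forecast={expected:.2f}, observed={post_score:.2f}, gap={gap:.2f}")
```

A positive gap is consistent with, but does not by itself establish, a program effect; the more pre-program measurement points that are available, the more credible the forecasted trend becomes.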

Evidence of impact can also be demonstrated by showing the outcome targeted by the program (e.g., knowledge of the institution’s reporting procedures) is higher following the program compared to before (pre-test/post-test design). An even stronger version of this design would be to compare changes in outcomes that are expected to change as a function of the program (planned) relative to those that are not (unplanned; Haccoun & Hamtiaux, 1994). If, for example, a program is designed to improve participants’ ability to identify different types of sexually harassing behavior, responses to items assessing this knowledge should improve by a greater amount than items assessing knowledge of the institution’s sexual harassment reporting procedures (Haccoun & Hamtiaux, 1994; Kraiger et al., 2004). Finally, in some cases, assessing impact only after the intervention may be appropriate if the program developers are more interested in whether a particular level of the outcome (skill, knowledge, performance) has been achieved rather than the amount of change in that outcome (Sackett & Mullen, 1993). For example, a program designed to impart information about the institution’s resources for sexual harassment can establish effectiveness by demonstrating that program participants are familiar with at least 80% of the campus resources related to filing a complaint of sexual harassment. In this case, it may be less important to establish how many more resources participants are familiar with following the program (pre-post program change), than that they are familiar with 80% of them (i.e., achieved the benchmark). It is typically harder to make the case for program impact when designs without a control group are used. However, when less rigorous designs are used, they should include a careful investigation (using interviews or questionnaires) into whether other extraneous factors (e.g., course curricula, other campus initiatives) may have played a role in what appears to be a program effect (Sackett & Mullen, 1993).
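As a rough illustration of the two single-group designs described above, the following sketch compares pre-post gains on planned versus unplanned items and, separately, checks a post-only benchmark. All scores, variable names, and the helper functions are hypothetical; the 80% threshold mirrors the example in the text. This is a descriptive sketch only, and an actual evaluation would typically add a statistical test of the difference in gains.

```python
from statistics import mean


def mean_gain(pre, post):
    """Average post-minus-pre change across participants."""
    return mean(after - before for before, after in zip(pre, post))


def share_meeting_benchmark(scores, benchmark=0.80):
    """Proportion of participants at or above the benchmark (post-only design)."""
    return sum(score >= benchmark for score in scores) / len(scores)


# Hypothetical proportion-correct scores for four participants.
# Planned items: content the program targets (types of harassing behavior).
planned_pre = [0.50, 0.40, 0.60, 0.50]
planned_post = [0.80, 0.70, 0.90, 0.80]
# Unplanned items: content the program does not target (reporting procedures).
unplanned_pre = [0.50, 0.60, 0.40, 0.50]
unplanned_post = [0.55, 0.60, 0.45, 0.60]

planned_gain = mean_gain(planned_pre, planned_post)
unplanned_gain = mean_gain(unplanned_pre, unplanned_post)

# The pattern favors a program effect when targeted items improve more.
supports_effect = planned_gain > unplanned_gain

# Post-only benchmark: hypothetical share of campus resources each
# participant could identify after the program.
resource_familiarity = [0.85, 0.80, 0.75, 0.90, 0.95]
share_at_benchmark = share_meeting_benchmark(resource_familiarity)

print(f"planned gain={planned_gain:.2f}, unplanned gain={unplanned_gain:.2f}")
print(f"share at or above benchmark={share_at_benchmark:.2f}")
```

The unplanned items act as a within-group stand-in for a control group: if general campus messaging were driving improvement, both sets of items would be expected to rise together.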

Finally, where quasi-experimental and experimental designs are not feasible (e.g., when only correlational data obtained from a survey or from a large database are available), a confirmatory evaluation approach may help strengthen support for a causal relationship between program participation and targeted outcomes (Chatterji, 2016; Reynolds, 2005). This approach is based on a clear theory of how the program is expected to impact the outcomes of interest and looks for and interprets patterns of relationships based on the theory. The following are examples of the types of evidence that can provide greater support for conclusions that the program impacted targeted outcomes (Chatterji, 2016; Reynolds, 2005):

(1) Temporality: When outcomes are measured after program participation.

(2) Gradient: When greater program intensity (e.g., number of participation hours, number of sessions attended) is accompanied by greater outcomes.

(3) Size: When size of the relationship between the program and outcomes is sufficiently large.

(4) Specificity: When a specific type of intervention consistently influences a specific type of outcome.

(5) Consistency: When the intervention impacts the same outcomes similarly across contexts and groups of people.

(6) Coherence: When the findings taken as a whole provide a convincing story for the effects of the program on outcomes.
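To make one of these criteria concrete, the gradient check can be sketched as a simple correlation between program intensity and the outcome. The participation hours and quiz scores below are hypothetical, and the Pearson coefficient is only one of several ways an evaluator might examine a dose-response pattern; it is offered as an illustration rather than as the procedure used by the authors cited above.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


# Hypothetical data: hours of program participation and a post-program
# knowledge quiz score (0-100) for five participants.
participation_hours = [1, 2, 3, 4, 5]
quiz_scores = [55, 60, 70, 72, 85]

r = pearson_r(participation_hours, quiz_scores)

# A clearly positive r is consistent with the gradient criterion:
# greater program intensity accompanies greater outcomes.
print(f"gradient (Pearson r) = {r:.2f}")
```

A positive gradient on its own remains correlational; under the confirmatory approach it gains weight only when it appears alongside the other forms of evidence listed above, such as temporality and consistency.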

Program Evaluation 2.0

In practice, institutions may implement sexual harassment prevention programs that are complex because they involve multiple intervention strategies, target multiple stakeholders (faculty, staff, and students), are delivered by human agents, and are housed in institutions that are themselves complex. Some scholars have suggested that evaluating complex social programs requires a different approach than evaluating programs that are less complex (Chatterji, 2007, 2016). Complex social programs often do not lend themselves to the most rigorous experimental designs; they are hard to standardize due to human delivery agents, difficult to randomly assign and manipulate, and often more than one program can influence outcomes of interest. As a result, it is difficult to isolate the net effects of the entire program on targeted and measurable outcomes over and above the effects of other contextual factors. This reality runs counter to assumptions of a traditional evaluation approach, in which a standardized treatment can be experimentally manipulated and randomly assigned to a clearly defined target group, resulting in an indisputable causal impact on targeted outcomes (Chatterji, 2016).

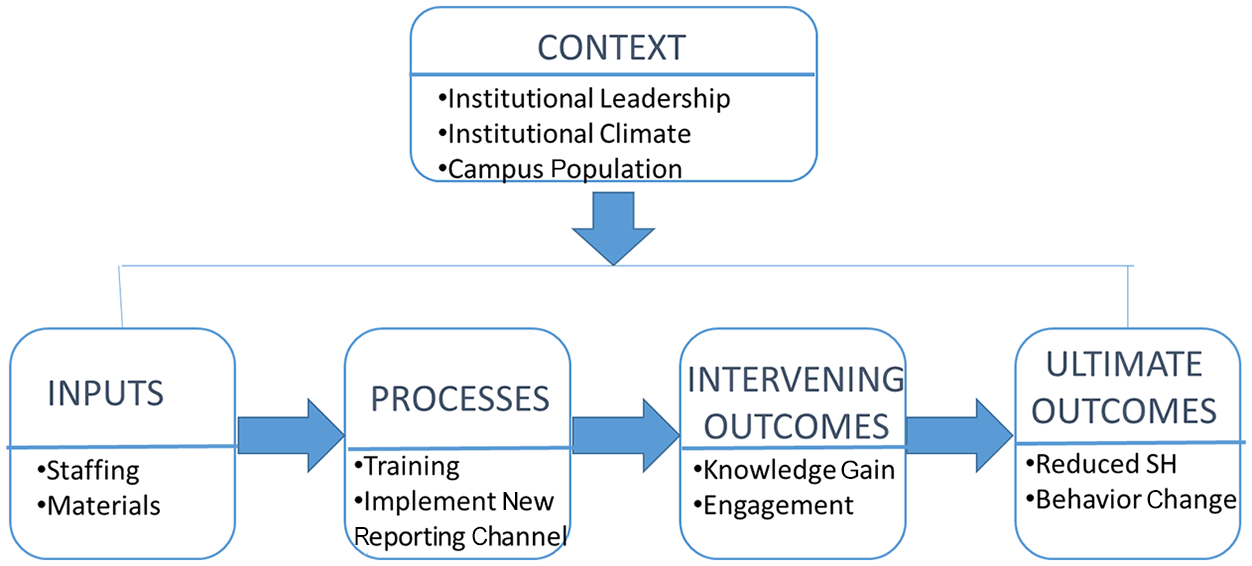

Two approaches have been suggested to evaluate the effects of complex programs on targeted outcomes. First, it may be helpful for multiple stakeholders in the institution, including those involved in the development and implementation of the program, to develop a “logic model” or “program theory” which depicts the causal pathways by which the program is expected to lead to outcomes in target populations before it is evaluated (Chen & Rossi, 1983). The logic model makes the underlying assumptions about how the program is expected to work explicit (Reynolds, 2005). This model can then be used to guide the evaluation process (Chatterji, 2004). The model can be based on social science theory, different stakeholder beliefs, and local information. The model should: identify program relevant constructs (e.g., different aspects of the content of the program and how it is delivered), map relationships between these and program outcomes, and identify aspects of the larger context (e.g., campus population, institutional leadership, climate) that may influence the program and outcomes (Chatterji, 2004, 2016). Additionally, this model should outline the relationship between the program and a sequence of hierarchical outcomes: immediate (e.g., intended targets participate in the program), intermediate (e.g., participants learned something), and long-term impacts (e.g., there is greater intervention on the part of bystanders on campus; Funnell, 2000). For each level, attention should be given to: what the outcome of interest is, what success looks like, what program factors and non-program factors may impact success, and what information should be collected, from what sources, and using what methods (Funnell, 2000).

The collection of sequential outcomes over time provides information about intervening processes that affect program effectiveness and allows course correction along the way (Chen & Rossi, 1983). Different questions about impact are likely to arise during different phases of the program (e.g., early compared to later stages) and these different questions will require different methodological approaches. For example, earlier in the evaluation process, the model can be used to assess whether the program is actually being implemented in a way that is consistent with how it was conceived. As data are collected and changes to the program are made, the model may evolve over time, and incorporate feedback loops (e.g., showing how outcomes impact contextual factors). See Figure 1 for a depiction of a sample logic model that may guide the implementation and evaluation of a sexual harassment prevention program.

Figure 1. Sample logic model.

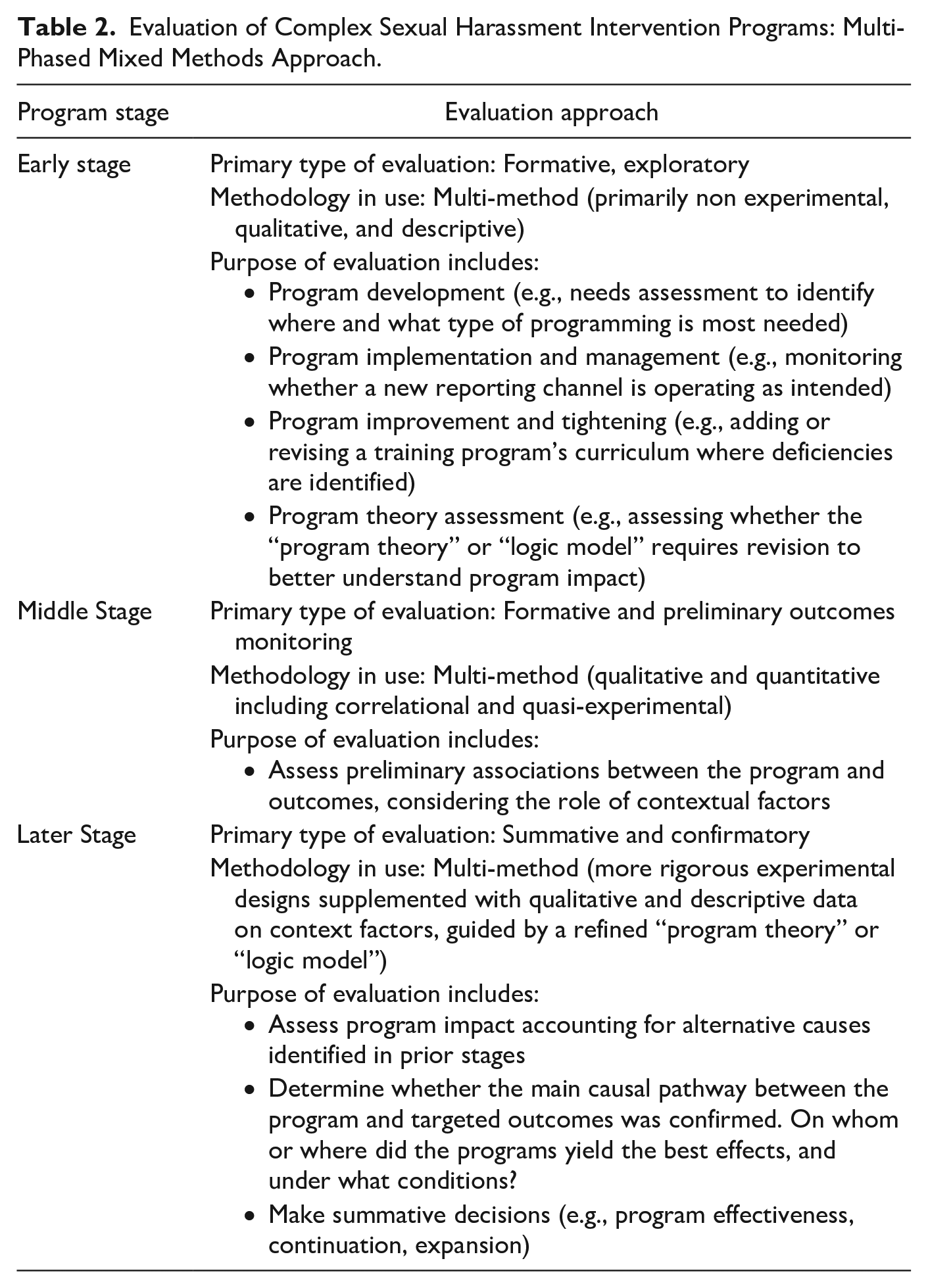

Some scholars have also advocated for multi-phased mixed methods designs that rely on a logic model in addition to other strategies. These designs employ qualitative and quantitative (correlational, quasi-experimental, and experimental) research methods at different points in the life of the program to assess whether it had the intended effects (Chatterji, 2004, 2016; McLinden, 1995). This approach suggests adopting a more exploratory perspective in earlier phases where the goal is to learn about the organizational context, refine and stabilize the content and delivery of the program and better understand the context in which it is implemented. Only when a program is implemented successfully (and the logic model may help establish this) can it be determined whether it has had its intended impact (Chen & Rossi, 1983; McLinden, 1995). The next, middle, stage may involve preliminary examination of relationships between the program and its intended outcomes. Finally, guided by an underlying logic model or program theory, later stages would take a more confirmatory approach and more formally assess the impact of the program on intended outcomes using the comparative experimental or quasi-experimental designs described earlier (Chatterji, 2004, 2007, 2016). This approach suggests that more rigorous evaluation designs should occur in later stages when the program is better defined, and potentially confounding environmental and organizational variables are more clearly identified and can be observed and analyzed. Across these phases, multiple qualitative (e.g., interviews) and quantitative (e.g., surveys) methods may be used for process and outcome assessments, and complement one another to provide a more comprehensive understanding of the program (Chatterji, 2016).

Table 2 summarizes what the approach proposed by Chatterji (2004, 2016) might look like when applied to a hypothetical sexual harassment prevention program comprising multiple moving components in a higher education institution. For example, a program like this might include: education and policy training offered to students and employees at all levels; new institutional offices and processes for reporting and addressing incidents of sexual harassment; and maintenance of longitudinal survey data and records on sexual harassment incidents.

Table 2. Evaluation of Complex Sexual Harassment Intervention Programs: Multi-Phased Mixed Methods Approach.

Conclusion

IHE administrators are responsible for identifying and implementing sexual harassment prevention efforts aimed at a diverse set of stakeholders. These prevention efforts will be more successful when administrators adopt an evidence-based approach to selecting interventions, implementing them, and assessing their impact. Reflexively reaching for traditional policy and training tools, in the absence of research evidence and needs assessments, wastes institutional resources and fails to deliver intended outcomes. Instead, IHEs should use needs assessments to inform program development (e.g., a person assessment may suggest that different types of programs are required for different stakeholders), identify where problems lie (e.g., within labs or groups that have higher rates of sexual harassment), and establish program priorities (e.g., deciding who most requires an intervention and when). Then, IHEs can adopt a systematic approach to program evaluation, using theory to guide data collection. IHEs already collect an extraordinary amount of data related to sexual harassment (in general) and the impact of prevention interventions (more specifically; Association of American Universities, 2017).

Unfortunately, institutions are implementing complex prevention programs without a parallel emphasis on systematically collecting data or systematically analyzing the data they already have related to program effectiveness—making it impossible to identify the prevention programs that might deliver the greatest value.

Footnotes

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.