Abstract

The spread of modern digital technologies, such as social media online platforms, digital marketplaces, smartphones, and wearables, is increasingly shifting social, political, economic, cultural, and physiological processes into the digital space. Social actors using these technologies (directly and indirectly) leave a multitude of digital traces in many areas of life that sum up an enormous amount of data about human behavior and attitudes. This new data type, which we refer to as “digital behavioral data” (DBD), encompasses digital observations of human and algorithmic behavior, which are, amongst others, recorded by online platforms (e.g., Google, Facebook, or the World Wide Web) or sensors (e.g., smartphones, RFID sensors, satellites, or street view cameras). However, studying these social phenomena requires data that meets specific quality standards. While data quality frameworks—such as the Total Survey Error framework—have a long-standing tradition survey research, the scientific use of DBD introduces several entirely new challenges related to data quality. For example, most DBD are not generated for research purposes but are a side product of our daily activities. Hence, the data generation process is not based on elaborate research designs, which in turn may have profound implications for the validity of the conclusions drawn from the analysis of DBD. Furthermore, many forms of DBD lack well-established data models, measurement (error) theories, quality standards, and evaluation criteria. Therefore, this special issue addresses (i) the conceptualization of DBD quality, methodological innovations for its (ii) assessment, and (iii) improvement as well as their sophisticated empirical application.

Introduction

The spread of modern digital technologies, such as social media online platforms, digital marketplaces, smartphones, and wearables, is increasingly shifting social, political, economic, cultural, and physiological processes into the digital space. Social actors using these technologies (directly and indirectly) leave a multitude of digital traces in many areas of life that sum up an enormous amount of data about human behavior and attitudes. This new data type, which we refer to as “digital behavioral data” (DBD), encompasses digital observations of human and algorithmic behavior, which are, among others, recorded by online platforms (like Google, Facebook, or the World Wide Web) or sensors (like smartphones, RFID sensors, satellites, or street view cameras) (Wagner et al., 2025). By including “algorithmic behavior,” we acknowledge that in some (or many) instances, this observable behavior is due to non-human agents, e.g., bots. This is in line with the term “machine behavior”, introduced by Rahwan et al. (2019, p. 477), referring to intelligent machines as a class of actors with particular behavioral patterns and ecology. The term DBD is closely related, but not identical, to the term “Big Data” and shares most of its characteristics, such as velocity, volume, value, variety, and veracity (Fröhling et al., 2023; Kohne et al., 2021), and, as such, most of the challenges in terms of analyzability.

DBD presents new methodological opportunities for understanding social and political processes and transformations, from global networking and political polarization to analyzing interaction patterns in digital environments. It also enables us to study the major changes in the private and public spheres that digitization is bringing about, such as the effects of social media and AI on democracies, social cohesion, or individual well-being. In addition to studying new social phenomena, DBD can also help to enhance our understanding of classic social phenomena such as social movements and collective action (Tufekci, 2017) or health behavior and mental well-being (Chancellor & De Choudhury, 2020) by providing new types of data.

However, studying these social phenomena relies on data that satisfies specific quality requirements. In survey research, there is a long tradition of data quality frameworks that mainly focus on intrinsic requirements of survey data (cf. the Total Survey Error framework by Groves et al., 2009) or the broader Total Survey Quality framework (Biemer, 2010). Intrinsic data quality properties refer to inherent attributes of the data itself and are independent of context or usage, e.g., accuracy, validity, or reliability. Due to the heterogeneity of DBD, multiple error frameworks that focus on inherent attributes of specific DBD sources exist, such as the Total Error Framework (TEF) by Amaya et al. (2020), the Total Error Frameworks for Found Data by Biemer and Amaya (2020) the Total Error Framework for Digital Traces of Human Behavior on Online Platforms (TED-ON) by Sen et al. (2021) or, more specifically, the Total Twitter Error (Hsieh, Ching Chi & Murphy, 2017). Bosch and Revilla (2022) introduced an error framework for metered data (in the following, we will use the term “web tracking data”, see also the contribution to this volume by Adam et al., 2024). In contrast, the extrinsic perspective evaluates the data quality based on context-specific criteria, primarily focusing on the data’s fitness-for-use or how well the data meets the needs of users or research objectives. For instance, the FAIR criteria, referring to the usability of data in terms of findability, accessibility, interoperability, and reusability (Wilkinson et al., 2016), are related to extrinsic data quality properties.

The special issue at hand aims to provide deeper insights into current research activities with respect to conceptualizing and empirically investigating data quality issues within digital behavioral data. Specifically, our call invited contributions focusing on the conceptualization of DBD quality, methodological innovations for its assessment and improvement, as well as their sophisticated empirical application. This special issue comprises nine valuable contributions addressing various aspects of DBD data quality. While most of the following contributions adopt an intrinsic perspective, there are a few exceptions, e.g., Dahlke et al. (2023) or Yu et al. (2024), which also include an extrinsic data quality perspective.

The first group of papers focuses primarily on conceptual frameworks for assessing DBD. Daikeler et al. (2024) systematically review 58 existing data quality frameworks to assess their applicability to modern digital social science data. Schneck and Przepiorka (2024) introduce a new comprehensive framework, the Total Error Framework for Digital Behavioral Data (TEF-DBD), employing meta-dominance analysis to identify and quantify error sources within DBD.

A second set of contributions explores methodological innovations and their sophisticated empirical applications. Antoun and Wenz (2024) conduct an accelerometer-based study, which provides high-resolution, passive data on physical activity, offering advantages over traditional self-reported measures. However, nonparticipation bias remains a critical challenge affecting data quality. While the previous paper addresses the representation arm of the TSE framework, the paper by Cernat et al. (2024) focuses on the measurement side. Specifically, it addresses the measurement quality of digital trace and survey data using the MultiTrait MultiMethod (MTMM) model, focusing on smartphone usage behaviors. They challenge the assumption of digital trace data’s inherent superiority over self-reported measures, demonstrating that quality varies significantly across methods. Similarly, Wenz et al. (2024) also focus on passively collected digital behavioral data (DBD) from smartphones. This study evaluates the alignment between self-reported smartphone use and DBD across three key dimensions: amount of use, variety of use, and activities of use.

Adam et al. (2024) evaluate the quality challenges of collecting web tracking data, e.g., individual media consumption. Their contribution highlights issues related to sampling, validity, device diversity, long-tail consumption, transparency, and privacy. They introduce WebTrack, an academic solution enabling enhanced content-level analysis, significantly improving data quality by capturing a broader spectrum of digital behaviors (the software was further maintained and developed by GESIS. For more information introducing the service, see Mangold et al., 2023, and https://gesis.org/webtracking).

The paper by Dahlke et al. (2023) assesses the data quality of web-scraped data against the assumption that all web content is equally accessible. This study challenges that assumption by systematically examining biases in the accessibility of web content collected from URL-logged browsing data. In a similar vein, Grigoropoulou and Small (2024) investigate machine-generated data from private companies that present new opportunities for social science research, but concerns remain about the accuracy and reliability of such data. This study evaluates the data quality of business location records from SafeGraph, a widely used private-sector dataset, focusing on financial institutions’ classification and accuracy. The findings reveal significant classification errors, including mislabeling of businesses, unidentified closures, and duplicate records, which systematically affect the dataset’s validity.

Finally, the paper by Yu et al. (2024) is concerned with the intrinsic and extrinsic quality of datasets used for training machine learning models in hateful communication detection, which is a critical yet underexplored issue. This systematic review evaluates datasets developed over the past decade, focusing on their inclusivity, representational accuracy, and the biases embedded in their curation. The study reveals that existing datasets disproportionately focus on specific target identities while underrepresenting others, such as individuals with disabilities or older adults. Additionally, the review highlights mismatches between conceptualized target groups in the dataset documentation and the actual data contents.

Together, these contributions advance the understanding of data quality issues in digital behavioral data, offering conceptual frameworks and practical methodologies to enhance data validity and reliability in empirical social science research. However, the purpose of this editorial is not only to briefly introduce the contributions to this special issue. We also aim to contribute to the research by classifying DBD studies concerning data quality. In doing so, we refer to a classification schema by Wagner et al. (2025) based on the dichotomous dimensions “data collection modus” (user- or platform-based) and “data generation process” (designed vs. found data). Using this 2 × 2 classification schema can help categorize DBD studies and identify related data quality properties as well as related data quality issues. By distinguishing categories of research design and mode of collecting DBD, we attempt to link data quality dimensions to the respective categories.

The remainder of the editorial is structured as follows: we briefly recapitulate data quality in the (survey-based) social sciences. We then focus on an in-depth introduction to DBD, focusing on introducing the classification schema mentioned above. The editorial concludes with a broader and integrated perspective on the quality of DBD.

Data Quality in the Social Sciences

Daikeler et al. (2024) provide a methodological overview of relevant data quality frameworks in the social sciences, which is central as it examines existing data quality frameworks to assess their applicability to modern digital social science data. They show that most of these frameworks evolve around an intrinsic or extrinsic perspective on data quality.

The Total Survey Error (TSE) is a well-known example of an intrinsic perspective, and it is concerned with the correctness of the data and identifies certain error sources. Following the TSE approach, we can distinguish two error sources, which can be related to “measurement error(s)” and “representation error(s)”. While the first two error sources are introduced in the seminal work on the TSE by Groves et al. (2009), Biemer et al. (2014, p. 387) introduce a third error type, namely “modelling error”, which captures “the error arising from fitting models for various purposes such as imputation, derivation of new variables, adjusting data values or estimates to conform to benchmarks, and so on”. Measurement errors can be divided into validity-related, measurement, and processing errors, indicating how well the survey questions measure the constructs of interest. Representation errors include coverage, sampling, nonresponse, and adjustment errors, indicating how well estimates generalize to the target population (Lyberg et al., 2018, p. 154).

On the other hand, an extrinsic perspective focuses on the usability of data in concrete research contexts, i.e., its “fitness for use” (or fitness for purpose) and contrasts with the intrinsic perspective, which emphasizes the internal properties of the data itself. Other dimensions related to the extrinsic perspective are the legal and ethical dimensions, including open science-related aspects such as reuse and replicability of the data (Daikeler et al., 2024). A prominent implementation is the so-called FAIR principle, introduced by Wilkinson et al. (2016). The acronym refers to the data requirements being findable, accessible, interoperable, and reusable. Data adhering to these FAIR principles are of high scientific value and are necessary but not necessarily sufficient conditions for reproducible (and replicable) research (Schoch et al., 2024). The section on “An Integrated DBD Quality Perspective” will extend the discussion of extrinsic (or contextual) data quality (Batini & Scannapieco, 2006).

Finally, we also want to refer to a recent position paper by Birkenmaier et al. (2024), titled “Defining and Evaluating Data Quality for the Social Sciences”, which also links existing data quality approaches, such as the TSE mentioned above, to more recent methodological developments in the (computational) social sciences. They highlight the challenges of diverse methods, varying data types (surveys, social media, web tracking, and linked data), and the lack of universal standards. In response, they propose a comprehensive and unified framework that integrates two major traditions in data quality evaluation: error-focused frameworks (like the Total Survey Error approach) and dimension-focused frameworks, which emphasize accuracy, timeliness, and usability. Based on input from multiple domain experts, they emphasize the importance of “fitness for use,” meaning data quality should be evaluated based on its intended use case. The proposed framework centers around defining the purpose of data usage clearly, specifying intrinsic quality requirements (such as accuracy and reliability), and addressing extrinsic requirements (like accessibility, documentation, and interoperability). The framework offers practical guidance for researchers and data curators by mapping these requirements to concrete metrics and indicators.

An In-Depth Introduction to DBD

In recent years, the social sciences have increasingly recognized the potential of DBD to address new substantive research questions in many research fields (Box-Steffensmeier et al., 2022). However, the scientific use of DBD is also associated with entirely new challenges. In contrast to survey data, most DBD are not generated for research purposes but are a side product of our daily activities (also called “readymade data” by Salganik, 2018). Hence, the data generation process is not based on elaborate research designs, which in turn may have profound implications for the validity of the conclusions drawn from the analysis of DBD. With respect to the aforementioned distinction between the intrinsic and extrinsic perspective, this mostly refers to the intrinsic perspective.

Since the 1960s, researchers have distinguished between unobtrusive and obtrusive research methods (Webb et al., 1966). Unobtrusive (also, nonreactive or observational) methods allow social scientists to study human behavior without direct interaction, avoiding disruptions like surveys or interviews. Because researchers do not influence data generation, these methods offer higher ecological validity but limit inferential power. In contrast, obtrusive methods, such as experiments, involve researcher-designed interventions in controlled (e.g., lab experiments) or semi-controlled environments (e.g., online field experiments). While large-scale online field experiments enhance ecological validity, they may reduce internal validity, as other factors can confound treatment effects. This distinction can also be applied to digital behavioral data: we can differentiate between data that is gathered with obtrusive methods (so-called “designed data”) and data that is collected with unobtrusive methods (so-called “found data” or “organic data”) (Strohmaier & Wagner, 2014). Digital behavioral data qualifies as “found digital behavioral data” when collected from social media or web platforms without controlling the data generation process. Typical examples are the “traces” that humans leave on Facebook or Google as a byproduct of their interactions with online platforms. Digital behavioral data can be considered “designed digital behavioral data” when the researchers control the data generation process – e.g., by randomly sampling participants from well-defined populations, measuring and blocking confounders, or randomly assigning participants to treatments.

For both, found and designed DBD, researchers can adopt a user-centered or a platform-centered data collection approach. User-centered data collections require users to participate in collecting their data, while platform-centered data collections typically do not require support from the data owner. User-centered data collections, often utilizing surveys to recruit participants, can link digital behavioral data with individual-level information on demographics and variables like party identification, political trust, or evaluations of other societal groups (Stier et al., 2019). To conduct user-centered data collections, research software like web tracking browser plugins (Adam et al., 2024), mobile apps (Kreuter et al., 2020; for recent developments see also Lux et al., 2025), or data donations (Boeschoten et al., 2022) is being developed at various academic institutions.

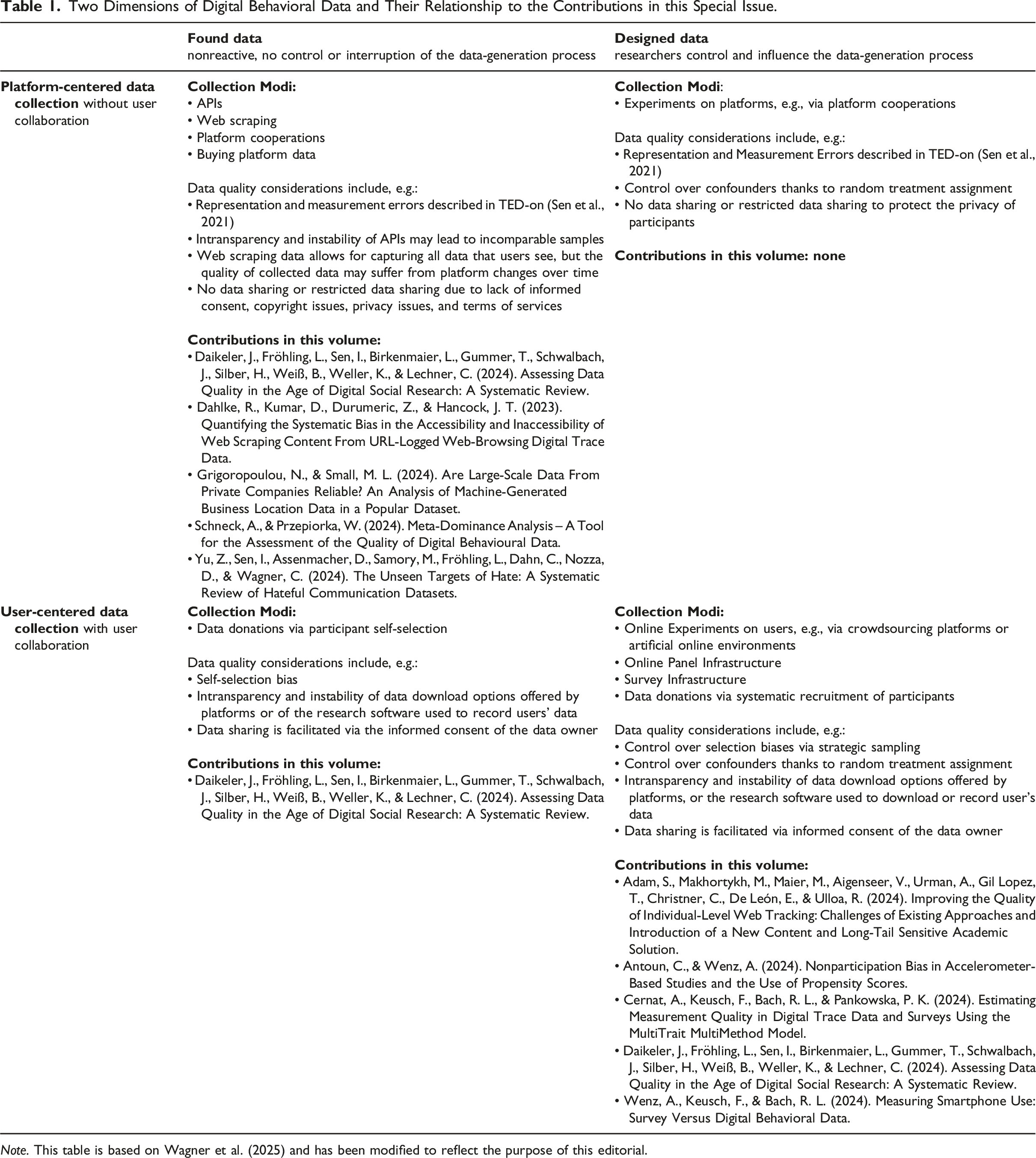

Two Dimensions of Digital Behavioral Data and Their Relationship to the Contributions in this Special Issue.

Note. This table is based on Wagner et al. (2025) and has been modified to reflect the purpose of this editorial.

For example, platform-based DBD is often used to study collective or platform-specific phenomena such as civic engagement, political polarization, and dynamic social processes (e.g., spreading information over time). Controlling confounders for found platform-based data collections is difficult since this data is typically collected under the algorithm, and a change in the algorithm can have dramatic and instant effects on the signals in the data collected (Lazer, 2015; Wagner et al., 2021) (see also the later section on “An Integrated DBD Quality Perspective” for a refined discussion on conclusion validity and causal inference in particular). Further, improving the sample quality of found data is typically not possible since the sampling frame (i.e., the platform population) is not well-defined and all risks and challenges associated with nonprobability samples apply when using this kind of data (for a recent overview, see Freese & Jin, 2025). The opacity and instability of data access software (e.g., API and data owner download options) and research software to record data (e.g., web tracking software) also limit the quality of DBD.

User-centered data collections using a probability-based sampling design allow for making inferences about a given target population since the inclusion probabilities are known for every selection step. Typically, participants are invited to participate in a survey and then, after survey completion, to participate in a DBD collection. Hence, participants must consent twice, increasing the likelihood of nonresponse bias (Antoun & Wenz, 2024) since participation in these data collections is associated with factors such as privacy concerns, tech-savviness, education, and age or data type (Beuthner et al., 2023; Elevelt et al., 2019; Silber et al., 2022). However, for cost-related reasons, many user-centered DBD collections rely on nonprobability samples, limiting the extent to which findings can be generalized. Some studies propose statistical weighting approaches to fix this issue (e.g., propensity scores, see Antoun & Wenz, 2024). However, while we have sound statistical theory when it comes to using probability-based samples, more research is needed when it comes to variance estimation and advanced weighting techniques, especially concerning the collection of weighting variables that are correlated with key survey variables and the data-generating process (Cornesse et al., 2020).

An Integrated DBD Quality Perspective

While the preceding discussion has mostly focused on intrinsic data quality—that is, whether the data meet objective measurement- and representation-related standards regardless of their intended use—we conclude by offering initial reflections on how further quality dimensions might be integrated to develop a more comprehensive understanding of DBD quality in social science research. We have already introduced the concept of extrinsic (or contextual) data quality (Batini & Scannapieco, 2006), which represents the task-dependent element of overall data quality. It refers to the fitness for purpose of data and, thus, their adequacy for addressing specific analytic tasks such as parameter estimation, effect identification, prediction, classification, or pattern detection. Relevant dimensions of extrinsic data quality and related concepts from official statistics include accessibility, value-added, relevancy, timeliness, completeness, and an appropriate amount of data (e.g., Batini & Scannapieco, 2006; Eurostat, 2019; Karr et al., 2006; Wang & Strong, 1996).

This expanded perspective shifts the focus toward the extent to which the data support attaining the intended research objectives. For example, consider a scenario where the available DBD meets all established intrinsic quality criteria, yet lacks information on confounders essential for causal effect identification (e.g., Pearl, 2009). In such a case, the high intrinsic quality is insufficient to ensure valid causal inferences if confounding bias in the causal effect estimates derived from these data cannot be eliminated.

It follows that both intrinsic and extrinsic data quality are essential components of what we term conclusion quality. This comprehensive quality concept encompasses the entire research process and builds on the principles of statistical conclusion validity (e.g., Shadish et al., 2002). It reflects the degree to which conclusions drawn from the results of data analysis are valid and contribute to achieving the research objectives. Conclusion quality is a multidimensional construct shaped by various factors, including theoretical reasoning, estimand identification (Lundberg et al., 2021), study design, intrinsic and extrinsic data quality, analytical decisions (e.g., model specification, estimation strategy; for survey data, see West et al., 2016, 2017), interpretation of results, and integration with domain knowledge.

From a DBD perspective, high conclusion quality can only be achieved when the data meet established intrinsic quality standards and are fit for purpose—that is, they contain the necessary information to complete the underlying research tasks effectively. In case of designed DBD, fitness for purpose can be actively ensured through deliberate design choices during the study planning phase, although the feasibility of doing so depends on the specific research tasks and the level of control over the data-generation process. For example, establishing data fitness is relatively straightforward when utilizing a self-programmed tracking app to collect health-related behavioral data from respondents’ smartphones alongside a large-scale survey (e.g., Antoun & Wenz, 2024 in this volume; Munzert et al., 2021; Thornton et al., 2021), while implementing rigorous study designs on external social media platforms typically requires collaboration with platform providers (e.g., González-Bailón et al., 2023; Nyhan et al., 2023).

In contrast, found DBD are typically unstructured and not fit for purpose in their raw form. This necessitates a posteriori optimization strategies such as data pruning, data fusion, and missing data handling to align them with the research objectives (Leitgöb & Keusch, forthcoming). The required adjustments depend on the data at hand and the specific analytic tasks required to address the research objectives. For example, eliminating confounding and endogenous selection bias is essential for causal inference (e.g., Elwert & Winship, 2014; Pearl, 2009), while prediction and classification tasks demand the inclusion of variables with high predictive power. For descriptive purposes, the focus is on achieving a representative depiction of the target population. Thus, the data-related aspect of conclusion quality depends on the extent to which available DBD with given intrinsic data quality can be refined to achieve fitness for purpose. Given the considerable researcher degrees of freedom (e.g., Simmons et al., 2011) in selecting the data from the universe of available sources and making them fit for purpose, we argue that transparent and well-reasoned documentation of these decisions constitutes another element of conclusion quality enhancing the traceability and replicability of DBD-based research.

Obviously, the degree of control over the data generation process differs between found and designed DBD, with substantial implications for conclusion quality. While the prospective nature of designed DBD allows for the proactive implementation of features to ensure high intrinsic quality and data fitness, found DBD can only be optimized retrospectively, if at all. Accordingly, designed DBD are generally expected to support higher conclusion quality. However, because found DBD are generated without scientific intervention in real-world settings, conclusions based on these data may yield higher ecological validity. In research practice, scholars must balance these quality dimensions to best align the use of DBD with their specific research objectives.

Among the dimensions of extrinsic data quality, accessibility and timeliness have become particularly salient for DBD in recent years. This is mainly attributable to the 2018 Cambridge Analytica scandal, which involved the unauthorized commercial exploitation of Facebook data of up to 87 million users. The scandal, referred to by Bruns (2019) as the APIcalypse, “shifted the lid on the very severe privacy issue in the digital world” (Trezza, 2023, p. 1) and ushered in what Freelon (2018) terms the post-API age, characterized by radical restrictions on access to social media data for research purposes. More recently, Twitter (now X) placed its API behind a paywall in March 2023, replacing free academic access with a tiered pricing model (Developers [@XDevelopers], 2023). These developments have significantly curtailed the availability of large-scale social media data and the promptness of accessing and analyzing such data for research purposes. Such limitations affect conclusion quality, with considerable real-world consequences. For example, selective or outdated training data may introduce algorithmic bias in automated decision-making systems, reinforcing discrimination against marginalized groups underrepresented in digital spaces (e.g., Ferrara, 2023).

Harvesting DBD can also raise unresolved questions around legal and ethical concerns. This is particularly true for web scraping conducted outside the official channels offered by platform providers via APIs, which potentially intersects with legal frameworks, including privacy and data protection laws, intellectual property rights, trespassing laws, and computer misuse regulations (e.g., Brown et al., 2024; Krotov et al., 2020; Trezza, 2023). From an ethical perspective, key questions include “whether informed consent is necessary when dealing with ‘found data’, and […] at what stage computational research becomes human subjects research requiring particular ethical protection” (Brown et al., 2024, p. 12; for further discussion, see e.g., Boyd & Crawford, 2012; Breuer et al., 2025; Metcalf & Crawford, 2016; Zook et al., 2017; Zwitter, 2014). Importantly, these issues are not limited to (scraped) social media or web data but apply broadly to DBD as a whole. Since highly individualized and granular behavior can be recorded at scale in the form of DBD, considering the consequences of DBD-based measurements and mis-measurements is crucial (Wagner et al., 2021). Consequently, we consider data processing and usage quality as a further essential component of a comprehensive quality framework for DBD, covering the extent to which all stages of data collection, processing, analysis, archiving, and sharing comply with all applicable legal regulations, adhere to established ethical research standards, and are fully documented to ensure transparency and reproducibility.

Thus far, the discussion has adopted a task-centered perspective, which assumes that DBD at hand are either selected or generated specifically to optimize the completion of given research tasks. As a final point, we propose complementing this view with a data-centered perspective on DBD quality. This perspective is typically rooted in data archiving infrastructures, which aim to provide comprehensive and broadly applicable DBD capable of supporting the empirical investigation of a wide range of research questions. Compared to the task-centered approach, which focuses primarily on data relevance for individual, particular research objectives, the data-centered perspective additionally emphasizes a higher-order form of data relevance. The latter aims to ensure that stored DBD are broadly usable across diverse social science domains and analytical contexts. Moreover, because these DBD are intended for multiple use by different researchers, particular attention is directed toward documentation, metadata provision, and user support—core criteria of the data quality framework proposed by Karr et al. (2006) for official statistics and elements of the FAIR principles (Wilkinson et al., 2016). Accordingly, high-quality archived DBD include detailed information about their generation process, rich metadata (e.g., timestamps, geolocation, processing duration), and are accompanied by tools that support efficient data access and handling.

Summing up, we propose integrating the core concept of intrinsic data quality into a comprehensive DBD quality framework with additional dimensions such as extrinsic data quality and data processing and usage quality. In doing so, we seek to address the apparent disconnection between the data quality discussion and the specific research objectives associated with the analysis of DBD. Moreover, this broader conceptualization enhances the compatibility of our understanding of DBD quality with established multidimensional data quality frameworks in the field of statistics (e.g., Eurostat, 2019; Karr et al., 2006) and the FAIR principles (Wilkinson et al., 2016). The resulting tasks are to refine and expand these initial considerations and integrate them into a structured, coherent, and comprehensive DBD quality framework that enables the development of valid quality indicators for all dimensions.

Conclusion

While digital behavioral data (DBD) can be of high scientific value when investigating social life, its scientific value hinges on a multidimensional notion of data quality. The nine contributions in this special issue show that we must look beyond intrinsic error frameworks (e.g., measurement and representation) toward an integrated view that incorporates extrinsic, task-specific fitness-for-use as well as data processing and usage quality (legal, ethical, transparency, and reproducibility). Our 2 × 2 classification of DBD by data-generation process (designed vs. found) and collection modus (user- vs. platform-based) can help to locate typical error sources, feasible remedies, and the limits of inference. Building on this, we add conclusion quality as another important quality dimension: valid, well-documented conclusions that emerge from aligning theory, design, intrinsic and extrinsic data quality, and analytic decisions.

DBD-based research must therefore (1) deliberately design studies to ensure fitness-for-purpose whenever control is possible; (2) transparently optimize found data ex post and report the resulting researcher degrees of freedom; (3) confront shrinking accessibility and timeliness in the post-API age; and (4) meet stringent legal and ethical standards. Further research is needed to consolidate existing frameworks into a coherent, indicator-ready DBD quality framework, including developing robust methods for weighting and causal inference with nonprobability and passively collected data, and investing in FAIR DBD archiving infrastructures.

Footnotes

Acknowledgements

The authors used ChatGPT-4o (OpenAI, accessed July 2025) to assist with language refinement during manuscript preparation. All content was reviewed and approved by the authors.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.