Abstract

Identifying strategies that maximize participation rates in population-based web surveys is of critical interest to survey researchers. While much of this interest has focused on surveys of persons and households, there is a growing interest in surveys of establishments. However, there is a lack of experimental evidence on strategies for optimizing participation rates in web surveys of establishments. To address this research gap, we conducted a contact mode experiment in which establishments selected to participate in a web survey were randomized to receive the survey invitation with login details and subsequent reminder using a fully crossed sequence of paper and e-mail contacts. We find that a paper invitation followed by a paper reminder achieves the highest response rate and smallest aggregate nonresponse bias across all possible paper/e-mail contact sequences. A close runner-up was the e-mail invitation and paper reminder sequence, which achieved a similarly high response rate and low aggregate nonresponse bias at about half the per-respondent cost. Following up undeliverable e-mail invitations with supplementary paper contacts yielded further reductions in nonresponse bias and costs. Finally, for establishments without an available e-mail address, we show that enclosing an e-mail address request form with a prenotification letter is not effective from a response rate, nonresponse bias, or cost perspective.

Survey researchers are actively seeking strategies to maximize participation rates in web surveys. While much of this attention has focused on surveys of persons or households, there is a growing interest in surveys of establishments (Clayton, Searson, & Manning, 2000; Clayton & Werking, 1995; Ellguth & Kohaut, 2014; Johnson, 2016; Manfreda, Vehovar, & Batagelj, 2001). Establishment surveys, which include surveys of businesses, institutions, government agencies, farms, and hospitals, among other types of organizations (Lavrakas, 2008, p. 239), play a crucial role in monitoring the economic health of a nation and in tracking changes and emerging trends in the labor market, and they are used by policy makers for future economic planning purposes (Snijkers, Haraldsen, Jones, & Willimack, 2013). Web surveys offer many advantages over mail, telephone, and face-to-face surveys for collecting responses from establishments, including reduced costs, shorter field periods, faster data processing, and potential improvements in data quality (Bethlehem & Biffignandi, 2011). Because of these advantages, many government agencies in Europe and North America have incorporated web survey options into their establishment surveys (Bremner, 2011; Broszeit & Laible, 2017; Haraldsen et al., 2011; Robertson & Hatch-Maxfield, 2012).

However, the advantages of web surveys may be offset by their response rates, which tend to be lower compared to other data collection modes and mixed-mode designs (Manfreda, Bosnjak, Berzelak, Haas, & Vehovar, 2008; Shih & Fan, 2008; Wengrzik, Bosnjak, & Manfreda, 2016). Although this literature is based on surveys of persons and households, the same conclusion likely holds for establishments. Unfortunately, there is a dearth of information on design strategies for optimizing participation rates in web surveys of establishments. One of the most important design decisions affecting the response rate is the choice of

We are unaware of experimental studies that have examined the effects of different contact modes on response rates and costs in web surveys of establishments. Thus, designers of establishment surveys lack an evidence base to inform decisions related to the optimization of web survey participation. In this article, we address this knowledge gap by reporting the results of a contact mode experiment conducted on a web survey of establishments in Germany. Eligible establishments that had previously participated in a national mixed-mode survey were randomized to receive the invitation to a web-only survey and subsequent reminder via different combinations of paper and e-mail contacts. In addition to response rates and costs, we examine the effects of contact mode on nonresponse bias using an extensive array of establishment-level administrative record information available for the full sample. Our results provide practical insights for survey designers considering the trade-offs between different contact mode strategies.

The remainder of the paper is organized as follows. First, we review the current literature on the effects of invitation and reminder modes in web surveys and present the open research questions to be addressed in the present study. Then, we describe the study design and experimental groups and report the results of the study. The article concludes with a discussion of the results and their implications for survey practice.

Literature Review

There is a sparse literature on the effects of contact mode on web survey participation. Most of this literature is based on university populations and other Internet-savvy groups, which may not directly translate to establishment populations; we return to this point later. One of the earliest contact mode experiments in a web survey was conducted by Birnholtz, Horn, Finholt, and Bae (2004), who examined the effect of paper versus e-mail invitations on a sample of engineering researchers. The invitations were sent along with a code to redeem a US$5 Amazon.com voucher. Paper invitations were associated with a higher response rate than e-mail invitations (40% vs. 32%); however, the difference was not statistically significant, which the authors acknowledged could be due to the small sample size. Kaplowitz et al. (2012) compared the performance of a postcard invitation to an e-mail invitation in a web survey of university faculty, students, and staff. Compared to the postcard invitation, the e-mail invitation yielded a significantly higher response rate among students (22% vs. 19%) and faculty (40% vs. 33%), but no difference among staff (43% vs. 43%).

Bandilla, Couper, and Kaczmirek (2012) report the results of an invitation experiment in which respondents who previously took part in a face-to-face, general population survey in Germany were randomized to receive a paper or e-mail invitation for a follow-up web survey. The invitation mode was crossed with a prenotification letter, and a single reminder was administered in the same mode as the invitation. Without the prenotification letter, the paper invitation yielded a higher response rate than the e-mail invitation (51% vs. 40%). However, with the prenotification letter, the e-mail invitation was associated with a higher response rate than the paper invitation (57% vs. 51%). Israel (2013) also examined the effect of crossing invitation mode with a prenotification letter in a web survey of clients from the Florida Cooperative Extension Service. One group received a prenotification letter followed by an e-mail invitation, and another group received the e-mail invitation without prenotification followed by an e-mail reminder. Although both groups received two contacts, the group with the prenotification letter had a higher response rate to the web survey than the other group (24% vs. 18%). The effectiveness of using a prenotification letter (or postcard) to improve web survey response rates is a common finding and consistent with the notion that prenotification letters make e-mail invitations seem less unsolicited and are therefore less likely to be dismissed or considered as spam (Crawford et al., 2004; Dykema, Stevenson, Day, Sellers, & Bonham, 2011; Harmon, Westin, & Levin, 2005; Kaplowitz, Hadlock, & Levine, 2004; Porter & Whitcomb, 2007).

Building on the finding that prenotification letters are likely to improve response to a subsequent e-mail invitation, one could posit that a paper invitation followed by an e-mail reminder might have a similar effect. Dykema, Stevenson, Klein, Kim, and Day (2012) examined this notion in a web survey of university faculty. Faculty members were randomized to receive a paper or e-mail invitation. E-mail reminders were sent to nonrespondents in both invitation groups. The paper invitation produced a slightly higher response rate than the e-mail invitation before reminders were sent (13% vs. 9%), but the subsequent e-mail reminder had a much larger effect on the paper invitation group, increasing the response rate to 27% compared to 12% in the e-mail invitation group. In line with the prenotification literature, the authors attributed this result to the paper invitation, which was “likely more successful at underscoring the legitimacy and importance of the study […] and likely served as a sort of advance letter that increased the likelihood sample members would notice and respond to the subsequent e-mailed requests to participate” (p. 367). However, this effect was not replicated by Millar and Dillman (2011). In a web survey of university students, they compared the effectiveness of an e-mail invitation with follow-up e-mail contacts versus a paper invitation with follow-up e-mail contacts (a strategy that they refer to as “e-mail augmentation”). The difference in response rates between the paper (21.2%) and e-mail (20.5%) invitation groups was not statistically significant.

Knowledge Gaps and Research Questions

The literature review paints a mixed picture regarding the optimal choice of contact mode(s) for maximizing participation in web surveys. Paper invitations are sometimes more effective than e-mail invitations, and other times not. Similarly, the use of a paper invitation followed by an e-mail reminder can improve response rates over an e-mail-only contact strategy, but this is not a consistent finding. The mixed findings suggest that the effects of contact modes are likely to be population specific. Thus, it is questionable whether the findings reported from university populations and other Internet-sophisticated individuals carry over to establishments. One pertinent difference between surveys of establishments and those of individuals is that survey invitations and other communications sent to establishments are typically received by a gatekeeper (e.g., secretary or administrative assistant) who then decides whether or not to forward the request on to a knowledgeable respondent. In surveys of individuals, the invitation correspondence is likely to be received by the target respondent regardless of whether it is delivered by mail or e-mail; however, in surveys of establishments, one of these contact modes might be more effective than the other in terms of getting past the gatekeeper and being routed to the relevant respondent (see chapters 2 and 9 of Snijkers et al., 2013).

Another reason why the above findings may not translate to establishments is that they are based on populations for which postal and e-mail addresses are known. Although postal addresses are usually known for establishments, an e-mail address may be lacking for many. Even e-mail addresses that have been provided by establishments through their participation in a previous survey—the situation considered in the present study—may be outdated because of turnover, name changes, or for other reasons. Different contact strategies may be considered for these situations. For example, in the case of an invalid e-mail address, supplementary paper contacts can be used to deliver the survey invitation and any subsequent reminders. Establishments for which an e-mail address is not available can be administered paper contacts from the outset or, alternatively, these establishments can be sent a prenotification letter with a request to provide an e-mail address to receive an e-mailed invitation. It is unclear whether establishments are willing to comply with such a request, but even if not, the prenotification contact might increase the likelihood that establishments will notice and respond to a subsequent paper invitation and reminder versus a paper invitation and reminder strategy that does not include the additional prenotification contact. However, sending supplementary paper contacts and/or prenotification letters comes with additional costs to the survey organization. Whether these additional costs can be justified with a meaningful increase in the response rate is unknown.

Besides response rates and costs, it is also important to consider the effects of different contact mode strategies on nonresponse bias. In the household survey literature, response rates have been shown to be only weakly correlated with nonresponse bias (Groves, 2006). That is, high response rates do not imply small nonresponse bias, just as low response rates do not imply large nonresponse bias. Rarely is it feasible to conduct a detailed examination of nonresponse bias due to the lack of relevant auxiliary information available for both respondents and nonrespondents. In the present study, we overcome this limitation by making use of extensive record information on the full sample of establishments.

Specifically, we address the following research questions:

Do paper and e-mail invitations differentially impact response rates to a web survey of establishments?

What combination of paper/e-mail invitation and reminder contacts maximizes the response rate in a web survey of establishments? Are supplementary paper contacts effective in eliciting response from establishments with invalid e-mail addresses?

Are establishments willing to provide an e-mail address as part of a prenotification request letter? Does the strategy of requesting an e-mail address via a prenotification letter, and sending a supplementary paper invitation and reminder to establishments that do not provide one, yield a higher response rate compared to simply sending a paper invitation and reminder without prenotification?

To what extent do different paper/e-mail contact strategies affect nonresponse bias and survey costs?

Method

Survey and Sample Selection

The contact mode experiment was conducted on establishments in Germany selected for a web survey entitled “Job Vacancies and Personnel Policy in Establishments—A Supplementary Survey on Applicant Selection (SAS)” (Vicari & Zmugg, 2015). The SAS was sponsored by the Institute for Employment Research (IAB) of the Federal Employment Agency (BA) in Nuremberg. The objective of the survey was to examine factors that influence establishments’ decision-making process for filling job vacancies. The SAS was a “one-off” survey of all public and private establishments that (1) had previously participated in the IAB Job Vacancy Survey (JVS) in at least one of the years 2010, 2011, or 2012, and (2) were registered as employing staff in at least one of 25 target professions. 1 The JVS is an ongoing, annual survey of establishments that measures the number and structure of job vacancies in Germany. Every year, the JVS draws a cross-sectional sample of establishments from the BA establishment register. The BA register contains a complete listing of all establishments that employ at least one individual who is liable for social security contributions. Each yearly JVS sample is invited to take part in four quarterly interviews, with the initial survey conducted by mail (with an option to complete the survey online) and follow-up interviews conducted by telephone. Further details of the JVS can be found in Moczall, Müller, Rebien, and Vogler-Ludwig (2015).

A total of 29,513 establishments met the above eligibility criteria and were selected for the SAS survey. Postal and e-mail addresses were available for 17,992 of them. All e-mail addresses were voluntarily provided by establishments at the end of the JVS survey. Contact details were requested “in case of questions” by the survey organization. Many of the available e-mail addresses were personalized, including the name of the contact (e.g.,

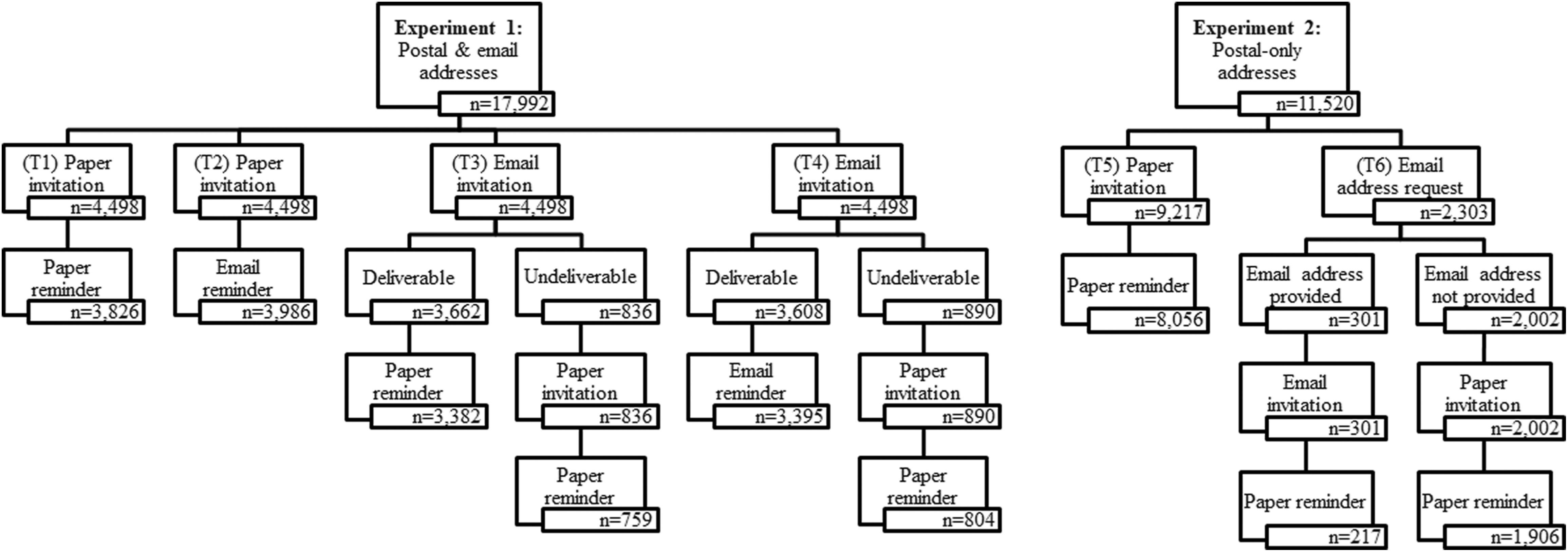

Experiment 1 (Postal-E-Mail Addresses): Invitation and Reminder Mode

A fully crossed paper and e-mail invitation and reminder experiment was conducted on the postal-e-mail address sample. The sample was randomly subdivided into four equal-sized groups (

Figure 1. Schematic overview of contact mode treatment groups for postal-e-mail addresses and postal-only addresses.

Experiment 2 (Postal-Only Addresses): Prenotification and E-mail Address Request

A prenotification and e-mail address request experiment was conducted on the postal-only address sample (see Figure 1; Experiment 2). The sample was randomly split into two disproportionate treatment groups. The first group (T5) consisted of 80% (

Study Timeline

All paper invitation/prenotification letters were initially sent out on November 17–18, 2014. E-mail invitations were sent out on November 19, 2014, in order to harmonize the date of arrival for both sets of invitations. All invitations were addressed to the human resources (HR) office or managerial board of the establishments. The invitation letters included the salutation “Dear sir or madam” (even in the case of personalized e-mail addresses) in the hope that the invitation would be forwarded to an HR officer or any person responsible for hiring. Establishments that did not receive the e-mail invitation due to an invalid e-mail address (T3 and T4 only) and establishments that did not respond to the prenotification e-mail address request letter (T6 only) were mailed supplementary paper invitation letters on November 27, 2014. All invitations announced the closing date of the survey to be December 20, 2014. On December 15, 2014, reminders were sent to all establishments that had not yet accessed the web survey by December 11, 2014. Postal and e-mail reminders were sent at the same time according to the assigned treatment group. The reminder announced a postponed survey closing date of January 5, 2015. Despite the postponed closing date, it was decided to keep the survey open until January 31, 2015, because establishments continued to access the web survey after the official closing date.

Statistical Analysis

Research Questions 1, 2, and 3 are addressed by performing χ² tests for differences in response rates between the relevant treatment groups. A respondent is defined as any establishment that initiated the web survey, regardless of whether it actually completed the survey. This definition includes establishments that broke off the web survey after having started it and those that technically finished the questionnaire but were filtered to the last page of the survey because they reported not employing staff in one of the target professions. Response rates are calculated as the ratio of the number of respondents to the full sample within each treatment group. Establishments with invalid contact information (e.g., bounced e-mails, undeliverable letters) are included in the denominator and treated as eligible nonrespondents. This ratio is equivalent to the most conservative response rate formula (Response Rate 1) recommended by the American Association for Public Opinion Research (American Association for Public Opinion Research, 2016).
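The response rate definition and the χ² comparison described above can be illustrated with a minimal Python sketch. It is not the analysis code used in the study: the function names are hypothetical, the counts in the usage note are made up, and the sketch works on unweighted counts, whereas the reported rates are weighted.

```python
import math


def response_rate_rr1(respondents, sample_size):
    """Respondents divided by the full sample of a treatment group.

    Establishments with invalid contact details stay in the denominator,
    as described in the text. Hypothetical helper, unweighted.
    """
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    return respondents / sample_size


def chi_square_2x2(resp_a, n_a, resp_b, n_b):
    """Pearson chi-square test (no continuity correction) for two groups.

    Returns the test statistic and its p-value with 1 degree of freedom.
    """
    observed = [
        [resp_a, n_a - resp_a],  # group A: respondents, nonrespondents
        [resp_b, n_b - resp_b],  # group B: respondents, nonrespondents
    ]
    total = n_a + n_b
    row_totals = [n_a, n_b]
    col_totals = [resp_a + resp_b, total - resp_a - resp_b]
    stat = 0.0
    for i in range(2):
        for j in range(2):
            expected = row_totals[i] * col_totals[j] / total
            if expected == 0:
                raise ValueError("expected cell count is zero")
            stat += (observed[i][j] - expected) ** 2 / expected
    # For 1 degree of freedom, P(X > stat) = erfc(sqrt(stat / 2)).
    p_value = math.erfc(math.sqrt(stat / 2.0))
    return stat, p_value
```

With made-up counts, `chi_square_2x2(80, 1000, 40, 1000)` compares an 8% and a 4% response rate. A weighted analysis would need design-based variance estimation, which this sketch deliberately leaves out.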

Research Question 1 is addressed by comparing the response rate of the paper invitation (T1/T2) groups with that of the e-mail invitation (T3/T4) groups before the reminder and supplementary paper contacts were administered. Research Question 2 is addressed by comparing response rates between the four treatment groups in Experiment 1 after the reminder was administered, and before and after the supplementary paper contacts were administered (T3 and T4 only). For brevity, we do not distinguish between the supplementary paper invitation and supplementary paper reminder and simply report the overall response rate after both paper contacts were administered. Research Question 3 is addressed by first examining the return rate to the prenotification e-mail address request used in Experiment 2. This is followed by an examination of the response rate among establishments that provided an e-mail address before and after the paper reminder was administered. Then, we compare the overall response rate of the prenotification treatment group (T6) to that of the reference group that was administered a paper invitation and paper reminder without the prenotification step (T5).

Research Question 4 is addressed by comparing nonresponse bias and survey costs between the relevant treatment groups before and after reminders and supplementary paper contacts were administered. Nonresponse bias is calculated using establishment-level administrative variables obtained from the aforementioned BA register. The BA register contains extensive information about the industry and size of each establishment, as well as the demographic profile of its employees (Schmucker, Seth, Ludsteck, Eberle, & Ganzer, 2016). The following register variables are used in the evaluation: number of employees, percentage of full-time employees, percentage of female employees, percentage of German employees, percentage of low-qualified employees, percentage of middle-qualified employees, percentage of high-qualified employees, percentage of marginal employees, 3 median age of employees, location in East (vs. West) Germany, industry sector, and year of foundation. Estimates of these administrative variables are provided in Online Appendix Table 1 for each treatment group. 4 All variables were categorized after inspecting their distributional properties, with preference given to approximately equal-sized categories. These variables were selected based on their frequency of use among secondary users of the BA database (Brixy, Kohaut, & Schnabel, 2007; Henze, 2014; Späth & Koch, 2008; Wagner, 2012).

Nonresponse bias is computed separately for each estimate of each category,

We also report the

All response rates and nonresponse bias calculations are weighted to account for probabilities of selection and nonresponse in the JVS forerunner study (for further details, see Vicari & Zmugg, 2015).
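As a rough illustration of how an aggregate bias measure of this kind can be computed, the following Python sketch assumes that the nonresponse bias of a category is the respondent-based percentage minus the full-sample percentage, and that the aggregate measure is the mean of the absolute values. Both the definition and the function names are assumptions made for illustration; the sketch is unweighted and the percentages in the usage note are made up.

```python
def nonresponse_bias(respondent_pct, full_sample_pct):
    """Assumed definition: respondent estimate minus full-sample estimate,
    both in percent, so the result is in percentage points."""
    return respondent_pct - full_sample_pct


def average_absolute_bias(respondent_pcts, full_sample_pcts):
    """Mean of the absolute category-level biases (hypothetical helper)."""
    if len(respondent_pcts) != len(full_sample_pcts):
        raise ValueError("both lists must have the same length")
    if not respondent_pcts:
        raise ValueError("at least one category is required")
    biases = [
        abs(nonresponse_bias(r, f))
        for r, f in zip(respondent_pcts, full_sample_pcts)
    ]
    return sum(biases) / len(biases)
```

For example, with made-up category shares of 30% and 70% among respondents against 25% and 75% in the full sample, `average_absolute_bias([30.0, 70.0], [25.0, 75.0])` averages two absolute biases of 5 percentage points each.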

The costs of implementing each treatment procedure are calculated using expense estimates provided by the postal department of the BA, which was responsible for printing and administering all paper-based contacts. The material expenses included printing, postage, and envelopes. Personnel and working time expenses are not included in the cost calculations. Costs related to programming and testing the web survey instrument are treated as fixed and are also not included in the cost calculations.
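The per-respondent cost logic can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the study's cost model: the function names, the single per-letter unit cost, and the figures in the usage note are hypothetical, and e-mail contacts are treated as having no material cost.

```python
def material_cost(paper_contacts, unit_cost_eur):
    """Total material cost of paper contacts (printing, postage, envelopes),
    assuming one flat, hypothetical unit cost per letter."""
    if paper_contacts < 0 or unit_cost_eur < 0:
        raise ValueError("inputs must be non-negative")
    return paper_contacts * unit_cost_eur


def per_respondent_cost(total_cost_eur, respondents):
    """Total variable cost divided by the number of respondents."""
    if respondents <= 0:
        raise ValueError("respondents must be positive")
    return total_cost_eur / respondents
```

For instance, `per_respondent_cost(material_cost(1000, 0.5), 100)` spreads a hypothetical 500 EUR of mailing costs over 100 respondents. The calculation shows why a treatment with few paper contacts can still be expensive per respondent if it yields few respondents.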

Results

Research Question 1: Paper and E-mail Invitation Modes

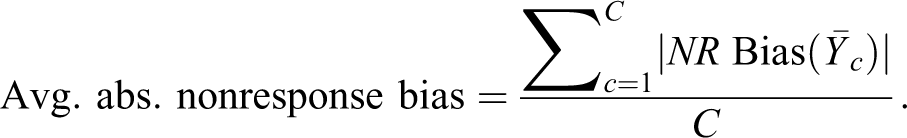

First, we examine response rates between the paper and e-mail invitation modes used in Experiment 1. Response rates for each of the treatment groups are presented in Figure 2 (a tabular version is provided in Online Appendix Table 2). Response rates in the paper invitation groups, T1 and T2, were 7.56% and 8.37%, respectively. 5 Both response rates are modestly higher than response rates in the e-mail invitation groups, T3 and T4, which were 5.15% and 2.90%, respectively. The difference between the combined response rate of the paper invitation groups (T1/T2: 7.95%) and the combined response rate of the e-mail invitation groups (T3/T4: 4.08%) is statistically significant (

Figure 2. Weighted response rates before reminder, after reminder, and after supplementary paper contacts, by treatment group.

Research Question 2: Paper and E-mail Invitation and Reminder Modes

Next, we compare response rates of the four treatment groups in Experiment 1 after the reminder was administered and before/after the supplementary paper contacts were administered to establishments with invalid e-mail addresses (T3 and T4 only). Figure 2 shows that the highest response rate occurred in the paper invitation–paper reminder group (T1; 20.34%), followed by the e-mail–paper group (T3; 18.20%), the paper–e-mail group (T2; 12.76%), and, lastly, the e-mail–e-mail group (T4; 6.43%). The difference between the two highest response rate groups (T1 and T3) is not statistically significant (

Mailing supplementary paper contacts to establishments with undeliverable e-mail invitations 6 had a positive and statistically significant effect on response rates in both e-mail invitation groups. Administering supplementary paper contacts increased the response rate in the e-mail–paper group (T3) from 18.20% to 21.86%, and in the e-mail–e-mail group (T4) from 6.43% to 10.25%. The overall response rate of T3 with supplementary paper contacts is the highest across all treatment groups, though it remains statistically similar to T1 (

Research Question 3: Prenotification Letter and E-Mail Address Request

We now evaluate the results of Experiment 2. A total of 301 (of 2,303) establishments in T6 responded to the prenotification request letter by providing an e-mail address for a (weighted) return rate of 8.38%. Among the establishments that provided an e-mail address and were sent the subsequent e-mail invitation, 83 (or 30.1%) participated in the web survey before the paper reminder was sent and a further 24 participated after the reminder was sent for a conditional response rate of 38.99%. 7 The overall (unconditional) T6 response rate is 3.27%, which is lower than that of the reference group (T5): 5.11% before reminder (

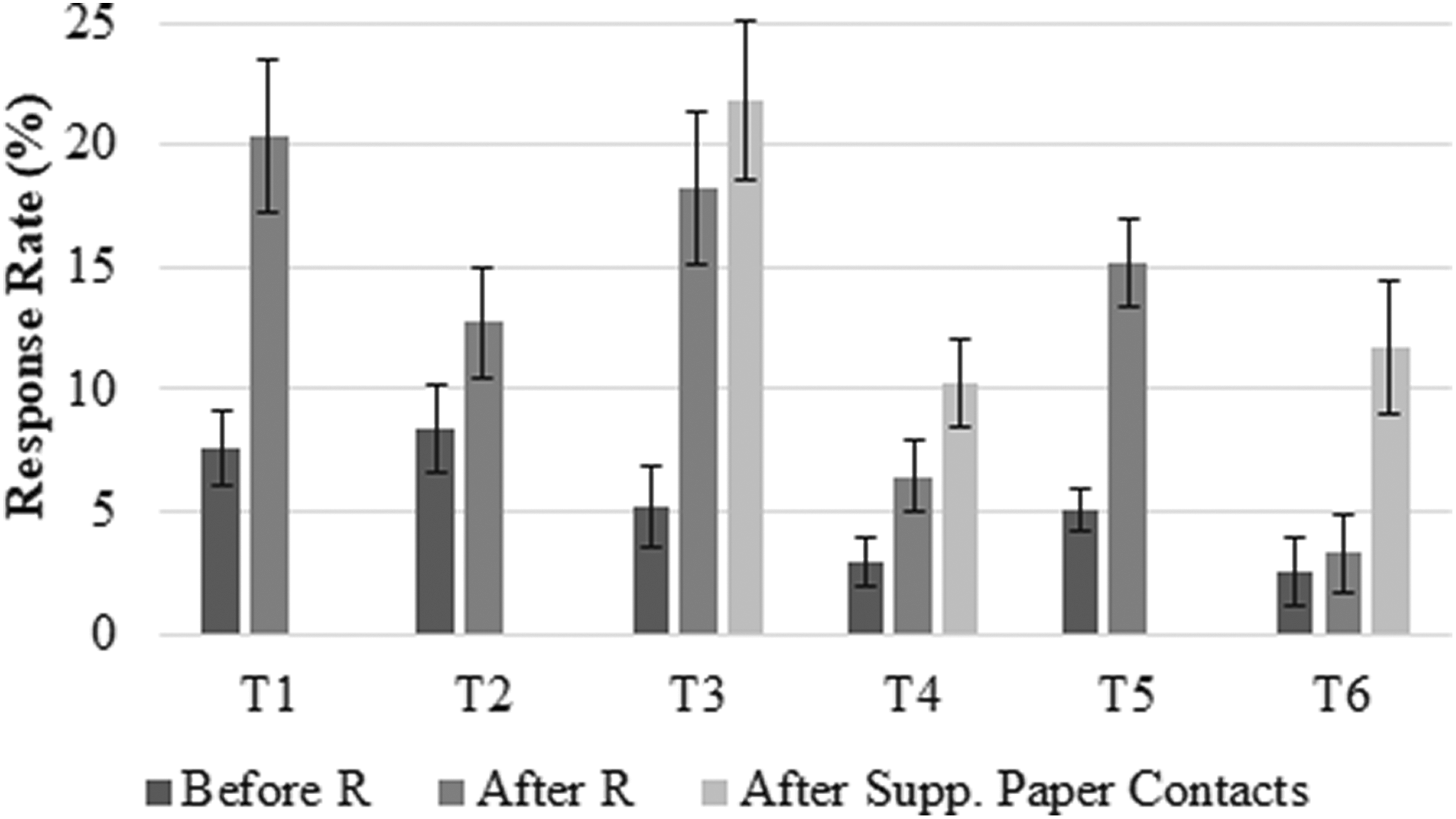

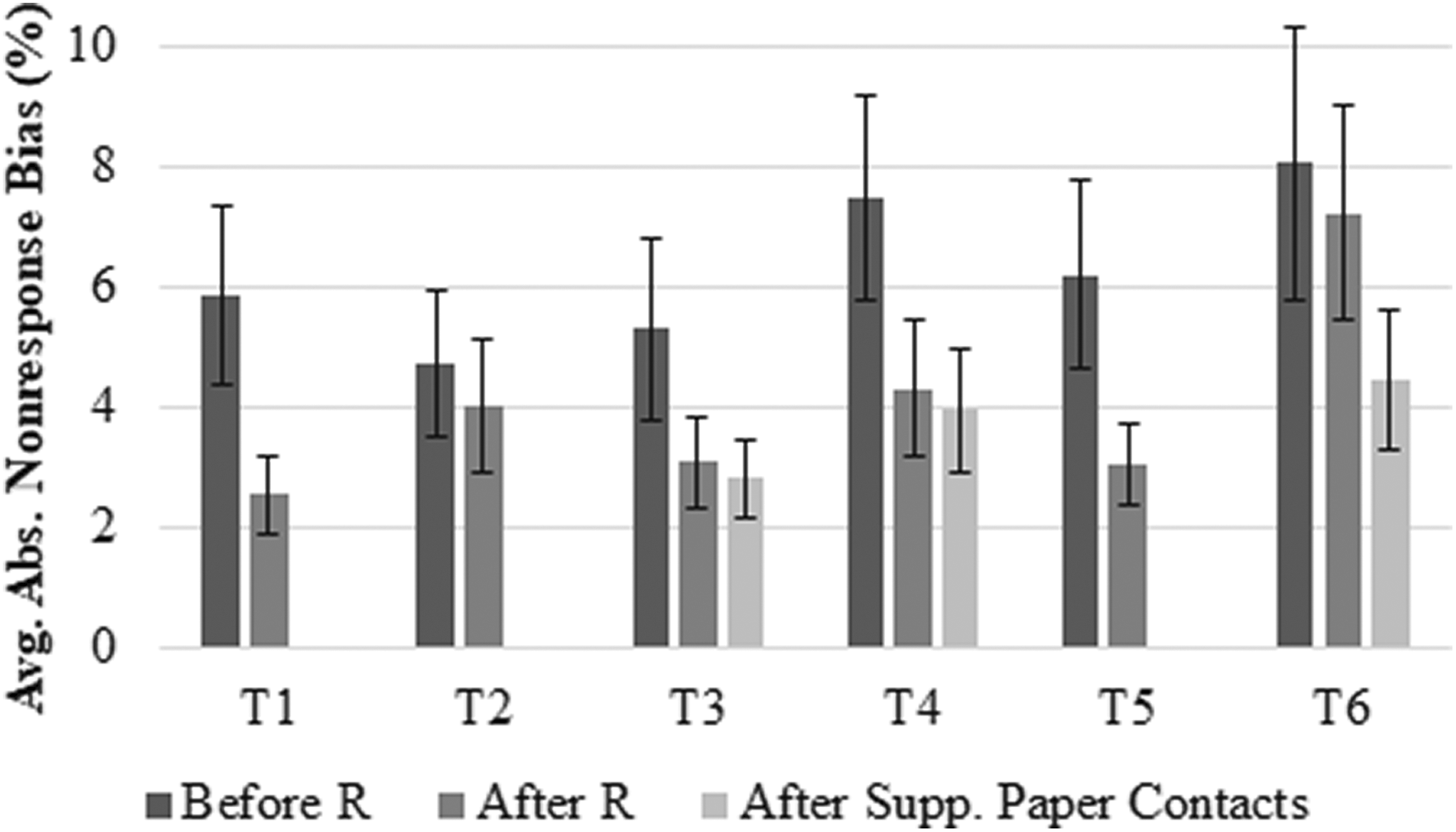

Research Question 4: Aggregate Nonresponse Bias and Survey Costs

Finally, we consider the implications of the above contact strategies for nonresponse bias and survey costs. Here, we report aggregate nonresponse bias in the form of average absolute nonresponse bias (AANB; in percentage points) across the 12 administrative variables. Figure 3 shows the AANB for each treatment group before and after the reminder, and after the supplementary paper contacts were administered. The results are largely consistent with the notion that higher response rates yield smaller average nonresponse bias. Starting with Experiment 1, before the reminder and supplementary paper contacts were administered, the combined paper invitation group (T1/T2) yields smaller AANB than the combined e-mail invitation group (T3/T4): 4.52% versus 5.65% (not shown), which is consistent with the response rate results presented earlier. After administering the reminder, the paper–paper group (T1) yields the smallest AANB overall (2.53%), followed by the e-mail–paper group (T3; AANB: 3.08%), the paper–e-mail group (T2; AANB: 4.02%), and the e-mail–e-mail group (T4; AANB: 4.30%). This ordering corresponds exactly to the ordering of the groups from highest to lowest response rate. Further, the figure shows that reminders and supplementary paper contacts reduced AANB across all treatment groups. The largest reductions in AANB due to reminders occurred in T1 (from 5.84% to 2.53%) and T4 (from 7.49% to 4.30%). The supplementary paper contacts reduced AANB only slightly in both e-mail invitation groups (T3: from 3.08% to 2.80%; T4: from 4.30% to 3.94%).

Figure 3. Average absolute nonresponse bias before reminder, after reminder, and after supplementary paper contacts, by treatment group.

Turning now to Experiment 2, the group that received the prenotification e-mail address request letter (T6) yields larger AANB than the group that did not receive the prenotification request letter (T5); the result holds before the reminder (8.06% vs. 6.21%) and after the reminder (7.23% vs. 3.03%) was administered in both groups. Administering supplementary paper contacts to establishments that did not provide an e-mail address via prenotification letter reduced this discrepancy by decreasing the AANB in T6 to 4.45%.

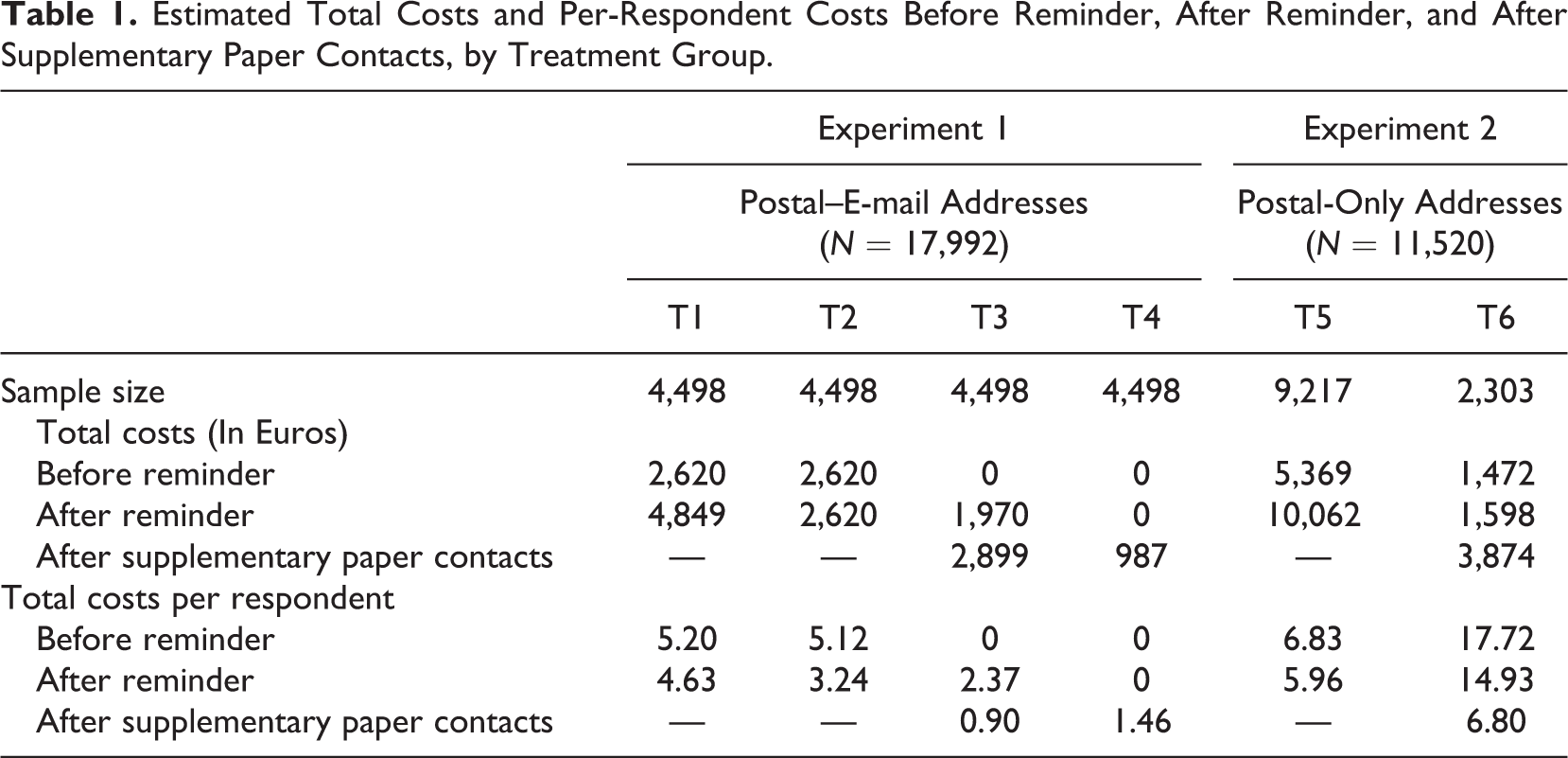

The total costs and per-respondent costs of administering each treatment are presented in Table 1. As expected, the lowest per-respondent costs in Experiment 1 were associated with the treatments that included e-mail contacts (T2, T3, and T4). However, the e-mail-only invitation and reminder group (T4) was not the cheapest treatment on a per-respondent basis after accounting for supplementary paper contacts. After administering all contacts, the e-mail–paper group (T3) yielded the lowest per-respondent costs (0.90 EUR), followed by the e-mail–e-mail group (T4: 1.46 EUR), the paper–e-mail group (T2: 3.24 EUR), and the paper–paper group (T1: 4.63 EUR). It is noteworthy that the cheapest and most expensive treatments (T3 and T1, respectively) also produced the highest response rates and smallest average nonresponse bias overall, which suggests that mixing e-mail and paper contacts can yield significant cost savings without compromising on response rates and data quality. In Experiment 2, the prenotification e-mail address request letter did not yield lower per-respondent costs relative to the group without prenotification (T6: 6.80 EUR; T5: 5.96 EUR). Thus, we conclude that the prenotification letter with e-mail address request strategy was not effective.

Table 1. Estimated Total Costs and Per-Respondent Costs Before Reminder, After Reminder, and After Supplementary Paper Contacts, by Treatment Group.

Discussion and Implications

This experimental study examining the effects of different contact mode strategies on participation in a web survey of establishments yielded five main findings. First, paper invitations produced higher response rates and lower average nonresponse bias compared to e-mail invitations before any follow-up reminders or supplementary paper contacts were administered. Second, although sending a paper invitation followed by a paper reminder yielded the highest response rate and lowest nonresponse bias overall, the sequence of e-mail invitation followed by a paper reminder yielded a similarly high response rate and low nonresponse bias at about half the per-respondent cost. A related third finding was that the strategy of starting with an e-mail invitation and following up with a paper reminder produced a higher response rate and lower average nonresponse bias than the opposite contact mode sequence; however, both mixing strategies were more effective than an e-mail-only invitation/reminder contact strategy. Fourth, although some establishments reacted to a prenotification request letter by providing an e-mail address to receive the survey invitation, this strategy produced a lower response rate compared to a standard paper-only invitation strategy without the additional prenotification step. Finally, sending supplementary paper contacts (invitation and reminder letters) to establishments with invalid e-mail addresses (and those that did not provide an e-mail address upon request) was found to be useful for improving response rates, reducing average nonresponse bias, and decreasing per-respondent costs.

The study results offer practical insights for researchers seeking to maximize participation rates and, in turn, minimize the risk of nonresponse bias in web-only surveys of establishments. Adopting a paper-only invitation and reminder strategy appears to be the most effective method for maximizing participation among establishments, regardless of whether they voluntarily provided an e-mail address at an earlier time point. The superiority of the paper invitation mode is consistent with findings reported by Bandilla et al. (2012) and Dykema et al. (2012). An e-mail-only invitation and reminder strategy should be avoided if possible, as it was shown to be the least effective contact mode strategy, which is in line with findings reported in the household survey literature (Dykema, Stevenson, Klein, Kim, & Day, 2012; Israel, 2013). The finding that an e-mail invitation followed by a paper reminder is more effective than the opposite mode sequence, and can perform about as well as a paper-only contact strategy, bodes well from a cost perspective. It also runs counter to the notion that paper invitations improve response to subsequent e-mail reminders in the same way that prenotification letters tend to improve response to e-mail invitations (e.g., Dykema et al., 2011, 2012; Kaplowitz et al., 2004). Another practical implication of this study is that supplementary paper contacts can be a useful means of obtaining responses from establishments with invalid e-mail addresses, a finding that is consistent with other studies (e.g., Israel, 2013; Newberry & Israel, 2017). Not only does this approach improve response rates, but it also reduces aggregate nonresponse bias and per-respondent costs.

Although the present study contributes new insights to a sparse literature on the optimization of web surveys of establishments, it is subject to some limitations. For example, the study is based on a targeted sample of establishments that previously participated in a different IAB survey—the JVS—about 2–4 years earlier. Thus, the studied establishments are likely to be more cooperative and participate at a higher rate than establishments from the general population. On the other hand, the JVS is a mixed-mode survey, and there is a general reluctance among establishments in Germany to participate in a web-only survey when they are accustomed to responding in other survey modes (Ellguth & Kohaut, 2014). Therefore, any increased cooperativeness due to familiarity with other IAB surveys may have been offset by the web-only implementation of the present study. Nevertheless, we encourage future replications of these contact mode experiments on other samples of establishments, including those with little or no prior experience participating in surveys sponsored by the same entity.

Conclusions

At a time when survey researchers are increasingly utilizing the web to collect information from establishments, it is useful to know that administering paper-only contacts remains an optimal strategy for eliciting responses to web-only surveys. Although this strategy is more expensive and time-consuming than an e-mail-only contact strategy, the advantage of paper-only contacts—in terms of maximizing response rates and minimizing nonresponse bias—is likely to be a worthwhile trade-off when considering the critical role that high-quality establishment survey data play in monitoring a country’s economic activities and planning future economic policies.

What is also promising is that similarly high levels of data quality can be achieved using a substantially cheaper sequence of an e-mail invitation followed by a paper reminder. In addition, our results, taken from a large survey recruitment of over 29,000 establishments, showed that administering supplementary paper contacts to establishments with invalid e-mail addresses can yield even further gains in data quality and reductions in per-respondent costs. However, survey organizations should not go out of their way to solicit valid e-mail addresses from establishments for the sole purpose of administering e-mail contacts, as this approach is likely to be ineffective from a cost and data quality perspective, relative to a paper-only contact approach, as our study showed.

It is our hope that these practical contributions lead to more effective recruitment strategies in web surveys of establishments and act as a catalyst for further methodological experimentation in establishment surveys, expanding the currently sparse empirical knowledge base from which researchers can draw.

Supplemental Material

sakshaug_online_supplement for Paper, E-mail, or Both? Effects of Contact Mode on Participation in a Web Survey of Establishments by Joseph W. Sakshaug, Basha Vicari and Mick P. Couper in Social Science Computer Review

Footnotes

Authors’ Note

The data from the SAS study used in this article are available by contacting the second author at

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Financial support was provided by the Institute for Employment Research (IAB) in Nuremberg, Germany.

Supplemental Material

The supplemental material is available in the online version of the article.
