Abstract

Incivility, that is, the breaking of social norms of conversation, is evidently prevalent in online political communication. While a growing literature provides evidence on the prevalence of incivility in different online venues, it is still unclear where and to what extent Internet users are exposed to incivility. This paper takes a comparative approach to assess the levels of incivility across contexts, content and personal characteristics. The pre-registered analysis uses detailed web browsing histories, including public Facebook posts and tweets seen by study participants, in combination with surveys collected during the German federal election 2021 (N = 739). The level of incivility is predicted using Google’s Perspective API and compared across contexts (platforms and campaign periods), content features, and individual-level variables. The findings show that incivility is particularly strong on Twitter and more prevalent in comments than original posts/tweets on Facebook and Twitter. Content featuring political topics and actors is more uncivil, whereas personal characteristics are less relevant predictors. The finding that user-generated political content is the most likely source of individuals’ exposure to incivility adds to the understanding of social media’s impact on public discourse.

Introduction

Online incivility has become a major research focus in recent years. There is now a solid understanding of the prevalence of incivility, especially on social media (e.g., J. W. Kim et al., 2021; Oz et al., 2018; Salgado et al., 2023; Su et al., 2018; Theocharis et al., 2020; Vargo & Hopp, 2023), the perceptions of incivility by citizens (e.g., Sude & Dvir-Gvirsman, 2023), and the effects of uncivil messages (e.g., Goovaerts, 2022; Van’t Riet & Van Stekelenburg, 2022). However, because it is difficult to directly measure citizens’ online behavior across different platforms, one crucial question remains poorly understood: to what extent are Internet users actually exposed to incivility?

This study contributes to this question and research on incivility in at least three ways. First, most published research has focused on highly selective samples of individuals who are actively engaging online, for example, by posting comments, while overlooking the fact that the majority of individuals passively observe or “lurk” online without actively contributing content. Studies trying to assess exposure to incivility and its effects were based on self-reports that clearly have limited validity and suffer from inherent biases when trying to measure digital behavior (Parry et al., 2021; Scharkow, 2016). It is unclear whether self-reports of incivility are valid and reliable measures of exposure, or instead reflect general perceptions of (online) communication (Kenski et al., 2020; Sliter et al., 2015). In our study, we passively measure exposure to incivility through web tracking of public content on news websites, Facebook, and Twitter, including comments and replies. Second, scholars of incivility were not able to compare the relative influence of contextual factors, content, and personal characteristics together in one integrated design. We collect the web tracking data with the informed consent of individuals and are able to analyze these jointly with survey responses of the same participants, opening up a direct linkage of digital behavior, demographics, and attitudes (Stier et al., 2020). Third, previous studies did not have obvious sampling points for platform comparisons, concentrating, for instance, on data from the same actors on different platforms (e.g., Oz et al., 2018). However, the user bases of these platforms obviously differ. It could well be the case that there is a large amount of incivility on a specific platform, yet it spreads only among certain persons, so that only a few persons are actually exposed to any of it. Vice versa, there could be low levels of uncivil content on another platform, but many persons could receive it. Analyzing the content actually seen by the same persons assigns the appropriate weight to each platform and account, taking into account each individual’s respective media diet.

In our analysis, we investigate pre-registered hypotheses regarding the role of contextual factors (platform differences (news websites, Facebook, Twitter) and campaigning context (pre- vs. post-election periods)), content characteristics (political content and actors), and personal characteristics (gender, political extremism) in shaping individuals’ exposure to incivility. By examining these factors in one comprehensive research design, we gain a better understanding of the complex dynamics that contribute to the exposure to incivility in online discourse. The results show that citizens are particularly likely to be exposed to incivility when they use Twitter, when they read reactions/comments on original posts/tweets, and when they encounter political content.

Comparing Incivility Across Platforms and Time Periods

Assessing the predictors of exposure to incivility by comparing the levels of incivility across contexts, content, and personal characteristics is hard for several reasons: First, the concept of incivility has been defined in various ways. Incivility refers to rude, impolite, or offensive language that violates established norms of respectful communication. One common feature is that most scholars understand incivility as the use of disrespectful language toward others. This includes profanity, vulgarity, and identity-based attacks such as insults based on origin, sexuality, or religion (Chen, 2017; Coe et al., 2014; J. W. Kim et al., 2021; Muddiman, 2017; Papacharissi, 2004). In particular, the combination of political content and online discussions seems to be a perfect breeding ground for incivility. Besides its multidimensionality, incivility can take various shapes and manifest itself in different language across platforms (e.g., Reddit vs. Facebook; Muddiman & Stroud, 2017; Stoll et al., 2023).

Second, not only the conceptualization of incivility varies, but also the data and methods used are all limited in certain respects. Text analysis is a cornerstone of platform comparisons, yet only a limited number of text documents can be annotated by human coders. Recently, several automated approaches like dictionaries, supervised machine learning or a pretrained model like Google’s Perspective API have been used (Hopp & Vargo, 2019; Hopp et al., 2020; J. W. Kim et al., 2021; Theocharis et al., 2020; Vargo & Hopp, 2023). While methodological advances have been made for the identification of incivility, measuring actual exposure to incivility has mostly been done using survey data (Jaidka et al., 2021; Kenski et al., 2020; Sude & Dvir-Gvirsman, 2023). However, self-reports come with the disadvantage of memory errors and other well-known and evident biases, especially when it comes to reporting (characteristics) of online communication (Parry et al., 2021; Scharkow, 2016). To identify incivility at scale, automated text analysis and precise exposure measures—including the content seen by users—need to be brought together.

Third, previous studies have not yet integrated the multiple factors that might influence exposure to incivility in one research design. To begin with, the context, that is, platform affordances, plays a crucial role when it comes to the prevalence of exposure to incivility. The technical environment and characteristics of online platforms and online discussions might predefine whether incivility spreads quickly, for example, since algorithms boost or inhibit toxic content via content moderation and governance principles (Jaidka et al., 2021; Oz et al., 2018). The surrounding social context and its differential impact on platform algorithms can also change drastically, for example, during an election campaign when political identities become more salient. Moreover, the types of content of a message (e.g., its political nature or actors mentioned) typically carry more or less incivility. Finally, characteristics on the person level might make it more or less likely to be exposed to uncivil and toxic online discussions.

Until now, these three levels of influence have been studied in isolation, since scholars lacked the data for a systematic comparative approach. Previous comparative studies had to choose a similar entity on different platforms as the main sampling frame, for example, comparing news articles and Facebook comments on the same topic (Rossini, 2022) or the Facebook and Twitter accounts of the White House (Oz et al., 2018). Yet even the known prevalence of uncivil messages for topic X on platform Y does not tell us anything about actual exposure to incivility since we do not know how many users receive these uncivil messages.

We will compare the relevance of these factors following a person x situation x context approach to explain exposure to political incivility (Funder, 2008). In the subsequent sections, we will present pre-registered hypotheses and embed these in the relevant literature on the different levels of influence—context, content, and personal characteristics.

Context: Platform and Campaigning

When thinking about incivility on social media, notions of trolls, toxic Facebook groups, or Twitter bubbles come to mind (Sydnor, 2019). However, this notion is not trivial and claims about social media content being more uncivil than other forms of (online) communication need to be substantiated. While most of the work on incivility in the political realm today is conducted on social media platforms, the question is still whether the content is really more uncivil than, for example, on legacy media (Sydnor, 2019). After all, besides user-generated content, news media plays a huge role in political online discussions. However, journalistic norms and rules might prohibit traditional journalistic pieces online from being as uncivil as discussions on social media. Despite notions of media displaying incivility in order to gain the attention of the audience (Mutz, 2015), and news media also portraying political conflicts, the interactive nature and characteristics of social media should increase incivility (Sydnor, 2019, 2020). Given that news agencies maintain editorial boards and strict verification systems, and journalists—particularly those associated with traditional news platforms—do not engage in uncivil behavior that would harm democratic and journalistic principles, we regard online news articles as a baseline for incivility. They, of course, report about politics, parties, and politicians and do portray political conflict, but uncivil discourse, similar to the interactive, lively, back and forth on social media should be rare.

Comparisons of the quantity and quality of uncivil content on different social media platforms have been largely carried out under the umbrella of platform affordances. The idea is that technical and organizational features of platforms account for differences in incivility usage and exposure (Evans et al., 2017). On social media, affordances that have been discussed concerning the prevalence of incivility are anonymity and deindividuation, moderation efforts, and characteristics of the network the users are operating in. According to these considerations, more anonymous networks such as Twitter, where most users have pseudonymized usernames, could foster incivility in contrast to non-anonymous platforms such as Facebook, where a majority uses their real name and the network consists of family and friends that can (potentially) see your comments and posts (Jaidka et al., 2021; Oz et al., 2018; Schroll & Huber, 2022). Jaidka et al. (2019) recently demonstrated that Twitter’s political discussions became more polite and less uncivil after the platform doubled its character limit, highlighting the impact of technical platform affordances.

Besides the aforementioned affordances, rating systems (e.g., liking or sharing a post on a social media platform) can encourage uncivil user comments as well as engagement (J. W. Kim et al., 2021). If incivility is rewarded with user engagement, social media algorithms are likely to spread or amplify those contents. Further, Shmargad et al. (2022) emphasize the idea of incivility as a dynamic and normative process, as repeated incivility in threads receives more attention and fosters engagement.

When examining the prevalence of incivility in online discourses, studies find varying levels of incivility on different platforms. Comparing findings on the prevalence of incivility across multiple studies is challenging as studies often examine incivility in different countries and various time periods, contexts (election periods, political events), platforms, and also topics while using selective samples, for example, active social media users from the US. However, most studies agree that (1) the levels of incivility vary by platform (Oz et al., 2018), (2) incivility is a rather rare construct that usually only applies to a small fraction of the content, even when analyzing selective content (Hopp & Vargo, 2019; Su et al., 2018; Vargo & Hopp, 2023), and (3) incivility is more prevalent in user comments (Salgado et al., 2023; Shmargad et al., 2022; Vargo & Hopp, 2023). In sum, based on evidence on differences between different platforms when it comes to incivility, we pose the following hypothesis:

Online communication is most uncivil on Twitter, followed by Facebook, then news.

Of course, the platform is not the only contextual variable shaping online discourses and their quality. Despite websites and networks operating worldwide, cultural and country contexts could play a role (Otto et al., 2020; Schroll & Huber, 2022). In political discourses, the temporal context might also be crucial. Comparing campaigning with non-campaigning times has a long tradition in political communication, and scholars argue that campaigning times are peculiar since politicians strive for media attention, exaggerate differences, and communicate more than in regular, non-campaign times (Van Aelst & De Swert, 2009; Vasko & Trilling, 2019). The straightforward idea is that political campaigns bring conflict, negativity, polarization, and differences between parties, candidates, and political groups to the forefront. This, in turn, might spill over to political reporting and political discussions (but see: Vasko & Trilling, 2019); negative campaigns and the incivility of political actors as well as a heated atmosphere in a close election race could be “contagious” and be mirrored in uncivil online discussions. This idea has been confirmed for offensive comments to negative YouTube campaigns (Kwon & Gruzd, 2017), and civility or incivility cues can spill over to conversations on social media (Han et al., 2018). Therefore, we pose the following hypothesis:

Online communication during election campaigns is more uncivil than communication outside of election campaigns.

Content

The literature yields different answers to the question why political content might be more uncivil than everyday conversations. First, contestation (of political topics), conflict, and disagreement are essential components of democracy (Stryker et al., 2016). While politeness is a strong norm in everyday discussions, this norm might be violated because democracy is (also) about competition and conflict of ideas, parties, political groups, or candidates (Sun et al., 2021). Second, when analyzing different topics and contents online, it becomes evident that the political realm is more uncivil than unpolitical, “soft” topics. Political discourse elicits a more hostile and uncivil tone than non-political topics such as technology or lifestyle (Coe et al., 2014; Sobieraj & Berry, 2011). Online political discussions often circle moral issues such as abortion, euthanasia, drug legalization, or climate justice. These topics are close to citizens’ moral convictions and spark more emotional and polarized discussions, which might, in turn, lead to incivility (Oz et al., 2018; Theocharis et al., 2020; Ziegele et al., 2014). Third, besides talking about topics that people deeply care about and identify with, political and party identity dynamics and (affective) polarization of political groups add to the idea that political discussions are more uncivil than their non-political counterparts (Y. Kim & Kim, 2019; Wolf et al., 2012).

Political online communication is more uncivil than non-political online communication.

But not only political topics but also partisan cues, such as the presence of political actors, contribute to uncivil discussions. Similar to the explanation of partisans using more incivility and fiercely defending the in-group against attacks from the political opponent, the presence of political actors might activate partisan identities and lead to polarized and uncivil discussions (Anderson & Huntington, 2017). The idea is that incivility, partisan cues, and partisan identity are deeply interwoven (Thorson et al., 2008). Thus, we pose the following hypothesis:

Online communication containing political actors is more uncivil than communication without political actors.

Personal Characteristics

Citizens differ in the online spaces in which they communicate and in how they use and perceive political online communication in general, and uncivil discourse in particular (Kenski et al., 2020). Previous studies have extensively examined the influence of various personality traits on perceptions of incivility (Nai & Maier, 2021; Sydnor, 2019); however, evidence on how these traits contribute to the exposure to online incivility is scarce. Consequently, we focus on two crucial personal characteristics, recognizing the potential relevance of other personality traits and the need for further research.

First, gender might be a crucial variable when it comes to uncivil online discussions. It seems evident that men use more incivility in online political discussions (Han et al., 2018; Küchler et al., 2023). Due to the absence of suitable data so far to investigate this phenomenon, not a lot is known about self-selection into incivility. We can only speculate and infer from the more active participation of men in online discussions (Van Duyn et al., 2021) that they would also be more likely to visit comment sections where incivility should be more prevalent. Men also have a higher appetite for contentious arguments and disagreements (Wolak, 2022). We therefore assume that men do not only communicate in an uncivil manner more often but are also exposed to it more regularly:

Men are exposed more to uncivil online communication.

Second, political ideology and attitudes are crucial in understanding incivility. Right-wing ideology and especially more extreme ideological positions are associated with using incivility (Anderson & Huntington, 2017; J. W. Kim et al., 2021). There are several explanations for that. Citizens with a right-wing ideology put a lot of emphasis on freedom of speech, which might lead to uncivil self-expression (Han et al., 2018). However, not only (extreme) right-wing citizens but all citizens who hold extreme ideologies and are strong partisans are prone to using uncivil communication. On the one hand, strong partisans might hold the belief that all forms of communication are legitimate to criticize the opponent in a conflict, including incivility (Kosmidis & Theocharis, 2020; Muddiman & Stroud, 2017). Furthermore, of course, partisanship represents a strong social identity cue, which might lead to more heated discussions with members of the “outgroup,” that is, citizens identifying with another party (Muddiman, 2021). Finally, the relationship between incivility and polarized public spheres has been discussed by political communication scholars (Druckman, Gubitz, Lloyd, & Levendusky, 2019). The idea is that extreme positions lead to more polarized and, in turn, more uncivil discussions (Y. Kim & Kim, 2019; Skytte, 2021) and vice versa (Lee et al., 2019; Su et al., 2018; Wolf et al., 2012).

Individuals with extreme political ideology are exposed more to uncivil online communication.

Research Design

Data

This paper relies on web browsing histories and survey data collected from the same participants during the German federal election 2021. The observational study was pre-registered on 23 January 2023 (https://osf.io/7jbnk/). At the time of registration, the data for the paper had been collected already and the text analysis model for detecting incivility had been validated against the manual codings of student assistants. However, the hypotheses were pre-registered before the incivility predictions were applied to the millions of collected website visits and before any analysis of incivility was performed (the survey data had been analyzed for other purposes already).

In total, 739 participants were recruited from the respondent pool of the market research panel Dynata based on population margins that are representative of the German population. The data collection received IRB approval from the GESIS Ethics Committee (decision 2021-6). Participants gave their informed consent and installed a browser plugin that tracked their website visits in their primarily used browser. They received incentives for participating in the study and for keeping the tool active. Participants were also surveyed on their demographics and political attitudes, allowing us to link individual-level data with their browsing behavior. We excluded 87 participants who did not visit any news websites, Facebook, or Twitter, resulting in a lack of measurement for the dependent variable (exposure to incivility) among these individuals. Another 14 participants did not respond to the survey on key demographic variables (see Online Appendix Section S1 for details on the sample).

For the collection, we used a browser extension designed by academic developers that not only records the starting time and URL of a visited website, as most commercial tracking tools do, but also scrapes the HTML content (Aigenseer et al., 2019). This feature is crucial to capture adaptive content, as some websites, for example, social media platforms and dynamic websites, cannot be scraped retrospectively. Without it, the information about the content an individual saw at the time of retrieval is usually lost. Content from an extensive blocklist of sensitive domains (e.g., banking or adult content) was discarded from the tracking. The tool captured public content within Facebook and Twitter (not private messages or private profiles) and distinguished between original Facebook posts/tweets and reactions in the form of comments/tweet replies. In total, we recorded more than 8 million website visits made by the participants using their web browsers during the study period between 19 August 2021 and 27 October 2021.

Measures

Incivility

To measure the dependent variable, exposure to incivility, we used Google’s Perspective API to predict a probability score that indicates the likelihood that a sentence a user was exposed to is uncivil. Due to the differences regarding the text types (long news articles vs. relatively short social media posts), we decided to measure incivility at the sentence level, the most comparable unit across platforms. In addition, Google’s Perspective API provides the most accurate results for short social media texts, since it was trained on this kind of data. The Perspective API has been used in various studies to detect uncivil content on, for example, Facebook (Hopp & Vargo, 2019; Hopp et al., 2020; J. W. Kim et al., 2021), Twitter (Hopp et al., 2020; Theocharis et al., 2020), and Reddit (Vargo & Hopp, 2023). Google’s Perspective API has been criticized for a bias in the German language, as it produces higher probability scores than for other languages. To mitigate potential bias introduced by predictions from the German model, we translated our data into English and conducted a robustness test by re-evaluating all sentences using the English model of the Perspective API.
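The sentence-level scoring described above can be illustrated with a minimal sketch. It assumes the `TOXICITY` attribute and the public `comments:analyze` REST endpoint of the Perspective API; the text does not state which attribute or client the authors used, and the naive sentence splitter and the hand-written response are purely illustrative. No network call is made here.

```python
import json
import re

# Public REST endpoint of the Perspective API (an API key is passed as ?key=...).
ANALYZE_URL = "https://commentanalyzer.googleapis.com/v1alpha1/comments:analyze"


def split_sentences(text):
    """Naive splitter on ., ! or ? followed by whitespace (illustrative only)."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def build_request(sentence, language="de", attribute="TOXICITY"):
    """Build the JSON body for one comments:analyze call.

    The attribute name is an assumption; the paper only speaks of
    incivility/toxicity scores.
    """
    return {
        "comment": {"text": sentence},
        "languages": [language],
        "requestedAttributes": {attribute: {}},
    }


def extract_score(response, attribute="TOXICITY"):
    """Read the summary probability score from an API response."""
    return response["attributeScores"][attribute]["summaryScore"]["value"]


# Offline demonstration with a hand-written response of the documented shape.
sentences = split_sentences("Das ist ein Test. Noch ein Satz!")
payload = build_request(sentences[0])
fake_response = {
    "attributeScores": {
        "TOXICITY": {"summaryScore": {"value": 0.12, "type": "PROBABILITY"}}
    }
}
score = extract_score(fake_response)
print(json.dumps(payload, ensure_ascii=False), score)
```

In a real pipeline, each payload would be sent as a POST request to `ANALYZE_URL`, and setting `language="en"` would correspond to the robustness check on translated sentences.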

Independent of and before using the Perspective API, human coders were trained on a codebook previously developed for the project ‘Tracking the effects of negative political communication during election campaigns in online and offline communication environments’ to identify whether a document (e.g., news article or a social media post) contained incivility until reliability scores were acceptable (Krippendorff’s Alpha 0.67, see the codebook in Online Appendix S5). Coders annotated 210 unique documents (i.e., full news articles, Facebook posts or tweets) for the validation of the automated predictions. Since we predicted a probability score for each sentence, we validated the scores by using the highest predicted probability across all sentences from a document. For comparing the probability scores with the binary codes of annotators, we created a dummy with the probability threshold that fitted the binary coder annotations best. In addition, we directly compared the predictions with 397 manual annotations on the sentence level. The F1 score for the document-level validation was 0.84, and for the sentence-level validation 0.83. Individual F1 scores for news, Facebook and Twitter were similar across both validations. The validation of the toxicity-scores predicted on the translated sentences showed similar F1 scores as well (see the validation tables in Online Appendix S2).
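The document-level validation logic can be sketched as follows: each document receives the highest sentence score, and the threshold that best reproduces the binary human codes is picked from a grid. The toy scores, labels, and candidate grid are invented for illustration, and using F1 as the selection criterion is an assumption; the text only says the threshold "fitted the binary coder annotations best".

```python
def document_score(sentence_scores):
    """Aggregate sentence-level probabilities to one document score (the maximum)."""
    return max(sentence_scores)


def f1_score(labels, predictions):
    """F1 for binary labels, where 1 marks an uncivil document."""
    tp = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, predictions) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def best_threshold(labels, scores, candidates):
    """Return (threshold, f1) for the candidate with the highest F1.

    Ties are resolved in favor of the first (lowest) candidate.
    """
    best = (None, -1.0)
    for t in candidates:
        preds = [1 if s >= t else 0 for s in scores]
        f1 = f1_score(labels, preds)
        if f1 > best[1]:
            best = (t, f1)
    return best


# Toy data: four documents with sentence scores and human codes (hypothetical).
docs = [[0.05, 0.12], [0.20, 0.85], [0.30, 0.43], [0.71, 0.10]]
labels = [0, 1, 0, 1]
scores = [document_score(d) for d in docs]
candidates = [i / 10 for i in range(1, 10)]
threshold, f1 = best_threshold(labels, scores, candidates)
print(threshold, f1)
```

The same `f1_score` function applies unchanged to the sentence-level validation, where each sentence score is compared directly with its manual annotation.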

Platform

When measuring exposure to incivility, we focus on content from three platforms: news websites, Facebook, and Twitter. Using a list of more than 700 news domains which was generated in previous web tracking projects (Scharkow et al., 2020), and was updated on the basis of our data set, we identified 264 news websites visited at least once during the study period. Out of the 739 participants, 628 (98%) visited at least one news article; 328 (51%) saw at least one public post on Facebook; 172 (27%) encountered at least one tweet, and 109 participants (18%) were exposed to public content from all three sources. For methodological reasons, we did not include the content of home pages of news websites in the analysis. Unlike specific news URLs, home pages contain many different topics, headlines and previews. Therefore, it cannot be determined what the focus of the participant actually was. In addition, home pages are packed with clickbait articles that often cover sexualized content. Toxicity models like the Perspective API tend to predict high levels of incivility in these cases.

Time Period

To investigate effects of political campaigning, we differentiate between a pre- and post-election period by considering all online exposure until election day as pre election and the remainder as post election.
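As a small illustration, the period variable can be derived from the date of each visit. The sketch assumes that election day itself (26 September 2021) counts as pre-election, which is one reading of "until election day"; the function name is hypothetical.

```python
from datetime import date

# Date of the 2021 German federal election.
ELECTION_DAY = date(2021, 9, 26)


def campaign_period(visit_date):
    """Label a visit as pre- or post-election (election day counted as pre-election)."""
    return "pre-election" if visit_date <= ELECTION_DAY else "post-election"


print(campaign_period(date(2021, 8, 19)), campaign_period(date(2021, 10, 27)))
```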

Content

We used a dictionary-based method containing keywords on political issues, institutions and actors to identify political content with an F1 score of 0.91 (Table A7). In addition, we identified sentences containing political actors by using string searches for the two leading political candidates for each of the five parties present in the German Bundestag. Note that the measure of political actors is a subset of the political content measure. Overall, 18% of the sentences were classified as political and around 8% contain a leading political actor. News articles and Twitter contained more political content than Facebook (Online Appendix Table A2). These figures resemble findings in recent studies. For instance, Trilling et al. (2022) identified political content in 52% of the news articles—a finding that corresponds with our data when classifying political content in news at the document level, revealing a congruent 55%. In the absence of German-specific data, Wojcieszak et al. (2023) report slightly lower but comparable percentages.
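The two content measures can be sketched with a toy dictionary. The keyword list and the actor list below are hypothetical stand-ins, since the actual dictionary is not reproduced in the text; the prefix matching is likewise an assumption. Because the actor measure is described as a subset of the political content measure, any sentence naming an actor is also flagged as political.

```python
import re

# Hypothetical keyword stems and an illustrative subset of candidates;
# the study's real dictionary is not given in the text.
POLITICAL_KEYWORDS = ["bundestag", "wahl", "koalition", "klimapolitik"]
POLITICAL_ACTORS = ["Olaf Scholz", "Armin Laschet", "Annalena Baerbock"]


def contains_actor(sentence):
    """Case-insensitive string search for any listed political actor."""
    lowered = sentence.lower()
    return any(actor.lower() in lowered for actor in POLITICAL_ACTORS)


def is_political(sentence):
    """Flag a sentence if a token starts with a keyword stem or an actor is named."""
    tokens = re.findall(r"\w+", sentence.lower())
    keyword_hit = any(tok.startswith(k) for tok in tokens for k in POLITICAL_KEYWORDS)
    return keyword_hit or contains_actor(sentence)


examples = [
    "Die Koalition streitet über das Budget.",
    "Armin Laschet lacht.",
    "Das Wetter ist schön.",
]
for s in examples:
    print(s, is_political(s), contains_actor(s))
```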

Personal Characteristics

Prior to the collection of the web tracking data, a survey was conducted to obtain demographic variables and covariates on political information behavior, which we included as specified in the pre-analysis plan. The standard demographic variables are gender (45% female participants), age (in years; M = 48.6, SD = 13.56, MIN = 18, MAX = 74), education (coded into a 3-point measure following the country-comparative ISCED scheme; 1 = primary/lower secondary [low], 2 = upper secondary [medium], 3 = tertiary [high]; M = 2.4, SD = 0.69, MIN = 1, MAX = 3), and East/West Germany (23% of the participants are based in eastern Germany). Political interest was captured using a 5-point scale from 1 (= not at all interested) to 5 (= very interested; M = 3.53, SD = 1.17, MIN = 1, MAX = 5). Political ideology was operationalized with an 11-point scale from 1 (left) to 11 (right; M = 5.45, SD = 1.88, MIN = 1, MAX = 11). An additional measure of political extremity with a range from 0 (= low extremity) to 5 (= high extremity) was calculated as the distance from the political ideology scale’s midpoint (M = 1.43, SD = 1.34, MIN = 0, MAX = 5). Descriptive statistics for all variables used can be found in Online Appendix Table A1.
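The extremity measure follows directly from the scale definition: on an 11-point scale from 1 to 11 the midpoint is 6, so the absolute distance ranges from 0 to 5. A minimal sketch, with hypothetical names:

```python
SCALE_MIN, SCALE_MAX = 1, 11
MIDPOINT = (SCALE_MIN + SCALE_MAX) / 2  # 6.0 on the 1-11 left-right scale


def political_extremity(ideology):
    """Absolute distance of a left-right self-placement from the scale midpoint."""
    if not SCALE_MIN <= ideology <= SCALE_MAX:
        raise ValueError("ideology must lie between 1 and 11")
    return abs(ideology - MIDPOINT)


print([political_extremity(x) for x in (1, 4, 6, 11)])
```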

Analysis Strategy

To model exposure to incivility across platforms, we use a pre-registered linear multilevel model with the probability that a sentence an individual was exposed to is uncivil as our dependent variable (level 1). As sentences are nested within persons, the person-level is modeled as level 2. In line with our hypotheses, news articles—where we expect the least amount of incivility—are used as the reference category for the platform dummies in the regression.
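One way to write such a two-level model is given below. It assumes a random intercept for persons and no random slopes, which the text does not specify; the symbols are introduced here for illustration only. Here $y_{ij}$ is the predicted incivility probability of sentence $i$ seen by person $j$, $x_{kij}$ are sentence-level predictors (platform dummies with news articles as the reference, election period, political content, political actors), and $z_{mj}$ are person-level predictors (e.g., gender, political extremity).

```latex
y_{ij} = \beta_0 + \sum_{k} \beta_k \, x_{kij} + \sum_{m} \gamma_m \, z_{mj} + u_j + \varepsilon_{ij},
\qquad u_j \sim \mathcal{N}(0, \sigma_u^2), \quad \varepsilon_{ij} \sim \mathcal{N}(0, \sigma_\varepsilon^2)
```

Under this specification, the platform coefficients express differences in the expected incivility score relative to news articles, holding the other predictors constant.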

Results

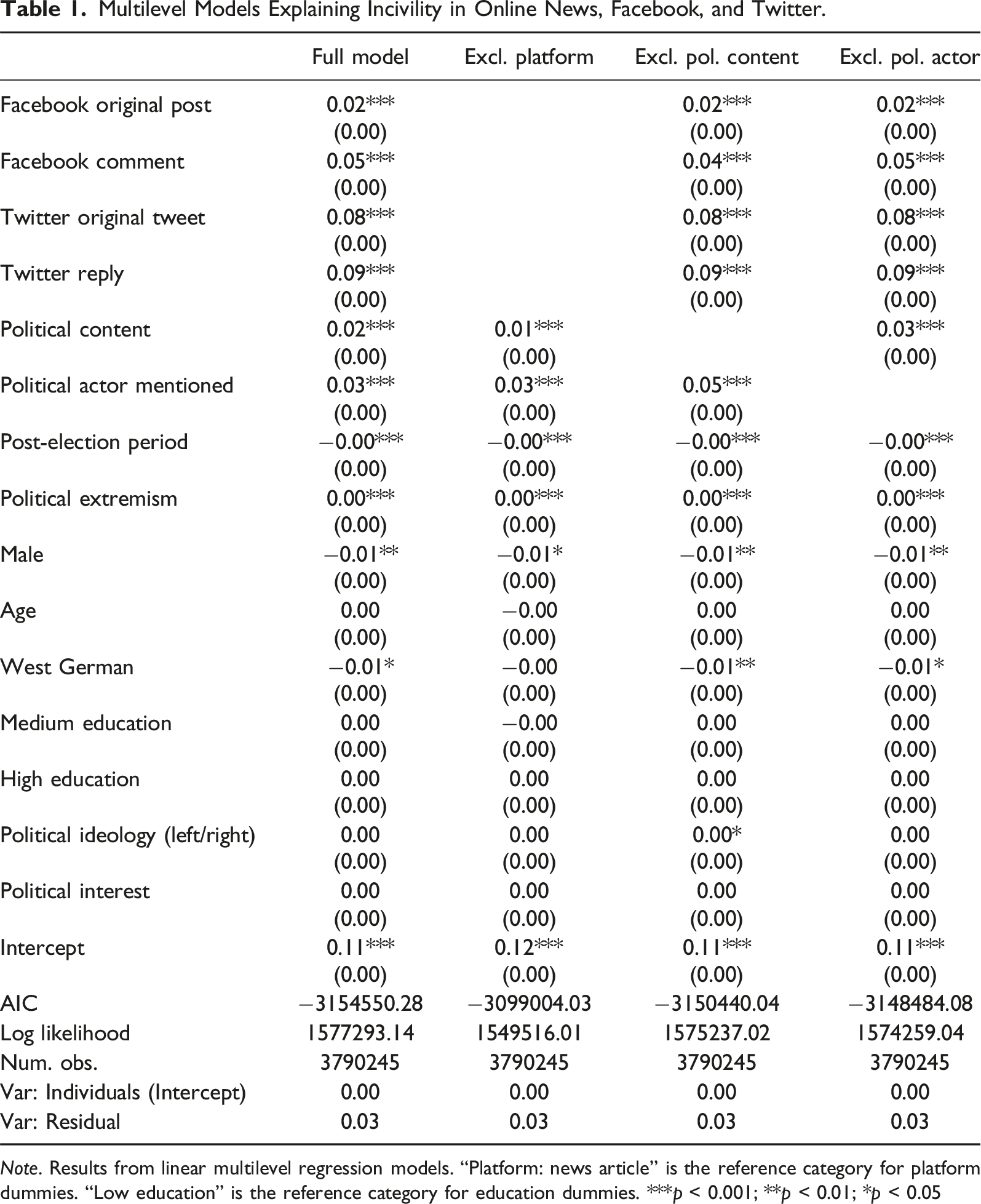

Multilevel Models Explaining Incivility in Online News, Facebook, and Twitter.

Note. Results from linear multilevel regression models. “Platform: news article” is the reference category for platform dummies. “Low education” is the reference category for education dummies. ***p < 0.001; **p < 0.01; *p < 0.05

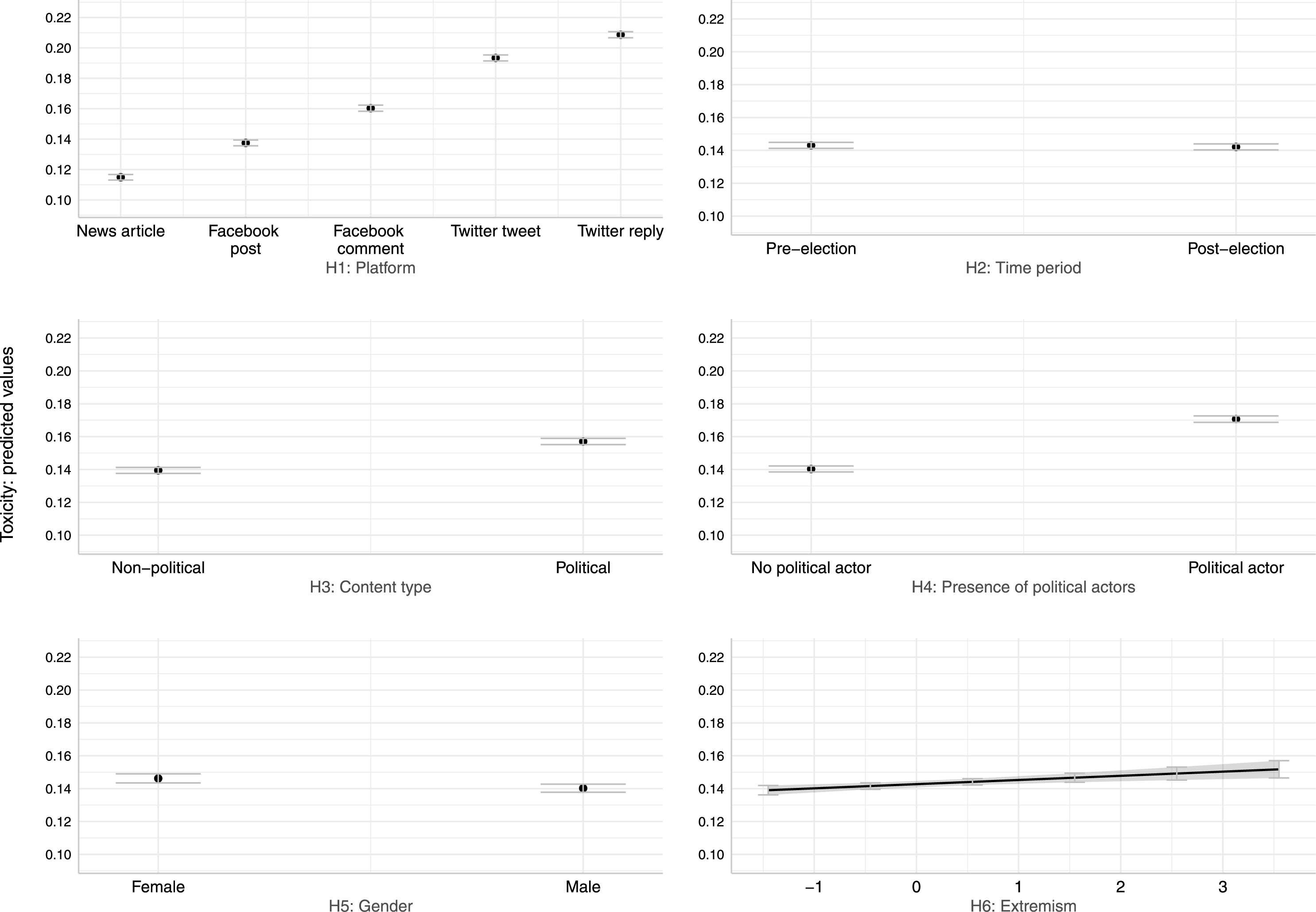

In line with the pre-registered H1, citizens are exposed to higher levels of incivility on Twitter, compared to Facebook and news websites. In addition to the pre-registered hypothesis, we examined differences between original Facebook posts and Facebook comments as well as between original tweets and tweet replies; these distinctions were introduced after the registration of our study (all deviations from the pre-analysis plan are summarized in Online Appendix S3). Similar to previous studies, we found that user comments on Facebook as well as replies on Twitter contained higher levels of incivility compared to original Facebook posts and tweets. Given that such a large number of analyzed sentences tends to produce statistically significant results, it is important to also assess the substantive relevance. Figure 1 therefore shows the predicted marginal effects from the full regression model in Table 1, computed with the R package ggeffects (Lüdecke, 2018), which enable us to interpret the coefficients while holding the other factors constant. Despite differences between original content and comments/replies, the platform is still the strongest predictor. Content seen on Twitter is generally more likely to be uncivil than Facebook posts, Facebook comments, and news articles, in decreasing order. Taken together, there is consistent evidence for H1.

Turning to H2, the evidence is less clear. There are statistically significant effects of the pre- versus post-election period in Table 1, yet with a minimal effect size. This is confirmed in Figure 1, where the confidence intervals of both time periods overlap. Statistically significant regression coefficients can still result in overlapping confidence intervals when taking the marginal effects due to clustering and other statistical nonlinearities (Mize, 2019, pp. 90–91). The null findings can be explained by the continuing political tensions after election day. German elections are immediately followed by very public negotiations to form government coalitions and oftentimes by public brawls among leading politicians of the losing camp, here the conservative party CDU. After the unclear election result in 2021, where no political bloc clearly won and ultimately a liberal-green-social democratic coalition was formed for the first time, post-election political communication was particularly contentious. To fully test H2, a longer time period, preferably over several election periods, would be needed.

Both Table 1 and Figure 1 show that exposure to political content is characterized by higher levels of incivility. Political actors are a stronger predictor of incivility than political content in general. This is also confirmed in the smaller models in columns 2 and 3, where only one of the two variables is included. As a further robustness check, we also tested whether the results change when non-political comments and replies are classified as political if the original post or tweet to which they belong is political. After all, comments/replies are reactions to an original post that sets the topic for subsequent conversations and might trigger insults such as "idiot" even though they contain no political keywords. With this more expansive operationalization of political conversations, the results in general and also the differences between the original post and subsequent reactions remain robust (Online Appendix Table A10). Overall, these various models provide consistent evidence for H3 and H4. However, to put those findings in context, it should be noted that only about 18% of the analyzed sentences are political.
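The more expansive operationalization can be sketched as a simple label-propagation rule: a comment or reply counts as political if it is political itself or if its parent post or tweet is. The Python sketch below is a hypothetical illustration, not the authors' pipeline; the `Item` structure, its field names, and the assumption of a single level of nesting (comment to original post) are all made up for the example.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Item:
    """A post/tweet (parent_id is None) or a comment/reply (parent_id set)."""
    item_id: str
    parent_id: Optional[str]
    has_political_keyword: bool


def expansive_political_labels(items: List[Item]) -> Dict[str, bool]:
    """Label an item political if it, or its parent post, is political."""
    by_id = {item.item_id: item for item in items}
    labels = {}
    for item in items:
        parent = by_id.get(item.parent_id) if item.parent_id is not None else None
        inherited = parent is not None and parent.has_political_keyword
        labels[item.item_id] = item.has_political_keyword or inherited
    return labels


# Hypothetical data: one political post with a keyword-free insult as a reply,
# and one non-political post with a non-political reply.
items = [
    Item("post1", None, True),
    Item("reply1", "post1", False),
    Item("post2", None, False),
    Item("reply2", "post2", False),
]
labels = expansive_political_labels(items)
print(labels)
```

Under this rule, `reply1` is treated as political although it contains no political keyword, while `reply2` stays non-political.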

Finally, the two remaining hypotheses predicted that there would be a self-selection into uncivil content based on personal characteristics. The regression coefficients in Table 1 point against the predicted direction of H5: women were actually exposed more to incivility than men. The marginal effects confirm these detectable, yet small differences by gender (marginal effect of 0.146, CI [0.143, 0.149], for women versus 0.140, CI [0.137, 0.143], for men). Individuals with a more extreme ideology were exposed more to incivility, confirming H6. The effect remains significant across all robustness checks. Taken together, personal characteristics matter, yet Figure 1 clearly demonstrates that these factors are substantially less relevant than the effects of the platform and content characteristics.

We also conducted additional robustness tests to assess the stability of the results. Table A8 in the Online Appendix replicates the main results when only using the core sample of 109 participants who had any exposure on news websites, Facebook, and Twitter. In Table A9, the results are similar when using the English Perspective API after translating our documents. In Table A11, we estimated a negative binomial multilevel model, which is designed for count dependent variables (which we do not have) but takes into account the skewed distribution of the dependent variable. In all of these additional tests, the main results reported in the paper hold.
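For readers unfamiliar with the Perspective API, the sketch below shows how a request body for a German or a translated English sentence might be built and how a score might be read from a response. The field names follow Perspective's documented Comment Analyzer JSON format; the choice of the `TOXICITY` attribute, the helper names, and the example response are assumptions for illustration, and no network call is made.

```python
from typing import Any, Dict


def build_request(text: str, language: str, attribute: str = "TOXICITY") -> Dict[str, Any]:
    """Build a Perspective API request body for one sentence.

    The attribute is an assumption; the attribute actually used in the
    study is not stated in this section.
    """
    return {
        "comment": {"text": text},
        "languages": [language],
        "requestedAttributes": {attribute: {}},
    }


def extract_score(response: Dict[str, Any], attribute: str = "TOXICITY") -> float:
    """Read the summary score (between 0 and 1) for one attribute."""
    return response["attributeScores"][attribute]["summaryScore"]["value"]


# German original versus a translated English version of the same sentence.
request_de = build_request("Das ist ein Beispielsatz.", "de")
request_en = build_request("This is an example sentence.", "en")

# Hypothetical response with a made-up score, shaped like the API's JSON output.
example_response = {
    "attributeScores": {
        "TOXICITY": {"summaryScore": {"value": 0.12, "type": "PROBABILITY"}}
    }
}
score = extract_score(example_response)
print(request_de["languages"], request_en["languages"], score)
```

Comparing scores obtained from the two request variants for the same documents corresponds to the kind of language robustness check described for Table A9.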

Discussion and Conclusion

This comparative study of incivility contributes to the state of research in three respects. First, we take advantage of a user-centered data collection that combines survey measures with digital behavioral data on the individual level. Participants were not chosen based on their observed social media activity, as in most previous studies, but were drawn from a diverse sample, which allows us to draw more general conclusions. Second, the browser extension enabled us to passively measure individual exposure to incivility across multiple platforms, including comment sections on social media. Unlike existing content analyses of platform data, we were able to link the data to individuals and do not rely on selective content such as data on certain topics or specific accounts (news outlets, parliamentarians, political candidates, etc.). Third, our study provides substantive findings that confirm previous research and theories while also encouraging further investigation.

Our study shows that platforms are by far the strongest predictors of exposure to uncivil content. We assume that platform affordances foster both user behaviors and the creation of user-generated content. Platforms have extensive possibilities to establish the environment for political communication by setting their own rules and norms, but also to moderate content and sanction users, for example, by temporarily blocking their accounts for using uncivil language. Further, we provide evidence that the user-generated reactions (comments) that Internet users are exposed to on social media contain higher levels of incivility. This finding is crucial as most study designs fail to link content on social media to individuals. Without such linkage, it is uncertain whether users are exposed to comments on social media, since this often requires active behavior, such as clicking on a “show comments” button.

Similar to previous literature, we found content containing political discussions and actors to be more uncivil. The characteristics we investigated are rather generic and set a baseline for further (comparative) research. It would, for example, be promising to look into different topics within political and non-political content. The literature suggests that not all political content is equally prone to incivility, but moral topics tend to be. Again, however, previous evidence mostly came from content analyses or experimental studies, which are not able to capture exposure. Furthermore, our findings contribute to disentangling the effects of (technical) platform affordances and (user-generated) content. In addition to identifying generally weaker content effects, we observe that Facebook comments and Twitter replies exhibit higher levels of incivility compared to regular posts on these platforms, while comprising a lower share of political content.

In contrast to other studies, participants in our sample had no specific taste for incivility, as we found only minor self-selection effects. We suspect that skewed samples and/or inaccurate self-reports of incivility exposure may have contributed to an overestimation of individual self-selection in previous literature. However, we cannot exclude that in different contexts, for example, on platforms not covered in this study or for other content and countries, personal characteristics may exert a stronger influence on exposure to incivility.

We acknowledge several limitations of the current research design. While Google’s Perspective API has become the standard instrument to measure incivility (Hopp et al., 2020; J. W. Kim et al., 2021; Vargo & Hopp, 2023), using black-box pre-trained models has obvious limitations. Toxicity models have biases (Hede et al., 2021), and levels of incivility are hard to compare across countries and cultural contexts. Another limitation is that we could only track participant behavior in selected browsers on desktop computers or laptops. In order to obtain a broader picture, mobile data would have to be collected as well. This is currently not methodologically feasible, as it would require observing participants across multiple smartphone apps. Further, ethical and legal reasons prevented us from collecting private posts on Facebook. In light of the congruence between our findings and current studies (e.g., Y. Kim & Kim, 2019; Oz et al., 2018; Su et al., 2018), we do not suspect that exposure to incivility on Facebook is primarily fueled by private posts or that they contain notably higher levels of incivility compared to public posts. Additionally, it would be worthwhile not only to compare campaign and non-campaign periods but also to zoom in on temporal dynamics within a campaign. Scholars might examine whether uncivil discourse intensifies when approaching election day or in close political races. Moreover, certain events like debates, interviews, or external events could change levels of incivility and the tone of political discourse.

The results highlight the importance of integrated research designs that link online behavior to individual-level information and study the link between predispositions and exposure beyond platform comparisons and experimental designs (Stier et al., 2020). If researchers try to find incivility in online discussions, they will find the venues where it is prevalent. However, for understanding the impact of social media and toxic content on societal discourses, it is important to understand how many citizens are actually exposed to uncivil content. Incivility that no one ever sees cannot have any effects on citizens and democracy at large. Therefore, we encourage researchers to focus more on measuring exposure in ecologically valid ways and to study its downstream effects, for example, on political attitudes and outcomes like social trust. Furthermore, although methodologically challenging, linking individuals’ perceptions of incivility to exposure could enrich our understanding of online incivility. While our findings highlight the importance of contextual factors, our study can only be one piece in a more comprehensive research agenda that puts incivility in comparison across different platforms, countries, and cultural and political contexts.

Supplemental Material

Supplemental Material for Incivility in Comparison: How Context, Content and Personal Characteristics Predict Exposure to Uncivil Content by Felix Schmidt, Sebastian Stier, and Lukas Otto in Social Science Computer Review

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Deutsche Forschungsgemeinschaft (MA 2244/10-1).
