Abstract

Comment sections below news articles are public fora in which potentially everyone can engage in equal and fair discussions on political and social issues. Yet, empirical studies have reported that many comment sections are spaces of selective participation, discrimination, and verbal abuse. The current study complements these findings by analyzing gender-related differences in participation and incivility. It uses a sample of 303,342 user comments from 14 German news media Facebook pages. We compare participation rates of female and male users as well as associations between the users’ gender, the incivility of their comments, and the incivility of the adjacent replies. To determine the incivility of the comments, we developed a Supervised Machine Learning Model (classifier) using pre-trained word embeddings and word frequency features. The findings show that, overall, women participate less than men. Comments written by female authors are more civil than comments written by male authors. Women’s comments do not receive more uncivil replies than men’s comments and women are not punished disproportionately for communicating uncivilly. These findings contribute to the discourse on gender-related differences in online comment sections and provide insights into the dynamics of online discussions.

Keywords

Many citizens access news on Facebook and comment on news articles (Newman et al., 2017; Pew Research Center, 2017). While some scholars hoped that social media could foster inclusive and civil public online discussions (Dahlberg, 2001; Ruiz et al., 2011), research has shown otherwise: comment sections are spaces of selective participation and prone to incivility (Chen, 2017; Coe et al., 2014; Muddiman & Stroud, 2017; Rowe, 2015).

The current study adds to these findings by focusing on gender-related differences in online discussions in comment sections. It considers two aspects: (1) the participation rates of female and male users and (2) incivility by and against male and female users, that is, their communication of incivility and their likelihood of receiving uncivil feedback. Thereby, the study addresses two gaps in existing research. First, previous studies on public (political) participation of women in online comment sections have often relied on self-report data (e.g., Bergström & Wadbring, 2015; Diakopoulos & Naaman, 2011; Stroud et al., 2016; van Duyn et al., 2021; for exceptions, see Baek et al., 2021; Lee & Ryu, 2019; Vochocová et al., 2016). Self-reported behavior, however, often differs from actual behavior (e.g., Vochocová et al., 2016). The present study therefore uses data from a large-scale content analysis of actual discussions to examine whether a gender-related gap in the participation rates of female and male users exists in online comment sections. Second, only a few studies have investigated gender-related differences in the communication of incivility and in the likelihood of becoming a target of uncivil communication (e.g., Rheault et al., 2019). Moreover, most of these studies have examined persons of public interest, such as politicians, journalists, or popular YouTubers (e.g., Chen et al., 2020; Döring & Mohseni, 2020; Rheault et al., 2019; for an exception, see Nadim & Fladmoe, 2021). The present study adds insights into how female laypersons in online comment sections are affected by incivility and whether they use incivility themselves.

The current study relies on data from a large-scale content analysis of 303,342 user comments on 14 German news media Facebook pages. We investigate whether male and female commenters differ regarding their participation rates in comment sections, whether they differ in their use of uncivil communication, and whether they face the same degree of uncivil reactions from other users. Data collection and analysis were conducted with the help of computational methods. To determine the incivility of user comments, we trained an Incivility Classifier, which achieved an acceptable accuracy of .68. The gender of comment authors was assigned automatically by matching usernames with dictionaries of male and female first names. To account for the hierarchical structure of comments and replies, we used multilevel modeling. Using this innovative combination of approaches, we contribute to the discourse on gender-specific differences in online comment sections and complement existing research on the dynamics of online discussions.

Gender-Related Differences in Online Discussions

Gender and Participation Rates

Gender-related differences in comment sections can be approached by looking at the participation rates of men and women. Discussions in comment sections are public and often about political topics (e.g., Stroud et al., 2016; van Duyn et al., 2021). Therefore, research on political deliberation (e.g., Polletta & Chen, 2013; Vochocová et al., 2016) can be used to derive hypotheses about the associations between gender and participation rates in comment sections. This research suggests that women are less likely than men to participate in political deliberation because they were socialized to avoid these discussions or because they were, for a long time, excluded from political spaces in general (van Duyn et al., 2021). Additionally, women have historically been accused of lacking the resources to conduct political deliberation successfully (Polletta & Chen, 2013). Some research has also linked women’s lower engagement in political deliberation to the psychological trait of conflict avoidance (Ulbig & Funk, 1999) or to their compliance with gender stereotypes that still prevail (see next sections). Finally, recent research has found that female politicians who are highly visible on social media platforms are more likely to be attacked uncivilly than their male colleagues (Rheault et al., 2019). From a social learning perspective (e.g., Bandura, 1969), female users could learn from these observations that engaging in public political discussions is harmful and therefore refrain from participating.

In line with these arguments, most previous studies examining participation in online comment sections have found that more male users than female users write comments. These findings are largely consistent across different platforms (e.g., websites of news media outlets, Stroud et al., 2016; comment sections on Facebook sites of political parties, Vochocová et al., 2016; comment sections in general, van Duyn et al., 2021) and countries (Czech Republic: Vochocová et al., 2016; Korea: Baek et al., 2021; Lee & Ryu, 2019; USA: Stroud et al., 2016; van Duyn et al., 2021). The findings also align with research on offline political discussions, which has shown that women contribute less than men in terms of speech participation (Karpowitz et al., 2012).

One study, however, found that more women than men reported commenting on news on social networking sites (Kalogeropoulos et al., 2017). In contrast, men were more likely to write comments on news websites. Yet, the authors measured only the frequency, not the type of commenting (e.g., private vs. public). Women might well be more active in certain online behaviors (e.g., commenting on photos or status updates shared by their network; Junco, 2013) but less active in public commenting on political content (Lee & Ryu, 2019; Vochocová et al., 2016).

In sum, the theoretical arguments and most empirical studies on participation behavior in public comment sections suggest that men are more active than women in terms of participation rates. Therefore, we can derive the following hypotheses:

H1: (1) More men than women participate in comment sections on Facebook pages of news outlets, and (2) an individual man, on average, contributes more comments than an individual woman.

Gender and Incivility

Gender-related differences in online discussions can also be approached as differences in the communication behavior of female and male users and as different reactions of other users to their behavior. In this paper, we focus on the communication of incivility. Definitions of incivility range from incivility as rhetorical and stylistic elements such as insulting vocabulary, ad hominem attacks, or verbal intimidation (Coe et al., 2014) to incivility as a set of behaviors that “threaten democracy, deny people their personal freedoms, and stereotype social groups” (Papacharissi, 2004, p. 267). Uncivil behaviors then include racism, sexism, attacking people for belonging to social or ethnic groups, or threatening other individuals’ rights (Kalch & Naab, 2017; Papacharissi, 2004).

Empirical research particularly investigating incivility against women follows the aforementioned definitions to a greater (e.g., Southern & Harmer, 2021) or lesser extent (e.g., Nadim & Fladmoe, 2021; Rheault et al., 2019). For the current study, we follow the definition of Coe et al. (2014) who understand incivility as “features of discussion that convey an unnecessarily disrespectful tone toward the discussion forum, its participants, or its topics” (Coe et al., 2014, p. 660, italics in original), including name-calling, aspersion, lying, vulgarity, and pejorative for speech. We chose to adhere to this definition because (a) it is a comprehensive definition that offers clear indicators for uncivil expressions, and (b) it is an established definition of incivility and will, therefore, enable us to compare our results with existing research.

We use this definition to investigate incivility as a gender-related difference in comment sections along two aspects, namely, the communicators (i.e., do women communicate less uncivilly than men?) and the objects of incivility (i.e., are women targeted by incivility more often than men?). Additionally, we analyze a combination of these two aspects, namely, whether women who communicate uncivilly are punished with uncivil responses disproportionately more than men who do so.

Uncivil Communicators

Studies on gender differences in online communication often do not provide a theoretical explanation for these differences (e.g., Kapidzic & Herring, 2011; Montgomery et al., 2004), but some research has referred to Social Role Theory (e.g., Wilhelm & Joeckel, 2019). One implication of Social Role Theory is that people expect others to behave in line with gender stereotypes (Eagly, 2013; Eagly & Wood, 2012). Gender stereotypes attribute domestic, subordinate, and communal behaviors to women, whereas men are considered dominant and agentic (Eagly & Wood, 2012). Applied to communication behavior, Social Role Theory would predict that, because gender stereotypes still exist, women communicate more warmly and less aggressively than men.

In sum, these findings and arguments suggest that women and men show different online communication behavior. Based on our understanding of incivility (Coe et al., 2014), incivility manifests in a disrespectful and often aggressive tone that aligns more with the (stereotypical) communication behavior of male than of female commenters. We therefore hypothesize:

H2: Comments written by female users differ from comments written by male users in their incivility, such that women’s comments are less uncivil than men’s.

Uncivil Reactions

Social Role Theory and the so-called backlash effect can help to explain these findings (Wilhelm & Joeckel, 2019). According to this research, violating gender stereotypes can produce dissonance in communication partners and lead to a backlash effect (Rheault et al., 2019). The backlash effect was originally studied in professional environments, where women who showed counter-stereotypical behavior, such as agentic or aggressive communication, were found to be misjudged on the job or in hiring situations (Eagly & Karau, 2002; Rudman & Glick, 1999, 2001). Women are not the only ones who face stereotyping or discrimination due to gender; men do as well (Davison & Burke, 2000). Yet, negative consequences of ascribed gender stereotypes are more likely to occur for women than for men (Chen et al., 2020; Wotanis & McMillan, 2014). Similarly, a backlash effect can occur in online journalism and online discussions. There, it can manifest in, among other things, negative evaluations (i.e., flagging of online comments), social isolation, and even harassment (Chen et al., 2020; Wilhelm & Joeckel, 2019).

In the current paper, we focus on a potential backlash effect that manifests in uncivil reactions to messages posted by male or female users in online discussions in comment sections. Social Role Theory and the backlash effect suggest two assumptions. First, given that discussions in comment sections are often political in nature (see previous sections) and that politics is still often considered a predominantly male arena (e.g., Rheault et al., 2019; Schneider & Bos, 2019), it can be assumed that women who publicly voice their opinions on politics will face more uncivil feedback than men. Second, this effect could be particularly strong when female users themselves engage in uncivil communication. In this case, women would violate two gender stereotypes, namely, the expectation not to (overly) engage in politics and the expectation to communicate in a domestic, subordinate, and communal way (Eagly & Wood, 2012). Wilhelm and Joeckel (2019) have argued that such behavior could be considered “an act of double deviance” (p. 384). In line with the backlash effect, Chen et al. (2020) found that female journalists receive uncivil reactions especially when reporting on “male topics.” Moreover, in an online experiment (which, however, operationalized backlash as flagging behavior), Wilhelm and Joeckel (2019) demonstrated that online users judge it disproportionately negatively when women, compared with men, write counter-speech or hate-speech comments, that is, when they violate existing gender norms. The participants were more likely to sanction female users than male users for such comments (Wilhelm & Joeckel, 2019).

Still, studies have predominantly reported these effects for persons in the spotlight, such as politicians (Rheault et al., 2019; Southern & Harmer, 2021; Rossini et al., 2021; Ward & McLoughlin, 2020), journalists (Chen et al., 2020; Gardiner et al., 2016), or popular YouTubers (Döring & Mohseni, 2020; Wotanis & McMillan, 2014). Some evidence suggests that the less well-known a person is, the smaller the inequalities between men and women may be. Southern and Harmer (2021, p. 263, emphasis in original), for example, investigated “‘ordinary’, or less high profile” MPs in the UK and found only small differences between male and female MPs. Similarly, Rheault et al. (2019) found that gender-related differences in uncivil reactions to politicians’ tweets existed only for highly visible politicians. Finally, a survey focusing on laypeople suggested that “online harassment does not appear as specifically a ‘woman problem’” (Nadim & Fladmoe, 2021, p. 255) because men also reported facing incivility. A possible explanation for a less pronounced backlash effect for laypeople is that others perceive the behavior of laypeople as less norm-violating and less threatening to the “status quo” than the behavior of well-known persons, whose public actions are often considered exemplary for a society.

Reviewing the previous sections, it is fair to say that the empirical evidence on online incivility against women in general is inconclusive. This applies even more to research on incivility against women engaging in comment sections. Social Role Theory and the backlash effect suggest that female users of these predominantly political spaces will face uncivil feedback when they participate in general, and particularly when they use uncivil communication themselves. However, previous empirical research has also suggested that differences in uncivil reactions may diminish among laypeople. As the inconclusive state of research prevents us from deriving clear hypotheses, we ask the following research questions:

RQ1: Will comments written by female users receive more uncivil replies than comments written by male users?

RQ2: Will the backlash effect investigated in RQ1 be particularly strong when female users post uncivil comments?

Method

Sample

To answer the research questions and test the hypotheses, this study used a dataset of 303,342 user comments below news articles on 14 German Facebook news pages. We collected posts and comments via the Facebook Graph API. First, we collected all posts published on the 14 Facebook pages between July and August 2018. The articles covered a broad range of topics (e.g., sports and politics). During the collection period, no specific events took place that would have biased the news coverage. From this corpus of posts, we drew a sample according to the following criteria: we only considered posts with at least 60 comments, and we only included original posts on the news outlets’ Facebook pages that linked to the corresponding article on the outlet’s website (i.e., we excluded posts shared from other Facebook pages that appeared in the feed of the selected news page). This procedure resulted in a total of 792 news posts. Due to the selection criteria for news pages (i.e., national news pages) and posts (i.e., number of comments), it is very likely that most of the posts included in our analyses report on political topics, although we did not use topic as a selection criterion.
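The post-selection rule can be expressed as a simple filter. The following Python sketch is a hypothetical illustration rather than the study’s code: the `Post` record and its fields (`n_comments`, `is_original`, `link_domain`) are assumed stand-ins for whatever metadata was retrieved from the Graph API, and requiring an exact match of the linked domain is a simplification.

```python
from dataclasses import dataclass


@dataclass
class Post:
    # Hypothetical post record; field names are illustrative assumptions.
    post_id: str
    n_comments: int
    is_original: bool  # published by the page itself, not shared from another page
    link_domain: str   # domain of the linked article, "" if the post has no link


def select_posts(posts, outlet_domain, min_comments=60):
    """Keep original posts that link to the outlet's own website and
    have at least `min_comments` comments (exact domain match is a simplification)."""
    return [
        p for p in posts
        if p.n_comments >= min_comments
        and p.is_original
        and p.link_domain == outlet_domain
    ]
```

The filter would be applied separately to each news page, using that page’s own web domain.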

In a second step, we collected all online discussions that were linked to the selected posts and included them in the analyses. The comment sections on Facebook are organized hierarchically in comments and replies. Replies are displayed (in chronological order) below each comment. In total, the dataset contains 139,830 comments and 163,512 corresponding replies.
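For analysis, the two-level thread structure is typically flattened into one row per text that keeps a link to its parent comment. The sketch below assumes a hypothetical nested input format (dictionaries with `id` and optional `replies` keys); it is not the exact response schema of the Graph API.

```python
def flatten_thread(comments):
    """Return one row per text; each reply keeps the id of the comment it belongs to."""
    rows = []
    for comment in comments:
        rows.append({"id": comment["id"], "level": "comment", "parent": None})
        for reply in comment.get("replies", []):
            rows.append({"id": reply["id"], "level": "reply", "parent": comment["id"]})
    return rows
```

As a consistency check on the reported figures, 139,830 comments plus 163,512 replies give the 303,342 texts in the dataset.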

Identifying Gender from Usernames

Since Facebook urges its users to register with their real names, the author’s gender can be inferred from the username in most cases. For our analyses, we automatically determined gender from the first name of each user. We used the Python package gender-guesser, which works with databases of international first names. Names can be categorized as (mostly) male, (mostly) female, or ambiguous (androgynous). To validate the automated measurement, we manually evaluated a random subsample of 500 comments. Two coders coded the gender of each author (male, female, androgynous, unknown). Subsequently, we conducted a qualitative error analysis to investigate whether and when human and algorithmic gender coding differed. We found that the gender assignment was accurate in 99% of the cases in which users stated their names in the order “first name” followed by “surname”. Users who intentionally misspelled their names (e.g., “Tho Mas”) or used an alias (e.g., “Cutie Pie”) were categorized as “unknown” by the gender guesser (7% of the sample of 500 comments), whereas human coders could often identify misspelled names (e.g., “Tho Mas” for Thomas) and consequently the gender of a user. Further, the algorithm assigned gender correctly for both German and non-German first names, whereas human coders needed further research to assign gender correctly to non-German names. Interestingly, misclassification was therefore mainly caused by the human coders’ lack of knowledge, not by the gender guesser. Based on the sample, we found no significant evidence of a bias in the gender measurement in favor of male or female users. Also, aliases and misspellings occurred among both female and male users.
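The assignment rule can be sketched as follows. gender-guesser’s `Detector.get_gender()` returns one of `male`, `female`, `mostly_male`, `mostly_female`, `andy` (androgynous), or `unknown`; the mapping below keeps only the four (mostly) male and female labels used in the analyses and treats everything else as unassigned. Taking the first whitespace-separated token as the first name is our simplifying assumption, and the lookup is passed in as a function so the sketch runs without the package installed.

```python
# Labels returned by gender-guesser's Detector.get_gender():
# "male", "female", "mostly_male", "mostly_female", "andy" (androgynous), "unknown".
# Only the (mostly) male/female labels are mapped; everything else yields None.
LABEL_MAP = {
    "male": "male",
    "mostly_male": "male",
    "female": "female",
    "mostly_female": "female",
}


def first_name(username):
    """Assumption: the first whitespace-separated token is the first name."""
    parts = username.split()
    return parts[0] if parts else ""


def assign_gender(username, lookup):
    """Return 'male', 'female', or None (androgynous, unknown, alias, misspelling)."""
    return LABEL_MAP.get(lookup(first_name(username)))


# With the package installed, the lookup would be:
#   import gender_guesser.detector as gender
#   lookup = gender.Detector().get_gender
# Here a tiny hypothetical dictionary stands in for it.
example_lookup = {"Thomas": "male", "Anna": "female", "Andrea": "mostly_female", "Kim": "andy"}.get
```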

In our analyses, we included all users whose names were automatically determined as either female, male, mostly female, or mostly male. In sum, 93,171 comments (including replies) were assigned to female authors (31%), and 179,422 comments were assigned to male authors (59%). For 30,749 comments, the author’s gender could not be assigned (10%). Those comments were excluded from the analyses, resulting in a total of n = 272,593 comments that were considered for further analyses.

Classifying Incivility in User Comments

To measure incivility in a user comment, we applied a Supervised Machine Learning model (classifier) that automatically classifies user comments as uncivil or civil. A classifier is a statistical model that predicts a certain output (e.g., incivility) given a certain input (e.g., comment text). The classifier must be trained on a dataset that includes information on both the input and the output. Accordingly, user comments in the training dataset must be labeled (manually) as uncivil or civil. After successful training, the classifier is applied to a large dataset of unlabeled text to test the hypotheses (in our case: n = 272,593). To apply a pre-trained classifier to a new dataset, both datasets should be as comparable as possible. In the following, we describe the training dataset and the approach of the incivility classifier in more detail.
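The train-then-apply logic can be illustrated with a minimal classifier. The paper does not name the learning algorithm here, so the sketch uses plain logistic regression fitted by batch gradient descent as a stand-in; the learning rate, number of epochs, and 0.5 threshold are arbitrary illustrative choices. In practice, the feature vectors would be the comment representations described below, and a library implementation would be preferable.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_logreg(X, y, lr=0.5, epochs=500):
    """Fit logistic-regression weights on labeled data by batch gradient descent."""
    n, n_features = len(X), len(X[0])
    w, b = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for xi, yi in zip(X, y):
            # Prediction error for one labeled example (predicted probability minus label).
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        # Step against the mean gradient of the log-loss.
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(model, X, threshold=0.5):
    """Apply the trained model to unlabeled feature vectors (1 = uncivil, 0 = civil)."""
    w, b = model
    return [int(sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) >= threshold) for xi in X]
```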

Training Data

For every comment, coders rated the level of incivility on a three-point scale (0 = civil, 1 = slightly uncivil, 2 = predominantly uncivil). The scale was adapted from the incivility measure by Coe et al. (2014). That is, a comment was coded as uncivil when it included name-calling, aspersion, lying, vulgarity, or pejorative for speech. Contrary to the procedure by Coe et al. (2014), we did not code every manifestation of incivility separately, but rather coded a comment as uncivil when it included at least one of the incivility-related characteristics. Inter-coder reliability was tested on a sample of 100 comments and reached a satisfactory level of Krippendorff’s α = .83.
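Inter-coder reliability of this kind can be computed from a coincidence matrix. The paper does not state which difference metric was used for α; the sketch assumes the nominal metric, two coders, and no missing ratings. An ordinal metric would weight a disagreement between 0 and 2 more heavily than one between adjacent levels.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(coder_a, coder_b):
    """Krippendorff's alpha for two coders, nominal metric, no missing values."""
    if len(coder_a) != len(coder_b):
        raise ValueError("both coders must rate the same units")
    # Coincidence matrix: each unit contributes both ordered pairs (a, b) and (b, a).
    o = Counter()
    for a, b in zip(coder_a, coder_b):
        o[(a, b)] += 1
        o[(b, a)] += 1
    # Marginal totals per category and the total number of pairable values.
    n_c = Counter()
    for (c, _k), count in o.items():
        n_c[c] += count
    n = sum(n_c.values())
    observed = sum(count for (c, k), count in o.items() if c != k)
    expected = sum(n_c[c] * n_c[k] for c, k in permutations(n_c, 2))
    if expected == 0:
        raise ValueError("alpha is undefined when only one category occurs")
    return 1.0 - (n - 1) * observed / expected
```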

A difficulty is that uncivil user comments occur less frequently than civil comments in the training data. When coding along the described three-point scale, there are very few comments per incivility-level (i.e., slightly or predominantly uncivil). In consequence, the classification results were unstable and rather unsuitable to distinguish between all three levels of incivility accurately, especially between slightly and predominantly. Therefore, we aggregated slightly and predominantly uncivil to uncivil. By doing so, we work with a dichotomous incivility measure (0 = civil, 1 = uncivil) in the current analysis. Within the training data, the category civil was assigned 6678 times (67%) and the category uncivil was assigned 3294 times (33%).
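The recoding step and the resulting class distribution can be reproduced in a few lines. The function names are illustrative; the label counts used in the check are the 6678 civil and 3294 uncivil training comments reported above.

```python
from collections import Counter


def dichotomize(level):
    """Collapse 0 = civil, 1 = slightly uncivil, 2 = predominantly uncivil into 0/1."""
    if level not in (0, 1, 2):
        raise ValueError(f"unexpected incivility level: {level}")
    return int(level > 0)


def class_shares(labels):
    """Relative frequency of each label, sorted by label."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in sorted(counts.items())}


shares = class_shares([0] * 6678 + [1] * 3294)
```

Because the civil class makes up about two thirds of the training data, a classifier that always predicts civil would be right about 67% of the time on data with this distribution, a useful reference point when reading accuracy figures.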

Model Building with Word Embeddings and Bag-of-Words

Word embeddings, that is, dense vector representations of words, must be trained separately on very large amounts of text. Therefore, researchers often use pre-trained word embeddings. Ideally, the embeddings are trained on a dataset that is comparable to the dataset that will be classified. For classification tasks on non-English and informal texts, such as online discussions, few pre-trained models are available. For our sample of German user comments, we used 300-dimensional fastText word embeddings (Bojanowski et al., 2017) that had been trained on a corpus of 50 million German tweets (Cieliebak et al., 2017; Deriu et al., 2017). This turned out to be the most appropriate word embedding model for our classification task. To use these word embeddings as independent variables (features) for incivility classification, they must be transformed from a word-level into a document-level representation. Since every word in a comment has its own 300-dimensional word vector, we averaged the word embedding vectors of all words in a comment into one 300-dimensional vector per comment (Pérez & Luque, 2019). With this approach, words and characters that are not included in the pre-trained embeddings are ignored. To ensure that all relevant words are considered, we additionally used frequency distributions of single words and word combinations (bag-of-words, BoW) to predict the incivility of a comment. For the BoW representation, the comment messages were transformed into weighted frequencies of unigrams, bigrams, and trigrams (Risch & Krestel, 2018; Stoll et al., 2020).
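The two feature sets can be sketched in plain Python. Several details are assumptions rather than taken from the paper: comments are already tokenized, comments without any in-vocabulary word map to a zero vector, and the BoW weighting is TF-IDF with smoothed inverse document frequency (one common way to weight n-gram frequencies). The toy two-dimensional embeddings stand in for the 300-dimensional fastText vectors.

```python
import math
from collections import Counter


def average_embedding(tokens, embeddings, dim):
    """Mean of the vectors of in-vocabulary tokens; out-of-vocabulary tokens are skipped."""
    vectors = [embeddings[t] for t in tokens if t in embeddings]
    if not vectors:
        return [0.0] * dim  # assumption: comments without known words map to the zero vector
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


def ngrams(tokens, n_max=3):
    """All n-grams from unigrams up to n_max, joined by spaces."""
    return [" ".join(tokens[i:i + n]) for n in range(1, n_max + 1)
            for i in range(len(tokens) - n + 1)]


def tfidf(docs_tokens, n_max=3):
    """Weighted n-gram frequencies: raw count times smoothed inverse document frequency."""
    doc_grams = [Counter(ngrams(toks, n_max)) for toks in docs_tokens]
    n_docs = len(docs_tokens)
    df = Counter(g for grams in doc_grams for g in grams)
    idf = {g: math.log((1 + n_docs) / (1 + d)) + 1 for g, d in df.items()}
    return [{g: c * idf[g] for g, c in grams.items()} for grams in doc_grams]


def feature_vector(tokens, embeddings, dim, bow_weights, vocabulary):
    """Concatenate the averaged embedding with BoW weights in a fixed vocabulary order."""
    return average_embedding(tokens, embeddings, dim) + [bow_weights.get(g, 0.0) for g in vocabulary]
```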

Model Results and Evaluation

Multilevel Modeling with Logistic Regression

Comment sections on Facebook are organized hierarchically in comments and replies. RQ1 and RQ2 investigate whether the incivility of a reply depends on the gender of the comment’s author and on the interplay between the comment author’s gender and the comment’s incivility. More precisely, we assumed that the incivility of a reply can be predicted by the incivility of the comment and the gender of its author. To account for these structural dependencies, we conducted multilevel analyses that model the incivility of a reply (level 1) as a function of the comment author’s gender (level 2; RQ1) and of the interaction between the comment’s incivility and its author’s gender (level 2; RQ2). Additionally, since we analyze comments below 792 news posts, we introduced these news posts as a third level of analysis. Since both the dependent and the independent variables are binary, we applied multilevel logistic regression models using the R package lme4 (Bates et al., 2012).
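One way to write the full model (model 2) is a three-level random-intercept logistic regression, with replies i nested in comments j nested in news posts k. The notation is ours, and the random-intercept-only structure is an assumption, since the specification is not spelled out here; model 1 corresponds to dropping β3, and the reported odds ratios are exp(β). In lme4, a corresponding call would look roughly like `glmer(reply_uncivil ~ comment_uncivil * female + (1 | article/comment), family = binomial)`, where the variable names are hypothetical.

```latex
\begin{aligned}
\operatorname{logit} \Pr(y_{ijk} = 1) &= \beta_0 + \beta_1\,\mathrm{uncivil}_{jk} + \beta_2\,\mathrm{female}_{jk}
  + \beta_3\,\mathrm{uncivil}_{jk}\,\mathrm{female}_{jk} + v_{jk} + u_k,\\
v_{jk} &\sim \mathcal{N}(0, \sigma_v^2), \qquad u_k \sim \mathcal{N}(0, \sigma_u^2).
\end{aligned}
```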

Results

A total of 94,923 identifiable individual users were involved in the discussions. Of these, 59,781 users were identified as male (63%) and 35,142 as female (37%). Consequently, among all users whose gender could be identified, more than 1.7 times as many men as women were active in the online discussions. Furthermore, the average male user wrote more comments than the average female user (male: M = 3.00, SD = 7.64; female: M = 2.65, SD = 7.92; t = 6.66, df = 71,489, p < .001). These findings support H1, which posits that online discussions in comment sections are dominated by male authors, as indicated by the facts that (1) more men than women participate and (2) an individual man, on average, writes more comments than an individual woman.
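The reported degrees of freedom (71,489) are well below the n1 + n2 − 2 = 94,921 of a pooled-variance t-test, which suggests that an unequal-variance (Welch) test was used. Under that assumption, and taking the group sizes to be the 59,781 male and 35,142 female users, the test statistic can be recomputed from the reported summary statistics.

```python
import math


def welch_t(m1, sd1, n1, m2, sd2, n2):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom from summary statistics."""
    v1 = sd1 ** 2 / n1  # squared standard error of the first mean
    v2 = sd2 ** 2 / n2  # squared standard error of the second mean
    t = (m1 - m2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df


# Comments per user as reported; n = number of male and female users.
t, df = welch_t(3.00, 7.64, 59_781, 2.65, 7.92, 35_142)
men_to_women = 59_781 / 35_142
```

This yields t ≈ 6.66 and roughly 71,500 degrees of freedom; the small gap to the reported df is consistent with the rounding of the reported means and standard deviations. The user counts give a male-to-female ratio of about 1.70.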

H2 assumed that the comments written by female and male users differ in their incivility. To test this hypothesis, we computed a simple cross-table on the full dataset, excluding comments by authors whose gender could not be identified; this included all 122,822 comments and all 149,771 replies. Forty-two percent of the comments written by male users contained incivility. In contrast, only 35% of the comments written by female users were uncivil. The relationship between gender and incivility thus points in the expected direction, although it is weak (χ²(1) = 1239.08, p < .001; φ = 0.07). These findings support H2.
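For a 2 × 2 table, the uncorrected Pearson χ² and φ are linked by χ² = N·φ². The cross-table itself is not reported, so the sketch only checks that link: assuming the test was computed without continuity correction over all N = 272,593 texts, √(1239.08 / 272,593) ≈ 0.067, which rounds to the reported φ = 0.07. The 10/20/20/10 table in the check is hypothetical.

```python
import math


def chi2_phi_2x2(a, b, c, d):
    """Pearson chi-square (no continuity correction) and signed phi for [[a, b], [c, d]]."""
    n = a + b + c + d
    phi = (a * d - b * c) / math.sqrt((a + b) * (c + d) * (a + c) * (b + d))
    return n * phi ** 2, phi


def phi_from_chi2(chi2, n):
    """Absolute phi for a 2x2 table, recovered from the chi-square statistic and sample size."""
    return math.sqrt(chi2 / n)
```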

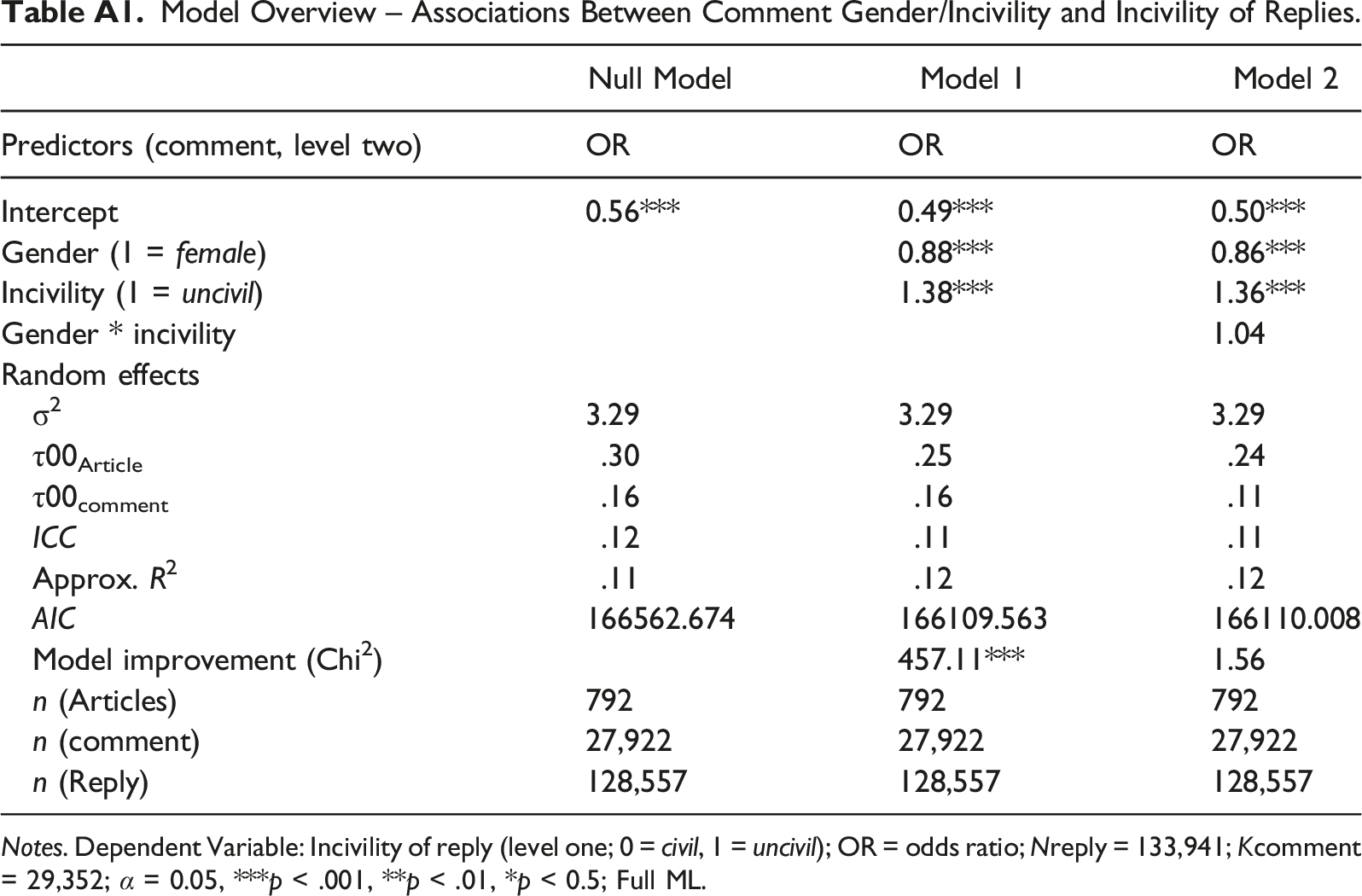

To investigate RQ1 and RQ2, we estimated multilevel logistic regression models that describe the incivility of a reply as a function of the related comment’s incivility, the gender of its author, and the interaction of incivility and gender. For this analysis, we therefore only considered comments that received at least one reply. This reduced the dataset to n = 27,922 comments and n = 128,557 replies. Table A1 shows the results of the multilevel logistic regressions. The intraclass correlation coefficient (ICC) indicates that 12% of the variance in reply incivility is attributable to the groups in which replies are nested (i.e., the related comment and news article). In the empty model (null model), the exponentiated intercept (OR = 0.56, p < .001) gives odds of y = 1 below 1, meaning that, across all groups, a reply i is less likely to be uncivil (y = 1) than civil (y = 0).
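Two quantities in this paragraph can be made concrete. The exponentiated intercept converts to a probability via p = odds / (1 + odds), so 0.56 corresponds to roughly .36 for a reply in a group whose random effects are zero. For the ICC, the definition used is not stated; the sketch assumes the common latent-variable formulation for logistic multilevel models, in which the reply-level variance is fixed at π²/3 and the comment- and post-level intercept variances form the between-group share.

```python
import math


def odds_to_probability(odds):
    """Convert odds into a probability."""
    return odds / (1.0 + odds)


def icc_latent(*group_variances):
    """ICC on the latent logistic scale: share of variance at the grouping levels,
    with the level-1 residual variance fixed at pi**2 / 3."""
    between = sum(group_variances)
    return between / (between + math.pi ** 2 / 3)


# Null-model odds read for a "typical" comment and post (random effects at zero),
# not as the marginal share of uncivil replies.
p_typical = odds_to_probability(0.56)
```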

RQ1 asked whether comments written by female authors will be more likely to receive uncivil replies than comments written by male authors. To answer this question, we ran a model including the level-2 variable “gender of the comment author” (model 1), with the variable “incivility of the comment” as a control. Results show that the odds of a reply being uncivil decrease when the comment is written by a female author (OR = 0.88, p < .001). This means that, contrary to the implications of Social Role Theory, women are less likely than men to receive uncivil feedback.
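An odds ratio of 0.88 means that the odds of an uncivil reply are about 12% lower for comments by female authors, holding comment incivility constant; how much this shifts the probability depends on the baseline. The baseline of .50 used in the check is hypothetical, and the conversion holds conditionally on the random effects.

```python
def odds_change_percent(odds_ratio):
    """Percentage change in the odds implied by an odds ratio."""
    return (odds_ratio - 1.0) * 100.0


def apply_odds_ratio(p_baseline, odds_ratio):
    """Probability after multiplying the odds of p_baseline by odds_ratio."""
    odds = p_baseline / (1.0 - p_baseline) * odds_ratio
    return odds / (1.0 + odds)
```

At a baseline probability of .50, for example, an odds ratio of 0.88 corresponds to a probability of about .47.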

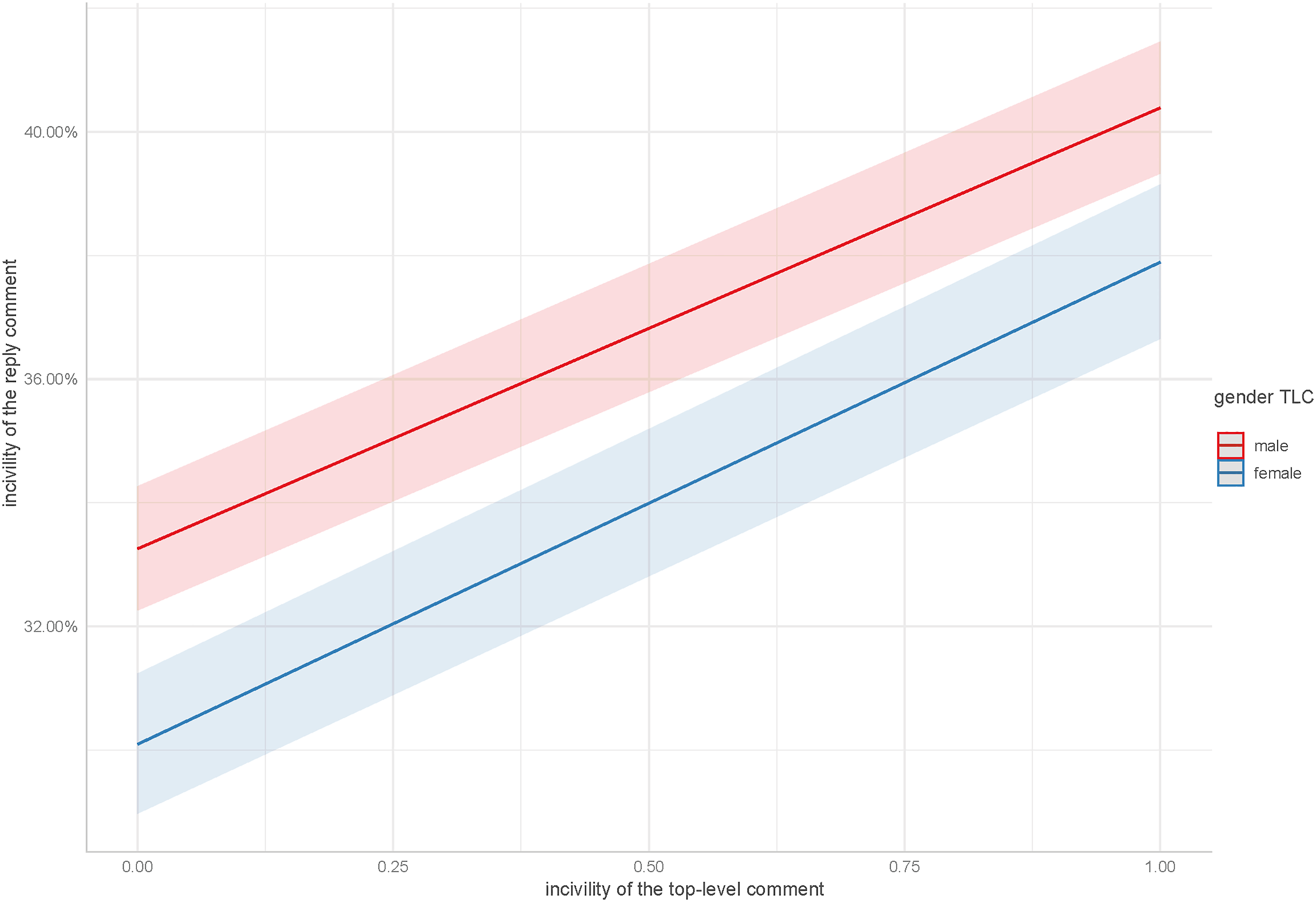

RQ2 asked whether women, compared with men, will be disproportionately sanctioned for commenting in an uncivil manner. To investigate this question, we added the interaction term of level-2 incivility and gender (model 2). The interaction term is not significant (OR = 1.04, p = .21). The interaction diagram (see Figure A1) shows that the incivility of the replies to uncivil comments does not differ depending on whether the comment was written by a male or female user. Independent of the comment author’s gender, however, uncivil comments stimulated more uncivil replies (OR = 1.36, p < .001). In summary, these findings suggest that female comment authors are not more likely than male authors to receive uncivil reactions, regardless of whether their comments are civil or uncivil.
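On the odds scale, the interaction term compares two odds ratios: the increase in the odds of an uncivil reply when a comment is uncivil rather than civil among female authors, divided by the same odds ratio among male authors. The sketch computes that ratio from four cell probabilities; the probabilities in the check are hypothetical, and in a multilevel model this reading holds conditionally on the random effects rather than for raw marginal shares.

```python
def odds(p):
    """Odds corresponding to probability p."""
    return p / (1.0 - p)


def interaction_odds_ratio(p_male_civil, p_male_uncivil, p_female_civil, p_female_uncivil):
    """Odds ratio of an uncivil reply (uncivil vs. civil comment) among female authors,
    divided by the same odds ratio among male authors."""
    or_female = odds(p_female_uncivil) / odds(p_female_civil)
    or_male = odds(p_male_uncivil) / odds(p_male_civil)
    return or_female / or_male
```

A value near 1, as with the reported OR = 1.04, means that uncivil comments raise the odds of uncivil replies by about the same factor for female and male authors.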

Discussion

Since user comments on Facebook pages of news outlets have become a popular form of online participation, it is important to investigate gender-related differences in comment sections. This study has examined these differences from several perspectives. First, we examined differences in women’s and men’s participation in user comment sections regarding both the shares of women and men participating and the number of contributions by female and male individuals. Second, we analyzed whether comments authored by female and male users differ in their incivility. Third, we looked at differences in the incivility of the replies to comments written by female and male users. More specifically, we asked whether women receive more uncivil replies than men. In addition, we investigated whether women are disproportionately sanctioned with uncivil reactions for writing uncivil comments themselves. In doing so, this study is one of the first to analyze gender-related differences in how laypersons communicate and receive incivility in comment sections. We tested our hypotheses on a large dataset of online discussions on German news media’s Facebook pages. We automatically determined the incivility of the comments and the gender of the commenters. To analyze dependencies between gender and incivility in comments and replies, we estimated multilevel logistic regression models.

The results show that fewer women than men participated in online discussions. Additionally, women, on average, wrote fewer comments than men. This suggests that discussions in comment sections are indeed dominated by male users. The results contradict the findings of a survey that reported that women and men participate to the same extent in comment sections (e.g., Kalogeropoulos et al., 2017). It is possible that female users report a general willingness to write comments that equals that of male users. However, the results of our study suggest that, when it comes to actual commenting behavior, women are less inclined than men to engage in discussions on (political) news in online comment sections. These results support the findings of earlier studies on political participation (Karpowitz et al., 2012; Polletta & Chen, 2013) and of the few available content analyses of gender-related participation in comment sections (Baek et al., 2021; Lee & Ryu, 2019; Vochocová et al., 2016). The findings also raise the question, for future research, of what could be done to create public spaces that are equally inviting for people of all genders, to actively reduce traditional hierarchies (van Duyn et al., 2021; Polletta & Chen, 2013), and to make women more comfortable discussing potentially controversial topics (Ulbig & Funk, 1999).

Additionally, we found weak gender-related differences in the use of incivility in comments: a slightly larger share of the comments written by men than of those written by women was uncivil. These findings are in line with previous research on different communication styles of men and women (e.g., Thelwall et al., 2010). Still, 35% of the comments written by female users were uncivil, which suggests that many women participating in comment sections do not conform to the stereotypical expectation of warm and subordinate behavior. Future research could investigate whether this is the result of a gender-specific online disinhibition effect (Suler, 2004) or of fading gender stereotypes in general.

Regarding gender-related differences in the reactions to comments written by female or male authors, women’s comments did not receive more uncivil replies than comments written by men. This finding contradicts the expectations derived from Social Role Theory and the backlash effect. It supports research suggesting that gender-related backlash could be less pronounced for female laypeople than for women in the spotlight, such as journalists, MPs, and YouTubers (e.g., Rheault et al., 2019; Southern & Harmer, 2021). For the domain of comment sections, the current study supports the assumption that incivility against lay women might be a less severe problem than incivility against well-known or high-profile people. One reason may be that users responding to well-known authors have more background knowledge about them, are more aware of their gender, and actively attribute social roles to them. These processes could produce a stronger backlash when well-known authors appear to behave contrary to users’ expectations. When interacting with other laypersons, however, users may not pay as much attention to the authors’ profile names and thus to their gender.

Our findings also show that uncivil comments receive more uncivil replies. This supports previous studies that found a “vicious circle of incivility,” in which uncivil comments trigger further incivility in the subsequent discussion. Interestingly, our results show that this association does not differ significantly by the commenters’ gender. Here, our findings contradict a previous study that found small but significant “double backlash effects” against women in online discussions (Wilhelm & Joeckel, 2019). The different results might be explained by the fact that this previous study operationalized backlash differently (i.e., as flagging behavior instead of written comments) and relied on an experimental setting instead of a content analysis. Still, our study leaves room for more detailed investigations (see below).

Overall, our findings provide some evidence for gender-related differences in participation rates, but limited evidence for differences in communication behavior or for a gender-specific backlash. Regarding participation, our study only investigated whether participation rates are equal. Our data do not show whether or how incivility affects users’ future commenting behavior (e.g., throughout a comment section or even across several comment sections), especially that of female commenters. Future studies should address this in more detail. Although comments written by female authors are underrepresented in our dataset, men do not dominate the discussions through disproportionately uncivil communication, and women’s comments are not more likely to be attacked uncivilly. These findings should certainly not be taken to suggest that gendered harassment against individual women of public interest, or against groups of women in the spotlight, is not a relevant problem in online discussions (other studies show the opposite, e.g., Chen et al., 2020; Rheault et al., 2019; Southern & Harmer, 2021). Yet, our findings do not suggest that such attacks are generally directed at women more frequently than at men. One explanation, again, could be that we investigated incivility toward “average” commenters who do not possess particular expertise, status, or power. Such characteristics might make authors, particularly women, prone to uncivil attacks (Rheault et al., 2019). Future studies should address this limitation and investigate the differences between laypersons and persons of public interest to gain a deeper understanding of gendered incivility.

A second explanation could be that we did not distinguish between types of gendered incivility. Studies have shown that female and male MPs experience different types of harassment. Female MPs suffer from gender-based stereotyping, whereas male MPs face incivility related to professional aspects, such as party affiliation or political stances (Southern & Harmer, 2021; Ward & McLoughlin, 2020). Further, compared to men, women are subject to “more sexist, racist, or sexually aggressive hate comments” (Döring & Mohseni, 2020, p. 73). They are more often objectified on the basis of their gender and physical appearance, and they receive less supportive feedback on their content (Döring & Mohseni, 2020; Wotanis & McMillan, 2014). Accordingly, future studies should disentangle different types of gendered incivility and reexamine the relationship between gender and types of incivility in more detail.

In sum, our findings have important implications for research on gender-related differences in participation and communication behavior in online comment sections. More specifically, our results suggest that users primarily reply to “what” is said in comments rather than to “who” has said it. This would be an important prerequisite for inclusive online discussions in which women can contribute their perspectives equally. This interpretation is supported by research showing that users evaluate persuasive messages online (such as user-generated product reviews) mainly on the basis of their arguments and their rhetorical and stylistic devices (Willemsen et al., 2011). However, various studies have also shown that users consider the identity-related disclosures of online communicators when judging their messages (Forman et al., 2008). Thus, future research needs to untangle the conditions under which users do or do not consider identity-related cues when evaluating the content of messages and responding to it.

Limitations

Along with the new insights it adds to existing research on gender-related differences in online communication, this study has several limitations. First, we did not code the news topics of the posts on which users commented. However, our sampling procedure (e.g., including only posts that received at least 60 comments) increased the probability that we predominantly included political, and therefore stereotypically “male,” topics, which often attract high numbers of comments (e.g., Coe et al., 2014). This might explain the gender-related gap between the participation rates of female and male users. In fact, van Duyn and colleagues (2021) showed that women and men comment on different types of topics: women are more likely to comment on local news, whereas men are more likely to comment on national or international news. Despite the high probability that our sample included many political topics, we did not find that women are disproportionately sanctioned with uncivil feedback for voicing their opinions on these topics. That said, future studies should investigate the relations between the participation rates and communication behavior of female and male users in more detail by controlling for topics in their analyses.

A second limitation is that our dataset was not collected in real time. This means that exceptionally uncivil comments may already have been deleted or filtered out before we collected the data. Consequently, extreme forms of incivility, which might have affected the results, could not be analyzed. This problem affects many content analyses. Although we do not assume that this limitation substantially biased our results, future research should consider collaborating with news outlets or platform providers to gain access to deleted or filtered comments as well.

Third, our dataset does not reveal who is addressed in an uncivil reply. Replies can contain a high level of incivility against the author of a comment, but also against a third person (e.g., a politician) or against an issue. Additionally, in Facebook comment sections, all replies are subordinate to the related comment, and there are no further sub-levels of responses to replies. However, a reply may well address a preceding reply within the thread rather than the related comment. Future studies need to take this into consideration and measure the target of incivility more comprehensively. Further, by studying incivility in replies to comments, our research employed a very specific operationalization of gender backlash. Future studies may investigate additional manifestations of backlash, such as flagging behavior (Wilhelm & Joeckel, 2019) or being ignored. In content analyses, the latter form of backlash could be investigated by analyzing the number of replies that female and male comment authors receive.

Finally, to conduct our analyses on a large sample, we determined the incivility of comments automatically, applying a supervised machine learning approach (classifier). The classifier was trained on a comparable dataset of 10,000 user comments from an earlier content analysis. The model achieved 68% accuracy in classifying user comments as either civil or uncivil, which means that about 32% of the evaluated comments were misclassified. Incivility is often a matter of personal perception (Chen, 2017), and some forms of incivility can only be deduced from context. It remains challenging for machines, and even for humans, to determine incivility based on text patterns. To obtain the best possible measurement of incivility, we tested several classification approaches that differ in complexity, expense, and performance, including different feature constellations and Deep Learning approaches (i.e., an LSTM model architecture). The results showed that the complex Deep Learning architectures clearly overfit the training data: they achieved higher performance scores on the training data but measured unreliably on new data. We therefore chose the model described, because it reliably identifies uncivil user comments (high recall). The performance scores showed that the classifier identified most uncivil comments (recall for uncivil = 0.80) but also misclassified many civil comments as uncivil. This must be kept in mind when interpreting the findings of the study. Still, the group comparisons between male and female users should remain valid, since the reliability of the incivility measurement does not differ by gender. Nevertheless, future studies that are mainly interested in interpreting descriptive or absolute values may consider using a larger sample of manually labeled training data, which would likely reduce heterogeneity and thus improve model performance.
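To illustrate how an accuracy of 68% and an uncivil-class recall of 0.80 can coexist with many civil comments being labeled uncivil, the following Python sketch computes both scores from a binary confusion matrix. The counts are hypothetical. They assume a test set of 1,000 comments, 400 of them uncivil, and were chosen only because they reproduce the two reported scores. They are not the study's actual confusion matrix.

```python
from dataclasses import dataclass


@dataclass
class ConfusionMatrix:
    """Binary confusion matrix with 'uncivil' as the positive class."""
    tp: int  # uncivil comments correctly labeled uncivil
    fn: int  # uncivil comments wrongly labeled civil
    fp: int  # civil comments wrongly labeled uncivil
    tn: int  # civil comments correctly labeled civil

    @property
    def total(self) -> int:
        return self.tp + self.fn + self.fp + self.tn

    def accuracy(self) -> float:
        return (self.tp + self.tn) / self.total

    def recall_uncivil(self) -> float:
        return self.tp / (self.tp + self.fn)

    def recall_civil(self) -> float:
        return self.tn / (self.tn + self.fp)

    def precision_uncivil(self) -> float:
        return self.tp / (self.tp + self.fp)


# Hypothetical counts (NOT the study's data): 400 uncivil, 600 civil comments.
cm = ConfusionMatrix(tp=320, fn=80, fp=240, tn=360)

print(f"accuracy            = {cm.accuracy():.2f}")           # 0.68
print(f"recall (uncivil)    = {cm.recall_uncivil():.2f}")     # 0.80
print(f"recall (civil)      = {cm.recall_civil():.2f}")       # 0.60
print(f"precision (uncivil) = {cm.precision_uncivil():.2f}")  # 0.57
```

In this hypothetical split, 240 of the 600 civil comments (40%) are labeled uncivil, so absolute shares of incivility would be inflated. Comparisons between groups are affected less if both groups have the same error rates: differences then tend to be attenuated rather than reversed.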

It is also important to emphasize that we only used a binary gender classification. We did not consider, for example, androgynous names such as “Dominique” or “Charly.” Additionally, based on the automated measurement of gender, users’ first names were categorized as (mostly) female or (mostly) male. According to our manual evaluation of the gender-guesser algorithm on 500 comments, this procedure showed no systematic misclassification. Yet, some users register with aliases or intentionally misspell their names (e.g., “Tho Mas” or “Cutie Pie”). The gender guesser classified these names as unknown, although human coders might have assigned some of them correctly. Future research should consider using refined methods to investigate these cases, too.
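The binary collapsing step can be sketched as follows. The sketch assumes the six labels that gender-guesser's `Detector.get_gender()` returns according to the package README ("male", "female", "mostly_male", "mostly_female", "andy", "unknown"). The helper names `first_name` and `to_binary_gender`, and the rule of taking the first token of the profile name, are hypothetical illustrations, not the authors' code. The detector call is commented out so that the block runs without the package installed.

```python
from typing import Optional

# Output labels of gender-guesser's Detector.get_gender(), per the package README.
FEMALE_LABELS = {"female", "mostly_female"}
MALE_LABELS = {"male", "mostly_male"}


def first_name(profile_name: str) -> str:
    """Hypothetical helper: use the first whitespace-separated token of a profile name."""
    parts = profile_name.strip().split()
    return parts[0] if parts else ""


def to_binary_gender(label: str) -> Optional[str]:
    """Collapse a gender-guesser label to 'female', 'male', or None (excluded).

    'andy' (androgynous) and 'unknown' both map to None.
    """
    if label in FEMALE_LABELS:
        return "female"
    if label in MALE_LABELS:
        return "male"
    return None


# With the package installed (pip install gender-guesser), usage might look like:
# import gender_guesser.detector as gender
# detector = gender.Detector(case_sensitive=False)
# label = detector.get_gender(first_name("Anna Schmidt"))
# print(to_binary_gender(label))

print(to_binary_gender("mostly_female"))  # female
print(to_binary_gender("andy"))           # None
print(first_name("Tho Mas"))              # Tho
```

The last line shows why aliases are lost. A deliberately split name such as "Tho Mas" only passes its first fragment to the detector, and a human coder would easily read the full name.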

Lastly, we do not know whether the users who were classified as female or male also identify as women or men. Moreover, authors might intentionally choose an ambiguous name, or one associated with another gender, to avoid gendered harassment. There is a long history of research on identity deception in computer-mediated communication. It manifests as identity concealment, attractiveness deception, or category deception (Utz, 2005), the last of which refers to switching gender. Motivations behind identity deception online include privacy concerns, status elevation, idealized self-presentation, and identity play, among others (Caspi & Gorsky, 2006; Utz, 2005). We are fully aware of the gender variety in our society. Nonetheless, most existing research, as well as international first-name lists, is based on a binary understanding of gender. It is up to future studies to offer innovative solutions to this limitation.

Conclusion

Commenting on news articles on the Facebook pages of news media is currently considered one of the most popular forms of public user participation. Previous research has argued that discussions in comment sections could foster equally accessible and respectful exchange. However, various studies have reported that a high share of incivility threatens the discussion atmosphere in comment sections and drives readers away from reading and writing comments. Yet, only a few studies have used content analyses to investigate whether users’ gender is related to their commenting behavior and whether comments written by female and male users differ in their likelihood of triggering uncivil responses. The present research used a large dataset of comments from various news outlets to investigate these questions. The results suggest that gender-related differences in comment sections mainly concern the unequal participation rates and commenting frequencies of male and female users. We did not find striking differences regarding gender-based (un)civil communication behavior or regarding gendered abuse through uncivil replies to women. These findings extend previous research on gender inequalities, which often used self-reports, relied on experimental designs with a limited number of comments, or focused on well-known authors of user-generated content. We hope that our research will stimulate further studies that disentangle when and why women and men are treated (un)equally in comment sections.

Software Information

Python-Package gender-guesser: https://github.com/lead-ratings/gender-guesser

300-dimensional fastText word embeddings: The pre-trained Tweet embeddings are provided under the Creative Commons License CC BY 4.0 by Spinningbytes: https://www.spinningbytes.com/resources/wordembeddings/ (accessed June 19, 2020).
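The text does not state how the word vectors were combined into a comment-level feature. The sketch below assumes mean pooling over in-vocabulary tokens, a common choice. It uses toy 3-dimensional vectors in place of the 300-dimensional fastText embeddings. The final lines show one hypothetical way to concatenate the dense embedding with sparse n-gram weights into a single feature set. The feature names and values are illustrative only.

```python
from typing import Dict, List

Vector = List[float]


def mean_embedding(tokens: List[str], vectors: Dict[str, Vector], dim: int) -> Vector:
    """Average the vectors of all in-vocabulary tokens.

    Tokens without a pre-trained vector are skipped. A comment with no known
    tokens is mapped to the zero vector so that every comment gets a feature.
    """
    known = [vectors[t] for t in tokens if t in vectors]
    if not known:
        return [0.0] * dim
    return [sum(v[i] for v in known) / len(known) for i in range(dim)]


# Toy 3-dimensional vectors standing in for the 300-dimensional fastText embeddings.
toy_vectors = {
    "gut": [1.0, 0.0, 2.0],
    "schlecht": [3.0, 2.0, 0.0],
}

comment_vec = mean_embedding(["gut", "xyz", "schlecht"], toy_vectors, dim=3)
print(comment_vec)  # [2.0, 1.0, 1.0]

# Hypothetical feature constellation: dense embedding dimensions plus
# sparse n-gram weights (here a made-up dict of tf-idf scores).
tfidf_features = {"gut": 0.7, "schlecht": 0.7}
combined = {f"emb_{i}": x for i, x in enumerate(comment_vec)}
combined.update(tfidf_features)
```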

Weighted frequencies of unigrams, bigrams, and trigrams: We used tf-idf weighting (term frequency-inverse document frequency), a standard weighting scheme in natural language processing.
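A dependency-free sketch of tf-idf weighting over unigrams to trigrams is shown below. It assumes raw term counts, the smoothed idf formula idf(t) = ln((1 + n) / (1 + df(t))) + 1, and L2 normalization, which are the defaults of scikit-learn's TfidfVectorizer. The text does not say which settings the authors used. Tokens are assumed to be already preprocessed.

```python
import math
from collections import Counter
from typing import Dict, List


def ngrams(tokens: List[str], n_min: int = 1, n_max: int = 3) -> List[str]:
    """All contiguous n-grams for n in [n_min, n_max], joined by spaces."""
    grams = []
    for n in range(n_min, n_max + 1):
        for i in range(len(tokens) - n + 1):
            grams.append(" ".join(tokens[i:i + n]))
    return grams


def tfidf(docs: List[List[str]]) -> List[Dict[str, float]]:
    """Smoothed, L2-normalized tf-idf vectors (one sparse dict per document)."""
    counts_per_doc = [Counter(ngrams(d)) for d in docs]
    n_docs = len(docs)
    # Document frequency: in how many documents each n-gram occurs.
    doc_freq: Counter = Counter()
    for counts in counts_per_doc:
        doc_freq.update(counts.keys())
    idf = {t: math.log((1 + n_docs) / (1 + doc_freq[t])) + 1.0 for t in doc_freq}
    vectors = []
    for counts in counts_per_doc:
        weights = {t: tf * idf[t] for t, tf in counts.items()}
        norm = math.sqrt(sum(w * w for w in weights.values()))
        vectors.append({t: w / norm for t, w in weights.items()} if norm else {})
    return vectors


docs = [["das", "ist", "gut"], ["das", "ist", "dumm"], ["das", "ist", "gut"]]
vecs = tfidf(docs)
print(vecs[1]["dumm"] > vecs[1]["das"])  # True
```

Because "das" occurs in every document, its idf is 1, whereas the rarer "dumm" receives a larger weight. This is the intended effect: frequent, uninformative n-grams are down-weighted relative to distinctive ones.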

Removal of stopwords: We used the NLTK Stopword Corpus for German. Full documentation: https://www.nltk.org/book/ch02.html

Reduction of long sequences of repeated letters or punctuation to a maximum of three consecutive characters: We applied the TweetTokenizer from the nltk.tokenize package. Full documentation: https://www.nltk.org/api/nltk.tokenize.html
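The two preprocessing steps above can be approximated without NLTK. The sketch below makes several assumptions. It uses the same regular expression as NLTK's `reduce_lengthening`, the function that `TweetTokenizer(reduce_len=True)` applies. It uses a tiny illustrative subset of German stopwords rather than NLTK's full list. It lowercases text and uses a simple regex tokenizer in place of TweetTokenizer. None of these details are stated in the text.

```python
import re
from typing import Iterable, List

# Runs of 3+ identical characters are collapsed to exactly 3
# (same pattern as NLTK's reduce_lengthening).
_REPEAT = re.compile(r"(.)\1{2,}")


def reduce_lengthening(text: str) -> str:
    return _REPEAT.sub(r"\1\1\1", text)


# Tiny illustrative subset. The study used NLTK's German list, available via
# nltk.corpus.stopwords.words("german") after nltk.download("stopwords").
GERMAN_STOPWORDS_SAMPLE = {"und", "das", "ist", "der", "die"}


def preprocess(text: str, stopwords: Iterable[str] = GERMAN_STOPWORDS_SAMPLE) -> List[str]:
    stop = set(stopwords)
    # Lowercasing is an assumption so that the lowercase stopword list matches.
    reduced = reduce_lengthening(text.lower())
    # Word runs or punctuation runs; a simple stand-in for TweetTokenizer.
    tokens = re.findall(r"\w+|[^\w\s]+", reduced)
    return [t for t in tokens if t not in stop]


print(reduce_lengthening("sooooo dummmm!!!!!"))  # sooo dummm!!!
print(preprocess("Das ist sooooo gut!!!!"))       # ['sooo', 'gut', '!!!']
```

Capping repetitions at three characters keeps emphatic spellings distinguishable from normal ones ("sooo" vs. "so") while mapping all longer variants to a single feature.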

Support Vector Machine: We applied the Support Vector Classifier SVC from the scikit-learn Python package (Pedregosa et al., 2011). Full documentation: https://scikit-learn.org/stable/modules/generated/sklearn.svm.SVC.html.
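For readers unfamiliar with SVMs, the sketch below shows the core idea of a linear support vector machine: minimizing an L2-regularized hinge loss. It operates on sparse feature dicts such as the tf-idf vectors above. This is a swapped-in, dependency-free technique (the Pegasos stochastic sub-gradient algorithm), not scikit-learn's SVC, which uses libsvm. The kernel and hyperparameters used in the study are not stated here. The values of `lam` and `epochs` and the constant bias feature are hypothetical choices. Unlike SVC's intercept, this bias is also regularized.

```python
import random
from typing import Dict, List

Features = Dict[str, float]
BIAS = "__bias__"  # hypothetical constant feature standing in for an intercept


def with_bias(x: Features) -> Features:
    return {**x, BIAS: 1.0}


def train_linear_svm(X: List[Features], y: List[int], lam: float = 0.1,
                     epochs: int = 100, seed: int = 0) -> Features:
    """Pegasos: stochastic sub-gradient descent on the L2-regularized hinge loss.

    Labels must be +1 (uncivil) or -1 (civil).
    """
    rng = random.Random(seed)
    data = [with_bias(x) for x in X]
    w: Features = {}
    t = 0
    order = list(range(len(data)))
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            t += 1
            eta = 1.0 / (lam * t)  # decreasing step size
            x, label = data[i], y[i]
            margin = label * sum(w.get(f, 0.0) * v for f, v in x.items())
            # Regularization step: shrink all weights by (1 - eta * lam).
            shrink = 1.0 - eta * lam
            for f in w:
                w[f] *= shrink
            # Hinge-loss step: only for examples inside the margin.
            if margin < 1.0:
                for f, v in x.items():
                    w[f] = w.get(f, 0.0) + eta * label * v
    return w


def predict(w: Features, x: Features) -> int:
    score = sum(w.get(f, 0.0) * v for f, v in with_bias(x).items())
    return 1 if score >= 0 else -1


# Toy data: two "uncivil" and two "civil" one-word comments.
X = [{"dumm": 1.0}, {"idiot": 1.0}, {"danke": 1.0}, {"gut": 1.0}]
y = [1, 1, -1, -1]
w = train_linear_svm(X, y)
print([predict(w, x) for x in X])
```

With scikit-learn installed, the off-the-shelf counterpart would be `SVC(kernel="linear")` fit on a feature matrix. The hinge loss is why an SVM focuses on comments near the decision boundary rather than on all comments equally.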

Statistical analysis: R package lme4. Full documentation: https://www.rdocumentation.org/packages/lme4/versions/1.1-23.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Ministry of Culture and Science of the German State of North Rhine-Westphalia and Deutsche Forschungsgemeinschaft, Grant NA 1281/1-1, project number 358324049.

Notes

Appendix

Model Overview – Associations Between Comment Gender/Incivility and Incivility of Replies

Notes. Dependent variable: incivility of reply (level one; 0 = civil, 1 = uncivil), predicted by comment gender × incivility (level two; 1 = male, 2 = female); OR = odds ratio; N(reply) = 133,941; K(comment) = 29,352; α = .05; ***p < .001, **p < .01, *p < .05; Full ML (full maximum likelihood estimation).

Predictors (comment, level two)   Null Model (OR)   Model 1 (OR)  Model 2 (OR)
Intercept                         0.56***           0.49***       0.50***
Gender (1 = female)                                 0.88***       0.86***
Incivility (1 = uncivil)                            1.38***       1.36***
Gender × incivility                                               1.04
Random effects
σ²                                3.29              3.29          3.29
τ00 (article)                     .30               .25           .24
τ00 (comment)                     .16               .16           .11
ICC                               .12               .11           .11
Approx. R²                        .11               .12           .12
AIC                               166562.674        166109.563    166110.008
Model improvement (χ²)                              457.11***     1.56
n (articles)                      792               792           792
n (comments)                      27,922            27,922        27,922
n (replies)                       128,557           128,557       128,557
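Several statistics in the table can be checked with a few lines of Python. The sketch assumes the conventions usual for multilevel logistic models such as those fit with lme4. First, the level-one residual variance is fixed at π²/3 ≈ 3.29. Second, the ICC is the summed article- and comment-level variance divided by the total variance. Third, the χ² values are likelihood-ratio statistics between nested models, which can be recovered from the AICs because AIC = −2 log L + 2k. Under these assumptions, the arithmetic reproduces the null model's ICC of .12 and both reported χ² values. The helper names are hypothetical.

```python
import math

PI_SQ_OVER_3 = math.pi ** 2 / 3  # level-one variance of a logistic model, about 3.29


def icc(tau_article: float, tau_comment: float, sigma2: float = PI_SQ_OVER_3) -> float:
    """Share of latent-scale variance located at the article and comment levels."""
    between = tau_article + tau_comment
    return between / (between + sigma2)


def lr_chi2_from_aic(aic_restricted: float, aic_full: float, extra_params: int) -> float:
    """Likelihood-ratio statistic recovered from AICs (AIC = -2 logL + 2k)."""
    return aic_restricted - aic_full + 2 * extra_params


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail chi-square probability, closed forms for df = 1 or 2 only."""
    if df == 1:
        return math.erfc(math.sqrt(x / 2))
    if df == 2:
        return math.exp(-x / 2)
    raise ValueError("closed form implemented only for df = 1 or 2")


print(f"ICC, null model: {icc(0.30, 0.16):.2f}")        # 0.12
chi2_m1 = lr_chi2_from_aic(166562.674, 166109.563, 2)  # adds gender and incivility
chi2_m2 = lr_chi2_from_aic(166109.563, 166110.008, 1)  # adds the interaction
print(f"Model 1 vs. null: chi2 = {chi2_m1:.2f}")       # 457.11
print(f"Model 2 vs. Model 1: p = {chi2_sf(chi2_m2, 1):.2f}")
```

The p-value of about .21 for adding the gender × incivility term matches the non-significant interaction (OR = 1.04) discussed in the text.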