Abstract

Regulatory fit, which describes how people experience a sense of “rightness” when their motivational orientation matches their goal-pursuit strategies, has been studied in various domains of psychology. Recently, Achar and Lee (2022) found that regulatory fit might also play an important role in the moral domain. In particular, they reported that regulatory fit intensifies people’s moral predispositions toward a range of behaviors and judgments across moral domains. We conducted three well-powered and preregistered close and conceptual replications (overall N = 3150) in which we aimed to replicate and extend this line of research, using honesty as a prominent example of moral behavior. In none of our studies did we find support for the proposed interaction between moral predispositions (as assessed via Moral Disengagement and Honesty-Humility) and the experience of regulatory fit on dishonest behaviors and intentions. The findings could reflect limited robustness of either the regulatory fit effect or the regulatory fit manipulation.

Plain language summary

Regulatory fit theory suggests that when people feel a good match between their goals and how they go about achieving them, they’ll have a sense of doing things the right way. This idea has been explored in various psychological studies. Recently, researchers Achar and Lee looked into whether feeling this kind of match can affect how moral or immoral a person acts. They thought that if someone generally behaves morally and feels this “fit,” they might act even more morally. On the other hand, if someone tends to act immorally, this fit could make them act even worse. After conducting several studies, they found evidence supporting their idea. However, when we tried to check their findings with our own three large-scale studies, we didn’t find the same results. In our research, whether or not people felt this “fit” didn’t change their moral behavior. People who were inclined to act morally did so regardless of feeling a match between their goals and actions, and the same was true for those inclined to act immorally.

Introduction

The experience of regulatory fit reflects increased motivation and engagement when one’s goal pursuit sustains or matches one’s regulatory orientation (e.g., Higgins, 2005, 2006; Higgins et al., 2003). For instance, a student who strives for academic accomplishment out of an aspiration for growth might pursue this goal by eagerly engaging in additional readings (Higgins, 2005). In such a situation, goal pursuit “feels right,” and the person experiences value from fit (Higgins, 2011).

Regulatory fit has been suggested to be relevant for a range of outcomes across research fields such as public health (e.g., Ludolph & Schulz, 2015), organizational behavior (e.g., Higgins & Pinelli, 2020), and education (e.g., Miwa et al., 2022). Recently, Achar and Lee (2022) proposed that regulatory fit may extend its influence into the moral domain. They hypothesized that the subjective experience of regulatory fit would increase the likelihood of engaging in moral conduct among individuals predisposed to moral behavior but decrease it among those inclined toward immoral conduct. Across seven studies with 3559 participants, Achar and Lee (2022) found support for their hypothesis, reporting “unambiguous evidence that regulatory fit impacts moral conduct by intensifying moral predispositions” (p. 1). Herein, we set out to replicate their results in three well-powered replication studies.

According to regulatory fit theory (e.g., Higgins, 2005; Higgins et al., 2003), people experience increased motivation and engagement when the way in which they pursue their goals aligns with their self-regulatory orientation (i.e., regulatory fit). Specifically, promotion-focused individuals, who prioritize growth and nurturance, experience regulatory fit by using eagerness strategies to achieve their goals. In parallel, prevention-focused individuals, who prioritize security and safety, experience regulatory fit by using vigilance strategies (e.g., Aaker & Lee, 2006; Lee & Higgins, 2009).

In line with these theoretical notions, prior research on regulatory fit and persuasion has demonstrated that regulatory fit amplifies existing attitudes: When there is fit, judgments and opinions “feel right,” leading to either more positive or more negative evaluations depending on the valence of individuals’ initial reactions. For instance, regulatory fit has been observed to elicit more positive opinions of persuasive messages when participants held favorable thoughts about the message, yet more negative opinions when they held unfavorable thoughts (Cesario et al., 2004).¹ Moreover, fit has been associated with increased motivation for pleasant tasks and decreased motivation for unpleasant tasks (Lee et al., 2011). In sum, regulatory fit has been documented to intensify pre-existing attitudes within the realm of persuasion.

Building on these findings, Achar and Lee (2022) proposed that regulatory fit would also amplify people’s existing beliefs regarding the morality of actions, thereby aligning judgments and behaviors with individual moral predispositions. In particular, they proposed that, when experiencing regulatory fit (vs. non-fit), individuals with higher levels of Moral Disengagement would be less likely, and those with lower levels of Moral Disengagement more likely, to behave morally. Moral Disengagement refers to an individual difference in the propensity to selectively detach moral self-sanctions, which allows people to engage in behaviors that may be considered morally reprehensible without disrupting their favorable moral self-view (Bandura, 1999). Moral Disengagement comprises mechanisms such as moral justification, euphemistic labeling, and displacement of responsibility (Bandura et al., 1996), and it has been found to be positively related to a range of antisocial behaviors, including bullying, fraud, and counterproductive work behavior (e.g., Barsky, 2011; Moore et al., 2012; Thornberg & Jungert, 2014). In sum, Achar and Lee (2022) proposed that regulatory fit amplifies individuals’ pre-existing moral beliefs and thereby aligns behavior with their moral predispositions, conceptualized as Moral Disengagement.

In line with their hypothesis, Achar and Lee (2022) found across seven studies that the experience of regulatory fit influences the expression of moral predispositions. In particular, they found that regulatory fit intensifies people’s (im)moral predispositions in a range of behaviors and judgments, including relationship infidelity, misreporting taxes, imposing punishment on transgressors, cooperating in a social dilemma, and cheating. They also found that their results generalized across broader conceptualizations of moral predispositions, which included Honesty-Humility (e.g., Ashton & Lee, 2007) and Machiavellianism (e.g., Collison et al., 2018) in addition to Moral Disengagement. These findings could provide important theoretical insights into person-centered approaches aiming to influence moral behavior. Furthermore, the regulatory fit manipulation could prove to be a useful intervention for encouraging moral behavior in practice (e.g., Ayal et al., 2015; Hertwig & Mazar, 2022). Importantly, the manipulation is very time-efficient and applicable in a broad range of contexts, which makes it especially useful in applied settings.

Given the theoretical and practical significance of Achar and Lee’s (2022) findings, we conducted three preregistered longitudinal replications (i.e., each spanning two measurement occasions): one conceptual replication, that is, a replication that extends an already tested finding by examining it with a new method or in a new context, and two close replications, that is, replications that aim to follow the methods of a previously published study as faithfully as possible (e.g., Isager et al., 2025). In addition, our series of studies, particularly the two close replications, constitutes the first large-scale, preregistered, independent close replication effort in the influential field of regulatory fit.

First, we performed a conceptual replication (Study 1) to provide a broad test of Achar and Lee’s (2022) idea that regulatory fit enhances moral predispositions. In this study, we measured moral predispositions using Honesty-Humility, defined as “the tendency to be fair and genuine when dealing with others” (Ashton & Lee, 2007, p. 156), a basic trait consistently related to a broad range of moral behaviors (see Ścigała, Schild, Moshagen, et al., 2021; Thielmann et al., 2020; Zettler et al., 2020, for meta-analytic evidence). Furthermore, we assessed moral behavior in the mind game, a commonly used measure of dishonesty with established external validity across a variety of moral behaviors (Jiang, 2013; Potters & Stoop, 2016; Schild et al., 2021). Study 1 constitutes a reanalysis of pre-existing, secondary data and was a preliminary investigation that helped motivate the two close replications. As such, Study 1 can only be considered a conceptual replication. Part of the data collection (i.e., the Honesty-Humility assessment) occurred prior to preregistration.

In Study 2, we set out to closely replicate Study 3 of Achar and Lee (2022), which showed that regulatory fit intensifies moral predispositions (i.e., Moral Disengagement) in tax reporting. We focused on this study because of the high practical and financial relevance of tax reporting. Finally, in Study 3, we aimed to replicate Study 7 of Achar and Lee (2022), which demonstrated that regulatory fit intensifies moral predispositions (i.e., Moral Disengagement) in cheating. We chose to replicate this study because it uses a monetarily incentivized task to measure actual unethical behavior. Moreover, we extended Studies 2 and 3 by incorporating a measure of Honesty-Humility. This additional exploratory assessment allows us to investigate whether the results we observe with Moral Disengagement extend to another established moral trait, thus enhancing the generalizability of our findings.

Across the three studies, our hypothesis was that individuals characterized by higher moral predispositions—specifically, those with higher levels of Honesty-Humility (Study 1) and lower levels of Moral Disengagement (Studies 2 and 3)—would exhibit more ethical behavior when exposed to regulatory fit vs. non-fit. Conversely, we anticipated that individuals characterized by lower moral predispositions—specifically, those with lower levels of Honesty-Humility (Study 1) and higher levels of Moral Disengagement (Studies 2 and 3)—would exhibit less ethical behavior when exposed to regulatory fit vs. non-fit.

Study 1

In Study 1, we provide a conceptual replication of findings reported by Achar and Lee (2022). Specifically, we hypothesized that the experience of regulatory fit (vs. non-fit) will decrease the probability of dishonesty among those with higher levels of Honesty-Humility and increase the probability of dishonesty among those with lower levels of Honesty-Humility (Hypothesis 1).

The sample size, methods, and statistical analyses were preregistered (https://osf.io/q56w3/registrations), and the study materials, analysis scripts, and data are available on the OSF (https://osf.io/q56w3).

Methods

Participants

In the current study, we included 2977 Prolific workers located in the UK whose levels of Honesty-Humility we had assessed in two previous independent studies. Specifically, we included 650 participants from a study conducted on March 19, 2021 and 2327 participants from a study conducted between July 22 and 23, 2021. These prior studies encompassed various measures and tasks unrelated to our current investigation, and we collectively refer to both studies as the “first measurement occasion” herein.

At the first measurement occasion, the Honesty-Humility scale included a control question (“This is a control question. Please choose ‘Strongly disagree’.”), and participants who did not pass it were excluded. Additionally, to ensure good data quality, we recruited participants who had taken part in at least 100 prior studies, following “standard practice for data collection” (Chandler et al., 2019, p. 3; Douglas et al., 2023; Peer et al., 2022). Note, however, that participants’ extensive experience with scientific studies might have affected the results.

Of the 2977 participants who completed the first measurement occasion, 1093 returned and completed the second measurement occasion, comprising the regulatory fit manipulation and the mind game, between March 29 and 31, 2023. Hence, approximately two years separated the two measurement occasions. The final sample thus comprised 1093 participants (age: M = 42.46, SD = 13.45; 684 women, 406 men, and 3 participants who selected the response option “other”).

In accordance with Simonsohn’s (2015) recommendation, we aimed for a sample 2.5 times the size of the original study’s. Because the original sample for examining the interaction between regulatory fit and Moral Disengagement totaled 294 participants (Achar & Lee, 2022, Study 3), our target was at least 735 participants (i.e., 294 × 2.5). Given that our final sample comprised 1,093 participants, we conclude that we had sufficient power to detect the hypothesized effect as found in Achar and Lee (2022).
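The 2.5 factor comes from Simonsohn’s (2015) “small telescopes” approach: a replication with 2.5 times the original sample is meant to have roughly 80% power to conclude that an effect is smaller than d33 (the effect size the original study had 33% power to detect) when the true effect is zero. The sketch below checks that figure with a normal approximation. The helper name is hypothetical, and the approximation (which ignores the negligible lower rejection tail of the original two-sided test) is our assumption, so it lands near, not exactly at, 80%.

```python
from statistics import NormalDist

Z = NormalDist()  # standard normal distribution


def small_telescopes_power(multiplier: float, alpha_orig: float = 0.05,
                           alpha_rep: float = 0.05) -> float:
    """Approximate power of a replication with `multiplier` times the original
    sample size to conclude that the true effect is smaller than d33, when the
    true effect is zero (normal approximation; hypothetical helper)."""
    # d33: the effect the original two-sided test had 1/3 power to detect,
    # expressed in units of the original standard error (upper tail only).
    d33_in_orig_se = Z.inv_cdf(1 - alpha_orig / 2) + Z.inv_cdf(1 / 3)
    # Standard errors shrink with sqrt(n), so d33 spans more replication SEs.
    d33_in_rep_se = d33_in_orig_se * multiplier ** 0.5
    # One-sided test of "effect = d33" against "effect < d33" under a zero effect.
    return Z.cdf(d33_in_rep_se - Z.inv_cdf(1 - alpha_rep))


print(round(small_telescopes_power(2.5), 2))  # 0.78
```

Under this approximation, power grows with the multiplier; at 2.5 it is close to the 80% figure usually associated with the rule.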

Procedure

First measurement occasion

At the first measurement occasion on March 19, 2021, Honesty-Humility was assessed as part of the full HEXACO-60 at the beginning of the study, after participants had been presented with the information and consent forms and had answered demographic questions. By contrast, in the study conducted between July 22 and 23, 2021, Honesty-Humility was assessed together with several other scales at the end of the study, after participants had taken part in an economic game. More information about the exact procedures at the first measurement occasion can be found on the Open Science Framework (OSF, https://osf.io/q56w3/).

Second measurement occasion

On the second measurement occasion, participants were presented with the participant information and consent forms and were asked to agree to participate in the study. Afterward, participants were directed to the regulatory fit manipulation and randomly assigned to one of four conditions: (1) experimentally induced promotion focus matched with an eagerness strategy (regulatory fit), (2) induced prevention focus matched with a vigilance strategy (regulatory fit), (3) induced promotion focus matched with a vigilance strategy (regulatory non-fit), or (4) induced prevention focus matched with an eagerness strategy (regulatory non-fit). First, participants were presented with either the promotion or the prevention instructions, in which they were informed that we would like to learn about how today’s general public thinks about different issues, and they were asked to describe one of their aspirations (promotion condition) or one of their obligations (prevention condition).

Following that, participants took part in either the eagerness or the vigilance strategy condition. In the eagerness strategy condition, participants were asked to list five things they can do to make sure everything would go right with achieving the aspiration (in the promotion condition) or fulfilling the obligation (in the prevention condition). In the vigilance strategy condition, participants were asked to list five things they can do to avoid anything that could go wrong with achieving the aspiration (in the promotion condition) or fulfilling the obligation (in the prevention condition). Finally, participants took part in the “mind game” to assess moral behavior (see details below), and once they made their decisions in the game, they were debriefed and thanked for their participation.
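The 2 × 2 design described above maps onto the effect codes listed in the table notes (regulatory fit = 1, non-fit = −1; promotion = 1, prevention = −1). A minimal sketch, assuming simple randomization (the text does not describe the randomization scheme) and a hypothetical strategy code (eagerness = 1, vigilance = −1); the function names are illustrative only:

```python
import random
from itertools import product

FOCI = ("promotion", "prevention")
STRATEGIES = ("eagerness", "vigilance")
CELLS = list(product(FOCI, STRATEGIES))  # the four between-subjects conditions


def assign_condition(rng: random.Random) -> tuple:
    """Simple randomization to one of the four cells (assumed scheme)."""
    return rng.choice(CELLS)


def effect_codes(focus: str, strategy: str) -> dict:
    """Effect codes as in the table notes: fit = 1 / non-fit = -1,
    promotion = 1 / prevention = -1. The strategy code (eagerness = 1,
    vigilance = -1) is a hypothetical addition."""
    focus_code = 1 if focus == "promotion" else -1
    strategy_code = 1 if strategy == "eagerness" else -1
    # Fit = promotion-eagerness or prevention-vigilance, i.e., the codes agree.
    return {"focus": focus_code, "strategy": strategy_code,
            "fit": focus_code * strategy_code}


rng = random.Random(2023)
for _ in range(4):
    focus, strategy = assign_condition(rng)
    print(focus, strategy, effect_codes(focus, strategy))
```

With these codes, fit is simply the product of the focus and strategy codes, that is, the focus × strategy interaction of the 2 × 2 design.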

Measures

Honesty-Humility

We used the Honesty-Humility scale from the HEXACO-60 (Ashton & Lee, 2009; M = 3.60, SD = 0.63). The scale consists of 10 items, for instance, “I would never accept a bribe, even if it were very large,” “I’d be tempted to use counterfeit money, if I were sure I could get away with it,” and “I think that I am entitled to more respect than the average person is.” Participants rated their (dis)agreement with the items on a 5-point Likert scale ranging from “strongly disagree” (1) to “strongly agree” (5). We presented the items in random order. The reliability of the scale was good (Cronbach’s α = .76), and the items were averaged.
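The scale score and its reliability can be illustrated in a few lines. The HEXACO-60 contains reverse-keyed items (agreeing with the counterfeit-money item, for example, presumably indicates lower Honesty-Humility), which are flipped before averaging. Which positions are reversed in the toy data below is hypothetical, as are the function names and the data. Cronbach’s α follows the standard formula α = k/(k − 1) × (1 − Σ item variances / variance of the total score).

```python
from statistics import mean, pvariance


def key_items(rows, reversed_idx, lo=1, hi=5):
    """Reverse-score the listed item positions (on 1-5: 1<->5, 2<->4, 3 stays)."""
    return [[lo + hi - v if j in reversed_idx else v for j, v in enumerate(row)]
            for row in rows]


def cronbach_alpha(rows):
    """alpha = k/(k-1) * (1 - sum of item variances / variance of totals).
    Population variances are used throughout; the ratio is unaffected."""
    k = len(rows[0])
    item_vars = sum(pvariance(col) for col in zip(*rows))
    total_var = pvariance([sum(row) for row in rows])
    return k / (k - 1) * (1 - item_vars / total_var)


# Toy data (not study data): 4 respondents x 3 items; item 1 is reverse-keyed.
raw = [[5, 1, 4], [4, 2, 4], [2, 4, 2], [1, 5, 1]]
keyed = key_items(raw, reversed_idx={1})
scores = [mean(row) for row in keyed]  # scale score = average of keyed items
print(scores, round(cronbach_alpha(keyed), 2))
```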

Mind game

In the game, participants were told that they would be asked to write down a number between one and eight on a piece of paper, after which a random number within that range would be displayed on the screen. They would then be asked whether the displayed number matched the one they had written down. They were also informed that the chance of the two numbers matching was 12.5%. If the numbers matched, participants were told, they would receive a bonus of £0.40 in addition to the basic fee of £0.70, which they would receive regardless of the number reported. This setup allowed participants to dishonestly claim the bonus by falsely reporting a match.

Analytical approach

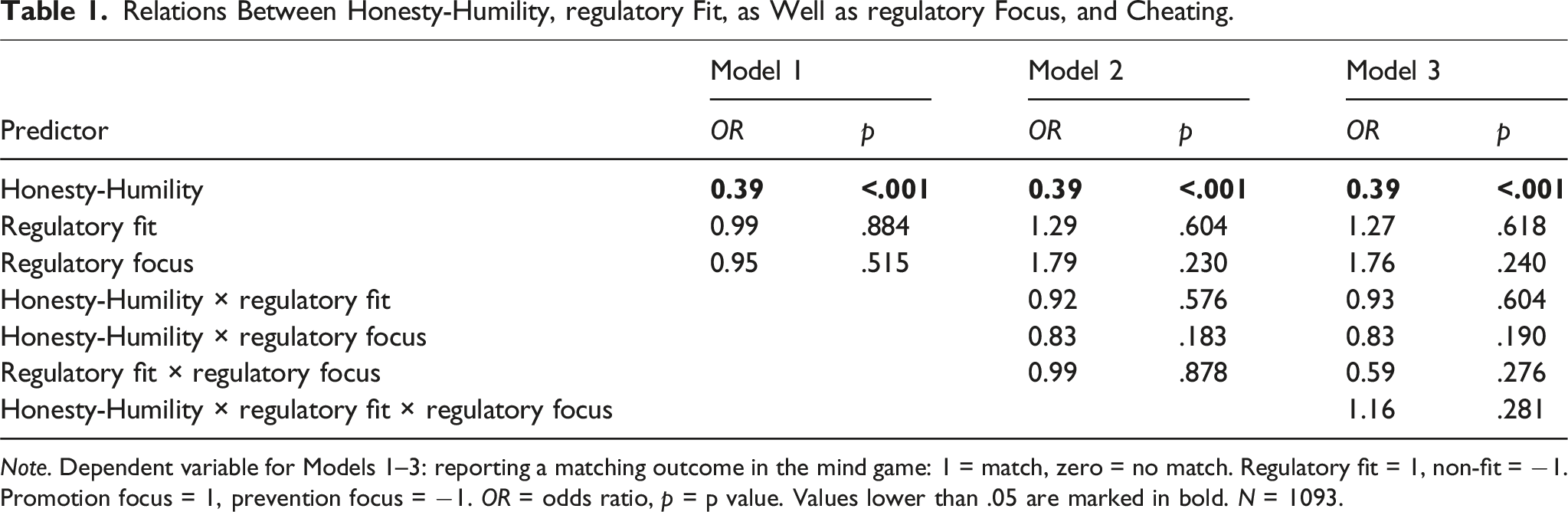

Relations Between Honesty-Humility, Regulatory Fit, Regulatory Focus, and Cheating.

Note. Dependent variable for Models 1–3: reporting a matching outcome in the mind game (1 = match, 0 = no match). Regulatory fit = 1, non-fit = −1. Promotion focus = 1, prevention focus = −1. OR = odds ratio, p = p value. p values lower than .05 are marked in bold. N = 1093.

We used modified logistic regressions because they account for the fact that 12.5% of honest participants are expected to obtain a match in the mind game and thus to report a match honestly. Hence, the cheating rate obtained from this analysis describes the proportion of dishonest individuals, adjusted for those who honestly reported a match (see Moshagen & Hilbig, 2017).
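To make the adjustment concrete: a participant reports a match either because they are dishonest (probability d) or because they are honest and their number happened to match (probability 1/8), so P(match) = d + (1 − d)/8, with d modeled through a logistic link on the predictors. The sketch below fits this model by Fisher scoring in plain Python. It is our own minimal illustration, not the RRlog routine used for the reported analyses; it returns point estimates only, and the simulated data and coefficient values are hypothetical.

```python
import math
import random

BASELINE = 1 / 8  # probability that an honest participant's number matches


def sigmoid(x: float) -> float:
    return 1 / (1 + math.exp(-x))


def solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_modified_logit(X, y, c=BASELINE, max_iter=50, tol=1e-10):
    """Fisher scoring for P(report match) = c + (1 - c) * sigmoid(x . beta),
    where sigmoid(x . beta) is the probability of being dishonest.
    Point estimates only; no step-halving or boundary handling."""
    p = len(X[0])
    beta = [0.0] * p
    for _ in range(max_iter):
        score = [0.0] * p
        info = [[0.0] * p for _ in range(p)]
        for xi, yi in zip(X, y):
            d = sigmoid(sum(b * v for b, v in zip(beta, xi)))
            lam = c + (1 - c) * d                # P(report match)
            dlam = (1 - c) * d * (1 - d)         # d lam / d eta
            g = yi * dlam / lam - (1 - yi) * d   # d loglik / d eta
            w = dlam ** 2 / (lam * (1 - lam))    # Fisher information for eta
            for j in range(p):
                score[j] += g * xi[j]
                for k in range(p):
                    info[j][k] += w * xi[j] * xi[k]
        step = solve(info, score)
        beta = [b + s for b, s in zip(beta, step)]
        if max(abs(s) for s in step) < tol:
            break
    return beta


# Simulated data (not study data): dishonesty depends on Honesty-Humility only.
rng = random.Random(1)
X, y = [], []
for _ in range(4000):
    hh = rng.gauss(0, 1)                # standardized Honesty-Humility
    fit = rng.choice((-1, 1))           # regulatory fit = 1, non-fit = -1
    dishonest = rng.random() < sigmoid(-0.8 - 0.9 * hh)
    honest_match = rng.random() < BASELINE
    X.append([1.0, hh, fit, hh * fit])  # intercept, HH, fit, HH x fit
    y.append(1 if dishonest or honest_match else 0)
print([round(b, 2) for b in fit_modified_logit(X, y)])
```

With an intercept only, the fitted dishonesty rate reduces to (p̂ − 1/8)/(7/8), where p̂ is the observed share of reported matches.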

Results

We found that 39.34% of participants reported a matching number in the mind game, which is significantly higher than the stochastic baseline of 12.5%, that is, the expected probability of a matching outcome if everyone were honest (z = 18.16, p < .001). This result aligns with other studies using the mind game (e.g., Schild et al., 2021; Thielmann et al., 2023). Given this stochastic baseline, the proportion of dishonest individuals in the sample was estimated at 31% (SE = 0.02).
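These numbers can be reproduced from the match rate alone. If a share d of participants cheat (always reporting a match) and the rest report honestly, the expected match rate is d + (1 − d)/8, so d = (p̂ − 1/8)/(7/8). The sketch below assumes 430 reported matches (the whole-number count consistent with 39.34% of N = 1,093) and a Wald z test based on the observed proportion; the text does not name the test, but this choice reproduces the reported z = 18.16 up to rounding, along with the 31% estimate and its SE of 0.02. The function name is hypothetical.

```python
import math


def cheating_estimate(k_match: int, n: int, baseline: float = 1 / 8):
    """Share of dishonest participants implied by the observed match rate,
    inverting P(match) = d + (1 - d) * baseline."""
    p_hat = k_match / n
    se_p = math.sqrt(p_hat * (1 - p_hat) / n)    # Wald SE of the match rate
    z = (p_hat - baseline) / se_p                # test against the honest baseline
    d_hat = (p_hat - baseline) / (1 - baseline)  # estimated share of cheaters
    se_d = se_p / (1 - baseline)
    return p_hat, z, d_hat, se_d


p_hat, z, d_hat, se_d = cheating_estimate(430, 1093)
print(f"{p_hat:.4f} {z:.1f} {d_hat:.2f} {se_d:.2f}")  # 0.3934 18.2 0.31 0.02
```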

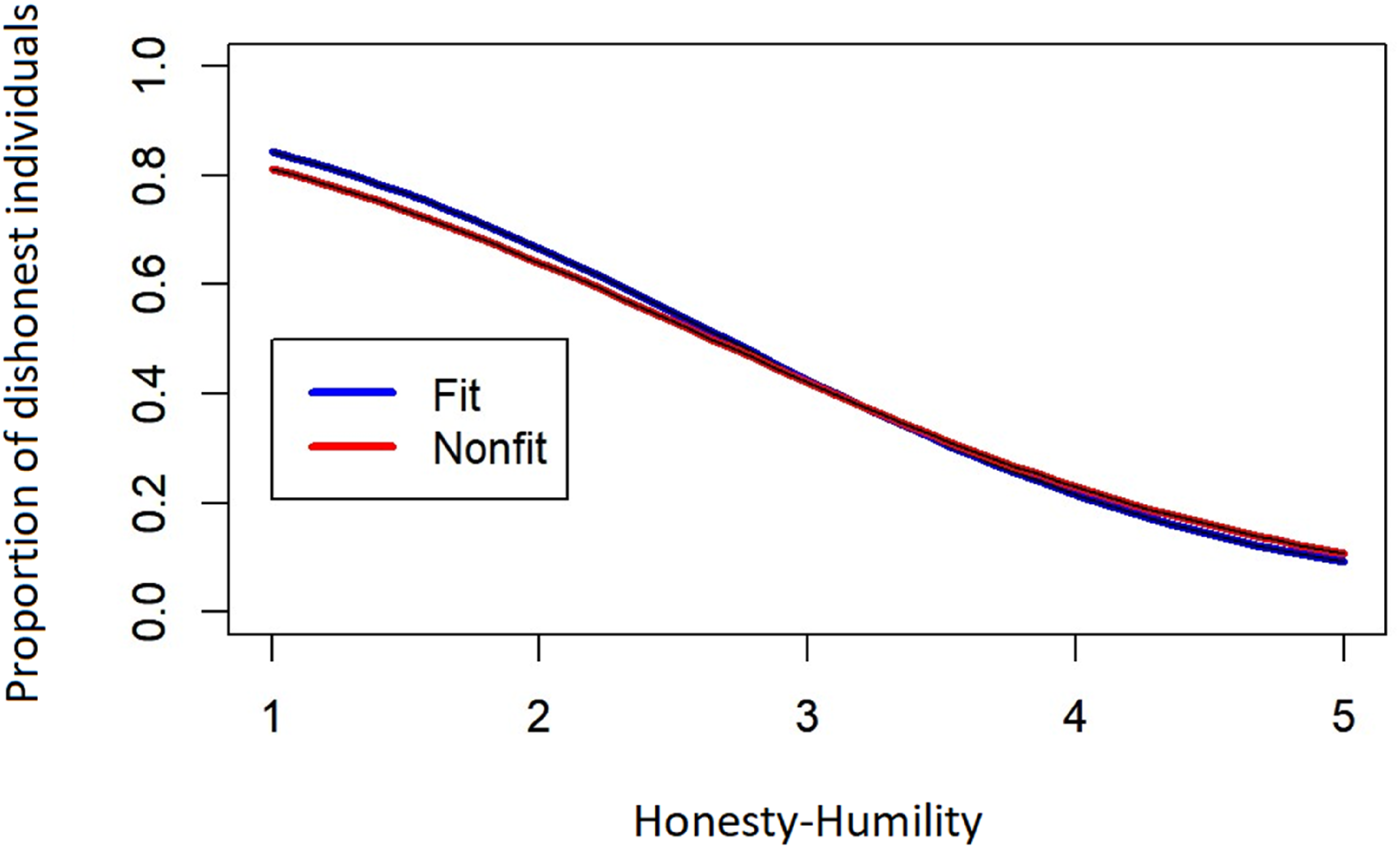

We found no significant effects of any of the predictors in the three models, apart from Honesty-Humility, which negatively predicted dishonesty in all models (see Table 1), replicating previous work (e.g., Heck et al., 2018). Importantly, the interaction between Honesty-Humility and regulatory fit did not significantly predict dishonesty in any of the models (Model 2: OR = 0.92, 95% CI [0.70, 1.23], p = .576; Model 3: OR = 0.93, 95% CI [0.71, 1.22], p = .604; see Table 1). We illustrate the interaction between Honesty-Humility and regulatory fit in Figure 1.

Figure 1. Relation between Honesty-Humility and the proportion of dishonest individuals by regulatory fit.

Finally, as an exploratory robustness check, we tested whether Honesty-Humility was associated with random assignment to the regulatory fit versus non-fit conditions, with assignment to the promotion versus prevention conditions, and with dropout between the two measurement occasions, beyond basic demographics (gender and age). Across all analyses, adding Honesty-Humility, either as a total score or at the item level, did not significantly improve model fit; that is, it explained no significant variance beyond demographics (see Supplemental Tables S7–S9).

Discussion

In Study 1, we aimed to conceptually replicate the findings by Achar and Lee (2022) in a well-powered longitudinal study. We expected that, in an incentivized cheating paradigm, individuals with moral predispositions (i.e., high Honesty-Humility) would be more honest when experiencing regulatory fit (vs. non-fit), whereas individuals with immoral predispositions (i.e., low Honesty-Humility) would be more dishonest. However, we did not find evidence for this interaction.

Importantly, however, our conceptual replication deviated from the methods used by Achar and Lee (2022) in that it used a different outcome. Furthermore, we acknowledge the limitation that cheating was not measured at the participant level; instead, we relied on RRlog to estimate the proportion of dishonest individuals in Study 1. Note, however, that this is an extremely common approach in dishonesty research (e.g., Gerlach et al., 2019) that has been successfully used to explore interactions (e.g., Thielmann et al., 2024). Additionally, following the recommendation by Moshagen and Hilbig (2017) to account for the noise introduced by paradigms that infer cheating only at the population level, we recruited a relatively large sample in this study. Specifically, a sensitivity power analysis in multiTree (Moshagen, 2010) indicates that Study 1 had sufficient power (.88) to detect an effect size of Cohen’s w = .09, which is typically considered small (Cohen, 1988; see pp. 10–11 in the Supplemental Materials).

Further, the interval between our measurement occasions (approximately 2 years) was considerably longer than in the original study, and some participants had completed a different moral behavior task (a bribery game) at the first measurement occasion. At the same time, speaking to the credibility of our study, we found the negative relationship between Honesty-Humility and cheating documented in other studies (e.g., Heck et al., 2018; Kleinlogel et al., 2018; Schild et al., 2020).

Finally, the study was conducted with a UK rather than a US sample. Although we did not expect these deviations to diminish the general effect, our replication was conceptual, preliminary, and based on a pre-existing dataset. Given these limitations of Study 1, we set out to closely replicate Study 3 of Achar and Lee (2022) to test the robustness of the original effect.

Study 2

In the following, we present a close replication of Study 3 (Achar & Lee, 2022) testing whether regulatory fit moderates the relations between Moral Disengagement and (1) tax intentions and (2) tax payments. We aimed to keep the study procedure as close as possible to the original study and used the original study materials. We tested the following hypotheses, which reflect the findings reported by Achar and Lee (2022; Study 3):

Hypothesis 2a: The experience of regulatory fit (vs. non-fit) will reduce intentions to pay tax among those with higher levels of Moral Disengagement and increase intentions to pay tax among those with lower levels of Moral Disengagement.

Hypothesis 2b: The experience of regulatory fit (vs. non-fit) will reduce the intended tax payments among participants with higher levels of Moral Disengagement.

The hypotheses, methods, and statistical analyses were preregistered (https://osf.io/q56w3/), and the study materials, analysis scripts, and data are available on the OSF (https://osf.io/q56w3/).

Methods

Participants

On the first measurement occasion, we recruited 889 participants (age: M = 45.28, SD = 14.45; 436 women, 438 men, 8 participants who selected the response option “other,” and 7 participants who selected the response option “do not wish to disclose”). On the second measurement occasion, seven days after the first, we recruited 831 participants. If participants completed the study more than once at either measurement occasion (i.e., multiple participation), only their first response was included. We excluded 18 participants because they provided inconsistent demographic information across the two measurement occasions and 9 participants because they failed the attention check in the Moral Disengagement scale. This resulted in 862 participants at the first measurement occasion (out of the initial 889) and 804 participants at the second (out of the initial 831). The final sample thus comprised 804 participants (age: M = 45.25, SD = 14.50; 396 women, 396 men, 6 participants who selected the response option “other,” and 6 participants who selected the response option “do not wish to disclose”).
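The cleaning steps described above (keeping only each participant’s first submission, then excluding participants with inconsistent demographics or a failed attention check from both measurement occasions) can be sketched as follows. The record fields, the toy data, and especially the consistency rule (same sex, reported age within one year) are hypothetical; the text does not state how consistency was judged.

```python
def first_by_id(rows):
    """Keep each participant's earliest submission (multiple participation)."""
    first = {}
    for row in sorted(rows, key=lambda r: r["submitted_at"]):
        first.setdefault(row["pid"], row)
    return first


def clean_waves(wave1, wave2, failed_attention_ids):
    """Return retained first- and second-occasion records, keyed by participant ID."""
    w1, w2 = first_by_id(wave1), first_by_id(wave2)
    # Hypothetical consistency rule: same sex, reported age within one year.
    inconsistent = {
        pid for pid in w1.keys() & w2.keys()
        if w1[pid]["sex"] != w2[pid]["sex"]
        or abs(w1[pid]["age"] - w2[pid]["age"]) > 1
    }
    excluded = inconsistent | set(failed_attention_ids)
    keep1 = {pid: r for pid, r in w1.items() if pid not in excluded}
    keep2 = {pid: r for pid, r in w2.items() if pid in keep1}
    return keep1, keep2


# Toy records (not study data).
wave1 = [
    {"pid": "a", "submitted_at": 1, "age": 30, "sex": "F"},
    {"pid": "a", "submitted_at": 2, "age": 31, "sex": "F"},  # repeat: dropped
    {"pid": "b", "submitted_at": 1, "age": 40, "sex": "M"},
    {"pid": "c", "submitted_at": 1, "age": 50, "sex": "F"},
]
wave2 = [
    {"pid": "a", "submitted_at": 9, "age": 30, "sex": "F"},
    {"pid": "b", "submitted_at": 9, "age": 25, "sex": "M"},  # inconsistent age
    {"pid": "c", "submitted_at": 9, "age": 50, "sex": "F"},
]
keep1, keep2 = clean_waves(wave1, wave2, failed_attention_ids=["c"])
print(sorted(keep1), sorted(keep2))  # ['a'] ['a']
```

Excluding the same participants from both occasions mirrors the reported counts, where 27 exclusions reduce 889 to 862 and 831 to 804.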

As in the original study, we recruited US residents over the age of 18, irrespective of their primary language, ethnicity, age, race, country of origin, or state of residence. We recruited participants using Prolific Academic (prolific.ac). To ensure good data quality, we invited participants who had an approval rate of at least 95% and had completed at least 100 prior studies.

Following Simonsohn’s (2015) recommendation, we aimed to recruit a sample 2.5 times the size of the original study’s. Specifically, because the sample used for the interaction between regulatory fit and Moral Disengagement in the original study comprised 294 participants (Achar & Lee, 2022, Study 3), we aimed to recruit 735 (i.e., 294 × 2.5) participants. Because dropout on Prolific typically does not exceed 10% (e.g., Ścigała et al., 2020), we conservatively oversampled by 20% and aimed to recruit 882 participants at the first measurement occasion. We stopped data collection at the first measurement occasion once 882 participants had been recruited on Prolific Academic (although a few additional submissions arrived after we stopped, resulting in 889 submissions in total). Data collection at the second measurement occasion was stopped seven days after it was launched.
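The recruitment arithmetic has two steps: the small-telescopes target (294 × 2.5 = 735) and a 20% buffer for attrition (735 × 1.2 = 882). A minimal sketch with a hypothetical helper; rounding up is our assumption (both products are whole numbers here), and exact fractions avoid floating-point surprises.

```python
import math
from fractions import Fraction


def recruitment_targets(original_n: int, factor=Fraction(5, 2),
                        oversample=Fraction(1, 5)):
    """Replication target (original n x factor) and the first-occasion quota
    after oversampling for attrition. Fractions avoid float rounding."""
    target = math.ceil(original_n * factor)
    first_occasion = math.ceil(target * (1 + oversample))
    return target, first_occasion


print(recruitment_targets(294))  # (735, 882)
```

The final sample of 804 participants exceeds the 735 target.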

Procedure

At the beginning of the first measurement occasion, participants were asked to complete a captcha to verify that they were human. They were then presented with the participant information form and the consent form and asked to agree to participate in the study. At this stage, we informed participants that they would be taking part in a study with two measurement occasions and that they should not participate in the first part if they were not able to participate in the second. Participants were then asked about their age, sex, and Prolific ID. Afterward, we informed participants that they were not allowed to use responses generated by artificial intelligence (AI) in the following survey,² and we directed them to the regulatory fit manipulation.

For the regulatory fit manipulation, as in Study 1, participants were randomly assigned to one of four conditions: (1) promotion-eagerness (regulatory fit), (2) prevention-vigilance (regulatory fit), (3) promotion-vigilance (regulatory non-fit), or (4) prevention-eagerness (regulatory non-fit). The manipulation was identical to that in Study 1. Next, participants took part in the tax game, in which they were presented with a scenario in which they learned that they did not have enough money to purchase a car they had planned to buy because of an unexpected tax expense. After reading the instructions, participants were asked to imagine that they were still thinking about the new car and were asked about their intentions to pay tax, as well as the amount of tax they would pay. Finally, participants were thanked for their participation and reminded about the second part of the study.

On the second measurement occasion, one week after the first, participants were first presented with the participant information form and the consent form and were asked about their sex, age, and Prolific ID. Afterward, participants completed the Moral Disengagement about Cheating Scale (Shu et al., 2011), followed by the Honesty-Humility scale from the HEXACO-60 (Ashton & Lee, 2009), which is described in Study 1. Finally, participants were debriefed and thanked for their participation.

Measures

Moral Disengagement

To measure Moral Disengagement, we used the Moral Disengagement about Cheating Scale (Shu et al., 2011). The scale includes six items, for instance, “Sometimes getting ahead of the curve is more important than adhering to rules,” “Rules should be flexible enough to be adapted to different situations” and “Cheating is appropriate behavior because no one gets hurt.” Participants rated their (dis)agreement with the items on a 7-point Likert scale ranging from “strongly disagree” (1) to “strongly agree” (7). The items were presented in a random order, and the scale included an attention check: “Select ‘strongly disagree’ here”. The reliability of the scale was good (Cronbach’s α = .80) and the items were averaged.

Tax game

We used the tax game in order to measure participants’ intentions to pay taxes. The tax game was hypothetical and it included the following scenario (identical to the original study): “This is a hypothetical scenario. Please imagine that you had been wanting to buy a new car. Recently you had the opportunity to make some extra money and you ended up earning an additional $16,000 that could go toward the car. But when you get your paycheck, you were reminded that the extra money you earned is subject to a 20% income tax. You realized that after deducting the income tax, you would be $3000 short of the amount you need to buy the car. While you were still trying to overcome the shock of the realization, you came across an article that mentioned a 2001 tax gap study conducted by the IRS. In the study, it was estimated that individuals underreport their overall income by 43%; and sole proprietors, who report self-employment income on schedule C of their tax returns, were estimated to underreport their income by 57%. According to the article, a penalty of 75% of any underpayment will be imposed on taxpayers convicted of underreporting their income due to fraud. But due to resource constraint, the IRS could only conduct random audits to verify tax filings and identify underreporting.”

To measure participants’ tax intentions, we asked them how likely they would be to engage in each of the following behaviors, rated on a scale from 1 (“definitely not”) to 10 (“definitely yes”): (i) pay the income tax, even though it means they cannot buy the car they want; (ii) underreport their income so that they can buy the car they want (reverse-coded); and (iii) not pay the income tax at all (reverse-coded). The three items exhibited good reliability (Cronbach’s α = .81) and were averaged. In addition, to measure tax payment, participants were asked to indicate the amount of income tax they would pay on their extra income on a slider ranging from $0 to $3,200.
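The scenario’s numbers fit together: 20% income tax on $16,000 of extra income is $3,200, the upper end of the payment slider. The composite tax-intention score described above can be sketched as follows; the function names are hypothetical, and the range check is our addition.

```python
EXTRA_INCOME = 16_000                 # dollars, from the scenario
TAX_DUE = EXTRA_INCOME * 20 // 100    # 20% income tax -> 3200, the slider maximum


def reverse(v: int, lo: int = 1, hi: int = 10) -> int:
    """Reverse-code a rating on the 1-10 scale (1 <-> 10, 2 <-> 9, ...)."""
    return lo + hi - v


def tax_intention(pay: int, underreport: int, not_pay: int) -> float:
    """Composite tax intention: items (ii) and (iii) are reverse-coded,
    then the three items are averaged (higher = stronger intention to pay)."""
    for v in (pay, underreport, not_pay):
        if not 1 <= v <= 10:
            raise ValueError("ratings must lie between 1 and 10")
    return (pay + reverse(underreport) + reverse(not_pay)) / 3


print(TAX_DUE, tax_intention(10, 1, 1), round(tax_intention(7, 4, 2), 2))  # 3200 10.0 7.67
```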

Differences from the original study

We aimed for the study procedure to be as close to the original procedure as possible. However, we deviated from the original procedure in several ways. First, we recruited participants via Prolific Academic, whereas the original study used CloudResearch; we made this change to avoid recruiting participants who had already taken part in the original study. Second, to minimize dropout from the first to the second measurement occasion (39% in the original study) and any resulting selection effects, we separated the two measurement occasions by only one week (as opposed to eight weeks in the original study). Third, to avoid deception in the regulatory fit manipulation, we did not inform participants that “We are in the process of developing some advertising campaigns and would like to learn about how today’s general public thinks about different issues,” as was done in the original study. Instead, we informed participants that “We would like to learn about how today’s general public thinks about different issues.”

Fourth, in the original study, participants at the first measurement occasion were informed that they were participating in a “series of studies.” To avoid deception, in the replication study we informed participants that they were participating in a study with two parts. Fifth, the original study did not include a captcha. However, we included one for consistency, given that both the original Study 7 from Achar and Lee (2022) and our replication of it (i.e., Study 3 below) include a captcha. Sixth, the replication study included a measure of Honesty-Humility, whereas the original study did not. However, the Honesty-Humility measure did not affect the results of the replication itself because it was administered after all other measures. Finally, unlike the replication study, the original study did not inform participants that they were not allowed to use AI-generated responses.

Analytical approach

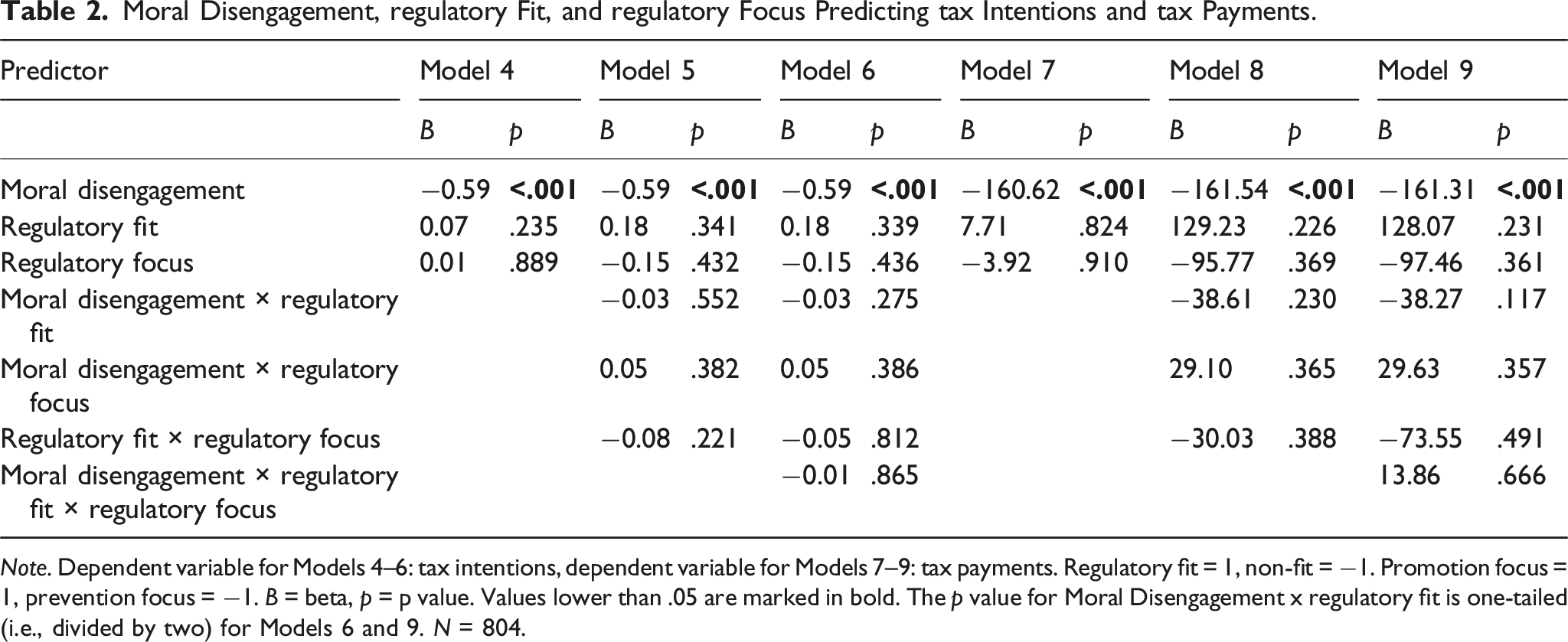

Table 2. Moral Disengagement, Regulatory Fit, and Regulatory Focus Predicting Tax Intentions and Tax Payments.

Note. Dependent variable for Models 4–6: tax intentions; dependent variable for Models 7–9: tax payments. Regulatory fit = 1, non-fit = −1. Promotion focus = 1, prevention focus = −1. B = beta, p = p value. p values lower than .05 are marked in bold. The p value for Moral Disengagement × regulatory fit is one-tailed (i.e., divided by two) for Models 6 and 9. N = 804.

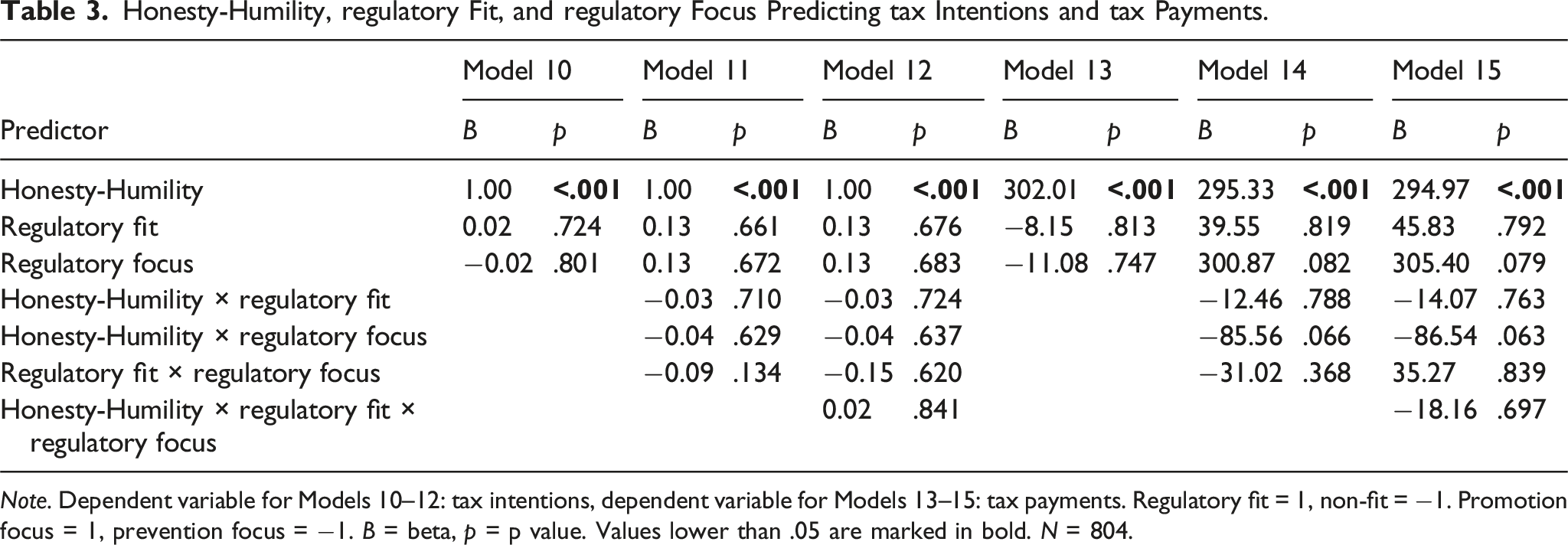

Table 3. Honesty-Humility, Regulatory Fit, and Regulatory Focus Predicting Tax Intentions and Tax Payments.

Note. Dependent variable for Models 10–12: tax intentions; dependent variable for Models 13–15: tax payments. Regulatory fit = 1, non-fit = −1. Promotion focus = 1, prevention focus = −1. B = beta, p = p value. p values lower than .05 are marked in bold. N = 804.

Because the hypotheses are directional, we tested the interactions between Moral Disengagement and regulatory fit in Models 6 and 9 at a one-tailed significance level of .05 (as preregistered), instead of the two-tailed significance level of .10 used in the original study. For an effect in the predicted direction, the two criteria use the same rejection threshold; they differ only in that the one-tailed test cannot reject the null hypothesis for an effect in the opposite direction.
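The equivalence of the two criteria, and the “divided by two” rule in the table notes, can be illustrated with a short Python sketch. It assumes a symmetric test statistic (z or t), for which halving the two-tailed p value gives the one-tailed p value only when the estimate lies in the predicted direction. The function name is hypothetical, and the example reuses the Model 5 estimate and p value purely as numerical input.

```python
from statistics import NormalDist


def one_tailed_p(p_two_tailed: float, estimate: float, predicted_sign: int) -> float:
    """Convert a two-tailed p value from a symmetric test into a one-tailed p value."""
    if estimate * predicted_sign > 0:  # estimate lies in the predicted direction
        return p_two_tailed / 2
    return 1 - p_two_tailed / 2        # estimate lies in the opposite direction


# Same critical z for a one-tailed .05 test and a two-tailed .10 test.
z_one_tailed_05 = NormalDist().inv_cdf(1 - 0.05)
z_two_tailed_10 = NormalDist().inv_cdf(1 - 0.10 / 2)

# A negative interaction is the predicted direction when fit = +1 and non-fit = -1.
print(one_tailed_p(0.552, estimate=-0.03, predicted_sign=-1), round(z_one_tailed_05, 3))
```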

Results

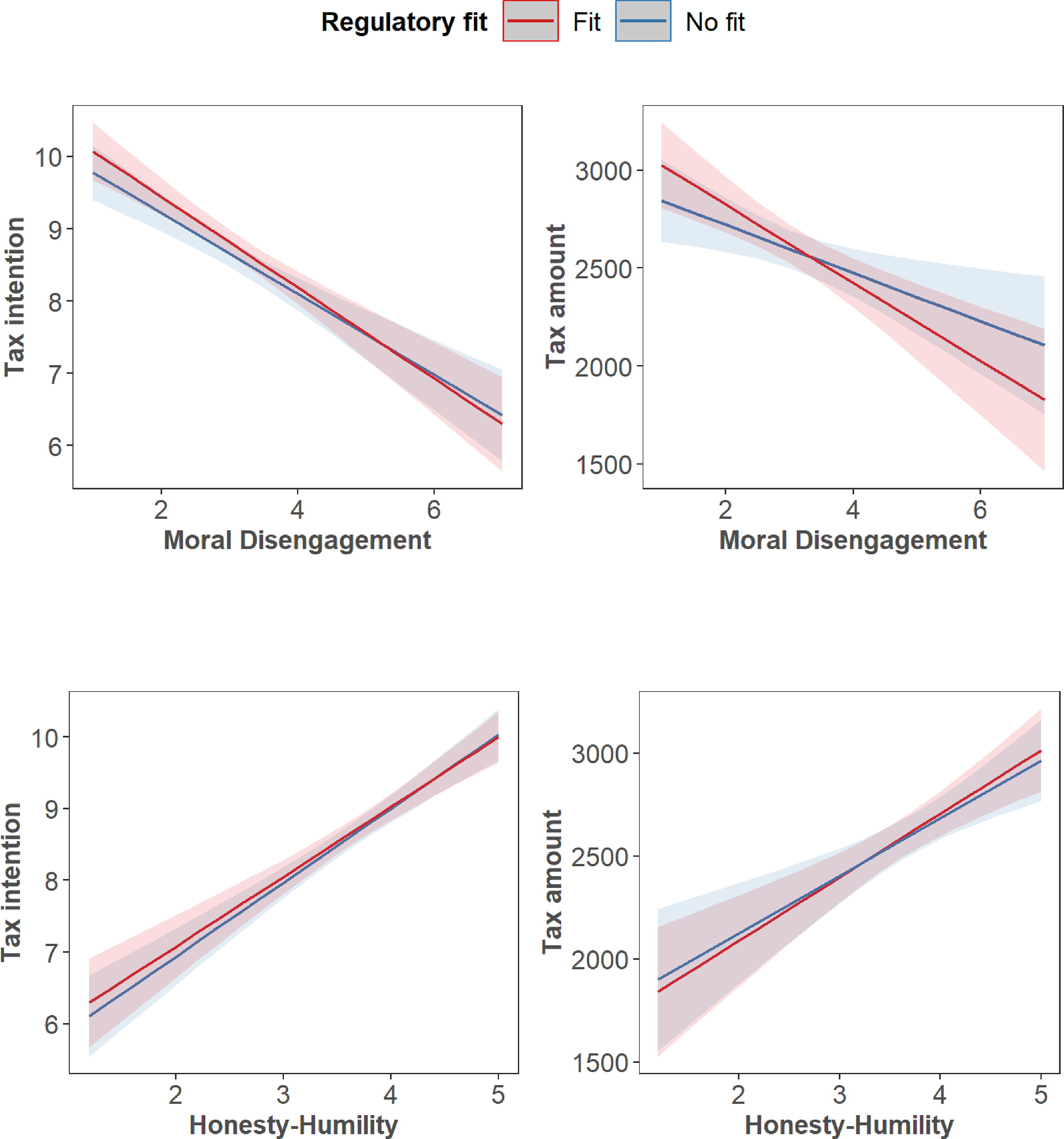

We observed no significant effects of the predictors in Models 4–15, except for the negative relations between Moral Disengagement and both tax intentions and payments in Models 4–9 (see Table 2), as well as the positive relations between Honesty-Humility and both tax intentions and payments in Models 10–15 (see Table 3). Notably, we found no significant interactions between Moral Disengagement and regulatory fit in predicting either tax intentions (Model 5: B = −0.03, 95% CI [−0.15; 0.08], p = .552, f² = 0.00 and Model 6: B = −0.03, 95% CI [−0.15; 0.08], p = .275, f² = 0.00) or tax payments (Model 8: B = −38.61, 95% CI [−101.63; 24.41], p = .230, f² = 0.00 and Model 9: B = −0.03, 95% CI [−101.35; 24.80], p = .117, f² = 0.00; see Table 2, as well as Figures 2(a) and (b)).

Figure 2. Relations between Moral Disengagement, as well as Honesty-Humility, and tax intentions/amounts by regulatory fit.

Consistent with these findings, we did not observe significant interactions between Honesty-Humility and regulatory fit in predicting either tax intentions (Model 11: B = −0.03, 95% CI [−0.19; 0.13], p = .710, f² = 0.00 and Model 12: B = −0.03, 95% CI [−0.19; 0.13], p = .724, f² = 0.00) or tax payments (Model 14: B = −12.46, 95% CI [−103.45; 78.53], p = .788, f² = 0.00 and Model 15: B = −14.07, 95% CI [−105.47; 77.33], p = .763, f² = 0.00; see Table 3, as well as Figures 2(c) and (d)).
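We assume the f² values above are Cohen’s local effect size for the interaction term, which compares a model with the interaction against the same model without it: f² = (R²_full − R²_reduced) / (1 − R²_full). The exact computation behind the reported values is not described here, so the Python sketch below only illustrates the standard formula with hypothetical R² values. It shows how an interaction that adds almost no explained variance yields f² = 0.00 after rounding (by Cohen’s conventions, .02 marks a small effect).

```python
def cohens_f2(r2_full: float, r2_reduced: float) -> float:
    """Local effect size of the term(s) that distinguish the full from the reduced model."""
    if not 0 <= r2_reduced <= r2_full < 1:
        raise ValueError("expected 0 <= R2_reduced <= R2_full < 1")
    return (r2_full - r2_reduced) / (1 - r2_full)


# Hypothetical R-squared values (illustration only, not taken from the tables).
f2_tiny = cohens_f2(r2_full=0.1003, r2_reduced=0.1000)
print(round(f2_tiny, 2))
```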

As an exploratory robustness check, we systematically assessed whether Honesty-Humility and Moral Disengagement were related to condition assignment. Specifically, we tested whether adding each trait to models including basic demographics (gender and age) improved prediction of random assignment to the regulatory fit versus non-fit conditions and the promotion versus prevention conditions. Because both traits were measured at the second measurement occasion, they could not be used to predict dropout. Honesty-Humility showed a statistically significant improvement in predicting assignment to the regulatory fit condition (R² = .00, log lik. = −580.94, χ² = 4.36, p = .037). Across all remaining analyses, adding Honesty-Humility or Moral Disengagement (either as a total score or at the item level) did not significantly improve model fit, as they did not account for additional explained variance beyond demographics (see Supplemental Tables S10–S13).
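The χ² statistic in this check appears to come from a likelihood-ratio test between nested models (demographics only vs. demographics plus a trait), where χ² = 2 × (log-likelihood of the larger model − log-likelihood of the smaller model). Assuming that the total trait score adds a single parameter (df = 1), the reported χ² = 4.36 corresponds to p ≈ .037. The pure-Python sketch below computes the chi-square upper tail for integer degrees of freedom. The function names are hypothetical, and in practice a statistics library would normally be used.

```python
import math


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability P(X > x) for a chi-square variable with integer df >= 1."""
    if df < 1:
        raise ValueError("df must be a positive integer")
    if x <= 0:
        return 1.0
    h = x / 2
    if df % 2 == 0:
        # Even df: closed-form Poisson-type sum.
        return math.exp(-h) * sum(h**i / math.factorial(i) for i in range(df // 2))
    # Odd df: the df = 1 tail plus a finite series.
    series = sum(h ** (i - 0.5) / math.gamma(i + 0.5) for i in range(1, (df - 1) // 2 + 1))
    return math.erfc(math.sqrt(h)) + math.exp(-h) * series


def likelihood_ratio_test(loglik_reduced: float, loglik_full: float, df_added: int) -> tuple[float, float]:
    """LR test for nested models, e.g. demographics only vs. demographics plus one trait."""
    chi2 = 2 * (loglik_full - loglik_reduced)
    return chi2, chi2_sf(chi2, df_added)


# Reported chi-square, under the df = 1 assumption stated above.
p_reported = chi2_sf(4.36, df=1)
print(round(p_reported, 3))
```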

Discussion

In Study 2, our objective was to closely replicate Study 3 by Achar and Lee (2022) while employing a longitudinal design and a large sample size. We hypothesized that individuals with lower levels of Moral Disengagement would demonstrate stronger intentions to pay taxes and higher tax payments, while those with higher levels of Moral Disengagement would exhibit lower tax payments, following experiences of regulatory fit compared to non-fit. However, contrary to the expectations drawn from Achar and Lee’s work, our findings did not support these hypotheses.

Given that Achar and Lee had found consistent effects in their manuscript, we set out to run yet another well-powered replication. Here, we focused on the only study which measured actual behaviors in the moral domain (Achar & Lee, 2022, Study 7). In detail, we tested whether the experience of regulatory fit influences dishonesty in a sender-receiver game, a commonly used cheating paradigm (e.g., Gneezy, 2005; Sheremeta & Shields, 2013; Ścigała, Schild, Moshagen, et al., 2021).

Study 3

In the following, we present a close replication of Study 7 (Achar & Lee, 2022), examining whether regulatory fit moderates the relationship between Moral Disengagement and cheating. Our aim was to maintain as close a resemblance to the original study procedure as possible. We test the following hypothesis, which reflects the findings reported by Achar and Lee (2022, Study 7): The experience of regulatory fit (vs. non-fit) will increase dishonest behavior among participants with higher levels of Moral Disengagement and increase honest behavior among those with lower levels of Moral Disengagement (Hypothesis 3).

The hypothesis, methods, and statistical analyses were preregistered (https://osf.io/q56w3/registrations), and the study materials, analysis scripts, and data are available on the OSF (https://osf.io/q56w3/).

Methods

Participants

We recruited 1716 participants (M age = 40.97, SD age = 18.30, 857 men, 832 women, and 18 participants who selected the response option “other,” and 9 participants who did not disclose their sex) on the first measurement occasion, and 1276 returning participants on the second measurement occasion (one week after the first). If participants completed the study more than one time (i.e., multiple participation) at any of the measurement occasions, only their first response was included. On the first measurement occasion, 25 participants failed an attention check, and on the second measurement occasion 23 participants provided inconsistent demographic information. This resulted in 1668 participants on the first measurement occasion (out of the initial 1716), and 1253 on the second measurement occasion (out of the initial 1276 participants). Thus, the final sample size amounted to N = 1253 participants (M age = 41.50, SD age = 13.95, 596 men, 643 women and 11 participants who selected the response option “other,” and 3 participants who did not disclose their sex).

Our goal was to recruit 2.5 times as many participants as the original study, as recommended by Simonsohn (2015). The original study tested the interaction between regulatory fit and Moral Disengagement in a sample of 570 participants (Achar & Lee, 2022, Study 7). Our target was therefore 1425 participants (570 × 2.5) at the second measurement occasion. As in Study 2, we oversampled by 20% at the first measurement occasion to account for dropout. Additionally, we kept the second measurement occasion open for a week to allow participants ample time to complete the study. However, dropout from the first to the second measurement occasion turned out to be slightly higher than anticipated. As a result, our final sample comprised 1253 participants instead of the planned 1425. Nevertheless, this sample is still substantially larger (about 2.2 times) than that of the original study.
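The planning arithmetic in this paragraph can be retraced in a few lines of Python. The constant names are illustrative. The 2.5 multiplier follows the recommendation cited above, and we assume here that the 20% oversampling is applied to the second-occasion target. Integer arithmetic is used to avoid floating-point rounding in the oversampling step.

```python
import math

ORIGINAL_N = 570            # Achar & Lee (2022), Study 7
MULTIPLIER = 2.5            # 2.5 x the original N (Simonsohn, 2015)
OVERSAMPLE_PERCENT = 20     # extra recruitment at the first occasion to offset dropout
FINAL_N = 1253              # final sample at the second occasion

target_second_occasion = math.ceil(ORIGINAL_N * MULTIPLIER)
target_first_occasion = math.ceil(target_second_occasion * (100 + OVERSAMPLE_PERCENT) / 100)
achieved_ratio = FINAL_N / ORIGINAL_N

print(target_second_occasion, target_first_occasion, round(achieved_ratio, 1))
```

Under these assumptions, the first-occasion target comes out at 1710, close to the 1716 participants actually recruited at the first measurement occasion.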

As in the original study, participants were US residents over the age of 18 and were recruited irrespective of their age, race, primary language, ethnicity, country of origin, or state of residence. We recruited participants using Prolific Academic (prolific.ac); eligible participants had completed at least 100 prior studies and had a 95% approval rate.

Procedure

At the beginning of the first measurement occasion, participants completed a captcha verification to confirm that they were human. Subsequently, they were presented with the participant information and consent forms and were instructed to agree to participate only if they could commit to both parts of the study. We then collected demographic information, including age, gender, and Prolific ID. Participants then completed the Moral Disengagement about Cheating Scale (Shu et al., 2011; for details, see the description in Study 2 above). Finally, participants were thanked for their participation and reminded of the forthcoming second part of the study.

On the second measurement occasion, occurring one week later, participants were again presented with the participant information and consent forms. Following this, participants were directed to the regulatory fit manipulation, which closely resembled the one presented in the two previous studies. The only difference was the addition of “Thank you for participating in our study” at the beginning of the regulatory focus manipulation, aligning with the original instructions from Study 7 (Achar & Lee, 2022). Afterward, participants engaged in the sender-receiver game where they had a chance to send a dishonest message to another participant to obtain a monetary gain. Finally, participants were asked whether they believed their interaction partner would follow their advice in the sender-receiver game, and were also asked about their sex, age, and Prolific ID. After this, they were debriefed and thanked for their participation.

Measures

Sender-receiver game

In the sender-receiver game, participants, designated as “Partner 1,” were informed that they had been randomly paired with another participant, labeled “Partner 2,” whose identity would remain anonymous throughout. They were further informed that there were two bonus payment options for the two partners, that only Partner 1 knew the amounts associated with each option, and that Partner 2 would ultimately decide which option to choose.

Participants were instructed that they could send one of two messages to Partner 2, which would serve as the sole information for Partner 2’s choice regarding the payment option. Option A would grant Partner 1 $0.25 and Partner 2 $0.20, while Option B would provide Partner 1 $0.20 and Partner 2 $0.25. Partner 1 had the choice to send one of the following messages to Partner 2: “Option A will earn Partner 2 more money than Option B” (the dishonest message; Message 1) or “Option B will earn Partner 2 more money than Option A” (the honest message; Message 2). The order in which these messages were presented to participants was randomized.
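The incentive structure and the honesty coding used as the dependent variable (1 = honest, 0 = dishonest) can be made explicit with a small Python sketch. Payoffs are stored in cents to avoid floating-point issues. The class, dictionary, and function names are hypothetical. The sketch encodes only what the instructions state: Message 1 claims that Option A pays Partner 2 more, which is false.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    partner1_cents: int  # bonus for the sender (Partner 1)
    partner2_cents: int  # bonus for the receiver (Partner 2)


OPTIONS = {"A": Option(25, 20), "B": Option(20, 25)}

# Each message claims which option earns Partner 2 more money.
MESSAGE_CLAIMS = {1: "A", 2: "B"}


def honesty(message: int) -> int:
    """1 = honest, 0 = dishonest, following the coding of the dependent variable."""
    truly_better_for_partner2 = max(OPTIONS, key=lambda k: OPTIONS[k].partner2_cents)
    return int(MESSAGE_CLAIMS[message] == truly_better_for_partner2)


def sender_payoff_if_followed(message: int) -> int:
    """Sender's bonus (cents) if Partner 2 picks the option the message recommends."""
    return OPTIONS[MESSAGE_CLAIMS[message]].partner1_cents


print(honesty(1), honesty(2), sender_payoff_if_followed(1) - sender_payoff_if_followed(2))
```

The last value is the 5-cent gain a sender obtains from the dishonest message if the receiver follows the advice.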

Differences from the original study

The differences from the original study are identical to those listed for Study 2. In addition, in the original study (Achar & Lee, 2022, Study 7), senders in the sender-receiver game were not actually matched with receivers. To avoid deception, we matched the senders with a smaller group of receivers. Specifically, we asked a small number of participants (N = 50) to take part in the sender-receiver game as receivers. Senders were divided into small groups of senders who had made the same decision (i.e., small groups of senders who had all sent Message 1 and small groups of senders who had all sent Message 2). Each group was paired with one receiver, who was informed about the message sent by the group. The receiver’s decision determined the payment of the group of senders they were matched with. We recruited fewer receivers than senders so that we could avoid deception while reducing the costs of the study.
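The grouped matching can be sketched as follows. This is a hypothetical Python illustration, not the authors’ implementation. The procedure above does not state group sizes or how senders were allocated to groups, so the fixed `group_size` parameter, the sorting, and all names are assumptions. The sketch shows only the logic: senders who sent the same message share a receiver, and that receiver’s choice sets every group member’s bonus.

```python
from collections import defaultdict

PARTNER1_CENTS = {"A": 25, "B": 20}  # sender payoffs for Options A and B


def form_groups(sender_messages: dict[str, int], group_size: int) -> list[tuple[int, list[str]]]:
    """Split senders into groups of at most `group_size` who all sent the same message."""
    by_message: dict[int, list[str]] = defaultdict(list)
    for sender_id, message in sorted(sender_messages.items()):
        by_message[message].append(sender_id)
    groups = []
    for message, senders in sorted(by_message.items()):
        for start in range(0, len(senders), group_size):
            groups.append((message, senders[start:start + group_size]))
    return groups


def sender_bonuses(groups: list[tuple[int, list[str]]], receiver_choices: list[str]) -> dict[str, int]:
    """One receiver per group; the receiver's choice sets the bonus of every sender in that group."""
    if len(groups) != len(receiver_choices):
        raise ValueError("each group needs exactly one receiver")
    bonuses = {}
    for (_message, members), choice in zip(groups, receiver_choices):
        for sender_id in members:
            bonuses[sender_id] = PARTNER1_CENTS[choice]
    return bonuses


# Toy example: three senders, two groups, two receivers.
groups = form_groups({"s1": 1, "s2": 1, "s3": 2}, group_size=2)
bonuses = sender_bonuses(groups, ["A", "B"])
print(groups, bonuses)
```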

Importantly, our instructions in the sender-receiver game were identical to Achar and Lee’s instructions. The key difference is that in our study, participants were actually compensated based on the receiver’s choice after the study ended. In contrast, although Achar and Lee’s instructions indicated that this would be the case, their actual payment procedure did not follow through as stated. This difference in payment procedures could not have affected participants’ decisions, as participants had the same information when making their choices in both studies (for details, see Supplemental Materials, pp. 4–6).

In addition, the original study included several items that were not used in the original analyses, and hence we did not include them in the replication: “Have you participated in similar tasks before? If yes, also describe the task briefly.” “What language do you speak at home?” “What is your state of residence?” “What is the international airport closest to where you live?”

Analytical approach

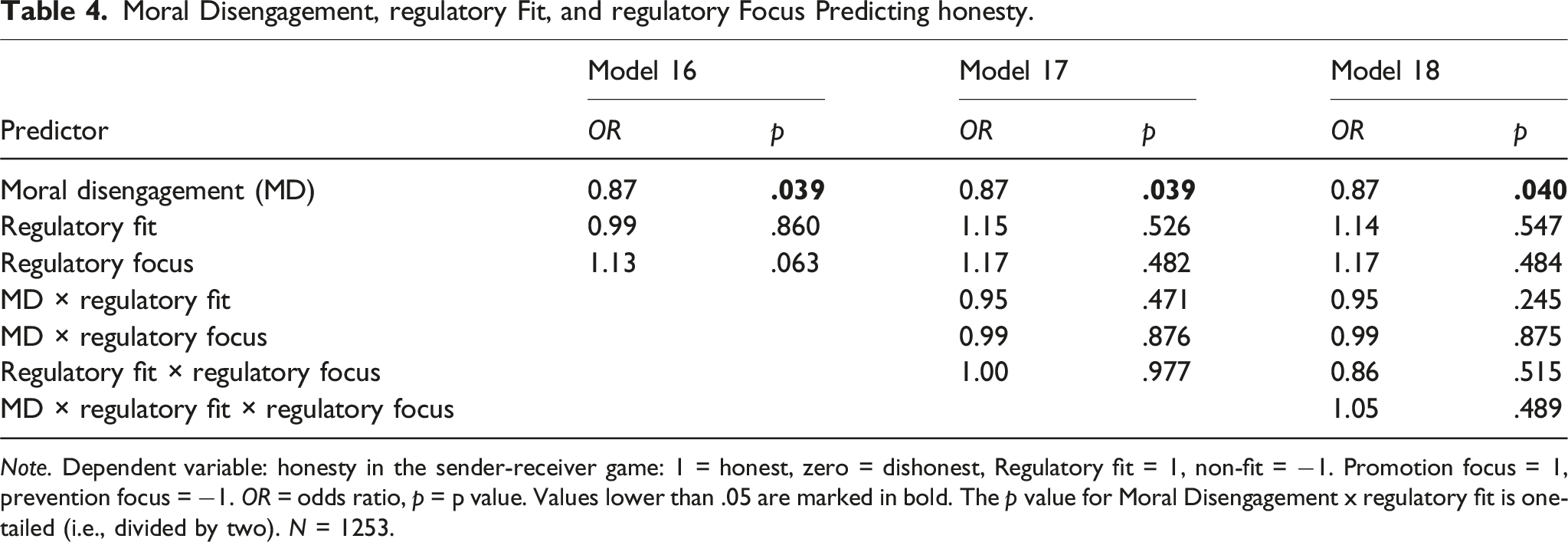

Table 4. Moral Disengagement, Regulatory Fit, and Regulatory Focus Predicting Honesty.

Note. Dependent variable: honesty in the sender-receiver game (1 = honest, 0 = dishonest). Regulatory fit = 1, non-fit = −1. Promotion focus = 1, prevention focus = −1. OR = odds ratio, p = p value. p values lower than .05 are marked in bold. The p value for Moral Disengagement × regulatory fit is one-tailed (i.e., divided by two). N = 1253.

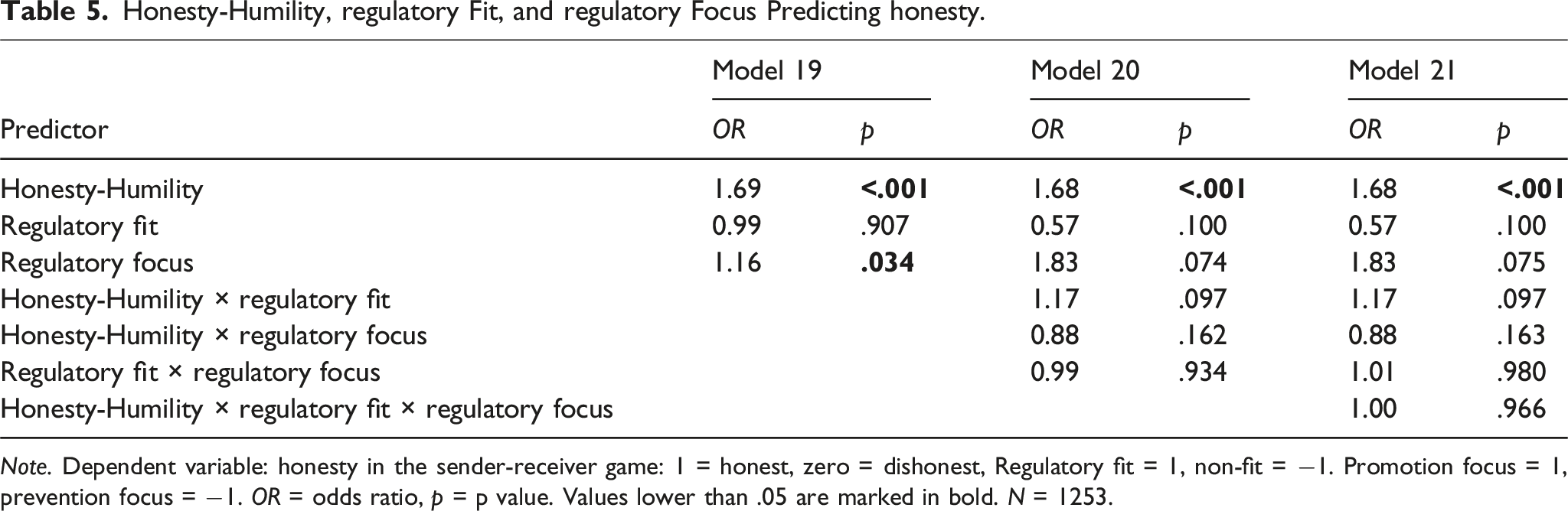

Table 5. Honesty-Humility, Regulatory Fit, and Regulatory Focus Predicting Honesty.

Note. Dependent variable: honesty in the sender-receiver game (1 = honest, 0 = dishonest). Regulatory fit = 1, non-fit = −1. Promotion focus = 1, prevention focus = −1. OR = odds ratio, p = p value. p values lower than .05 are marked in bold. N = 1253.

Because our hypothesis is directional, we used one-tailed p values when testing the interaction between Moral Disengagement and regulatory fit in Model 18 (as preregistered). Note that, for an effect in the predicted direction, a one-tailed significance level of .05 uses the same rejection threshold as the two-tailed significance level of .10 used by Achar and Lee; the two differ only in their directional focus.

Results

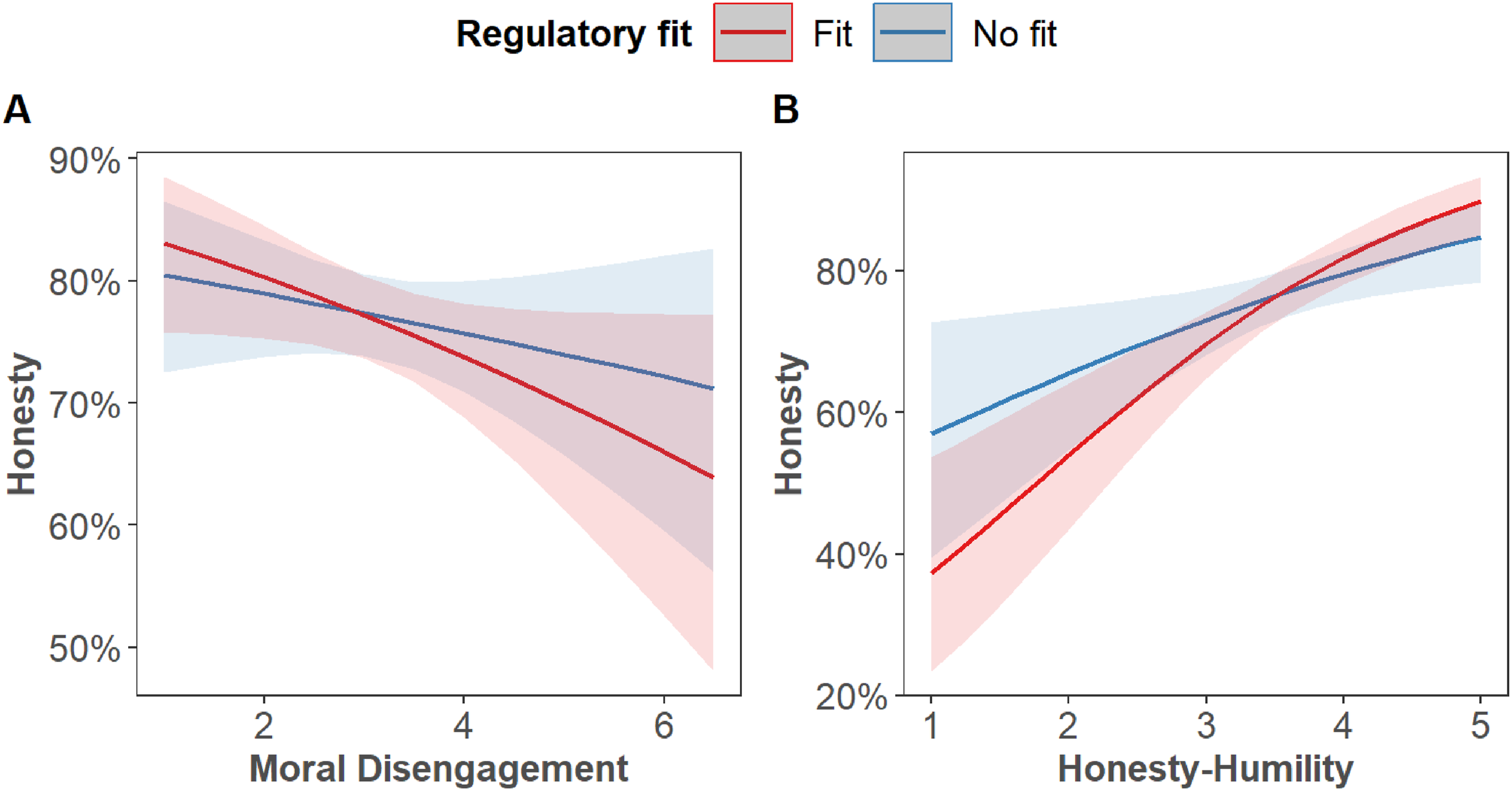

We did not observe significant effects of any of the predictors, except for the negative relations between Moral Disengagement and honesty in Models 16–18 (see Table 4), the positive relations between Honesty-Humility and honesty in Models 19–21, and a significant effect of regulatory focus in Model 19 (see Table 5).

Notably, we found no significant interactions between Moral Disengagement and regulatory fit in predicting honesty (Model 17: OR = 0.95, 95% CI [0.83; 1.09], p = .471 and Model 18: OR = 0.95, 95% CI [0.84; 1.09], p = .245; see Table 4, as well as Figure 3(a)). Similarly, we conducted exploratory tests to determine whether there were any significant interactions between Honesty-Humility and regulatory fit in predicting honesty. However, we found no significant interactions in this regard either (Model 20: OR = 1.17, 95% CI [0.97; 1.41], p = .097 and Model 21: OR = 1.17, 95% CI [0.97; 1.41], p = .097; see Table 5, as well as Figure 3(b)).

Figure 3. Relations between Moral Disengagement (Panel A), as well as Honesty-Humility (Panel B), and honesty by regulatory fit.
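With regulatory fit coded +1 and non-fit −1, the interaction odds ratio has a concrete reading. Per unit of the trait, the odds ratio under fit divided by the odds ratio under non-fit equals the interaction OR squared. For example, an interaction OR of 0.95 implies a ratio of about 0.90. The Python sketch below assumes a logistic model containing the trait, the condition code, and their product, with any other covariates not interacting with the trait. The trait OR of 0.80 is a hypothetical placeholder, and the function name is illustrative.

```python
import math


def condition_odds_ratios(or_trait: float, or_interaction: float) -> dict[str, float]:
    """Odds ratio per unit of the trait within each condition, under +1/-1 coding of fit."""
    b_trait = math.log(or_trait)              # trait slope averaged over conditions (log-odds)
    b_interaction = math.log(or_interaction)  # half the slope difference between conditions
    return {
        "fit": math.exp(b_trait + b_interaction),      # regulatory fit coded +1
        "non-fit": math.exp(b_trait - b_interaction),  # non-fit coded -1
    }


# or_trait is hypothetical; 0.95 is the interaction OR reported for Model 17.
slopes = condition_odds_ratios(or_trait=0.80, or_interaction=0.95)
ratio = slopes["fit"] / slopes["non-fit"]  # equals 0.95 ** 2
print({k: round(v, 3) for k, v in slopes.items()}, round(ratio, 4))
```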

As an exploratory robustness check, we assessed whether Honesty-Humility and Moral Disengagement were related to condition assignment or attrition. Specifically, we tested whether adding each trait to models including basic demographics (gender and age) improved prediction of (1) random assignment to the regulatory fit versus non-fit conditions, (2) the promotion versus prevention conditions, and (3) dropout between the two measurement occasions. Because Honesty-Humility was measured at the second measurement occasion, it could not be used to predict dropout. Across all analyses, adding Honesty-Humility or Moral Disengagement—either as overall scores or at the item level—did not significantly improve model fit, as they did not account for additional explained variance beyond demographics (see Supplemental Tables S14–S18).

Discussion

In Study 3, our aim was to closely replicate Study 7 conducted by Achar and Lee (2022). We hypothesized that, following experiences of regulatory fit compared to non-fit, individuals with lower levels of Moral Disengagement would display greater honesty, whereas those with higher levels of Moral Disengagement would display more dishonest behavior. However, contrary to the expectations derived from Achar and Lee’s research, our findings did not support this hypothesis.

General discussion

In a series of seven studies with a total of 3559 participants, Achar and Lee (2022) consistently found that regulatory fit enhances moral predispositions. Specifically, when experiencing regulatory fit (compared to non-fit), participants with higher moral predispositions behaved more ethically, whereas those with lower moral predispositions behaved less ethically. This finding has significant theoretical implications, as it is the first to suggest that the experience of regulatory fit may intensify moral predispositions. This insight both builds upon and extends Regulatory Fit Theory (e.g., Higgins, 2006), as well as person-centered perspectives on moral behavior (e.g., Thielmann et al., 2020).

Moreover, the practical implications of Achar and Lee’s (2022) results are noteworthy. They suggest that the manipulation of regulatory fit could potentially be used to encourage moral behavior in certain contexts where such conduct is crucial, such as tax reporting (Achar & Lee, 2022, Study 3). Given the theoretical and practical significance of these findings, we embarked on a series of three replication studies (including one conceptual and two close replications) aiming to replicate the finding that regulatory fit amplifies moral predispositions.

Based on the results of the two close replication studies (Studies 2 and 3) and one conceptual replication (Study 1) reported herein, we conclude that we failed to replicate the findings reported by Achar and Lee (2022). Using a comprehensive assessment of moral traits and behaviors, as well as the same manipulation of regulatory fit that was used in the original study, we did not find that regulatory fit (vs. non-fit) amplified the differences in moral behavior between participants with higher vs. lower moral predispositions. More specifically, in our investigations we used (1) two measures of moral predispositions, that is, Honesty-Humility (Studies 1, 2, and 3) and Moral Disengagement (Studies 2 and 3), and (2) four measures of moral behavior, that is, tax intentions and tax payments in a hypothetical game (Study 2), and cheating in the mind game (Study 1) as well as in the sender-receiver game (Study 3). Despite this comprehensive assessment of both moral predispositions and behavior, none of the studies replicated the expected effects.

There are several possible explanations for our failure to replicate the findings reported by Achar and Lee (2022). One possibility is that the effect of regulatory fit on strengthening moral predispositions is not robust, being either absent or much weaker than Achar and Lee (2022) suggested. Alternatively, the manipulation of regulatory fit might not be reliable. Since similar or identical manipulations of regulatory fit have been used successfully in various studies to influence behavior (e.g., Cesario et al., 2008; Freitas & Higgins, 2002; Hong & Lee, 2008), it appears more likely that the effect of regulatory fit on moral behavior is not robust than that the experimental manipulation failed. However, we were not able to verify this because, in line with Achar and Lee (2022), we did not use manipulation checks. If the manipulation indeed did not elicit the experience of regulatory fit, this calls into question the effectiveness of a commonly used regulatory fit induction more broadly. If the manipulation did work, then our findings suggest that the influence of regulatory fit on moral behavior specifically may be weaker or less reliable than previously thought. The present design cannot adjudicate between these two explanations; both remain possible. While conducting further studies on this topic would have been valuable, we lacked the necessary resources to do so. We hope that future research will shed more light on these issues. This also applies to the experimental manipulation of regulatory fit more generally, as there is a lack of direct, preregistered, and well-powered empirical evidence that has actually measured whether people experience a sense of “rightness” when their motivational orientation matches their goal-pursuit strategies.

It should be noted that our replications include several differences from the original studies that might have contributed to our failure to replicate the original findings. First, Study 1 was a conceptual replication, including different measures of moral behaviors than any of the original studies, as well as a larger time gap (for a detailed discussion, see pp. 13-14 in the Manuscript). Second, although Studies 2 and 3—close replications of Studies 3 and 7 from Achar and Lee (2022), respectively—used the exact same measures of moral traits and behaviors, as well as the same regulatory fit manipulation, they differed from the original studies in several important ways (see “Differences from the original study” sections above). Most notably, to reduce dropout between the two measurement occasions and minimize potential selection effects, we shortened the interval between sessions to one week, compared to eight weeks (Study 3) and at least four weeks (Study 7) in the original studies. In addition, whereas participants in the original studies were told they were participating in a “series of studies,” we informed participants in our replications that they were completing a two-part study, to avoid deception. This more transparent framing, together with the shorter gap between the two measurement occasions, may have led participants to engage in hypothesis guessing and demand effects. This could have been the case specifically in Studies 2 and 3 where the time lag was one week. However, we expect this was not an issue in Study 1, where the time lag between the two studies was two years. Taken together, the effects observed by Achar and Lee may only emerge under specific conditions that further minimize hypothesis guessing and demand effects—for example, with longer delays between sessions and less transparent study framing.

In addition to differences from the original studies, our work has several limitations that might have contributed to our failure to replicate the original findings. First, we have observed several statistically significant correlations between moral traits (both overall and at the item level) and participants’ random assignment to conditions, as well as dropout between measurement occasions (see Supplemental Tables T1–T3), raising concerns about potential issues with randomization and attrition. However, because these correlation-based analyses involved a large number of significance tests, there was an increased risk of false positives. This issue is particularly pronounced in large samples: although larger sample sizes enhance precision and confidence in detecting genuine but small effects, they also make it possible for even weak associations to reach statistical significance. Therefore, we conducted a more systematic set of follow-up analyses. Specifically, we used hierarchical regression models to test whether adding Honesty-Humility or Moral Disengagement (overall or at the item level) significantly improved model fit when predicting condition assignment or dropout beyond basic demographics (gender and age). Although issues with randomization or dropout might constrain interpretation, across all three studies, only one of the 24 models—adding Honesty-Humility to predict regulatory fit assignment in Study 2—showed a statistically significant improvement in model fit, suggesting that individual differences overall did not meaningfully bias randomization or attrition.

There are also several minor limitations and deviations from the original studies. First, the replications were conducted on Prolific.ac, whereas the original studies were conducted on Cloudresearch. Previous research indicates that participants on Prolific tend to behave more honestly than those on Cloudresearch (Peer et al., 2022), which might have affected the observed findings. Note, however, that we did not observe ceiling effects in any of the included measures of moral traits and behaviors (see Table S1 in the Supplemental Materials). Second, some participants may have used AI to formulate or gather ideas for their responses. It is important to note, however, that participants in Studies 2 and 3 were explicitly instructed not to use AI-generated responses. Furthermore, we tested the responses using ZeroGPT (zerogpt.com), one of the most reliable currently available tools for detecting AI-generated text (Walters, 2023). ZeroGPT classified all responses across the three studies as human-generated, except for the responses in the promotion condition of Study 2, which were classified as “most likely human-generated” (see Table S2 in the Supplemental Materials). Consequently, we do not view AI-generated responses as a significant concern in our investigation.

While our studies have several limitations, they do exhibit several strengths. First, although the intensification effects are reported in many papers—which on their own can be seen as conceptual replications providing some evidence for the theoretical generalizability of the regulatory fit effect (e.g., Aaker & Lee, 2006; Higgins et al., 2010; Hong & Lee, 2008; Ludolph & Schulz, 2015; Motyka et al., 2014)—the regulatory fit literature lacks independent close replications using the same procedures and materials as the original studies. Our studies suggest that future research should focus on independent, close replications to ensure the robustness and generalizability of the observed effects, especially in the realm of moral behavior. This will help validate the theoretical claims and provide a more solid foundation for understanding the role of regulatory fit.

Second, the total number of participants in our three studies (N = 3,150, average N = 1050) is comparable to the combined sample size of Achar and Lee’s seven studies (N = 3,559, average N = 509). Because each of our studies was, on average, about twice as large as each of the original studies, each had greater statistical power to detect the effects in question. Despite this, we did not observe the effects reported by Achar and Lee. Finally, besides measuring Moral Disengagement, we also assessed Honesty-Humility to evaluate the generalizability of the findings. This is crucial because it allows us to determine whether the effects observed in the context of Moral Disengagement extend to other moral traits, thereby providing a more comprehensive understanding of regulatory fit’s impact on honesty.

Overall, even though there are differences between our replication studies and those of Achar and Lee (2022), we conclude that the findings of Achar and Lee (2022) are not as robust as presented in the original paper. While it remains unclear whether the null findings reflect a failure of the regulatory fit manipulation, differences from the original studies, or a genuine absence of effect, we note that our procedures in Studies 2 and 3 closely followed those used in the original studies, and similar manipulations have been effective in prior research. Thus, a more plausible interpretation may be that the effect itself is weaker or less robust than previously suggested. In this spirit, we call for additional replication attempts in the study of regulatory fit. Our large-scale attempt may open a new research path in this regard.

In summary, Achar and Lee (2022) found consistent evidence that regulatory fit enhances moral predispositions. However, in the three replication studies reported herein, including one conceptual and two close replications, we failed to replicate these findings. Despite our comprehensive assessments of moral traits and behaviors and our use of the same regulatory fit manipulation as the original study, none of our studies showed significant differences in moral behavior between participants experiencing regulatory fit versus non-fit.

Supplemental Material

Supplemental Material - Revisiting regulatory fit and its effect on honesty: A replication attempt

Supplemental Material for Revisiting regulatory fit and its effect on honesty: A replication attempt by Karolina A. Ścigała, Christoph Schild, and Stefan Pfattheicher in European Journal of Personality

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Center of Integrative Business Psychology, Aarhus University.

Open science statement

The sample sizes, methods, and statistical analyses for all studies were preregistered (https://osf.io/q56w3/registrations), and the study materials, analysis scripts, and data are available on the OSF (https://osf.io/q56w3/).

Supplemental Material

Supplemental material for this article is available online.

Notes

References
