Abstract

Judging individual differences of interaction partners is a key mechanism of human social functioning. However, investigating the behavioral underpinnings of these judgments at a larger scale has traditionally been difficult. We present a machine learning-based approach for cue extraction and integration allowing for large-scale, fine-grained behavioral analyses of social judgments and showcase its application for the case of language behavior and judgments of individual differences in performance. We used a natural language processing approach to extract granular verbal and paraverbal language cues from audio streams and transcripts from a high-stakes assessment center (N = 556, C = 20,289 cues). We subsequently leveraged machine learning models to examine how well targets’ language behavior predicted perceivers’ performance judgments. We then analyzed the predictivity of different language domains and identified the cues that drove our predictions. We found that both verbal and paraverbal language behavior predicted the performance judgments, but a combination of the two domains led to limited improvement in predictive performance. Additional cue level analyses revealed that the utilized cues from both subdomains expressed similar information. We discuss contributions to the performance judgment literature as well as implications for future research on judgments of individual differences in general.

Plain Language Summary

Using Machine Learning to Better Understand How People’s Behaviors Influence How They Are Judged By Others: The Example of Performance Judgments

Keywords

Social judgments about individual differences of interaction partners (e.g., how competent someone is) are a ubiquitous and consequential phenomenon both in private (Back & Nestler, 2016; Borkenau & Liebler, 1992; Funder, 2012) and in occupational contexts (DeGroot & Motowidlo, 1999; Hickman et al., 2023; Hogan & Shelton, 1998) and have received ample attention in both personality and social psychology (Borkenau et al., 2004; Funder, 1987; Human & Biesanz, 2011; Joel et al., 2017; Kenny & West, 2008; Nestler & Back, 2013). They are typically made under a high amount of uncertainty. That is, perceivers are faced with a multitude of behaviors in which targets differ that are potentially relevant. To arrive at a judgment in the face of such uncertainty, perceivers will somehow integrate these observable behavioral cues into one judgment. A key goal in personality psychological approaches has been to uncover these behavioral cue integration strategies and to investigate the behavioral underpinnings of social judgments (Back & Nestler, 2016; Nestler & Back, 2013).

However, doing so at a large scale and a fine-grained behavioral level has traditionally been difficult (Back & Egloff, 2009; Furr & Funder, 2007): First, research traditionally relied on human coders to extract (i.e., quantify) behavioral cues, which comes with the key challenges of (a) high costs of the assessment, (b) limitations in the selection of cues, and (c) the subjectivity of human coders (Furr & Funder, 2007; Heyman et al., 2014). Second, the modeling of perceivers’ cue integration strategies typically relied on relatively simple, linear regression models (Hogarth & Karelaia, 2007; Karelaia & Hogarth, 2008), (a) limiting the number of variables that can be included, (b) introducing issues of multicollinearity, and (c) restricting the models’ ability to include nonlinear effects (Cooksey, 1996; Tibshirani, 1996; Yarkoni & Westfall, 2017). Moreover, most of the existing research (d) relied on in-sample estimates of model performance, potentially providing inflated estimates of the predictivity of behavioral cues for social judgments (Lever et al., 2016; Yarkoni & Westfall, 2017).

One avenue to overcome these challenges is the application of machine learning (ML) and related computational approaches for cue extraction and integration. First, using an ML-based approach to automate cue extraction has the potential to (a) drastically reduce the cost of cue extraction, (b) allow for a much more comprehensive extraction of behavioral cues, and (c) increase the objectivity of assessments. Second, by applying ML models for cue integration analyses, one is able to deal with (a) a larger number of (b) correlated and partially redundant cues, and (c) their potentially complex interactive and nonlinear effects. Additionally, (d) ML models are usually evaluated using cross-validated performance estimates, which can provide us with more generalizable estimates of the predictivity of behavioral cues for social judgments.

From a practical perspective, implementing such models, either as a replacement for human judges or as judgment aids, promises to increase both the efficiency and the objectivity of judgment processes. Increases in efficiency can be achieved by enabling more cost-effective and scalable behavioral extraction and judgment-making. Objectivity, in turn, can be improved on two levels: first, in cue extraction, through the standardized, automated extraction of behavioral cues; second, in cue integration, through the algorithmic integration of these behavioral cues by the ML models, which provides more consistent evaluations across all candidates. Further, while biases can exist in both human and computational judgment models, computational models allow for more direct insights into the judgment-making process through statistical approaches, thus allowing for easier evaluation and recalibration than with human judges.

We showcase the advantages of such ML-based approaches for judgment research using a key domain of interpersonal behavior (language behavior) and social judgments (performance judgments). That is, we investigate: What role does language behavior play in how perceivers judge individual differences in performance in interpersonal situations?

First, we provide an initial estimate of how well language, conceptualized as both verbal and paraverbal language cues, predicts judgments of interpersonal performance. Second, we investigate how strongly the domains of verbal and paraverbal behavioral information contribute to the predictive performance, and third, we provide in-depth insights on which cue groups and single cues are most predictive for the judgments. For this, we use natural language processing approaches to extract verbal and paraverbal language cues from audio files and transcripts from interpersonal assessment center (AC) role-plays. We then use these cues to train cross-validated ML models predicting the performance judgments.

Analyzing the behavioral underpinnings of social judgments

Social judgments are ubiquitous. They are key mechanisms of navigating social life and span its complete bandwidth, from love (e.g., Back, Penke, et al., 2011; Eastwick, Finkel, et al., 2023; Joel et al., 2017) to work (e.g., Kleinmann & Ingold, 2019; Pierce & Aguinis, 2013; Swider et al., 2022) and arguably everything in between. Established models in research on judgments of individual differences such as the lens model (Brunswik, 1956) and its differentiated variant, the Realistic Accuracy Model (Funder, 1995), highlight the key role of physical and behavioral cues (Nestler & Back, 2013): People extract cues from the different streams of information they are exposed to (e.g., extracting what an interaction partner says and how they say it) and integrate these cues into a judgment about the selected individual difference (e.g., a target’s trustworthiness or competence).

Accordingly, to analyze social judgments empirically, one needs to, first, assess and quantify available cues in the respective social environment. This involves measuring behavioral cue differences among targets that are observable in a given context (cue extraction). Second, following the assessment of perceiver judgments for each target, one needs to analyze the multitude of ways in which target differences in cues and cue configurations relate to how they are evaluated by perceivers (cue integration).

This basic judgment framework has been applied across a wide range of cue groups and constructs. Examples extend from static cues such as appearance (e.g., Back, Schmukle, & Egloff, 2011; Naumann et al., 2009) to nonverbal cues such as body language or facial expressions (e.g., Breil et al., 2021) to language behavior including both verbal behavior, that is, what someone says (e.g., Koutsoumpis et al., 2022; Moreno et al., 2021), and paraverbal behavior, that is, how someone says something (e.g., Banse & Scherer, 1996; DeGroot & Gooty, 2009). These frameworks were used to investigate the behavioral underpinnings of perceptions such as personality traits (Back & Nestler, 2016), liking (Back et al., 2010), or ability (Murphy, 2007; Reynolds & Gifford, 2001). However, while the general framework has proven immensely valuable, prior investigations were methodologically limited with regard to both cue extraction and integration.

Regarding cue extraction, previous research mainly relied on human coders to systematically capture and assess selected behavioral cues (Back & Nestler, 2016; Breil et al., 2021, 2023). This approach comes with three key challenges (Furr & Funder, 2007; Heyman et al., 2014): First, the assessment of (behavioral) cues with human coders is time-consuming and costly. Depending on the complexity of the coding scheme, the training of coders can take anything from multiple hours to multiple days or weeks (Furr & Funder, 2007). Also, a multitude of coders is needed to ensure reliability within and independence across cue measures. Second, due to the high costs, researchers usually need to actively select the cues they want to examine and are thus only able to investigate snippets from the universe of possibly assessable behavior. Third, while in most cases a lot of effort is put into training the coders, codings still stay subjective in nature. Especially when codings are performed on a broader level, they arguably contain a substantial degree of subjective impressions.

Similarly, previous research on cue integration in the context of judgment-making and interpersonal perception typically relied on conventional statistical models like Ordinary Least Squares (OLS) regressions, regressing a target variable linearly on the extracted cues (e.g., Borkenau & Liebler, 1992; Funder, 1995; Gosling et al., 2002; Hogarth & Karelaia, 2007; Karelaia & Hogarth, 2008; Küfner et al., 2010). However, these models come with several limitations: First, they only allow for the inclusion of a limited number of variables in one model (in proportion to the sample size) and they do not penalize uninformative predictors (Yarkoni & Westfall, 2017). Analyses are hence often performed not on the level of singular cues (e.g., wide stance, loudness of voice) but on an aggregated level (e.g., agentic behavior; Borkenau & Liebler, 1992; Breil et al., 2023; Klehe et al., 2014; McFarland et al., 2005). Second, when cues overlap (multicollinearity), OLS regressions fail to correctly estimate how these cues contribute to social judgments (Cooksey, 1996). This is especially important when larger sets of possibly redundant cues are investigated, which generally is the case for research outside of an experimental setting. Third, while it is mathematically possible to include nonlinear terms, nonlinear cue judgment relations and cue configurations are often just pooled in the residual correlation (Hogarth & Karelaia, 2007; Karelaia & Hogarth, 2008). Curvilinear relations (e.g., it’s not appealing to never talk but it’s equally not appealing to never stop talking) or multiple interactions between cues (e.g., fidgeting has a smaller negative impact on judgments when the person smiles and speaks in a confident tone) are rarely included in analyses (Dougherty & Thomas, 2012). Fourth, previous research mainly relied on in-sample prediction models. 
That is, the same data is used to train the model (determining the regression weights; training) and to determine the performance of the model (estimating a goodness of fit parameter such as r, R², MSE; testing). However, such an approach risks overfitting. That is, one risks overly closely modeling idiosyncratic noise in the data, leading to inflated performance estimates and reduced generalizability (Lever et al., 2016; Stachl et al., 2020; Yarkoni & Westfall, 2017). We propose that there is a strong potential to provide more comprehensive and robust insights into the behavioral underpinnings of social judgments by leveraging the unique advantages of an ML-based approach for cue extraction and cue integration in order to overcome these limitations.

A machine learning-based approach to cue extraction and integration

In recent years, ML approaches have allowed researchers to use novel data sources (e.g., social media likes, sensor data, or bank transaction data; Gladstone et al., 2019; Matz & Netzer, 2017; Müller et al., 2020; Youyou et al., 2015) and existing data sources in novel ways and on an unprecedented scale (e.g., facet-level questionnaire data, behavioral records, text; Joel et al., 2017; Nave et al., 2018; Park et al., 2015; Stewart et al., 2022). Most of this research has focused on complex numerical data (e.g., GPS coordinates, large-scale questionnaire data, matrices of social media likes) in its preprocessing and prediction approaches. However, beyond that, advancements in the realm of machine and deep learning led to the development of a range of models that can assess and process behavioral cues as inputs from a wide variety of information streams (e.g., Baltrusaitis et al., 2018; Cao et al., 2021; Eyben et al., 2016; Honnibal & Montani, 2017). Still, while there are some first applications in the context of automating selection procedures (e.g., Hickman et al., 2021, 2023), applications of ML-based cue extraction and integration in the realm of interpersonal perception research for actual face-to-face interactions remain scarce. Yet, they hold the potential to address the three presented key challenges linked to manual coding (Furr & Funder, 2007; Heyman et al., 2014). First, by eliminating the biggest driver of costs, manual coding, one could drastically reduce the cost of cue extraction, allowing researchers to scale cue extraction to larger samples. Second, these models further allow for the extraction of more, and more fine-grained behavioral cues, allowing for analyses of a wide variety of behavior on a fine-grained level. Third, replacing human coders with automated models for cue extraction reduces the subjectivity of the assessment by consistently applying the same objective extraction rules over all data points.

Equally, ML-based approaches can address the presented challenges of traditional cue integration approaches. First, they can incorporate a larger number of cues and deal with lower sample size-to-cue ratios (Putka et al., 2018; Stachl et al., 2020). Second, they are able to deal with the potential overlap of information of different behavioral cues (multicollinearity; e.g., Breiman, 2001a; Tibshirani, 1996). Third, certain ML algorithms, such as the Random Forest (RF) algorithm (Breiman, 2001a), can consider more complex nonlinear and interactive effects between cues. Fourth, ML model performance is usually estimated using cross-validated performance estimates. Cross-validation refers to a series of practices from the family of resampling methods (Shao, 1993; Stachl et al., 2020). It guards against overly optimistic, overfitted performance estimates by evaluating a model not on the data it was trained on (in-sample) but on unseen data (out-of-sample), which leads to more accurate assessments of a model’s generalizability. It is important to note that performance evaluation through cross-validation is not inherently linked to model complexity. While it is commonly associated with more advanced predictive models, it can also be applied to simpler approaches, such as OLS regression, to provide more robust assessments of a model’s performance (e.g., Grunenberg et al., 2024).
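The difference between in-sample and cross-validated performance estimates can be illustrated with a minimal sketch. The example below is not the analysis pipeline of this study: it uses simulated data (100 hypothetical targets, 40 hypothetical cues, only one of which carries signal), plain OLS via NumPy, and 5-fold cross-validation, all of which are assumptions chosen for illustration.

```python
import numpy as np

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - np.mean(y_true)) ** 2)
    return 1.0 - ss_res / ss_tot

def fit_ols(X, y):
    """Least-squares weights for an OLS model with an intercept."""
    X1 = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(X1, y, rcond=None)
    return coef

def predict_ols(coef, X):
    return np.column_stack([np.ones(len(X)), X]) @ coef

def cross_validated_r2(X, y, n_folds=5, seed=0):
    """Out-of-sample R^2: every target is predicted by a model that never saw it."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), n_folds)
    preds = np.empty(len(y))
    for test_idx in folds:
        train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
        coef = fit_ols(X[train_idx], y[train_idx])
        preds[test_idx] = predict_ols(coef, X[test_idx])
    return r_squared(y, preds)

# Simulated data (assumption): many cues, few targets, one informative cue.
rng = np.random.default_rng(42)
n_targets, n_cues = 100, 40
X = rng.normal(size=(n_targets, n_cues))
y = 0.5 * X[:, 0] + rng.normal(size=n_targets)

r2_in_sample = r_squared(y, predict_ols(fit_ols(X, y), X))
r2_cv = cross_validated_r2(X, y)
print(f"in-sample R^2: {r2_in_sample:.2f}, cross-validated R^2: {r2_cv:.2f}")
```

With many cues relative to the number of targets, the in-sample estimate is expected to be noticeably higher than the cross-validated one, which is the inflation described above.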

Combined, an ML-based approach for cue extraction and integration (1) has the potential to (a) reduce cost and (b) extract more cues at a finer granularity while (c) increasing objectivity, and (2) makes it possible to effectively leverage (a) a larger number of (b) potentially overlapping cues and (c) to model linear and nonlinear effects while (d) providing more robust and generalizable results. One behavioral domain where using ML-based approaches for cue extraction and integration could be especially valuable is language behavior.

Language and individual differences

As the main channel for exchanging thoughts and feelings, language behavior is considered a key domain of behavior in social interactions (Holtgraves, 2014). Two subdomains of language behavior are regularly distinguished (Grünberg et al., 2018; Hickman et al., 2023; Martín-Raugh et al., 2023; Wilhelmy et al., 2016): verbal behavior, that is, what a person says (e.g., choice of words, topics), and paraverbal behavior, that is, how a person says something (e.g., voice pitch, jitter). People differ meaningfully in both subdomains and these differences are related to a wide variety of relevant individual differences and outcomes, such as personality and cognitive ability (Breil et al., 2021; Holleran & Mehl, 2008; Koutsoumpis et al., 2022; Schwartz et al., 2013; Stern et al., 2021), emotions (Banse & Scherer, 1996) and professional success (Mayew et al., 2013). Moreover, language behavior is regularly used as a basis for social judgments both in private and work life, for example, when judging personality (Borkenau et al., 2016; Hall et al., 2016; Mahrholz et al., 2018; McAleer et al., 2014), cognitive ability (Murphy, 2007; Murphy et al., 2003; Reynolds & Gifford, 2001), when deciding who to vote for in political elections (Klofstad, 2016; Tigue et al., 2012), and who to date (Collins, 2000).

Additionally, there is a wide variety of research on the cue level investigating selected groups of verbal and paraverbal cues and their relation to perceivers’ judgments. For example, there is a substantial body of research connecting verbal cues to targets’ personality and cognitive ability and their outcomes (e.g., Koutsoumpis & de Vries, 2022; Moreno et al., 2021; Murphy et al., 2003; Reynolds & Gifford, 2001), as well as research showing what language content judges use when making judgments about these characteristics (e.g., Fast & Funder, 2008; Hickman et al., 2021; Holtgraves, 2011). A challenge when dealing with verbal cues is that they are highly context-dependent and their analysis is thus complex. To deal with this complexity, two complementary approaches are often distinguished. In so-called closed-vocabulary approaches, one reduces complexity by using category scores of predefined lists of words (i.e., dictionaries) that can be counted in a given text (e.g., the percentage of words representing positive or negative emotions per document; Eichstaedt et al., 2021; Pennebaker & King, 1999). Generating predefined category scores comes with the advantage that results can be interpreted with minimal effort and compared across contexts, but analyses are limited in their scope to the selected categories, a fixed level of aggregation, and reduced context information.
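A closed-vocabulary category score of the kind described above can be sketched in a few lines. The dictionaries below are tiny, hypothetical word lists invented for illustration; they are not taken from any published dictionary, and the function name `category_scores` is likewise an assumption.

```python
# Hypothetical mini-dictionaries (assumption); real dictionaries are far larger.
DICTIONARIES = {
    "positive_emotion": {"happy", "glad", "good"},
    "negative_emotion": {"sad", "worried", "afraid"},
}

def category_scores(tokens, dictionaries=DICTIONARIES):
    """Percentage of a document's tokens that fall into each dictionary category."""
    n_tokens = len(tokens)
    if n_tokens == 0:
        return {category: 0.0 for category in dictionaries}
    return {
        category: 100.0 * sum(token in words for token in tokens) / n_tokens
        for category, words in dictionaries.items()
    }

example_tokens = ["i", "am", "happy", "but", "sad", "and", "worried", "today"]
scores = category_scores(example_tokens)
print(scores)
```

Because every document is scored against the same fixed word lists, the resulting percentages are directly comparable across documents, at the cost of ignoring any word that is not in a list.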

Open-vocabulary approaches are data-driven, bottom-up alternatives (Schwartz et al., 2013). They are not bound by a-priori assumptions but instead use a probabilistic approach to generate features from patterns in the data (e.g., by modeling topics through cluster analysis; Blei et al., 2003). This approach allows researchers to identify new cue patterns in the data and to include context information. However, the interpretation of cue level results often requires additional processing (e.g., the definition of a cut-off level for relevance) and sometimes aggregation (e.g., cluster analyses or manual grouping) and is often difficult to compare across samples. For both closed- and open-vocabulary approaches, research has identified cues that are related to psychological characteristics and outcomes, and are used by perceivers in their judgments. This includes different measures of personality (e.g., Cutler et al., 2021; Fast & Funder, 2008; Holtgraves, 2011; Mehl et al., 2006; Park et al., 2015; Yarkoni, 2010) in a wide variety of settings, such as social media data (Park et al., 2015; Schwartz et al., 2013), text messages (Holtgraves, 2011), life narratives (Borkenau et al., 2016), short stories (Küfner et al., 2010), and job interviews (Hickman et al., 2021).

A smaller array of studies has analyzed the extent to which paraverbal cues are associated with targets’ individual differences and perceivers’ judgments. Examples include personality and cognitive ability (for an overview, see Breil et al., 2021), as well as the expression of emotions (for an overview, see Juslin & Laukka, 2003). Four sets of paraverbal cues emerged as especially relevant: voice pitch (i.e., frequency-related; Aronovitch, 1976; Banse & Scherer, 1996; Borkenau et al., 2016; Koutsoumpis & de Vries, 2022; Stern et al., 2021), loudness (i.e., energy-related; Aronovitch, 1976; Banse & Scherer, 1996; Borkenau & Liebler, 1992), pauses in speech (i.e., temporal-related; Aronovitch, 1976; Koutsoumpis & de Vries, 2022) and speech rate (i.e., also temporal-related; Aronovitch, 1976; Banse & Scherer, 1996; Koutsoumpis & de Vries, 2022). Research has indicated that judges use these paraverbal cues to make fast and consensual judgments with some accuracy (Borkenau et al., 2004; Borkenau & Liebler, 1992; Mahrholz et al., 2018; McAleer et al., 2014; Pisanski & Bryant, 2019).

Due to its complexity, the limitations of traditional methods for cue extraction and integration are especially impactful in the investigation of language behavior. This led researchers to often investigate only selected snippets or one subdomain of language behavior, with a strong focus on verbal behavior (e.g., Borkenau et al., 2016; Eichstaedt et al., 2021; Hickman et al., 2021, 2023). For both verbal and paraverbal behaviors, recent developments in ML research, or more precisely natural language processing, have provided powerful tools that are able to automatically extract a wide variety of fine-grained behavioral cues (e.g., Devlin et al., 2019; Eichstaedt et al., 2021; Eyben et al., 2010, 2016; Honnibal & Montani, 2017). These tools allow researchers to perform large-scale, fine-grained cue extraction of both verbal and paraverbal cues. Combining this cue extraction approach with ML prediction models for cue integration allows researchers to effectively leverage this larger number of cues and their interplay to investigate their predictivity for social judgments at scale.

This study: The case of interpersonal performance judgments

We demonstrate the utility of an ML-based approach to better understand social judgments about performance. Judgments about individual differences in current performance and, consequently, about individual differences in the ability to perform are impactful social judgments (Lievens, 2017). They regularly guide social behaviors and decisions: Teachers and peers tend to allocate more resources and feedback to those they perceive to perform better (Denissen et al., 2011; Gest et al., 2005, 2008; Rosenthal & Jacobson, 1968), we rely on performance judgments when determining who to ask for advice (Borgatti & Cross, 2003; Hofmann et al., 2009) or who to trust (Mayer & Gavin, 2005), and we design structured procedures to assess individual differences in performance when deciding who to hire as an employee (Roe, 2017).

A key method to assess performance in interpersonal situations, both for applied and research settings, is through role-play exercises (Breil et al., 2023; Lievens, 2017; Oliver et al., 2016). In contrast to other simulation-based assessments, such as interview questions or situational judgment tests, they make it possible to evoke actual behavioral differences for perceivers to use in their performance judgments. From a research perspective, role-plays therefore allow for unique access to investigate how perceivers form performance judgments on a fine-grained behavioral level (Breil et al., 2023; Lievens, 2017). Reviewing prior research, very few studies have analyzed the direct impact of individual behaviors on perceivers’ performance judgments in interpersonal settings (Breil et al., 2023; Hickman et al., 2023; Klehe et al., 2014; McFarland et al., 2005; Oliver et al., 2016). While there is some research on judgments of ability focusing on general behavioral differences in interpersonal situations (Borkenau et al., 2004; Murphy, 2007), due to its relevance for work settings, such as personnel selection and development, many of the available studies have focused only on groups of behavioral cues that are relevant to workplace settings on relatively high levels of abstraction (e.g., Borkenau et al., 2016; Klehe et al., 2014; McFarland et al., 2005). This leaves the behavioral foundation and thus our general understanding of performance judgments in interpersonal situations heavily underexplored.

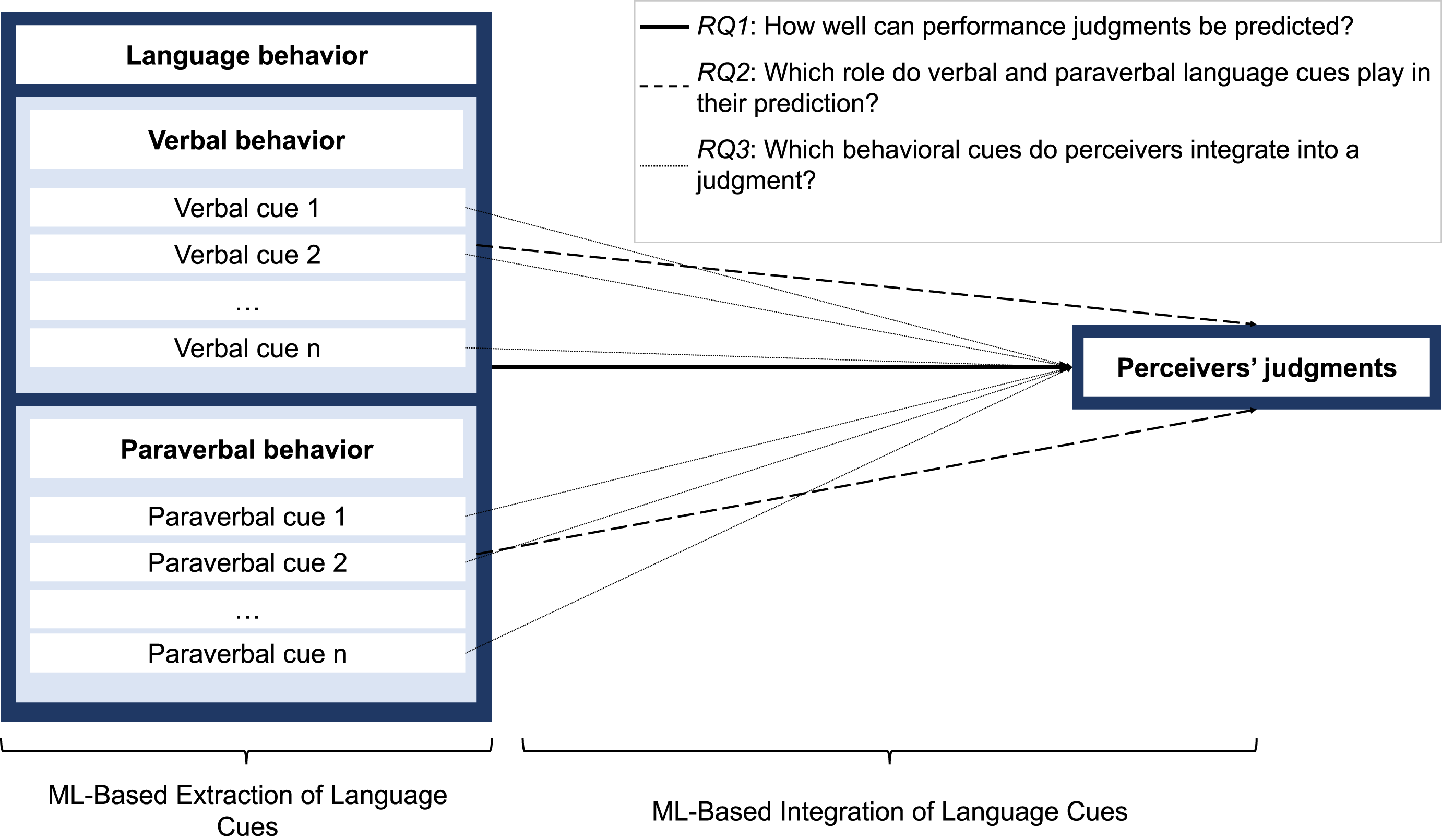

Combining a personality psychology perspective with the presented ML-based approach applied to interpersonal role-play exercises can provide a fine-grained understanding of their behavioral underpinnings at a larger scale. Language behavior as a key domain of interpersonal interaction is particularly well-suited for this investigation. With this approach, we can make three central contributions to the behavioral understanding of performance judgments in interpersonal situations (see Figure 1 for an overview): Predictivity of language behavior for performance judgments: Overview of the research model.

First, while there is some research investigating the association of specific interpersonal strategies that include language behavior with performance judgments (e.g., Klehe et al., 2014; McFarland et al., 2005) and Hickman et al. (2023) provided a first estimation for the predictivity of verbal language cues, we are missing investigations of the predictivity of language as a whole, that is, as a combination of verbal and paraverbal behavior. In this research, our aim is to build on this important initial work and investigate the predictive value of language behavior as a whole. Accordingly, we posit the following research question:

RQ1: How well can the perceivers’ performance judgments be predicted using language cues?

Second, to gain a more nuanced understanding of the impact of language behavior on performance judgments, it is necessary not only to investigate the general predictiveness of language behavior but also to differentiate between the two subdomains of verbal and paraverbal behavior. As this study is the first to investigate the extent to which verbal and paraverbal cues predict performance judgments in interpersonal situations, it is important to understand how predictive the respective subdomains are and how their individual predictivity compares to their combined predictivity. We thus pose the following research question:

RQ2: Which role do verbal and which role do paraverbal cues play in the prediction of performance judgments?

Equally, we are currently missing vital insights on the cue level. Two important exceptions include the work by Breil et al. (2023) and Grunenberg et al. (2024) who investigated manually coded interpersonal behaviors and the work by Hickman et al. (2023) who conducted an analysis of the extent to which verbal cues predicted performance judgments, leveraging a combination of open- and closed-vocabulary approaches. Here, we built on their promising insights and further included paraverbal behavior, which allowed us to provide a comprehensive investigation of the language-based predictability of performance judgments, including detailed insights into relevant verbal and paraverbal groups of cues and single cues. Thus, we posit the following research question:

RQ3: Which groups of language cues and single language cues drive the prediction of performance judgments?

With this study, we provide specific novel empirical insights into the behavioral underpinnings of judgments of performance differences in interpersonal situations, and, more generally, showcase the utility of an ML-based approach for cue extraction and integration to better understand judgments of interindividual differences.

Method

Interpersonal exercises

Procedure

The data for this study stems from two interpersonal role-play exercises from a real-world, high-stakes AC deployed by a German university to select candidates for programs in human medicine and dentistry. The two exercises followed the same procedure. Before entering the individual role-play situations, targets (i.e., the applicants) had 90 s to read instructions explaining the context of the given situation and the task at hand. In the first exercise, Bad News, targets had to deliver bad news to a person, and in the second exercise, Persuasion, targets had to persuade and convince the role-player (see Appendix A for an overview of the exercises). The targets then entered a testing room to interact with a professional role-play actor to complete the exercise. Targets had 5 minutes to complete the exercise but could leave earlier if they wanted to do so. All role-plays were videotaped. The present study utilizes data collected as part of a larger research project, with subsets of this data having been previously reported on in separate studies (see Appendix A for an overview). The data collection for this study was reviewed and approved by the IRB of the University of Münster.

Targets

The university preselected the targets on the basis of their high school GPA. Across the three cohorts considered in this study, N = 655 individuals took part in the selection procedure. Of these targets, n = 556 consented to having their videotapes merged with their performance ratings for use in later research analyses. For Bad News, the final sample size was n1 = 554, as performance judgments were missing for two targets. For Persuasion, the final sample size was n2 = 545, as 11 videotapes were corrupted during data storage. Targets’ ages ranged from 16 to 44 (M = 19.30, SD = 1.97); 406 targets were female and 150 were male; 392 targets applied for human medicine and 164 applied for dentistry.

Perceivers and ratings

Experienced physicians (i.e., perceivers) rated targets’ performance in teams of two. Each team was assigned to one role-play exercise only. All perceivers received comprehensive rater training. Perceivers observed the targets through a one-way mirror. The perceivers rated the targets’ global performance on a 6-point Likert scale ranging from 0 to 5, with higher values indicating higher performance. Specifically, the perceivers were instructed to “imagine the applicant as a medical student in the advanced clinical phase of their training” and to rate the applicant’s overall competence for that role based on the demonstrated performance. Each perceiver rated a target’s performance independently. For the final score, we used the average of the two ratings (Bad News: M = 3.31, SD = 1.04, ICC(1, k) = .65; Persuasion: M = 3.54, SD = 1.16, ICC(1, k) = .74).
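For readers unfamiliar with the reliability coefficient reported above, the following sketch computes ICC(1, k) for averaged ratings from a one-way random-effects ANOVA, using the standard formula ICC(1, k) = (MSB − MSW) / MSB, where MSB and MSW are the between-target and within-target mean squares. The ratings in the example are made up for illustration, and the function name `icc_1k` is an assumption; the software actually used for the reported values is not stated in this section.

```python
import numpy as np

def icc_1k(ratings):
    """ICC(1, k) for a (targets x raters) matrix, one-way random-effects model."""
    ratings = np.asarray(ratings, dtype=float)
    n_targets, k_raters = ratings.shape
    target_means = ratings.mean(axis=1)
    grand_mean = ratings.mean()
    # Between-target mean square
    msb = k_raters * np.sum((target_means - grand_mean) ** 2) / (n_targets - 1)
    # Within-target mean square
    msw = np.sum((ratings - target_means[:, None]) ** 2) / (n_targets * (k_raters - 1))
    return (msb - msw) / msb

# Made-up ratings (assumption): four targets, two perceivers, 0-5 scale.
example_ratings = np.array([[3, 4], [4, 4], [1, 2], [5, 4]])
final_scores = example_ratings.mean(axis=1)  # average of the two ratings per target
print(final_scores, round(icc_1k(example_ratings), 3))
```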

Transcripts

Using videotapes of the role-plays, student assistants created transcripts of each interaction. Each student assistant received comprehensive training, which included an introduction to the transcription software, a presentation on transcription rules, and detailed guidance on handling challenging cases such as unclear audio, specific colloquial expressions, time stamps, and speech pauses (see Appendix B for an overview of the guidelines). As part of their training, the student assistants discussed a sample transcript and practiced transcription to familiarize themselves with the process. Before beginning actual transcripts, each student assistant was required to transcribe a sample video, receive feedback, and iteratively revise their transcription until it met the required quality standards. To ensure accuracy and consistency, every transcript was reviewed and corrected by a second student assistant who had not created the original transcription. All transcripts were completed verbatim for both the targets and the role-player, though only the targets’ speech was used for feature extraction and further modeling.

Analyses

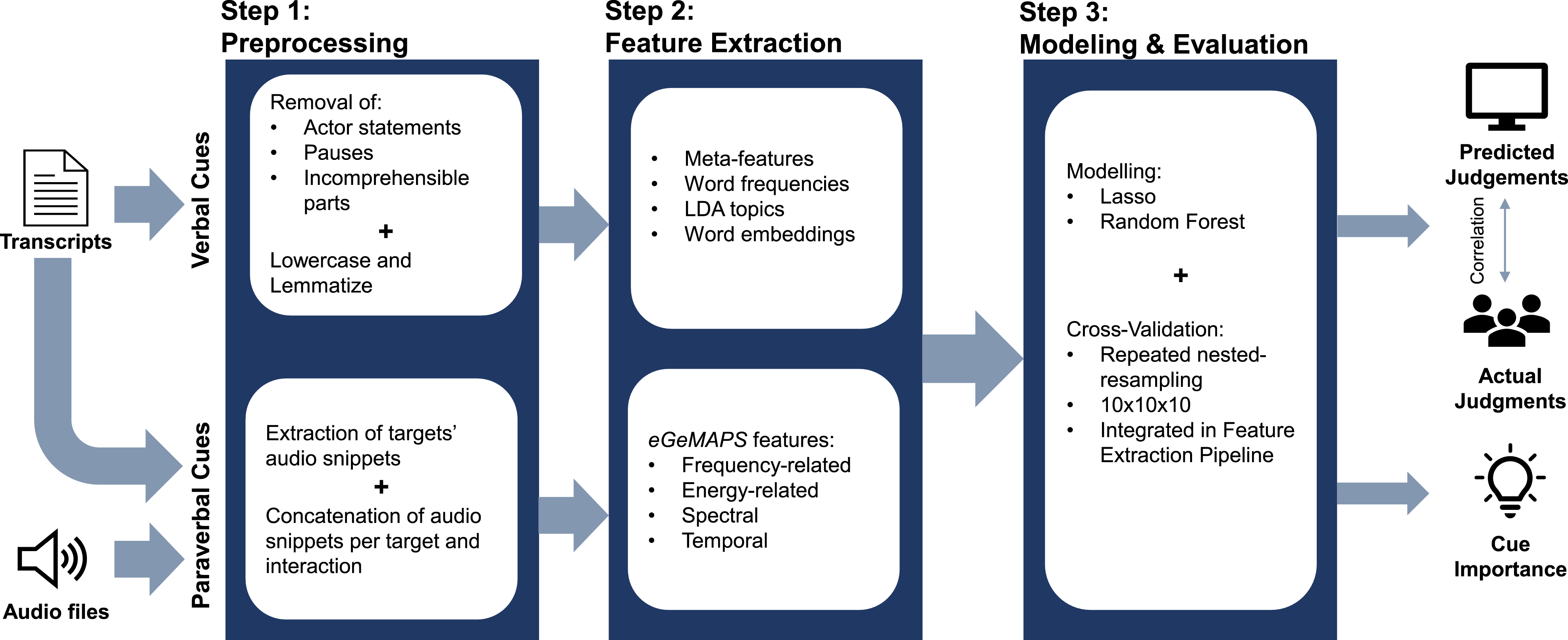

The development and validation of our prediction models can be divided into three main steps: preprocessing, feature extraction, and modeling and evaluation. Only the first two steps (i.e., preprocessing and feature extraction) differ between verbal and paraverbal cues. Figure 2 illustrates our approach.¹

Figure 2. Illustration of the analysis pipeline.

Verbal cues: Preprocessing and feature extraction

Preprocessing

To allow for the meaningful extraction of verbal cues, the transcripts were preprocessed (Eichstaedt et al., 2021; Park et al., 2015): First, the role-player’s statements were removed so that only the target’s verbal behavior remained in the transcript. Second, tokens indicating pauses and incomprehensible parts of a transcript were removed along with all punctuation characters. Finally, every transcript was lowercased and lemmatized.
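The three preprocessing steps can be sketched as follows. This is a minimal illustration under stated assumptions: the speaker prefixes (`T:`, `R:`), the pause token `(.)`, and the token `(unv.)` for incomprehensible passages are hypothetical conventions, not the study's transcription rules. Lemmatization is passed in as a callable (defaulting to the identity function) because the lemmatizer used is not specified in this section.

```python
import re
import string

# Hypothetical transcript conventions (assumptions, not taken from the study):
# "T:" marks target turns, "R:" role-player turns, "(.)" a pause,
# "(unv.)" an incomprehensible passage.
MARKER_PATTERN = re.compile(r"\(\.\)|\(unv\.\)")
PUNCT_TABLE = str.maketrans("", "", string.punctuation + "…„“”")


def preprocess_transcript(lines, lemmatize=lambda token: token):
    """Return the target's speech as a list of cleaned tokens."""
    tokens = []
    for line in lines:
        if not line.startswith("T:"):
            continue  # step 1: keep only the target's turns
        text = line[2:]
        text = MARKER_PATTERN.sub(" ", text)  # step 2: drop pause/unclear tokens
        text = text.translate(PUNCT_TABLE)    # step 2: drop punctuation
        text = text.lower()                   # step 3: lowercase
        tokens.extend(lemmatize(tok) for tok in text.split())  # step 3: lemmatize
    return tokens


example = [
    "R: Guten Tag, was gibt es?",
    "T: Ich muss Ihnen (.) leider Bescheid geben, (unv.) okay?",
]
cleaned = preprocess_transcript(example)
```

A real lemmatizer (for instance, one backed by a German language model) could be supplied through the `lemmatize` argument without changing the rest of the function.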

Feature extraction

We used a combination of feature engineering and open-vocabulary approaches to extract verbal cues (Eichstaedt et al., 2021). In total, we extracted four different groups of verbal cues: meta-features, word frequencies, topics, and word embeddings.

First, the meta-features group refers to a category of general, feature-engineered cues that can be extracted from the transcripts. In this study, we extracted a total of five meta-features: character count (total number of characters of a target’s statements), word count (total number of words a target used), mean word length (average length of all words a target used), vocabulary size (number of unique words a target spoke in an interaction), and stop word count (number of stop words such as “the” or “a”).
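A minimal sketch of the five meta-features, computed from a list of preprocessed tokens, might look as follows. The stop word list is a hypothetical miniature, and counting characters without whitespace is an assumption, as the exact definitions used in the study are not given here.

```python
# Hypothetical mini stop word list, for illustration only
STOP_WORDS = {"the", "a", "an", "and", "of"}


def meta_features(tokens, stop_words=STOP_WORDS):
    """Compute the five meta-features for one transcript's tokens."""
    n_words = len(tokens)
    # Assumption: characters are counted within tokens, excluding whitespace
    total_chars = sum(len(t) for t in tokens)
    return {
        "character_count": total_chars,
        "word_count": n_words,
        "mean_word_length": total_chars / n_words if n_words else 0.0,
        "vocabulary_size": len(set(tokens)),
        "stop_word_count": sum(t in stop_words for t in tokens),
    }


features = meta_features(
    "the patient asked a question and the doctor answered".split()
)
```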

Second, we extracted unweighted and weighted word frequencies for each training set’s most common 10,000 tokens with scikit-learn’s CountVectorizer and TfidfVectorizer classes (Pedregosa et al., 2011). Unweighted word frequencies refer to the count of discrete occurrences of a given token in a given document (i.e., transcript). By contrast, we extracted weighted word frequencies using the term frequency-inverse document frequency (TFIDF) paradigm. TFIDF frequencies are high for a token that occurs frequently in a given document (i.e., a single transcript) but not in the whole corpus (i.e., all transcripts).
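The following sketch shows how unweighted and weighted word frequencies could be extracted with the two scikit-learn classes named above. Restricting the vocabulary via `max_features=10_000` and the toy documents are assumptions made for illustration. The key point is that the vocabulary and the inverse document frequencies are learned from the training documents only and then applied to held-out documents.

```python
from sklearn.feature_extraction.text import CountVectorizer, TfidfVectorizer

# Invented toy documents standing in for preprocessed transcripts
train_docs = [
    "ich gebe ihnen bescheid",
    "ihr großonkel ist krank",
    "ich verstehe sie",
]
test_docs = ["ich gebe bescheid über ihren großonkel"]

# Assumption: the 10,000 most common tokens are selected via max_features
count_vec = CountVectorizer(max_features=10_000)
tfidf_vec = TfidfVectorizer(max_features=10_000)

# Fit on the training documents only
X_train_counts = count_vec.fit_transform(train_docs)
X_train_tfidf = tfidf_vec.fit_transform(train_docs)

# Held-out documents are mapped onto the training vocabulary
X_test_counts = count_vec.transform(test_docs)
X_test_tfidf = tfidf_vec.transform(test_docs)
```

Tokens that appear only in the held-out document (here, "über" and "ihren") are ignored, which is what keeps information about the test set from entering the features.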

Third, we applied a topic modeling approach, Latent Dirichlet Allocation (LDA; Blei et al., 2003), to extract a document-topic matrix. Similar to dimensionality reduction techniques such as principal component analysis, topic modeling identifies underlying structures (topics) in a text corpus (i.e., all transcripts). LDA assumes that documents consist of a mixture of topics and that topics are mixtures of words, with words that frequently co-occur across documents forming cohesive topics (Eichstaedt et al., 2021). By providing a document-term matrix (i.e., word frequencies for each transcript) and specifying the desired number of topics, LDA produces a document-topic matrix, indicating the probability of each topic appearing in each transcript. Using scikit-learn’s LatentDirichletAllocation (Pedregosa et al., 2011) class, we fit a model that considered 100 topics, resulting in a document-topic matrix that quantified the alignment of each transcript with each of the 100 topics.
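As a small-scale illustration of this step, the sketch below fits an LDA model on a toy document-term matrix and extracts the document-topic matrix. The documents, the choice of three topics (instead of the 100 used in the study), and the random seed are assumptions for illustration.

```python
from sklearn.decomposition import LatentDirichletAllocation
from sklearn.feature_extraction.text import CountVectorizer

# Invented toy corpus standing in for the preprocessed transcripts
docs = [
    "befund diagnose behandlung befund",
    "familie onkel besuch familie",
    "diagnose behandlung therapie",
    "onkel familie anruf besuch",
]

# Document-term matrix (word frequencies per document)
dtm = CountVectorizer().fit_transform(docs)

# Three topics for illustration; the study used 100
lda = LatentDirichletAllocation(n_components=3, random_state=0)
doc_topic = lda.fit_transform(dtm)  # shape: (n_documents, n_topics)
```

Each row of `doc_topic` sums to 1 and can be read as the estimated topic mixture of one document.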

Fourth, we leveraged pre-trained word embeddings to extract document vectors for each transcript. Embedding methods convert symbolic representations, such as words or images, into multi-dimensional numeric vectors that encode semantic and syntactic relationships (Eichstaedt et al., 2021). Word embeddings represent individual words or entire documents in a vector space, where similar vectors indicate similar meanings. These embeddings are typically created using models that learn word-to-vector mappings by analyzing patterns in large text corpora, such as predicting a word based on its surrounding context (e.g., Devlin et al., 2019; Mikolov et al., 2013). Developing a word embedding model from scratch is computationally highly demanding, so one usually relies on pre-trained word embedding models in practice (Kjell et al., 2023). In this study, we used the pre-trained, 96-dimensional package de_core_news_sm that spaCy provides for the German language (Honnibal & Montani, 2017). The document vector for a given transcript was created by first extracting individual word vectors for each word in the transcript and then calculating the dimension-wise mean across all individual word vectors.
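The averaging step described above can be sketched in a few lines. The three-dimensional toy vectors stand in for the 96-dimensional vectors of the pre-trained model, and skipping tokens without a vector is an assumption; the function name `document_vector` is hypothetical.

```python
def document_vector(tokens, word_vectors, dim):
    """Dimension-wise mean of the word vectors of all tokens in a document."""
    # Assumption: tokens without a known vector are skipped
    vectors = [word_vectors[t] for t in tokens if t in word_vectors]
    if not vectors:
        return [0.0] * dim
    return [sum(v[d] for v in vectors) / len(vectors) for d in range(dim)]


# Invented 3-dimensional stand-ins for pre-trained 96-dimensional vectors
toy_vectors = {
    "guten": [1.0, 0.0, 2.0],
    "tag": [3.0, 2.0, 0.0],
}
doc_vec = document_vector(["guten", "tag", "unbekannt"], toy_vectors, dim=3)
```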

Paraverbal cues: Preprocessing and feature extraction

Preprocessing

For the extraction of the paraverbal cues, we preprocessed the videotapes of the role-plays on the basis of the transcripts’ timestamps, extracting video snippets that contained only the target’s turns in the interaction. We concatenated these video snippets into a single file per target and interaction. We then extracted the audio track from each file and used it as the source for extracting the paraverbal cues.

Feature extraction

To extract paraverbal cues, we relied on the extended Geneva Minimalistic Acoustic Parameter Set (Eyben et al., 2016) implemented in openSMILE (Eyben et al., 2010). This parameter set was created with the aim of enhancing comparability across studies investigating paraverbal behavior. In total, it includes 88 different descriptive statistics (e.g., mean, variability metrics) from 31 different voice characteristics that map onto four different groups: frequency-related cues (i.e., What is a person’s pitch when they speak?), energy-related cues (i.e., How loud does a person speak?), spectral cues (i.e., How clear is a person’s voice?), and temporal cues (i.e., When does a person speak?).

Additional variables

Further, we included a selection of additional descriptive variables in our analyses: targets’ sex and age, as well as variables indicating targets’ cohort and targeted program of study (i.e., human medicine vs. dentistry).

Feature sets

In our analyses, we differentiated between three different feature sets. Each feature set consisted of the respective set of language cues and the C_av = 5 additional variables. First, the combined language feature set contained both verbal and paraverbal cues (c = 20,289) and was of size C_both = 20,294. Second, the verbal feature set (c = 20,201) was of size C_verbal = 20,206. Third, the paraverbal feature set (c = 88) was of size C_paraverbal = 93.
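The feature set sizes can be reproduced with simple arithmetic. The per-group breakdown of the verbal cues below is inferred from the cue group descriptions (five meta-features, 10,000 unweighted and 10,000 weighted word frequencies, 100 topics, 96 embedding dimensions) and should be read as an assumption that happens to match the reported total.

```python
# Inferred breakdown of the verbal cue groups (assumption; see lead-in)
verbal_groups = {
    "meta_features": 5,
    "unweighted_word_frequencies": 10_000,
    "weighted_word_frequencies": 10_000,
    "topics": 100,
    "embedding_dimensions": 96,
}
n_verbal = sum(verbal_groups.values())
n_paraverbal = 88   # acoustic parameters
n_additional = 5    # additional variables

feature_set_sizes = {
    "combined": n_verbal + n_paraverbal + n_additional,
    "verbal": n_verbal + n_additional,
    "paraverbal": n_paraverbal + n_additional,
}
```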

Modeling and performance evaluation

Machine learning modeling

We relied on two categories of supervised ML models to predict the perceivers’ ratings based on the targets’ language behavior and compared their performances. We compared linear models, that is, models that integrate predictors (i.e., cues) in a linear fashion to predict an outcome (i.e., perceivers’ ratings), against nonlinear models, allowing for the modeling of nonlinear patterns, such as complex interactions between features.

We trained Lasso regression models (Tibshirani, 1996) as an example of a linear model. Lasso regression belongs to the family of regularized regressions. Like other regularized regressions, it penalizes the size of the feature weights, which favors capturing signal over noise and improves generalizability to unseen data. Lasso regression is well-suited for dealing with multicollinearity because it can set the weights of uninformative or redundant features to zero. This is especially useful when dealing with large numbers of features, because Lasso can shrink the size of the models, retaining only the most predictive features.

Random forest (RF) regression (Breiman, 2001a), our example of a nonlinear model, is an ML algorithm from the family of ensemble learning algorithms. It operates by growing multiple individual decision trees, each on a random subset of the data. In the end, the predictions of all individual trees are aggregated to form the global prediction of the RF model. This practice reduces overfitting while retaining the ability to capture complex interactions among features, thus making the final prediction more robust.

Performance evaluation

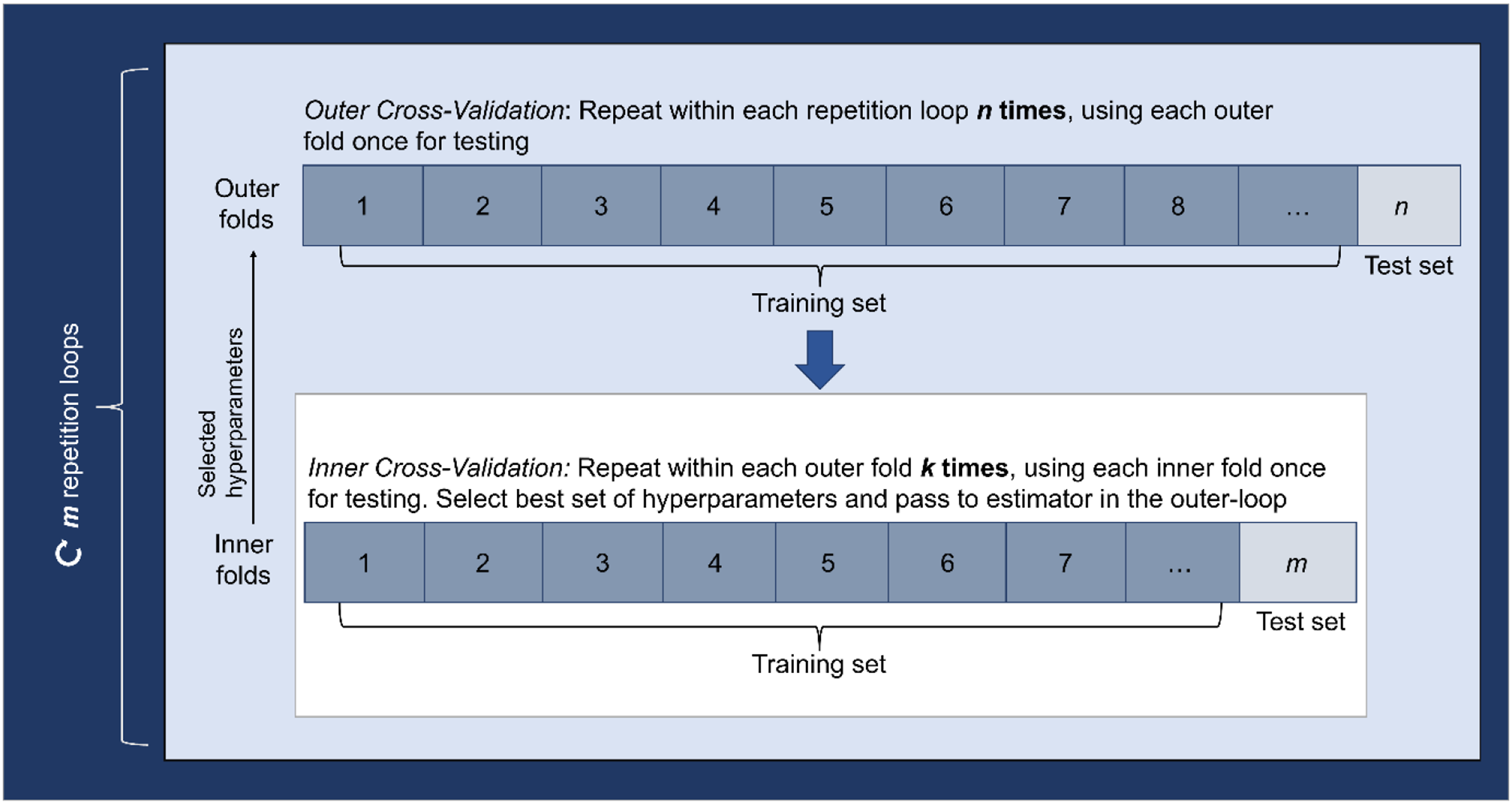

To ensure that a prediction model captures generalizable patterns rather than noise specific to the data it was trained on (i.e., to guard against overfitting), it is important to evaluate the performance of a prediction model on data that it was not trained on. When modeling includes the tuning of a model’s hyperparameters, nested resampling techniques (e.g., nested cross-validation) can be applied (Bischl et al., 2012; Bouckaert & Frank, 2004; Varma & Simon, 2006). Building on the idea of k-fold cross-validation, nested resampling adds an additional level of resampling to the evaluation process. That is, the data are partitioned into multiple subsets by nesting multiple resampling loops (n × k-folds). The outer loop of resampling divides the data into training and test sets. Within each iteration of the outer loop, an inner loop of resampling further divides the training set from the outer loop into training and test subsets. These subsets are then used to evaluate the performance of different hyperparameter configurations. The best configuration is then used in the outer loop to test the model’s performance on unseen data. This enables a comprehensive assessment of the model’s performance across various parameter configurations while keeping hyperparameter tuning separate from performance evaluation. To further ensure that the reported performance of the model is not skewed by the way the subsets in the data were created, the nested resampling approach can be repeated, generating different subsets for each repetition loop (m × n × k-folds; see Figure 3).

Figure 3. Illustration of repeated-nested resampling.

Our models were trained using a 10 × 10 × 10 repeated-nested resampling approach (Stachl et al., 2020). Where necessary, we performed the feature extraction (i.e., CountVectorizer, TfidfVectorizer, LDA) within the resampling procedure to avoid data leakage. We chose Pearson correlation coefficients between predicted and observed judgments as our performance metric.
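A scaled-down sketch of repeated-nested resampling with scikit-learn is shown below. It is an illustration under several assumptions rather than the study's implementation: the data are synthetic, the fold counts are reduced (2 repetitions × 3 outer folds × 3 inner folds instead of 10 × 10 × 10), the hyperparameter grid is invented, and the inner loop uses the default scoring of `GridSearchCV`. Any data-dependent preprocessing (here a scaler; in a text setting, vectorizers or LDA) sits inside the pipeline so that it is refit on each training split.

```python
import numpy as np
from sklearn.linear_model import Lasso
from sklearn.model_selection import GridSearchCV, KFold, RepeatedKFold
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data: 120 targets, 8 cues, two of which carry signal
rng = np.random.default_rng(0)
X = rng.normal(size=(120, 8))
y = 2.0 * X[:, 0] - 1.0 * X[:, 1] + rng.normal(scale=0.5, size=120)

# Outer loop with repetitions (scaled down for illustration)
outer = RepeatedKFold(n_splits=3, n_repeats=2, random_state=0)
scores = []
for train_idx, test_idx in outer.split(X):
    # Inner loop: hyperparameter tuning on the outer training set only
    inner = KFold(n_splits=3, shuffle=True, random_state=0)
    search = GridSearchCV(
        make_pipeline(StandardScaler(), Lasso()),
        param_grid={"lasso__alpha": [0.01, 0.1]},  # invented grid
        cv=inner,
    )
    search.fit(X[train_idx], y[train_idx])
    # Evaluate the tuned model on the untouched outer test set
    preds = search.predict(X[test_idx])
    scores.append(np.corrcoef(preds, y[test_idx])[0, 1])

mean_r = float(np.mean(scores))
sd_r = float(np.std(scores))
```

The mean and standard deviation of the outer-loop correlations correspond to the kind of summary (r and SD) reported for each model.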

Cue (Group) SHAP values

ML models have traditionally been regarded as black boxes that are highly effective at making predictions yet challenging to interpret (Breiman, 2001b; Fokkema et al., 2022; Stachl et al., 2020). Recent advancements in the field of explainable ML, however, have introduced a variety of methods to describe and interpret the cue integration processes of ML models. A prominent approach is using SHAP values (SHapley Additive exPlanations; Lundberg & Lee, 2017; Scheda & Diciotti, 2022). In contrast to other feature importance metrics, such as permutation feature importance (Breiman, 2001a), SHAP values provide not only an assessment of a feature’s overall importance but also information about the direction of a feature’s impact (Molnar, 2022). Rooted in cooperative game theory, SHAP values attribute the difference between a model’s actual prediction and the average prediction in its training data to individual features based on their contributions. In doing so, they allow insights at a local (i.e., for each prediction) and a global (i.e., for the full model) level. That is, SHAP values are computed for each individual prediction in a test set, enabling detailed local explanations. By aggregating these individual contributions across the dataset, global explanations can be extracted, offering insights into the overall importance and direction of each feature’s impact on the model. SHAP values are directly comparable across different predictions because they are expressed on the same scale as the model’s output. That is, the direction and magnitude of a single SHAP value indicate how much a feature moves the prediction for a specific instance relative to the average prediction (e.g., a SHAP value of +2.5 means the feature increases the prediction by 2.5 units for that instance).
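To make the attribution logic concrete, the sketch below computes exact Shapley values by brute force for an invented three-feature model. This is for illustration only: practical SHAP implementations rely on efficient model-specific or sampling-based algorithms, and the explainer used in this study is not specified in this section. Treating absent features by averaging over a background sample is likewise an assumption of this sketch.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, background):
    """Exact Shapley values for one instance x, by enumerating feature subsets."""
    n = len(x)

    def value(subset):
        # Expected prediction when features in `subset` take their values
        # from x and all other features are taken from background rows
        total = 0.0
        for b in background:
            total += predict([x[j] if j in subset else b[j] for j in range(n)])
        return total / len(background)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        contribution = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for s in combinations(others, size):
                contribution += weight * (value(set(s) | {i}) - value(set(s)))
        phi.append(contribution)
    return phi


def toy_model(features):
    # Invented model with one additive feature and one interaction
    return 1.0 + 2.0 * features[0] + features[1] * features[2]


background = [[0.0, 1.0, 2.0], [1.0, 0.0, 1.0], [2.0, 2.0, 0.0]]
x = [3.0, 1.0, 4.0]
phi = shapley_values(toy_model, x, background)
baseline = sum(toy_model(b) for b in background) / len(background)
```

The values in `phi` sum to the difference between the prediction for `x` and the average prediction over the background sample, which is the additivity property described above.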

Estimating SHAP values can be integrated with resampling approaches, for example, by extracting them in the outer loop of cross-validation (Scheda & Diciotti, 2022). This practice enables one to analyze the distribution of SHAP values across resampling steps, offering a more robust understanding of a single cue’s impact. As some of our features were dynamically generated (i.e., word frequencies and topics as open vocabulary features), the feature sets varied across resampling steps. Therefore, we aggregated the SHAP values for each single cue across the resampling steps in which it was part of the training set. As our aggregation metric, we used the mean of the absolute SHAP values, thus preventing positive and negative single-cue contributions from canceling each other out. To assess the directional impact of a given single cue, we extracted Pearson correlations between the SHAP values and feature (i.e., cue) values across all targets and resampling steps. These correlations reflect the general relationship between feature values and their contributions to the predictions, capturing the direction of a feature’s influence. In addition, we examined the relative frequency with which a single cue was assigned a nonzero SHAP value to assess its overall relevance to the model. Beyond this single cue perspective, we performed an analysis at the cue group level. For each outer-loop fold, we calculated the sum of the absolute SHAP values for all single cues within the same cue group and then aggregated these values across all outer-loop folds to determine the group’s overall importance.
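The aggregation steps described in this paragraph can be sketched as follows. The function names, the data layout (one list of SHAP values per resampling step), and the toy numbers are hypothetical. Counting a resampling step as "nonzero" when any of its SHAP values differs from zero, pooling all values for the direction correlation, and averaging group sums across folds are assumptions about details that the text leaves open.

```python
from math import sqrt


def pearson(a, b):
    """Pearson correlation of two equally long lists (NaN if undefined)."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return float("nan")
    return cov / sqrt(var_a * var_b)


def summarize_cue(shap_by_step, values_by_step):
    """Summarize one cue over the resampling steps in which it was available."""
    all_shap = [s for step in shap_by_step for s in step]
    all_values = [v for step in values_by_step for v in step]
    return {
        "mean_abs_shap": sum(abs(s) for s in all_shap) / len(all_shap),
        "direction_r": pearson(all_shap, all_values),
        # Assumption: a step counts as nonzero if any SHAP value differs from 0
        "nonzero_freq": sum(any(s != 0 for s in step) for step in shap_by_step)
        / len(shap_by_step),
    }


def group_importance(importance_by_fold, cue_to_group):
    """Sum cue importances per group within each fold, then average over folds."""
    totals = {}
    for fold in importance_by_fold:
        for cue, importance in fold.items():
            group = cue_to_group[cue]
            totals[group] = totals.get(group, 0.0) + importance
    return {g: total / len(importance_by_fold) for g, total in totals.items()}


# Invented toy data: one cue, two resampling steps with three targets each
summary = summarize_cue(
    shap_by_step=[[0.2, -0.1, 0.3], [0.0, 0.0, 0.0]],
    values_by_step=[[2.0, 1.0, 3.0], [1.0, 2.0, 3.0]],
)
groups = group_importance(
    [
        {"tfidf_a": 0.01, "tfidf_b": 0.02, "loudness_sd": 0.03},
        {"tfidf_a": 0.03, "loudness_sd": 0.01},
    ],
    {"tfidf_a": "tfidf", "tfidf_b": "tfidf", "loudness_sd": "energy"},
)
```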

Results

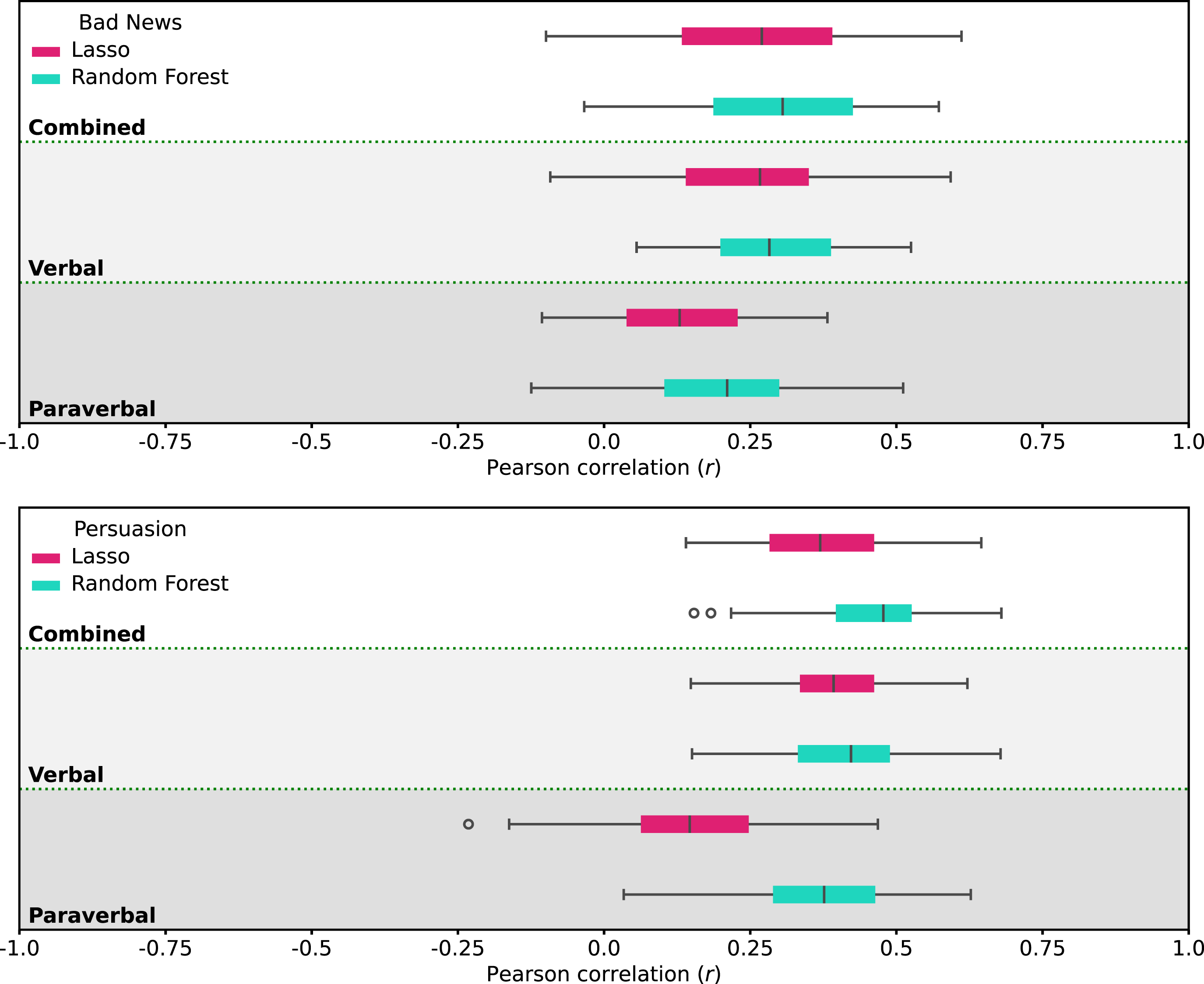

Both the linear (i.e., Lasso) and nonlinear (i.e., RF) models could predict performance judgments with considerable success across all three feature sets. For an overview of the predictive performance of all models across all exercises, see Figure 4.

Figure 4. Predictive performance overview of all models and exercises.

To address RQ1, that is, the predictivity of targets’ language behavior for perceivers’ performance judgments, we assessed the performance of the linear and nonlinear models trained on the combined feature set (verbal and paraverbal information). Across exercises, the Lasso model achieved a predictive performance² of r = .32 (SD = .16). The RF model surpassed this with a predictive performance of r = .38 (SD = .15). Higher predictive performances were achieved for the Persuasion exercise (Lasso: r = .38, SD = .12; RF: r = .46, SD = .11) compared to the Bad News exercise (Lasso: r = .26, SD = .16; RF: r = .31, SD = .14), with the RF models again outperforming their Lasso counterparts.

For RQ2, that is, which role verbal and paraverbal behavior play in predicting the performance judgments, we compared the predictive performance of the Lasso and RF models trained on the verbal feature set with their counterparts trained on the paraverbal feature set. Across exercises, the verbal models’ performance exceeded their paraverbal counterparts’ predictive performance for both Lasso (verbal: r = .32, SD = .15; paraverbal: r = .14, SD = .13) and RF (verbal: r = .35, SD = .14; paraverbal: r = .29, SD = .15), with the RF models again outperforming their Lasso counterparts. On an exercise level, this pattern was replicated (see Figure 4). When integrating the results from RQ1 and RQ2, we found that the models trained on the verbal behaviors consistently outperformed the models trained on the paraverbal behaviors and that, across exercises, the combination of verbal and paraverbal cues in the combined feature set brought only marginal benefits in predictive performance for the RF model. In contrast, the predictive performance of the Lasso models trained on the combined and verbal-only feature sets was almost identical.

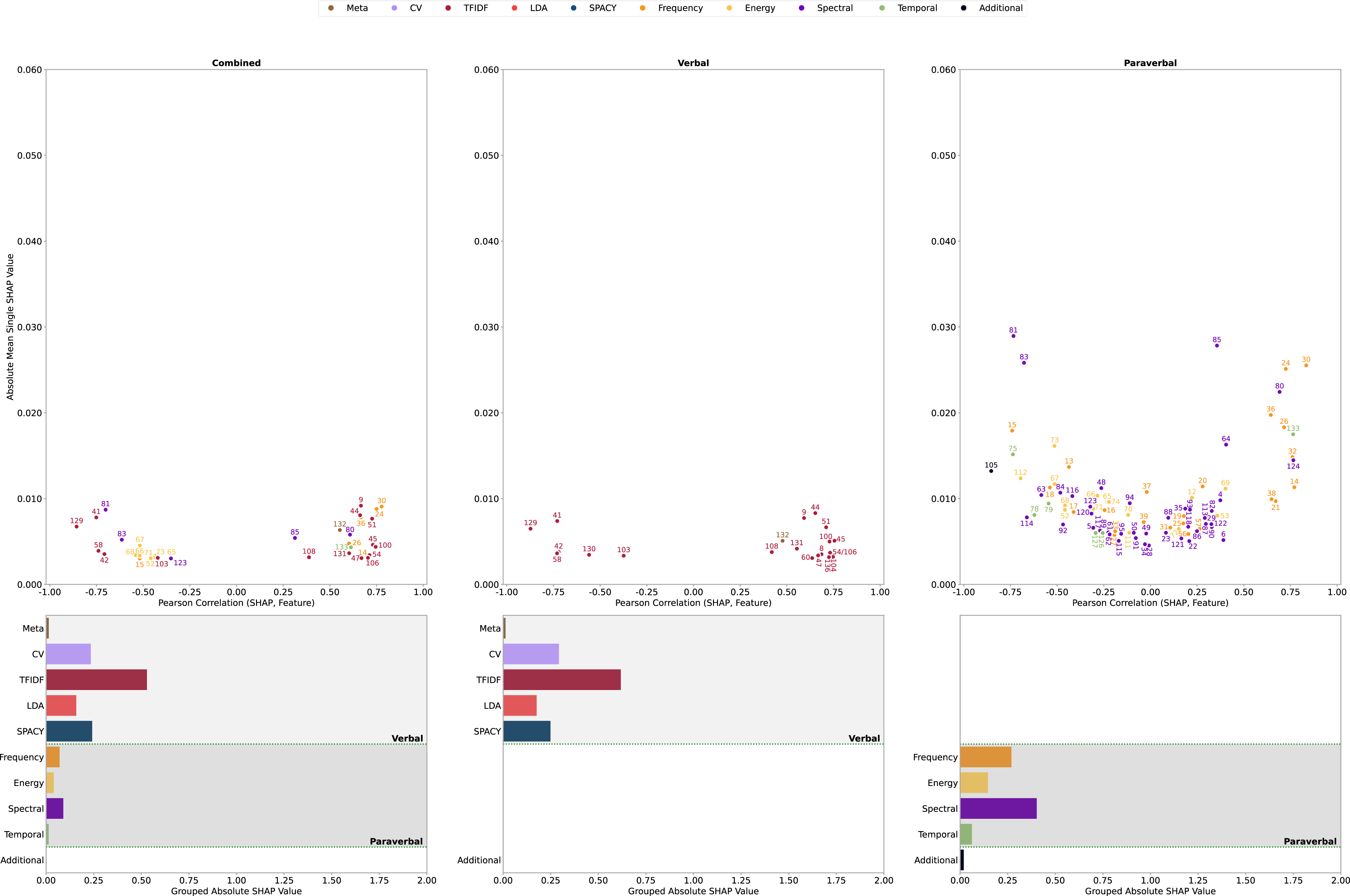

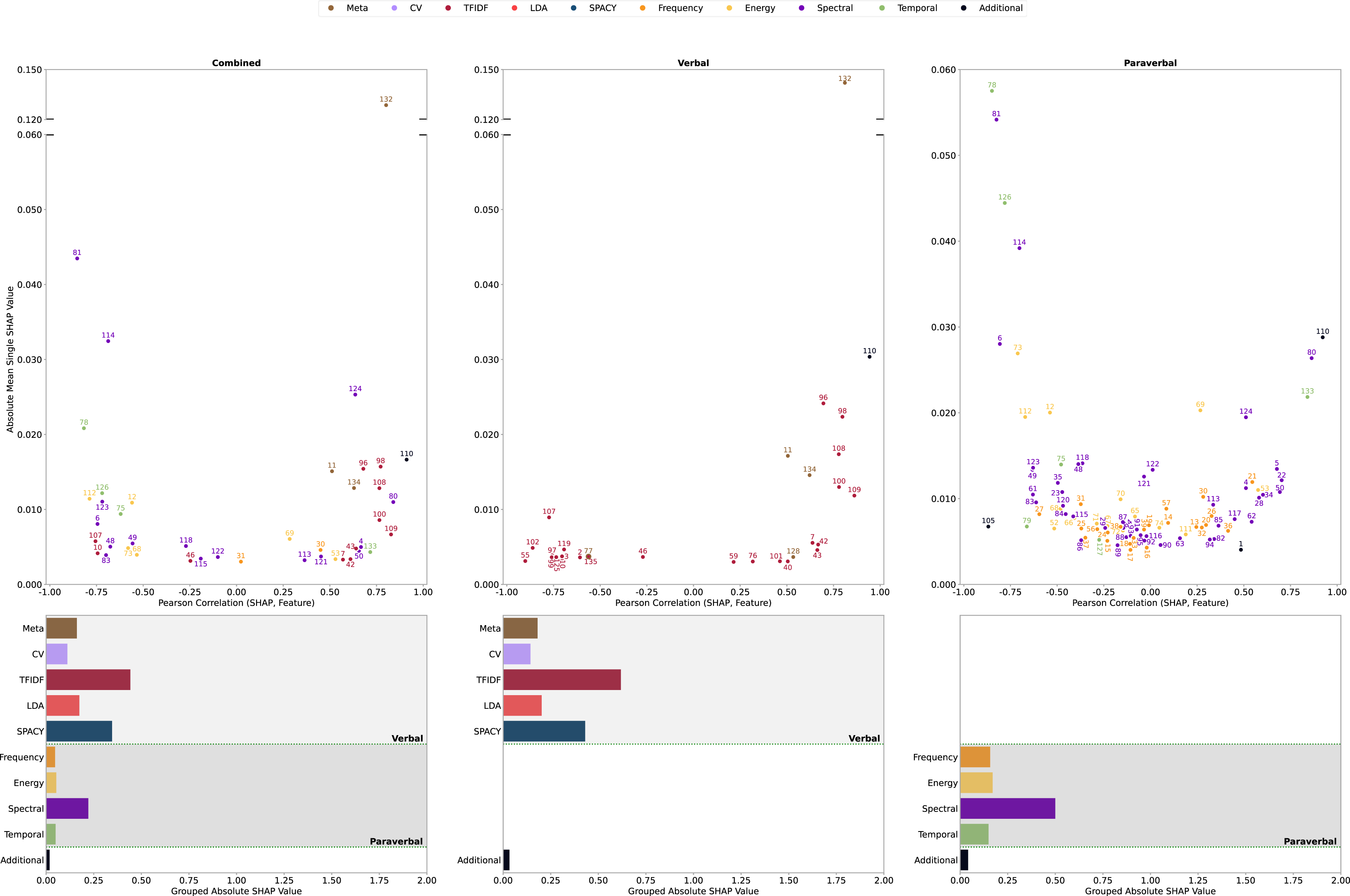

To address RQ3, we investigated which groups of cues and which specific single cues drove the predictive performance of our models. Since the RF models outperformed the Lasso models, we focus on the SHAP importance analyses for the RF models (see Appendix C for Lasso). We included single cues with an average absolute SHAP value of at least 0.003 and nonzero SHAP values in at least 50% of the resampling steps in which they were part of the respective training data. We then calculated and averaged the Pearson correlation of (the non-absolute) SHAP values and corresponding feature values (r) across resampling steps to assess a single cue’s directional impact. The directions of these correlations indicate whether higher feature values (e.g., a higher pitch) are associated with more positive (positive correlation) or more negative (negative correlation) predicted performance judgments. The absolute magnitude of the correlation reflects the proportion of the effect that can be attributed to a linear relationship, with higher values indicating a stronger linear association with the prediction. This approach yielded many important single cues per model. We focus on the most important single cues per important group of cues in the text and provide an overview in Figures 5 and 6 (see Appendices D and E for a list of single cue SHAP importance scores). Each figure displays the results of the SHAP analyses for one of the investigated exercises. Each column displays the results for one model, that is, the respective combined, verbal, and paraverbal model. The top row of each figure displays the results for the analyses on the single cue level. Each point in these plots represents a specific cue, with its position indicating both how strongly the model relied on it (higher values on the y-axis mean greater importance) and whether higher values of a cue were associated with higher or lower predicted performance (positive or negative values on the x-axis). Additionally, the strength of the correlation (distance from zero on the x-axis) reflects how much of the effect can be attributed to a linear relationship between the cue and the prediction, with stronger correlations indicating a stronger linear association. The distribution of points across the plot helps illustrate which cues were most influential, whether they tended to contribute positively or negatively to performance judgments, and whether they contributed linearly. The bottom row contains the results of the group-level SHAP analyses, displaying the sum of the absolute SHAP values per cue group (i.e., the overall importance of each cue group) for each of the respective models.

Figure 5. Random forest cue analysis Bad News exercise.

Figure 6. Random forest cue analysis Persuasion exercise.
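The inclusion rule for single cues (average absolute SHAP value of at least 0.003 and nonzero SHAP values in at least 50% of the relevant resampling steps) amounts to a simple filter. In the sketch below, the function name and data layout are hypothetical; the first two entries use values reported for the combined Bad News model, whereas the last two entries are invented to show cues that fail one of the two criteria.

```python
def select_cues(summaries, min_importance=0.003, min_nonzero_freq=0.5):
    """Return cue names passing both thresholds, sorted by importance."""
    kept = {
        name: s
        for name, s in summaries.items()
        if s["mean_abs_shap"] >= min_importance
        and s["nonzero_freq"] >= min_nonzero_freq
    }
    return sorted(kept, key=lambda name: kept[name]["mean_abs_shap"], reverse=True)


summaries = {
    "bescheid": {"mean_abs_shap": 0.0092, "nonzero_freq": 1.0},
    "großonkel": {"mean_abs_shap": 0.0081, "nonzero_freq": 1.0},
    # Invented entries for illustration
    "hypothetical_rare_cue": {"mean_abs_shap": 0.0100, "nonzero_freq": 0.3},
    "hypothetical_weak_cue": {"mean_abs_shap": 0.0010, "nonzero_freq": 1.0},
}
selected = select_cues(summaries)
```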

First, for the Bad News exercise, we found that for the RF model trained on the combined feature set (Figure 5, column 1; Appendix D1), all groups of language cues were important.³ For weighted word frequencies (TFIDF), two terms emerged as particularly predictive. Both words were associated with accurately conveying the specific content of the bad news in the respective exercise (bescheid [engl. inform; informing about bad news is the central task of the Bad News exercise]: SHAP = 0.0092, r = .67, freq = 1; großonkel [engl. great-uncle; a key figure in the Bad News exercise]: SHAP = 0.0081, r = .66, freq = 1). There were no unweighted word frequencies (CV) that exceeded the 0.003 SHAP value and .50 frequency threshold. However, spectral-related cues suggested that targets with deeper voices (characterized by greater energy accumulation in lower frequency ranges; MFCC1 M: SHAP = 0.0058, r = .61, freq = 1)⁴ and those with more stable voices (MFCC1 SD: SHAP = 0.0087, r = −.70, freq = 1) received higher performance judgments. The bandwidths of the first two formants turned out to be relevant frequency-related cues (F2 bandwidth M: SHAP = 0.0091, r = .78, freq = 1; F1 bandwidth M: SHAP = 0.0088, r = .75, freq = 1), both indicating that perceivers favored softer-sounding target voices. For the energy-related cues, two loudness descriptives were important (loudness M Rising Slope: SHAP = 0.0045, r = −.52, freq = 1; loudness IPR 80%–20%: SHAP = 0.0034, r = −.54, freq = 1), suggesting that perceivers rated calmly speaking targets higher. Additionally, perceivers rated targets who used a larger vocabulary (meta-feature, vocabulary size: SHAP = 0.0063, r = .55, freq = 1) and those who made fewer speech pauses (temporal-related, voiced segments per second: SHAP = 0.0043, r = .61, freq = 1) as better.

For the RF model trained on the verbal feature set (Figure 5, column 2; Appendix D2), all groups of verbal cues were relevant, with weighted word frequencies (TFIDF) being the most and meta-features the least important. Of the weighted word frequencies, the same two terms relevant to the combined model were relevant to the verbal model (großonkel [engl. great-uncle]: SHAP = 0.0083, r = .65, freq = 1; bescheid [engl. inform]: SHAP = 0.0077, r = .59, freq = 1). Again, there were no unweighted word frequencies (CV) that exceeded the 0.003 SHAP value and .50 frequency threshold, and the targets’ vocabulary size (SHAP = 0.0051, r = .48, freq = 1) was the most important meta-feature, which was positively associated with the perceivers’ judgments.

For the RF model trained on the paraverbal feature set (Figure 5, column 3; Appendix D3), all groups of paraverbal cues were relevant, with spectral-related cues being the most important and temporal-related cues being the least important. Moreover, the group of additional cues was relevant, even though its impact was small. For the spectral-related cues, targets that had stable voices (MFCC1 SD: SHAP = 0.0289, r = −.73, freq = 1) but still appeared engaged (MFCC2 SD: SHAP = 0.0278, r = .36, freq = 1) received more positive judgments. Again, the bandwidths of the first two formants were relevant cues, indicating that soft-voiced targets received better performance judgments (F2 bandwidth M: SHAP = 0.0255, r = .83, freq = 1; F1 bandwidth M: SHAP = 0.0251, r = .72, freq = 1). Relevant energy-related cues suggested that perceivers preferred more calmly speaking targets (loudness SD: SHAP = 0.0161, r = −.51, freq = 1) with a healthy-sounding voice (shimmer local decibel SD: SHAP = 0.0124, r = −.70, freq = 1). In addition, regarding temporal-related cues, targets that made fewer speech pauses (voiced segments per second: SHAP = 0.0175, r = .76, freq = 1) and had fewer loudness peaks (loudness peaks per second: SHAP = 0.0152, r = −.74, freq = 1) received better performance judgments. Regarding additional cues, human medicine targets received better ratings than dentistry targets (program: SHAP = 0.0132, r = −.85, freq = 1).

Second, for the Persuasion exercise, we found that for the RF model trained on the combined feature set (Figure 6, column 1; Appendix E1), all groups of cues were important, with weighted word frequencies (TFIDF) being the most impactful and additional cues being the least. For the weighted word frequencies, the frequent use of terms signaling persistence in persuading the role-player (…, ne? [engl. colloq. …, no?/…, right?]: SHAP = 0.0157, r = .77, freq = 1; see Appendix B2), as well as terms expressing understanding and reassurance fitting the context of the Persuasion exercise (mhm: SHAP = 0.0154, r = .68, freq = 1), led to higher performance judgments. An analysis of the spectral-related cues revealed that targets who had stable voices (MFCC1 SD: SHAP = 0.0435, r = −.85, freq = 1) and those who maintained a soft speech style (slopeUV M (500–1500): SHAP = 0.0325, r = −.69, freq = 1) received higher performance judgments. Additionally, for meta-features, targets who demonstrated a more extensive vocabulary (vocabulary size: SHAP = 0.1286, r = .80, freq = 1) and spoke more overall (character count: SHAP = 0.0151, r = .51, freq = 1) received higher performance judgments. Again, no unweighted word frequencies (CV) surpassed the 0.003 SHAP value and .50 frequency threshold. Regarding energy-related cues, targets with a healthy-sounding (shimmer local decibel SD: SHAP = 0.0114, r = −.79, freq = 1), calm voice (decibel sound level: SHAP = 0.0109, r = −.56, freq = 1) achieved greater success. From a temporal cue perspective, overly long speech pauses were negatively associated with performance judgments (unvoiced segment length M: SHAP = 0.0208, r = −.82, freq = 1; unvoiced segment length SD: SHAP = 0.0122, r = −.72, freq = 1). For frequency-related cues, insights suggested that perceivers favored targets with soft-sounding voices (F2 bandwidth M: SHAP = 0.0046, r = .45, freq = 1; F2 bandwidth SD: SHAP = 0.0030, r = .02, freq = 1). Overall, targets from the final semester batch included in this study received higher ratings compared to those from earlier batches (semester1: SHAP = 0.0167, r = .91, freq = 1).

For the RF model trained on the verbal feature set (Figure 6, column 2; Appendix E2), weighted word frequencies (TFIDF) were the most relevant, whereas additional cues were the least, with all groups of cues being somewhat important. On a single cue level, single cues relevant to the combined model were also relevant to the verbal model. Specifically, more frequent usage of the same terms (i.e., weighted word frequencies, TFIDF), signaling reassurance (mhm: SHAP = 0.0241, r = .70, freq = 1) and demonstrating persistence in persuading the role-player (…, ne? [engl. colloq. …, no? / …, right?]: SHAP = 0.0224, r = .80, freq = 1), led to higher performance judgments. Regarding meta-features, perceivers evaluated targets that showcased a more extensive vocabulary (vocabulary size: SHAP = 0.1420, r = .81, freq = 1) and spoke more overall (character count: SHAP = 0.0171, r = .51, freq = 1) as better. Again, no unweighted word frequency single cues (CV) surpassed the 0.003 SHAP value and .50 frequency threshold, and the final semester batch considered in this study (i.e., additional cues) generally received higher performance judgments than the remaining batches (semester1: SHAP = 0.0304, r = .94, freq = 1).

For the RF model trained on the paraverbal feature set (Figure 6, column 3; Appendix E3), all groups of cues were relevant, with spectral-related cues being the most influential and additional variables the least impactful. At the level of individual cues, the pattern was largely similar to the combined model. Specifically, for spectral-related cues, targets with stable voices (MFCC1 SD: SHAP = 0.0542, r = −.82, freq = 1) and those who maintained a soft speech style (slopeUV M (500–1500): SHAP = 0.0392, r = −.70, freq = 1) were judged more positively. Complementing this, regarding energy-related cues, perceivers favored targets whose voices were calm (loudness SD: SHAP = 0.0269, r = −.71, freq = 1) yet still easy to understand (loudness20%: SHAP = 0.0203, r = .27, freq = 1). Frequency-related cues further indicated that targets who displayed a higher variation in voice pitch increases, potentially signaling engagement and expressiveness (F0Rising Slope SD: SHAP = 0.0119, r = .55, freq = 1), and those who maintained a soft-voiced tone (F2 bandwidth M: SHAP = 0.0102, r = .28, freq = 1) were more successful. From a temporal cue perspective, targets who avoided making overly long speech pauses received higher performance judgments (unvoiced segment length M: SHAP = 0.0575, r = −.85, freq = 1; unvoiced segment length SD: SHAP = 0.0444, r = −.78, freq = 1). Targets from the final semester batch (semester1: SHAP = 0.0288, r = .92, freq = 1), as well as those studying human medicine (program: SHAP = 0.0067, r = −.87, freq = 1), were rated more favorably overall.

In sum, these analyses revealed that important single cues referred to the clarity of speech, acting with confidence, and task-oriented behavior. Interestingly, we found this pattern not only across exercises but also across behavioral domains, with the verbal and paraverbal cues delineating conceptually very similar target behaviors. At the same time, the analyses highlighted that while several cues stood out as particularly important, the predictive performance arises from the combination of many different cues. Furthermore, any interpretation of these cues should always be considered within the specific context of the exercise, as their relevance and impact may vary depending on the behavioral and situational demands.

Discussion

In this paper, we set out to demonstrate the utility of an ML-based approach to cue extraction and cue integration for research investigating judgments of individual differences. We argue that this approach holds great potential for a more fine-grained, behavioral investigation not only of performance judgments but of all kinds of interpersonal judgments, including, for example, personality judgments (Back & Nestler, 2016; Cannata et al., 2022; Funder, 2012; Letzring et al., 2021) and interpersonal attraction (Back, Schmukle, & Egloff, 2011; Eastwick, Joel, et al., 2023; Joel et al., 2017). First, automated cue extraction allows researchers to (a) drastically reduce the cost of cue extraction, thus (b) enabling a much more comprehensive extraction of behavioral cues, while at the same time (c) reducing the subjectivity of the assessments. Second, by applying ML models for cue integration analyses, one is able to deal with (a) a larger number of (b) correlated and partially redundant cues, and (c) their potentially complex interactive and nonlinear effects. Additionally, (d) through cross-validation, these models can provide more generalizable estimates of the predictivity of behavioral cues for social judgments.

Here, we demonstrated these advantages for the case of judgments of individual differences in performance by leveraging a natural language processing approach combined with ML prediction models for cue integration on transcripts and audio data from two interpersonal exercises. We extracted a wide range of fine-grained verbal (c = 20,201) and paraverbal (c = 88) language cues and trained different ML models to predict perceivers’ performance judgments. This led to three key contributions.

First, we provide a novel benchmark for the predictive value of fine-grained language behavior conceptualized as a combination of verbal and paraverbal behavior for judgments of interpersonal performance. We thereby extend prior research that focused on selections of manually extracted verbal or paraverbal cues or remained on a relatively high level of abstraction (e.g., McFarland et al., 2005; Oliver et al., 2016). A direct comparison of our models’ performance to prior research on predictions of performance judgments in interpersonal situations is not trivial since research relying on interpersonal behaviors is relatively scarce and most of the research was performed using in-sample model evaluations (e.g., Breil et al., 2023; Klehe et al., 2014; McFarland et al., 2005; Oliver et al., 2016).

With a cross-exercise performance of r = .38, our results underscore the significance of language behavior as a pertinent factor in predicting perceivers’ performance judgments in interpersonal settings and fit well into the range of model performances reported by Hickman et al. (2023; r avg = .44) who investigated the prediction of performance judgments in similar interpersonal challenges leveraging verbal behavior, albeit, in a lower-stakes context. The cue extraction approach leveraged by Hickman et al. (2023) is broadly comparable to our study’s extraction approach for verbal cues, although their work further integrated LIWC features (Pennebaker et al., 2015). Interestingly, an investigation of assessors’ cue integration strategies using broader, manually coded, theoretically derived interpersonal behaviors on a different subset of data from this study’s admission test (spanning verbal, paraverbal, and nonverbal behaviors) achieved a considerably higher predictive performance of r avg = .52 (Grunenberg et al., 2024). Whereas the results by Hickman et al. (2023) underline the importance of verbal behavior for judgments of interpersonal performance, the results of Grunenberg et al. (2024) highlight the potential of taking a wider variety of interpersonal behaviors, including nonverbal cues, into account to gain a better understanding of social judgments. It is important to recognize that the current set of features was extracted from transcripts and audio data of the role-play interactions without any involvement of human raters. Thus, the feature extraction process was perfectly objective, as it was not influenced by human judgment variance. This eliminates potential biases, such as halo or spillover effects, where an observer’s overall impression of an individual (e.g., physical attractiveness) unconsciously influences the perception and rating of specific behaviors, artificially inflating their association with overall performance. 
Our results further fit well into a series of emerging models predicting other psychological variables from similar interpersonal situations, such as interviewer-rated personality based on applicants’ general behavior (r avg = .42, Hickman et al., 2022) and specific language cues (r avg = .39, Hickman et al., 2021). Thus, by reporting cross-validated estimates for the predictivity of language behavior, our findings offer a valuable reference point for the predictive performance of language behavior for performance judgments in interpersonal settings specifically and also for the performance of the presented ML-based approach in general.
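The difference between in-sample and cross-validated estimates of predictive performance can be illustrated with a minimal sketch. It is not the pipeline used in this study: the data are simulated, the model is ordinary least squares, and all names and parameter values (e.g., `in_sample_vs_cross_validated`, 200 targets, 80 cues, 5 folds) are assumptions chosen purely for illustration.

```python
import numpy as np


def pearson_r(a, b):
    """Pearson correlation between two 1-D arrays."""
    return float(np.corrcoef(a, b)[0, 1])


def fit_ols(X, y):
    """Least-squares coefficients (intercept first)."""
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef


def predict_ols(coef, X):
    return np.column_stack([np.ones(len(X)), X]) @ coef


def in_sample_vs_cross_validated(n=200, p=80, k=5, seed=0):
    """Return (in-sample r, k-fold cross-validated r) for simulated data."""
    rng = np.random.default_rng(seed)
    X = rng.normal(size=(n, p))              # simulated cues (assumption)
    y = 0.5 * X[:, 0] + rng.normal(size=n)   # only the first cue carries signal

    # In-sample: fit and evaluate on the same observations.
    r_in = pearson_r(predict_ols(fit_ols(X, y), X), y)

    # Cross-validated: each prediction comes from a model that never saw
    # the corresponding observation during fitting.
    folds = np.array_split(rng.permutation(n), k)
    out_of_sample = np.empty(n)
    for test_idx in folds:
        train_mask = np.ones(n, dtype=bool)
        train_mask[test_idx] = False
        coef = fit_ols(X[train_mask], y[train_mask])
        out_of_sample[test_idx] = predict_ols(coef, X[test_idx])
    r_cv = pearson_r(out_of_sample, y)
    return r_in, r_cv


if __name__ == "__main__":
    r_in, r_cv = in_sample_vs_cross_validated()
    print(f"in-sample r = {r_in:.2f}, cross-validated r = {r_cv:.2f}")
```

With many cues relative to the number of targets, the in-sample correlation in this toy setting is inflated by overfitting, which is why in-sample results from prior studies are difficult to compare directly with cross-validated estimates.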

Second, considering language behavior more comprehensively allowed us to create novel insights into the predictive interplay of verbal and paraverbal behavior, more closely mirroring language expressions in actual social interactions. We found that both verbal (i.e., what someone says) and paraverbal (i.e., how someone says something) behaviors were predictive of the performance judgments, with the verbal models outperforming their paraverbal counterparts. This finding aligns with previous research arguing that verbal behavior should be especially relevant to perceivers in consequential judgment contexts, as it carries the most information (Kahneman, 2003; Martín-Raugh et al., 2023). Interestingly, combining verbal and paraverbal behavioral information into one model only led to marginal benefits at best, indicating that both domains were used to express similar information. Our findings further imply that interactive patterns that combine paraverbal and verbal information were not particularly important. That is, predictive interactive effects were mainly situated within the verbal and paraverbal domains, respectively. These findings open a new avenue for future research: Under which conditions does paraverbal information add predictive performance beyond verbal information, and do the interactive patterns of verbal and paraverbal information have predictive value?

Third, leveraging an interpretable ML approach, we investigated which cue groups and individual cues were most predictive. In line with the results of our model comparisons and prior research on ability judgments, our analyses revealed that for both verbal and paraverbal behavior, cues relating to calm, clear, and fluent speech as well as exercise-specific, task-oriented cues (i.e., cues related to the correct delivery of the bad news for the Bad News exercise and cues related to persistence for the Persuasion exercise) were most predictive (Breil et al., 2021; Murphy, 2007; Reynolds & Gifford, 2001). Interestingly, age and sex, which are typically considered potential bias variables, generally did not increase the predictive performance beyond verbal and paraverbal information. Thus, there was little evidence for general performance biases due to age and sex. Since the applied RF models approximate both linear and nonlinear patterns (i.e., potential interactions between variables, for example, loudness of voice being rated positively for men but negatively for women), the absence of any observed feature importance of age and sex suggests that these variables were not strongly utilized by the model in modeling the perceivers’ cue integration strategies, neither in an additive nor in an interactive way. This is an important finding supporting the quality of the applied perceiver training for this high-stakes selection context. Having access to information on a fine-grained behavioral level through the presented ML-based approach provides an important new opportunity for the investigation of the behavioral underpinnings of judgments and the identification of biases. Additionally, it allows for novel ways of analyzing the success of interventions beyond usual assessments on the score level (e.g., differences in performance scores for men and women).
Providing scalable and fast cue extraction and cue integration analyses also allows one to test if the underlying behavioral mechanisms were affected by an intervention (e.g., Are increased performance judgments mirrored by increased expressions of specific desired behaviors? Is a reduction in sex bias in performance judgments mirrored in a reduced differential judgment of certain behaviors for men and women?).

Beyond these three main contributions, our findings also provide more general information for cue integration research, particularly regarding the role of linear versus nonlinear models – a research topic with a long history in judgment research (Dawes, 1979; Einhorn, 1970; Meehl, 1954). Most of the research building on the lens model and related frameworks relied on linear models (Karelaia & Hogarth, 2008), and many have provided evidence for their relevance as well as their superiority over more complex nonlinear models (Goldberg, 1971; Hogarth & Karelaia, 2007; Ogilvie & Schmitt, 1979). There is, however, also relevant evidence for nonlinear effects (Brannick & Brannick, 1989; Dougherty & Thomas, 2012; Einhorn, 1971). One key factor that might explain this heterogeneity, as well as the often found dominance of linear models, is the considered (behavioral) information: Many prior studies have used some type of human-reported score variables (e.g., questionnaire scores) as cues (Dawes, 1979; Goldberg, 1971; Ogilvie & Schmitt, 1979). Research has shown that measurement error, which to some extent is inherent to self-report and other questionnaire-based scores, can obscure the true nonlinear relationship between cues and a target variable, masking genuine nonlinear effects in random error (Jacobucci & Grimm, 2020). Consistent with this perspective, our results demonstrate that while linear models trained on a large set of fine-grained, objectively extracted behavioral cues captured a substantial portion of the variance in performance judgments, nonlinear models consistently yielded a higher predictive performance. Although the improvement was moderate in magnitude, it was valuable in demonstrating the added value of nonlinear models for capturing more complex cue relationships. 
This also fits with other research using behavioral cues, demonstrating nonlinear relations of behavioral variables with judgments (e.g., Ames & Flynn, 2007; Berry & Lucas, 2022; Dougherty & Thomas, 2012; Hu et al., 2019; Pierce & Aguinis, 2013). Our findings highlight the importance of an objective, behavior-oriented perspective in research on judgments of all sorts of individual differences (e.g., performance, personality, attraction) and might reinvigorate the research on nonlinear behavioral effects.
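The argument that measurement error can mask nonlinear cue effects can be made concrete with a small simulation. The following is a sketch under stated assumptions rather than an analysis from this study: the judgment is simulated as a linear plus quadratic function of a single cue, the "noisy" cue adds random error to the true cue, and the function names and parameter values are hypothetical.

```python
import numpy as np


def r_squared(design, y):
    """In-sample R^2 of a least-squares fit with intercept."""
    A = np.column_stack([np.ones(len(y)), design])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    return 1.0 - resid.var() / y.var()


def nonlinear_gain(cue, y):
    """Increase in R^2 when a quadratic term is added to a linear model."""
    linear = r_squared(cue[:, None], y)
    quadratic = r_squared(np.column_stack([cue, cue ** 2]), y)
    return quadratic - linear


def simulate(n=20000, error_sd=1.0, seed=1):
    """Return the nonlinear gain for an error-free and an error-laden cue."""
    rng = np.random.default_rng(seed)
    true_cue = rng.normal(size=n)
    # Simulated judgment with a genuine nonlinear (quadratic) component.
    judgment = true_cue + true_cue ** 2 + rng.normal(size=n)
    # The same cue observed with random measurement error.
    noisy_cue = true_cue + rng.normal(scale=error_sd, size=n)
    return nonlinear_gain(true_cue, judgment), nonlinear_gain(noisy_cue, judgment)


if __name__ == "__main__":
    gain_clean, gain_noisy = simulate()
    print(f"gain with error-free cue:   {gain_clean:.3f}")
    print(f"gain with error-laden cue:  {gain_noisy:.3f}")
```

In this toy setting, the added value of the quadratic term shrinks markedly once the cue is measured with error, which is consistent with the reasoning that error-laden score variables favor linear models.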

Limitations and future research

This paper made a case for the utility of an ML-based approach for cue extraction and cue integration for research dealing with judgments of individual differences, using performance judgments as an example. However, as with any demonstration, we were limited to only capturing a selection of the actual potentials and boundaries of the presented approach. Thus, several limitations and opportunities for future applications must be noted.

First, this study focused on performance judgments as an example of how the presented ML-based approach can be applied. However, it is important to acknowledge the broader applicability of this approach. It can be applied to any type of social judgment given that relevant behavioral recordings and judgments are available. For example, we see strong potential to contribute to established lines of research by providing a more fine-grained understanding of the behavioral underpinnings of key social judgments such as personality judgments (Back, 2021; Cannata et al., 2022; Funder, 2012). Similarly, this approach also holds potential for the investigation of evaluative social judgments that, to date, are hardly predictable based on self-report information alone, for example, relationship effects of interpersonal attraction (Back, Schmukle, & Egloff, 2011; Eastwick, Joel, et al., 2023; Joel et al., 2017).

Second, we focused on language as the main channel to convey information in interpersonal situations. This approach can be extended to other channels and domains. Although they have received only limited attention in psychological research, there is, for example, an increasing variety of models available that allow researchers to consistently extract behavioral cues from visual data. Their application in judgment research would allow researchers to include additional domains of cues, such as nonverbal cues (i.e., differences in how people move and position their bodies including body language; e.g., Baltrusaitis et al., 2018; Brahmbhatt, 2013; Cao et al., 2021) or static cues (i.e., differences in how people look; for an overview, see, for example, Cheng et al., 2021) to capture the universe of interpersonal behaviors even more comprehensively (Breil et al., 2021). Given that video data is available for many prior studies of interpersonal judgments, the extension of the presented approach to include behavioral cues from the visual channel seems like a promising avenue for future research.

Third, while the applied ML-based approach to cue extraction and integration allowed us to extract a wide variety of fine-grained cues, we still limited our extraction to summary statistics on the temporal dimension. Even though some of our cues considered time-dependent information (e.g., the variability of the MFCC over time), we were not able to consider this time-dependence for all cues and cue combinations. Since both domains of language behavior are highly temporal and context-dependent, there might be additional predictive potential in even more fine-grained analyses that incorporate a matching of the domains on the temporal dimension. Recent research on machine and deep learning architectures demonstrates this potential by showcasing the advantages of transformer-driven architectures that extract and integrate verbal cues in a temporally and contextually dependent manner (Gong et al., 2021; Vaswani et al., 2017; Wolf et al., 2020). For paraverbal behaviors, suitable adaptations of the transformer architecture exist (e.g., Dosovitskiy et al., 2020). However, due to their complexity, these architectures are challenging to train because, among other challenges, they require large amounts of training data and computational resources.

Fourth, to limit the (computational) complexity of the presented example, we only reported simple SHAP values that do not distinguish between main and interactive effects. There are, however, adaptations of the SHAP logic to interactive effects, making it possible to analyze the importance of second-order, third-order, and higher-order interactions (Lundberg et al., 2020). Their computational cost and their challenging interpretation for models trained on large numbers of features would, in turn, necessitate exploring mitigation strategies such as advanced feature selection and aggregation techniques, potentially reducing the granularity of the extracted insights on the behavioral level. While beyond the scope of this study, exploring the application of SHAP interaction values could be a valuable extension of this approach in future research.
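To illustrate what separating main and interactive effects means, the following minimal sketch computes exact Shapley values and pairwise Shapley interaction values by brute-force enumeration for a hypothetical three-cue model. It is not the optimized tree-based algorithm and not the analysis used in this study: the toy model, the all-zero baseline used to represent "absent" cues, and all function names are assumptions for illustration only.

```python
from fractions import Fraction
from itertools import combinations
from math import factorial


def toy_model(x):
    # Hypothetical model: one additive cue plus one pure two-way interaction.
    return 2 * x[0] + x[1] * x[2]


def coalition_value(model, x, baseline, present):
    """Model output when only the cues in `present` take their observed value."""
    mixed = [x[i] if i in present else baseline[i] for i in range(len(x))]
    return model(mixed)


def subsets(items):
    for size in range(len(items) + 1):
        yield from combinations(items, size)


def shapley_values(model, x, baseline):
    """Exact Shapley value per cue (no main/interaction distinction)."""
    m = len(x)
    phi = []
    for i in range(m):
        others = [j for j in range(m) if j != i]
        total = Fraction(0)
        for s in subsets(others):
            weight = Fraction(factorial(len(s)) * factorial(m - len(s) - 1),
                              factorial(m))
            with_i = coalition_value(model, x, baseline, set(s) | {i})
            without_i = coalition_value(model, x, baseline, set(s))
            total += weight * (with_i - without_i)
        phi.append(total)
    return phi


def interaction_matrix(model, x, baseline):
    """Off-diagonal: pairwise interaction effects; diagonal: main effects."""
    m = len(x)
    phi = shapley_values(model, x, baseline)
    mat = [[Fraction(0)] * m for _ in range(m)]
    for i, j in combinations(range(m), 2):
        others = [k for k in range(m) if k not in (i, j)]
        total = Fraction(0)
        for s in subsets(others):
            weight = Fraction(factorial(len(s)) * factorial(m - len(s) - 2),
                              2 * factorial(m - 1))
            base = set(s)
            delta = (coalition_value(model, x, baseline, base | {i, j})
                     - coalition_value(model, x, baseline, base | {i})
                     - coalition_value(model, x, baseline, base | {j})
                     + coalition_value(model, x, baseline, base))
            total += weight * delta
        mat[i][j] = mat[j][i] = total
    for i in range(m):
        # Main effect: what remains of the Shapley value after removing
        # the cue's share of all pairwise interactions.
        mat[i][i] = phi[i] - sum(mat[i][j] for j in range(m) if j != i)
    return mat


if __name__ == "__main__":
    x, baseline = [1, 2, 3], [0, 0, 0]
    print(shapley_values(toy_model, x, baseline))
    for row in interaction_matrix(toy_model, x, baseline):
        print(row)
```

In this toy case, the simple Shapley values assign importance to all three cues, whereas the interaction matrix shows that the first cue contributes only a main effect and the other two contribute only through their interaction. The enumeration over all cue subsets grows exponentially with the number of cues, which illustrates why such analyses become costly for models trained on thousands of cues.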

Fifth, although a sample size of N = 556 participants in a high-stakes, real-world application context using interpersonal role-play exercises is a strong improvement compared to typical research with actual behavioral data, it is still limited in the number of participants and exercises considered. One key reason for this limitation, beyond the high costs for the execution of the interpersonal role-plays themselves, is the cost of manually creating the relevant transcripts. The combination of privacy considerations due to the high-stakes nature of the data and the specialized vocabulary used in the medical field made it necessary to create the transcripts manually. However, progress in the field of natural language processing has now led to the development of several services that show remarkable precision for automatically transcribing audio streams, such as OpenAI’s Whisper model (Radford et al., 2023), allowing future research without such restrictions to easily automate this preprocessing step as well.

Sixth, another limitation of this study is the composition of the sample, which was predominantly White, highly educated, and skewed toward female applicants (73% female). While this reflects the typical demographics of applicants in the study context, this imbalance may have reduced the models’ sensitivity to subtle or subgroup-specific effects. Regarding the sex imbalance, the absence of any predictive signal for sex across all variable importance measures suggests that this information was not meaningfully utilized by the models. Future research should aim to include more balanced and diverse samples to validate these findings and ensure that the models are robust across different demographic groups.