Abstract

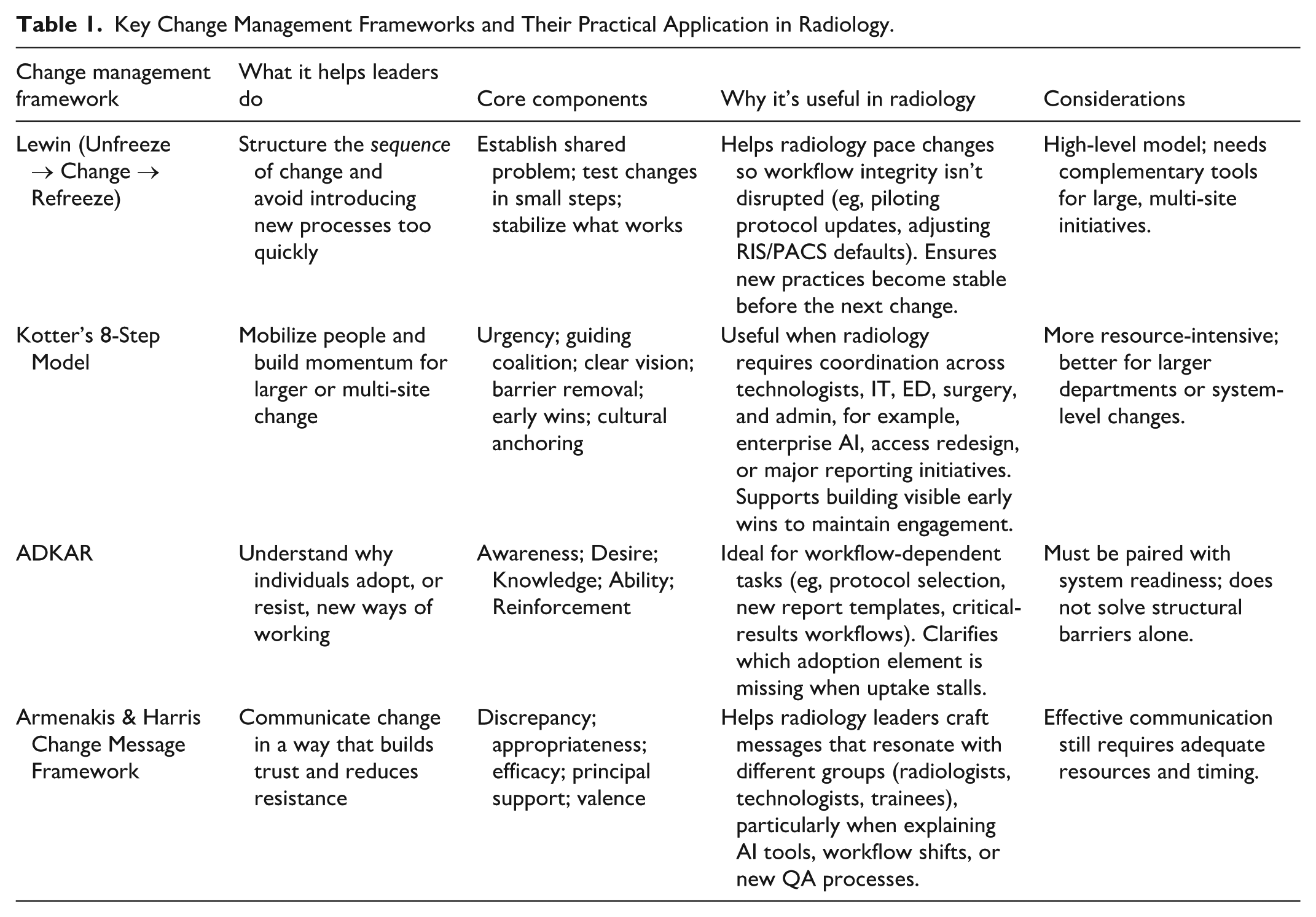

Radiology is experiencing rapid and interconnected change, including rising imaging volumes, expanding access demands, and the introduction of artificial intelligence into daily practice. However, many radiologists have limited exposure to structured approaches for leading change in complex clinical environments. Change management research provides a practical vocabulary and set of concepts that can help radiology leaders design and sequence change more effectively. Organizational readiness, encompassing cognitive, operational, trust, and resource dimensions, is consistently associated with successful transitions. Classic frameworks such as Lewin’s change stages, Kotter’s 8-step model for mobilizing teams, the ADKAR model for individual adoption, and Armenakis’ evidence-based change-messaging principles offer radiology-specific value when planning workflow adjustments, introducing new processes, or shaping departmental culture. Attention to workflow reality, early engagement of key groups, an understanding of human responses to change, appropriate pacing (particularly during leadership transitions), and clarity of communication further support sustainable change. Applying contemporary change management concepts can help radiology departments and leaders navigate evolving demands while maintaining coherence, stability, and high-quality patient care.

The Case for Effective Change Management in Radiology

Radiology is a specialty characterized by periods of change. 1 Currently, changes facing radiologists include imaging volumes that are rising faster than staffing, the piloting of artificial intelligence (AI) tools to assist with workflows, demands for increased access to imaging, and increasing expectations around reporting quality, communication, and patient experience. 2 Radiologists have always adapted to technological change, but the pace and interdependence of current changes are marked. Radiologists are increasingly pulled into roles where they must redesign workflows, lead digital initiatives, and steward quality programs, often across multiple sites.

What is often missing is a shared language and evidence base for how change succeeds inside complex, clinically constrained environments. Many radiologists have watched a promising AI tool, reporting template, or access redesign fail because the transition was not managed deliberately. 3 Change management research, much of it from business and organizational science, can provide radiologists with a set of tested concepts that help innovations take root. Change management principles can explain why some quality improvement initiatives flourish while others fall short, and why the same AI tool may integrate smoothly at one site but stall at another. This research holds that workflow redesigns succeed when they fit how people actually work rather than how we imagine they work. 4 There is an opportunity for radiology as a specialty to draw on a substantial evidence base from organizational psychology and business research and adapt those insights to the rhythms of imaging departments.

Radiologists are now expected to manage change continuously; however, many are not trained in, or familiar with, the change management principles needed to lead change effectively and in line with their vision. This article outlines the key frameworks that radiologists can use to design, communicate, and sustain change in a way that protects workflow integrity and enhances the clinical work of the department.

Organizational Readiness: The Strongest Predictor of Change Management Success

Among the most consistent findings in change management research is that organizational readiness predicts whether change will actually occur. Weiner’s theory defines “readiness” as a shared sense of commitment and confidence that the organization can execute a change. 5 A recent systematic review reinforces readiness as one of the strongest predictors of successful uptake across healthcare contexts. 6 In radiology, readiness is best understood across 4 dimensions:

Cognitive readiness: people need to understand why the change is needed and see evidence relevant to their own work. Radiology leaders must ask themselves: do radiologists, technologists, clerical staff, and trainees understand the problem the change is attempting to solve, supported by local data? Examples include variation in CT contrast protocols, timing of stroke CT reporting, or follow-up of incidental findings.7,8

Operational readiness: the radiology department must have enough capacity (staffing, scanner throughput, IT support) to absorb the temporary disruption caused by a change. Radiology leaders must ask themselves: is there enough capacity in scanners, staffing, and IT to tolerate the disruption and learning curve that change will introduce?

Trust readiness: people believe leadership will adjust workflows based on feedback and will not penalize early challenges. Radiology leaders must ask themselves: do people believe that if early difficulties arise, leadership will respond by adjusting the plan rather than blaming individuals?

Resource readiness: training time, technical support, hardware/software stability, and protected space exist to make adoption feasible. Radiology leaders must ask themselves: are time, training, and technical support genuinely available, or is the plan implicitly asking people to “fit it in” around everything else?

When readiness is high, even complex initiatives can succeed. 9 When readiness is low, even well-designed changes struggle. Readiness is a diagnostic tool: rather than asking only whether change should occur, radiology leaders should use it to ask “in what order, at what pace, and with what supports should change occur?” For example, a department might be cognitively ready for implementation of AI in stroke imaging but operationally saturated, suggesting an early focus on unlocking capacity or starting with a tightly scoped pilot.

The Introduction of a New Leader

One commonly overlooked insight concerns the timing of change. When a new chief, site director, or program lead begins a role, the department is already adjusting. People are watching for signals about priorities, culture, and expectations.10,11 That leadership transition is itself a form of change, or “unfreezing” in Lewin’s terms: existing patterns loosen and people become more attentive to what might shift. 12 This is rarely the main story in a department’s life; however, it is a background change. If major initiatives are introduced before the department has settled after the arrival of a new leader, they compete with this adjustment and can create a sense of saturation. 13 The main takeaway for radiology leaders in new positions is that their arrival is itself a significant change, and that they should pause before introducing further changes so as not to overwhelm the department faster than it can realistically absorb them. Recent reflections from Canadian radiology leaders reinforce this point, noting that the first months of a new leadership role are an adjustment period in which observing, listening, and understanding departmental culture often have greater impact than initiating rapid change. 14

Designing the Arc of Change: Applying Lewin and Kotter to Radiology

Kurt Lewin’s Unfreeze, Change, Refreeze model remains one of the most influential frameworks in organizational psychology. 12 Its main strength is in giving leaders a disciplined way to sequence change in tightly coupled environments, like radiology. Unfreezing in radiology means creating a shared view that the current state is no longer acceptable, grounded in data that clinicians recognize: turnaround times, safety incidents, equity of access, or patient experience. The change phase is where pilots and iterative adaptation occur. Refreezing means building successful practices into the structures that make behavior stick: Radiology Information System (RIS) and PACS defaults, structured report templates, checklists, orientation materials, routine quality reviews, or even patient-reported data.15,16 Weiner’s reappraisal of Lewin’s work emphasizes that the “refreeze” step in fast-changing environments marks a period of stability before the next wave of adaptation. 17

For larger or multi-site work, John Kotter’s 8-step framework adds a more granular way of mobilizing people at scale. This model, derived from analysis of numerous organizations, describes a sequence that includes: establishing urgency, building a guiding coalition, crafting and communicating a clear vision, removing barriers, generating short-term wins, sustaining acceleration, and anchoring changes in culture. 18 A radiology-relevant example might be an AI triage tool for intracranial hemorrhage. Urgency comes from local data on delayed reads overnight. The coalition includes neuroradiologists, ED clinicians, technologists, residents, and PACS and IT leads. The vision is concrete: every emergent bleed is prioritized within minutes of image acquisition. Barriers are identified and removed, such as separate AI dashboards that require extra clicks. Early wins are highlighted and shared. New patterns are then anchored by building AI into call orientation, quality assurance processes, and budgeting. Educational work has shown that Kotter’s framework can successfully guide redesign of complex curricula, which suggests its applicability for clinical and workflow change in radiology as well. 19 Kotter’s framework can therefore also be used to guide changes to radiology curricula, 20 or to make radiology workflows more patient-centered by introducing patient-reported outcome measures (PROMs), an area where change efforts can draw on lessons from PROM introduction in other clinical fields.21-24

Supporting Individual Adoption and Clear Change Messaging

Where Lewin and Kotter focus on systems, the ADKAR model focuses on people. The ADKAR framework identifies 5 outcomes that individuals need to achieve for a change to succeed: Awareness of the need for change, Desire to participate, Knowledge of how to change, Ability to implement required skills and behaviors, and Reinforcement to sustain the change. 25

Radiology is an ideal setting for ADKAR because change in radiology depends on individual behavior in real time. Harmonizing CT protocols across sites, for example, requires that technologists understand why variation matters, actually want the consistency it brings, know which protocol to select in which scenario, can apply it accurately on a busy shift, and receive signals that the new practice is noticed and valued. If any component of ADKAR is missing, the effort will feel fragile. Case reports from nursing and informatics show that using ADKAR explicitly can improve communication and training during complex clinical change.26,27

Armenakis and Harris add a complementary lens on communication and change messaging. They propose that effective change messages consistently convey 5 key ideas: that there is a meaningful gap between the current and desired state (discrepancy), that the proposed solution is appropriate (appropriateness), that the organization can succeed (efficacy), that key leaders support the change (principal support), and that the change has personal relevance and benefit (valence). 28 In a radiology context, introducing a peer learning model for quality improvement, a new critical-results communication process, or an AI-assisted workflow will land differently if the message staff hear conveys those 5 ideas clearly. The framework encourages leaders to design their narrative, moving beyond solely announcing an implementation date to changes rooted in strategic dialogue.

Understanding Human Reactions to Change

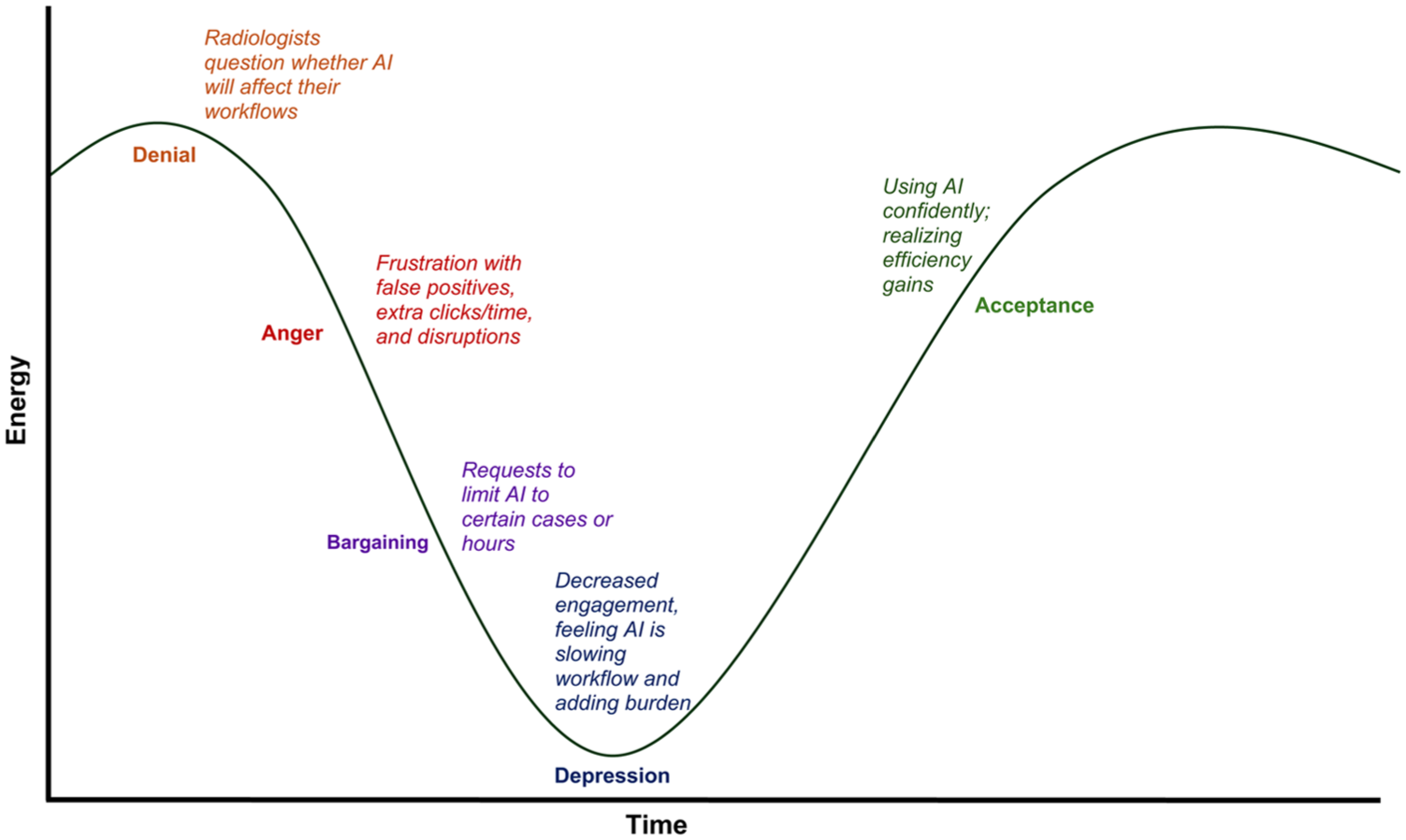

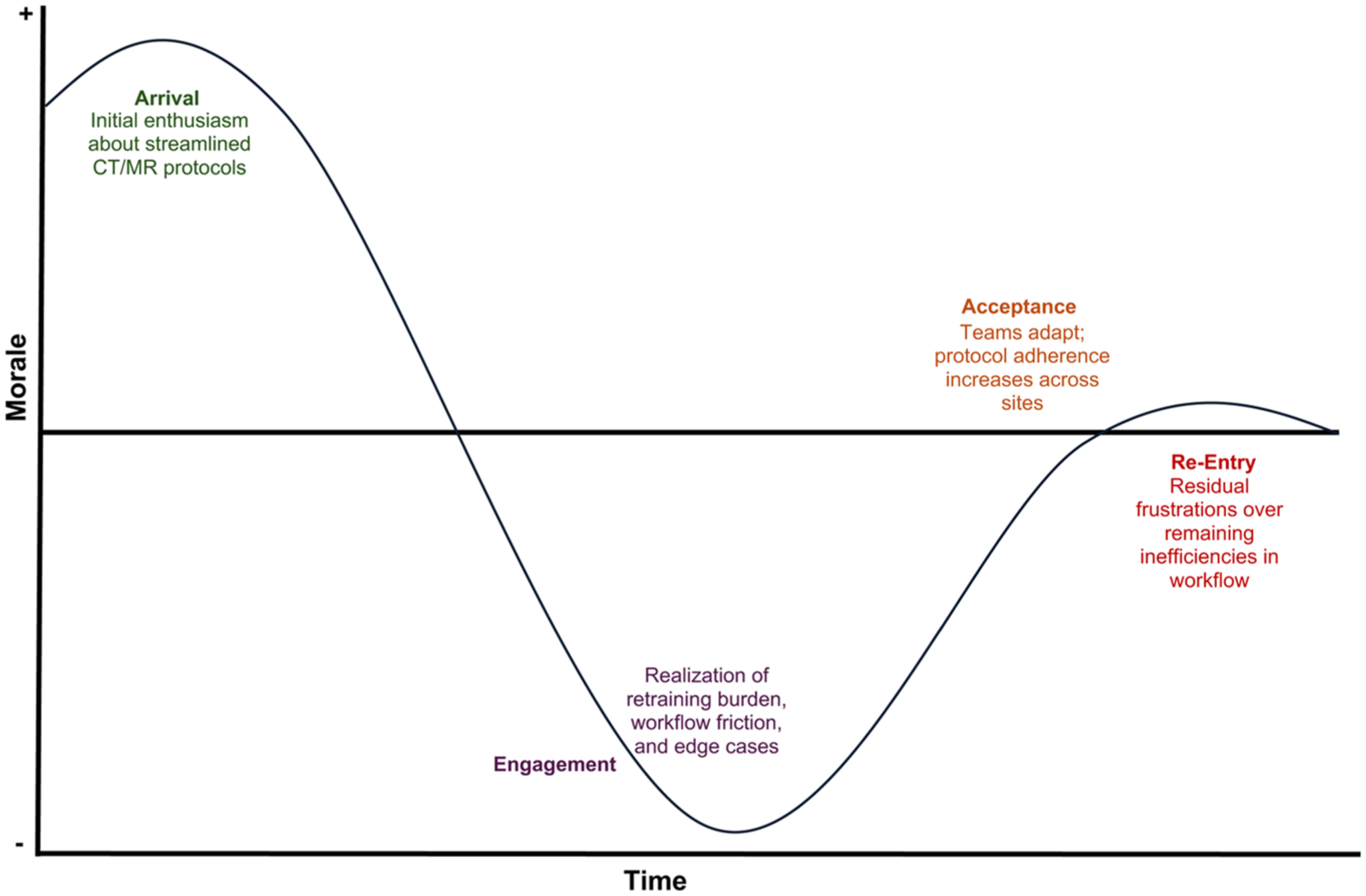

In addition to adoption and messaging frameworks, 2 psychological models, the Kübler-Ross Change Curve and the Menninger Change Curve, offer useful insights into how individuals experience transition. Originally developed to describe emotional responses to loss, the Kübler-Ross model has since been widely adapted to organizational change, outlining common stages such as shock, denial, frustration, exploration, and eventual acceptance.29,30 Radiology teams may move through similar reactions when confronted with new reporting templates, altered workflows, or AI decision-support tools, especially when these changes affect pace, cognitive load, or autonomy. The Menninger Change Curve, developed through research on organizational transitions, describes a related arc that includes immobilization, denial, depression, testing, and acceptance. 31 Both models underscore that early discomfort is often a natural psychological response rather than resistance to improvement. For radiology leaders, their value lies in normalizing these reactions, supporting staff through the “low points,” and creating structured opportunities for exploration and competence-building as teams move toward sustained adoption of new changes.

These concepts can be visualized using adapted change-curve examples from radiology practice. In a Kübler-Ross-style trajectory applied to AI workflow adoption (Figure 1), radiologists may begin by questioning whether the tool affects them, move through frustration with early false positives or added clicks, and enter a bargaining phase where they request narrower use. A temporary dip in engagement often follows before confidence and routine use emerge as the tool stabilizes. 32 Similarly, the Menninger curve (Figure 2) captures the morale pattern common in protocol-harmonization efforts: initial optimism about standardization, a predictable dip as retraining demands and workflow friction surface, and gradual recovery as teams adapt, sometimes followed by a smaller “re-entry” dip when unresolved inefficiencies persist. These curves can help radiology leaders anticipate normal patterns and support teams through the emotional and practical demands of change.

Figure 1. Adjustment curve for AI adoption in radiology (Kübler-Ross adaptation).

Figure 2. Menninger curve applied to radiology protocol harmonization.

Grounding Change in the Everyday Radiology Workflow

Much of the recent literature on AI in radiology emphasizes that accuracy alone is not enough for successful adoption. Many AI tools with excellent technical performance fail in practice if they do not fit the way radiologists and technologists actually work. Daye et al describe a framework for AI implementation that emphasizes infrastructure, workflow integration, and governance questions such as who decides which tools to adopt, how they are introduced into clinical practice, and how performance is monitored over time. 33 Strong AI performance also loses its relevance if the tool adds clicks, creates parallel workflows, or disrupts reading-room flow. Davis writes about AI and equity, arguing that radiologists should consider the entire imaging pathway, from who gets imaged, through acquisition and interpretation, to follow-up and communication. 34

One practical technique that follows from this work is “workflow storyboarding.” 35 Rather than starting from the algorithm or policy, radiology leaders trace what each stakeholder does before, during, and after an imaging study: the referring clinician ordering, the clerk scheduling, the technologist preparing the patient and scanning, the images entering PACS, the radiologist reading, reporting, and communicating, and the downstream team acting on the report. For each change, the questions become concrete: at what screen will AI output appear, in what sequence will cases be queued, how will discrepancies be handled, what happens when the system degrades at 3 a.m., and who owns each step. This way of working naturally leads to co-production. Radiologists, technologists, IT specialists, clerical staff, and sometimes patients are involved early in design. This improves both engagement and the quality of the change itself.

Pilots, Safe-to-Fail Experiments, and the “Invisible” Architecture That Shapes Change

Another key lesson from the business literature that can be applied to radiology is the value of safe-to-fail pilots. 36 Radiology is well suited to piloted, iterative change because modalities, scanners, and sites create natural units of experimentation. Starting with a single modality, pathway, or site allows teams to learn quickly and safely, generate early benefits, and identify unintended consequences before scaling. The literature on AI implementation recommends phased deployment with careful monitoring for exactly this reason. 33

Once a pilot works, sustainability depends on the “invisible architecture” of the department. This includes RIS and PACS defaults, structured report templates, contrast and protocol libraries, order sets, call-room workflows, orientation materials for new staff and trainees, QA dashboards, and peer learning systems. Schein’s work on culture emphasizes that what leaders pay attention to, measure, and build into structures eventually becomes “how we do things here.” 37 When a change is written into this architecture, it is far more likely to outlast the enthusiasm of its champions.

The culture of a radiology department also matters in more direct ways. Departments with stronger psychological safety, where people can raise concerns or admit uncertainty without fear of ridicule, dismissal, or punishment, are more capable of learning through change. 38 That is particularly important when introducing technologies such as AI, where the right response to an unexpected error is open discussion and system adjustment, rather than individual blame.

Small and resource-limited radiology departments encounter these structural constraints even more sharply. Sites with a single scanner, minimal technologist coverage, or limited IT support have far less buffer to absorb the temporary turbulence that accompanies change. Protected time for training or redesign may be difficult to secure, and even small workflow interruptions can cascade into access bottlenecks or delays. In these environments, realistic pacing and tight scoping become essential, and change must be introduced in pilotable, low-risk units that the department can absorb without destabilizing daily operations. Explicit planning for micro-supports (brief huddles, troubleshooting windows, or remote IT standby) can prevent avoidable disruption and help ensure that change progresses at a pace aligned with local capacity.

Why Change Management Matters for the Future of Radiology

Radiology operates at a unique leverage point in modern healthcare. Medical imaging informs diagnoses and management in almost all specialties, so the way radiology changes has consequences that propagate across entire systems. Radiology also has a long history of operating on the cutting edge as an early adopter of changes in healthcare. AI adoption, protocol harmonization, access redesign, and new quality frameworks affect when patients are imaged, how quickly they are diagnosed, how reliably critical information is communicated, and how equitably services are distributed. Change management, in this context, is part of the professional skill set required to translate good ideas into stable, safe, and meaningful improvements. Readiness theory, classic models such as Lewin and Kotter, individual frameworks like ADKAR, and message design principles from Armenakis, combined with workflow storyboarding, anticipation of human responses to change, piloting, and attention to invisible architecture, give radiologists a precise and evidence-informed way to shape the future of their departments. A summary table of key change management principles is provided in Table 1.

Table 1. Key Change Management Frameworks and Their Practical Application in Radiology.

The future of radiology almost certainly will involve more change, not less. The advantage for radiology is that it already has many of the habits needed for effective change work: comfort with data, pattern recognition, attention to detail and interdisciplinary collaboration. Bringing contemporary change management concepts into that mix increases the chances that when radiology changes, it does so in ways that are coherent, sustainable, and aligned with the care patients receive.

Footnotes

Author Contributions

RK was involved with conceptualizing the study. RK led the writing of the manuscript. RK and MP were involved with critical revision of the manuscript. All co-authors approve the submission.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.