Abstract

The introduction of artificial intelligence (AI) in interventional radiology (IR) will bring about new challenges and opportunities for patients and clinicians. AI may comprise software as a medical device or AI-integrated hardware and will require rigorous evaluation guided by the level of risk of the implementation. A hierarchy of risk of harm and the possible harms are described herein. A checklist to guide deployment of an AI tool in a clinical IR environment is provided. As AI continues to evolve, regulation and evaluation of AI medical devices will need to evolve with it to keep pace and ensure patient safety.

This is a visual representation of the abstract.

Introduction

As artificial intelligence (AI) begins to be used in clinical practice in interventional radiology (IR), clinicians will be tasked with the selection, implementation, and ongoing evaluation of these clinical tools. The AI tools may include either software as a medical device (SaMD) or hardware with AI tools built in. Surveys have shown that the majority of radiologists do not believe that AI will have a significant impact on clinical practice in the short term, but expect that it will in 5 or more years. 1 Indeed, there has been an exponential increase in AI-related publications in radiology since the mid-2000s. 2 A recent Food and Drug Administration publication noted that over 100 devices were approved for use in radiology in 2022, the highest number of any specialty. 3 In preparation for this change, interventional radiologists who are interested should consider developing domain expertise in the field of AI to be able to act as informed consumers and collaborators. In part 2 of this guide to AI implementation in IR, we explore types of implementations and associated risk management strategies along with consideration of policy and regulations. Finally, an 11-point checklist is proposed to provide clinicians with a structured approach when faced with AI implementation.

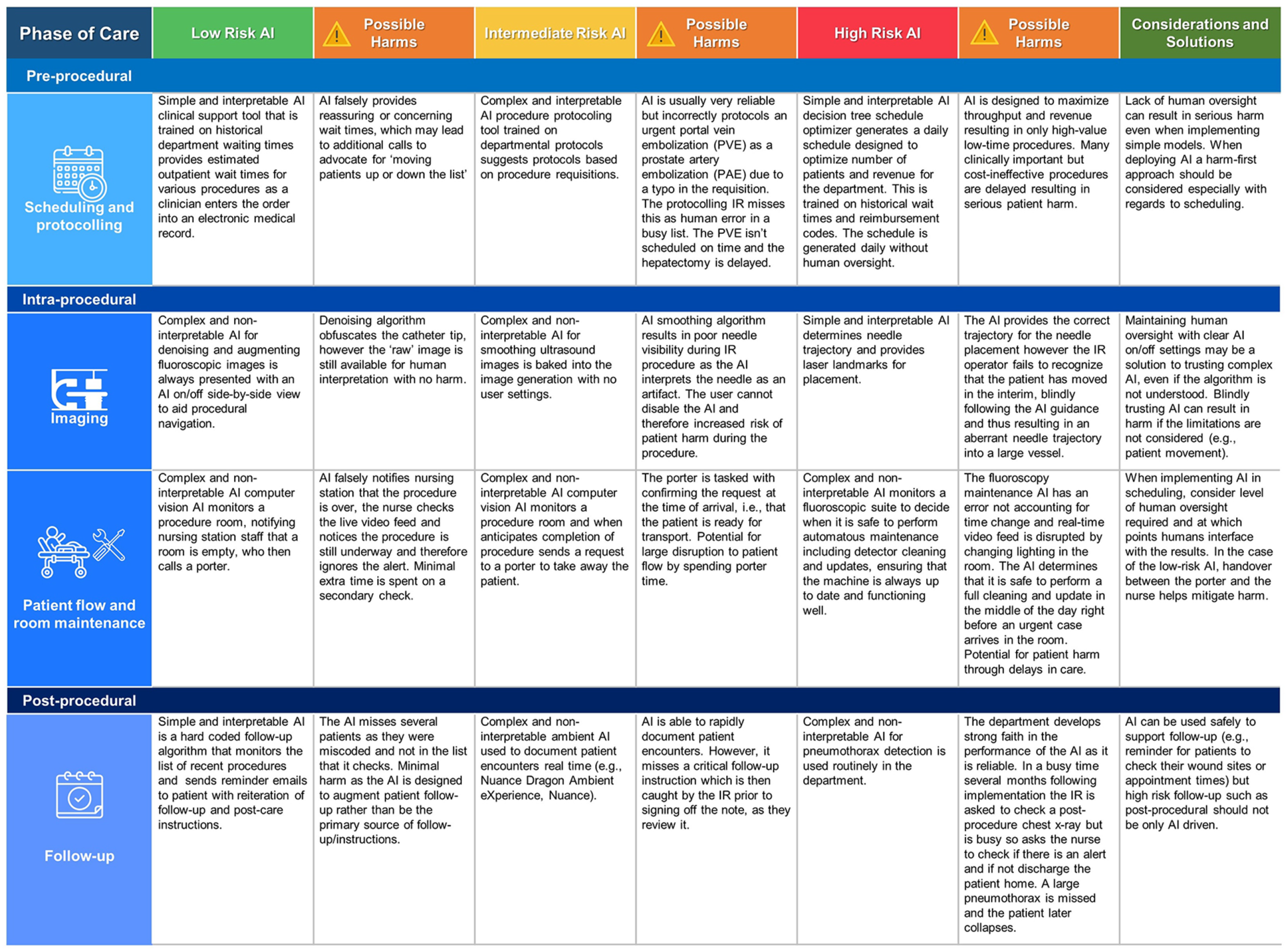

Risk of Harm

The World Health Organization released a guide on the ethics and governance of AI in healthcare. 4 In this guide, they reinforced the requirement for rigorous evaluation in the context of utilization (ie, real-world assessment), regular evaluation of models, and the need for independent oversight. There is increasing recognition of the possibility of harms related to AI in medicine and otherwise, to the point that the White House recently issued an executive order related to AI to ensure the protection of the security, privacy, and rights of Americans. 5 Increasingly, AI is treated like a medical device, requiring oversight from government regulators. 6 However, the black-box phenomenon associated with several types of AI presents a challenge in the oversight and evaluation of AI, as the mathematical underpinnings may not be well understood. Additionally, as AI tools are largely mathematical models that are inherently fragile, minor changes to the underlying model may merit complete re-evaluation, whereas a comparable change to a physical device may not require such extensive evaluation. 7 Broadly, there are 2 key components to risk management of AI in IR: (1) risk of the underlying AI, and (2) risk of implementation.

Risks that may become inherent or baked into the AI can be related to model selection and creation as well as the underlying data, as discussed in part 1. In brief, many AI tools are inherently greedy, aiming for the “easiest” solution to the problem and occasionally finding unsatisfactory shortcuts. 8 Machine learning-based techniques are built in such a way that they cannot be easily repaired with code patches, but rather may require re-training of the underlying model. 7 Additionally, pre-existing discrimination can be hard to identify in the data, and may subsequently be propagated and reinforced through model implementation. This is especially true in the context of high-dimensional data, where such discrimination may not be readily appreciable to humans.4,8 Considering these factors, we recommend a risk-based categorization approach to evaluation and implementation of AI in clinical settings. This categorization is adapted from the International Medical Device Regulators Forum categories for types of SaMD. 9

Low risk implementation: The AI model is isolated from clinical or administrative decisions or is sandboxed and provides a clinical tool without direct integration/autonomous decision making, remaining heavily supervised.

Intermediate risk implementation: AI guides clinical and administrative decisions with direct human oversight. Examples include integrated clinical support tools, AI-augmented imaging analysis, and patient monitoring tools.

High risk implementation: AI is charged with making clinical decisions or administrative decisions that may significantly alter patient care or flow with limited human oversight or completely autonomous AI without any human oversight. This unsupervised or minimally supervised implementation may include performing procedures (or portions of procedures), administering drugs, directing other healthcare providers, or AI only output (eg, only AI post-processed imaging with no raw/unprocessed data to interrogate).
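The three tiers above can be read as a simple decision rule. The following is a minimal sketch of that reading, not a validated triage tool: the field names (`influences_decisions`, `direct_human_oversight`, `raw_data_available`) are hypothetical simplifications introduced here for illustration, and a real assessment would weigh many more factors.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    INTERMEDIATE = "intermediate"
    HIGH = "high"


@dataclass(frozen=True)
class Implementation:
    # All field names are hypothetical, chosen only for this illustration.
    influences_decisions: bool    # output feeds clinical or administrative decisions
    direct_human_oversight: bool  # a human reviews the output before it takes effect
    raw_data_available: bool      # unprocessed data remain available to interrogate


def classify(impl: Implementation) -> RiskTier:
    """Map an implementation onto the three tiers described in the text."""
    if not impl.influences_decisions:
        # Isolated or sandboxed tool with no role in decision making
        return RiskTier.LOW
    if impl.direct_human_oversight and impl.raw_data_available:
        # AI guides decisions, but a human can check it against the source data
        return RiskTier.INTERMEDIATE
    # Limited or no oversight, or AI-only output with nothing to interrogate
    return RiskTier.HIGH


sandboxed_tool = Implementation(False, True, True)
decision_support = Implementation(True, True, True)
ai_only_imaging = Implementation(True, True, False)
```

Under these assumptions, `classify(ai_only_imaging)` falls in the high-risk tier even though a human is nominally in the loop, because there is no raw data against which the AI output can be checked.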

The level of risk may guide the extent to which evaluation is required (Figure 1). Simple and explainable AI in a low-risk implementation may not require much consideration prior to implementation. The level of risk may also guide more in-depth assessment for several specific types of risks including malicious use, patient risks, and organizational risks. 8 Nonetheless, regardless of the AI tool complexity, unforeseen circumstances can arise in our very complex healthcare systems and hence must be anticipated and planned for.8,10 In the following section several risk-focused considerations are raised.

Figure 1. Examples of implementation of types of AI as they relate to phases of patient care in IR, with varying levels of risk.

Considerations for AI Tool Implementation

AI implementation requires ongoing maintenance and updates, like other software and medical devices, with higher maintenance possibly required for SaMD. Long-term costs and resources associated with system implementation and maintenance should be considered at the outset. 4 Due to the fragility of AI systems, changes to the input data may require the entire AI system to be updated or replaced. For example, a local IR department may use an AI tool integrated into its PACS to detect incidental bone lesions on vascular MRI, trained on local data from current-generation MRI machines. Several years later the MRI machine is replaced, and the AI tool loses value because it no longer matches its prior performance: the input data used for inference (MRI sequences) have changed. The tool then requires substantial update, replacement, or removal.
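One way a department might notice this kind of input change is to compare a simple summary statistic of incoming studies against the data the model was trained on. The sketch below is illustrative only: the function names, the choice of statistic, the sample values, and the threshold of 2.0 are all assumptions, not a validated monitoring method.

```python
from statistics import mean, stdev


def standardized_shift(reference, current):
    """Distance between the current and reference means, in reference standard deviations."""
    ref_sd = stdev(reference)
    if ref_sd == 0:
        raise ValueError("reference values have no spread")
    return abs(mean(current) - mean(reference)) / ref_sd


def needs_review(reference, current, threshold=2.0):
    # The threshold is an arbitrary illustrative value, not a validated cut-off.
    return standardized_shift(reference, current) > threshold


# Hypothetical per-study summary values (for example, mean signal intensity)
old_scanner = [100.0, 102.0, 98.0, 101.0, 99.0]
new_scanner = [120.0, 123.0, 118.0, 121.0, 119.0]

flag_after_replacement = needs_review(old_scanner, new_scanner)
flag_without_change = needs_review(old_scanner, old_scanner)
```

A flag raised by a check like this would not show that the model has failed, only that its inputs no longer resemble the training data and that performance should be re-evaluated.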

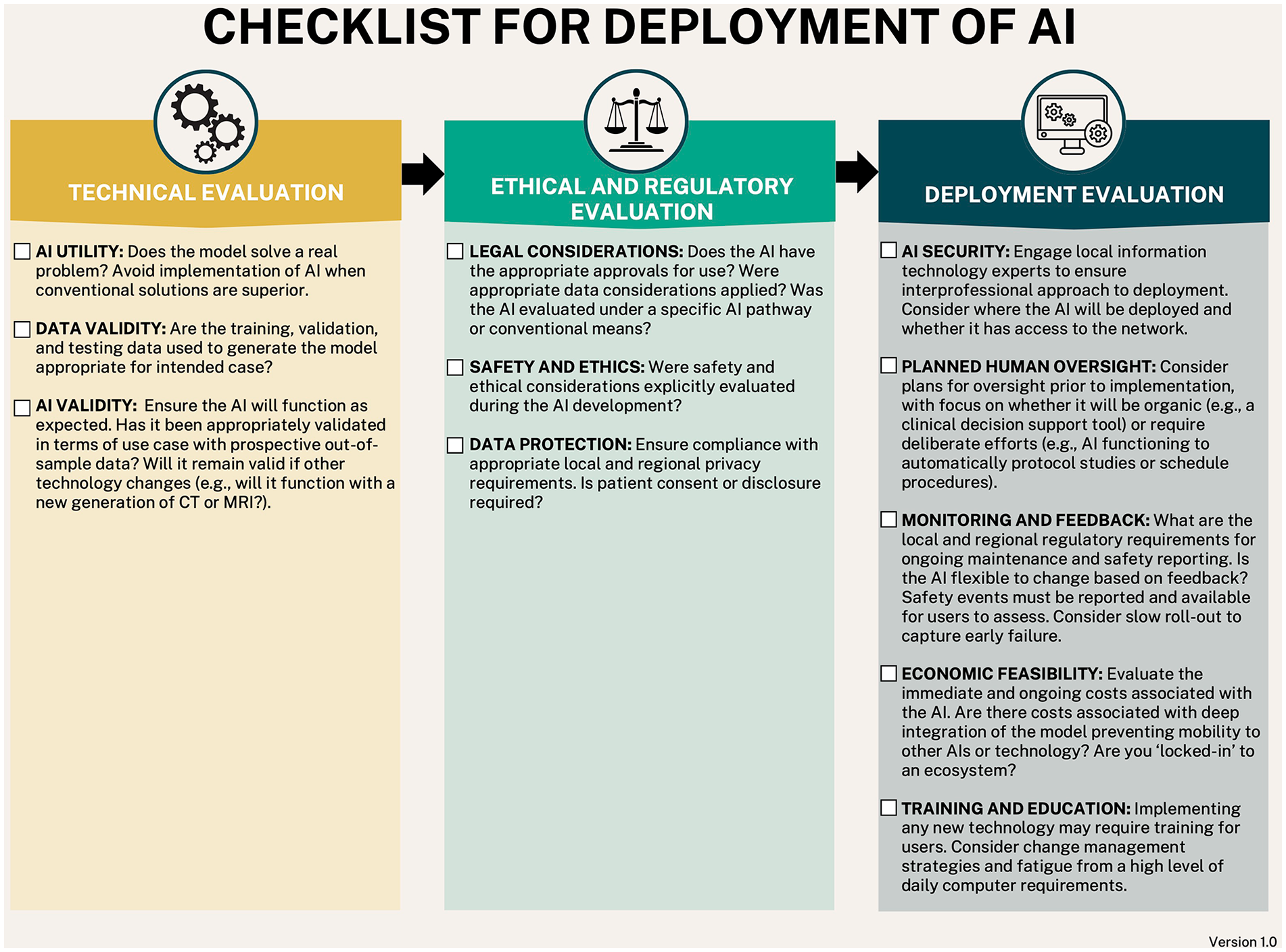

To date, many different value propositions have been made for AI. For example, one recent neuroimaging study demonstrated that quality of care, reduced costs, and saving user time were the most common value propositions. 11 Scrutiny of such claims is of critical importance in healthcare, where incompletely evaluated products can lead to patient harm or wasted resources. Frameworks for evaluation of technology have been previously proposed, and one that lends itself well to AI implementation is a framework for connected sensor technologies. 12 This approach focuses on validation, security, data, utility, usability, and economic feasibility. The final point, economic feasibility, remains at the forefront of many administrators' concerns, with payers likely to fund AI only if it can demonstrate value either through financial means (reduced cost or increased productivity) or outcomes. 13 Several checklists have been developed for various AI tasks, including dataset standards, peer review, and academic publishing. 14 For example, the Checklist for Artificial Intelligence in Medical Imaging is widely adopted across many medical imaging specialties and focuses on the development of AI models and research dissemination. 15 As IR departments aim to follow the principles of high reliability organizations, it is the responsibility of the department to remain preoccupied with safety and failure rather than just cost. 16 Therefore, the risk of implementation should drive further evaluation of an AI tool prior to implementation, be it a hardware device or SaMD. Checklists may act to support decision making and ensure uniformity. 16

We propose a modified version of such a framework in the form of a checklist to match the considerations for implementation of AI in IR (Figure 2).

Figure 2. 11-item checklist for AI tool evaluation and implementation.

There are additional considerations specific to the implementation of AI in specialized settings. For example, if the AI is allowed to continue learning (eg, reinforcement learning, self-updating) in the intermediate- or high-risk setting, there is a possibility for goal drift and catastrophic events without human oversight.7,8 AI models by design may exploit a given problem, finding any shortcut to the optimal solution. This may lead to unexpected results or a solution that is infeasible in the real world, with the possibility of catastrophic consequences. For example, an AI agent designed to reduce room turnover time in an angiography suite may eventually “discover” that the optimal solution is to book only highly complicated procedures that are likely to go overtime, thus reducing the number of room turnovers and consequently total daily turnover time. Alternatively, “poisoned” data can be used to alter the model as it learns to adapt to the poisoned data rather than normal clinical data. 17 Use of a continually updating AI should prompt the user to consider upgrading the risk category of the implementation. Importantly, these unexpected consequences may not be obvious at the time of implementation and may emerge only later, after the model is already in use. 8
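The angiography suite example can be made concrete with a toy calculation. The sketch below assumes a hypothetical 8-hour room day, a 30-minute turnover, two procedure lengths, and a requirement that at least 400 minutes of the day be booked; every number and name is invented for illustration. It compares the schedule that minimizes total turnover time with the schedule that treats the most patients.

```python
from itertools import product

DAY_MINUTES = 480         # hypothetical 8-hour room day
MIN_BOOKED_MINUTES = 400  # hypothetical rule that the room must be well used
TURNOVER_MINUTES = 30     # hypothetical time to turn the room over between cases
CASE_MINUTES = {"short": 60, "long": 225}  # hypothetical procedure lengths


def feasible_schedules():
    """Yield (n_short, n_long) case mixes that fit the room day."""
    for n_short, n_long in product(range(9), range(3)):
        n_cases = n_short + n_long
        if n_cases == 0:
            continue
        used = (n_short * CASE_MINUTES["short"]
                + n_long * CASE_MINUTES["long"]
                + (n_cases - 1) * TURNOVER_MINUTES)
        if MIN_BOOKED_MINUTES <= used <= DAY_MINUTES:
            yield n_short, n_long


def total_turnover(schedule):
    """The naive objective: minutes spent turning the room over."""
    n_short, n_long = schedule
    return (n_short + n_long - 1) * TURNOVER_MINUTES


def patients_treated(schedule):
    """An objective closer to what the department actually wants."""
    return sum(schedule)


naive_best = min(feasible_schedules(), key=total_turnover)
guarded_best = max(feasible_schedules(), key=patients_treated)
```

With these assumed numbers, the turnover-minimizing schedule books two long cases and no short ones, while the patient-maximizing schedule books five short cases. The objective as written is satisfied, but the intent behind it is not, which is the shortcut behavior described above.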

In the context of understanding the underlying AI tool, the source code used to generate the model should be considered. 18 Some commercial models may be difficult to audit due to confidential source code. Many tools are now open source, with numerous developers contributing and with code available for inspection to understand the AI models. The possibility for malicious code rises as these frameworks become more complicated. 19 AI developers may be unaware of the security implications of the code they are using. If working in concert with AI developers to generate a local model, consideration needs to be given to data sharing and the model used. Several machine learning algorithms can be used to store training data in obfuscated ways. For example, a decision tree with a leaf size of 1 could be used to memorize the training data, or a neural network could be used to reconstruct the training images. 20 It has also been demonstrated that patient re-identification can be performed with chest X-rays. 21 It may therefore initially be unclear what the risks of a model are. Ideally, IR departments looking to implement AI tools into their practice should have team members with dual domain expertise who are able to create a bridge between computer science and clinical practice. This reinforces the importance of introducing AI curricula in medical training.
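The decision tree case is easy to demonstrate on a small scale. The sketch below is a hand-written toy tree on a single feature, not the implementation of any particular library, and the training values are invented. It grows the tree until every leaf holds exactly one sample and then reads the stored labels straight back out of the fitted model.

```python
def build_tree(samples):
    """Grow a one-feature tree until every leaf holds exactly one sample.

    samples: list of (feature, label) pairs with distinct feature values.
    """
    samples = sorted(samples)
    if len(samples) == 1:
        return {"leaf": samples[0][1]}
    mid = len(samples) // 2
    # Split halfway between the two neighbouring feature values
    threshold = (samples[mid - 1][0] + samples[mid][0]) / 2
    return {
        "threshold": threshold,
        "left": build_tree(samples[:mid]),
        "right": build_tree(samples[mid:]),
    }


def predict(tree, x):
    """Follow the splits down to a leaf and return its stored label."""
    while "leaf" not in tree:
        tree = tree["left"] if x < tree["threshold"] else tree["right"]
    return tree["leaf"]


def leaf_values(tree):
    """Read every stored label out of the fitted tree, left to right."""
    if "leaf" in tree:
        return [tree["leaf"]]
    return leaf_values(tree["left"]) + leaf_values(tree["right"])


# Hypothetical training set: (patient age in years, lesion size in mm)
training = [(34, 12.5), (51, 8.0), (67, 20.1), (72, 5.4)]
tree = build_tree(training)
recovered = leaf_values(tree)
```

Here `recovered` contains every training label, and the split thresholds bracket each patient's age, so sharing the model is close to sharing the data. Larger minimum leaf sizes reduce this effect but do not by themselves guarantee privacy.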

Documentation and Regulation

The relatively slow implementation of AI in IR provides an opportunity to develop necessary regulatory guidance and frameworks for AI implementation. 22 In October 2021 a joint document titled Good Machine Learning Practice for Medical Device Deployment: Guiding Principles was produced by combined efforts of Health Canada, the United States Food and Drug Administration (FDA) and the United Kingdom Medicines & Healthcare products Regulatory Agency. 23 The document mirrors many of the guiding principles seen in other AI frameworks as well as in this two-part series.7,24,25 It highlights the interprofessional nature of such medical devices and the need for a socio-technical approach (ie, the focus on a human-AI team). Health Canada also recently released draft guidance in August 2023 on machine learning in medical devices (MLMD) including clear expectations such as declarations regarding whether a device uses machine learning (ML), for example, a hardware device with ML. 26 They outlined a lifecycle for MLMD with 8 stages, emphasizing the iterative nature of ML products both in healthcare and non-health care applications. Similar documents have been released in other countries, for example, the United Kingdom laid out a framework for software and AI as a medical device. 27 However, change is slow. In the US, the FDA evaluates medical devices with AI by conventional means and has approved multiple AI/ML powered SaMDs on the basis of similarity to old technology using the 510(k) pathway, including software pre-dating the 1990s. 28 Nevertheless, the aforementioned recognition by government authorities that AI medical devices do not necessarily behave like traditional software or physical devices is a positive step towards safe evaluation and deployment of such tools.

Further blurring the lines of product evaluation is the fact that AI is an umbrella term, ranging from very simple logic-based AI to highly complex and non-interpretable AI.29,30 Because of the broad nature of AI, greater clarity is needed as to which tools should undergo alternative regulatory approval and what that might look like. The healthcare industry must define what is considered an AI that warrants a more in-depth analysis versus a simple, weak, or classic AI tool. This differentiation is required because a complex non-interpretable AI may be under-evaluated by conventional pathways for medical device (software or hardware) evaluation. Conversely, a blanket AI regulatory framework may risk capturing simple or low-risk AI tools in unnecessary regulatory hurdles, thereby delaying important advances for patient safety. For example, a recent FDA publication outlined over 100 new radiology AI devices approved in 2022; however, it is unclear whether that number is accurate, since the definition of AI and the criteria for inclusion are vague. 3 Given the advances in the technology and rising concern from the AI industry regarding regulations, it is likely that AI safety experts, such as the Center for AI Safety (CAIS), will need to weigh in on the discussion.4,31 Additionally, organizations focused on AI safety and ethics will continue to provide insights on possible adverse events that may arise, with the hope that safety research parallels progression in AI research.32,33 It ultimately remains to be determined whether a device that uses AI or ML should be classified separately from other medical devices and where that differentiation should be drawn.

Moving forward, close documentation of device experiences will also be required, and the response to patterns of errors or harm should be proportionate to deployment scale. For example, a province-wide AI requires heightened sensitivity to errors, as the risk of harm is potentially much greater due to scale when compared to a small, locally developed and maintained AI. In the setting of interventional radiology, 2 types of AI will likely see implementation: (1) AI-augmented medical devices and (2) AI software as a medical device (SaMD), both of which should be monitored with a risk-based level of care, as either can result in error or harm. The ongoing development of AI registries will be key for IR physicians to keep pace with the latest technology and issues that arise.34,35 At present, given that AI tools marketed as medical devices fall under the auspices of their respective country’s medical device pathways, malfunctions should be recorded, similar to Canada’s mandatory reporting for therapeutic products under Vanessa’s Law. 36 Additionally, close collaboration between the AI industry and radiology clinicians is vital to support progress of AI in radiology. Early involvement of clinicians will help guide which tasks are solved by AI, ensuring ongoing transparency and rigorous evaluation of AI technologies with a view to improving the quality and safety of patient care.37,38

Conclusion and Advice for the Interested Interventional Radiologist

When considering new technology, many factors need to be evaluated, including the problem to be solved, underlying methods/technical details, and expected cost. AI brings forward several challenges which are in part related to nomenclature, with AI found both in software (SaMD) and hardware. Additionally, the underlying AI tool itself may range from very simple to highly complex. Therefore, an IR department must consider both the risk of the AI and the level of risk of its implementation. For AI in the IR clinical setting, possible patient harm, both physical and otherwise, must also be considered. The framework for level of implementation risk and the checklist for AI presented herein may be used as a guide in planning for AI. We expect that teams charged with decision making for AI devices in an IR department will perform better when staffed with IR clinicians or other team members who both understand the underlying problem (clinical domain knowledge) and have a grasp of AI techniques (AI domain knowledge). Close collaboration between the AI industry and clinicians will be a keystone in the development of safe AI in interventional radiology.

Footnotes

Acknowledgements

None.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Alexander Bilbily reports a relationship with 16 Bit Inc. that includes employment and equity or stocks. Alexander Bilbily is Co-CEO of 16 Bit Inc.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.