Abstract

Artificial intelligence (AI) is rapidly evolving and has transformative potential for interventional radiology (IR) clinical practice. However, formal training in AI may be limited for many clinicians and therefore presents a challenge for initial implementation and trust in AI. An understanding of the foundational concepts in AI may help familiarize the interventional radiologist with the field of AI, thus facilitating understanding and participation in the development and deployment of AI. A pragmatic classification system of AI based on the complexity of the model may guide clinicians in the assessment of AI. Finally, the current state of AI in IR and the patterns of implementation are explored (pre-procedural, intra-procedural, and post-procedural).

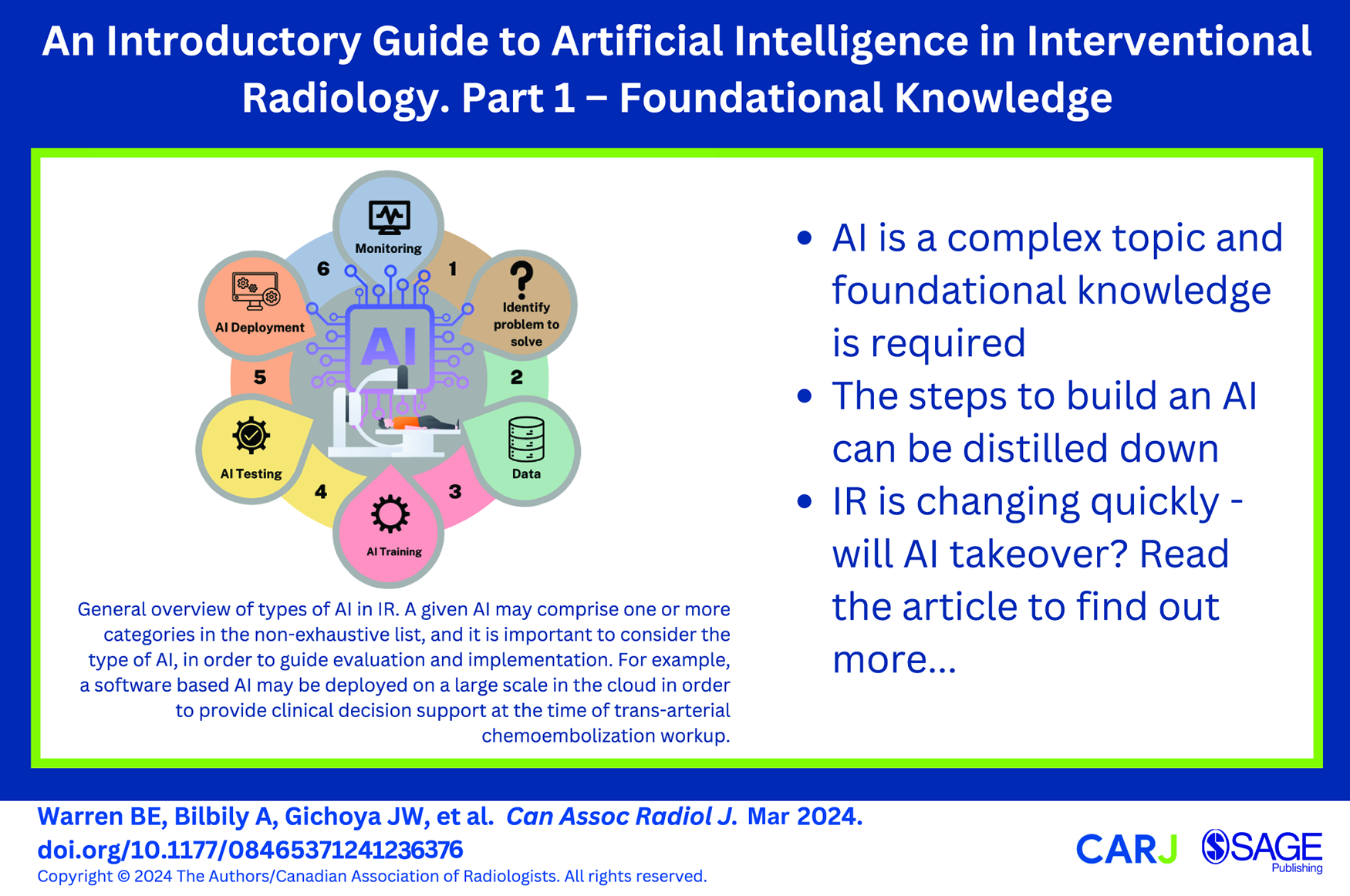

This is a visual representation of the abstract.

Introduction

The year is 2050, and you arrive to start the day in interventional radiology. It was a busy night on call, but the artificial intelligence (AI) booking system has already accounted for this and rescheduled the non-urgent procedures that were originally planned for the morning so that you and the interventional radiology (IR) team can be well rested to optimize team and patient care. Overnight, the hospital electronic medical record AI monitored all CTs performed in the emergency department. One such patient, Jane, has an acute pulmonary embolism and continues to desaturate despite oxygen and optimal medical therapy. The AI flags this case as possibly requiring further therapy, and you receive an automated message recommending that you see the patient for consultation, along with a pre-calculated risk profile. The system flags her recent brain surgery as a contraindication for thrombolysis and suggests suction thrombectomy, with a specific percentage reduction in mortality risk and improved quality of life after the procedure. You go see the patient, discuss the findings with her and the referring team, and ultimately agree with and convey the AI’s predicted risk profile: a 35% probability of death within 30 days and a 75% probability of disabling shortness of breath if no intervention is performed. Jane used to enjoy recreational sports; based on the AI prediction, it is unlikely she could return to daily running, and she has a 5.4% probability of developing chronic thromboembolic pulmonary hypertension without intervention. You offer suction thrombectomy, to which she consents following a discussion of the risks and benefits. The ambient AI tool in the room documents your entire encounter, and when you sign off the consultation, an alert pops up asking if you intend to perform the procedure today.
You click “yes” and the interventional radiology inventory robot immediately begins collecting the equipment needed for the case, tailored to the patient based on her imaging and physical characteristics. The 3D printer begins printing a catheter personalized to Jane’s anatomy. The patient has some questions she forgot to ask you, but thanks to an AI chatbot, they are answered to her satisfaction and automatically documented in her medical record. During the procedure, the AI-augmented fluoroscopy suite generates real-time CT-fluoroscopy fusion, allowing you to swiftly select what would otherwise be challenging anatomy. Multi-modal sensors tracking Jane’s pain, stress levels, and physiological status tailor the titration of sedation and analgesia to her specific needs and preferences. After you complete the procedure, you open your phone to review and sign off the AI-generated procedural report and post-procedure orders.

This tale may be a distant future for today’s interventional radiologists. However, work is already underway. For example, a 2022 AI challenge was aimed at the automated diagnosis of pulmonary embolism, with potential for high levels of diagnostic performance.1 AI is soon going to change the way radiologists and interventional radiologists practice medicine, even if little has changed in current day-to-day practice.2 Fortunately, the gradual path to widespread implementation of AI provides an opportunity to prepare for the uncertainty and risks that accompany this imminent change. Indeed, there are increasing concerns from AI experts, governments, and medical societies regarding the ethics, challenges, and risks associated with implementing AI.3-5 The interventional radiology community has begun such preparation: a meeting of the Society of Interventional Radiology Foundation in December 2021 brought together multiple key stakeholders to form a society consensus statement.6 Similarly, the Cardiovascular and Interventional Radiological Society of Europe released a 2023 position paper on AI in IR that explored clinical, technical, and ethical limitations.7 Additionally, several frameworks have been suggested to describe where AI may fit into the IR workflow, largely broken down by the periprocedural impact/utilization of AI: (1) pre-procedural, (2) peri-procedural, (3) post-procedural/follow-up.8,9

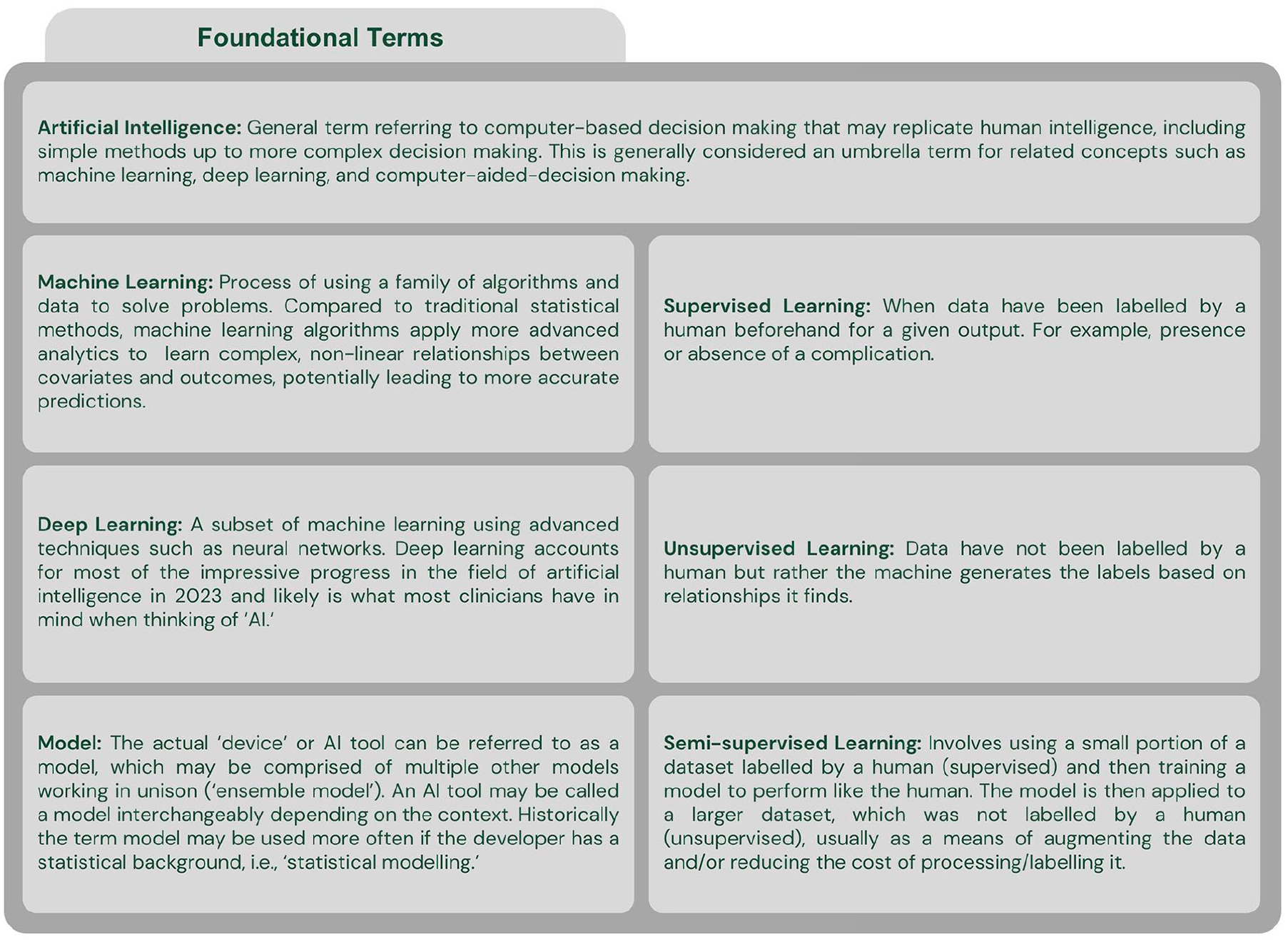

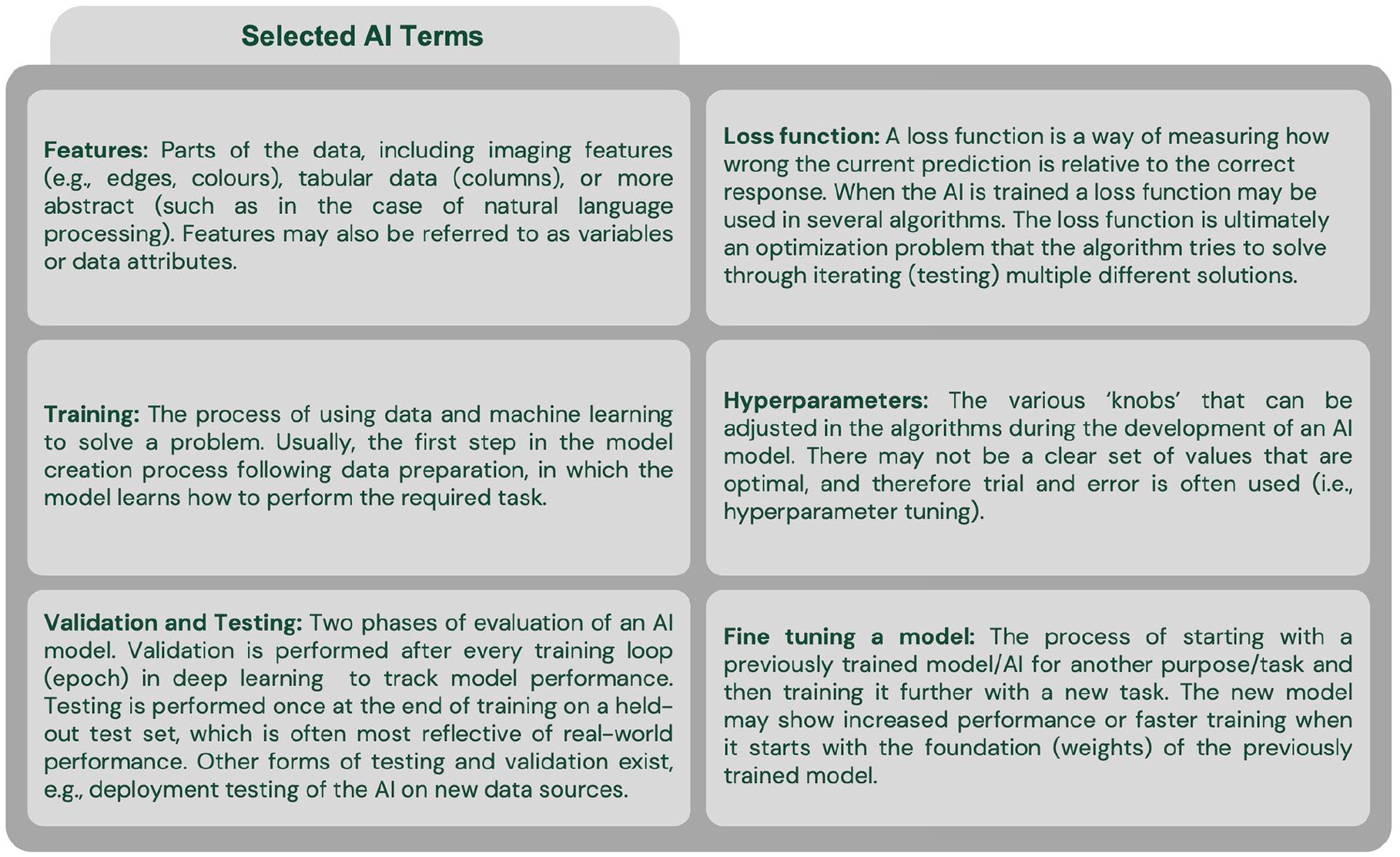

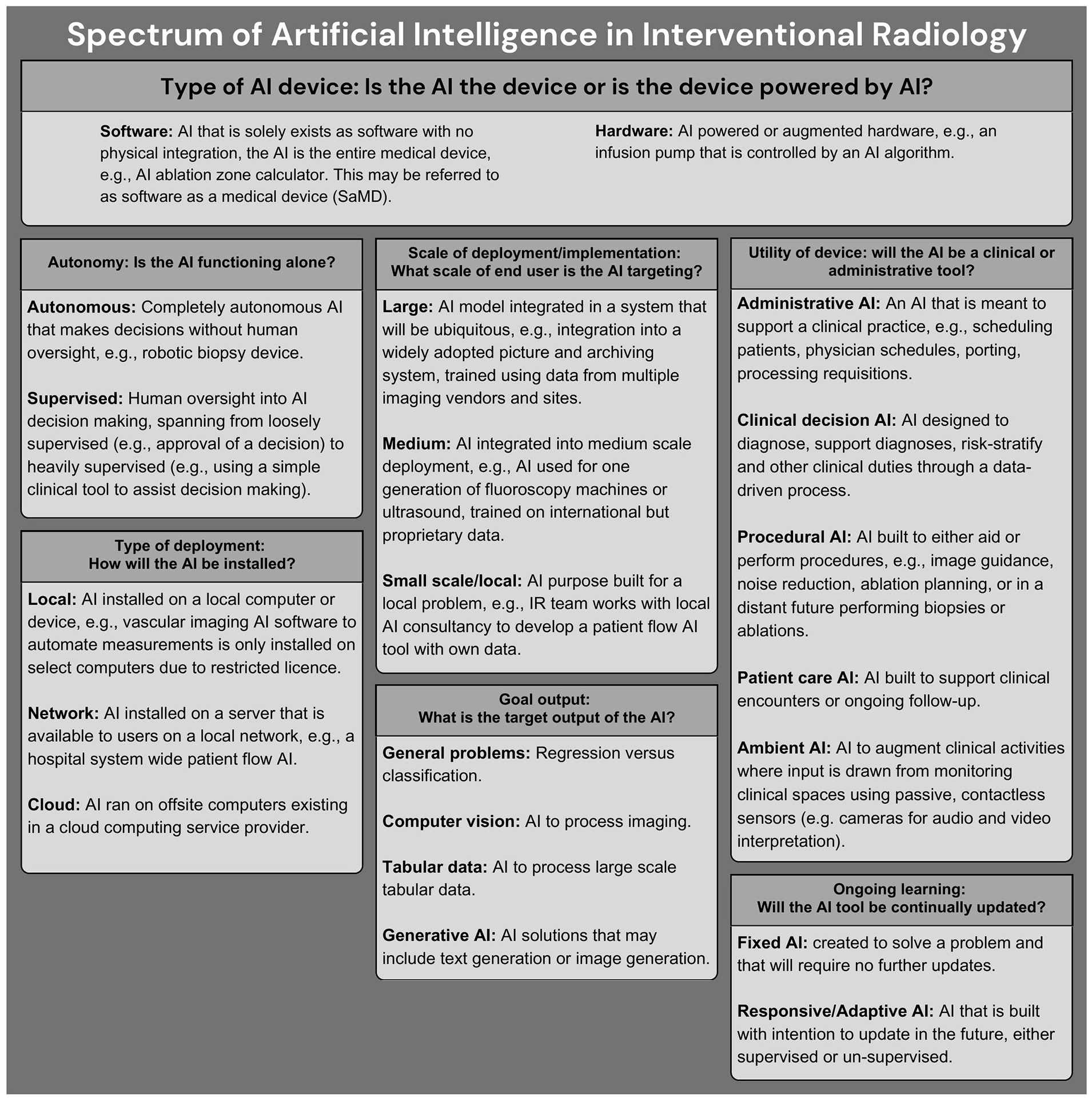

One of the fundamental problems with AI in medicine today is the rapid technology evolution coupled with a lack of broad understanding amongst practicing clinicians. Few radiologists have formal training on AI and it is a requested curriculum amongst radiology residents.10-12 This is compounded by marketing by the makers of commercial AI tools and few guidelines that may leave physicians feeling unprepared when faced with the challenge of implementing AI into clinical practice. The terminology used in AI can be an initial hurdle to understanding its implementation and development (Figures 1 and 2). Additionally, multiple definitions of AI have been proposed, which may further contribute to confusion, especially among non-computer scientists.13,14 In the absence of a singular definition of AI, use of the term may encompass multiple aspects (Figure 3). There is also a prevailing discourse that the uninterpretable nature of predictions generated from complex deep learning algorithms inhibits their use in clinical practice. However, in many ways, AI is like any other tool the interventional radiologist uses. For example, an interventional radiologist will need to understand the differences between various ablation technologies to use the devices correctly and troubleshoot, with some understanding of the underlying technology, but not to the degree an engineer might understand the device.

General overview of types of AI in IR. A given AI may comprise one or more categories in the non-exhaustive list, and it is important to consider the type of AI, in order to guide evaluation and implementation.13,16,19,20 For example, a software based AI may be deployed on a large scale in the cloud in order to provide clinical decision support at the time of trans-arterial chemoembolization workup.

In this first article of a two-part series, we aim to provide foundational knowledge important for the implementation of AI in interventional radiology. Basic terminology, a general overview of AI development as it relates to IR, and types of AI will be explored. In the second part, conceivable risks and harms in the development and implementation of AI models will be explored, with integration of emerging AI safety literature and a suggested checklist for AI evaluation and implementation.

Development of AI As It Relates to Interventional Radiology

Generally speaking, AI is computer-aided decision making. This may range in complexity from traditional methods such as hard-coded knowledge up to generative artificial intelligence produced by a neural network or other more advanced techniques (Figure 1).13,15,16,21 One issue that is not often discussed in medicine is that the definition of artificial intelligence continues to evolve with time, which can make it difficult to keep abreast of changes in the AI landscape.22,23 Machine learning and deep learning are both considered to be within the field of artificial intelligence.16,17 There are many different types of algorithms that can be used either alone or in combination with one another.

The general steps to develop and implement an AI tool include 17 :

Determine the problem space: Prior to starting development of an AI, a problem should be identified with a goal output, and possible input data and approach should be determined. In this phase, interventional radiologists’ domain knowledge is critical to ensuring that the problem is important, reasonable to be supported by an AI, and the level of risk of implementation is appropriate for the task. The scale and goal output of deployment (Figure 3) may guide the level of radiologist involvement. For example, an interventional radiologist should likely participate in the development of an automated IR procedure scheduling AI, as they will have domain knowledge regarding length of procedures and patient characteristics which may alter the length of a procedure.

Data collection and cleaning: High quality data are required. The efforts by the Radiological Society of North America (RSNA) and the American College of Radiology (ACR) to develop common data elements (CDEs) describing imaging findings can help improve the data quality for both training and testing of AI.24,25 At present, however, these organizations do not have approved CDEs for IR. Interventional radiology generates a large volume of both clinical and imaging data; however, collection, cleaning, and preparation of data can be costly.26,27 Data ownership, patient confidentiality, equity, and bias due to social determinants must remain concerns when considering data.3,5,28 Standardized collection of data as a routine process may help build high quality data and is likely required to generate meaningful large scale databases. For example, the Society of Interventional Radiology has created the VIRTEX registry and the Society for Vascular Surgery has the Vascular Quality Initiative.29,30 However, the increasing administrative demands associated with these registries must be recognized.

AI Training: The data will then be used to train the AI tool to solve the task. Usually a partition of “training” data is used to train models, with evaluation of performance on a different partition of “validation” data. 16 The training process is iterative, with multiple different algorithms, architectures, data processing or augmentation often being evaluated. Many different types of AI tools could be seen in IR, with varying complexity and functions (Figure 3). In the early training phase, the interventional radiologist’s domain knowledge may provide insight into early results found by the AI developer. For example, the AI developer may not understand why the AI tool is underperforming on specific fluoroscopic images and the interventional radiologist may provide clinical insight that can aid in the investigation, thus guiding further model development.

AI Testing: The AI created through the training process will need to be tested on data not previously seen. The test data should be kept untouched to prevent or limit data leakage, which is when non-training data contribute to AI model development and may artificially inflate performance metrics, whether intentional or not. A common example of data leakage is when a patient with multiple datapoints exists in both the training and testing datasets. At this point, an interventional radiologist presented with an AI should carefully consider if the test data the AI is evaluated on will reflect real-world data and whether the performance metrics match the problem. Additionally, regulatory approval may be sought by the team when appropriate, but this is country specific and rapidly evolving.

AI Deployment: Once the AI tool has been developed and tested, if the performance and function are satisfactory, deployment is planned. There are many considerations at the time of deployment (Figures 1-3), which often reflect the initial planning. However, for the interventional radiologist who may be charged with deploying or considering implementing an AI tool in their local department, this phase may be the final safeguard prior to deployment. Therefore, the complexity of the AI tool and the planned purpose must be considered with a harm reduction perspective.

Ongoing evaluation and improvement: Like most tools or software, ongoing evaluation is required once the AI model is deployed. Depending on the function of the AI tool, evaluation may be natural (eg, a human inspects the output before any action is taken) or require purposeful action (eg, an autonomous triaging system for procedure requisitions may require audits to ensure it is accurate). It is expected that updates will be required, and a plan should be in place for these as well as model inspection to ensure that it is functioning as designed.
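The data leakage example described in the testing step (one patient contributing datapoints to both training and testing datasets) can be avoided by partitioning at the level of the patient rather than the individual image. The following is a minimal sketch of that idea using only the Python standard library; the function name `split_by_patient`, the `patient_id` field, and the toy records are assumptions made for illustration and do not come from any specific AI development pipeline.

```python
import random
from collections import defaultdict


def split_by_patient(records, test_fraction=0.2, seed=0):
    """Split records so that no patient appears in both partitions.

    `records` is a list of dicts, each with a (hypothetical) "patient_id" key.
    """
    # Group every datapoint under the patient it belongs to.
    by_patient = defaultdict(list)
    for rec in records:
        by_patient[rec["patient_id"]].append(rec)

    # Shuffle patients, not individual datapoints, with a fixed seed.
    patient_ids = sorted(by_patient)
    random.Random(seed).shuffle(patient_ids)

    # Reserve a fraction of patients (at least one) for testing.
    n_test = max(1, round(len(patient_ids) * test_fraction))
    test_ids = set(patient_ids[:n_test])

    train = [r for pid in patient_ids if pid not in test_ids for r in by_patient[pid]]
    test = [r for pid in patient_ids if pid in test_ids for r in by_patient[pid]]
    return train, test


# Toy data: 10 hypothetical patients with 5 images each.
records = [{"patient_id": f"P{i % 10}", "image": f"img_{i}.png"} for i in range(50)]
train, test = split_by_patient(records)

overlap = {r["patient_id"] for r in train} & {r["patient_id"] for r in test}
print(len(train), len(test), overlap)
```

When reviewing a vendor's or developer's test results, an interventional radiologist might reasonably ask whether the partitioning was done in this patient-level manner, since a per-image split of the same toy data would almost certainly place the same patient on both sides.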

Bias can be introduced at every step of AI development.31 Perhaps the most important potential source of bias is the data itself, as the model learns from it to produce a given output. The bias may arise from the population sampled, type of sampling, data processing, or even ownership of the data.26,27 This may include noise that is built into a given task or the labels by which it is assessed.32 Bias in the data may reinforce discrimination encoded in the data, and this may be further amplified through feedback loops in a real-world implementation.33,34 For example, an AI tool may inadvertently discover that patients who live close to the hospital have better outcomes because they attend follow-up appointments more often. If it were to use outcome data to triage who should receive care first, it may reinforce this bias. Unfortunately, due to the complexity of data, it may not always be apparent to a human how one feature could represent social determinants of health. During AI model development, developers and target users like IR departments should have an understanding of how the model was created and, by extension, the data used to create it, with a view to mitigating inadvertent harms. This may include following suggestions by organizations focused on AI safety, such as the Center for AI Safety.35 The type of AI, plan for implementation, interpretability, and complexity of the AI guide the degree of human oversight and risk of harm.
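One practical way to look for the kind of bias described above is to report performance separately for subgroups rather than as a single overall figure. The sketch below computes sensitivity per subgroup on entirely made-up data; the grouping by distance from the hospital mirrors the example in the text, and the function name `sensitivity_by_group`, the group labels, and the counts are all assumptions for illustration.

```python
from collections import defaultdict


def sensitivity_by_group(cases):
    """Compute sensitivity (true positive rate) for each subgroup.

    `cases` is an iterable of (group, truth, prediction) tuples,
    where truth and prediction are booleans.
    """
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    for group, truth, prediction in cases:
        if truth:  # only truly positive cases contribute to sensitivity
            if prediction:
                true_pos[group] += 1
            else:
                false_neg[group] += 1
    groups = set(true_pos) | set(false_neg)
    return {g: true_pos[g] / (true_pos[g] + false_neg[g]) for g in groups}


# Made-up audit data: (subgroup, condition truly present, AI flagged it).
cases = (
    [("near_hospital", True, True)] * 8
    + [("near_hospital", True, False)] * 2
    + [("far_from_hospital", True, True)] * 5
    + [("far_from_hospital", True, False)] * 5
)
result = sensitivity_by_group(cases)
print(result)
```

In this toy data the pooled sensitivity would be 13/20 (65%), which hides the gap between the two subgroups (80% versus 50%). Which subgroups to examine is a clinical judgment that depends on the local population and the task.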

Types of AI: A Pragmatic Complexity and Interpretability-Based Approach

AI can be classified in many ways including by level of intelligence, underlying architecture, or functionality. 28 We outline a pragmatic classification system of AI for the IR clinician that relies on explainability.

Simple and Interpretable AI

These are simple models that are well explained, such as a classification system based on logistic regression wherein each contributing factor can be examined, or a decision tree where the pathway to each final decision can be traced. These simple AI methods are robust and suitable for many applications; however, users may find that they are not marketed as AI, but under other terms such as “computer aided.”13 Such techniques could be used for a simple monitoring system on a medical imaging day unit designed to alert the nursing team to changes in clinical status based on vital signs. Because these AI are explainable and interpretable, it is easier to determine whether the output produced was inappropriate for a given situation, leading to a reduced risk when used in clinical practice.
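To make the idea of a traceable decision concrete, the following is a minimal sketch of a rule-based vital sign alert of the kind described for a day unit. Every rule that fires is returned alongside the alert, so the pathway to the output can be inspected directly. The function name `vital_sign_alert`, the field names, and especially the thresholds are hypothetical values chosen for illustration only; they are not clinical guidance.

```python
def vital_sign_alert(vitals):
    """Return (alert, reasons) for a dict of vital signs.

    Thresholds below are illustrative assumptions, not clinical guidance.
    """
    rules = [
        ("heart_rate", lambda v: v > 120, "heart rate above 120 bpm"),
        ("systolic_bp", lambda v: v < 90, "systolic blood pressure below 90 mmHg"),
        ("spo2", lambda v: v < 92, "oxygen saturation below 92%"),
    ]
    # Each triggered rule contributes a human-readable reason.
    reasons = [
        message
        for key, is_abnormal, message in rules
        if key in vitals and is_abnormal(vitals[key])
    ]
    return bool(reasons), reasons


alert, reasons = vital_sign_alert({"heart_rate": 130, "systolic_bp": 110, "spo2": 97})
print(alert, reasons)
```

Because the reasons are explicit, a nurse or physician who disagrees with an alert can see exactly which rule produced it and judge whether it was appropriate for that patient.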

Complex and Interpretable AI

This may be a combination of multiple simple models resulting in complex decision making. Alternatively, these could be very large in scope, with the ability to be broken down into smaller components that can be understood with careful attention. These may be labelled as AI by developers, but also may be “machine learning” or “computer aided” devices. Examples include a complex AI tool that predicts IR department patient flow with various sub-models (ie, an “ensemble” model) that explain components of the department. Implementation of these AI tools should consider their risks and benefits, acknowledging that their increased complexity makes evaluation of these models for catastrophic events or misadventure more challenging. A higher level of attention is likely required when considering implementation relative to the simple and interpretable AI.
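As a rough illustration of how a larger model can remain interpretable when it is built from understandable parts, the sketch below combines three small sub-models into a single estimate of how long a case occupies departmental resources, and returns the contribution of each part alongside the total. All function names, case fields, and minute values are hypothetical and chosen only to show the structure; a real patient flow tool would be learned from local data.

```python
def procedure_minutes(case):
    # Hypothetical base durations per procedure type.
    base = {"biopsy": 30, "drain": 45, "tace": 90}[case["procedure"]]
    return base + (15 if case["complex_anatomy"] else 0)


def turnover_minutes(case):
    # Hypothetical room turnover time.
    return 20 if case["general_anaesthesia"] else 10


def recovery_minutes(case):
    # Hypothetical recovery bay time.
    return 120 if case["arterial_access"] else 30


SUB_MODELS = {
    "procedure": procedure_minutes,
    "turnover": turnover_minutes,
    "recovery": recovery_minutes,
}


def predict_total_minutes(case):
    """Return the total estimate and the per-component breakdown."""
    parts = {name: model(case) for name, model in SUB_MODELS.items()}
    return sum(parts.values()), parts


case = {
    "procedure": "tace",
    "complex_anatomy": True,
    "general_anaesthesia": False,
    "arterial_access": True,
}
total, parts = predict_total_minutes(case)
print(total, parts)
```

If the total looks implausible for a given case, the breakdown shows which component is responsible. That is the property that distinguishes this category from the non-interpretable models discussed next, although tracing the output becomes harder as the number of sub-models grows.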

Complex and Non-Interpretable AI

These are complex AI systems for which the intended user is unlikely to easily understand the underlying architecture and calculations that produce the generated output. This may include AI trained on high dimensional datasets with components of non-supervised labelling or, in the case of interventional radiology, most likely computer vision AI. It is likely that these will be marketed as “AI” devices or “AI-powered” devices. For example, these types of computer vision AI may include denoising AI, segmentation, computer aided diagnosis software, and treatment response prediction algorithms. These models require close scrutiny of the AI model including the training data (which is not always available) and the implementation plan, as machine learning/AI models can be inherently fragile.34,36 Unfortunately, these models may lack explainability (a “black box”) and require expensive data and computational resources for creation, and therefore the developer may also withhold important trade information. Furthermore, due to model complexity, it is challenging to understand how an algorithm makes a specific prediction. Testing for harmful or catastrophic events may be challenging due to the underlying architecture and stochastic nature of some of these AIs.4

AI may be seen as a black box; however, this is not entirely true, as there are mechanisms to inspect the inner workings, which range from inspecting the model directly to creating visual representations.37,38 Therefore, non-interpretable models require additional caution and strategies when being implemented.

State and Directions of AI in IR

Interventional radiology will likely see many exciting AI implementations as the specialty combines imaging, therapeutics, and clinical medicine. Companies are well underway developing AI powered products to be integrated into many clinical IR practices. The collaboration between radiologists, developers, and industry AI vendors will be important in establishing guidelines for product development, deployment, ongoing transparency, and ultimately improving the patient outcomes. 39 The expected pattern of AI implementation in IR has been explored by several authors and is overall expected to fall into one of 3 categories: (1) pre-procedural, (2) intra-procedural, or (3) post-procedural.6,8,9,40

Pre-Procedural

AI may be used to automate diagnosis, predict treatment response, perform patient selection, plan treatment and procedures and augment patient and operator education or simulation. Example work to date has included models to segment and predict the response of hepatocellular cancer to chemoembolization to guide treatment planning. 41 In neurointerventional radiology, detection of large vessel occlusion and aneurysms has progressed into real-world deployment.42,43 This area of development may see the most rapid evolution due to the availability of vast imaging datasets generated during routine diagnostic radiology.

Intra-Procedural

AI may enable more advanced image fusion, perform device selection, monitor/sedate the patient, automate segmentation, and optimize imaging equipment to reduce radiation dose. A suction thrombectomy system has seen real world deployment with computer aided thrombectomy. 44 Future late stage AI devices may include integration with robotics or novel medical imaging technology to reduce radiation dose.

Post-Procedural

Clinical follow-up will be augmented by AI tools including chatbots, while imaging follow-up will become automated. AI will enable more remote smart patient monitoring with alerts triggered only when clinician intervention is required. Ambient clinical monitoring for automated note generation and real-time translation may facilitate patient encounters and is an area of active development, with software such as Dragon Ambient eXperience being marketed at present (Nuance Communications, Burlington, Massachusetts). General chatbots such as ChatGPT have shown early promise answering basic interventional radiology questions that patients may have.45 However, other studies have shown clear limitations, with only 40% of technical IR questions answered completely correctly by ChatGPT.46 As chatbots and associated technologies continue to evolve, their ability to enhance clinicians’ non-technical skills such as empathy may also improve, with downstream effects on patient satisfaction and clinician burnout.47

Will AI Replace Interventional Radiologists?

At this point, some readers may be wondering if AI will replace interventional radiologists, and, if so, when. This concept has been widely discussed in the diagnostic radiology realm and is succinctly answered by the statement, “radiologists who use AI will replace radiologists who don’t.”48 This is likely to be similar for interventional radiologists, where AI will have a role in image interpretation, procedural planning, decision making, and outcomes prediction; these non-technical aspects lend themselves well to AI. The procedural aspect of the specialty lends itself less well to being “replaced.” Despite work in procedural robotics that utilizes AI, procedures thus far have only been facilitated by robotics and AI rather than replaced.49 However, emerging evidence supports the “AI Augmented Physician” concept whereby intraprocedural AI augmented analysis can facilitate clinical decision making.50

This may, however, lead to concerns: if IR becomes easier to perform, might IRs lose ground as medical colleagues seek to perform the procedures themselves? Another concern could be AI’s influence on treatments of disease rendering traditional IR procedures less needed. Alternatively, AI might deem certain radiology procedures futile, thus further reducing need but overall improving patient outcomes.

One of the criticisms of interventional radiologists has been a lack of “clinical” training due to traditional radiology curricula. As AI clinical abilities expand, this could partially offset a lack of clinical exposure in radiology residency training programs as reliance on AI becomes more widespread in medicine overall.

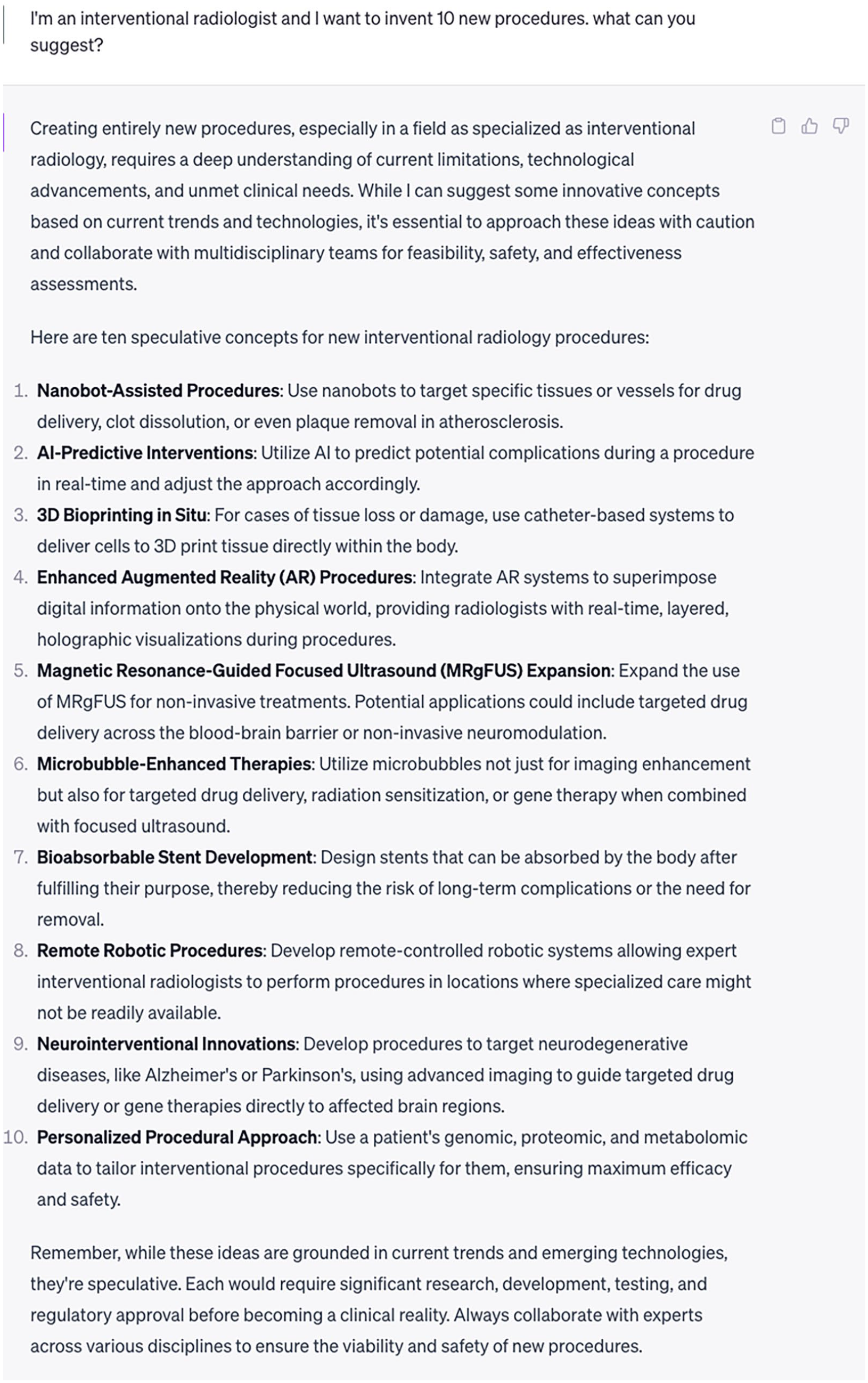

Innovation runs at the heart of interventional radiology. As has been previously suggested, it is now more important than ever for IR to remain at the forefront of modern medicine with intelligent data collection and the development/refinement of novel procedures. 29 If we continue to do this and use AI to augment this journey, it is highly unlikely IRs will be replaced by AI. The opening story may be a reality sooner than we think. Ultimately, AI will make interventional radiologists better clinicians, allow IR to perform more challenging cases, and improve clinical decision making and support. And, if you are ever lacking inspiration, a chatbot might be able to inspire creativity (Figure 4)!

Screenshot from a prompt asking for new procedure inventions. 51 ChatGPT can act as a non-judgmental colleague for brainstorming and generating ideas.

Conclusions

AI is rapidly evolving and is likely to change IR in ways that cannot yet be predicted. It is conceivable that many of the day-to-day tasks of an interventional radiologist today will be either performed by an AI tool or greatly augmented by AI. The role of AI may even evolve to performing procedures on patients. Early clinician involvement during the development and implementation of AI can also be used to encourage a human-centred approach. Ideally, AI should be used to increase time spent with patients, support evidence-based decision making, and improve overall quality and efficiency of care. Like any other tool used in IR, interventional radiologists must equip themselves to use and interact with AI through foundational knowledge. This document may be a reference for foundational AI knowledge, including an outline of AI development as it relates to interventional radiology. The pragmatic classification system for AI complexity can be used by healthcare teams who are considering implementing AI into their clinical practice.

In the next part of this series, evaluation of AI from the interventional radiologist’s perspective with a focus on risks and possible harms will be explored.

Footnotes

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Alexander Bilbily reports a relationship with 16 Bit Inc. that includes employment and equity or stocks. Alexander Bilbily is Co-CEO of 16 Bit Inc.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.