Abstract

This article presents a first step in identifying the ethical issues of AI-based financial advice. Consumers must navigate an ever more complex array of financial decisions. (Generative) AI-based financial advice may increase access to and acceptance of financial advice and strengthen consumers’ financial well-being. However, significant ethical challenges exist in designing, developing, and deploying AI-based financial advice. To analyze the perils and pitfalls of AI-based financial advice, the authors develop a definition of what constitutes good AI-based financial advice and provide a first assessment of ethical challenges related to AI-based financial advice. The iterative multistakeholder approach, including workshops and semistructured interviews with consumers and experts, results in an ethical discourse structured around the four fundamental values of the European Commission's Ethics Guidelines for Trustworthy AI (human autonomy, explicability, fairness, and prevention of harm), with trust as the overall objective. Based on the analyses, the authors derive a simple yet comprehensive AI Ethics Framework for Financial Advice. This reflection framework guides public policy makers, managers of financial service providers, and technology developers in incorporating ethical discourse in developing and deploying (generative) AI-based financial advice.

Consumers must navigate various financial decisions, including saving, finding insurance, getting mortgages, and planning for retirement (Allgood and Walstad 2016; Chardon 2011). Consumers often lack the necessary financial knowledge to understand even simple financial products or feel insecure when making these major decisions (Alyousif and Kalenkoski 2017; Bruhn and Asher 2021; Calcagno and Monticone 2015; Eberhardt et al. 2022). Consumers may benefit from professional financial advice¹ to support their finances. However, less affluent and vulnerable groups, who would benefit from financial advice the most (Jaroszewicz 2022; Van Gaalen et al. 2017; Westermann et al. 2020), often do not have access to financial advice due to cost or other barriers. A recent study finds that only 33% of Americans have spoken to a financial advisor (Pinkerton 2023). Half of Dutch citizens indicate that the costs of financial advice form a barrier for them to seek financial advice, and one-third indicate that they are not sure whether a financial advisor would work in their best interest (Van Gaalen et al. 2017).

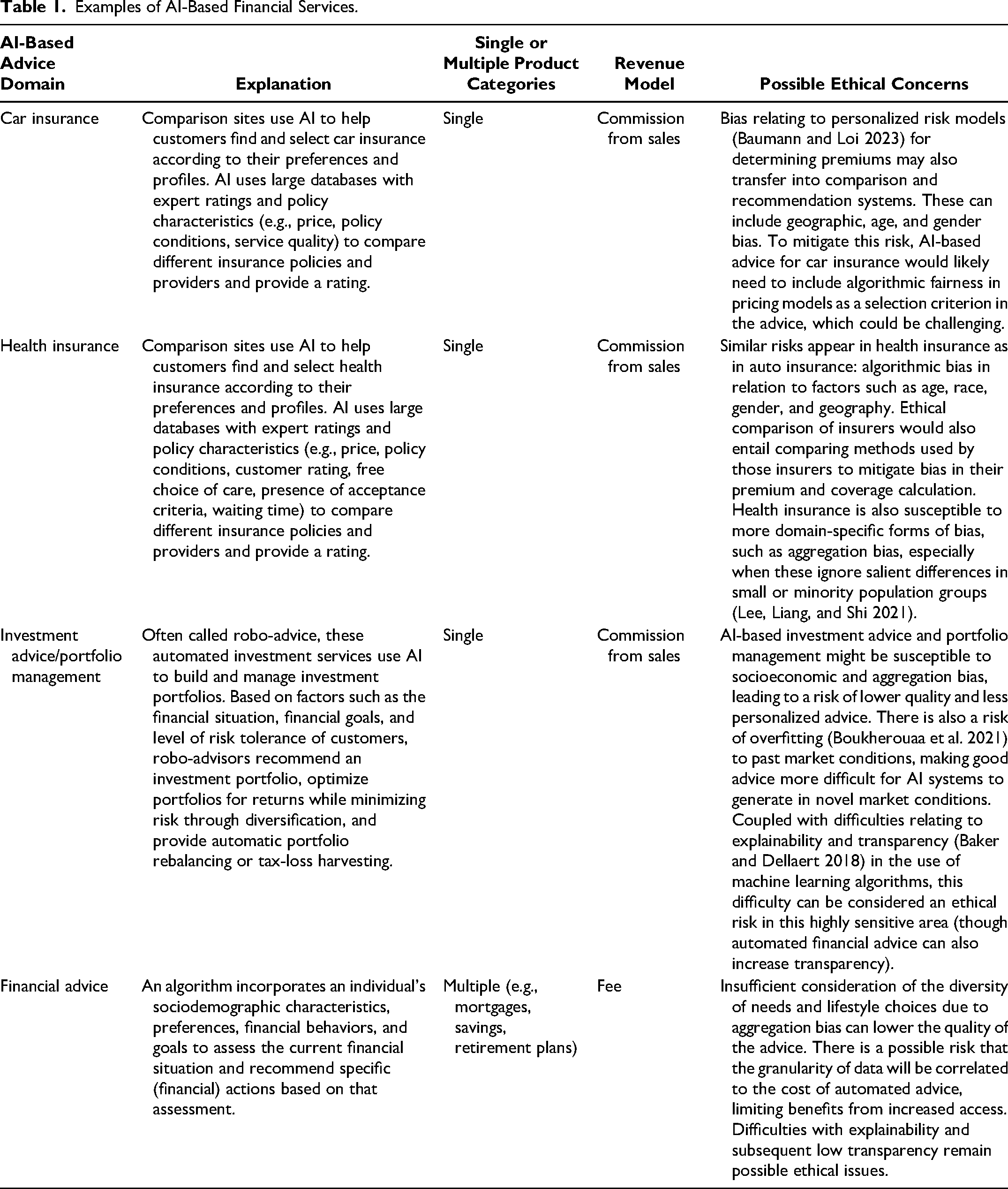

Artificial intelligence (AI) in general and generative AI in particular offer new possibilities to increase access to and acceptance of financial advice and, consequently, consumers’ financial well-being. One such possibility is AI-based financial advice (sometimes also referred to as robo-advice²). We define AI-based financial advice as an algorithm that incorporates an individual's sociodemographic characteristics, preferences, financial behaviors, and goals to assess their current financial situation and recommend specific (financial) actions based on that assessment. With AI-based financial advice, the financial advice process is based on an autonomous algorithm and provided through a digital medium. AI-based advice exists for various financial products, such as car insurance, health insurance, and portfolio management (see Table 1 for examples). Ooi et al. (2025) describe the potential for generative AI to offer financial advice and recommendations. A recent study in finance has demonstrated that generative AI like Chat GPT-4 can provide suitable financial investment advice, even though the suggested investment portfolios display home bias and are relatively insensitive to the investment horizon (Fieberg, Hornuf, and Streich 2023).

Table 1. Examples of AI-Based Financial Services.

Holistic AI-based financial advice—the focus of this article—does not yet exist in the market. Using (generative) AI in this context may offer multiple advantages compared with traditional forms of financial advice (Kaya 2017; Maume 2019): It can reduce costs, expand the quantitative level of analysis and potential solutions, and improve the objectivity and overall quality of the advice. Combined with the fact that such AI-based services can be readily accessed with an internet or mobile network connection from anywhere and at any time, this could significantly increase access to and uptake of financial advice. Thus, AI-based financial advice could help more consumers get better support to make far-reaching financial decisions.

However, there are also significant challenges in designing, developing, and deploying holistic AI-based financial advice. For example, in a sensitive domain like personal finance, digital tools such as AI-based financial advice must work clearly, demonstrably, and explicably in consumers’ best interests.³ Still, a definition of what constitutes “good” financial advice is lacking. Moreover, AI-based financial advice design has significant ethical implications. Discrimination, paternalism, and lack of transparency in the underlying objectives of AI-based financial advice can have severe repercussions, as those can potentially harm certain groups and affect trust in the algorithmic translation of financial advice (Coeckelbergh 2019; Crawford 2021). Thus, when automating human processes in the sensitive domain of financial advice, special attention to unintended (as well as intended) effects is required from the outset. For example, AI systems must be designed with robust mechanisms to detect and mitigate biases. This includes diverse and representative training datasets and continuous monitoring for biased outcomes. Transparency and explainability must be prioritized to foster trust and ensure users understand how AI-derived advice is generated. Addressing fraud concerns requires implementing stringent security measures and regular audits to protect against malicious activities and ensure the integrity of the AI systems. Moreover, designing AI for financial advice should include ethical considerations in every stage of the AI life cycle, from conception through deployment. However, even though more and more ethicists are involved in discussions around AI-based services, advocating concepts such as “ethics by design” (Brey and Dainow 2024), it often remains challenging to identify ethical concerns at the outset and integrate them into the design, development, and deployment of AI applications.
The question of exactly how to do this in practice remains largely unanswered.
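As one narrow illustration of what "continuous monitoring for biased outcomes" could look like in practice, the following Python sketch compares how often a favorable recommendation is issued to different groups. It is not drawn from this article or from any deployed system: the function names, the group labels, the sample log, and the 0.2 alert threshold are all hypothetical, and a gap in recommendation rates is only one of many possible fairness indicators.

```python
from collections import defaultdict


def recommendation_rates(records):
    """Return the share of favorable recommendations per group.

    records: iterable of (group_label, recommended) pairs, where
    recommended is True if the system issued the favorable advice.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, recommended in records:
        totals[group] += 1
        if recommended:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(rates):
    """Largest difference in recommendation rates between any two groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical advice log; group labels and outcomes are made up.
sample_log = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]

ALERT_THRESHOLD = 0.2  # assumed tolerance, to be set by the organization

rates = recommendation_rates(sample_log)
gap = parity_gap(rates)
needs_review = gap > ALERT_THRESHOLD  # flag the period for human review
```

A check of this kind can only flag a pattern for human review. Whether a given difference between groups is unfair is an ethical judgment that depends on context and cannot be read off the number itself.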

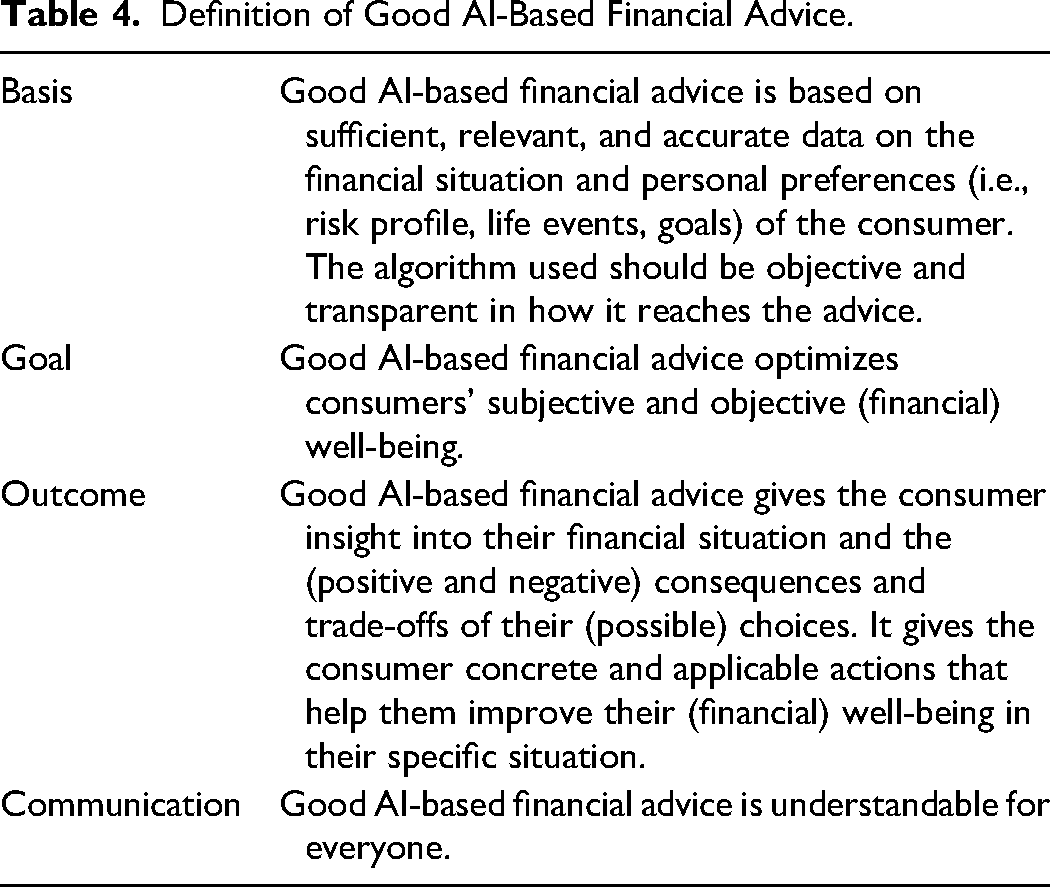

This article presents a first step toward understanding AI-based financial advice. We first develop a definition of “good” AI-based financial advice, created with input from academic experts, industry experts, and consumers, which forms the necessary basis for AI-based financial advice. We then identify the ethical challenges related to AI-based financial advice through interviews with industry experts and develop a reflection framework, the AI Ethics Framework for Financial Advice (AIFA), to guide policy makers and practitioners in their understanding of ethics and its implementation in the design of AI-based financial advice.

Our work makes two main contributions to the literature. First, in response to the calls for more research on ethical issues associated with generative AI (Van Dis et al. 2023), AI for social good (Cowls et al. 2023; Du and Sen 2023; Floridi et al. 2018, 2020; Vinuesa et al. 2020), and rethinking marketing for a better world and the greater good (Chandy et al. 2021; Madan and Ashok 2023; Mende and Scott 2021), we carve out the most pressing ethical issues facing AI-based financial advice. Specifically, we provide a comprehensive assessment of ethical dilemmas in AI-based financial advice, using ethical guidelines and principles identified in the current literature (Hagendorff 2020; Jobin, Ienca, and Vayena 2019; Mittelstadt 2019) as well as semistructured interviews with experts in the field of financial advice. This is an important contribution as better access to “good,” fair, and less-biased financial advice has the potential to aid people in making complex financial decisions, mitigating inequalities, and improving financial well-being, a key component of overall well-being (Netemeyer et al. 2017), thereby aligning with the United Nations’ Sustainable Development Goals (SDGs) of ensuring health and well-being (SDG 3) and reducing inequalities (SDG 10) (Cowls et al. 2023; Vinuesa et al. 2020). However, successful implementation requires addressing the potential ethical risks and pitfalls. Thus, we contribute to the literature on generative AI, AI for social good, and rethinking marketing for a better world and the greater good by thoroughly analyzing both the perils and pitfalls of AI-based financial advice.

Second, research in marketing has primarily studied individuals’ psychological and emotional reactions toward AI, identifying trust, transparency, reliability, explainability, privacy, and data security as key drivers of user acceptance (Aysolmaz, Müller, and Meacham 2023; Hentzen et al. 2022; Hermann 2022; Hermann, Williams, and Puntoni 2023). In contrast, this article takes a multistakeholder perspective. We include insights from consumers, financial technology providers, pension funds, public policy makers from ministries and supervisory bodies, and financial planners, capturing various perspectives on AI-based financial advice. Based on our analyses, we derive a simple yet comprehensive framework, the AIFA, to assess ethical questions in AI-based financial advice. The AIFA addresses the challenge that despite the increasing adoption of AI, many organizations face difficulties in designing, developing, and deploying AI-based financial advice in an ethical and value-sensitive manner. Organizations frequently confine their examination of ethical dimensions to compliance with existing laws and standards, overlooking the incorporation of more comprehensive reflective processes that encompass essential aspects such as dignity, fairness, and the overall well-being of individuals. Our framework aids public policy makers, managers of financial service providers, and technology developers in efforts to incorporate ethical reflection in designing, developing, and deploying AI-based financial advice.

The remainder of the article is structured as follows. We first define and further explain AI-based financial advice and discuss the differences between personal, hybrid, and AI-based financial advice, along with their advantages and disadvantages. We then summarize the literature on “good” financial advice and ethical issues in AI-based financial services. In Stage 1 of our research, we develop a definition of “good” financial advice (the necessary basis for AI-based financial advice) and justify using the European Commission's Ethics Guidelines for Trustworthy AI (European Commission 2019) as the framework for our ethical analysis. In Stage 2 of our research, we identify the ethical concerns of AI-based financial advice based on qualitative interviews with experts, a literature analysis, and two expert workshops, and we present the results. Finally, we explain our reflection framework (the AIFA) and discuss implications for policy makers, researchers, managers, and information technology (IT) specialists.

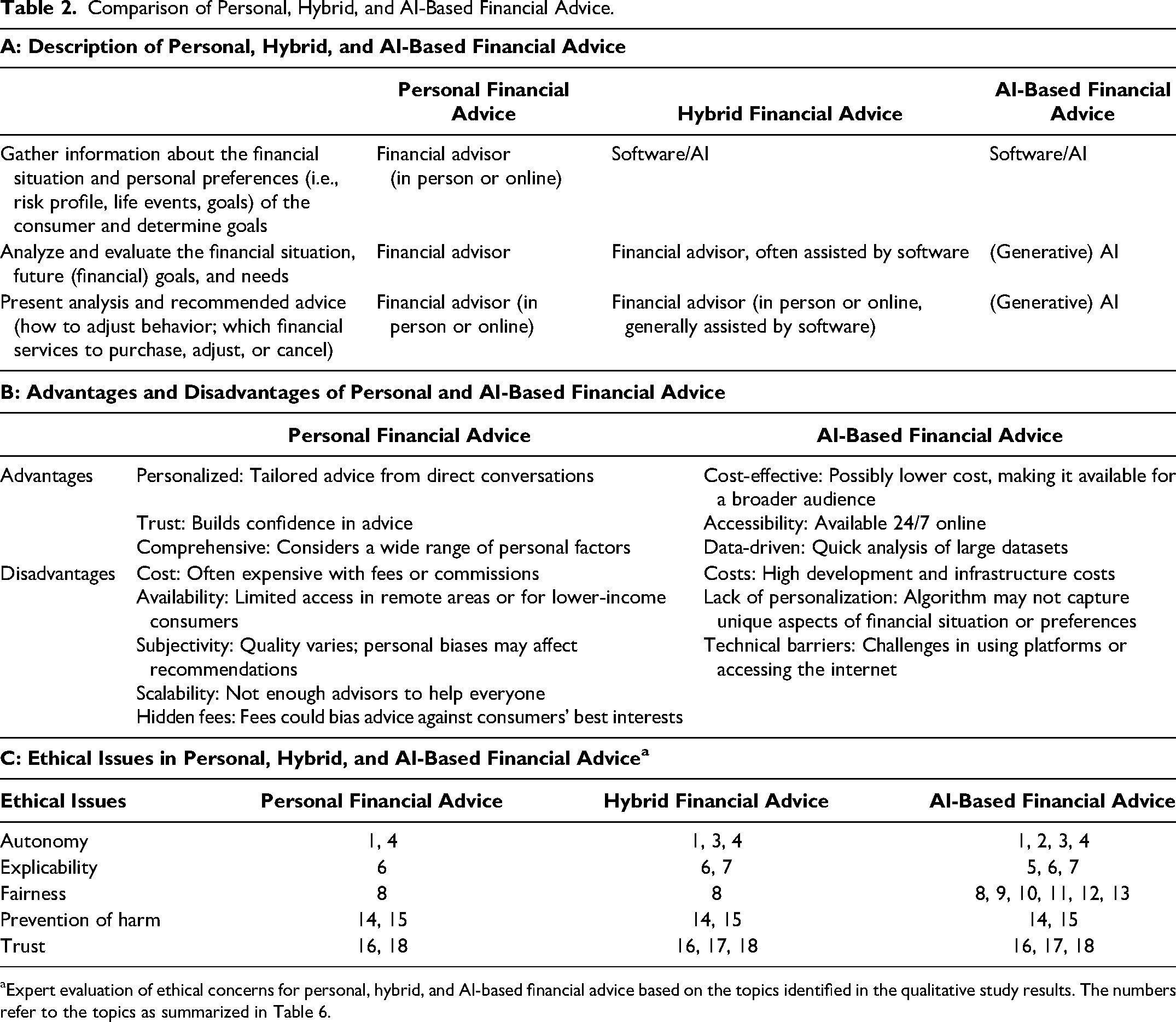

AI-Based Financial Advice

Holistic AI-based financial advice considers consumers’ entire situations, including their preferences, financial behaviors, goals, and sociodemographic characteristics, to assess their current financial status and recommend specific actions. This process is driven by an autonomous algorithm and delivered through a digital medium. The analogous offline comparison is personal financial advice, where a financial advisor or planner gathers all relevant information about the consumer, analyzes and evaluates the situation, and provides tailored advice. Holistic AI-based financial advice does not yet exist in the market. However, hybrid financial advice services, which incorporate software or AI into parts of the process, are already available. Table 2, Panel A, provides a comparison of personal, hybrid, and AI-based financial advice. Panel B lists the advantages and disadvantages of the spectrum's endpoints, from completely personal to completely AI-based financial advice. Panel C reveals the extent to which the ethical issues identified for AI-based financial advice (further detailed in the “Results” section) also apply to personal and hybrid financial advice. The main conclusion is that while several ethical issues are relevant for both personal and hybrid advice, AI-based financial advice is characterized by a larger number of ethical issues, particularly concerning fairness, autonomy, and explicability.

Table 2. Comparison of Personal, Hybrid, and AI-Based Financial Advice.

Expert evaluation of ethical concerns for personal, hybrid, and AI-based financial advice based on the topics identified in the qualitative study results. The numbers refer to the topics as summarized in Table 6.

The literature agrees that there is a need to develop benchmarks for good financial advice (Buie and Yeske 2011; Collins 2010; Lander 2018; Lyons and Neelakantan 2008). It is, however, unclear how one would define “good” financial advice. The literature defining “good” or “high-quality” advice is scarce and focuses mainly on a subset of financial decisions, such as investor portfolio choice. That is, the literature focuses on how an investor should distribute money over different investment options, such as stocks and bonds, and the role of advice therein. In such settings, Angelova and Regner (2013) consider advice “good” when the advisor is “truthful” and recommends a low-fee mutual fund to the client. Bluethgen, Meyer, and Hackethal (2008) state that “high-quality financial advice should aim to enhance investor utility” (p. 3), which they operationalize as an advisor’s recommendation to the investor to participate in the stock market and use low-fee index funds. Mullainathan, Nöth, and Schoar (2009) argue that “good” advisors should reduce investment biases in investors’ portfolios (e.g., too low diversification). Kotlikoff (2006) is a notable exception as the definition goes beyond portfolio choice but is still rather vague by stating that “sound” advice should lead to preserving a “family's living standard through time and in unforeseen, but not unforeseeable, circumstances” (p. 18). Thus, the literature fails to define “good” financial advice in more complex and holistic financial advice situations across financial decisions such as optimally balancing emergency savings, paying off a mortgage, saving for children's education, and planning for retirement. The lack of a comprehensive and clear definition of “good” financial advice becomes especially relevant with the developments toward AI-based financial advice. Such a definition therefore forms the necessary basis for any AI algorithm that provides financial advice.

Literature Review on Ethical Issues in AI-Based Financial Services

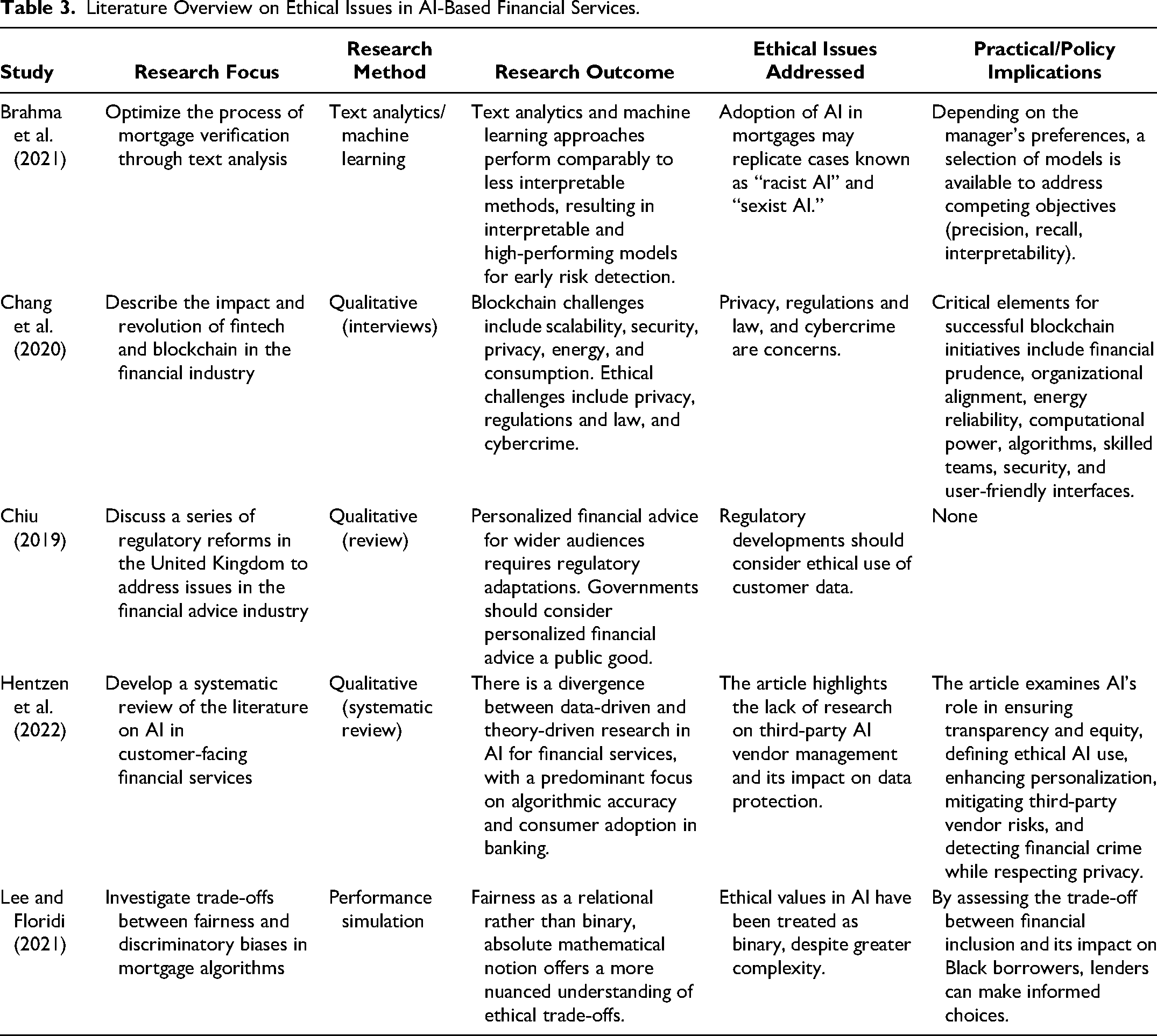

Relevant articles were identified by searching through titles, abstracts, introductions, and key terms on Google Scholar and academic databases (e.g., EBSCO) for references to AI and its variants (such as “artificial intelligence”) in the context of financial services. The overview only contains articles that explicitly discuss ethical issues in AI-based financial services.

As Table 3 illustrates, the existing literature often addresses narrow aspects of AI applications, with only limited attention to the broader ethical implications. Moreover, research has primarily concentrated on areas like robo-advice, mortgages, and retail banking, leaving a gap in exploring holistic AI-based financial advice.

Table 3. Literature Overview on Ethical Issues in AI-Based Financial Services.

Several studies have analyzed the use of AI in areas like mortgage processing and investment advice. For instance, Brahma et al. (2021) examine how text analytics and machine learning can optimize mortgage verification processes, finding that these approaches can produce interpretable, high-performing models for early risk detection. However, they also raise significant ethical concerns, highlighting the risk of AI perpetuating biases, such as “racist AI” and “sexist AI,” which could replicate existing social inequalities. Similarly, Lee and Floridi (2021) focus on the ethical trade-offs inherent in mortgage algorithms, specifically addressing the balance between fairness and discriminatory biases. Their findings echo those of Brahma et al. by challenging the oversimplified, binary treatment of ethical values in AI, instead advocating for a more nuanced understanding of fairness. Both studies underscore the ethical complexities of AI in mortgage-related services, where decisions can significantly impact financial inclusion and equity.

Expanding beyond mortgages, Chang et al. (2020) explore the broader financial industry, analyzing the impact of fintech and blockchain technologies. Their study identifies key challenges such as scalability, security, and privacy—issues that are critical not just for mortgages but across the entire financial sector. They also emphasize ethical concerns like privacy, regulation, and cybercrime, which are pivotal in successfully implementing blockchain initiatives. Chiu (2019) reviews a series of regulatory reforms in the United Kingdom to improve the financial advice industry. Although primarily regulatory, Chiu's study also touches on the ethical use of customer data, a theme resonant with the privacy concerns of Chang et al. Hentzen et al. (2022) also take a broader perspective and conduct a systematic review of AI applications in customer-facing financial services. They note a predominant focus on algorithmic accuracy and consumer adoption, particularly in banking, while ethical issues, such as third-party AI vendor management and data protection, are often neglected.

Despite the insights provided by these studies, the existing literature remains focused on specific financial services, such as investment advice and mortgages, without addressing the broader concept of holistic AI-based financial advice. Moreover, the ethical considerations discussed are often limited to issues like bias, privacy, and data protection, with little attention paid to the full spectrum of ethical challenges that could arise in more integrated financial advisory systems. This article aims to bridge these gaps by extending the scope from robo-investment advice and other unidimensional advice settings to holistic financial advice. Additionally, we address the limited focus on ethical issues in AI-based financial services by providing a more comprehensive and complete assessment of these ethical concerns.

Stage 1: Defining “Good” AI-Based Financial Advice

Personal finance advice that is not in the client's best interest can negatively impact the well-being of individuals and households (e.g., Drentea 2000; Drentea and Lavrakas 2000; Dunn, Gilbert, and Wilson 2011; Lane 2016). As the outcome of financial advice is contingent on many factors, a clear definition of what constitutes good advice is a necessary criterion for establishing that AI-based advice is in the best interests of clients at the time it is given.

Methodology

To develop a definition of good AI-based financial advice,⁴ we first organized an interactive ideation workshop aimed at creating an initial definition. Participants were experts from one of the world's largest pension providers (APG); the Dutch Authority for the Financial Markets, which supervises the conduct of the entire financial market sector in the Netherlands; Ortec Finance, an international company developing technology for investment decision making; the Dutch National Institute for Family Finance Information (Nibud), an independent foundation that provides information and advice on financial matters to private households; a financial planner who is a board member of the Dutch Federation for Financial Planners; and three academic experts with a background in finance, consumer financial decision-making, and social psychology.

In addition, we conducted semistructured qualitative interviews with 16 consumers (9 male and 7 female consumers, varying in age, profession, financial knowledge, and experience; interview lengths between 21 and 54 minutes; for details, see Web Appendix A). We derived six key elements of good financial advice based on the input: (1) increased well-being, (2) alignment with short- and long-term goals, (3) ease of understanding, (4) incorporation of individual preferences, (5) implementation ease, and (6) transparency. We used this input on what constitutes good financial advice to draft a first version of the definition. To validate whether this definition truly encompassed what experts see as good financial advice, we presented it to professionals (academic experts, policy makers, pension providers) at a digital round table gathering organized by Netspar, the Network for Studies on Pensions, Aging and Retirement, in January 2022. In breakout sessions, participants discussed their understanding of “good financial advice.” We asked all experts to evaluate our working definition in a follow-up survey.

The main feedback from the sessions and the survey was that the initial version of the definition was too complicated and was limited to providing strategies, while it should also incorporate positive and negative consequences of choices, as well as emphasize the independence of the advice and that the advice should be based on accurate and complete data. The feedback was used to refine the definition further. In a third session (March 2022) with experts from a pension provider (APG), the Dutch Authority for the Financial Markets, an insurance company (a.s.r.), a technology provider (Ortec Finance), and four academic experts, this new definition was evaluated, leading to some further minor adjustments. As a final iteration, the definition was presented during a Netspar conference to around 50 Netspar partners (policy makers from the Ministry of Social Affairs and Employment, the Ministry of Finance, the Ministry of Economic Affairs, and the Ministry of the Interior and Kingdom Relations of the Netherlands, pension funds, insurance companies, labor unions, banks, supervisory bodies) and research fellows. These changes led to the final definition of good AI-based financial advice (Table 4), which is divided into four components and defines (1) which goal good AI-based financial advice should have, (2) which information the algorithm should be based on and how it should operate, (3) what the outcome of good financial advice should look like, and (4) how it should be communicated. Putting this together, we define good AI-based financial advice as shown in Table 4.

Table 4. Definition of Good AI-Based Financial Advice.

Ethics and AI-Based Financial Advice

The definition of good AI-based financial advice forms a necessary but not sufficient condition for creating an ethically sensitive algorithm for providing financial advice. In this section, we discuss ethical issues related to AI-based financial advice.

In response to widespread cross-sectoral concerns about AI ethics, major institutional bodies and private companies have increasingly embraced the uptake of self-regulatory actions that follow general principles and should be contextually implemented by the relevant actors. This has led to the introduction of multiple ethical guidelines and regulatory bodies for the design, deployment, and implementation of responsible AI. According to the AI Index Report (Zhang et al. 2021), 117 documents addressing AI principles were published between 2015 and 2020. Private companies, including Microsoft, Meta, and Google, have issued the largest number of publications on AI principles, especially between 2018 and 2020 (Zhang et al. 2021). There is a growing agreement in the public and private sectors that designing and implementing robust regulatory frameworks for AI use can significantly improve social acceptance and adoption of these technologies, though differences certainly remain concerning the scope and enforcement of such frameworks. For instance, Floridi et al. (2018) argue that adopting an ethical stance in developing AI ecosystems entails a “dual advantage.” First, it can allow companies to identify societal needs and select the most acceptable strategies for end users. Second, it can help prevent counterproductive side effects or harm generated by ethical issues or legally opaque implementations. Both aspects can help mitigate negative effects and increase the value of a service or a product for companies and organizations. However, these ethical approaches have also faced several criticisms. Some scholars argue that tech companies use “ethics” to emphasize self-regulation and avoid stricter legal frameworks (Bietti 2020; Wagner 2018). Despite their importance, principles of transparency and accountability are rarely implemented in practice, making enforcement difficult (Article 19 2019). 
In addition, different domains may prioritize different ethical values, and varying social and cultural norms complicate the fair translation of these values into practice. Cultural, social, and individual factors make it hard to predetermine which values will be most important in a particular context. Without proper training, ethical principles can be challenging to apply to specific ecosystems, including the organizations, processes, and context (Hagendorff 2020). Therefore, greater stakeholder engagement is necessary to ensure that values align with user expectations and needs (Borning and Muller 2012). Including diverse actors in ethical discussions and the practical application of guidelines helps institutions better understand user needs and improves project efficiency.

Hagendorff (2020) shows that most AI ethics frameworks demonstrate broad similarities (see Web Appendix B): Fairness, privacy, and accountability are included in nearly 80% of AI ethical guidelines to design ethically sound AI. For three reasons, we follow the principles and terminology provided in the Ethics Guidelines for Trustworthy AI published by the European Commission (2019) as a baseline for further research. First, the guidelines were drafted based on expert interviews with respondents from different disciplinary fields and backgrounds. Second, the guidelines build on long-standing human rights analyses and policies. Third, these guidelines are useful because of their clarity in making explicit the values behind the requirements and their related functionality in moving from more abstract to more pragmatic levels. The Ethics Guidelines for Trustworthy AI (European Commission 2019) are based on four fundamental values and seven principles, as Web Appendix C shows.

Stage 2: Identification of Ethical Concerns in AI-Based Financial Advice

Methodology

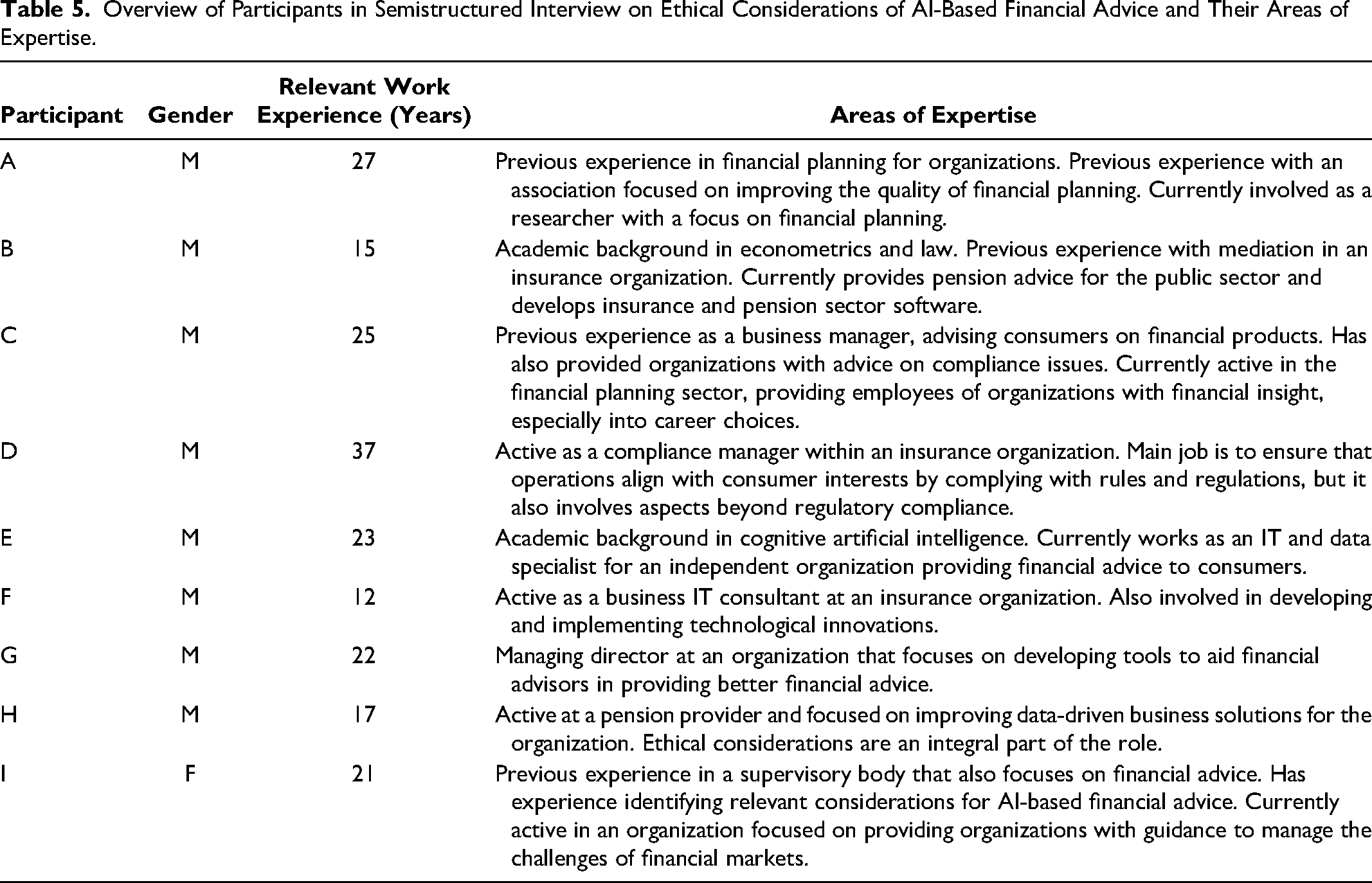

The analysis of ethical concerns in AI-based financial advice was based on the literature review and insights from the workshops and consumer interviews from Stage 1. In addition, we conducted semistructured qualitative interviews with nine public policy makers and industry experts (banks, pension funds, supervisory bodies responsible for the financial sector, independent financial advisors, and financial planners) with at least ten years of work experience (for more details, see Table 5; average interview length was 46 minutes). Web Appendix D contains the translated English interview guide used in semistructured interviews, following Arsel's (2017) design. After introductions and establishing a common understanding of AI-based financial advice, we explored the differences between traditional and AI-based advice, along with opportunities and challenges. Ethical aspects were addressed by discussing the experts’ perspectives on their organization's social values, how products or services reflect these values, and the role social values play in AI-based financial advice development. Dutch was the primary language used for all interviews. We first used an automated transcription process (Sonix). However, the software often misinterpreted words, significantly lowering transcription accuracy. Therefore, each transcript was manually checked and compared with the audio data during the coding process. Data was coded in four steps: repetitions, transitions, similarities and differences, and cutting and sorting. ATLAS.ti (9.1.7.0) (2022) was used to analyze the data. Specifically, we employed grounded theory (Corbin and Strauss 1990), engaging in an iterative process between the literature and our data, and conducting triangulation to integrate data from various sources until reaching theoretical saturation.

Table 5. Overview of Participants in Semistructured Interview on Ethical Considerations of AI-Based Financial Advice and Their Areas of Expertise.

Results

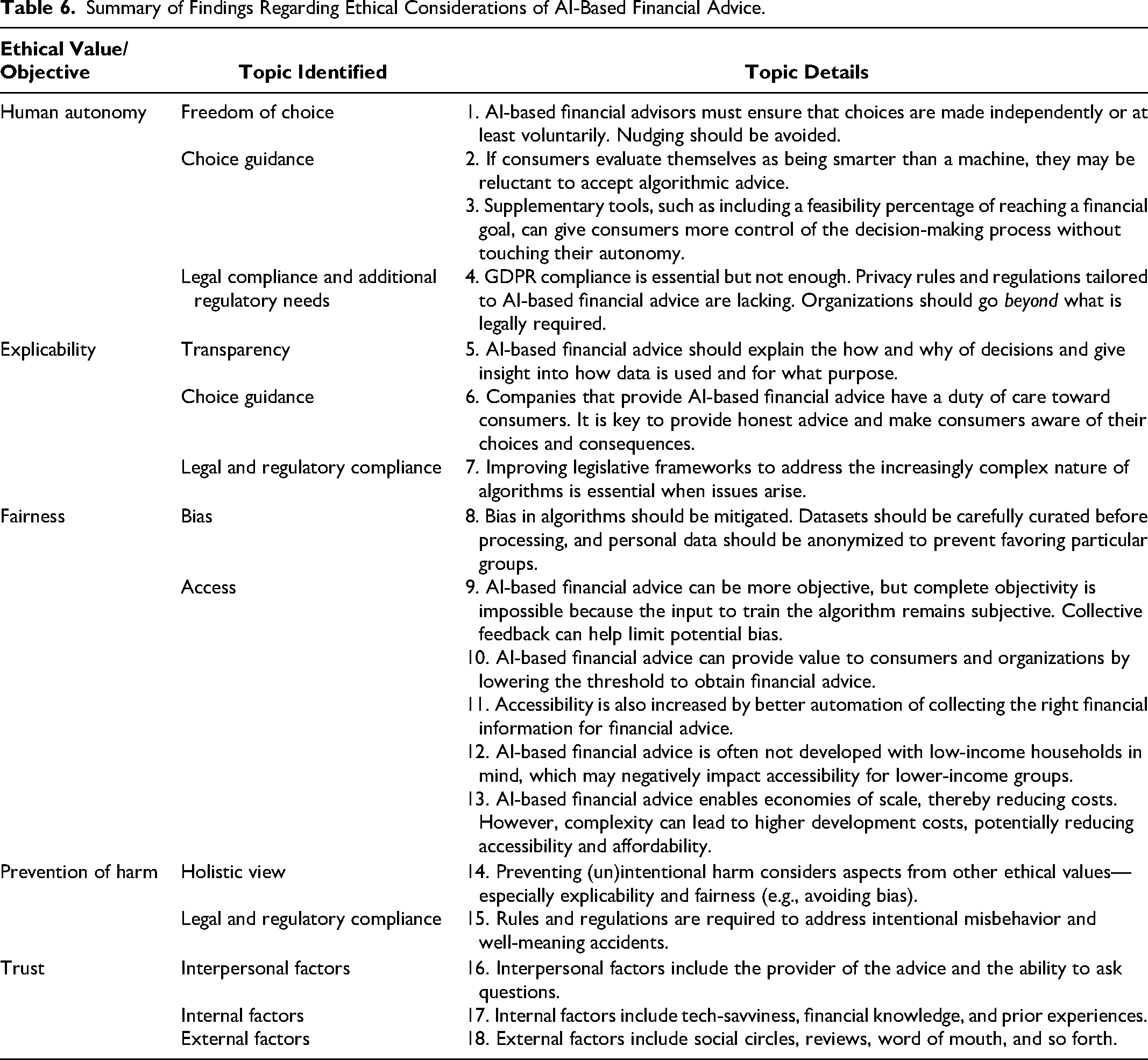

In reporting the topics that we identified from our interviews, we follow the structure of the Ethics Guidelines for Trustworthy AI values (European Commission 2019): human autonomy, explicability, fairness, and prevention of harm. In addition, we added the category “trust,” as it emerged as highly salient during the interviews. Overall, our research revealed the following topics: freedom of choice, choice guidance, legal compliance and additional regulatory needs, transparency, biases, access, and interpersonal, internal, and external factors relating to trust. Some of these topics were relevant for multiple value categories (e.g., legal compliance and additional regulatory needs), while others (e.g., bias and access) were relevant for only one value category. Next, we present the results for each value category in detail. Table 6 summarizes and details the identified topics.

Table 6. Summary of Findings Regarding Ethical Considerations of AI-Based Financial Advice.

Human autonomy

Respect for human autonomy means that the AI models that generate and deliver financial advice should be designed and implemented in such a manner that they augment, complement, and empower the capacities of users and do not endanger or diminish them. Moreover, it requires expanding choices for user/client action and not restricting them. This requires that human beings must be able to maintain a certain level of control over AI-based decision-making processes as well as assess and validate whether autonomy is preserved. In the case of AI-based financial advice, human control requires that service providers have control of the process and that end users are free to choose the extent of AI processing. Participant G favors more human control to achieve greater human autonomy: “If you put people more in control and let them make more choices, they become the owner of the journey to their goal. Research shows that people are happier when they are goal oriented. Also, they become happier during the journey, not only when the goal is realized.” Participant C stated that autonomy pertains to paternalism because the financial advisor has a duty of care toward consumers. This perspective means that a financial advisor, when talking to a consumer who is not an expert, must explain what they consider the best advice while considering possible reactions the consumer may have. Consumers can accept, be skeptical of, or completely disagree with the advice. Because autonomy touches on personal values, it is essential to consider how the advice will be received: “Therefore, in communicating with the customer, I must watch that the customer does not get the idea that they are being influenced and ensure that they make their choices independently, or at least on a voluntary basis” (Participant C). 
A point of contention with AI-based financial advice is that consumers may evaluate themselves as better equipped to make decisions than machines: “Who would do something that a machine tells them? We are superior to machines, so why would I accept advice from a machine? Despite this, consumers do often accept the advice from an authority” (Participant C). However, “The question is whether consumers understand what they are doing” (Participant G). If a consumer fills out a questionnaire and receives a neutral risk profile, multiple advice options can be considered, ranging from a short-term to long-term risk outlook. To help consumers understand why they made a particular choice, Participant G emphasized the importance of providing consumers with feedback on how feasible it is to reach their financial goals with AI-based financial advice. By doing so, consumers can better decide whether they agree with the possible advice options.

Privacy and data governance are two other aspects of ensuring autonomy. Ensuring appropriate levels of privacy through robust data governance helps guarantee that sensitive client information, which other parties could use to unduly influence users and thereby undermine their autonomy, is either not collected or safely stored. Several participants (F, H, I) addressed safeguarding consumer privacy. Participant H emphasized the importance of complying with the General Data Protection Regulation (GDPR). Because their sector has a lot of supplementary data on hand, they stated that certain data could not be used without asking specific permission from consumers. Participants also stressed the importance of organizations paying attention to their back-end IT infrastructure to reduce the risk of a privacy breach. Participant F voiced concerns for the future regarding privacy and mentioned, as an example, building a privacy dossier to track which data is collected and how it is used over time. This example was mentioned as relevant because companies should keep an account of how they collect and process data. However, there is a lack of privacy rules and regulations tailored to AI-based financial advice to enforce this. Therefore, Participants A, C, E, F, and I argued for regulatory bodies to get involved and develop new rules and regulations. From a consumer point of view, Participant F stated that consumers fear that their personal data will be breached: “Look, an email address we share easily, a phone number slightly less easily, name and address are also still fine. About this, the majority [of consumers] agrees. However, to derive financial insights you also need insights into the current financial situation of a customer. In other words, how much money do you have in the bank, and how much do you earn? It also depends on cultural factors, but we are hesitant about submitting this data in the Netherlands. If our address gets leaked, it is a nuisance. If your entire financial situation gets leaked for the entire world to see, it will have a much bigger impact on the customer.”

Participant D stated that organizations should consider how data collection can be minimized while still being able to provide the best possible advice (data minimization is a GDPR requirement). Data minimization would also reduce the extent of information being leaked in case of a privacy breach. However, Participant D added that there is an ulterior motive behind this reasoning. Within their sector, the participant found that asking too many questions or questions that are too convoluted resulted in dropping conversion rates. Therefore, the plea for data minimization was supported by an economic incentive.
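To illustrate what data minimization can look like at the point of data collection, the following is a minimal Python sketch. It is our own illustration and not a procedure described by any participant: the purposes (`budget_advice`, `mortgage_advice`) and all field names are hypothetical, and the mapping from purpose to required fields is an assumption that an organization would have to define for itself.

```python
from typing import Any, Dict, FrozenSet

# Hypothetical mapping from advice purpose to the fields that purpose needs.
# Both the purposes and the field names are assumptions for illustration.
REQUIRED_FIELDS: Dict[str, FrozenSet[str]] = {
    "budget_advice": frozenset({"monthly_income", "monthly_expenses"}),
    "mortgage_advice": frozenset({"monthly_income", "savings", "debts"}),
}


def minimize(record: Dict[str, Any], purpose: str) -> Dict[str, Any]:
    """Return only the fields needed for the stated purpose.

    Everything else (name, address, and so on) is dropped before the
    record is stored or passed on to the advice algorithm.
    """
    allowed = REQUIRED_FIELDS[purpose]
    missing = allowed - set(record)
    if missing:
        raise ValueError(f"missing required fields: {sorted(missing)}")
    return {field: record[field] for field in sorted(allowed)}


if __name__ == "__main__":
    submitted = {
        "name": "A. Example",
        "address": "Example Street 1",
        "monthly_income": 3000,
        "monthly_expenses": 2400,
        "savings": 5000,
    }
    print(minimize(submitted, "budget_advice"))
```

A filter of this kind also limits what can leak in a breach, since fields that were never retained cannot be exposed.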

Explicability

Explicability is crucial for building and maintaining user trust in AI-based financial advice. To attain explicability, transparency and effective communication are crucial. Multiple participants (C, E, G, H, I) mentioned the importance of transparency. Varying explanations of what transparency meant to them were stated. Participant E mentioned transparency as being able to justify why an algorithm came to a decision. This finding was further elaborated on by Participant G, who emphasized the importance of communicating and explaining choices to consumers, advisors, or regulators. Data analyses can then be used to improve algorithms further, for example, by identifying why consumers deviate from the choices they are presented with. Moreover, Participant H gave the broadest and most demanding explanation of transparency: First, transparency entails adhering to legal rules and regulations, and there is a difference between what is allowed and what organizations should do. Second, transparency entails always asking permission from consumers before using their data. Third, transparency should provide insights into which consumer data is used and what purpose the data is used for. Fourth, transparency is the ability to show and explain to consumers the consequences of their actions. Participant H finalized their reasoning by stating that from an interorganizational perspective, organizations should prioritize making their advice process transparent to prevent the algorithm from becoming a black box. From a practical perspective, Participant G viewed transparency as the most important ethical issue: “If your aim is to deliver the best advice possible to a customer, you have to ensure that this consideration is fair and transparent, and that they understand why certain choices are made.” Participant C used “traceability” to describe a transparent advice process. 
The participant considered being able to demonstrate that they deliver trustworthy and consistent advice as part of their organizational values. This involves two components: first, adhering to legal rules and regulations, and, second, being able to reconstruct advice: “When a customer returns and contacts us, I want to be able to reconstruct the advice based on their characteristics and explain how we made certain decisions. It must be a logical story; I do not want to guess how the advice came to be” (Participant C). Participant I stated that transparency as an ethical issue is crucial for financial organizations. Financial organizations have a duty of care toward consumers enlisting their services. Incorporating the aspects mentioned previously, transparency can ensure that consumers are not misled and are provided with independent advice. Given the heavy emphasis on transparency, we understand explicability to combine transparency with clear communication as illustrated in the preceding comment from Participant C.
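Participant C's notion of traceability, being able to reconstruct advice from a customer's characteristics, can be sketched in code. The example below is a hedged illustration under our own assumptions: the rule in `advise`, the three-month threshold, the field names, and the `RULE_VERSION` label are all hypothetical and do not describe any participant's system. It shows one possible design in which the inputs, the rule version, and the reasons are stored with each piece of advice so that the advice can later be re-derived rather than guessed.

```python
import json
from dataclasses import dataclass
from typing import Dict, List, Tuple

RULE_VERSION = "demo-1"  # hypothetical label for the rule set in use


def advise(profile: Dict[str, float]) -> Tuple[str, List[str]]:
    """Toy deterministic rule: return the advice and the reasons behind it."""
    reasons: List[str] = []
    buffer_months = profile["savings"] / profile["monthly_expenses"]
    reasons.append(f"savings cover {buffer_months:.1f} months of expenses")
    if buffer_months < 3:  # assumed threshold, for illustration only
        return "build an emergency buffer first", reasons
    reasons.append("buffer meets the 3-month threshold assumed here")
    return "consider long-term investing", reasons


@dataclass(frozen=True)
class AdviceRecord:
    rule_version: str
    inputs_json: str          # exact inputs, serialized deterministically
    advice: str
    reasons: Tuple[str, ...]  # human-readable explanation steps


def record_advice(profile: Dict[str, float]) -> AdviceRecord:
    """Produce advice and keep everything needed to explain it later."""
    advice, reasons = advise(profile)
    inputs_json = json.dumps(profile, sort_keys=True)
    return AdviceRecord(RULE_VERSION, inputs_json, advice, tuple(reasons))


def reconstruct(record: AdviceRecord) -> bool:
    """Re-run the rule on the stored inputs and check it yields the same advice."""
    if record.rule_version != RULE_VERSION:
        raise ValueError("rule version changed; replay requires the archived rules")
    advice, reasons = advise(json.loads(record.inputs_json))
    return advice == record.advice and tuple(reasons) == record.reasons


if __name__ == "__main__":
    rec = record_advice({"savings": 2000.0, "monthly_expenses": 1000.0})
    print(rec.advice, list(rec.reasons), reconstruct(rec))
```

Replay of this kind presupposes a deterministic advice rule; for generative models, reconstruction would need additional measures that this sketch does not cover.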

Several participants mentioned the duty of care, which has several components. Participants C, D, G, H, and I stated that there is an important distinction between putting the customer first and putting the needs of customers first. According to Participant C, organizations want to put the customer first from a commercial point of view. However, from an ethical point of view, they should put customers’ needs first. This prioritization can entail advising something against the wishes of the customer. Participant D continued this reasoning: “Customer satisfaction does not always equal customer needs. The fact that a customer can be very satisfied with your service does not necessarily mean that you did a good job.” Participant I gave an example to illustrate this point: “Imagine a customer is allowed to borrow against the maximum mortgage. They might be pleased about this, but if your aim is to best serve the customer, you would recommend that they choose a different product. This advice might go against their wishes, but it would be the most suitable for their financial situation.” Because of scenarios like this, Participant C advocated that customers be proactively made aware of potentially suboptimal choices even though they may not appreciate the input. The participant added that communication is vital in influencing acceptance of a given piece of advice, thereby reducing a potentially negative response from a consumer. Participant G proposed another perspective from an investing point of view, illustrating how customers can be presented with options that will often be agreeable to both parties: “As an advisor, you have a range of good solutions. We do not believe in one optimal solution; there is always more than one. However, you can say that the solution must be within a certain range. Often, these are two or three choices. An example of this is the risk profile. You fill in a questionnaire, which results in a neutral risk profile. Now you get two scenarios. Defensive with a 95% feasibility and an equity of 100 or a neutral risk profile with a feasibility of 90% and an equity of 110. When it [the scenario] does not go to plan, they will get −10, but for the defensive profile only −2. Now, what is a good choice? Is the highest chance good? Is the highest potential equity within a given risk attitude good? Or is the lowest downside risk in a year good?”
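Participant G's two scenarios can be worked through in a few lines of code. The Python sketch below is our illustration, not part of the interview material. It takes the figures from the quote (feasibility of 95% versus 90%, equity of 100 versus 110, downside of −2 versus −10) and applies the three criteria the participant names; the class and function names are assumptions introduced for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Scenario:
    name: str
    feasibility: float  # chance of reaching the goal
    equity: float       # projected equity if the plan works out
    downside: float     # result in a year that does not go to plan


# Figures taken from Participant G's example.
SCENARIOS: List[Scenario] = [
    Scenario("defensive", feasibility=0.95, equity=100.0, downside=-2.0),
    Scenario("neutral", feasibility=0.90, equity=110.0, downside=-10.0),
]

# Each criterion maps a scenario to a score where higher is better.
# For downside, -2 > -10, so the maximum is the smallest loss.
CRITERIA: Dict[str, Callable[[Scenario], float]] = {
    "highest feasibility": lambda s: s.feasibility,
    "highest potential equity": lambda s: s.equity,
    "lowest downside risk": lambda s: s.downside,
}


def best_by_criterion(scenarios: List[Scenario]) -> Dict[str, str]:
    """Return the name of the preferred scenario under each criterion."""
    return {
        label: max(scenarios, key=score).name
        for label, score in CRITERIA.items()
    }


if __name__ == "__main__":
    for label, name in best_by_criterion(SCENARIOS).items():
        print(f"{label}: {name}")
```

With these numbers, the defensive profile is preferred on feasibility and on downside risk, while the neutral profile is preferred on potential equity. No single option dominates, which is why the participant frames the outcome as a choice for the customer rather than a calculation for the advisor.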

Participant G stated that experts often have a good indication of the best option for a given customer. However, the customer always decides which advice to take, and it is the role of the advisor to fulfill their duty of care by providing honest insights into the potential risk-to-yield trade-offs during this process. Participant H stated that several legal documents also represent the duty of care. Both Participants H and I specifically mentioned a law regarding financial oversight in the Netherlands (Dutch Financial Supervision Act, abbreviated Wft) as an example that includes elements of the duty of care. Despite measures to ensure that the duty of care is incorporated in the financial advice process, Participant I stated that organizations often prioritize economic incentives over going beyond what the duty of care legally requires. While these examples refer to traditional financial advice, AI-based financial advice is bound to the same regulatory framework. Therefore, these findings extend to AI-based financial advice as well. From participant responses, we can see that care is closely linked to explicability: It relates to clear communication of choices and options as well as a clear indication of the difference between satisfying customer desires and meeting customer needs as defined by goals. Far from being paternalistic, care, within the framework of explicability, expands autonomy rather than limiting it.

Furthermore, to ensure that organizations reflect on the development of their algorithms, Participant E argued that organizations must be held accountable for the consequences of faulty AI-based financial advice. Participant A stated that creating rules and regulations specific to AI-based financial advice would be beneficial. However, this participant added that AI-based financial advice is in an early stage of development, and regulatory bodies often only consider additional rules and regulations when accidents occur. As an example of faulty AI-based financial advice in practice, Participant E mentioned the Dutch childcare benefit scandal, where a biased algorithm resulted in discriminatory consumer outcomes. Moreover, because the tax authorities failed to ensure interorganizational transparency, the algorithm operated in a black box, resulting in the harm to consumers going undetected for a prolonged period.

The broad category of explicability includes these dimensions: transparency, communication, care, and accountability. Explicability, in this broad sense, complements and facilitates autonomy.

Fairness

The development, deployment, and use of AI-based financial advice must be fair. This means that designers and organizations implementing such generative AI-based systems should ensure a fair distribution of costs and benefits and establish procedures based on unbiased and equal criteria. Participants D, E, and H referred to fairness in terms of potential bias in an algorithm. Participant D stated that there is existing pressure on AI-based models because they could discriminate or act in unwanted ways, but also sees the same issue with traditional forms of financial advice: “I think that it is often wrongly assumed that this is a problem with AI-based advice, while you can also wonder whether this issue is not bigger when it is not AI-based. I think that this would often lead to a surprising answer.” Participant D elucidated this perspective by offering an example related to consumer credit scores. Some organizations heavily depend on consumer credit scores to approve or deny loan applications, basing their decisions on sensitive credit data that can be purchased. While generally reliable, this data may be outdated or tainted in specific instances, leading to an unjustifiably low credit score when, for instance, a previous tenant or partner has unresolved debts that unjustly affect one's credit score. The participant emphasized the importance of organizations conducting manual human checks on such data, a step often overlooked when a consumer is flagged. Consequently, the participant thinks a financial advisor would often not make better decisions than an AI-based financial advisor.

Furthermore, Participants D, E, and H agreed that algorithm bias is a challenge that must be addressed. While it is debatable whether the input data in the previous example is biased, it illustrates that biased data can result in the unfair treatment of consumers. Participants E and H therefore offered examples of how to mitigate bias at the data input stage. Such measures can prevent an algorithm from continuing to learn from biased input data, which could otherwise result in repeatedly flawed output. Participants E and H stated the importance of carefully curating a dataset and relevant variables. Moreover, personal data must be anonymized or removed to prevent historical data bias or nonrepresentative sample bias. Participant E mentioned that with correct data curation, an algorithm without undesirable bias could, in theory, be developed. They added that this would be nearly impossible for a human financial advisor.

Several participants (A, B, C, D) agreed that AI-based financial advice can create value by offering more objective advice than traditional financial advice. Participant A stated: “You could say that the preferences of an advisor when advising an individual are not included in AI-based financial advice, which would make it more objective.” However, objectivity is relative and dependent on the developers of an algorithm. Participant C stated: “I am very cautious in claiming objectivity. That is not true, because the programmer is also a human. However, the risk is lower because it is a one-time activity you can approach from different angles. You can incorporate socially accepted norms established by multiple humans, while a single traditional advisor must consider this awareness repeatedly. We are not equipped for that, while a machine or algorithm is.” Instead of stating the importance of establishing relevant social norms, Participant D emphasized curating mathematical models: “We take the input from multiple advisors that condense this information to a formula or multiple formulas through a mathematical model. Because of this, you can also capture multiple views and opinions. Thereby, an algorithm is less susceptible to the opinions of a single advisor.” Participants nonetheless acknowledged that this method would result in the algorithm being unable to accommodate outliers, a minority of consumers whose cases deviate from what the algorithm can comprehend based on these models. As a result of more robust objectivity measurements through collective efforts, AI-based financial advice can provide a more standardized advice process than traditional financial advice. Participant B stated that when a process is developed correctly, every consumer will receive the same experience, and the resulting advice will be of higher quality than traditional financial advice. 
Moreover, Participant C mentioned that, through collective efforts, their organization strives to provide consumers with fully objective advice, which will always have the same result for customers in the same scenario. However, achieving full objectivity is nearly impossible because the input from advisors remains subjective. Measures are taken to limit the risk of potential bias being incorporated into the algorithm through collective feedback efforts. Consequently, the advice may reflect more objectively on a consumer's financial situation.

Another aspect of fairness is that there should be a fair distribution of costs and benefits. Participants agreed that AI-based financial advice can provide value to consumers and organizations by lowering the threshold to obtain financial advice. Participant A highlighted the value to consumers by stating that searching for AI-based advice online may be easier because it can provide an adequate solution right away, compared with searching for a suitable traditional financial advisor. Participant B stated this is especially relevant when organizations target a younger demographic: “You [younger demographic] must make an appointment with these people [traditional financial advisors], and they are not interested in that. They want financial advice to be on-demand. Therefore, per definition, this must be achieved through an online application, and ideally without human intervention.”

Accessibility can be improved by further automating the collection of financial information from consumers to develop an overview of their financial situation. Participant B stated: “We developed an app that lets you log in to governmental websites through DigiD [Dutch app to access sensitive personal information from the government safely], and it will automatically scrape relevant data from these websites. … Because you are directly connected to the source of information, you are guaranteed of the data being transferred one to one.”

Despite the potential for adding value regarding accessibility, Participants A, E, and F also highlighted fairness concerns that can negatively impact the value created for consumers and organizations. Participant E said: “You can imagine that financial advice is, first and foremost, developed for people who can pay for it. These are not necessarily the same people who need financial advice the most.” They elaborated on this by saying that organizations offering AI-based financial advice currently do not develop their advice process around targeting the needs of lower-income groups who may struggle with requesting certain government subsidies. Instead, more affluent consumers who are, for example, looking to buy a property are targeted. Participant A further highlighted the relation between costs and accessibility by stating: “The more complex I make it [the algorithm], the higher development costs will be. Then, you must also look at your target demographic. Is my target demographic large enough to recuperate the costs of the financial advice offered?” They further emphasized this statement: “The moment that costs become a threshold that must be recuperated, it will be at the cost of accessibility.” These findings highlight friction between being able to provide financial advice to specific groups within the low-income demographic and the potential to recuperate the development costs of an algorithm.

Yet, in general, Participants A, D, E, F, G, H, and I agreed that AI-based financial advice can create value through reduced costs to obtain financial advice, compared with traditional methods. Participant D stated: “If you look at it from an economic point of view, it is a lot cheaper, of course. I mean, it is an algorithm that you develop once and then must maintain and tune, but of course, a lot cheaper than if you let a human do this repeatedly.” One explanation for this statement is the economies of scale that can be realized because AI-based financial advice is offered through a digital medium. Participant E corroborated this finding in practice: “Our organization is very small, and with our personalized budget advice tool we can serve hundreds of thousands of people per year.”

While AI-based financial advice is discussed as a cost-effective alternative to traditional financial advice, Participant A voiced concern regarding complexity as a cost driver for AI-based financial advice, which may obstruct additional value from being created for customers and organizations. Participant A stated that an algorithm with low complexity would be easy to automate at a relatively low cost. However, as mentioned under accessibility, increased complexity will exponentially heighten development costs. Participant A explained their reasoning further: “The Netherlands has a complex fiscal system. If you would like to incorporate all exemptions and applications, the complexity [of the algorithm] will massively increase. This will, in the end, impact costs.” Multiple participants (E, F, G, H) agreed that complexity is a major challenge for AI-based financial advice. Participant H stated: “I think it is relatively difficult to discuss and quantify goals in AI-based financial advice.” They followed this statement with an example of a personal experience with a financial advisor in which they wanted to achieve a goal, but they were not entirely sure what the goal entailed. They clarified these goals through a conversation and adjusted the financial advice accordingly. It was hard to imagine having a prolonged conversation with an AI-based financial advisor in which goals could be discussed and, subsequently, changes could be made to the advice. These questions surrounding accessibility, value, and complexity, though perhaps at first glance seemingly distinct, are closely connected to the overarching value of fairness.

Prevention of harm

AI-based financial advice systems should be designed, deployed, and implemented according to the highest levels of technical robustness. These systems should be monitored to prevent negative impacts due to asymmetries of power, information, and other forms of vulnerability. The prevention of both intentional and unintentional harm was only mentioned by Participant A: “If everyone would act with the right intention in mind, then rules and regulations would only be required to prevent accidents. So, unintentional harm instead of intentional. The protection of the consumer should be placed first regardless of whether we are talking about traditional or AI-based financial advice. When there is the intention to make consumers do something that they should not be doing under the guise of advice and mainly benefits the advisor is what should be regulated first.” Participant A continued that their main concern is intentional misbehavior from actors as opposed to unintended accidents from organizations with experience in automation that would like to develop AI-based financial advice for the masses as a low-cost alternative to traditional financial advice.

Furthermore, participants discussed the prevention of harm as a component of other ethical issues. For example, adhering to the duty of care, as mentioned by Participants B, C, D, F, G, H, and I in the section on explicability, is a method of preventing intentional or unintentional harm. Moreover, mitigating bias in an algorithm to prevent potential discriminatory outcomes, as discussed by Participants D, E, and H in the section on fairness, is an alternative method to prevent unintentional harm. Last, compliance with the GDPR to prevent potential data breaches, as discussed by Participants F and H in the section on autonomy, is another method to prevent harm.

Trust

The European Commission (2019) Ethics Guidelines for Trustworthy AI do not include trust as a separate principle or requirement but as an overall objective. Among the ethical issues discussed with experts, trust was referred to the most. Participants A, C, D, F, G, and H identified three main factors influencing trust: interpersonal, individual, and external factors. Interpersonal trust relates to the relationship between a consumer and an advisor. Participant H stated that they consider advice from an independent source more trustworthy because it reduces the likelihood of the advice being influenced by ulterior motives. Larger organizations, such as banks, may tailor their advice to the products they offer and omit other suitable options. However, Participant G stated that research has shown that consumers often consider organizations with an established market presence more trustworthy than an independent party. Additionally, Participant H stated that AI-based financial advice promotes consumer trust by playing the expert role. Participant G added that an advisor could also play the expert role in confirming that a consumer chose a financially beneficial option.

Despite AI-based financial advice being able to react to choices made during the advice process, it is currently difficult to have a prolonged conversation. Participants A, C, and F emphasized the importance of being able to ask an advisor questions because their responses can serve as an indicator of trustworthiness. Because AI-based financial advice does not currently offer this functionality, Participants A, C, and F found it more challenging to determine whether it is more trustworthy than traditional financial advice. Participant A stated: “The moment a machine tries to win my trust, I am inclined to think, that is nice, and you seem to understand me. You are programmed impressively, but do I really trust you? I do not know what drives the algorithm. I cannot ask any questions to the machine to test whether I trust it. This is possible with an advisor who sits across from me. I can ask them questions to determine whether I trust them.” Participant F added that individual factors moderate the relationship between a consumer and an advisor. The personal experiences of consumers, their tech-savviness, and prior experience with financial products were mentioned as examples of individual factors. Consumers may see a traditional financial advisor as the more trustworthy option when these aspects are absent. Participants C and F stated that this might be because a traditional financial advisor can provide more guidance during the advice process and explain potential questions in more detail, which can positively influence trustworthiness. Moreover, Participants C and H discussed how age might influence trust. The participants mentioned that the threshold of interacting with AI-based financial advice might be lower for the younger demographic because they are often more accustomed to technology. Contrarily, the elderly demographic is often less tech-savvy and may appreciate the additional guidance a traditional financial advisor provides.

Furthermore, Participants A, C, D, F, G, and H mentioned external factors such as influence from social circles, online reviews, and the perceived authority of an organization or advisor. Participant F stated that positive word of mouth and positive experiences from other consumers could grow trust in AI-based financial advice. Participant G mentioned that they had a conversation with an academic who stated that trust stems from two components: either an expert stating that the advice is correct or confirmation from friends or family with a financial background. However, research conducted by Participant A showed that consumers are more satisfied when the source of advice is a traditional financial advisor rather than from friends, family, or AI-based financial advice. Among the probable reasons for these findings, the participant noted a lack of trust in nonfinancial experts and insecurities related to previously discussed individual factors. They continued that traditional financial advisors can be persuasive in alleviating potential concerns through a conversation.

Considerations for the Future Development of AI-Based Financial Advice

This section explores how developers of AI-based financial advice can best address ethical issues encountered during development or after algorithm deployment.

Outlook on the integration of ethical norms and values

Participants differed in their outlook on whether it would become more important to incorporate ethical norms and values into AI-based financial advice in the future. Participants A, H, and I stated that ethical norms and values would become more significant for AI-based financial advice in the future. Other participants (C, D, F) had a pessimistic outlook on incorporating ethical norms and values. Participant D stated that there is pressure to deliver efficient and quality advice to consumers. While this can be a positive development, it may result in ethical norms and values not being prioritized. Moreover, economic incentives instead of ethical incentives often drive organizations, and there currently are few incentives for organizations to incorporate ethical norms and values.

Two incentives that participants mentioned were codifying ethical norms and values into rules and regulations and the potential of organizations losing face. Despite these incentives, they continued that organizations should not want to forgo a focus on ethical practices regardless of whether these practices are mandatory by law or could result in a negative organizational image. Participant C stated that their leading cause of concern was the lack of a conversation about values: “The ethical discussion is key for the future of AI-based financial advice. Currently, there is a crisis of trust. This also relates to the nitrogen crisis 5 and all other aspects we clash with as a society. One of the main causes of this crisis is that we do not engage in a conversation about values. When you start talking about values, the conversation changes to another level and can result in a better understanding and acceptance of each other.”

Participant F feared that consumers would attach more value to the name of an organization and the look and feel of its platform than to the prioritization of ethical norms in an organization. Two reasons for this were stated. First, consumers often do not care enough to read the terms of service, let alone research how an organization operates from within. Second, when this information is available and sometimes explained, consumers often lack the knowledge to comprehend this information. While the participant expects consumers to become more knowledgeable about AI-based financial advice in the future, they cautioned that this will, in practice, only benefit a small percentage of consumers who want to better understand the terms of service or organizational governance structures.

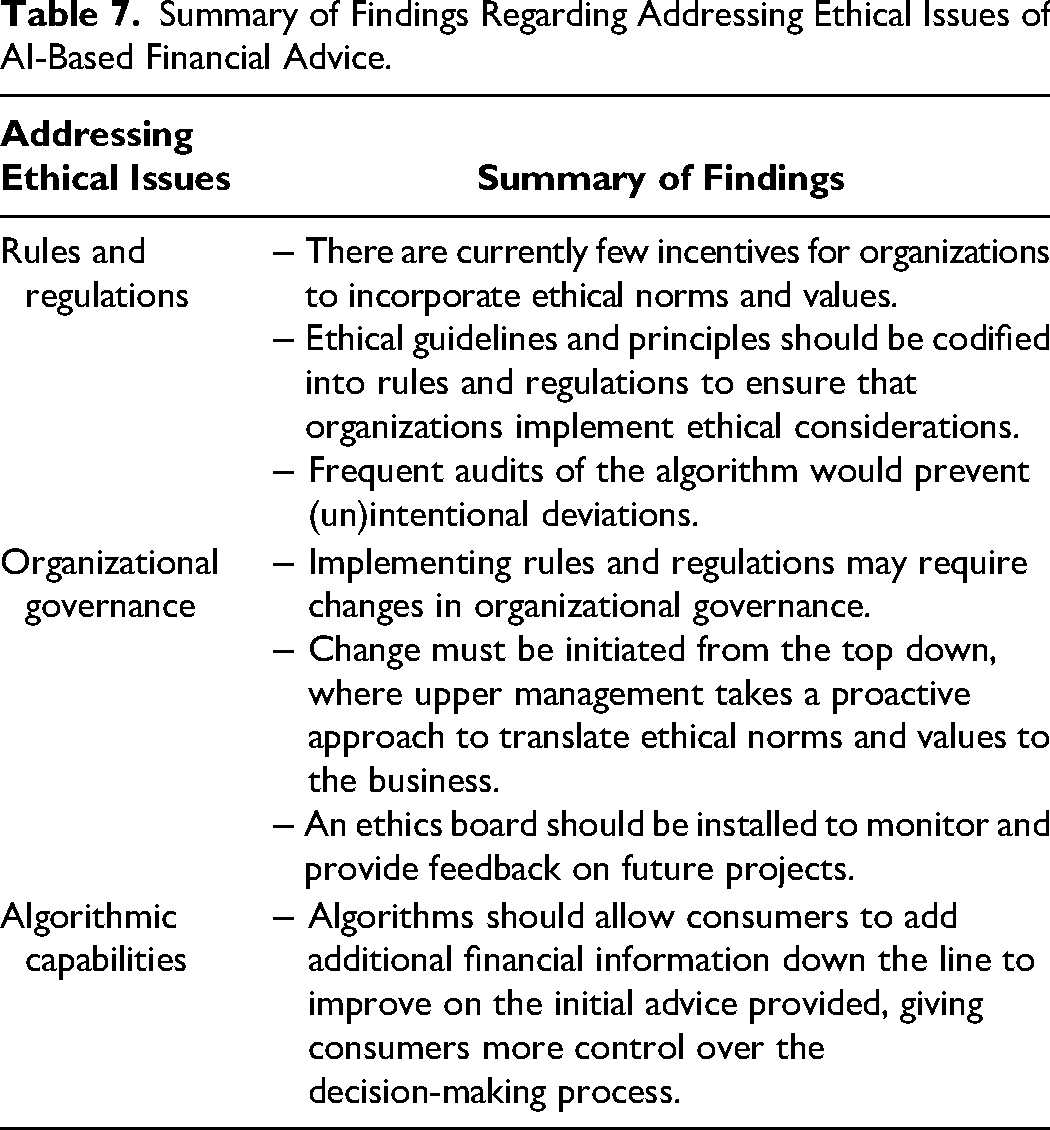

Addressing ethical issues of AI-based financial advice

Regarding how organizations can best prepare for ethical issues in the future, participants identified aspects related to legal rules and regulations, algorithmic capabilities, and organizational governance (Table 7). Participants A, C, E, F, and I emphasized the need for rules and regulations tailored to AI-based financial advice. Participant F argued that regulatory bodies should develop new rules and regulations for privacy. Participant A discussed rules and regulations related to intentional and unintentional harm, as mentioned in the previous section on nonmaleficence, and continued this reasoning by stating that rules and regulations, such as a mandatory algorithmic audit, will mainly impact actors with bad intentions and not matter much to those with good intentions. Moreover, Participant C argued that organizations should collaborate with regulatory bodies to open a conversation regarding potential regulatory improvements. Because there currently is a lack of rules and regulations for AI-based financial advice, Participant C added: “I think that if financial institutions want to win the trust of a customer and consumers, they should not only follow legal rules and regulations but should go beyond the law and to conduct business according to the right ethical path.” Participant E stated that rules and regulations are important for the future of AI-based financial advice because, otherwise, organizations would mainly prioritize economic incentives over ethical considerations. Participant I argued that rules and regulations could encompass most ethical issues. Organizations can ensure ethical business practices by developing algorithms around these rules and regulations. Also, frequent audits of the algorithm can prevent intentional or unintentional deviations from what is codified in these rules and regulations. 
Despite the potential benefits of regulations, Participant I added that ethical norms and values change over time and that updated regulations frequently lag behind the development of ethical norms and values.

Table 7. Summary of Findings Regarding Addressing Ethical Issues of AI-Based Financial Advice.

Regarding algorithmic capabilities, Participants B and C proposed an algorithm that would allow dynamic adjustments and update consumers’ financial situations in real time. A consumer would permit an app to let an application programming interface (API) access financial information. When changes in their financial situation are detected, the API will send an updated financial situation to the app. Current methods only provide consumers with a snapshot of their financial information. Accessing updated financial information in real time would help consumers provide the most current information when obtaining financial advice and enable them to address potential financial issues proactively. However, the participants noted that legal hurdles currently prevent this idea from becoming a reality.
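The consent-and-update flow that Participants B and C describe can be illustrated with a minimal sketch. It is not an implementation proposed by the participants or the authors; the class names (`FinancialSnapshot`, `FinancialDataAPI`), the method names, and the snapshot fields are all hypothetical and chosen only for illustration. The sketch assumes that the consumer must grant permission before an app can subscribe and that the API forwards a new snapshot to the app only when the financial situation has actually changed.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set


@dataclass(frozen=True)
class FinancialSnapshot:
    """Hypothetical summary of a consumer's finances at one point in time."""
    balance: float
    monthly_income: float
    monthly_expenses: float


class FinancialDataAPI:
    """Hypothetical consent-gated API that pushes changed snapshots to apps."""

    def __init__(self) -> None:
        self._consented: Set[str] = set()
        self._latest: Dict[str, FinancialSnapshot] = {}
        self._subscribers: Dict[str, List[Callable[[FinancialSnapshot], None]]] = {}

    def grant_consent(self, consumer_id: str) -> None:
        """The consumer permits apps to receive their financial information."""
        self._consented.add(consumer_id)

    def subscribe(
        self, consumer_id: str, callback: Callable[[FinancialSnapshot], None]
    ) -> None:
        """Register an app callback; refused unless the consumer has consented."""
        if consumer_id not in self._consented:
            raise PermissionError(f"no consent recorded for {consumer_id!r}")
        self._subscribers.setdefault(consumer_id, []).append(callback)

    def record(self, consumer_id: str, snapshot: FinancialSnapshot) -> bool:
        """Store a snapshot and notify subscribed apps only if it differs.

        Returns True when a change was detected, False otherwise.
        """
        if self._latest.get(consumer_id) == snapshot:
            return False  # nothing changed, so nothing is sent to the app
        self._latest[consumer_id] = snapshot
        for callback in self._subscribers.get(consumer_id, []):
            callback(snapshot)
        return True
```

In this sketch, an app would call `subscribe` with a callback after the consumer has called `grant_consent`, and every later call to `record` with a changed snapshot would trigger that callback. A real system would also need consent revocation, authentication, and compliance with the legal constraints the participants mention, none of which are modeled here.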

Multiple participants (C, D, G, H, I) addressed changes required in organizational governance. Participants H and I stated that companies must first consider what type of organization they want to be. Participant H stated that there are three components to consider. The first is the legal aspect: what organizations are allowed to do. The second is what can be done in terms of technology, now or in the future. The third is what the organization wants to do to integrate ethical norms and values. Participant I corroborated this view by stating that organizations must consider the ethical norms and values most relevant to them. Organizations must also proactively translate these strategic choices to the business side. Currently, organizations often act reactively instead of proactively. A reactive strategy can mean that an organization considers only in hindsight what could have been improved from an ethical perspective, by which point ethical norms and values may already have changed. Therefore, organizations need to anticipate changes better and act accordingly. The participant added that these changes start with vision and guidance from top management, which would subsequently influence the entire organization from the top down. Participant H added that creating awareness about the importance of ethical norms and values is key to achieving this. Participants C and D stated that organizations, from this point onward, must develop a consensus on ethical norms and values with relevant guidelines for future projects. Participant D added that an ethics board should be introduced to monitor and provide feedback on future projects to ensure that organizations comply with this ethical compass.

AI Ethics Framework for Financial Advice

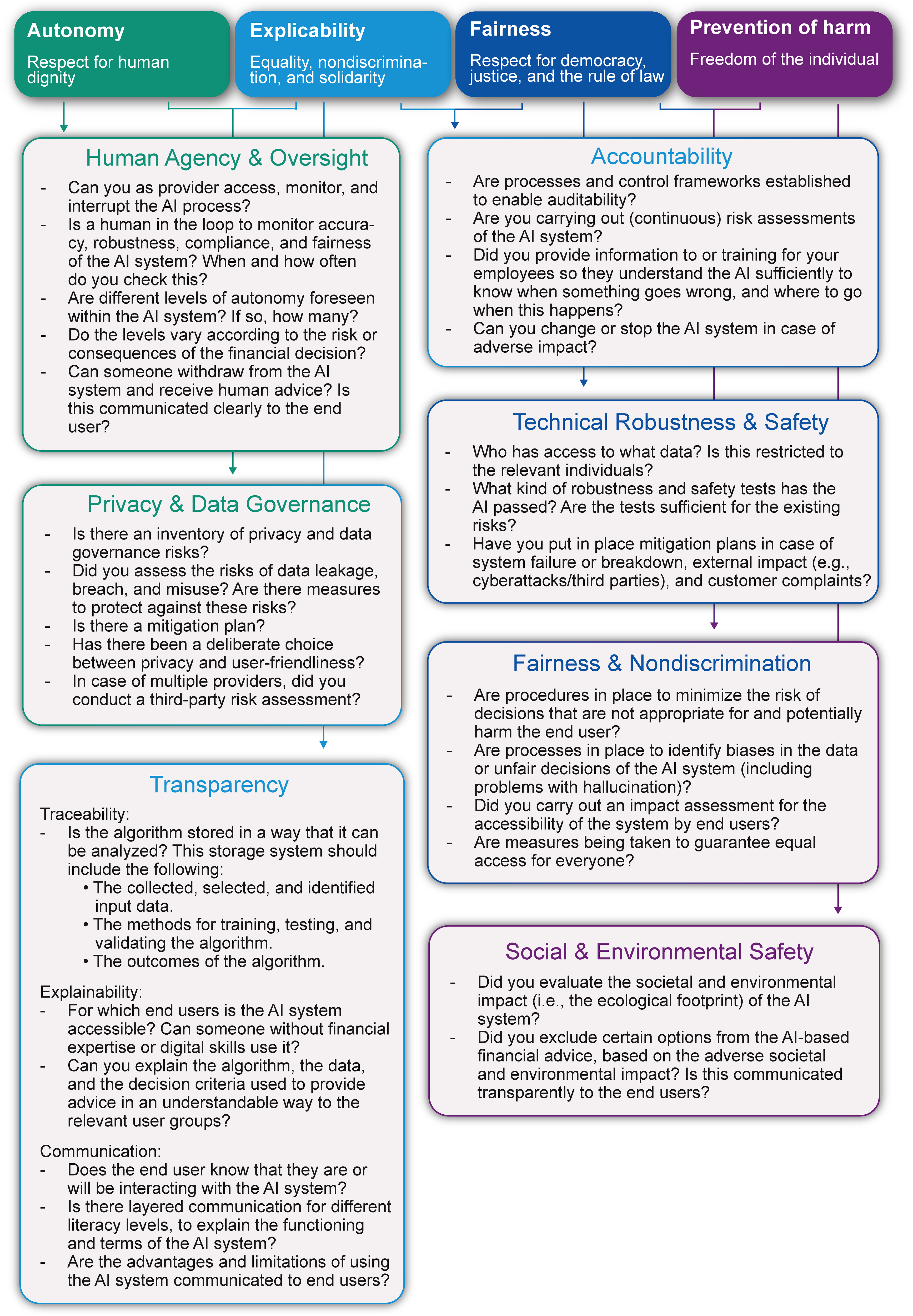

Our analysis focused on the principles and requirements of the Ethics Guidelines for Trustworthy AI (European Commission 2019). However, these principles and the accompanying requirements proposed by the guidelines are often too abstract to use in practice. To provide more concrete guidance to public policy makers, researchers, managers of financial service providers, and technology developers for integrating ethical discourse in AI-based financial advice systems, we developed the AI Ethics Framework for Financial Advice (AIFA; Figure 1). In our framework, we translate the seven requirements of the Ethics Guidelines for Trustworthy AI (European Commission 2019) into a set of concrete questions that can guide these stakeholders. The questions in the reflection framework are examples of how the different requirements can be translated into practical actions and can be tailored and extended to the capacity of specific organizations.

A first version of this framework was developed based on the insights from the research. To validate whether the questions in this first version of the framework are comprehensive, understandable, and valuable, a feedback session (May 2024) with five additional industry experts (pension provider [two representatives], insurance company, technical provider, and supervisory body) was organized. Their feedback led to adjustments that made the questions more concrete and suitable for practice. In addition, these industry experts confirmed the value of the AIFA next to legal frameworks, especially as a conversation starter to discuss ethics within AI-based financial advice.

The set of proposed questions does not claim to be exhaustive of all possible ethical aspects of AI-based financial advice, but it offers guidance for a significant range of these aspects. Furthermore, the answers to the questions in the reflection framework, or their relevance for good financial advice, are often not immediately apparent to developers or to those responsible within financial advice organizations. The depth of the answers may also vary across organizations. Accordingly, organizations are encouraged to ensure that diverse teams work on such challenges.

The framework can be used at any stage of AI development. However, in the spirit of ethics by design (Brey and Dainow 2024), we recommend incorporating it continuously and iteratively in designing, developing, and deploying AI-based financial advice. Additionally, even after the AI is deployed, using the framework is recommended in the case of important changes in legislation or shifts in societal acceptance.

Discussion

There is broad enthusiasm that AI in general, and generative AI in particular, can improve service delivery, support the development of new services, improve customer experiences and well-being, or help address societal challenges, for example, in medical care, public health, industry, or transportation. At the same time, (generative) AI use in sensitive domains can have unintended (or intended) negative consequences. There are also risks of harm due to inadvertent overuse. Misunderstandings in applying key terms such as “well-being” or the instrumentalization of processes associated with user engagement and the enhancement of customer experience can result in outcomes that give rise to ethical concerns. Technicians, concerned primarily with development and innovation, might give too little consideration to the risk of negative consequences, which can result in detrimental outcomes for service providers, such as distrust, user reluctance, or social controversy, affecting current and future firm outcomes. People trust people, not things (Stilgoe 2023). (Generative) AI systems will be trusted if humans working around them have ensured high technical and ethical robustness and can rectify mistakes if necessary. Accordingly, trust is earned by meeting public expectations, rather than built on expertise. Therefore, institutions and organizations should be receptive to a broader set of considerations when designing (generative) AI systems for financial advice.

This article presents a first step toward understanding AI-based financial advice. First, using an iterative multistakeholder approach, we developed a definition of “good” AI-based financial advice, a necessary (though not sufficient) basis for AI-based financial advice. Second, this article assesses the ethical challenges associated with AI-based financial advice. Our research discusses two aspects of human autonomy: (1) individual autonomy, ensuring consumers are able to make independent choices and facilitating their capacity for informed decision-making and ultimately (financial) self-determination, and (2) algorithmic autonomy, highlighting limitations in holistic AI-based financial advice. Privacy concerns underscore the need for GDPR compliance. Explicability is crucial, calling for transparent algorithms to enhance understanding and mitigate undue complexity. Our findings also emphasize an ethical duty of care on the side of financial advice providers, urging improved legislative frameworks to address accountability for algorithmic misconduct. Fairness is discussed primarily in terms of mitigating algorithmic bias through input data handling, dispelling misconceptions regarding inherent bias in AI-based advice compared with human counterparts, and remaining attentive to questions concerning accessibility and value creation. Study participants highlighted intentional and unintentional harms, emphasizing the need for legal frameworks that address both. We identify trust as a key ethical consideration, influenced by diverse factors affecting perceptions of trustworthiness, and note that decision-making is often driven by trust rather than rational assessment. Our research highlights the value of AI in increasing objectivity and accessibility, reducing costs, and providing rapid advice. Challenges include algorithmic complexity and the impact of technological limitations on AI accessibility and development costs.

We used the insights from our analyses to develop the AIFA, which can guide policy makers and practitioners in their understanding of ethics and its implementation in designing AI-based financial advice. Specifically, we used the seven requirements from the Ethics Guidelines for Trustworthy AI (European Commission 2019) to provide a set of concrete questions that can serve as a reflection framework for policy makers, researchers, managers, and technology developers in designing, developing, and deploying AI-based financial advice.

By examining the ethical issues of AI-based financial advice, we respond to calls for more research on ethical issues associated with generative AI (Van Dis et al. 2023), AI for social good (Cowls et al. 2023; Du and Sen 2023; Floridi et al. 2018, 2020; Vinuesa et al. 2020), and rethinking marketing for a better world and the greater good (Chandy et al. 2021; Madan and Ashok 2023; Mende and Scott 2021), and we extend work on ethics and public policy research (Milne 2000; Sirgy 2008). Complementing research primarily focused on individuals’ psychological and emotional reactions toward AI, this article takes a multistakeholder perspective. It includes perspectives on AI-based financial advice from consumers, financial technology providers, pension funds, public policy makers from ministries and supervisory bodies, and financial planners.

Policy Recommendations

The interviewees clearly stated the need for ethical guidelines and principles to be codified into rules and regulations. AI-based financial advice is a rapidly advancing technology in its early stages, lacking comprehensive regulatory frameworks to define ethical boundaries for its development. Regulatory bodies should initiate the formulation of specific rules and regulations tailored to AI-based financial advice to prepare for its further evolution. This preparedness is essential because the complexity of AI-based financial advice may lead organizations to expand their capabilities hastily, potentially overlooking ethical considerations.

Providers of AI-based financial advice should be incentivized to surpass mere compliance with legal requirements. This entails integrating ethical considerations from the outset of development and adopting ethics-by-design principles. Since ethical norms change over time, organizations must adopt a proactive approach to ethics. This enables organizations to anticipate changes in ethical norms and values and incorporate a forward-looking perspective into their decision-making rather than addressing ethical concerns only in hindsight. Enacting positive organizational change requires aligning strategic goals with business objectives, including balancing economic incentives and ethical norms. These objectives need not be mutually exclusive; organizations should seek a middle ground where ethics and economic incentives coexist at their respective optimum. Adopting AI-based financial advice through ethics-by-design methodologies can help organizations fulfill their duty of care to consumers.

Some ethical issues identified in this article are also present in traditional financial advice (see Table 2, Panel C), but historically, these issues have received little attention. Therefore, public policy makers, regulatory bodies, and providers of traditional financial advice should consider the findings from this research to also improve human financial advice. This consideration is essential because both traditional and AI-based financial advice require determining which ethical norms and values are deemed to constitute good financial advice. Thus, improving traditional financial advice through the implementation of ethical considerations can ultimately benefit the future development of AI-based financial advice.

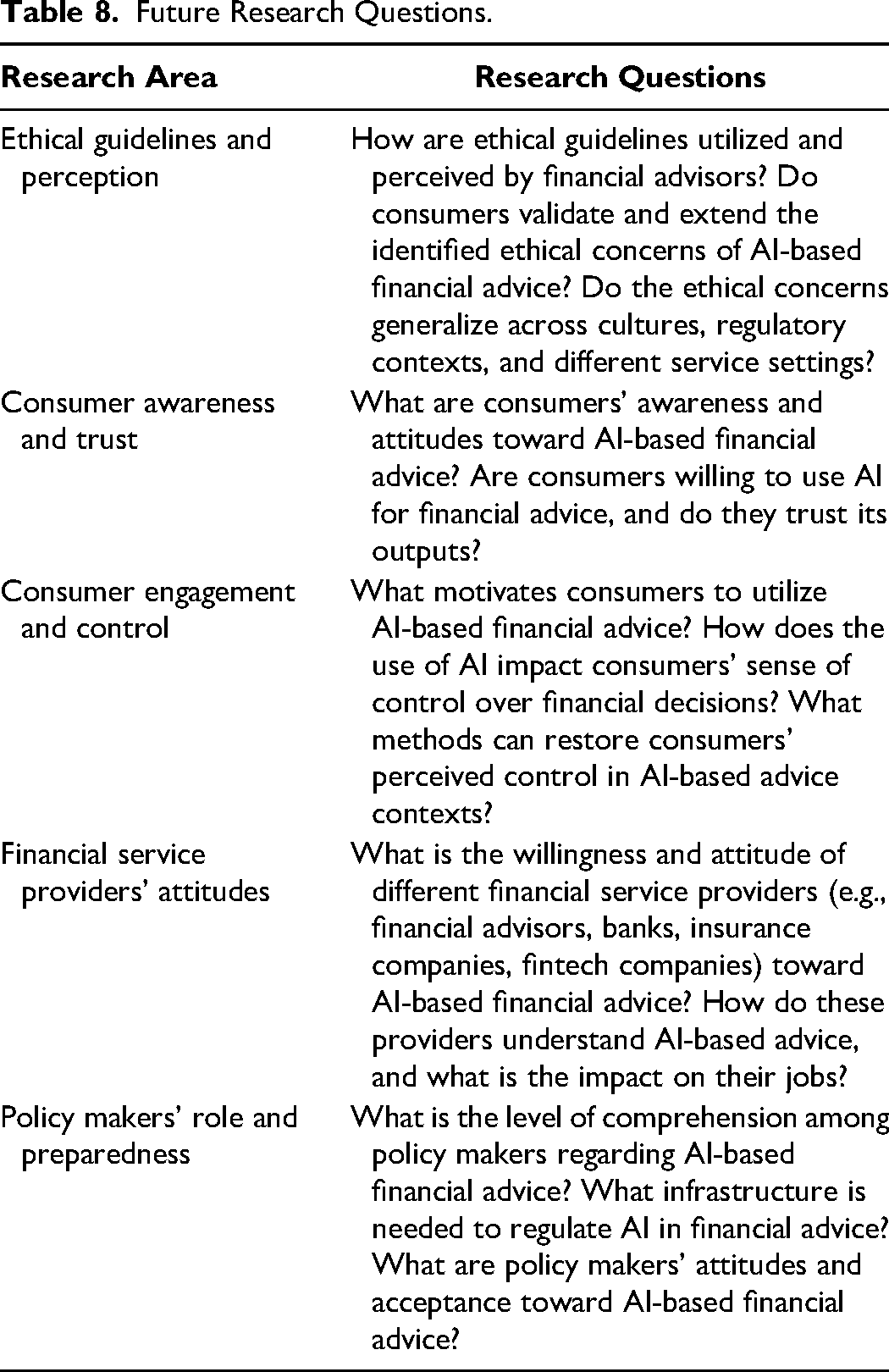

This article aims to stimulate discussion on ethical guidelines for AI-based financial advice, laying the groundwork for future research in this emerging field. Table 8 outlines key areas for future research in the field of AI-based financial advice.

Table 8. Future Research Questions.

Future research should extend our analyses of the ethical issues in AI-based financial advice, particularly how human financial advisors implement and view these guidelines. Additionally, it is crucial to investigate whether consumers validate the identified ethical concerns and whether these concerns apply across different cultures, regulatory environments, and financial service settings. Future research should also assess consumers’ attitudes toward AI-based financial advice, their willingness to use it, and the level of trust they place in AI-generated decisions. Understanding users’ motivations for utilizing AI-supported financial advice is crucial for designing technology and implementing ethical principles. A key consideration in this context is the degree of personal control over financial decisions that users might give up when entrusting the decision-making process to AI. There is limited understanding of perceived control in financial advice contexts and how to restore users’ sense of control, irrespective of whether the advice is generated by a human or AI. Research into financial service providers’ attitudes should assess how different providers—such as banks, insurance companies, and fintech firms—perceive AI-based advice, their readiness to embrace AI, and the potential impact on their roles. Service providers are increasingly deploying AI to support employees (i.e., financial advisors). In future research, exploring how ethical guidelines are utilized and perceived by human financial advisors who use AI to support their financial advice would be insightful. Finally, policy makers’ role and preparedness are critical in shaping the regulatory landscape for AI in financial services. Future research should evaluate policy makers’ understanding of AI developments, the necessary infrastructure for effective regulation, and their acceptance of AI's role in financial advice.

Figure 1. AI Ethics Framework for Financial Advice.